Abstract

This meta-analysis examines the effectiveness of gamification in enhancing student motivation and deep learning within higher education. Despite its growing use, empirical evidence on gamification’s impact remains mixed and inconclusive. To address this, the study synthesised findings from 22 independent studies using a random-effects model. The results revealed a statistically significant and large overall effect size (Cohen’s d = 1.12, 95% CI [0.42, 1.83], p < .001), confirming that gamification positively influences learning outcomes. However, substantial heterogeneity (I² = 98.84%) indicates variations across studies due to differences in design, type, context, and implementation. Moderator analyses identified key factors influencing effectiveness. Higher effect sizes were observed in disciplines such as Business, Mathematics, and Engineering, particularly among graduate students. Gamification was more effective when applied in offline settings, delivered over moderate durations (3–6 months), and designed using structured frameworks like the MDA model (Mechanics, Dynamics, Aesthetics). Competitive games also showed stronger impacts compared to simpler, narrative-based approaches. Deep learning and academic performance benefitted most, while effects on engagement were smaller. The study highlights the importance of context-specific and theory-driven gamification strategies tailored to learners’ needs and institutional environments. Overall, findings affirm that when carefully implemented, gamification is a powerful tool for improving learning outcomes in higher education.

Plain Language Summary

This study explores how adding game-like features to university teaching, such as points, badges, leaderboards, and challenges, can help students learn better. This idea, called gamification, is becoming more common in higher education. To find out how well it works, we combined results from many earlier studies. We found that when teachers use gamification carefully, it can make learning more enjoyable and help students perform better. It can also encourage them to stay motivated and take an active part in their lessons. However, not every game feature works in every situation. The success often depends on the type of course, the way the teacher designs the activity, and how students respond. Based on these findings, the study recommends that universities train teachers to use game-based methods effectively, design lessons that put students at the centre of learning, and support innovation through good policies. In simple terms, using game ideas in teaching can make learning more engaging and effective when done with clear goals and thoughtful planning.

Keywords

Introduction

A significant number of educational establishments, including second-cycle and tertiary institutions, have embraced e-learning platforms for course delivery in recent years (Khaldi et al., 2023). An e-learning platform is a contemporary addition to, and occasionally a substitute for, traditional educational delivery methods (Górska, 2016). These platforms have become more popular, particularly since the spread of the COVID-19 pandemic. During the lockdowns, most universities shifted from face-to-face to online instruction so students could continue their studies (Dai et al., 2024; Sofiadin & Azuddin, 2021). Over time, though, online learning environments revealed several challenges. Student motivation gradually declines, which leads to a lack of involvement and engagement in the classroom. In turn, teachers find it difficult to keep students’ interest, which results in a near-complete cessation of online learning, with high dropout and low completion rates (M. Li et al., 2023).

Various pedagogical interventions have been explored to address this challenge, including adaptive learning, personalised learning analytics, and interactive multimedia environments (du Plooy et al., 2024; Sajja et al., 2025). Although promising, these methods need advanced technology and may not maintain students’ intrinsic motivation long-term. Gamification, however, offers a comparatively cost-effective and psychologically engaging strategy by incorporating elements of play, challenge, and reward into the learning process (García-López et al., 2023). Gamification motivates learners by developing feelings of competence, autonomy, and connection, which sustain engagement and persistence (Fuentes-Riffo et al., 2023; L. Li et al., 2024). However, the extent to which these motivational effects persist across different learning environments remains uncertain. This implies that gamification’s success depends on its contextual and design alignment rather than its mere inclusion.

To better understand this pedagogical approach, it is essential to clarify what gamification entails and how it has evolved in educational research. The concept refers to the integration of game elements into non-gaming contexts (Deterding et al., 2011; Garland, 2015), a term first introduced in 2008 (Marczewski, 2013). Gamification supports student learning through game-like tasks and challenges (Wang & Zheng, 2021; Zhan, He, Li, He, & Xiang, 2022) and can take various forms, from badges and leaderboards to complex simulation environments (Bodnar et al., 2016; Zhan, Zhong, Shi, et al., 2022). Lopez and Tucker (2019) and Jing et al. (2025) found that gamification can increase motivation, participation, and engagement in learning. Yet, not all gamified approaches achieve these outcomes. Studies differ substantially in the quality of design, learner characteristics, and instructional integration. These inconsistencies highlight the need for a unifying theoretical framework that explains how and when gamification leads to positive learning outcomes.

Accordingly, this study is anchored in the Theory of Gamified Learning (Landers, 2014), which conceptualises gamification as a system of game features that interact with instructional content to shape learner behaviours, attitudes, and ultimately learning outcomes. Landers (2014) outlined four key components: game features, instructional content, learner behaviours, and outcomes. When these align, game features influence behaviours and attitudes, leading to better learning results. Importantly, the model implies that misalignment between game mechanics and instructional intent may suppress or even reverse motivational effects, a nuance often overlooked in empirical studies.

In practice, the model suggests that gamification works best when game features match learning goals and effectively engage student behaviours. Building on this, our study adopts Landers’ theoretical parameters (design principles, gamification type, duration, and learning environment) as moderator variables to capture the predicted sources of variability. Moreover, to address the psychological processes underlying these behavioural effects, Self-Determination Theory (Rigby & Ryan, 2011) complements Landers’ framework by explaining how competence, autonomy, and relatedness sustain motivation. Together, the two theories provide structural and motivational explanations for gamification’s impact.

While these frameworks collectively show how gamification can shape learning, they differ in explanatory scope. Landers’ model clarifies the structural pathways between game elements and behavioural outcomes (Mekler et al., 2017), whereas Self-Determination Theory focuses on the motivational mechanisms that sustain learning (Rigby & Ryan, 2011). However, both suggest that gamification’s effects are context-dependent and mediated by design quality and learner experience. Findings on gamification’s impact on academic performance remain mixed and inconclusive (R. Huang et al., 2020; M. Li et al., 2023; Seaborn & Fels, 2015). This indicates the need for research that tests not only whether gamification works, but under what pedagogical and contextual conditions it does so.

Although some studies have reported no significant effects of gamification (Rachels & Rockinson-Szapkiw, 2018), others show positive results in learning and motivation (Buckley & Doyle, 2017; C. H. Chen & Chiu, 2016; M. Li et al., 2023). The inconsistency across findings likely reflects differences in study design and context. For instance, Hanus and Fox (2015) observed decreased motivation when competitive elements conflicted with course objectives, implying that poor alignment between game mechanics and pedagogy may suppress positive effects. In contrast, studies that integrated narrative or collaborative game features often yielded stronger motivational gains (Homer et al., 2018; Zhan, He, Tong, Liang, et al., 2022). These divergent findings highlight the need for a systematic synthesis of empirical evidence to determine the contextual and design conditions under which gamification effectively enhances learning outcomes.

Moreover, Inocencio (2018) linked these inconsistencies to differences in measurement. Studies often assess academic performance, motivation, and engagement (Rivera & Garden, 2021; Zainuddin et al., 2020). Academic performance serves as a concrete indicator of gamification’s impact on application and knowledge acquisition (Rivera & Garden, 2021). Motivation is critical as it affects students’ attention, effort, and persistence (Brophy et al., 2013), while engagement reflects students’ commitment to their studies and tasks (Segura-Robles et al., 2020). Nevertheless, previous studies have lacked consistency in the measures used to evaluate gamification outcomes (Sailer & Homner, 2020; Tomaselli et al., 2015).

The efficacy of gamification in learning and educational environments, particularly in higher educational contexts, has not been conclusively confirmed by meta-analyses, despite substantial research efforts in this area (Hamari et al., 2014; Seaborn & Fels, 2015). Three significant gaps remain. First, the effectiveness of gamification across diverse educational contexts and learning outcomes remains unclear. Second, the mechanisms by which game features influence motivation, engagement, and performance are underexplored. Third, methodological inconsistencies and the lack of systematic evaluation of moderators limit the generalisability of prior findings. Addressing these gaps is essential for advancing theory and providing evidence-based guidance for the design and implementation of gamified learning interventions.

Therefore, the aim of this analysis was to statistically synthesise the body of studies on the effects of gamification on behavioural, motivational, and cognitive learning outcomes. This meta-analysis aimed not only to address whether learning should incorporate gamification but also to explore how it should be implemented. Additionally, we examined potential moderating factors that contribute to effective gamification, thereby addressing the conceptual diversity associated with the term (Sailer, Hense, Mayr, & Mandl, 2017). We also added situational, methodological, and contextual factors to account for different study designs, research settings, and the methodological rigour of primary research. We performed leave-one-out sensitivity analyses and assessed publication bias to determine the stability and robustness of our findings.

Identifying the factors that influence gamification’s effectiveness is key. In line with Landers’ theoretical framework, this study examines key moderators (Sailer, Hense, Mayr, & Mandl, 2017). Furthermore, our study also included methodological, contextual, and situational moderators because they may have an impact on how gamification affects learning outcomes (Hamari et al., 2014) and because gamification research frequently has methodological shortcomings (Dicheva & Dichev, 2015; Dichev & Dicheva, 2017). Therefore, the following factors were considered on the basis of the literature: design principles (Cordero-Brito & Mena, 2020; González-Fernández et al., 2022; Manzano-León et al., 2021; Navarro-Mateos et al., 2021), intervention duration (Kim & Castelli, 2021; Lei et al., 2022; Mahmud et al., 2020; Mays et al., 2020; Racey et al., 2016; Tsay et al., 2018), learning environment (Denny et al., 2018; B. Huang & Hew, 2015; Ninaus et al., 2015; Stansbury & Earnest, 2017), educational discipline (Bai et al., 2020; Sun-Lin & Chiou, 2017; Tsai et al., 2020), education level, and game type.

Present Meta-Analysis

In this meta-analysis, we synthesise previous studies that examine the effects of gamification on students’ learning outcomes in higher education. Several reviews and meta-analytic results indicate that gamification generally has a positive effect on various learning outcomes; however, these effects are not always consistent. Therefore, we statistically synthesised the present state of gamification research in this meta-analysis to examine the impact of gamification on students’ motivation and deep learning in comparison to traditional teaching techniques. We respond to the following questions:

Research Questions

1. To what extent does gamification enhance student motivation and deep learning? (overall effect size)

2. What factors may moderate the effects of gamification on students’ motivation and deep learning? (moderator effects)

Methods

Literature Search

Using academic databases and reference lists of previous meta-analyses and reviews, we thoroughly looked for the published peer-reviewed literature pertinent to our meta-analysis. Initially, we examined the following literature databases to find meaningful studies: ProQuest Education (264), Web of Science, ERIC (1,348), and Google Scholar (500). To ensure that only studies providing an empirical analysis of gamification were captured, the search focussed primarily on titles, abstracts, and keywords. This approach minimised the inclusion of publications that merely mentioned gamification or related terms once in the full text without presenting relevant empirical data (Scherer et al., 2020).

The terms “gamification” and “gamif*” were employed in the scholarly literature search across all fields of study. This increased the search’s sensitivity and also followed the search parameters applied in the Seaborn and Fels (2015) review. Two sets of keywords were utilised for this review. The initial group of terms included all possible combinations of the terms “gamification,” “gamify,” and “gamified learning,” using the notation “gamif*.” The following search query was used to find the second set of search terms: gamif* AND (education OR learning OR class OR course OR outcomes OR performance OR deep learning OR influence OR impacts OR effects). The second set thus covers terms related to education, learning, class, course, outcomes, performance, deep learning, influence, impact, and effect.

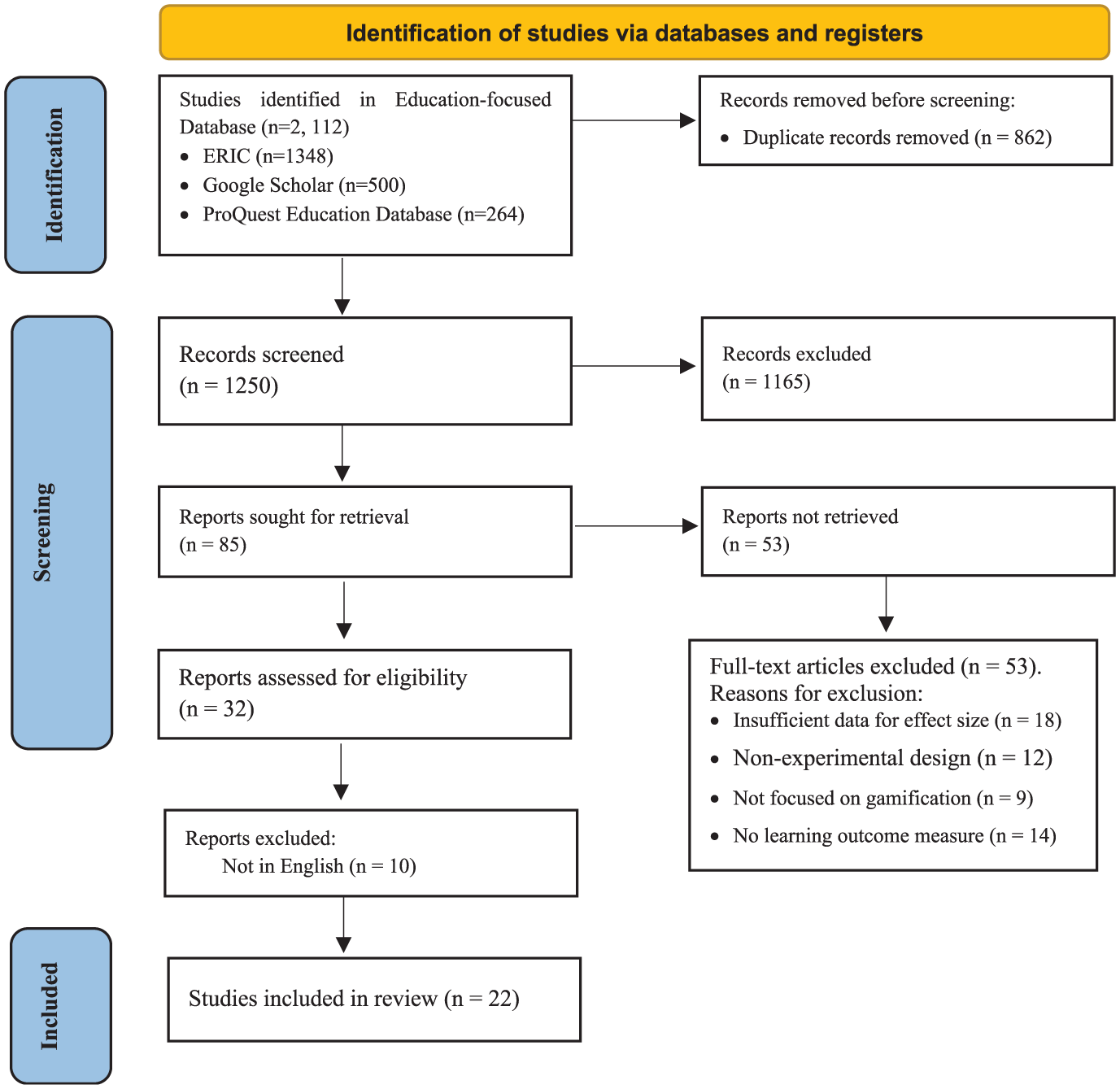

We adopted the same search terms in Google Scholar in addition to these databases in order to expand the reach of our literature search. We used the software programme Publish or Perish (version 8), which enables the automatic download of all search results that are available. Lastly, we also went through Garland’s (2015) meta-analysis, as well as Dichev and Dicheva (2017), Seaborn and Fels (2015), and Hamari et al. (2014). In all, 2,112 entries (January 2025) were obtained and subsequently examined for duplication. The publication years were restricted to 2015 to 2024, and all duplicates were eliminated. This is because foundational studies conducted before 2015 have already summarised evidence from earlier work. Our study explicitly seeks to extend these reviews by synthesising more recent empirical findings and addressing gaps identified in those earlier syntheses. The remaining 1,250 publications in total were sent for further screening. A summary of the search and subsequent screening procedures is made available in Figure 1.

Screening and Coding

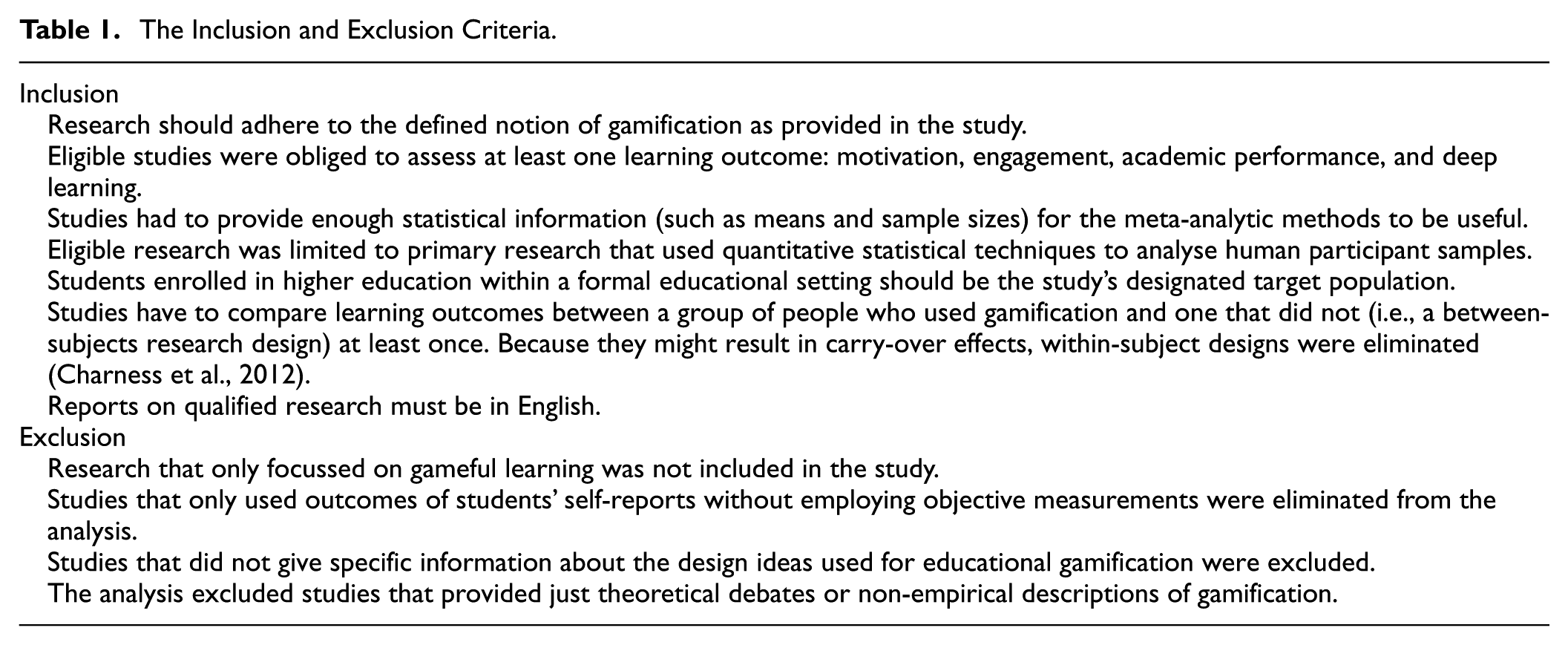

The Preferred Reporting Items for Systematic Reviews and Meta-Analyses (PRISMA) standards were adhered to during the study selection procedure. A total of 2,112 records was initially identified through comprehensive searches of electronic databases and registers. These databases, which include Scopus-indexed papers, were ERIC (n = 1,348), Google Scholar (n = 500, retrieved using Publish or Perish software, v.8), and ProQuest Education Database (n = 264). After removing 862 duplicate records, 1,250 unique records were left for screening. During the title and abstract screening phase, 1,165 records were excluded based on predefined eligibility criteria (see Table 1). The remaining 85 full-text articles were assessed for eligibility, of which 53 were excluded, leaving 32, for the following reasons: insufficient data for effect-size computation (n = 18), non-experimental or quasi-experimental design (n = 12), non-relevance to gamification in education (n = 9), and absence of learning outcome measures such as motivation or deep learning (n = 14). Additionally, 10 articles were excluded because they were not written in English.
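The record counts reported above can be checked with simple arithmetic. The short sketch below is only an illustrative bookkeeping aid, not part of the original analysis; the dictionary keys and the function name `prisma_flow` are hypothetical labels introduced here, while the numbers are those stated in the text.

```python
# Record counts as reported in the screening description.
identified = {"ERIC": 1348, "Google Scholar": 500, "ProQuest Education": 264}
duplicates_removed = 862
excluded_title_abstract = 1165
full_text_exclusions = {
    "insufficient data for effect-size computation": 18,
    "ineligible study design": 12,
    "not relevant to gamification in education": 9,
    "no relevant learning outcome measure": 14,
}
excluded_non_english = 10


def prisma_flow():
    """Return the number of records remaining after each screening stage."""
    total = sum(identified.values())
    screened = total - duplicates_removed
    full_text = screened - excluded_title_abstract
    after_full_text = full_text - sum(full_text_exclusions.values())
    included = after_full_text - excluded_non_english
    return {
        "identified": total,
        "screened": screened,
        "full_text_assessed": full_text,
        "after_full_text_exclusions": after_full_text,
        "included": included,
    }


flow = prisma_flow()
for stage, n in flow.items():
    print(f"{stage}: {n}")
```

Each stage reproduces the figure given in the text, ending with the 22 included studies.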

Ultimately, 22 studies met the inclusion requirements and were added to the final meta-analysis. These studies provided sufficient quantitative data to compute effect sizes (Cohen’s d). Each study also reported on at least one relevant outcome related to student motivation, engagement, academic performance, or deep learning in the context of gamified learning interventions. This thorough and transparent selection process increased the reliability of the results and ensured that only methodologically sound studies were included. Figure 1 above shows the flowchart for our study’s search, screening, identification, coding, and extraction phases (Table 2).

Inclusion and Exclusion Criteria

The Inclusion and Exclusion Criteria.

Study Selection

PRISMA study flowchart of the selection process.

The Coding Table Adopted in This Study.

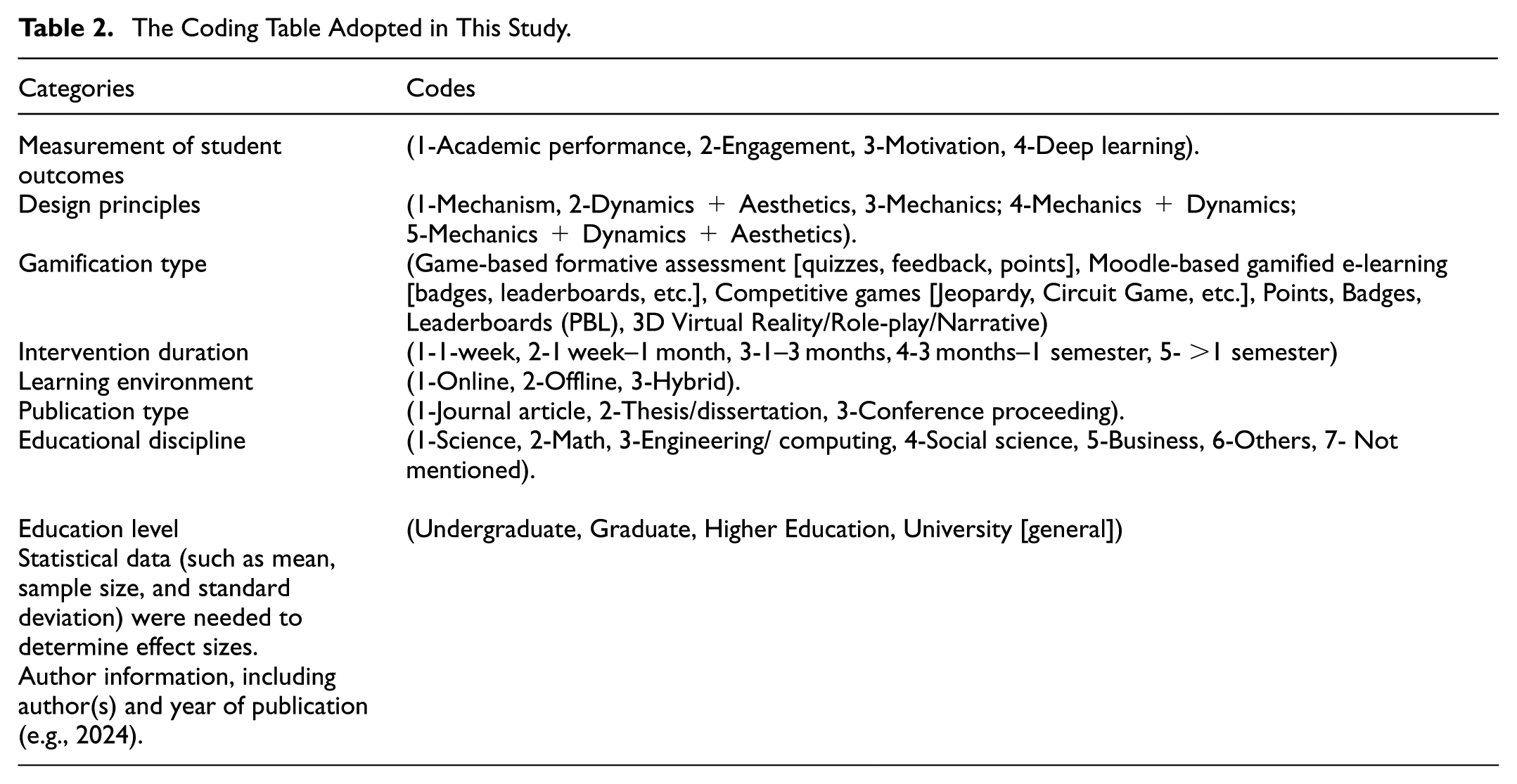

Coding Scheme

Based on the aforementioned research questions, the game types and gamification applications using the existing literature on gamification were coded as follows:

The moderators and coding categories were selected explicitly to operationalise Landers’ (2014) theoretical distinctions between game features and their interface with instructional content. Each moderator captures a source of variance in how gamification is expected to activate behavioural mechanisms that mediate learning outcomes.

Reliability of Coding

The coding method was practised on seven studies after three coders had been trained to complete the task. The findings were then reviewed and discussed to make sure that all of the data were coded according to the coding standards that had been created. Subsequently, each of the three coders independently coded all included studies. Between the three coders, the complete agreement percentage was. Discussion was used to settle any disagreements. High inter-coder reliability was ensured by the rigorous training and thorough coding procedure adopted in this study. This improved the accuracy and precision of the outcomes. Table 3 gives a descriptive summary of the included studies.

Effect of Gamification on Student Motivation and Deep Learning (Summary of Studies and Effect Sizes (Cohen’s d)).

Statistical Analyses

For our research, STATA version 17.0 was used. The STATA software package includes various meta-analytic features, such as the capacity to determine effect sizes, identify publication bias, and analyse moderator effects. The fixed-effect and random-effects models are the two established models for determining effect sizes. The fixed-effect model assumes a common true effect size across all studies, so sampling error is the only reason for variation in effect sizes between studies (Borenstein et al., 2021). Under the random-effects model, variability in effect sizes can result from both sampling error and between-study variation. We decided to use the random-effects model in this study since we saw a reasonable amount of between-study variance. The studies included in this meta-analysis were conducted in a variety of fields and situations with a range of user types. Additionally, these studies implemented a range of design concepts and used gamification in different learning contexts and for different lengths of time. Therefore, it is reasonable to believe that between-study variance accounted for a significant portion of the total variance. The random-effects model was used to account for both the estimated between-study variance and the within-study variance. It allocates weights to each of the studies in the analysis using the inverse-variance approach.
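To make the difference between the two models concrete, the following minimal sketch shows inverse-variance weighting with and without a between-study variance term. It is an illustration only and not the STATA procedure used in this study; the function name `inverse_variance_pool`, the three effect sizes, their variances, and the value of τ² are all hypothetical.

```python
import math


def inverse_variance_pool(effects, variances, tau2=0.0):
    """Pool effect sizes with weights 1 / (v_i + tau2).

    tau2 = 0 corresponds to the fixed-effect model; tau2 > 0 adds the
    between-study variance, as in the random-effects model.
    """
    weights = [1.0 / (v + tau2) for v in variances]
    total = sum(weights)
    pooled = sum(w * d for w, d in zip(weights, effects)) / total
    se = math.sqrt(1.0 / total)
    relative_weights = [w / total for w in weights]
    return pooled, se, relative_weights


# Hypothetical data: three studies with different precision.
effects = [0.2, 0.5, 1.4]
variances = [0.01, 0.04, 0.09]

fixed = inverse_variance_pool(effects, variances)
random_fx = inverse_variance_pool(effects, variances, tau2=0.25)  # assumed tau2

print("fixed-effect:  ", fixed)
print("random-effects:", random_fx)
```

Adding τ² to every study's variance makes the weights more equal, so small studies gain influence and the standard error of the pooled estimate becomes larger.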

Effect Size

Effect sizes were used to represent the standardised difference in learning outcomes between gamified instruction and traditional teaching approaches. In this meta-analysis, Cohen’s d was adopted as the standardised metric to quantify the magnitude of the effect across studies. Cohen’s d is a widely recognised measure in educational research for comparing group differences, particularly in interventions involving treatment and control conditions (Lipsey & Wilson, 2001). The computation of effect sizes and corresponding statistics was conducted using STATA (Version 17). To maintain consistency and reduce bias in estimation, each study contributed a single effect size to the conducted analysis. In cases where a study included multiple independent samples, each one of the samples was treated as a separate unit of analysis, following standard meta-analytic procedures (Borenstein et al., 2021). In order to avoid inflating the influence of a single study, effect sizes were aggregated for studies that reported multiple outcomes or comparisons within the same sample.
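As an illustration of the metric, the sketch below computes Cohen’s d from group means, standard deviations, and sample sizes, together with the commonly used large-sample approximation of its standard error. The effect sizes in this study were computed in STATA; the function name `cohens_d` and the input values here are hypothetical and serve only to show the calculation.

```python
import math


def cohens_d(mean_t, sd_t, n_t, mean_c, sd_c, n_c):
    """Standardised mean difference between a treatment and a control group."""
    pooled_sd = math.sqrt(
        ((n_t - 1) * sd_t ** 2 + (n_c - 1) * sd_c ** 2) / (n_t + n_c - 2)
    )
    d = (mean_t - mean_c) / pooled_sd
    # Large-sample approximation of the sampling variance of d.
    var_d = (n_t + n_c) / (n_t * n_c) + d ** 2 / (2 * (n_t + n_c))
    se = math.sqrt(var_d)
    ci = (d - 1.96 * se, d + 1.96 * se)
    return d, se, ci


# Hypothetical gamified (treatment) and traditional (control) groups.
d, se, ci = cohens_d(mean_t=78.0, sd_t=10.0, n_t=30, mean_c=72.0, sd_c=10.0, n_c=30)
print(f"d = {d:.2f}, SE = {se:.3f}, 95% CI = [{ci[0]:.2f}, {ci[1]:.2f}]")
```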

Following the estimation of the overall effect size, heterogeneity among studies was examined using the Q statistic. This tests whether the observed variability in effect sizes exceeds what would be anticipated by chance. The results indicated substantial heterogeneity, prompting further investigation through moderator analyses. In addition, τ² (tau-squared) and I² statistics were calculated to quantify the degree of heterogeneity. τ² estimates the between-study variance, while I² represents the proportion of total variance that results from true heterogeneity rather than sampling error (Borenstein et al., 2021). To better understand the sources of variation in gamification effectiveness, a series of moderator analyses was conducted. These analyses examined the potential influence of variables on the overall effect size. The incorporation of these moderators was guided by theoretical relevance and prior empirical findings in the literature.
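The heterogeneity statistics can be sketched as follows. The source does not state which estimator of the between-study variance was used, so this example assumes the closed-form DerSimonian-Laird estimator and the usual definition of I² based on Q; results from other estimators (for example, restricted maximum likelihood) will generally differ. The function name `heterogeneity` and the data are hypothetical.

```python
def heterogeneity(effects, variances):
    """Return Q, its degrees of freedom, tau-squared (DerSimonian-Laird), and I-squared (%)."""
    weights = [1.0 / v for v in variances]
    sum_w = sum(weights)
    pooled = sum(w * d for w, d in zip(weights, effects)) / sum_w
    # Q: weighted sum of squared deviations from the fixed-effect estimate.
    q = sum(w * (d - pooled) ** 2 for w, d in zip(weights, effects))
    df = len(effects) - 1
    # Scaling constant for the DerSimonian-Laird estimator (assumed estimator).
    c = sum_w - sum(w ** 2 for w in weights) / sum_w
    tau2 = max(0.0, (q - df) / c) if c > 0 else 0.0
    i2 = max(0.0, (q - df) / q) * 100.0 if q > 0 else 0.0
    return q, df, tau2, i2


# Hypothetical data: three studies with clearly different effects.
q, df, tau2, i2 = heterogeneity([0.2, 0.5, 1.4], [0.01, 0.04, 0.09])
print(f"Q({df}) = {q:.2f}, tau^2 = {tau2:.3f}, I^2 = {i2:.1f}%")
```

When Q does not exceed its degrees of freedom, both τ² and I² are truncated at zero, which corresponds to no detectable heterogeneity beyond sampling error.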

Publication Bias

According to Boutron et al. (2022), publication bias is the tendency for statistically significant research findings to be reported and published more frequently than null or non-significant findings. Egger’s linear regression test (Appendix 1) and the funnel plot were adopted in this study to assess publication bias. According to Sterne et al. (2011), an asymmetric funnel plot (see Figure 2) suggests possible publication bias, while a symmetrical plot suggests none. In Egger’s test, the standardised effect sizes were regressed on their precision in order to ascertain whether publication bias existed (Egger et al., 1998). Publication bias is considered absent when the regression intercept is near zero. The trim-and-fill method aims to impute missing studies and update the overall effect under the assumption that those studies are missing from the analysis due to publication bias (Duval & Tweedie, 2000).
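The logic of Egger’s regression can be sketched in a few lines. The example below assumes the classical formulation, in which each standardised effect (d divided by its standard error) is regressed on precision (the reciprocal of the standard error) by ordinary least squares and the intercept is inspected. It is not the STATA routine used in this study, and the function name `egger_test` and the data are hypothetical.

```python
import math


def egger_test(effects, std_errors):
    """Return the Egger regression intercept, its standard error, and t statistic."""
    y = [d / se for d, se in zip(effects, std_errors)]  # standardised effects
    x = [1.0 / se for se in std_errors]                 # precision
    n = len(y)
    if n < 3:
        raise ValueError("at least three studies are needed")
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    residual_ss = sum((yi - intercept - slope * xi) ** 2 for xi, yi in zip(x, y))
    residual_var = residual_ss / (n - 2)
    se_intercept = math.sqrt(residual_var * (1.0 / n + mean_x ** 2 / sxx))
    t_stat = intercept / se_intercept if se_intercept > 0 else float("nan")
    return intercept, se_intercept, t_stat


# Hypothetical data in which less precise studies report larger effects.
std_errors = [0.10, 0.20, 0.25, 0.40, 0.50]
effects = [0.45, 0.80, 0.70, 1.20, 1.25]
intercept, se_intercept, t_stat = egger_test(effects, std_errors)
print(f"intercept = {intercept:.2f} (SE = {se_intercept:.2f}), t = {t_stat:.2f}")
```

The t statistic is compared against a t distribution with n − 2 degrees of freedom; an intercept far from zero points to small-study effects, of which publication bias is one possible cause.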

Forest plot of the moderating factors influencing the effects of gamification (Cohen’s d).

Sensitivity Analyses

Sensitivity analysis serves to detect major uncertainties (Christopher Frey & Patil, 2002; Y. Liu et al., 2024) and to evaluate the robustness of findings, particularly with respect to studies that may be at a heightened risk of bias (Deeks et al., 2021). For instance, when the eligibility of a study included in a meta-analysis is questionable as a result of insufficient information, a sensitivity analysis can be performed by omitting this potentially problematic study. The overall effect size derived from this adjusted analysis is then compared to that of the full sample. If the overall outcomes and conclusions remain unchanged, the findings are deemed robust to this uncertainty. Conversely, if discrepancies arise, researchers should seek to clarify these uncertainties and obtain additional information. Where such clarification is unattainable, the findings must be interpreted with a suitable degree of caution.
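A leave-one-out analysis of this kind can be sketched as below: the pooled estimate is recomputed once for each study omitted and compared with the estimate from the full sample. The pooling step assumes a DerSimonian-Laird random-effects model, which may differ from the estimator used in this study; the function names `pooled_random_effects` and `leave_one_out` and the data are hypothetical.

```python
def pooled_random_effects(effects, variances):
    """Random-effects pooled estimate using the DerSimonian-Laird tau-squared (assumed)."""
    weights = [1.0 / v for v in variances]
    sum_w = sum(weights)
    fixed = sum(w * d for w, d in zip(weights, effects)) / sum_w
    q = sum(w * (d - fixed) ** 2 for w, d in zip(weights, effects))
    df = len(effects) - 1
    c = sum_w - sum(w ** 2 for w in weights) / sum_w
    tau2 = max(0.0, (q - df) / c) if df > 0 and c > 0 else 0.0
    re_weights = [1.0 / (v + tau2) for v in variances]
    return sum(w * d for w, d in zip(re_weights, effects)) / sum(re_weights)


def leave_one_out(effects, variances):
    """Pooled estimate with each study omitted in turn."""
    estimates = []
    for i in range(len(effects)):
        remaining_effects = effects[:i] + effects[i + 1:]
        remaining_variances = variances[:i] + variances[i + 1:]
        estimates.append(pooled_random_effects(remaining_effects, remaining_variances))
    return estimates


# Hypothetical data: the last study is an outlier.
effects = [0.2, 0.4, 0.3, 5.0]
variances = [0.04, 0.04, 0.04, 0.04]

overall = pooled_random_effects(effects, variances)
loo = leave_one_out(effects, variances)
for i, estimate in enumerate(loo):
    print(f"omitting study {i + 1}: d = {estimate:.3f} (full sample: {overall:.3f})")
```

A large shift in the pooled estimate when a single study is removed indicates that the overall result depends heavily on that study.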

Results

Description of Primary Studies

The meta-analysis of 22 studies examined how gamification impacts student motivation and deep learning in educational contexts; the studies are summarised in Table 4. Geographically, most of the research was conducted in Europe (32%), followed by Asia (27%) and North America (23%). The majority (68%) were published between 2015 and 2018, when gamification research reached its highest point. Most were peer-reviewed journal publications, which supports their scientific rigour. The studies were primarily concerned with higher education, and 55% of the participants were undergraduate students. Most of the research was in engineering and computing (46%), followed by interdisciplinary fields (32%), which indicates that gamification research is especially prevalent in STEM environments. Moodle-based platforms and competitive game formats were the most popular, followed by Points, Badges, and Leaderboards (PBL) systems (36% of studies). Online delivery accounted for 59% of interventions, and most interventions lasted 1 to 3 months and combined game mechanics, dynamics, and aesthetics in full design frameworks. Across all studies, 2,847 students were included in the meta-analysis; sample sizes ranged from 24 to 577 participants. The results varied considerably, from small effects (d = 0.05) to large ones (d = 7.34). With 68% of studies demonstrating small to medium effects and a moderate median effect (d = 0.56), it appears that gamification can be effective, but results depend greatly on context and implementation quality. Most studies assessed motivation (41%) and academic achievement (32%), with a smaller percentage looking at engagement and deep learning.

Moderating Factors Influencing the Effects of Gamification on Student Motivation and Deep Learning.

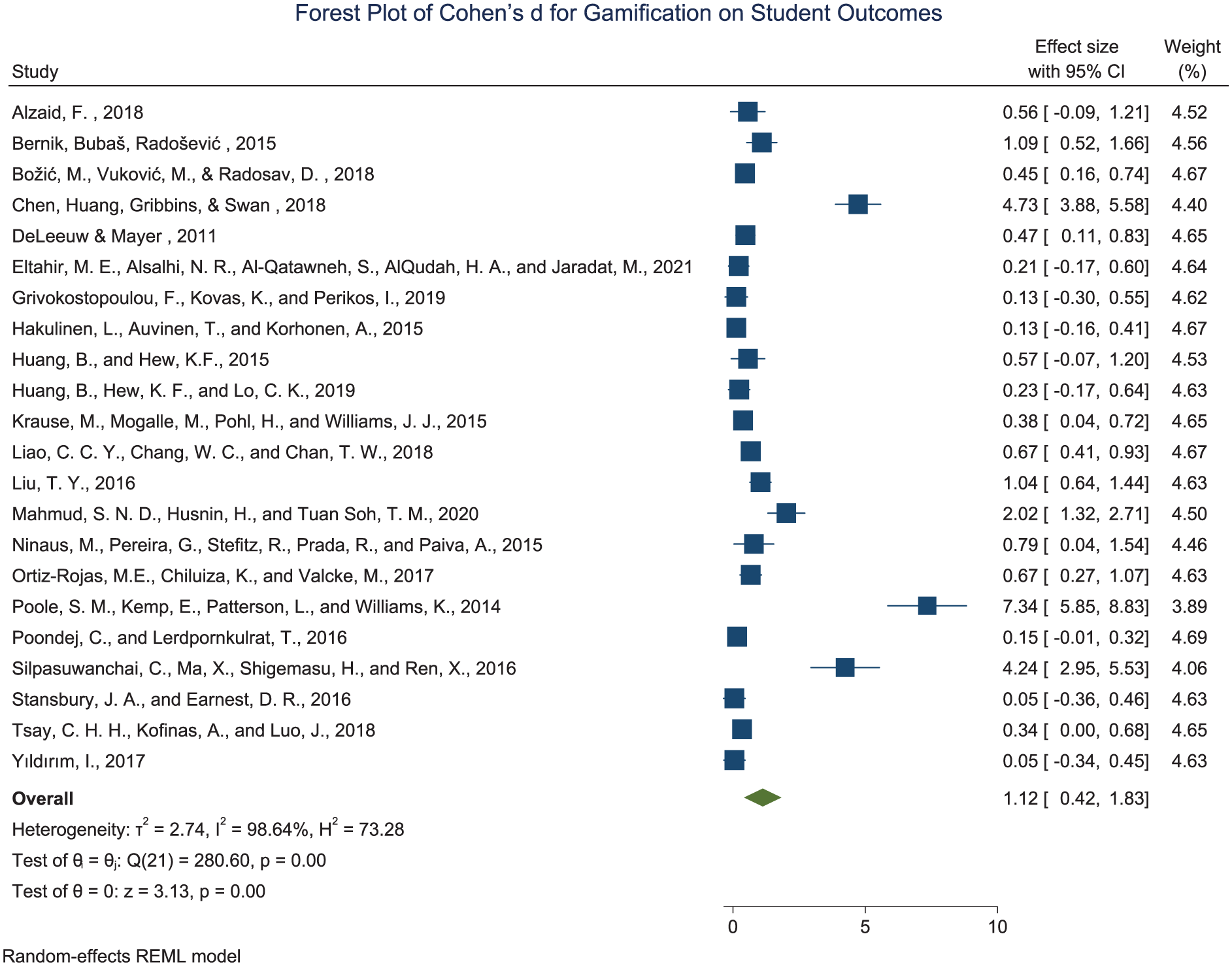

Effect of Gamification on Student Motivation and Deep Learning

Table 3 presents a summary of 22 independent studies examining the effect of gamification on the motivation of students and deep learning outcomes. Each study is listed with key variables including author(s), publication year, country, sample size in the experimental and control groups, and effect size reported as Cohen’s d. The table also includes the standard error and 95% confidence interval for the effect size of each study. The effect sizes ranged widely, with values as low as d = 0.05 (Stansbury & Earnest, 2017) and as high as d = 7.34 (Poole et al., 2014). This indicates substantial variability in the reported efficacy of gamification interventions employed. Several studies, including those by Chen et al. (2018), Mahmud et al. (2020), and Silpasuwanchai et al. (2016), reported large effect sizes. This suggests that gamification can significantly improve the engagement of students as well as learning outcomes under the right conditions.

On the other hand, a few studies (Stansbury & Earnest, 2017; Yildirim, 2017) reported negligible or minimal effects, implying contextual or implementation differences. Figure 3 displays a forest plot visualising the meta-analytic results of the 22 studies from Table 3. It shows the individual and overall effect sizes (Cohen’s d) of gamification on student outcomes. Each horizontal line represents a study, with the square showing the point estimate of the effect size and the line indicating the 95% confidence interval. The square size reflects the weight of each study in the meta-analysis. The overall pooled effect size is Cohen’s d = 1.12 with a 95% confidence interval of [0.42, 1.83]. This shows a statistically significant and large overall effect (p = .001). The diamond at the bottom of the plot represents this combined estimate. The heterogeneity among studies is high, as reflected in the Q statistic (Q = 280.00, p < .001) and I² = 98.84%, indicating substantial variability across studies that is not due to chance alone. This heterogeneity may stem from differences in study design, gamification tools, duration of intervention, and subject area. Overall, the results from both the tabular and graphical analyses affirm that gamification has a significantly positive effect on students’ motivation and deep learning. However, the magnitude of its effectiveness varies widely depending on contextual factors.

Forest plot of the effects of gamification on student motivation and deep learning (Cohen’s d).

Moderating Factors Influencing the Effects of Gamification

Table 4 shows a summary of moderating variables and their categories that influence the effectiveness of gamification interventions on the motivation and deep learning of students. The table includes the number of studies in each category (K), effect sizes (Cohen’s d), standard errors, and corresponding 95% confidence intervals. These moderators were analysed to determine which contextual or design factors may enhance or diminish the gamification effect in academic settings. Among the educational disciplines, Business (d = 3.84), Math (d = 2.39), and Engineering/Computing (d = 1.31) demonstrated relatively high effect sizes. This indicates strong positive outcomes when gamification is applied in these fields. In contrast, Social Science (d = 0.42) and other disciplines (d = 0.42) showed weaker effects. This implies possible subject-specific differences in the adaptability or relevance of gamification strategies. Regarding educational level, graduate students showed the largest effect (d = 2.65), followed by university students in general (d = 1.62). However, studies categorised under “Higher Education” showed a minimal effect (d = 0.19), which may reflect institutional or pedagogical differences. The type of gamification used was another strong moderator. Competitive games (e.g., Jeopardy, Circuit Game) produced the highest effect (d = 4.18), followed by points, badges, and leaderboards (d = 1.38). More narrative-based forms like 3D virtual reality or role-play yielded near-zero effects (d = 0.09), indicating these may be less impactful in academic environments.

Regarding intervention duration, interventions lasting 3 to 6 months or up to one semester had the highest effect size (d = 2.98). This implies that moderate-length exposure is most effective. Shorter or unspecified durations produced significantly lower effects, and durations longer than one semester also showed diminished impact (d = 0.36). This may be due to diminishing novelty or engagement over time. In terms of learning environments, offline gamification had a larger effect (d = 2.21) than online (d = 1.08) and hybrid formats (d = 0.21). This reveals that face-to-face implementation may better facilitate engagement and interaction with gamified elements. When measuring outcomes, deep learning (d = 3.30) and academic performance (d = 1.89) showed the highest effects, followed by motivation (d = 0.74). However, engagement outcomes yielded the lowest average effect (d = 0.38), implying that while gamification supports cognitive gains, its impact on sustained behavioural engagement may be limited. Design principles also moderated effectiveness. Combining mechanics, dynamics, and aesthetics yielded a significant impact (d = 1.08), and combinations such as mechanics + dynamics (d = 2.65) further enhanced outcomes. Simpler or unspecified combinations had comparatively smaller effects.

Figure 4 displays these moderating effects using a forest plot. Each moderator category is plotted with its individual effect size and 95% confidence interval. The overall pooled effect from all moderator analyses was Cohen’s d = 1.31 (95% CI [0.95, 1.68]), showing a statistically significant and large effect across moderators. The high heterogeneity (Q (32) = 331.55, p < .001; I2 = 93.57%) underscores the influence of contextual and implementation factors on gamification’s effectiveness. This moderator analysis highlights that gamification’s success is dependent upon its disciplinary context, educational level, duration, delivery environment, and design principles. Strategic alignment of these factors can increase gamification’s educational impact significantly.
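The moderator table reports each effect size together with a standard error and a 95% confidence interval. As a minimal sketch of how such values are commonly derived for a single two-group study, the Python function below computes Cohen’s d, a large-sample standard error, and a normal-based interval. The function name `cohens_d_with_ci` and the input numbers are hypothetical, and the variance approximation is a standard textbook form that may differ from the one used by the authors’ software.

```python
import math

def cohens_d_with_ci(mean_t, mean_c, sd_t, sd_c, n_t, n_c, z=1.96):
    """Return (d, se, ci_low, ci_high) for two independent groups.

    Hypothetical helper: uses the pooled standard deviation and a common
    large-sample approximation for the sampling variance of d.
    """
    # Pooled variance across the treatment and control groups
    pooled_var = ((n_t - 1) * sd_t ** 2 + (n_c - 1) * sd_c ** 2) / (n_t + n_c - 2)
    d = (mean_t - mean_c) / math.sqrt(pooled_var)
    # Large-sample approximation to the sampling variance of d
    var_d = (n_t + n_c) / (n_t * n_c) + d ** 2 / (2 * (n_t + n_c))
    se = math.sqrt(var_d)
    # Normal-based (Wald) 95% confidence interval
    return d, se, d - z * se, d + z * se

# Hypothetical summary statistics, not taken from any included study
d, se, ci_low, ci_high = cohens_d_with_ci(78.0, 72.0, 10.0, 10.0, 40, 40)
print(f"d = {d:.2f}, SE = {se:.3f}, 95% CI [{ci_low:.2f}, {ci_high:.2f}]")
```

Because the interval width depends directly on the standard error, subgroups containing few or small studies will tend to show wide intervals even when the point estimate is large.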

Funnel plot of effect size data.

Publication Bias

We assessed the extent of publication bias using several approaches. A funnel plot was first constructed, which is a generally accepted method for identifying such bias in meta-analytic research (Borenstein et al., 2021). The funnel plot in Figure 2 displays the distribution of individual study effect sizes (Cohen’s d) plotted against their corresponding standard errors. Visual inspection of the funnel plot reveals a notably asymmetrical distribution around the assumed overall effect size (θIV). While the majority of studies cluster around the central vertical line representing the pooled effect estimate, there appears to be a conspicuous gap in the lower-left quadrant of the plot. This suggests a potential absence of studies with small effect sizes and large standard errors. Since the results of unpublished studies are difficult to obtain, only published conference papers, journal papers, and other papers retrieved from education-focussed databases (ERIC, ProQuest Education, and Google Scholar) were included in this study. This restriction may have introduced publication bias.

To complement the visual assessment, Egger’s linear regression test of funnel plot asymmetry (Appendix 1) was conducted to provide a statistical evaluation of potential publication bias. The test was statistically significant, t(20) = 4.53, p < .001, bias = 5.85, SE = 1.29, indicating significant asymmetry in the funnel plot. A trim-and-fill analysis using the nonparametric method (Appendix 2) was therefore conducted to correct for bias. No studies were imputed, and the funnel plot was judged to be symmetric under this procedure. Taken alone, this result would suggest no substantial publication bias; however, the algorithm’s failure to detect asymmetry, or to identify studies to trim or impute, may be a consequence of the small number of studies. Subsequently, sensitivity analysis was conducted to examine the robustness of the estimates.
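For readers unfamiliar with the mechanics of Egger’s test, the following is a minimal sketch of its classical form: each study’s standardised effect (d divided by its standard error) is regressed on its precision (1 divided by the standard error), and the intercept serves as the bias estimate, tested with k − 2 degrees of freedom. The function name `egger_test` and the data are hypothetical, and the authors’ software may implement a different variant. Note that the reported values are internally consistent with this form: 5.85 / 1.29 ≈ 4.53, with 22 − 2 = 20 degrees of freedom.

```python
import math

def egger_test(effects, std_errors):
    """Classical Egger regression (hypothetical helper).

    Returns (intercept, se_intercept, t_statistic, df), where the
    intercept is the estimate of funnel plot asymmetry (bias).
    """
    snd = [d / s for d, s in zip(effects, std_errors)]   # standardised effects
    prec = [1.0 / s for s in std_errors]                 # precisions
    k = len(snd)
    x_bar = sum(prec) / k
    y_bar = sum(snd) / k
    sxx = sum((x - x_bar) ** 2 for x in prec)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(prec, snd))
    slope = sxy / sxx
    intercept = y_bar - slope * x_bar
    df = k - 2
    # Residual variance and the usual OLS standard error of the intercept
    rss = sum((y - intercept - slope * x) ** 2 for x, y in zip(prec, snd))
    se_intercept = math.sqrt(rss / df * (1.0 / k + x_bar ** 2 / sxx))
    return intercept, se_intercept, intercept / se_intercept, df

# Hypothetical data in which less precise studies report larger effects
effects = [0.2, 0.3, 0.5, 0.9, 1.4, 2.0]
std_errors = [0.10, 0.12, 0.20, 0.30, 0.45, 0.60]
intercept, se_int, t_stat, df = egger_test(effects, std_errors)
print(f"bias = {intercept:.2f}, SE = {se_int:.2f}, t({df}) = {t_stat:.2f}")
```

A positive intercept in this sketch corresponds to the pattern described above, in which small, imprecise studies report larger effects than large, precise ones.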

Sensitivity Analyses

To assess the robustness and reliability of the meta-analytic results, we conducted sensitivity analyses examining the stability of the effect size estimates. Specifically, a leave-one-out analysis was used, which systematically removes each study from the meta-analysis one at a time and recalculates the overall effect size based on the remaining studies (Viechtbauer & Cheung, 2010). The leave-one-out analysis (Appendix 4) revealed remarkable consistency in the pooled effect size estimates across all iterations (Appendix 3). When individual studies were sequentially omitted, the recalculated effect sizes ranged from 0.841, 95% CI [0.354, 1.328] when Study 17 was excluded, to 1.178, 95% CI [0.445, 1.912] when Study 20 or Study 22 was excluded. The overall effect size from the complete dataset was 1.124, 95% CI [0.421, 1.827], and the leave-one-out estimates clustered closely around this central value, with the majority of iterations producing effect sizes between 1.08 and 1.18.

Importantly, all leave-one-out analyses maintained statistical significance (p ≤ .004), indicating that no single study was solely responsible for the overall effect observed. The consistency of effect size estimates across iterations suggests that the meta-analytic results are robust and not unduly influenced by any individual study, despite the publication bias indicated by Egger’s test and the funnel plot. The sensitivity analysis also revealed that the exclusion of individual studies resulted in minimal changes to the confidence intervals, all of which excluded zero. This pattern reinforces the stability of the positive effect size estimate and provides additional confidence in the reliability of the meta-analytic findings.
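The leave-one-out procedure described above can be sketched in a few lines of Python. As a loudly stated simplification, this sketch pools studies with the DerSimonian-Laird random-effects estimator rather than the REML estimator reported in the appendix, because DerSimonian-Laird has a closed form and needs no iterative fitting. The function names `dl_pooled` and `leave_one_out` and all input values are hypothetical.

```python
import math

def dl_pooled(effects, variances, z=1.96):
    """DerSimonian-Laird random-effects pooled estimate with a 95% CI.

    Swapped in for REML purely for illustration (closed-form estimator).
    """
    w = [1.0 / v for v in variances]
    fixed = sum(wi * y for wi, y in zip(w, effects)) / sum(w)
    # Cochran's Q and the method-of-moments between-study variance
    q = sum(wi * (y - fixed) ** 2 for wi, y in zip(w, effects))
    c = sum(w) - sum(wi ** 2 for wi in w) / sum(w)
    tau2 = max(0.0, (q - (len(effects) - 1)) / c)
    # Random-effects weights add tau2 to each within-study variance
    w_re = [1.0 / (v + tau2) for v in variances]
    pooled = sum(wi * y for wi, y in zip(w_re, effects)) / sum(w_re)
    se = math.sqrt(1.0 / sum(w_re))
    return pooled, pooled - z * se, pooled + z * se

def leave_one_out(effects, variances):
    """Re-pool the data k times, omitting one study each time."""
    results = []
    for i in range(len(effects)):
        rest_y = effects[:i] + effects[i + 1:]
        rest_v = variances[:i] + variances[i + 1:]
        results.append((i + 1, *dl_pooled(rest_y, rest_v)))
    return results

# Hypothetical effect sizes and sampling variances for five studies
effects = [0.3, 0.5, 0.8, 1.2, 2.5]
variances = [0.04, 0.05, 0.06, 0.08, 0.10]
results = leave_one_out(effects, variances)
for study, est, lo, hi in results:
    print(f"omit study {study}: d = {est:.3f}, 95% CI [{lo:.3f}, {hi:.3f}]")
```

In this hypothetical data set, omitting the study with the largest effect lowers the pooled estimate the most, which is the kind of influence the leave-one-out summary is designed to expose.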

Discussion

This study investigated the effect of gamification on the motivation and deep learning of students by synthesising evidence from 22 independent studies. The findings provide moderate to strong evidence for the integration of gamification in educational contexts, while also revealing meaningful variations in its implementation and effectiveness. The overall pooled effect size from the analysis was statistically significant (Cohen’s d = 1.12, 95% CI [0.42, 1.83], p < .001). This indicates that, on average, gamification is associated with improved student learning outcomes. The results affirm the educational value of game-based components such as badges, points, leaderboards, and immersive challenges in promoting student motivation and deeper cognitive engagement (M. Li et al., 2023; Lopez & Tucker, 2019). The findings align with the theory of gamified learning (Landers, 2014), which posits that game elements influence learning indirectly through behavioural and attitudinal mediators. Consistent with this theoretical stance, the meta-analytic evidence suggests that gamification tends to enhance learning when its design effectively supports motivation, feedback, and goal orientation. From a theoretical perspective, this reinforces the view that gamification is a mediating mechanism rather than a direct cause of learning gains. This implies that educators should design and adopt pedagogically integrated gamification strategies that align closely with instructional objectives, rather than assuming that the mere presence of game elements guarantees improved outcomes.

However, the analysis also revealed significant heterogeneity among the studies (Q = 280.00, I2 = 98.84%). This suggests that the effects of gamification vary considerably depending on contextual and methodological factors. This variability is consistent with earlier findings (Sailer & Homner, 2020; Seaborn & Fels, 2015), which identified the lack of methodological standardisation and theoretical grounding as persistent challenges in gamification research. For instance, large effect sizes reported by Poole et al. (2014) and Chen et al. (2018) were linked to highly immersive or competitive interventions, while studies like Yildirim (2017) and Stansbury and Earnest (2017) showed minimal gains, potentially due to limited gamification features or poor instructional alignment. In Stansbury and Earnest (2017), the gamified intervention in an industrial/organisational psychology course produced higher student perceptions of learning but did not yield statistically significant improvements in actual performance. This implies that while gamification may enhance subjective experiences, it may fail to translate into outcome gains unless the design aligns closely with instructional goals and assessment measures.

To address these disparities, the second phase of the analysis examined several moderating variables based on the literature. The moderator analysis revealed an even higher overall pooled effect size (Cohen’s d = 1.31, 95% CI [0.95, 1.68], p < .001). This further affirms the effectiveness of gamification when contextually optimised. These findings underscore that gamification is not universally effective; rather, its impact is moderated by several factors: educational discipline, learner level, gamification type, intervention duration, learning environment, design principles, and outcome measurement.

For example, interventions in Business (d = 3.84), Mathematics (d = 2.39), and Engineering/Computing (d = 1.31) yielded higher effect sizes than in Social Science and interdisciplinary fields. This suggests that gamification may be particularly effective in applied or problem-solving disciplines, where tasks naturally lend themselves to game-like mechanics (Bai et al., 2020; Subhash & Cudney, 2018). These findings should not be interpreted as evidence that gamification “works best” in certain disciplines universally, but rather that it may be more readily adaptable in settings where learning tasks already involve problem-solving and competition. Theoretically, this supports the gamified learning framework’s emphasis on instructional alignment. It suggests that gamification yields the greatest benefit when game mechanics mirror the cognitive processes inherent to the learning task. The implication is that educators in less structured disciplines might need to embed narrative or collaborative elements to achieve similar motivational effects.

In terms of educational level, graduate students benefitted most (d = 2.65). This is likely due to intrinsic motivation and greater self-regulation (Manzano-León et al., 2021), whereas minimal effects were observed for general “higher education” studies (d = 0.19), which could be due to generic or poorly designed interventions. Yet, this should be interpreted carefully, as the number of studies involving graduate samples was limited. The implication for theory is that learner characteristics interact with gamified design, reinforcing Landers’s (2014) behavioural pathway model. For practice, designers should consider tailoring gamified experiences to learners’ motivational profiles and autonomy levels. Gamification type also influenced results. Competitive games (e.g., Jeopardy, Circuit Game) were especially effective (d = 4.18), while narrative-based strategies like 3D virtual reality and role-play were much less impactful (d = 0.09). This finding supports self-determination theory (Rigby & Ryan, 2011), which highlights the relevance of competence and challenge in motivating learners. However, the extreme difference should be treated as indicative rather than definitive due to variability in study designs and small subgroup counts. It also suggests that novelty alone, without pedagogical alignment, may fail to produce meaningful outcomes. The implication for practice is that competitive mechanics can be effective but must be used judiciously to avoid anxiety or exclusion among learners.

In terms of duration, interventions lasting 3 months to one semester yielded the highest outcomes (d = 2.98). This indicates that medium-term exposure allows for deeper engagement and sustained motivation (Kim & Castelli, 2021). Shorter durations might not be sufficient for meaningful cognitive processing, while prolonged exposure can lead to “gamification fatigue” (Racey et al., 2016). Thus, the optimal duration appears contextual, reinforcing the theoretical expectation that motivation is dynamic rather than static. This finding suggests that periodic re-design of gamified elements may sustain engagement over longer terms. The learning environment further influenced effectiveness. Offline, face-to-face gamification strategies performed better (d = 2.21) than online (d = 1.08) or hybrid (d = 0.21) formats. This finding is consistent with earlier work (B. Huang & Hew, 2015; Stansbury & Earnest, 2017). It highlights the importance of physical interaction and immediate feedback as key components for learner engagement. This does not imply that online gamification is ineffective, but that social interaction and immediacy of feedback, central tenets of the gamified learning theory, may be less pronounced in digital settings. For practice, designers of online gamified courses should embed synchronous or peer-feedback mechanisms to replicate these conditions. Moreover, outcome measurement was a significant factor. Gamification had the most substantial effects on deep learning (d = 3.30) and academic performance (d = 1.89), while smaller effects were found for motivation and engagement. These results reinforce the premise that gamification can move beyond surface-level engagement to foster cognitive and performance-based outcomes. This is in line with Landers’s (2014) assertion that gamification modifies the behavioural pathway between instructional content and learning achievement.

Lastly, design principles were critical. The highest impacts were seen in interventions applying mechanics and dynamics (d = 2.65) or the full MDA framework of mechanics, dynamics, and aesthetics (d = 1.08). This reflects a move beyond simplistic “pointification” (Seaborn & Fels, 2015) towards more holistic and theory-based designs, such as those promoted by the MDA model (González-Fernández et al., 2022; Hunicke et al., 2004). Theoretically, it validates the MDA model’s contribution to the gamified learning theory by illustrating that richer affective and dynamic layers foster deeper engagement. Practically, it encourages instructional designers to integrate cognitive and emotional dimensions into game mechanics for more meaningful learning experiences.

Conclusions

This study addressed the ongoing challenge of low student motivation in higher education by evaluating the effectiveness of gamification in enhancing student learning outcomes. Despite extensive implementation, existing studies have reported mixed results. To clarify these inconsistencies, this study synthesised data from 22 independent studies, focussing on both overall effects and moderating factors. The findings revealed a significant and large effect size, indicating that, on average, gamification is associated with improved student learning outcomes. The moderator analyses further demonstrated that discipline, gamification type, learner level, intervention duration, learning environment, and design principles influence effectiveness. Higher impacts were observed in Business, Mathematics, and Engineering, among graduate students, in offline settings, and for interventions using competitive games and structured MDA design models. Deep learning and academic performance showed the highest gains, while engagement effects were smaller. These results highlight the importance of context-specific design. Gamification should be strategically tailored to the subject area, learner characteristics, and institutional setting rather than implemented uniformly. These results do not mean gamification is universally “most effective” in specific fields. Instead, they suggest that gamification fits more easily in contexts where learning already includes problem-solving and competition. Offline (face-to-face) environments appear to enhance outcomes, and the integration of well-structured game mechanics significantly strengthens results. In conclusion, the findings support the argument that gamification is associated with improved learning outcomes when implemented thoughtfully. Its impact varies significantly depending on design, context, and delivery. Therefore, educators, policymakers, and instructional designers must avoid universal approaches and instead tailor gamified interventions to their unique educational settings, learner characteristics, and desired outcomes.

Policy Implications

Based on the results of this meta-analysis, the following policy recommendations are suggested to guide educational practice and research. Firstly, policymakers should advocate for gamification to be tailored to specific educational disciplines, learner levels, and learning environments. For example, applied fields like Business and Engineering show higher responsiveness to gamified interventions and should be prioritised for implementation. Moreover, institutions should integrate comprehensive gamified frameworks such as the MDA model (Mechanics, Dynamics, Aesthetics) rather than simplistic point-based systems. This ensures sustained engagement and learning depth. In addition, educational planners should consider gamification interventions that last between 3 and 6 months, as these durations were found to produce the most significant effects without leading to fatigue. Furthermore, teachers and instructional designers should receive training and professional development in gamification theory and practice to ensure pedagogical alignment and maximise student outcomes. Lastly, gamification as a recognised strategy should be incorporated in higher education policy frameworks to enhance student participation, particularly in digital and remote learning contexts.

Limitations and Future Research

This study, while offering notable insights into the effect of gamification on the motivation and deep learning of students, is subject to several limitations. First, the sample size of included studies (n = 22) remains relatively small. The geographic and disciplinary coverage was uneven, which may limit the generalisability of the results. Second, despite the use of standard tests, the potential for publication bias cannot be entirely ruled out. However, the sensitivity analysis showed consistency of effect size estimates across iterations. This suggests that the meta-analytic findings are robust, despite the existence of publication bias. This reinforces the stability of the positive effect size estimate and provides additional confidence in the reliability of the meta-analytic findings. Also, limiting studies to 2015 to 2024 may exclude relevant earlier research, potentially affecting comprehensiveness. Notably, the high heterogeneity across studies indicates substantial variability in research designs, intervention types, and outcome measures, which limits the generalisability of the findings. The predominance of short-term studies also constrains the understanding of the effectiveness of gamification in the long term. The literature was also disproportionately focussed on STEM and business disciplines, with limited exploration of gamification in the humanities, hybrid learning contexts, and non-Western settings. These limitations imply that conclusions drawn here should be interpreted cautiously and viewed as indicative rather than definitive. To address these gaps, future research should employ longitudinal, theory-driven designs that assess the durability of gamification’s effects. Also, it should explore a broader range of academic fields and cultural contexts, and consider learner-specific variables to augment the relevance and efficacy of gamified learning interventions.

Appendix

Sensitivity Analysis: Leave-One-Out Meta-Analysis Summary.

| Omitted study | Effect size | 95% CI lower | 95% CI upper | p-value |
|---|---|---|---|---|
| 1 | 1.154 | 0.415 | 1.893 | .002 |
| 2 | 1.129 | 0.388 | 1.870 | .003 |
| 3 | 1.160 | 0.421 | 1.899 | .002 |
| 4 | 0.939 | 0.312 | 1.565 | .003 |
| 5 | 1.159 | 0.420 | 1.898 | .002 |
| 6 | 1.171 | 0.435 | 1.907 | .002 |
| 7 | 1.175 | 0.440 | 1.909 | .002 |
| 8 | 1.175 | 0.440 | 1.910 | .002 |
| 9 | 1.154 | 0.414 | 1.893 | .002 |
| 10 | 1.170 | 0.434 | 1.906 | .002 |
| 11 | 1.163 | 0.425 | 1.901 | .002 |
| 12 | 1.150 | 0.409 | 1.890 | .002 |
| 13 | 1.132 | 0.390 | 1.874 | .003 |
| 14 | 1.084 | 0.350 | 1.818 | .004 |
| 15 | 1.143 | 0.403 | 1.883 | .002 |
| 16 | 1.149 | 0.409 | 1.890 | .002 |
| 17 | 0.841 | 0.354 | 1.328 | .001 |
| 18 | 1.174 | 0.439 | 1.910 | .002 |
| 19 | 0.984 | 0.314 | 1.654 | .004 |
| 20 | 1.178 | 0.445 | 1.912 | .002 |
| 21 | 1.165 | 0.427 | 1.903 | .002 |
| 22 | 1.178 | 0.445 | 1.912 | .002 |
| Theta | 1.124 | 0.421 | 1.827 | .002 |

Note. Model: Random-effects, Method: REML, number of studies = 22.
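As a reading aid for the table, the sketch below shows how the standard error, z statistic, and two-sided p-value of a row can be recovered from its estimate and confidence limits. It assumes the intervals are normal-based (Wald) intervals, which is an assumption on our part and not something stated in the table note. The function name `p_from_ci` is hypothetical. The example uses the Theta row (1.124, [0.421, 1.827]).

```python
import math

def p_from_ci(estimate, ci_low, ci_high, z_crit=1.959964):
    """Recover (se, z, two-sided p) from an estimate and its 95% CI.

    Hypothetical helper; assumes a symmetric normal-based interval.
    """
    se = (ci_high - ci_low) / (2 * z_crit)
    z = estimate / se
    # Two-sided normal tail probability via the complementary error function
    p = math.erfc(abs(z) / math.sqrt(2))
    return se, z, p

# Theta row of the leave-one-out summary table
se, z, p = p_from_ci(1.124, 0.421, 1.827)
print(f"SE = {se:.3f}, z = {z:.2f}, p = {p:.3f}")
```

Under this assumption, the recovered p-value for the Theta row rounds to .002, in agreement with the value listed in the table.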

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

Data sharing not applicable to this article as no datasets were generated or analysed during the current study.