Abstract

Learning analytics is pivotal for supporting learning, with recent research shifting from concepts to reviews. However, understanding factors influencing learning outcomes in higher education through analytics systems remains underexplored. This study aims to fill this gap, providing a holistic view of factors affecting learning outcomes in higher education through a systematic literature review. The goal is to unravel the intricate connections among factors, learning environments, research scopes, and theory. The search yielded 1,662 results, with 27 studies selected. “Engagement” emerged as the most prevalent factor, followed by “platform behavior,” while “demographic features” and “self-assessment” were least prevalent. Most research focused on learning outcomes as final grades, lacking a consistent theoretical definition. Studies were mainly conducted in virtual and blended learning environments, spanning multiple courses or focusing on a single course. Despite extensive research on learning behavior and engagement in learning management systems, prevalence does not necessarily indicate a comprehensive understanding of factors influencing learning outcomes. This implies a potential gap in information gathering or analysis for deeper insights. Moreover, the lack of a consistent theoretical framework and the restriction of analyses to final or partial grades in virtual or blended learning environments suggest areas for improvement in learning analytics research. The study proposes directions for enhancing research in this field to unlock its full potential.

Introduction

Learning Analytics (LA) measures, collects, analyzes, and reports data about learners and their contexts. Since 2012, researchers have highlighted the potential impact of LA in existing models of education, in particular within higher education institutions (HEIs), that generate significant insights into the learning process (Siemens, 2012). LA aims to aid instructors and academic staff in the understanding and optimization of the learning environments to make final decisions in the educational field (Siemens & Baker, 2012). Some of the benefits of using LA are student advising, performance progress monitoring, supervision and feedback, intelligent tutoring systems, institution policymaking (Namoun & Alshanqiti, 2021; Qiu et al., 2022), and fostering a deeper understanding and support for student learning (Viberg et al., 2018). These systems also have the potential to assist practitioners in making pedagogically informed decisions related to teaching contexts as well as reflecting on valuable information to collect in learning management systems (LMS; Lockyer et al., 2013).

Recently, the field of LA has transitioned from conceptual papers to review articles (Blumenstein, 2020). Nevertheless, the majority of these Systematic Literature Reviews (SLRs) are mainly aimed at researchers rather than practitioners (García-Peñalvo et al., 2022), and there are many areas associated with learning outcomes (LO) in the LA research field that still need to be fully explored (Dawson et al., 2019). While some studies identify predictors of LO, many neglect indicators related to learning or curriculum, and current research predominantly concentrates on students’ historical performance or demographic data. This oversight highlights the importance of delving into factors influencing LO, specifically those intrinsic to the learning experience and curriculum design (Qiu et al., 2022). Identifying these factors and the contexts where they have been collected could significantly contribute to designing robust systems that support various aspects within HEIs more efficiently, including learning and quality assurance processes. This becomes particularly crucial given the challenging and intricate nature of conducting effective research to evaluate LOs. Higher education institutions are increasingly interested in comprehending LOs, encompassing concerns about students’ skill competencies, continuous improvement, retention, dropout risk, and more (Goss, 2022). Therefore, gaining a deeper understanding of the factors associated with LO becomes essential.

To begin, establishing a common ground for defining LOs is crucial. LOs represent a collection of knowledge, skills, and attitudes expected of students upon graduation. Various authors define LOs as comprising disciplinary knowledge, professional aptitudes, and interpersonal skills (Crawley et al., 2014; Yorke, 2007). This understanding of LOs is coherent with the Bologna declaration, which pursues a uniform structure for higher education in European countries. The Bologna process significantly changed the pedagogical model of HEIs, transforming the learning process from its planning to its evaluation (Crawley et al., 2014). In so doing, programs are leaving behind the content-based approach in favor of a learning-centered model; a turning point for universities since they no longer evaluate content but learning outcomes (Agencia Nacional de Evaluación de la Calidad y Acreditación (ANECA), 2013; Crawley et al., 2014; Gonzáles & Wagenaar, 2003). In this context, the concept of learning outcomes extends beyond the simple assessment of knowledge to encompass a more intricate evaluation of skills (Gil-Jaurena & Kucina-Softic, 2016). While consensus exists in the definition of LOs in certain scientific statements like the European Qualifications Framework, accrediting agencies (International Engineering & Alliance, 2021), and the new educational paradigm of outcome-based-education (Kaliannan & Chandran, 2006) there is no standard way of framing them in literature (Deller et al., 2015). For example, LOs are also understood as the measurable level of academic achievement, that is, as the quantifiable result of a student’s effort on various evaluation learning activities (Blumenstein, 2020; Shi et al., 2020). This is how an abundant sample of papers treats learning outcomes and academic performance as synonyms.

Some research has identified factors associated with academic performance (Chaudhary & Singh, 2022); however, the findings are limited to sociodemographic information or teacher qualities. Few studies highlight how the curriculum or personal factors are associated with LOs (Khampirat, 2021). Some authors have conducted research to validate factors explaining academic performance, including personal and context variables (Yao et al., 2019), or incorporating personality traits, learning approaches, and how these elements relate to the student’s intention to continue their studies (Smyth et al., 2017). Li et al. (2022) reviewed empirical evidence regarding factors influencing student persistence and retention in STEM disciplines within higher education, and explored how these factors are measured and quantified in LA practices. The study identified several predictive variables, including student personal characteristics, enrollment features, prior academic performance, current academic performance, and behavioral and academic engagement. However, it is essential to note that the study was limited to STEM disciplines.

Recent SLRs have offered insights into predicting student learning outcomes in LA research. For example, Hu et al. (2017) conducted an SLR spanning from 2002 to 2016, in which they identified the types of features crucial for predicting student learning outcomes, as well as the prediction algorithms employed for this purpose. They also scrutinized the contexts, targets, and performances of these predictions, aiming to enable effective matching of prediction methodologies with specific circumstances. However, this research was confined to findings within the disciplines of Computer Science, Electrical Engineering, Education, Learning Information Systems, and Management.

They found that existing studies tended to predict course performance (successful/unsuccessful), course grades, and student retention/drop-out in online/blended learning contexts using data drawn from electronic sources regarding LOs.

Other SLRs have concentrated on the conceptualization of LOs, starting from the paradigm of outcome-based education (Namoun & Alshanqiti, 2021). Namoun and collaborators (Namoun & Alshanqiti, 2021) conducted a study to identify how student academic performance was measured through LO, explore the intelligent models and techniques devised to predict student academic performance using these outcomes, and investigate the predominant predictors of student performance inferred from LOs. Notably, this research did not specifically target HEIs but encompassed the broader educational field. The findings revealed a notable absence of a theoretical definition for “student outcome,” signifying a weakness within the LA research domain. Another observation highlighted by the authors was that most experimental datasets came from a single educational entity or isolated courses, and only a few studies modeled program-level outcomes. This limitation was previously identified by Dawson et al. (2019), who stated that most research developed on LA focused on independent courses and minor projects. Dawson et al. pointed out that continuing research using the same approach would not significantly impact education. Further research should replicate and evaluate studies to propose new theories of learning, adoption, or design of effective educational environments, guided by feedback derived from data.

García-Peñalvo et al. (2019) conducted an SLR to present an overview of the prevailing research activity concerning predictive analytics in higher education. They aimed to emphasize the primary purposes behind employing predictive algorithms and explore their implementation methodologies. One of the key findings of their study was that LA research is typically applied within constrained scenarios, often limited to a single course. This points to the difficulty of developing a predictive application capable of operating effectively across diverse teaching and learning methodologies.

Despite multiple attempts to analyze different dimensions of LA, such as identifying the effects of employing LA on LOs (Foster & Francis, 2020) or investigating factors influencing teaching and learning in broader educational settings (Chaudhary & Singh, 2022; Namoun & Alshanqiti, 2021; Viberg et al., 2018), there is still an unaddressed gap. No study has thoroughly examined the factors linked to LOs within LA systems specifically targeting HEIs. Such an investigation would encompass various critical aspects associated with developing and implementing these systems, including scope, learning environments, theoretical background, and the definition of LOs.

Dawson et al. (2019) emphasize the need for a systems-oriented approach to identify how LA can improve LOs in complex organizational systems, incorporating data sets beyond technology and including other design, cognitive, and emotional dimensions. To do so, comprehensive studies must analyze the diverse elements that interact in educational contexts when factors associated with LOs are identified.

Although LOs serve as a fundamental benchmark for assessing the efficacy of HEIs, empirical evidence remains scarce on the factors associated with LOs through LA systems and on the specific contexts in which these factors have been identified. The analysis of previous literature shows that in this research field, there is a predominant tendency to extract information from Learning Management Systems (LMS) or to rely on basic demographics and student grades (Blumenstein, 2020; Dawson et al., 2019). Nevertheless, this approach limits the scope of the studies to the “low-hanging fruit” (Dawson et al., 2014), where most of the data analyzed are those available in LMS. Dawson et al. (2019) suggest studying LA with a systemic and integrated approach to facilitate the development of targeted educational interventions and systems.

We aim to bridge this gap by offering comprehensive research coverage through an SLR to provide a holistic understanding of the factors associated with LOs measured through LA systems in HEIs. This study aims to elucidate the intricate interconnections among data, learning environments, research scopes, and theory. This endeavor aims to establish a robust framework, guiding decision-makers, developers, and educators in designing effective LA systems that enhance and support the learning process. Identifying the factors associated with LOs and understanding their contextual relevance should guide practitioners and researchers. This knowledge will assist in making informed decisions regarding which elements to collect in future systems and research endeavors, as well as identifying the emergence of trends and constraints that may influence the field’s future direction.

This paper presents the SLR methodology in Section 2, the results of the SLR regarding the content of the selected literature in Section 3, discussion in Section 4, and the study’s conclusions and limitations in Section 5.

Methodology

The primary purpose of an SLR is to add evidence to answer the research question and support the development of evidence-based practice in different study fields. Our study’s main purpose is to comprehensively understand the factors associated with LOs evaluated through LA systems in HEIs.

This section describes in detail the planning, research questions, inclusion and exclusion criteria, the query strings, and the review process according to the specifications of PRISMA (Kitchenham & Charters, 2007; Okoli, 2015; Page et al., 2021).

Review Planning

The protocol for developing the SLR following the PRISMA guidelines considers the definition of research objectives, the description of the eligibility criteria, the selection of information sources, the search strategy, the data management procedure, and other relevant information to make the review traceable. These aspects are described in the following sections.

Research Questions

The main research question is:

In addition, the following sub-questions were raised to have a comprehensive approach to the phenomena:

The scope of the study is defined through the PICOC framework as follows (Petticrew & Roberts, 2006):

Inclusion and Exclusion Criteria

The inclusion and exclusion criteria to answer the different research questions are the following:

Note: In this study, we included papers that explicitly used the terms “learning outcomes” and “learning analytics.”

Justification of the Data Source

The papers have full access and are published in peer-reviewed journals, books, and conferences indexed in SCOPUS and Web of Science. These databases were selected because they are multidisciplinary and have high-quality standards. An instrument was designed to check the quality of studies before their inclusion in the final literature review. This checklist aims to identify gaps to decide if each work’s contribution is interesting for the systematic review. The quality checklist is based on Kitchenham and Charters (2007). To do so, researchers adopt Fink’s suggestion (Fink, 2010), which is to select the items of a validated review checklist and the quality evaluation questions most appropriate to their specific research queries. The authors also considered the main topic areas and suggestions Greenhalgh (2014) and Petticrew and Roberts (2006) provide for evaluating quality.

Each checklist question was scored with one of four values: Fulfilled (8 points), Partially fulfilled (6 points), Barely fulfilled (4 points), and Not fulfilled (0 points). Hence, each paper can obtain a maximum of 48 points across the six criteria. Studies that reach 80% of the maximum score, corresponding to 38.4 points, are included. The items of the quality checklist are the following:

Are the objectives of the investigation clearly described?

Is the methodological design adequate for the proposed research problem?

If the study involves assessing a technology, is the technology clearly defined?

Are the data collection methods adequately described?

Are the statistical methods described?

Are all study questions answered?
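
The scoring rule above can be illustrated with a minimal sketch. The point values, the six-item checklist, and the 80% cut-off come from the text; the function names and the example ratings are hypothetical and are not taken from the authors' instrument.

```python
# Point values quoted in the text for each rating level.
RATING_POINTS = {
    "fulfilled": 8,
    "partially fulfilled": 6,
    "barely fulfilled": 4,
    "not fulfilled": 0,
}
N_ITEMS = 6  # six checklist questions
MAX_SCORE = N_ITEMS * max(RATING_POINTS.values())  # 6 * 8 = 48
THRESHOLD_PERCENT = 80


def quality_score(ratings):
    """Sum the points for one paper's six checklist ratings."""
    if len(ratings) != N_ITEMS:
        raise ValueError(f"expected {N_ITEMS} ratings, got {len(ratings)}")
    return sum(RATING_POINTS[r] for r in ratings)


def is_included(ratings):
    """True when the paper reaches 80% of the maximum score (38.4 of 48)."""
    # Integer comparison avoids floating-point rounding at the cut-off.
    return quality_score(ratings) * 100 >= THRESHOLD_PERCENT * MAX_SCORE


# Hypothetical paper: five items fulfilled, one not fulfilled.
example = ["fulfilled"] * 5 + ["not fulfilled"]
print(quality_score(example), is_included(example))
```

Because every point value is even, total scores are always even, so in practice the lowest total that clears the 38.4-point cut-off is 40.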

Query Strings

The search terms were derived from the PICOC method and connected by the AND and OR Boolean operators. The following query strings were produced:

– “Learning outcomes” (AND) “Factors (OR) Models” (AND) “Higher Education.”

– “Learning analytics” (AND) “learning outcomes” (AND) “higher education.”

– “Learning analytics” (AND) “learning outcomes” (AND) “models.”
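
As an illustration only, the three query strings can be composed programmatically. The sketch below assumes a generic Boolean syntax with quoted phrases and assumes that “Factors (OR) Models” denotes a parenthesized OR group; the exact field codes and syntax entered in SCOPUS and Web of Science are not reported here, and the helper names are hypothetical.

```python
def quote(term):
    """Wrap a search phrase in double quotes for exact-phrase matching."""
    return f'"{term}"'


def any_of(*terms):
    """Join phrases with OR inside parentheses."""
    return "(" + " OR ".join(quote(t) for t in terms) + ")"


def all_of(*parts):
    """Join already-formatted parts with AND."""
    return " AND ".join(parts)


QUERIES = [
    all_of(quote("learning outcomes"), any_of("factors", "models"),
           quote("higher education")),
    all_of(quote("learning analytics"), quote("learning outcomes"),
           quote("higher education")),
    all_of(quote("learning analytics"), quote("learning outcomes"),
           quote("models")),
]

for query in QUERIES:
    print(query)
```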

Review Process

The paper selection process comprised the following steps:

A spreadsheet was created in Google Drive to list all the papers found during the query search.

The title and abstract of the papers listed in the “First phase” sheet were analyzed, and the inclusion and exclusion criteria were applied. If the information in the title and abstract was not sufficient to include or exclude a paper, its entire content was read to decide.

All duplicated papers were marked in red in the “First phase” sheet.

All duplicated papers marked in red were deleted; the resulting list was copied to the “First phase without duplicate” sheet, maintaining the original list with duplicates.

All SLRs were excluded from the study.

The information of papers classified as “Second phase” is copied to a second sheet named “Second phase.” The papers in the second sheet are subject to the quality assessment checklist.

The papers that obtained 80% or more of the maximum score are selected for the final phase and copied onto the sheet named “Final papers.”

Then, the papers in the “Final papers” sheet are classified based on their content, considering the research questions.

To reduce selection bias, the search was carried out by the three reviewers, and the selected articles were rigorously reviewed against the inclusion criteria.
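
The duplicate-removal step was performed manually in a spreadsheet. A scripted equivalent might look like the following sketch, which assumes that duplicates can be detected by comparing normalized titles. The record fields and example titles are hypothetical, and the simple normalization shown would need extending for titles containing non-ASCII characters.

```python
import re


def normalize_title(title):
    """Lower-case a title and collapse punctuation and whitespace for matching."""
    return re.sub(r"[^a-z0-9]+", " ", title.lower()).strip()


def remove_duplicates(records):
    """Keep the first record per normalized title; return (unique, duplicates)."""
    seen = set()
    unique, duplicates = [], []
    for record in records:
        key = normalize_title(record["title"])
        if key in seen:
            duplicates.append(record)  # analogous to the rows marked in red
        else:
            seen.add(key)
            unique.append(record)
    return unique, duplicates


# Hypothetical "First phase" list with one paper retrieved from both databases.
first_phase = [
    {"title": "Example Study A", "source": "Scopus"},
    {"title": "Example study A.", "source": "Web of Science"},
    {"title": "Example Study B", "source": "Scopus"},
]
unique, duplicates = remove_duplicates(first_phase)
print(len(unique), len(duplicates))
```

Returning the duplicates separately mirrors the practice of keeping the original list alongside the de-duplicated one, so the selection remains traceable.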

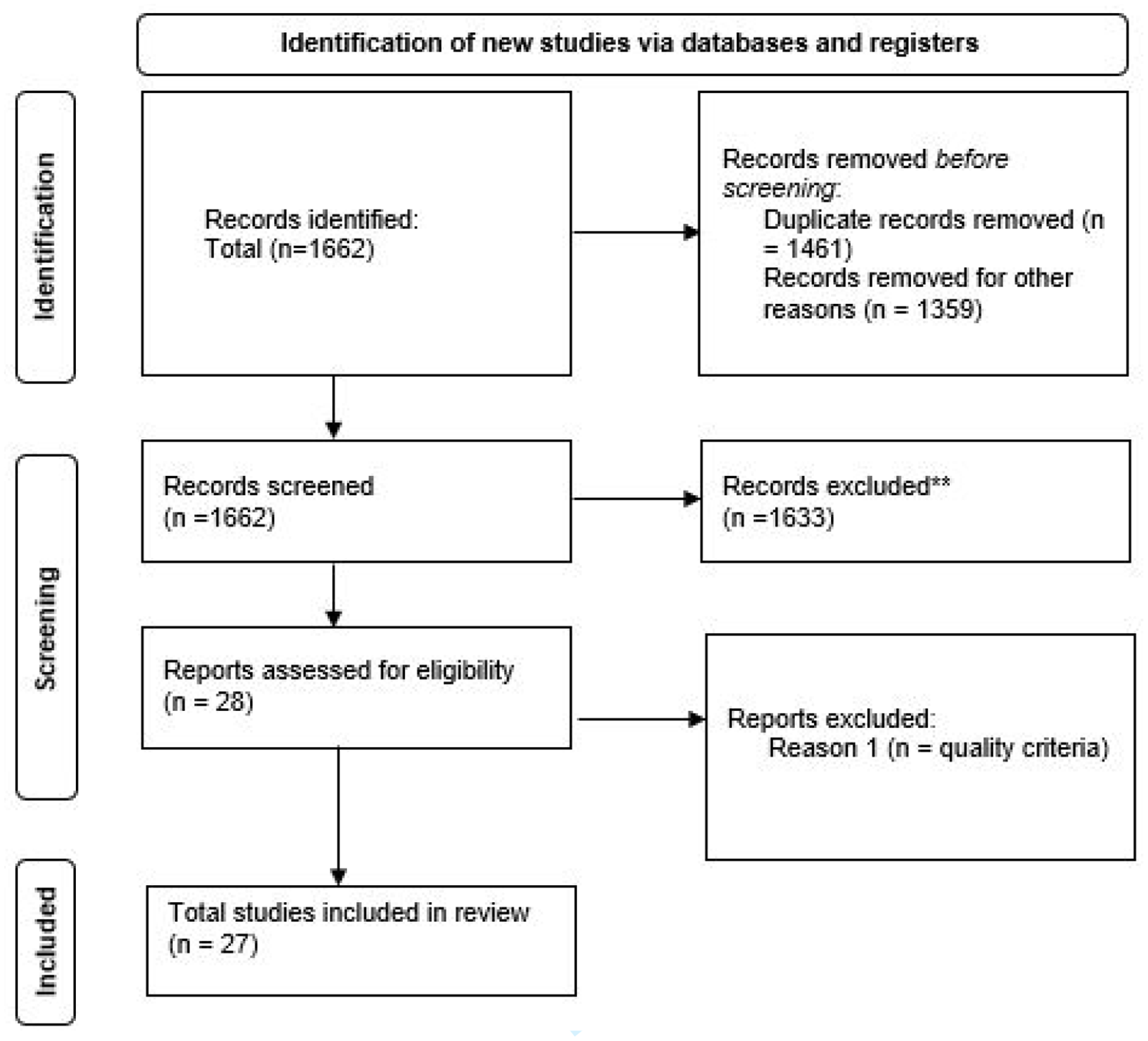

In Figure 1 we present the flowchart of the steps followed during this systematic literature review.

PRISMA 2020 flow diagram for updated systematic reviews which included searches of databases, registers, and other sources.

Results

We classified the information from the selected papers into various analysis themes to reach this objective. The themes were categorized as (1) “Learning Strategies,” encompassing specific learning activities like flipped classrooms, gamification, and worked examples (social interaction elements were excluded from this category); (2) “Demographic Features,” which involve participant information such as continent of origin, age, and gender; (3) “Engagement,” which focuses on student actions within the learning environment, including exercises, assignments, interaction with course materials, and watching instructional videos; (4) “Behavioral Connection,” which pertains to platform interaction behavior, measuring login frequency, the pace of usage, and navigation patterns; (5) “Feedback,” which involves automatic performance feedback from the LMS; (6) “Formative Assessment,” which includes task value scaffolding for task understanding; (7) “Previous Assessments,” which considers prior assessment activities and grades; (8) “Self-Assessment,” which involves learners evaluating the quantity and quality of their own learning; and (9) “Social Interaction,” which encompasses collaborative work, social engagement via smart learning environments, cognitive presence in forums, and active forum participation.

Of the articles included, 25 research studies reported factors associated with learning outcomes. However, some articles identified up to four different types of associated factors. These were categorized according to the previously described themes to obtain descriptive statistics. A total of 47 factors associated with achieving LOs were identified. The most prevalent factor was “engagement” (34.5%), followed by “platform behavior” (19.1%). The least prevalent factors were “demographic features” (2.1%) and “self-assessment” (2.1%; Table 1).

Categorization of Associated Factors to Learning Outcomes into Themes.

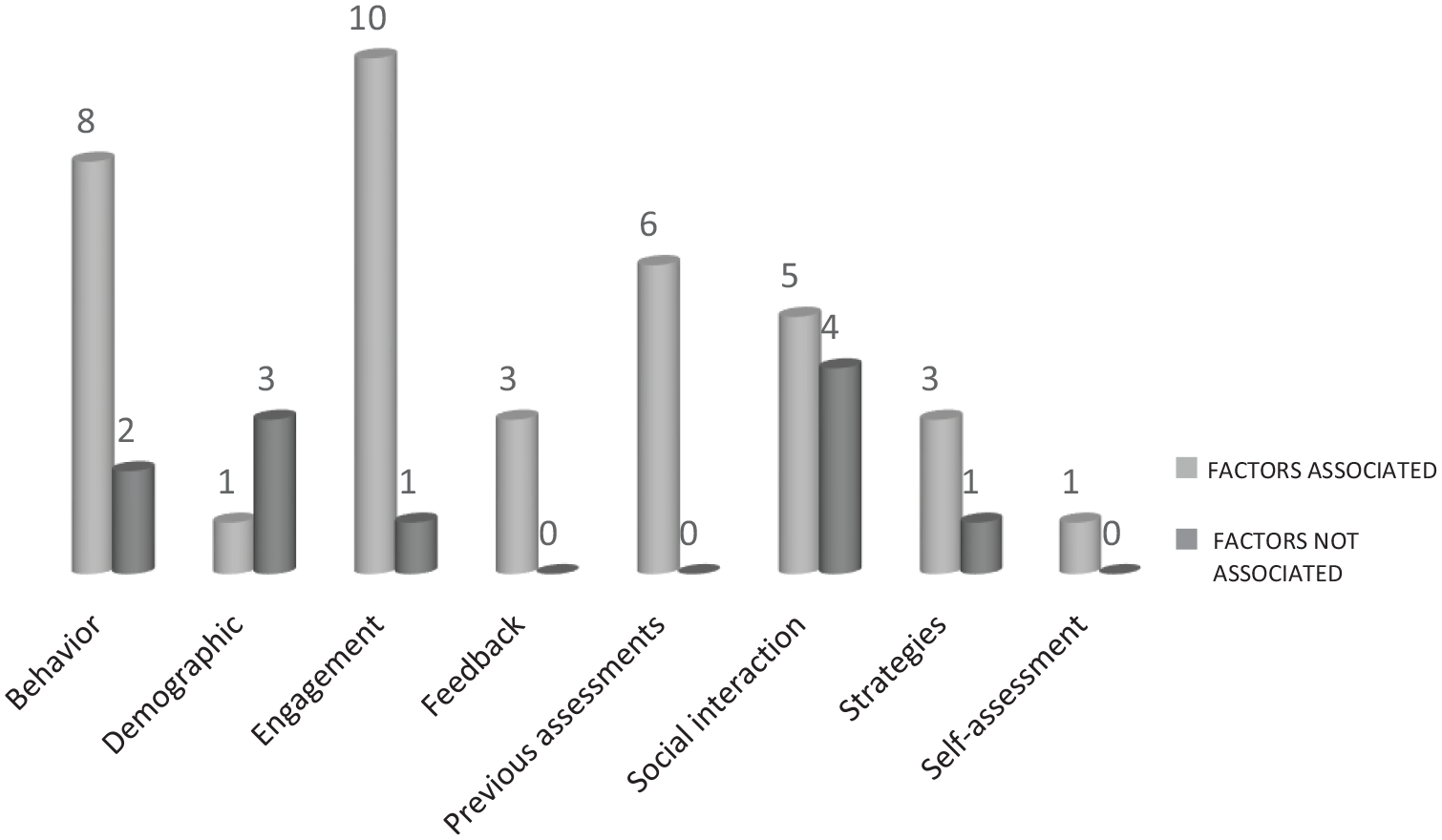

Only nine studies (33%) report “not associated” factors. Table 2 presents the number of studies that reported and that did not report factors associated with learning outcomes according to the analysis themes. Across all categories, the percentage of studies reporting factors associated with LOs exceeds the percentage of studies that did not, except for the sociodemographic information theme. Within this category, the continent of origin was identified as associated with LOs, whereas gender, age, and cultural background do not appear to be associated with LOs. In addition, 29.6% of the articles identified factors within the “behavior” category, followed by 22% of studies that identified factors related to the “previous assessment” category (Figure 2).

Categorization of Studies that do and do not Report Factors Associated with Learning Outcomes.

Categorization of studies that do and do not report factors associated with learning outcomes.

Additionally, a specific analysis was conducted on factors associated and not associated with LOs for each of the themes. Descriptors of these factors were listed to facilitate their comparison and analysis (Annex 2). This allowed us to identify the categories that yielded mixed results. Although studies identifying non-associated factors were fewer in percentage terms, the number of logins, time spent navigating the platform, watching videos in the LMS, participating in forums, and engaging in flipped classroom activities were each reported both as associated and as not associated with LOs.

The conceptualization of learning outcomes diverges across the studies examined. We observed that authors employ the term learning outcomes in diverse ways, such as equating them to final grades, overall or partial assessment scores, or GPA (Grade Point Average), distinguishing between successful and unsuccessful learners through nominal categorization, tracking learning gains throughout the course assessments, or treating them as a cumulative representation reflected in the total final grade (Figure 3).

Studies categorization according to how they measure learning outcomes.

According to our query, most research defines learning outcomes as final grades (51.8%). The second variable most used for measuring learning outcomes was the final exam (22.2%; Table 3).

Studies Categorization According to How They Measure Learning Outcomes.

Note. Nominal categorization refers to cases in which authors split the grades between successful versus failing learners or pass versus fail.

While analyzing the articles included, we delved into whether the concept of “learning outcomes” was operationalized from a theoretical perspective. Our findings revealed that out of the 27 articles incorporated, none employed a theoretical definition to delineate this concept.

Regarding the educational modality in which the learning analytics system was applied, we identified that 12 studies were conducted in virtual learning environments (44.4%), 10 studies in blended learning environments (37%), three studies in face-to-face learning environments (11.1%), and two studies did not specify this information (7.4%; Table 4). We created a category “not specified” for those cases where the paper does not mention the learning environment modality in which data were collected.

Educational Modality of Selected Studies Categorized.
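
The reported shares follow directly from the study counts. The sketch below recomputes them from the counts given in the text (27 studies in total); the variable and function names are hypothetical.

```python
TOTAL_STUDIES = 27

# Study counts per educational modality, as reported in the text.
MODALITY_COUNTS = {
    "virtual": 12,
    "blended": 10,
    "face-to-face": 3,
    "not specified": 2,
}


def shares(counts, total):
    """Return each category's share of the total as a percentage (one decimal)."""
    return {name: round(100 * n / total, 1) for name, n in counts.items()}


# Sanity check: the categories should cover every selected study exactly once.
assert sum(MODALITY_COUNTS.values()) == TOTAL_STUDIES

for name, pct in shares(MODALITY_COUNTS, TOTAL_STUDIES).items():
    print(f"{name}: {pct}%")
```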

Another crucial aspect of interest in this research involves determining whether the employed LA systems are rooted in a theoretical basis or an educational reference framework. To this end, we analyzed the theoretical frameworks used in each selected study. Out of the total number of papers, 11 studies (40.7%) explicitly reported a theoretical framework supporting the employed LA systems (for more details, see Annex 1).

Out of the total selected studies, only one conducted its analysis using a sample from one academic program (3.7%). Nine studies (33%) presented their findings based on samples encompassing multiple courses within the same institution, while 17 studies (62.9%) reported their data considering a single course within the participating institution. None of the studies included in this review used data across different university institutions. The categorization of articles based on their participants can be observed in Table 5.

Sample Scope of Selected Studies.

Discussion

Our main research question sought to identify what factors are associated with LOs in LA research. Among the selected papers, some articles highlighted up to four distinct types of factors associated with LOs. These factors were systematically categorized according to the themes described earlier to derive descriptive statistics. A total of 47 factors linked to LOs were recognized. The most frequently occurring factor was “engagement” (34.5%), followed by “platform behavior” (19.1%). Conversely, the least prevalent factors observed were “demographic features” (2.1%) and “self-assessment” (2.1%).

As evident from the data, both “behavior” and “engagement” emerged as the most prevalent factors. Increased involvement in the LMS, including active engagement in learning activities, the frequency of clicks, or the duration of connection time, is a promising predictor of academic success. These findings align with prior research in educational big data, particularly within the realm of e-learning, which consistently demonstrates the correlation between learning behavior on platforms and academic performance (De Silva et al., 2024; Qiu et al., 2022). However, these findings demand careful consideration due to the probable influence of the data extraction method on the prevalence of learning behavior across studies. A significant portion of LA data originates from LMS, making this information readily accessible and easily gathered. Consequently, it is discernible that learning behavior and engagement have emerged as extensively researched factors, with researchers actively developing models proficient in predicting LOs through the utilization of LMS data. However, asserting the supremacy of these factors over others in terms of their association with LOs would be premature, particularly given the nature of information processed by LA tools. Despite the invaluable utility of LMS data, these systems may overlook critical information that is not automatically collected (Chaudhary & Singh, 2022; Dawson et al., 2019; Qiu et al., 2022).

The third most studied variable related to LOs is previous assessment. Previous assessments appear to be important predictors of final grades. One explanation is that previous grades tend to reflect students’ consistency in academic achievement over time. Previous grades can also indicate a student’s level of commitment and motivation toward their studies. Students who have achieved high grades in the past often exhibit higher levels of commitment and motivation to continue achieving good results (Yağcı, 2022).

This literature review identified five studies indicating an association between LOs and social interaction. The studies in this SLR highlighted aspects linked to LO, such as mutual shared attention during learning activities, active participation in discussions that stimulated peer responses through significant contributions, engagement in collaborative work, and cognitive presence measured by the depth and complexity of comments within forums. These results are consistent with previous research, which has identified online collaborative learning activities as a significant predictor of LOs (Al-Samarraie & Saeed, 2018). It effectively improves academic performance and other cognitive processes (Alloway & Alloway, 2012; Al-Rahmi & Zeki, 2017). The common thread among all these factors within the social interaction theme associated with LOs is how students establish their cognitive presence during social learning activities. When examining studies that did not report an association between social interaction and LOs, it becomes evident that these articles solely assessed the number of forum posts. According to Chi and Wylie (2014) interactions are deemed beneficial only when they contribute to knowledge beyond the presented learning materials and other peers’ contributions, leading to meaningful replies and discussions. Hence, social interaction within the forum and instructional techniques encouraging peer-to-peer engagement through discussions and collaborative problem-solving substantially affect learning (Blumenstein, 2020; Joksimović et al., 2015).

Another factor associated with LOs is pedagogical strategies. Previous research has demonstrated the direct impact of pedagogical approaches on LOs (Khampirat, 2021), particularly active learning strategies. It is widely acknowledged in the literature that integrating real-life and authentic learning tasks effectively promotes cognitive skills and self-efficacy and enhances LOs (Blumenstein, 2020). Finally, sociodemographic factors are not widely explored in the literature. However, when studied, factors such as gender or age do not appear to be associated with LOs. To comprehensively understand the factors linked to LOs as identified in LA research, it is crucial to comprehend the characteristics and contexts of the studies in which these factors were gathered. Therefore, the outcomes of the additional research questions posed in this study are discussed next.

Our second research question sought to identify how LOs are understood in learning analytics systems. Our study found that the conceptualization of LOs varied among the papers studied. According to the definition adopted in this research, LOs are a complete set of knowledge, skills, and attitudes that students should possess once they leave the university (Caspersen et al., 2017; Crawley et al., 2014; Yorke, 2007). The evidence derived from the first research question corroborates that as many as five different definitions of LOs were in use. However, none of the studies included in this review evaluates LOs using the theoretical definition proposed by the literature. In fact, none of the included papers presents a theoretical definition of the concept. Most studies use “final grade” as the measure of LOs. Others use concepts such as GPA, learning gains, intermediate evaluations, and final exam score. This is consistent with the findings of Foster and Francis (2020) and Namoun and Alshanqiti (2021), who faced similar disparities in the definition of LOs in their research. The first observation is that the literature uses the concept of “learning outcomes” loosely, without adopting any formal definition in most cases, a fact that we consider a significant weakness when it comes to making valid statements about the evaluation of LOs. There are several well-recognized issues associated with using grades to assess LOs. The main concern is that these measures lack standardization, making comparisons between academic programs or institutions challenging, as final grades or GPA amalgamate diverse assessment types, ranging from very traditional educational formats to innovative educational contexts (Blumenstein, 2020; Yorke, 2007; Zhang et al., 2022).

The third question of this systematic review aimed to identify the educational modality (virtual, blended, or face-to-face) in which data on factors linked to LOs were gathered. We identified that most studies collected data from virtual learning environments. That was expected, considering that most studies used the data available in their LMS. However, despite the important implications of LMS data, some authors point out that these systems omit important information that is not automatically collected (Dawson et al., 2019). In that regard, there is little experience in collecting information related to the curriculum (Dennehy et al., 2023; Hilliger et al., 2020), even though curricular information can bring significant insights to an academic institution. For researchers to develop an LA tool that considers this type of information, they must conceptualize the curricular process and define how it can be collected to create more accurate LA tools (Wiley et al., 2020). Another case is social and psychological factors, which rely on self-reports or standardized tests and must be collected through a systematic process (Dawson et al., 2019). LA systems that include this information would enrich the models with relevant data for decision-making. In this sense, it is necessary to devise strategies to collect this kind of data without placing an excessive burden on the user, going beyond the information available in LMS. The findings from this research question are closely intertwined with those obtained for the principal research question of this study. The factors identified in LA systems might be influenced by the nature of the data and learning contexts that these systems collect.

As part of our investigation’s fourth research question, this systematic review assessed whether LA systems incorporated theoretical frameworks in their development, implementation, and analysis. Our findings revealed that merely 40% of the selected papers referenced theories associated with learning to underpin their systems, while the remainder did not. This highlights a noteworthy concern regarding the development of LA systems and emphasizes the importance of integrating the latest insights into learning mechanisms to ensure the effectiveness of these systems as functional tools for decision-making. Ifenthaler (2017) stresses the necessity of integrating theories and fundamental learning principles within LA to effectively transform acquired data into meaningful insights for the educational process. This underscores the crucial role of interdisciplinary collaborations in advancing this research domain. Additionally, Giannakos and Cukurova (2022) emphasize the significance of learning theories, as they provide a robust and scientifically grounded framework for interpreting observations and elucidating learning phenomena. According to these authors, without a solid theoretical foundation, research outcomes are relegated to mere compilations of data. Wong et al. (2019) conducted an SLR to explore the theories utilized in LA systems, and their findings align closely with ours. They pointed out that a good understanding of how learning occurs, how learning can be supported, and how student characteristics influence learning is needed if LA aims to understand and optimize learning. Furthermore, they argue that learning theories should be an integral aspect of the research narrative and that researchers should explain how the theory informed each decision in developing an LA tool. These observations are consistent with Guzmán-Valenzuela et al. (2021), who found that education and learning theories must be revised in LA research.
Similarly, Giannakos and Cukurova (2022) noted a need for more robust engagement with theory in their SLR. Their study revealed that theory is not consistently integrated or does not sufficiently contribute to theoretical knowledge within the LA field. Overall, they found a limited focus on theory within LA research. While researchers highlight the necessity of LA investigation grounded in learning theories (Valle et al., 2021), several SLRs (Motz et al., 2023; Schwendimann et al., 2017), including our own, have shown that this is not the case.

Future research in learning analytics must evaluate learning theories to formulate hypotheses, design research methods, and interpret data. This has significant potential for improving learning theories, considering the massive amount of data an LA system can process. At the same time, the availability of a vast amount of scientific data about learning can aid in improving LA systems. In this regard, Siemens (2013) claimed that learning is a social process that cannot be entirely reduced to algorithms. It is essential to clarify that this study did not categorize the learning theories mentioned in the selected papers because doing so was outside its primary purpose. However, for a better understanding of the impact of LA on learning and educational practice, future research must integrate learning and educational theories to guide LA work through a holistic approach (Peña-Ayala, 2018). Incorporating theories from the learning sciences can broaden the scope of information integrated into LA systems. This integration could enhance predictive models, fostering a deeper understanding of how students and instructors engage with these systems. Additionally, it can facilitate the inclusion of cultural aspects to improve usability and provide insights into how students can optimize their learning outcomes through the effective use of LA systems (Vuong, 2023). Such integration could benefit both theoretical comprehension and practical applications, ultimately contributing to the enhancement of teaching and learning processes.

The fifth research question sought to identify the predominant scopes (institutions, academic programs, courses, single course) in which the selected studies collected their data. Out of all the studies analyzed, just one gathered data across an academic career. Most investigations (62.9%) obtained data from individual courses, while 33% collected data from multiple courses within the same university. None of the studies incorporated data from multiple universities. Our results are consistent with Dawson et al. (2019) and Liz-Domínguez et al. (2019), who claimed that there is a predominance of small-scale exploratory studies and a deficit of evaluative studies that attempt to verify findings and provide explicit feedback to revise, refine, or develop new theories and models. It is therefore possible to consider that the development of studies in LA is still in its infancy. More studies are needed that involve multiple institutions and consider other essential elements of the teaching/learning process, not only those available by default in LMS. In addition, a clear limitation identified in the literature is the sampling of datasets, which is often constrained by their practical applications in real-world settings. The small sample sizes and contextual limitations in data collection hinder the generalizability of findings (Sghir et al., 2023). Furthermore, leveraging datasets from various disciplines to validate predictive models is pivotal for drawing meaningful conclusions, especially considering that disciplines and fields are recognized for their distinct signature pedagogies (Wilson et al., 2017).

Lonn and Koester (2019) highlight the need for LA researchers to develop their own tools to obtain the data required to improve the educational process. Their case study considered the needs of stakeholders and different office members in order to attend to institutional needs, including academic, demographic, and curricular information. In this sense, the next step in developing LA tools is to build systems that respond to the academic community’s needs (Wiley et al., 2020). This limitation regarding the kind of data traditionally collected in LA systems converges with the theoretical gap in LA studies. Previous investigations conceive the learning process as a dynamic social experience in which students’ individual approaches to learning are essential. Other studies have identified that context and individual variables interact in predicting academic performance (Ornelas & Ordonez, 2017). Thus, both academic and non-academic factors significantly influence academic achievement. However, this theoretical background has not been evident in our SLR.

Conclusions

Our study’s main purpose was to comprehensively understand the factors associated with LOs evaluated through LA systems in HEIs. Our review found several challenges and unexplored areas in the existing LO prediction models studied through LA tools. This systematic literature review analyzed LA from an educational perspective, meaning that our focus was not on technical issues.

Identifying LOs predictors or associated factors effectively ensures quality in learning processes (Qiu et al., 2022). Predictive models can be developed using machine learning techniques based on these factors. This approach enables developers and practitioners to incorporate valuable information, thereby facilitating the provision of timely attention in scenarios involving dropout risks, accreditation processes, feedback, intelligent feedback systems, and various other applications.

The most extensively researched factors revolve around learning behavior and engagement within LMS. However, these findings might be rooted in a common underlying phenomenon: LMS enable the automatic collection of students’ usage behavior, which is readily available to researchers. This, however, does not imply that these factors are more strongly associated with achieving LOs. Instead, it suggests that other valuable information may not be consistently gathered or analyzed for deeper insights. We stress the importance of interpreting these results cautiously, considering the context in which the studies included in the SLR collected their data. Factors such as the learning environment, scope, and theories underpinning LA systems enable a more comprehensive understanding of the phenomena.

Our study also identifies the absence of a standardized concept of LOs within the field of LA. Presently, LA research tends to examine LOs without a consistent theoretical framework, often restricting the analysis solely to final or partial grades. To advance this field, future investigations must integrate theoretical insights from disciplines such as education, psychology, or cognitive science to underpin and enhance LA research. In addition, we recommend that future research formalize a clear definition of the variable “learning outcomes” before developing predictive models that measure its attainment.

Moreover, most of the included studies conducted their research within virtual and blended learning environments. This outcome is unsurprising, given that a substantial portion of information in LA systems is automatically sourced from LMS. However, this situation indicates a constraint: many LA systems lack essential information regarding the learning process, such as curricular information, psychological data, or even students’ demographic information. Hence, the subsequent phase in LA research involves devising strategies to gather data without imposing excessive burdens on users. This step aims to go beyond the data automatically collected within LMS by integrating various educational processes within the institution. The objective is to obtain the most valuable and comprehensive data possible. We recommend that LA systems be developed to explore other educational fields, considering the specific needs of users and attending to the major challenges that educational institutions face. In doing so, future researchers should consider the different stakeholders of educational systems through an interdisciplinary perspective, enriching the systems with valuable information for academics and decision-making (De Silva et al., 2024; Hilliger et al., 2024).

Regarding the theoretical foundations guiding the development and implementation of LA tools, we advocate for future investigations to incorporate contemporary learning theories as a fundamental aspect of their research. It is essential to elucidate how these theories informed each decision during the tool’s development (Vuong, 2023; Wiley et al., 2020). Simultaneously, LA presents an exceptional opportunity to enhance learning theories, given the extensive volume of data that an LA system can process. Future research in learning analytics must evaluate learning theories to formulate hypotheses, design research methods, and interpret data. Researchers must shift their scientific approach from exploratory analyses to evaluative research that studies the impact of LA actions at larger scales, considering even systemic transformations in institutions.

Since 2012, Siemens has emphasized that data would play a crucial role in the future of education, highlighting learning analytics (LA) as a promising field for exploring models and factors associated with learning outcomes, as well as enhancing learning experiences. However, this field remains in its early stages, and significant interdisciplinary efforts are required to fully realize its potential. Based on our findings, we conclude that future research in LA should focus on addressing the needs of stakeholders and designing systems grounded in educational objectives, rather than solely on the available information. This approach will better promote actionable insights from the data.

Limitations

This SLR may involve specific threats to validity (Neiva et al., 2016). One of those threats is author bias while assessing primary sources. To mitigate this bias, the authors defined the research protocol using PRISMA guidelines (Page et al., 2021), which included inclusion and exclusion criteria, a quality checklist, and a peer review of sources. In addition, the researchers made the data publicly available. Another limitation of this study was access to primary sources: the full text could not be obtained in all cases. Thus, this study cannot guarantee that all the relevant works in this field were retrieved. Finally, we must consider publication bias, the tendency for studies with positive or statistically significant results to be published more frequently, leading to an incomplete representation of all available evidence. Relatedly, the low number of negative results published within the chosen articles may have influenced the outcomes of our review. This notably restricts the applicability of the implications and recommendations derived from our study.

Supplemental Material

Supplemental material, sj-docx-1-sgo-10.1177_21582440251347374 for Learning Outcomes Evaluation Through Learning Analytics Systems in Higher Education: A Systematic Literature Review by Mónica Hernández-Campos, Antonio Gonzalez-Torres and Francisco José García-Peñalvo in SAGE Open

Footnotes

Acknowledgements

We thank Professor Yuen Law, PhD, for reviewing the manuscript. We also acknowledge the valuable support of the assistants Francini Mata and Calen Sibaja in the search.

Ethical Considerations

Not applicable.

Author Contributions

Mónica Hernández-Campos, Antonio Gonzalez-Torres, and Francisco García-Peñalvo analyzed the papers included in the systematic literature review. Mónica Hernández-Campos wrote the manuscript, and Antonio Gonzalez-Torres and Francisco García-Peñalvo provided guidance and suggested key revisions. The three authors read and approved the final manuscript.

Funding

This research was supported by Universidad de Salamanca and Instituto Tecnológico de Costa Rica.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

The dataset(s) supporting the conclusions of this article is(are) available in the Zenodo repository (Hernández-Campos et al., 2024).

References
