Abstract

The use of game elements as a means to stimulate students’ motivation has increased in a number of disciplines across the world after 2010, but apart from a few exceptional cases, it has not been possible to show a significant effect on academic achievement. In this study, the effects of game elements on academic achievement have been investigated among 458 students in a teacher education program, distributed across 10 course instances over 11 years (2010–2020). Statistical analyses indicated that a game element consisting of a number of hand-in assignments contributed significantly to the total points of the final written exam. Although a comparison with the students’ results in the two previous modules showed that two thirds of the positive effect could be explained by selection, the remaining one third of the positive effect could still be explained in terms of the game elements significantly improving the achievements on the final written exam.

Introduction

Stimulating motivation in students is a perennial challenge for teachers. The present study examines how a form of examination comprising two optional but equal routes to a passing grade has affected academic achievement in a teacher training course in history at a Swedish university over several years. The alternative to the traditional written exam at the end of the final module has been the opportunity to submit individual hand-in assignments throughout the course and receive points for them that have later been added to the sum total of points obtained for the written exam. The purpose of using these hand-in assignments has been to retain the course requirements while on the one hand offering those students who want it a gentler lead-up to the written exam situation, and on the other hand encouraging students to prepare for the written exam through individual challenges that are similar to the exam-specific preparation and involve some time pressure. Thus, the opportunity to collect points individually has entailed the use of certain game elements in an activity which is not in itself defined as a game, a phenomenon labeled gamification in international research (Brigham, 2015).

The use of game elements in higher education has increased, especially after 2010, but the usefulness of this approach has been a matter of contention (Brigham, 2015; Holmes & Gee, 2016; Mora et al., 2017; Subhash & Cudney, 2018). The emergence of gamification in this context derives from two global but separate processes of change. The first one concerns the recruitment of students. For a couple of decades around the turn of the millennium, higher education underwent the greatest shift since the empirical revolution of the 19th century. The share of each age group that started studying at university increased rapidly from 15% to 40% in some countries. Two consequences of this development, according to Biggs and Tang (2011) in a book on constructive alignment, were that the average ability, level of study experience, and level of ambition decreased, and that the rise in student numbers created competition among the new higher education institutions that were established at the time. The reason was that the development created incentives, in educational systems funded through fees, to accept large numbers of students (Biggs & Tang, 2011, pp. 3–7).

Even though Swedish university education is free of charge for domestic students and financed mainly by public funds, a similar development has taken place in Sweden as well since the size of the government grant for first- and second-cycle courses and study programs depends partly on student numbers and partly on the student completion rate (a bit simplified: the higher the numbers and the higher the completion rate, the higher the grant). However, the per-capita funding for each registered student based on his or her academic achievement varies greatly between different fields of study, and students in the humanities and the social sciences receive by far the smallest subsidy (Regeringen, 2021).

The other process of change is described by Brigham (2015). In 2015, persons born after 1980 came to make up a majority of the American labor force. An important shared trait of members of this group is that they grew up with mobile phones and Internet-based information technology, while older segments of the population encountered the new technology as adults. According to Brigham, 85% of all Americans aged 18 to 29 owned a smartphone that year. One of the consequences of the younger generations growing up with new technology is their increasing demand for interactive electronic games, which have developed in parallel with it. Gamified education does not in fact require digital technology, but its emergence has still been connected to the fast and cheap individual or group interaction that this technology affords (Brigham, 2015).

Although both described processes may seem to express an obvious need and an obvious possibility, it is important to be able to establish that the effects of the use of game elements in teaching are both significant and expedient. We believe that an intervention that only results in already high-achieving students performing even better is not sufficiently effective. The intervention needs to lead to better study performance among other students as well.

The aim of this study has been to examine to what extent gamification has contributed to academic performance in a course within a teacher education program at a Swedish university. As will be outlined below, the research has been conducted without the use of a control group, which means that it has been necessary to try to establish whether or not the game element led to significantly improved study performance for the student group as a whole. The questions that the study has attempted to answer are therefore the following:

How did the game element of the course influence student achievement in relation to the intended learning outcomes?

To what extent can the achievement of intended learning outcomes by means of game elements be explained by selection?

The theoretical foundation of the gamification of education is self-determination theory (SDT), a common theory used to explain and stimulate intrinsic motivation in a range of contexts. In an educational context, it aims to help students achieve learning goals by supporting the design of motivational teaching and examination activities that address three hypothesized psychological needs, namely: (1) a perceived sense of competence, (2) freedom of choice (autonomy), and (3) affinity (with teachers and other students). The fulfillment of these needs is considered to contribute to the internalization of external motivators. An example is teaching that replaces perceived demands to achieve learning outcomes in order to keep funding with study motivation related to achieving learning outcomes because they are seen as interesting and meaningful (Ryan & Deci, 2000). As Savolainen (2018) has characterized the theory, these satisfied needs “push” the desired behavior. However, since the study has not focused on the students’ subjective experiences of their work processes, these theoretical assumptions in themselves have not been empirically tested. In the concluding discussion, the empirical results are considered in the light of these assumptions only, with no other claim than to endow them with possible meaning.

Research Overview

The research design is based on the experiences that have been conveyed over the last decade in English-language research on the effects of games and game elements in higher education. The development of the research area until 2018 is briefly reviewed here through three previously published research summaries, and a few key references are then covered for the most relevant results of the last few years.

There are different forms of game elements in education. In a survey by Holmes and Gee (2016), game-based teaching and learning are discussed as a new and slowly increasing phenomenon starting a few years after the turn of the century, especially in an American context. The trend has assumed a range of different shapes, however, and has primarily been formed by individual teachers aiming to increase students’ motivation in primary and secondary school (K-12 settings). The corresponding development in higher education is described as similar. A lack of support from higher education institutions has, for better or worse, made it necessary for teachers interested in the issue to experiment with gamification elements on their own and based on their own ideas.

Holmes and Gee (2016) divided the presented game elements into a number of broadly defined categories, the most well-known of which is associated with simulated situations, used for a long time in military education.

It is, however, the category that Holmes and Gee (2016) call Game-inspired that is relevant here. It comprises game-inspired elements in otherwise traditional forms of education that are used to catch the students’ attention or guide their actions in a certain direction. An obvious and, it would seem, good example of this category is when a number of assignments are “unlocked” in a certain order as they are completed in order to reach a clearly defined goal. The primary gain of this educational design, compared to the alternative of presenting all assignments at the same time in connection with the course introduction, is that it supposedly helps students focus on the right things in the right order.

Holmes and Gee (2016) summarize the critique that has been levelled at gamified education and that must be taken seriously when new study programs are designed: The game elements may be irrelevant in relation to the intended learning outcomes, they may move the focus from those outcomes to the game elements themselves, or—worst of all—they may contribute to the camouflaging of poor teaching or an otherwise deficient course content. The key challenge for a continued successful development of gamified educational environments, the authors conclude, is therefore the ability of the proponents to counter skepticism with constructive solutions (Holmes & Gee, 2016).

In another detailed survey, Mora et al. (2017) establish that the overall experience of gamification in higher education has mainly resulted in limited positive effects or none at all, while the design and implementation of strategies have taken up a great deal of time. The authors carefully selected 27 out of 2,314 texts on gamification in higher education published in English between 2011 and 2015 (Mora et al., 2017).

The conclusion drawn by Mora et al. (2017) is that the frameworks for learning examined in the survey could be used in higher education and that the interdisciplinary frameworks could have a supplementary function, even though they are primarily based on the element of repetition. Notwithstanding this result, the authors draw a picture of a still immature range of motivation-enhancing frameworks, in relation to which issues of achievability, ethics, and the cost of investment (in terms of time and money) are not discussed enough. In almost all the examined texts there is an emphasis on clearly formulated goals as crucial for successful application, but despite these experiences it is concluded that a surprisingly large number of the examined frameworks intended for learning were made up of vaguely designed ad hoc solutions which would be difficult to replicate and apply in another study program and with another teacher (Mora et al., 2017).

In a third research overview of peer-reviewed scholarly articles written in English and published between 2012 and 2017, Subhash and Cudney (2018) examine a great number of other studies on the same subject. With few exceptions, this selection of 41 articles covers other studies than the ones analyzed by Mora et al. (2017).

The vast majority of the studies examined indicate significantly increased levels of motivation in courses that include game elements, for instance expressed through the participants’ choices of more challenging tasks, but few studies show significant effects on knowledge acquisition, and those that do are based on a relatively limited selection. Even though several studies have focused on test groups and control groups taught in parallel, the latter with a traditional approach without game elements, the authors point out that the distribution into two groups has not always been randomized. Moreover, in several cases the relevance of student performance related to game elements appears uncertain in relation to the intended learning outcomes, which can sometimes be explained as a result of vaguely formulated objectives, but sometimes indicates a weak connection between objectives and game elements. In some cases, the results show that the quality of goal attainment has been lacking entirely, even though participants have reported experiences of increased motivation. In a couple of cases, it was shown that the game elements had at least increased the amount of time spent on independent study, which can be described as an advantage, at least in the light of limited teaching resources. The authors summarize the results as follows: Course setups including game elements have great potential, but special emphasis must be placed on design and implementation (Subhash & Cudney, 2018).

The fact that a significant number of articles have been published over the last few years on the “Effects of Gamification in Higher Education” has not decisively altered our impression of a still somewhat immature research domain. In the search engine OneSearch, including data from over 20 databases, the search string “effects AND gamification AND higher AND education” yields hundreds of hits for studies published in English in peer-reviewed journals between 2018 and 2020. Even when excluding studies that have only examined students’ attitudes to game elements or the perceived value of them, the selection is substantial and spans all the inhabited continents of the world. Almost all the studies found concern the subject areas of mathematics, technology, computer science, psychology, economics, or medicine. However, the extremely condensed content of journal articles typically makes it difficult to form a general overview of course setups, the design of game elements, or how game elements have been applied in practice in educational settings. For this reason, studies focused exclusively on experienced motivation will not be further treated here, with a couple of exceptions. Chapman and Rich (2018) presented results from six instances of a course in “organizational behavior” in the US, where 124 students contributed self-evaluations of how 15 optional game elements available on the electronic course page affected their study motivation. Several elements were rated high on a five-point scale of how each one of them had affected study motivation, but one of them stood out. As many as 78% of the respondents said that “Points for assignments” influenced motivation “more” or “much more.” Only 40% to 50% of the respondents gave equivalent ratings to game elements like offering or receiving peer review, unlocking certain tasks through completing other tasks, or reporting the individual achievements of participants openly (Chapman & Rich, 2018).

The fact that the espousal of game elements in higher education does not vary, either in relation to gender or in relation to a personal interest in gaming, is highlighted in the conclusions of a questionnaire study by Davis et al. (2018). Out of 87 participants in an informatics course, 61% reported that they had learnt more thanks to a relatively extensive use of game elements. Support for game elements based on cooperation surpassed that for game elements based on competition. At the same time, 16% of respondents in the same group reported having experienced that the game elements did not influence learning at all, and as many as 23% even stated that learning had suffered from the inclusion of game elements (Davis et al., 2018). The authors do not say so explicitly, but a reasonable consequence of this outcome is that the use of game elements in a course must necessarily be—and be understood as—optional. It was probably not a great comfort to the minority that a majority of students were happy with the course setup.

The effects of game elements in higher education, specifically in relation to flipped classroom practices, were analyzed by Huang and Hew (2018). The results showed major and significant differences between test groups and control groups taking two courses in library and information science in a master’s program. Students in both test groups completed many more of the assigned tasks in between teaching sessions, and moreover did so with higher quality. The authors’ analysis of the result gives rise to five recommendations, all of which seem relevant in this context: (1) Explain all the conditions from the very beginning. Game elements that create confusion about what and how much is expected of the student will lead to frustration. (2) Connect game elements to the teaching and the intended learning outcomes, and emphasize the way in which they support learning. (3) Design game elements in such a way that they promote cooperation and mutual encouragement among students, rather than competition. (4) Differentiate the tasks related to game elements according to level of difficulty, and create several increasingly difficult tasks rather than only one large and challenging task. (5) Design game elements in such a way that all students are afforded the same chance to use them. For instance, avoid tasks that will be easier to complete with access to certain computer hardware or software (Huang & Hew, 2018).

The typical research design of empirical studies from the last few years is, as before, the quasi-experiment in regular courses, but it is more common than before to divide the student group in two, a test group and a control group, and to use diagnostic tests before, during, and after the course, sometimes combined with analysis of qualitative data from interviews with the students. The study by Huang and Hew (2018), discussed above, uses this particular design.

Finally, a number of studies published in 2020 have pinpointed the way in which the effect of game elements may drop over time within the same course.

In two instances of a flipped classroom course in computer science, the students were given the opportunity to reach a set number of points, either through exercises during and between teaching sessions, completed individually or in groups, or in combination with a number of game elements which involved digital emblems. While there was very high activity in the game elements initially, this changed rapidly during the first few weeks and then kept decreasing almost throughout the rest of the course (Corvalán et al., 2020). This type of rapidly increasing and then steadily decreasing commitment, identified as a consequence of what Tsay et al. (2020) call the Novelty Effect, that is, that a new pedagogical strategy in itself produces an initial interest which then dwindles, is described as a built-in limitation of all game elements. Tsay et al. (2020) tried to circumvent this effect in their own pedagogical project. After a certain novelty effect of the game elements was demonstrated during the first year of the study, this effect could be almost entirely eliminated the second year, partly because the game elements were revised or replaced in accordance with the students’ evaluation of their perceived relevance in relation to the course content, and partly because the teachers paid more attention to the game elements (Tsay et al., 2020). The study conducted by Tsay et al. (2020) did not include a discussion about selection either, however, despite the fact that recruitment to the two test groups was based on voluntary self-registration.

Lastly, important results have also been published by Sanchez et al. (2020), who investigated the effects of game elements among 473 students taking an introductory course in psychology for one semester, over three parallel course instances during two consecutive semesters. Through game elements, the test group was encouraged to make repeated attempts at completing a large number of quizzes on the course content, while the control group was not encouraged in that way. Sanchez et al. (2020) show that the use of game elements had a significantly positive effect specifically on the final course grades for medium- and high-performing students, as opposed to low-performing students, defined as students with study results at least one standard deviation below the average for the entire group. They explain the difference as either a result of the game elements enhancing the perceived self-efficacy of medium- and high-performing students, or a consequence of low-performing students being distracted rather than supported by the game elements. Based on the assumption that the students’ level of performance corresponds to their working memory capacity, the authors conclude that whichever of the two explanations is correct, game elements should be used carefully and with awareness that they may be beneficial for some groups of students and disadvantageous for others (Sanchez et al., 2020). The point is that the two alternative explanations are not mutually exclusive. A reasonable interpretation of this conclusion is (once again) that the use of game elements must be based on each individual student’s voluntary participation.

The conclusions of the studies reviewed above can be summarized as follows:

Game elements must be clearly connected to and relevant for the intended learning outcomes of the course or program, their educational function should be limited, and their use must not move focus away from the intended learning outcomes to the game elements themselves.

All game elements that are included in a study program should be presented no later than the introduction of the program.

Game elements must not be based on or create a sense of insecurity, or require the students to have access to certain kinds of expensive equipment.

The design of game elements should be justifiable in terms of other ethical and economic aspects.

Game elements promoting interaction or cooperation among students have generally been more highly rated by students compared to game elements intended to stimulate competition.

Game elements unrelated to examination seem to have had a very limited impact on academic achievement.

Game elements should not be used for a long period of time, and not in more than one course per semester.

A minority of students have experienced that the game elements are detrimental to learning. This fact, combined with results that indicate that low-performing students have not been able to take advantage of the game elements or have possibly even been distracted by them, shows that the use of game elements in education has to be based on voluntary participation on the part of the individual student as well as the possibility to discontinue use at any time during the course or program.

Although almost all the studies reviewed have used a quasi-experimental design, the analysis of results has not always touched upon the weaknesses of that design, for instance in relation to selection, and specifically the risk that the students who are already motivated and high-performing have chosen to use the game elements when use has been a matter of the course participants’ voluntary participation.

The present study has been designed to take into account these critical voices and the obvious weaknesses in previous studies. The design is described in the following sections.

Materials

The present study has focused on a teacher training course in the humanities, which has rarely been done in previous studies. Specifically, the course in question comprises 30 ECTS (European Credit Transfer and Accumulation System) credits, which is equivalent to 20 weeks of full-time study. It is an introductory first-cycle course in world history offered at 100% pace at a Swedish university each year between August and January. The course is typically taken as a part of the Secondary Education Teacher program (upper secondary school), either as a campus course or a distance course, but it can also be taken as a freestanding course. For many of the students, this is the first university course they take.

Content of the Course

The course is divided into four modules that cover four historical epochs and that are studied and examined one at a time, never in parallel. Module 1 (6 ECTS credits) covers the oldest period until approximately the year 800, while modules 2 and 3 (7.5 ECTS credits each) cover the Middle Ages and the early modern age, respectively. Examination in modules 2 and 3 is based on two individual written exams.

However, it is the fourth and final module that has been in focus for this study. It covers the history of the 20th century (9 ECTS credits, equivalent to 6 weeks of full-time study), and is examined using a written exam in late January. In addition to a few separately performed exercises in economic history, history education, and research methods which belong to the fourth module, this written exam examines some of the intended learning outcomes shared by all three versions of the course. Upon completion, the student should be able to describe the development of democracy and dictatorships during the 20th century, the causes and consequences of the world wars and the Cold War, and the emergence of the Swedish welfare state. The course literature related to the intended learning outcomes comprises around 500 pages in total, a bit more than half in English and the rest in Swedish.

The Focused Exam

The written exam in module 4 had the same design between 2010 and 2020. Out of a collection of around 50 study questions from the course literature, four questions were chosen for each of the exams from 2015 to 2020, and the answers were rated by the first author of the present article with 0 to 10 points each, including the possibility to award half points within this range (e.g., 5.5 points). Between 2010 and 2014, three of the questions were chosen in this way by the same first author, while the fourth question was formulated independently by another teacher, who also rated the answers 0 to 10 points as described above. The sum total of points decided the grade (Distinction, Pass, or Fail). The limit for a grade of Pass was 23.5 points (about 60% of the maximum) and the limit for a grade of Distinction was 35.5 points (about 90% of the maximum), no matter how the points were distributed. The level of difficulty for each individual study question can be characterized as moderate, at most. Although comparative questions have sometimes been used, most answers could be found in a limited and well-defined part of the course literature. The large number of questions still made the examination task seem challenging to most students, which is why they were encouraged to prepare for the written exam by discussing possible answers to the study questions in study groups of up to 10 course participants, which were created when the course started in August.

Without going further into detail here regarding the way in which the exam questions were selected or how the answers were rated, it can be noted that the total sum of points required for a grade of Pass was considered equivalent to the minimum level of demonstrated knowledge corresponding to the relevant intended learning outcomes.

Hand-in Assignments: Quizzes

Except for the course instance 2012/2013, all students during the period in question were offered a game element connected to the written exam, consisting of seven hand-in assignments, so-called quizzes. If passed, they generated points that were later added to the total sum of points obtained for answers to exam questions. Thus, the game element constituted an alternative route to reaching one of the grade levels, that is, the total sum of at least 23.5 or at least 35.5 points, respectively. Quizzes 1 to 6 generated one point each, while quiz 7 generated two points. All quizzes were presented when the module started in early December and could then be completed in any number and any order. All quizzes were assessed by the first author of the present article, usually within one workday after they were submitted. If an answer was not passed, it was possible to submit a revised version until the deadline, set for the day before the written exam. Each quiz could only be passed once. Since the minimum number of points required for a grade of Pass for the exam was 23.5 and the quizzes generated up to 8 points in total, about one third of the required points could be obtained before the written exam took place.

The design of the game element was based on the fact that there is a lack of international guidelines or consensus regarding how knowledge can be applied in the humanities. Moreover, it is a key aspect of both study and research in this group of subjects that the same source or material can be interpreted in very dissimilar ways by different readers or audiences. For all quizzes, therefore, the only purpose was to stimulate students to formulate independent, individual, and research-based arguments in relation to questions of varying difficulty which had been formulated by the teacher, the authors of the course literature, or the students themselves. The quizzes were not linked to the study questions or each other, but all of them were based to a greater or lesser extent on the course literature and increased in difficulty from number 1 to number 7. The content of the quizzes varied, as did the procedure for completing them. In preparation for the written exam 2014 to 2020, they had the following design: quizzes 1 to 2 were easy and called on students to write relatively brief answers to a few or all questions in a selection linked to certain more specialized sections in the main course book, which were not covered in the study questions. Quizzes 3 to 4 were moderately difficult and focused on reasoning about separate texts which treated the course content in more depth. In order to be able to complete them, the students had to read some of the course literature. Quiz 5 was a practical exercise related to early 20th century women’s fashion in the Western world, carried out individually or in groups and documented and discussed in text and images. Quizzes 6 to 7 were more advanced specialized tasks which placed higher demands on the students’ reading of the course literature. The answer to quiz 6 was presented orally in a video recording, and the answer to quiz 7 was submitted in writing. 
Interpreted in accordance with a revised version of Bloom’s taxonomy (Owen, 2016), quizzes 1 to 2 could be seen as linked to the cognitive process “to remember,” quiz 3 “to understand,” quiz 4 “to apply,” quizzes 5 to 6 “to analyze,” and quiz 7 “to evaluate” the course content. The design of the quizzes before the written exams 2010 to 2012 was not identical to the quiz design used over the next few years, but very similar, and with the same progression of difficulty. In all versions, the game element was designed based on SDT, in such a way that the relatively quick feedback and awarded points were assumed to strengthen the students’ sense of competence. The freedom to answer all, some, or none of the quizzes was intended to increase the feeling of autonomy, and the opportunity to prepare the answer to each quiz within the study group was expected to stimulate affinity with other students (Ryan & Deci, 2000).

To sum up: A score of at least 23.5 was required for a passing result on the examination task. Up to 40 points could be obtained by answering four exam questions, selected from a group of approximately 50 study questions known in advance. Up to 8 additional points could be obtained by answering up to seven quizzes before the exam. Based on these conditions, the work with study questions, individually or in study groups, formed an essential part of the exam preparations for all students. However, the game element offered an option to obtain some of the required points for a passing grade. The components of the game element varied in complexity but were limited in scope, left ample space for individual solutions, and, except for quiz 5, were based on the reading of relevant course literature. None of the quizzes were designed to create a sense of competition among the students. On the contrary, they required individual and independent achievements that were instead stimulated by interaction between students, especially in study groups.
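The scoring rules summarized above can be illustrated with a short Python sketch. Only the point values and grade limits are taken from the text; the function and variable names are illustrative assumptions and do not describe any tool actually used in the course.

```python
# Point values and grade limits as stated in the text; all names are hypothetical.
PASS_LIMIT = 23.5
DISTINCTION_LIMIT = 35.5
QUIZ_POINTS = {1: 1, 2: 1, 3: 1, 4: 1, 5: 1, 6: 1, 7: 2}  # quiz number -> points if passed


def quiz_bonus(passed_quizzes):
    """Sum the points for the passed quizzes; each quiz can only count once."""
    return sum(QUIZ_POINTS[q] for q in set(passed_quizzes))


def total_points(exam_scores, passed_quizzes=()):
    """Add quiz points to the points for the four exam answers (0 to 10 each)."""
    if len(exam_scores) != 4:
        raise ValueError("expected four exam answers")
    if any(not 0 <= s <= 10 for s in exam_scores):
        raise ValueError("each answer is rated 0 to 10 points")
    return sum(exam_scores) + quiz_bonus(passed_quizzes)


def grade(total):
    """Map a sum total of points to one of the three grades."""
    if total >= DISTINCTION_LIMIT:
        return "Distinction"
    if total >= PASS_LIMIT:
        return "Pass"
    return "Fail"
```

As a constructed example, a student with exam answers rated 5, 4.5, 4, and 4 points (17.5 in total) would fail on the exam alone, but would reach 25.5 points and a grade of Pass after passing all seven quizzes.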

The course content in the module has of course not stayed exactly the same over time. The design of study questions and quizzes has varied, parts of the course literature have been substituted, new editions of the same literature have replaced old editions, but the basic structure and assessment criteria of the examination task have been retained, which on the whole suggests that the research presented in the present study can be considered realizable and meaningful.

Methods

Since all students in each course instance have been offered the same options in preparation for the written exam in module 4, there is no control group available for comparison. In order to answer the research questions, the following data have been collected for the course instances 2009/2010 to 2011/2012 and 2013/2014 to 2019/2020 from the assessment sheets created when the exam results from each module have been registered: students’ names, gender, grades, and the sum total of points from the written exam in module 4, divided into the points obtained for exam questions and the points obtained for the game element (i.e., passed quizzes). In addition, the grades and points for written exams in modules 2 and 3 were collected.

In the next step, recurrent students from previous semesters were excluded from the data, leaving only students taking the exam in module 4 for the first time. Within this selection, the grades and points obtained in written exams in modules 2 and 3 have been kept only in those cases when these written exams were completed in the same course instance as the written exam in module 4.

Finally, after all the data listed above had been compiled for each course participant, the data were anonymized (Svanberg, 2023).

Since the quasi-experimental design of the study means that the analysis is not based on comparison with a control group, the outcome of the written exam in module 4 has been compared with the outcome of the written exam in the two previous modules within the same course instance.

Because the game element generated points that were added to the result of the final exam, there were obvious reasons for students to submit answers to quizzes. What we have investigated, however, is whether the game element also had a significant effect on the exam, and to what extent this effect can be explained by selection. Therefore, the analysis of academic achievements in module 4 is based solely on the total of points obtained for answers to exam questions, that is, excluding points from the game element (the quizzes), which means a sum total of between 0 and 40 points. This variable has been used as an outcome variable.

Analysis

The achievement variable has been used in the analysis both in its original continuous form and in a categorized version, more specifically: 0 to 23 points = Fail (coded 0); 23.5 to 35.0 points = Pass (coded 1), and 35.5 points or more = Distinction (coded 2). The continuous form of the achievement variable has been used for mean value comparisons and for linear regression analyses, and the categorical version of the variable has been applied in contingency table analyses, and in binary logistic regression analyses.
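The categorization of the achievement variable can be sketched as follows. This is a minimal illustration, not the SPSS syntax used in the study; the function name is hypothetical, and the code assumes that exam points are awarded in half-point steps, so that no score falls between 23 and 23.5 or between 35 and 35.5.

```python
def categorize_exam_points(points: float) -> int:
    """Map exam points (0 to 40, quiz points excluded) to a grade code.

    0 = Fail (0 to 23), 1 = Pass (23.5 to 35.0), 2 = Distinction (35.5 or more).
    Assumes half-point steps, so the band boundaries leave no gaps.
    """
    if not 0 <= points <= 40:
        raise ValueError("points must be between 0 and 40")
    if points < 23.5:
        return 0
    if points < 35.5:
        return 1
    return 2


def passed_or_distinction(points: float) -> int:
    """Binary outcome for the logistic regressions: 1 = Pass or Distinction, 0 = Fail."""
    return 1 if categorize_exam_points(points) >= 1 else 0
```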

The variable that describes the number of points obtained for quizzes, used as an independent variable, has been handled in a similar manner. A continuous variable was created from the sum of points that each student obtained by completing the quizzes. This variable too has been used in its original continuous form, but it has also been categorized and dichotomized in order to facilitate contingency table analyses as well as binary logistic regression analyses.

Thus, in the dichotomized version, students who have not obtained points from any quiz constitute one category (coded 0), while students who have obtained points from one or several quizzes constitute another (coded 1). In other analyses, the following categories have been used: No points obtained for any quiz (coded 0); 1 to 3 points obtained for quizzes (coded 1); 4 to 6 points obtained for quizzes (coded 2); 7 to 8 points obtained for quizzes (coded 3).
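The two codings of the quiz variable described above can be sketched as follows. The function names are hypothetical. The text does not say how fractional totals between the stated bands (for example 3.5 points) were handled; assigning them to the lower band is an assumption made only for this illustration.

```python
def dichotomize_quiz_points(points: float) -> int:
    """0 = no quiz points, 1 = points from one or several quizzes."""
    if not 0 <= points <= 8:
        raise ValueError("points must be between 0 and 8")
    return 1 if points > 0 else 0


def categorize_quiz_points(points: float) -> int:
    """Four-level coding: 0 = none, 1 = 1 to 3, 2 = 4 to 6, 3 = 7 to 8 points.

    ASSUMPTION: values between bands (e.g. 3.5 or 6.5) go to the lower band.
    """
    if not 0 <= points <= 8:
        raise ValueError("points must be between 0 and 8")
    if points == 0:
        return 0
    if points < 4:
        return 1
    if points < 7:
        return 2
    return 3
```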

These two variables have been analyzed using different statistical techniques. In an initial step, the average achievement in each year was analyzed by comparing the mean score on the continuous exam variable by year of investigation. In the linear regression analyses, the continuous number of points obtained for quizzes was entered as an independent variable and the points obtained in the exam as the dependent variable. In addition, these analyses took into account the effect of exam results in module 3 and in module 2, in different models.
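The logic of the nested linear regression models can be illustrated with a small, self-contained sketch. It does not reproduce the SPSS procedure or the study data: the `ols` helper is a hypothetical plain-Python least-squares routine, and the eight observations are synthetic, constructed so that the exam score depends on quiz points and on the earlier module results. The point is only to show how the quiz coefficient can shrink once earlier results are adjusted for.

```python
def ols(X, y):
    """Ordinary least squares via the normal equations.

    X is a list of predictor rows (without intercept); returns
    [intercept, b1, b2, ...]. Hypothetical helper for illustration only.
    """
    rows = [[1.0] + [float(v) for v in r] for r in X]  # prepend intercept column
    k = len(rows[0])
    # Augmented matrix [X'X | X'y]
    A = [
        [sum(r[i] * r[j] for r in rows) for j in range(k)]
        + [sum(r[i] * yi for r, yi in zip(rows, y))]
        for i in range(k)
    ]
    # Gauss-Jordan elimination with partial pivoting
    for c in range(k):
        p = max(range(c, k), key=lambda r: abs(A[r][c]))
        A[c], A[p] = A[p], A[c]
        for r in range(k):
            if r != c:
                f = A[r][c] / A[c][c]
                A[r] = [a - f * b for a, b in zip(A[r], A[c])]
    return [A[i][k] / A[i][i] for i in range(k)]


# SYNTHETIC data, not from the study: quiz points and earlier exam results.
quiz = [0, 0, 2, 3, 5, 6, 8, 8]
mod3 = [12, 18, 16, 22, 24, 30, 28, 36]
mod2 = [14, 20, 15, 25, 22, 28, 33, 30]
# Exam score in module 4 built from a known linear rule (quiz effect = 0.4).
exam4 = [2 + 0.4 * q + 0.5 * m3 + 0.2 * m2 for q, m3, m2 in zip(quiz, mod3, mod2)]

model_a = ols([[q] for q in quiz], exam4)                     # quiz only
model_b = ols([[q, m3] for q, m3 in zip(quiz, mod3)], exam4)  # + module 3
model_c = ols([list(t) for t in zip(quiz, mod3, mod2)], exam4)  # + module 2

# Index 1 holds the quiz coefficient in every model.
quiz_effects = {"A": model_a[1], "B": model_b[1], "C": model_c[1]}
```

In this synthetic example the unadjusted quiz coefficient (Model A) is larger than the fully adjusted one (Model C), because students with more quiz points also had higher earlier results.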

In addition, the categorized variables (exam results and points obtained for quizzes) were subjected to binary logistic regression analyses. Thus, the quiz variable was entered as the independent variable and the grade variable (Pass or Distinction versus Fail) as the dependent variable.
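For a single dichotomous predictor, the odds ratio estimated by a binary logistic regression equals the cross-product ratio of the corresponding 2 × 2 contingency table, so the quantity can be illustrated without fitting a model. The sketch below uses made-up counts, not the counts behind the tables in this study, and the function name is hypothetical.

```python
import math


def odds_ratio(exposed_success, exposed_failure,
               unexposed_success, unexposed_failure):
    """Cross-product odds ratio for a 2 x 2 table.

    'Exposed' here means having completed at least one quiz; 'success'
    means a grade of Pass or Distinction on the exam questions.
    """
    if min(exposed_failure, unexposed_success, unexposed_failure) <= 0:
        raise ValueError("cells used as divisors must be positive")
    odds_exposed = exposed_success / exposed_failure
    odds_unexposed = unexposed_success / unexposed_failure
    return odds_exposed / odds_unexposed


# HYPOTHETICAL counts chosen only to make the arithmetic easy to follow.
example_or = odds_ratio(200, 100, 40, 60)
# The logistic regression coefficient for the predictor is the log of the OR.
example_beta = math.log(example_or)
```

An odds ratio above 1 means that the odds of passing are higher among students who completed at least one quiz; it compares odds, not probabilities.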

The software IBM SPSS 26.0 has been used for this purpose.

Results

The selection from the course instances 2009/2010 to 2019/2020 includes 497 students, and 39 of them completed the written exam in 2013, when it was not possible to submit quizzes before the exam. Of the remaining 458 students from the other 10 course instances included here, 154 were women (33.6%). A t-test shows that the female students’ average points obtained for exam questions, 22.01, do not differ significantly from the male students’ average points, 22.42 (p = .642).

Table 1 shows an overview of the outcome of the first examination in each course instance in terms of points given for passed quizzes. It shows the result for a total of 497 unique individuals, 39 of whom completed the written exam in the course instance 2012/2013. It is evident in the table that many students have taken the opportunity to submit quizzes when it has been possible. Similarly, Table 2 shows the average points given for the exam questions in module 4, comparing different categories based on the number of points obtained for quizzes. Even though some cells include few individuals, and comparisons will therefore be very uncertain, it is possible to discern a general pattern. There is a clear difference in outcome between the group of students who submitted many quizzes and the group who submitted few or none. Students who did not complete any of the quizzes tended to obtain fewer points in the exam, while students who obtained 4 to 6 or 7 to 8 points from quizzes tended to obtain more points in the exam. In the written exam that took place in January 2012, the 16 students in the group that had obtained 7 to 8 points for quizzes received 26.0 points on average for the four exam questions, while the 8 students in the group that had obtained 0 to 4 points for quizzes received only 17.7 points on average for the same questions. This pattern recurred in most years of investigation, but was less apparent in 2015 and 2017.

Table 1. Number of Students and Outcome of Quizzes Submitted in a First-Cycle History Course in January of the Course Instances 2009/2010 to 2019/2020 (N = 497).

Table 2. Mean Exam Results, Excluding Points Obtained for Quizzes, With Students Grouped in Four Categories Related to Points Obtained for Quizzes Over the 10 Years Included in the Study 2009/2010 to 2019/2020 (N = 497).

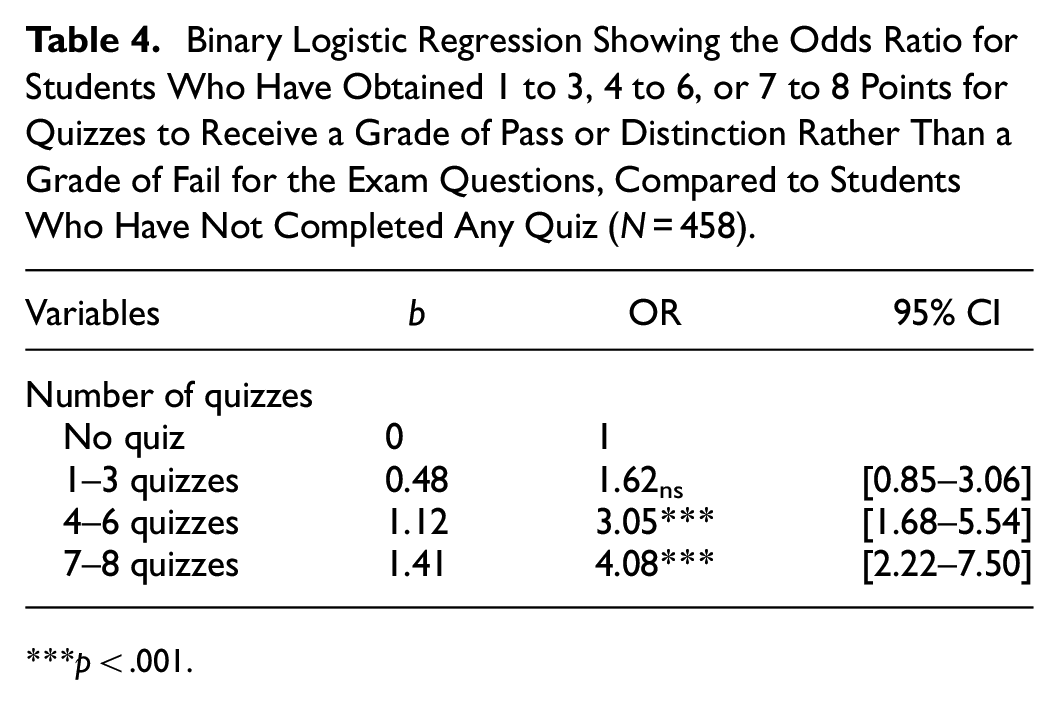

Table 3 shows the result of a binary logistic regression analysis of the relationship between the number of points obtained for quizzes and the exam results (grades). The odds of receiving a grade of Pass or Distinction rather than a grade of Fail (based exclusively on the points obtained for exam questions) are almost three times as high for students who have completed at least one quiz (OR = 2.84) as for those who have not completed any quiz. Similarly, Table 4 shows the result of a binary logistic regression analysis that confirms and clarifies that there is a positive relationship between the number of points obtained for quizzes and the exam results in module 4 (based on the sum total of points obtained for answers to exam questions). The more points a student obtains for quizzes, the greater the odds that the student receives a grade of Pass or Distinction rather than a grade of Fail for the examination in this module. For example, the odds of receiving a grade of Pass or Distinction are about four times as high for students who have obtained 7 to 8 points for quizzes (OR = 4.08) as for students who have not obtained any points for quizzes. The odds ratio then decreases gradually as the number of points obtained for quizzes drops.

Table 3. Binary Logistic Regression Showing the Odds Ratio for Students Who Have Completed at Least One Quiz to Receive a Grade of Pass or Distinction, Compared to Students Who Have Not Completed Any Quiz (N = 458).

p < .001.

Table 4. Binary Logistic Regression Showing the Odds Ratio for Students Who Have Obtained 1 to 3, 4 to 6, or 7 to 8 Points for Quizzes to Receive a Grade of Pass or Distinction Rather Than a Grade of Fail for the Exam Questions, Compared to Students Who Have Not Completed Any Quiz (N = 458).

p < .001.

Of the 458 students in the sample that has been analyzed, 280 completed the written exam in module 2 in the same course instance, 277 had completed the written exam in module 3, and 246 had completed both written exams, in addition to the written exam in module 4. Table 5 shows the relationship between the number of points obtained for quizzes and the results of exam questions in module 4 (Model A), adjusted for exam results in module 3 (Model B), and adjusted for the results in both module 3 and module 2 (Model C), using linear regression analysis. The analysis shows that there is a positive relationship between the number of points obtained for quizzes and the results of exam questions in module 4. For each point obtained for quizzes, the exam points in module 4 increase by 1.13 points on average. When the result in module 3 is included, the effect of the quizzes decreases, but remains statistically significant. This is also true when the exam results in both module 3 and module 2 are taken into consideration (Model C).

Table 5. Linear Regression Analysis of the Relationship Between the Number of Points for Quizzes and the Number of Points Obtained for the Written Exam in Module 4, Adjusted for Exam Results in Modules 3 and 2 (N = 246).

Note. Model A shows the bivariate relationship between points for quizzes and exam results in module 4; Model B: adjusted for exam results in module 3; Model C: adjusted for exam results in both module 3 and module 2.

*p < .05. **p < .01. ***p < .001.

Discussion

Unlike the majority of previous studies in the area, this study has focused on the use of game elements in relation to the quality of goal attainment and on how the outcome can be explained through selection. Even though the design of the game element has largely followed the recommendations formulated in the study by Huang and Hew (2018), the relatively small number of students on the course annually means that it took several years before the actual effects of gamification could be established.

The fact that the game element, consisting of seven quizzes, has encouraged many course participants to collect extra points before the final written exam has been evident from the very beginning, and corresponds to the results from Chapman and Rich (2018). However, to what extent have the quizzes supported the students’ ability to complete the actual written exam, and to what extent can the difference be explained by the fact that many course participants who were already high-performing students have chosen to complete the quizzes, as expressed in the two research questions? The answer can be summarized as follows:

The analysis shows that each point, out of eight possible from the game element (i.e., passed quizzes), has improved the result obtained for the answers to exam questions by 1.13 points on average, in a written exam with a maximum of 40 points.

Two thirds of this average improvement can be explained in terms of selection, since course participants who received a grade of Pass or Distinction for modules 2 and 3 in the same course instance also tended to collect points for quizzes in module 4. The remaining third of the difference can however be explained, with a high degree of certainty, as a motivation-enhancing effect of the quizzes themselves.

In spite of the fact that the game element touched on other parts of the course content than did the exam questions, it significantly contributed to improving the results from the exam questions. As pointed out above, the quizzes during all the years were designed so that they did not directly overlap with the content of the collection of study questions that formed the basis for the exam. This suggests that the demonstrated effect of the game element is an expression of an increased study motivation.
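The split between selection and the remaining effect summarized above follows from comparing the unadjusted quiz coefficient with the coefficient that remains after adjustment for earlier exam results. The sketch below shows the arithmetic. The unadjusted value of 1.13 is taken from the text; the adjusted value of 0.38 is a hypothetical figure back-calculated to match the stated one-third share and is not read from Table 5.

```python
def share_explained_by_adjustment(b_unadjusted: float, b_adjusted: float) -> float:
    """Proportion of the unadjusted coefficient that disappears after adjustment."""
    if b_unadjusted == 0:
        raise ValueError("unadjusted coefficient must be non-zero")
    return 1.0 - b_adjusted / b_unadjusted


B_MODEL_A = 1.13  # unadjusted effect per quiz point, as reported in the text
B_MODEL_C = 0.38  # HYPOTHETICAL adjusted effect, chosen to illustrate "one third"

share_selection = share_explained_by_adjustment(B_MODEL_A, B_MODEL_C)
share_remaining = 1.0 - share_selection
```

With these illustrative inputs, about two thirds of the unadjusted coefficient is attributed to selection and about one third remains.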

Although the significant effect of the game element on the quality of exam answers may seem limited, it is important to keep in mind that the points generated by the quizzes themselves, that is, all points in the second row in Table 1, have been added to the sum total of points given for answers to exam questions after the grading of the exams. When all these points are included, the effect of the quizzes on the academic achievement as a whole must be considered substantial. Since the rewards of the game element did not turn into an advantage until the very end of the module, it can reasonably be surmised that no part of its effect on academic achievement is due to the novelty effect described by Corvalán et al. (2020), Tsay et al. (2020), or Sanchez et al. (2020).

If the result is an expression of increased study motivation, to what extent can the empirical result be explained through the assumptions of SDT (Ryan & Deci, 2000)?

As initially emphasized, we can only speculate about this since the students’ perceived study motivation has not been examined. The design of the game element in any case allows for such an interpretation. The students’ freedom of choice throughout the study process clearly supports the assumption of the importance of perceived autonomy. The call to prepare the answers within the study group as well as the relatively quick feedback on submitted answers to the quizzes in any case provide some support for the significance of the assumptions about perceived competence and perceived affinity with others. If this is true, the game element could be interpreted as having “pushed” the students’ behavior in the direction of investing more time in reading and reflecting on the course literature in a way that made it easier to answer the exam questions (Ryan & Deci, 2000; Savolainen, 2018). However, the results show that SDT can explain only one third of the effect of the game element on the written exam.

Selection, which can thus explain two-thirds of the quizzes’ effect on the exam result, may at first appear to be a simple explanation. If study results are seen as expressing the level of motivation, the outcome can be interpreted simply as an indication that motivated students who entered module 4 more often chose to complete quizzes and consequently performed even better in the written exam than they would have otherwise. Students with low motivation were then not able to take on the quizzes as well, in addition to the study questions, to the same extent as the motivated students. If, however, we do not assume a causal relationship between motivation and achievement, a more nuanced interpretation of the results is necessary. Is it not possible for a high-performing student to be sick and tired of studying, and is it not possible that a highly motivated student may have difficulties in achieving good study results due to financial or social reasons?

If the result is considered in the light of interpretations of similar empirical results presented by Davis et al. (2018) and Sanchez et al. (2020), there is a possibility that the medium-performing and high-performing students, regardless of the level of study motivation, had their perceived self-efficacy boosted by the game element to a greater extent than the low-performing students, which in that case indirectly stimulated them when they worked with study questions. According to the same interpretation, moreover, low-performing students with a lower working memory capacity might have been distracted by the game element used in the module.

Even with this nuanced interpretation, the result still supports gamified education, but adds an important caveat in relation to previous research, namely that individual students should be encouraged to use the game element only if they feel that such elements increase study motivation, and that students should be explicitly advised to ignore them if they are experienced as a distraction or even a damper on motivation. Used correctly, game elements have positive consequences for the student group as a whole: More students reach the intended learning outcomes within the duration of the course, while fewer students have to fail and realize that everything must be done all over again. Fewer retakes also mean that the teacher can save time without having to lower the level of requirements.

Study Limitations and Future Research

There are several limitations related to this study worth consideration. For instance, the study is naturally bound to the course’s unique design; more, fewer, or more difficult quizzes might have given a different result. Similar remarks can be made on the syllabus, the literature, the study questions, the staffing and the schedule. Among the less obvious limitations that an international readership should be aware of are, however, a couple of cultural aspects, one of which was mentioned initially, namely that university education in Sweden is free. If the studies are interrupted, the possibilities to re-register on a course are unlimited as long as the course exists. The possibility of obtaining some form of employment for a period is good. For a long time now, the country has lacked housewives in the generations studying at university. Among almost all young couples, married or unmarried, with or without children, there are two income earners. Overall, this means that the threshold for either starting or dropping out of a course is likely to be lower than in some other cultural contexts. The extrinsic motivation to successfully complete an academic education is therefore likely to be lower in Sweden than in many other parts of the world, which is why a significantly higher percentage would likely have chosen to answer quizzes in those other cultural contexts. The effect of selection would thus also probably have been higher there.

To conclude, it can be stated that the application of the assessment strategy presented here seems like a prime example of the lonely and interested teacher’s solo project, which Holmes and Gee (2016) describe as the typical background of gamified higher education. The game element in the course has remained and been revised continuously by the first author of the present article, primarily based on gut feeling, on encouraging comments from students in submitted answers and oral and written course evaluations, and on personal impressions of how the different examination tasks have been understood. Despite these experiences, several questions within the field are still open for discussion, and the reflections of Mora et al. (2017) are especially worth considering. How does the game element relate to the rest of the course content? Is the time it takes to use it justifiable, both from a student perspective and from a teacher perspective? And to what extent does it prepare the students for continuing studies or for their own professional careers as teachers? Questions abound, but those are for future research to investigate.

Acknowledgements

The text has been translated from Swedish by Anna Linzie. The authors would like to thank Niklas Jakobsson, Johan Samuelsson, and Joakim Wendell for valuable comments on earlier drafts of this article.

Author Note

This research was conducted while Daniel Bergh was at the Department of Political, Historical, Religious, and Cultural Studies, Karlstad University, Karlstad, Sweden. He is now at the Department of Education and Special Education, University of Gothenburg, Gothenburg, Sweden and may be contacted at

Author Contributions

All authors contributed to the study conception and design. Material preparation and data collection were performed by Mikael Svanberg, and the analysis was performed by Daniel Bergh. The first draft of the manuscript was written by Mikael Svanberg and Daniel Bergh, and both authors commented on previous versions of the manuscript. Both authors read and approved the final manuscript.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.