Abstract

While previous research has shown how cross-disciplinary research informs impactful research publications and patents, much less is known about how such work alters the capacities and subsequent investigative behaviors of researchers themselves. We address this topic with an exploratory longitudinal case study of a mid-size cross-disciplinary team funded between 2010 and 2018. With data from 12 years of surveys and interviews, we show that the researchers perceived significant changes to their collaboration practices and gains in their subsequent scientific and cross-disciplinary institutional knowledge, including public and cross-disciplinary communication abilities. These results provide information for funders of cross-disciplinary research about the potential long-term impacts of such projects on team members. As cross-disciplinary teams become more prevalent to address critical problems, these results also indicate routes for further fruitful research to better understand how membership on such teams impacts individual team members.

Plain Language Summary

Through a longitudinal case study, we evaluate the development of interdisciplinary capacities of individuals working in a cross-disciplinary team. While analyses of many aspects of interdisciplinary teamwork have been published, there are relatively few longitudinal studies. Also, very few prior studies address the development of capacities within individual members of the team. Understanding the development of individual capacities is critically important for assessing the long-term impacts, beyond the grant period, of investments in cross-disciplinary team building.

Keywords

Introduction

Researchers form cross-disciplinary teams to address questions and problems that outstrip the resources and specialized knowledge of traditional academic disciplines or fields (K. L. Hall et al., 2018; National Research Council, 2004, 2015; Stokols et al., 2008). Such teams comprise researchers with different formal training, subject matter expertise, organizations, geographic locations, career stages, social identities and life experiences, etc. A common expectation is that the individuals in such groups will complement each other so that by doing their individually expert tasks they will be better able to address practical problems, uncover assumptions, bridge conceptual frameworks and methods, vet and iterate results, and ultimately produce the requisite knowledge that motivated the formation of the team (Vladova et al., 2025). In this way, cross-disciplinary teams provide a means of extending processes like organized skepticism beyond traditional social and epistemic divisions of intellectual labor (Longino, 1990; Merton, 1973).

There is ongoing research to understand how cross-disciplinary teams are successful or not (Fiore, 2008; National Research Council, 2015; Schölvinck et al., 2024). Bozeman et al. (2001) called for more studies about how new experiences engender capacities among researchers themselves, including cognitive skills, substantive scientific and technical knowledge, and contextual skills. Lee and Bozeman (2005) indicated that the manner and structure of collaboration may be key aspects for developing these skills and competencies. Nonetheless, it remains unclear how cross-disciplinary collaboration “alters and reshapes the works (and workers) that were originally joined” (Leahey, 2016, p. 92), partly given a lack of longitudinal analyses of teams (Edelenbos et al., 2017).

To address how cross-disciplinary projects influence the capacities of researchers taken as individuals, we report the results of an exploratory and descriptive longitudinal case study focused on a long-term project with a mid-size team. We studied how team members perceived the effects of team participation on their scientific knowledge, which we define as content and theoretical knowledge particular to a field, and on their institutional knowledge, which we define as knowledge about the components, histories, activities, and causal outcomes of science institutions (Hodgson, 2006; Ostrom, 2009) and organizations (March & Simon, 1993).

Briefly, our results indicate that as the project evolved, team members spent significantly more time on cross-fertilization activities, and that there are qualitative associations between that change and increases both in individuals’ perceived cross-disciplinary scientific knowledge and in their cross-disciplinary institutional knowledge and skills. Furthermore, the team members increased the amount of time they spent working on informal public education and engagement, and there is a qualitative association between that change and their perceptions of increased capacities to communicate with general publics and partners beyond higher education organizations. These results provide baseline expectations for funders and evaluators of cross-disciplinary research regarding the potential long-term impacts of such projects on team members, as well as testable claims for hypotheses-directed research and evaluations about the impacts of cross-disciplinary research on individual researchers.

Background

Many kinds of stakeholders want to know how to structure cross-disciplinary teams to increase the chances that particular teams will be successful. Individual researchers want this knowledge to improve their daily work, pursue their research agendas, and advance their careers, especially by improving communication with each other and community partners (Nancarrow et al., 2013; O′Rourke et al., 2013; Pohl & Hirsch Hadorn, 2007; Repko & Szostak, 2020; Thompson, 2009; Vladova et al., 2025). Potential community partners want this knowledge so they can productively work with academic and industry partners to ameliorate problems. Funders and academic and industry administrators want this knowledge so they can design their organizations and institutional rules to foster cross-disciplinary research when appropriate (James Jacob, 2015; Klein & Klein, 2010b; Lepori et al., 2023; Schölvinck et al., 2024). Researchers develop knowledge about cross-disciplinarity in an array of fields, including science of science (Fortunato et al., 2018; K. L. Hall et al., 2018; Jacobs & Frickel, 2009) and science policy and evaluation (e.g., National Research Council, 2015; Porter et al., 2008; Schölvinck et al., 2024).

Three general challenges confound a robust understanding of how cross-disciplinary teams succeed or fail (for a full review see Laursen et al., 2022 and Vladova et al., 2025). Each challenge constrains techniques for operationalizing theoretical constructs, measuring the effects of teams, and building increasingly general theories about which team factors are likely to foster productive outcomes. First, there is substantial variation in meanings for cross-disciplinarity (Huutoniemi et al., 2010; Klein & Klein, 2010a; Sugimoto & Weingart, 2015), raising concerns that the phenomenon is over-theorized and under-operationalized, which forestalls the understanding and evaluation of cross-disciplinary projects (Newman, 2024). There can be important differences in the design, execution, and evaluation of, for instance, multi-disciplinary teams compared to trans-disciplinary teams (Klein & Klein, 2010a, 2010b).

Second, teams vary substantially in organizational structures, ranging from ad-hoc and loose associations to formal teams with specified roles and lines of communication (Evaluation Associates, 1999; Leahey, 2016; Schölvinck et al., 2024). They can be ephemeral, like working groups established to address a specific topic, or they can function over years or even decades (e.g., Long-Term Ecological Research Networks, Redman et al., 2004) spanning departments, schools, institutions, and nations (K. L. Hall et al., 2012; Leahey, 2016). Until results have been validated across structures, we cannot conclude that the features that enable the success of loosely organized long-term communities of practice, for example, will be the same as those that work for short-term formal project teams. Furthermore, common cultural divides in research between natural and social science, or between quantitative and qualitative approaches (Pfirman & Laubichler, 2023), can lead teams to confront challenges such as hostile competition for explanatory superiority (Lélé & Norgaard, 2005; MacMynowski, 2007), disciplinary defaulting (J. T. Klein, 2005), or disciplinary bullying (Leslie et al., 2015).

Third, many investigators have focused on published knowledge such as patents and peer-reviewed journal articles, the most analyzed output of cross-disciplinary teams (e.g., Berkes et al., 2024; Lungeanu et al., 2014; Okamura, 2019). Researchers partly focus on those products because they exist in large and accessible databases with good metadata that enable computational studies. In these studies, multi-authored products are often treated as outputs of collaborative teams, and by proxy cross-disciplinary teams (Fiore, 2008; Leahey, 2016). Funders and universities increasingly use metrics like number of products or citations as measures of previous success to inform decisions about who to fund, reward, and promote, prompting rebuke from those who study the phenomena of research (Hicks et al., 2015; Sugimoto, 2021). Stokols et al. (2008) advocated for combining methods to understand collaborative processes and outcomes, and Leahey (2016, p. 13) found that “surprisingly few studies incorporate more than one method or measure.” While some studies have compared citations before and after collaboration (e.g., Rawlings & McFarland, 2011), comparatively few studies focus on other kinds of knowledge produced from cross-disciplinary teams, such as published data sets, protocols, conference presentations, or new course modules or content for informal and formal education courses.

Bozeman et al. (2001, p. 718) argued that such “product-oriented evaluations tend to give short shrift to the generation of capacity in science and technology.” One result of these and related challenges is that there are few studies that characterize the influence of cross-disciplinary work on the long-term research capacities of individuals, and scarcely any that use longitudinal designs (for fruitful recent exceptions see Rabello et al., 2024; Roelofs et al., 2019). Some studies have begun to indicate that when researchers work in cross-disciplinary teams, they perceive some changes in their capacities for skills like communication and management (e.g., Chan & Wheeler, 2023; Leigh & Brown, 2021; Marg & Theiler, 2023; Vanney et al., 2024). These studies often rely on one-time interviews of cross-disciplinary researchers who reflect on their overall research approach and activities. Furthermore, Vanney et al. (2024) identified intellectual virtues like open mindedness and intellectual empathy that, when held by researchers, correlate with experience, productivity, and satisfaction in cross-disciplinary work. But there is a dearth of information from panel-style longitudinal studies that are especially useful to characterize changes, trends, and associations among human behaviors and traits, and that enable further experimental testing and investigations into the causes of those changes (Ruspini, 2002). As a result, we are only beginning to learn in what ways cross-disciplinary research changes those who do it, let alone how.

Our study complements recent work but extends it with data collected from a project over more than a decade. This design enables us to describe trends in how researchers perceived their capacities evolving, and to suggest potential mechanisms by which project design may have influenced those capacities.

Cross-disciplinary projects and teams are potent objects for further study and evaluation (Laursen et al., 2022; Lyall et al., 2013; Lyall & Fletcher, 2013). Given the variations for possible kinds of cross-disciplinarity, collaborations, and individual research capacities, it remains an open project to document how different varieties of the first two influence the formation of the third (K. L. Hall et al., 2018; Leahey, 2016; Rabello et al., 2024; Stokols et al., 2008). Evaluators analyze project impacts partly by documenting stasis and change in patterns of cross-disciplinarity, collaboration, and research capacities.

Ultimately, funders want to know the extent to which their cross-disciplinary programs succeed at addressing significant problems, discovering new knowledge, integrating field-specific knowledge and researcher social networks, and augmenting researchers’ capacities. As Bozeman et al. (2001, p. 728) add, the

‘evaluation problem’ at the individual level is to determine the extent to which the project or program has enhanced the S&T human capital of participants. As a result of the project, are the participants better able to contribute to future scientific and technical endeavors? Has their S&T human capital increased, has it increased in ways for which there is likely a future demand, and has it increased because of participation in the project or program?

To begin to address these general themes, we report here an exploratory and descriptive case study (Gerring, 2012, 2016; Gerring & Cojocaru, 2016), focused on a cross-disciplinary project funded from 2010 to 2018. We focus on the competencies developed by the team members over the course of the project. Exploratory research aims to discover and describe phenomena previously little described and poorly understood, especially to identify regularities that can be interpreted in theories and later experimentally tested (Stebbins, 2001). We pursued an exploratory study because of the dearth of empirically-grounded reports about the influences of cross-disciplinary work on the capacities of researchers, especially from longitudinal studies. Given the aim to discover and describe trends as candidates for further study, exploratory studies align with methods for descriptive analysis to address what-questions, collect and analyze multiple kinds of data relevant to research questions, and identify trends and qualitative associations among trends (Gerring, 2012).

We surveyed and interviewed team members multiple times throughout the span of the project, and again 5 years after the project had ended. This longitudinal aspect enabled the team members to assess changes in their capacities over time and to reflect on the extent to which changes were long-lasting or influential to their careers. We address the following explicit questions.

What cross-disciplinary practices did the team members perceive they used to interact with each other, and did those practices change over the history of the project?

What perceived impacts did those interactions have on their individual behaviors and capacities for cross-disciplinary institutional knowledge, skills, and interest for similar research?

Future studies can use our answers to those questions to inform hypothesis-driven research that addresses why-questions and identifies more precise phenomena, quantitative correlations, and causal relations.

Theoretical Framework

We develop a theoretical framework that relies on constructs of cross-disciplinary work and of cross-disciplinary institutional and scientific knowledge, as indicated in our research questions. Cross-disciplinary work involves researchers engaging in the institutions of more than one discipline. Such engagement includes, but is not limited to, the following activities (Rhoten & Pfirman, 2007):

We use “cross-disciplinarity” as an umbrella term to cover a range of constructs such as interdisciplinarity, multidisciplinarity, and transdisciplinarity without privileging any of those particular constructs (O’Rourke et al., 2016; Vladova et al., 2025). These specific concepts have more precise functions than we require for our study, but they share a family resemblance that we wish to preserve. We sidestep the debate about the relative fruitfulness of these constructs, as we do not need their precision for our research questions.

We note two general concepts of institution to specify our construct of institutional knowledge. In one sense, institutions are “the prescriptions that humans use to organize all forms of repetitive and structured interactions including those within families, neighborhoods, markets, firms, sports leagues, churches, private associations, and governments at all scales” (Ostrom, 2009, p. 3). Or more succinctly, they are “systems of established and prevalent social rules that structure social interactions” (Hodgson, 2006, p. 2). In a second more specific and everyday sense, institutions are organizations. Organizations are formal instantiations of systems of social rules, and we distinguish them from institutions in the more general sense because they specify shared or corporate aims, roles for people to pursue those aims, and the central coordinating systems for communication and decision making (March & Simon, 1993). In the more general sense, institutions include higher education or science as a social endeavor, and in the more specific sense, relevant organizations include particular universities or laboratories.

We define institutional knowledge as knowledge about the components, histories, activities, and causal outcomes of institutions and organizations. To characterize and study the effects of institutions as systems of social rules, researchers characterize a group of people interacting with each other, the implicit and explicit rules they follow, their interactive behaviors and intentions, and the effects of those behaviors (Ostrom, 2009). Those who participate in institutions also develop knowledge about those things. While this knowledge can be experiential, unsystematic, and often tacit, participants nonetheless rely on this knowledge to advance their individual and group interests and goals.

Institutional knowledge about science includes knowledge about what the institutions of science are, how they operate, and how to participate in them. This knowledge includes but goes beyond what Bozeman et al. (2001) called capacities or contextual skills for doing science, which can change over time (see also Vanney et al., 2023, 2024). One kind of institutional knowledge is project knowledge, or knowledge about how to design and conduct research projects (Meunier, 2019). Project knowledge helps researchers distribute work among project participants, structure activities relative to research goals, and move results, methods, and tools across projects. Researchers develop institutional knowledge about the particular organizations in which they participate, especially labs, universities, or research institutions. They also develop this knowledge for interacting with funders—for writing, reviewing, and administering grants (Elliott et al., 2023).

We distinguish institutional knowledge about science from more standard conceptions of scientific knowledge. Constructs of scientific knowledge usually denote a mix of knowledge about how to reason, the content and uses of theories and models, and bodies of facts relevant to particular fields or problems (Darden & Maull, 1977; Dunbar & Klahr, 2012; Maienschein & Students, 1999). Researchers develop disciplines as social institutions or sets of rules for conducting, evaluating, and rewarding research (Darden & Maull, 1977; Fiore, 2008; Merton, 1973).

Disciplinary training, then, inculcates participants with a body of empirical, theoretical, and methodological knowledge, and with norms for behaving toward one another. Depending on the extent of institutional training they receive, scientists will vary in the amount of institutional-disciplinary knowledge they have and can effectively use. Researchers also develop disciplinary identities, such that they identify with being a member of a discipline (e.g., chemist, biologist, social scientist, oceanographer) and follow its rules.

Researchers can develop cross-disciplinary identities. These researchers can see themselves as not belonging to any single extant discipline, and they can develop professional anxieties due to lack of fit within traditional structures, and feelings that their expertise and contributions are not recognized and valued (Lattuca, 2001; Martin & Pfirman, 2017). For members of cross-disciplinary teams to work effectively with each other, leaders must design team structures and interpersonal environments that are trusting, safe, open minded, clear about expectations, and goal directed (J. T. Klein, 2013; National Research Council, 2015; Piso et al., 2016; Thompson, 2009; Vladova et al., 2025; Wagenknecht, 2016).

Researchers develop cross-disciplinary knowledge in at least two senses. They develop cross-disciplinary scientific knowledge when they learn from fields previously less familiar to them, such that they learn about bodies of empirical, theoretical, and methodological knowledge or about how to use new tools, inference methods, etc. (National Research Council, 2004). They develop cross-disciplinary institutional knowledge when they learn more about and how to follow systems of rules for behaving in multiple disciplines. Put differently, they develop capacities for interacting with researchers who work in those disciplines, filling different roles in organizations, writing for different journals or kinds of audiences, applying to different kinds of funders, etc.

We hypothesize that by conducting cross-disciplinary work, researchers develop cross-disciplinary knowledge, both scientific and institutional. We also expect that newly developed cross-disciplinary institutional knowledge is less explicit or known to the participants than is their newly developed scientific knowledge. These expectations motivate our use of repeated surveys and interviews as a means for scientists to reflect upon their potentially tacit institutional knowledge.

Case Description

We studied a research team that conducted a project between 2010 and 2018. The U.S. National Science Foundation (NSF) (NSF, 2010) funded the team through the Climate Change Education Partnership (CCEP) program. The NSF required funded teams to represent a range of disciplines that spanned common cultural divides in research such as basic and applied, or hard or soft. The solicitation stated:

Each CCEP must include representation from at least each of the following communities: climate scientists, experts in the learning sciences, and practitioners from within formal or informal education venues. This combined expertise will ensure that educational programs and resources developed through the activities of each CCEP reflects current understanding about climate science, the best theoretical approaches for teaching such a complex topic, and the practical means necessary to reach the intended learner audience(s). (Climate Change Education (CCE): Climate Change Education Partnership (CCEP) Program, Phase I (CCEP-I), NSF Program Solicitation 10-542, p. 1)

The awarded project was named the PoLAR Climate Change Education Partnership (PoLAR Partnership). The team received a 2-year Phase I award (2010–2012) and a 5-year Phase II award (2012–2017, no cost extension to 2018).

The PoLAR team included 5 primary investigators and 21 total people representing more than 12 organizations, with a majority of 12 members participating in both Phases. Team members were selected for participation based on their interest in advancing climate education, disciplinary/field expertise, institutional context, and commitment to collegial collaboration (Boix Mansilla et al., 2016; K. L. Hall et al., 2018). They came from both formal and informal education organizations. Some had capacities to reach target audiences or had experience with games or education technologies. The project also aimed to research the efficacy of the outreach projects it designed. Following guidance from J. T. Klein (2005), team members were selected because they were known to be patient, willing to learn, sensitive toward and tolerant of others, and willing to try new things (see also Vanney et al., 2024; Vladova et al., 2025). The team evolved over time with a regular flux of graduate student researchers, postdoctoral researchers, practicing educators, etc.

The PoLAR Partnership project aimed to engage adult learners and broader publics to understand and respond to climate change in polar regions. The team designed, implemented, and researched a portfolio of educational activities and products with explicit communication goals and based on empirical evidence, learning theory, and education practice, including current and emerging learning technologies. The PoLAR team developed and implemented eight core subprojects (Turrin et al., 2020; https://thepolarhub.org/core-projects/). Each subproject usually had 2 team leaders, 1 to 3 additional core PoLAR team members, and several external collaborators. The products included card games, apps, role playing simulations, collaborative storytelling experiences, online quizzes, and podcasts. The PoLAR team designed these activities and products to be easy to disseminate and fun to use in homes, museums, classes, and communities.

The eight subteams engaged with learners via science fairs, camps, in person and online classes, workshops, and media coverage. These activities included diverse stakeholder communities including general publics, teachers mostly for grades 5 to 12, and Alaskan Indigenous leaders, educators and community members. Altogether the project teams reached more than 3 million participants. The team also studied the educational effectiveness of its products, publishing more than 20 research articles in peer-reviewed journals.

PoLAR leaders used various strategies to foster a collegial team with productive cross-disciplinary collaboration:

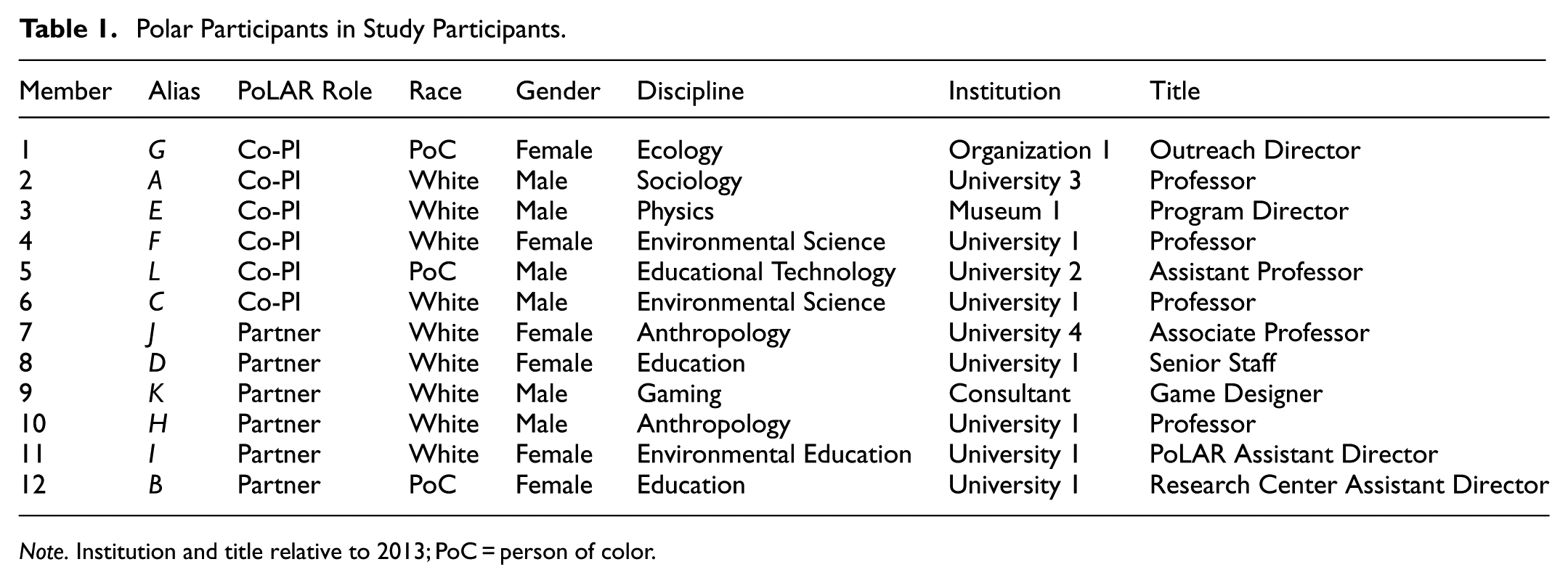

This study focuses on how PoLAR Partnership team members experienced cross-disciplinarity over the course of the project. For that reason, we focus on the 12 members who participated for the entire project (Table 1). All held leadership roles, including six co-principal investigators. Of these 12, 3 are people of color, 6 are women, 4 began the project as early or mid-career researchers, and 3 worked at organizations other than universities. Six were tenure track faculty, four held other types of university positions, and two worked outside of universities. These 12 contributed survey and interview data at multiple points of the project, and again 5 years after the project had ended, when 8 remained at their original organizations.

Table 1. PoLAR Study Participants.

Note. Institution and title relative to 2013; PoC = person of color.

Methods

In collaboration with PoLAR leadership, Goodman Research Group, Inc. (GRG) designed and conducted a multimethod research study and project evaluation. Between 2010 and 2018, GRG collected data from project members twice via electronic survey (2013 and 2016) and three times by focus groups with PIs not present to foster honest feedback. A consultant, previously the GRG evaluator, conducted another round of individual phone interviews in 2022. To design data collection tools and strategies, GRG used the cross-disciplinary framework of cross-fertilization, team collaboration, field creation, and problem orientation (Rhoten & Pfirman, 2007). Prior to participating in the study, team members provided GRG with informed consent. The study was approved by the Columbia University Institutional Review Board (Protocol IRB-AAAJ7852), while the 2022 phone interview component was approved by Arizona State University IRB (Protocol STUDY00015457).

Survey

GRG developed The PoLAR Interdisciplinary Collaboration Survey (see Supplemental Materials). Indicators for collaboration and cross-disciplinary institutional knowledge were culled from the literature about interdisciplinary work (Boix Mansilla & Gardner, 2003; Hoadley et al., 2008; Stokols et al., 2008) and from different tools that have been used as measures of effective teams (Ditkoff et al., 2005; MindTools, 2013). GRG supplemented these indicators with questions designed to identify different types of benefits and challenges participants might perceive as a result of participating in PoLAR. This survey had 25 questions, 8 open-ended and the rest closed-ended. Seven Likert questions included a total of 76 items for rating.

GRG administered the survey online twice. Each time, the results represented at least one team member from each of the eight institutions formally involved in the project. GRG administered the first survey in May 2013, when 14 of 16 PoLAR members completed it within 5 weeks. GRG administered the second survey in February 2016, and 16 of 17 members completed it within 1 month. The 2016 survey added two open-ended and two closed-ended questions, based on interests the participants had identified in early data collection and adapted from an Interdisciplinary Work Survey (Hagood et al., 2018). During survey periods, GRG also conducted interviews and focus groups to add data for triangulation, validity checks, and interpretation of the survey results.

GRG designed the survey to be reliable and checked to ensure that the responses were valid. To increase reliability of self-reported survey data, survey question phrasing was kept consistent, anchor points on rating scales were kept the same, and multiple items were used for various constructs. Some questions, at both time points, were asked with the phrasing “to date.” This may have increased reliability due to use of the same language, although it may also have led to recall bias in responses, as participants considered their experiences over time and the time period was longer at the Time 2 survey point. To verify response validity, survey data and ratings were triangulated to the extent possible with focus group discussions and interviews asking for examples of work (e.g., publications and presentations given). Given their validation function, analyses of those focus group discussions and interviews are not reported here.

For this study, we analyzed responses from 12 participants who consistently participated in the project from beginning to end. These 12 participants completed the survey both times, so we could analyze their results to address the research questions posed here, which have a longitudinal dimension. We used SPSS to analyze survey responses and Microsoft Excel to produce graphics. Co-author SP is among those 12 and did not participate in survey data analysis or interpretation.

Five-Year Follow Up

In 2022, the evaluation consultant conducted retrospective phone interviews, to which 8 of the 12 core participants responded. As the participants no longer collaborated in PoLAR, the original survey instrument could not be meaningfully re-used. The consultant crafted nine open-ended questions to elicit participants’ thoughts about their evolved skillsets and career trajectories.

The interviews avoided any potential misapplication of the survey, and they provide a complementary source of data relevant to our two research questions. The survey would have asked participants to reflect on the previous few years of collaborating in PoLAR, by then long completed; or to recall their statuses in the last years of the project, which likely would have been inaccurate due to 5 years of different work and experiences interfering with memories. In neither case would the data have been reliable or meaningful. Instead, the interview questions were designed to provide information about the current statuses of the participants and their opinions about long-term influence of the project. We report the analytic results of the 2022 interviews separately from those of the survey, given the span of time from the final survey to the 2022 interviews, the categorical change in participants’ collaboration status and temporal perspectives, and the decrease in participation. Rather than triangulating the validity of survey results, the 2022 interviews provide a different source of data to explore trends and identify qualitative associations.

Interviews were recorded in shorthand and reconstructed into full written statements for analysis, so quotes are not word-for-word representations. Two analysts adopted the analytic framework of grounded theory (Charmaz, 2014). They independently and manually coded the interview responses in Microsoft Excel and used constant comparative analysis (Boeije, 2002) to group content inductively by recurrent topic. They met twice to compare coding, resolve discrepancies, and reach unanimity on two primary themes and three subthemes. Given the small size of the final interview population, we present quotes without attribution to protect interviewees’ anonymity from one another.

Results

Question 1

We analyzed the survey responses to identify the practices through which participants perceived that they interacted with each other, and to determine the extent to which those practices changed over the course of the project. We characterized these practices using participants’ estimates of the proportion of work they did in each of the four disciplines and in each of Rhoten and Pfirman’s (2007) four dimensions of cross-disciplinarity (Table 2).

Table 2. Average Proportion of Project Time Spent by Discipline, N = 12.

p < .05.

On average, participants estimated that they worked less on climate science as the project evolved. In 2013, participants reported spending an average of 31% of project time on climate science (M = 30.67, SD = 26.14), while in 2016 they reported an average of 24% (M = 23.75, SD = 19.79), a statistically significant decrease (t = 2.47, df = 11, p = .031, 95% CI [0.75, 13.08]) with a large effect size (Cohen’s d = −0.71; with small-sample correction, Hedges’ g = −0.63). Not statistically significant but notable is the increase in the average estimated proportion of time spent on informal education practice (2013: M = 30.00 (30%), SD = 16.20; 2016: M = 36.67 (37%), SD = 31.20; t = −1.43, df = 11, p = .181, 95% CI [−16.94, 3.60]), with a moderate effect size (Cohen’s d = 0.41; Hedges’ g = 0.37). The differences in average reported proportion of time spent on learning science (a decrease of roughly 4%) and on formal education practice (an increase of roughly 4%) were also not statistically significant.
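As a consistency check on the paired-samples statistics above, the following minimal sketch rebuilds the standard error, 95% confidence interval, and standardized effect size from only the reported means, t statistic, and sample size. It assumes that the effect size was computed on the paired difference scores (so that its magnitude equals |t| / sqrt(n)) and it hard-codes the two-tailed critical value of Student’s t for df = 11 (about 2.201). The function name `paired_summary` is illustrative and is not part of the original SPSS analysis.

```python
import math

# Two-tailed 95% critical value of Student's t for df = 11 (assumed constant).
T_CRIT_DF11 = 2.201


def paired_summary(mean_2013, mean_2016, t_stat, n, t_crit=T_CRIT_DF11):
    """Rebuild paired-test quantities from reported summary statistics.

    Assumes t was computed on the paired differences (2013 minus 2016).
    """
    diff = mean_2013 - mean_2016           # mean paired difference
    se = abs(diff / t_stat)                # standard error implied by t = diff / se
    margin = t_crit * se                   # half-width of the 95% CI
    return {
        "mean_diff": diff,
        "se": se,
        "ci_low": diff - margin,
        "ci_high": diff + margin,
        "effect_size": abs(t_stat) / math.sqrt(n),  # magnitude only
    }


# Climate science: values taken from the paragraph above.
climate = paired_summary(30.67, 23.75, t_stat=2.47, n=12)
for name, value in climate.items():
    print(f"{name}: {value:.2f}")
```

Under these assumptions the reconstructed interval and effect-size magnitude agree with the reported values to within rounding. The sign of the reported Cohen’s d follows the direction of change from 2013 to 2016, whereas the sketch returns the magnitude only.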

As individuals, team members reported they were cross-disciplinary, with each participant judging in both surveys that they worked in more than one discipline. The number of disciplines and proportions of work for each discipline varied across years and people. In 2013, one participant reported working in two disciplines, six participants reported three disciplines, and five participants reported working in all four disciplines. By 2016, two participants reported working in two disciplines, two participants reported three disciplines, and eight participants reported working in all four disciplines. In 2013, the average number of fields was 3.33 (SD = 0.65), while in 2016 the average was 3.50 (SD = 0.80), but this was not a statistically significant change. Nearly all participants estimated that they spent a large proportion of project time working on informal education, with several splitting time primarily between informal education and climate science, or between informal and formal education. Between 2013 and 2016, three participants reported working less on informal education, two reported working about the same amount, and seven reported working more (Figure 1).
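The means and standard deviations for the number of disciplines can be recovered directly from the participant counts given in the paragraph above. The short sketch below does so with Python’s standard library, assuming the reported SD is the sample standard deviation (n − 1 denominator); the variable and function names are illustrative.

```python
import statistics

# Participants reporting work in 2, 3, or 4 disciplines (counts from the text).
counts_2013 = {2: 1, 3: 6, 4: 5}
counts_2016 = {2: 2, 3: 2, 4: 8}


def expand(counts):
    """Turn {value: frequency} into a flat list of per-participant values."""
    return [value for value, freq in counts.items() for _ in range(freq)]


def mean_and_sd(counts):
    """Return the mean and the sample standard deviation (n - 1 denominator)."""
    values = expand(counts)
    return statistics.mean(values), statistics.stdev(values)


mean_2013, sd_2013 = mean_and_sd(counts_2013)
mean_2016, sd_2016 = mean_and_sd(counts_2016)
print(f"2013: M = {mean_2013:.2f}, SD = {sd_2013:.2f}")  # 2013: M = 3.33, SD = 0.65
print(f"2016: M = {mean_2016:.2f}, SD = {sd_2016:.2f}")  # 2016: M = 3.50, SD = 0.80
```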

Figure 1. Individual participants’ estimated proportions of project time spent working in informal education in 2013 and 2016, N = 12. Respondents sorted by percent informal education time in 2016. Most team members reported an increase in the percent of work time spent working in informal education.

Next, we analyzed survey results to characterize how project participants judged the amount of work they did in each of the four dimensions of cross-disciplinarity: cross-fertilization, team-collaboration, field-creation, and problem-orientation (Table 3). During data collection, we gave study participants this framework, along with explanations of its terms, as a reference tool (Rhoten & Pfirman, 2007).

Table 3. Average Proportion of Project Time Spent by Cross-disciplinary Dimension, N = 12.

p < .05.

On average, participants estimated that they worked more in cross-fertilization as the project evolved. In 2013, participants reported spending an average of 20% of work effort on cross-fertilization (M = 20.00, SD = 13.48), while in 2016 they reported an average of 28% (M = 28.33, SD = 16.28), a statistically significant increase (t = −2.46, df = 11, p = .032, 95% CI [−15.79, −0.87]) with a large effect size (Cohen’s d = 0.71; with small-sample correction, Hedges’ g = 0.63). Correspondingly, they judged that they worked less on the other three dimensions, but there was no statistically significant difference for any single dimension.

Individually, team members reported that they contributed to the PoLAR project using multiple ways of working. In 2013, 9 of the 12 participants reported that they participated in the PoLAR CCEP in all 4 categories, while 3 reported working in 3 categories. For cross-fertilization, the category with the greatest group change from 2013 to 2016, three participants reported working less, two reported about the same amount of work, and seven reported working more on that dimension (Figure 2).

Figure 2. Participants’ estimated percentages of work in cross-fertilization by individual in 2013 and 2016, N = 12. Respondents sorted by percent cross-fertilization time in 2016. The figure shows that most participants reported an increase in the percent of work time spent using cross-fertilization approaches from 2013 to 2016.

Altogether, these results indicate that each participant judged themselves as operating in multiple disciplines while using activities from across the cross-disciplinarity dimensions. This means that the contributions of the individuals themselves were multi-disciplinary, rather than coming from the perspective of just one field or discipline. Therefore, the project instantiated cross-disciplinarity both at the project design level and within the daily activities of each individual. As the project evolved, participants judged themselves more likely to work in additional disciplines, and in informal education in particular. They also judged themselves as working more on cross-fertilization, in which they brought together tools, concepts, data, methods, or results from different fields and/or disciplines. This indicates a sense of learning and growth in their own individual cross-disciplinary capacities.

Question 2

We analyzed survey responses first to characterize the extent to which participants perceived that the project influenced their cross-disciplinary knowledge and skills, and second to characterize their dispositions for further cross-disciplinary research.

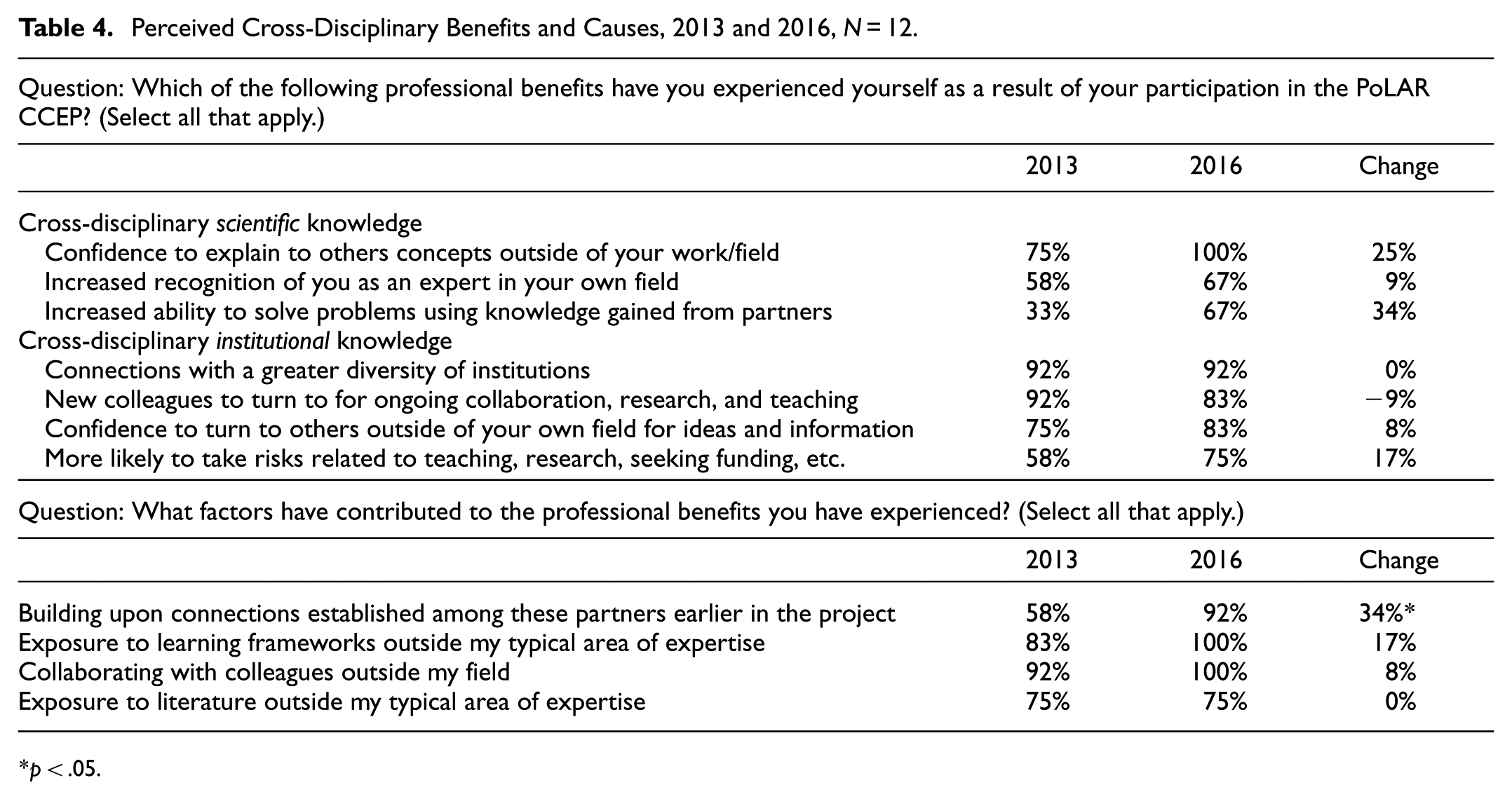

Participants perceived a range of benefits to their cross-disciplinary scientific knowledge and to their cross-disciplinary institutional knowledge (Table 4). For scientific knowledge, these benefits include increased confidence in explaining concepts from other fields and in solving problems with knowledge from other fields. For institutional knowledge, these benefits include knowing about the operations of a greater variety of institutions, gaining new colleagues for collaborations, increased confidence to collaborate, and a greater propensity to take professional risks with teaching, research, and seeking funding. There were several noteworthy positive changes over time in the proportion of participants who perceived those benefits (e.g., problem solving, confidence, risk taking). However, no change was statistically significant, likely due to the small sample size and large standard deviations.

Table 4. Perceived Cross-Disciplinary Benefits and Causes, 2013 and 2016, N = 12.

p < .05.

Participants noted several programmatic causes for their perceived benefits in cross-disciplinary knowledge (Table 4). At least 75% of them in both years credited those benefits to their exposure to new learning frameworks and new literatures, and to collaborating with colleagues outside of their fields. There were no statistically significant changes over time in the proportions of participants who credited those causes; notably, a high percentage of participants cited those causes even in 2013, after just a few years of project participation. There was a significant change from 58% of participants in 2013 to 92% in 2016 who attributed professional benefits to building connections with partners established earlier in the project (t = −2.35, df = 11, p = .039, 95% CI [−0.65, −0.02]) with a medium to high effect size (Cohen’s d = 0.68; with small-sample correction, Hedges’ g = 0.63). This finding indicates that with more time, participants can make stronger connections with each other.
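To illustrate how a paired t-test applies to yes/no survey items such as this one, the sketch below codes each response as 1 (credited the cause) or 0 (did not) and computes the test on the paired differences. The individual responses are not reported here, so the response pattern in the code is HYPOTHETICAL: it assumes 7 of 12 participants credited the cause in 2013 (about 58%), that those same 7 plus 4 others did so in 2016 (11 of 12, about 92%), and that nobody switched from yes to no. The critical value 2.201 for df = 11 is likewise hard-coded.

```python
import math
import statistics

# Two-tailed 95% critical value of Student's t for df = 11 (assumed constant).
T_CRIT_DF11 = 2.201

# HYPOTHETICAL response pattern (1 = credited the cause, 0 = did not).
# Only the totals (7 of 12, then 11 of 12) come from the reported percentages.
credited_2013 = [1] * 7 + [0] * 4 + [0] * 1
credited_2016 = [1] * 7 + [1] * 4 + [0] * 1

# Paired differences, 2013 minus 2016, one per participant.
diffs = [a - b for a, b in zip(credited_2013, credited_2016)]
n = len(diffs)

mean_diff = statistics.mean(diffs)
sd_diff = statistics.stdev(diffs)        # sample SD of the differences
se = sd_diff / math.sqrt(n)              # standard error of the mean difference

t_stat = mean_diff / se
ci = (mean_diff - T_CRIT_DF11 * se, mean_diff + T_CRIT_DF11 * se)
effect_size = abs(mean_diff) / sd_diff   # Cohen's d on difference scores

print(f"t = {t_stat:.2f}, df = {n - 1}")
print(f"95% CI [{ci[0]:.2f}, {ci[1]:.2f}]")
print(f"d = {effect_size:.2f}")
```

Under this assumed pattern the sketch yields values that match the reported t, confidence interval, and Cohen’s d to two decimals, which suggests the reported statistics are at least consistent with such a pattern; other response patterns with the same totals would give different results.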

The participants also reported their optimism and dispositions for further cross-disciplinary collaborations and research publications. Both the 2013 and 2016 surveys indicated that participants were optimistic about future collaboration. In 2013, one participant said they had already presented or published results outside of their field, while six were confident that they would in the future. By 2016, nine participants had presented outside of their fields, while the remaining three thought they might in the future. Similarly, seven participants had published outside their fields, and the remaining five thought they might.

Finally, as team members were chosen based on their openness to cross-disciplinary collaboration, we gauged the extent to which participants relied on each other to help them work across these disciplines. The 2013 survey asked participants how often they turned to other PoLAR participants in each discipline with questions about understanding tools, concepts, data, methods, or results. All 12 participants reported seeking advice from a team member in each of the 4 disciplines, including their own.

In 2016, participants reported that these PoLAR collaborations increased their abilities to appreciate the contributions of others, to be open to trying new methodologies, and to identify the type of knowledge they themselves bring to teams (Table 5). These responses were to newly included Likert items (1 = Not at all to 5 = A great deal) adapted from Hagood et al. (2018) to assess more specifically how participants perceived that PoLAR collaborations affected their cross-disciplinary knowledge, abilities, and attitudes.

Table 5. Perceived Increases in Institutional Knowledge and Skills, 2016.

Five-Year Follow-Up

Participants discussed how the PoLAR project influenced their careers in a variety of ways, which we group into two primary themes: cross-disciplinary institutional knowledge and cross-disciplinary scientific knowledge. Within both of these themes, participants discussed new skills and mindsets they developed through participating in the project, how project participation changed their self-perceptions as researchers, and how they carried those capacities into later work.

The most commonly discussed theme was cross-disciplinary institutional knowledge, for which participants focused on subthemes of developing new skills and mindsets for pursuing future cross-disciplinary projects and for their approach to community partnerships and communication.

Regarding new institutional knowledge for pursuing future cross-disciplinary projects, and plans for doing so, participants described a range of competencies they developed while working on PoLAR. They noted the value of discussing motivations, expectations, and goals with collaborators early and regularly: “It has really expanded my thinking about how we do these partnerships,” and PoLAR gave them an “idea of how those types of collaborations happen, how they work, what motivates people.” One participant said, “my experience with PoLAR opened up that door for me, if only in my own mind.”

One participant listed new skills including “project management, grant management, research management, IRB experience, supporting data collection, all kinds of things related to managing and supporting the grant and research side of things.” Another participant noted they learned that “different projects don’t have to be tightly placed in a single structure or puzzle for a set of projects to advance.” Researchers who were earlier in their careers during PoLAR noted the value of this experience:

I really appreciate the opportunity to be inside the nitty gritty of managing a major NSF grant. It is a very valuable experience that will follow me through my career. Also working with [PoLAR leaders] and how [they] navigated working with NSF and writing the proposals. It was quite different from how I’ve heard and seen others approach it. Knowing [they’ve] been very successful shows there’s more than one way to be successful.

Most also mentioned increased confidence to collaborate with people from many different fields, saying “I’ve done other projects where there were two disciplines involved, but PoLAR was all over the map,” and “Oh, it made a huge difference!” One said “I feel more confident in these different arenas because I’ve been part of the PoLAR project.” Another participant expounded:

In that rich, complicated interdisciplinary world of science and education, I increased my professional confidence in that kind of work…[the project] gave me a model about what that type of challenging collaboration could look like and be successful. I can’t say it wouldn’t have happened without PoLAR, but it bolstered my knowledge and skills, and was very helpful.

Regarding community partnerships, participants focused on new skills and mindsets for collaborating with particular groups. For example:

The thing I started to learn in PoLAR is that it’s really important to place the needs of the community we’re trying to serve–for us Indigenous communities–and to use methods that are meaningful to them. Our [subproject] started with Indigenous knowledge from elders and led with a prayer to make sure we honor and respect their knowledge.

And

PoLAR really shaped the way I think about community engagement and public participation, learning about and responding to effects of climate change. It made me think about what it means to do deep engagement across cultures and across knowledge systems, [and] shaped how I approach research. I now describe myself as a community-based participatory researcher, a label that stems directly from my experiences with the project.

Regarding communication, participants stressed that “the communication of climate science is just as important as the science itself,” and that the project fundamentally changed their approach to communication.

PoLAR was not like anything I’ve been involved with before or since. High on [the] creative scale. We used many different ways to try to communicate about PoLAR and climate information to different kinds of audiences. Thinking about all of that stuff–and new terms gamification–I’ve used it a ton since.

For the second theme of cross-disciplinary scientific knowledge learned from other PoLAR team members, participants typically noted they learned new information and new methodological techniques, and that they expanded their understanding of climate change. For example:

Being in the PoLAR partnership was incredibly instructive for me about the state of the climate crisis. People came in with info, data, ideas about the climate crisis from all sorts of different facets. I internalized all that. It was very formative for my mental map of the climate crisis moving forward.

And

The number of times we collaborated on presentations and in different outreach venues and with different audiences–it just exposed me to a variety of different presenters, and a variety of different findings and data I was able to pull in. From that range of exposure, I picked up a lot and I’m able to incorporate it and know where to go now for new data.

Discussion

Summary of Results

We summarily address our two motivating questions. Question 1. What cross-disciplinary practices did the team members perceive they used to interact with each other, and did those practices change over the history of the project? Briefly, our results indicate that as the project evolved, participants generally perceived an expansion in their activities and abilities across disciplines, both in terms of the number and kinds of disciplines (climate science, learning science, formal education practice, informal education practice), and in the dimensions of cross-disciplinarity (cross-fertilization, team-collaboration, field-creation, problem-orientation). In particular, participants reported spending more time on informal education practice and on cross-fertilization, although only the increase in cross-fertilization was statistically significant.

Question 2. What perceived impacts did those interactions have on their individual behaviors and capacities for cross-disciplinary institutional knowledge, skills, and interest for similar research? Our results indicate that, as the project evolved, more participants perceived they had developed greater cross-disciplinary institutional knowledge, skills, and resources, especially for identifying the particular value provided to the project by themselves and by those from different disciplines. Participants also noted that their participation in the project increased their cross-disciplinary scientific knowledge, in particular their knowledge of information produced in other disciplines and their ability to use it for problem solving.

These results show fluidity in team structure and individual activities, and widespread open-mindedness and tolerance for change and learning. Previous research has shown that none of those aspects are necessary precursors or outcomes of interdisciplinary work. J. T. Klein (2005) describes disciplinary defaulting, in which team members privilege their individual goals and perform only those activities for which they have disciplinary expertise. This is especially likely to happen in teams like PoLAR that span the natural to social sciences, quantitative to qualitative approaches, and basic to applied work (e.g., Pfirman & Laubichler, 2023). Additionally, team members might shut down potential collaborations if they compete for status by appealing to perceived hierarchies of disciplinary quality, degree rank, home university or organizational prestige, or demographic characteristics (e.g., age, race, sex; J. T. Klein, 1990, 2005). If team members default to their disciplinary activities or compete for status, they will likely engender interpersonal conflict and stultify team structures, collaboration practices, and receptivity to learning new institutions, norms, and activities particular to other disciplines. In contrast, our results indicate that project leaders can forestall those effects by vetting colleagues and designing project activities to blur rather than entrench disciplinarity.

Limitations

We note several general limitations for this study. This study is a descriptive and exploratory single case study, so we triangulated evidence from multiple data collection procedures, rather than focusing data collection to test particular hypotheses. As a result, the findings here share the limitations of descriptive and exploratory projects–especially in comparison to rigorous causal inferential analyses–including that descriptive studies often lack well-defined criteria for evaluating or correcting claims to new empirical knowledge (Gerring, 2012). Furthermore, this study did not use a design to identify causes, as no data were collected from baseline pre-tests, control groups, or distinct projects for comparison. It therefore lacks the information needed to enable stronger statistical analyses (e.g., regressions) or counterfactual inferences (e.g., structural equations), which forestalls substantive generalizations to projects or teams with characteristics similar to those of PoLAR (Gerring, 2012; Pearl et al., 2016).

To mitigate the first risk, that of relying on multiple data sources, we used stable survey instruments and descriptive statistical analyses as much as possible. For qualitative analyses, we followed standard techniques to lessen the chances of bias and misinterpretation, including using multiple analysts and constantly comparing new data against developing codes (Boeije, 2002). Regardless, we stress here that our results indicate descriptive trends and associations among phenomena, rather than settled correlations or causal explanations, and we urge their interpretation accordingly. Relatedly, we note that our data represent self-perceptions from PoLAR participants, and that such data are insufficient to establish causal mechanisms for social phenomena.

To address the second risk, that of being a single case study without causal inference, we acknowledge the limited scope for potential generalization and for comparative case studies. Most directly, our case is an instance of a larger set of scientific teams with education and public engagement as regular activities and goals. We hypothesize that the trends and associations described are most meaningfully compared and externally validated against studies of cross-disciplinary projects that are long-term (5+ years) and mid-size (8–20 members), with several structural subcomponents (e.g., the eight subprojects). The more our results are compared or extended to teams that differ in those properties and aims, the more those extensions should be interrogated.

Finally, we cannot rule out the potential influence on our reported trends of factors not measured in the study. For example, without pretest results or control variables, it is possible that our trends result primarily from baseline increases in the participants’ professional maturity over time. Furthermore, the funding program and the project leadership selected people who were willing to adapt their normal practices, learn, and engage across disciplines (a recommended practice; Vladova et al., 2025). This selection may have biased the trends, which may not hold for teams with members recalcitrant to changing disciplinary behaviors or with more closed mindsets about so-called disciplinary status (Leslie et al., 2015; Pfirman & Laubichler, 2023). Given these limitations and the small study sample, we stress the importance of the reported effect sizes.

Significance of Results

The most important result of this study is the perceived growth in time that participants devoted to cross-fertilization activities (Table 3 and Figure 2). As the project evolved, participants significantly increased the proportion of time they spent on these activities, which include knitting together tools, concepts, data, methods, or results from different fields and/or disciplines. PoLAR participants commented in open-ended survey responses in 2016 that they saw “much to be gained in learning from and iterating with experts in other fields which has the effect of expanding both personal and professional perspectives,” noting that PoLAR “…opened up new intellectual domains,” and “…brought me into contact with new people, ideas, and approaches that are on the cutting edge and that can be applied not only to this project, but actually to most of the projects of other researchers and educators.” This result aligns with work indicating that by encountering different methodological norms and practices, researchers can be productively uncomfortable, prompting them to question their disciplinary assumptions, learn, and build stronger teams (Leigh & Brown, 2021; Roelofs et al., 2019).

There is a qualitative association or trend between time spent on cross-fertilization and change in cross-disciplinary scientific knowledge (Table 4, qualitative interviews). As they spent more time in cross-fertilization activities, participants also reported gains in cross-disciplinary scientific knowledge, especially their abilities to understand and present results from other disciplines and to collaboratively solve problems. These two temporal changes indicate a potential causal relation between amount of time spent on cross-fertilization and the strength of individual cross-disciplinary scientific knowledge and skills, especially for making informed judgments about designing and executing cross-disciplinary projects and evaluating potential knowledge, methods, and collaborators from other disciplines. By similar reasoning, there is potentially a more surprising causal relation between cross-fertilization and cross-disciplinary institutional knowledge, for which participants also reported gains (Tables 4 and 5, qualitative interviews).

Next, the results indicate that the project may have influenced participants’ abilities to communicate with each other and with other researchers. Previous research indicates that communication is perhaps the most important factor influencing the outcomes of cross-disciplinary projects, such that good communication practices among the members lead to new and successful knowledge, products, and impacts for the team (Andersen & Wagenknecht, 2013; Lakhani et al., 2012; Leigh & Brown, 2013; Nancarrow et al., 2013; National Research Council, 2015). This study weakly corroborates that relation, and it goes beyond earlier research to document some impacts of open and safe communication between team members. The participants of this study reported greater confidence collaborating with colleagues outside of their disciplines and turning to them for advice, something they did both while carrying out the projects and while researching the efficacy of the projects (Tables 4 and 5; Turrin et al., 2020). As the project evolved, the members reported no significant decrease in their relative proportion of time spent on team-collaboration activities (Table 3), indicating that sustained collaborative activity over many years may lead to greater perceived capacities for cross-disciplinary communication.

This study indicates that the project may have influenced the abilities of participants to communicate with non-academic community partners and publics. Here we rely on the qualitative analysis of the 5-year follow-up interviews, in which most participants discussed mindsets and capacities for such communication. We note that while this capacity is not prominent in the 2013 and 2016 surveys, it nonetheless may have been influenced by the greater proportion of time that participants spent on informal education practice as the project evolved (Table 2). Those activities likely enable researchers to practice and develop their skills in explaining science and project resources and in showing how to implement them. For similar projects in the future, we suggest that additional and more specific items be added to the survey instruments to check for such influences.

Altogether, the results of this study corroborate earlier work that individual researchers–and not just research teams–are important loci for cross-disciplinarity (Bozeman et al., 2001; Collins, 1986; Rawlings & McFarland, 2011). PoLAR led to sustained changes in participants that extended beyond their time in the project, including broader research mindsets, institutional knowledge, and communication skills (5-year follow up). Once someone engages in cross-fertilization, they carry new perspectives with them, potentially ready to apply to the next endeavor. They also potentially carry with them the confidence and willingness to take professional risks in teaching, research, and seeking funding (Table 4).

Finally, the results of this study corroborate the importance of team structures for outcomes of new knowledge and impacts on individual researchers. For now we sidestep issues of external validity to teams that are bigger or smaller or draw members from a different set of disciplines. We instead focus on features of team structure that do not depend on size or discipline. Some have distinguished stable teams from ad-hoc teams (J. T. Klein, 2005; Sandi, 1990). In stable teams, the members are familiar with each other, have routines for activities and interpersonal relations, and tend not to recruit members from other disciplines based on needs for expert scientific knowledge of methods or content. Ad-hoc teams, on the other hand, have more flexible structures, with team members sharing power, learning together, adjusting their work to the needs at hand, and recruiting people with specific skills from different disciplines. They have the advantage of a fresher approach and chances for insight and creativity. Yet they may take longer to establish communication and to overcome barriers.

PoLAR had both stable and ad-hoc teams, as encouraged by both phases of the CCEP solicitation. Based on the first 2 years of funding, several subprojects with team leaders were identified for further development, but additional projects were taken up as opportunities developed over the next 5 years. Team members shifted their contributions to different projects over time as projects moved from design to development, implementation, and evaluation and research, and as their interests and expertise evolved. The participants reported general and increased enthusiasm and energy for collaboration and communication, contrasting with the decrease reported by Edelenbos et al. (2017) for a 3-year project. We suggest that the difference lies largely in the different lengths of the projects. As PoLAR was funded for longer, participants had the intellectual time for the “internal bridging interactions, reflexivity and knowledge integration” that Edelenbos et al. (2017, p. 461) found to be “important for furthering interdisciplinary working.”

The value of the findings from this study depends on the extent to which they apply to other cross-disciplinary teams and on their ability to motivate further studies to develop empirically confirmed theories of cross-disciplinary research, such that those theories have at least some of the features of generality, precision, or descriptive accuracy.

Further Directions for Research

We suggest several routes by which future studies can build upon our constructs and results to inform future hypothesis-driven research, address why-questions, and identify more precise phenomena, quantitative correlations, and causal relations. First, we encourage the further use, testing, and development of the theoretical constructs of cross-disciplinary scientific knowledge and of cross-disciplinary institutional knowledge. While the constructs are not perfectly distinct in practice, by distinguishing them in theory we might better be able to characterize and investigate the effects of research not just on new knowledge products, but also on the individuals who develop the knowledge.

Next, we encourage further exploration and experimental studies for the two qualitative associations we identify. The first association is that as they spent more time in cross-fertilization activities, participants also reported gains in cross-disciplinary scientific knowledge, especially their abilities to understand and present results from other disciplines and to collaboratively solve problems. Researchers should design future studies to measure and test formal correlations and causality between these two phenomena. If confirmed, that relation would be unsurprising because it is a particular instance of a learning relation: the more time people spend doing an activity, the better they become at it and at understanding information specific to it (Ericsson, 2006). The second association connects increased cross-fertilization with gains in institutional knowledge. If experimental studies confirmed this relation and identified causes, the relation would be surprising because it could indicate indirect learning relations between individual activities and individual capacities adjacent to those activities.

Relatedly, evaluators of future cross-disciplinary projects should design their studies to determine the extent to which cross-fertilization influences individuals’ long-term capacities, especially as reflected in the kinds and quantity of research outputs, publication profiles, career paths, and professional society involvement. Such designs will help address primary evaluation questions such as what will happen after a grant ends (e.g., Lyall et al., 2013) and what the longer-term impact will be once the funding is gone. At the outset of PoLAR, we had not planned the 5-year follow-up interviews, but as we heard over the years from participants about the impact that participation in PoLAR had on them, we decided to conduct an assessment. Because participants had had time to reflect on the impacts of PoLAR on themselves, the interviews yielded significant information about the development of individual capacities. We encourage project designers and evaluators to do more mid- and long-term follow-up studies to identify a project’s sustained impacts on its team members.

Increasingly, funding for large projects requires some sort of outreach or engagement to broaden their impact. It would be useful to detail how working on these educational and/or community projects affects the researchers who do them, and whether teams can fruitfully and reliably use outreach for team building. In our experience, such participation has value even for team members who don’t do outreach, as the whole team can use information gained from engaging with the public, which requires repeated explanations and answering questions, to hone or suggest new research agendas.

We suggest that changes in participants’ perceived public communication skills likely resulted from an increase in the amount of time that they perceived they spent on informal education practice. Future studies should validate that result against similarly structured projects that also involve non-academic public engagement. More generally, there remains an opportunity for future studies to characterize how cross-disciplinary work that includes public engagement influences participants’ long-term practices, mindsets, and persistence with public engagement. Given that such work is often poorly rewarded early in academic careers, there could be differences in the impact on researchers’ capacities depending on their career stage when doing the public engagement. More junior participants might not receive academic promotion and tenure, even if they become adept at public engagement.

Next, there is an opportunity to study projects not as single cases or dyad comparisons, but as whole portfolios of funded projects that can function as natural experiments with which to test causal claims (K. L. Hall et al., 2018). It would have been valuable to compare our results regarding the development of individual capacities with those of the other five NSF-funded CCEP projects, as all had the same initial conditions of responding to the same RFP, similar funding levels, and similar project durations, but used different approaches to leading and structuring the teams. While evaluations of portfolios have been done (e.g., Lepori et al., 2023; Ruegg, 2007), such work often focuses on the products of scientific knowledge those projects produced. Funders might more clearly earmark portions of their program funds for meta-projects that document, for the primary projects, different team structures, leadership styles, patterns of activities, and outcomes for new knowledge and for individual and team capacities, as with organizational “natural experiments” (K. L. Hall et al., 2018, p. 543). Causal studies would require further kinds of data collection and analysis beyond surveys and interviews, including the collection and analysis of organizational records, ethnographic field notes, and systematic comparisons of descriptive categories across cases to infer causal recipes (Ragin, 2009).

Similarly, there is an opportunity to test our results for cross-disciplinary teams with members from different or multiple countries. Such studies might reveal country-specific cultural factors not tracked here but still relevant to promoting or hindering the development of cross-disciplinary capacities (e.g., Leigh & Brown, 2021; Roelofs et al., 2019). Perhaps most informative, however, would be studies in geopolitical research contexts that differ substantially from those in the Global North.

Future Directions for Leadership Research and Practice

Project leadership practices are especially notable as topics for future research, and in the short term they also indicate lessons learned from particular projects and heuristics for best practices. Accordingly, we next discuss some leadership practices used in PoLAR, and we reflect on how they may have influenced the trends reported here. In general, our results highlight many opportunities to better study the relations between styles and activities of team leaders, communication among team members, and team structures (J. T. Klein, 2005; March & Simon, 1993).

Leadership is important in establishing mechanisms for successful cross-disciplinary work (Rabello et al., 2024; Vladova et al., 2025). As Vladova et al. (2025, p. 175) conclude, a key question for project participants is “How do I and my collaborators plan and coordinate the individual contributions as well as the common…goals?” The PoLAR leadership implemented strategies to foster productivity. By establishing ad-hoc project teams based on emerging interests, all participants were involved in multiple projects as leader, contributor, and/or reviewer. This seemed to foster senses of both responsibility and belonging across subprojects. It also enabled PoLAR leadership to recognize subproject leaders as subject matter experts, and then to delegate authority to them to address new priorities or conflicts that arose in the context of their subprojects (Vladova et al., 2025).

Another leadership strategy that was built into the project design was the use of boundary objects (Star & Griesemer, 1989). Cross-disciplinary teams gain from activities intentionally designed to connect and integrate different disciplinary viewpoints, especially to address team member concerns about how to overcome foundational disciplinary differences and create a common language (Vladova et al., 2025, p. 175). For example, Heemskerk et al. (2003, p. 1) found that “[j]ointly developing a [conceptual] model not only helped the participants to formulate questions, clarify system boundaries, and identify gaps in existing data, but also revealed the thoughts and assumptions…the process of model building can help scientists, policy makers, and resource managers discuss applied problems and theory among themselves and with those in other areas.” But a boundary object need not be a professional product like a journal article to enable researchers to span disciplines. The PoLAR project aimed to develop and implement novel educational resources, that is, to create boundary objects for formal and informal settings. In turn, this meant that much of the time, people were working on creating and testing boundary objects, and therefore the boundary objects served as bridges within the team itself.

A third significant leadership strategy employed in the PoLAR project was to engage each team member by fostering multiple professional and personal roles and contributions. While PoLAR team members could, for example, play games with family members, many projects have public-facing aspects that could involve more team members in addition to the education, outreach, and community experts. It could be that by participating in public-facing events with their collaborators, team members learned to appreciate their collaborators from different disciplines as whole human beings and ultimately for their expert contributions. All of the project administrators went on to pursue graduate studies, crediting this project as one of the reasons they felt well-positioned to do so. As quoted above, “I now describe myself as a community-based participatory researcher, a label that stems directly from my experiences with the project.”

Conclusion

Between 2010 and 2022 we studied a cross-disciplinary research team to explore and describe trends in how team members perceived their collaboration practices with each other and their perceived changes in individual capacities. We analyzed collaboration practices by proportion of time according to the disciplines team members perceived themselves participating in, and by the kinds of cross-disciplinary activities they perceived themselves performing. As the project evolved, we found evidence for an increase in time spent on cross-fertilization activities, and an association between that change and an increase in both individuals’ perceived cross-disciplinary scientific knowledge and their cross-disciplinary institutional knowledge and skills. We note especially that the team members described changes in their capacities for team collaboration and for communicating with publics and partners outside of higher education, and in their willingness to take risks in future cross-disciplinary endeavors.

As exploratory and descriptive results from a single case, these results await external validation from future studies, especially to test our suggested qualitative associations against more precise quantifiable correlations and potential causal regularities. We encourage the use of our constructs for cross-disciplinary scientific knowledge and cross-disciplinary institutional knowledge to better characterize and evaluate the impact of cross-disciplinary research on individuals, and perhaps to design projects that effectively address urgent and critical problems.

Supplemental Material

Supplemental material, sj-docx-1-sgo-10.1177_21582440251407623 for Researchers Report Stronger Interdisciplinary Capacities by Participating in a Long-Term Cross-Disciplinary Team by Steve Elliott, Stephanie Pfirman and Elizabeth Bachrach Simon in SAGE Open

Footnotes

Acknowledgements

For feedback on an earlier version of this paper, we thank Monica Gaughan and Julia Melkers. Much of the research for this paper was conducted while S.P. was a faculty member at Barnard College, Columbia University, New York, New York 10027, USA; and while E.B.S. was an evaluator with Goodman Research Group, Cambridge, Massachusetts 02139, USA.

Ethical Considerations

The study was approved by the Columbia University Institutional Review Board (Protocols: 8/13/2010 for IRB-AAAF2862 and 8/1/2012 for IRB-AAAJ7852), while the 2022 phone interview component was approved by Arizona State University IRB 2/25/2022 (Protocol STUDY00015457).

Consent to Participate

This was a study of survey participants. Prior to participating in the study, participants provided written and signed informed consent.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the U.S. National Science Foundation (1043271 and 1239783 to S.P.).

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

The datasets generated during and/or analyzed during the current study are available from the corresponding author on request.

Supplemental Material

Supplemental material for this article is available online.

References
