Abstract

Artificial Intelligence (AI) has fundamentally transformed knowledge production and dissemination. It has revolutionized educational paradigms, especially in music education. AI adoption is a transformative force, reshaping the teaching and learning landscape. This study examined the relationship between AI adoption and academic performance in music education. Data were collected from 624 questionnaires at 66 institutions. We analyzed the data with SPSS 25.0, Mplus 8.0, and HLM 7.0. The findings include: (1) AI adoption is positively correlated with students’ academic performance. (2) Academic self-efficacy mediates the relationship between AI adoption and academic performance. (3) AI dependency also acts as a mediator between AI adoption and academic performance. (4) Academic self-efficacy and AI dependency together have a chain-mediating effect between AI adoption and academic performance. (5) Organizational AI readiness is positively associated with students’ academic performance. (6) Organizational AI readiness moderates the relationship between AI adoption and academic performance. These findings enrich music education theories and provide actionable insights for enhancing the academic performance of music majors.

Plain Language Summary

Artificial intelligence (AI) is transforming the way music is taught and learned. But how exactly does using AI affect music students’ grades? This study surveyed 624 students from 66 colleges to investigate the issue. The results show that using AI tools does help students perform better academically, primarily by boosting their confidence in learning (academic self-efficacy). However, an overreliance on AI can also play a role. Importantly, the support and readiness of the school itself are crucial; schools well-prepared for AI not only see better student performance but also maximize the positive impact of AI adoption. In short, AI serves as a powerful booster for music education, and schools play a crucial role in ensuring this technology is utilized effectively to help students succeed.

Keywords

Introduction

Artificial intelligence (AI) is causing a profound shift in music education, particularly in China’s higher education sector. This shift is mainly driven by AI adoption, which involves adding artificial intelligence technologies to daily activities. The goal is to boost efficiency and outcomes. This change depends on technological tools and organizational support (Cooper & Brem, 2024; S. Z. Liang et al., 2024). While AI’s influence in many fields is acknowledged, its use in music education brings a unique transformation (Merchán-Sánchez-Jara et al., 2022). The use of AI in China’s music classrooms is actively changing both educational methods and thinking patterns (Ma & Jiang, 2023). This progress includes innovative tools such as AI-powered piano teaching systems that utilize MIDI signal extraction and neural networks (M. Liu & Huang, 2021), RBF algorithm-based models for esthetic training (X. Chen, 2021), virtual composition assistants, and automated performance assessment tools (Burrows et al., 2018). These technologies address long-standing challenges in China’s music education, including a lack of quality resources outside major cities, high student-to-teacher ratios that limit personalized feedback, and the absence of standardized, objective evaluations for performances. As a result, AI is guiding music education into a more efficient and intelligent era. This shift has significant effects on student academic performance.

Academic performance in music education encompasses cognitive understanding, skill development (such as playing instruments), and artistic creativity (Eres et al., 2021; Soderstrom & Bjork, 2015). Scholars differ on how AI affects these areas. Some of the literature is optimistic: supporters argue that AI customizes learning and gives quick, objective feedback on skills, which can improve how students learn and understand theory (Burrows et al., 2018). Other studies report that AI provides metacognitive support and intelligent tutoring, features that boost engagement and outcomes (Chiu et al., 2023; Grassini, 2023; Selwyn, 2022; Seo et al., 2021; Somasundaram et al., 2020; Wu et al., 2024). In contrast, many researchers express caution, highlighting significant drawbacks. They contend that AI’s reliance on existing datasets may prevent it from replicating the nuanced and empathetic feedback provided by human experts. Consequently, this could hinder the cultivation of deeper artistic and interpretive skills, potentially stifling individual creativity and the development of a unique artistic voice (Derakhshan, Teo, & Khazaie, 2024; Derakhshan, Wang, & Ghiasvand, 2024). These differences fuel an ongoing debate about the overall impact of AI on music education outcomes.

To clarify and address this debate, this study proposes a research model and hypotheses grounded in established theories to systematically examine how the adoption of AI affects the academic performance of music majors. Through rigorous empirical analysis, the study aims to reveal the mechanisms at play and provide robust evidence regarding the net impact of AI on student achievement in music education.

Theoretical Review and Research Hypotheses

Theoretical Review

Social Cognitive Theory (SCT) posits that individual behavior, personal factors (cognition, beliefs, and emotions), and the external environment interact dynamically and bidirectionally, rather than unidirectionally (Bandura, 1986; Schunk & DiBenedetto, 2020). In this study, AI adoption is the core behavioral factor, referring to students’ use of tools such as composition software (AIVA) or accompaniment systems (SmartMusic) for real-time feedback. Adopting these tools may influence two personal cognitive factors: academic self-efficacy and AI dependency. Increased AI adoption can boost self-efficacy, the learner’s confidence in completing tasks (e.g., performing a complex piano sonata), by improving confidence and efficiency (J. Liang et al., 2023; Meyer et al., 2019; Yilmaz et al., 2024). In contrast, frequent reliance on generative AI may also foster dependency, such as difficulty harmonizing melodies or performing without AI assistance and correction, which could undermine problem-solving abilities (X. Huang et al., 2020; S. Zhang et al., 2024). These two mechanisms, enhanced self-efficacy and increased dependency, have opposite implications for academic performance. Although studies show that AI can improve motivation and outcomes, few have examined how both mechanisms jointly affect academic results. Thus, further investigation into the dual impact of generative AI on these key cognitive factors is warranted. SCT suggests that AI adoption alters self-efficacy or dependency, which in turn determines learning performance.

Organizational AI readiness is a crucial environmental factor that influences the impact of AI adoption on academic performance. Organizational readiness theory (Hossain et al., 2016; van de Weerd et al., 2016) defines readiness as an organization’s preparatory state and its ability to provide resources for the adoption of AI technology (Prikshat et al., 2023). Its core dimensions are financial support, professional human resource allocation, infrastructure, and management support (Hossain et al., 2016), which collectively form necessary conditions for AI application development (Prikshat et al., 2023). In music colleges, this may mean investing in high-fidelity audio interfaces for AI analysis, hiring music technology experts, maintaining high-speed computing resources, and securing administrative endorsement for curriculum integration. Organizational factors influence the implementation of AI systems in college music education (Parker & Grote, 2022; Prikshat et al., 2023; Seeber et al., 2020). These factors include institutional resource support systems, which help explain differences in adapting to technological change (Lazarus & Folkman, 1987). For example, local influence and group norms shape the evaluation of AI teaching equipment (Gursoy et al., 2019). Thus, inter-college differences in AI readiness may lead to varying student cognitive states during the evaluation and use of AI technology, affecting adaptability and the effectiveness of feedback with AI-assisted tools (Makarius et al., 2020). This includes proficiency in AI-powered score-following or using AI-generated rhythmic feedback. Most existing literature examines the link between organizational AI readiness and academic performance through single-level analyses (Alghazzawi, 2025; Frick et al., 2021; Issa et al., 2022; Jöhnk et al., 2021). Because readiness is an organizational-level variable, however, the suitability of single-level methods requires further exploration.

This analysis focuses on three key variables—academic self-efficacy, AI dependency, and organizational AI readiness—to examine how AI adoption affects music majors. By explaining how behavior (AI adoption), personal factors (academic self-efficacy and AI dependency), and environment (organizational AI readiness) interact, the framework covers both positive and negative effects of AI in music education.

AI Adoption and Academic Performance

AI technology has become deeply integrated into music education (Alqahtani et al., 2023). From the perspective of SCT, which emphasizes the proactive role of human behavior in shaping outcomes (Bandura, 1986; Schunk & DiBenedetto, 2020), AI adoption is conceptualized as a fundamental behavioral factor. As a core behavioral manifestation involving the use of intelligent tutoring systems and composition platforms, AI adoption is theorized to directly influence students’ academic performance, the key outcome variable.

Firstly, AI adoption promotes the reconstruction of personalized learning paradigms (Dai et al., 2023). Students can utilize digital musical instruments equipped with audio analysis features to evaluate performances, pinpoint strengths and weaknesses, track progress, and refine their techniques. These instruments offer tailored suggestions based on students’ knowledge and learning styles (Bauer, 2020). Adaptive platforms like SmartMusic and Yousician utilize machine learning algorithms to dynamically generate customized training programs tailored to learners’ musical abilities and progress trajectories. Intelligent tutoring systems offer immediate error correction suggestions by analyzing technical elements, such as rhythm control and intonation adjustment, in real-time (Agarwal & Greer, 2023).

Secondly, AI adoption enhances creative capabilities. Tools like Doodle Bach and Soundtrap create an experimental creative field through style simulation and technique reconstruction, facilitating a contextualized understanding of diverse composition techniques (Knapp et al., 2023). Virtual accompaniment systems, such as Cadenza and MyPianist, adapt to performance parameters in real-time, creating a realistic ensemble experience (Sánchez-Jara et al., 2024). Intelligent tools like EarMaster 7.0 build an integrated learning system that connects theory and practice through dynamic difficulty adjustment.

Finally, AI adoption promotes intelligent assessment and immersive learning. Automatic scoring systems, such as PosyMus, improve teaching efficiency while ensuring assessment consistency (L. Chen, 2025; H. Liu & Guo, 2025). Analysis of performance data provides precise support for optimizing teaching strategies (Amirova et al., 2023; Lv, 2023). The integration of AI and XR technologies marks the beginning of a new era in immersive learning. For example, PianoVision reconstructs the visual experience of piano practice through virtual scenarios, and the MuseLab Conductor project enables gesture recognition-based conducting of a virtual orchestra (H. Liu & Guo, 2025).

In summary, personalized learning optimizes cognitive absorption efficiency, intelligent creative tools expand the boundaries of artistic expression, and immersive environments deepen the internalization of skills. Ultimately, an AI-driven new music education ecosystem is constructed (Al Ka’bi, 2023; Alqahtani et al., 2023; Dai et al., 2023). This transformation enhances quantitative academic performance indicators and marks a paradigm shift from skill training to comprehensive quality cultivation, fostering creativity and artistic individuality to improve the academic performance of college students. This study proposes:

The Mediating Role of Academic Self-Efficacy

According to SCT, self-efficacy beliefs are formed through four primary sources: mastery experiences, vicarious learning, verbal persuasion, and physiological states (Bandura, 1986; Bandura & Adams, 1977). These beliefs influence behavior through cognitive, motivational, affective, and selection processes, making self-efficacy a central mechanism in the adoption of new technology.

AI adoption can substantially enhance students’ academic self-efficacy via three core mechanisms aligned with these theoretical foundations. Firstly, AI systems provide scaffolded mastery experiences through real-time feedback systems (Alasadi & Baiz, 2023) and adaptive learning paths (Yilmaz et al., 2024), facilitating progressive skill development and alleviating performance anxiety in complex musical practice (Shahzad et al., 2024). Secondly, AI-generated content tools foster vicarious learning by showcasing creative techniques and solutions, boosting creative confidence (K. L. Huang et al., 2024) and enhancing narrative intelligence (Pellas, 2023) through iterative assistance. Thirdly, intelligent diagnostic systems offer personalized verbal persuasion by identifying technical deficiencies in real time (Kumar et al., 2024) and providing targeted training that enhances the sense of mastery (Makransky & Petersen, 2021). Collectively, these AI-related interventions enhance students’ engagement in music technology application (Lee et al., 2024) and artistic practice (Kong & Lin, 2023) by strengthening these sources of self-efficacy. This study hypothesizes:

Self-efficacy beliefs affect behavior and performance through multiple channels. Cognitive processes impact goal setting and analytical thinking, motivational processes influence task perseverance and effort investment, affective processes regulate stress and emotional responses, and selection processes shape activity choices and environmental preferences (Bandura, 1986; Bandura & Adams, 1977). Research shows that students with enhanced musical self-efficacy engage in longer practice sessions (G. L. Liu et al., 2024) and are more willing to attempt advanced composition (Berweger et al., 2022), reflecting the cognitive and motivational mechanisms of SCT. This psychological construct promotes strategic learning behaviors, including efficient error correction models (Schunk & DiBenedetto, 2020), the cultivation of a growth mindset (Guo et al., 2023), and systematic rehearsal planning (J. Liu et al., 2024). Empirical evidence shows that high self-efficacy is associated with higher performance success rates (Fathi et al., 2021), improved academic achievement in music theory (Wang & Wang, 2024), and enhanced skill development among music students (Becerra et al., 2020; Ji & Shin, 2019; J. Liang et al., 2023; Wang et al., 2022). This study hypothesizes:

Based on

The Mediating Role of AI Dependency

SCT provides a robust framework for understanding AI dependency as a maladaptive psychological mechanism within technology-mediated learning. Grounded in Bandura’s (1986) triadic reciprocity model, AI dependency emerges from the dynamic interplay of behavioral factors (technology use patterns), personal factors (coping strategies), and environmental factors (academic pressures), signifying a failure in self-regulation. This perspective redefines AI dependency not merely as a behavioral tendency but as a breakdown in the self-regulatory mechanisms central to SCT.

In music education, performance expectations set by teachers, parents, and students create environmental pressures (Dwivedi et al., 2019). SCT contends that when individuals face persistent demands without adequate coping resources, they develop maladaptive patterns that disrupt the balanced agency within the triadic model (Bandura, 1999). The pursuit of academic goals under continuous pressure can induce psychological strain (Bedewy & Gabriel, 2015), thereby increasing susceptibility to behavioral issues (Reddy et al., 2018). Stressed individuals may adopt expedient strategies that offer immediate relief but impede long-term self-regulatory development (Bandura et al., 1999). AI technology provides convenient academic support, temporarily satisfying needs and alleviating pressure (Rani et al., 2023; Zhu et al., 2023). However, from an SCT perspective, this environmental resource becomes problematic when it hampers the development of personal agency and self-regulatory capabilities (Bandura et al., 1999). Although AI applications, such as chatbots, can address psychological concerns (Meng & Dai, 2021), excessive reliance may erode perceived self-efficacy and internal control (Bandura et al., 1999), leading to increased dependence on technology for academic and emotional support and thereby exacerbating dependency (Rani et al., 2023). Thus, we hypothesize:

The triadic reciprocity model further elucidates how maladaptive factors, such as AI dependency, yield negative outcomes through distorted agency dynamics (Bandura, 1986; Romeo et al., 2021). The growing prevalence of technology fosters dependency, triggering behavioral adaptations (Davis, 1989). SCT specifies that when environmental technology resources dominate triadic interactions, they undermine personal agency and distort behavioral patterns (Bandura, 1999). Although AI holds great potential in music education, it carries dependency risks (Kohnke et al., 2024). For example, intelligent practice systems (e.g., Yousician) provide real-time feedback that can supplant self-reflection, degrading error-diagnosis abilities (Song & Song, 2023). This aligns with SCT’s concept of proxy agency, where over-reliance on external agents undermines personal competence development (Bandura et al., 1999). Similarly, AI composition tools (e.g., AIVA) delegate creative thinking to algorithms, reducing cognitive burden (Risko & Gilbert, 2016). SCT cautions that such cognitive offloading, while relieving immediate pressure, may weaken internal cognitive engagement and self-regulatory capacity, adversely affecting deep learning (Risko & Gilbert, 2016). Consequently, students with long-term AI dependency often exhibit anxiety in artistic decision-making and show skill deficiencies without technological support (Niloy et al., 2024), demonstrating how disrupted agency mechanisms negatively impact academic performance (S. Huang et al., 2024; S. Zhang et al., 2024). This represents a breakdown in SCT’s concept of balanced reciprocity, where an overemphasis on environmental technology hinders the development of personal agency. Therefore, we hypothesize:

Integrating

Academic Self-Efficacy and AI Dependency

Within the SCT framework, enhanced self-efficacy can influence technology dependency through distinct psychological mechanisms (Arefi et al., 2022; Bandura, 1986). Bandura et al. (1999) posit that personal success, especially through self-effort, significantly enhances self-efficacy. This construct refers to an individual’s belief in their capacity to organize and execute actions to achieve goals (Bandura & Adams, 1977; Bandura & Locke, 2003). Elevated self-efficacy increases confidence in approaching new tasks. In educational technology, individuals with high self-efficacy may view AI as a means to enhance their abilities, much as they would when handling tasks independently. This cognitive link between self-efficacy and the perceived value of AI can gradually foster technology dependency.

Technology dependency theory suggests individuals repeat behaviors that yield positive rewards and a sense of achievement (Brenner et al., 2021). As students develop self-efficacy, they may recognize generative AI as a vital resource for achieving educational goals (Backfisch et al., 2021; Kumar et al., 2024). Increased confidence encourages exploration of new technologies, and successful trials with AI reinforce the perceived efficiency of these tools (Makransky & Petersen, 2021; Meyer et al., 2019). Repeated use of efficient tools establishes dependency patterns, with self-efficacy indirectly promoting dependency by enhancing the perceived utility of AI (Backfisch et al., 2021; Kumar et al., 2024).

Conversely, students with low academic confidence often face frustration and difficulties completing tasks, leading them to seek external support (Jackson et al., 2019). AI becomes an appealing option by providing quick solutions to academic queries, improving short-term performance (Rahman & Watanobe, 2023). This immediate satisfaction and performance enhancement can increase reliance on AI, also contributing to a positive correlation between self-efficacy and dependency. Based on this analysis, we hypothesize:

Building upon

It should be noted that in this chain-mediation of

Organizational AI Readiness and Academic Performance

Within the SCT framework, organizational AI readiness serves as a critical environmental factor influencing academic performance (Arefi et al., 2022; Bandura, 1986). It establishes a resource foundation for AI adoption and optimizes learning contexts through enhanced implementation capacity (Fan et al., 2025), enabling personalized instruction and fostering innovation-conducive institutional cultures that systematically improve student outcomes (Fan et al., 2025). The Resource-Based View posits that organizational resources translate into unique capabilities, enabling sustainable competitive advantage (Barney et al., 2021). In higher education, AI infrastructure—supported by financial budgets (Pumplun et al., 2019)—facilitates data-intensive tasks and promotes the effective utilization of AI (Gursoy et al., 2019). For example, the Technical University of Munich developed ChatGPT applications for learning preparation, interactive activities, and formative assessment (Li et al., 2024). Institutions with high readiness actively identify AI applications, allocate resources efficiently, and integrate technological advancements into teaching innovations (Makarius et al., 2020; Scherer et al., 2021), thereby enhancing the efficiency of AI-to-pedagogy conversion. Such environments create opportunities for cognitive enhancement, significantly boosting academic performance (J. Chen et al., 2023; Song & Song, 2023). Thus:

The Moderating Role of Organizational AI Readiness

SCT posits that environmental factors serve as boundary conditions that moderate the relationships between behavior and outcome (Bandura, 1986). Triadic reciprocity indicates environments interact with personal factors to shape outcomes (Schunk & DiBenedetto, 2020). Resource-rich settings strengthen relationships through implementation fidelity, whereas constrained environments weaken them due to barriers (Alghazzawi, 2025; Fan et al., 2025). Organizational readiness creates an implementation climate that systematically influences how AI adoption translates into academic performance.

High-readiness institutions enhance the understanding of AI functions (e.g., perception, prediction) and interdisciplinary skills (Hofmann et al., 2020; Pumplun et al., 2019), thereby improving mastery of tasks such as music composition (Shahid et al., 2024). For instance, Cornell University guides students in iterative text generation for creative outputs (Li et al., 2024). They also facilitate adaptation to AI-driven reforms, fostering positive emotions and integrating technology into practices such as online teaching and flipped classrooms (El Alfy et al., 2017; Pozas et al., 2022). Conversely, low-readiness institutions face resource scarcity and support deficiencies, resulting in implementation inefficiency and weakened adoption attitudes (Shen et al., 2024; Snyder-Halpern, 2001), ultimately hindering academic performance. Therefore:

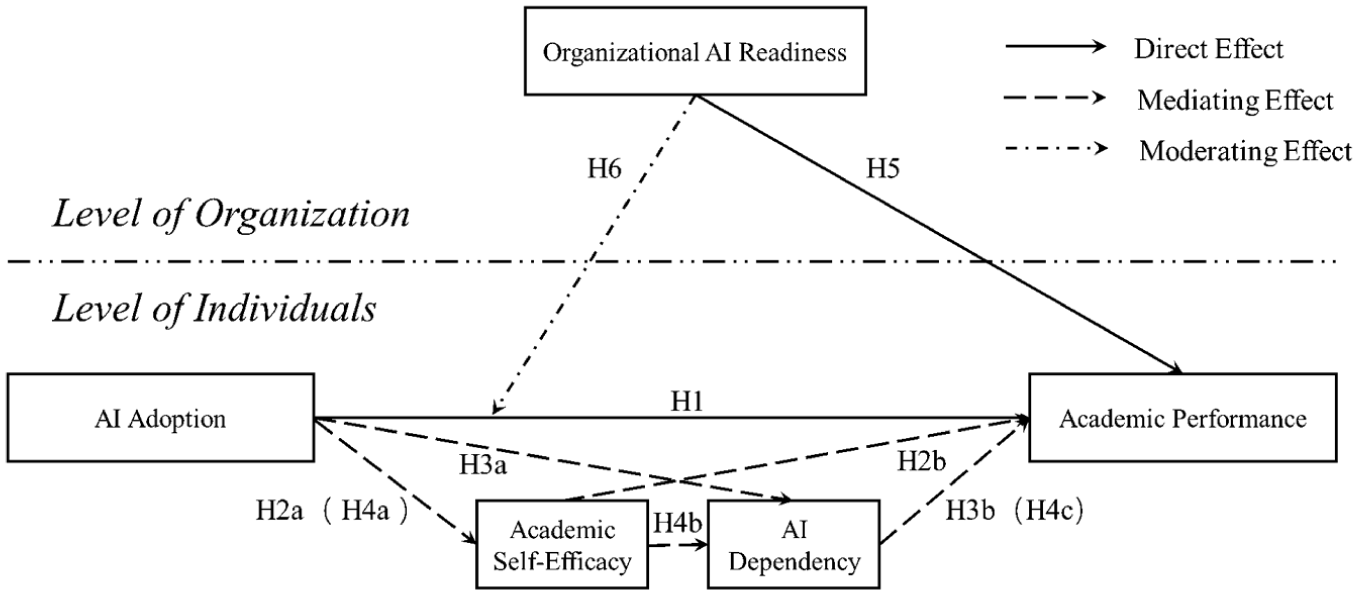

Based on the above analysis, the hypothetical model (Figure 1) is proposed.

Figure 1. Hypothetical model.

Methods

Sample and Data Collection Procedures

This study adhered to international ethical standards and Chinese regulations and obtained full ethical approval. Data were collected via an online questionnaire platform with a two-section structure. The first section introduced the study, explained the research purpose, collected demographic information, and assured participants that responses were anonymous and that data would be used only for scientific purposes. All data were encrypted and stored on a certified platform, with disposal scheduled after publication. Electronic informed consent was obtained at the end of this section; the consent form detailed the research objectives, data procedures, the voluntary nature of participation, and the option to withdraw without penalty. Access to the second section, which contained the core measurement items, required actively selecting “I agree.” The design proactively addressed ethical concerns such as AI dependency: consent materials explicitly acknowledged this risk and provided researcher contacts for support. Questionnaire item order was randomized to minimize response bias.

Ethical considerations also informed the sampling design: stratified sampling was used to ensure proportional representation of institutions. Data collection spanned 5 months, from February 1 to June 30, 2024. A pretest was conducted with 50 music majors from art colleges excluded from the main sample to assess the psychometric properties of the questionnaire, including reliability and validity. Privacy protections were evaluated in consultation with a cybersecurity expert to ensure compliance with China’s Personal Information Protection Law and General Data Protection Regulation standards. Several questionnaire items were refined based on pretest feedback, enhancing the instrument’s quality. The estimated completion time was approximately 7 min.

To ensure national representativeness and institutional diversity, a rigorous multistage sampling procedure was implemented. A comprehensive sampling frame was established based on the official list of higher education institutions provided by China’s Ministry of Education. From this frame, 68 art colleges and conservatories were selected to ensure proportional representation across China’s seven geographical regions: Northeast, North, East, Central, South, Southwest, and Northwest. Following institution selection, academic affairs offices collaborated to adopt a systematic random sampling method for participant recruitment. Random lists of eligible music majors were generated from official rosters, and a systematic sampling interval was adjusted according to institution size to ensure fair selection. Subsequently, 680 personalized invitations were distributed via institutional email systems, official WeChat work groups, and university-approved online learning platforms (e.g., Blackboard), ensuring full replicability. To maintain sample integrity, a technical quota restricted platform access for any college after 10 completed responses had been received. Sending three weekly reminder emails to non-respondents increased response rates, achieving an initial response rate of 94.3% (641 responses).
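The within-institution step can be illustrated with a minimal sketch of systematic random sampling, assuming a roster list and a fixed number of invitations per college. The function name, the roster format, and the figure of 10 invitations are illustrative assumptions, not details reported by the study.

```python
import random


def systematic_sample(roster, n, rng):
    """Draw n entries from roster at a fixed interval after a random start.

    The interval scales with roster size, so larger institutions are
    sampled more sparsely (hypothetical helper, not the study's script).
    """
    if not 0 < n <= len(roster):
        raise ValueError("n must be between 1 and the roster size")
    interval = len(roster) / n          # sampling interval, >= 1
    start = rng.random() * interval     # random start in [0, interval)
    last = len(roster) - 1
    # Guard against floating-point overshoot on the final index.
    return [roster[min(int(start + i * interval), last)] for i in range(n)]


# Example: invite 10 students from a hypothetical roster of 95 music majors.
roster = [f"student_{i:03d}" for i in range(95)]
invited = systematic_sample(roster, 10, random.Random(2024))
```

Because the interval is never smaller than 1, the selected positions are always distinct, and seeding the generator makes the draw reproducible.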

Following the collection, a multifaceted data quality control protocol was implemented, referencing the literature (Fan et al., 2023; Feng et al., 2025). This included automated CAPTCHA and IP duplication checks, time-based filtering excluding responses under 4 min or over 10 min, attention validation via two embedded check items, and logical consistency verification through reverse-coded pairs. A minimum threshold of 5 valid responses per institution was established for multilevel analysis, excluding two colleges that failed to meet this criterion.
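The screening rules above can be expressed as a short filter. The sketch below is an illustration only: the `Response` record and its field names are assumptions, and the CAPTCHA and IP checks are taken to have been applied upstream by the survey platform.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class Response:
    college: str
    minutes: float            # completion time in minutes
    attention_passed: int     # embedded attention checks passed (0 to 2)
    reverse_consistent: bool  # reverse-coded pairs answered consistently


def screen(responses, min_minutes=4.0, max_minutes=10.0, min_per_college=5):
    """Apply the time, attention, and consistency filters, then drop
    colleges left with fewer than min_per_college valid responses."""
    valid = [
        r for r in responses
        if min_minutes <= r.minutes <= max_minutes
        and r.attention_passed == 2
        and r.reverse_consistent
    ]
    per_college = Counter(r.college for r in valid)
    return [r for r in valid if per_college[r.college] >= min_per_college]
```

Applying the institution-level threshold after the individual-level filters mirrors the order described in the text: a college is excluded only if too few of its responses survive screening.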

After careful review, 624 valid responses from 66 colleges were retained. Every retained college contributed at least 5 valid responses, which meets the sample-size criteria for HLM (Maas & Hox, 2006). Among the 624 students, 258 were male (41.3%), and 366 were female (58.7%); 139 were freshmen (22.3%), 157 were sophomores (25.2%), 163 were juniors (26.1%), and 165 were seniors (26.4%). The students’ ages ranged from 16 to 25 years, with a mean of 20.3 years and a standard deviation of 1.6.

Measurement of the Variables

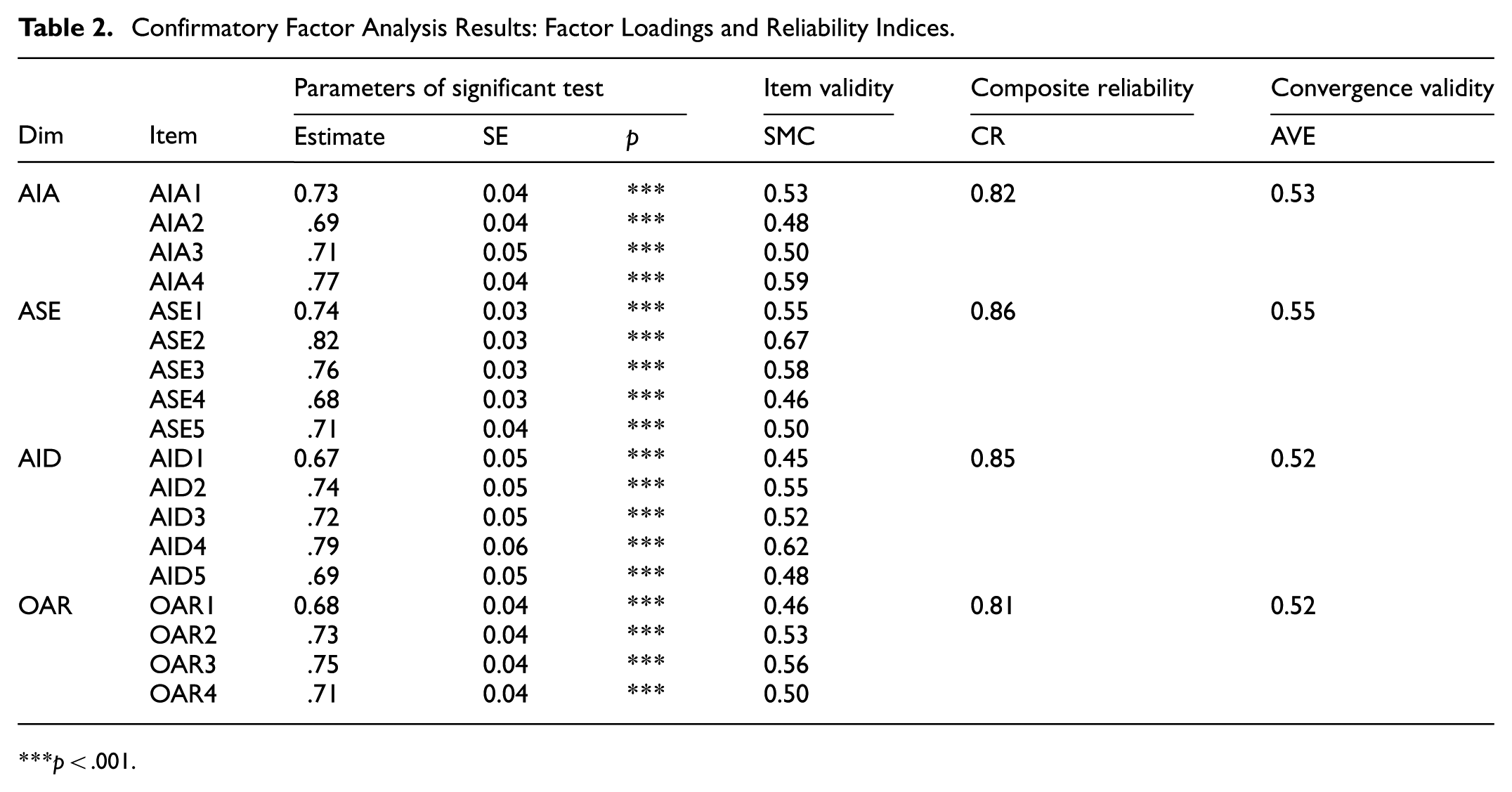

The measurement details of the four key constructs in the study are comprehensively documented in Table 1. As shown in Table 1, all measurement items exhibit adequate factor loadings, which range from 0.67 to 0.82, confirming strong item reliability. The Cronbach’s α values range from .82 to .92, indicating excellent internal consistency among all scales.

Table 1. Comprehensive Measurement Properties of Key Variables.

Note. AIA = AI adoption; ASE = academic self-efficacy; AID = AI dependency; OAR = organizational AI readiness.

All items were measured on a seven-point Likert scale (1 = strongly disagree, 7 = strongly agree).

To control for differences in exam difficulty across schools, students’ academic performance was measured by their class rankings in the most recent semester’s exams. The ranking data were provided directly by the surveyed schools rather than self-reported by the students. Specifically, students ranked in the top one-seventh of their class were scored as “7,” those in the bottom one-seventh as “1,” and those in the intervening sevenths were scored from “6” down to “2” accordingly.
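The ranking-to-score conversion can be sketched as follows. The function name is hypothetical, and the handling of ties and of class sizes not divisible by seven is an assumption, since the text does not specify either.

```python
def rank_to_score(rank: int, class_size: int, bands: int = 7) -> int:
    """Map a class rank (1 = best) to a 7-point score (7 = top seventh).

    Band boundaries use ceiling division, so a rank falling exactly on a
    boundary stays in the better band (an assumption of this sketch).
    """
    if not 1 <= rank <= class_size:
        raise ValueError("rank must lie between 1 and class_size")
    band = (rank * bands + class_size - 1) // class_size  # 1 = top band
    return bands + 1 - band


# In a hypothetical class of 70, ranks 1 to 10 score 7 and ranks 61 to 70 score 1.
scores = [rank_to_score(r, 70) for r in (1, 10, 11, 60, 61, 70)]
```

Scoring by within-class position rather than raw marks is what makes the measure comparable across schools with different exam difficulty.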

In this study, gender, grade level, and age were incorporated as control variables into the data analysis.

Analysis Tools

SPSS 25.0, Mplus 8.0, and HLM 7.0 were used to examine the internal mechanisms linking AI adoption and academic performance.

Sample Common Method Bias Test

This study implemented procedural and statistical controls to address potential common method bias. Procedural safeguards during questionnaire design included item sequence counterbalancing and strict anonymity protocols to minimize priming and social desirability bias. Harman’s single-factor test indicated no substantial bias: the largest factor explained 39.16% of the variance, below the 50% threshold (Podsakoff et al., 2003). A latent common method factor analysis pointed the same way: adding the method factor improved model fit only marginally (RMSEA decreased by 0.01, SRMR decreased by 0.02, and CFI and NFI increased by 0.04 and 0.03, respectively). Common method bias is therefore unlikely to be a serious concern in these data.

Results

Reliability and Validity

As shown in Table 2, all measures demonstrate strong psychometric properties, with factor loadings above 0.67. The composite reliability (CR) values range from 0.81 to 0.86, exceeding the 0.70 threshold, and average variance extracted (AVE) values all exceed 0.52, surpassing the 0.50 criterion, supporting the measurement model’s reliability and convergent validity. Furthermore, Table 3 shows that the square root of each construct’s AVE (0.72–0.74) exceeds the inter-construct correlations (0.27–0.47), providing evidence of discriminant validity by indicating that each construct shares more variance with its indicators than with other constructs.
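For reference, CR and AVE are conventionally computed from standardized factor loadings, and the Fornell-Larcker comparison follows from the square root of AVE. The sketch below uses these standard formulas; the loadings in the example are hypothetical values chosen within the reported 0.67 to 0.82 range, not the study’s estimates.

```python
def ave(loadings):
    """Average variance extracted: mean of the squared standardized loadings."""
    return sum(l * l for l in loadings) / len(loadings)


def composite_reliability(loadings):
    """CR = (sum of loadings)^2 / ((sum of loadings)^2 + sum of error variances)."""
    total = sum(loadings)
    error = sum(1 - l * l for l in loadings)
    return total * total / (total * total + error)


def fornell_larcker_ok(loadings, correlations):
    """True when sqrt(AVE) exceeds every correlation with other constructs."""
    return ave(loadings) ** 0.5 > max(abs(r) for r in correlations)


# Hypothetical four-item construct.
example = [0.70, 0.72, 0.74, 0.73]
example_ave = ave(example)                   # about 0.52
example_cr = composite_reliability(example)  # about 0.81
```

With these illustrative loadings, the square root of AVE is about 0.72, which would exceed the largest inter-construct correlation reported in the text (0.47).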

Table 2. Confirmatory Factor Analysis Results: Factor Loadings and Reliability Indices.

***p < .001.

Table 3. Discriminant Validity Assessment: Correlation Matrix with AVE Square Roots.

Note. Bold values on the diagonal are the square roots of AVE; values in the lower triangle are Pearson correlation coefficients. AP = academic performance.

Model Fit

The model fit indices are χ²/df = 2.92, CFI = 0.94, TLI = 0.95, RMSEA = 0.04, and SRMR = 0.05, indicating that the model has a good fit.

Basic Characteristic Test

In this research, organizational AI readiness is regarded as a shared construct. The average

Hypothesis Testing

Null Model

The null model is specified below. The within-group variance component (σ²) is 0.52, the between-group component (τ00) is 0.14, and ICC1 is .21. By the benchmark attributed to Cohen (1988), an ICC1 of .21 indicates a high degree of clustering within groups. Between-group differences are therefore substantial, and a single-level regression model is not appropriate for these data.

Based on the ICC1 value of .21, we calculated ICC2 as .72, which exceeds .70 and supports the reliability of between-group differences. The rwg value of 0.78 further validates data aggregation. Together, ICC1 (.21), ICC2 (.72), and rwg (0.78) support the use of multilevel modeling and aggregating individual-level data into group-level constructs.
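The reported indices can be reproduced from the variance components. The sketch below assumes that ICC2 was obtained with the Spearman-Brown step-up formula and that the average group size is 624 / 66 (the sample and institution counts given in the abstract); the source does not state which formula was used.

```python
def icc1(tau00, sigma2):
    """Proportion of total variance that lies between groups."""
    return tau00 / (tau00 + sigma2)


def icc2(icc1_value, mean_group_size):
    """Reliability of group means (Spearman-Brown step-up of ICC1)."""
    k = mean_group_size
    return k * icc1_value / (1 + (k - 1) * icc1_value)


tau00, sigma2 = 0.14, 0.52          # variance components reported for the null model
mean_group_size = 624 / 66          # assumed: respondents divided by institutions

icc1_value = icc1(tau00, sigma2)
icc2_value = icc2(icc1_value, mean_group_size)
print(f"ICC1 = {icc1_value:.2f}, ICC2 = {icc2_value:.2f}")
```

Under these assumptions both values round to the figures reported in the text (.21 and .72).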

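Level 1: $AP_{ij} = \beta_{0j} + r_{ij}$, with $r_{ij} \sim N(0, \sigma^2)$

Level 2: $\beta_{0j} = \gamma_{00} + u_{0j}$, with $u_{0j} \sim N(0, \tau_{00})$

Mixed Model: $AP_{ij} = \gamma_{00} + u_{0j} + r_{ij}$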

Random Coefficients Regression Model

The models are presented below, and results in Table 4 verify

Level 1:

Level 2:

Mixed Model:

Results of Random Coefficients Regression Model.

***p < .001. **p < .01. *p < .05.

To compare model specifications, we computed the Akaike information criterion (AIC) and the Bayesian information criterion (BIC). Relative to the simplified model, the full model with random slopes reduces AIC by 42.3 and BIC by 38.7. Reductions of this size indicate that the added complexity of the full model is justified by a substantially better fit to the nested data.

Direct and Indirect Effects

Table 5 shows that the total effect of AI adoption on academic performance is 0.16, with a direct effect of 0.19 and an indirect effect of −0.03. The mediating effects of Ind1 (AIA → ASE → AP), Ind2 (AIA → AID → AP), and Ind3 (AIA → ASE → AID → AP) are 0.11, −0.09, and −0.05, respectively. After 5,000 bootstrap iterations, the 95% confidence intervals of Ind1, Ind2, and Ind3 exclude 0, supporting

Test of Mediating Effect.

***p < .001. **p < .01. *p < .05.

A comparison of these standardized coefficients indicates that the positive mediating effect of self-efficacy (β = .19) slightly outweighs the adverse effect of dependency (β = −.16). The chain-mediating effect is the weakest of the three pathways (β = −.09). These differences in magnitude and direction highlight the balance between adaptive and maladaptive mechanisms in the relationship between AI adoption and academic performance.
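Two steps of the mediation analysis lend themselves to a short illustration: the decomposition of the total effect into direct and indirect components, and the percentile bootstrap used to test an indirect effect. The sketch below is a simplification that assumes a single mediator and synthetic data; it is not the chain-mediation model estimated in Mplus, and all function names are hypothetical.

```python
import random

# Decomposition using the effects reported in Table 5.
reported_indirect = {"Ind1": 0.11, "Ind2": -0.09, "Ind3": -0.05}
total_indirect = sum(reported_indirect.values())   # approximately -0.03
total_effect = 0.19 + total_indirect               # approximately 0.16


def slope_simple(x, y):
    """OLS slope of y regressed on x."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    return sxy / sxx


def slope_m_given_x(x, m, y):
    """OLS coefficient of m when y is regressed on both x and m."""
    n = len(x)
    mx, mm, my = sum(x) / n, sum(m) / n, sum(y) / n
    sxx = sum((a - mx) ** 2 for a in x)
    smm = sum((b - mm) ** 2 for b in m)
    sxm = sum((a - mx) * (b - mm) for a, b in zip(x, m))
    sxy = sum((a - mx) * (c - my) for a, c in zip(x, y))
    smy = sum((b - mm) * (c - my) for b, c in zip(m, y))
    return (smy * sxx - sxy * sxm) / (sxx * smm - sxm ** 2)


def indirect_effect(x, m, y):
    """Product of the x -> m path and the m -> y path (controlling for x)."""
    return slope_simple(x, m) * slope_m_given_x(x, m, y)


def bootstrap_ci(x, m, y, n_boot=1000, alpha=0.05, seed=0):
    """Percentile bootstrap confidence interval for the indirect effect."""
    rng = random.Random(seed)
    n = len(x)
    estimates = []
    for _ in range(n_boot):
        idx = [rng.randrange(n) for _ in range(n)]  # resample cases with replacement
        estimates.append(
            indirect_effect([x[i] for i in idx], [m[i] for i in idx], [y[i] for i in idx])
        )
    estimates.sort()
    lower = estimates[int((alpha / 2) * n_boot)]
    upper = estimates[int((1 - alpha / 2) * n_boot) - 1]
    return lower, upper


# Synthetic data with a true indirect effect of 0.5 * 0.4 = 0.2 (illustrative only).
data_rng = random.Random(42)
x = [data_rng.gauss(0, 1) for _ in range(300)]
m = [0.5 * xi + data_rng.gauss(0, 1) for xi in x]
y = [0.2 * xi + 0.4 * mi + data_rng.gauss(0, 1) for xi, mi in zip(x, m)]

point = indirect_effect(x, m, y)
lower, upper = bootstrap_ci(x, m, y, n_boot=500)
print(f"indirect = {point:.3f}, 95% CI = [{lower:.3f}, {upper:.3f}]")
```

An interval that excludes zero is read as evidence of mediation. The decomposition also shows why the total effect (0.16) is smaller than the direct effect (0.19): the two negative pathways through dependency partly offset the positive pathway through self-efficacy.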

Intercepts as Outcomes Model

The models are presented below, and Table 6 shows the results, verifying

Level 1:

Level 2:

Mixed Model:

Results of intercepts as outcomes model.

***p < .001. **p < .01. *p < .05.

Slope as Outcomes Model

The models are presented below, and the results in Table 7 verify

Level 1:

Level 2:

Mixed Model:

Results of Slope as Outcomes Model.

***p < .001. **p < .01. *p < .05.

The moderating effect of organizational AI readiness (OAR).

As summarized in Table 8, the hypothesis tests support the research model. The analysis confirms the direct effect of AI adoption, the parallel and sequential mediating roles of academic self-efficacy and AI dependency, and both the direct and moderating effects of organizational AI readiness on students’ academic performance.
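A cross-level moderation of this kind is conventionally written as a slopes-as-outcomes model. The sketch below assumes that AI adoption (AIA) is the only Level 1 predictor and organizational AI readiness (OAR) the only Level 2 predictor; control variables and centering choices are omitted, so it should be read as a generic form rather than the exact specification estimated in HLM 7.0.

```latex
\begin{aligned}
\text{Level 1:}\quad & AP_{ij} = \beta_{0j} + \beta_{1j}\,AIA_{ij} + r_{ij} \\
\text{Level 2:}\quad & \beta_{0j} = \gamma_{00} + \gamma_{01}\,OAR_{j} + u_{0j} \\
                     & \beta_{1j} = \gamma_{10} + \gamma_{11}\,OAR_{j} + u_{1j} \\
\text{Mixed:}\quad   & AP_{ij} = \gamma_{00} + \gamma_{01}\,OAR_{j} + \gamma_{10}\,AIA_{ij}
                       + \gamma_{11}\,OAR_{j}\,AIA_{ij} + u_{0j} + u_{1j}\,AIA_{ij} + r_{ij}
\end{aligned}
```

In this form, $\gamma_{01}$ is the direct association of organizational AI readiness with performance, and $\gamma_{11}$ is the cross-level interaction: a positive value means the slope of AI adoption is steeper in institutions with higher readiness.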

Results of Tests for All Hypotheses.

Note. A response of “Yes” indicates that the hypothesis is supported, while a response of “No” suggests that the hypothesis is not supported.

Discussion

The findings are robustly explained by SCT, validating its triadic reciprocity framework between behavior, personal factors, and environment in technology-enhanced learning. The positive correlation between AI adoption and academic performance (

The chain-mediating effect (

Organizational AI readiness solidifies the environmental component of the triad. Its direct association with performance (

In summary, this study empirically demonstrates the dynamic model of SCT: AI adoption correlates with academic performance through self-efficacy and dependency. The observed suppression effect highlights the need to model countervailing pathways, because omitting them can obscure the true benefits of AI adoption. These processes operate within the environmental context of organizational AI readiness, which exerts both direct and moderating influences on the overall relationship.

Theoretical Contribution

This study introduces two key theoretical innovations that advance the understanding of AI’s role in higher education learning processes.

First, it establishes a dual-mediating-pathway framework linking AI adoption to academic performance. While existing literature emphasizes AI’s positive correlations via metacognitive support (Wu et al., 2024), this research reveals that AI adoption is concurrently associated with both a positive pathway (through self-efficacy) and a negative pathway (through dependency). This model resolves apparent paradoxes by demonstrating how the same technology engages opposing psychological mechanisms (X. Huang et al., 2020; S. Zhang et al., 2024), offering a nuanced explanation for AI’s contradictory effects in university learning contexts.

Second, the study introduces a methodological innovation by addressing the multilevel nature of organizational influences. Prior research has relied on single-level analyses of organizational readiness, which limits the theoretical explanatory power (Alghazzawi, 2025; Frick et al., 2021; Issa et al., 2022; Jöhnk et al., 2021; Z. X. Zhang, 2010). This study empirically demonstrates that group-level differences in organizational AI readiness are significant, establishing the necessity of using HLM 7.0 to account for nested data structures. By modeling organizational readiness as a group-level construct, the study enhances the theoretical precision and explanatory power of technology adoption research in higher education.

Practical Implications

For educators, a structured pedagogical framework can move practice beyond binary views of AI, using technology to build student self-efficacy through scaffolded learning while limiting dependency risks. Select AI tools that offer feedback but gradually reduce support as students become more competent. Design assignments that prompt students to analyze AI-generated content, such as comparing AI-created harmonies with traditional works, and supplement these assignments with reflective journals. Include mandatory AI-free practice sessions to reinforce basic skills.

For students, develop AI literacy by using metacognitive practices. This approach boosts self-regulation and helps prevent dependence. Keep a log of AI interactions, noting how often you use AI, why you use it, and what results you get. This helps you see if AI really adds value to your learning. Try the 3B Framework: use AI briefly for inspiration, brainstorm independently, and then build without AI. Additionally, arrange structured peer reviews of how AI is utilized in creative projects to maintain a balanced level of engagement.

For university administrators, it is essential to build strong infrastructure and use resources effectively. Adopt a tiered technology acquisition model that prioritizes discipline-specific tools and tailored training. Establish an AI governance committee comprising faculty, IT staff, and students to ensure ethical oversight, and update guidelines as needed. Offer teaching innovation grants to support the integration of AI into teaching. Start with pilot departments and use train-the-trainer models for phased, affordable deployment.

Limitations and Future Directions

This study has several limitations. First, the cross-sectional design limits the examination of dynamic relationships among variables and precludes causal inference. Longitudinal research is needed to establish how these relationships unfold over time. Second, the study examined only a subset of relevant variables. Future research should investigate additional mediating or moderating factors from alternative theoretical perspectives to elucidate the formation pathways and internal mechanisms of academic performance. Third, because the study used data from China, the conclusions might be influenced by cultural specificities. Cross-cultural research is recommended for future studies. Fourth, reliance on self-reported measures for key constructs might introduce social desirability bias. Future studies could adopt mixed methods, such as behavioral observations, peer assessments, or longitudinal tracking of learning behaviors, to improve data triangulation and validity.

Conclusions

This study elucidates the dual pathways through which AI adoption influences academic performance in music education. The results demonstrate a significant positive correlation between AI adoption and academic performance, mediated by both academic self-efficacy and AI dependency, which operate as sequential mediators in a chain-mediating mechanism. Furthermore, organizational AI readiness directly enhances academic performance and positively moderates the relationship between AI adoption and performance. These findings extend existing theories in technology-enhanced music education by revealing coexisting enabling and constraining mechanisms, while providing actionable insights for optimizing AI adoption strategies in higher music education systems through balanced cultivation of self-efficacy and dependency management.

Footnotes

Ethical Considerations

This study strictly adhered to internationally recognized ethical standards and complied with all relevant Chinese regulatory requirements. The research protocol, involving human participants, received full ethical approval (Approval No.: EA-2024-024) from the Institutional Review Board at Pingdingshan University’s School of Economics and Management.

Consent to Participate

Before participating, all individuals involved in the study gave written informed consent, confirming their voluntary participation and understanding of the research procedures.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

The datasets used and/or analyzed during the current study are available from the corresponding author on reasonable request.