Abstract

Generative Artificial Intelligence (GenAI) is exerting an increasingly profound influence on education. However, existing research lacks a systematic investigation into postgraduate students’ behavioral patterns and the mechanisms that influence their use of GenAI in academic writing. Addressing this gap, the first phase of this study analyzed 49 qualitative responses from Chinese postgraduates, identifying six key constructs: perceived usefulness, facilitating conditions, information privacy risk, security risk, attitude, and distrust. In the second phase, drawing on the Technology Acceptance Model (TAM) and Distrust Theory, Structural Equation Modeling (SEM) was conducted with 382 survey responses. The findings reveal that attitude, facilitating conditions, perceived usefulness, and security risk are significant direct predictors of intention to use GenAI. Attitude fully mediates the effect of distrust and partially mediates the effects of facilitating conditions, perceived usefulness, and security risk. The model explains 67.9% of the variance in usage intention. Highlighting attitude and risk perception as key mechanisms, this study contributes by integrating TAM with Distrust Theory, providing novel evidence from the Chinese postgraduate context and offering practical implications for higher education.

Plain Language Summary

Artificial intelligence (AI) tools such as ChatGPT are increasingly used in universities for writing support. Yet we know little about how students in different cultural and educational contexts decide whether to use them. This study explores how Chinese postgraduate students view the benefits and risks of using AI for academic writing. We combined open-ended written questionnaires with a large student survey to understand their choices. Students saw clear benefits: AI can speed up writing, help with grammar, and suggest ideas. But they also reported concerns. Two main risks stood out. Security risks (such as inaccurate or unreliable outputs) worried them more than privacy risks (such as data collection), because security problems directly threatened the quality of their work. Many students also expressed distrust, often based on past negative experiences, but this tended to affect their attitudes rather than directly stopping them from using AI. The survey confirmed these insights. Students’ attitudes (whether they felt positive or negative about AI) were the strongest factor shaping their intention to use these tools. Perceived usefulness and supportive study conditions (like good internet access) encouraged adoption, while security concerns reduced trust and confidence. Overall, the study shows that students weigh the value of efficiency against the risks of reliability when deciding to use AI. These findings suggest that universities should provide clear guidance, technical support, and ethical safeguards to help students use AI responsibly and effectively in academic writing.

Keywords

Introduction

With the rapid advancement of artificial intelligence technologies, generative artificial intelligence (GenAI) has been increasingly integrated into higher education, offering personalized learning support and driving innovation in academic writing and research (Ruiz-Rojas et al., 2023). Powered by deep learning and natural language processing, GenAI can generate text, code, and multimedia content, and perform semantic understanding and contextual reasoning. These capabilities have demonstrated significant advantages in supporting academic writing, research data analysis, and instructional design (Baidoo-anu & Owusu Ansah, 2023; Tate et al., 2023). In China, higher education institutions are increasingly recognizing the potential of GenAI in educational applications. However, challenges such as strict academic integrity policies, data privacy concerns, and low levels of technological trust contribute to varying degrees of acceptance among postgraduate students. Despite the growing interest, there remains a notable research gap regarding the application of GenAI in academic writing among Chinese postgraduate students, particularly in terms of its usage patterns, influencing factors, and behavioral intentions (L. Wang & Ren, 2024).

Existing studies have demonstrated that GenAI is widely utilized in various educational contexts, including language learning (Ma, 2024), academic writing (Jin et al., 2025), the development of critical thinking skills (Essien et al., 2024), and research supervision (Y. Dai et al., 2023).

In addition, prior studies have employed diverse approaches, including regression analysis, thematic analysis, and neural networks, to examine students’ intention to use GenAI (Derakhshan, 2025; Ilic et al., 2024; Ma, 2024). Building on this work, the present study employs the TAM, complemented by Distrust Theory, to examine Chinese postgraduates’ intention to use GenAI in academic writing.

The complex interplay between technology trust, distrust, and user behavior has not been sufficiently explored in the current literature. Concerns over academic misconduct, insufficient content credibility, and data privacy risks have intensified postgraduates’ distrust of GenAI technologies (Strachan et al., 2024). While Distrust Theory has been widely applied in business and information technology, its use in educational settings remains limited. Therefore, integrating TAM with Distrust Theory offers a more comprehensive framework for understanding the complex factors that shape postgraduates’ intention to use GenAI in academic contexts.

This study seeks to answer the following research questions:

What factors affect Chinese postgraduate students’ intention to use GenAI tools in academic writing?

Based on the TAM and Distrust Theory, through which underlying mechanisms (including both direct and indirect effects) do these factors affect postgraduates’ intention to use GenAI in academic writing?

To what extent do these factors account for the variance in Chinese postgraduate students’ intention to use GenAI tools for academic writing?

Literature Review

Chinese Postgraduates and GenAI

Generative artificial intelligence (GenAI) is now widely applied in higher education, particularly in academic writing and research support. Among Chinese postgraduates, GenAI tools such as ChatGPT and DeepSeek are commonly used to improve linguistic accuracy, writing fluency, and research productivity (Anderson et al., 2025; Rasul et al., 2023). However, students also express concerns regarding data privacy, information reliability, and ethical use (K. Dai & Liu, 2024; B. Liu, 2023). Empirical studies show that GenAI can assist in research design, data analysis, and workflow automation, reducing cognitive load and enhancing performance (Shahzad et al., 2025; H. Wang et al., 2023). Yet, risks related to misinformation, plagiarism, and algorithmic bias remain significant barriers (Allen & Jevons, 2023; Bittle & El-Gayar, 2025).

Overall, existing research presents a dual picture of opportunities and challenges. On the one hand, perceived usefulness, institutional support, and learning efficiency encourage adoption; on the other, privacy risk, security concerns, and low trust inhibit use. Most previous studies treat these factors separately, focusing on isolated aspects such as language learning or data security. Few have proposed a unified theoretical explanation that simultaneously captures the motivational and psychological mechanisms driving postgraduates’ intention to use GenAI. Addressing this gap, the present study integrates the Technology Acceptance Model (TAM) and Distrust Theory to develop a comprehensive framework linking positive and negative determinants of adoption.

Integration of TAM and Distrust Theory

The Technology Acceptance Model (TAM) provides a foundational lens for understanding users’ behavioral intentions toward new technologies. It posits that perceived usefulness and perceived ease of use shape users’ attitudes and, consequently, their intention to adopt technology (Davis, 1989; Venkatesh & Davis, 2000). In educational contexts, TAM has been extended to include contextual variables such as environmental support, trust, and self-efficacy (Alyoussef, 2021; Ma, 2024; Tseng et al., 2022). These extensions emphasize that students’ acceptance of technology is not only cognitive but also shaped by institutional and technical conditions. In postgraduate education, perceived usefulness captures students’ belief that GenAI enhances research and writing efficiency, while facilitating conditions represent the availability of supportive infrastructure and resources. Consistent with qualitative evidence, usability concerns were minimal, so perceived ease of use was excluded to maintain parsimony.

While TAM emphasizes enablers of technology use, Distrust Theory explains the psychological barriers that discourage adoption. Distrust is a multidimensional construct encompassing cognitive skepticism, emotional resistance, and behavioral avoidance (Dimoka, 2010; Lewicki & Bunker, 1996). In the GenAI context, distrust arises from doubts about content reliability, transparency, and accountability. Perceived information privacy and security risks are primary sources of such distrust (Y. Liu et al., 2024; Pavlou et al., 2007). Privacy risk concerns unauthorized data collection or misuse of academic materials, whereas security risk involves system vulnerabilities and misinformation that undermine the credibility of AI-generated content (Strachan et al., 2024). These risks heighten users’ vigilance and anxiety, weakening trust and lowering their willingness to use GenAI for academic purposes.

Integrating TAM and Distrust Theory enables a more balanced understanding of technology adoption by capturing both enabling and inhibiting mechanisms. Perceived usefulness, attitude, and facilitating conditions represent motivational drivers, while perceived privacy risk, security risk, and distrust function as psychological barriers. This integration aligns with calls to extend traditional acceptance models by incorporating emotional and risk-based factors, especially in contexts where data sensitivity and academic integrity are paramount (Fan et al., 2021; Ying et al., 2021). In this study, the two perspectives are combined into a unified framework in which postgraduates’ adoption of GenAI is viewed as a value–risk trade-off: functional gains and institutional support promote positive attitudes, whereas distrust and perceived risks suppress them.

Usage Intention

Usage intention is the most immediate predictor of actual behavior across major acceptance frameworks, including TAM, the Unified Theory of Acceptance and Use of Technology (UTAUT), and the Theory of Planned Behavior (Ajzen, 1991; Venkatesh et al., 2003). It reflects the strength of individuals’ motivation to perform a behavior and is shaped by both cognitive and affective evaluations—such as perceived usefulness, attitude, and perceived risk (J. F. Hair et al., 2019; Zhang et al., 2024). In the educational domain, positive perceptions of usefulness and institutional support tend to enhance students’ intention to adopt digital tools, while distrust and risk perception can weaken it (Chin et al., 2022; Dehghani et al., 2023).

Despite growing attention to GenAI in education, few studies have systematically examined postgraduate students’ behavioral mechanisms, especially in academic writing. Most prior work focuses on general learning or relies on single-method designs, leaving the interplay between motivational and risk-related factors underexplored (Creswell & Clark, 2017; Jin et al., 2025). By integrating TAM and Distrust Theory within a sequential mixed-method design, this study examines how enabling and inhibiting factors jointly shape Chinese postgraduates’ intention to use GenAI tools in academic writing.

Phase One: Thematic Analysis

Building on these theoretical foundations and research gaps, the present study adopts a two-phase design to systematically investigate Chinese postgraduates’ use of GenAI in academic writing. The next section introduces the research methodology, beginning with Phase One (qualitative) and followed by Phase Two (quantitative).

Methodology

This study adopted a sequential exploratory mixed-method design, in which an initial qualitative phase is followed by a quantitative phase (Creswell & Clark, 2017; Ivankova et al., 2006). In this approach, the qualitative findings serve as the foundation for developing the conceptual framework and measurement instruments used in the subsequent quantitative analysis. The rationale for choosing this design lies in the complexity of the research problem: understanding postgraduate students’ intention to use GenAI in academic writing requires not only identifying key constructs through in-depth exploration but also testing their relationships within a larger population. By integrating qualitative insights with quantitative validation, the study enhances both the depth and generalizability of the findings.

Participants and Data Collection

In the first phase, a stratified random sampling technique was employed to select participants.

First, all postgraduate students at Jiangxi Science and Technology Normal University were categorized by academic year using the university’s Learning Management System. Then, 21 students were randomly selected from each academic year based on their student ID numbers, resulting in a total sample of 63 participants. This sampling approach ensured that each year level was equally represented. Jiangxi Science and Technology Normal University was selected because it provides a diverse academic environment for postgraduate education. However, as the data were collected from a single institution, the representativeness of the sample may still be limited.
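The selection procedure described above (an equal random draw of 21 students from each academic-year stratum) can be sketched as follows. This is only an illustrative sketch: the roster, the ID format, the stratum sizes, and the function name `stratified_sample` are hypothetical and do not come from the study.

```python
import random


def stratified_sample(students_by_year, per_stratum, seed=None):
    """Draw the same number of student IDs at random from every stratum."""
    rng = random.Random(seed)  # local generator so the draw is reproducible
    sample = {}
    for year, ids in students_by_year.items():
        if len(ids) < per_stratum:
            raise ValueError(f"stratum {year!r} has fewer than {per_stratum} students")
        # random.sample draws without replacement
        sample[year] = sorted(rng.sample(ids, per_stratum))
    return sample


# Hypothetical roster: three academic years with made-up IDs and sizes.
roster = {
    "year_1": [f"Y1-{i:03d}" for i in range(1, 121)],
    "year_2": [f"Y2-{i:03d}" for i in range(1, 101)],
    "year_3": [f"Y3-{i:03d}" for i in range(1, 91)],
}

selected = stratified_sample(roster, per_stratum=21, seed=42)
total = sum(len(ids) for ids in selected.values())
print(total)
```

Because the same number is drawn from every stratum regardless of its size, this is equal allocation; a proportional allocation would instead scale each draw to the stratum's share of the population.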

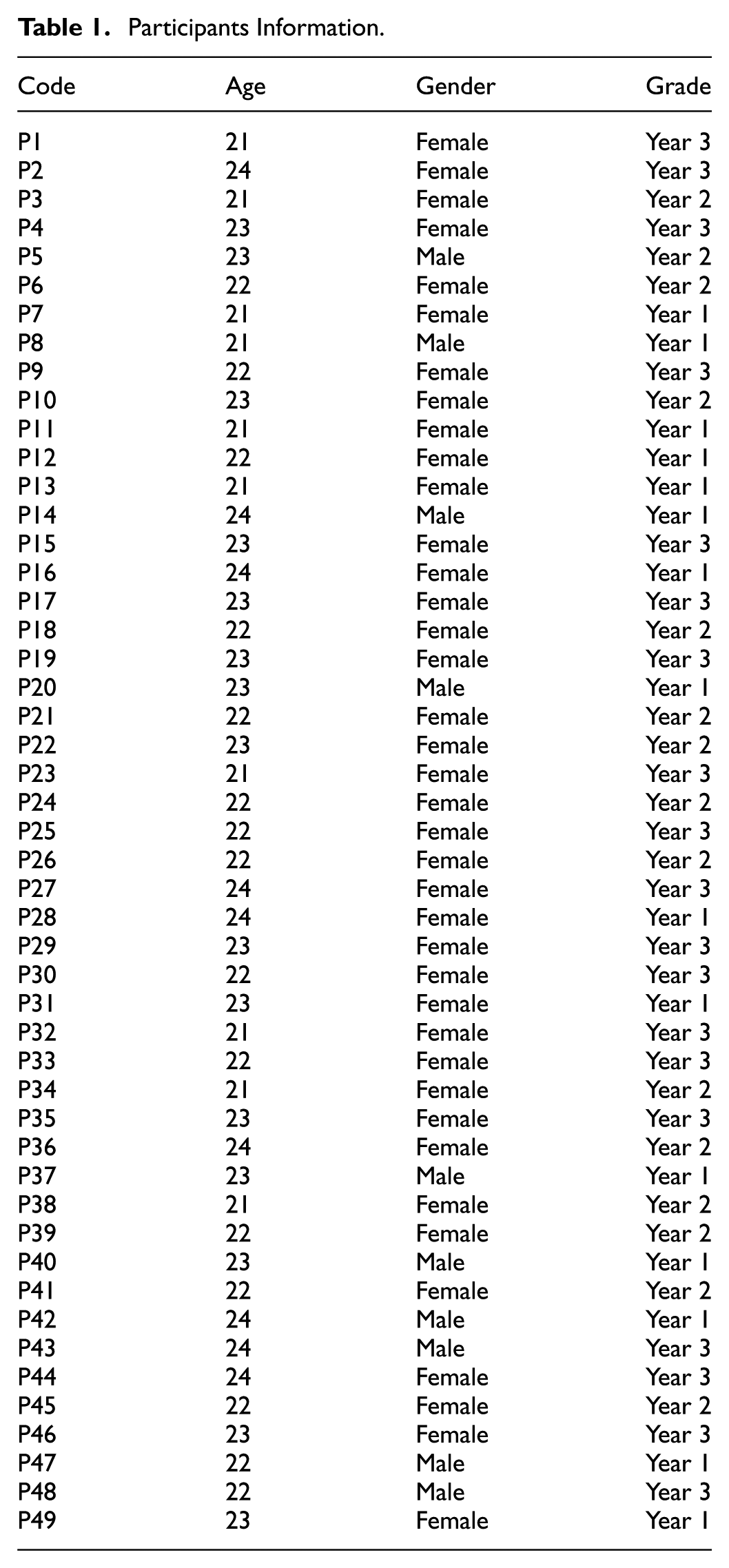

Before completing the questionnaire, participants were informed about the purpose and scope of the study and were assured that their privacy would be fully protected. Each participant signed a handwritten informed consent form indicating their agreement to participate in the research. Participants retained the right to withdraw at any time during the questionnaire process; however, once withdrawn, they could not return to complete the questionnaire.

Open-ended responses were collected using paper-based questionnaires rather than semi-structured interviews, for two reasons. First, paper questionnaires are more cost-effective and do not require trained interviewers. They can also be distributed to multiple respondents simultaneously, saving time and resources (Dillman et al., 2014). Second, paper-based data collection eliminates potential interviewer bias, allowing respondents to express their views more freely without being influenced by the interviewer’s attitudes or behaviors (Bradburn et al., 2004).

The questionnaire was administered in Chinese and took approximately 140 min to complete. During the session, participants were prohibited from using electronic devices, searching for information, or seeking outside assistance. To reduce respondent fatigue over this extended completion time, clear instructions and optional short breaks were provided. A total of 63 responses were collected. Following established practices in qualitative research (Burchett, 2014), 14 responses were excluded based on transparent and rigorous criteria: (a) the respondent had never used GenAI; (b) responses were overly brief and lacked meaningful information; or (c) responses were irrelevant to the research topic. These criteria ensured that the remaining 49 responses provided sufficient depth and relevance for thematic analysis.
Table 1 summarizes the participants’ demographics: all were aged 21 to 24 years, with 10 males and 39 females, drawn from three different postgraduate grade levels to ensure representativeness across cohorts.

Table 1. Participant Information.

Instruments

The data collection instrument consisted of two main sections. The first section gathered demographic information, including participants’ gender, age, academic discipline, place of origin, and average daily duration of GenAI use. The second section comprised four open-ended questions to elicit detailed insights into participants’ experiences with GenAI in academic writing. The specific questions were as follows: (1) What impact do you think GenAI tools have had on your academic writing? (2) What tasks do you use AI tools for in your typical academic writing process? (3) In what ways do you think AI has supported your academic writing? (4) In what ways do you think AI has hindered your academic writing?

These four guiding questions were designed specifically for the qualitative phase. They serve as exploratory prompts to generate empirical insights that contribute to answering the three overarching research questions introduced in the Introduction. More specifically, they were developed to systematically explore postgraduate students’ behavioral patterns and experiences using GenAI tools during academic writing. By addressing both the supportive and obstructive aspects of GenAI use, the study aimed to provide a balanced understanding of its practical roles and influencing factors in academic learning and research.

Data Analysis

The qualitative data were analyzed using thematic analysis (Braun & Clarke, 2006; Rubin & Rubin, 2005), a flexible method widely used in education and technology studies for identifying recurring patterns (Brooks & Young, 2015). Its flexibility makes it suitable for exploring the complex interplay among technological applications, psychological responses, and learning outcomes in the context of GenAI for academic writing.

The analysis proceeded through a three-phase coding process. First, two researchers independently coded all 49 responses and generated preliminary codes. Second, they discussed divergences and refined the coding scheme. Third, an external expert reviewed the coding structure and provided feedback, leading to the final coding manual. To assess reliability, inter-coder consistency was calculated: Cohen’s Kappa reached 0.82 in the first round and 0.91 after refinement, both within the range (0.81 to 1.00) that Landis and Koch (1977) describe as almost perfect agreement.
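For readers unfamiliar with the statistic, Cohen’s Kappa compares the observed agreement between two coders with the agreement expected by chance, given each coder’s label frequencies. The sketch below is a minimal illustration under stated assumptions: the code labels (`PU`, `FC`, `SR`, `DT`) and the eight example segments are hypothetical and are not the study’s data, and the function names are introduced here for illustration only. The interpretation bands follow Landis and Koch (1977).

```python
from collections import Counter


def cohens_kappa(coder_a, coder_b):
    """Cohen's kappa for two coders who labelled the same segments."""
    if len(coder_a) != len(coder_b) or not coder_a:
        raise ValueError("both coders must label the same non-empty set of segments")
    n = len(coder_a)
    # Observed agreement: share of segments given the same code by both coders.
    observed = sum(a == b for a, b in zip(coder_a, coder_b)) / n
    # Chance agreement: product of the coders' marginal proportions per code.
    freq_a, freq_b = Counter(coder_a), Counter(coder_b)
    expected = sum(freq_a[code] * freq_b[code] for code in freq_a) / (n * n)
    if expected == 1.0:  # both coders used one identical code throughout
        return 1.0
    return (observed - expected) / (1 - expected)


def landis_koch_band(kappa):
    """Verbal label for a kappa value, after Landis and Koch (1977)."""
    if kappa < 0:
        return "poor"
    if kappa <= 0.20:
        return "slight"
    if kappa <= 0.40:
        return "fair"
    if kappa <= 0.60:
        return "moderate"
    if kappa <= 0.80:
        return "substantial"
    return "almost perfect"


# Hypothetical codes assigned by two coders to eight text segments.
coder_a = ["PU", "PU", "FC", "SR", "DT", "PU", "FC", "SR"]
coder_b = ["PU", "PU", "FC", "SR", "DT", "FC", "FC", "SR"]

kappa = cohens_kappa(coder_a, coder_b)
print(round(kappa, 3), landis_koch_band(kappa))
```

In practice a library routine such as scikit-learn’s `cohen_kappa_score` would normally be used; the hand-written version is shown only to make the chance-correction step explicit.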

Open coding was applied, with text segments assigned descriptive codes (e.g., “automatically detecting grammar errors and offering revision suggestions” → grammar proofreading). Related codes were then grouped into categories and themes. For transparency, a representative coding framework is presented in Table 2 (Findings), where excerpts are aligned with codes, sub-themes, and themes. All responses were originally in Chinese and translated into English by bilingual researchers, with independent cross-checking to ensure accuracy and minimize bias.

Table 2. Representative Thematic Coding Framework.

Findings

The thematic analysis provided valuable insights into the factors influencing postgraduate students’ intention to use GenAI in academic writing. The results revealed six major constructs: perceived usefulness, facilitating conditions, information privacy risk, security risk, attitude, and distrust. Notably, perceived ease of use did not emerge as a salient construct in the qualitative phase.

Salient Role of Perceived Usefulness

Perceived usefulness, a concept from the Technology Acceptance Model (Davis, 1989), refers to the degree to which an individual believes a technology will enhance performance. In this study, it captures postgraduate students’ perceptions of how GenAI supports their academic writing. Overall, students acknowledged the supportive role of GenAI in facilitating research and writing tasks. As illustrated by one participant:

When writing my thesis, GenAI helps me find relevant references more quickly and even includes links to the original websites for easy verification. (P3)

Students also emphasized that GenAI can tailor research plans to individual learning needs, improving efficiency and organization:

The personalized research plan it provided helped me allocate my time more effectively and focus on areas where I’m weaker. The entire process felt much more organized. (P7)

Finally, GenAI was valued for its data-processing capabilities, which aided analysis and reporting:

When analyzing experimental data, I often struggle to create charts independently. GenAI can quickly process the files and generate the necessary graphs, allowing me to easily identify data patterns and save much time writing the report. (P22)

Together, these excerpts illustrate that postgraduate students consistently valued GenAI for improving efficiency, broadening academic perspectives, and facilitating collaboration, which collectively define the construct of perceived usefulness in this study.

Facilitating Conditions

Facilitating conditions, a construct originally proposed in technology acceptance research (Venkatesh & Davis, 2000), refer to the degree to which an individual believes that adequate institutional and technical infrastructure exists to support use. In the present study, this concept is applied to the availability and reliability of infrastructure for GenAI-assisted academic writing. Postgraduate students expressed strong concerns about the availability and reliability of such support systems. Network connectivity emerged as one of the most pressing issues. Many students feared that poor internet conditions could disrupt their use of GenAI tools, compromising the efficiency of their research and writing processes. As one participant explained:

I’m worried that poor internet conditions will affect my ability to use GenAI tools. If the connection is unstable, it might interrupt operations and even delay my research progress and reduce the quality of my results. (P4)

Students also demonstrated a clear awareness of the limitations of current technical support systems. Frequent technical failures and the difficulty of resolving these issues posed significant challenges. Participants reported that when technical problems arise, support is often slow or ineffective, adding to their frustration. This was reflected in their comments:

In this era of advanced technology, technical support is critical. But when dealing with complex GenAI tools, we frequently encounter technical issues, and resolving them is both difficult and costly. It’s frustrating and discouraging. (P22)

In sum, participants’ concerns about infrastructure, connectivity, and technical support underscore that facilitating conditions are critical contextual factors shaping their ability and willingness to adopt GenAI in academic writing.

Concerns About Information Privacy Risk

Information privacy risk refers to concerns about the unauthorized collection, disclosure, or misuse of personal or academic data (Pavlou et al., 2007). It highlights users’ worries that their sensitive information, such as drafts or research materials, might be exposed or exploited without consent. In this study, it is extended to concerns about privacy threats when postgraduate students use GenAI tools for academic writing. Data leakage emerged as one of their most serious worries, as students feared that sensitive information could be illegally obtained through such tools, posing threats to their personal rights and academic reputations. As expressed by one respondent:

I’m deeply concerned that my personal information could be leaked. If that happens, it might lead to harassment or even damage my academic reputation and future career prospects. (P18)

Students also showed a strong awareness of the fragility of current information security systems. The frequency of privacy breaches, combined with the complex and challenging process of defending one’s rights, contributed to their anxiety. They cited challenges such as difficulties in collecting evidence and unclear legal frameworks. As emphasized by students:

In this digital era, privacy protection is crucial. But with the power of advanced AI technologies, our data seems increasingly vulnerable. Defending our rights after a privacy breach is so costly and complicated—it makes us feel powerless. (P25)

Taken together, these narratives highlight that fears of data leakage and insufficient legal protection are central to students’ perception of information privacy risk when using GenAI.

Security Risk

Security risk refers to risks stemming from the technical side of GenAI tools, including vulnerabilities, hacking, operational failures, or inaccurate outputs that compromise the reliability and safety of the system (Y. Liu et al., 2024). Unlike information privacy risk, which focuses on unauthorized data disclosure or misuse, security risk reflects concerns about the robustness and dependability of GenAI technologies. Several participants reported experiences of being misled by inaccurate content, which caused deviations in their research direction and resulted in considerable loss of time and effort. One participant recalled:

While writing my thesis, I relied on materials provided by GenAI, but the serious flaws made me doubt whether the system itself was reliable and safe for academic use. (P21)

Logical inconsistencies and biased data further undermined the accuracy of knowledge construction, impairing students’ ability to make sound judgments and impeding academic progress. As highlighted in this case:

The logical flaws in GenAI outputs not only disrupted my analysis but also raised doubts about the system’s stability and security in supporting academic research. (P24)

Overall, the students’ experiences of misinformation, logical flaws, and biased outputs reveal that security risk extends beyond data safety to the reliability and accuracy of GenAI outputs, directly influencing academic trustworthiness.

Attitude

Attitude, a fundamental construct in social psychology (Garner et al., 2011), refers to an individual’s positive or negative evaluation of performing a behavior. In this study, it is applied to examine postgraduate students’ evaluation of adopting GenAI for their academic writing tasks.

Positive Attitude

Overall, most postgraduate students held a positive attitude toward GenAI tools, recognizing the convenience and efficiency they bring. Students believed that GenAI significantly reduced the time and effort required for academic tasks, greatly enhancing research and writing productivity and enabling them to accomplish more within limited timeframes. As one student affirmed:

GenAI helps me quickly retrieve information and analyze problems when dealing with complex academic tasks. It has improved my writing efficiency and given me more time for in-depth research. (P12)

In addition, students acknowledged that GenAI contributes to developing their academic skills, including creative thinking, data analysis, and interdisciplinary knowledge integration. They believed these benefits support their scholarly work and provide a strong foundation for future career development. This was evidenced in participants’ reflections:

Interacting with GenAI has taught me to approach problems from different angles, boosting my creative thinking in ways that have proven extremely valuable for my academic research. (P16)

Negative Attitude

Despite these advantages, some students also expressed reservations and concerns about GenAI. They worried about potential errors related to data security issues, such as data leakage or manipulation. These concerns were perceived as serious threats to personal privacy and the integrity of academic work. As a participant cautioned:

If my research data were leaked or tampered with, the consequences would be devastating. It could completely undermine the credibility of my work and even cause irreversible damage to my academic career. (P25)

Others highlighted the risks of overreliance on GenAI in academic contexts:

Relying too much on GenAI makes me feel that my originality and creativity are being limited. It can reduce my motivation to think independently. (P41)

These reflections show that while many postgraduates held positive attitudes toward GenAI due to its efficiency and skill-enhancing potential, lingering concerns about data safety and overreliance tempered their enthusiasm, reflecting a nuanced evaluation of its adoption.

Distrust

Distrust, a construct originally conceptualized in information systems research (Harrison McKnight & Chervany, 2001), refers to a lack of trust accompanied by skepticism or vigilance. In this study, it is used to explain postgraduate students’ hesitation and lack of confidence in GenAI, which some students attributed primarily to past negative experiences. One of the main contributors to this distrust was misinformation. Students who had previously been misled by inaccurate content began questioning the reliability of GenAI tools. As recounted by one student:

I once made a wrong decision based on the information it provided, which made me seriously doubt its reliability. Now, I’m hesitant to trust anything it suggests. (P41)

In addition, poor service quality—such as slow response times and limited functionality—and frequent technical issues, including system crashes and incorrect outputs, further eroded students’ trust in GenAI. This was illustrated by a respondent’s experience:

When I urgently needed help, its slow and often incorrect responses disappointed me and gradually eroded my trust. (P34)

In summary, repeated negative experiences, misinformation, and technical failures contributed to students’ distrust, indicating that distrust functions as a psychological barrier that may indirectly reduce their intention to use GenAI.

Overall, the qualitative findings provide preliminary answers to the overarching research questions. First, the findings identified six key constructs influencing Chinese postgraduates’ intention to use GenAI in academic writing: perceived usefulness, facilitating conditions, and attitude as enablers, and information privacy risk, security risk, and distrust as inhibitors. Second, the narratives suggested that perceived usefulness and facilitating conditions primarily shaped intention through their positive effects on attitude, while privacy and security risks elevated distrust and weakened attitudes; distrust therefore emerged as an indirect rather than direct determinant of intention. Third, although the qualitative phase cannot estimate explanatory power, it specifies the relevant constructs and directional linkages, laying the foundation for the quantitative analysis in Phase Two, where the variance explained in intention will be systematically evaluated.

Building on these qualitative insights, Phase Two operationalizes the six identified constructs and uses PLS-SEM to test their direct, indirect, and mediating effects on postgraduate students’ intention to use GenAI in academic writing.
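The distinction between direct, indirect, and mediating effects can be made concrete with a simplified single-mediator decomposition. The notation below is an assumption introduced for illustration and is not taken from the study: $X$ stands for any one antecedent (for example, distrust), $M$ for attitude, $Y$ for usage intention, and $a$, $b$, $c'$ for standardized path coefficients. The actual model contains several antecedents at once, so this is a sketch of the logic rather than the estimated model.

```latex
\begin{align}
M &= a\,X + \varepsilon_M, \\
Y &= c'\,X + b\,M + \varepsilon_Y, \\
\text{indirect effect} &= a\,b, \qquad
\text{total effect} = c' + a\,b, \\
R^2_Y &= 1 - \frac{\sum_i (y_i - \hat{y}_i)^2}{\sum_i (y_i - \bar{y})^2}.
\end{align}
```

Under this notation, full mediation corresponds to a significant indirect effect $a\,b$ with a direct effect $c'$ that is not distinguishable from zero, whereas partial mediation corresponds to both being significant. $R^2_Y$ is the share of variance in usage intention accounted for by the model.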

Phase Two: Quantitative Study

Research Hypotheses

In the second phase, this study integrates TAM and Distrust Theory as the theoretical foundation, incorporating six key variables identified in Phase 1 to construct a hypothesized model. The quantitative model operationalizes these qualitative themes as antecedents and introduces Usage Intention as the dependent variable. Building on the literature review, TAM is applied to explain adoption drivers and Distrust Theory to capture trust-related barriers, providing a comprehensive lens for understanding postgraduates’ willingness to use GenAI in academic writing (Saif et al., 2024; Strachan et al., 2024). The hypothesized model is illustrated in Figure 1.

Figure 1. Hypothesized model.

To address the research questions systematically, the qualitative findings from Phase 1 were translated into measurable constructs. Each construct corresponds to a dimension of the research questions, while the hypotheses specify the expected relationships among these constructs. This approach ensures that the broad research questions are examined through a structured, testable model, thereby enhancing the logical progression from qualitative exploration to quantitative validation.

Previous studies have consistently shown that perceived usefulness significantly influences user attitude (Eksail & Afari, 2020; D. Huang & Chueh, 2022; Jou et al., 2024). For example, based on the TAM, Eksail and Afari (2020) investigated the factors influencing pre-service teachers’ intention to use computer technologies. Their results indicated that perceived usefulness was a key predictor of attitude. Similarly, D. Huang and Chueh (2022) employed meta-analysis and SEM to examine the determinants of mobile application usage intention. Their findings confirmed that perceived usefulness significantly affected users’ attitudes toward adopting mobile apps. In the context of academic writing, if postgraduate students perceive GenAI tools as useful in improving efficiency, enhancing creativity, and supporting research tasks, they are more likely to form favorable attitudes toward their use. Based on these findings, the following hypotheses are proposed:

Previous studies have also suggested that perceived usefulness plays a critical role in reducing skepticism and distrust (Dimoka, 2010; Fan et al., 2021; Liotti et al., 2023). For instance, Dimoka (2010) showed that higher functional value mitigates users’ cognitive skepticism and behavioral avoidance, both of which are central components of distrust. Similarly, Liotti et al. (2023) emphasized that when individuals perceive digital technologies as beneficial in supporting their tasks, their emotional resistance and suspicion decrease, thereby lowering overall distrust. In the context of academic writing, if postgraduate students perceive GenAI tools as useful for improving efficiency, enhancing creativity, and supporting research tasks, their distrust toward such tools is expected to decline. Conversely, when perceived usefulness is low, skepticism and resistance may increase, leading to higher levels of distrust. Based on this rationale, the following hypothesis is proposed:

In addition, existing literature identifies facilitating conditions as a key predictor of attitude (Abbad, 2021; Jou et al., 2024; Zerbini et al., 2022). For example, Abbad (2021) analyzed self-reported data from 370 university students in Jordan to explore factors influencing their adoption of e-learning systems. The results indicated that students who perceived adequate access to resources and technical support developed more positive attitudes and stronger usage intentions. Similarly, Zerbini et al. (2022) conducted a comprehensive meta-analysis to examine the drivers of online shopping behavior. Their findings revealed that better facilitating conditions led to more positive attitudes and stronger consumer behavioral intentions. Based on this evidence, the following hypotheses are proposed:

Prior research has identified a significant relationship between facilitating conditions and distrust (Pienwisetkaew et al., 2023; Sarfraz et al., 2022; Yuen et al., 2023). For example, Sarfraz et al. (2022) found that insufficient facilitating conditions can heighten students’ distrust toward technology, ultimately reducing their acceptance of e-learning systems. Using the UTAUT2, Pienwisetkaew et al. (2023) investigated behavioral intentions related to mobile technologies for agricultural waste valorization and concluded that robust facilitating conditions can help alleviate user distrust, especially among older adults. Based on this evidence, the following hypothesis is proposed:

Studies have shown that when individuals perceive high information privacy risk and consequently experience distrust, their intention to adopt technology decreases significantly (Chen & Zhao, 2023; Chin et al., 2022; Mashatan et al., 2022). For instance, Chin et al. (2022), drawing on the Theory of Reasoned Action and Motivated Avoidance Theory, analyzed responses from 234 participants and found that perceived privacy concerns negatively affected users’ trust in mobile payment systems, which in turn reduced their usage intention. Similarly, Mashatan et al. (2022) explored trust in encrypted payment systems and found that perceived information privacy risk was a major factor in shaping trust, and that higher trust significantly enhanced adoption intentions. Based on these results, this study posits that postgraduate students’ concerns about personal privacy in academic writing will negatively impact their trust in GenAI, influencing their attitudes and behavioral intentions. Conversely, when privacy concerns are low, distrust diminishes, increasing the intention to use. Accordingly, the following hypotheses are proposed:

Building on the qualitative findings (see Section 3.2), this study distinguishes information privacy risk—concerns about unauthorized data disclosure—from security risk—concerns about system vulnerabilities and unreliable outputs. Prior studies further suggest that security concerns play a critical role in shaping users’ trust and attitudes toward technology (Chin et al., 2022; Dehghani et al., 2023; D. Huang & Chueh, 2022). For instance, D. Huang and Chueh (2022) demonstrated that perceived security risk had a significant negative impact on user attitudes and further increased levels of distrust. Based on these results, the following hypotheses are proposed:

Previous research has established that attitude is critical in shaping usage intentions (Chai et al., 2023; Hung, 2021; Sadiq et al., 2023). For example, Hung (2021) analyzed self-reported data from 276 users of medical robots in Taiwan and found that positive attitudes significantly predicted usage intention. Similarly, Chai et al. (2023), drawing on the TAM and the TPB, investigated remote work adoption during the COVID-19 pandemic and found that individual attitude significantly promoted the adoption of remote technologies. Accordingly, this study proposes:

Prior studies have shown that distrust is crucial in shaping user attitudes (Chung et al., 2012; Dong et al., 2024; Ying et al., 2021). For instance, using the Protection Motivation Theory, Ying et al. (2021) demonstrated that hotel customers’ distrust significantly reduced their positive attitudes toward the services. Similarly, Chung et al. (2012) found that Chinese consumers’ distrust of marketers was pivotal in forming negative attitudes and weakening purchase intentions. Based on these findings, the following hypothesis is proposed:

Methodology

Participants and Data Collection

Consistent with the sequential exploratory mixed-method design introduced in Section 3.1, Phase 2 employed a structured questionnaire survey to examine postgraduate students’ intention to use GenAI in academic writing. The questionnaire was distributed via Wenjuanxing, one of China’s most widely used survey platforms, which has been shown to provide efficient data collection and broad reach in academic research (Y. Huang et al., 2024).

Participants were recruited from postgraduate programs at multiple higher education institutions across China. A snowball sampling method was employed in three sequential steps to broaden the diversity of the sample. First, the researchers identified a group of “seed participants” representing different faculties, academic years, and disciplinary backgrounds. These participants were informed of the research objectives and received the questionnaire link. Second, the seed participants were encouraged to forward the survey to their classmates, department-level groups, course-based communication channels, and academic networks such as laboratories or scholarly discussion groups. Finally, the survey was promoted through social media platforms (including WeChat, university BBS forums, and QQ academic groups) to expand its reach and capture responses from a broader pool of potential participants who were not directly contacted. All participants provided informed consent before completing the survey, confirming that they fully understood the research purpose and participated voluntarily. The entire research process adhered to established ethical guidelines.

Data collection commenced on December 15, 2024, and concluded on February 15, 2025, yielding 451 questionnaire responses. To ensure data quality, we applied three screening criteria: (a) Respondents who reported no prior use of GenAI were excluded from the analysis (as the study specifically targeted postgraduate students with actual usage experience, in order to investigate real perceptions and behaviors rather than hypothetical attitudes). (b) A pretest indicated that the questionnaire typically required 2 to 5 min. Therefore, any responses submitted in under 90 s were considered rushed and invalid. (c) Excessive answer duplication was also used as a criterion for exclusion.
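The three screening rules can be expressed as a simple filter. The sketch below is illustrative only: the `Response` fields and the 90% cut-off for "excessive answer duplication" are assumptions introduced here, since the text does not state how duplication was quantified; only the prior-use requirement and the 90-second floor come from the description above.

```python
from collections import Counter
from dataclasses import dataclass
from typing import List


@dataclass
class Response:
    """One questionnaire submission (hypothetical field names)."""
    used_genai: bool    # respondent reports prior GenAI use
    seconds: float      # completion time in seconds
    answers: List[int]  # Likert answers, 1-5


MIN_SECONDS = 90              # stated in the text: under 90 s counts as rushed
MAX_SAME_ANSWER_SHARE = 0.9   # assumed cut-off; the study does not report one


def is_valid(response: Response, max_share: float = MAX_SAME_ANSWER_SHARE) -> bool:
    """Apply screening criteria (a) to (c) to a single response."""
    if not response.used_genai:          # (a) no prior GenAI use
        return False
    if response.seconds < MIN_SECONDS:   # (b) rushed completion
        return False
    if not response.answers:             # nothing to evaluate
        return False
    # (c) share of the single most frequent answer across all items
    top_count = Counter(response.answers).most_common(1)[0][1]
    return top_count / len(response.answers) <= max_share
```

A list of submissions could then be screened with `[r for r in responses if is_valid(r)]`; a different duplication rule (for example, the longest run of identical consecutive answers) would slot into step (c) in the same way.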

After applying these quality control measures, 382 valid responses were retained for subsequent analysis. In this study, the maximum number of arrows pointing to a single construct in the structural model is six. According to J. Hair et al. (2017), to detect an R2 value of .10 at a 1% significance level, a minimum sample size of 217 is required. With 382 valid responses, the sample size in this study far exceeds the minimum threshold, supporting the robustness and reliability of the findings. Table 3 presents the demographic information of the participants. We use the demographic information summarized in Table 3 to characterize the sample and to assess coverage across year levels and disciplines, thereby clarifying the context and boundaries for generalizing the findings (e.g., a predominantly female and humanities-oriented cohort).

Demographics Information.

Measurement Instruments

All measurement scales used in this study were adapted from previously validated research instruments (Table 4). Phase One identified six antecedent constructs: Perceived Usefulness, Facilitating Conditions, Information Privacy Risk, Security Risk, Attitude, and Distrust. To address the overarching research questions in Phase Two, we additionally measured Usage Intention as the dependent outcome variable. Accordingly, the quantitative model includes six predictors and one outcome. All items were measured using a 5-point Likert scale ranging from 1 (“strongly disagree”) to 5 (“strongly agree”).

Constructs, Measurement Items, and Sources.

Note. All items were measured on a 5-point Likert scale (1 = strongly disagree, 5 = strongly agree). Items were adapted from prior studies as indicated in the source column.

To ensure cultural and linguistic validity, we employed a back-translation procedure (Brislin, 1970). Two bilingual experts independently translated the scales into Chinese, and a third bilingual expert—blind to the original wording—back-translated them into English. Discrepancies were discussed and resolved by the research team until consensus was reached. A pilot test with 20 postgraduate students was then conducted to check clarity and readability, and minor adjustments were made based on their feedback.

Perceived Usefulness Scale measures students’ evaluation of the practical value of GenAI tools (Davis, 1989). It consists of three items (e.g., “Using AI tools improves my research and writing efficiency”). The scale was validated by Ates and Garzón (2022) with a Cronbach’s alpha of .83.

Usage Intention Scale assesses students’ behavioral intention to use GenAI tools for academic writing in the future (Venkatesh et al., 2003). It contains three items (e.g., “I intend to try using AI tools for academic writing within the next month”). The scale was validated by Wedlock (2016) with an internal consistency of 0.92.

Security Risk Scale, adapted from Jun et al.’s (2016) cybersecurity risk scale, measures students’ perceived risk of system vulnerabilities, hacking, and inaccurate outputs when using GenAI tools. It includes three items (e.g., “While using AI tools, I believe my device is at high risk of being hacked”). Although two items in the Security Risk scale involve concerns about personal information (e.g., illegal access), in our theoretical framework such concerns conceptually belong to information privacy risk. We nevertheless retained the original items to ensure consistency with established scales and comparability with prior studies. The scale was validated by Arpaci and Sevinc (2022) with a Cronbach’s alpha of .85.

Attitude Scale evaluates students’ positive or negative evaluations of the value of AI tools in academic settings (Wiedmann & von Mettenheim, 2021). It includes three items (e.g., “Using AI tools enhances my motivation and learning skills”). The scale was validated by Grassini (2023), with a reliability of 0.88.

Facilitating Conditions Scale measures students’ perceptions of the availability of resources, skills, and compatibility required to use AI tools (Ajzen, 1991; Taylor & Todd, 1995). It includes three items (e.g., “I have access to the necessary equipment and internet environment to use AI tools”). It was validated by Zolait (2013) with a Cronbach’s alpha of .86.

Information Privacy Risk Scale focuses on students’ concerns about the unauthorized collection and use of personal information during AI tool use (Smith et al., 1996). It contains three items (e.g., “I am concerned that AI tools may collect too much personal information”). The scale has been validated by Stewart and Segars (2002), with internal consistency ranging from 0.78.

Distrust Scale measures the negative impact of lacking technological trust on adoption behaviors (Chau et al., 2013; McKnight & Choudhury, 2006). It includes four items (e.g., “I doubt the functionality and effectiveness of AI tools as learning aids”). It was validated by Min and Zickar (2023), with a Cronbach’s alpha of .83.

This study used a structured questionnaire to collect data for testing the hypothesized model. The reliability and validity of all measurement scales were examined using established criteria, and the results are reported in Section 4.3.1.

Statistical Analysis

This study employed Partial Least Squares Structural Equation Modeling (PLS-SEM) for data analysis. PLS-SEM has been widely adopted as a multivariate statistical technique in social science research (J. F. Hair et al., 2019). Combining the features of multiple linear regression, principal component analysis, and canonical correlation analysis, PLS-SEM demonstrates exceptional capability in handling complex multivariable relationships (Fornell & Bookstein, 1982). PLS-SEM offers several key advantages: it is suitable for small sample sizes, non-normally distributed data, exploratory research designs, and models with high complexity (J. Hair et al., 2017). Given that this study involved a relatively small sample size (N = 382), with 7 constructs and 24 items, and aimed to explore a complex model structure (Schuberth, 2021), PLS-SEM was deemed an appropriate and robust analytical method.

The PLS-SEM analysis in this study consisted of two main stages: the measurement model and the structural model. The measurement model focused on assessing the reliability and validity of the measurement instruments, while the structural model involved testing for multicollinearity (VIF), evaluating path significance, and examining R2, Q2, and mediation effects (J. F. Hair et al., 2019).

Results

Measurement Model

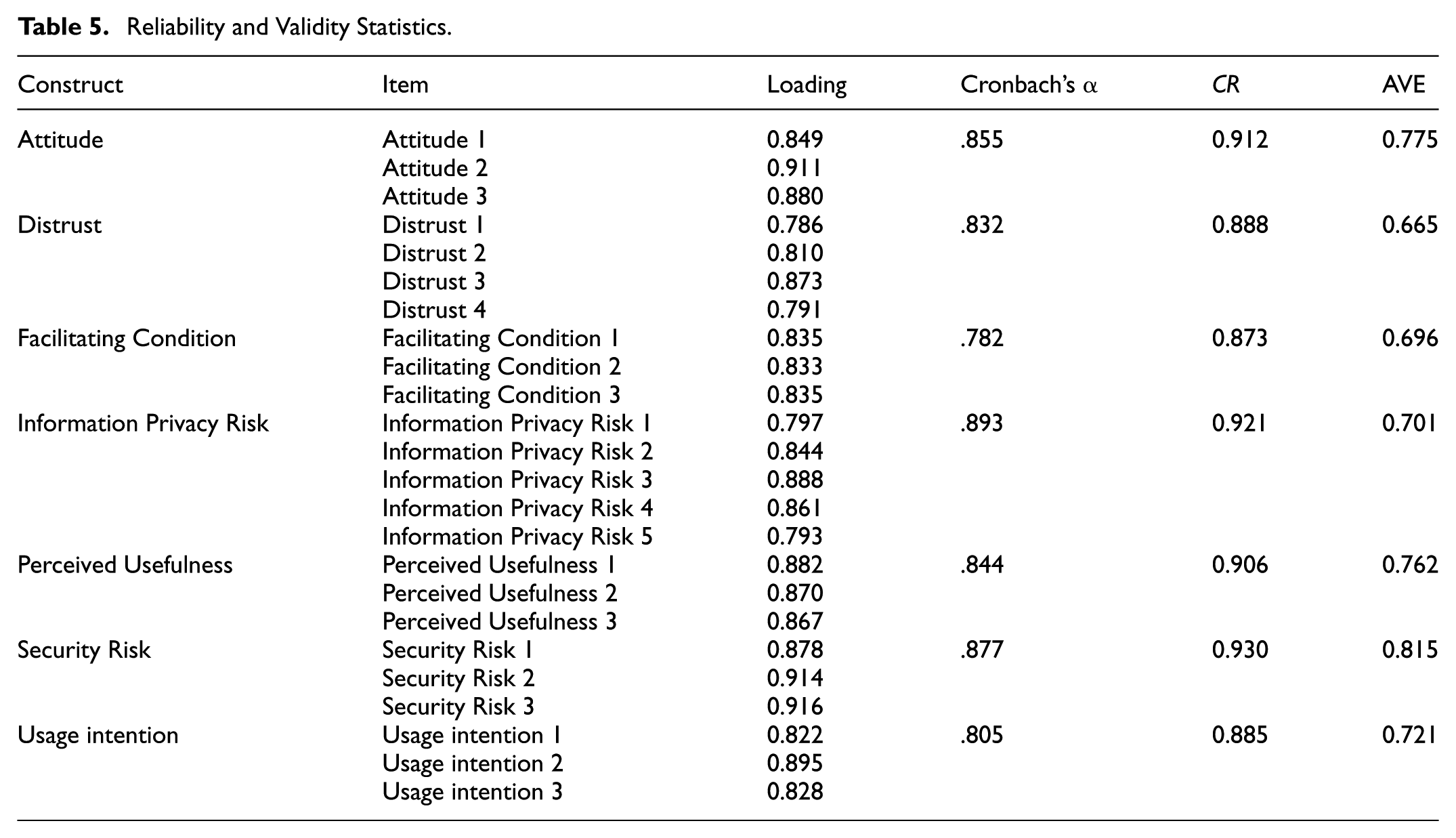

Following the guidelines proposed by J. F. Hair et al. (2019), the measurement model was assessed to examine the reliability and validity of the measurement instruments. Reliability was evaluated using outer loadings and composite reliability (CR). Under established thresholds, outer loadings should surpass 0.708 to indicate acceptable item reliability. As shown in Table 5, all items met this criterion. Additionally, CR values for all constructs surpassed 0.70, confirming satisfactory internal consistency reliability. Validity was assessed through both convergent validity and discriminant validity. Convergent validity was supported as the average variance extracted (AVE) for each construct surpassed 0.50, indicating that the constructs explained more than half of the variance in their indicators (J. F. Hair et al., 2019). Discriminant validity was evaluated using the Heterotrait-Monotrait Ratio of Correlations (HTMT) and the Fornell-Larcker criterion. According to the HTMT criterion, values should be below 0.85, and all values met this requirement (Table 6). The Fornell-Larcker criterion requires that the square root of the AVE for each construct is greater than its correlations with other constructs. This condition was also satisfied (Table 7), confirming discriminant validity (J. F. Hair et al., 2019).
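As a reading aid for the thresholds above, the following minimal sketch computes CR and AVE from standardized outer loadings using the conventional formulas. The loadings in the example are made up for illustration and are not the values in Table 5; the PLS-SEM software output remains the authoritative source for the reported statistics.

```python
from typing import Sequence


def composite_reliability(loadings: Sequence[float]) -> float:
    """CR = (sum of loadings)^2 / ((sum of loadings)^2 + sum of error variances).

    Assumes standardized loadings, so each indicator's error variance
    is 1 - loading^2.
    """
    total = sum(loadings)
    error_variance = sum(1 - loading ** 2 for loading in loadings)
    return total ** 2 / (total ** 2 + error_variance)


def average_variance_extracted(loadings: Sequence[float]) -> float:
    """AVE = mean of the squared standardized loadings."""
    return sum(loading ** 2 for loading in loadings) / len(loadings)


# Hypothetical three-item construct (illustrative values only)
example_loadings = [0.80, 0.85, 0.90]
cr = composite_reliability(example_loadings)
ave = average_variance_extracted(example_loadings)
meets_thresholds = cr > 0.70 and ave > 0.50
```

The sketch also shows why 0.708 is the usual loading cut-off: 0.708 squared is just over 0.50, so an item at that loading shares slightly more than half of its variance with the construct.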

Reliability and Validity Statistics.

Discriminant Validity (HTMT Criteria).

Discriminant Validity (Fornell-Larcker Criteria).

Note. The bolded diagonal values represent the square roots of the AVEs.

To further address potential concerns of common method bias, all measurement items were subjected to Harman’s single-factor analysis using principal component extraction without rotation. The first factor explained 33.89% of the variance, which is below the 40% threshold, suggesting that common method bias was not a major concern (Podsakoff et al., 2003).
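The quantity reported by Harman’s single-factor test is the share of total variance captured by the first unrotated principal component of the item correlation matrix. The sketch below approximates that share with power iteration in plain Python; the function name and the toy correlation matrix are illustrative assumptions, not the study’s data or software.

```python
import math
from typing import List


def first_factor_share(corr: List[List[float]],
                       iterations: int = 500,
                       tol: float = 1e-12) -> float:
    """Share of variance explained by the first unrotated principal component.

    `corr` is a square item correlation matrix. Its largest eigenvalue is
    estimated by power iteration; because the trace of a correlation matrix
    equals the number of items, the share is eigenvalue / number of items.
    """
    n = len(corr)
    # Asymmetric start vector so it is not orthogonal to the dominant component
    vec = [float(i + 1) for i in range(n)]
    norm = math.sqrt(sum(v * v for v in vec))
    vec = [v / norm for v in vec]
    eigenvalue = 0.0
    for _ in range(iterations):
        product = [sum(corr[i][j] * vec[j] for j in range(n)) for i in range(n)]
        norm = math.sqrt(sum(p * p for p in product))
        if norm == 0:
            break
        vec = [p / norm for p in product]
        # Rayleigh quotient of the normalized vector estimates the eigenvalue
        estimate = sum(
            vec[i] * sum(corr[i][j] * vec[j] for j in range(n)) for i in range(n)
        )
        converged = abs(estimate - eigenvalue) < tol
        eigenvalue = estimate
        if converged:
            break
    return eigenvalue / n


# Toy example: three items that all correlate at .30 with each other
toy_corr = [
    [1.0, 0.3, 0.3],
    [0.3, 1.0, 0.3],
    [0.3, 0.3, 1.0],
]
share = first_factor_share(toy_corr)
below_threshold = share < 0.40  # the cut-off applied in this study
```

In practice the same figure would normally be read from the "variance explained" table of a statistics package; the sketch only makes the underlying arithmetic explicit.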

Structural Model

We calculated Variance Inflation Factor (VIF) values for all latent constructs to assess potential multicollinearity issues within the model. As shown in Table 8, all VIF values were below the conservative threshold of 3 (J. F. Hair et al., 2019), indicating that multicollinearity was not a concern.

VIF Values.

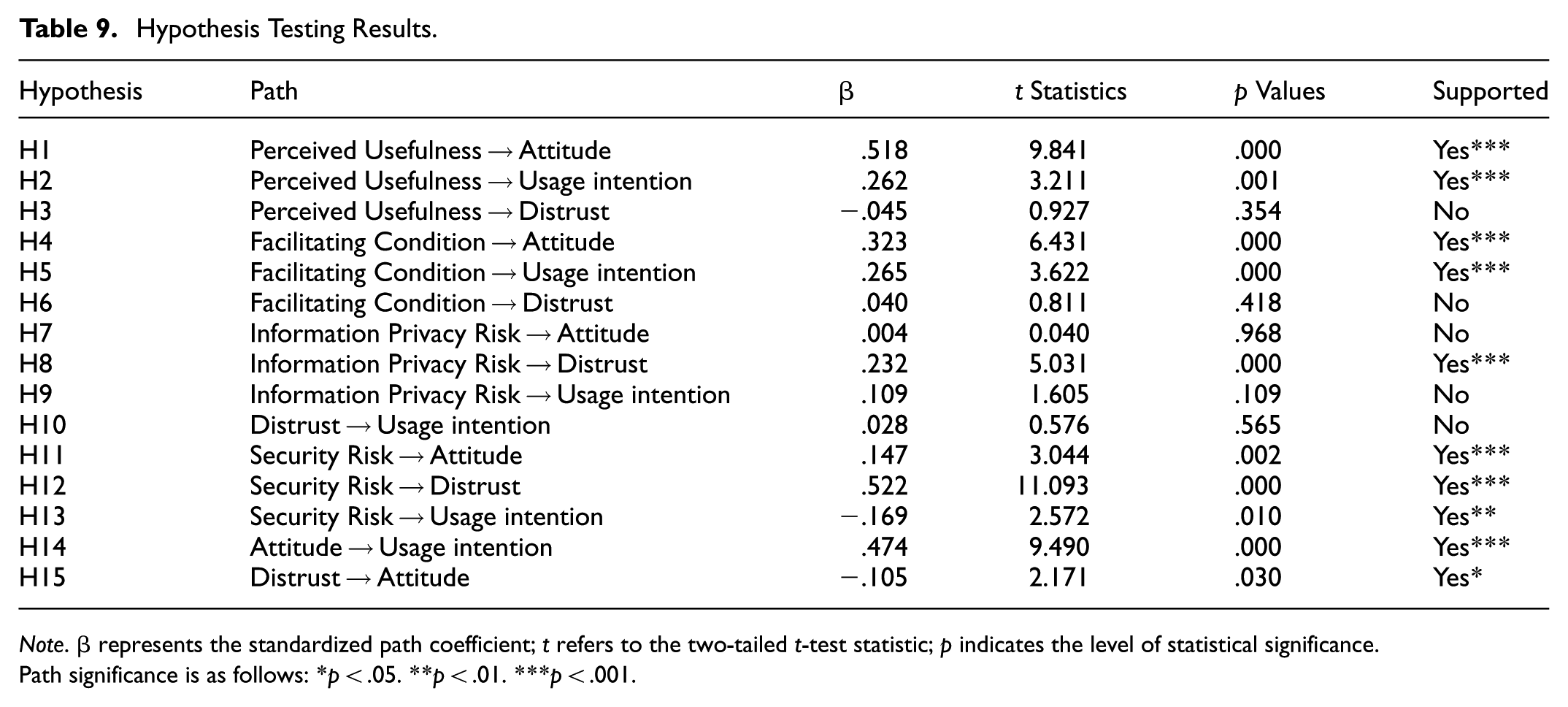

The significance of path coefficients was assessed using the bootstrapping procedure in PLS-SEM. t-values and p-values were used to evaluate whether the standardized path coefficients (β) were statistically significant at the 5% level. A path is considered significant if p < .05 and t > 1.96. As shown in Table 9, among the direct predictors of usage intention, attitude had the strongest effect (β = .474, t = 9.490, p < .001), followed by facilitating conditions (β = .265, t = 3.622, p < .001), perceived usefulness (β = .262, t = 3.211, p = .001), and security risk (β = −.169, t = 2.572, p = .010). In contrast, information privacy risk (β = .109, t = 1.605, p = .109) and distrust (β = .028, t = 0.576, p = .565) were not significant predictors of usage intention. Among the direct predictors of attitude, perceived usefulness had the strongest influence (β = .518, t = 9.841, p < .001), followed by facilitating conditions (β = .323, t = 6.431, p < .001), security risk (β = .147, t = 3.044, p = .002), and distrust (β = −.015, t = 2.171, p = .030). However, information privacy risk showed no significant relationship with attitude (β = .004, t = 0.040, p = .968). Concerning predictors of distrust, security risk had the strongest effect (β = .522, t = 11.093, p < .001), followed by information privacy risk (β = .232, t = 5.031, p < .001). In contrast, perceived usefulness (β = −.045, t = 0.927, p = .354) and facilitating conditions (β = .040, t = 0.811, p = .418) were not significantly associated with distrust.

Hypothesis Testing Results.

Note. β represents the standardized path coefficient; t refers to the two-tailed t-test statistic; p indicates the level of statistical significance.

Path significance is as follows: *p < .05. **p < .01. ***p < .001.
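The link between the t-values and p-values in the table can be checked with a two-tailed test under a standard normal approximation, which is reasonable when the bootstrap uses thousands of subsamples. This is a minimal sketch with a hypothetical helper name; the software used in the study may rely on a t-distribution, so small differences in the third decimal are possible.

```python
import math


def two_tailed_p(t_value: float) -> float:
    """Two-tailed p-value for a test statistic under a standard normal approximation.

    Uses the identity 2 * (1 - Phi(|t|)) = erfc(|t| / sqrt(2)).
    """
    return math.erfc(abs(t_value) / math.sqrt(2))


def is_significant(t_value: float, alpha: float = 0.05) -> bool:
    """True when the two-tailed p-value falls below alpha."""
    return two_tailed_p(t_value) < alpha


# Two paths reported above: security risk (t = 2.572) and
# information privacy risk (t = 1.605) as predictors of usage intention
p_security = two_tailed_p(2.572)   # roughly .010
p_privacy = two_tailed_p(1.605)    # roughly .109
```

Under this approximation the 5% criterion corresponds to the |t| > 1.96 rule stated in the text, which is why the two conditions always agree.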

This study employed PLS bootstrapping with 5,000 subsamples to assess the strength and statistical significance of the structural model’s path coefficients (J. F. Hair et al., 2019). The R2 values of endogenous variables were used to evaluate the model’s explanatory power, while Stone-Geisser’s Q2 values were applied to examine its predictive relevance (J. F. Hair et al., 2019). As shown in Table 10, the model demonstrated satisfactory explanatory capability: The R2 value for Attitude was .629, indicating that its predictors explained 62.9% of the variance in attitude. The R2 value for Distrust was .467, meaning that the model accounted for 46.7% of the variance in distrust. The R2 value for Usage Intention was .679, suggesting that its antecedents explained 67.9% of the variance in usage intention. Furthermore, as presented in Table 10, all Q2 values were greater than zero, supporting the conclusion that the model exhibits adequate predictive relevance.

Predictive Validity and Predictive Relevance.

Mediation Analysis

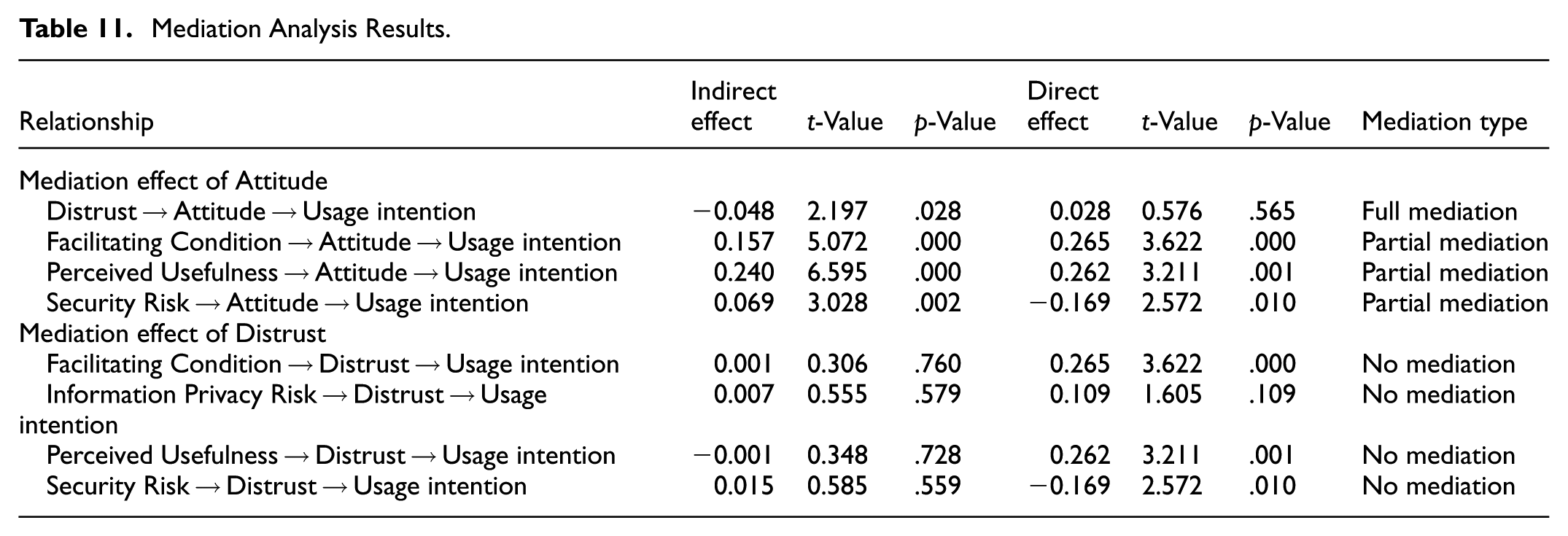

We employed the bootstrapping method in PLS-SEM to evaluate the mediating roles of attitude and distrust in the relationships between perceived usefulness, facilitating conditions, information privacy risk, security risk, and usage intention in academic writing (Nitzl et al., 2016). The analysis focused on direct and indirect effects to determine the type and strength of mediation. As shown in Table 11, attitude fully mediated the relationship between distrust and usage intention (β = −.048, t = 2.197, p = .028). Additionally, attitude partially mediated the relationships between facilitating conditions and usage intention (β = .157, t = 5.072, p < .001), perceived usefulness and usage intention (β = .240, t = 6.595, p < .001), and security risk and usage intention (β = .069, t = 3.028, p = .002). However, distrust did not exhibit significant mediating effects in any of the following relationships: facilitating conditions → usage intention (β = .001, t = 0.306, p = .760), information privacy risk → usage intention (β = .007, t = 0.555, p = .579), perceived usefulness → usage intention (β = −.001, t = 0.348, p = .728), and security risk → usage intention (β = .015, t = 0.585, p = .559).

Mediation Analysis Results.
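The logic behind the mediation labels can be sketched as follows. An indirect effect is the product of the predictor-to-mediator and mediator-to-outcome paths, and a common decision rule calls the mediation full when the indirect effect is significant but the direct effect is not, and partial when both are significant. The helper names are hypothetical, and products of the point estimates land close to, but not exactly on, the reported indirect effects.

```python
def indirect_effect(path_a: float, path_b: float) -> float:
    """Indirect effect: predictor -> mediator path times mediator -> outcome path."""
    return path_a * path_b


def mediation_type(direct_p: float, indirect_p: float, alpha: float = 0.05) -> str:
    """Classify mediation from the significance of direct and indirect effects."""
    if indirect_p >= alpha:
        return "no mediation"
    if direct_p < alpha:
        return "partial mediation"
    return "full mediation"


ATTITUDE_TO_INTENTION = 0.474  # attitude -> usage intention path from the text

# Perceived usefulness -> attitude -> usage intention (reported indirect effect: .240)
pu_via_attitude = indirect_effect(0.518, ATTITUDE_TO_INTENTION)

# Facilitating conditions -> attitude -> usage intention (reported: .157)
fc_via_attitude = indirect_effect(0.323, ATTITUDE_TO_INTENTION)

# Distrust: direct effect p = .565, indirect effect via attitude p = .028
distrust_label = mediation_type(direct_p=0.565, indirect_p=0.028)

# Perceived usefulness: direct effect p = .001; the indirect p is reported
# only as below .001, so .001 is used here as an upper bound
pu_label = mediation_type(direct_p=0.001, indirect_p=0.001)

# Information privacy risk via distrust: direct p = .109, indirect p = .579
privacy_label = mediation_type(direct_p=0.109, indirect_p=0.579)
```

Significance of the indirect effects themselves still has to come from the bootstrap, because the sampling distribution of a product of two coefficients is generally not normal.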

Discussion

This study examined Chinese postgraduate students’ intention to use GenAI tools in academic writing. Beyond confirming benefits reported in prior work, namely personalization, efficiency, and idea generation (Chan & Hu, 2023; Ma, 2024; Rasul et al., 2023), our 49-participant qualitative phase contributes three context-specific insights. First, students clearly differentiated “security risk” (hallucinations, logical flaws, and traceability problems that jeopardize academic soundness) from “information privacy risk” (unauthorized collection/secondary use of personal data), attributing distinct consequences to each, an insight that anticipates the stronger role of security risk in the quantitative results (see Table 2). Second, distrust was typically experience-based (triggered by misinformation and poor service quality) and operated indirectly by dampening attitudes rather than directly suppressing intention, foreshadowing our mediation findings (Strachan et al., 2024). Third, facilitating conditions were framed as institutional levers: reliable connectivity and timely technical support reduced hesitation and distrust, whereas fragile infrastructure amplified concerns. Together, these contributions move beyond alignment with prior studies by offering a mechanism-level, context-grounded account of how Chinese postgraduates weigh value against risk when deciding whether to use GenAI for academic writing.

The second-phase SEM analysis confirmed several qualitative findings and supported multiple research hypotheses (Table 9). The finding that attitude was the strongest predictor of usage intention (β = .474, p < .001), while distrust showed no significant effect, demonstrates that students’ emotional evaluations and overall acceptance of GenAI tools are central to shaping their usage decisions. This finding is consistent with the extended TAM proposed by Venkatesh and Davis (2000), emphasizing attitude as a key determinant of technology adoption. One participant explained: “I’m very interested in GenAI, so I actively look for tutorials to optimize how I use it.”

Interestingly, distrust did not have a significant direct effect on usage intention, contrasting with Esmaeilzadeh (2020). While distrust suppressed technology adoption in health contexts, postgraduate students often perceive GenAI as a task-oriented tool, prioritizing functional efficiency over distrust (Holmes & Tuomi, 2022; Kim, 2023). In addition, as public understanding of artificial intelligence improves, students are increasingly open to experimentation and more willing to adopt emerging technologies (Ivanov, 2023; Nussberger et al., 2022). Pedagogically, this suggests that distrust operates indirectly through attitudes, with usefulness and support conditions outweighing distrust in shaping adoption.

Among the antecedents of attitude, perceived usefulness (β = .518, p < .001) and facilitating conditions (β = .323, p < .001) emerged as the most significant positive predictors. Security risk (β = .147, p < .01) had a weaker yet still considerable influence, while information privacy risk showed no significant effect on attitude (β = .004, p = .968). While previous studies often highlight privacy concerns as a barrier to technology adoption, our findings suggest a different pattern in the Chinese academic context. One possible explanation is that students’ awareness of privacy protection remains relatively low, leading them to prioritize the functional benefits of GenAI tools over potential risks. Moreover, because most students access these tools through institutional networks with established data management policies, they may perceive an additional layer of implicit protection, which reduces the salience of privacy concerns. Thus, their broader concerns about ethics, accuracy, and social impact are consistent with—but extend beyond—the specific dimension of information privacy risk identified in our model. These contextual factors together help explain why information privacy risk did not significantly affect students’ attitudes or intentions. These findings support the core assumptions of Davis’s (1989) TAM, which posits that users are more likely to adopt technologies they perceive as helpful in enhancing task performance and easy to use rather than prioritizing concerns about potential risks. One participant noted: “What matters most to me is whether GenAI can help me write a good paper—far more important than any potential risks.”

Further analysis revealed that security risk (β = .522, p < .001) and information privacy risk (β = .232, p < .001) were the primary drivers of distrust. In contrast, perceived usefulness (β = −.045, p = .354) and facilitating conditions (β = .040, p = .418) showed no significant effect. This finding aligns with the results of Golda et al. (2024), who identified concerns about security and privacy as the primary sources of users’ diminished trust in GenAI. Notably, while such problems may not directly inhibit usage intention, they often influence behavior indirectly through cognitive or emotional pathways, particularly by shaping users’ attitudes. One participant remarked: “GenAI is certainly convenient, but if it involves handling sensitive personal information, I tend to be cautious—especially regarding data processing and security.”

Synthesizing the qualitative and quantitative evidence, we now address the study’s three overarching research questions. First, we identified six factors shaping Chinese postgraduates’ intention to use GenAI for academic writing—perceived usefulness, facilitating conditions, and attitude as enablers, and information privacy risk, security risk, and distrust as inhibitors. Second, the mechanisms indicate that perceived usefulness and facilitating conditions foster intention primarily via more positive attitudes, whereas privacy and security risks elevate distrust and temper attitudes; accordingly, distrust exerts a mainly indirect rather than direct influence on intention. Third, the integrated model demonstrates meaningful explanatory and predictive performance (R2 = .679 for usage intention, .629 for attitude, .467 for distrust; see Table 10), supporting the value of combining TAM with Distrust Theory in this context.

Beyond alignment with prior studies, the data contribute three context-grounded insights: first, students distinguish “security risk” from “information privacy risk” with different academic consequences, anticipating the stronger role of security risk observed quantitatively; second, distrust is experience-based (e.g., misinformation, poor service quality) and affects intention indirectly via attitude; third, facilitating conditions function as institutional levers, in that reliable connectivity and timely technical support reduce hesitation and distrust, whereas fragile infrastructure amplifies concerns.

Theoretical Implications

This study makes four key theoretical contributions to the literature on AI adoption behavior in educational contexts. First, it provides an incremental extension of TAM by incorporating distrust-related constructs. While TAM has been extensively applied in educational technology research, distrust has received relatively limited attention in AI-driven systems. By incorporating distrust and its antecedents (e.g., security risk and information privacy risk), this study adapts TAM to capture inhibitory factors in postgraduate academic-writing contexts.

Second, this study employed a sequential exploratory mixed-methods design, allowing the model to be more closely aligned with real user contexts. Unlike many TAM-based studies that rely on predefined variables, this research identified key influencing factors through thematic analysis in the first phase. It validated them through SEM in the second phase. This approach enhanced the realism and theoretical validity of the model and offered a replicable pathway for theory building in studies of emerging technologies.

Third, the findings indicate that distrust did not have a significant direct effect on usage intention, but instead influenced intention indirectly via attitude. This diverges from classical TAM-based studies that typically assume universal and direct predictors of intention (Davis, 1989; Venkatesh & Davis, 2000). For instance, research in digital learning contexts has reported significant direct associations between distrust (or trust) and behavioral intention (Kim, 2023; Tseng et al., 2022), whereas our results suggest that such effects may be contingent upon specific academic and cultural contexts. This pattern also contrasts with findings from other domains, such as Esmaeilzadeh (2020), where distrust exerts a stronger direct effect. Taken together, these results indicate that TAM’s “one-size-fits-all” assumption does not fully capture adoption dynamics. By integrating Distrust Theory, the present study refines TAM by showing that negative psychological constructs may influence intention primarily through attitudinal mediation rather than direct effects, thereby extending TAM beyond its classical formulation and situating it within the Chinese postgraduate context.

Finally, this study reveals alternative pathway mechanisms influencing usage intention. For instance, beyond its direct effect on usage intention, security risk also affects user decision-making indirectly through its influence on attitude and distrust. This suggests that risk perception may shape behavior partly through affective mediators, rather than solely through direct rational evaluation. Future work should explore such mechanisms in other educational settings and test the moderating role of cultural or contextual differences in risk-based decision-making.

Practical Implications

This study examined the intention to use GenAI tools in academic writing among postgraduate students in China, uncovering the direct and indirect effects of factors such as perceived usefulness, facilitating conditions, attitude, security risk, information privacy risk, and distrust. The findings indicate that perceived usefulness and facilitating conditions significantly influence usage intention through attitude, while security risk influences it primarily indirectly via attitude and distrust. Notably, distrust itself did not demonstrate a significant direct effect on usage intention. These results enrich the research on technology acceptance theory and AI application in education and provide valuable practical guidance for educational policymakers, higher education institutions, AI technology developers, and postgraduate students.

First, for policymakers. Since security risk affects intention mainly indirectly, efforts should focus on reducing negative risk perceptions and fostering positive attitudes. Beyond general regulations, governments could issue transparent data-handling guidelines and support AI literacy campaigns to strengthen students’ confidence in safe adoption.

Second, for universities. Because attitudes strongly mediate the effect of usefulness and facilitating conditions, institutions should demonstrate functional value in academic contexts. Integrating GenAI into writing or methodology courses and offering hands-on training can help students recognize benefits, reduce uncertainty, and build more positive attitudes. Discipline-specific integration may further enhance relevance, such as focusing on efficiency and data processing for STEM students, and on critical argumentation and academic writing style for humanities and social sciences students.

Third, for technology developers. As security and privacy risks increase distrust, developers should improve transparency, controllability, and data protection. Clear communication of security policies and enhanced user control can mitigate perceived risks and strengthen trust in GenAI tools.

Fourth, for postgraduate students. Students can treat GenAI as an auxiliary support tool rather than a replacement, while applying critical evaluation to outputs. Participation in AI-related training and proactive learning can enhance risk awareness, reduce uncertainty, and increase confidence in effective use.

Limitations and Future Research Directions

Despite its theoretical and practical significance, this study has several limitations that warrant consideration and offer directions for future research. First, the cross-sectional design and reliance on snowball sampling limit the ability to capture changes in students’ perceptions over time and may introduce sampling bias by over-representing digitally active or more receptive participants. Future studies could employ longitudinal designs and stratified sampling strategies to improve representativeness and track evolving attitudes. Second, the theoretical model primarily drew upon TAM and Distrust Theory. Intention formation, however, may be influenced by broader factors such as technological literacy, prior experience, or sociocultural context. Future research could extend the model by incorporating situational and emotional dimensions. Third, the study sample was drawn from a single institution and showed a gender imbalance, which may affect generalizability. Involving multiple universities across regions and striving for more balanced gender representation would strengthen external validity. Lastly, although we refined the definitions and measurements of information privacy risk and security risk, some conceptual overlap may remain. Future studies should adopt more fine-grained measurement instruments to further strengthen the discriminant validity of these constructs.

Conclusion

In summary, the integrated TAM–Distrust model explains 67.9% of the variance in Chinese postgraduates’ intention to use GenAI tools for academic writing. Beyond its explanatory power, our model extends prior work by incorporating elements of Distrust Theory, treating distrust primarily as an indirect attitudinal pathway and distinguishing security from information privacy risk within a unified framework. Attitude emerged as the strongest predictor of intention, while distrust showed no direct effect; instead, risk perceptions, especially security risk, shaped behavior indirectly through attitude. Together with perceived usefulness and facilitating conditions, these findings illustrate a value–risk trade-off in which functional gains and supportive infrastructure foster adoption, whereas concerns about accuracy and data handling constrain it. Importantly, by integrating TAM with Distrust Theory, this study contributes a novel theoretical framework that enriches understanding of technology acceptance in higher education.

Footnotes

Ethical Considerations

The researchers confirm that all procedures involving human participants were conducted in accordance with relevant guidelines and regulations for studies involving human participants (e.g., the Declaration of Helsinki). This study was approved by the Ethics Committee of Jiangxi Science and Technology Normal University (Approval No. JXSTNU-2024-12-0012).

Consent to Participate

Written informed consent was obtained from all participants prior to their participation, and confidentiality and anonymity were strictly maintained throughout the study.

Author Contributions

Conceptualization, Mengmeng Wang, Fang Feng and Rongyan Zhu; methodology, Fang Feng; software, Chunhui Wu; validation, Jia Wei and Rongyan Zhu; formal analysis, Tinglan Huang; investigation, Mengmeng Wang; resources, Chunhui Wu and Fang Feng; writing—original draft preparation, Fang Feng; writing—review and editing, Mengmeng Wang; supervision, Mengmeng Wang and Jia Wei; funding acquisition, Mengmeng Wang. All authors have read and agreed to the published version of the manuscript.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was funded by the Department of Education of Jiangxi Province (grant numbers: JY23108 and JY20102).

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.