Abstract

This study explores the influence of procedural transparency and legal-rights awareness on stakeholder perceptions of fairness in AI-mediated language assessments across Saudi universities. Despite the increasing integration of artificial intelligence (AI) tools into educational assessment, significant concerns persist regarding the transparency of algorithmic scoring processes and stakeholders’ understanding of their legal rights under relevant data-protection frameworks. Using a descriptive correlational approach, this research investigates these concerns by gathering insights from students, faculty members, and administrators from five universities across Saudi Arabia. The findings highlight a strong relationship between greater procedural transparency and reduced perceptions of algorithmic bias. Additionally, higher awareness of legal protections under the Saudi Personal Data Protection Law (PDPL) and the Saudi Data and Artificial Intelligence Authority (SDAIA) Ethics Principles further diminished bias perceptions among participants. Notably, perceptions of transparency varied significantly by stakeholder role, with administrators reporting the least clarity about AI assessment processes. These insights underline the necessity of clear, accessible explanations regarding AI decision-making processes and targeted educational initiatives to enhance stakeholder legal awareness. Overall, this study contributes to a deeper understanding of how transparency and legal accountability can foster sustainable trust and fairness in AI-based educational assessments, offering actionable recommendations for institutional governance and policy development.

Plain Language Summary

This study explores how transparent AI systems and awareness of legal rights can improve fairness in university language assessments. It looks at students, faculty, and administrators in Saudi universities and finds that people are more likely to trust AI grading tools when they understand how the system works and know their legal protections. The study shows that clear explanations and better education about rights can reduce feelings of unfairness and bias in automated assessments. The findings suggest that schools should make AI scoring systems easier to understand and teach users about their rights to create fairer learning environments.

Introduction

The proliferation of artificial intelligence (AI) in educational contexts has transformed language assessment practices, promising scalable, consistent, and cost-effective solutions (Al Fraidan, 2025). Automated essay scoring (AES), speech-recognition systems, and AI-driven writing evaluators are increasingly employed to assess learners’ productive skills, reducing reliance on human raters while accelerating feedback cycles (Balfour, 2013; Williamson, 2020). However, these technologies raise profound fairness concerns: training data often under-represent non-standard dialects or cultural expressions, leading to systematic score disparities (Floridi & Cowls, 2021; Litman et al., 2020).

In parallel, the concept of legal accountability for AI systems—encompassing transparency obligations, enforceable rights to explanation, and robust appeal mechanisms—has gained traction internationally (Wachter et al., 2017). Accountability frameworks emphasize both procedural safeguards (e.g., data-impact assessments, human-in-the-loop oversight) and substantive fairness metrics (e.g., demographic parity, equalized odds) to ensure that algorithmic decisions align with normative standards of justice (Barocas et al., 2019; Veale & Binns, 2017).

Saudi Arabia, under its Vision 2030 agenda, has prioritized digital transformation and AI innovation across sectors, including higher education (Saudi Vision 2030, 2020). Universities nationwide have adopted AI-based placement tests, automated grading platforms, and proctoring solutions to enhance access, administrative efficiency, and learning outcomes (Alotaibi & Alshehri, 2023; Al-Shehri, 2022). Concurrently, the Kingdom has established a comprehensive legal and regulatory framework to govern AI deployments: the Personal Data Protection Law (PDPL; Royal Decree M/148, 2023) introduces GDPR-style data-subject rights and requires impact assessments for automated decision-making, while the Saudi Data and Artificial Intelligence Authority’s (SDAIA’s) AI Ethics Principles articulate normative commitments to fairness, transparency, explainability, and accountability (SDAIA, 2023). At the institutional level, King Faisal University’s Privacy Policy codifies these obligations by mandating data-minimization, informed consent procedures, impact assessments, and channels for appeals related to automated systems. Given Saudi learners’ increasing immersion in digital platforms, there is a growing need to align AI systems with learners’ sociolinguistic realities (Al Fraidan, 2023).

Despite these legal and policy advances, critical gaps remain in understanding how AI-mediated language assessment tools function in practice, whether they perpetuate unintended biases against Saudi EFL learners, and the extent to which stakeholders can invoke legal remedies when contesting AI-generated scores. This study addresses these gaps by (a) empirically assessing algorithmic bias and stakeholder perceptions of transparency and fairness in AI-based language assessment tools; (b) evaluating awareness and effectiveness of legal and institutional accountability mechanisms under Saudi AI governance frameworks; and (c) proposing integrated technical, policy, and educational interventions to ensure that AI in language assessment upholds principles of justice, trust, and legal compliance.

Building on prior work in fairness-through-awareness (Wachter et al., 2017) and algorithmic accountability (Diakopoulos, 2016), this study is guided by the following conceptual model: greater procedural transparency and legal-rights awareness are expected to reduce stakeholders’ perceptions of algorithmic bias in AI-mediated assessment. Thus, we hypothesize that both transparency and legal awareness levels will be significant negative predictors of perceived bias.

Literature Review

Theoretical Foundation

This study is anchored in the fairness-through-awareness theoretical framework introduced by Wachter et al. (2017), which underscores the essential role of transparency in fostering fairness in algorithmic decision-making. According to this framework, ensuring that stakeholders understand how algorithmic decisions are reached significantly reduces perceptions of bias and promotes trust. Fairness-through-awareness suggests that transparency is not merely about disclosing algorithmic mechanics, but actively involves meaningful explanations that empower stakeholders to interpret and challenge automated outcomes.

Moreover, the study draws upon Diakopoulos’s (2016) accountability framework, which provides a complementary perspective on procedural and substantive accountability in algorithmic governance. Diakopoulos emphasizes that achieving accountability requires not only transparency in how algorithms function but also clearly defined processes through which stakeholders can appeal or contest algorithmic decisions. Such procedural accountability mechanisms include systematic audits, impact assessments, clearly defined avenues for stakeholder feedback, and legal safeguards that explicitly protect individual rights. By combining these theoretical frameworks, the current research places particular focus on the integration of transparent AI explanations and robust accountability measures—including legal frameworks and appeal processes—as essential components in achieving stakeholder trust and ensuring ethical AI use in educational assessments. This integrated theoretical approach enables the study to provide practical recommendations that address both the cognitive dimensions of stakeholder understanding and the structural dimensions of legal and procedural governance.

AI in Educational Assessment Globally

Automated assessment tools such as AES and speech-recognition systems have matured to offer reliability comparable to human raters, enabling institutions to handle large-scale testing efficiently (Balfour, 2013; Williamson, 2020). The United Nations Educational, Scientific and Cultural Organization (UNESCO, 2021) highlights that AI can personalize learning and provide rapid feedback, yet cautions that uneven data representation risks exacerbating educational inequities. Research indicates that non-native or minority dialects are frequently under-represented in training corpora, leading to disproportionate score penalties for these groups (Holstein et al., 2019; Williamson, 2020).

Fairness Metrics and Bias Mitigation

Algorithmic fairness is operationalized through statistical measures—such as demographic parity (equal positive outcomes across groups) and equalized odds (comparable error rates)—yet these metrics alone may overlook contextual nuances in language use (Barocas et al., 2019; Veale & Binns, 2017). Dwork et al. (2012) advocate for fairness definitions that incorporate individual treatment alongside group metrics, while Zhang et al. (2018) demonstrate adversarial de-biasing techniques to reduce performance disparities in natural language processing models. Incorporating culturally grounded features into training data has also shown promise for improving fairness in localized contexts (Abubakari, 2025).
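To make the two group metrics named above concrete, the following minimal sketch computes a demographic-parity gap and the two equalized-odds gaps (true-positive-rate and false-positive-rate differences) from labelled predictions. The function names, the group labels "A" and "B", and the toy records are illustrative assumptions and are not drawn from the study's data or from any scoring system it examined.

```python
from collections import defaultdict


def positive_rate(flags):
    """Share of 1 values in a list of 0/1 flags; None for an empty list."""
    return sum(flags) / len(flags) if flags else None


def fairness_gaps(records):
    """Compute group fairness gaps from (group, y_true, y_pred) triples.

    y_true and y_pred are 0/1 labels (for example, 1 = "essay passes").
    Demographic parity compares positive-prediction rates across groups;
    equalized odds compares true-positive and false-positive rates.
    """
    preds = defaultdict(list)      # all predictions, per group
    preds_pos = defaultdict(list)  # predictions where the true label is 1
    preds_neg = defaultdict(list)  # predictions where the true label is 0
    for group, y_true, y_pred in records:
        preds[group].append(y_pred)
        (preds_pos if y_true == 1 else preds_neg)[group].append(y_pred)

    def gap(per_group):
        rates = [positive_rate(v) for v in per_group.values()]
        rates = [r for r in rates if r is not None]
        return max(rates) - min(rates) if rates else 0.0

    return {
        "demographic_parity_gap": gap(preds),
        "tpr_gap": gap(preds_pos),
        "fpr_gap": gap(preds_neg),
    }


# Hypothetical toy data: two groups, four scored essays each.
records = [
    ("A", 1, 1), ("A", 1, 1), ("A", 0, 1), ("A", 0, 0),
    ("B", 1, 1), ("B", 1, 0), ("B", 0, 0), ("B", 0, 0),
]
gaps = fairness_gaps(records)
print(gaps)
```

A gap of zero on all three quantities would indicate parity on these metrics for the toy data; as the paragraph above notes, such statistics alone may still miss contextual nuances in language use.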

Accountability, Explainability, and Legal Rights

The “right to explanation,” as discussed by Wachter et al. (2017), posits that individuals subject to automated decisions deserve intelligible justifications. Technical solutions—such as LIME (Ribeiro et al., 2016) and SHAP (Lundberg & Lee, 2017)—offer post hoc interpretability for model outputs, but their utility for non-technical stakeholders remains under investigation (Kroll et al., 2017). Diakopoulos (2016) emphasizes the need for procedural audits—algorithmic impact assessments—and substantive remedies—effective appeal mechanisms—to operationalize accountability. In educational settings, “human-in-the-loop” designs are recommended to combine automated efficiency with expert oversight (Holstein et al., 2019).

Recent Advances in Transparency and Trust in AI

Recent research has increasingly highlighted the dynamic and complex relationship between transparency and trust in AI-mediated systems. Lee and Cha (2025) specifically investigated transparency’s multifaceted role, demonstrating empirically how transparency attributes—accuracy, clarity, and disclosure—distinctively influence stakeholders’ trust perceptions in AI. They argued that transparency does not uniformly foster trust; rather, it positively influences trust perceptions predominantly when coupled with perceived AI functionality and helpfulness (Lee & Cha, 2025). Similarly, Barredo Arrieta et al. (2020) emphasized that transparency is critical for AI accountability and responsible use, particularly in decision-making contexts that significantly impact individuals. In addition, Shin (2021) demonstrated empirically how explainability and transparency in AI positively affect user trust and acceptance, noting that transparent explanations enhance user trust by reducing perceived uncertainty. Furthermore, Dwivedi et al. (2023) provided insights into the multidisciplinary challenges and opportunities presented by advanced conversational AI such as ChatGPT, emphasizing transparency’s essential role in fostering broader acceptance and mitigating user skepticism. Collectively, these recent studies reinforce the need for a nuanced understanding of AI transparency, suggesting transparency alone is insufficient without ensuring the trustworthiness, accountability, and effective communication of AI outcomes.

Regional Perspectives on AI in Educational Assessment

Within the Gulf Cooperation Council (GCC) region, several countries have actively pursued the implementation of AI-driven assessment technologies in educational contexts, emphasizing transparency, ethical standards, and accountability. For instance, the United Arab Emirates (UAE) has implemented robust national frameworks for AI integration through its UAE AI Strategy 2031, which specifically highlights transparent, responsible, and ethical use of AI systems in educational assessment, evaluation, and curriculum development. Similarly, Qatar’s National Vision 2030 underscores digital transformation initiatives in education, incorporating clear ethical standards and promoting transparency to foster public trust in AI applications. In Oman, under the national digital transformation initiatives outlined in Oman Vision 2040, there is a growing emphasis on adopting advanced technologies, including AI, to enhance educational quality and accessibility. Although explicit national policies addressing AI-mediated assessments are still emerging in Oman, the broader commitment to technology-driven education reform reflects an increasing awareness of the need for clear regulatory frameworks and ethical considerations. Despite these regional efforts, empirical research explicitly examining legal accountability and procedural transparency in AI-based educational assessment remains sparse across Gulf nations. This research gap underscores the importance of this study, particularly within the context of Saudi Arabian universities, and provides a comparative lens to inform broader regional developments.

Saudi AI Governance in Education

Under Royal Decree M/148 (2023), the PDPL mandates that any “automated decision-making” affecting personal data include clear disclosures, pre-implementation impact assessments, and robust data-subject rights (e.g., access, correction, objection). SDAIA’s AI Ethics Principles (2023) extend these norms by recommending designated roles (e.g., Responsible AI Officers) and continuous monitoring throughout AI lifecycles. Institutional policies, such as King Faisal University’s Privacy Policy, operationalize legal requirements at the organizational level through explicit consent protocols, data-minimization practices, and appeal channels for automated systems. However, studies reveal limited awareness of these protections among stakeholders, indicating a need for greater transparency and education (Al-Shehri, 2022; Alotaibi & Alshehri, 2023).

Methodology

Operational Definitions

In this study,

Research Questions

This study was guided by the following research questions:

To what extent do stakeholders perceive transparency in AI-mediated language assessments within Saudi universities?

How does the perceived transparency relate to perceptions of algorithmic bias in these assessments?

What is the level of stakeholder legal-awareness regarding the Saudi Personal Data Protection Law (PDPL) and SDAIA’s AI Ethics Principles?

To what extent do transparency and legal-awareness predict perceived algorithmic bias across different stakeholder groups (students, faculty, and administrators)?

Research Design

This study employed a descriptive correlational design to examine relationships among perceived transparency of AI-mediated language assessment, experienced algorithmic bias, and awareness of legal-ethical protections within Saudi higher education. Such a design is well suited to exploring naturally occurring associations without experimental manipulation (Creswell & Creswell, 2018). Data were gathered via an online survey administered during March 2025, and spanned five universities—including King Faisal University—purposefully selected to represent both public and private institutions across the Eastern, Central, and Western provinces of Saudi Arabia.

Participants

A total of 180 stakeholders participated: 120 students (85 undergraduate, 35 graduate) majoring in English language or applied linguistics, 40 faculty members with at least 2 years’ experience using AI assessment tools, and 20 administrators responsible for educational-technology policy and quality assurance. Recruitment employed purposive sampling through institutional email lists; of 250 invitations sent, 182 responses were received (72.8% response rate), with 180 complete surveys retained for analysis. Student participants ranged in age from 18 to 35 years (

Instruments

The instrument consisted of a four-part questionnaire, developed in English and translated into Arabic. The first section, a Transparency Scale (10 items; 5-point Likert from 1 = “Strongly Disagree” to 5 = “Strongly Agree”), assessed clarity of AI scoring processes (e.g., “I understand how the AI system computes my writing scores”). The second section included Bias and Appeal Items (four yes/no questions) exploring whether participants perceived unfair scoring and whether they attempted appeals. The third section, Legal-Awareness Items (five multiple-choice questions), evaluated knowledge of the Saudi Personal Data Protection Law (PDPL) and the SDAIA AI Ethics Principles. Finally, Open-Ended Prompts invited recommendations for policy and technical improvements.

Three domain experts rated each item for content validity (Lawshe’s CVR ≥0.78; Lawshe, 1975). The original English-language questionnaire was translated into Arabic by two bilingual linguistics experts, followed by a back-translation into English conducted independently by two additional linguistics experts to ensure semantic consistency. Discrepancies were resolved through discussion by an expert panel of three bilingual academic specialists in linguistics and assessment. Subsequently, a pilot test involving 20 bilingual participants was conducted, resulting in minor revisions to enhance clarity. The final 10-item Transparency Scale achieved good reliability (Cronbach’s α = .82), the value also reported for the final Arabic version of the questionnaire.
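For readers unfamiliar with the two indices reported above, the sketch below shows how Lawshe's content validity ratio and Cronbach's alpha are conventionally computed. It is an illustration only: the function names and toy responses are assumptions, the sample variance (n − 1 denominator) is used throughout, and nothing here reproduces the study's actual item ratings or survey data.

```python
from statistics import variance


def content_validity_ratio(n_essential, n_experts):
    """Lawshe's CVR = (n_e - N/2) / (N/2).

    n_essential: experts rating the item "essential"; n_experts: panel size.
    """
    half = n_experts / 2
    return (n_essential - half) / half


def cronbach_alpha(responses):
    """Cronbach's alpha for a list of respondents, each a list of item scores.

    alpha = k / (k - 1) * (1 - sum(item variances) / variance(total scores))
    """
    k = len(responses[0])                    # number of items
    items = list(zip(*responses))            # transpose: one tuple per item
    item_var_sum = sum(variance(col) for col in items)
    total_var = variance([sum(row) for row in responses])
    return (k / (k - 1)) * (1 - item_var_sum / total_var)


# Hypothetical example: all three experts rate an item "essential".
cvr_all_agree = content_validity_ratio(3, 3)

# Hypothetical toy responses (4 respondents x 3 items) in which every item
# gives the same score, so the items are perfectly consistent.
toy_responses = [[1, 1, 1], [2, 2, 2], [3, 3, 3], [4, 4, 4]]
alpha_toy = cronbach_alpha(toy_responses)
print(cvr_all_agree, round(alpha_toy, 3))
```

The toy responses are deliberately degenerate so the result is easy to verify by hand; real Likert data would yield an alpha below 1.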

Data Collection

The researcher administered the survey through

For quantitative analysis, descriptive statistics (percentages, means, standard deviations) summarized key variables, and Pearson correlation coefficients assessed associations among transparency, bias (coded 1 = bias experienced; 0 = no bias), and legal-awareness (total correct responses), with statistical tests conducted in IBM SPSS Statistics 27 (IBM Corp., 2020). A significant negative correlation between transparency and bias (

Qualitative responses (

To minimize any potential risk of harm, the study design focused on non-invasive procedures—namely, the voluntary and anonymous completion of an online questionnaire. No sensitive personal information (e.g., names, student IDs, contact details) was collected. Participants were explicitly informed that their responses would remain confidential, analyzed in aggregate form only, and used solely for academic research purposes. No deception or manipulation was involved.

The potential benefits of the research—including promoting fairness, transparency, and trust in AI-mediated educational assessment—outweigh any minimal risks, as the study offers insights that may lead to improved practices and policies that directly benefit students, faculty, and institutional governance.

Ethics and Informed Consent Statement

Ethical approval for this research was granted by the [XXX Institutional Ethical Committee] (Approval Code: ethics 2024-0456). Informed consent was obtained from all participants prior to their participation in the study. The online survey included a digital consent form that explained the purpose, procedures, and voluntary nature of the research. Participants were assured of their right to withdraw at any time without penalty, and that their responses would remain anonymous and confidential. No personally identifying information was collected, and the study followed all principles outlined in the Declaration of Helsinki and the Saudi PDPL (Royal Decree M/148, 2023). This study posed minimal risk to participants and involved only non-invasive procedures.

Data Analysis Procedures

Descriptive statistics, including means, standard deviations, and percentages, were computed to summarize participant responses. Pearson correlation coefficients were calculated to examine relationships between transparency, bias experiences, and legal-awareness. One-way ANOVA tests were conducted to identify differences in transparency ratings across stakeholder groups (students, faculty, administrators), followed by Tukey’s HSD post hoc analysis for subgroup comparisons. Chi-square tests assessed categorical differences in bias perceptions between groups. Hierarchical logistic regression models were then employed to investigate predictors of perceived bias, with stakeholder role entered as a control and transparency perceptions and legal-awareness entered as predictors. Model robustness was evaluated through multicollinearity checks (Variance Inflation Factor [VIF]), Hosmer–Lemeshow tests for goodness-of-fit, and residual diagnostics, including Cook’s distance and leverage values. Qualitative data from open-ended responses underwent thematic analysis following Braun and Clarke’s (2006) six-phase method, with inter-coder reliability (κ) computed to check coding consistency.
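The study's analyses were run in SPSS; the following standard-library sketch is offered only to illustrate two of the procedures described above: a Pearson correlation between a rating and a 0/1 bias indicator, and the F statistic of a one-way ANOVA. Function names and all numbers are hypothetical toy values, and Tukey's HSD, the chi-square tests, and the logistic regression are not covered here.

```python
from math import sqrt
from statistics import mean


def pearson_r(x, y):
    """Pearson correlation; with a 0/1 variable this is the point-biserial r."""
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)


def one_way_anova_f(groups):
    """Return (F, df_between, df_within) for a list of score lists."""
    all_scores = [s for g in groups for s in g]
    grand = mean(all_scores)
    # Between-group sum of squares: group size times squared mean deviation.
    ss_between = sum(len(g) * (mean(g) - grand) ** 2 for g in groups)
    # Within-group sum of squares: deviations from each group's own mean.
    ss_within = sum((s - mean(g)) ** 2 for g in groups for s in g)
    df_b = len(groups) - 1
    df_w = len(all_scores) - len(groups)
    return (ss_between / df_b) / (ss_within / df_w), df_b, df_w


# Hypothetical toy data: transparency ratings (1-5) and bias coded 1/0.
transparency = [1, 2, 3, 4, 5, 2, 4, 5]
bias = [1, 1, 1, 0, 0, 1, 0, 0]
r = pearson_r(transparency, bias)

# Hypothetical transparency ratings for three stakeholder groups.
f_stat, df_between, df_within = one_way_anova_f([[4, 5, 4], [3, 4, 3], [2, 2, 3]])
print(round(r, 3), round(f_stat, 2), df_between, df_within)
```

In the toy data, higher ratings coincide with fewer bias reports, so the coefficient is negative; the sign convention matches the coding described earlier (1 = bias experienced).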

Results

In this section, we present key findings structured as follows: (a) participant demographic characteristics; (b) descriptive findings on transparency ratings, perceived bias, and legal awareness; (c) correlational analyses between transparency, bias, and legal awareness; (d) stakeholder group comparisons; (e) logistic regression results for predicting perceived bias; and (f) model diagnostics and validation.

Participant Demographic Characteristics

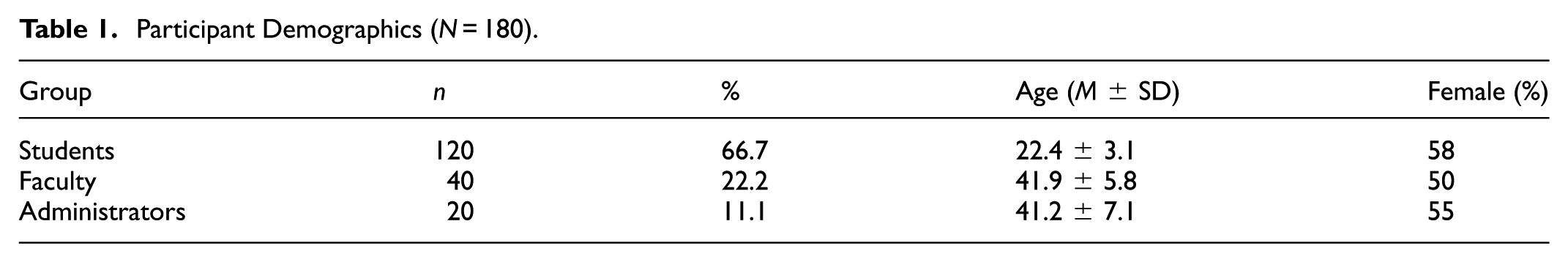

A total of 180 participants were surveyed, representing diverse perspectives across Saudi universities. The sample was composed primarily of students (

Participant Demographics (

Descriptive Findings: Transparency, Bias Experience, and Legal Awareness

Key variables examined in this study include transparency ratings, perceived bias, appeal attempts, and legal-awareness. Participants reported moderate transparency levels with an average transparency rating of 3.87 (

Descriptive Statistics for Primary Variables.

The distribution of transparency ratings is shown in Figure 1: over half of the participants rated transparency as moderate to high, although a substantial proportion (15.6%) reported significant confusion about AI scoring processes.

Figure 1. Distribution of transparency ratings.

Correlational Analysis: Transparency, Bias, and Legal Awareness

Pearson correlations revealed meaningful relationships among the key constructs (see Table 3). Transparency ratings were strongly and negatively correlated with perceived bias (

Table 3. Pearson Correlations Among Key Variables.

Subgroup Differences Based on Stakeholder Roles

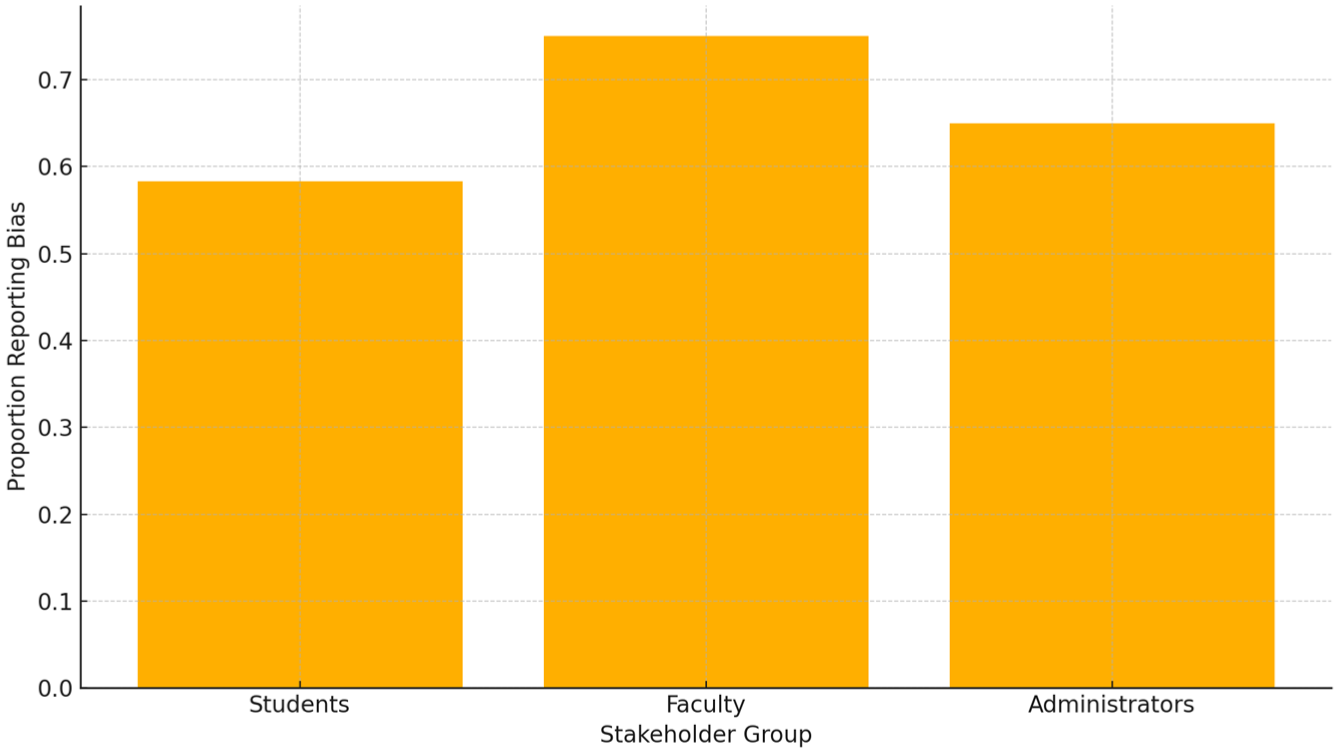

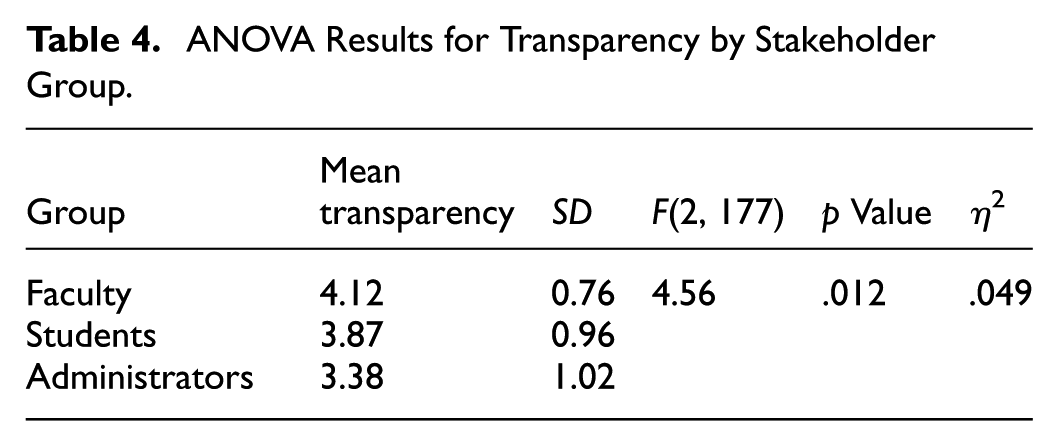

One-way ANOVA revealed significant differences among stakeholder groups in transparency ratings (

Figure 2. Proportion reporting bias by stakeholder group.

ANOVA Results for Transparency by Stakeholder Group.

Figure 2 shows the proportion of respondents in each stakeholder group who perceived bias.

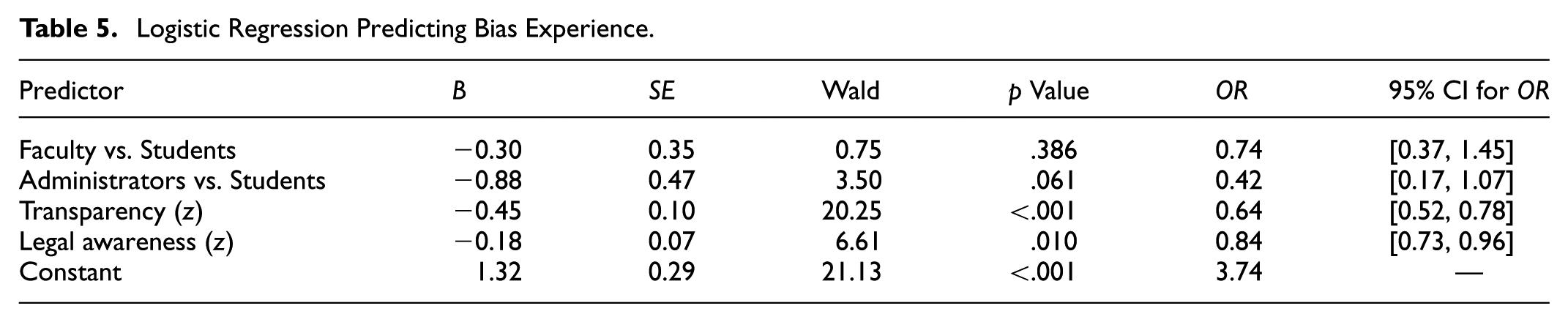

Multivariate Logistic Regression Results Predicting Perceived Bias

Hierarchical logistic regression analyses clarified the predictors of perceived bias (Table 5). Controlling for stakeholder group, both transparency (

Table 5. Logistic Regression Predicting Bias Experience.

Figure 3 shows the predicted probability of perceived bias as a function of transparency rating.

Predicted probability of perceived bias by transparency
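As an aid to reading a predicted-probability plot of this kind, the sketch below shows how a fitted logistic model converts predictor values into a probability. The intercept and coefficients are hypothetical placeholders chosen for easy arithmetic; they are not the estimates reported in this study.

```python
from math import exp


def predicted_probability(intercept, coefs, values):
    """Logistic model: p = 1 / (1 + exp(-(b0 + sum(b_i * x_i))))."""
    logit = intercept + sum(b * x for b, x in zip(coefs, values))
    return 1 / (1 + exp(-logit))


# Hypothetical coefficients (NOT the study's estimates):
# intercept, then slopes for transparency rating and legal-awareness score.
B0 = 3.0
B_TRANSPARENCY = -1.0
B_LEGAL = -0.3

# Probability of perceiving bias across transparency ratings 1-5,
# holding the legal-awareness score at 0.
curve = [
    predicted_probability(B0, [B_TRANSPARENCY, B_LEGAL], [rating, 0])
    for rating in range(1, 6)
]
for rating, p in zip(range(1, 6), curve):
    print(rating, round(p, 3))

# exp(slope) is the odds ratio for a one-point increase in the predictor.
odds_ratio_transparency = exp(B_TRANSPARENCY)
```

A negative slope yields a monotonically decreasing curve, which is the general shape one would expect when transparency is a negative predictor of perceived bias.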

Model Diagnostics and Validation

Model diagnostics confirmed robustness and validity. Multicollinearity (VIF between 1.02 and 1.31), Hosmer–Lemeshow test (χ²(8) = 6.12,

Our findings collectively reveal that transparency and legal awareness significantly mitigate perceived bias in AI-mediated assessments. Stakeholder groups differ markedly in perceptions of transparency and bias, highlighting the necessity for targeted transparency interventions and legal awareness programs tailored to different stakeholder groups. These results underscore transparency’s critical role in enhancing trust in AI-driven educational assessments, especially within the context of Saudi higher education.

Discussion

This study set out to examine how procedural clarity (transparency) and stakeholder legal-awareness influence perceptions of fairness in AI-mediated language assessment within Saudi higher education. Drawing on data from 180 students, faculty, and administrators across five universities, our findings reveal three key insights: (a) transparency of scoring processes is a robust negative predictor of perceived algorithmic bias; (b) legal awareness of the Saudi PDPL and SDAIA Ethics Principles further attenuates bias perceptions; and (c) stakeholder role moderates transparency perceptions, with administrators reporting the lowest clarity. Below, the researcher interprets these results in light of existing AI-fairness and accountability frameworks, discusses practical and policy implications, acknowledges study limitations, and proposes avenues for future research.

These findings provide direct responses to the guiding research questions and offer interpretive depth from an applied linguistics perspective. In response to RQ1, participants reported moderate levels of transparency, with notable variation across stakeholder roles. This aligns with research suggesting that perceived transparency in assessment systems directly impacts language learners’ motivation, trust, and willingness to engage in feedback loops (Carless, 2009; Hamp-Lyons, 2007). Regarding RQ2, the strong negative correlation between transparency and perceived bias reinforces the idea that clearly communicated assessment criteria and scoring processes can mitigate learners’ sense of unfairness—a central concern in second language assessment contexts where test-takers are highly sensitive to procedural justice (Kunnan, 2000). For RQ3, the observed low levels of legal awareness indicate a disconnect between institutional policy and individual understanding, echoing concerns raised by scholars about students’ limited access to metacognitive strategies in technology-mediated language testing (Hamp-Lyons, 2007). Finally, RQ4 revealed that both transparency and legal awareness significantly predicted perceived bias, even when accounting for stakeholder role. This suggests that procedural clarity and data rights literacy are not just governance concerns, but core elements of assessment literacy in applied linguistics (Fulcher, 2012; Inbar-Lourie, 2008). These results affirm that building transparent and legally informed AI-mediated assessment systems contributes directly to equitable and sustainable second language evaluation practices.

Transparency as a Cornerstone of Fairness

Consistent with theoretical models of “fairness through awareness” (Wachter et al., 2017) and empirical findings in automated scoring research (Floridi & Cowls, 2021; Williamson, 2020), our strong negative correlation (

Legal-Awareness as a Partial Shield

Our results extend the accountability literature by demonstrating that legal awareness—knowledge of data-protection rights under the PDPL and ethical guidelines from SDAIA—provides a modest but significant reduction in bias perceptions (

Role-Based Variations and Organizational Implications

The significant role differences in transparency perceptions (

The results of this study strongly support and extend the theoretical propositions of the fairness-through-awareness framework (Wachter et al., 2017) by demonstrating empirically that increased transparency in AI-mediated language assessments significantly reduces stakeholder perceptions of bias. According to this framework, transparency does not simply involve disclosure of algorithmic processes, but entails providing meaningful explanations that enable stakeholders to critically evaluate and trust the outcomes. Our findings provide clear evidence of this principle: participants who reported higher levels of understanding regarding AI scoring exhibited notably lower concerns about bias, affirming the critical role transparency plays in fostering fairness perceptions.

Moreover, the findings closely align with Diakopoulos’s (2016) accountability framework, highlighting both procedural transparency and substantive accountability as vital dimensions for the ethical use of algorithmic systems. The observed relationship between legal-awareness and bias perceptions underscores the necessity for explicit accountability mechanisms—including legal safeguards, clearly defined appeal procedures, and robust institutional oversight. Our results illustrate that higher levels of stakeholder awareness regarding their data-protection rights and available appeal mechanisms significantly enhance perceived fairness and trust in AI-driven assessments.

These theoretical connections are especially relevant within the Saudi educational context, given the Kingdom’s explicit commitment under Vision 2030 and associated frameworks (PDPL and SDAIA’s guidelines) to ensure ethical and transparent AI governance. By empirically validating these theoretical claims within this regional setting, the study contributes valuable insights to the broader international discourse on AI accountability and fairness. Consequently, our findings highlight the importance of incorporating explicit transparency measures and clear accountability structures into educational policies, thus ensuring sustainable, equitable, and trusted AI integration into language assessment contexts.

Generalizability of Results Based on Theoretical Framework

While our study was conducted within Saudi Arabia, its results align closely with theoretical propositions of fairness-through-awareness and algorithmic accountability, indicating potential applicability beyond this regional context. The correlation between transparency and reduced bias perceptions is consistent with the broader principle that clarity and explainability in AI decisions enhance trust. Similarly, the impact of legal-awareness underscores the broader importance of educating stakeholders about their rights in AI-mediated processes globally. Hence, although localized, the findings have wider theoretical and practical implications for institutions worldwide that implement AI tools for educational assessment.

This study carries important implications for applied linguists and language assessment practitioners. In high-stakes EFL contexts such as Saudi Arabia, students often face challenges in expressing culturally appropriate or idiomatic language that aligns with native-trained AI scoring rubrics. Low transparency may exacerbate learners’ anxiety and reduce trust in AI tools, ultimately affecting their motivation and performance. Language teachers should be equipped to mediate between students and opaque algorithmic systems, providing “translation” of scoring logic and scaffolding critical awareness of how language is evaluated. Additionally, institutional policies should ensure that AI tools are trained on linguistically diverse corpora, reflecting the syntactic, pragmatic, and rhetorical features of Saudi EFL writing, thereby reducing cultural-linguistic underrepresentation.

Practical and Policy Recommendations

Grounded in our findings, the researcher recommends a multipronged approach:

Institutions should consider focused transparency training specifically for administrative stakeholders. The findings provide strong empirical support for institutions and policymakers to prioritize transparency initiatives and targeted education on data-protection rights, clearly underscoring their practical importance in ensuring ethical AI usage.

This study also contributes to the field of applied linguistics by contextualizing stakeholder perceptions within the sociolinguistic realities of Saudi EFL education, where AI-mediated writing and speaking assessments are increasingly shaping students’ academic and professional trajectories.

Limitations and Future Directions

While our cross-sectional design offers a valuable snapshot of stakeholder perceptions, causal pathways between transparency, legal-awareness, and bias experience cannot be definitively established. Longitudinal studies or experimental interventions (e.g., randomized transparency enhancements) could more rigorously test causality. Additionally, our reliance on self-reported measures may introduce social-desirability bias; embedding unobtrusive behavioral indicators (e.g., appeal-submission logs) would strengthen inferences. Future research should also explore interactions with demographic factors (e.g., language proficiency, prior AI exposure) and evaluate the effectiveness of specific transparency tools in real-world pilot implementations.

Operationalizing Explainability for Non-Technical Users

While tools like LIME (Local Interpretable Model-Agnostic Explanations) and SHAP (SHapley Additive exPlanations) are well-established in AI research, their practical value in education hinges on making their outputs accessible to non-technical users. For example, in AI-based essay scoring platforms, LIME could be integrated into the user interface to visually highlight which features (e.g., vocabulary diversity, argument structure) most influenced a student’s score. Similarly, SHAP values could be simplified into a color-coded dashboard showing positive or negative contributions of different essay components to the overall grade. These interpretive summaries could be embedded within student feedback portals and teacher dashboards, empowering both groups to understand, trust, and improve based on AI assessments without needing technical expertise.
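As a rough illustration of the color-coded summary described above, the following Python sketch turns per-feature contributions into plain-language dashboard rows. It is not the method of any particular scoring platform: the linear scoring model, its weights, the cohort averages, the feature names, and the helper functions `linear_contributions` and `to_dashboard` are all hypothetical. For a linear model with independent features, the SHAP value of a feature reduces to its weight times the feature's deviation from the average (Lundberg & Lee, 2017), which lets the sketch avoid any external library; a real deployment would more likely obtain these values from an explainer fitted to the actual scoring model.

```python
from dataclasses import dataclass

# Hypothetical linear essay-scoring model. The weights and cohort averages
# are illustrative assumptions, not values from the study or a real platform.
WEIGHTS = {
    "vocabulary_diversity": 2.0,
    "argument_structure": 3.0,
    "grammar_errors": -1.5,
}
COHORT_MEAN = {
    "vocabulary_diversity": 0.5,
    "argument_structure": 0.6,
    "grammar_errors": 2.0,
}


@dataclass
class DashboardRow:
    feature: str
    points: float  # signed contribution to the score, in score points
    color: str     # "green", "red", or "grey"
    message: str   # plain-language explanation shown to the user


def linear_contributions(essay):
    """Per-feature contributions for a linear model with independent features.

    Under those assumptions the SHAP value of feature i is
    weight_i * (x_i - mean_i).
    """
    return {f: WEIGHTS[f] * (essay[f] - COHORT_MEAN[f]) for f in WEIGHTS}


def to_dashboard(contributions, threshold=0.1):
    """Map signed contributions to color-coded, non-technical messages.

    Rows are ordered by absolute contribution, largest first. Contributions
    within +/- threshold are shown as neutral (grey).
    """
    rows = []
    ordered = sorted(contributions.items(), key=lambda kv: -abs(kv[1]))
    for feature, points in ordered:
        if points > threshold:
            color, verb = "green", "raised"
        elif points < -threshold:
            color, verb = "red", "lowered"
        else:
            color, verb = "grey", "barely changed"
        label = feature.replace("_", " ").capitalize()
        rows.append(DashboardRow(feature, points, color, f"{label} {verb} your score."))
    return rows


if __name__ == "__main__":
    # Hypothetical feature values extracted from one student's essay.
    essay = {
        "vocabulary_diversity": 0.7,
        "argument_structure": 0.4,
        "grammar_errors": 2.0,
    }
    for row in to_dashboard(linear_contributions(essay)):
        print(f"[{row.color:>5}] {row.message}")
```

Ordering rows by magnitude puts the most influential feature first, and the neutral band (an arbitrary threshold here) keeps negligible contributions from being presented as meaningful gains or losses.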

Conclusion

This study offers a multifaceted examination of fairness and accountability in AI-mediated language assessment within the Saudi higher-education context. By integrating empirical data on stakeholder perceptions with rigorous statistical modeling and policy analysis, the researcher has demonstrated that procedural transparency and legal-rights awareness play pivotal roles in shaping user trust and fairness judgments. Specifically, clearer explanations of AI scoring processes substantially reduce perceived bias (

Our findings carry both practical and policy significance. Technically, embedding explainable-AI modules (e.g., LIME, SHAP) and user-centered decision summaries into assessment platforms can yield large gains in perceived fairness, particularly when moving stakeholders from minimal to moderate transparency levels (Lundberg & Lee, 2017; Ribeiro et al., 2016). Institutionally, cross-sectoral AI Governance Committees should be established to ensure that administrators, faculty, and students share a common understanding of system design, performance metrics, and recourse channels (Kroll et al., 2017). From a regulatory standpoint, sector-specific PDPL implementing regulations should mandate regular fairness audits and public reporting of bias-mitigation efforts as criteria for accreditation, thus operationalizing SDAIA’s non-binding Ethics Principles into enforceable standards (SDAIA, 2023).

Moreover, our research resonates with broader international imperatives to ensure trustworthy AI in education. UNESCO (2021) has underscored the need for policies that balance innovation with equity; our work provides concrete, data-driven pathways to achieve this balance. Aligning with Saudi Vision 2030’s emphasis on digital transformation (Saudi Vision 2030, 2020), these recommendations support the Kingdom’s ambition to become a global leader in ethical AI deployment.

From an applied linguistics perspective, this study highlights the importance of aligning AI assessment tools with culturally and linguistically responsive practices. Ensuring transparency in algorithmic scoring for EFL learners is not only a technical imperative but also a pedagogical one—critical for maintaining motivation, fairness, and trust among diverse language users in digitally mediated educational contexts.

Looking ahead, longitudinal and experimental studies should evaluate the causal impact of transparency and legal-rights interventions on bias perceptions and learning outcomes. Investigations into demographic moderators, such as language proficiency and AI-tool familiarity, will further refine targeted strategies. Ultimately, by marrying rigorous empirical research with thoughtful technology governance, Saudi higher education can not only safeguard student equity but also serve as a model for AI fairness and accountability in language assessment worldwide, supporting the sustainability of AI-mediated assessment. By quantifying transparency’s protective effect and the partial buffer of legal-awareness, this research advances AI & Law theory and informs Saudi Vision 2030’s digital-equity ambitions.

Footnotes

Acknowledgements

We would like to acknowledge all the people who facilitated this project, including administrators, faculty members, family members, and the research participants, for their cooperation. Special thanks go to Alanoud Alwasmi for her great contribution to this study, and to Hiam Al Fraidan for her continuous support.

Ethical Considerations

This study received ethical approval from the Research Ethics Committee at King Faisal University (Approval Code: KFU024-0456), and all procedures conformed to the Declaration of Helsinki and Section 8.05 of the APA Ethical Principles. To minimize potential risks, only anonymous online surveys were used. No identifying information was collected. Participants were fully informed of their rights, including voluntary participation and withdrawal at any time without penalty.

Consent to Participate

Informed consent was obtained electronically prior to participation. Data were collected and stored in compliance with the Saudi Personal Data Protection Law (PDPL).

Author Contributions

Abdullah Al Fraidan: Conceptualization, Methodology, Data Collection, Formal Analysis, Writing – Original Draft, Writing – Review & Editing, Supervision, Project Administration.

Funding

The author disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was funded and supported by the Deanship of Scientific Research, Vice Presidency for Graduate Studies and Scientific Research, King Faisal University, Saudi Arabia [Grant KFU253791].

Declaration of Conflicting Interests

The author declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

The datasets generated and analyzed during the current study are available from the corresponding author on reasonable request.