Abstract

This study explores the integration of generative AI (GAI) tools in second language acquisition (SLA) through an extended Technology Acceptance Model (TAM). Focusing on Saudi and Korean English language learners (ELLs), it examines the relationships among perceived ease of use, perceived usefulness, perceived benefits, and actual use (i.e., task-based behavior) of GAI in English as a foreign language (EFL) learning. While perceived usefulness captures the functional utility of GAI tools for specific language learning tasks, perceived benefits encompass broader educational outcomes, including enhanced language proficiency, greater academic support, and increased learner confidence. Data were collected from 681 participants (407 Saudi, 274 Korean) and analyzed using mean scores, correlation analysis, and structural equation modeling (SEM). The model demonstrated a satisfactory fit, explaining 79% of the variance in actual use and 70% of the variance in perceived benefits. Comparing models with and without perceived benefits revealed a 6% increase in explained variance (ΔR² = .06). Cross-cultural differences emerged in learner perceptions and adoption intentions, providing a deeper understanding of how students engage with GAI tools in language learning contexts. These findings offer practical insights for the effective integration of AI in SLA settings.

Plain Language Summary

This study looks at how generative AI (GAI) can help students from Saudi Arabia and South Korea learn English. It focuses on how easy it is to use these AI tools, how useful students find them, and how often they actually use them for learning. We gathered information from 681 students (407 from Saudi Arabia and 274 from South Korea) using surveys and statistical methods to see how these factors are connected. The results showed that GAI can greatly improve students’ English communication skills and that it has the potential to change the way languages are taught. We found that Saudi and Korean students have different experiences with these technologies, which suggests that culture and context play important roles in how students adopt new learning tools. Overall, the study highlights the benefits of using generative AI in language learning and offers advice on how to best integrate this technology into education. This research aims to help educators and institutions understand how to effectively use AI to support language learners.

Keywords

Introduction

The rapid integration of generative artificial intelligence (GAI) into higher education is reshaping the landscape of second language (L2) and English as a foreign language (EFL) learning and teaching. Tools such as ChatGPT and other large language models are now being explored for their potential to scaffold writing, improve vocabulary, and simulate conversation practice for English language learners (ELLs). GAI refers to artificial intelligence systems capable of generating original content, such as text, audio, and visuals, based on prompts and training data (Brown et al., 2020). Within the context of L2 pedagogy, these tools afford dynamic, personalized, and interactive learning environments that mirror authentic communicative tasks, offering new avenues for practice, feedback, and learner autonomy (Chen et al., 2020).

Although prior research has examined GAI’s technological capabilities and learner engagement in educational settings (Allen & Mizumoto, 2024; Guo & Wang, 2024; Song & Song, 2023), the theoretical understanding of how learners perceive and adopt GAI tools, especially across culturally and linguistically diverse contexts, remains underdeveloped. Most existing research is situated in Western higher education and overlooks the influence of sociocultural factors and L2 proficiency on learners’ acceptance and use of GAI tools (Liu & Ma, 2024; Yan, 2023). This gap is critical, as non-Western learners may hold different expectations regarding teachers, technology, and language learning.

To address this gap, the study examines university students in Saudi Arabia and South Korea, two high-context, collectivist cultures (Hofstede, 2001) that differ in educational systems, English policies, and technological environments. In both contexts, English is a strategic priority, tied to national goals of internationalization, academic competitiveness, and workforce development. Thus, the adoption of GAI is not solely a matter of convenience or novelty but one situated within broader educational and societal imperatives. However, learners’ willingness to adopt such tools may depend on their L2 proficiency, their perceived educational value of the technology, and how the tools align with their culturally rooted expectations for interaction and learning.

To explain the behavioral dynamics underlying GAI adoption, this study draws on the Technology Acceptance Model (TAM; Davis, 1989), a well-established framework in educational technology research. While TAM’s core constructs, perceived ease of use (PEOU) and perceived usefulness (PU), remain central, this study proposes an extended framework tailored to the L2 context. Specifically, it introduces perceived benefits as a theoretically grounded refinement of TAM’s “attitude” component. Whereas PU captures functional utility (e.g., improving task performance), perceived benefits encompass affective and developmental outcomes salient in language learning, such as increased confidence, skill development, and a sense of academic progress. This addition aligns with calls in second language acquisition (SLA) research to account for the motivational, emotional, and contextual dimensions of language learning in technology-mediated environments (Mekheimer, 2025).

Moreover, to examine sociocultural influences on technology adoption, the study draws on Hofstede’s (2001) dimensions, particularly uncertainty avoidance, collectivism, and power distance. Learners from high uncertainty avoidance cultures, like Saudi Arabia and South Korea, may prefer structured, predictable learning systems (Tarhini, 2016), while collectivist norms may influence learners to value technologies that support group cohesion or institutional success. Additionally, varying degrees of power distance may affect learners’ preferences for autonomous versus teacher-directed use of AI tools (Rabayah, 2019). These cultural dynamics are essential in interpreting cross-cultural variation in GAI adoption, and recent studies affirm that such factors meaningfully influence technology acceptance patterns (Mupaikwa & Bwalya, 2023).

Lastly, to further contextualize learner interaction with GAI, the study draws on insights from cross-cultural pragmatics. As House and Kádár (2024) argue, communication is shaped by culturally embedded norms regarding politeness, directness, and the interpretation of social cues. GAI tools, often trained on Western linguistic data, may generate outputs that conflict with learners’ cultural expectations for appropriateness or clarity. These pragmatic mismatches may influence not only learners’ satisfaction with AI-generated responses but also their overall trust and willingness to engage with the tool (Klimova & Hua Chen, 2024). Developing intercultural competence, therefore, becomes crucial in helping learners navigate these challenges and derive meaningful benefit from AI-enhanced L2 instruction.

By integrating TAM with perspectives from SLA, educational technology, and cultural theory, this study offers a comprehensive model for examining how learners from distinct cultural backgrounds evaluate and engage with GAI tools. It highlights the importance of moving beyond functional affordances to consider emotional, cultural, and pedagogical dimensions of AI adoption in global language learning contexts.

Literature Review

The Technology Acceptance Model

The TAM is a well-established framework for explaining how and why individuals choose to adopt and use a particular technology. Developed by Davis in 1989, the TAM posits that two key factors drive an individual’s decision to utilize a technology: PEOU and PU. PEOU refers to the degree to which a person believes that using the technology would be free of effort or difficulty, whereas PU, in a learning context, refers to a student’s perception of how a technology improves the efficiency and effectiveness of learning tasks. According to Davis (1989), PEOU directly influences PU, meaning that if a person perceives a technology to be easy to use, they are more likely to also perceive it as useful. Together, PEOU and PU shape an individual’s attitude toward the technology, which subsequently influences their behavioral intention to use it. This intention serves as the primary predictor of actual usage behavior. However, emerging cross-cultural studies suggest that the influence of PEOU and PU varies across sociocultural contexts, challenging TAM’s generalizability (Tarhini, 2016).

Attitude, as a mediating construct in the TAM, reflects a user’s overall evaluation of a technology based on cognitive and affective responses. In this study, perceived benefits are conceptualized as learners’ perceptions of affective and developmental outcomes, including improvements in language skills (vocabulary, grammar, writing, and reading), enhanced confidence in English use, academic progress (e.g., doing well in class), and motivation to engage with authentic English materials. These aspects extend beyond task-specific utility in L2 learning contexts (Guo & Wang, 2024). This reconceptualization also responds to critiques of TAM’s limited engagement with affective and value-laden learner responses, offering a more psychologically grounded account of technology acceptance in educational settings (Schorr, 2023).

Several studies have affirmed the validity of TAM in AI-based language learning (Barrot, 2023; Rong et al., 2024; Su et al., 2023; H. Wu et al., 2024; Zou & Huang, 2023). However, few studies go beyond measuring acceptance to interrogate why learners perceive these tools as useful or how these perceptions translate into academic gains. This gap suggests a need to extend TAM with constructs like perceived benefits to better capture learners’ value-based engagement (Guo & Wang, 2024).

More recently, scholars have noted that while TAM has been widely applied in educational technology, it does not fully account for the complexities of language learning. Calls for contextualized extensions emphasize affective, cultural, and pedagogical dimensions in L2 settings (Abdullah et al., 2024; Guo & Wang, 2024; Su et al., 2023).

Perceived Ease of Use

The construct of PEOU is particularly salient in the context of GAI, where ease of access and intuitive interfaces are often cited as key facilitators of users’ acceptance (Yang et al., 2024). Boudouaia et al. (2024) found improved usability perceptions among users, aligning with Ghafouri et al. (2024), who noted that GAI tools streamline writing and help students focus on key tasks. Vo and Nguyen (2024) and Rong et al. (2024) similarly echo these findings, showing that students who considered ChatGPT user-friendly also recognized its benefits for language acquisition. This suggests that technology perceived as accessible is often regarded as more beneficial (Qu & Wu, 2024; Shaikh et al., 2023) and is more likely to be actively engaged with (Ali et al., 2023).

Zou and Huang (2023) found that PEOU strongly predicted PU (B = 0.817), linking ease of use to perceived writing efficiency. In contrast, H. Wu et al. (2024) showed that while PEOU influenced both PU and attitude, only attitude predicted adoption intention, highlighting that the path from ease of use to adoption may depend on whether task performance or user disposition is emphasized. Notably, H. Wu et al. (2024) diverge from traditional TAM assumptions (Davis, 1989), finding that PU failed to predict either attitude or behavioral intention, which indicates that attitude, rather than PU, may play the stronger role in AI adoption in this context.

Perceived Usefulness

Language learning involves multiple skills, including listening, speaking, reading, and writing, all requiring mastery of vocabulary, grammar, and syntax. PU of GAI tools in supporting these skills is gaining attention, though their precise impact on measurable learning outcomes remains uncertain (Barrot, 2023). Recent studies recognize GAI’s value in reducing the time and effort needed for tasks such as vocabulary building, grammar correction, and comprehension, thus enhancing learning efficiency (Lund et al., 2023; Yan, 2023). Barrot (2023) also views ChatGPT as a practical writing assistant, offering immediate feedback on grammar and syntax, but advises against overreliance, stressing the importance of original drafting to ensure deeper learning.

Extending Barrot’s (2023) functional framing, Su et al. (2023) illustrated ChatGPT’s usefulness in supporting students with key academic tasks such as outlining, revising, proofreading, and reflecting on their work. These capabilities demonstrate how students perceive the tool as useful for enhancing the quality and efficiency of their work. Building on this, Allen and Mizumoto (2024) note that students predominantly favor AI technology for editing and proofreading, reporting that it provides effective feedback that enhances clarity and cohesion in their writing and expressing preferences for specific prompts to improve their work.

Moreover, GAI tools facilitate collaborative learning and minimize errors, making them valuable in both academic and professional contexts (Geher, 2023; Holmes & Tuomi, 2022). The ability of GAI to suggest innovative ideas, themes, and perspectives is closely aligned with the PU construct, providing essential support throughout the language learning process (Kasneci et al., 2023). This functionality broadens learners’ perspectives and supports new directions in language learning, enhancing the quality of academic work. Such personalized AI support enhances engagement and supports meaningful, interactive language learning, keeping learners actively involved and motivated (Fauzi et al., 2023; Javaid et al., 2023).

Additionally, GAI tools’ ability to translate text across languages further contributes to their PU by ensuring accuracy and grammatical correctness, especially for students writing in a non-native language (Lund et al., 2023; Stockwell, 2024). Furthermore, GAI platforms like ChatGPT may be effective at reducing L2 apprehension commonly associated with lower L2 proficiency students (Hayashi & Sato, 2024), making it a valuable resource for those who struggle with language confidence. The tool’s capacity to produce accurate and consistent content by drawing on a vast database of information establishes it as a reliable resource, further reinforcing its PU (Imran & Almusharraf, 2023).

Perceived Benefits With GAI

While PU and perceived benefits may appear conceptually similar, this study distinguishes them as separate constructs within the TAM framework. PU focuses on how effectively AI chatbots enhance specific tasks, including brainstorming, grammar checking, and text revision, targeting task-specific utility rather than broader user attitudes. In contrast, perceived benefits with GAI reflect users’ value-based evaluations, including outcomes such as increased learner confidence and academic support. Recognizing the difference between these concepts helps in evaluating AI tools’ effectiveness and guiding improvements to better support language learners.

In this study, perceived benefits refer to broader learner outcomes beyond the task-specific focus of PU, including motivation, confidence, and academic support. While PU reflects functional utility, perceived benefits capture affective dimensions that shape how learners internalize the long-term value of GAI (Shoufan, 2023). Conceptually, perceived benefits serve as a refined extension of the “attitude” component in the original TAM, aligning with the notion that attitudes toward technology include not only utility but also emotional and motivational responses (Davis, 1989). Building on Shoufan’s distinction, Vo and Nguyen (2024) found that students held positive attitudes toward ChatGPT in language learning, highlighting helpfulness as a central factor influencing their perceptions. Within the attitude dimension, learners valued ChatGPT’s supportive features as a complement to traditional methods. Similarly, Shaikh et al. (2023) and Ali et al. (2023) highlight its perceived helpfulness in idea generation, language clarification, and engagement. Vo and Nguyen (2024) also apply Shoufan’s (2023) framework, framing helpfulness as a key affective component, reinforcing the inclusion of perceived benefits in extended TAM models.

Actual Use

Actual use refers to the extent to which users engage with a technology or system in practice. It reflects the real-world application of the technology by users, as opposed to their intentions or attitudes toward it (Davis, 1989). Alfadda and Mahdi (2021) identify actual use as a critical outcome variable in TAM, as it ultimately determines the success and effectiveness of the technology in fulfilling its intended purpose. Empirical studies by Barrot (2023) and Su et al. (2023) reinforce this view in the context of GAI, illustrating how students engage with AI tools not just for basic text generation but for a range of higher-order writing functions, including feedback, explanation, vocabulary development, coherence, and revision. These applications reflect the practical depth and pedagogical relevance of actual use in language learning, aligning closely with TAM’s emphasis on usage as a key metric of adoption and impact.

The actual use of GAI in educational settings has been documented with favorable learning outcomes. Guo and Wang (2024) observed that ChatGPT delivered significantly more feedback than traditional teacher input, which primarily focused on content-related issues. This increased feedback capacity suggests that students may benefit from more comprehensive evaluations of their work. Furthermore, AI assistance has been linked to improvements in writing motivation, self-efficacy, and collaborative tendencies (Teng, 2024). These outcomes underscore the need to view actual use not merely as tool utilization but as a complex interplay of technological affordances, learner psychology, and culturally influenced expectations.

L2 Proficiency and the TAM

Prior research suggests that L2 proficiency influences learners’ perceptions of technology use. Garcia and Pena (2011) and Niño (2009) found that lower-proficiency learners often rely on external scaffolding and technological aids for comprehension and production, making GAI tools especially useful due to their immediate, personalized support. In contrast, Lai and Gu (2011) observed that higher-proficiency learners tend to exhibit stronger metalinguistic awareness and self-regulation strategies, which may reduce their reliance on technology like GAI and lead to lower perceived usefulness. Together, these studies suggest that PU may vary across proficiency levels depending on learners’ cognitive and strategic resources.

Findings regarding PEOU are more mixed. Some studies suggest that factors like digital literacy and learner autonomy can mediate the relationship between language proficiency and ease of use (Reinders & White, 2016). However, the intuitive, conversational nature of GAI tools likely minimizes usability barriers. Recent research shows that language-learning chatbots are highly usable across proficiency and technical skill levels. A systematic review by Huang et al. (2022) found that “technological affordances” of educational chatbots consistently include “ease of use,” underscoring their user-friendly design. Similarly, a recent pilot study involving a conversational chatbot with foreign-language learners (Běláková & Klimová, 2024) found that both novice and more proficient students reported high usability and accessibility, with minimal differences in perceived effort. These findings suggest that GAI tools offer a stable user experience across diverse learners.

Lower L2 proficiency may also contribute to more favorable attitudes toward GAI tools, as these learners often associate tool use with increased confidence and immediate language support (Ali et al., 2023; Barrot, 2023; Cai et al., 2024). These learners frequently engage with GAI to complete tasks such as drafting, revising, or exploring vocabulary, thereby demonstrating higher task-based usage (Al-Zumor et al., 2013). This reliance on supportive feedback and scaffolding suggests that lower-proficiency students may not only perceive greater usefulness but also turn to these tools more frequently for targeted academic tasks. Based on this, the current study hypothesizes that L2 proficiency will predict PU but not significantly influence PEOU.

Cross-Cultural Technology Acceptance Research

Despite the increasing global reliance on digital tools for language education, there remains a limited number of empirical studies that explicitly adopt a comparative cross-cultural perspective. Existing research has demonstrated that while technology can enhance motivation, engagement, and linguistic outcomes, its effectiveness often varies depending on cultural and contextual factors. For instance, a comparative study between Malaysian and German learners of Mandarin Chinese found that technology played a key role in supporting language acquisition, with cultural differences influencing motivation and perceived usefulness (Abdullah et al., 2024). Similarly, P.-H. Wu and Marek (2016) reported a successful implementation of mobile-assisted language learning using the LINE app in a study involving Japanese and Taiwanese students, where cross-cultural collaboration significantly contributed to the development of communication skills.

In another study, Pradana et al. (2022) conducted a cross-cultural analysis involving learners from Russia, Indonesia, and Egypt, revealing that educational technologies enhanced language learning engagement and learner autonomy, though cultural expectations and pedagogical practices varied. Viberg and Grönlund (2013) examined students in Sweden and China, noting generally positive attitudes toward mobile technologies for language learning. However, their findings highlighted that individual-level factors, particularly gender, were more predictive of students’ attitudes than Hofstede’s cultural dimensions, suggesting that cultural generalizations alone may not fully capture learners’ technology acceptance behaviors.

Adding to this, Almusharraf and Bailey (2023a, 2023b) conducted a cross-cultural study comparing EFL students in Saudi Arabia and South Korea regarding their perceptions and practices related to machine translation tools. Utilizing the TAM, the research focused on PU, PEOU, and user attitudes to predict the actual use and future intentions for machine translation tools. Their findings revealed both shared and divergent patterns in technology use, highlighting how collectivist cultural orientations, pragmatic norms, and L2 proficiency interact with constructs like perceived usefulness and actual use in different cultural settings. Fajaruddin et al. (2024) further emphasized that the efficacy of technology-enhanced language learning is influenced by several contextual variables, including geographic location, methods of proficiency assessment, and the specific technologies employed. Their findings underscore the importance of tailoring digital language learning programs to the specific cultural and regional contexts in which they are implemented.

Proposed Study

Building on the theoretical foundations reviewed above, the present study investigates how ELLs engage with GAI tools through the lens of an extended TAM. Specifically, the study examines how learners’ PEOU and PU influence their perceptions of broader educational value (perceived benefits) and actual use of GAI in L2 contexts. In addition, the study considers the role of L2 proficiency as an external antecedent to PU and PEOU, recognizing that language skill level may shape how learners perceive and interact with AI-driven support systems.

The study also explores cultural variation by comparing students in Saudi Arabia and South Korea, two high-context, collectivist societies with differing educational systems and digital infrastructures. To capture both learner-level and cultural factors, the study adopts a cross-sectional, cross-cultural design and tests the hypothesized structural model using structural equation modeling. The research is guided by the following questions:

Research Question 1

How do perceived ease of use and perceived usefulness influence the perceived benefits and actual use of GAI among English language learners, and how is L2 proficiency associated with these perceptions?

Research Question 2

How do the relationships among PEOU, PU, perceived benefits, and actual use differ between Saudi and Korean English language learners?

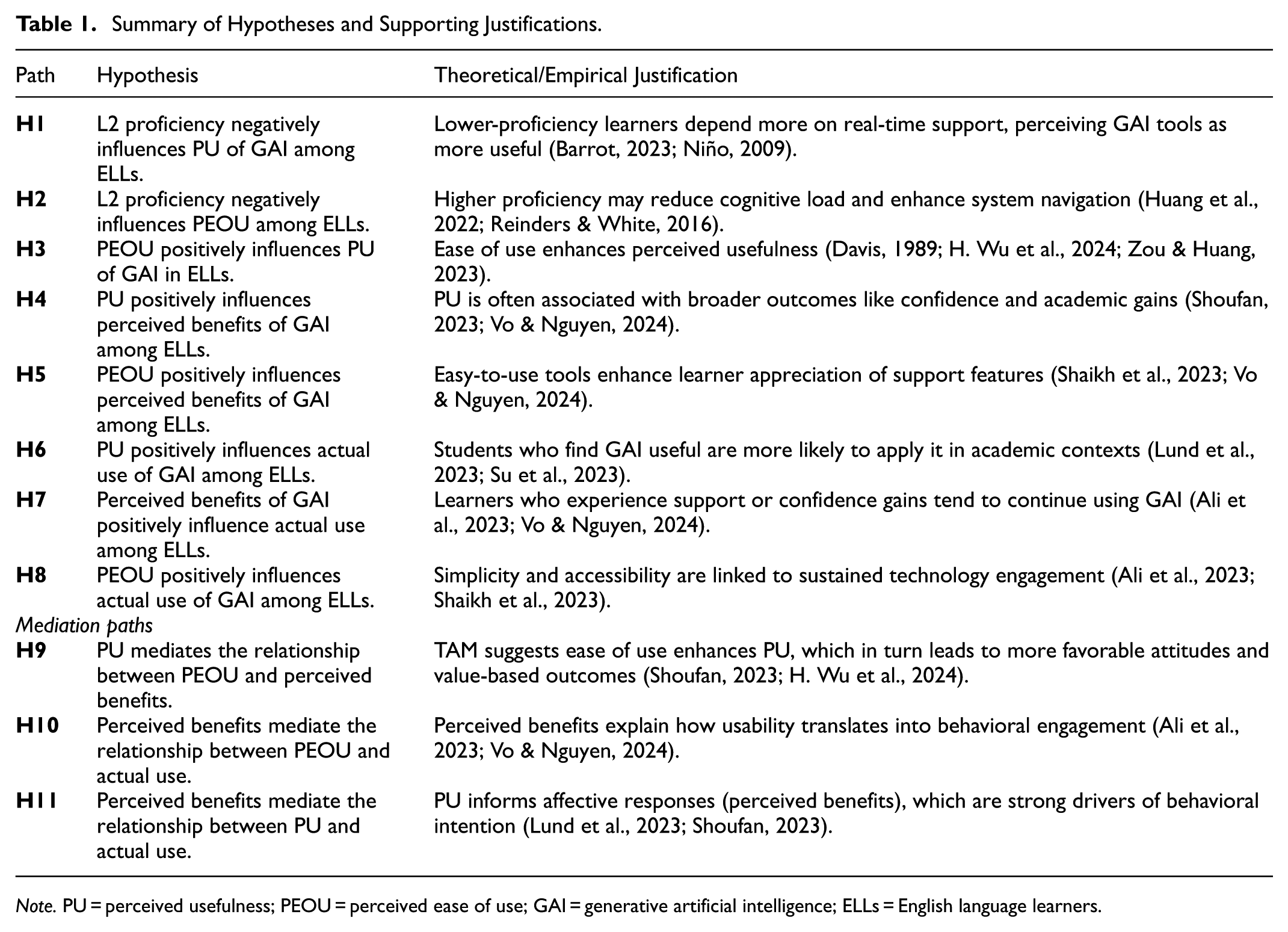

Based on prior research, the study proposes a set of direct and mediation hypotheses.

Summary of Hypotheses and Supporting Justifications.

Note. PU = perceived usefulness; PEOU = perceived ease of use; GAI = generative artificial intelligence; ELLs = English language learners.

Proposed model.

In the model, PU is defined as the learner’s perception of how GAI supports specific academic tasks such as L2 writing, content generation, editing, revising, and comprehension. Perceived benefits capture broader educational outcomes associated with GAI use, including vocabulary development, writing confidence, and academic support. Actual use reflects how learners engage with GAI tools in practice, such as seeking feedback, reviewing examples, or generating practice questions. L2 proficiency is modeled as an external antecedent to PU and PEOU rather than as a direct predictor of perceived benefits or actual use.
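One way to make the proposed model concrete is to write it as a set of structural equations. The sketch below is an assumption-based reading of the model description, not the authors' reported specification: the coefficient symbols ($\gamma$, $\beta$), the disturbance terms ($\zeta$), and the inclusion of direct paths from PEOU and PU to actual use are illustrative choices.

```latex
% Illustrative structural equations (assumed path set, not the reported model)
\begin{align}
\mathrm{PEOU} &= \gamma_{1}\,\mathrm{L2} + \zeta_{1} \\
\mathrm{PU}   &= \gamma_{2}\,\mathrm{L2} + \beta_{1}\,\mathrm{PEOU} + \zeta_{2} \\
\mathrm{PB}   &= \beta_{2}\,\mathrm{PEOU} + \beta_{3}\,\mathrm{PU} + \zeta_{3} \\
\mathrm{AU}   &= \beta_{4}\,\mathrm{PEOU} + \beta_{5}\,\mathrm{PU} + \beta_{6}\,\mathrm{PB} + \zeta_{4}
\end{align}
```

Here L2 denotes self-rated L2 proficiency, PB perceived benefits, and AU actual use. Under this reading, the indirect effect of PU on actual use through perceived benefits would be the product $\beta_{3}\beta_{6}$, which is the kind of quantity a mediation hypothesis tests.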

Methods

This study employed a cross-sectional research design to explore direct and indirect relationships between perceptions and actual use of GAI. The study also examined the moderating effect of geographic region by comparing how Saudi and South Korean ELLs perceive and use GAI for language learning. It captured student beliefs 2 years after GAI platforms like ChatGPT became publicly available. Participants were university students in Saudi Arabia and South Korea enrolled in EFL courses emphasizing reading, writing, listening, and speaking. This design facilitates an immediate understanding of how GAI has been integrated into their learning experiences across different cultural contexts (Hofstede, 2001; Schwartz, 2014).

Participants

A total of 681 students (407 from Saudi Arabia and 274 from South Korea) participated in this study. The participants’ ages ranged from 18 to 31 years (M = 21.7, SD = 2.03). On average, students rated their English proficiency moderately above average, with a mean score of 6.23 (SD = 2.23) on a scale of 1 to 10, where 1 represents a true beginner and 10 represents an expert level. In terms of academic standing, the sample included 219 freshmen, 88 sophomores, 199 juniors, and 184 seniors. The students represented a variety of academic majors, including English Language and Literature (n = 150), Business and Economics (n = 120), Science and Engineering (n = 90), Social Sciences (n = 75), Media and Communication (n = 50), Arts and Design (n = 40), Nursing and Health Sciences (n = 60), Education (n = 35), Hotel and Tourism Management (n = 30), and Computer Science and Information Technology (n = 31). Table 2 displays demographics parsed between Saudi and Korean groups.

Demographic Information for Saudi and Korean Students.

Note. Nine students were later removed due to outlier issues.

Questionnaire Design

The actual use scale was influenced by prior machine translation studies in L2 learning (Almusharraf & Bailey, 2023a; Pham et al., 2022; Wei, 2021), but was adapted to reflect the interactive and generative functions of GAI. Whereas earlier MT research focused on direct translation, the present scale captures broader language learning applications unique to GAI, such as simulated assessments and real-time language scaffolding. Accordingly, the scale adopts a task-based behavioral approach that measures how students engage with GAI tools for specific academic tasks, including grammar explanations, writing feedback, and vocabulary development. This reflects recent adaptations of the TAM framework in educational contexts that prioritize meaningful, pedagogically relevant engagement over raw usage metrics (Granić & Marangunić, 2019). To support content and construct validity, the scale was developed based on classroom observations, exploratory discussions with EFL students, and expert reviews conducted by three PhD-level educators with advanced training in language studies. These reviewers confirmed item clarity and alignment with real-world GAI-supported learning tasks.

The PU items were adapted from L2 writing studies focusing on before-writing, when-writing, and after-writing strategies. These items were modified from established L2 writing strategy scales (Bailey, 2019; Kao & Reynolds, 2017; Maarof & Murat, 2013) to reflect the use of GAI during the brainstorming, monitoring, and editing stages of the L2 writing process. Additionally, three items were added to assess reading comprehension of texts, understanding assignment directions, and preparation for oral presentations. Overall, the PU items are task-specific and highlight how GAI assists with particular activities related to language learning.

The perceived benefits component consisted of seven items measuring the broader impact of using AI chatbots, reflecting overall improvements in skills, confidence, and academic success. By incorporating ELLs’ perceived benefits with GAI as a mediating variable, the study’s model can better explain how and why students transition from perceiving AI tools as useful for specific language tasks to actually developing foreign language skills. The construction of these items was influenced by Almusharraf and Bailey’s (2023a) study on ELL student perceptions of machine translation and Al-Zumor et al.’s (2013) study on the perceived advantages of blended learning. Items for the PEOU component were modified from Davis’s (1989) TAM questionnaire to suit the context of GAI for language learning purposes.

All items were rated on a 5-point Likert scale, with 1 being “strongly disagree” and 5 being “strongly agree.” Cronbach’s alphas for the scales ranged between .849 and .916, indicating strong internal reliability. A Cronbach’s alpha of .70 or higher is generally considered acceptable for internal consistency (Nunnally, 1978), so the scales in this study exceeded this threshold. To enhance transparency and replicability, the full list of items for each construct is provided in Appendix 1.
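For readers less familiar with the reliability statistic, the following minimal sketch shows how Cronbach's alpha can be computed from item-level responses using the standard formula α = k/(k − 1) × (1 − Σ item variances / variance of total scores). The function name `cronbach_alpha` and the `demo` responses are hypothetical and purely illustrative; they are not the study's data, and the study itself reports using SPSS.

```python
from statistics import variance


def cronbach_alpha(responses):
    """Return Cronbach's alpha for a list of respondent rows.

    Each row is a list of item scores (e.g., 1-5 Likert ratings) for one scale.
    """
    k = len(responses[0])
    if k < 2:
        raise ValueError("Cronbach's alpha needs at least two items")
    # Sample variance of each item (column) across respondents.
    item_vars = [variance([row[i] for row in responses]) for i in range(k)]
    # Sample variance of each respondent's summed scale score.
    total_var = variance([sum(row) for row in responses])
    return (k / (k - 1)) * (1 - sum(item_vars) / total_var)


# Hypothetical responses: six respondents, four items (illustration only).
demo = [
    [4, 5, 4, 4],
    [3, 3, 2, 3],
    [5, 5, 5, 4],
    [2, 2, 3, 2],
    [4, 4, 4, 5],
    [1, 2, 1, 2],
]
alpha = cronbach_alpha(demo)
print(f"alpha = {alpha:.3f}")
```

Values at or above the .70 threshold cited above would be read as acceptable internal consistency.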

Procedures

The survey was reviewed by four instructors with graduate degrees in TESOL. The final version was determined to meet the necessary standards of validity and quality. Subsequently, the questionnaire items were translated into Korean by one professional translator and into Arabic by another, each with over 10 years of experience in translating research documents, including questionnaires, proposals, and academic articles. The finalized versions of the questionnaire were converted into a web-based format and piloted with a small group of students to ensure its suitability. The pilot study was conducted with 11 students (four Saudi and seven Korean) and three Saudi instructors to evaluate the clarity, cultural relevance, and usability of the questionnaire in both languages. While not part of the target sample, the Saudi instructors were well-positioned to evaluate item clarity, linguistic accuracy, and cultural appropriateness. Participants were asked to complete the translated surveys and provide feedback on item wording, layout, and clarity. Minor modifications were made based on this feedback, such as simplifying wording in two PU items and adjusting one perceived benefits item for clearer cultural relevance. No major issues were reported with translation accuracy; the professional translators worked collaboratively with bilingual reviewers to deliver conceptual equivalence across versions. This process helped improve the face validity and cultural appropriateness of the instrument before full-scale data collection. The pilot test confirmed that the questionnaire items were clear and effectively measured the study’s variables.

Participants were recruited through convenience sampling during instructor-led sessions across four EFL university programs. In South Korea, students were enrolled in English communication courses at two universities, one metropolitan, one rural, where two instructors facilitated data collection across 10 classes. In Saudi Arabia, participants were English majors at two public universities in Riyadh. Surveys were administered during class, with instructors explaining the study’s purpose and voluntary nature. Participation was not incentivized, though nearly all students present completed the questionnaire.

The research methodology employed in this study was reviewed and approved following the institutional review board (IRB) policies and procedures of Imam Mohammad Ibn Saud Islamic University (IMSIU). The IRB approval number (638732135358736291) was granted in January 2025. The study involved only a voluntary, non-invasive questionnaire. Students were informed they could decline or skip any items without consequence, and informed consent was obtained prior to participation. Surveys were completed under instructor supervision, and all study communications were conducted in students’ native language (Arabic or Korean) to ensure comprehension.

Data Analysis

SPSS (version 29) and AMOS (version 27) were used to analyze the data and answer the research questions. Mean scores were first computed to identify levels of GAI use and perceptions. Pearson correlation analysis was then carried out to identify bivariate relationships among the variables of interest. Next, the data were analyzed in AMOS, beginning with Confirmatory Factor Analysis (CFA) using Maximum Likelihood Estimation (MLE) to validate the proposed measurement model. This step tested whether the items loaded onto their expected factors (PEOU, PU, perceived benefits, and actual use) as hypothesized. Following the CFA, the study’s structural model was constructed and tested in AMOS to evaluate the hypothesized relationships among the variables. Configural invariance testing was performed to ensure that the model was consistent between the Saudi and Korean groups, allowing for meaningful comparisons.

Data Cleaning

Data cleaning was conducted to ensure dataset quality. Nine multivariate outliers were identified and removed using Mahalanobis distance, based on a chi-square threshold of 15.09 for 5 degrees of freedom at p < .01 (Tabachnick & Fidell, 2013). Cook’s distance was also assessed using a threshold of 1 (Belsley et al., 1980), but no additional influential cases were found. The final sample for CFA and SEM analyses consisted of 681 participants.
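The outlier screening described above can be sketched as follows. This is an illustration under stated assumptions: the data are simulated, the helper names (`solve`, `mahalanobis_squared`) are hypothetical, and only the cutoff of 15.09 is taken from the text. The authors’ own screening was presumably run in SPSS.

```python
import random

CHI2_CUTOFF = 15.09  # chi-square critical value reported in the text (df = 5, p < .01)

def solve(a, b):
    """Solve the linear system a @ x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [a[i][:] + [b[i]] for i in range(n)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        tail = sum(m[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (m[i][n] - tail) / m[i][i]
    return x

def mahalanobis_squared(rows):
    """Return the squared Mahalanobis distance of every row from the sample centroid."""
    n, p = len(rows), len(rows[0])
    mu = [sum(col) / n for col in zip(*rows)]
    centered = [[x - m for x, m in zip(row, mu)] for row in rows]
    # Sample covariance matrix (denominator n - 1)
    cov = [[sum(r[i] * r[j] for r in centered) / (n - 1) for j in range(p)]
           for i in range(p)]
    # d^2 = d' * inverse(cov) * d, computed by solving cov @ z = d
    return [sum(di * zi for di, zi in zip(d, solve(cov, d))) for d in centered]

# Simulated scores on five variables (hypothetical, not the study data)
rng = random.Random(42)
data = [[rng.gauss(3.0, 1.0) for _ in range(5)] for _ in range(60)]
data.append([10.0, -10.0, 10.0, -10.0, 10.0])  # one deliberately extreme case

d2 = mahalanobis_squared(data)
flagged = [i for i, value in enumerate(d2) if value > CHI2_CUTOFF]
cleaned = [row for i, row in enumerate(data) if i not in flagged]
print(flagged)
```

Cases whose squared distance exceeds the cutoff are flagged and dropped, which mirrors the removal of the nine multivariate outliers reported above.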

Results

Preliminary View

Mean scores and Pearson correlations were first computed to give a preliminary view of the questionnaire data. Gender and age were added to the correlation analysis to give a broader understanding of the relationships among the variables of interest. Mean scores for individual items and overall scales are presented in the Appendix. PEOU had the highest mean score (M = 3.50, SD = 1.13), indicating that students understand GAI tools and are confident in using them for language learning. Actual use had the lowest mean score (M = 2.85, SD = 1.29), with the lowest-scoring item concerning the use of GAI for making practice questions.

The correlation analysis is displayed in Table 3. Older students had more positive attitudes, as shown by a statistically significant positive correlation between age and both actual use and perceived benefits. No differences in reported perceptions or use of GAI were found between genders. Higher levels of L2 proficiency were associated with lower levels of actual use and PU. Statistically significant relationships were present among all four observed TAM variables: PU, PEOU, perceived benefits with GAI, and actual use. The next step in this study involved carrying out structural equation modeling on the proposed model.

Correlation, Reliability, and Validity Measures of Study Variables.

*p < .05. **p < .01.

Reliability and Validity of Study Variables

Regarding reliability and validity measures (see Table 3), all values met or exceeded established thresholds for acceptability. Average Variance Extracted (AVE) values were above the recommended threshold of 0.50, indicating adequate convergent validity (Hair et al., 2014). Composite Reliability (CR) values exceeded 0.70, demonstrating strong internal consistency across constructs (Hair et al., 2014). Additionally, Cronbach’s alpha scores for all variables were above .80, supporting strong internal reliability (Nunnally, 1978). Discriminant validity was confirmed by verifying that the square root of each construct’s AVE exceeded its inter-construct correlations (Hair et al., 2014). Based on these reliability and validity measures, structural equation modeling (SEM) was conducted to test the proposed model.
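The three checks described above (AVE, CR, and the square-root-of-AVE comparison) follow standard formulas based on standardized factor loadings. The sketch below illustrates them; the loadings and the inter-construct correlation are hypothetical values chosen for illustration, not the estimates reported in Table 3.

```python
from math import sqrt

def ave(loadings):
    """Average Variance Extracted: mean of the squared standardized loadings."""
    return sum(l ** 2 for l in loadings) / len(loadings)

def composite_reliability(loadings):
    """Composite Reliability: (sum of loadings)^2 over itself plus summed error variances."""
    squared_sum = sum(loadings) ** 2
    error_var = sum(1 - l ** 2 for l in loadings)
    return squared_sum / (squared_sum + error_var)

# Hypothetical standardized loadings for two constructs (not study estimates)
loadings = {
    "PU": [0.78, 0.81, 0.74, 0.70],
    "PEOU": [0.72, 0.80, 0.76, 0.69],
}
corr_pu_peou = 0.55  # hypothetical inter-construct correlation

results = {name: (ave(l), composite_reliability(l)) for name, l in loadings.items()}
for name, (a, cr) in results.items():
    print(name, round(a, 3), round(cr, 3))

# Discriminant validity check: sqrt(AVE) of each construct must exceed the correlation
discriminant_ok = all(sqrt(a) > corr_pu_peou for a, _ in results.values())
print(discriminant_ok)
```

With these illustrative inputs, both constructs clear the 0.50 (AVE) and 0.70 (CR) thresholds, and the discriminant validity comparison holds.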

Research Question 1

To address the first research question, Exploratory Factor Analysis (EFA) in SPSS was combined with Confirmatory Factor Analysis (CFA) in AMOS. Bootstrapping was run with 5,000 resamples and 95% confidence intervals. The model included four factors, with L2 proficiency specified as an external variable.
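The bootstrapping settings mentioned above (5,000 resamples, 95% confidence interval) can be illustrated with a percentile bootstrap. The sketch below applies it to a simple Pearson correlation on simulated data. This is an assumption made for brevity: AMOS bootstraps the SEM parameter estimates themselves, and the text does not state whether percentile or bias-corrected intervals were used.

```python
import random
from math import sqrt

def pearson_r(x, y):
    """Pearson correlation between two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)

def percentile_ci(x, y, n_boot=5000, level=0.95, seed=2025):
    """Percentile bootstrap confidence interval for the correlation of x and y."""
    rng = random.Random(seed)
    n = len(x)
    estimates = []
    for _ in range(n_boot):
        # Resample respondents (paired observations) with replacement
        idx = [rng.randrange(n) for _ in range(n)]
        estimates.append(pearson_r([x[i] for i in idx], [y[i] for i in idx]))
    estimates.sort()
    lower = estimates[round((1 - level) / 2 * n_boot)]
    upper = estimates[round((1 + level) / 2 * n_boot) - 1]
    return lower, upper

# Simulated, positively related scores (hypothetical, not the study data)
sim = random.Random(7)
x = [sim.gauss(0.0, 1.0) for _ in range(60)]
y = [0.7 * xi + sim.gauss(0.0, 0.7) for xi in x]

r = pearson_r(x, y)
lower, upper = percentile_ci(x, y)
print(round(r, 3), round(lower, 3), round(upper, 3))
```

An interval that excludes zero is read as a statistically significant estimate at the chosen confidence level.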

CFA and SEM Model Fit Indices

Model fit was assessed with indices that are standard in SEM: CMIN/df, NFI, RFI, IFI, TLI, CFI, RMSEA, and PCLOSE. For the incremental indices (NFI, RFI, IFI, TLI, and CFI), values closer to 1 indicate a better fit, whereas lower CMIN/df values indicate a better fit. RMSEA estimates approximation error, with lower values indicating a better fit, and PCLOSE tests the hypothesis of close fit (RMSEA ≤ .05), so nonsignificant PCLOSE values support close fit (Hair et al., 2010).

The results from the model testing were as follows: CMIN/df = 4.217, NFI = 0.892, RFI = 0.878, IFI = 0.915, TLI = 0.904, CFI = 0.915, RMSEA = 0.069, and PCLOSE < 0.001. These indices indicate a satisfactory model fit. The model for both Saudi and Korean students is illustrated in Figure 2. This satisfactory model fit supports the validity of the identified factors and their relationships, providing a framework to address the first research question and to understand how these factors interact with L2 proficiency.
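As a consistency check, RMSEA can be recovered from CMIN/df and the sample size. The sketch below assumes the common single-group formula, RMSEA = sqrt(max(χ²/df − 1, 0) / (N − 1)). Some software divides by N instead, so this is a check on the reported values rather than a description of the exact AMOS computation. The function name is hypothetical.

```python
from math import sqrt

def rmsea_from_cmin_df(cmin_df, n):
    """RMSEA from the chi-square/df ratio and sample size (single-group formula)."""
    return sqrt(max(cmin_df - 1.0, 0.0) / (n - 1))

# Full sample: CMIN/df = 4.217, N = 681 (values reported in the text)
full_sample = rmsea_from_cmin_df(4.217, 681)
print(round(full_sample, 3))  # → 0.069

# Group models reported in the invariance testing for Research Question 2
korean = rmsea_from_cmin_df(2.237, 274)
saudi = rmsea_from_cmin_df(2.920, 407)
print(round(korean, 3), round(saudi, 3))  # → 0.067 0.069
```

Under this formula, the computed values agree with the RMSEA figures reported for the full sample and for each group.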

Tested structural model of GAI use.

The results showed that the model explained 79% of the variance in actual use and 70% of the variance in perceived benefits of using GAI for language learning. To assess the role of perceived benefits, we compared the model with and without this construct. The version without perceived benefits explained 73% of the variance in actual use, whereas the model including perceived benefits (Figure 2) explained 79% (ΔR² = .06), a gain of 6 percentage points in explained variance.

Table 4 presents the standardized coefficients for direct paths and mediation. Most hypotheses were supported; however, a few paths, such as the one between PEOU and actual use, were not statistically significant. The statistically significant bivariate correlation between PEOU and actual use (see Table 3) disappeared once the path was estimated within the model (β = .038), indicating full mediation. The relationship between PEOU and actual use is therefore explained indirectly, through PU.

Confirmed Hypotheses With Path Coefficient Regression Weights.

*p < .05. **p < .01. ***p < .001.

L2 proficiency had a standardized beta coefficient of −.09 with PU, indicating that less proficient students may find GAI tools slightly more helpful for language tasks such as L2 writing and comprehension. It had no significant effect on PEOU (β = −.05), suggesting that students can use GAI tools with similar ease regardless of L2 proficiency. Strong relationships were seen between PU and perceived benefits (β = .70) and between perceived benefits and actual use (β = .42), indicating the importance of perceived benefits in predicting actual use. Additionally, the direct path between PU and actual use remained significant (β = .48).

Overall, the model suggests that perceived benefits and PU are significant predictors of actual use as measured by language skill accomplishments, with indirect effects through PEOU and L2 proficiency, although ease of use showed weaker effects.
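The role of perceived benefits as a mediator can be made concrete with the product-of-coefficients rule, using the three standardized paths reported above. This is a back-of-the-envelope sketch: it ignores the other paths in the model, and the bootstrapped estimates in Table 4 take precedence over these hand calculations.

```python
# Standardized direct paths reported in the text
pu_to_benefits = 0.70      # PU -> perceived benefits
benefits_to_use = 0.42     # perceived benefits -> actual use
pu_to_use_direct = 0.48    # PU -> actual use (direct)

# Indirect effect of PU on actual use through perceived benefits
indirect = pu_to_benefits * benefits_to_use
# Total effect combines the direct and indirect routes
total = pu_to_use_direct + indirect
# Share of the total effect that runs through perceived benefits
proportion_mediated = indirect / total

print(round(indirect, 3))             # → 0.294
print(round(total, 3))                # → 0.774
print(round(proportion_mediated, 2))  # → 0.38
```

On this approximation, a little over a third of the effect of PU on actual use travels through perceived benefits, with the remainder carried by the direct path.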

Research Question 2

To explore cross-cultural differences in the use of GAI for language learning, research question two examined the path coefficients for Saudi and Korean speakers separately, aiming to identify any significant differences in the path relationships. Descriptive statistics were calculated to compare the models between Saudi and Korean students.

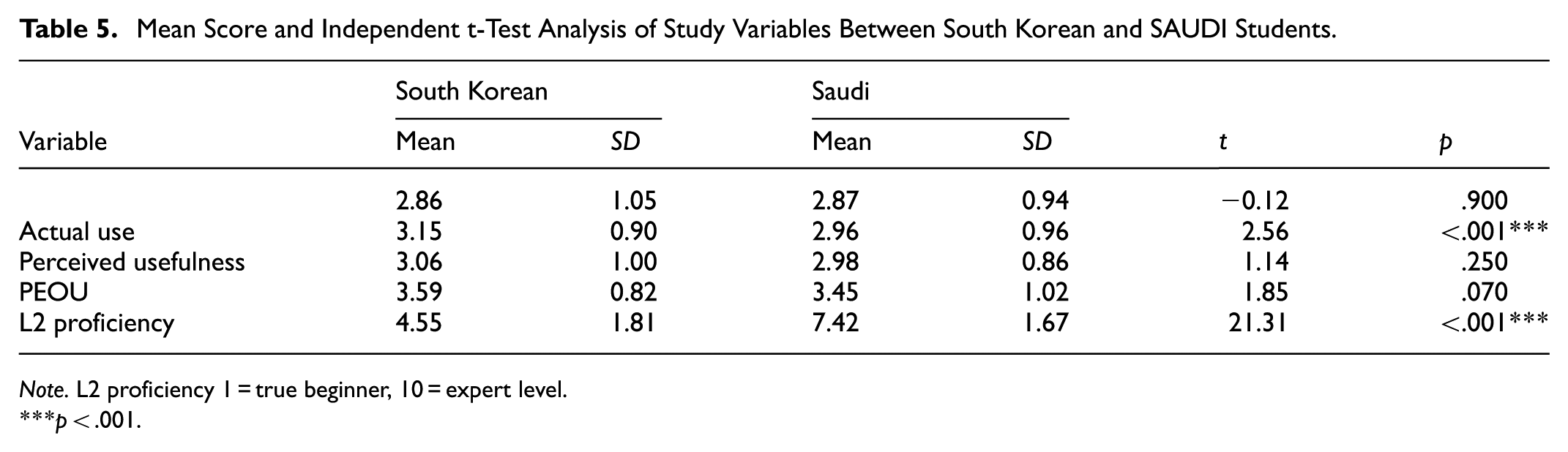

Table 5 presents the mean scores and results from the independent t-test analysis. A few statistically significant differences emerged, particularly in the mean scores for perceived benefits, PEOU, and L2 proficiency. Korean students reported higher scores for both PEOU and perceived benefits. While both groups exhibited high levels of PEOU, the Korean students’ scores were marginally higher. The most notable difference was in L2 proficiency, with Saudi students showing significantly higher levels.

Mean Score and Independent t-Test Analysis of Study Variables Between South Korean and Saudi Students.

Note. L2 proficiency 1 = true beginner, 10 = expert level.

p < .001.

We next conducted invariance testing to support the group comparisons. Configural invariance was first assessed through separate confirmatory factor analyses for the Saudi and Korean groups. The model fit indices for the Korean group indicated an acceptable fit: CMIN/df = 2.237; NFI = 0.886; RFI = 0.870; IFI = 0.933; TLI = 0.924; CFI = 0.933; RMSEA = 0.067. Similarly, the model fit indices for the Saudi group were considered acceptable: CMIN/df = 2.920; NFI = 0.872; RFI = 0.854; IFI = 0.912; TLI = 0.899; CFI = 0.911; RMSEA = 0.069. The criteria for configural invariance were met. Once configural invariance was established, a metric analysis was carried out to compare path coefficients across groups and determine whether the relationships differed.

A metric analysis using 5,000 bootstrap resamples and 95% confidence intervals was conducted to examine differences in structural relationships between the Korean and Saudi groups. The analysis revealed three direct paths that significantly differed between the groups. Table 6 presents the results for the South Korean and Saudi models.

Comparison Between South Korean and Saudi Students.

Note. PU = perceived usefulness; PEOU = perceived ease of use; Perceived benefits = perceived benefits with GAI; Actual use = actual use of GAI.

*p < .05. **p < .01. ***p < .001.

For the Saudi group, the effect of L2 proficiency on PU was significant (β = −.13, p = .004), suggesting that higher English proficiency negatively impacted PU. In contrast, this path was not significant for the Korean group (β = −.11, p = .065), indicating that English proficiency played a more critical role in PU within the Saudi context than in the Korean context.

The path from PEOU to GAI perceived benefits was significant for the Korean group (β = .30, p = .001) but not for the Saudi group (β = .08, p = .055), suggesting that PEOU had a stronger impact on perceived benefits for the Korean group. Lastly, the effect of PEOU on actual use was significant for the Saudi group (β = .15, p = .001) but not for the Korean group (β = −.06, p = .136), indicating that ease of use directly influenced actual use in the Saudi group but not in the Korean group.

Next, group differences in the strength of path relationships were assessed, regardless of significance. Although the direction and significance of most paths were consistent, the relationship strength varied. Model constraints tested whether path coefficients differed significantly, revealing group-level differences for

Discussion

Several significant findings emerged from this study. Most notably, students reported frequent and diverse uses of GAI for language learning tasks, with the highest-rated activities involving grammar explanation, writing feedback, and vocabulary learning. These tasks are pragmatically and linguistically complex, involving interpretation of cultural meaning, context, and appropriateness. GAI appears to support learners effectively in these areas, with consistent use predicting both perceived benefits and actual use, reinforcing its role in language development (House & Kádár, 2024). This pattern was observed in both Saudi and Korean groups, though some cross-cultural differences emerged. In particular, while students in both groups reported high levels of PEOU in accessing platforms like ChatGPT, the influence of L2 proficiency on PU varied between the groups. This suggests that learners’ cultural-linguistic orientations and language confidence influence their perceptions of GAI’s usability and usefulness.

From a cultural-pragmatic lens, these findings align with House and Kádár’s (2024) assertion that learner interactions with technology are influenced by culturally conditioned norms of appropriateness and communication. Similarly, Klimova and Hua Chen (2024) emphasize that learners’ trust in AI support tools is influenced by how well these tools align with expected educational and communicative practices. In addition, educational technology frameworks underscore that the value of digital tools extends beyond content delivery to include perceived pedagogical support, autonomy, and engagement (Granić & Marangunić, 2019; Mishra & Koehler, 2006). While this study does not directly measure agency, the consistent and purposeful use of GAI tools suggests that students engage with them in ways that support self-directed learning.

Hypotheses 1 to 3

Regarding

Hypotheses 4 to 8

PU with GAI showed a positive path coefficient with reported perceived benefits of using GAI for language learning. The positive relationship between PU and these perceived benefits can be attributed to several affordances of GAI, including brainstorming and idea generation (Kasneci et al., 2023), enhanced feedback (Geher, 2023), and increased efficiency in reducing time and effort (Lund et al., 2023; Yan, 2023). Additionally, the adaptability of GAI in customizing learning experiences to meet individual needs has further strengthened its perceived value in academic settings (Cooper, 2023). These findings reinforce the importance of extending TAM to include value-based constructs such as perceived benefits that consider emotional and developmental outcomes overlooked in the original PU-attitude linkage (Schorr, 2023; Shoufan, 2023).

The consistently high PEOU scores in the present study reaffirm the suitability of platforms like ChatGPT, Poe, and Gemini for L2 learners. These findings highlight the role of affective responses, such as reduced cognitive load and increased confidence, which are influenced by both technological design and cultural context. From a cross-cultural perspective, high ease of use may mitigate learners’ anxiety in high uncertainty avoidance contexts, such as South Korea and Saudi Arabia, where structured learning environments are often preferred (Hofstede, 2001; Tarhini, 2016).

Hypotheses 7 and 8

The complementary relationship between

In contrast,

Indirect Effects: Hypotheses 9 to 11

The indirect effects provide further insight into how PEOU, PU, and perceived benefits influence actual use of GAI in language learning.

Beyond the indirect pathways, a comparison of models with and without perceived benefits confirmed their incremental contribution. Including perceived benefits increased the variance explained in actual use from R² = .73 to R² = .79 (ΔR² = .06). This improvement in explanatory power provides insights into how perceived benefits serve both as a mediating mechanism and as a contributing attitudinal component that strengthens TAM’s ability to account for language learners’ engagement with GAI.

Comparison Between Saudi and South Korean Contexts

The multigroup analysis revealed notable differences between the Korean and Saudi groups in the structural relationships within the model. Three direct paths were identified as significantly different based on the significance levels across the two groups. First, the effect of L2 proficiency on PU was significant for the Saudi group but not for the Korean group. This finding suggests that Saudi learners may be more influenced by their language skills when evaluating the usefulness of AI chatbots or other educational technologies. This could indicate a cultural or contextual difference in how these students approach language learning, possibly reflecting a greater reliance on technology to support their language acquisition (Farhi et al., 2023).

Furthermore, the results indicate that PU more strongly predicted perceived benefits for Saudi students, suggesting they view AI tools as particularly valuable for improving their language learning. This could be due to AI addressing specific learning needs or gaps in proficiency (Teng, 2024). Additionally, PEOU was a stronger predictor of actual use among Saudi students, indicating that ease of navigation and usability play a key role in encouraging their use of AI tools. Even if users perceive the tools as less useful, a user-friendly interface can promote greater engagement, as technology that is easy to use is often viewed as more beneficial (Shaikh et al., 2023) and more likely to be actively used (Ali et al., 2023; Qu & Wu, 2024).

Conversely, the finding that PEOU predicted perceived benefits more for Korean students may indicate that they find these technologies easier to use. Korean students might have a lower barrier to entry with respect to technology, leading them to perceive a higher level of benefit from using AI tools (Lee & Kwon, 2024). Students in such environments are likely to value PEOU as a critical factor in determining the perceived benefits of a tool. This could reflect differences in prior exposure to technology or educational practices in their respective contexts. Also, the finding that PU predicted actual use more strongly among Korean students than Saudi students suggests that Korean students are more likely to engage with AI chatbots when they find them useful. This could indicate a higher motivation or willingness to utilize technology in their learning processes when they recognize its usefulness, potentially reflecting a more proactive approach to language learning.

These group-specific differences may be better understood through established cultural and educational frameworks. According to Hofstede’s cultural dimensions, Saudi Arabia scores very high on power distance and uncertainty avoidance (Hofstede Insights, n.d.). These traits are often associated with hierarchical, teacher-centered learning environments and a cautious approach to adopting new educational technologies unless they are formally endorsed or institutionally integrated (Al-Issa, 2018). In contrast, South Korea’s strong long-term orientation and competitive academic culture have fostered extensive ICT integration and support for learner autonomy. National initiatives, such as those implemented by the Korea Education and Research Information Service (KERIS, 2020), reflect the country’s commitment to educational technology. South Korean students are also likely to have more familiarity with blended learning and self-regulated use of digital tools (Roh & Kim, 2019). These cultural and pedagogical distinctions may help explain the structural differences observed in the model: PU was a stronger predictor of actual GAI use among Korean learners, while PEOU more strongly predicted use among Saudi learners. This pattern aligns with broader cultural values, technological familiarity, and classroom traditions in each context.

Although gender was not a primary focus, the uneven distribution, particularly the higher proportion of Saudi female participants, may have influenced attitudes toward AI, motivation, or instructional preferences. Prior studies suggest gender can shape learners’ expectations and comfort with educational technologies (Viberg & Grönlund, 2013), warranting further exploration as a moderating variable in cross-cultural GAI adoption. Similarly, the greater number of Korean freshmen and Saudi upperclassmen may have impacted familiarity with academic writing and GAI tools. Future research should examine the year of study as a potential moderator in technology acceptance.

Despite the observed cross-cultural differences, the study also revealed notable similarities between Saudi and Korean learners. Both groups reported high levels of PEOU and frequent engagement with GAI for tasks such as grammar support, writing feedback, and vocabulary learning. These shared patterns suggest a common recognition of GAI’s utility in supporting core L2 skills, regardless of regional context. This convergence may reflect broader trends in globalized digital literacy and the intuitive design of GAI platforms, which appear accessible and pedagogically relevant across diverse linguacultural settings.

Pedagogical Implications

From a pedagogical perspective, the findings underline the importance of ensuring that GAI tools are not only easy to use but also perceived as highly beneficial to students’ learning outcomes. Since PU and perceived benefits strongly predict actual use, educators should emphasize the practical applications of AI tools in language learning, helping students understand how these tools can enhance their skills. For example, instructors could design assignments that explicitly incorporate GAI to help students practice writing, comprehension, or even peer review. By integrating these tools into regular coursework, students may see more immediate perceived benefits, which could increase actual use.

While students reported relatively high engagement with GAI for tasks such as writing feedback, grammar support, and vocabulary learning, these functions involve subtle pragmatic and cultural dimensions. As House and Kádár (2024) argue, learners interpret communicative appropriateness through culturally conditioned norms, which can affect how they evaluate GAI responses, especially when those outputs feel impolite, too direct, or misaligned with cultural expectations. Educators should therefore integrate digital intercultural pragmatics into AI-based language instruction, helping students navigate and evaluate GAI content critically, as also emphasized by Klimova and Hua Chen (2024).

The significant demographic differences, particularly in age and L2 proficiency, suggest that educators should adopt differentiated strategies for AI integration based on learner profiles. For younger or less proficient students, scaffolding may be necessary to encourage greater engagement with AI tools. On the other hand, older students or those with higher proficiency might benefit from more advanced, self-directed tasks that challenge their language skills while utilizing AI.

The comparison between Korean and Saudi groups emphasizes the need for tailored instructional strategies that highlight practical benefits for Saudi students while enhancing usability for Korean students through guided support. Educator training should focus on understanding cultural differences in technology perception, and AI tools should be designed to be user-friendly to improve PEOU, especially for Saudi learners. Implementing feedback mechanisms will allow for real-time adaptations to teaching methods, while collaborative learning opportunities can leverage the strengths of both groups. Continuous assessment of AI tool effectiveness, integration of culturally relevant content, and activities that build self-efficacy and motivation are essential to creating an inclusive learning environment that meets the diverse needs of all students.

Conclusion

The current study, using TAM, explored student perceptions of GAI among Saudi and Korean English language learners, focusing on four key TAM constructs: PEOU, PU, perceived benefits, and actual use. Structural equation modeling of data from 681 students (407 Saudi and 274 Korean) revealed a satisfactory model fit, accounting for 79% of the variance in actual GAI use. While both groups demonstrated high levels of PEOU, Korean students reported marginally higher perceived benefits, yet Saudi learners exhibited significantly higher L2 proficiency. Notably, L2 proficiency was negatively associated with actual AI use, suggesting that more proficient students may rely less on AI assistance. However, the comparison between constrained and unconstrained models showed no significant difference in fit indices between the two groups, indicating that GAI tools may be equally effective across both cultural contexts.

The results offer several key insights that have both theoretical and practical implications for future research. First, the finding that PEOU scored the highest, while the actual use of GAI tools scored the lowest, suggests a gap between students’ ability to understand these tools and their willingness or need to use them. Future studies could explore the factors contributing to this gap, such as potential barriers like motivation, relevance to coursework, or perceived time efficiency. Moreover, the positive association between age and actual use indicates that older students may be more adaptable or see greater value in these tools. This demographic factor should be considered in longitudinal studies that examine the evolving role of GAI in language learning.

The data showed a negative association between L2 proficiency and both actual use and PU, suggesting that students with higher proficiency might be less reliant on GAI tools for language learning. This raises questions about the specific needs and expectations of different proficiency levels when interacting with technology. Researchers should investigate how AI tools can be tailored to offer more advanced features that appeal to higher proficiency learners, perhaps shifting the focus from basic assistance to more complex language tasks, like advanced writing or critical reading skills. Additionally, the potential full mediation of PEOU by other factors, such as PU and perceived benefits, opens avenues for exploring mediating variables in AI adoption models, including motivational factors or task-specific uses.

While this study offers valuable insights into how Saudi and Korean English language learners perceive and engage with generative AI, it is important to acknowledge certain limitations. Chief among them is the reliance on self-reported data without triangulation from actual usage metrics, which may constrain the depth and accuracy of the findings. Future research should incorporate multiple data sources, such as system logs or classroom observations, to provide a more comprehensive and validated understanding of technology use in language learning contexts. In addition, group differences in L2 proficiency and academic standing, particularly the higher reported proficiency among Saudi students, may have influenced the behavioral outcomes observed in the model, independent of cultural or pedagogical factors. While all participants were enrolled in EFL courses, differing academic majors, English majors in Saudi Arabia versus general education students in South Korea, may have influenced motivation, proficiency, and GAI perceptions, affecting cross-cultural comparisons. Furthermore, the cross-sectional design of the research does not capture the long-term impact of GAI on language learning outcomes. Another limitation lies in the lack of qualitative data, which could have provided deeper insights into students’ experiences and challenges with AI tools. Finally, the study did not account for other external factors, such as institutional support or specific teaching practices, which could influence the effectiveness of GAI in EFL settings. Future research could address these limitations by incorporating longitudinal designs, diverse populations, and mixed methods approaches.

Appendix

Mean Scores and Standard Deviations for Survey Scales.

| No. | Item | M | SD |
|---|---|---|---|
| | Actual use for language learning | | |
| 1 | I ask AI chatbots (e.g. ChatGPT, Poe, etc.) to explain English grammar rules and concepts. | 3.04 | 1.19 |
| 2 | I seek feedback on English writing from AI chatbots (e.g. ChatGPT, Poe, etc.). | 2.97 | 1.24 |
| 3 | I compare my English writing with examples created by AI chatbots (e.g. ChatGPT, Poe, etc.). | 2.91 | 1.18 |
| 4 | I learn new English words and phrases using AI chatbots (e.g. ChatGPT, Poe, etc.). | 2.87 | 1.26 |
| 5 | I use AI chatbots (e.g. ChatGPT, Poe, etc.) to simulate English tests and quizzes. | 2.50 | 1.30 |
| | Total | 2.85 | 1.29 |
| | Perceived usefulness for EFL | | |
| 6 | It is most useful to use AI chatbots (e.g., ChatGPT, Poe, etc.) when double-checking what you wrote in English. | 3.30 | 1.15 |
| 7 | It is most useful to use AI chatbots (e.g., ChatGPT, Poe, etc.) when revising your work. | 3.23 | 1.17 |
| 8 | It is most useful to use AI chatbots (e.g., ChatGPT, Poe, etc.) while writing something in English | 3.23 | 1.16 |
| 9 | It is most useful to use AI chatbots (e.g., ChatGPT, Poe, etc.) when editing. | 2.96 | 1.18 |
| 10 | It is most useful to use AI chatbots (e.g., ChatGPT, Poe, etc.) when checking my reading comprehension of a text. | 2.90 | 1.20 |
| 11 | It is most useful to use AI chatbots (e.g., ChatGPT, Poe, etc.) when preparing oral presentations. | 2.82 | 1.25 |
| 12 | It is most useful to use AI chatbots (e.g., ChatGPT, Poe, etc.) when reading directions. | 2.58 | 1.25 |
| | Total | 3.01 | 1.19 |
| | Perceived benefits of GAI | | |
| 13 | AI Chatbots (ChatGPT, Poe, etc.) help with my vocabulary. | 3.20 | 1.15 |
| 14 | AI Chatbots (ChatGPT, Poe, etc.) help my writing skills. | 3.20 | 1.20 |
| 15 | AI Chatbots (ChatGPT, Poe, etc.) help me do well in English classes. | 3.09 | 1.18 |
| 16 | AI Chatbots (ChatGPT, Poe, etc.) will help you increase your confidence in using English. | 3.07 | 1.25 |
| 17 | AI Chatbots (Chatbot, Poe, etc.) help with my grammar skills. | 3.04 | 1.22 |
| 18 | AI Chatbots (ChatGPT, Poe, etc.) help my reading skills. | 2.88 | 1.22 |
| 19 | AI Chatbot (ChatGPT, Poe, etc.) helps you read books, online newspapers, and website articles written in English. | 2.80 | 1.29 |
| | Total | 3.04 | 1.21 |
| | Perceived ease of use | | |
| 20 | I can use AI Chatbots (e.g., ChatGPT, Poe, etc.) anytime, anywhere as long as I have internet | 3.69 | 1.23 |
| 21 | AI Chatbots (e.g., ChatGPT, Poe, etc.) are clear and understandable | 3.51 | 1.07 |
| 22 | I can easily understand AI Chatbots’ (e.g., ChatGPT, Poe, etc.) translations | 3.45 | 1.11 |
| 23 | Even if you translate complex vocabulary, the AI Chatbot (ChatGPT, Poe, etc.) can understand it. | 3.35 | 1.11 |
| | Total | 3.50 | 1.13 |

Acknowledgements

The authors would like to thank Imam Mohammad Ibn Saud Islamic University (IMSIU) for supporting and funding this project.

Ethical Considerations

The research methodology employed in this study was reviewed and approved following the institutional review board (IRB) policies and procedures of Imam Mohammad Ibn Saud Islamic University (IMSIU). The IRB approval number (638732135358736291) was granted in January 2025.

Consent to Participate

All participants gave informed consent for inclusion before participating in the study.

Author Contributions

Asma Almusharraf contributed to the study through conceptualization, data curation, project administration, funding acquisition, supervision, visualization, and writing—both the original draft and the review and editing processes. Daniel Bailey contributed to the study through conceptualization, formal analysis, methodology, validation, visualization, and writing—both the original draft and the review and editing processes.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported and funded by the Deanship of Scientific Research at Imam Mohammad Ibn Saud Islamic University (IMSIU) (grant number IMSIU-DDRSP2502).

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

The data that support the findings of this study are available from the author upon reasonable request.