Abstract

The rise of misinformation on social media has been a cause for concern globally. While existing research has focused on individual characteristics that drive misinformation belief and sharing, research comparing platforms is limited. In particular, platforms owned by Meta (Facebook, WhatsApp, and Instagram) have been scrutinized for their role in misinformation engagement. Addressing this gap, we conducted an experiment to examine how cross-platform differences among Meta platforms affect susceptibility to misinformation and intentions to share it. Participants perceived the misinformation as more credible on Instagram than on Facebook and WhatsApp. However, we found no statistically significant differences in misinformation-sharing intentions across platforms. Additionally, individuals with low cognitive ability were more likely to share misinformation across conditions, irrespective of how accurate they perceived it to be, whereas individuals with high cognitive ability were more likely to share the post only if they perceived the misinformation to be credible. These results indicate that cross-platform differences can shape how individuals perceive misinformation, and that cognitive ability may act as a buffer against misinformation susceptibility. Our findings underscore the importance of examining inter-platform disparities in how individuals engage with misinformation.

Plain language summary

This study looks at how people engage with misinformation differently across social media platforms, focusing on the Meta-owned platforms Facebook, Instagram, and WhatsApp. Participants were randomly shown the same piece of misinformation as it would appear on one of these platforms. People were more likely to judge the misinformation as accurate on Instagram than on Facebook and WhatsApp. However, there were no significant differences in how likely people were to share the misinformation across the three platforms. The study also found that cognitive ability played an important role. People with lower cognitive ability were more likely to share misinformation regardless of how accurate they thought it was, whereas those with higher cognitive ability were likely to share it only if they believed it to be true. This suggests that differences between social media platforms can affect how people interpret misinformation, and that cognitive ability can help some individuals resist misinformation more effectively. These findings highlight the importance of studying how different platforms influence people’s interactions with misinformation.

Introduction

The advancement of social media platforms has transformed how individuals consume and share information. A recent Pew Research Center poll showed that at least 72% of Americans reported relying on social media for news (Aubin & Liedke, 2024). While social media have increased the availability of information, they have also facilitated the spread of misinformation. Misinformation has been defined as false or inaccurate information “where the source is unaware that the information is false” (Jennings & Stroud, 2023). If left uncorrected, misinformation on social media can negatively influence attitudes toward vaccines (Hernandez et al., 2021; Rasul & Ahmed, 2023), lower trust in media (Ognyanova et al., 2020), negatively shape political attitudes (Berinsky, 2017; Weeks & Garrett, 2014), and alter perceptions of reality (Pennycook & Rand, 2021), among other detrimental consequences. While misinformation is not a new problem (Freiling et al., 2023), it has become a serious concern as the information environment rapidly expands (Ecker et al., 2024). As such, it is vital to understand how individuals engage with misinformation on social media platforms.

In particular, social media platforms owned by Meta (Facebook, Instagram, and WhatsApp) have been at the center of misinformation research. For instance, misinformation was found to be heavily shared on Facebook during the 2016 US presidential election (Allcott & Gentzkow, 2017). Likewise, other studies have found misinformation being shared on WhatsApp (Rossini et al., 2020) and Instagram (Massey et al., 2020). Yet, to the best of our knowledge, no studies have examined cross-platform differences across Meta-owned platforms. Rather, existing studies focus on misinformation on either a single Meta platform (Jennings & Stroud, 2023; Kalogeropoulos & Rossini, 2025; Mena et al., 2020) or two platforms (Rossini et al., 2020). Since most social media users hold multiple accounts on different platforms for various purposes (Waterloo et al., 2018), it is crucial to understand how individuals engage with misinformation on different platforms.

A growing body of research has argued that platform affordances, or the unique features and functionalities of a platform (Eriksson Krutrök & Lindgren, 2022), can play an important role in user behavior and information sharing (Sundar et al., 2021). For example, visual platforms such as Instagram center on images and short videos and often lack credibility cues (Winter & Krämer, 2014), which may render users susceptible to misinformation or lead them to share it unintentionally. Similarly, on private messaging platforms such as WhatsApp, misinformation may be more believable due to a lack of source cues and may be shared among close ties (Resende et al., 2019). Understanding platform differences in misinformation engagement could aid in developing targeted interventions to counter misinformation.

Further, individual-level factors such as analytical thinking or cognitive ability have been argued to play a critical role in how individuals react to misinformation (Ahmed & Rasul, 2023; Pennycook & Rand, 2019). However, it is not entirely clear how these factors interact with platform affordances. For instance, does cognitive ability equally affect individuals’ likelihood of sharing misinformation across platforms? And do visual or more private messaging features amplify or mitigate this behavior?

In order to address these gaps, this study examines how cross-platform differences on Meta-owned platforms (Facebook, Instagram, and WhatsApp) impact users’ susceptibility to and intentions to share misinformation. More specifically, we conduct a between-subjects, posttest-only experiment to compare user perceptions of misinformation accuracy and sharing behaviors across three unique platforms. In addition, we assess whether cognitive ability moderates these relationships (see Figure 1 for a conceptual model). Ultimately, this study sheds light on the interplay between platform affordances and user characteristics, which provides a deeper understanding of how misinformation engagement occurs on different platforms.

Figure 1. Conceptual framework.

Platform Differences and Misinformation Susceptibility

Previous studies have explored the factors influencing an individual’s susceptibility to misinformation. For instance, studies using German and US samples have found that personality traits, particularly extraversion, are positively associated with susceptibility to misinformation (Calvillo et al., 2021; Sindermann et al., 2021). Similarly, using a sample of participants from Singapore, Ahmed and Rasul (2022) found that extraversion, openness, and neuroticism were associated with belief in COVID-19 misinformation. While individual-level characteristics such as dispositions, values, and cognitive ability have been extensively studied in Western and non-Western contexts (Bryanov & Vziatysheva, 2021; Escolà-Gascón et al., 2023; Islam et al., 2020; Tandoc et al., 2021), other determinants such as social media trust, network characteristics, and perceptions of platforms are relatively understudied.

Existing research offers varied perspectives on the degree of trust individuals place in information encountered on social media. Some studies suggest high trust in social media information (Bantimaroudis et al., 2020; Mitchell et al., 2019), while others indicate a pronounced skepticism (Shearer & Grieco, 2019). This trust, or lack thereof, has implications. Empirical research suggests that a heightened trust in news from social media correlates with an increased susceptibility to conspiracy theories and misinformation (Xiao et al., 2021). The Trust in News Project report underscores this by revealing that Americans trust news from Facebook the most, followed by Instagram and WhatsApp (Mont’Alverne et al., 2022). However, a study by Wike et al. (2022) paints a contrasting picture, with many Americans expressing growing distrust in platforms like Facebook, associating them with societal divisions and threats to democracy. This sentiment extends to the journalistic community, with some attributing the decline in public trust in the news to the influence of these platforms (Newman et al., 2022).

Further, because Meta platforms differ in design and purpose, susceptibility to misinformation may differ across them. The nature of one’s social media network also plays a pivotal role in shaping perceptions. Each platform (Facebook, WhatsApp, or Instagram) offers a unique networking experience that can affect the information environment (Al-Rawi & Prithipaul, 2023). For instance, WhatsApp is often the go-to platform for close-knit interactions between strong ties, which can increase susceptibility to misinformation because group members are perceived as credible. Indeed, message credibility has been found to increase when information is endorsed or recommended by known others (Metzger & Flanagin, 2013). Prior research has found that individuals frequently encounter information on Facebook through weak social ties (Nielsen & Schrøder, 2014), which may also result in exposure to misinformation. Likewise, individuals use Instagram to maintain existing relationships and for entertainment (Chen, 2018), which may render them susceptible to false beliefs because their guard is down. The choice of platform often aligns with the type of interactions individuals seek (Hayes et al., 2016a), and even when relational closeness is consistent across platforms, the nature of social support can vary (Hayes et al., 2016b). This suggests that perceptions of misinformation’s accuracy might differ across platforms, independent of network characteristics.

Furthermore, the sheer volume of misinformation on a platform can sway public perceptions. Scholars have raised concerns about rampant misinformation on Facebook (Lazer et al., 2018) and identified WhatsApp as a primary conduit for disseminating misinformation, particularly political content (Farooq, 2018; Garimella & Eckles, 2020). For example, participants in Brazil reported a high perceived level of exposure to misinformation on Facebook and WhatsApp (Rossini et al., 2021). Other studies have found visual misinformation to be prevalent on Instagram (Rovetta & Bhagavathula, 2020), and Massey et al. (2020) found significant evidence of HPV vaccine-related misinformation on the platform. Given the widespread presence of misinformation, platforms have introduced fact-checking measures, such as flagging dubious claims (Facebook, 2023). However, their efficacy is debatable, especially when users resort to lazy reasoning, a cognitive shortcut that bypasses critical thinking in favor of superficial cues (Pennycook & Rand, 2019).

Research suggests that repeated exposure to misinformation on social media can strengthen belief in false claims (Pennycook et al., 2018). However, it is unclear whether the perceived accuracy of misinformation varies across Facebook, Instagram, and WhatsApp. Addressing this gap is crucial, as understanding these nuances can inform strategies to combat misinformation and guide platform-specific interventions.

Given the complexities surrounding misinformation on social media and the varying perceptions across platforms, we propose the following research question:

Platform Affordance and Misinformation Sharing

Social media platforms are characterized by distinct features, commonly called “affordances,” which may facilitate or hinder the spread of misinformation. One affordance that can amplify misinformation is the algorithm that determines the order of posts in a user’s feed. Because algorithms are designed to optimize engagement, they often promote clickbait content (Iyengar & Massey, 2019). For example, Instagram was found to recommend COVID-19 misinformation to its users (Bond, 2021), and Facebook’s algorithm prioritizes user-generated posts, thereby increasing the likelihood of exposure to false information (Rossini et al., 2020).

Another critical platform affordance is the media type of its posts. While some platforms primarily facilitate text-based posts, others are geared toward visual content. Much existing research has focused on text-based misinformation on platforms like Facebook (Bode & Vraga, 2015; Vraga & Bode, 2018), yet visual misinformation on platforms such as Instagram and WhatsApp is becoming increasingly concerning. Evidence suggests that social media use correlates with sharing visual misinformation, including deepfakes (Ahmed, 2021, 2022). Research also indicates that visual misinformation is particularly prevalent on WhatsApp, especially during election periods in countries such as Brazil (Tardáguila et al., 2018). These patterns highlight a significant research gap in understanding how misinformation spreads across different platforms.

The patterns observed in the spread of visual and text-based misinformation underscore the importance of understanding user motivations. While platform affordances, such as algorithms and media types, significantly influence the spread of misinformation, the individual behaviors of users cannot be overlooked. For instance, platforms that foster peer comparison and self-promotion could trigger the desire for social recognition and belonging (Islam et al., 2020) or the “fear of missing out” (FOMO; Ahmed, 2022), thereby predicting the intentional sharing of misinformation. However, it remains unclear how these dynamics differ across platforms, emphasizing the need for further research. Given the intricate interplay of platform affordances, media types, and individual motivations in the spread of misinformation, we propose the following research question:

Perceived Claim Accuracy and Sharing Intentions

The proliferation of misinformation on social media platforms has raised concerns about its impact on users’ perceptions and behaviors. A foundational premise in this domain is the link between belief in misinformation and its subsequent sharing. Research consistently shows that repeated exposure to a claim amplifies its perceived veracity, a cognitive bias known as the illusory truth effect (Dechêne et al., 2010; Effron & Raj, 2019; Udry & Barber, 2024). This effect suggests that claims encountered repeatedly become easier to process, making them appear more accurate even if they are false (Alter & Oppenheimer, 2009; Smelter & Calvillo, 2020; Wang et al., 2016). Ambiguous statements are especially susceptible to the illusory truth effect (Fazio et al., 2019; Pennycook et al., 2018). Remarkably, this effect is so potent that it can lead people to believe even outlandish claims, as evidenced by experiments showing increased belief in the flat Earth conspiracy theory after repeated exposure (Fazio et al., 2019).

The potency of the illusory truth effect has important implications for misinformation sharing. Continuous exposure to misinformation increases the likelihood of belief, and this belief, in turn, is a precursor to sharing (Halpern et al., 2019). This cycle is exacerbated when misinformation is propagated by trusted acquaintances or influential public figures, turning unsuspecting users into conduits for further misinformation spread. This is evident in research findings that a handful of “superspreaders” are responsible for a significant portion of misinformation on social media (Allen et al., 2020; Guess et al., 2020).

Delving deeper into the dynamics of misinformation sharing, certain factors emerge as catalysts. Regular social media use and trust in information from contacts are pivotal in misinformation dissemination (Halpern et al., 2019). Crucially, their effects are mediated by the perceived credibility of the misinformation. Rasul and Ahmed (2023) further emphasize that the perceived accuracy of misinformation is positively associated with sharing intentions. Compounding this issue are social media algorithms that prioritize and amplify viral content. Given that users often engage in lazy reasoning online and frequently encounter misinformation (Chadwick & Vaccari, 2019; Pennycook & Rand, 2019), there is a heightened risk of users accepting and disseminating false claims. Hence, we hypothesize the following:

The Contingent Role of Cognition

A growing body of research underscores the protective role of cognitive ability against the belief and dissemination of misinformation (Kantorowicz-Reznichenko et al., 2022; Pennycook & Rand, 2021; Tandoc et al., 2021). These scholars argue that when individuals engage in deliberation and careful reasoning, they are better able to discern between true and false claims. For instance, Pennycook and Rand (2021) highlighted the interplay between emotion and susceptibility to fake news: individuals who rely more on emotion are more likely to believe fake news, whereas those who engage in analytical thinking and deliberation are less prone to such beliefs. These findings align with dual-process theory, which posits that analytical (System 2) thinking can override an individual’s intuitive response to information and thereby shield against misinformation (Pennycook & Rand, 2021). Given the robust evidence, we hypothesize that:

However, there are concerns about the passive consumption of content on social media platforms. Many users rely on heuristics and intuition rather than critical evaluation, making them vulnerable to deceptive content. Pennycook and Rand (2020) further highlighted that a heightened emotional state could predict greater belief in fake news, emphasizing the importance of emotional regulation in discerning information accuracy. Moreover, cognitive overload can diminish deliberation, increasing susceptibility to misinformation (Ahmed & Rasul, 2023; Bago et al., 2020). This lack of critical engagement may amplify the spread of misinformation through uninformed sharing. Given these dynamics, individuals with higher cognitive skills are expected to navigate social media with a more discerning eye. However, less is known about how cognitive ability interacts with platform affordances. As such, we pose our final research question:

Method

Sample and Procedure

A total of 250 U.S. residents were recruited for this study through Qualtrics LLC, a market research panel aggregator, using a quota sampling approach to ensure a balanced distribution across age and gender. The authors designed the survey using Qualtrics’ proprietary survey software. To confirm eligibility, prospective participants were screened through demographic questions on the first page of the online survey; those who did not meet the criteria or declined consent could not proceed. To enhance data integrity, Qualtrics employed digital fingerprinting technology to filter out bots and ensure that responses came from valid human participants (ESOMAR, 2021).

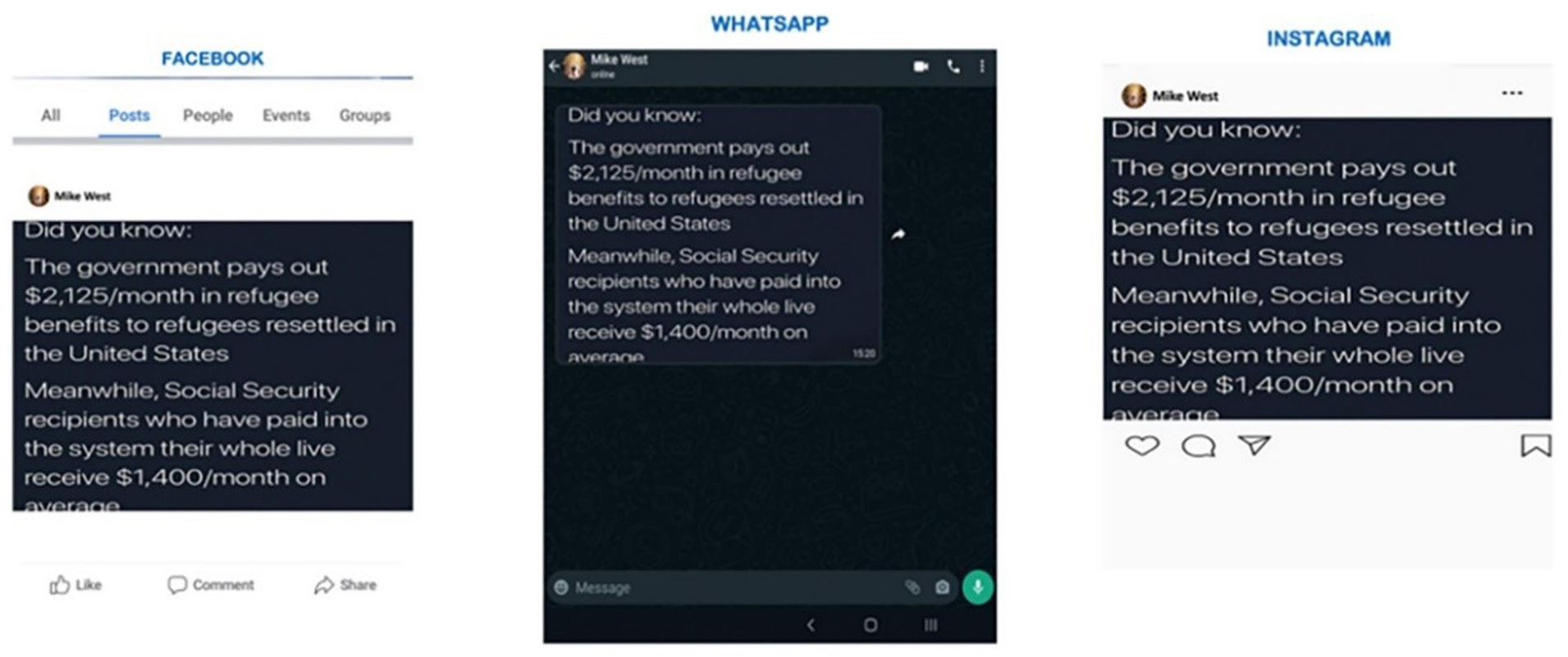

All participants first answered questions about their demographics, media use habits, political motivations, and cognitive ability. Each participant was then randomly assigned to one of three platform-oriented misinformation conditions: (a) Facebook, (b) Instagram, or (c) WhatsApp. In each condition, participants were exposed to the same misinformation stating that “The government pays out $2125/month in refugees resettled in the United States. Meanwhile, social security recipients who have paid into the system their whole life receive $1400/month on average.” The misinformation was presented as a post on the respective platform’s interface. For example, participants in the Facebook condition saw the misinformation as a Facebook post, and participants in the Instagram and WhatsApp conditions saw it formatted for those platforms (see Figure 2 for details).

Figure 2. Social security misinformation across platforms.
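The random assignment step can be illustrated with a short sketch. The paper does not specify how the Qualtrics randomizer allocated participants, so the block randomization scheme below is an assumption for illustration only: within every block of three participants, each platform condition appears once in shuffled order, which keeps group sizes within one of each other. The function name `assign_conditions` and the seed value are hypothetical.

```python
import random

CONDITIONS = ("Facebook", "Instagram", "WhatsApp")


def assign_conditions(n_participants, seed=2024):
    """Hypothetical block randomization into the three platform conditions.

    Within each block of three consecutive participants, every condition
    appears exactly once in a shuffled order, so group sizes never differ
    by more than one.
    """
    rng = random.Random(seed)  # fixed seed makes the allocation reproducible
    assignments = []
    while len(assignments) < n_participants:
        block = list(CONDITIONS)
        rng.shuffle(block)
        assignments.extend(block)
    return assignments[:n_participants]  # drop the unused tail of the last block


groups = assign_conditions(250)
print({c: groups.count(c) for c in CONDITIONS})
```

With 250 participants, one condition receives one extra person; simple (unblocked) randomization would instead let group sizes drift by chance.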

Finally, after exposure to the misinformation, participants rated the accuracy of the claim and their intention to share the post. We used a real-world piece of misinformation as the stimulus to enhance the ecological validity of our findings. Various fact-checkers have debunked the claim (Cox, 2021; Putterman, 2021), and participants were thoroughly debriefed about the false nature of the stimulus at the end of the study.

We also conducted randomization checks to test for differences in participant characteristics across conditions. No statistically significant differences were found across the groups; the results are reported in Appendix A, Table A1.
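A randomization check of this kind typically compares each participant characteristic across the three conditions. The sketch below assumes a continuous characteristic such as age and uses made-up values (not the study’s data); it computes a one-way ANOVA F statistic and its exact p-value. Categorical characteristics such as gender would instead call for a chi-square test of independence, which is not shown. The closed-form p-value used here holds only when the numerator has two degrees of freedom, as it does with three groups; the function names are hypothetical.

```python
def one_way_anova(groups):
    """Return (F, df_between, df_within) for a list of numeric samples."""
    values = [x for g in groups for x in g]
    grand_mean = sum(values) / len(values)
    means = [sum(g) / len(g) for g in groups]
    # Between-group and within-group sums of squares.
    ss_between = sum(len(g) * (m - grand_mean) ** 2 for g, m in zip(groups, means))
    ss_within = sum((x - m) ** 2 for g, m in zip(groups, means) for x in g)
    df_between, df_within = len(groups) - 1, len(values) - len(groups)
    f_stat = (ss_between / df_between) / (ss_within / df_within)
    return f_stat, df_between, df_within


def f_pvalue_two_numerator_df(f_stat, df_within):
    """Exact upper-tail p-value of F(2, df_within): (1 + 2F/df)^(-df/2)."""
    return (1 + 2 * f_stat / df_within) ** (-df_within / 2)


# Made-up ages for three small groups (illustration only, not study data).
facebook, instagram, whatsapp = [30, 35, 40], [32, 36, 40], [31, 35, 39]
f_stat, df_b, df_w = one_way_anova([facebook, instagram, whatsapp])
p = f_pvalue_two_numerator_df(f_stat, df_w)
print(f"F({df_b}, {df_w}) = {f_stat:.3f}, p = {p:.3f}")  # F(2, 6) = 0.053, p = 0.949
```

A non-significant F, as here, is the pattern a successful randomization check is expected to show.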

This study was reviewed and approved by the ethics review board at Nanyang Technological University. All participants provided written informed consent to participate in the study and for their data to be published.

Measures

Perceived claim accuracy was measured by asking respondents to rate how accurate they found the claim, as presented in their platform condition, that the government pays $2,215/month in benefits to refugees but $1,400/month to social security recipients (1 = not at all accurate to 5 = extremely accurate). This approach was adapted from previous literature (Ahmed & Rasul, 2023; Pennycook & Rand, 2019; Ståhl & van Prooijen, 2018).

Sharing intention was measured by asking respondents how likely they were (1 = extremely likely to 5 = not at all likely) to share the post on their social media page. The item was reverse-coded so that higher values represent greater sharing intention.
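Reverse-coding maps each response x on a 1 to 5 scale to 6 − x, so 1 (extremely likely) becomes 5 and 5 (not at all likely) becomes 1. A minimal sketch, assuming integer responses; `reverse_code` is a hypothetical helper name:

```python
def reverse_code(response, scale_min=1, scale_max=5):
    """Reverse a Likert response so that higher values mean greater sharing intention."""
    if not scale_min <= response <= scale_max:
        raise ValueError(f"response {response} is outside {scale_min}-{scale_max}")
    return scale_min + scale_max - response  # on a 1-5 scale this is 6 - response


raw = [1, 2, 3, 4, 5]  # 1 = extremely likely ... 5 = not at all likely
recoded = [reverse_code(r) for r in raw]
print(recoded)  # [5, 4, 3, 2, 1]
```

Writing the rule as `scale_min + scale_max - response` rather than a hard-coded `6 - response` keeps it correct for other scale ranges.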

Cognitive ability was measured with the 10-item Wordsum test, an established vocabulary measure that correlates highly with general intelligence. This measure has been used in existing research (Apuke et al., 2024; Littrell et al., 2020), including studies focused on misinformation and cognitive ability (Ahmed, 2021; Ahmed & Rasul, 2023; Pennycook & Rand, 2019). Participants were given a source word (e.g., broaden) and asked to select the closest associated word from a list of five target words (e.g., school, noon, captain, room, and board). Correct responses to the ten items were summed to create a cognitive ability scale (M = 4.64, SD = 2.66, α = .75).
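The scoring and reliability steps can be sketched as follows. The answer key below is hypothetical (the actual Wordsum key is not reproduced in this paper), and the three participants’ responses are invented. Reliability uses the standard Cronbach’s alpha formula, α = k/(k − 1) × (1 − Σ item variances / variance of total scores); the function names are illustrative.

```python
from statistics import pvariance

# Hypothetical answer key: index (0-4) of the correct target word for each of
# the ten items. The real Wordsum key is not reproduced here.
ANSWER_KEY = [3, 0, 4, 1, 2, 2, 0, 3, 1, 4]


def score_items(responses, key=ANSWER_KEY):
    """Return a 0/1 vector marking which of the ten choices match the key."""
    return [int(choice == correct) for choice, correct in zip(responses, key)]


def cronbach_alpha(item_matrix):
    """Cronbach's alpha; rows are participants, columns are items."""
    k = len(item_matrix[0])
    item_vars = [pvariance([row[j] for row in item_matrix]) for j in range(k)]
    total_var = pvariance([sum(row) for row in item_matrix])
    return k / (k - 1) * (1 - sum(item_vars) / total_var)


participants = [
    [3, 0, 4, 1, 2, 2, 0, 3, 1, 4],  # all ten correct
    [3, 0, 4, 1, 2, 0, 1, 0, 0, 0],  # first five correct
    [3, 1, 0, 0, 0, 0, 1, 0, 0, 0],  # only the first correct
]
items = [score_items(p) for p in participants]
wordsum = [sum(row) for row in items]  # cognitive ability score, 0-10
print(wordsum)  # [10, 5, 1]
print(round(cronbach_alpha(items), 2))
```

Population and sample variances give the same alpha as long as one is used consistently for items and totals, since the scaling factor cancels in the ratio.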

The study also included a set of control variables: participant demographics, news media use, and partisanship. Demographic controls included age (M = 38.11, SD = 10.85), gender (55% female), education (Median = bachelor’s degree), income (Median = $7,000–$8,999), and race (65% White). Media use measured how frequently (1 = not at all to 5 = daily) participants used the following media to get news about political and public affairs: (a) television (M = 3.53, SD = 1.10), (b) radio (M = 2.90, SD = 1.21), and (c) print newspapers (M = 3.19, SD = 1.31). Partisanship was measured by asking respondents about their political leanings (1 = strong Democrat to 7 = strong Republican; M = 3.03, SD = 2.23).

Results

We ran a one-way ANCOVA to compare participants’ perceived accuracy of the post across the three conditions, controlling for demographics, partisanship, and cognitive ability (RQ1). Platform condition had a significant main effect on perceived claim accuracy (F(2, 234) = 6.57, p < .01). Post hoc pairwise comparisons showed that participants perceived the claim as more accurate in the Instagram condition (M = 3.53, SE = .12) than in the Facebook (M = 3.08, SE = .11, p < .001) and WhatsApp conditions (M = 2.94, SE = .10, p < .001). No significant difference was found between the Facebook and WhatsApp conditions. Cognitive ability also had a significant main effect (F = 14.35, p < .001).
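The ANCOVA for RQ1 can be written as a linear model with dummy-coded platform conditions. In the sketch below, Facebook serves as the reference category and the vector z_i collects the covariates; both choices are assumptions for exposition, since the paper does not report its coding. The platform effect is tested by comparing this full model with a reduced model that omits the two platform dummies:

```latex
\begin{aligned}
\text{Accuracy}_i &= \beta_0 + \beta_1\,\text{Instagram}_i + \beta_2\,\text{WhatsApp}_i
  + \boldsymbol{\gamma}^{\top}\mathbf{z}_i + \varepsilon_i, \\
H_0 &: \beta_1 = \beta_2 = 0, \\
F &= \frac{(\mathrm{SSE}_R - \mathrm{SSE}_F)/2}{\mathrm{SSE}_F/(n - p)}
  \sim F(2,\, n - p) \quad \text{under } H_0,
\end{aligned}
```

Here SSE_R and SSE_F are the residual sums of squares of the reduced and full models, n is the sample size, and p is the number of parameters in the full model. The 2 in the numerator reflects the two platform dummies, which is why the platform test has two numerator degrees of freedom.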

We next examined differences in sharing intentions across platform conditions (RQ2) and found no statistically significant differences (F(2, 234) = 0.102, p = .90). The means for perceived accuracy and sharing intentions are illustrated in Figure 3.

Figure 3. Means plot of perceived claim accuracy and sharing intention across conditions.

Next, we tested whether perceived claim accuracy predicts sharing intentions (H1) by estimating a regression model with sharing intention as the dependent variable. The results are presented in Table 1. Perceived claim accuracy was positively associated with sharing intentions (β = .162, p < .001): participants who perceived the misinformation as accurate were more likely to share it on social media. Hence, H1 is supported.

Table 1. Regression Examining Perceived Claim Accuracy and Sharing Intention.

Note. *p < .05. **p < .01. ***p < .001.

Cognitive ability was negatively associated with both perceived accuracy (β = −.224, p < .001) and sharing intentions (β = −.198, p < .001). Participants with higher cognitive ability were thus less likely to share the post on social media, supporting H2.

Finally, we examined whether cognitive ability moderates the association between perceived claim accuracy and sharing intention (RQ3). We created an interaction term between perceived claim accuracy and cognitive ability and entered it into the regression predicting sharing intentions. The interaction was statistically significant (Table 1, β = .302, p < .05), and the relationship is plotted in Figure 4. Individuals with low cognitive ability were more likely to share the post irrespective of perceived accuracy, whereas individuals with high cognitive ability were more likely to share the post when they perceived the misinformation as accurate.
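The moderation analysis can be sketched as an ordinary least squares model with a product term, Sharing = b0 + b1·Accuracy + b2·Cognition + b3·(Accuracy × Cognition). The conditional (simple) slope of accuracy at a given cognition level c is b1 + b3·c, which is what a moderation plot such as Figure 4 visualizes. The sketch below mean-centers both predictors (a common convention, assumed here rather than reported in the paper), omits the control variables, and fits noise-free synthetic data with made-up coefficients, so it illustrates the mechanics only. Function names are hypothetical; a statistics package would be the practical choice for real data.

```python
from statistics import mean, pstdev


def ols(X, y):
    """Least-squares coefficients solving (X'X) b = X'y by Gauss-Jordan elimination.

    Each row of X must already include a leading 1 for the intercept. Adequate
    for a few well-conditioned predictors; not a replacement for a stats package.
    """
    p = len(X[0])
    # Augmented matrix [X'X | X'y].
    A = [[sum(row[i] * row[j] for row in X) for j in range(p)]
         + [sum(row[i] * yi for row, yi in zip(X, y))] for i in range(p)]
    for col in range(p):
        pivot = max(range(col, p), key=lambda r: abs(A[r][col]))  # partial pivoting
        A[col], A[pivot] = A[pivot], A[col]
        for r in range(p):
            if r != col:
                factor = A[r][col] / A[col][col]
                A[r] = [a - factor * c for a, c in zip(A[r], A[col])]
    return [A[i][p] / A[i][i] for i in range(p)]


def fit_moderation(accuracy, cognition, sharing):
    """Regress sharing on centered accuracy, centered cognition, and their product."""
    acc_mean, cog_mean = mean(accuracy), mean(cognition)
    X = [[1.0, a - acc_mean, c - cog_mean, (a - acc_mean) * (c - cog_mean)]
         for a, c in zip(accuracy, cognition)]
    return ols(X, sharing)  # [b0, b_accuracy, b_cognition, b_interaction]


def simple_slope(b, cognition_centered):
    """Conditional slope of sharing on accuracy at a centered cognition value."""
    return b[1] + b[3] * cognition_centered


# Noise-free synthetic data on a 5 x 6 grid with made-up coefficients.
acc = [a for a in range(1, 6) for _ in range(6)]
cog = [c for _ in range(1, 6) for c in range(0, 11, 2)]
share = [2.0 + 0.1 * (a - 3) - 0.2 * (c - 5) + 0.05 * (a - 3) * (c - 5)
         for a, c in zip(acc, cog)]

b = fit_moderation(acc, cog, share)
sd = pstdev(cog)
print("slope at -1 SD cognition:", round(simple_slope(b, -sd), 3))
print("slope at +1 SD cognition:", round(simple_slope(b, sd), 3))
```

With a positive interaction coefficient, the accuracy slope is flat or slightly negative at low cognition and clearly positive at high cognition, which is the qualitative shape described for Figure 4.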

Figure 4. The moderating effect of cognitive ability on the association between perceived claim accuracy and sharing intention.

Discussion

In an era marked by the rapid dissemination of information, this study offers insights into the nuanced effects of cross-platform differences on users’ perceptions and behaviors concerning misinformation. The findings suggest that platform differences may play a unique role in shaping user perceptions of misinformation. Further, our findings highlight the role of cognitive ability in misinformation sharing behavior.

Our primary findings demonstrate cross-platform differences in how users evaluate misinformation. Although all platforms in the study are owned by Meta, users’ perceptions of misinformation accuracy varied significantly. This points to differences in platform design and user experience that may shape how users judge the accuracy of claims. However, these differences did not translate into variations in sharing intentions. Our study reaffirms that individuals with lower cognitive ability are generally more susceptible to sharing misinformation, but those with higher cognitive ability are not immune: when they perceive misinformation as accurate, their likelihood of sharing it increases, underscoring the complex interplay between cognitive ability and misinformation engagement. The following paragraphs discuss three main findings and their theoretical implications.

Our study found that users perceive misinformation differently across Meta-owned platforms. Misinformation on Instagram, for instance, was perceived as more accurate than on Facebook and WhatsApp. This could be attributed to several factors. First, Instagram’s design features, such as the visibility of users who have liked a post, may serve as heuristics for the credibility of information. Indeed, prior research has argued that in online environments such as social media, endorsements are used as heuristics to determine the credibility of information (Metzger & Flanagin, 2013). Because Instagram is designed as an image-based platform, the source of information and credibility cues are often unavailable or less prominent (Winter & Krämer, 2014). Users may therefore rely on cues such as trusted individuals (e.g., celebrities) who have liked a post to determine the credibility of the information (Mena et al., 2020). Second, a platform’s reputation may influence the public’s trust in it. For instance, Facebook has faced significant scrutiny due to high-profile controversies, and WhatsApp has had its share of misinformation challenges, especially the spread of false information during critical events (Farooq, 2018; Garimella & Eckles, 2020; Rossini et al., 2020). Nevertheless, the similar accuracy ratings for Facebook and WhatsApp may suggest that other factors are also at play.

The design and affordances of each platform offer another lens of interpretation. For example, Instagram’s visual-centric nature might amplify the believability of misinformation, as visuals mimic reality and are often perceived as more trustworthy than text (Sundar et al., 2021). Visual misinformation on Instagram in the form of videos or images may also exhaust users, resulting in less systematic processing of information (Peng et al., 2023). In contrast, WhatsApp is a private messaging app where individuals can interact with close ties or join public groups, where mis- and disinformation are prevalent (Evangelista & Bruno, 2019; Resende et al., 2019). However, because WhatsApp does not reveal the original source of a forwarded message, users may be more critical of the information they encounter. Prior research also points out that users tend to engage only with people they know on WhatsApp (Waterloo et al., 2018); as such, they are more likely to be corrected and to correct others when exposed to misinformation (Rossini et al., 2021). Lastly, Facebook allows users to engage in a multitude of social activities in addition to being exposed to news via a feed that algorithmically pushes content shared by users one has engaged with. This may increase the probability of misinformation exposure (Rossini et al., 2020).

Additionally, while platform differences influenced perceived accuracy, they did not significantly affect sharing intentions. This aligns with Pennycook and Rand’s (2021) assertion that perceived accuracy and sharing intentions, though related, are distinct. Alternatively, other factors, such as norms of conflict avoidance, may shape sharing intentions. Recent work has argued that social media users may refrain from speaking out against misinformation to avoid conflict (Chadwick et al., 2024). Likewise, individuals may perceive false information to be accurate but be reluctant to share it to avoid conflict with close ties or for fear of social or political backlash.

Our findings on cognitive ability align with prior work on its importance as both a direct predictor and a moderator of users’ engagement with misinformation. Those with higher cognitive ability were less likely to perceive misinformation as accurate and less likely to share it, consistent with literature suggesting that analytical thinking can buffer against misinformation (Ahmed, 2021). Furthermore, cognitive ability moderated misinformation-sharing behavior: individuals with low cognitive ability were more likely to share misinformation regardless of its perceived accuracy, whereas those with high cognitive ability were more discerning. However, when the latter perceived a claim as accurate, they were more inclined to share it, possibly reflecting confidence in their own judgment. This nuanced finding resonates with Pennycook and Rand’s (2021) work, which suggests that while cognitive ability can act as a shield against misinformation, it does not render individuals immune, especially when they are convinced of a claim’s veracity. In addition to the perceived accuracy of a claim, other factors, such as personality traits, may interact with cognitive ability to affect misinformation sharing (Ahmed & Rasul, 2023). Future research may benefit from examining additional factors that enhance or diminish cognitive ability’s role in misinformation engagement.

Our findings have practical implications for addressing platform differences in misinformation engagement. First, the differences in perceived accuracy across platforms suggest that information is processed differently depending on the platform and its intended use. Most individuals hold multiple social media accounts for different purposes (see Waterloo et al., 2018), so approaches to combat misinformation should account for differences in how individuals use each platform. For instance, because WhatsApp use is characterized by private, intimate interactions, corrections on WhatsApp may be more effective, especially when they come from close ties (Rossini et al., 2020). Others have suggested that algorithmic interventions may be more effective on Facebook because of its function as a news source (Bode & Vraga, 2018), although partisan differences exist (see Jennings & Stroud, 2023). Lastly, on Instagram, a visual platform, both humorous logic-based corrections and fact-based corrections have been found to be effective in combating misinformation (Vraga et al., 2020). While platform differences may play a key role in misinformation engagement, a one-size-fits-all approach still has merit, especially given dwindling fact-checking resources (Blanding, 2025). Recent work has indicated that interventions such as reverse image searches are effective in combating visual misinformation (Qian et al., 2022). Because images are shared on Facebook, Instagram, and WhatsApp alike, such interventions could mitigate the harmful effects of misinformation without requiring platform-specific approaches. Second, beyond platform differences, cognitive ability has been shown to be an important protective factor against misinformation at the user level (Ahmed & Rasul, 2023; Martel et al., 2021). Further understanding its role can therefore inform educational initiatives that equip users with the critical thinking skills needed to navigate the digital information landscape.

Our findings also have theoretical implications for understanding misinformation engagement. First, by comparing three social media platforms owned by Meta (Facebook, Instagram, and WhatsApp), we extend the literature that examines misinformation engagement through platform affordances (Mena et al., 2020; Rossini et al., 2020; Sundar et al., 2021). Specifically, we reveal how platform-specific features may shape the processing and sharing of information. Second, our findings reveal that individuals with higher cognitive ability are less likely to perceive misinformation as accurate but remain susceptible to sharing it if they do perceive it as accurate. This implies that while cognitive ability may serve as a buffer against misinformation, it does not guarantee immunity. This may be particularly concerning in visual or private messaging contexts, where credibility cues may be limited.

Limitations

While our study sheds light on crucial aspects of misinformation engagement, it has limitations. Relying on self-reported data may introduce social desirability bias, which could affect the accuracy of participants' responses. Similarly, our measures of media use are self-reported, which can introduce bias through overreporting (Prior, 2009) or, in some cases, underreporting. Additionally, our design does not capture long-term perceptions of misinformation across these platforms. Despite these constraints, our research underscores the multifaceted nature of misinformation engagement, emphasizing the roles of platform differences and cognitive ability. As misinformation challenges persist in the digital landscape, studies like ours provide foundational knowledge to inform future interventions and policy decisions.

Conclusion

Our study examined how cross-platform differences on platforms owned by Meta (Facebook, Instagram, and WhatsApp) affect individuals' perceived accuracy of and intentions to share misinformation. We also assessed whether cognitive ability moderated this relationship. Our findings revealed that participants were more likely to perceive misinformation as credible on Instagram than on Facebook and WhatsApp, although sharing intentions did not differ significantly across platforms. Further, we found that those with lower cognitive ability were more likely to share misinformation regardless of perceived accuracy. Those with higher cognitive ability were also more likely to share misinformation, but only if they perceived it to be accurate. This study highlights the importance of platform affordances and individual-level characteristics in understanding how individuals engage with misinformation. Given Meta's increasing withdrawal from fact-checking, it is more important than ever for researchers to examine misinformation dynamics on social media platforms and develop effective interventions.

Appendix A

We also examined whether participant characteristics differed across the three conditions. ANOVA and chi-square tests indicate that the three conditions did not differ in demographic characteristics, political motivations, or cognitive ability. The results are presented below.

Ethical Considerations

The study was approved by the Institutional Review Board at the School of Social Sciences, Nanyang Technological University (1102024).

Consent to Participate

All participants provided consent prior to taking part in the study and could withdraw from the study at any stage without penalty. All participants also consented to their data being used in publications.

Author Contributions

SA designed the study, arranged the funding, analyzed the data, and wrote the manuscript. MER and AT analyzed the data and wrote the manuscript. AWTB wrote the manuscript.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The study was supported by Nanyang Technological University.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

The data may be available on reasonable request.