Abstract

This meta-synthesis study examines the use of digital assessment tools in education, focusing on their prevalence, benefits, limitations, and recommendations for effective integration into teaching processes. Drawing on 41 empirical studies published between December 2012 and January 2023, the study follows the thematic synthesis approach proposed by Dinçer (2014, 2018a). Studies were selected from the ERIC, Scopus, and ISI Web of Knowledge databases using defined inclusion criteria. Two researchers conducted the coding independently, and thematic categories were derived through consensus and cross-validation. The synthesis identified frequently used tools such as e-portfolios, web-based systems, and learning management systems (LMS). Findings indicate that while digital tools provide personalized feedback and facilitate learner autonomy, they face limitations such as technical constraints and limited capacity for assessing higher-order thinking skills. The study concludes that addressing these challenges is essential for realizing the full potential of e-assessment in education.

Plain Language Summary

This study looks at how digital tools are being used to assess students in education. In recent years, digital tools such as e-portfolios, online quizzes and learning management systems (LMS) have increasingly replaced traditional paper-based assessments. These tools offer many benefits, including immediate feedback for students, the ability to track individual progress, and personalized learning experiences. For teachers, digital assessments make it easier to manage large groups of students and provide a clearer picture of student performance. The study reviews 41 research articles published between 2012 and 2023 to understand how these digital tools are being used, what benefits they offer and what challenges educators face when using them. E-portfolios and web-based assessment systems are among the most commonly used tools. These tools help students to take charge of their learning by allowing them to monitor their own progress. They also save time and provide teachers with useful data on how students are doing. However, digital assessment is not without its challenges. Some tools don’t fully capture complex skills such as critical thinking or problem solving, and there are problems with technical reliability and internet access, especially in areas with poor infrastructure. In addition, some students and teachers find these tools difficult to use due to a lack of technical knowledge. The study recommends adding more features to these tools, such as different types of assessments and stronger security measures to prevent cheating. While digital tools have great potential to improve education, they need to be refined to better meet the needs of all students and teachers. In conclusion, digital assessment tools can make learning more efficient and personalized, but challenges such as technical problems and incomplete measurement of skills need to be addressed to make them more effective in education.

Introduction

The rapid development of digital technologies has led to radical changes in the education sector, as in many other areas of life. In particular, the integration of information and communication technologies (ICT) in education has required the redesign of many components, from teaching methods to assessment processes (Laurillard, 2013). This transformation has required not only the adoption of technological tools but also the redesign of pedagogical approaches. The integration of ICT into the educational process has also brought to the fore skills such as creative thinking, problem-solving, collaboration and digital literacy, often referred to as 21st-century skills, and traditional conceptions of education have increasingly given way to digitally mediated ones (Redecker & Johannessen, 2013).

The growing incorporation of learning analytics tools, which enable real-time data collection and visualization of learner progress, has significantly influenced how educators monitor and adjust instruction (Ifenthaler & Yau, 2020). In parallel, AI-supported feedback systems have gained prominence for their ability to deliver personalized, adaptive, and timely feedback, thereby improving learner autonomy and instructional responsiveness (Perez-Sanagustin et al., 2014). Moreover, gamification strategies are increasingly integrated into digital assessment environments to enhance student engagement, foster competition, and increase intrinsic motivation through elements such as badges, points, and progress bars (Murillo-Zamorano et al., 2021). These advancements illustrate a shift from static, content-based evaluations to dynamic, interactive, and learner-centered assessment ecosystems, and they underline the need for a comprehensive meta-synthesis of research on e-assessment tools.

In digital education, assessment processes, like other teaching activities, have undergone a significant transformation, with traditional paper-based assessment methods gradually being replaced by digital assessment tools. This concept, referred to as e-assessment, encompasses assessment processes carried out in a digital environment and involves the use of various digital tools to measure student performance (Newhouse & Njiru, 2009). E-assessment tools, which include a wide range of instruments and methods, from online exams to digital rubrics, e-portfolios and learning management systems (LMS) (Barbera, 2009), offer significant advantages such as providing immediate feedback to students, tracking individual development processes and personalizing learning pathways (Rattiya et al., 2022). In addition, the relevant literature highlights that they provide teachers with the ability to manage large groups of students, comparatively analyze student performance, and monitor educational processes more efficiently (Safsouf et al., 2021).

Studies indicate that the integration of digital assessment tools into teaching processes makes learning more efficient and student-centered (Besser & Newby, 2020; Nicol & Macfarlane-Dick, 2006). Immediate feedback encourages students’ active participation in their own learning, allowing them to recognize their mistakes quickly and learn from them (Kleij et al., 2012). Furthermore, digital feedback tools allow for a more holistic view of student performance and support the development of personalized learning pathways (Stödberg, 2012).

LMS, one of the main components of e-assessment tools, are integrated platforms that allow educators to deliver digital content, administer exams and monitor student performance (Tan et al., 2020). While supporting a student-centered approach to learning, LMS also enable teachers to closely track individual student development (Aljaloud et al., 2022). Through the feedback tools built into LMS, students can quickly identify their mistakes, which promotes more effective learning. In addition, digital tools such as e-portfolios contribute to individual learning processes by allowing students to present and evaluate their own work in a digital environment (Abrami & Barrett, 2005). E-portfolios not only enable students to closely monitor their own learning, but also provide opportunities for in-depth analysis of their development through longitudinal documentation, reflective writing, and iterative feedback cycles.

However, the widespread use of digital assessment tools in education has come with certain limitations. The effectiveness of e-assessment tools, how they should be used in educational processes, and the advantages and disadvantages they bring are frequently discussed in the literature (Appiah & Van Tonder, 2018; Karunarathne & Wijewardene, 2021; Rolim & Isaías, 2018). Gikandi et al. (2011) addressed the efficient use of digital assessment tools and the challenges encountered in educational processes, arguing that specific standards need to be developed for the effective integration of digital assessment tools in education. Although digital assessment processes have the potential to increase efficiency and transparency, the lack of a standardized assessment model has been identified as a key obstacle to educators’ effective use of these tools (Shrestha et al., 2014; Stone, 2018).

While e-assessment has become an increasingly integral part of digital education, existing systematic reviews and meta-analyses often present limitations in scope and depth. For example, prior studies have primarily focused on specific educational levels, particularly higher education, without addressing diverse contexts such as K-12 or vocational learning environments (Johnson et al., 2022; Redecker & Johannessen, 2013; Zhan & Niu, 2023). Moreover, many reviews emphasize general digital tools without analyzing the pedagogical functions, cognitive outcomes, or implementation challenges of specific e-assessment systems. Few studies examine how these tools align with formative assessment principles, support learner autonomy, or provide actionable feedback.

This study addresses these gaps by systematically synthesizing 41 empirical studies with a dual focus: the technical design and pedagogical implications of various e-assessment tools. Through thematic synthesis, it provides a more comprehensive understanding of the benefits, challenges, and future directions for the integration of e-assessment in diverse educational contexts. This approach offers a distinct contribution by bridging the gap between technological functionality and educational theory in the evaluation process.

In summary, given the findings of current research, there is a need for more in-depth exploration of the advantages and disadvantages of e-assessment tools and further investigation into how these tools can be effectively integrated into teaching processes. Based on this need, the aim of this study is to contribute to the existing literature by examining the advantages, limitations and recommendations for more effective use of e-assessment tools in teaching processes, analyzed in relation to the dependent variables. In line with this aim, the following research questions are addressed:

(1) What are the most commonly used tools in studies investigating e-assessment tools in education?

(2) What are the most commonly investigated dependent variables in studies investigating e-assessment tools in education?

(3) What are the advantages of e-assessment tools mentioned in studies investigating e-assessment tools in education?

(4) What are the disadvantages of e-assessment tools mentioned in studies investigating e-assessment tools in education?

(5) What are the suggestions for improvement of e-assessment tools mentioned in studies examining e-assessment tools in education?

Method

This study was conducted using the thematic synthesis approach of meta-synthesis, primarily based on the methodological framework proposed by Dinçer (2014, 2018a). This approach was preferred due to its suitability for integrating and interpreting findings from diverse qualitative and mixed-method studies on e-assessment tools. The synthesis involved identifying common themes, comparing patterns, and generating interpretative insights across the selected studies. The research seeks to identify commonly used e-assessment tools, the dependent variables associated with these tools, their advantages and disadvantages, and suggestions for improving these tools.

In meta-synthesis studies, the criteria for the selection of individual studies to be reviewed must first be defined. In this study, the criteria and the corresponding procedural steps were carried out within the framework of the following guidelines:

(1) Studies were systematically searched for in three major databases: ERIC, Scopus, and ISI Web of Knowledge.

(2) Searches were conducted using the keywords “e-evaluation,” “e-evaluation tools,” “e-assessment” and “e-assessment tools.”

(3) Studies must have been published between December 2012 and January 2023.

(4) The studies must not be theoretical, but rather focus on practical applications related to e-assessment tools.

(5) The e-assessment tool used in the studies, its purpose, variables, benefits, limitations and recommendations must be clearly stated.

(6) Studies were included if they (a) were published within the specified timeframe, (b) focused on practical applications of e-assessment tools, and (c) clearly defined the tool used, its purpose, variables, benefits, limitations, and recommendations.

(7) Studies were excluded if they (a) were purely theoretical or conceptual, (b) lacked clarity in describing the e-assessment process, or (c) did not include empirical findings.

Accordingly, the steps of the study are as follows:

Literature review: In this stage, a literature review related to the research was carried out. The ERIC, Scopus and ISI Web of Knowledge databases were searched using the keywords “e-evaluation,” “e-evaluation tools,” “e-assessment” and “e-assessment tools,” and the results were listed with their citations.

Timeframe and inclusion criteria: The search included all accessible studies published between December 2012 and January 2023. This timeframe was selected because it corresponds to the period during which digital transformation in education accelerated significantly due to advancements in educational technologies and the widespread adoption of e-assessment tools, particularly in response to remote and hybrid learning needs. Studies outside this period were excluded. In addition, only studies that focused on practical applications rather than theoretical content were included. After a comprehensive list of relevant studies had been compiled, the studies were screened against the inclusion and exclusion criteria. A total of 41 articles were included in the review.

Coding of studies and thematic analysis: The 41 studies were coded independently by two researchers using a codebook derived from the research questions and initial readings. The reporting was aligned with the PRISMA guidelines for transparent reporting of syntheses, as shown in Figure 1. Studies were labeled A1–A41, and their content was coded under the categories keyword, year, citation, tool type, purpose, variables, benefits, limitations, and recommendations; the complete list of individual studies can be found in Appendix 1. The coded content was then examined within the framework of the research questions, with attention to similarities and differences across studies. Reliability was supported through investigator triangulation, in which multiple researchers analyze the same data: the two researchers coded the data independently and then compared their results to enhance the reliability and consistency of the analysis. Any discrepancies were discussed and resolved through consensus, leading to the development of a unified coding scheme. Intercoder reliability was calculated using Cohen’s kappa, which yielded a coefficient of 0.91, indicating a high level of agreement. As an additional check, agreement between coders was calculated using the formula P = [Na / (Na + Nd)] × 100 (Miles & Huberman, 2014), where Na is the number of coding agreements and Nd the number of disagreements, yielding a reliability coefficient of 92.00%.
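The two agreement statistics reported above can be illustrated with a short Python sketch. The coder labels below are hypothetical, since the raw coding data are not reported here, and the helper functions are illustrative rather than the procedure the authors actually ran. Percent agreement applies the Miles and Huberman formula directly, whereas Cohen’s kappa corrects the observed agreement for the agreement expected by chance from each coder’s category frequencies, which is why the two figures generally differ.

```python
from collections import Counter


def percent_agreement(coder_a, coder_b):
    """P = Na / (Na + Nd) * 100, with Na = agreements and Nd = disagreements."""
    if len(coder_a) != len(coder_b) or not coder_a:
        raise ValueError("both coders must label the same non-empty set of units")
    n_agree = sum(a == b for a, b in zip(coder_a, coder_b))
    n_disagree = len(coder_a) - n_agree
    return n_agree * 100 / (n_agree + n_disagree)


def cohens_kappa(coder_a, coder_b):
    """Cohen's kappa = (p_o - p_e) / (1 - p_e)."""
    if len(coder_a) != len(coder_b) or not coder_a:
        raise ValueError("both coders must label the same non-empty set of units")
    n = len(coder_a)
    # Observed agreement: share of units given the same code by both coders.
    p_o = sum(a == b for a, b in zip(coder_a, coder_b)) / n
    # Chance agreement: product of the two coders' marginal proportions, summed over codes.
    freq_a, freq_b = Counter(coder_a), Counter(coder_b)
    p_e = sum(freq_a[code] * freq_b[code] for code in freq_a) / (n * n)
    if p_e == 1:
        raise ValueError("kappa is undefined when chance agreement equals 1")
    return (p_o - p_e) / (1 - p_e)


# Hypothetical theme codes assigned by two coders to the same ten text segments.
coder_a = ["benefit", "benefit", "limitation", "recommendation", "benefit",
           "limitation", "benefit", "recommendation", "limitation", "benefit"]
coder_b = ["benefit", "benefit", "limitation", "recommendation", "benefit",
           "limitation", "benefit", "recommendation", "limitation", "limitation"]

print(f"Percent agreement: {percent_agreement(coder_a, coder_b):.2f}%")
print(f"Cohen's kappa: {cohens_kappa(coder_a, coder_b):.3f}")
```

With one disagreement in ten segments, this prints a percent agreement of 90.00% but a kappa of 0.844, because part of the raw agreement would be expected by chance alone.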

PRISMA flow diagram illustrating the selection process of included studies.

Synthesis of findings: The findings were then detailed further and synthesized within each theme to ensure coherence.

In summary, the screening process eliminated duplicate studies that were retrieved under different keywords but had identical content; the same articles were often returned both by the “e-assessment” and “e-evaluation” searches and by the “e-evaluation tools” and “e-assessment tools” searches. Of the 8,728 articles retrieved, 8,687 were excluded and 41 met the study criteria and were included in the review. Of the excluded articles, 3,108 fell outside the date range of the study, and the remaining 5,579 were excluded because they contained only theoretical information and no practical applications. The number of articles from each database and keyword search is shown in Figure 1.
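The screening counts in the paragraph above can be cross-checked with simple arithmetic. The sketch below uses only the reported figures and assumes, as the text describes, that each excluded record is counted under exactly one of the two exclusion reasons; the variable names are illustrative.

```python
# Records retrieved from ERIC, Scopus and ISI Web of Knowledge (as reported).
retrieved = 8728

# Exclusion reasons reported for the screening step; each excluded record is
# assumed to be counted under exactly one reason.
excluded_by_reason = {
    "outside December 2012 to January 2023": 3108,
    "theoretical only, no practical application": 5579,
}

total_excluded = sum(excluded_by_reason.values())
included = retrieved - total_excluded

print(f"Total excluded: {total_excluded}")
print(f"Included in synthesis: {included}")
```

The two exclusion counts sum to 8,687, which leaves exactly the 41 studies included in the synthesis.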

The validity and reliability of this meta-synthesis study depend on the validity and reliability of the individual studies included. To ensure the reliability of the meta-synthesis, coding was carried out separately by two different researchers and then compared. Any discrepancies in coding were discussed and resolved to create a unified coding framework based on common themes. Finally, data analysis was carried out by creating themes, organizing these themes and grouping them based on the coding. Their frequencies were then examined to gain further insights.

Results

To answer the question “What are the most commonly used tools in studies investigating e-assessment tools in education?”, the individual studies included in the analysis (k = 41) were coded within eight themes, as shown in Table 1. The coding results showed that e-portfolios (k = 10) and web-based assessment tools (k = 10) were the most frequently used e-assessment tools in the individual studies. These were followed by LMS (k = 9), Web 2.0 tools (k = 4), virtual reality environments (k = 3), mobile applications (k = 2), social networks (k = 2) and computer-assisted instruction programs (k = 1).

Findings on the Most Commonly Used Tools in Studies Investigating E-Assessment Tools in Education.

In order to answer the second research question, “What are the most commonly investigated dependent variables in studies investigating e-assessment tools in education?”, the 41 studies included in the review were coded and analyzed. As shown in Table 2, the most frequently examined dependent variable was automatic feedback (f = 9). This was followed by learning competence (f = 7), student motivation (f = 6), assessment of academic performance (f = 5), effectiveness of self-assessment (f = 4), use of ICT (f = 3), communication skills (f = 3), effectiveness of peer collaboration (f = 2), competence in e-portfolio creation (f = 2), literacy skills (f = 2), self-regulation skills (f = 2), critical thinking skills (f = 1), support of student autonomy (f = 1) and acceptance of online examinations (f = 1).
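The counts reported for the first two research questions behave differently when totaled, and a short Python sketch makes the difference visible. It uses only the figures given in the two paragraphs above; the dictionary names are illustrative. The tool counts (k) sum to the 41 studies, consistent with each study being coded under one tool, whereas the dependent-variable frequencies (f) sum to more than 41, which suggests that some studies were coded for more than one dependent variable.

```python
# Number of studies per e-assessment tool (k), as reported for Table 1.
tool_k = {
    "e-portfolios": 10,
    "web-based assessment tools": 10,
    "LMS": 9,
    "Web 2.0 tools": 4,
    "virtual reality environments": 3,
    "mobile applications": 2,
    "social networks": 2,
    "computer-assisted instruction programs": 1,
}

# Frequency of each dependent variable (f), as reported for Table 2.
dependent_variable_f = {
    "automatic feedback": 9,
    "learning competence": 7,
    "student motivation": 6,
    "assessment of academic performance": 5,
    "effectiveness of self-assessment": 4,
    "use of ICT": 3,
    "communication skills": 3,
    "effectiveness of peer collaboration": 2,
    "competence in e-portfolio creation": 2,
    "literacy skills": 2,
    "self-regulation skills": 2,
    "critical thinking skills": 1,
    "support of student autonomy": 1,
    "acceptance of online examinations": 1,
}

print("Sum of tool counts (k):", sum(tool_k.values()))
print("Sum of dependent-variable frequencies (f):", sum(dependent_variable_f.values()))
```

This prints 41 for the tool counts and 48 for the dependent-variable frequencies.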

Findings in Terms of the Most Commonly Investigated Dependent Variables in Studies Investigating E-Assessment Tools in Education.

To answer the question, “What are the advantages of e-assessment tools mentioned in studies investigating e-assessment tools in education?”, the 41 studies included in the research were analyzed, and the coding results related to the advantages are presented in Table 3. As Table 3 shows, the advantages of e-assessment tools are grouped under nine themes. The most frequently mentioned advantage is the “providing feedback” theme (f = 13).

Findings on the Advantages of E-Assessment Tools Mentioned in Studies Examining E-Assessment Tools in Teaching.

The theme of providing feedback was interpreted in different ways across studies. In study A9 it is described as “supporting the development of effective dialogues through peer feedback”, while in study A20 it is referred to as “constructive feedback from teachers and peers”. Studies A15, A19, A32, A36, A40 and A41 highlight the benefit of “providing immediate feedback.” In addition, studies A36, A40 and A41 emphasize “the speed of the e-assessment tool,” while study A15 highlights “fair grading.” Study A17 discusses “the effectiveness of the e-assessment tool in providing feedback,” A19 highlights its contribution to “self-regulation” and A32 mentions its role in “organizing the learning process.” Furthermore, in study A31 feedback is defined as “the feedback the teacher gives to the student,” in study A34 it is noted that “feedback provided through discussions and forums through mutual interaction” is beneficial and in study A38 the feedback feature of the e-assessment tool is praised for “its ability to personalize feedback.”

Another theme related to the benefits of e-assessment tools is time management. In studies A6, A15, A32, A36 and A38, time management is interpreted as “saving time by using e-assessment tools.” In addition, studies A27, A34 and A35 emphasize the benefit of allowing students to “use these tools at their convenience.”

In the context of academic assessment, studies A1, A5 and A15 point to the advantage of ensuring validity and reliability in measuring levels of knowledge. Study A18 notes that “e-portfolios are an effective way to assess student learning”, while study A22 emphasizes that “Web 2.0 tools help to assess the knowledge level of teacher candidates.” In addition, study A23 mentions that “a well-designed web-based assessment tool allows for easy measurement of reading comprehension skills.”

Another benefit of e-assessment tools relates to personal skills. Study A4 concluded that “e-portfolios facilitate education by providing coordination in learning,” while studies A10, A21, A22 and A37 identified contributions to “career development and personal growth.” Study A12 emphasized the improvement of “communication and archiving skills.” In addition, study A24 highlighted the benefit of e-assessment tools, stating that “participating in peer assessment and content analysis helps to develop critical thinking.”

The theme of self-regulation appeared in study A3, where it was found that e-assessment tools “help students to check their own learning”. Study A6 found that “e-portfolios facilitate learning management” and studies A25, A26 and A28 concluded that these tools help students to “increase self-regulation skills by reviewing their own progress.”

Individual tracking was mentioned in studies A1 and A31, with the benefit of “tracking student success.” Study A38 highlighted the benefit of “measuring personal tracking and feedback through the automatic marking feature of the e-assessment tool,” while study A39 emphasized that “the web-based assessment tool’s ability to identify individual deficiencies” was beneficial.

The final theme regarding the benefits of e-assessment tools, collaboration, was highlighted in studies A2, A6 and A13, where it was stated that e-portfolios facilitate collaboration through various activities. Study A29 stated that “the use of LMS enhances collaboration,” emphasizing the collaborative benefits of these tools.

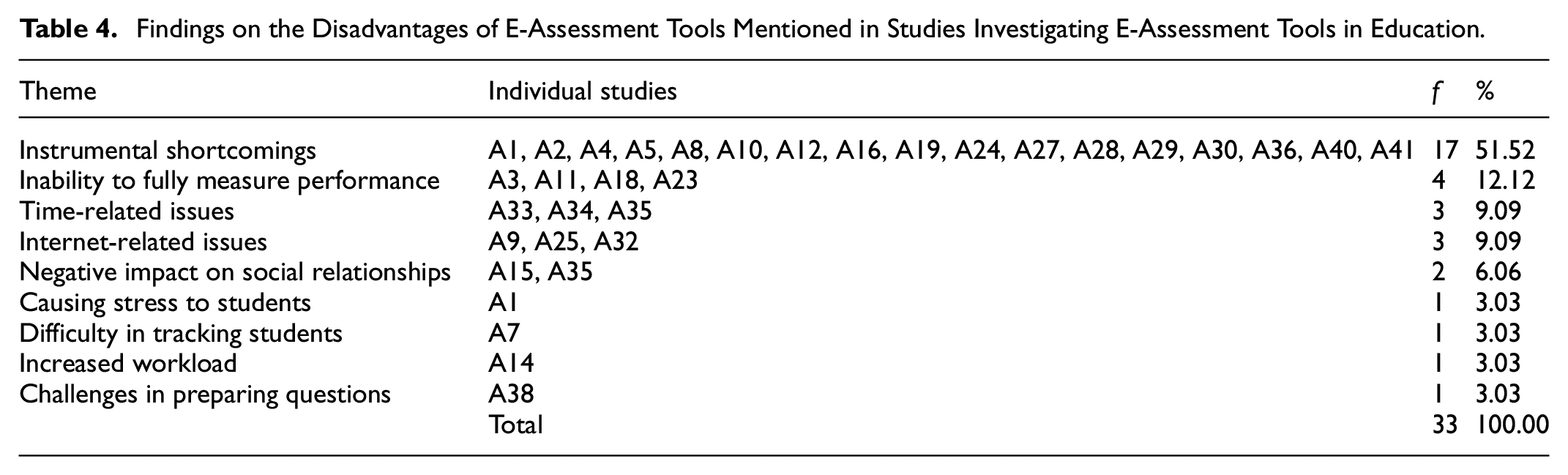

With regard to the fourth research question, “What are the disadvantages of e-assessment tools mentioned in studies investigating e-assessment tools in education?”, 11 studies were excluded from the analysis because they did not report any findings related to disadvantages. The remaining 30 studies, which provided relevant data, were included in the analysis. The findings regarding the disadvantages mentioned in these studies are presented in Table 4.

Findings on the Disadvantages of E-Assessment Tools Mentioned in Studies Investigating E-Assessment Tools in Education.

Table 4 shows that the disadvantages associated with the use of e-assessment tools in teaching can be categorized into nine different themes. All of these challenges relate to the practical application of the tools. The most frequently cited limitation is instrumental shortcomings (f = 17), followed by inability to fully measure performance (f = 4), time-related issues (f = 3), internet-related issues (f = 3), negative impact on social relationships (f = 2), causing stress to students (f = 1), difficulty in tracking students (f = 1), increased workload (f = 1), and challenges in preparing questions (f = 1).

In the findings on the disadvantages of e-assessment tools, the theme of instrumental shortcomings was mentioned in both studies A1 and A4, with “lack of explanations and guidelines for the use of e-portfolios” as the reason. In study A2, the disadvantage was identified as “the content of the e-portfolio not being consistent with the assessment.” Both studies A5 and A36 pointed out that “web-based assessment tools are only effective in measuring knowledge (recall) and lack the capacity to assess understanding, application, analysis, evaluation and creation.” Study A8 mentioned that “of the three features of e-portfolios (writing, sharing and discussion), only writing is used, which does not fully reflect students’ level of assessment.” Study A24 noted that “students only use the chat and blog features of the e-assessment tool, although it has many features.” Study A41 noted that “the web-based assessment tool only contains short quizzes,” which was seen as a limitation.

In studies A10 and A28, the inability to “personalize the assessment tool according to students’ characteristics and needs” was reported as a disadvantage. Studies A12 and A16 highlighted the “lack of representation of the real world” as another drawback. Study A27 mentioned the potential negative impact of “background noise during the assessment process,” while study A29 reported problems due to “synchronization issues and the limitation of only allowing 1MB file size for uploads.”

The theme of inability to fully measure performance was noted in all related studies (A3, A11, A18, A23) as “the inability of the assessment to fully measure performance in terms of knowledge and skills.” For example, in study A3 it was noted that although the students assessed by e-assessment achieved higher grades, the other group showed better overall performance.

Time-related issues were mentioned in study A33 as “students take too long to find answers, which prevents them from seeing all the questions.” Study A34 highlighted the issue of “long upload times for assignments” and study A35 highlighted the extended time needed for “students to complete the e-portfolio collaboratively with peers.”

Internet-related problems were highlighted in study A9, where “unreliable Internet connection” was mentioned as a disadvantage. Study A25 reported that “browser security permissions caused difficulties for students during assessments,” and study A32 mentioned the issue of “Internet connectivity not being available everywhere” as a challenge.

Under the theme of negative impact on social relationships, study A15 mentioned that “although the tool helped to ensure fair grading, students complained that some peers received undeservedly high grades.” Study A35 found that “peer feedback led to competition between students, which negatively affected social relationships.”

Finally, in study A1, students “experienced stress while creating e-portfolios,” while in study A7, “teachers struggled to monitor students due to their heavy workload.” Study A14 reported that “teachers’ workload increased,” which was also coded under different themes.
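The step of grouping codes into themes and counting their frequencies, described in the Method, can be sketched as a simple tally in Python. The mapping below includes only the subset of disadvantage codings that are unambiguously attributed to a theme in the paragraphs above, so it is not the full data behind Table 4, and the study-to-themes dictionary layout is an illustrative assumption rather than the authors’ codebook format.

```python
from collections import Counter

# Subset of study-level codings named in the text (study ID -> disadvantage themes).
codings = {
    "A1": ["instrumental shortcomings", "causing stress to students"],
    "A3": ["inability to fully measure performance"],
    "A4": ["instrumental shortcomings"],
    "A15": ["negative impact on social relationships"],
    "A25": ["internet-related issues"],
    "A32": ["internet-related issues"],
    "A33": ["time-related issues"],
    "A34": ["time-related issues"],
    "A35": ["time-related issues", "negative impact on social relationships"],
}

# Frequency f of each theme = number of times it was coded across studies.
theme_f = Counter(theme for themes in codings.values() for theme in themes)

for theme, f in theme_f.most_common():
    print(f"{theme}: f = {f}")
```

Because a single study (here A1 and A35) can contribute to several themes, the theme frequencies can add up to more than the number of studies, as they do in Table 4.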

For the fifth research question, “What are the suggestions for improvement of e-assessment tools mentioned in studies examining e-assessment tools in education?”, 20 studies were excluded from the analysis because they did not provide any suggestions. The remaining 21 studies, which did include recommendations, were analyzed, and the findings in relation to the suggestions are presented in Table 5.

Findings on the Suggestions for Improvement of E-Assessment Tools Mentioned in Studies Examining E-Assessment Tools in Education.

As Table 5 shows, suggestions for improving e-assessment tools used in teaching are grouped under five themes. The most common recommendation (f = 10) is to add different application plug-ins to e-assessment tools, that is, to incorporate additional features and plug-ins that provide an integrated working environment.

The theme “adding different application plug-ins to e-assessment tools” was coded from statements such as that in study A11, which suggested that “the use of new approaches such as augmented reality, 3D printing or artificial intelligence can transform teaching and learning. Competence areas arising from the increasing use of remote and virtual laboratories (especially in distance learning) may also extend DiKoLAN in the future.” In study A16, it was noted that “there are indicators that ESRI Story Map has some space for improvement. It should be enhanced by better utilizing the five cognitive processes (word selection, image selection, word editing, image editing, and integration).”

Study A17 recommended that “the system should allow users to upload images and audio while posting reflections in forums.” In study A28, it was mentioned that “iSELF needs the possibility of being embedded into Learning Management Systems.” Study A29 proposed that “future features to be added to the system include offline functionality, accessible vocabulary tools, group and resource sampling automation, ease of use, etc.” Study A32 noted that “iPads allowed only a limited number of students’ names to be visible on the screen. To overcome this issue, the teacher’s screen should have the capability to display more than 25 students.” Study A35 stated that “although electronic portfolios provide flexibility in giving and receiving feedback, there is still a need for human interaction in assessment. These two types of assessment could be combined.” Study A36 suggested that “since a task requiring physical examination, such as a clinical examination, cannot be reasonably performed, assessment could instead be done by demonstrating clinical technique on a simulated patient.” Study A37 recommended that “various methods of artificial intelligence could be used to prevent plagiarism in online examinations.” These recommendations reflect a wide range of technological improvements and additional features to improve the effectiveness and functionality of e-assessment tools.

The theme of integrating different types of assessment into e-assessment tools was expressed in several studies. In study A7 it was suggested that “adding tasks or types of tasks to the web-based assessment tool that help to stimulate students’ creativity would further support the development of writing skills.” In study A12 it was noted that “the web-based assessment tool should be designed to assess not only the product but also the process.”

Study A24 recommended that “the web-based assessment tool should be adapted to allow for project-based assessment,” while study A30 suggested that “the video-based learning tool should be designed to engage learners in the learning process through interactive activities.” Study A33 suggested that “while testing the assessment tool, it should simultaneously assess student learning.” Finally, study A38 recommended “adding games within the virtual reality environment to increase motivation.” These recommendations highlight the need for e-assessment tools to incorporate a variety of assessment methods to more effectively evaluate different aspects of student learning and engagement.

The theme “having different modes for e-assessment tools” was expressed in several studies. In study A9, it was recommended that “an e-portfolio should include a feature that directs users to multiple approaches.” Study A24 suggested that “while testing the assessment tool, it should also test students’ learning.” Study A29 proposed that “LMS should be designed to work offline as well.”

The final single-frequency theme was found in study A36, which stated that “an institution should consider subscribing to a preferred platform and, if possible, invest in a stable security system to protect the assessment process.” These recommendations emphasize the need for e-assessment tools to be versatile, offering multiple modes of operation and enhanced security to cater to different contexts and ensure the reliability of assessments.

Discussion

E-assessment, which refers to assessment processes conducted in digital environments, has been used increasingly in education in recent years. Numerous studies have shown that e-assessment tools improve student performance, accelerate feedback processes and strengthen the objectivity of the assessment process (Appiah & Van Tonder, 2018; Jiao, 2015; Shalatska et al., 2020; Stödberg, 2012; Tawafak et al., 2019). Although research suggests that e-assessment tools help to reduce teacher workload and enable students to better monitor their own learning processes (Appiah & Van Tonder, 2018), some studies have reported conflicting findings (Crisp, 2011; Jiao, 2015; Redecker, 2013). To use e-assessment tools more effectively, it is crucial to identify the reasons for these divergent findings. This can be achieved by systematically grouping and analyzing the tools, variables, advantages and disadvantages reported in the literature (Gupta et al., 2019).

To compare the results of e-assessment tools and analyze them using the meta-synthesis method, it is essential to first identify which e-assessment tools researchers have included in their studies. This step is crucial for determining which tools are most effective and widely used (Gikandi et al., 2011).

By critically engaging with conflicting findings, this study aims to offer a more nuanced understanding of when and how e-assessment tools are effective. Rather than assuming uniform benefits, it highlights the need for context-aware implementation and continuous evaluation of technological integration in assessment practices.

One notable finding of this meta-synthesis is the presence of contradictory evidence regarding the effectiveness of e-assessment tools. While many studies report enhanced student engagement, motivation, and learning outcomes associated with tools such as LMS-based quizzes, e-portfolios, and real-time feedback systems, others highlight significant challenges. For instance, some tools contribute to surface-level learning or passive participation, particularly when they are poorly aligned with instructional goals. These divergences may stem from contextual variables such as students’ digital literacy, institutional infrastructure, or pedagogical approach.

Additionally, while some research emphasizes the value of e-assessment in supporting learner autonomy and personalized learning, other studies question its capacity to adequately assess higher-order cognitive skills or foster critical thinking. These inconsistencies underscore the importance of evaluating not just the presence of digital assessment tools but also their alignment with pedagogical principles and instructional design quality.

In this study, a review of the individual studies included in the meta-synthesis shows that e-portfolio systems are among the most commonly used tools. E-portfolios not only assess student performance but also promote student-centered learning (Buyarski & Landis, 2014; Shalatska et al., 2020; Stödberg, 2012; Tosh et al., 2005). They facilitate the accumulation and assessment of student work in digital environments and speed up feedback processes, making them a preferred option (Abrami & Barrett, 2005; Barrett, 2011). Cambridge (2010) and Redecker and Johannessen (2013) further support this finding, noting that e-portfolios offer a broader educational perspective by helping students acquire 21st-century skills rather than focusing solely on academic achievement.

However, according to the synthesis, some researchers argue that e-portfolios can be excessively time-consuming and difficult to manage effectively, especially with large groups of students. It has also been noted that students sometimes view e-portfolios as just another task and thus fail to realize their potential as a meaningful learning tool (Birks et al., 2016). Furthermore, some studies mention that the technical infrastructure and digital literacy required for e-portfolios can be challenging, especially for students with limited access to digital resources (Tosh et al., 2005). Garrett (2011) also suggests that e-portfolios do not always outperform more traditional methods of assessing student achievement and that the subjectivity of qualitative assessment could affect their accuracy, which is another important consideration when using e-portfolios. For example, Xanthou’s (2013) study reported that students were less motivated due to the system’s technical complexity, and instructors struggled to provide individualized feedback due to time constraints. Similarly, Groshans et al. (2019) found that their digital story map-based assessment system did not fully address the five cognitive processing dimensions (e.g., word selection, image selection, organization, and integration) and required improvement. These findings indicate that the technical aspects of digital tool design can directly affect learning quality. In this context, elements such as technical infrastructure and user experience must be considered alongside pedagogical effects.

The widespread use of web-based assessment tools and LMSs in research is linked to the need to assess large groups of students quickly and efficiently, a conclusion supported by several studies (e.g., Shalatska et al., 2020). Systems such as Moodle and Blackboard are preferred because they combine many functions on a single platform, from sharing course materials and facilitating student interaction to managing assessment processes and organizing learning activities (Stödberg, 2012). However, the literature also includes studies reporting mixed results for these systems. For example, despite the versatile features offered by LMSs, some studies suggest that students feel like passive users of these systems and do not experience an active learning process (Crisp, 2011). In addition, the technical infrastructure and digital literacy these systems require may disadvantage students with low levels of digital literacy or limited access to technological resources (Dinçer, 2018b; Dinçer & Çengel-Schoville, 2022; Garrett, 2011). Therefore, while LMSs offer significant convenience in the teaching and assessment process, there is ongoing debate about whether they are an ideal solution for all students and teaching contexts.

The preference for Web 2.0 tools, particularly platforms such as Kahoot and Socrative, has been linked to their role in facilitating interactive and collaborative learning processes (Shalatska et al., 2020). These platforms encourage active student participation, making the assessment process more engaging and enjoyable, while also providing teachers with instant feedback that supports more interactive lessons (Appiah & Van Tonder, 2018). As a result, they have been endorsed for their ability to increase student engagement and make lessons more dynamic. However, some studies have raised concerns that the entertainment-focused nature of Web 2.0 tools may lead to superficial learning, with students focusing on short-term knowledge acquisition rather than deep learning (Greenhow et al., 2009). In addition, the effective use of these tools in the classroom depends on the available technical infrastructure and on teachers’ digital skills, which, as some studies report, may lead to inequalities between teachers and schools. Furthermore, research on their pedagogical alignment and impact on learning outcomes remains limited, so their effectiveness cannot be assumed across all educational contexts (Redecker, 2013).

In meta-synthesis studies, reporting the dependent variables most frequently examined in research allows for a broader evaluation of general findings and helps to identify new research topics (Dinçer, 2018a). This meta-synthesis found that e-assessment tools have different effects on different dependent variables. Variables such as automatic feedback, learning competence and student motivation were identified as the most frequently studied aspects of the impact of digital tools in education (Maier & Klotz, 2022). Most studies found that the ability of e-assessment tools to provide immediate feedback has a positive impact on student achievement (Crisp, 2011; Redecker, 2013; van der Kleij et al., 2012). A widely accepted finding is that automatic feedback increases student engagement in the learning process and improves performance (Jiao, 2015).

However, some studies have reported conflicting results regarding the effects of these tools. For example, a study by Gikandi et al. (2011) found that the feedback provided by e-assessment tools did not always lead to meaningful learning gains and was sometimes limited to superficial learning for certain students. Similarly, Boud and Molloy (2013) emphasized that the impact of feedback on learning is not solely determined by its frequency, but also by how students interpret and act upon it. Redecker (2013) also identified limitations, highlighting the challenges of pedagogical alignment and shortcomings in technical infrastructure that can limit the effectiveness of e-assessment tools. Furthermore, it has been observed that research on the assessment of academic performance often focuses on feedback-related issues, but does not sufficiently address the valid and reliable measurement of this performance. The lack of in-depth examination of academic performance in some studies suggests that this crucial variable requires further investigation (Garrett, 2011).

In summary, research has predominantly focused on individual learning outcomes such as feedback, student performance and motivation, while social learning processes such as peer collaboration have been insufficiently explored. E-assessment tools have been found to focus primarily on the assessment of individual performance and do not adequately support elements such as peer interaction and group work, which are essential aspects of collaborative learning (Redecker, 2013). This limitation has been attributed to the lack of appropriate methods for measuring peer collaboration, the complexity of collaborative assessment processes, and the challenges of structuring such processes effectively in digital environments.

The most emphasized theme in the research is feedback, which has been identified as one of the most important contributions of e-assessment tools in education. Feedback enables students to recognize their mistakes during the learning process and to improve their learning by correcting them. The speed and effectiveness of digital feedback systems indicate that these tools offer significant advantages over traditional feedback methods. McLaughlin et al. (2014) support this conclusion, emphasizing that automated feedback systems play a crucial role in improving students’ academic performance and that timely feedback in particular has a positive impact on learning. Such rapid feedback is also considered crucial in helping students reinforce their knowledge and correct their mistakes quickly.

However, the impact of feedback depends not only on the speed of delivery but also on how students interpret and apply it (Boud & Molloy, 2013). Therefore, the quality of the feedback and the active participation of the student are crucial to its effectiveness.

On the other hand, Entwistle (2010) warns that feedback systems in e-assessment tools can encourage superficial learning, leading students to focus solely on test results rather than on deeper knowledge acquisition. Entwistle suggests that “students who use feedback solely to improve short-term performance can damage long-term deep learning processes,” which highlights the need for careful design and mindful implementation of these systems.

Time management, identified as the second theme among the dependent variables, highlights the contribution of digital assessment tools to the efficient assessment of large groups of students. It was concluded that e-assessment tools save time for both teachers and students by speeding up evaluation processes and ensuring that feedback is delivered promptly. Haleem et al. (2022) found that these digital tools shorten the duration of examinations, allowing teachers to work more efficiently. In addition, several studies highlight that these systems allow for more detailed and comprehensive monitoring and assessment of student performance. Gikandi et al. (2011) also argue that online assessment tools allow teachers to track and analyze student performance more effectively.

One of the major benefits of digital assessment tools is their ability to help students develop self-regulation skills and motivation. Self-regulation has a significant impact on students’ ability to plan and manage their own learning processes (Zimmerman, 2002), and it was concluded that e-assessment tools are an effective means of enhancing these skills, giving students the opportunity to take a more active role in managing their learning. Perez Sanchez et al. (2022) also highlight that digital collaborative learning platforms not only improve students’ academic performance but also enhance their social skills, adding another layer of benefit to these tools.

In the meta-synthesis conducted to identify the disadvantages of e-assessment tools, the first theme, instrumental shortcomings, relates to the inadequacy of the technological infrastructure and the inability of e-assessment tools to fully meet current educational needs (Crisp, 2011). Teachers and students may find it difficult to use these tools effectively due to user interface challenges, technical issues, and functionality problems. Redecker (2013) also emphasizes that these shortcomings reduce the efficiency of the instructional process and limit the potential to fully assess student performance. Despite these drawbacks, some researchers argue that e-assessment tools are constantly evolving and that these technological limitations are likely to be resolved over time (Shalatska et al., 2020). As technology advances, it is expected that these tools will become more user-friendly and many of the current limitations will be addressed.

The second key theme, the inability to fully measure performance, reflects the limitations of e-assessment tools in assessing student learning in depth. These tools often focus on testing students’ surface-level knowledge and fail to adequately assess critical thinking skills and deeper learning (Entwistle, 2010). E-assessment tools often rely on limited question formats, such as multiple-choice questions, and struggle to fully assess higher-order skills such as critical thinking, creativity and problem-solving (Appiah & Van Tonder, 2018). Despite these limitations, some researchers argue that e-assessment tools that provide personalized feedback can help students manage their learning processes (Redecker, 2013). However, this does not eliminate the need to address the lack of flexibility and technological infrastructure of the tools.

Other significant disadvantages include time management issues and internet-related problems. Technical interruptions and connectivity issues during online exams, especially in regions with poor infrastructure, have been shown to negatively affect student performance (e.g., Jiao, 2015). Time management issues arise from factors such as students being unable to complete online exams on time or being interrupted by technical difficulties. In addition, when teachers work with large groups of students, time constraints are often associated with preparing questions and managing the assessment process, creating further challenges.

The synthesis found that in regions with poor internet infrastructure, the use of e-assessment tools poses significant challenges, sometimes to the point where assessments cannot be carried out at all. For example, Redecker (2013) noted that internet connectivity issues prevent students from accessing exams in distance education and disrupt the assessment process. This situation limits the effectiveness of distance education programs and makes it difficult to accurately measure student performance, leading to the conclusion that e-assessments should not be conducted under such conditions.

Although not often mentioned in the literature, the negative impact of e-assessment tools on social relationships and the stress they can cause emerged as a notable concern in the meta-synthesis. These findings suggest that this is a significant limitation that warrants further investigation. Wang and Woo (2007) argued that digital tools may not be able to replicate the social interactions found in face-to-face education and may reduce collaboration between students. This, in turn, could hinder the development of students’ social skills and weaken classroom dynamics, a conclusion supported by Shalatska et al. (2020). On the other hand, some studies (Appiah & Van Tonder, 2018) suggest that collaborative digital tools can enhance interactions among students, further emphasizing the need to explore this limitation in more depth.

Finally, the themes of increased workload and challenges in preparing questions highlight the impact of e-assessment tools on teachers. Boud and Molloy (2013) emphasize that if e-assessment tools are not properly structured, they can create additional workload for teachers. The process of preparing questions, managing feedback, and setting up the technical infrastructure may require teachers to invest more time and effort, potentially leading to significant challenges (Appiah & Van Tonder, 2018). This suggests that, without adequate support and optimization, these tools could become a source of considerable strain for educators.

In meta-synthesis studies, thematizing and grouping research recommendations provides important insights. In this meta-synthesis, the main recommendation for improving e-assessment tools was the addition of various application plug-ins. This theme is seen as an important step in increasing the functionality of e-assessment systems, making student assessment processes more flexible and inclusive. Adding different plug-ins, such as specialized modules for exams, assignments and projects, would allow the tools to be adapted to different learning needs and teaching strategies. This would allow teachers to use different approaches to assessment and help the tools accommodate students’ different learning styles (Wang & Woo, 2007).

However, alongside these improvements, technical challenges may arise. The software compatibility of different plug-ins and the need for technical support could be significant barriers, particularly for institutions with limited budgets or inadequate technological infrastructure. For example, Bennett and Lockyer (2004) note that technical difficulties in implementing plug-in software could create access problems for smaller schools, a major barrier to the widespread adoption of e-assessment tools. This suggests that while such advances have great potential, addressing technical and resource constraints is critical to their successful implementation.

Another important recommendation highlighted by the research is the integration of different types of assessment in e-assessment tools. According to the meta-synthesis, traditional digital assessment tools are often limited to multiple-choice and short-answer questions that primarily test students’ surface-level knowledge (Entwistle, 2010). However, integrating creative assessment methods that promote deep learning and critical thinking into digital platforms would allow for a broader range of student assessments (Shraim, 2019). For example, Appiah and Van Tonder (2018) argue that incorporating methods such as e-portfolios, open-ended questions and project-based assessments would enable the measurement of students’ analytical thinking, problem-solving and creative skills.

The integration of different types of assessment is seen as a crucial step in broadening the scope of e-assessment tools and more effectively assessing students’ complex skills. This view is supported by Shalatska et al. (2020), who note that such an expansion of digital tools would support both individual and collaborative learning processes. These innovations would not only improve students’ academic performance, but also foster critical thinking and creative problem-solving skills in the long term.

Another key recommendation is the addition of different modes to e-assessment tools. It is thought that the inclusion of adaptable modes, tailored to students’ individual learning speeds and needs, could make digital tools more flexible and student-centered (Zimmerman, 2002). For example, some students may prefer to receive feedback quickly, while others may need more time to reflect. By allowing for customization of both examination modes and feedback processes, these tools could optimize student motivation and learning outcomes (Redecker, 2013). Appiah and Van Tonder (2018) also highlight that this flexibility would be beneficial in meeting the needs of students with different learning styles, further supporting the value of making e-assessment tools more adaptable.

Another critical, but less frequently emphasized, area for improvement in e-assessment tools is security. Issues such as cheating, identity verification, and data security in digital exams and assessment tools pose serious challenges and may undermine students’ trust in the assessment process (Jiao, 2015). Crisp (2011) highlights that advanced security systems are essential to protect student data and ensure academic integrity. In this context, improving identity verification systems and enhancing exam security could make digital assessment tools more reliable.

However, developing such security systems can be costly, particularly for schools with limited budgets and technical infrastructure. Bennett and Lockyer (2004) point out that implementing security systems requires both financial resources and technical expertise, which may prevent some institutions from easily adopting these solutions. Therefore, the widespread implementation of digital security systems and the resolution of security vulnerabilities are likely to depend on improved technological infrastructure and greater budgetary support.

Finally, the issue of implementation challenges highlights a crucial factor to consider in terms of the practical applicability of the proposed improvements. The addition of different modes, the use of plug-in software, and the enhancement of security systems may place additional workloads on teachers and students. Shalatska et al. (2020) suggest that such innovations may be difficult to implement in regions with inadequate technological infrastructure, and McLaughlin et al. (2014) note that some educational institutions are unable to fully utilize digital assessment tools due to infrastructure limitations and budget constraints. In summary, these factors suggest that the effective implementation of e-assessment tools may not be equally feasible in all educational institutions, which is a significant limitation that could hinder their widespread adoption.

Conclusion

The aim of this study was to conduct a meta-synthesis of 41 individual studies that examined the use of e-assessment tools in education, providing a comprehensive assessment of their prevalence, impact, advantages and disadvantages. Based on the findings and literature review, it was concluded that e-assessment tools have an important place in education. Their effective use can improve student performance, motivation and learning processes.

This research has shown that the most commonly used e-assessment tools are e-portfolios, web-based assessment tools and LMS. E-portfolios are preferred because they promote student-centered learning, facilitate the accumulation of digital work and speed up feedback processes (Abrami & Barrett, 2005; Barrett, 2011). Web-based assessment tools and LMSs have been found to meet the need to assess large groups of students quickly and efficiently (Shalatska et al., 2020; Stödberg, 2012).

The most frequently investigated dependent variables in the studies were automatic feedback, learning competence and student motivation. Automatic feedback is widely acknowledged to increase students’ engagement in the learning process and improve their performance (Crisp, 2011; Jiao, 2015). Improvements in learning competence and motivation are associated with e-assessment tools that promote students’ self-regulation and self-assessment skills (Zimmerman, 2002).

One of the most important benefits of e-assessment tools is their ability to provide immediate feedback, allowing students to quickly recognize and correct their mistakes (McLaughlin & Yan, 2017). In addition, benefits such as time management, effective assessment of academic performance and personal skill development further enhance the value of e-assessment tools in education.

However, the main disadvantages identified include instrumental shortcomings, incomplete measurement of performance, and issues related to time and internet connectivity. Inadequate technical infrastructure and lack of digital literacy limit the effective use of these tools (Redecker, 2013). Furthermore, some tools do not fully assess deep learning and critical thinking skills, which is seen as a major limitation.

The analysis of the studies’ recommendations highlighted the importance of adding different application plug-ins and integrating different types of assessment into e-assessment tools. These suggestions aim to improve the functionality of the tools and make the student assessment processes more flexible and inclusive (Wang & Woo, 2007). In addition, the improvement of security systems and the inclusion of different modes in the tools were also recommended.

In summary, this meta-synthesis study highlights the importance and potential of e-assessment tools in education. The results show that these tools are effective in increasing student achievement, improving learning processes and simplifying assessment tasks for teachers. However, addressing disadvantages such as technical infrastructure limitations, incomplete performance measurement and potential negative effects on social relations remains crucial for their optimal use.

Educational institutions and teachers should carefully consider these advantages and disadvantages when selecting and using e-assessment tools, and take steps to improve their technical infrastructure and digital literacy. In addition, future research should explore the long-term effects of these tools, their use at different levels of education and their integration with pedagogical approaches.

Limitations

This study has several limitations that should be acknowledged. First, the study only considered publications from December 2012 to January 2023. While this timeframe was chosen to reflect recent trends and developments in e-assessment tools, earlier influential studies that could provide valuable historical context may have been excluded.

Second, the inclusion criteria restricted the languages of the studies to English and Turkish. While this decision ensured consistency and accurate interpretation in the analysis, it introduces potential language bias and limits the generalizability of the findings to other linguistic and cultural settings.

Third, the literature search was confined to three major databases: ERIC, Scopus, and ISI Web of Knowledge. While these are reputable sources, relying solely on them may have excluded relevant studies from other databases, local journals, or gray literature (such as theses, reports, and non-indexed conference papers), which could introduce publication bias.

Additionally, as a meta-synthesis based on qualitative thematic analysis, this study inherently involves interpretive judgment. Despite efforts to reduce subjectivity through a structured coding process, intercoder reliability checks, and expert validation, the synthesis process is still partly influenced by the researchers’ perspectives.

Suggestions

Based on the findings of this meta-synthesis, this study offers several practical suggestions and future research directions to improve the use and understanding of e-assessment tools in education.

First, digital platforms should integrate a wider range of assessment types. In addition to traditional quizzes and tests, these platforms should support performance-based assessments, peer evaluations, and project-based tasks. For example, tools such as Moodle could incorporate multimedia submissions, collaborative assignments, and self-reflection journals to promote comprehensive evaluation.

Second, e-portfolios should be redesigned to support multimodal and longitudinal learning evidence. In addition to written entries, they can incorporate audio-visual content, screencasts, and peer reviews. These features allow students to engage more deeply with content and enable educators to track developmental progress over time.

Third, institutions should invest in technical features such as automated feedback systems, plagiarism checkers, and learning analytics dashboards. Integrating natural language processing (NLP)-based plug-ins, for example, can provide real-time formative feedback, thereby enhancing learner support and engagement.

Fourth, ongoing professional development for educators is essential. Training programs should cover not only technical proficiency, but also instructional design, formative assessment strategies, and equity in digital evaluation. The effective use of e-assessment tools relies on informed pedagogical application.

Future Research

Based on the insights gained from this meta-synthesis, future research should address several key areas to improve the understanding and implementation of e-assessment tools.

First, future studies should examine the use of these tools in a wider range of educational settings, such as K–12 (primary and secondary), vocational, adult, and non-formal education. Much of the current literature focuses on higher education, leaving significant gaps in our understanding of how these tools function across different learner groups and instructional environments.

Second, researchers should examine the long-term effects of e-assessment tools on learning outcomes, including their influence on critical thinking, collaboration, self-regulation, and digital literacy. Longitudinal and mixed-methods studies are especially needed to capture these complex and evolving impacts.

Third, the role of sociocultural, infrastructural, and linguistic factors in shaping the adoption and effectiveness of e-assessment technologies requires further investigation. Cross-cultural and comparative research can shed light on how local contexts affect tool performance and user experience.

Fourth, future work should incorporate gray literature and sources in languages other than English and Turkish to create a more inclusive synthesis of global practices. This would reduce publication bias and elevate underrepresented voices in academic discourse on e-assessment.

Lastly, research should also examine emerging technologies such as AI-powered adaptive assessments, gamification, and learning analytics, focusing on their ethical implications, scalability, and pedagogical alignment.

Footnotes

Appendix

Individual Studies Included in the Meta-synthesis.

| Code | Individual study |
|---|---|
| A1 | Mason, R., & Williams, B. (2016). Using eportfolio’s to assess undergraduate paramedic students: a proof of concept evaluation. International Journal of Higher Education, 5(3), 146-154. https://doi.org/10.5430/ijhe.v5n3p146 |
| A2 | Buente, W., Winter, J. S., Kramer, H., Dalisay, F., Hill, Y. Z., & Buskirk, P. A. (2015). Program-based assessment of capstone eportfolios for a communication BA curriculum. International Journal of ePortfolio, 5(2), 169-179. |
| A3 | Domun, M., & Bahadur, G. K. (2014). Design and development of a self-assessment tool and investigating its effectiveness for e-learning. European Journal of Open, Distance and E-learning, 17(1), 1-25. |
| A4 | Domínguez, A. S., Morales, L., & Tarkovska, V. (2014). The role of eportfolios in finance studies: a cross-country study. Higher Education Studies, 4(1), 18-25. https://doi.org/10.5539/hes.v4n1p18 |
| A5 | Xanthou, M. (2013). An intelligent personalized e-assessment tool developed and implemented for a Greek lyric poetry undergraduate course. Electronic Journal of e-Learning, 11(2), 101-114. |
| A6 | Manoban, A. (2021). Project-based learning and e-portfolios for preservice teachers in Japanese language education. Journal of Education and Learning, 10(4), 40-50. https://doi.org/10.5539/jel.v10n4p40 |
| A7 | Sağlamel, H., & Çetinkaya, S. E. (2022). Students’ perceptions towards technology-supported collaborative peer feedback. Indonesian Journal of English Language Teaching and Applied Linguistics, 6(2), 189-206. https://doi.org/10.21093/ijeltal.v6i2.978 |
| A8 | Pitts, W., & Lehner-Quam, A. (2019). Engaging the Framework for Information Literacy for Higher Education as a lens for assessment in eportfolio social pedagogy ecosystem for science teacher education. International Journal of ePortfolio, 9(1), 29-44. |
| A9 | Meletiadou, E. (2021). Using padlets as e-portfolios to enhance undergraduate students’ writing skills and motivation. IAFOR Journal of Education, 9(5), 67-83. |
| A10 | Korhonen, A. M., Ruhalahti, S., Lakkala, M., & Veermans, M. (2020). Vocational student teachers’ self-reported experiences in creating eportfolios. International Journal for Research in Vocational Education and Training, 7(3), 278-301. https://doi.org/10.13152/IJRVET.7.3.2 |
| A11 | Kotzebue, L. V., Meier, M., Finger, A., Kremser, E., Huwer, J., Thoms, L. J., & Thyssen, C. (2021). The framework DiKoLAN (Digital competencies for teaching in science education) as basis for the self-assessment tool DiKoLAN-Grid. Education Sciences, 11(12), 775. https://doi.org/10.3390/educsci11120775 |
| A12 | Firmansyah, J., Suhandi, A., Setiawan, A., & Permanasari, A. (2022). Evaluation of virtual workspace laboratory: cloud communication and collaborative work on project-based laboratory. Journal on Efficiency and Responsibility in Education and Science, 15(3), 131-141. https://doi.org/10.7160/eriesj.2022.150301 |
| A13 | Torres, J. T., García-Planas, M. I., & Domínguez-García, S. (2016). The use of e-portfolio in a linear algebra course. Journal of Technology and Science Education, 6(1), 52-61. https://doi.org/10.3926/jotse.1811 |
| A14 | Mettiäinen, S. (2015). Electronic assessment and feedback tool in supervision of nursing students during clinical training. Electronic Journal of e-Learning, 13(1), 42-56. |
| A15 | Murray, J. A., & Boyd, S. (2015). A preliminary evaluation of using WebPA for online peer assessment of collaborative performance by groups of online distance learners. International Journal of E-Learning & Distance Education, 30, 112-124. |
| A16 | Groshans, G., Mikhailova, E., Post, C., Schlautman, M., Carbajales-Dale, P., & Payne, K. (2019). Digital story map learning for STEM disciplines. Education Sciences, 9(2), 75-98. https://doi.org/10.3390/educsci9020075 |
| A17 | Shemahonge, R., & Mtebe, J. (2018). Using a mobile application to support students in blended distance courses: A case of Mzumbe University in Tanzania. International Journal of Education and Development using ICT, 14(3), 167-188. |
| A18 | Buyarski, C. A., & Landis, C. M. (2014). Using an ePortfolio to assess the outcomes of a first-year seminar: Student narrative and authentic assessment. International Journal of ePortfolio, 4(1), 49-60. |
| A19 | Farrús, M., & Costa-jussà, M. R. (2013). Automatic evaluation for e-learning using latent semantic analysis: A use case. International Review of Research in Open and Distributed Learning, 14(1), 239-254. |
| A20 | Cohen, D., & Sasson, I. (2016). Online quizzes in a virtual learning environment as a tool for formative assessment. Journal of Technology and Science Education, 6(3), 188-208. https://doi.org/10.3926/jotse.217 |
| A21 | Abdallah, M., & Mansour, M. M. (2015). Virtual task-based situated language-learning with Second Life: Developing EFL pragmatic writing and technological self-efficacy. Arab World English Journal (AWEJ), Special Issue on CALL, (2), 150-182. https://doi.org/10.2139/ssrn.2843987 |
| A22 | Gökbulut, B. (2020). The effect of Mentimeter and Kahoot applications on university students’ e-learning. World Journal on Educational Technology: Current Issues, 12(2), 107-116. https://doi.org/10.18844/wjet.v12i2.4814 |
| A23 | Hui, K. S., Saeed, K. M., & Khemanuwong, T. (2020). Reading comprehension ability of future engineers in Thailand. MEXTESOL Journal, 44(4), 1-21. |
| A24 | Ćorić Samardžija, A., & Bubaš, G. (2014). The use of Elgg social networking tool for students’ project peer-review activity. In 8th International Conference on e-Learning 2014 (pp. 118-124). |
| A25 | Navarro, C., Delgado, I., & Calderón, M. G. (2019). Multimedia instructional unit for the approach of statistical topics in the high school diploma for adults program using the eXeLearning technological tool. Journal of Educational Psychology-Propositos y Representaciones, 7(2), 91-106. https://doi.org/10.20511/pyr2019.v7n2.229 |
| A26 | Lu, Z., Li, X., & Li, Z. (2015). AWE-based corrective feedback on developing EFL learners’ writing skill. In Critical CALL: Proceedings of the 2015 EUROCALL Conference (pp. 375-380). https://doi.org/10.14705/rpnet.2015.000361 |
| A27 | Maphosa, V., Dube, B., & Jita, T. (2020). A UTAUT evaluation of WhatsApp as a tool for lecture delivery during the COVID-19 lockdown at a Zimbabwean university. International Journal of Higher Education, 9(5), 84-93. |
| A28 | Theunissen, N., & Stubbé, H. (2014). iSELF: The development of an internet-tool for self-evaluation and learner feedback. Electronic Journal of e-Learning, 12(4), 313-325. |
| A29 | Paschalis, G. (2017). A compound LAMS-MOODLE environment to support collaborative project-based learning: A case study with the group investigation method. Turkish Online Journal of Distance Education, 18(2), 134-150. https://doi.org/10.17718/tojde.306565 |
| A30 | Vural, O. F. (2013). The impact of a question-embedded video-based learning tool on e-learning. Educational Sciences: Theory and Practice, 13(2), 1315-1323. |
| A31 | Román, P. E., Torres, J. E. O., Hernández, R. A. L., & Martínez, C. R. V. (2020). Entorno virtual e-evaluaciones como herramienta de gestión en grupos numerosos [E-assessment virtual environment as a management tool for large groups]. Vivat Academia, 151, 107-125. https://doi.org/10.15178/va.2020.151.107-125 |
| A32 | Franklin, R., & Smith, J. (2015). Practical assessment on the run – iPads as an effective mobile and paperless tool in physical education and teaching. Research in Learning Technology, 23, 27986. https://doi.org/10.3402/rlt.v23.27986 |
| A33 | Al-Azawei, A., Baiee, W. R., & Mohammed, M. A. (2019). Learners’ experience towards e-assessment tools: A comparative study on virtual reality and Moodle quiz. International Journal of Emerging Technologies in Learning, 14(5), 34-50. https://doi.org/10.3991/ijet.v14i05.9998 |
| A34 | Abdou, R. A. E. (2020). Effects of e-assessment tools in academic achievement and motivation towards learning among pre-service kindergarten teachers in Turaif. Amazonia Investiga, 9(28), 211-224. https://doi.org/10.34069/AI/2020.28.04.24 |
| A35 | Hunt, P., Leijen, Ä., & van der Schaaf, M. (2021). Automated feedback is nice and human presence makes it better: Teachers’ perceptions of feedback by means of an e-portfolio enhanced with learning analytics. Education Sciences, 11(6), 278. https://doi.org/10.3390/educsci11060278 |
| A36 | Tan, C. Y. A., Swe, K. M. M., & Poulsaeman, V. (2021). Online examination: a feasible alternative during COVID-19 lockdown. Quality Assurance in Education, 29(4), 550-557. https://doi.org/10.1108/QAE-09-2020-0112 |
| A37 | Ali, S., Hafeez, Y., Abbas, M. A., Aqib, M., & Nawaz, A. (2021). Enabling remote learning system for virtual personalized preferences during COVID-19 pandemic. Multimedia Tools and Applications, 80, 33329-33355. https://doi.org/10.1007/s11042-021-11414-w |
| A38 | Pearson, C., & Penna, N. (2023). Automated marking of longer computational questions in engineering subjects. Assessment & Evaluation in Higher Education, 48(7), 915-925. https://doi.org/10.1080/02602938.2022.2144801 |
| A39 | Kroeze, K. A., Van Den Berg, S. M., Veldkamp, B. P., & De Jong, T. (2021). Automated assessment of and feedback on concept maps during inquiry learning. IEEE Transactions on Learning Technologies, 14(4), 460-473. https://doi.org/10.1109/TLT.2021.3103331 |
| A40 | Barra, E., López-Pernas, S., Alonso, Á., Sánchez-Rada, J. F., Gordillo, A., & Quemada, J. (2020). Automated assessment in programming courses: A case study during the COVID-19 era. Sustainability, 12(18), 7451. https://doi.org/10.3390/su12187451 |
| A41 | Gordillo, A., Barra, E., & Quemada, J. (2014). Enhancing web-based learning resources with quizzes through an authoring tool and an audience response system. In 2014 IEEE Frontiers in Education Conference (FIE) Proceedings (pp. 1-8). |

Ethical Considerations

Consent to Participate

Not applicable.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.