Abstract

Artificial Intelligence (AI) education has become increasingly important as it is a key component of digital literacy that everyone should possess. However, this domain remains largely unexplored, especially at the primary school level. The purpose of this study is to examine whether combined unplugged and plugged-in activities can be more effective than solely plugged-in activities in enhancing AI literacy among primary school students. A repeated-measurement quasi-experimental study with a control group was conducted among 56 fourth-grade students. The intervention comprised three learning phases: in Phase 1, both the experimental group (EG) and control group (CG) received the same AI introduction lessons; in Phase 2, the EG engaged in unplugged activities and the CG engaged in plugged-in activities; and in Phase 3, both groups worked with plugged-in activities. We measured AI literacy development through an AI self-efficacy scale and a drawing assessment, which were administered after each phase. The results indicate that the inclusion of unplugged activities effectively improved students’ AI self-efficacy in a short period, and that mixed unplugged and plugged-in activities were beneficial for promoting students’ perception of AI. These findings offer practical implications for AI education in primary schools: educators need to strategically integrate both unplugged and plugged-in activities, optimizing their timing and sequence to maximize students’ AI learning performance.

Keywords

Introduction

Artificial Intelligence (AI) has become a key topic in our daily lives. AI applications such as facial recognition, service robots, and autonomous driving profoundly impact the way we work and live (Ali et al., 2019). AI is no longer necessary only for computer scientists; it has become a vital digital competency required by everyone (Ng et al., 2021b; Su et al., 2023). The demand for AI education is growing, and it is crucial for K-12 students to develop a comprehensive understanding of AI technologies at an early age (Chai et al., 2021; Lee & Kwon, 2024; Touretzky et al., 2019). Therefore, educators should not only pay attention to the “material tools” but also emphasize the development of AI literacy (UNESCO, 2022), which enables students to assess AI technologies and critically interact with AI systems (N. Wang & Lester, 2023).

In response to this demand, researchers have focused their attention on AI education (e.g., Dai, Lin, Liu, Dai, & Wang, 2024; Dai, Lin, Liu, & Wang, 2024; Henry et al., 2021; Vartiainen et al., 2021). The Association for the Advancement of Artificial Intelligence and the Computer Science Teachers Association (2019) proposed the “five big ideas” to explain essential AI concepts for K-12 students. Additionally, the International Society for Technology in Education (2022) provided a set of guidelines to assist teachers with AI instructional strategies and curriculum resources for all grade levels in K-12. Although teaching AI is most commonly carried out through plugged-in activities, educators also use other approaches such as unplugged activities (Liu & Zhong, 2024; Ng, Su et al., 2023; Yim & Su, 2025). Plugged-in activities rely on electronic devices and technological tools, while unplugged activities do not involve the use of electronic devices in the teaching process (Bell et al., 1998). However, there is insufficient research on how integrating these two approaches affects students’ AI literacy. This study aims to address this research gap by designing a series of mixed unplugged and plugged-in activities and evaluating their impact on primary school students’ AI literacy.

Literature Review

AI Literacy for K-12 Students

AI literacy is a set of skills that enable people to understand how AI works without the need to master a programing language (Rodríguez-García et al., 2020; Su et al., 2023). Long and Magerko (2020) define AI literacy as skills that enable individuals to evaluate AI technologies, interact with AI systems, and effectively utilize AI in everyday life scenarios. The AI for K-12 initiative has explored and refined the content of AI education for K-12 levels (Touretzky et al., 2019). They framed the guidelines, defining five big ideas in AI that every student should possess, highlighting essential AI concepts for each grade band (http://ai4k12.org). The Five Big Ideas include: (1) Perception, (2) Representation and Reasoning, (3) Learning, (4) Natural interaction, and (5) Societal impact. These concepts provide a strong foundation for research on AI literacy (Huang et al., 2024; Long & Magerko, 2020). However, more research is needed to focus on applying these five big ideas to design related AI curricula and evaluate students’ learning outcomes in AI (Yang et al., 2023).

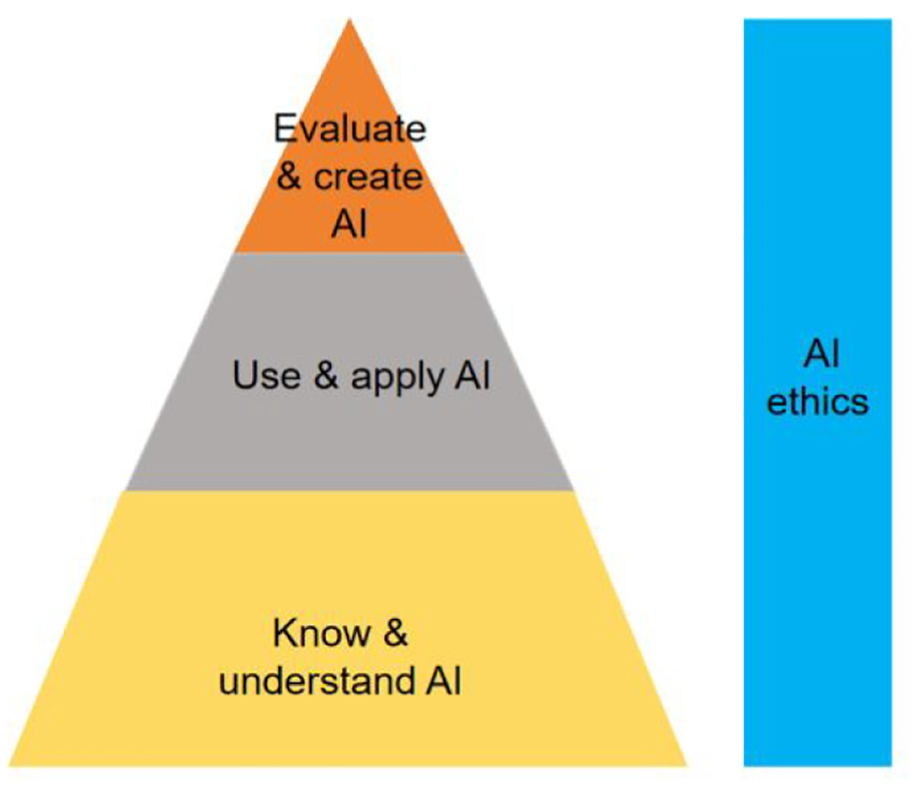

To design related AI literacy curricula, some researchers adopted the theory of Bloom’s Taxonomy (Ng et al., 2021b). For instance, Wong et al. (2020) suggested that students should develop AI literacy around three core dimensions: understanding AI concepts, applying AI technologies, and acknowledging AI ethics and safety. Ng et al. (2021a, 2021b) conceptualized AI literacy as encompassing four cognitive domains: knowing and understanding AI, using and applying AI, evaluating and creating AI, and AI ethics. These four cognitive domains of AI literacy offer a meaningful theoretical framework for developing AI curricula at primary schools.

Unplugged and Plugged-in Activities in AI Education

Unplugged activities utilize traditional teaching resources, such as paper and pencil, graphic aids, board games, and physical movement, to promote students’ understanding of computer science concepts (Bell et al., 2009; Brackmann et al., 2017). Without employing any digital tools or computational devices, these activities could be used to teach related computational thinking skills (Battal et al., 2021). For the specific pedagogy, researchers have applied debates, role-playing, and embodied learning to foster AI literacy in unplugged activities (Dai, Lin, Liu, & Wang, 2024; Henry et al., 2021; Ng, Su et al., 2023; Yang et al., 2023). These pedagogy-oriented unplugged activities not only enhanced students’ engagement but also encouraged them to actively construct and accumulate AI knowledge (Dai, Lin, Liu, Dai, & Wang, 2024; Liu & Zhong, 2024). Embodied cognition theory suggests that people’s abstract concepts are connected to sensorimotor processes, highlighting the importance of physical movement, sensory experiences, and emotional engagement in enhancing learning (Slepian & Ambady, 2014). In fact, many features of cognition are embedded and deeply dependent on the individual’s physical characteristics (Foglia & Wilson, 2013). Based on the unique role of physical movements and sensory experiences, applying embodied learning in unplugged activities could possibly help primary school students learn and understand AI.

Meanwhile, the plugged-in activities emphasize the use of electronic devices and technological tools, such as computers, programing software, online learning platforms, and robotics, to facilitate the learning process (Yim & Su, 2025). Educators have introduced a range of interactive learning tools, including robot programing kits (Rodríguez-García et al., 2020; Williams et al., 2019), Scratch (Dai, Lin, Liu, Dai, & Wang, 2024), teachable machine (Vartiainen et al., 2020), etc., to help students gain a deeper understanding of the principles behind AI technologies. Scratch is a widely used visual block-based programing language, which is more friendly for young learners as it provides “lower floor” programing (Maloney et al., 2010; Sáez-López et al., 2016). It helps primary school students understand foundational AI concepts without the need to learn complex programing languages (Zhang & Nouri, 2019). Web-based machine learning tools support students in developing an essential conceptual perception of AI by providing them with practical exercises (Shamir & Levin, 2022; Vartiainen et al., 2021). Taking the AI for Oceans activity (https://studio.code.org/s/oceans) as an example, it can help students learn basic principles of machine learning and recognize potential biases in AI models caused by the training sets (Lee & Kwon, 2024). Through these plugged-in activities, students could gain a more intuitive learning experience, which helps them understand how AI works.

These two kinds of activities are well documented for their effectiveness in AI education. However, the literature mainly presents them separately, without a deeper evaluation of their combined and comparative effectiveness in primary education. Therefore, this study explored the advantages of combining the two kinds of activities and the resulting pedagogical insights for AI education.

Assessments in AI Education

To assess students’ AI learning outcomes, researchers have used quantitative methods such as surveys and knowledge tests, as well as qualitative methods including artifact analysis, performance evaluation, interviews, and field observations (Yim & Su, 2025). For example, Ng, Wu et al. (2023) developed a self-reported questionnaire on AI literacy (AILQ) to evaluate the development of AI literacy of middle school students. Chiu (2021) and Dai, Lin, Liu, Dai, and Wang (2024) developed AI knowledge tests to evaluate the effectiveness of the AI curriculum. As for the qualitative assessment, Vartiainen et al. (2021) conducted interviews to explore how students teach machines to learn. Dai, Lin, Liu, Dai, and Wang (2024) used a drawing assessment to evaluate students’ understanding of AI. In sum, combining both quantitative and qualitative assessment approaches offers a comprehensive picture of students’ AI learning outcomes. Considering students’ cognitive and expressive developmental stages in this study, we used a self-reported questionnaire and a drawing assessment to evaluate their AI literacy.

Research Questions and Hypotheses

In short, there is still limited understanding of how combining the two approaches, compared with using solely plugged-in activities, affects AI literacy development. To address this gap, we developed an AI literacy course for fourth-grade students that involved both kinds of activities, and examined its impact on students’ AI self-efficacy and perception of AI. We also used mixed assessment methods to evaluate its effectiveness. The present study is guided by the following research questions:

Research question 1 (RQ1): How effective are the mixed unplugged and plugged-in activities, compared with solely plugged-in activities, in enhancing primary school students’ AI self-efficacy?

Research question 2 (RQ2): How effective are the mixed unplugged and plugged-in activities, compared with solely plugged-in activities, in promoting primary school students’ perception of AI?

Based on the connection between physical movements and cognition (Yang et al., 2023), we incorporated embodied learning into unplugged activities in the current study. This approach is particularly suitable for fourth-grade students, who typically exhibit more concrete thinking. On the other hand, empirical evidence supports the importance of active exploration in plugged-in activities (Dai, Lin, Liu, Dai, & Wang, 2024; Li & Song, 2019; Rodríguez-García et al., 2020). It is reasonable to anticipate that hands-on inquiry-based learning in plugged-in activities can also enhance students’ AI learning. Because the two kinds of activities have different effects, we expect the mixed unplugged and plugged-in activities to be more effective in enhancing students’ AI literacy. Specifically, we proposed the following hypotheses:

H1: The mixed unplugged and plugged-in activities are more effective than solely plugged-in activities in enhancing primary school students’ AI self-efficacy.

H2: The mixed unplugged and plugged-in activities are more effective than solely plugged-in activities in promoting primary school students’ perception of AI.

Method

Participants

The participants in this study were 56 fourth-grade students from a primary school in a city in western China. The students came from two intact (natural) classes, which were randomly assigned to the experimental and control groups. As shown in Table 1, the experimental group (EG), consisting of 28 students (15 boys, 13 girls), engaged in mixed activities, namely unplugged activities first and then plugged-in activities; the control group (CG), consisting of 28 students (14 boys, 14 girls), participated only in plugged-in activities throughout. None of the participants had prior exposure to AI-related instruction in their computer science courses. Both groups were taught by the same instructor, who had 6 years of teaching experience.

Table 1. Demographic Information of the Groups.

Research Design

This study employed a repeated-measurement quasi-experimental design with a control group. The intervention consisted of three learning phases, each ending with a corresponding test: a pre-test, mid-test, and post-test. During the span of a 9-week intervention, a total of nine lessons were implemented. Each lesson lasted 45 min. Figure 1 illustrates the implementation process.

Figure 1. The experimental flow chart.

In the first learning phase, both groups concurrently attended two lessons focused on introducing common AI applications in daily life. After completing this initial phase, a pre-test was administered. During the second phase, the intervention was applied: the EG participated in four lessons of unplugged activities, while the CG engaged in four lessons of plugged-in activities. At the end of this phase, a mid-test was conducted to evaluate the impact of different activities. In the third phase, both groups underwent three lessons of the same plugged-in activities, focusing on applying AI concepts through digital interfaces and practical applications. This phase concluded with a post-test to measure the overall learning outcomes.

AI Learning Activities

In this study, both unplugged and plugged-in activities were developed, enabling students to interact with AI technology through diverse approaches, thereby enhancing their AI literacy. All activities were closely connected to students’ real-life experiences.

Themes

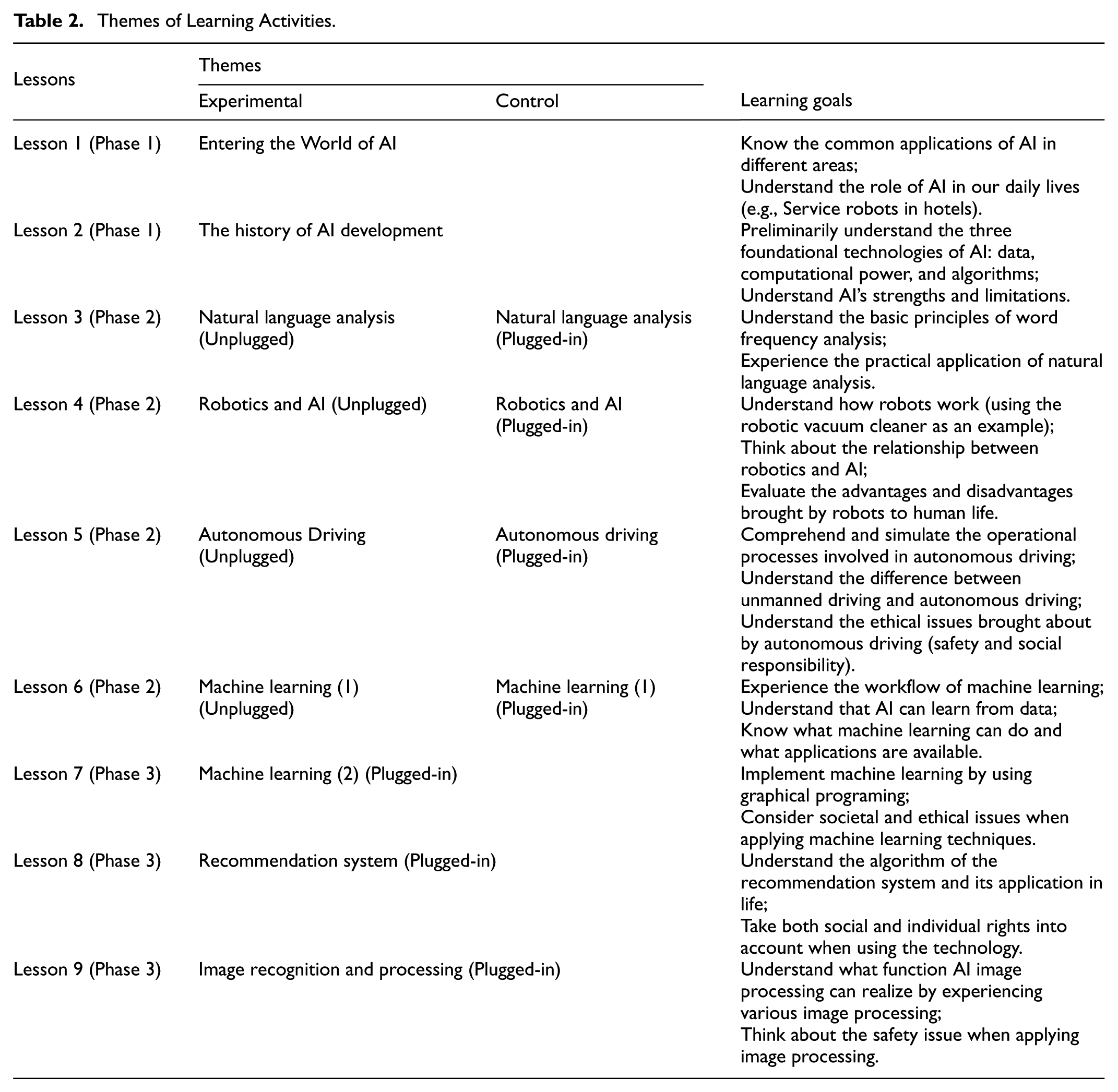

The AI course was designed around the most popular and widely used AI technical tools, such as machine learning, autonomous driving, AI robotics, and daily AI applications. Table 2 displays the specific themes and corresponding learning goals of the nine lessons.

Table 2. Themes of Learning Activities.

Activities

Based on the conceptual framework of AI literacy proposed by Ng, Wu et al. (2023; see Figure 2), the specific learning activities of each lesson were organized in the following sequence: “Introduction to AI technology - Understanding AI basic concepts - Learning the principles and implementation processes of AI technology - Applying AI technology - Reflecting on the impact of AI technology.”

Figure 2. The activity procedure of each lesson, adapted from Ng, Wu et al. (2023).

In the unplugged activities of Phase 2, we incorporated embodied learning into learning tasks, emphasizing the crucial role of interaction between the individual and the environment, as well as the importance of physical and sensory experiences in the learning process (Slepian & Ambady, 2014). For example, students in unplugged activities collaborated on a role-playing game to grasp the principles and processes of relevant AI technology (see Appendix 1). Students respectively simulated an AI chip, sensors, obstacles, and an autonomous car to understand how AI technology safely manages the car under various road conditions. Through physical movements and team collaboration, students directly experienced the workflow of autonomous driving, enhancing their understanding of how AI functions.

In the plugged-in activities of Phases 2 and 3, students engaged with AI using electronic devices. For example, students used a web-based machine-learning platform (AI for Oceans from code.org) to understand the machine-learning process (see Appendix 1). By interacting with the AI agent, students explored how the AI was trained to identify objects as either “a fish” or “not a fish.”

Research Instruments

AI Self-Efficacy Scale

Self-efficacy refers to an individual’s belief in their ability to successfully perform tasks or achieve goals (Bandura, 1977). It plays a significant role in influencing how people think, feel, motivate themselves, and behave. Students with high self-efficacy in educational contexts are more likely to actively engage in classroom activities that involve motivation, cognition, and behavior (Martin & Rimm-Kaufman, 2015). In the context of AI education, self-efficacy has been adapted to a specific type, AI self-efficacy, which refers to one’s general belief in one’s ability to use and interact with AI (Y. Y. Wang & Chuang, 2024) and can affect learners’ attitudes toward AI and their actual use of AI (Hong, 2022).

Ng, Wu et al. (2023) developed a self-reported questionnaire on AI literacy (AILQ) for middle school students across four dimensions: Affective, Behavioral, Cognitive, and Ethical (ABCE). In this study, an AI self-efficacy scale was adapted from the AILQ for primary school students. The adapted scale includes 39 items across the four dimensions of the ABCE framework (e.g., I am confident that I can do well in the AI related tasks). Students were asked to rate their agreement with each item on a five-point Likert scale from strongly disagree to strongly agree. The adapted version was tested among 251 primary school students. The results of the Confirmatory Factor Analysis show that χ²/df = 1.54, CFI = 0.92, TLI = 0.91, RMSEA = 0.05, and SRMR = 0.06, indicating that the adapted scale has a good structural fit. Furthermore, the standardized factor loadings of the items range from 0.56 to 0.84, all exceeding 0.50, suggesting that these indicators effectively measure their corresponding constructs. Additionally, the Average Variance Extracted values for the four dimensions range from 0.51 to 0.56, all surpassing 0.50, which further supports the scale’s convergent validity. The Composite Reliability values for the four dimensions range from 0.91 to 0.92, all above 0.60, indicating a high level of internal consistency among the constructs. In summary, these indicators demonstrate that the scale has good construct validity. The reliability of the AI literacy scale was also analyzed with Cronbach’s alpha, which was .95, above the .80 threshold.
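The Average Variance Extracted (AVE) and Composite Reliability (CR) values reported above are both functions of the standardized factor loadings. The following is a minimal sketch, assuming the conventional formulas (AVE as the mean squared loading; CR as the squared sum of loadings divided by that quantity plus the summed error variances). The paper does not state how it computed these indices, and the loadings below are hypothetical values chosen only to fall inside the reported 0.56 to 0.84 range; they are not the study's data.

```python
def average_variance_extracted(loadings):
    """AVE: mean of the squared standardized loadings of one construct."""
    return sum(l * l for l in loadings) / len(loadings)


def composite_reliability(loadings):
    """CR: (sum of loadings)^2 / ((sum of loadings)^2 + sum of error variances).

    For standardized loadings, the error variance of an item is 1 - loading^2.
    """
    total = sum(loadings)
    error = sum(1 - l * l for l in loadings)
    return total * total / (total * total + error)


# Hypothetical loadings for a single construct (NOT taken from the study).
example_loadings = [0.56, 0.62, 0.70, 0.75, 0.78, 0.80, 0.84]

ave = average_variance_extracted(example_loadings)
cr = composite_reliability(example_loadings)
print(f"AVE = {ave:.2f} (convergent validity threshold 0.50)")
print(f"CR  = {cr:.2f} (threshold 0.60)")
```

Because CR grows with the number of items at a fixed loading level, a construct with more items than this seven-item example would yield a higher CR for similar loadings.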

Drawing Assessment

We conducted a drawing assessment in the three tests to explore the changes in students’ perception of AI. Drawing assessment is a tool that can represent students’ understanding of scientific concepts (Carey, 2000). It is widely used in science education to evaluate students’ understanding of abstract concepts, especially for young learners with limited language skills (Chang et al., 2020; Dai, Lin, Liu, Dai, & Wang, 2024). In this research, students were asked to draw a picture in response to the guiding questions: “What do you think artificial intelligence is?” and “What comes to your mind when mentioning artificial intelligence?” The teacher encouraged them to use written words to explain their drawings. Figure 3 displays examples of students’ drawings.

Figure 3. Student drawings collected from the pre- and post-tests.

We coded the students’ drawings to identify the level of their perception of AI. The Five Big Ideas and their guidelines (https://ai4k12.org) were used to categorize the AI concepts depicted in the drawings. The students’ drawings were then analyzed to identify which parts reflected each big idea. The level of each student’s perception of these big ideas was evaluated using a four-level coding system (see Table 3), adapted from the five-level code list of Dai, Lin, Liu, Dai, and Wang (2024). The four levels were no awareness, awareness, partial scientific fragments, and scientific understanding, scored from 1 to 4. First, two researchers independently coded 15 student drawings, achieving an initial agreement rate of 86.67%. After discussing and resolving the disagreements, they independently coded another 20 drawings, reaching an agreement rate of 95.00%. Following further discussion, all remaining disagreements were resolved, leading to 100% agreement. Subsequently, all drawings were coded according to the finalized criteria.

Table 3. The Four-Level Code List and Criteria.
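The agreement rates reported for the two coding rounds can be illustrated with a short sketch. It assumes the rate is simple percent agreement (the share of drawings to which both coders gave the same level), which matches the reported figures arithmetically: 13 of 15 is 86.67% and 19 of 20 is 95.00%. The code lists below are hypothetical and are not the researchers' actual codes.

```python
def percent_agreement(codes_a, codes_b):
    """Share of items (in percent) that two coders scored identically."""
    if len(codes_a) != len(codes_b):
        raise ValueError("Both coders must rate the same number of items")
    matches = sum(a == b for a, b in zip(codes_a, codes_b))
    return 100.0 * matches / len(codes_a)


# Hypothetical level codes (1-4) for 15 drawings; NOT the study's data.
coder_a = [1, 2, 3, 4, 2, 3, 1, 2, 4, 3, 2, 1, 3, 4, 2]
coder_b = list(coder_a)
coder_b[4] = 3   # first disagreement
coder_b[11] = 2  # second disagreement

print(f"Round 1 agreement: {percent_agreement(coder_a, coder_b):.2f}%")
```

Percent agreement does not correct for chance agreement; a chance-corrected index such as Cohen's kappa would be a stricter alternative, but the paper does not report one.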

Results

In this study, the effectiveness of mixed unplugged and plugged-in activities versus solely plugged-in activities was assessed primarily through the analysis of test scores. Given that the test data did not follow a normal distribution, non-parametric tests were employed, including the Mann-Whitney U test for independent samples and the Wilcoxon Signed-Rank Test for paired samples.
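Both tests named above work on ranks rather than raw scores, which is why they do not require normally distributed data. The following pure-Python sketch shows how the two test statistics are formed, assuming average ranks for ties and the classic convention of dropping zero differences in the signed-rank test. The paper does not say which software or tie-handling options were used, and the scores below are hypothetical.

```python
def midranks(values):
    """Rank values from 1..n, giving tied values the average of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1  # average of the 1-based positions i+1 .. j+1
        for k in range(i, j + 1):
            ranks[order[k]] = avg
        i = j + 1
    return ranks


def mann_whitney_u(group_a, group_b):
    """Return (U_a, U_b) for two independent samples."""
    ranks = midranks(list(group_a) + list(group_b))
    n_a, n_b = len(group_a), len(group_b)
    u_a = sum(ranks[:n_a]) - n_a * (n_a + 1) / 2
    return u_a, n_a * n_b - u_a


def wilcoxon_signed_rank(before, after):
    """Return (W_plus, W_minus) for paired samples, dropping zero differences."""
    diffs = [y - x for x, y in zip(before, after) if y != x]
    ranks = midranks([abs(d) for d in diffs])
    w_plus = sum(r for r, d in zip(ranks, diffs) if d > 0)
    w_minus = sum(r for r, d in zip(ranks, diffs) if d < 0)
    return w_plus, w_minus


# Hypothetical integer test scores for illustration only (NOT the study's data).
eg_scores = [38, 41, 35, 44, 39]
cg_scores = [36, 39, 32, 40, 37]
print("Mann-Whitney (U_EG, U_CG):", mann_whitney_u(eg_scores, cg_scores))
print("Wilcoxon (W+, W-):", wilcoxon_signed_rank(cg_scores, eg_scores))
```

In practice a statistics package would also supply the p-value; this sketch stops at the statistics to keep the ranking logic visible.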

RQ1: Impact on AI Self-Efficacy

Difference Between Groups

Table 4 displays the mean scores and standard deviations for AI self-efficacy from the scale’s tests. Overall, the results of the Mann-Whitney U test indicated there was no significant difference in the pre-test between the two groups (U = 373.00, p = .755, d = 0.08), as well as no significant difference in the mid-test (U = 336.00, p = .358, d = 0.25) or post-test (U = 312.00, p = .189, d = 0.36). While the mean scores of the EG in the pre-test and mid-test were slightly higher than those of CG, the score of the EG in the post-test was slightly lower than that of the CG.

Table 4. AI Self-Efficacy Average Result.
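The paper does not state how the effect sizes d were obtained from the Mann-Whitney U values. One common route, sketched below as an assumption, converts U to Z with the normal approximation (without tie or continuity correction), then to r = |Z| / sqrt(N), then to d = 2r / sqrt(1 - r^2). With 28 students per group, this route gives the same two-decimal d values as those reported for the three self-efficacy comparisons.

```python
import math


def u_to_z(u, n1, n2):
    """Normal approximation of U (assumes no tie or continuity correction)."""
    mean_u = n1 * n2 / 2
    sd_u = math.sqrt(n1 * n2 * (n1 + n2 + 1) / 12)
    return (u - mean_u) / sd_u


def effect_size_d(z, n_total):
    """Convert Z to r = |Z| / sqrt(N), then to d = 2r / sqrt(1 - r^2)."""
    r = abs(z) / math.sqrt(n_total)
    return 2 * r / math.sqrt(1 - r * r)


# U values as reported in the text for the AI self-efficacy comparisons.
reported_u = {"pre-test": 373.0, "mid-test": 336.0, "post-test": 312.0}

for test, u in reported_u.items():
    z = u_to_z(u, 28, 28)
    d = effect_size_d(z, 56)
    print(f"{test}: U = {u:.2f}, Z = {z:.2f}, d = {d:.2f}")
```

All three effects are small, which is in line with the non-significant between-group differences in self-efficacy.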

Changes Within Each Group

Table 5 shows the changes in scores for the pre-mid, mid-post, and pre-post tests in AI self-efficacy within each group. The results of the Wilcoxon Signed-Rank test revealed that the EG’s overall mid-test score was significantly higher than its pre-test score (Z = 2.09, p = .037, d = 0.86), as was its score on the construct of Ethics (Z = 2.03, p = .042, d = 0.83). These results indicated that the unplugged learning activities significantly improved students’ AI self-efficacy, particularly in the ethics dimension. However, for the EG, the post-test overall score was slightly lower than the mid-test score, although the decrease did not reach significance (Z = −1.89, p = .059, d = −0.76). In contrast, for the CG, the results showed no significant increase in the overall scores from the pre-test to the mid-test (Z = 1.50, p = .136, d = 0.59), nor from mid-test to post-test (Z = 0.89, p = .374, d = 0.34).

Table 5. AI Self-Efficacy Average Differences.

*p < .05. **p < .01.

In addition, for the EG, no significant increase was observed from the pre-test to post-test scores, indicating that students’ AI self-efficacy did not significantly increase following the mixed unplugged and plugged-in activities (Z = 0.31, p = .758, d = 0.11). Conversely, for the CG, the results showed a significant increase in AI self-efficacy from the pre-test to the post-test (Z = 3.11, p = .002, d = 1.45), as well as in the constructs of Affect (Z = 2.91, p = .004, d = 1.32), Behavior (Z = 2.44, p = .015, d = 1.04), and Cognition (Z = 3.18, p = .001, d = 1.50).
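For the within-group comparisons, the reported Z, p, and d values can be related through a short calculation. This is a sketch under assumptions the paper does not state: the two-sided p is taken from the standard normal distribution, and d is derived from Z via r = Z / sqrt(n) with n = 28 students per group, followed by d = 2r / sqrt(1 - r^2). Because the published Z values are rounded, results can differ from the reported ones in the last digit.

```python
import math


def two_sided_p(z):
    """Two-sided p-value of a standard normal Z statistic."""
    return math.erfc(abs(z) / math.sqrt(2))


def z_to_d(z, n):
    """Signed effect size: r = Z / sqrt(n), then d = 2r / sqrt(1 - r^2)."""
    r = z / math.sqrt(n)
    return 2 * r / math.sqrt(1 - r * r)


# Z values as reported in the text for overall AI self-efficacy scores.
reported_z = {
    "EG pre to mid": 2.09,
    "EG mid to post": -1.89,
    "CG pre to post": 3.11,
}

for label, z in reported_z.items():
    print(f"{label}: Z = {z:.2f}, p = {two_sided_p(z):.3f}, d = {z_to_d(z, 28):.2f}")
```

Under this conversion d grows quickly as |Z| approaches sqrt(n), which explains why moderately large Z values in a group of 28 translate into d values above 1.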

RQ2: Impact on Students’ Perception of AI

Difference Between Groups

As shown in Table 6, the descriptive statistics and results of the Mann-Whitney U test revealed no statistically significant difference between the EG and CG in perception of AI in the pre-test (U = 382.00, p = .863, d = 0.04). Similarly, no significant difference was found between the groups in the mid-test (U = 321.00, p = .240, d = 0.32). However, in the post-test, the EG’s overall drawing score was significantly higher than that of the CG (U = 240.50, p = .012, d = 0.70), suggesting that the mixed unplugged and plugged-in activities were more effective in enhancing students’ perceptions of AI than the activities involving only plugged-in methods.

Table 6. Comparative Analysis of Drawing Scores.

*p < .05.

Changes Within Each Group

Table 7 illustrates the within-group average changes in drawing scores from the pre-test to mid-test, mid-test to post-test, and pre-test to post-test. The results of the Wilcoxon Signed-Rank test showed statistically significant increases from the pre-test to the mid-test in both the EG (Z = 3.57, p < .001, d = 1.83) and the CG (Z = 3.01, p = .003, d = 1.38). For the EG, significant improvements were also found in the constructs of “Representation and Reasoning,” “Learning,” and “Natural Interaction.”

Table 7. Average Differences in Drawing Scores.

*p < .05. **p < .01.

Additionally, a statistically significant increase in drawing scores from the mid-test to the post-test was observed only in the EG (Z = 2.90, p = .004, d = 1.31). This indicates that, despite both groups engaging in the same plugged-in activities during the third phase of the learning activities, the EG students made more significant progress than those in the CG. For the EG, significant improvements were also found in the constructs of “Perception” and “Societal impact.”

Furthermore, the results showed significant increases in drawing scores from the pre-test to the post-test for the EG (Z = 4.56, p < .001, d = 3.40) and the CG (Z = 3.46, p = .001, d = 1.73). For the EG, the results demonstrated significant improvements across all the constructs; for the CG, the constructs of “Natural interaction” (Z = 0.78, p = .439, d = 0.30) and “Societal impact” (Z = 1.93, p = .053, d = 0.78) did not significantly improve.

Discussion

This study aimed to explore and compare the effectiveness of mixed unplugged and plugged-in activities with solely plugged-in activities in enhancing AI literacy among primary school students. To examine the effectiveness, researchers conducted the pre-test, mid-test, and post-test to measure fourth-grade students’ AI self-efficacy and their perception of AI.

The Impact of Different Types of Activities on AI Self-Efficacy

Regarding AI self-efficacy, the EG showed a significant improvement after participating in the 4-week unplugged activities in Phase 2. This result suggests that unplugged activities in AI literacy education can effectively increase primary school students’ AI self-efficacy. It is consistent with previous findings by Hermans and Aivaloglou (2017) that students tended to show higher self-efficacy when they began with unplugged activities. Unplugged activities like game-based learning have been shown to enhance students’ interest, motivation, and self-confidence (Ma et al., 2022). These factors likely help explain the significant improvement in AI self-efficacy in the EG. In unplugged activities, students became more actively involved and confident in their learning tasks (Hermans & Aivaloglou, 2017). The embodied and collaborative learning embedded in the unplugged activities thus appears to have been beneficial for fourth-grade students’ AI self-efficacy.

The CG demonstrated a significant increase in AI self-efficacy after participating in the 7-week plugged-in activities in Phases 2 and 3. The result confirms previous research (Ma et al., 2021) in related areas of computer science education, which suggested that the plugged-in activities within problem-solving contexts promoted students’ computational thinking self-efficacy. Younger students gained a richer visual learning experience by using technical tools in plugged-in activities, which helped meet their needs (Long & Magerko, 2020). In addition, the plugged-in activities provided more hands-on learning experiences and timely feedback (Thuneberg et al., 2018). Therefore, combining hands-on learning experiences with technology in plugged-in activities could possibly enhance students’ AI self-efficacy.

However, inconsistent with our initial hypothesis, the EG did not experience a significant increase in AI self-efficacy between the pre- and post-test after participating in the mixed unplugged and plugged-in activities. Specifically, the EG showed a significant improvement after the 4-week unplugged activities, followed by a decline after the 3-week plugged-in activities. This finding stands in contrast with a study by del Olmo-Muñoz et al. (2020), which concluded that unplugged activities followed by plugged-in activities were beneficial for students’ motivation. In the current study, the plugged-in activities involved complex tasks such as block-based programing. These tasks posed a challenge for the EG students, who had not been exposed to such activities in Phase 2, as they transitioned from unplugged to plugged-in activities. This may explain the observed decrease in the EG students’ AI self-efficacy.

The Impact of Different Types of Activities on Perception of AI

Regarding the students’ perception of AI, both the EG and CG experienced significant increases in drawing scores between the pre- and post-test. However, the EG showed a significantly higher overall drawing score than the CG in the post-test. Additionally, the EG demonstrated significant improvements across all “five big ideas” following the entire intervention. These results align with our hypothesis that mixed unplugged and plugged-in activities are more effective than solely plugged-in activities in promoting primary school students’ perception of AI. The EG’s significantly better performance emphasizes the role of physical movement and sensory experiences in learning (Abrahamson et al., 2020; Slepian & Ambady, 2014; Wilson & Foglia, 2011). Meanwhile, plugged-in activities, especially web-based machine learning, provided opportunities for hands-on, inquiry-based exploration (Thuneberg et al., 2018). Since embodied learning in unplugged activities and hands-on inquiry-based learning in plugged-in activities differ in their focus (Yang et al., 2023), these differences might result in varied AI learning outcomes. This leads us to infer that by integrating both types of activities, students in the EG could gain more interactive and comprehensive learning experiences, consequently enhancing their perception of AI. These findings suggest that it is more appropriate to introduce unplugged activities first in AI literacy education. This sequence may help students build a strong foundational understanding of AI concepts.

Conclusion, Implication, and Limitations

This study examined the effectiveness of mixed unplugged and plugged-in activities, compared with solely plugged-in activities, in improving primary school students’ AI self-efficacy and perception of AI. Using a repeated-measurement quasi-experimental design, we found that: (a) the unplugged activities significantly increased students’ AI self-efficacy over a 4-week learning period; however, when followed by the plugged-in activities, they did not yield a significant overall gain in students’ AI self-efficacy; and (b) the mixed unplugged and plugged-in activities were more effective than the solely plugged-in activities in improving students’ perception of AI.

These findings have practical implications for AI education in primary schools and point to the potential of combining unplugged and plugged-in activities to promote students’ AI literacy. This study provides evidence and support for integrating embodied learning into unplugged activities, which positively influenced students’ AI self-efficacy and perception of AI. Therefore, educators are encouraged to explore how to incorporate the Five Big Ideas to design high-quality unplugged activities in AI education and effectively integrate them with plugged-in activities to facilitate students’ AI learning performance.

There were some limitations in this study. First, we implemented the research with a limited sample size from a single primary school setting, which may affect the generalizability of the findings. The small sample size also limits the statistical power of the study, potentially affecting the reliability of the conclusions drawn. Future research should aim to expand the sample size, ideally across multiple grades, schools, and districts, to improve the representativeness and robustness of the findings. Second, this study only focused on the short-term impact of the mixed activities on learning outcomes, without examining the long-term impact. Future research should explore the sustained effects after a longer period. Third, the learning outcomes of the AI course in this study were measured using an AI self-efficacy scale and a drawing assessment rather than knowledge tests and artifacts assessment. These two approaches we adopted were somewhat subjective. Future studies could focus on developing age-appropriate and more objective assessment tools to accurately measure the development of students’ AI literacy.

Footnotes

Appendix 1

Following are two cases of the designed activities:

Ethical Considerations

The authors obtained approval from the Ethics Committee of Shanxi Normal University before commencing their research.

Consent to Participate

All participants willingly volunteered and provided written informed consent to participate in the study. They were aware of their right to withdraw from the experiment at any point. Participants were assigned numerical identifiers instead of using their names to ensure confidentiality. The collected data were only used for research purposes.

Author Contributions

Jie Ruan: Conceptualization, Methodology, Data curation, Formal analysis, Writing—original draft, Writing—review & editing. Hongliang Ma: Conceptualization, Methodology, Resources, Visualization, Writing—review & editing, Supervision. Junmei Sun: Conceptualization, Methodology, Data curation, Writing—review & editing. Haichuan Chu: Conceptualization, Methodology, Investigation, Data curation. Bin Jing: Data curation, Writing—review & editing. Yuxi Zhang: Writing—review & editing.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work is supported by the National Social Science Fund of China [BCA240056].

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

The data that support the findings of this study are available on request from the corresponding author.