Abstract

This research explores how the social development of artificial intelligence (AISD) impacts its social acceptance (SCA) through both social trust in AI technology (AIST) and social ethical perception of AI technology (AIEP). The results show that AIST mediates the relation between AISD and SCA, while AIEP moderates the relation between AIST and SCA. These findings indicate that enhancing technological transparency, protecting data privacy, and taking ethical issues into account are all critical to improving SCA when AI technology is promoted. By building and verifying a holistic model that encompasses direct impacts, mediation, moderation, and moderated mediation, this paper offers insights into the social acceptance mechanism for AI technology and provides an empirical basis and theoretical support for the development and effective promotion of AI technology.

Introduction

With the rapid development and broad application of artificial intelligence (AI), social trust in AI technology (AIST), social ethical perceptions of AI technology (AIEP), and social acceptance of the development of AI technology (SCA) have all become a focus of academic and industrial circles. AI technology is applied in many fields, such as healthcare (Apell and Eriksson, 2023), education (Adıgüzel et al., 2023), and financial services (Rahman et al., 2023). The use of these technologies has introduced a remarkable social transition, accompanied by moral and ethical challenges and risks, such as privacy infringement, data bias, data-driven discrimination (Jin, 2018), and unemployment, that further shape how society accepts the social development of AI technology (AISD). Therefore, to broaden the application of AI technology, it is important to explore AI ethics and the social acceptance of AI and to understand the effects of trust as a mediator and ethical perceptions as a moderated mediator.

Moderated mediation captures the complexity of variable relationships by illustrating how a moderating variable can alter the strength or significance of the mediation pathway. Specifically, the moderator may influence the relationship between the independent variable and the mediator or the link between the mediator and the dependent variable. As a result, the indirect effect may vary across different conditions, reflecting the contextual dependencies of the mediated relationship.

To probe the relation between AISD and SCA, this study explores how trust and ethical perceptions shape the dynamic interaction between AISD and SCA as a mediator (mediating variable) and a moderated mediator, respectively. Although the literature has explored AISD and SCA (e.g., Adıgüzel et al., 2023; Apell and Eriksson, 2023; Vellido, 2019), the respective roles of trust and ethical perceptions as a mediator and a moderated mediator, and the mechanisms behind the mediation and moderated mediation, remain unclear. This study hence addresses the following problem: there is a significant gap in insights into how trust and ethical perceptions link AISD and SCA. In particular, the specific mechanism through which trust mediates and ethical perceptions moderate this relationship has yet to be clearly and systematically understood. Therefore, it is imperative to explore the mediation of trust and the moderated mediation of ethical perceptions in the AISD and SCA nexus, so as to promote the understanding and acceptance of AISD worldwide.

Based on the aforementioned problem, this study proposes the following research objectives. (1) To measure the direct impact of AI technology on the degree of its SCA through an in-depth analysis of the correlation between the two. (2) To evaluate the mediation of trust between AISD and SCA, namely, to explore how AIST serves as a bridge connecting AISD and SCA and whether AISD is more accepted when AIST is built up. (3) To evaluate the moderation and moderated mediation of ethical perceptions between trust and SCA, so as to identify the mechanism through which ethical perceptions affect the trust-SCA nexus.

Based on the aforementioned problem and research objectives, this study answers the following research questions. (1) Is there a direct relation between AISD and SCA? Concerning this question, this study explores the direct impact of AISD (as the independent variable) on SCA and verifies the basic model of that impact. (2) Does AIST serve as a mediator between AISD and SCA? Here, the focus is on how AIST (as a mediating variable) mediates the AISD and SCA nexus. (3) How does AIEP (as a moderating variable) moderate the effect of trust on SCA, and does it thereby moderate the mediation? By exploring these questions, this study fills the gap in the literature and provides broader insights, thereby promoting the development of AIST and AI-related ethics as well as expanding the social acceptance of AI.

Data are collected through questionnaire-based surveys. Hayes’ PROCESS (a macro for statistical analysis in SPSS) is then used to analyze the mediation of AIST and the moderation and/or moderated mediation of AIEP (Hayes, 2018), in order to reveal the impact mechanism in more detail. Doing so deepens our understanding of the relation between ethical perceptions and SCA and provides instructive suggestions for promoting technological development and social welfare.

The rest of the paper runs as follows. The introduction covers the research motivation, the research objectives, and the research problem. Section “Theoretical and literature review” provides a theoretical and literature review. Section “Research methods” offers the research methodology and the research hypotheses. Section “Empirical analysis” gives an empirical analysis. Section “Discussion” presents a discussion. The last section concludes and offers suggestions. This paper is structured in a way that helps clarify the research process and the results. It also gives a systematic description of the theoretical-empirical interaction in the analytic process so that readers can gain a better understanding of the background, methodology, and results, as well as the paper’s significance for technological development and social welfare.

Theoretical and Literature Review

Based on the Technology Acceptance Model (TAM), the Innovation Diffusion Theory (IDT), and the Socio-Technical Systems Theory (STS), this study looks into how AISD impacts SCA through AIST and AIEP.

Davis (1989) proposed TAM as a theoretical framework for explaining and predicting how users accept and use new technologies. Its key hypothesis is that a user’s intention to apply new technologies depends on two factors: perceived usefulness and perceived ease of use (Venkatesh and Davis, 2000). While perceived usefulness refers to the degree to which an individual believes that employing a particular technology improves his/her job performance, perceived ease of use refers to the degree to which an individual believes that utilizing a particular technology is effortless. The two factors further impact the user’s attitude toward using the technology, thereby eventually impacting the user’s intention and actual behavior toward the technology.

Rogers et al. (2014) proposed IDT to explain how innovation diffusion occurs within a social system. With the focus placed on four elements (innovation, communication channels, time, and a social system), this theory describes the five steps of innovation diffusion: knowledge, persuasion, decision-making, implementation, and confirmation. Rogers also categorized innovation adopters into innovators, early adopters, early majority, late majority, and laggards, while pointing out that successful innovation diffusion depends on features such as relative advantage, compatibility, complexity, trialability, and observability.

The Socio-Technical Systems Theory was set up by Emery and Trist (1960) before it was improved by many scholars. The theory emphasizes the interdependence between a technical system and a social system. The concept of joint optimization was then proposed (Di Maio, 2014), suggesting that both technical and human needs must be considered to achieve the optimal status of the overall system when designing or redesigning a system.

In summary, this study further delineates its integrative logic and application framework. First, the Technology Acceptance Model (TAM) primarily focuses on individual-level technology adoption behavior, with its core constructs being Perceived Usefulness (PU) and Perceived Ease of Use (PEOU). These variables determine users’ technology adoption intentions and actual usage behaviors. In this study TAM serves as a theoretical lens to explain how individuals develop trust and attitudinal acceptance toward AI technology based on their cognitive perceptions, which subsequently influence societal acceptance of AI technology.

Second, the Innovation Diffusion Theory (IDT) extends TAM’s individual-centric perspective by emphasizing the social diffusion process of technology. IDT identifies five key attributes that drive innovation diffusion: relative advantage, compatibility, complexity, trialability, and observability. This study applies IDT to illustrate how AI technology is widely adopted at the societal level and examines variations in technology acceptance across different social groups. IDT provides insights into how technology trust influences societal acceptance, asserting that public acceptance is likely to increase when a technology is perceived as congruent with societal needs and norms.

Finally, the Socio-Technical Systems Theory (STS) offers a macro-level sociotechnical perspective, positing that technological development is intricately intertwined with social structures, cultural values, and ethical norms. STS underscores that technological progress is not merely a function of technological advancements, but also necessitates the co-evolution of technological and social systems. This study utilizes STS to explore how AI ethical perception moderates the relationship between technology trust and societal acceptance, demonstrating that neglecting ethical considerations in technological development may erode public trust and thereby impede widespread adoption.

TAM in essence explains individual-level AI adoption. IDT expands the analysis to the societal level, assessing how technology trust influences societal acceptance. STS highlights the critical role of ethical perception in shaping technology adoption. The integration of these three theoretical frameworks provides a comprehensive analytical lens, enabling a deeper understanding of the dynamic interplay among technology, trust, ethics, and societal acceptance.

Although IDT is primarily used to explain technology diffusion, its core constructs also serve as key determinants of technology acceptance. The core variables in this study (AI technology trust, ethical perception, and societal acceptance) are closely aligned with IDT’s five key attributes influencing adoption.

Research on AI ethics, trust, and technology acceptance has extensively applied TAM, affirming the pivotal role of trust in the technology adoption process. However, most studies have predominantly examined the direct mediating effect of trust, with limited exploration of how ethical perception influences the indirect effect of trust on technology acceptance. The literature often treats ethical perception as an independent variable without thoroughly analyzing its dynamic moderating role in technology trust.

While TAM has been widely utilized in technology acceptance research, relatively few papers have integrated IDT and STS, leaving gaps in understanding the societal diffusion and sociotechnical interaction aspects of technology adoption. By synthesizing TAM, IDT, and STS, this study adopts a more holistic perspective on the AI technology acceptance process and addresses existing theoretical gaps through moderated mediation analysis. The findings herein contribute to a more nuanced understanding of AI adoption in both individual and societal contexts.

The following part of this section reviews the literature on the relation between AISD and SCA, the mediation of AIST in the relation between AISD and SCA, and the moderation of AIEP in the relation between AIST and SCA.

Literature on the Relation Between AISD and SCA

AISD has become a hot topic in recent years. The social acceptance of AI technology not only impacts the track of technological development, but also reflects human expectations and aspirations for future societies. As social awareness and understanding of AI technologies improve, users are taking increasingly positive attitudes toward adopting and supporting these technologies. For example, Cioffi et al. (2020) mentioned that as the development and application of AI technology result in the better use of data, the public may become more willing to accept and apply AI technology after learning that it is more convenient and more efficient. Verma et al. (2021) argued that as the application of AI technology in the fields of healthcare, education, transportation, and entertainment becomes more extensive and effective, social awareness and understanding of AI technology have increased significantly, resulting in greater SCA. Moon (2023) pointed out that, with the development of AI technology, many countries and regions have begun to formulate policies and rules to guide its development and application, so as to improve AIST and SCA. In general, with progress in AI technology and the broadening of its application, AISD will expand and SCA will continue to increase.

Literature About the Mediation of AIST in the Relation Between AISD and SCA

As the application of AI technology has become increasingly common in the fields of healthcare, financial services, and transportation systems, a growing number of studies have examined AI’s impact on trust. For example, Cheatham et al. (2019) held that AI systems can rapidly process and analyze massive data, thereby improving the efficiency and accuracy of decision-making in the fields of medical diagnosis and financial risk assessment, which can help build and maintain AIST. Glikson and Woolley (2020) noted that AI systems enable the algorithm-based analysis of big data, and the decision-making process of AI systems is traceable through techniques for data visualization and interpretation. These features help enhance the transparency of decision-making, and such transparency in turn helps build individual and organizational trust in AI systems. Dziedzic (2023) found that, with the assistance of AI technology, humans can build trust more extensively and more deeply. For example, smart contracts in decentralized financial transactions provide a mechanism for guaranteeing the security and transparency of these transactions that is free from third-party involvement and directly enhances counter-party trust in transactions. AISD clearly has a significantly positive impact on the build-up of AIST (H2).

By improving the transparency, efficiency, and accuracy of decision-making, AI technology enhances public trust in the technology itself and also promotes the building of social trust more widely. In the future, the continuous progress in AI technology and the ongoing expansion of the scope of its application will play a more important role in building trust. However, when AIST is promoted, attention should also be paid to potential challenges arising from AISD, such as privacy infringement and algorithmic bias. In order to overcome these challenges, policymakers, technology developers, and people from all walks of life should make a concerted effort to facilitate the healthy development of AI technology that produces a positive social effect.

As a key factor in promoting the relation between individuals and AI technology, AIST is also a crucial element in helping AI technology gain extensive social acceptance and widespread application. Choung et al. (2023a) stated that the public is more likely to accept and support AI technology when there is enough confidence about its performance, purposes, and impacts. Meyer-Waarden and Cloarec (2022) mentioned that understanding how technology works and how it can improve life quality or work efficiency can help reduce user resistance arising from the fear of the unknown (H3). In summary, AIST is a key factor in improving the social acceptance of AI technology. Building and maintaining trust require not only the transparency, reliability, and effectiveness of technology, but also attention to the social, ethical, and psychological implications of technology. With the continuous development and application of AI technology, strengthening trust-building communication among the public, enterprises, and policymakers will play a critical role in promoting its healthy development and overall social welfare.

Literature on the Moderation of AIEP in the Relation Between AIST and SCA

As AI technology has gradually integrated into every aspect of society, public concerns about AI ethics have also been growing. These concerns include privacy infringement, the lack of transparency in decision-making, bias, and discrimination. The perception of ethical problems is an important factor affecting the level of SCA and may moderate the effect of AIST. Ethical perception refers to an individual’s perception and evaluation of the moral legitimacy or acceptability of a particular behavior or decision (Herman and Pogarsky, 2023). In terms of AI, the concept is about the public judgment of whether AI technology and its applications are equal, just, and conducive to social welfare.

As Choung et al. (2023b) pointed out, the public is more likely to trust an AI technology when people believe it conforms to certain ethical standards, is able to protect personal privacy, and processes information equally. Conversely, when people are concerned that AI may lead to inequality or infringe upon individuals’ rights, they may become suspicious of AI technology, to the detriment of AIST. Figueroa-Armijos et al. (2023) also stressed that positive ethical perceptions may enhance the positive impact of AIST on SCA, making people more willing to accept and use AI technology, while negative ones may weaken the impact of AIST and impair SCA. In other words, when AI technology is perceived as aligned with socially expected ethical norms, technology trust is more likely to facilitate societal acceptance. However, if AI is perceived as a threat to fundamental rights or core values, then even a high level of technology trust may not necessarily translate into broad societal acceptance (Floridi et al., 2018).

This study infers that AIEP serves as a moderator of AIST’s impact on SCA (H4). This effect can be explained through the concepts of ethical risk perception and moral threshold standards. When ethical standards are high, if technological development fails to meet public moral expectations, then the impact of established trust on societal acceptance may weaken. Conversely, when technology aligns with higher ethical standards, the positive influence of trust on acceptance becomes more pronounced. This indicates that ethical perception serves as a moderating factor in the mediating mechanism of technology trust. Therefore, given hypotheses H2, H3, and H4, this study further infers that AIEP serves as a significant moderated mediator of AISD’s impact on SCA through AIST (H5).

Research Methods

Based on the literature review about AISD, AIST, AIEP, and SCA, this research proposes a holistic framework that develops more insights into the relationships among AISD, AIST, AIEP, and SCA and provides a theoretical basis for analyzing the interference of ethical perceptions in the trust and SCA nexus. Figure 1 shows the framework.

Figure 1. Research structure.

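This study proposes the following hypotheses based on the literature review and research framework.

H1: AISD has a significantly positive impact on SCA.

H2: AISD has a significantly positive impact on AIST.

H3: AIST has a significantly positive impact on SCA.

H4: AIEP moderates the impact of AIST on SCA.

H5: AIEP serves as a significant moderated mediator of AISD’s impact on SCA through AIST.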

Based on the research framework and hypotheses above, we subsequently collect data to systematically evaluate the correlations of AISD, AIST, and AIEP with SCA.

According to the Innovation Diffusion Theory (IDT), the diffusion of technology is influenced by multiple factors, among which relative advantage and compatibility are particularly critical in this study. Hypothesis H1 (impact of AI technology development on societal acceptance) can be explained through the IDT construct of relative advantage, suggesting that if AI technology enhances efficiency and effectiveness, then its societal acceptance will increase. Similarly, Hypotheses H2 and H3 (mediating role of AI technology trust) are supported by the IDT constructs of observability and compatibility, indicating that public trust in AI technology likely strengthens when technology demonstrates high transparency and aligns with societal values.

Hypotheses H4 and H5, which examine the moderating effect of ethical perception, correspond to the compatibility dimension in IDT, as the alignment between technology and societal values significantly influences its adoption rate. Although this study does not explicitly analyze the stages of technology diffusion, IDT provides theoretical support for the proposed hypotheses, complementing the analytical frameworks of TAM and STS.

Data Collection

This study collected data online through questionnaire-based surveys. The online surveys for data collection were conducted in the following steps. First, a structured questionnaire was designed based on the research variables (AISD, SCA, AIST, and AIEP), and a 5-point Likert scale (in which “1” represents “strongly disagree” and “5” represents “strongly agree”) was used to measure the interviewees’ attitudes (cf. Table A1). Second, the questionnaire link was published on multiple social media platforms, including X (formerly Twitter), LinkedIn, Facebook, and LINE.

The questionnaire was available from March 1 to May 31, 2024. During the 3 months, 914 valid questionnaires in total were received (excluding incomplete questionnaires and those to which the answers had a clear pattern). SPSS v29 was subsequently used to test Common Method Bias (CMB), reliability, validity, factor loading, and Average Variance Extracted (AVE) of the data, so as to determine the structural rationality of the data and the goodness of fit of the measurement model. Hayes’ PROCESS v4.2 (an analytic model and a macro tool for SPSS v29) was used to analyze the research framework of this study. When a macro is used for model testing, the corresponding model must be selected before testing the research framework of a study (Hayes and Rockwood, 2020). As moderated mediation analysis was involved in this study, Model 14 of Hayes’ PROCESS v4.2 was selected as the target model.

PROCESS Model 14 is appropriate for examining the indirect effect of a single mediator (AIST) in the relationship between the independent variable (AISD) and the dependent variable (SCA). It simultaneously assesses whether the moderating variable (AIEP) influences the strength of this mediation effect.
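In regression form, the structure just described can be sketched as follows. The notation is an assumption of this illustration rather than the paper's own: X = AISD, M = AIST, W = AIEP, Y = SCA, and the control variables are omitted for brevity.

```latex
\begin{aligned}
M &= a_0 + a_1 X + e_M \\
Y &= c_0 + c' X + b_1 M + b_2 W + b_3 (M \times W) + e_Y \\
\text{conditional indirect effect of } X \text{ on } Y \text{ at } W &= a_1 (b_1 + b_3 W) \\
\text{index of moderated mediation} &= a_1 b_3
\end{aligned}
```

Under this specification, the indirect effect of AISD through AIST varies with AIEP only if b_3 differs from zero, which is why the moderator enters the second stage (AIST to SCA) of the mediation path.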

The rationale for adopting this model lies in the premise that ethical perception may alter the impact of technology trust on societal acceptance, thereby adjusting the mechanism of technology development’s influence based on individual ethical standards. Through the application of PROCESS Model 14, this study comprehensively examines how technology development affects societal acceptance via trust, while further analyzing the critical moderating role of ethical perception in this process. This approach offers a more nuanced validation of the mechanism and serves as a valuable reference for policy implications and applications.
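The logic of a second-stage moderated mediation can be illustrated with a minimal Python sketch. It is not the PROCESS macro and does not use the survey data: the data are synthetic with known coefficients, the control variables are left out, only point estimates are computed (no interval estimation), and the helper names `ols` and `second_stage_moderated_mediation` are hypothetical.

```python
import random

def ols(rows, y):
    """Least-squares coefficients of y on the columns of rows (intercept first)."""
    design = [[1.0] + list(r) for r in rows]
    k = len(design[0])
    # Augmented normal equations: [X'X | X'y].
    aug = [
        [sum(d[i] * d[j] for d in design) for j in range(k)]
        + [sum(d[i] * yi for d, yi in zip(design, y))]
        for i in range(k)
    ]
    # Gauss-Jordan elimination with partial pivoting.
    for col in range(k):
        pivot = max(range(col, k), key=lambda r: abs(aug[r][col]))
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for r in range(k):
            if r != col:
                factor = aug[r][col] / aug[col][col]
                aug[r] = [a - factor * b for a, b in zip(aug[r], aug[col])]
    return [aug[i][k] / aug[i][i] for i in range(k)]

def second_stage_moderated_mediation(x, m, w, y):
    """Return (a1, b1, b3) from M = a0 + a1*X and Y = c0 + c'*X + b1*M + b2*W + b3*M*W."""
    _, a1 = ols([[xi] for xi in x], m)
    _, _, b1, _, b3 = ols(
        [[xi, mi, wi, mi * wi] for xi, mi, wi in zip(x, m, w)], y
    )
    return a1, b1, b3

# Synthetic data with known coefficients (an assumption, not the survey data).
rng = random.Random(42)
n = 5000
x = [rng.gauss(0, 1) for _ in range(n)]        # stands in for AISD
w = [rng.gauss(0, 1) for _ in range(n)]        # stands in for AIEP
m = [0.5 * xi + rng.gauss(0, 1) for xi in x]   # stands in for AIST
y = [0.3 * xi + 0.4 * mi + 0.1 * wi + 0.2 * mi * wi + rng.gauss(0, 1)
     for xi, mi, wi in zip(x, m, w)]           # stands in for SCA

a1, b1, b3 = second_stage_moderated_mediation(x, m, w, y)
index_of_moderated_mediation = a1 * b3
for w_value in (-1.0, 0.0, 1.0):
    effect = a1 * (b1 + b3 * w_value)
    print(f"W = {w_value:+.0f}: conditional indirect effect = {effect:.3f}")
print(f"index of moderated mediation = {index_of_moderated_mediation:.3f}")
```

With a positive interaction coefficient, the indirect effect grows as the moderator increases, which is the pattern that the moderated mediation hypothesis describes.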

Control Variables

To improve the internal validity of the research findings, gender, age, and education level are included as control variables. Other papers have shown that these variables bear on the development and use of AI. For example, Shrestha and Das (2022) revealed that gender bias persists in AISD, which reflects and amplifies gender discrimination in the training data, affecting the process of autonomous decision-making. Solutions to gender bias, including using bias-eliminating algorithms and diversifying data representation, help reduce or eliminate gender bias in AI systems. The focus of this field is on improving methodologies and inclusive practices to ensure the equality and diversity of AISD. Seo et al. (2021) pointed out that age impacts the development and application of AI technology, as age differences significantly affect the use and acceptance of AI systems. Crompton and Burke (2023) mentioned that educational level significantly impacts the development and application of AI technology: in the field of higher education, the use of AI technology varies across educational levels.

CMB Test

Each construct in the questionnaire was measured through the self-report of a single interviewee, which may cause CMB. CMB may inflate or deflate the correlation coefficient between any two constructs, producing errors in the results. Therefore, this study adopts Harman’s one-factor test, applying factor analysis to all questionnaire items. The unrotated results show that the first principal component explains 39.235% of the variance (< 50%), indicating that there is no significant problem with CMB in the study (Podsakoff et al., 2003).
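The quantity behind this test can be sketched in a few lines of Python. In an unrotated principal component analysis of standardized items, the share of variance explained by the first component equals the largest eigenvalue of the item correlation matrix divided by the number of items. The sketch below finds that eigenvalue by power iteration; the correlation matrix is hypothetical and the function names are illustrative, so this is not the SPSS procedure itself.

```python
def largest_eigenvalue(matrix, iterations=500):
    """Largest eigenvalue of a symmetric correlation matrix via power iteration."""
    size = len(matrix)
    # Non-uniform start vector; assumed not orthogonal to the leading eigenvector.
    vector = [1.0 / (i + 1) for i in range(size)]
    eigenvalue = 0.0
    for _ in range(iterations):
        product = [sum(row[j] * vector[j] for j in range(size)) for row in matrix]
        norm = sum(p * p for p in product) ** 0.5
        if norm == 0.0:
            break
        vector = [p / norm for p in product]
        # For a unit-length vector, the norm of the product converges to the
        # largest eigenvalue of a positive semi-definite matrix.
        eigenvalue = norm
    return eigenvalue

def first_factor_share(corr):
    """Share of total variance carried by the first unrotated principal component."""
    return largest_eigenvalue(corr) / len(corr)

# Hypothetical 4-item correlation matrix with every off-diagonal entry equal to 0.3.
demo_corr = [[1.0 if i == j else 0.3 for j in range(4)] for i in range(4)]
share = first_factor_share(demo_corr)
print(f"first component explains {share:.1%} of the variance")
print("below the 50% threshold:", share < 0.50)
```

For this toy matrix the largest eigenvalue is 1 + 3 × 0.3 = 1.9, so the first component carries 47.5% of the variance and would pass the 50% criterion.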

Reliability and Validity Tests

This study employs SPSS v29 to test the reliability and validity of the questionnaire data. In general, a Cronbach’s alpha value of over .70 indicates that the tested questionnaire has good reliability (Jiang et al., 2022). In this study, the alpha value for each construct is over .80, demonstrating good internal consistency within each construct. This means that the questionnaire items are highly stable and consistent when the corresponding constructs are measured and provide a solid basis for further analysis.
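For readers who want to see how the coefficient is obtained, the following is a minimal sketch of Cronbach's alpha in Python. The answers are hypothetical, not the survey data, and the function name is illustrative.

```python
from statistics import variance

def cronbach_alpha(items):
    """Cronbach's alpha for one construct.

    items[j][i] is respondent i's score on item j.
    """
    k = len(items)
    if k < 2:
        raise ValueError("alpha needs at least two items")
    # Sample variance of each item across respondents.
    item_vars = [variance(col) for col in items]
    # Sample variance of each respondent's summed score over the k items.
    totals = [sum(scores) for scores in zip(*items)]
    total_var = variance(totals)
    return k / (k - 1) * (1 - sum(item_vars) / total_var)

# Hypothetical 5-point Likert answers from six respondents on three items.
demo_items = [
    [4, 5, 3, 4, 2, 5],
    [4, 4, 3, 5, 2, 5],
    [5, 5, 2, 4, 3, 4],
]
alpha = cronbach_alpha(demo_items)
print(f"alpha = {alpha:.3f}")  # 0.879 for this toy data
```

Alpha rises when the items of a construct move together, because the variance of the summed score then exceeds the sum of the individual item variances.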

We subsequently test data validity through the evaluation of convergent validity based on each construct’s calculation of AVE. When AVE is over .50, a construct is believed to have good convergent validity (Bell et al., 2021). With respect to this study, all AVE values are above .60, indicating each construct has good convergent validity. This means that the questionnaire items effectively reflect the measured latent variable, and most of the variance explained of each construct comes from the measured latent variable, rather than measurement errors. These tests further safeguard the measurement effectiveness of the questionnaire (cf. Table A2).
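As a brief illustration, AVE is commonly computed as the mean of the squared standardized factor loadings of a construct's items. The sketch below assumes that definition and uses hypothetical loadings, not the values reported in Table A2.

```python
def average_variance_extracted(loadings):
    """AVE as the mean of squared standardized factor loadings of one construct."""
    if not loadings:
        raise ValueError("at least one loading is required")
    return sum(value * value for value in loadings) / len(loadings)

# Hypothetical standardized loadings for a four-item construct.
demo_loadings = [0.82, 0.79, 0.76, 0.80]
ave = average_variance_extracted(demo_loadings)
print(f"AVE = {ave:.3f}; above the .50 threshold: {ave > 0.50}")
```

An AVE above .50 means that, on average, the latent variable accounts for more than half of the variance in its items.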

Empirical Analysis

After data collection and the reliability and validity tests, we conduct an empirical analysis to test the research hypotheses and reveal the relationships among variables. The empirical analysis comprises descriptive statistical analysis, correlation analysis, path analysis, and the moderated mediation test.

Descriptive Statistical Analysis and Correlation Analysis

To verify the factorial structure of each variable in the questionnaire, the items measuring the four variables are subjected to factor analysis through principal component analysis. The results show that the data can be represented by four principal components, with the cumulative variance explained reaching 65.70%, and every questionnaire item loads significantly on its intended construct. This means that the questionnaire with the four variables has good convergent validity.

The confidence interval for the correlation coefficient between any two of the four variables does not include 1, indicating that the four variables can be distinguished from each other. In other words, good discriminant validity exists. Table 1 offers the correlation coefficients, means, standard deviations (SDs), AVEs, skewness, and kurtosis for variables in this study.

Table 1. Correlation Coefficients, Means, SDs, AVEs, Skewness, and Kurtosis.

Correlation is significant at the .01 level (two-tailed).

The correlation coefficients are in the lower left section below the diagonal of the matrix.

The shared variances are in the upper right section above the diagonal of the matrix.

The mean of AISD is 4.081, indicating that the interviewees generally believe that AI technology has made significant progress over the past few years. The mean of SCA is 3.907, indicating that the interviewees’ acceptance of AI technology is fairly high. The mean of AIST is 3.349, indicating that the interviewees’ trust in AI technology is at a medium level. The mean of AIEP is 3.820, indicating that the interviewees regard the ethical performance of AI as fairly good.

In terms of SDs, SD of AISD is 0.684, indicating that variations in the opinions on AISD are moderate. SD of SCA is 0.649, meaning that variations in the opinions on SCA are slight. SD of AIST is 0.756, showing that variations in the opinions on AIST are not minor. SD of AIEP is 0.783, denoting that variations in the opinions on AIEP are not minor.

Skewness is a statistical measure used to assess the symmetry of a data distribution. When the skewness value is close to zero, it indicates that the distribution is approximately symmetric. A positive skewness value suggests a right-skewed distribution (i.e., the data exhibit a longer tail on the right side). A negative skewness value indicates a left-skewed distribution (i.e., the data have a longer tail on the left side).

The skew of AISD is 0.233 (positive), indicating that the data distribution is longer on the right side of its peak, and more of the interviewees think highly of AISD. The skew of SCA is 0.142 (positive), indicating that the data distribution is longer on the right side of its peak, and more of the interviewees accept AI technology. The skew of AIST is 0.332 (positive), indicating that the data distribution is longer on the right side of its peak, and more of the interviewees trust AI technology. The skew of AIEP is 0.299 (positive), indicating that the data distribution is longer on the right side of its peak, and more of the interviewees have positive ethical perceptions of AI technology.

Kurtosis is a statistical measure that quantifies the tailedness or peakedness of a data distribution. When kurtosis is approximately 3, it corresponds to a normal distribution, indicating a moderate peak with relatively balanced tails. A kurtosis value greater than 3 suggests a leptokurtic distribution, characterized by a sharper peak and heavier tails. A kurtosis value less than 3 indicates a platykurtic distribution, reflecting a flatter peak and lighter tails. Statistical software often reports excess kurtosis (kurtosis minus 3), for which a normal distribution scores 0 and a negative value indicates a platykurtic distribution.

The kurtosis (excess kurtosis) values of AISD, SCA, AIST, and AIEP are −0.212, −0.315, −0.124, and −0.198, respectively. Being slightly below zero, these values indicate mildly platykurtic distributions: the tails are thinner and the data are less concentrated around the mean than in a normal distribution.
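The two shape statistics discussed above can be sketched with simple moment-based formulas. This is an illustrative assumption: statistical packages typically apply small-sample corrections, so their output can differ slightly from these values, and the data below are hypothetical.

```python
from statistics import fmean

def skewness_and_excess_kurtosis(values):
    """Moment-based skewness and excess kurtosis (kurtosis minus 3)."""
    n = len(values)
    mean = fmean(values)
    # Central moments of order 2, 3, and 4 (divided by n, no bias correction).
    m2 = sum((v - mean) ** 2 for v in values) / n
    m3 = sum((v - mean) ** 3 for v in values) / n
    m4 = sum((v - mean) ** 4 for v in values) / n
    if m2 == 0:
        raise ValueError("all values are identical")
    return m3 / m2 ** 1.5, m4 / m2 ** 2 - 3.0

# Hypothetical scores: a symmetric set and a set with a long right tail.
symmetric_skew, symmetric_kurt = skewness_and_excess_kurtosis([1, 2, 3, 4, 5])
right_skew, _ = skewness_and_excess_kurtosis([1, 1, 1, 2, 5])
print(f"symmetric data: skew = {symmetric_skew:.3f}, excess kurtosis = {symmetric_kurt:.3f}")
print(f"right-tailed data: skew = {right_skew:.3f}")
```

The symmetric set has zero skewness and a negative excess kurtosis, while the second set has positive skewness because its few large values stretch the right tail.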

The statistics above show that the interviewees generally have positive opinions about AISD, high acceptance of AI technology, and positive ethical perceptions of AI technology, though their trust in AI technology is at a medium level. The right-skewed data distribution indicates that the interviewees tend to have positive opinions. Additionally, the negative kurtosis shows that the data distributions are thin-tailed, and the data are less concentrated around the mean. Therefore, although the interviewees have positive attitudes toward AISD and AIEP, there are some variations and discrepancies in AIST. In general, the data denote positive opinions on the development of AI technology and ethical perceptions concerning AI technology, while reflecting deviations in terms of trust.

Research Hypothesis Tests

This section presents tests of the research hypotheses with Hayes’ PROCESS v4.2. SPSS Statistics is used to conduct regression analysis and to test the total effect, before Model 4 and Model 14 of Hayes’ PROCESS v4.2 are used to test the mediation and the moderated mediation, respectively.

Total Effect Test

The main effect test is next conducted upon completion of descriptive statistical analysis to determine the direct impact of the independent variable (AISD) on the dependent variable (SCA). For this part, SPSS v29 executes regression analysis and tests the direct impact of AISD on SCA based on the analytic model SCA = β0 + β1·AISD + ε. The results show that β0, β1, R², F-value, and t-value are 1.571, .572, .365, 523.171, and 22.871, respectively. Hence, H1 is supported. In conclusion, the statistics above illustrate that the linear regression model is valid and explains 36.5% of the variance in the dependent variable, while the independent variable significantly impacts the dependent variable.
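The quantities reported for this model (intercept, slope, R², t, and F) can be illustrated with a minimal one-predictor least-squares sketch. The data below are hypothetical, and the function name `simple_ols` is an assumption introduced for illustration; it does not reproduce the SPSS procedure used in the study.

```python
import math


def simple_ols(x, y):
    """Fit y = b0 + b1*x by ordinary least squares and return key statistics."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    syy = sum((yi - mean_y) ** 2 for yi in y)
    b1 = sxy / sxx                      # slope
    b0 = mean_y - b1 * mean_x           # intercept
    sse = sum((yi - (b0 + b1 * xi)) ** 2 for xi, yi in zip(x, y))
    r2 = 1.0 - sse / syy                # share of variance explained
    se_b1 = math.sqrt(sse / (n - 2) / sxx)
    t = b1 / se_b1                      # t statistic for the slope
    f = r2 / (1.0 - r2) * (n - 2)       # overall F with (1, n - 2) df
    return {"b0": b0, "b1": b1, "r2": r2, "t": t, "f": f}


# Hypothetical scale scores (assumption, not the survey data).
aisd = [1.0, 2.0, 3.0, 4.0, 5.0]
sca = [2.1, 2.9, 4.2, 4.8, 6.1]
fit = simple_ols(aisd, sca)
print(fit)
```

In a one-predictor model, F equals t². The reported values are close to this relationship, since 22.871² ≈ 523.08 against a reported F of 523.171.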

Mediation Test

For this step, the mediation factor is tested based on the simple mediating model with a single mediating variable proposed by Baron and Kenny (1986) on the premises of fulfilling the following three criteria: (1) the independent variable X significantly impacts the dependent variable Y when the mediating variable M is absent; (2) X significantly impacts M; and (3) the impact of X on Y weakens with the mediation of M, though the impact of M on Y remains significant. In other words, for the Baron and Kenny method for testing mediation hypotheses, the prerequisites include the existence of the significant effect of X on M and that of M on Y, as well as the weakening or disappearing significance of the effect of X on Y when Y is predicted by X and M (hence, M serves as a partial or complete mediator, respectively).
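The three Baron and Kenny regressions can be sketched as follows on synthetic data. All names (`ols`, `x`, `m`, `y`) and the generating coefficients are assumptions made for illustration. The sketch estimates coefficients only and omits the significance tests that PROCESS reports.

```python
import random


def ols(columns, y):
    """Least-squares coefficients [intercept, b1, ...] via the normal equations."""
    n = len(y)
    rows = [[1.0] + [col[i] for col in columns] for i in range(n)]
    k = len(rows[0])
    # Augmented matrix [X'X | X'y].
    aug = [
        [sum(r[i] * r[j] for r in rows) for j in range(k)]
        + [sum(r[i] * yi for r, yi in zip(rows, y))]
        for i in range(k)
    ]
    # Gauss-Jordan elimination with partial pivoting.
    for c in range(k):
        pivot = max(range(c, k), key=lambda r: abs(aug[r][c]))
        aug[c], aug[pivot] = aug[pivot], aug[c]
        for r in range(k):
            if r != c:
                factor = aug[r][c] / aug[c][c]
                aug[r] = [v - factor * w for v, w in zip(aug[r], aug[c])]
    return [aug[i][k] / aug[i][i] for i in range(k)]


# Synthetic data with a built-in mediation structure (assumption).
random.seed(0)
x = [random.gauss(0, 1) for _ in range(500)]
m = [0.5 * xi + random.gauss(0, 1) for xi in x]
y = [0.4 * xi + 0.3 * mi + random.gauss(0, 1) for xi, mi in zip(x, m)]

c = ols([x], y)[1]               # criterion 1: total effect of X on Y
a = ols([x], m)[1]               # criterion 2: effect of X on M
_, c_prime, b = ols([x, m], y)   # criterion 3: X and M predict Y together
print(c, a, b, c_prime)
```

If M partially mediates, `c_prime` stays nonzero but is smaller than `c`; under complete mediation it would shrink toward zero.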

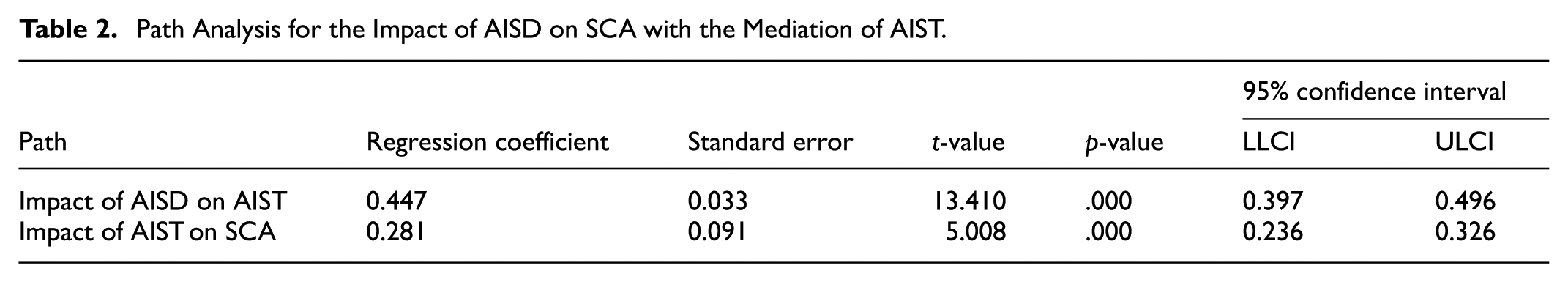

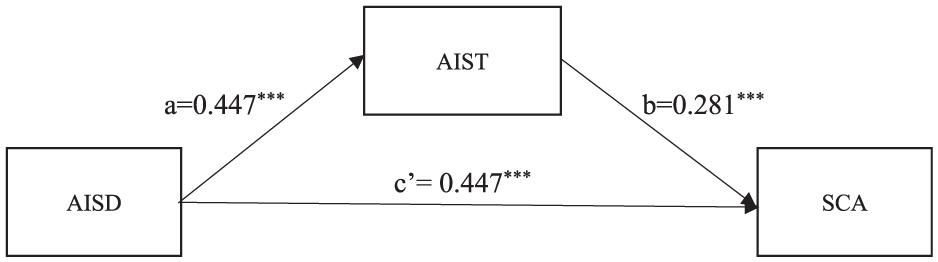

Figure 2 shows the significant impact of AISD on SCA when AIST is absent. Table 2 presents the significant effect of AISD on AIST and that of AIST on SCA. Figure 3 shows that the coefficient between AISD and SCA decreases from 0.572*** (Figure 2) to 0.447*** (Figure 3) when SCA is predicted by both AISD and AIST. Therefore, the prerequisites for the Baron and Kenny method are fulfilled; that is, AIST serves as the mediating variable. According to both Table 2 and Figure 3, the relation between AISD and AIST and that between AIST and SCA are both significantly positive. Hence, H2 and H3 are supported.

Total effect of AISD on SCA.

Path Analysis for the Impact of AISD on SCA with the Mediation of AIST.

Path analysis for the impact of AISD on SCA with the mediation of AIST

Moderation Test and Moderated Mediation Test

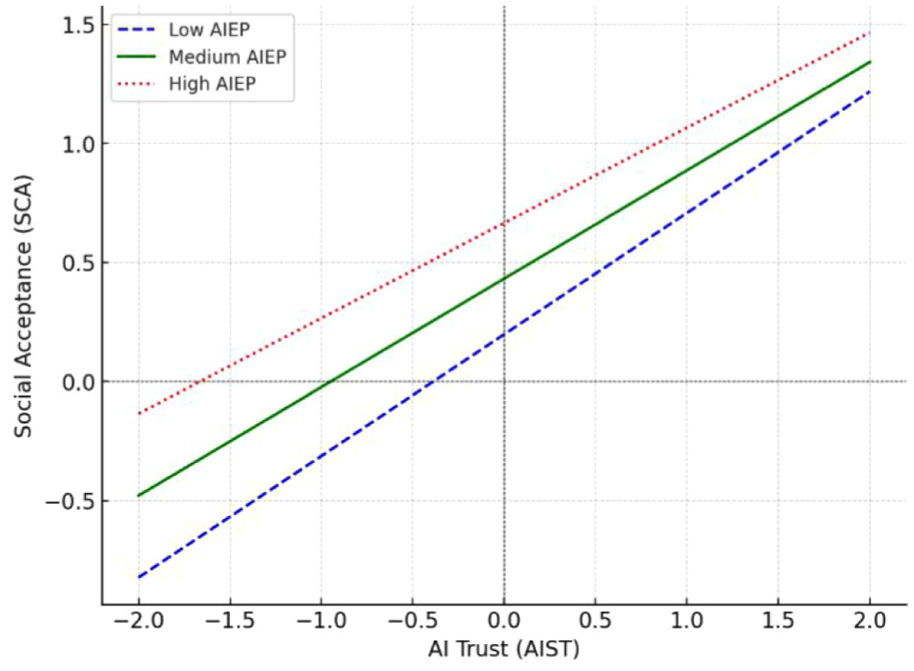

The variable AIEP is now taken into account to moderate the relation between AIST and SCA (Figure 4). The results show that the strength of the multiplicative interaction between AIST and AIEP on SCA is −0.055, which is significant with a p-value of .015 and a 95% confidence interval of −0.099 to −0.011 (excluding 0). Hence, H4 is supported.
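For reference, the model tested in this step can be written in the standard form of PROCESS Model 14 (second-stage moderated mediation). The symbols are notational assumptions introduced here: X = AISD, M = AIST, W = AIEP, Y = SCA, with coefficient names a, b_1, b_2, b_3, and c'. Only b_3 = −0.055 is taken from the text above.

```latex
\begin{align}
M &= i_M + aX + e_M \\
Y &= i_Y + c'X + b_1 M + b_2 W + b_3\,(M \times W) + e_Y \\
\theta_{M \to Y}(W) &= b_1 + b_3 W, \qquad b_3 = -0.055
\end{align}
```

The last line is the conditional effect of AIST on SCA: with a negative b_3, this effect becomes smaller as AIEP increases.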

Moderating effect of AIEP on AIST and social acceptance.

The moderated mediation is tested based on the three steps proposed by Muller et al. (2005). First, the independent variable must have a significant effect on predicting the dependent variable. Second, the multiplicative interaction term (between the mediating variable and the moderating variable) must have a significant effect on predicting the dependent variable. Third, the mediating variable must have a significant effect on predicting the dependent variable. The results (in Table 3) satisfy the criteria for the moderated mediation test proposed by Muller et al. (2005), which is enough to recognize AIEP as the moderated mediator in this study. Hence, H5 is supported.

Dependent Variable Model Analysis. Using SCA as the Dependent Variable.

Discussion

This study reveals how multiple factors work in synergy to influence the social acceptance of AI technology through the analysis of AISD, AIST, AIEP and their impacts on SCA. This section provides a detailed discussion of the results of this study and their implications.

First, this study explores the impact of AISD on SCA without any mediator or moderator. According to the results of the analysis based on the regression model for this impact (SCA = β0 + β1·AISD + ε), β1 is .572***, meaning that the value of SCA rises by 0.572 for each one-unit increase in the value of AISD. This indicates that progress in AI technology is able to significantly improve the social acceptance of AI technology. On the other hand, β0 is 1.571, meaning that the baseline for SCA is 1.571 when the AISD indicator is 0. This indicates that there is a basic level of SCA even without AISD.

Second, the mediation of AIST in the impact of AISD on SCA is explored. As Figure 3 shows, the indirect effect of AISD on SCA through AIST is a × b = 0.126***, reaching a significant level. This result serves as proof for the existence of the impact of AISD on SCA through the mediation of AIST. In other words, AISD not only directly improves SCA, but also indirectly does so by building up social trust in AI technology.
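As a consistency check, in a regression-based simple mediation model estimated on one sample the total effect c decomposes into the direct effect c' and the indirect effect a × b. The symbols c and c' are notational assumptions; the numbers are those reported in this study (total effect 0.572, direct effect 0.447, indirect effect 0.126).

```latex
\begin{align}
c &= c' + ab \\
0.572 &\approx 0.447 + 0.126 = 0.573 \\
\frac{ab}{c} &\approx \frac{0.126}{0.572} \approx 0.22
\end{align}
```

The two sides agree up to rounding, and on this reading roughly one fifth of the total effect of AISD on SCA runs through AIST.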

This study digs deeper by exploring the effect of the moderator AIEP on the path between AIST and SCA. The results show that AIEP is a significantly negative moderator in this relation, as the indicator value is −0.055**. This indicates that the more intense AIEP is, the weaker the impact of AIST on SCA will be, possibly suggesting that excessive ethical requirements and concerns weaken the positive impact of social trust on social acceptance. To be more specific, the results mean that the indicator value for the impact of AIST on SCA drops by 0.055 for each one-unit increase in the value of AIEP. In other words, the stronger the social perceptions of ethical problems about AI technology are, the more difficult it is to convert social trust in AI technology into SCA. One potential cause for this result is that ethical problems result in more distrust and concerns. Even if people trust AI technology, they may refuse to fully accept it due to ethical problems.

Finally, the moderated mediation of AIEP in AISD’s impact on SCA through AIST is explored. The results show that the value of the moderated mediation is −0.025 (cf. Table 4), indicating that AIEP negatively impacts this mediation process, as the indicator value for AISD’s impact on SCA through AIST decreases by 0.025 for each one-unit increase in the value of AIEP. For this reason, even if AISD can promote social trust in AI technology, if there are strong social perceptions of ethical problems about AI technology, then it will be difficult to convert the trust into SCA. In other words, the existence of ethical problems undermines the positive impact of AIST, thereby affecting the outcomes of the entire process.
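The meaning of this index can be sketched numerically. In a second-stage moderated mediation model, the indirect effect at a given moderator value W is a × (b1 + b3 × W), and the index of moderated mediation is a × b3. In the sketch below, only `B3 = -0.055` comes from this study. `A_HYP` and `B1_HYP` are hypothetical values chosen for illustration (the actual estimates are in the tables, which are not reproduced here), and the function names are assumptions.

```python
B3 = -0.055      # interaction coefficient reported in this study
A_HYP = 0.45     # HYPOTHETICAL path AISD -> AIST (assumption)
B1_HYP = 0.28    # HYPOTHETICAL path AIST -> SCA at AIEP = 0 (assumption)


def conditional_indirect_effect(a, b1, b3, w):
    """Indirect effect of X on Y through M when the moderator equals w."""
    return a * (b1 + b3 * w)


def index_of_moderated_mediation(a, b3):
    """Change in the indirect effect for each one-unit increase in the moderator."""
    return a * b3


# Indirect effect at a low, middle, and high moderator value (illustrative).
for w in (-1.0, 0.0, 1.0):
    effect = conditional_indirect_effect(A_HYP, B1_HYP, B3, w)
    print(f"W = {w:+.1f}: indirect effect = {effect:.4f}")

print(f"index = {index_of_moderated_mediation(A_HYP, B3):.4f}")
```

With these assumed inputs the index is close to the reported −0.025, and the indirect effect shrinks by that amount for each unit that AIEP rises.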

Coefficients of the Moderated Mediation.

Conclusion and Suggestions

This paper presents an empirical analysis to reveal the complex correlations among AISD, AIST, AIEP, and SCA, providing significant insights into the social impact of AI technology. First, the results show a significantly positive impact of AISD on SCA (β = .572, p < .001), which corroborates technological determinism, or the ability of technological progress to directly drive social transition and improve the social acceptance of technology (Venkatesh and Davis, 2000). However, it is not a simple, linear relation, but a relation with the moderation and mediation of multiple factors.

Second, this study verifies that AIST is a key mediator in the relation between AISD and SCA (the value of the indirect effect a × b = 0.126, p < .001), which emphasizes the importance of social factors (such as social trust) in the process of technological development (Di Maio, 2014). To be more specific, the progress in AI technology directly improves SCA and also indirectly does so by building up social trust in AI technology. Moreover, this study finds that AIEP serves as a significantly negative moderator in the relation between AIST and SCA (β = −.055, p < .05), highlighting the complexity and importance of ethical consideration in the process of AISD. Specifically, the positive impact of trust in technology on SCA weakens when the public perceptions of ethical problems about AI intensify. This shows a process of rational trade-off by the public when accepting new technologies.

Third and finally, this paper reveals that AIEP serves as a moderated mediator in the entire relation chain of AISD-AIST-SCA (effect value = −0.025). This supports the core idea of the socio-techno-ethical co-construction theory, or that technological development, social acceptance, and ethical consideration form a complex system in which they influence each other, reaching a dynamic balance (Rogers et al., 2014).

In conclusion, this study develops and verifies a holistic model that takes into account direct impacts, mediation, moderation, and moderated mediation, providing more insights into the mechanism for the social acceptance of AI technology.

The findings indicate that AI technology development has a significantly positive impact on societal acceptance (H1 is supported), which aligns with the relative advantage construct in IDT. This suggests that when a technology enhances efficiency and convenience, its societal acceptance increases. Additionally, the mediating effect of AI technology trust (H2 and H3 are supported) can be explained through the concept of observability, indicating that when a technology exhibits greater operational transparency, public trust in it strengthens. Furthermore, the moderating effect of ethical perception (H4 and H5 are supported) corresponds to the compatibility dimension in IDT, as technologies that are perceived to align with societal ethical norms tend to achieve higher acceptance. Although this study does not explicitly examine the diffusion pattern of AI technology, the results remain conceptually consistent with key IDT principles, further reinforcing the theoretical foundation of this research.

From an innovative perspective, this study contributes to the literature in three key dimensions: theoretical framework, research methodology, and empirical analysis. First, the literature on AI ethics, trust, and societal acceptance has predominantly relied on the Technology Acceptance Model (TAM) as the primary theoretical foundation, often overlooking the dynamic processes of technology diffusion and sociotechnical interactions. By integrating IDT and Science and Technology Studies (STS), this study complements TAM’s individual-level perspective, offering a more comprehensive analytical framework that extends the discussion of technology acceptance beyond individual behavior to the broader societal diffusion and ethical adaptation of AI technology.

Second, while other studies have explored the impact of ethical perception on technology trust and acceptance, most have primarily examined its direct effects or independently analyzed the mediating role of trust, with limited focus on how ethical perception dynamically moderates this mechanism. By employing Moderated Mediation Analysis, this study further elucidates the critical role of ethical perception in the relationship between technology trust and societal acceptance, addressing theoretical gaps in the literature.

Finally, many other studies on AI ethics and trust have predominantly relied on qualitative interviews or small-scale surveys. In contrast, this study utilizes 914 valid survey responses and applies Hayes’ PROCESS Model 14 for quantitative analysis, empirically validating the mediating effect of technology trust and the moderating role of ethical perception with greater statistical robustness. This enhances the external validity of the findings. These contributions not only deepen our understanding of the societal acceptance mechanisms of AI technology, but also provide empirical evidence and scholarly insights to inform future technology diffusion strategies and policy development.

In summary, the findings of this study enrich the theory about technological acceptance and also provide an empirical basis for the development and effective promotion of AI technology. Specifically, the theoretical implications and practical contributions of this study are as follows.

Theoretical Implications

This study expands TAM: The scope of application of the traditional TAM is expanded through the integration of AIST and AIEP, which not only broadens TAM theoretically, but also improves its applicability in explaining the process of accepting highly complex technologies with potential risks (such as AI) (Venkatesh and Davis, 2000). In particular, this study reveals the role of ethics in the process of technological acceptance as a moderator, providing a new perspective for TAM.

It deepens IDT: The findings in this study provide new insights into IDT (Rogers et al., 2014), especially in terms of explaining the diffusion of innovative technologies with high uncertainty and social impacts, such as AI. The results show that both trust in technology and the ethical perceptions regarding technology play key roles in innovation diffusion, giving a new theoretical framework for IDT in explaining the social diffusion of complex technological systems.

It enriches the Socio-Technical Systems Theory: The results empirically support this theory by highlighting the importance of social factors (such as trust) and ethical factors in the process of technological development (Di Maio, 2014). It leads to a better understanding of the dynamic interaction between the technological system and the social system and provides a new perspective for the application of the theory in the field of AI.

It develops a new holistic model for the acceptance of AI technology: By building and verifying a holistic model that takes into account direct impacts, mediation, and moderated mediation, this study provides a more complete theoretical framework for understanding the mechanism for the social acceptance of AI technology. The model integrates technological, social, and ethical factors and provides a theoretical basis for future research that can be used for reference and expansion.

Practical Contributions

This study provides guidance on strategies for AISD: The results show the direct positive impact of AISD on SCA (β = .572, p < .001), which presents crucial inspiration for technology developers and policymakers—that is, the continuous promotion of the innovation of AI technology has a positive effect on SCA. Therefore, when formulating strategies for AI development, continuous resource investments should be made in technological innovation, and guarantees should be offered so that technological progress can practically respond to social demands.

It optimizes the mechanism for building up trust in AI: Because AIST serves as a key mediator in the relation between AISD and SCA (the value of the indirect effect a × b = 0.126, p < .001), this study provides an empirical basis for enterprises and governments to optimize the mechanism for building up trust in AI. Specifically, AI systems can be made more transparent and more explainable, and measures such as establishing a system of performance evaluation and certification can be taken, so as to enhance social trust in AI technology and to indirectly improve SCA.

It improves the governance framework for AI ethics: This study finds that AIEP serves as a significantly negative moderator in the relation between AIST and SCA (β = −.055, p < .05), which provides an important basis for improving the governance framework for AI ethics. Policymakers and enterprise managers should be aware that purely enhancing the ethical standards may weaken the positive impact of AIST on SCA. In view of this, it is imperative to find a balance between strict ethical standards and technological development when making codes of ethics, so as to guarantee the development of AI technology.

Differentiated promotion strategies are formulated: As AIEP is found to be a moderator in this study, enterprises and governments should take more precise, differentiated strategies for promoting AI technology. In the face of groups with high ethical sensitivity, besides emphasizing ethical guarantees concerning AI technology, the practical benefits of technology should be shown more in order to offset the potential negative impact of ethical consideration on technology acceptance. Furthermore, when AI ethical perception is low, technology trust significantly enhances societal acceptance, meaning that the public tends to determine acceptance based primarily on the functionality and transparency of the technology. However, when AI ethical perception is high, even with a high level of technology trust, the public may still remain cautious about the ethical risks associated with AI technology, thereby weakening the impact of trust on acceptance.

It creates a long-term mechanism for monitoring and evaluation: The results reveal the complex, dynamic interactions between AISD, AIST, AIEP, and SCA (value of the moderated mediation = −0.025), according to which ethical perceptions are not only a moderating variable, but also a variable that affects how trust operates as a mediator. Specifically, the positive impact of trust on SCA weakens when there is a high level of ethical perceptions. Therefore, competent authorities should regularly evaluate SCA, AIST, and AIEP and promptly adjust the strategies and governance measures for AISD according to the dynamic changes in the aforementioned factors.

In conclusion, this study both enriches and expands TAM and IDT from the theoretical perspective as well as provides an important empirical basis and a decision-making reference for the actual development, application, and governance of AI technology. This can help promote the balanced development of AI technology and society.

This study does have several limitations. First, although this study confirms that AIEP attenuates the impact of AIST on SCA, it does not directly test the underlying mechanism of this effect. Future research could further explore potential influencing factors, such as risk sensitivity, technology transparency, and social norms, to examine how AIEP modifies the pathway through which AIST affects SCA. Qualitative methods, such as in-depth interviews or focus group discussions, could be employed to gather richer user insights, complementing the limitations of survey-based data.

Second, this study relies on cross-sectional survey data, which prevent capturing long-term causal relationships between variables. Therefore, causal inferences should be interpreted with caution. Future research could adopt a longitudinal study design to track changes in AI technology trust and ethical perception over time, thereby verifying their long-term effects on societal acceptance and enhancing the robustness of the findings. Given that AI technology trust and ethical perception may evolve due to technological advancements, policy adjustments, or social events, it is essential to examine these dynamic shifts. For instance, public acceptance of AI technology may fluctuate in response to increased transparency or negative news coverage. Since this study does not account for such temporal variations, future research could conduct longitudinal data collection to analyze trends in AI-related trust and ethical perception and explore how these dynamics influence societal acceptance of AI. This would contribute to a more comprehensive explanatory model.

Third, since individual acceptance of AI technology may be influenced by multiple factors, future studies could incorporate control variables such as personal innovativeness and risk tolerance to further confirm whether the impact of AIST on SCA remains statistically significant. This would enhance the robustness of the findings and provide more precise policy recommendations.

Finally, as the findings of this study are derived from a specific cultural and regulatory environment, they may not be generalizable across different national or legal contexts. Future research could conduct cross-cultural comparisons to examine whether regulatory frameworks and ethical norms influence the AI technology trust and societal acceptance nexus. This would help assess the applicability of the policy recommendations proposed in this study and contribute to global AI governance discussions.

Based on the aforementioned findings and conclusions of this study, the following suggestions are proposed to advance the development and social acceptance of AI technology.

The connection between AISD and the social demands for AI technology should be enhanced: As the results reveal the direct positive impact of AISD on SCA, it is suggested that policymakers and technology developers pay more attention to aligning AI technology with social demands. Specifically, a consulting mechanism for AISD with the involvement of stakeholders can be established to ensure that the development orientation of AI technology practically answers social concerns and demands, thereby improving technological practicality and acceptability.

Mechanisms for building AIST should be created: Considering that AIST serves as a key mediator between AISD and SCA, competent authorities should make efforts to create mechanisms for building AIST, which include: (a) making AI technology more transparent by means such as making public the principles of algorithms and rules for the use of data; (b) enhancing the interpretability of AI systems so that users can understand the basis for AI decision-making; and (c) creating a system of performance evaluation and certification for AI technology to provide a reliable, trustworthy reference for the public.

The governance framework for AI ethics should be improved: As AIEP is found to be a significant moderator between AIST and SCA in this study, competent authorities should be dedicated to improving the governance framework for AI ethics. Measures they can take include: (a) formulating codes of AI ethics and corresponding codes of conduct to provide specific guidance on the development and application of AI technology; (b) creating ethical review mechanisms for AI to enable the ethical review of high-risk AI applications; and (c) promoting ethical education regarding AI to improve the ethical awareness of developers and users.

Differentiated strategies for AI promotion should be implemented: As AIEP is found to be a moderator in this study, differentiated strategies should be taken for promoting AI technology. In the face of groups with high ethical sensitivity, the focus should be placed on delivering ethical guarantees concerning AI technology. For groups with low technology acceptance, the focus can target the practical benefits and security of AI technology to build trust and improve acceptance.

Interdisciplinary research and cooperation should be promoted: Considering the complex relations among AISD, AIST, AIEP, and SCA, academia-industry and interdisciplinary cooperation should be enhanced. Interdisciplinary research teams can be organized by including technological experts, sociologists, scholars of ethics, and policymakers to dig deeper into the social impacts of AI technology and the mechanism for accepting AI technology. Doing so provides a more comprehensive theoretical support for policy making and technological development.

A dynamic mechanism for monitoring and feedback should be established: In view of the rapid development of AI technology and the dynamic changes in public attitudes toward AI technology, a long-term mechanism for monitoring and feedback should be set up. Based on the mechanism, social attitude surveys can be conducted regularly, a system for evaluating the impacts of AI technology can be established, and an AI governance platform with public involvement can be created. The mechanism may allow changes in SCA to be tracked in a timely manner, with development strategies and governance measures adjusted accordingly.

International cooperation and standardization should be strengthened: With a view to the global impact of AI technology, international cooperation should be enhanced to promote the internationalization of the standards and codes of ethics for AI technology. This not only helps improve the overall reliability and acceptance of AI technology, but also promotes the development and effective governance of AI technology worldwide.

In conclusion, adopting the aforementioned suggestions helps ensure that the development path of AI aligns with social values and social ethics while the innovation of AI technology is promoted. Overall, harmonious progress between AI technology and society can be realized, and the contribution of AI technology to human welfare can be maximized.

Appendix

Assessment of the Measurement Model.

| Variable | Item | λ | α | CR | AVE |
|---|---|---|---|---|---|
| AISD | AISD1 | .867 | .891 | .874 | .673 |
| | AISD2 | .856 | | | |
| | AISD3 | .676 | | | |
| | AISD4 | .843 | | | |
| | AISD5 | .844 | | | |
| SCA | SCA1 | .730 | .842 | .828 | .612 |
| | SCA2 | .895 | | | |
| | SCA3 | .904 | | | |
| | SCA4 | .719 | | | |
| | SCA5 | .626 | | | |
| AIST | AIST1 | .849 | .842 | .848 | .637 |
| | AIST2 | .872 | | | |
| | AIST3 | .609 | | | |
| | AIST4 | .796 | | | |
| | AIST5 | .835 | | | |
| AIEP | AIEP1 | .899 | .845 | .926 | .765 |
| | AIEP2 | .894 | | | |
| | AIEP3 | .794 | | | |
| | AIEP4 | .896 | | | |
| | AIEP5 | .885 | | | |

Note. λ = factor loadings; α = reliability (Cronbach’s α); CR = composite reliability; AVE = average variance extracted.
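The AVE column can be checked against the factor loadings, since AVE is conventionally the mean of the squared standardized loadings of a construct's items. The sketch below applies that conventional formula to the loadings listed in the table; the dictionary and function names are assumptions introduced for illustration.

```python
# Factor loadings copied from the measurement model table above.
LOADINGS = {
    "AISD": [0.867, 0.856, 0.676, 0.843, 0.844],
    "SCA": [0.730, 0.895, 0.904, 0.719, 0.626],
    "AIST": [0.849, 0.872, 0.609, 0.796, 0.835],
    "AIEP": [0.899, 0.894, 0.794, 0.896, 0.885],
}


def average_variance_extracted(loadings):
    """Mean of squared standardized loadings for one construct."""
    return sum(lam ** 2 for lam in loadings) / len(loadings)


for construct, lams in LOADINGS.items():
    print(construct, round(average_variance_extracted(lams), 3))
# AISD 0.673
# SCA 0.612
# AIST 0.637
# AIEP 0.765
```

All four values match the AVE column and exceed the commonly used 0.5 threshold for convergent validity.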

Ethical Considerations

The manuscript has not been submitted to other journals. The manuscript has not been published elsewhere.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

The dataset used in this study is available from the corresponding author upon reasonable request.