Abstract

Prior exposure to misinformation has been shown to increase belief in that misinformation when it is encountered again. This phenomenon, called the repetition effect, is common but understudied. This study examines the influence of prior exposure to misinformation on the misinformation effect in an experimental setting. Using data from an experiment embedded in an online survey (N = 1,645), the study examined the effect of prior exposure to misinformation in two commonly observed scenarios: a misinformation-only condition and a correction condition. The results confirmed the repetition effect, showing that presenting corrections may not protect individuals who have previously been exposed to misinformation when they encounter it again. In addition, when no correction was presented, the more individuals trusted the correction sources, the more they believed the misinformation they had previously been exposed to. Knowledge can sometimes weaken, but cannot eradicate, the effect of prior exposure to misinformation. The findings raise study-design questions for misinformation research about how to balance external validity against the confounding effects of prior exposure to real-life misinformation. They also prompt a reassessment of theories of motivated reasoning and selective exposure, as well as of the role of knowledge in combating misinformation.

Plain language summary

This study tests the effect of prior exposure to misinformation in two scenarios: (1) presenting previously encountered misinformation alone, and (2) presenting previously encountered misinformation followed by a correction. It addresses three questions: (1) whether prior exposure to misinformation leads to greater trust in that misinformation (a test of the repetition effect); (2) whether prior exposure affects trust in misinformation when a correction is presented (extending research on prior exposure and the repetition effect); and (3) which factors moderate the effect of prior exposure in each scenario, with or without a correction (extending research on differential susceptibility to misinformation). The results showed that presenting corrections may not protect individuals who have previously been exposed to misinformation when they see it again. They also revealed a backfire effect of correction-source credibility: when no correction was shown, the more individuals trusted the correction sources, the more they believed the misinformation they had previously seen. Knowledge can sometimes weaken, but cannot eradicate, the effect of prior exposure to misinformation.

Introduction

Misinformation has become a pressing concern worldwide, and the number of studies on misinformation has grown rapidly in the past few years. Controlled experiments, which can identify causal mechanisms, are frequently used in misinformation research. For example, one experiment presented misinformation to participants with different prior attitudes and found that those whose attitudes were congruent with the misinformation perceived it as more credible (Hameleers et al., 2021). In addition, a five-day experiment in Singapore found that when a correction was sent to a chat group, members rated it as more credible when it came from a familiar source than when it came from a source they had never met (Tandoc et al., 2023).

Previous experiments on misinformation and how to counter it have often used “real-life” misinformation messages (i.e., messages actually found circulating among individuals or groups) as experimental stimuli. This practice can increase the external validity of experimental findings. However, participants may have been exposed to a chosen stimulus before taking part in the experiment. This risk is hard to avoid because scholars must select misinformation messages that professionals have already established as false (e.g., Hameleers et al., 2021; Tandoc et al., 2023), and professionals mostly focus on topics that attract public attention (Soo et al., 2023).

A concern about using real-life misinformation messages as experimental stimuli is that prior exposure can increase participants’ belief in that misinformation. Allport and Lepkin (1945) observed that the strongest predictor of belief in wartime misinformation was simple repetition, a phenomenon since termed the repetition effect. In addition, research on the truth effect shows that people believe repeated information more than novel information (for a review, see Dechêne et al., 2010). The truth effect can be explained by processing fluency, the subjective ease of ongoing conceptual or perceptual processes (Hassan & Barber, 2021): repetition increases the fluency of mental processing, and that fluency is taken as a cue to truth. Thus, we argue that determining the impact of prior exposure to misinformation is important for robust causal inference with relatively high external validity.

This study tested the effect of prior exposure to misinformation in two scenarios: (1) presenting previously encountered misinformation alone, and (2) presenting previously encountered misinformation followed by a correction. It had three research goals: (1) to examine whether prior exposure to misinformation leads to greater trust in the misinformation (a test of the repetition effect); (2) to investigate whether prior exposure to misinformation affects trust in misinformation when a correction is presented (extending studies of prior exposure and the repetition effect); and (3) to identify the factors that moderate the effect of prior exposure in each scenario, with or without a correction (extending findings on differential susceptibility to misinformation).

Objectives, Problems, and Significance


The significance of this study lies in three aspects. First, it deepens understanding of the mechanisms that inhibit or suppress the repetition effect of misinformation exposure. Second, it raises questions about conclusions drawn from experimental studies that examined the impact of misinformation using real-life materials participants may have seen before. Third, it offers an opportunity to reassess popular explanations of the cognitive effects of misinformation exposure, such as motivated reasoning and selective exposure, as well as the role of knowledge in combating misinformation.

Literature Review

The Repetition Effect of Misinformation

Prior exposure to misinformation increases its perceived accuracy when it is encountered again (Hassan & Barber, 2021; Pennycook et al., 2018), and individuals tend to believe it regardless of whether the information was identified as incorrect at the earlier encounter (Hasher et al., 1977; Vellani et al., 2023). This is called the repetition effect, or the illusory truth effect. The underlying mechanism is familiarity. A sense of familiarity leads to fluent cognitive processing, and individuals tend to pay less attention to the veracity of a message that feels familiar (Swire et al., 2017). Although people can identify the correct answers when asked to attend more carefully to previously seen misinformation, their belief in it still increases in the absence of such a prompt (Fazio et al., 2015). This is because the fluency produced by familiarity further reinforces the perceived truthfulness of the message: people tend to misattribute processing fluency to the reliability of the information rather than to the familiarity created by repeated exposure (Fragale & Heath, 2004; Henderson et al., 2021). As a result, individuals are reluctant to revise prior knowledge, which produces cognitive bias (Kahneman, 2011). The repetition effect persists even after accounting for motivated reasoning and for information with low plausibility (Pennycook et al., 2018). Based on these findings, this study posits the first hypothesis:

H1: People who have previously been exposed to misinformation are more likely to trust it than those who have not been exposed to the misinformation.

Can presenting a corrective message eliminate the repetition effect of misinformation? The answer may be no. Many studies have shown that the influence of misinformation exposure is long-lasting. Even after exposure to, or acceptance of, a correction, misinformation continues to mislead people (e.g., Pennycook et al., 2018), a finding known as the continued influence effect of misinformation (for a review, see Walter & Tukachinsky, 2020). Furthermore, the longer the interval between initial exposure to misinformation and subsequent exposure to a correction, the more likely the misinformation is to remain in long-term memory (Ecker et al., 2015; Walter & Tukachinsky, 2020). The effect of a correction mostly stays in short-term working memory, so when long-term memory is activated, the short-term trace often fails to exert influence (Ecker et al., 2015). Meanwhile, the cognitive fluency induced by familiarity also contributes to the credibility of misinformation (Johnson & Seifert, 1994; Swire et al., 2017). Therefore, repeated exposure to misinformation may strengthen people’s trust in misinformation even when a correction is presented.

H2: People who have previously been exposed to misinformation are more likely to trust it, even when a correction is presented, than those who have not been exposed to the misinformation.

The Moderating Roles of Trust and Knowledge

Although people’s judgments of information authenticity may be affected by many factors, there are usually two cognitive paths: (1) verifying information through one’s own judgment, which requires substantial knowledge; and (2) verifying it through trust, delegating the judgment to a trusted third party (Tandoc et al., 2018). Because knowledge and trust are two core elements that directly affect judgments of misinformation, many studies have investigated their short-term effects. However, studies of the repetition effect of misinformation have rarely examined these knowledge- and trust-based paths, for two major reasons.

First, some scholars assume that source trust and knowledge have no lasting effect. On the one hand, the sleeper effect implies that the effect of source trust operates only over a short period (Heinbach et al., 2018). On the other hand, knowledge does not seem to immunize people against misinformation, a conclusion summarized as the knowledge futility theory (e.g., Arkes et al., 1989; Fazio et al., 2015; Yu & Shen, 2021).

Second, the mechanisms involved in repeated exposure are complex and difficult to study. In real life, repeated exposure to misinformation often occurs at separate times, so continuous changes in the relevant factors, including cognitive processing, accumulation, forgetting, and retrieval, need to be taken into account. Cognitive resources, as well as the strategies formed through long-term cognitive practice, also change over time. This complex process has rarely been covered by previous research and is difficult to capture in short-term experimental settings.

Nevertheless, it is important to explore these cognitive paths in the repetition effect of misinformation, because repeated exposure to misinformation more closely matches real-life scenarios than one-time contact does.

Examining Trust as a Moderator

The common sources of corrections vary across cultural contexts. In the U.S., for example, third-party fact-checking organizations such as PolitiFact and Snopes have become major sources of corrections in recent years; their founders are usually former journalists or experts (Jones, 2021). In China, a growing number of third-party fact-checking sites (e.g., Guokr) are being founded to correct misinformation. But in an authoritarian country, political or social misinformation is usually verified by government institutions for the purpose of information governance. For example, the China Food Misinformation-Refuting website was founded by the Cyberspace Administration of China and the Ministry of Agriculture and Rural Affairs. Many Chinese provinces have their own fact-checking websites developed by local governments, such as Shanghai Piyao (“Piyao” means “refuting misinformation” in Chinese) and Jiangsu Piyao.

Fact-checking agencies usually detect misinformation only after it has circulated for some time and then publish a corresponding correction. However, in a hybrid media environment where individuals’ information channels and sources are diverse and dispersed, not everyone has the chance to encounter corrective messages. Research shows that people tend to avoid channels they distrust, especially mainstream and alternative news media (Kalogeropoulos et al., 2019). Therefore, individuals with higher trust in fact-checking agencies are more likely to encounter corrections of fake news in their daily information consumption.

Trust is a cognitive cue that activates heuristic processing of information (Cook, 2001). When people have confidence in a source, they tend to forgo in-depth thinking and simplify information processing (Traberg et al., 2024). For example, when individuals accept a correction based on trust in its source, heuristic processing does not remove the false information from long-term memory but only labels the misinformation as “false” to distinguish it from true information (Mitchell et al., 2005). However, because such labels are forgotten faster than the misinformation itself (Brown & Nix, 1996), a correction effect based on shallow processing will disappear as the labels are forgotten. At the same time, because corrections often repeat the misinformation while presenting the truth, exposure to a correction may activate deep memory related to the misinformation (van den Broek & Kendeou, 2008) and thus increase its perceived familiarity. Therefore, when the label is forgotten, the short-term suppressing effect of the correction disappears, while the sense of familiarity remains. As a result, when individuals encounter the misinformation again, familiarity may increase processing fluency and trust in the misinformation (Johnson & Seifert, 1994; Swire et al., 2017). Since fluent processing can make people less alert to misinformation (Schwarz et al., 2007), individuals who perceive the misinformation as familiar are more susceptible to it (Henderson et al., 2021). In sum, when individuals are repeatedly exposed to misinformation without a subsequent correction, an earlier acceptance of a correction based on trust in its source may already have been forgotten, while perceived familiarity with the misinformation further increases its acceptance.
In other words, the correction effect based on trust in the correction source may be transformed into a reinforcement of the repetition effect of misinformation. Therefore, we hypothesize the following:

H3: A higher level of trust in the correction source strengthens the positive impact of prior exposure to misinformation on trust in misinformation when a correction is not presented.

Will the effect in H3 differ if participants are shown a correction after repeated exposure to misinformation? In principle, the outcome would combine the long-term effect of repeated exposure to misinformation with the immediate effect of the correction and its source. Both effects rely on heuristic cues: the former on cognitive fluency triggered by familiarity, the latter on trust in the source. Will trust in the correction source quickly increase trust in the correction and thereby eliminate the repetition effect of misinformation?

Brashier and Marsh (2020) point out that when making authenticity judgments, people often take into account the various cues they are exposed to. A study found that the source is an important factor in increasing the correction effect (Yu et al., 2021). Accordingly, this study proposes that when no correction is presented, trust in the correction source may only strengthen the memory of the misinformation and hence increase misbeliefs after repeated exposure; when a correction is presented, however, trust in the correction source may immediately activate trust in the correction. Is this immediate activation of trust in the source powerful enough to counter the repetition effect of misinformation? We therefore pose the following research question:

RQ1: How does trust in correction sources affect the positive impact of prior exposure to misinformation on trust in misinformation when correction is presented?

Examining Knowledge as a Moderator

Knowledge is a cognitive resource accumulated over the long term. Under repeated exposure to misinformation, knowledge may be able to counter the simplified cognitive processing activated by familiarity-induced fluency. J. P. Smith et al. (1994) point out that the long-term accumulation of knowledge changes people’s cognitive resources at both concrete and abstract levels. Domain-specific knowledge relates directly to the completion of specific tasks, whereas the long-term accumulation of general knowledge helps to form more complex cognitive structures. Domain-specific knowledge, a concrete cognitive resource, seems to immunize people only against misinformation on specific topics (Arkes et al., 1989), whereas general knowledge, an abstract cognitive resource, may train people to process information deeply and help them identify misinformation across different fields.

It is widely believed that people who are susceptible to misinformation lack knowledge in the relevant field. Therefore, most previous studies have focused on the impact of concrete cognitive resources (e.g., Arkes et al., 1989; Fazio et al., 2015; Min et al., 2020). However, studies have shown that familiarity stops people from further comparing information when their existing knowledge partially matches new information (Reder & Cleeremans, 1990; E. R. Smith et al., 2006). Thus, a reserve of concrete cognitive resources does not always help in combating misinformation (Fazio et al., 2015); worse, it may instead strengthen the acceptance of misinformation through familiarity (Arkes et al., 1989; Fazio et al., 2015). Can general knowledge, as an abstract cognitive resource, then combat misinformation?

Confronting cognitive simplification requires people to actively use cognitive resources to process information in depth, which takes both motivation and ability. In other words, individuals need to be motivated to deploy cognitive resources and able to process and compare complex information in daily news consumption, including the consumption of misinformation and corrections. In studies of news consumption, the motivation and ability to combat misinformation are often reflected in people’s news consumption behavior (Baum, 2003). Individuals who consume “soft news” are more likely to sacrifice knowledge acquisition for instant pleasure, whereas those who consume “hard news” (e.g., serious political news) acquire knowledge by processing complex, in-depth text (Motta, 2013).

Although serious news may also be encountered when consuming soft news, only in-depth processing of hard news can transform such information into general knowledge stored in long-term memory (Baum, 2003). In other words, the accumulation of general knowledge not only indicates an individual’s store of cognitive resources but also shows the ability to actively deploy those resources when processing news. Accumulated general knowledge signals greater cognitive maturity because it enables people to learn about and process complex issues (Furnham et al., 2008; Neuman, 1986) and to avoid oversimplification based on appearances (Gomez & Wilson, 2001). It also reflects an individual’s inherent preference for in-depth information processing (Eveland, 2004). Therefore, general knowledge has the potential to help individuals resist cognitive simplification based on fluency and to provide both the cognitive resources and the motivation to explore internal conflicts between misinformation and corrections (Kendeou et al., 2014; Rhee & Cappella, 1997). Brashier et al. (2021) found that knowledge has predictive power for misinformation acceptance similar to that of cognitive reflection, another abstract cognitive resource. To sum up, this study expects that when a correction is presented, abstract cognitive resources, represented here by general knowledge, may weaken the repetition effect of misinformation.

H4: Knowledge weakens the positive impact of prior exposure to misinformation on trust in misinformation when correction is presented.

However, when no correction follows repeated exposure to misinformation, can abstract cognitive resources counter the cognitive simplification (e.g., cognitive fluency) triggered by familiarity? Studies have found that knowledgeable individuals are more strongly motivated to resist cognitive simplification (Kudrnáč, 2020; Vegetti & Mancosu, 2020). Specifically, knowledgeable people do not simply accept a news story because it is consistent with their opinions (Kudrnáč, 2020; Vegetti & Mancosu, 2020), whereas individuals with little knowledge tend to maintain cognitive coherence as far as possible with minimal effort (Gomez & Wilson, 2001). Therefore, knowledge as a cognitive resource seems able to counter cognitive fluency. However, seven studies by de Keersmaecker et al. (2020) show that the effect of cognitive fluency is so powerful that other cognitive resources hardly offset the repetition effect of misinformation. Given that previous studies have offered different explanations and outcomes regarding the role of cognitive resources in combating misinformation, we propose a research question for repeated exposure to misinformation without correction:

RQ2: How does knowledge affect the positive impact of prior exposure to misinformation on trust in misinformation when correction is not presented?

The Research Framework

Drawing on the theory of the repetition effect, the current study examines the impact of prior exposure to misinformation on trust in misinformation. To reveal the cognitive mechanism behind this relationship, knowledge, an individual cognitive resource, was examined as a moderator. Trust in the correction source, a cognitive cue, was also expected to moderate the relationship. The model is examined in two scenarios: with and without a correction. The research framework is shown in Figure 1.

Figure 1. The research framework.

Method

Data

This study used experimental data embedded in an online survey project, Social Beliefs of Internet Users, which addresses the perceptions and behaviors of active internet users in mainland China (Ma, 2017). The longitudinal project is funded by the National Social Sciences Foundation and has been conducted every 1 or 2 years since 2012; it has become one of the most important datasets for studying the role of internet use in China. The 2017 survey was conducted from March to April 2017. It used quota sampling and recruited respondents from WenJuan.com, a third-party company that provides web-based survey services. Cookie checks were used to filter out repeated responses and improve data quality. In total, 2,379 valid questionnaires were collected. The survey randomly assigned respondents to several experimental scenarios, two of which (misinformation only and misinformation with corrections) were used in this study; the answers of the 1,645 respondents assigned to these two scenarios were analyzed in hypothesis testing.

Procedure and Stimuli

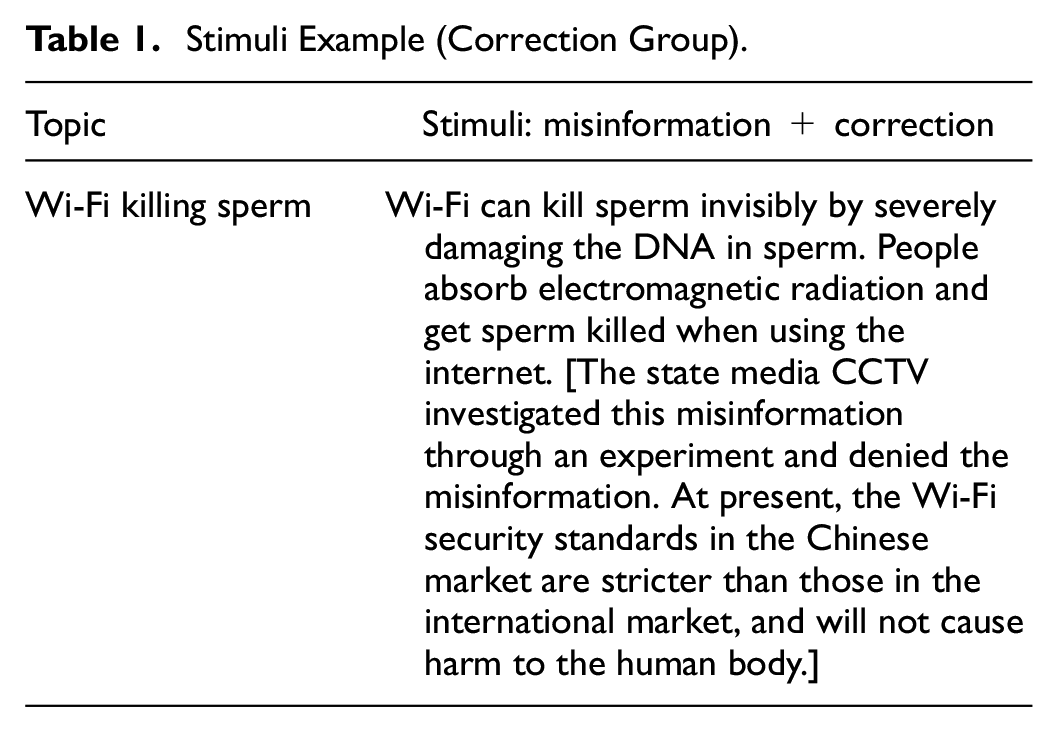

During the survey, 1,645 participants were assigned to the two scenarios of this study. In one scenario, participants were exposed to four pieces of misinformation that had circulated virally on the internet in 2017. In the other scenario, participants were exposed to the same four pieces of misinformation, each followed by a corrective message. The source of the correction, either the government or the mainstream media, was shown in the corrective message. An example stimulus for the correction group is shown in Table 1. In both scenarios, participants were asked whether they had encountered each misinformation message before the experiment and whether they believed it to be true.

Table 1. Stimuli Example (Correction Group).

The data fit the research goals for three reasons. First, although this study examines the repetition effect of misinformation in an experimental setting, it aims to imitate the real-life context of repeated exposure. Instead of fabricating misinformation and presenting it repeatedly within a short time during the experiment, this study used four pieces of misinformation that had circulated in real life and compared participants who encountered the misinformation for the first time in the experiment with those who had encountered it before. In this way, the difference between repeated and non-repeated exposure originates from natural differences among participants (e.g., media use, information choice) rather than from the experimental setting, which increases the external validity of this study. Second, the experiment imitates the real-life context through its selection of misinformation messages and correction sources. On the Chinese internet, hostile misinformation accounts for the highest proportion, followed by fearful and neutral misinformation (Ruan & Yin, 2014). The experiment used two hostile misinformation messages, one fearful, and one neutral, covering politics, foreign social issues, and domestic social issues. In addition, there are few fact-checking websites in China; instead, the government and government-controlled mainstream media (e.g., People’s Daily) are the major sources of correction (Guo, 2020). Third, participants assigned to the two conditions should not differ significantly.
A series of chi-square tests and independent-samples t-tests showed no significant differences between the two conditions in demographics (e.g., gender, income, education level, place of residence, political affiliation), previous exposure to the displayed misinformation, trust in the correction source, knowledge of current affairs, and media use (i.e., use of official and unofficial information channels). Therefore, the data meet the requirement of between-group homogeneity for randomized experiments.
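The balance checks described above rely on two standard statistics. The stdlib-only Python sketch below computes Student’s pooled-variance t statistic and Pearson’s chi-square statistic on made-up inputs. It is an illustration, not the authors’ code: the function names are hypothetical, the pooled-variance form of the t-test is an assumption (the text does not say which variant was used), and p-values, which require the t and chi-square distributions, are left to a statistics library such as SciPy (`scipy.stats.ttest_ind`, `scipy.stats.chi2_contingency`).

```python
import math
from statistics import mean, variance


def pooled_t(group_a, group_b):
    """Student's independent-samples t statistic using a pooled variance."""
    n_a, n_b = len(group_a), len(group_b)
    # Weight each group's sample variance by its degrees of freedom.
    pooled_var = ((n_a - 1) * variance(group_a)
                  + (n_b - 1) * variance(group_b)) / (n_a + n_b - 2)
    std_err = math.sqrt(pooled_var * (1 / n_a + 1 / n_b))
    return (mean(group_a) - mean(group_b)) / std_err


def pearson_chi_square(table):
    """Pearson chi-square statistic for an r x c table of observed counts."""
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    n = sum(row_totals)
    chi2 = 0.0
    for i, row in enumerate(table):
        for j, observed in enumerate(row):
            # Expected count under independence of rows and columns.
            expected = row_totals[i] * col_totals[j] / n
            chi2 += (observed - expected) ** 2 / expected
    return chi2


# Made-up inputs for illustration only (not the study's data).
print(round(pooled_t([1, 2, 3], [4, 5, 6]), 3))            # -3.674
print(round(pearson_chi_square([[10, 20], [20, 10]]), 3))  # 6.667
```

The t statistic has n_a + n_b − 2 degrees of freedom and the chi-square statistic has (r − 1)(c − 1); statistics close to zero on variables such as gender or knowledge are what between-group homogeneity looks like.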

Measurements

Trust in Misinformation

Respondents were asked whether the story in each stimulus could happen in reality. Answers of “yes” were coded as 1, and answers of “no” or “uncertain” were coded as 0. The sum of the four items was used to measure trust in misinformation (no correction group: M = 1.00, SD = 1.05; correction group: M = 0.92, SD = 1.10).
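The coding rule above can be expressed as a small scoring function. This is a minimal sketch: the answer labels and the function name are hypothetical, and it only illustrates that “uncertain” is collapsed with “no”, so the index (0 to 4) counts the stories a respondent explicitly judged plausible. The prior-exposure index is built the same way from four heard/never-heard items.

```python
# Hypothetical answer labels; the questionnaire's exact wording may differ.
ITEM_CODES = {"yes": 1, "no": 0, "uncertain": 0}


def trust_in_misinformation(responses):
    """Sum four binary items: 'yes' = 1, 'no' or 'uncertain' = 0 (range 0-4)."""
    if len(responses) != 4:
        raise ValueError("expected one answer per misinformation stimulus (4 items)")
    return sum(ITEM_CODES[answer] for answer in responses)


print(trust_in_misinformation(["yes", "uncertain", "no", "yes"]))  # 2
```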

Prior Exposure to Misinformation

Participants were asked whether they had heard of each misinformation message (1 = heard, 0 = never heard). Those who had heard of a message were considered to have been exposed to it repeatedly in the experiment. The sum of the four items measured the extent of repeated exposure to misinformation (no correction group: M = 1.82, SD = 1.26; correction group: M = 1.86, SD = 1.31). Higher scores indicate that participants had been repeatedly exposed to more misinformation messages.

Trust in Correction Source

The survey measured participants’ trust in the two correction sources presented: the government and national mainstream media. Participants were asked, “How much do you trust the government/national mainstream media (e.g., CCTV) as a source of information when emergencies occur?” and rated their trust on a 5-point Likert scale, where 1 means “not at all.” The mean of the two items formed the index of trust in the correction source (no correction group: M = 3.89, SD = 0.90; correction group: M = 3.84, SD = 0.90).

Knowledge About Important Issues

To measure general knowledge, we assessed knowledge about important issues using ten questionnaire items. Example questions include “Who is the current vice president of China?”, “During which prime minister’s term was China’s agricultural tax abolished?”, and “How long is one U.S. president’s term?” Each correct answer was coded as 1 and each incorrect answer as 0. The total score across the 10 items measured the extent to which respondents knew about important issues in the world (no correction group: M = 4.27, SD = 3.03; correction group: M = 4.39, SD = 3.14, α = .83).
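The knowledge score and its reliability coefficient (α) can be illustrated with a short sketch. It assumes responses are already coded 1/0 in a respondents-by-items matrix and applies the standard Cronbach’s alpha formula, α = k/(k − 1) × (1 − Σ item variances / variance of total scores), which for binary items coincides with KR-20. The toy data and function names are hypothetical, not the survey’s.

```python
from statistics import pvariance


def knowledge_scores(item_matrix):
    """Total correct answers per respondent (rows = respondents, cols = items)."""
    return [sum(row) for row in item_matrix]


def cronbach_alpha(item_matrix):
    """Cronbach's alpha: k/(k-1) * (1 - sum of item variances / variance of totals)."""
    k = len(item_matrix[0])
    items = list(zip(*item_matrix))  # transpose: one tuple of answers per item
    # The same (population) variance is used for items and totals, so the
    # n/(n-1) correction would cancel out if sample variances were used instead.
    item_var_sum = sum(pvariance(item) for item in items)
    total_var = pvariance(knowledge_scores(item_matrix))
    return k / (k - 1) * (1 - item_var_sum / total_var)


# Toy data: 4 respondents x 3 items, 1 = correct, 0 = incorrect.
toy = [
    [1, 1, 1],
    [1, 1, 0],
    [0, 1, 0],
    [0, 0, 0],
]
print(knowledge_scores(toy))          # [3, 2, 1, 0]
print(round(cronbach_alpha(toy), 2))  # 0.75
```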

Control Variables

In addition to controlling for demographic variables such as gender, age, household income, education level, political affiliation, and place of residence, this study also controls for media use. The survey measured the use of official and alternative information channels. Previous studies have pointed out that the dichotomy between traditional media and new online media no longer reflects the actual communication ecology in China (Wang, 2020), since many traditional media institutions have moved onto new media platforms. Dividing information channels into official and alternative channels more closely matches the Chinese media context (Wang & Yu, 2022). Official information channels include the online and offline channels of traditional mainstream mass media (e.g., newspapers, TV stations) and government sources, as well as commercial portals (e.g., Sina.com) that reprint and forward traditional news organizations’ content; a study showed that such commercial portals belong to official rather than unofficial channels (Wang, 2020). The questionnaire asked respondents about their access to information through various official channels (e.g., People’s Daily) on a 4-point Likert scale (1 = barely, 4 = almost every day). The mean of the items measured people’s use of official information channels (no correction group: M = 2.52, SD = 0.63; correction group: M = 2.50, SD = 0.63, α = .67). Unofficial information channels fall into three types: (1) platforms dominated by user-generated content, such as Tianya, the popular Chinese online forum; (2) online or offline interpersonal communication channels (e.g., WeChat Moments); and (3) overseas social media platforms (e.g., Twitter, Facebook) that can only be accessed through a virtual private network (VPN) service.
Respondents were asked how frequently they used the listed channels in these three categories on a 4-point Likert scale (1 = barely, 4 = almost every day). The mean score measured the use of unofficial information channels (no correction group: M = 2.34, SD = 0.57; correction group: M = 2.33, SD = 0.56, α = .66).

Data Analysis

We conducted a one-sample Kolmogorov-Smirnov test on the distribution of the dependent variable, trust in misinformation. The distribution of the dependent variable in the no-correction group did not deviate significantly from a Poisson distribution (p = .378), whereas the distribution in the correction group did (p = .001), owing to over-dispersion. Therefore, the no-correction group was analyzed with Poisson regression, and the correction group was analyzed with negative binomial regression. Hierarchical regression was used to examine the hypotheses: a baseline model without moderators was estimated first (Table 2, Models 1 and 4), then the moderators were added (Models 2 and 5), and finally the interaction terms were added (Models 3 and 6).
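The model-selection step can be illustrated with a short sketch that computes the one-sample Kolmogorov-Smirnov distance between observed counts and a Poisson distribution whose rate is estimated by the sample mean, alongside the variance-to-mean ratio commonly used to diagnose over-dispersion. This is an illustration under stated assumptions rather than the procedure the authors ran: the dispersion ratio is an added diagnostic not mentioned in the text, the function names and toy counts are hypothetical, and the code returns only the KS statistic, not a p-value (the standard KS p-value assumes a continuous, fully specified distribution, so it is only approximate for count data with an estimated rate).

```python
import math
from statistics import mean, pvariance
from typing import Sequence


def dispersion_ratio(counts: Sequence[int]) -> float:
    """Variance-to-mean ratio: about 1 under a Poisson model, well above 1 under
    over-dispersion. Assumes mean(counts) > 0."""
    return pvariance(counts) / mean(counts)


def poisson_cdf(k: int, lam: float) -> float:
    """P(X <= k) for X ~ Poisson(lam), accumulated term by term."""
    term = math.exp(-lam)  # P(X = 0)
    total = term
    for i in range(1, k + 1):
        term *= lam / i  # P(X = i) obtained from P(X = i - 1)
        total += term
    return total


def ks_statistic_poisson(counts: Sequence[int]) -> float:
    """One-sample KS distance between the empirical CDF and a Poisson CDF whose
    rate is the sample mean. Both CDFs are step functions that jump only at
    non-negative integers, so checking 0..max(counts) finds the supremum
    (beyond max(counts) the empirical CDF is 1 and the gap only shrinks)."""
    n = len(counts)
    lam = float(mean(counts))
    d = 0.0
    for k in range(max(counts) + 1):
        ecdf = sum(1 for c in counts if c <= k) / n
        d = max(d, abs(ecdf - poisson_cdf(k, lam)))
    return d


# Hypothetical toy counts of "trust in misinformation"
sample = [0, 1, 1, 2, 2, 2, 3, 4]
print(dispersion_ratio(sample), ks_statistic_poisson(sample))
```

A ratio well above 1 is the pattern that typically motivates replacing a Poisson model with a negative binomial model, which adds a dispersion parameter.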

Predicting Trust in Misinformation.

Note. Poisson regression coefficients are reported for the no-correction group, and negative binomial regression coefficients are reported for the correction group. Only significant moderating effects are reported. Likelihood ratio chi-square values for the moderating effects are reported in order.

*p < .05. **p < .01. ***p < .001.
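The hierarchical logic above (baseline predictors, then moderators, then interaction terms, with nested models compared by a likelihood ratio chi-square) can be sketched for the Poisson case. This is a minimal pure-Python illustration under stated assumptions: the helper names are hypothetical, the toy data are invented, the fit uses Newton-Raphson (equivalent to iteratively reweighted least squares for a log-link Poisson model), and control variables are omitted. The negative binomial model used for the correction group would replace the Poisson likelihood with one that includes a dispersion parameter; that variant is not shown.

```python
import math
from statistics import mean
from typing import List, Sequence

Matrix = List[List[float]]


def solve(a: Matrix, b: List[float]) -> List[float]:
    """Solve the small linear system a @ x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [list(row) + [b[i]] for i, row in enumerate(a)]  # augmented matrix [a | b]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):  # back substitution
        x[r] = (m[r][n] - sum(m[r][c] * x[c] for c in range(r + 1, n))) / m[r][r]
    return x


def fit_poisson(X: Sequence[Sequence[float]], y: Sequence[int],
                max_iter: int = 100, tol: float = 1e-10) -> List[float]:
    """Poisson regression log(mu) = X @ beta fitted by Newton-Raphson.
    Assumes column 0 of X is an intercept column of ones and mean(y) > 0."""
    p = len(X[0])
    beta = [math.log(mean(y))] + [0.0] * (p - 1)  # start at the intercept-only fit
    for _ in range(max_iter):
        mu = [math.exp(sum(b * v for b, v in zip(beta, row))) for row in X]
        # Score vector X'(y - mu) and Fisher information X' diag(mu) X
        score = [sum((yi - mi) * row[j] for row, yi, mi in zip(X, y, mu)) for j in range(p)]
        info = [[sum(mi * row[j] * row[k] for row, mi in zip(X, mu)) for k in range(p)]
                for j in range(p)]
        step = solve(info, score)
        beta = [b + s for b, s in zip(beta, step)]
        if max(abs(s) for s in step) < tol:
            break
    return beta


def poisson_loglik(X, y, beta) -> float:
    """Poisson log-likelihood: sum of y*eta - exp(eta) - log(y!)."""
    total = 0.0
    for row, yi in zip(X, y):
        eta = sum(b * v for b, v in zip(beta, row))
        total += yi * eta - math.exp(eta) - math.lgamma(yi + 1)
    return total


def lr_chi_square(X_reduced, X_full, y) -> float:
    """Likelihood ratio chi-square for nested models (df = number of added columns)."""
    ll_r = poisson_loglik(X_reduced, y, fit_poisson(X_reduced, y))
    ll_f = poisson_loglik(X_full, y, fit_poisson(X_full, y))
    return 2.0 * (ll_f - ll_r)


# Hypothetical toy data: prior exposure x, moderator z, count outcome y
x = [0, 1, 2, 3, 0, 1, 2, 3]
z = [0, 0, 0, 0, 1, 1, 1, 1]
y = [1, 2, 2, 4, 1, 3, 5, 9]
X_main = [[1.0, xi, zi] for xi, zi in zip(x, z)]             # step 2: moderator added
X_inter = [[1.0, xi, zi, xi * zi] for xi, zi in zip(x, z)]   # step 3: interaction added
print(fit_poisson(X_inter, y))
print(lr_chi_square(X_main, X_inter, y))  # df = 1
```

In this sketch the likelihood ratio chi-square has one degree of freedom because a single interaction term is added in the final step.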

Results

H1 and H2 predicted a positive association between repeated exposure to misinformation and trust in misinformation, whether or not a correction was shown. This positive association was supported in both conditions (no correction group: B = .27, SE = 0.03, p < .001; correction group: B = .32, SE = 0.04, p < .001).

RQ1 asked about the moderating role of trust in correction sources in the relationship between repeated exposure to misinformation and trust in misinformation in the no-correction condition. The data suggested that trust in correction sources strengthened the positive effect of repeated exposure to misinformation on trust in misinformation (B = .06, SE = 0.03, p < .05). In other words, when no correction was presented, repeated exposure increased trust in the misinformation more among individuals who trusted the correction sources more than among those who trusted them less (see Figure 2). In the correction condition, however, trust in correction sources did not moderate the effect of repeated exposure to misinformation on trust in misinformation.
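Interpreting an interaction coefficient in a log-link count model may be clearer with the conditional-effect algebra written out. This is a sketch assuming the moderation models take the standard product-term form; the symbols X (prior exposure), Z (moderator), and the control vector c are our notation, and the text does not state whether predictors were centered.

```latex
\begin{aligned}
\log \mu_i &= \beta_0 + \beta_1 X_i + \beta_2 Z_i + \beta_3 X_i Z_i + \boldsymbol{\gamma}^{\top}\mathbf{c}_i,\\
\frac{\partial \log \mu_i}{\partial X_i} &= \beta_1 + \beta_3 Z_i,\\
\frac{\mu_i(X_i + 1,\, Z_i)}{\mu_i(X_i,\, Z_i)} &= \exp(\beta_1 + \beta_3 Z_i).
\end{aligned}
```

Under this form, a positive β3 such as the reported B = .06 means that each one-unit increase in trust in correction sources raises the slope of prior exposure on the log scale by .06, multiplying the per-exposure rate ratio by about e^.06 ≈ 1.06. The same algebra applies to the negative binomial model, assuming its standard log link.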

Figure 2. The moderating effect of trust in correction sources on the relationship between prior exposure to misinformation and trust in misinformation in the no-correction group.

Regarding RQ3, knowledge about important issues did not significantly moderate the relationship between repeated exposure to misinformation and misinformation belief in the no-correction condition (B = −.01, SE = 0.01, p = .87). In the correction condition, the moderating effect of knowledge about important issues was significant (B = −.03, SE = 0.01, p < .01): when a correction was presented, the positive association between repeated exposure to misinformation and misinformation belief was weaker among individuals with more knowledge (see Figure 3). Therefore, H4 was supported by the data.

Figure 3. The moderating effect of knowledge about important issues on the relationship between prior exposure to misinformation and trust in misinformation in the correction group.

Discussion

Previous experimental studies on misinformation and correction effects have frequently used real-life misinformation as stimuli without considering the effect of participants’ prior exposure to that misinformation. To address the elephant in the room, this study investigated the impact of prior exposure to misinformation on trust in misinformation in two experimental scenarios: when the previously encountered misinformation was presented with or without a correction message. This study has three major findings. First, people who had previously been exposed to misinformation were more likely to believe it, even when a correction was presented after the misinformation message. Presenting a correction after repeated exposure to misinformation could offset, but not eradicate, the repetition effect. Second, when no correction was presented, trust in correction sources strengthened the repetition effect among people previously exposed to the misinformation. Third, general knowledge has limited influence in combating misinformation: individuals with more knowledge were less susceptible to misinformation only when corrections were presented. These findings also have implications for how misinformation and correction effects are tested in experimental studies.

The study’s theoretical and practical value lies in several aspects. First, the possible impact of prior exposure on the design of misinformation studies is noteworthy. For the sake of external validity, it is common to use actual misinformation, such as fake news headlines, as experimental stimuli and to make causal inferences about misinformation exposure and participants’ ensuing cognition, attitudes, or behaviors (e.g., Bago et al., 2020; Effron & Raj, 2020). However, few studies have considered the possible influence of prior exposure to the misinformation. According to the current findings, such prior exposure may weaken or strengthen the believability of the misinformation, depending on the presence of other cognitive cues. In other words, by not accounting for prior exposure, previous studies may have confounded it with the mechanism of interest. The current findings further complicate the interpretation of study results when individuals mistakenly believe that they have previously seen the (mis)information. For example, if a study uses original misinformation but some elements in the message evoke a sense of “having seen it before,” the findings may also be confounded unless researchers identify the participants who perceive the message as a repeated exposure, because those participants may, in theory, be affected as if it were one. This sense of “having seen it before” matters not only in research but also in everyday life. Because most people encounter a large volume of information each day, some elements of a message (rather than the entire message) may seem familiar, lending a misleading sense of familiarity to its other aspects. Indeed, this is how some fake news is fabricated: a false story is created by mixing elements from several accurate stories with incorrect information or reasoning. The result is a fresh story that nonetheless feels familiar.
In this way, the prior exposure effect becomes increasingly relevant in a post-truth era, and further investigation is urgently needed.

Second, the effect of correction source credibility prompts further reflection on the theories of motivated reasoning and selective exposure. When it comes to trust in misinformation, selective exposure and motivated reasoning are often blamed, as both allow individuals to reject corrections from sources that contradict their own beliefs (Dosch, 2019; Sanderson et al., 2020). However, what if the correction comes from sources that individuals trust highly? According to these theories, the correction should work in this case and reduce people’s trust in the misinformation. The findings of the current study do not support this line of reasoning. One possible explanation is that individuals with low trust in the media that provide corrections, especially the mainstream media in an authoritarian country such as the one studied here, are more likely to engage in critical thinking and are less prone to misinformation. Another possible explanation lies in the combined action of familiarity and contradiction. When misinformation is presented with corrections, the contradiction triggered by the correction may reduce belief in the misinformation (Ecker et al., 2022); however, the sense of familiarity brought about by repeated exposure to the misinformation can be strong enough to trigger a positive attitude toward it.

Third, the protection that general knowledge provides against misinformation seems moderate at most, which is a wake-up call to researchers who still have faith in the role of knowledge in fighting misinformation. Past research has paid considerable attention to domain knowledge and found that knowing a great deal about a topic increases false memories about that topic (e.g., Baird, 2003; Mehta et al., 2011). This is why the current study turned to general knowledge of important socio-political issues, which was expected to reflect a habitual tendency toward in-depth information processing and thus to facilitate misinformation identification (e.g., Brashier et al., 2021; Eveland, 2004). However, the findings are not encouraging. Knowledge of important socio-political issues is usually obtained from “hard” news use, and the findings seem to imply that heavy users of hard news are not well prepared to detect misinformation in the context of daily interaction: like soft news consumers, they are miserly with their cognitive resources, and their knowledge about society makes no difference in detecting misinformation until a correction is presented. This matters because heavy users of hard news are often active in civic participation (Hardy & Scheufele, 2006; Scheufele et al., 2004), willing to engage in interpersonal discussions (McLeod et al., 1999), and likely to be opinion leaders in their local communities (Shah & Scheufele, 2006). Their limited ability to detect misinformation in daily interaction not only adds further evidence to the theory of lazy thinkers (Pennycook & Rand, 2019) but also cautions those who expect differences in knowledge acquisition to translate into differences in misinformation susceptibility.

It is important to point out the limitations of this study. It examined only the basic corrective message (i.e., rejection of misinformation) provided by the most common correction sources: government institutions and mainstream media. A growing number of new entities are becoming involved in refuting misinformation (e.g., Guokr), and the writing styles of correction messages are increasingly diverse (Yu et al., 2021). Fake images, video, and audio pose additional challenges, as they are increasingly difficult to combat and to correct in people’s minds. Future studies should examine different information modalities as well as a wider range of correction sources and styles. In addition, this study did not select polarized misinformation topics that could evoke in-group bias. Future studies could further examine the processing routes described in this study using topics that evoke biases rooted in identity or opinion.

Conclusion

In conclusion, this study argued that future misinformation research should seriously consider the impact of prior exposure to misinformation on misinformation perceptions. By examining the moderating roles of source trust and knowledge in two scenarios (i.e., misinformation only and misinformation with corrections), this study found that a correction cannot eradicate the repetition effect of misinformation, and that trust and knowledge may strengthen or weaken the repetition effect under different conditions.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The author Wenting Yu received financial support for the publication of this article from Departmental Grant P0051043, Department of Chinese and Bilingual Studies, The Hong Kong Polytechnic University.

Data Availability Statement

Data sharing is not applicable to this article as no datasets were generated or analyzed during the current study.