Abstract

The rapid spread of disinformation and misinformation created by deepfakes poses threats and challenges to society. Most studies have analyzed deepfakes from a computer science perspective, but there is now a pressing need to study them from the perspective of social science. This study aims to explore the factors that affect individuals’ intentions to watch or share deepfakes, and the relationships between deepfake-related behaviors and the impact of deepfakes on individuals and society. Accordingly, this study constructs a theoretical model and considers the impacts of deepfake content and quality on individuals’ behavioral intentions regarding deepfakes. The data set, analyzed with SPSS 26.0, involves 582 participants in total (Male = 234, Female = 348). Results showed that attitude toward deepfakes, subjective norm, and perceived behavioral control positively influenced the individual’s behavioral intention regarding deepfakes, but perceived behavioral control did not have a significant effect on behavioral tendencies when tested together with the other factors. Meanwhile, the quality of deepfake content was related to individuals’ intentions to watch or share such videos, and there were gender, age, and education differences in individuals’ behavioral tendencies regarding deepfakes. Furthermore, the consequences of deepfakes were closely related to individuals’ sharing behaviors. The findings contribute to extending the theory of planned behavior (TPB) model and expand the understanding of individual differences in behavioral intentions regarding deepfakes. The findings also have implications for guiding the development of educational and training strategies to combat the negative effects of deepfakes.

Plain Language Summary

A deepfake is an artifact generated by an artificial intelligence (AI)-driven tool to create “fake” content that is convincing enough to pass as an original form of whatever it mimics. Given the novelty of deepfakes, many studies have defined the term, yet empirical research on deepfakes is scarce and little attention has been paid to individuals’ behavioral intentions when they consume deepfakes. Hence, this study aims to explore the factors that affect individuals’ intentions to watch or share deepfakes, and the relationships between deepfake-related behaviors and the impact of deepfakes on individuals and society. Results showed that attitude toward deepfakes, subjective norm, and perceived behavioral control positively influenced the individual’s behavioral intention regarding deepfakes, and the consequences of deepfakes were closely related to individuals’ sharing behaviors. The findings have both theoretical and practical implications for individuals, social media platforms, technology developers, and society.

Keywords

Introduction

A deepfake is an artifact of media manipulation generated by an artificial intelligence (AI)-driven tool to create “fake” content (e.g., fake video, image, and audio) that is convincing enough to pass as an original form of whatever it mimics (Vaccari & Chadwick, 2020). This is achieved through generative adversarial networks (GANs), a form of deep learning algorithm (Ahmed, 2021a; Korshunov & Marcel, 2018; Westerlund, 2019; Yankoski et al., 2021). Deepfakes can reproduce verbal and/or visible qualities, and because they are used to imitate, they may be said to “alter” these qualities (Kietzmann et al., 2020). Because apps are used to create deepfake content, the term is also narrowly understood as a face-swapping or face reenactment technology used to create disinformation (Liv & Greenbaum, 2020).

Deepfakes are now hyper-realistic and spread rapidly online, particularly on social media (Vaccari & Chadwick, 2020; Yankoski et al., 2021). While deepfake technology can be used for positive purposes, such as filmmaking, education, scene restoration, games, entertainment, and healthcare (Kılıç & Kahraman, 2023), we cannot ignore its negative effects. As previous studies have noted, deepfakes are often deployed amidst conspiracies, rumors, fake news, misinformation, and sexual abuse (Guess et al., 2019; Maras & Alexandrou, 2019). These uses influence the judgments of individuals and groups, with negative social consequences (Hancock & Bailenson, 2021).

The implications of deepfakes have engaged scholars of computer science, information science, psychology, journalism, and law (e.g., Westerlund, 2019; Liv & Greenbaum, 2020). Computer scientists have focused on technologies and the development of tools to filter disinformation, for example, UADFV, FaceForensics++, and DeeperForensics (Liv & Greenbaum, 2020). Social scientists have explored deepfakes through the lenses of politics, psychology, communication, law, policy, and society (Korshunov & Marcel, 2018), advocating legislation and regulation, corporate policies, and voluntary education and training to combat adverse effects (Westerlund, 2019).

Given the novelty of deepfakes, many studies have defined the term, yet empirical research on deepfakes is scarce and little attention has been paid to individuals’ intent when they consume deepfakes. Meanwhile, the use of deepfakes to spread disinformation targeting politicians, celebrities, and ordinary people persists (Yankoski et al., 2021), but the link between individuals consuming and sharing a deepfake remains an open question. This matters, since deepfakes may become vehicles for porn, sexual abuse, and cyber aggression (Maras & Alexandrou, 2019). Therefore, to help guide a comprehensive review of deepfakes and a mapping of their consequences for society and the public, understanding individuals’ behavioral tendencies toward deepfakes is important for advancing the study of both the malicious and benign elements of deepfakes (Ahmed, 2021a). Hence, the current study uses principles of the theory of planned behavior (TPB) (Ajzen, 1991) as a theoretical framework to present an analysis of primary data collected from an online survey panel.

This study aims to explore the factors that affect individuals’ behavioral intentions to watch or share deepfakes. It also investigates the potential social consequences of deepfakes that arise from audiences’ watching or sharing behaviors. Accordingly, this study seeks (i) to extend social science research on deepfakes by testing deepfake phenomena quantitatively; (ii) to provide novel insights into the relationships between deepfakes and individuals’ intentions through the TPB; and (iii) to consider the audience’s reactions to deepfakes and the dynamic process of behavioral interaction influencing deepfake outcomes. Furthermore, this study provides a reference for discussing the longer-term implications of deepfakes and other similar technologies from the perspective of the social sciences.

Conceptualization: The Origins of Deepfake Technologies and the Social Impact of Deepfakes

In 2017, a Reddit user called

Definitions of Deepfake.

As shown in Table 1, deepfake is often broadly understood as a technology based on deep learning in the context of generative adversarial networks (GANs), that is deployed (both intentionally and unintentionally) to circulate disinformation (often on social media) with the potential to adversely affect society (e.g., Vaccari & Chadwick, 2020; Korshunov & Marcel, 2018; Yankoski et al., 2021).

Most researchers have noted the connection between deepfakes and AI (e.g., Hopster, 2021; Kietzmann et al., 2020; Westerlund, 2019), since AI creates and applies algorithms to mimic human intelligence (i.e., aims to make computers think and act like humans) (Galaz et al., 2021; Pelaua et al., 2021). Both AI and deepfake technologies can generate virtual scenarios through machine learning and have many applications (Fletcher, 2018), including in health (Pelaua et al., 2021), education (Kervenoael et al., 2020), business (Chung et al., 2020), and service sectors (Kervenoael et al., 2020). Yet, deepfake is often seen as an “outlaw” technology, having been used for negative purposes (Vaccari & Chadwick, 2020).

As shown in Table 1, verbal and visible types are the two main characteristics of deepfakes (Ahmed, 2023). On social media, a deepfake artifact usually involves one or a combination of face-swapping video, voice-swapping audio, or face-and-voice-swapping video technologies, and/or fictional images generated through machine learning algorithms (Yankoski et al., 2021). When deepfake information involving doctored multimedia content (especially videos and audio) is quickly and widely spread, individuals may be more likely to watch or circulate those “products” and may form false memories (Ahmed, 2021a). Thus, “what you see is what you get” is no longer unequivocally true.

Consequently, the generally agreed view of deepfakes’ consequences is that deepfakes raise questions of ethics and harm, including a growing crisis of confidence and threats to governments and democracy (Diakopoulos & Johnson, 2021). Meanwhile, deepfakes may also distort audiences’ perceptions of truth and falsity, cause cyber and social security threats, and threaten social order (Chesney & Citron, 2019; Hughes et al., 2021; Westerlund, 2019). Although deepfakes (such as those intended to entertain) may sometimes have positive effects, the severe consequences of deepfakes, especially given the video format used to convey information, threaten greater harm than traditional, text-based disinformation. Therefore, at the moment, there is more skepticism than trust in the possible applications of deepfake technology (Kwok & Koh, 2021).

In studies of the impact of deepfakes, the dominant view is that deepfake technology is a disruptive technology and an alienation of AI (Albahar et al., 2019; Kietzmann et al., 2020). In other words, deepfakes affect the normal use of AI technology and, to some extent, raise technological and regulatory difficulties in detecting and filtering disinformation (Yadlin-Segal & Oppenheim, 2021). The distinction between deepfake and AI thus lies in a difference in outcomes (Brooks, 2021), and awareness of detecting deepfakes is growing (Hancock & Bailenson, 2021); positive uses of face-swapping (e.g., filmmaking) are called AI, and negative uses are called deepfake (Hasan & Salah, 2019; Yankoski et al., 2021).

In light of the reviewed literature, this study suggests that care must be taken when differentiating deepfake, AI, and similar technologies. There are differences between AI and deepfake, but differentiation is complicated by the tendency to regard deepfake as AI when it is used positively. Regarding the technology-neutral perspective, poor recognition of the distinction between AI and deepfake may affect the understanding of media ethics and the identification of the dangers of technological alienation (Kwok & Koh, 2021). That is, a mere analysis of deepfake principles or operating mechanisms is insufficient to combat it since deepfake is a form of interaction.

Consequently, this study aligns with prevailing academic perspectives on deepfake technology, characterizing it as a neutral deep learning tool rooted in Generative Adversarial Networks (GANs). This technology possesses the capability to yield both advantageous and disadvantageous outcomes. Our objective is to scrutinize the variations in behavioral intentions and perspectives towards deepfakes among distinct demographic groups, exploring the influencing factors. The Technology Acceptance Model (TAM), grounded in the theory of planned behavior, delineates constructs governing acceptance and offers a comprehensive framework to assess a wide array of factors impacting technology acceptance and dependence. External variables include individual differences and environmental constraints (Ghazizadeh et al., 2012).

Additionally, the Unified Theory of Acceptance and Use of Technology (UTAUT) model builds upon TAM, introducing constructs such as performance expectancy, effort expectancy, social influence, and facilitating conditions. This model also incorporates four key moderators influencing the intention to use, namely gender, age, voluntariness, and experience (Chand et al., 2022). In light of these frameworks, our paper posits that considering the social media era, it is valuable to explore whether individuals’ behavioral intentions towards deepfakes differ across various groups. Consequently, we hypothesize that diverse groups, such as males and females, youth and adults, may exhibit distinct behavioral intentions and perspectives on deepfakes, thereby addressing RQ1.

Following the findings from RQ1, we also investigate how the audiences’ behavioral tendencies (e.g., watching or sharing behavior) might influence the deepfake effects. It might reasonably be assumed that if a deepfake is not spread or believed by its audience, any negative impact will be small, but this inference remains largely unstudied. Therefore, RQ2 is proposed.

Furthermore, netnography studies suggest that an individual’s intention in choosing to watch and/or share deepfakes may also depend on content and quality, but these factors have not been separately explored (Lübbeling, 2022). Accordingly, this paper asks:

The TPB Determinants and Individuals’ Behavioral Intentions on Deepfakes

This study mainly focuses on the relations between deepfakes and individuals’ behavioral intentions. In other words, this study attempts to discuss what factors can influence individuals’ watching and sharing behaviors on deepfakes. At this point, social and psychological questions are prompted: (1) Why do people watch or share deepfake products on social media?; (2) Do the attitudes towards deepfakes affect individuals’ behavioral intentions on deepfakes?; (3) Are there relationships between (social) norms and individuals’ behavioral tendencies on deepfakes?; and (4) Do individuals’ experiences affect their behavioral tendencies on deepfakes? To address these questions, the theory of planned behavior is a holistic model to guide this project and address the research questions from both internal and external elements.

The theory of planned behavior (TPB) was developed from the theory of reasoned action (Fishbein & Ajzen, 1977) and the concept of perceived self-efficacy (Bandura, 2002). Ajzen (1991) added perceived behavioral control as a factor to discuss dynamic interactive and decisive relationships between attitude, subjective norms, and intention. Specifically, Ajzen (1991) claims that attitude, subjective norm (SN), and perceived behavioral control (PBC) are the three main determinants to explain behavior, predict behavioral tendencies, and represent opportunities and resources to perform a behavior. Therefore, this study takes the TPB as a powerful framework to test how behavior-specific factors affect individuals’ behavioral intentions on deepfakes, predict their impacts, and investigate how we can regulate the negative consequences of deepfakes from the audience’s angle.

An attitude toward a behavior indicates the degree to which that behavior may be evaluated as favorable/unfavorable (Ajzen, 1991, p. 188). Ajzen (2002) also described instrumental and experiential attitudes as distinct components of overall attitude, though their influence may vary by individual and circumstance. Specifically, instrumental attitude indicates whether the target behavior is valuable and beneficial to the individual, while experiential attitude indicates whether the target behavior is enjoyable or pleasant (Ajzen, 2002; Li et al., 2010).

With regard to studies of deepfakes, Wang et al. (2021) stated that public attitudes towards deepfakes fall into two extremes: one regards deepfakes as a vortex of entertainment, while the other opposes them on grounds of technological ethics. However, Y. Lee et al. (2021) analyzed 10 YouTube deepfake videos and 2,689 audience comments and found that the majority of the audience expressed neutral or irrelevant attitudes towards deepfakes and that the number of dislikes on a video had considerable influence on audience attitudes. In light of the existing research, we still know little about the associations between attitudes towards deepfakes and the watching or sharing of deepfakes. For example, if members of the public know the negative consequences of deepfakes and form more negative attitudes towards them, will they avoid watching or sharing them? Accordingly, this paper holds that personal attitude contributes to behavioral intentions, directly testing individuals’ attitudes to infer their behavioral tendencies. Therefore, this paper hypothesizes that:

In addition to attitudes, the subjective norm is the perceived social pressure to perform the behavior (Ajzen, 1991, p. 188). In 2002, Ajzen added that the subjective norm has two components: descriptive norm perception (how people often behave) and injunctive norm perception (what one is morally obliged to do). Specifically, the descriptive subjective norm refers to whether people believe that others undertake the behavior (Li et al., 2010), in which case the influential factors are group psychology and group norms (Stok et al., 2016). The injunctive norm reflects whether others approve of the target behavior (Li et al., 2010), which is always affected by external restrictions and others’ views (Stok et al., 2016). In practice, there are few empirical studies that directly explore the influence of the subjective norm on deepfake behaviors. However, some researchers have discussed the ethics behind deepfakes, stating that we should focus more on the ethical implications of deepfakes, establish a moral stance, and call for anticipatory governance to regulate deepfakes (de Ruiter, 2021). Indeed, the rise of new media and the rapid development of technologies make it more challenging to understand individuals’ behaviors (e.g., creating, watching, or sharing) regarding deepfakes. In line with the TPB, this study emphasizes the particular impacts of the perception of both the descriptive norm and the injunctive norm on an individual’s behavioral intentions, hypothesizing that:

The TPB further suggests that perceived behavioral control is the perceived ease or difficulty of performing the behavior (Ajzen, 1991, p. 188), which reflects experience or habit, and likely impediments (Li et al., 2010). Generally, the more favorable the attitude and subjective norm regarding a behavior pattern, the greater the perceived behavioral control, and the stronger and more direct the function of behavioral intention (Ajzen, 2011). The use of the degree of perceived behavioral control to predict behavioral intentions and tendencies is always combined with the elements of attitude and subjective norm (Ajzen, 1991). Accordingly, this study argues that perceived behavioral control has become a core factor because it can somewhat influence behavioral tendencies separately. In other words, if experience or behavior has habitually generated negative results, subsequent behavioral intentions regarding deepfake may be weak (Luttrell & Wallace, 2021). The individual probably also prejudges the effects of their behavior (behavioral result), and if this generates negative anticipation of the target behavior, the intention might also be weak (Ahmed, 2021b). Therefore, this study explores the relationship between perceived behavioral control and the individual’s behavioral intentions concerning deepfakes, predicting that:

The proposed research model and related hypotheses are shown in Figure 1, which offers theory building sufficient for the task of further exploration of deepfakes and relevant technologies.

A research framework.

Method

Most previous studies have explained the performance and features of deepfakes; however, their focus was on the technologies and the interactions between individual and technology. The current study explores how individuals’ behavioral intentions to watch or share deepfakes depend on multiple factors, and how these behaviors relate to the impacts of deepfakes. The design of the survey followed the core elements of the TPB model and also considered the impacts of deepfake content and quality. The quantitative findings expand and clarify the understanding of deepfakes and provide a detailed picture of the impacts of deepfakes and of their regulation.

Participants and Procedure

This study hosted an online sample questionnaire (www.wjx.cn). The survey link was shared through WeChat and Sina Weibo from October 2021 to November 2021. The electronic informed consent was shown on the first page of the questionnaire, including the introduction of the project and research aims. All participants were told that there were no right or wrong answers and that they could stop the questionnaire at any time. Meanwhile, the anonymity of the respondents and the confidentiality of their responses were strictly guaranteed. The questionnaire contained a test question to improve sample authenticity. The authors’ affiliated institution approved the survey.

In this study, the unit of analysis was individuals in China, Korea, Japan, and the UK who knew of and/or had watched and/or shared deepfakes on social media. The first question of the questionnaire asked participants whether they had watched deepfakes or could recognize deepfake videos after watching. If they answered “yes,” they continued the survey; if they answered “no,” the survey stopped. This means that the participants could know whether a video was a deepfake from the title (e.g., deepfake video of a pop star) or the tag (#deepfake video). Meanwhile, the participants might also identify whether a video was a deepfake after watching by asking others for confirmation. Accordingly, they could know whether the impact of that deepfake would be positive or negative.

Prior to the formal test process, participants completed the online Chinese version of the questionnaire as a pre-survey. In the formal test process, the final sample consisted of 582 valid responses. Twenty-three (3.8%) invalid questionnaires were returned, including questionnaires with incomplete responses and from people who did not know about deepfakes (i.e., they answered “no” to the first question).

Specifically, there were 92.4% of participants (

Materials

The measurements for the current study were adopted from the netnography observation and the prior literature, including the attitude scale (Li et al., 2010), the subjective norm scale (Lin, 2016), the perceived behavioral control scale (Ajzen, 2002; Armitage & Conner, 2001; Li et al., 2010; Taylor & Todd, 1995) and the deepfake feature scale (Davis, 1989). All measurement scales were a five-point Likert scale (from

Individuals’ Behavioral Intentions

Ten items regarding the individuals’ intentions of deepfakes (α = .969) were designed based on our netnography observation. A five-point Likert scale (from

Attitude

The measurement of attitude (α = .913) is based on Li et al.’s (2010) scale and has two dimensions, instrumental attitudes (α = .861) and experiential attitudes (α = .906), both with good reliability values in the current study. Items included “When I watch/share deepfake, I feel pleasant,” “Watching/Sharing deepfakes is helpful to my work, for example, I am a self-media worker and I can make benefits by coining videos,” and “Watching/sharing deepfakes is beneficial.”

Subjective Norm

Descriptive subjective norms and injunctive subjective norms were measured with the scale from Lin’s study (2016). We indicated the degree to which family, peers, and colleagues influence participants to undertake specific behaviors, and measured how external restrictions and others’ views affected the individual’s behavior regarding deepfakes. Sample questions include “My family members will be exposed to deepfakes,” “There are a lot of deepfake videos circulating in society,” “My family members do not let me have access to deepfakes,” and “People who are important to me keep me out of deepfake videos.” The Cronbach’s alpha (α) is .874 for the total scale and .796 and .918 for descriptive subjective norms and injunctive subjective norms, respectively.

Perceived Behavioral Control

The scale measured the extent to which participants can control their behaviors, combining scales per Taylor and Todd’s study (1995), Armitage and Conner’s study (2001), Ajzen’s framework (2002), and Li et al.’s scale (2010). The questions include “Even if I was not allowed to watch deepfake videos, I still do it,” “Even if I was not allowed to share deepfake videos, I still do it,” “If someone important to me has ever been punished for sharing deepfake videos, I will not do the same thing,” “If I will be going to be punished for watching deepfake videos, I will not do it,” “If I will be going to be punished for sharing deepfake videos, I will not do it,” “I have a habit of watching deepfake videos and will continue to do so,” and “I have a habit of sharing deepfake videos and will continue to do so.” The Cronbach’s alpha (α) is .943.

Deepfake Features

Items about deepfake content (α = .891) and quality (α = .890) were based on Davis’ (1989) study. Sample questions include “When facing deepfake videos, I pay more attention to the production quality of the video,” “When facing deepfake videos, I pay more attention to the interesting content of the video,” “When facing deepfake videos, I pay more attention to whether I am interested in the content of the video,” “If the dissemination of the deepfake video content has a positive social impact, I will watch it,” and “If the dissemination of the deepfake video content has a positive social impact, I will share it.”
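The reliability values reported for each scale are Cronbach’s alpha coefficients. As a minimal sketch of how such a coefficient is computed from raw item scores, the following uses the standard formula α = k/(k − 1) × (1 − Σ item variances / variance of total scores). The function name and the response matrix are hypothetical and purely illustrative; they are not the study’s data, and the study itself obtained its values with statistical software rather than with this code.

```python
from statistics import variance


def cronbach_alpha(rows):
    """Cronbach's alpha for a list of respondents, each a list of item scores."""
    k = len(rows[0])
    if k < 2:
        raise ValueError("Cronbach's alpha needs at least two items")
    # Sample variance of each item across respondents.
    item_vars = [variance([row[j] for row in rows]) for j in range(k)]
    # Sample variance of each respondent's summed scale score.
    total_var = variance([sum(row) for row in rows])
    if total_var == 0:
        raise ValueError("total scores have zero variance")
    return (k / (k - 1)) * (1 - sum(item_vars) / total_var)


# Hypothetical five-point Likert responses (5 respondents x 3 items).
responses = [
    [4, 5, 4],
    [2, 2, 3],
    [5, 4, 5],
    [1, 2, 1],
    [3, 3, 3],
]

alpha = cronbach_alpha(responses)
print(f"Cronbach's alpha = {alpha:.3f}")
```

Values closer to 1 indicate that the items vary together, which is why the coefficients above are described as showing good reliability.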

Statistical Analyses

Confirmatory factor analysis (CFA) was conducted in AMOS 22.0 to test the reliability and validity of the scales. The results indicated that the goodness-of-fit of the model was statistically acceptable (

SEM Results (

For data analysis, the hypothesized relationships were tested in IBM SPSS Statistics for Windows version 26.0. Analyses comprised three steps. First, descriptive analysis explored the differences in individuals’ behavioral intentions on deepfakes between different groups (RQ1) and the direct or indirect relations between behavioral tendencies of deepfakes and the consequences of deepfakes (RQ2). Second, regression analysis examined the influence of the TPB variables on individuals’ behavioral intentions on deepfakes (H1–H3). Third, regression analysis examined whether the content and the production quality of deepfakes influence individuals’ behavioral intentions (RQ3). Based on these findings and previous studies, this study predicts the impacts of deepfakes concerning audiences, targets, and the wider society.
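The regression step can be illustrated for the simplest case, a single predictor of behavioral intention. The sketch below is not the authors’ SPSS procedure: it is a plain least-squares fit written from the textbook formulas, and the variable names (`pbc_scores`, `intention_scores`) and their values are hypothetical. With one predictor, the standardized coefficient equals the Pearson correlation between predictor and outcome.

```python
from math import sqrt


def simple_ols(x, y):
    """Least-squares fit of y = intercept + slope * x for one predictor."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    r = sxy / sqrt(sxx * syy)  # equals the standardized beta here
    return {
        "slope": slope,
        "intercept": intercept,
        "beta": r,
        "r_squared": r * r,
    }


# Hypothetical mean scale scores for five respondents (not study data).
pbc_scores = [1, 2, 3, 4, 5]
intention_scores = [1.5, 2.0, 3.5, 3.5, 4.5]

fit = simple_ols(pbc_scores, intention_scores)
print(fit)
```

A positive slope in such a fit corresponds to the kind of positive influence that the hypotheses predict; models with several predictors require a multiple regression, which this sketch does not cover.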

Results

Descriptive Analyses

The absolute values of the skewness and kurtosis of all items in the scales used in this study were below 3, indicating that all scales can be treated as approximately normally distributed (see Table 3). RQ1 asks whether individuals differ in their behavioral tendencies on deepfakes. As shown in Table 4, associations among gender (

Data Characteristics and Potential Biases.

Test of Difference Between Behavioral Intention and Personal Determinants.
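The normality screen used in the descriptive analyses (absolute skewness and kurtosis below 3) can be sketched as follows. This is an illustration under stated assumptions: it uses the simple moment-based estimators and treats kurtosis as excess kurtosis (normal distribution = 0), whereas statistical packages typically apply small-sample corrections, so their values can differ slightly. The function names and the sample scores are hypothetical.

```python
def shape_statistics(values):
    """Return (skewness, excess kurtosis) from central moments."""
    n = len(values)
    mean = sum(values) / n
    m2 = sum((v - mean) ** 2 for v in values) / n
    if m2 == 0:
        raise ValueError("zero variance: shape statistics are undefined")
    m3 = sum((v - mean) ** 3 for v in values) / n
    m4 = sum((v - mean) ** 4 for v in values) / n
    skewness = m3 / m2 ** 1.5
    excess_kurtosis = m4 / m2 ** 2 - 3.0
    return skewness, excess_kurtosis


def passes_normality_screen(values, limit=3.0):
    """True when |skewness| and |excess kurtosis| are both below the limit."""
    skewness, excess_kurtosis = shape_statistics(values)
    return abs(skewness) < limit and abs(excess_kurtosis) < limit


# Hypothetical item scores on a five-point scale (not study data).
symmetric_item = [1, 2, 3, 4, 5]
skewed_item = [1, 1, 1, 1, 5]

for name, item in [("symmetric", symmetric_item), ("skewed", skewed_item)]:
    s, k = shape_statistics(item)
    print(name, round(s, 3), round(k, 3), passes_normality_screen(item))
```

Both hypothetical items pass the screen, which shows that the threshold of 3 is a lenient check on gross departures from normality rather than a formal test.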

In addition, RQ2 inferred that the impacts and consequences of deepfakes may be linked to audiences’ behaviors. In our study, about half of all participants strongly agreed (19.86%) or agreed (30.48%) that if they knew a deepfake video had a positive social impact, they would watch it. More than half strongly agreed (19.86%) or agreed (32.53%) that if a deepfake video had a positive social impact, they might share it, while 48.63% claimed they would not watch deepfake videos with negative social impacts, and 45.2% said they would not share negative deepfakes. It is hard to say whether a deepfake will have positive or negative effects, and the final influences are related to the depth and breadth of audiences’ sharing behavior. This diverges from previous studies, which often associate deepfakes with negative outcomes. Deepfakes present ethical problems, but this study shows their impacts and consequences depend on individuals’ behavioral tendencies.

Testing the Effects of TPB Determinants on Individuals’ Behavioral Intentions on Deepfakes

For the multicollinearity statistics, the maximum value of VIF is 4.254, indicating that there is no problem of multicollinearity between the independent variables. H1a and H1b posited that instrumental attitude (

Regression Model Test Between Attitude and Behavioral Intention.

Dependent variable: behavioral intention.
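The variance inflation factor (VIF) reported for the regression models is defined for predictor j as 1 / (1 − R²_j), where R²_j comes from regressing that predictor on the remaining predictors. As a hedged sketch, the code below covers only the special case of exactly two predictors, where R²_j reduces to the squared Pearson correlation between them. The source does not state how many predictors entered each model, so this is an assumption made for illustration, and the scores and function names are hypothetical.

```python
from math import sqrt


def pearson_r(x, y):
    """Pearson correlation between two equally long score lists."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    return sxy / sqrt(sxx * syy)


def vif_two_predictors(x1, x2):
    """VIF shared by both predictors in a two-predictor model."""
    r = pearson_r(x1, x2)
    if abs(r) >= 1.0:
        raise ValueError("predictors are perfectly collinear")
    return 1.0 / (1.0 - r * r)


# Hypothetical subscale scores (not study data).
instrumental = [1, 2, 3, 4, 5]
experiential = [2, 1, 4, 3, 5]

vif = vif_two_predictors(instrumental, experiential)
print(f"VIF = {vif:.3f}")
```

Commonly cited rules of thumb treat VIF values above 5 or 10 as a sign of problematic multicollinearity, which is consistent with the conclusion drawn from the values reported here.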

When we tested H2a and H2b, the maximum value of VIF was 1.573, indicating that there is no problem of multicollinearity between the independent variables. H2a and H2b concerned the relationships between the subjective norm and the individual’s behavioral intentions on deepfakes (see Table 6). Results revealed that descriptive norm perception (

Regression Model Test Between Subjective Norm and Behavioral Intention.

Dependent variable: behavioral intention.

H3 further investigated the relation between perceived behavioral control and behavioral intention on deepfakes, positing that perceived behavioral control can positively influence behavioral intention (

Regression Model Test Between Perceived Behavioral Control and Behavioral Intention.

Dependent variable: behavioral intention.

In addition to the above, the TPB is a holistic model inclusive of attitudes, subjective norms, perceived behavioral control, and intention (plus behavior). Therefore, this study tested attitude, subjective norms, and perceived behavioral control as a whole, examining how they jointly predict behavioral intentions on deepfakes. As Table 8 shows, only attitude (

Regression Model Test Between Attitude, Subjective Norm, Perceived Behavioral Control and Behavioral Intention.

Dependent variable: behavioral intention.

Testing the Effects of Deepfakes’ Features on Individuals’ Behavioral Intentions on Deepfakes

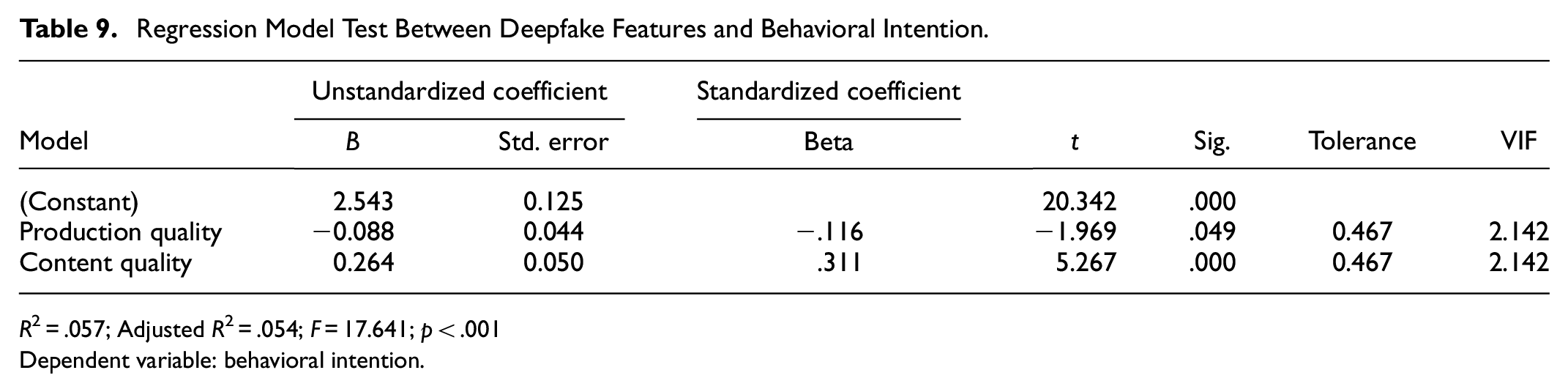

While the TPB factors increased the likelihood of identifying, watching, and/or sharing deepfakes, this study also found that deepfake features (e.g., content and quality) influenced behavioral intentions (see Table 9). RQ3 referred to the association between the quality of deepfake production and content, and behavioral intention. We found that content quality (

Regression Model Test Between Deepfake Features and Behavioral Intention.

Dependent variable: behavioral intention.

Discussion

This study has identified that attitude, subjective norms, and perceived behavioral control significantly affect an individual’s behavioral tendencies in watching and/or sharing deepfakes. Importantly, the effect of attitude on the behavioral intention to watch or share deepfakes is pronounced. Furthermore, we found that gender, age, and education groups differ in their tendencies regarding deepfakes. Finally, we found that deepfake content positively affects individuals’ watching and sharing behaviors: people generally prefer to watch or share interesting and well-made videos, but for deepfake videos, behavioral intentions mainly relate to attitude and subjective norms, and the influence of production quality is minor.

Individual’s Behavioral Tendencies on Deepfakes

Previous studies have rarely explored individual differences in actions regarding deepfakes. This study found that females were more likely to watch or share deepfakes and that young adults (aged 26–40) had higher levels of behavioral tendencies toward watching and/or sharing deepfakes. While there is no explicit literature addressing the reasons why females and young individuals may exhibit greater openness to watching and sharing deepfake products, we propose explanations from two distinct perspectives. Firstly, the divergence in technological focus between genders is noteworthy; males tend to concentrate more on the hardware aspects, while females show a greater interest in the content of the message (Wut & Lee, 2022). In this context, both males and females may derive entertainment value from deepfakes, but males may be more attuned to the underlying principles of deepfakes; in contrast, females may be drawn to aspects such as impersonations, humor, or creative storytelling (Hancock & Bailenson, 2021).

Secondly, user satisfaction positively influences users’ adherence to new technologies and their intentions to use them; this stickiness has in turn been found to positively affect users’ intentions to engage with platform services (Lien et al., 2017). Young people, often referred to as digital natives because of their general media consumption habits, are more familiar with digital technologies. Their inherent openness to and satisfaction with new technologies make them more receptive to, and more intrigued by, deepfake content. Furthermore, they tend to share creative and entertaining technology-related content with others (Borrero et al., 2014). At the same time, performance expectancy significantly affects the usage intentions of young individuals. In essence, when the attitudes or views of young people align with the content of deepfakes (videos and news), they are more likely to believe in and willingly share that content (Shin & Lee, 2022).

Furthermore, education has a significant effect on deepfake behaviors. Behavioral intention scores for three groups (middle school degree and below, high school degree, and associate degree) were higher than for participants with a bachelor’s degree, graduate degree, or above. In other words, the higher the level of education, the lower the behavioral intention regarding deepfakes. This may be because well-educated individuals can better judge the credibility and implications of deepfakes. When choosing what to watch or share, they might favor meaningful and positive content.

Consistent with prior research (e.g., Diakopoulos & Johnson, 2021; Y. Lee et al., 2021; Luttrell & Wallace, 2021), attitude, subjective norm, and perceived behavioral control were determinants of individuals’ behavioral intentions regarding deepfakes. In the survey, experiential attitude had the greater influence, and attitudes were negative toward deepfakes used for darker purposes (Westerlund, 2019). One explanation is that watching and sharing videos are driven mainly by entertainment and curiosity, so individuals tend to respond to deepfakes emotionally and may refuse to watch and/or share videos with negative content (content that may make them unhappy or uncomfortable). This parallels the relation between deepfake content and behavioral tendencies: the majority of participants responded positively when the content of a deepfake was positive and/or left a good impression.

Meanwhile, subjective norms positively affect an individual’s behavioral intentions. In this study, about half of the participants strongly agreed or agreed that their behavioral intention regarding deepfake videos depended mainly on their own opinions. In everyday life, whether individuals watch and share certain kinds of videos depends mainly on their own choice, their understanding of such videos, and their physical and social environment (J. Lee et al., 2017). When audiences encounter deepfakes in particular, they may not be able to judge authenticity at first sight. At that moment, behavioral tendencies rely mainly on descriptive subjective norms.

Furthermore, as we have seen, the relationship between perceived behavioral control and behavioral intention is positive when tested separately, yet the influence becomes very weak when perceived behavioral control is considered along with the other elements. Although Ajzen (1991) stated that the resources and opportunities available to a person must to some extent dictate the likelihood of behavioral achievement, the fact that perceived behavioral control does not predict intention or behavior does not mean that participants are unable to watch deepfakes. On the one hand, when we tested perceived behavioral control separately, its effect was positive. We can therefore infer that perceived behavioral control does concern the ability to engage in the behavior, because it predicts behavioral intention directly. On the other hand, as shown in this study, watching deepfakes, like watching other kinds of videos, depends on various variables and contexts. In other words, it is based not only on the ability to watch a video (e.g., finding platforms) but also on the content, quality, and understanding of the video. This also echoes Ajzen (1991), who found the significance of perceived behavioral control to be affected by attitude, subjective norms, personal experiences, and anticipations of behavioral outcomes.
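The pattern described above, in which a predictor is positive when tested alone but weak when tested jointly, can arise whenever that predictor overlaps with the others. The following is a minimal sketch in Python on purely synthetic data; it is not the SPSS analysis reported in this study, and the variable names, effect sizes, and sample size are illustrative assumptions. Perceived behavioral control (`pbc`) is simulated as correlated with attitude, while intention is generated from attitude and subjective norm only.

```python
import numpy as np


def ols_coefficients(predictors, outcome):
    """Ordinary least squares coefficients, intercept first."""
    design = np.column_stack([np.ones(len(outcome))] + list(predictors))
    coefs, *_ = np.linalg.lstsq(design, outcome, rcond=None)
    return coefs


def simulate(n=5000, seed=0):
    """Synthetic scores (assumed effect sizes, not the study's data)."""
    rng = np.random.default_rng(seed)
    attitude = rng.normal(size=n)
    norm = rng.normal(size=n)
    # Assumption: PBC shares variance with attitude.
    pbc = 0.7 * attitude + 0.7 * rng.normal(size=n)
    # Assumption: intention depends on attitude and norm, not on PBC directly.
    intention = 0.6 * attitude + 0.3 * norm + 0.5 * rng.normal(size=n)
    return attitude, norm, pbc, intention


attitude, norm, pbc, intention = simulate()

# PBC tested separately: its slope absorbs the shared variance with attitude.
pbc_alone = ols_coefficients([pbc], intention)[1]

# PBC tested together with attitude and subjective norm.
pbc_joint = ols_coefficients([attitude, norm, pbc], intention)[3]

print(f"PBC coefficient, tested alone:   {pbc_alone:.3f}")
print(f"PBC coefficient, tested jointly: {pbc_joint:.3f}")
```

Under these assumptions the separate coefficient is clearly positive and the joint coefficient is close to zero, which illustrates one possible mechanism for the result without establishing that it is the mechanism at work in the survey data.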

Deepfakes’ Consequences to the Public and to the Society

Previous studies have highlighted two main directions of research on deepfakes: how to develop better algorithms for the use of deepfakes and how to regulate deepfakes through policy-making (Lu & Chu, 2023). On the first point, the development and easy deployment of deepfakes bring opportunities and benefits to society when deepfakes are regarded as a neutral technology. Deepfake is a GAN-based technology that is increasingly used in a great number of fields; for example, it has benefits for the environment, health care, and education (Hopster, 2021). In such cases, a positive response may indicate that individuals have identified a deepfake with a good meta-frame. Meanwhile, most studies have stressed the potential harms that deepfakes may inflict at various levels, focusing on the capacity of disinformation to deceive individuals, as discussed above (Hughes et al., 2021; Westerlund, 2019). Importantly, researchers may not have fully considered the potential uses of deepfakes in context-bound environments, where numerous poorly explored impacts and risks arise as humans, technologies, ethics, and society interact in novel ways (Galaz et al., 2021). For example, relationships between deepfake impacts and individuals’ behavior are only just beginning to be understood. As the results of this study indicate, from the audience perspective, the social impact of deepfakes can be more or less extensive, and negative or positive, depending on the complex interplay between human practices and a given context (e.g., the physical and digital environment).

Indeed, it is difficult to reduce the consequences of deepfakes to “deepfake and ethical issue,” “deepfake for advantages,” or “deepfake for disadvantages,” because deepfakes cause both intentional and unintentional disinformation, and people’s intentions and motivations toward deepfakes are complex. Accordingly, concepts such as “natural or artificial,” “true or fake,” and “trust or distrust” are all being reconsidered. As Chesney and Citron (2019) argued, deepfakes (and other forms of digital deception) are undoubtedly dangerous, but they may not necessarily be disastrous.

Accordingly, it is timely and important to consider regulations and policies to detect and manage deepfakes. Some countries have implemented laws to respond to deepfakes. For example, the U.S. National Defense Authorization Act (NDAA) addresses deepfake creation technology and possible detection and mitigation solutions (Okolie, 2023). The Swiss Civil Code (art 28 CC) encapsulates several rights and most centrally includes the right to protection of privacy affected by deepfakes (Toparlak, 2023). Notably, unlike with other media technologies, audiences are likely to respond to deepfakes with mixed reactions because deepfakes sometimes have positive impacts. Thus, it is worthwhile to investigate not only the policies and regulations that supervise deepfakes but also the multiple processes that produce positive impacts on the audience, which are crucial for developing this technology and maximizing its benefits.

Accordingly, this study argues that the core step in conceptualizing the impacts of deepfakes, as well as measures to combat negative deepfakes, is to pinpoint the relations between deepfakes and their audiences and, at the same time, to improve platforms’ capabilities to detect deepfakes in a timely manner. Such dilemmas are faced not only by deepfakes but also by other disruptive or innovative technologies. As Hopster (2021) points out, novel technologies, procedures, artifacts, and applications may trigger a process of disruption and social challenges. The core step is to find where the disruption begins.

Theoretical and Practical Implications

The overall results supported the hypothesized relationships built on the TPB paradigm. This study therefore established the associations between the TPB factors and behavioral tendencies toward deepfakes, which are important for understanding the consequences for society and for similar technologies. Accordingly, the current study extended the TPB model to the fields of communication, media, and psychology research. More importantly, our study further expanded the understanding of individual differences in behavioral intentions toward deepfakes (or other similar disruptive technologies). By examining the associations between social interactions and the consequences of deepfakes, our study took a step toward using TPB factors to address social problems in media and provided vital insight for future theory building (see Figure 2).

Schematic representation of behavior and social impact.

This study also has important practical implications. Prior research pointed out that a large number of potential audiences easily accepted the authenticity of deepfake videos after they had been tampered with (Y. Lee et al., 2021). Facing the negative consequences of deepfakes, researchers have suggested ways to detect or combat them (e.g., Hancock & Bailenson, 2021; Westerlund, 2019). Drawing on the findings of this study, we note that distinguishing the authenticity or potential consequences of a deepfake video often requires considerable critical knowledge, which is consistent with the finding that education had no positive influence on the behavioral intention to watch deepfakes. It is therefore crucial to consider approaches to managing negative deepfakes other than technical means. This study thus has implications for the regulation of deepfake videos from a social science perspective.

This research has implications for users of social media, social media platforms, technology developers, and broader society. In our study, we emphasize that deepfakes are indistinguishable from actual footage, so audiences have difficulty knowing, before watching, whether a video is a deepfake and whether its social impact will be positive or negative. Accordingly, we suggest that an intervention is needed that helps people assess the believability of information, reject deepfakes, and refrain from sharing deepfakes when they encounter them on social media. In particular, we suggest that prevention activities aimed at reducing negative deepfake consequences should pay more attention to establishing effective detection rules and to strengthening individuals’ cognitive skills for understanding and distinguishing negative deepfakes. Digital media literacy and users’ information-processing capabilities should be improved (via education and training) to limit negative deepfakes. Meanwhile, because deepfake methods are evolving rapidly, society and social media platforms need a range of methods to help individuals with lower cognitive skills understand and distinguish negative deepfakes. These approaches equip individuals to gain a deeper understanding of deepfake-related behavioral achievements and to consciously resist such videos and, with technological developments, may allow positive versions of deepfake technology to become the predominant form.

Limitations and Future Directions

This study used quantitative methods but, like any study, it has limitations that may direct later work. Firstly, although this study used quantified elements from the TPB, the injunctive norm had no positive influence. Importantly, when we tested perceived behavioral control together with the other factors, it also exerted no positive influence. This may relate to sample size, sampling group, and cultural features. We had a small sample in this study, and most of the participants were Chinese. Moreover, studies and definitions of deepfakes are not yet widespread in China, so perceived behavioral control (e.g., experience) influences behavioral tendencies toward deepfakes only indirectly. Future studies could recruit more participants from diverse backgrounds to test the significance and/or relationships identified here. Secondly, the personal determinants used were gender, age, and education. Other factors, such as hobbies, interests, and curiosity, could be considered. Finally, discussing deepfakes from the perspective of social science involves regarding deepfake video as a neutral technology. This is a novel and intriguing attempt, but deepfakes are known to be indistinguishable from actual footage. A survey on this topic therefore needs to be carefully designed to establish whether individuals can distinguish deepfakes, whereas the questionnaire used in this study asked participants directly whether they could recognize deepfakes. Future work could explore these research questions in more detail and could extend the framework and models to conduct a deeper exploration.

Conclusion

This study used the TPB as a theoretical framework, adding features of deepfakes, to examine the core factors that affect individuals’ behavioral intentions toward deepfakes. We showed that three TPB elements were important for the willingness to discern, watch, and/or share deepfakes, which echoes Ajzen’s study. From this angle, this study is one of the first to assess the relations between individuals’ behavioral intentions and deepfakes, exploring how individuals’ behavioral tendencies influence the effects of deepfakes. This is vital for providing insights into the perception and interpretation of deepfakes in order to tackle adverse uses of this technology. This study also highlighted the need to pay more attention to potential social and psychological interactions in our effort to recognize and restrict the impact of deepfake videos and other online disinformation. Briefly, the potential approaches are education and training strategies that improve people’s cognitive ability and critical knowledge to distinguish whether a video is a deepfake and whether the social impact of that deepfake would be positive or negative. This study has both theoretical and practical implications for individuals, social media platforms, technology developers, and society.

Footnotes

Acknowledgements

The researchers thank all the participants who helped to complete the online survey.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research is funded by the National Social Science Fund of China under the project of “Research on Emotion Transmission Mechanism and Risk Control in Public Emergencies” (Grant No. 23BXW042).

Data Availability Statement

Data sharing not applicable to this article as no datasets were generated or analyzed during the current study.