Abstract

Machine translation post-editing (MTPE) is a process in which humans and machines meet. While previous researchers have adopted psychological and cognitive approaches to explore the factors affecting MTPE performance, little research has simultaneously investigated post-editors’ cognitive traits and the properties of post-editing tasks. This paper addresses this gap by focusing on perceived post-editing self-efficacy (PESE) as a key cognitive trait. Using a mixed-methods design that combines keylogging, screen recording, and subjective ratings, this paper empirically assesses the effects of student translators’ PESE and machine translation (MT) quality on their cognitive effort and post-edited quality. Data were obtained from 106 Chinese student translators on the cognitive effort of post-editing tasks (indicated by processing time per word, pause density, pause duration per word, and perceived cognitive effort) and on post-edited quality (indicated by average accuracy and fluency scores). Results show that MT quality significantly influences both the process and the product of PE tasks. PESE affected participants’ perceived cognitive effort and post-edited quality, but not their actual cognitive effort. No significant interaction effect of MT quality and PESE on PE performance was observed.

Keywords

Introduction

Research Background

In recent years, machine translation (MT) has been increasingly employed in the translation and localization industry (Afzaal et al., 2022; Koponen, 2016; Shah et al., 2024). The surge in content requiring translation, coupled with the need to enhance speed and productivity, has spurred the widespread adoption of machine translation post-editing (MTPE). Post-editing (PE) is the process in which human post-editors revise and refine MT outputs (Guerberof Arenas, 2009a), making it a prominent form of human-machine interaction. Extensive research has compared PE and purely human translation in terms of human effort (Plitt & Masselot, 2010), speed (Green et al., 2013), and translation quality (Jia & Sun, 2023), and has examined differences between PE tasks based on different MT technologies (Jia et al., 2019). Recent studies have focused on identifying the features of, and the connections between, the PE process and the final post-edited product (Nunes Vieira, 2016; Yang et al., 2023). The term “PE performance” has been used to encompass both process and product data in PE tasks (De Almeida, 2013).

Process-oriented studies have aimed to identify causal factors and reliable indicators of the effort exerted in PE activities, particularly cognitive effort. On the product side, the potential correlations between cognitive and other types of effort and the quality of the post-edited text—mostly measured in terms of fluency, accuracy, and error count—have garnered increasing interest.

Among the factors influencing PE performance, task properties and individual characteristics have attracted significant attention. On the task property side, MT quality has emerged as a critical factor, with numerous studies examining its correlation with PE effort and the resulting translation quality (Gaspari et al., 2014; Krings, 2001; Lacruz et al., 2014; O’Brien, 2011). Regarding post-editors’ characteristics, individual traits such as professional experience (De Almeida, 2013; Guerberof Arenas, 2014), translation skills (Green et al., 2013), and motivation, self-regulation, and critical thinking (Yang & Wang, 2020) have been explored in relation to MTPE performance. However, other cognitive variables, such as self-efficacy, have been largely overlooked in the field.

Self-efficacy, as defined by Bandura (1997), refers to people’s beliefs about their capabilities to produce the required levels of performance. This construct has been positively linked to accomplishment and self-control adjustments in complex tasks, and has been shown to correlate strongly with cognitive task performance (Cifuentes-Férez et al., 2024; Rahimi & Zhang, 2019; Saks, 1995). Moreover, the relationship between self-efficacy and cognition, as well as its facilitative effect on translation performance, has sparked considerable interest in translation and interpreting studies (e.g., Araghian et al., 2018; Lee, 2014, 2018; Zhou et al., 2022).

Despite the growing body of research on PE performance, the role of post-editors’ self-efficacy remains largely unexplored. Previous studies have indicated a potential link between PE tasks and self-efficacy. To begin with, individuals’ self-efficacy when executing specific tasks significantly influences the outcomes of those tasks (Schwarzer, 1992). Additionally, PE constitutes a problem-solving process, where post-editors address lexical and syntactic issues stemming from the source text (ST) or MT outputs (Nitzke, 2019). Post-editors can effectively resolve these issues by utilizing external resources, thereby reducing their PE effort and enhancing the final output quality (Daems et al., 2017). During this process, information-seeking behavior, strongly associated with self-efficacy (Clark, 2017; Gkorezis et al., 2017), is crucial for acquiring external resources and solving problems (Nitzke, 2019).

Given these considerations, we hypothesize that self-efficacy may serve as a predictor for both the effort post-editors invest and the quality of their post-editing outcomes. Despite extensive research, previous studies have seldom concurrently investigated individual cognitive factors like self-efficacy alongside task-related attributes such as MT quality in the context of post-editing tasks.

Research Objectives

To address the aforementioned research gaps, the present paper empirically assesses the main and interaction effects of machine translation quality and perceived post-editing self-efficacy on the post-editing performance of Chinese student translators. Examining the interplay between these variables can deepen our understanding of the cognitive processes involved in machine translation post-editing and shed light on how individual attributes and task-related factors jointly affect translator performance. Such insights can inform the design of more effective MTPE training and foster a deeper understanding of how self-efficacy functions in translation tasks.

Literature Review

PE Performance

Analogous to translation performance, PE performance can be considered the culmination of participants’ actions while refining machine translations. Existing research suggests that a comprehensive analysis of PE performance should encompass both process and product data (Cui et al., 2023; Zhou et al., 2022).

PE effort has received considerable attention in PE process research. The much-cited model of post-editing effort proposed by Krings (2001) distinguishes three aspects: temporal, technical, and cognitive effort. Temporal effort pertains to the time spent on post-editing, while technical effort comprises the keystrokes and cut-and-paste operations needed to create the post-edited version. Cognitive effort, the most difficult aspect to capture and measure, entails identifying errors in the MT output and determining the appropriate steps to correct them (Koponen et al., 2020). The actual cognitive effort expended by post-editors has been assessed through pause indicators, such as pause density and pause duration, and fixation metrics, such as fixation duration and fixation counts, using keylogging and eye-tracking techniques (Carl et al., 2011; Krings, 2001; Lacruz et al., 2014; O’Brien, 2011). Some studies have also used think-aloud protocols (TAPs; O’Brien, 2005; Sun et al., 2021) and physiological measures (Herbig et al., 2019) to gauge actual cognitive effort. In addition, the NASA Task Load Index (NASA-TLX) developed by Hart and Staveland (1988), which comprises six workload-related subscales (mental demand, physical demand, temporal demand, effort, performance, and frustration level), has been adopted and adapted in translation research to measure translators’ perceived cognitive effort (Sun & Shreve, 2014).

In product-oriented studies of PE performance, the focus primarily rests on post-edited quality, encompassing aspects such as adequacy, clarity, accuracy, fluency, error counts, and human raters’ preferences for PE outputs. Human rating (or ranking) and error analysis have been the predominant methods for evaluating PE output quality (Carl et al., 2011; Lommel, 2018).

MT Quality and PE Performance

MT quality is widely regarded as the determining factor of PE effort (Krings, 2001). To investigate the role of MT quality in PE performance, researchers have assessed MT output quality with various methods, including human rating and automatic evaluation metrics. These studies, however, have produced inconsistent results. Krings (2001) recruited human raters to assess rule-based MT output sentences on a five-point Likert scale and observed that the relationship between MT quality and PE effort, in terms of attention allocation, was not always linear. O’Brien (2011) used automatic evaluation metrics to measure MT quality and found a negative correlation between MT output quality and PE effort. According to Sanchez-Torron and Koehn (2016), post-editing MT output with higher BLEU scores results in better final product quality while reducing PE effort in terms of time and operations. Daems et al. (2017) found that more MT errors were associated with higher average pause ratios but, conversely, also with lower fixation counts, fewer production units, and lower human-targeted translation edit rates (HTER). One reason for these conflicting results is that these studies treated MT quality as the essential factor affecting PE performance without controlling for ST complexity (Jia & Zheng, 2022).

The above studies demonstrate the impact of MT output on PE effort. As for its effect on post-edited quality, Koponen (2016) concludes that when experienced post-editors work with high-quality MT output, the quality of the final output surpasses that of human translation of the same ST. Castilho and Guerberof Arenas (2018) collected both process and product data in PE tasks and found that the more errors the original MT contained, the lower the quality of the final PE output; they also reported a correlation between MT quality and PE time. Interestingly, they observed that translation experience appeared to positively influence processing speed but not error rates.

Upon reviewing prior studies, we identified three research gaps. First, while the predictive power of MT quality for PE performance has attracted considerable attention, the findings have been inconsistent, calling for more empirical evidence. Second, as Stymne and Ahrenberg (2012) suggest, relevant research should be extended to different language pairs, including Chinese-to-English settings. Third, the interaction between MT quality and translators’ individual characteristics has rarely been explored. According to Krings (2001, p. 173), “translation process research, in general, has found great individual variation in nearly all the characteristics of translators, and in general, the same is likely to hold for nearly any activity that humans are involved in.” However, evidence of the interactive impact of these two types of factors on PE performance is limited to a few studies (e.g., De Almeida, 2013; Moorkens et al., 2015). The interplay between MT quality, post-editors’ individual traits, and prominent cognitive factors such as self-efficacy therefore warrants further investigation in MTPE studies.

Self-Efficacy and Task Performance in Translation Studies

Self-efficacy is a crucial factor in an individual’s life, as it underpins motivation and behavior, and may inspire better performance or persistence in challenging circumstances (Lee, 2018). Translation is a complex activity that involves not only language competence and expertise but also cognitive and emotional experiences (Hansen, 2005). The concept of self-efficacy has already been addressed in translation studies, where it has been positively correlated with source-language reading comprehension and decision-making in translation problem-solving (Bolaños-Medina, 2014). Translating self-efficacy is defined as the confidence translators have in their ability to perform adequately in translation activities (Haro-Soler & Kiraly, 2019). The growing body of empirical research in translation studies highlights the need to investigate the relationship between participants’ subjective traits (e.g., motivation, self-efficacy, and self-regulation) and their performance in different types of translation tasks (Jiménez Ivars et al., 2014; Lee, 2018; Yang & Wang, 2020; Zhou et al., 2022). The effects of translators’ self-efficacy on their performance in written translation tasks have been explored in a number of mixed-methods studies.

Araghian et al. (2018) employed TAPs and keystroke logging to explore the effects of translation self-efficacy on trainee translators’ use of translation problem-solving strategies, finding that participants with lower self-efficacy tended to spend more time translating and exhibited more repeated production attempts and more extensive revision. Zhou et al. (2022) used triangulation to examine the relationship between written translation task complexity and the performance of Chinese students with high or low translation self-efficacy, finding that highly self-efficacious students produced better translation output than their less self-efficacious counterparts. In addition, instruments such as translation self-efficacy scales have either been adapted from general self-efficacy scales (Bolaños-Medina & Núñez, 2018) or newly developed (Haro-Soler, 2018; Yang et al., 2021) to measure translators’ self-efficacy in written translation tasks.

Given the rapid development of MT systems, it has been recommended that other task types, such as MTPE, be considered in translation performance research (Zhou et al., 2022). MTPE differs considerably from written translation, as it requires a different set of skills, including expertise in risk assessment, strategic selection, and consultation (Nitzke, 2019). However, few studies have explored the relationship between MTPE performance and self-efficacy, a cognitive variable closely connected with language learning and problem-solving. Empirical evidence on self-efficacy in PE tasks is therefore needed.

The Present Study

In light of the above, the present study aims to analyze Chinese student translators’ post-editing (PE) performance by measuring their PE effort (cognitive effort in this study) and post-edited quality (indicated by the accuracy and fluency of final outputs), while considering the two variables of MT quality and student translators’ perceived post-editing self-efficacy (PESE). Data on PE tasks performed by student translators with high and low PESE are collected. The source texts (STs) have similar complexity levels, but the MT output quality levels vary.

The conceptual framework in Figure 1 delineates the variables contributing to PE performance and the measures used to capture it. The framework posits that MT quality and perceived post-editing self-efficacy influence both participants’ PE behavior and product quality, and that MT quality may interact with perceived post-editing self-efficacy in influencing PE performance. MT quality and perceived post-editing self-efficacy are treated as independent variables, each with two levels (high and low). The dependent variables, PE effort and PE quality, together represent PE performance. PE effort is measured through keylogging metrics (processing time per word, pause density, and pause duration per word) and through participants’ perceived cognitive effort, while PE quality is assessed through mean accuracy and fluency scores assigned by human raters. The three research questions are: (1) How does MT quality influence student translators’ performance in PE tasks? (2) Does student translators’ perceived post-editing self-efficacy affect their PE performance? If so, how? (3) Do MT quality and perceived post-editing self-efficacy have an interaction effect on student translators’ PE performance? If so, how?

Research framework.

Methodology

Participants

A total of 106 third-year English majors (9 males and 97 females) aged between 19 and 24 (M = 21, SD = 1.3) were recruited from a comprehensive Chinese university. All participants were native Chinese speakers with English as their second language (L2). Their L2 proficiency was measured with the LexTALE (Lexical Test for Advanced Learners of English), a vocabulary knowledge test for medium to highly proficient speakers of English as an L2. Lemhöfer and Broersma (2012), who compared the predictive power of LexTALE with that of self-ratings, indicate that test-takers scoring above 60 can be considered proficient in English. The participants had similar levels of experience using MT as a support resource during translation but had not taken any PE training courses. Ethical approval was obtained from the Hunan University ethics committee, and all participants signed an informed consent form before participating. The Letter of Information and Consent Form set out the research purposes and procedures, the anticipated benefits, the right to withdraw from the study without repercussions, provisions for confidentiality, and an explanation of the expected outcomes of the study.

Following the median-split guideline suggested by Malik et al. (2021), students were divided into two groups based on their PESE scores. The half of the students (N = 53) with PESE scores above the median were assigned to the high-PESE group, and the remaining half (N = 53) to the low-PESE group. The two groups differed significantly in PESE (p < .001) but not in LexTALE scores (p > .05).
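The median split can be illustrated with a short Python sketch. It assumes each participant has a single PESE score (for example, the mean of the scale items) and uses a hypothetical helper, `median_split`; participants scoring exactly at the median fall into the low group here, which is one of several possible tie-handling rules and not necessarily the one the authors used.

```python
from statistics import median


def median_split(scores: dict[str, float]) -> dict[str, str]:
    """Label each participant PESEH if their score exceeds the sample median, else PESEL.

    Hypothetical helper: scores equal to the median are assigned to PESEL.
    """
    cut = median(scores.values())
    return {pid: ("PESEH" if score > cut else "PESEL") for pid, score in scores.items()}


# Hypothetical PESE scores for four participants (illustrative values only).
groups = median_split({"P01": 5.6, "P02": 3.9, "P03": 4.8, "P04": 6.2})
print(groups)  # {'P01': 'PESEH', 'P02': 'PESEL', 'P03': 'PESEL', 'P04': 'PESEH'}
```

With an even number of distinct scores this yields two equal halves, as in the 53/53 split reported above; ties at the median would make the groups unequal and would need an explicit rule.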

Materials

The materials in this experiment included two English STs, four MT outputs (two of high quality and two of low quality), an adapted post-editing self-efficacy (PESE) scale, a pre-task questionnaire on participants’ background information, and post-task questionnaires eliciting participants’ views on the experimental process.

Source Text

Two English news texts of similar complexity, selected from newsela.com, were chosen as STs. ST1 consists of 132 words and ST2 of 126 words. Both texts contain references to specific places and institutions, such as “Darfur” and “the International Criminal Court in The Hague,” as well as specialized expressions such as “PR coup.” These terms, and the background knowledge they require, can pose interpretive challenges, so participants needed to engage in information seeking during post-editing to comprehend the texts accurately and complete their tasks.

To verify that the two STs were similar in complexity, this study employed two types of measurement: readability level and syntactic complexity (Jia & Zheng, 2022). As shown in Figure 2, the readability indexes suggest that ST1 and ST2 require 18 and 16 years of schooling, respectively, for effective comprehension. Flesch Reading Ease scores show that ST1 (27.2) and ST2 (33.6) are similar in reading ease. Sentence syntax similarity was measured with the Coh-Metrix automatic text analysis tool (version 3.0), using syntactic structure similarity between adjacent sentences (SYNSTRUTa) and across all sentence combinations (SYNSTRUTt). The results showed that ST1 (0.044/0.057) and ST2 (0.015/0.022) present similar complexity.

Summary of ST complexity.
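For reference, the Flesch Reading Ease scores reported above are computed from word, sentence, and syllable counts with the standard Flesch formula. The Python sketch below assumes those counts are already available (syllable counting is usually heuristic and tool-dependent), and the counts in the example are invented for illustration, not taken from ST1 or ST2.

```python
def flesch_reading_ease(words: int, sentences: int, syllables: int) -> float:
    """Standard Flesch Reading Ease; higher scores indicate easier text."""
    if words <= 0 or sentences <= 0:
        raise ValueError("word and sentence counts must be positive")
    words_per_sentence = words / sentences
    syllables_per_word = syllables / words
    return 206.835 - 1.015 * words_per_sentence - 84.6 * syllables_per_word


# Invented counts: 100 words, 5 sentences, 150 syllables.
print(round(flesch_reading_ease(100, 5, 150), 3))  # 59.635
```

Because the formula penalizes long sentences and polysyllabic words, a lower score indicates harder text; ST1 (27.2) is therefore marginally harder to read than ST2 (33.6).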

MT Output

The two source texts were entered into six different machine translation systems: Google Translate, Bing Translate, Baidu Translate, Youdao Translate, SYSTRAN, and Systran Premium Translator. To maximize the contrast in MT output quality, the outputs of the two systems whose quality differed most significantly were used as materials for the PE tasks.

The 12 outputs (six systems × two texts) were assessed against TAUS’s (2013) fluency and adequacy criteria on a 4-point Likert scale, where for fluency “1” denotes incomprehensible and “4” faultless, and for adequacy “1” denotes utterly inadequate and “4” fully adequate. Three professional translators were recruited to rate the MT outputs. Before the evaluation, the raters were briefed and calibrated on the TAUS (2013) criteria to ensure that they understood and applied the rating scale consistently. No contested scores were observed after the ratings were completed; the few minor discrepancies that emerged were discussed and reconciled to reach consensus final scores.

The quality of SYSTRAN’s output was rated lower than that of the other five NMT systems, whereas the output of Systran Premium Translator was rated significantly higher than that of SYSTRAN in both fluency and adequacy for both texts, with all differences in average scores being statistically significant. SYSTRAN and Systran Premium Translator are both products of the SYSTRAN company but cater to different user needs with different features: SYSTRAN is intended for a wide range of users, from individuals and small businesses to large enterprises, whereas Systran Premium Translator is a specific product within the SYSTRAN suite that offers advanced features and enhanced support for professional users (SYSTRAN, n.d.). Kendall’s W (W = 0.944, p < .001) indicated strong and significant agreement among the raters. Consequently, the outputs of SYSTRAN and Systran Premium Translator were used in the post-editing tasks.
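Kendall’s coefficient of concordance can be computed directly from the raters’ scores. The Python sketch below implements the standard formula with a correction for tied ranks (ties are common on a 4-point scale); it is an illustration only, not necessarily how the authors obtained W = 0.944, and the example ratings and helper names are hypothetical.

```python
from collections import Counter


def average_ranks(values: list[float]) -> list[float]:
    """Rank values in ascending order, giving tied values their mean rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1  # positions i..j are 0-based
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks


def kendalls_w(ratings: list[list[float]]) -> float:
    """Kendall's W with tie correction; ratings[r][i] is rater r's score for item i."""
    m, n = len(ratings), len(ratings[0])
    rank_rows = [average_ranks(row) for row in ratings]
    rank_sums = [sum(row[i] for row in rank_rows) for i in range(n)]
    mean_sum = m * (n + 1) / 2
    s = sum((r - mean_sum) ** 2 for r in rank_sums)
    # Tie correction: sum of (t^3 - t) over every group of t tied scores, per rater.
    ties = sum(t ** 3 - t for row in ratings for t in Counter(row).values())
    denominator = m ** 2 * (n ** 3 - n) - m * ties
    if denominator == 0:
        raise ValueError("W is undefined when every rater gives all items the same score")
    return 12 * s / denominator


# Hypothetical 4-point fluency scores: three raters, six MT outputs.
example = [
    [4, 3, 3, 2, 4, 1],
    [4, 3, 2, 2, 4, 1],
    [4, 4, 3, 2, 3, 1],
]
print(kendalls_w(example))
```

W ranges from 0 (no agreement) to 1 (identical rankings), so a value such as 0.944 indicates near-identical orderings of the outputs across raters.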

Instruments

Various instruments were employed to collect both qualitative and quantitative data. As shown in Figure 3, the PESE scale and the keylogging tool Inputlog were used to collect quantitative data, including participants’ perceived post-editing self-efficacy and keystroke indicators such as processing time per word, pause density, and pause duration per word. The pre- and post-task questionnaires, as well as the screen-recording software EVCapture, yielded both qualitative and quantitative data.

Summary of data collection methods and tools.

Inputlog is a keystroke-logging tool commonly employed in translation process research to gather pause data during translation or post-editing tasks (Swar & Mohsen, 2023). Participants submitted Word documents and the IDFX files exported from Inputlog, which the researchers imported into their own copy of Inputlog to view detailed pause indexes and the resources participants accessed for information retrieval. EVCapture was installed on each participant’s computer. Participants were instructed to start recording at the beginning of their post-editing tasks and to stop upon completion, and afterwards they submitted the recorded .mp4 files to the researchers. Before analyzing the other data, the researchers reviewed these recordings to ensure that no participant had violated the experimental protocol, for example by pasting MT outputs directly into grammar-correction software, which could have influenced the results. The screen recordings also provided supplementary evidence, such as the specific content participants retrieved, which aided interpretation of the findings.
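Inputlog reports these pause indexes itself, but the underlying arithmetic can be sketched in Python to make the three keystroke indicators concrete. The sketch assumes a 1,000 ms pause threshold, normalization by the number of ST words, and task time measured from the first to the last keystroke; the study does not specify these choices, so all three are assumptions, and the function and field names are hypothetical rather than Inputlog’s.

```python
from dataclasses import dataclass

PAUSE_THRESHOLD_MS = 1000  # assumption: the study does not report its pause threshold


@dataclass
class PauseMetrics:
    processing_time_per_word: float  # seconds per ST word
    pause_density: float             # number of pauses per ST word
    pause_duration_per_word: float   # seconds of pausing per ST word


def pause_metrics(key_times_ms: list[int], st_word_count: int,
                  threshold_ms: int = PAUSE_THRESHOLD_MS) -> PauseMetrics:
    """Derive word-normalized effort indicators from sorted keystroke timestamps (ms)."""
    if len(key_times_ms) < 2 or st_word_count <= 0:
        raise ValueError("need at least two keystrokes and a positive word count")
    # Inter-keystroke intervals; those at or above the threshold count as pauses.
    gaps = [later - earlier for earlier, later in zip(key_times_ms, key_times_ms[1:])]
    pauses = [gap for gap in gaps if gap >= threshold_ms]
    total_s = (key_times_ms[-1] - key_times_ms[0]) / 1000
    return PauseMetrics(
        processing_time_per_word=total_s / st_word_count,
        pause_density=len(pauses) / st_word_count,
        pause_duration_per_word=sum(pauses) / 1000 / st_word_count,
    )


# Hypothetical log: six keystrokes while editing a two-word segment.
print(pause_metrics([0, 200, 1500, 1700, 4700, 4900], st_word_count=2))
```

Normalizing every indicator by word count is what allows texts of slightly different length, such as the 132-word ST1 and the 126-word ST2, to be compared directly.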

The pre-task questionnaire (see Appendix C for details) comprised questions about participants’ basic information, such as their language background, experience with MT software, and attitudes toward it. The post-task questionnaires (see Appendix D for details) contained questions about participants’ perceived MT output quality, their online consultation behavior, and the perceived difficulty of the post-editing task. Participants were also asked to write a reflection on their overall performance and on their emotions and thoughts regarding the task. In addition, a scale adapted from the NASA Task Load Index (NASA-TLX; Hart & Staveland, 1988), covering the mental demand, effort, frustration, and performance subscales, was incorporated into the post-task questionnaire to assess participants’ perceived cognitive effort in the preceding task (see Appendix B for details).

The measurement of self-efficacy should be relevant to the domain of functioning, as domain-specific self-efficacy scales predict particular behaviors better than general self-efficacy scales (Bandura, 2006). As discussed above, post-editing differs from other translation tasks in aspects such as task complexity and tool usage, which calls for a distinct self-efficacy instrument. This study therefore uses the term post-editing self-efficacy (PESE) to denote the confidence post-editors have in their ability to perform adequately in PE activities. To explore the influence of PESE on post-editing performance, we adapted the translating self-efficacy scale (TSE-C) of Yang et al. (2021), which originally comprised 15 items rated on a five-point Likert scale. The TSE-C, which assesses confidence in internal and external competence and psycho-physiological beliefs for managing translation tasks under challenging conditions, exhibited high reliability (Cronbach’s α = .93). For this study, we framed the items in terms of self-efficacy specific to post-editing tasks and expanded the response format to a seven-point Likert scale while preserving the original structure. To validate this adaptation, we conducted an exploratory factor analysis (EFA) with 163 participants who did not take part in the main study. The adapted PESE scale (see Appendix A for details) showed high internal consistency (Cronbach’s α = .98), high sampling adequacy (KMO = 0.978), and a cumulative explained variance of 83.35%.
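Cronbach’s α, used above to report the reliability of the TSE-C and PESE scales, compares the sum of the item variances with the variance of participants’ total scores. The following Python sketch of the standard formula is for illustration only; the authors presumably used a statistics package, and the response matrix and function name below are hypothetical.

```python
from statistics import variance


def cronbach_alpha(responses: list[list[float]]) -> float:
    """Cronbach's alpha for a participants-by-items matrix of ratings.

    alpha = k / (k - 1) * (1 - sum of item variances / variance of total scores)
    """
    k = len(responses[0])
    if k < 2:
        raise ValueError("alpha needs at least two items")
    # zip(*responses) turns rows (participants) into columns (items).
    item_variances = [variance(item) for item in zip(*responses)]
    total_variance = variance([sum(row) for row in responses])
    return k / (k - 1) * (1 - sum(item_variances) / total_variance)


# Hypothetical 7-point ratings: four participants answering three items.
ratings = [
    [6, 7, 6],
    [3, 4, 3],
    [5, 5, 6],
    [2, 2, 3],
]
print(round(cronbach_alpha(ratings), 3))
```

When items move together across participants, the total-score variance grows faster than the summed item variances and α approaches 1, which is why a value of .98 signals highly consistent items.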

Procedure

The experiment consisted of two sessions, as shown in Figure 4. The first session began with a warm-up to familiarize participants with the experimental procedures, followed by a short pre-task questionnaire collecting basic demographic information. Participants then took the LexTALE test and reported their scores; all participants met the 60-point threshold. Finally, participants completed and submitted the PESE scale. A 10-min break was then provided. These activities were designed to be completed within one hour. In the second session, participants performed two post-editing tasks, one with high-quality and one with low-quality MT output, each followed by a post-task questionnaire. No time limits were imposed, so that participants’ natural performance and responses could be better assessed.

Experiment procedure.

Data Quality and Statistical Analysis

Process data (processing time per word, pause density, pause duration per word, and post-editing behavior) were recorded with the screen-capture software EVCapture and the keylogging tool Inputlog. Two professional translators with over five years of experience in C-E translation training were recruited to evaluate the product data, namely the post-edited texts. Owing to technical problems, including computer malfunctions and file-saving failures, four students were unable to submit their Inputlog files or post-edited texts, and their data were excluded. The final dataset therefore comprised data from 51 low-PESE and 51 high-PESE students.

Descriptive and parametric analyses were conducted in R, with linear mixed-effects models (LMEs) as the focus of the parametric analyses. Figure 5 shows the packages and functions employed: the lme4 package (Bates et al., 2014) and the lmerTest package (Kuznetsova et al., 2017), together with the anova() function, were used to assess fixed effects and to obtain standard errors, effect sizes, and significance values; the effects package (Fox et al., 2020) was used to calculate model effects; and the emmeans package (Lenth et al., 2021) was used for post-hoc analyses. The dataset comprised 204 observations (102 participants × 2 tasks). All six dependent variables were tested for normality using the Shapiro-Wilk test and visual inspection. Variables that failed to meet the normality criterion (p < .05) were transformed: log and square-root transformations were both tried, and the one that most effectively achieved a normal distribution was chosen, following McDonald (2009) and in line with the practice of Jia and Sun (2023). Square-root transformations proved most effective and were applied uniformly to all variables to satisfy the assumptions of the statistical models.

RQ-based data analysis format.
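The choice between log and square-root transformations can be sketched in Python. Because the Shapiro-Wilk test is not in the Python standard library (SciPy provides it as `scipy.stats.shapiro`), this sketch swaps in a simpler criterion, the absolute sample skewness of the transformed values, so it only approximates the procedure described above. The function names are hypothetical, and the log option assumes strictly positive values.

```python
import math
from statistics import fmean, pstdev


def skewness(values: list[float]) -> float:
    """Moment-based (population) skewness; 0 for perfectly symmetric data."""
    m, s = fmean(values), pstdev(values)
    if s == 0:
        return 0.0
    return sum((v - m) ** 3 for v in values) / (len(values) * s ** 3)


TRANSFORMS = {"log": math.log, "sqrt": math.sqrt}


def pick_transform(values: list[float]) -> tuple[str, list[float]]:
    """Return the transform (and transformed data) with the smallest |skewness|.

    Stand-in criterion: the study used Shapiro-Wilk tests and visual inspection.
    """
    if any(v <= 0 for v in values):
        raise ValueError("log transform requires strictly positive values")
    candidates = {name: [f(v) for v in values] for name, f in TRANSFORMS.items()}
    best = min(candidates, key=lambda name: abs(skewness(candidates[name])))
    return best, candidates[best]


# Hypothetical right-skewed values (squares of 1..5): the square root restores symmetry.
name, transformed = pick_transform([1.0, 4.0, 9.0, 16.0, 25.0])
print(name)  # sqrt
```

Choosing per variable, as here, is only the first step; the study then applied the square-root transformation uniformly to all six variables.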

Six LME models were built, one for each dependent variable: processing time per word, pause density, pause duration per word, perceived cognitive effort, average accuracy score, and average fluency score, all of which are continuous variables. The fixed effects in each model were the main effects of MT quality (high or low, referred to as MTH and MTL) and PESE (high or low, referred to as PESEH and PESEL) and their interaction. In all six models, participants were entered as a random effect. During data analysis, we first examined whether there was a significant main effect and then checked the interaction effect of MT quality and PESE.
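Fitting the LME models themselves requires a mixed-model library (the study used lme4 and lmerTest in R), but the point estimates of the contrasts reported in the Results can be illustrated without one. When every participant contributes one observation per MT condition and the model includes the full MT × PESE fixed-effect structure, the model’s estimated cell means coincide with the observed cell means, so equal-weight marginal means and their differences (as emmeans reports them) can be obtained by simple averaging; standard errors, F tests, and degrees of freedom cannot. The Python sketch below assumes this complete, balanced design, and its data and function names are hypothetical.

```python
from collections import defaultdict
from statistics import fmean

MT, PESE = 0, 1  # positions of the two factors in a cell key (mt_level, pese_level)


def cell_means(records):
    """records: iterable of (participant, mt_level, pese_level, value) tuples."""
    cells = defaultdict(list)
    for _participant, mt, pese, value in records:
        cells[(mt, pese)].append(value)
    return {cell: fmean(values) for cell, values in cells.items()}


def marginal_mean(means, factor, level):
    """Equal-weight average of the cell means at one level of a factor."""
    return fmean(m for cell, m in means.items() if cell[factor] == level)


def contrast(records, factor, high, low):
    """Difference between two marginal means, e.g. MTH minus MTL."""
    means = cell_means(records)
    return marginal_mean(means, factor, high) - marginal_mean(means, factor, low)


# Hypothetical data: four participants, each post-editing both MT conditions.
records = [
    ("P1", "MTH", "PESEH", 2.0), ("P1", "MTL", "PESEH", 2.6),
    ("P2", "MTH", "PESEH", 2.2), ("P2", "MTL", "PESEH", 3.0),
    ("P3", "MTH", "PESEL", 2.4), ("P3", "MTL", "PESEL", 2.8),
    ("P4", "MTH", "PESEL", 2.0), ("P4", "MTL", "PESEL", 3.2),
]
print(round(contrast(records, MT, "MTH", "MTL"), 2))        # -0.75
print(round(contrast(records, PESE, "PESEH", "PESEL"), 2))  # -0.15
```

Read this way, the MT contrast reported for processing time per word (β = −.67) matches the difference between the marginal means MTH = 2.18 and MTL = 2.85.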

Results

To assess differences in participants’ post-editing performance across conditions, the LME models described above were fitted to each dependent variable. Main effects of MT quality and PESE and their interaction were considered significant at p < .05.

Process Data: Cognitive Effort

Processing Time Per Word

Processing time per word in the PE tasks was recorded automatically by Inputlog; the interaction effect is plotted in Figure 6. The main effect of MT was significant (F (1, 101) = 58.96, p < .001): both PESEH and PESEL participants spent significantly less time processing MTH (M = 2.18) than MTL (M = 2.85) (β = −.67, SE = 0.09, t = −7.68, p < .001). However, neither the main effect of PESE (F (1, 101.16) = 0.17, p > .05) nor the interaction effect (F (1, 101.16) = 1.95, p > .05) was significant.

Interaction effect between PESE and MT on processing time per word.

Pause Density

Pause density was recorded automatically by Inputlog; the interaction effect is plotted in Figure 7. The main effect of MT was significant (F (1, 101.49) = 58.7, p < .001): both PESEH and PESEL participants showed significantly lower pause density in MTH tasks (M = 1.17) than in MTL tasks (M = 1.71) (β = −.53, SE = 0.07, t = −7.66, p < .001). However, neither the main effect of PESE (F (1, 99.97) = 0.11, p > .05) nor the interaction effect (F (1, 101.49) = 0.02, p > .05) was significant.

Figure 7. Interaction effect between PESE and MT on pause density.

Pause Duration Per Word

The participants’ pause duration per word was also recorded by Inputlog, and the interaction effect was plotted in Figure 8. The main effect of MT quality (F (1, 200) = 4.89, p < .05) was significant: both PESEH and PESEL participants showed significantly shorter pause duration per word in MTH tasks (M = 1.00) than in MTL tasks (M = 1.36) (β = −.36, SE = 0.16, t = −2.21, p < .05). However, neither the main effect of PESE (F (1, 200) = 0.52, p > .05) nor the interaction effect of the two independent variables (F (1, 200) = 0.07, p > .05) was significant.
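The three Inputlog-based indicators reported above can be illustrated with a short stdlib-only Python sketch. It rests on assumptions this section does not state: that a pause is any gap between consecutive logged events at or above a fixed threshold (1,000 ms here, a hypothetical value), that processing time is approximated by the span from the first to the last logged event, that all three indicators are normalised by the number of source-text words, and that times are reported in seconds. The function and field names are hypothetical; Inputlog computes its own measures, and the study's method section defines the operational measures actually used.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass(frozen=True)
class EffortIndicators:
    processing_time_per_word: float  # seconds per word
    pause_density: float             # number of pauses per word
    pause_duration_per_word: float   # seconds of pausing per word


def effort_indicators(timestamps_ms: Sequence[float], n_words: int,
                      pause_threshold_ms: float = 1000.0) -> EffortIndicators:
    """Derive word-normalised effort indicators from keystroke timestamps.

    Assumptions (hypothetical, for illustration): a pause is any gap between
    consecutive logged events of at least `pause_threshold_ms`, and every
    indicator is divided by the number of source-text words.
    """
    if n_words <= 0:
        raise ValueError("n_words must be positive")
    if len(timestamps_ms) < 2:
        raise ValueError("need at least two logged events")
    ts = sorted(timestamps_ms)
    gaps = [later - earlier for earlier, later in zip(ts, ts[1:])]
    pauses = [gap for gap in gaps if gap >= pause_threshold_ms]
    total_seconds = (ts[-1] - ts[0]) / 1000
    return EffortIndicators(
        processing_time_per_word=total_seconds / n_words,
        pause_density=len(pauses) / n_words,
        pause_duration_per_word=sum(pauses) / 1000 / n_words,
    )


# Hypothetical six-event log for a two-word segment: two gaps exceed 1,000 ms.
print(effort_indicators([0, 200, 1500, 1700, 4700, 4900], n_words=2))
```

Changing `pause_threshold_ms` changes both pause measures but not processing time per word, which is one reason pause-based indicators are usually reported together with the threshold used.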

Figure 8. Interaction effect between PESE and MT on pause duration per word.

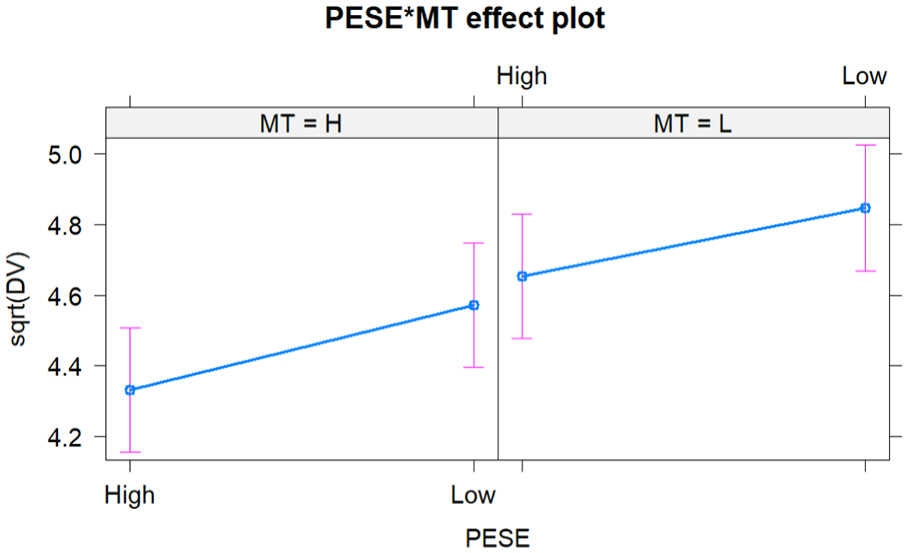

Perceived Cognitive Effort

The perceived cognitive effort of PE tasks was rated subjectively by the participants, and the interaction effect was plotted in Figure 9. The main effects of MT quality (F (1, 100.73) = 20.62, p < .001) and PESE (F (1, 100) = 4.02, p < .05) were significant. Across both MTH and MTL tasks, PESEH participants (M = 4.49) perceived significantly less cognitive effort than PESEL participants (M = 4.71) (β = −.22, SE = 0.11, t = −2, p < .05). Both PESEH and PESEL participants perceived significantly less cognitive effort when post-editing MTH (M = 4.45) than MTL (M = 4.75) (β = −.3, SE = 0.07, t = −4.54, p < .0001). The interaction effect of the two independent variables was not significant (F (1, 100.73) = 0.12, p > .05).

Figure 9. Interaction effect between PESE and MT on perceived cognitive effort.

Product Data: Final PE Output Quality

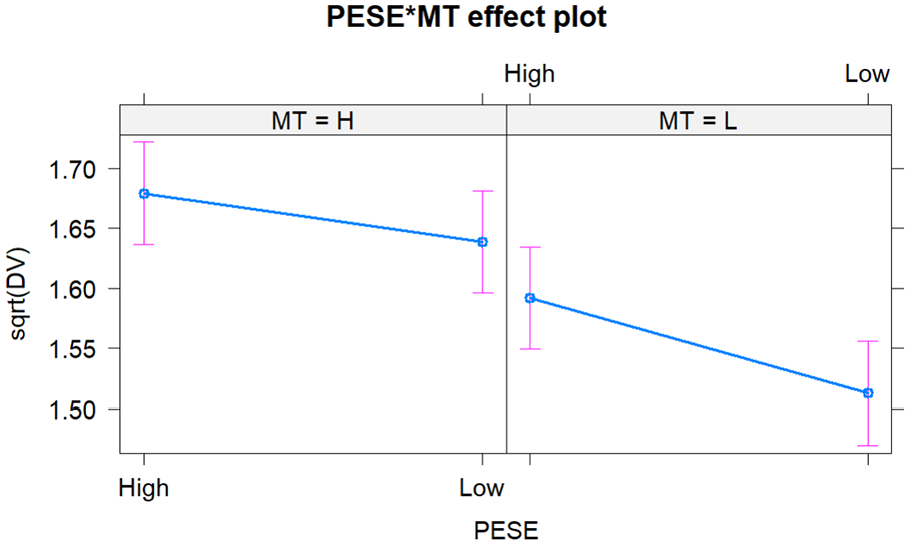

Average Accuracy Score

The average accuracy score of the final post-edited outputs was evaluated by two professional translators, and the interaction effect was plotted in Figure 10. The main effects of MT quality (F (1, 101.84) = 24.94, p < .001) and PESE (F (1, 99.99) = 7.43, p < .01) were significant. Across both MTH and MTL tasks, the final PE products of PESEH participants (M = 1.64) were significantly more accurate than those of PESEL participants (M = 1.58) (β = .06, SE = 0.02, t = 2.73, p < .05). Both PESEH and PESEL participants achieved higher accuracy in MTH tasks (M = 1.66) than in MTL tasks (M = 1.55) (β = .107, SE = 0.02, t = 4.99, p < .001). The interaction effect of the two independent variables was not significant (p > .05).
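One way an average score could be aggregated from two raters is sketched below in Python. The aggregation rule (average the two raters' scores for each segment, then average across segments), the segment as the unit of scoring, and the function name are assumptions for illustration; the rating scale and procedure actually used are specified in the study's method section. The same function would apply unchanged to fluency scores.

```python
from statistics import mean
from typing import Sequence


def average_score(rater_a: Sequence[float], rater_b: Sequence[float]) -> float:
    """Average quality score for one participant's post-edited text.

    Hypothetical rule: average the two raters' scores for each segment,
    then average those segment means across all segments.
    """
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("both raters must score the same non-empty set of segments")
    return mean((a + b) / 2 for a, b in zip(rater_a, rater_b))


# Hypothetical accuracy scores for three segments from two raters.
print(average_score([2, 1, 2], [1, 1, 2]))  # 1.5
```

When both raters score every segment, this equals the grand mean of all scores; keeping the per-segment step makes it easy to inspect where the two raters diverge.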

Figure 10. Interaction effect between PESE and MT on average accuracy score.

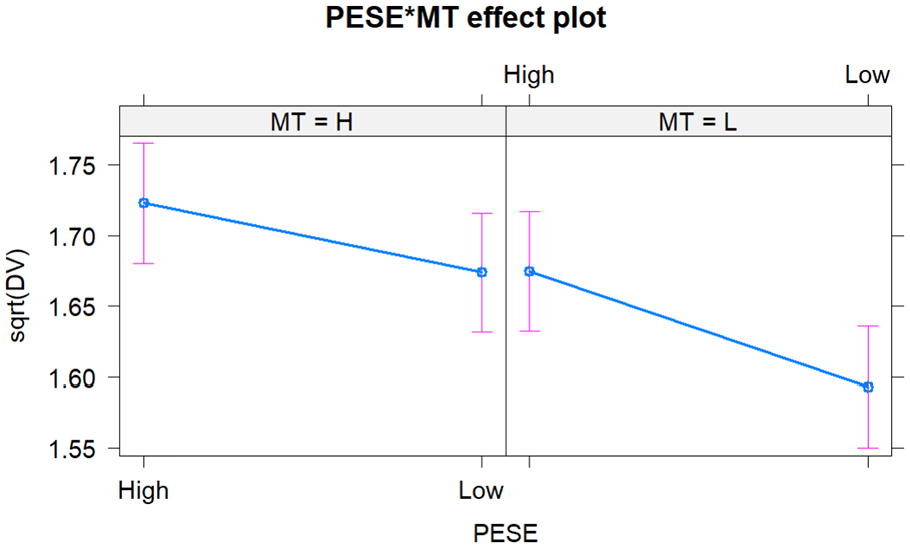

Average Fluency Score

The average fluency score of the final post-edited outputs was also rated by the two professional translators, and the interaction effect was plotted in Figure 11. The main effects of MT quality (F (1, 101.19) = 11.87, p < .001) and PESE (F (1, 100) = 7.43, p < .01) were significant. Across both MTH and MTL tasks, the final PE products of PESEH participants (M = 1.7) were significantly more fluent than those of PESEL participants (M = 1.63) (β = .07, SE = 0.02, t = 2.74, p < .05). Both PESEH and PESEL participants achieved higher fluency in MTH tasks (M = 1.7) than in MTL tasks (M = 1.63) (β = .06, SE = 0.02, t = 3.45, p < .001). The interaction effect of the two independent variables was not significant (p > .05).

Figure 11. Interaction effect between PESE and MT on average fluency score.

Discussion

Integrating both process-based and product-based approaches, our analysis aimed to determine the influence of MT quality, students’ PESE, and their potential interaction on PE effort and post-edited quality.

Process-Based Findings

Effects of MT Quality on PE Effort

The results revealed that MT quality significantly affected PE effort for students with both high and low PESE, suggesting that high-quality MT output could help students save time and energy and feel less stressed during MTPE. Specifically, processing time per word was shorter for MTH than for MTL. Furthermore, MT quality was negatively related to the cognitive effort of both PESE groups.

This result was expected and is consistent with previous research on the impact of MT quality on PE time, even though those studies used different methods to evaluate MT quality (e.g., Koehn & Germann, 2014). Higher MT quality is generally taken to mean better fluency and adequacy, a view shared by both the raters and the participants, as the post-task questionnaires showed. Higher-quality MT can help resolve lexical, grammatical, and syntactic problems posed by the STs, ultimately reducing the time spent on consultation and deliberation. However, this finding contrasts with that of Zouhar et al. (2021), who report a weak and non-linear relationship between MT quality and PE time, suggesting that minor improvements in MT might not significantly reduce PE time. One possible explanation for the discrepancy is that Zouhar et al. (2021) used the automatic metric BLEU to assess MT quality. In addition, their study involved 30 professional translators post-editing from English into Czech. Differences in quality metrics, language pairs, and participants’ expertise may therefore all contribute to the inconsistency.

The analyses of pause density and pause duration per word showed that MT quality was negatively related to PE effort: students exerted greater actual cognitive effort when post-editing lower-quality MT outputs. This finding supports earlier observations by O’Brien (2011), who asserts that MT output quality is negatively associated with PE effort, and by Nunes Vieira (2016), who found that higher MT quality correlates with reduced cognitive effort for post-editors. Inputlog data, which timestamp how long participants stayed on specific pages, suggest that although the recorded pauses do not definitively indicate reading or cognitive processing of texts, they frequently coincided with screen activity indicative of reading (mouse movements rather than active text editing). This pattern was particularly evident with lower-quality MT outputs, where participants stayed longer on the task documents and paused more frequently, suggesting that more cognitive processing was needed to comprehend the text. As O’Brien (2006) points out, lower-quality MT requires multiple readings to achieve adequate comprehension, indicating that cognitive processing occurs continuously during this activity.

Regarding perceived cognitive effort, students reported that post-editing MTL was more effortful than post-editing MTH. This is understandable, since lower-quality output contains more problems that must be identified, evaluated, and revised. Our findings align with previous research. For instance, Jia and Zheng (2022) investigated the interaction effect between ST complexity and MT quality on the difficulty of neural machine translation PE tasks and found a negative correlation between MT quality and PE task difficulty; that is, PE tasks become less difficult as MT quality improves. Similarly, Zhou et al. (2022) found that complex tasks demand more cognitive effort, and Wang (2021) concluded that student translators invested more cognitive effort in complex tasks than in simple ones, as indicated by their longer production durations and pause times.

Overall, irrespective of PESE level, MT quality has a highly significant negative impact on PE effort indicators.

Effects of PESE on PE Effort

Contrary to our expectations, PESE (1) had no significant effect on actual PE effort but (2) was negatively associated with perceived cognitive effort. The first finding contrasts with our initial prediction that students with high PESE would search for information and solve problems more efficiently, and would therefore expend considerably less PE effort than those with low PESE. This prediction rested on two lines of evidence: Nitzke (2019) highlights that lexical problems are the primary contributors to PE task difficulty and often require online information consultation, and numerous studies have demonstrated that self-efficacy promotes performance in information retrieval tasks (Clark, 2017; Gkorezis et al., 2017).

A potential explanation for this unexpected result is that the easy access and rapid output of MT have made it an efficient way for students to acquire information and knowledge across cultures and disciplines (Carl et al., 2016; Yang & Wang, 2020). Pre-task questionnaires revealed a generally favorable attitude toward MT, with participants indicating that MT technologies often assist them in their translation assignments and practice. Consequently, regardless of their self-efficacy level, most students tended to trust the lexical meaning, syntax, and translation style provided by MT, whether they rated its quality as high or low in the post-task questionnaires. This suggests that post-editing trainers should design their teaching content carefully. Specifically, they should select both high- and low-quality MT texts for students to identify and correct errors during post-editing. Such an approach may encourage students to view machine translation objectively, neither dismissing the technology entirely nor depending on it excessively.

The effect of PESE on perceived cognitive effort can be explained by how the two constructs are defined. Perceived cognitive effort is often defined in the literature as post-editors’ post-task perception of the effort they exerted (Gaspari et al., 2014). Post-editing self-efficacy, a concept adapted from social cognitive theory, refers to students’ confidence in their ability to identify and solve problems and to use strategies in PE tasks. The revised NASA-TLX scale, used to assess participants’ perceived cognitive effort in the task they had just completed, includes questions on mental demand, effort, and frustration (Sun & Shreve, 2014). Because these ratings are self-appraisals, students who are more confident in their abilities are likely to rate the same task as less demanding. Indeed, although students in both the PESEH and PESEL groups acknowledged that post-editing MTL was more demanding than post-editing MTH, those with higher self-efficacy reported greater motivation and confidence in handling the more demanding task in the post-task questionnaires.

Product-Based Findings

Effect of MT Quality on Post-Edited Quality

Irrespective of PESE level, MT quality had a significant main effect on both accuracy and fluency scores: lower MT quality led to poorer post-edited quality, which corresponds to previous findings. For example, Sanchez-Torron and Koehn (2016) investigated the effects of varying MT quality on post-edited quality and concluded that inferior MT output led to worse translation quality after post-editing. Wu (2019) reports that higher text complexity led to greater inaccuracy and dysfluency in students’ performance. Zhou et al. (2022) examined the impact of task complexity on product quality, as indicated by accuracy and fluency, and found that increased task complexity resulted in lower translation quality.

Contrary to the current findings, Zouhar et al. (2021) observed no significant relationship between post-edited output quality and MT system performance across 13 MT systems. Again, this discrepancy may stem from differences in language pairs, participants’ translation experience, and their levels of translation skill. Our results also diverge from those of Sun et al. (2021), who argue that difficult tasks had no influence on the translation quality of challenging texts but marginally reduced the translation quality of simpler texts. This inconsistency may be attributed to the different nature of human translation and PE tasks. As Jia and Sun (2023) demonstrate, in human translation the ST is the sole source of information available to the translator, whereas MTPE relies on both the ST and its MT output. In MTPE, the ST serves mostly as a reference for correcting MT errors, while the MT output serves as the base version of the translation. Because ST complexity in this study was controlled at similar levels, MT quality emerged as the primary factor influencing PE task difficulty. Thus, higher MT quality led to lower task difficulty and improved product quality for all students, regardless of their PESE levels.

Effects of PESE on Post-Edited Quality

Regardless of MT quality level, PESE had a significant main effect on both accuracy and fluency scores. This outcome is not surprising, as an individual’s belief in their ability to perform a specific task significantly influences the outcome (Schwarzer, 1992). Interestingly, PESE significantly predicted post-edited quality but not time or actual cognitive effort. A possible explanation is that PESE enhanced product accuracy and fluency by promoting more resourceful strategy use (Hoffman & Schraw, 2009; Lu et al., 2022; Zhou et al., 2022). PACTE Group (2005) analyzed the types of resources used during the translation process and identified two primary sources: internal and external resources. Internal resources encompass the translator’s existing knowledge, whereas external resources comprise all information-gathering processes. Online information retrieval served as the primary external support in this study. Screen recordings revealed that almost all students searched for words, while some also used other retrieval methods, such as encyclopedias, to search for related information. Although Inputlog files allow us to track how long users spend on specific pages (i.e., the Word file containing the task or the information retrieval pages), no significant differences in information retrieval time were observed between participant groups. However, post-task questionnaires indicated that students with low and high PESE differed in their information-seeking activities. Specifically, PESEH students reported using a variety of online resources, including monolingual dictionaries, bilingual dictionaries, parallel corpora, and web encyclopedias. In contrast, PESEL students relied primarily on bilingual dictionaries and spent little time reviewing the content they retrieved. Consequently, the retrieval quality of PESEL students was likely poorer, which may have led to lower-quality final products.

Regarding internal support, participants could refer to the source text and draw on their own knowledge to find a suitable translation when they deemed the MT output unacceptable (Nitzke, 2019). Zhou et al. (2022) suggest that relying solely on external resources may be futile when solving translation problems such as uncertainties and ambiguities. Resourceful strategy use, that is, the combined use of external and internal support, may therefore yield better results in PE tasks. This may explain why the PESEH group consistently produced higher-quality post-edited texts than the PESEL group.

However, the present study did not identify significant interaction effects between MT quality and PE self-efficacy on PE effort and post-edited quality.

Concluding Remarks

Conclusion and Implications

The prevalence of MT presents both advantages and challenges for students seeking to use the technology successfully (Yang & Wang, 2020). The present study set out to investigate the roles and interplay of MT quality and individuals’ PESE in MTPE tasks. The answers to the three research questions are as follows: (1) MT quality has a highly significant negative impact on indicators of PE effort and a positive impact on indicators of post-edited quality; (2) PESE has no significant influence on actual PE effort, but it is negatively associated with perceived cognitive effort and has significant positive effects on post-edited quality; and (3) no significant interaction effects of MT output quality and PESE on PE effort or post-edited quality were found.

These findings suggest that post-editing instructors could integrate several strategies into their teaching to improve educational outcomes. First, instructors are encouraged to purposefully select machine translation texts of varying quality. By working with a range of MT outputs, students can better understand the nuances and inherent limitations of MT, such as uneven quality and contextual misinterpretations. This may help students develop a more realistic attitude toward machine translation and learn to use it judiciously rather than depending on it excessively. Second, the study highlights the importance of fostering students’ PESE in PE tasks, given its significant connection with students’ perceptions and final output quality. We propose that PE educators design diverse learning and practice activities based on the four sources of self-efficacy: enactive mastery experience, vicarious experience, verbal persuasion, and physiological and emotional states (Bandura, 1997). Specifically, instructors could assign regular group work to increase students’ mastery experience in performing PE tasks and to facilitate vicarious learning from peers. Persuasive feedback and commentary on student performance may also help bolster their self-efficacy.

Limitations and Future Research

This study offers insights into the performance and cognitive mechanisms of student translators when their post-editing self-efficacy and the quality of the machine translation vary. However, its limitations should be considered when interpreting the results. The participants were all students with similar educational backgrounds, and the study focused exclusively on one language pair and translation direction (L2 to L1). Future research could enhance generalizability by including professional translators and varying translation directionality. Moreover, the complexity of the experimental procedures might have fatigued the participants, potentially affecting their performance in the second PE task. Although a mixed-methods design combining keylogging, screen recording, and questionnaires was employed, integrating additional behavioral research tools such as eye-trackers could provide richer data. Such tools would allow precise measurement of the time spent on specific words and sentences, as well as fixation measures such as fixation counts and durations, offering deeper insights into cognitive mechanisms under specific conditions.

Looking ahead, there is substantial scope for further exploring the relationship between self-efficacy and post-editing. Subsequent studies could examine the pedagogical implications of PESE, such as how collective efficacy influences group performance in post-editing and whether self-efficacy benefits the acquisition of post-editing skills. Given that this study observed a link between self-efficacy and PE performance, future research could replicate these experiments with different language pairs or develop post-editing self-efficacy scales to test this link more rigorously.

Supplemental Material

sj-docx-1-sgo-10.1177_21582440241291624 – Supplemental material for When Student Translators Meet With Machine Translation: The Impacts of Machine Translation Quality and Perceived Self-Efficacy on Post-Editing Performance

Supplemental material, sj-docx-1-sgo-10.1177_21582440241291624 for When Student Translators Meet With Machine Translation: The Impacts of Machine Translation Quality and Perceived Self-Efficacy on Post-Editing Performance by Xinyang Peng, Xiangling Wang and Xiaoye Li in SAGE Open

Footnotes

Acknowledgements

We would like to thank all the participants in the study.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This study was supported by the National Social Science Foundation of China [22BYY015].

Ethics Statement

The research was approved by the Foreign Studies College Research Ethical Review Board of Hunan University. Before participating, all participants provided written informed consent. The Letter of Information and Consent Form contained essential details, including the goals and procedures of the research, the expected benefits, the option to withdraw from the study without penalty, assurances regarding data confidentiality, and a detailed description of the anticipated results of the study.

Data Availability Statement

The datasets generated during and/or analyzed during the current study are available from the corresponding author on reasonable request.

References
