Abstract

Global polling has seen an increase in endorsement of conspiratorial beliefs in recent years, with social media platforms receiving criticism for their potential role in facilitating this spread. The current study analyzed the presence of emotions and themes in conspiratorial content (revolving around conspiracy theories or conspiratorial ideas) on Twitter (now “X”) and Reddit when compared to scientific and general discussion, and the ability of emotions and themes to predict engagement (likes, shares etc.) in conspiratorial spheres. Approximately four million words worth of content were collected from Twitter and Reddit and analyzed using the Linguistic Inquiry and Word Count (LIWC) tool to determine concentration of different emotions and themes. Conspiratorial discussions were found to show significantly higher rates of anger, anxiety, power, and death compared to both scientific and general discussions across both platforms. Engagement was successfully predicted within conspiracy discussions; higher levels of anger, anxiety, power, and death predicted higher levels of engagement in conspiracy discussions. Contrary to previous findings, positive emotions were predictive of lower levels of engagement in conspiracy-related discussions. The findings suggest that conspiratorial content relies on powerful negative emotional responses to gain traction and increase audience engagement. The findings are discussed in the context of cognitive processes involved in the adoption of conspiracy theories, and we offer suggestions for researchers developing educational interventions and social media platforms to reduce the spread of conspiracy theories online.

Recently, much focus has been placed on the prevalence of unfounded beliefs, with over 60% of articles mentioning “misinformation” (11,170 of 18,257) and “conspiracy theories” (2,841 of 4,710) being published between 2021 and 2024 according to the Scopus database. While “misinformation” refers broadly to inaccurate or misleading information, “conspiracy theories” are increasingly being treated as a subset of misinformation (Ren et al., 2023) and refer specifically to a group acting in secret for reasons unknown to the public (Douglas & Leite, 2017). They are defined by their contradiction to the widely accepted narrative for events and by their insinuation of malevolent influence (Goertzel, 1994).

Consuming conspiratorial content and subscribing to conspiracy theories have been linked to a range of negative outcomes (Douglas et al., 2015; Sassenberg et al., 2023). During the COVID-19 pandemic, belief in conspiracy theories predicted lower rates of vaccine uptake and protective behaviors in several international studies (Constantinou et al., 2021; Yang et al., 2021). Endorsement of conspiracy theories has also been linked to intergroup prejudice (Jolley et al., 2020; Tahmasbi et al., 2021), elevated anxiety (Douglas & Sutton, 2018; Leibovitz et al., 2021) and workplace incivility (Douglas & Leite, 2017). More recently, conspiratorial beliefs have been found to frequently strain relationships and result in negative social outcomes for those who endorse them (van Prooijen et al., 2022). Conspiracy theories are often diffused online, with social media platforms identified as a common medium (Kou et al., 2017; Stecula & Pickup, 2021; Wang et al., 2019).

Content that goes “viral” on social media, meaning it is diffused at great speed and volume, often contains emotional content or framing (Brady et al., 2020). Brady and colleagues find that using “moral-emotional” language in social media posts can increase their diffusion by 20%. It has also been claimed that factually inaccurate content spreads faster than factual content on social media platforms (Vosoughi et al., 2018). The effect of negative emotionality on the spread of information online may be explained by the human tendency to be heavily influenced by perceived negative outcomes. Historically posited as “negativity bias” (Rozin & Royzman, 2001) and “loss aversion” (Kahneman & Tversky, 1979), this phenomenon outlines our propensity to give more weight to negative stimuli when compared to equally positive stimuli.

Outrage and other negative emotions have widely been found to receive greater attention and engagement online in the form of “likes” and “shares” in certain contexts, for example, following a natural disaster, an act of terrorism, or during public health crises (Li et al., 2020; van Prooijen & Douglas, 2017). These events are breeding grounds for conspiracy theories, which may be adopted as a means of reducing “cognitive dissonance” (Bowes et al., 2021). Cognitive dissonance refers to a specific form of discomfort and anxiety that arises when individuals are faced with information that conflicts with their existing worldviews (Festinger, 1957). For example, during times of uncertainty (such as after a significant, negative event), certain individuals will experience this phenomenon because the occurrence of the event does not align with their view that the world is safe and predictable. Douglas and Leite (2017) describe this phenomenon in their seminal paper on the main motivations of conspiracy theory adoption. The authors posit three primary motivations, including an “epistemic” motivation: individuals may adopt conspiracy beliefs to make sense of events and reduce uncertainty. Taken together, these findings suggest it is likely that engaging with emotionally charged conspiratorial content increases in uncertain times, allowing individuals to keep their existing worldviews intact by refuting mainstream explanations that cause them discomfort.

Engagement is also strongly predicted by social factors such as identity. Bakir and McStay (2018) raise the common concern of misleading information in political discourse, noting highly emotional content as a driver of engagement. For example, Rathje et al. (2021) find that in political discourse, out-group animosity increases the chance of a post being shared by 67% on average. This aligns with Social Identity Theory (Tajfel & Turner, 1978), the notion that a strong sense of group identity leads individuals to frame in-groups positively relative to out-groups. This extends to information evaluation, in that individuals will be more likely to believe information to be true when it aligns with in-group values or beliefs (Pereira et al., 2023). Sharing emotionally charged content can also allow the signaling of group membership and loyalty (Douglas & Leite, 2017). Conspiracy theories often provide such narratives, framing an out-group as malevolent and responsible for negative in-group outcomes. As such, they may be spread and adopted as a means of preserving social identities and facilitating connection with in-group members (Peters & Kaasima, 2007; Robertson et al., 2022). These factors comprise another of the primary motivations for conspiracy belief, the “social motivation” (Douglas & Leite, 2017).

A consistent finding is that more emotionally intense language, both positive and negative (e.g., anger, anxiety, awe, love), is associated with higher engagement. This holds for microblogs (Stieglitz & Dang-Xuan, 2014), video platforms (Nelson-Field et al., 2013), news articles (Berger & Milkman, 2012) and private messaging services (Yu et al., 2020). These findings are also replicated experimentally by Berger and Milkman (2012). After successful manipulation of emotionality, findings show that both positive and negative high-arousal content are more likely to be shared in social media environments. It is proposed that extreme positive and negative emotions result in “activation” (inducing a state of arousal) that increases both action, such as sharing content (Berger & Milkman, 2012), and cognitive load, reducing the ability to assess truth (Martel et al., 2020).

While some studies show consistency, conflicting results emerge depending on content and medium. For example, Nelson-Field et al. (2013) find that positive high-arousal content is shared 30% more frequently than negative high-arousal content in videos. However, Lee et al. (2021) report that during and after a mass shooting, anger and sadness best predict engagement on Twitter. Similarly, Stieglitz et al. (2014) note that in political discourse, negative emotions drive engagement more effectively than positive emotions, contradicting Nelson-Field’s earlier findings. On Facebook, Choi et al. (2020) suggest that users are less likely to share or comment on stories with positive emotions, being drawn to content that evokes sadness. These findings suggest a complex relationship between emotion and engagement, one that likely varies by medium, audience, topic, and context.

How emotion impacts engagement in conspiratorial discussions online remains relatively unexplored. Both text and video involving conspiratorial content are found to have higher levels of negative emotional language when compared to scientific discussions (Fong et al., 2021) and videos aimed at debunking conspiracy theories, respectively (Kim & Chen, 2022). Fong and colleagues also note a significantly higher presence of language related to death and religion in discussions about conspiracies than in those about science. Negative emotional language is also shown to increase in conspiratorial discussions as the length of the discussion increases (Zollo et al., 2015). In the context of the COVID-19 pandemic, a study by Rains et al. (2021) compares linguistic features of conspiracy theories to other COVID-19 topics and racial injustice. The study shows no consistent, significantly higher presence of positive or negative emotions in the conspiracy discussions compared to other topics. The study also investigated which features were associated with increased retweets in the conspiracy discussion, finding the categories of “friends” and “home” to be the best predictors, with affective processes (including positive and negative emotions) showing a small negative relationship with engagement. There is a dearth of studies investigating how emotions and themes drive engagement within conspiratorial discussions broadly across platforms and, to our knowledge, there are none investigating engagement in conspiracy discussions compared to control topics such as general discussion. As such, this takes a central focus in the current study.

The way in which emotion influences engagement online has been explored using several different methodologies (Schreiner et al., 2019). To categorize emotions, studies use automated tools to measure emotional valence (whether a post is positive, negative or neutral), also known as “sentiment analysis” (Choi et al., 2020; Stieglitz & Dang-Xuan, 2014). Other methods include manual categorization of emotional themes (Berger & Milkman, 2012; Kim & Chen, 2022; Lee et al., 2021), and self-report measures of emotional responses to stimuli (Martel et al., 2020). To categorize text into a larger number of discrete emotions and themes, tools have been developed and are often used in relevant research. One such tool is the Linguistic Inquiry and Word Count (LIWC; Pennebaker et al., 2015). LIWC is the most commonly used tool for linguistic analysis with over 5,000 citations and many uses in the social sciences (Addawood et al., 2019; Berger & Milkman, 2012; Brady et al., 2021; Fong et al., 2021; Giachanou et al., 2021; Klein et al., 2019). As a validated tool, it is appropriate for use in the current study.

Conspiracy beliefs are associated with many negative individual and societal outcomes (Douglas et al., 2015) and their proliferation on social media is likely to rapidly change form as mediums and methods for creating misleading content continue to develop. As such, it is timely and important to understand which characteristics make a conspiracy theory “successful” in terms of reaching the widest audience possible. In this paper we expand on previous research to investigate how conspiratorial content is diffused online. The aims of the current study are to further explore the emotions and themes present in conspiratorial discussions, and how they translate to engagement. Themes and emotional language present in conspiratorial content will be compared to both scientific and general discussion across two social media platforms, Twitter and Reddit. Further, we aim to examine which of these features are associated with higher engagement within conspiracy discussions. To determine whether general emotionality predicts engagement, we will investigate the ability of negative emotions and positive emotions to predict engagement, as well as the predictive ability of themes present in conspiracy discussions.

We expected that conspiratorial discussions would show a higher concentration of language representing negative emotions, particularly anxiety, anger, and sadness, in line with previous research (Fong et al., 2021; Lee et al., 2021). Furthermore, we expected that these negative emotions would be associated with higher engagement across both platforms. We also hypothesized that positive emotions would predict higher engagement, as has been found in previous studies (Berger & Milkman, 2012; Nelson-Field et al., 2013; Stieglitz & Dang-Xuan, 2014). It was also expected that conspiratorial discussions would have more frequent mentions of common themes including power, death, family and religion (Fong et al., 2021; Rains et al., 2021) and that these themes would show a positive relationship with engagement. The study expands on previous research by assessing whether the differences extend across multiple social media platforms using controls of both scientific discussion and general discussion, a method that has not previously been used. We aim to clarify mixed results regarding predictors of engagement in conspiracy discussions and improve the collective understanding of how conspiratorial content is diffused online.

Method

Materials and Procedure

Platform-specific Application Programming Interfaces (APIs) were used with the Python programming language to collect posts, tweets and comments using parameters specified by the researchers. Twitter (now “X”) granted permission for the researchers to collect text for the purposes of the study and, at the time of data collection, the use of Reddit for data collection was registered on its website, as the platform required. All data were de-identified by removing account names. Ethical approval was provided by Bond University Human Research Ethics Committee. The “PRAW” package (Boe, 2021) was used to collect content from Reddit and the “Scweet” package (Jeddi, 2021) for Twitter. These packages are specifically designed to collect text from Reddit and Twitter, respectively, making them appropriate for the current study. The validity of this approach has been demonstrated in prior research investigating psycholinguistics on both Twitter and Reddit (Fong et al., 2021; Giachanou et al., 2021; Klein et al., 2018; Shahi et al., 2021).

Level of engagement was recorded via the number of “upvotes” for Reddit (a system whereby audience members can increase or decrease visibility of posts and comments) and “retweets” for Twitter (similarly increasing visibility and a way of showing support to one’s network). These measures were used to determine whether the presence of emotional themes influenced the level of engagement a post or comment received within conspiratorial discussions (being conversations within a conspiracy-related community on Reddit or containing a conspiratorial hashtag on Twitter).

For the purpose of control, comparison samples of non-conspiratorial social media content were required. For the control text, posts and comments were collected from Reddit sorted by most recent without other filters. Similarly on Twitter, tweets and replies were collected without specifications of accounts or hashtags. Unfiltered material is used as a baseline of general discussion on each platform, against which a fair comparison to conspiratorial and scientific discussion can be made.

The data were collected from each social media platform in accordance with the function of each. Where Twitter uses hashtags to categorize content, Reddit consists of communities called “subreddits” that pertain to different topics, each with their own rules and guidelines. On Twitter, hashtags were utilized to collect tweets and replies for each category. For scientific discussions, the hashtag “#science” was used. For conspiratorial discussions, several hashtags were used to collect the same number of posts, including “#plandemic,” “#flatearth,” “#newworldorder,” and “#arrestfauci,” among others. We acknowledge that results may differ depending on chosen keywords; however, the above were the most common conspiratorial hashtags in circulation at the time of data collection and are considered to cover a variety of conspiratorial ideas. Similar strategies have been used in previous research, including using trending search terms and keywords that represent conspiratorial ideas (Kim & Chen, 2022; Rains et al., 2021). Additionally, Twitter accounts that regularly engage in scientific or conspiratorial discussion were identified (determined by regular use of the relevant hashtags mentioned above), and posts and comments from these accounts were also collected for analysis.

As Reddit is separated by communities, social media posts from targeted communities were sampled to represent each discussion type. Conspiratorial content from Reddit was taken from the communities “r/Conspiracy,” “r/ConspiracyTheories,” and “r/NoNewNormal.” The community “r/NoNewNormal” has since been banned from Reddit. For scientific discussion on Reddit, posts and comments were collected from the discussions in “r/Science,” “r/AskScience,” and “r/EverythingScience.” It is noted that in social media discourse, there is a percentage of individuals whose participation is understood to be solely facetious and intended to antagonize others (“trolls”). Distinguishing genuine contributions from these would increase the accuracy of analyses; however, previous research has estimated that only 4% to 12% of contributions on r/conspiracy fall in this category (Klein et al., 2019). As such, while the noise introduced by these contributions may reduce statistical power, the large sample available diminishes the risk of Type II error.
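The Reddit sampling described in this section might be sketched as follows. This is a minimal illustration under stated assumptions, not the authors' collection script: the function `collect_subreddit` and the `Record` type are hypothetical names, and sorting by newest is an assumption (the text specifies "most recent" only for the general-discussion control). The `reddit` argument is expected to behave like a PRAW client; only attributes that PRAW exposes on submissions and comments (`title`, `selftext`, `body`, `score`) are read.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Record:
    """One de-identified piece of text with its engagement score."""
    community: str
    kind: str   # "post" or "comment"
    text: str
    score: int  # Reddit score: upvotes minus downvotes


def collect_subreddit(reddit, name: str, limit: int = 100) -> List[Record]:
    """Collect recent posts and their comments from one community.

    `reddit` is expected to behave like a `praw.Reddit` client. Author
    names are never read, so the records are de-identified by design.
    """
    records: List[Record] = []
    # Sorting by newest is an assumption made for this sketch.
    for submission in reddit.subreddit(name).new(limit=limit):
        body = f"{submission.title}\n{submission.selftext}".strip()
        records.append(Record(name, "post", body, submission.score))
        # Drop "load more comments" placeholders, then flatten the tree.
        submission.comments.replace_more(limit=0)
        for comment in submission.comments.list():
            records.append(Record(name, "comment", comment.body, comment.score))
    return records
```

With PRAW installed, a client created through `praw.Reddit` with one's own API credentials could be passed as `reddit`, and the function called once per community named above.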

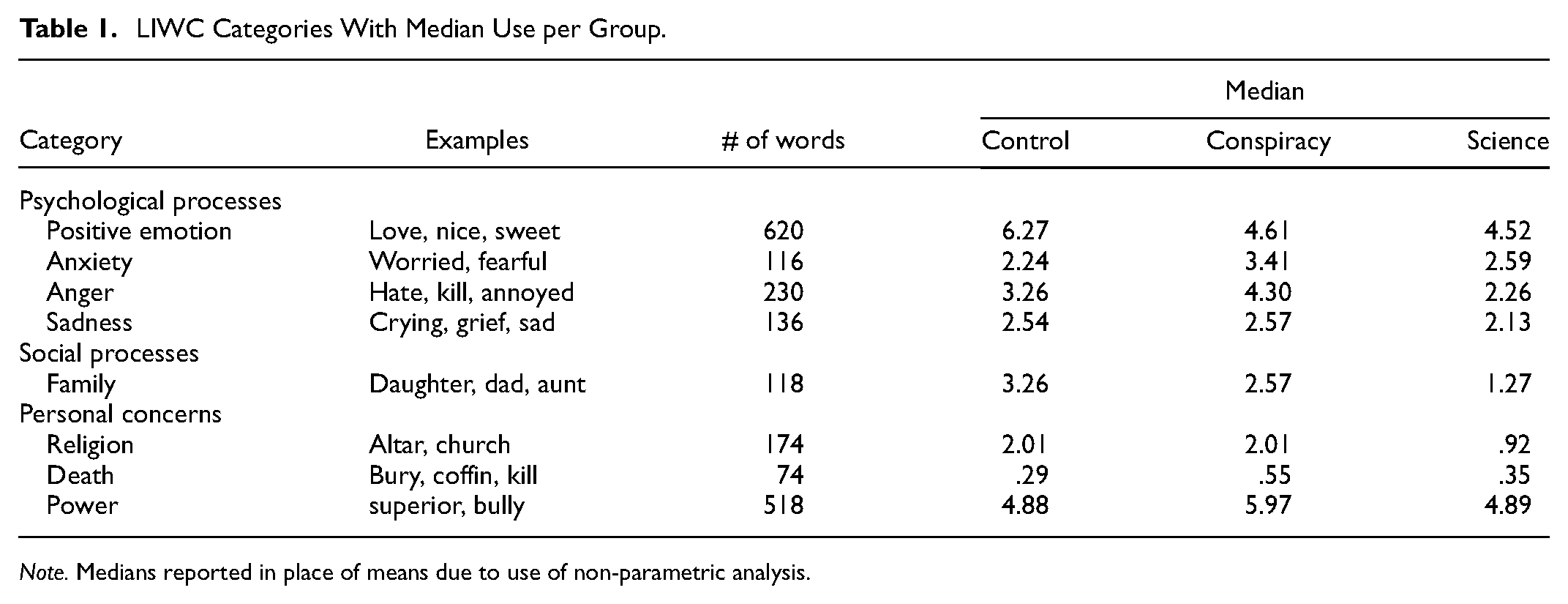

After cleaning the data to remove artifacts, analysis was performed using the Linguistic Inquiry and Word Count (LIWC) tool. LIWC has been found to be a valid and reliable tool for linguistic analysis (Pennebaker et al., 2015). LIWC uses dictionaries of words categorized by criteria such as emotional valence (e.g., positive emotion, negative emotion, anger, anxiety), cognitive processes (e.g., insight, certainty, tentative language), drives (e.g., risk, power), time orientation (past, present, future), perceptual processes (e.g., seeing, hearing) and personal concerns (e.g., money, religion, death). See Table 1 for examples of relevant categories with median use from the data. A text file was created for each source, for example, conspiratorial text from r/conspiracy. For each text file processed, LIWC identifies the percentage of words that belong to a variety of categories (see Table 1 for examples). As the dictionaries for each category were not of equal size, percentages were then scaled by the number of words the dictionary contained. The data were then analyzed with the Statistical Package for the Social Sciences (SPSS, Version 26.0). Each text file became a case for analysis in SPSS and the final data consisted of 26 cases: 8 for conspiracy discussion, 8 for science and 10 for control. The cases for scientific discussion contained a total of approximately 1.5 million words, conspiratorial content approximately 2.5 million and control approximately 800,000 words. The number of words for each category far exceeded the number necessary to make LIWC analysis meaningful. To measure engagement, text was arranged by level of engagement: number of retweets for Twitter data and score for Reddit data (calculated as the number of “upvotes” minus the number of “downvotes”). Retweets, posts and comments that scored higher in engagement were placed into text files (N = 10), as was text that showed lower levels of engagement (N = 10). These text files were then analyzed using the LIWC tool to compare emotional and linguistic differences between high and low engagement.
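The dictionary approach described above can be illustrated with a short sketch. It is not LIWC itself: the real dictionaries are proprietary, far larger, and also match word stems, whereas this example uses two hypothetical toy dictionaries with exact matching. The scaling function reflects one plausible reading of "scaled by the number of words the dictionary contained" (dividing each percentage by the dictionary size) and is an assumption.

```python
import re
from typing import Dict, List, Set

# Hypothetical toy dictionaries, for illustration only.
TOY_DICTIONARIES: Dict[str, Set[str]] = {
    "anger": {"hate", "angry", "furious"},
    "death": {"dead", "kill"},
}


def tokenize(text: str) -> List[str]:
    """Lowercase the text and split it into word tokens."""
    return re.findall(r"[a-z']+", text.lower())


def category_percentages(text: str, dictionaries=TOY_DICTIONARIES) -> Dict[str, float]:
    """Percentage of all words in `text` that fall in each category."""
    words = tokenize(text)
    total = len(words)
    if total == 0:
        return {name: 0.0 for name in dictionaries}
    return {
        name: 100.0 * sum(w in vocab for w in words) / total
        for name, vocab in dictionaries.items()
    }


def scale_by_dictionary_size(percentages, dictionaries=TOY_DICTIONARIES) -> Dict[str, float]:
    """Divide each percentage by the number of words in its dictionary."""
    return {name: pct / len(dictionaries[name]) for name, pct in percentages.items()}
```

Each text file would yield one row of scaled percentages, which then serves as a single case in the statistical analysis.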

LIWC Categories With Median Use per Group.

Note. Medians reported in place of means due to use of non-parametric analysis.

Due to the nature of the data collected, non-parametric tests were utilized, in accordance with previous research (Bessi, 2016; Jolley & Douglas, 2014). Kruskal-Wallis tests were utilized to determine whether there were differences in categories of interest across the three groups, followed by Mann-Whitney U tests with Bonferroni correction to determine between which groups significant differences were present in the variables of interest. Discriminant function analysis was utilized to determine whether linguistic categories of interest (e.g., anxiety, anger, religion, power, death) could discriminate between groups of low and high engagement text within conspiratorial content.
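The non-parametric procedure described above (an omnibus test across three groups, then pairwise tests with Bonferroni correction) can be sketched in plain Python. The analysis in this study was run in SPSS; the functions below are hypothetical illustrations that omit tie and continuity corrections and use a normal approximation for the pairwise p-values, so their output would differ slightly from a statistics package.

```python
import math
from itertools import combinations
from typing import Dict, List, Sequence


def average_ranks(values: Sequence[float]) -> List[float]:
    """Rank values from 1 to N, giving tied values their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1  # sorted positions i..j are 0-based
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks


def kruskal_wallis_3(groups: Sequence[Sequence[float]]):
    """H statistic and p-value for exactly three groups (no tie correction)."""
    assert len(groups) == 3
    pooled = [x for g in groups for x in g]
    ranks = average_ranks(pooled)
    n_total = len(pooled)
    h, start = 0.0, 0
    for g in groups:
        rank_sum = sum(ranks[start:start + len(g)])
        h += rank_sum ** 2 / len(g)
        start += len(g)
    h = 12.0 / (n_total * (n_total + 1)) * h - 3 * (n_total + 1)
    # With df = 2 the chi-square survival function is exactly exp(-H / 2).
    return h, math.exp(-h / 2)


def mann_whitney_u(x: Sequence[float], y: Sequence[float]):
    """U statistic and two-sided p-value via the normal approximation."""
    ranks = average_ranks(list(x) + list(y))
    n1, n2 = len(x), len(y)
    u1 = sum(ranks[:n1]) - n1 * (n1 + 1) / 2
    u = min(u1, n1 * n2 - u1)
    mu = n1 * n2 / 2
    sigma = math.sqrt(n1 * n2 * (n1 + n2 + 1) / 12)
    z = (u - mu) / sigma
    return u, math.erfc(abs(z) / math.sqrt(2))


def pairwise_bonferroni(named_groups: Dict[str, Sequence[float]]):
    """Mann-Whitney U for every pair, with p multiplied by the number of pairs."""
    pairs = list(combinations(named_groups, 2))
    results = {}
    for a, b in pairs:
        u, p = mann_whitney_u(named_groups[a], named_groups[b])
        results[(a, b)] = (u, min(1.0, p * len(pairs)))
    return results
```

Here each input list would hold one LIWC category's scaled percentages for the cases in a group (conspiracy, science, or control), and the omnibus test would be run once per category before any pairwise comparison.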

Results

Topic Differences

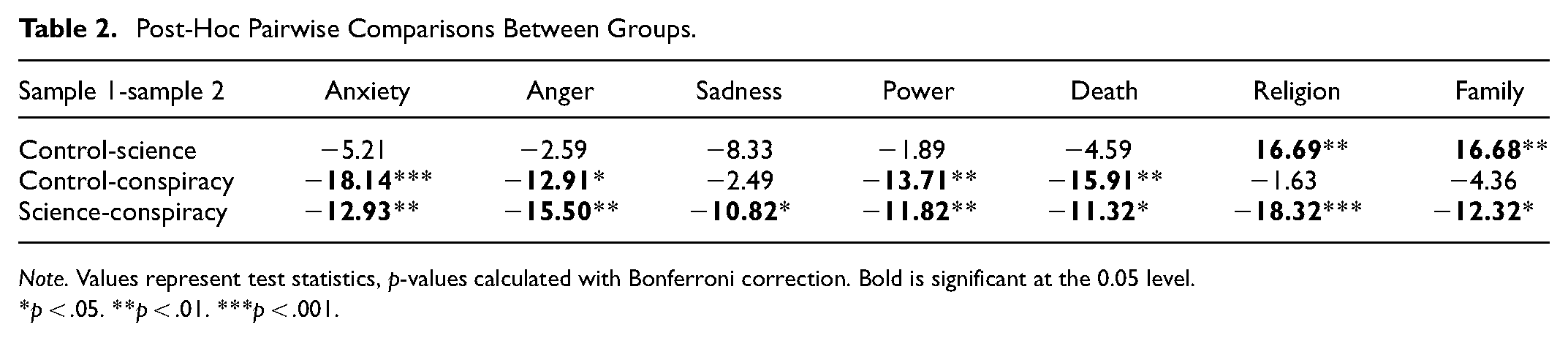

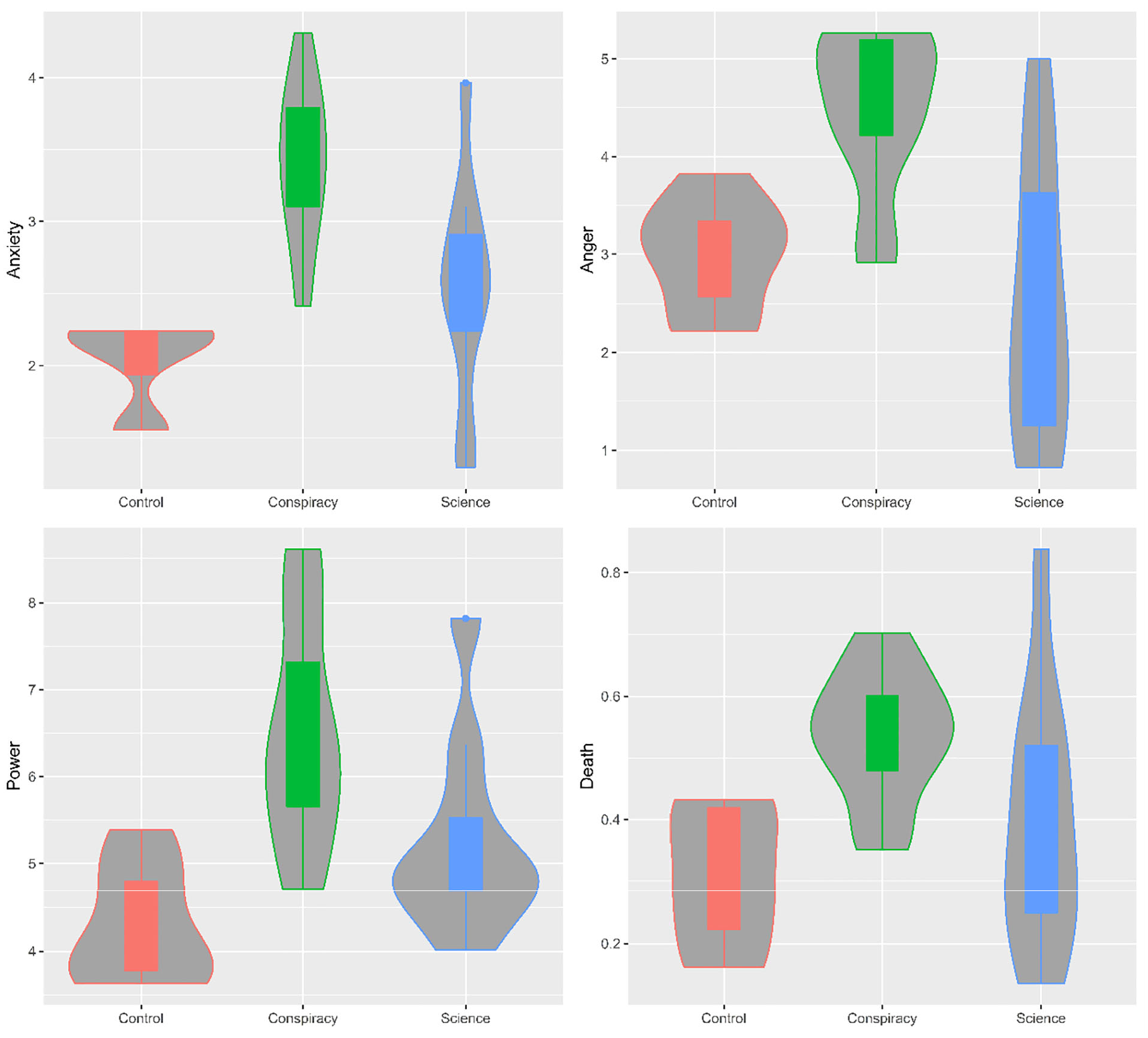

Statistical analysis was performed to observe differences in use of emotional language and mentions of family, power, death and religion between conspiratorial, scientific and general discussion across Twitter and Reddit. Kruskal-Wallis tests showed significant differences between groups for all topics of interest: anxiety, anger, sadness, power, death and religion. Pairwise comparisons were used to determine where differences were present. As expected, significant differences were observed between conspiratorial and general discussion for anxiety, anger, power and death. Contrary to expectations, no significant difference was observed between conspiratorial and general discussion for sadness, religion and family. Significant differences were observed for all topics between conspiratorial and scientific discussions. See Table 2 for pairwise comparisons with Bonferroni correction and Figure 1 for violin plots.

Post-Hoc Pairwise Comparisons Between Groups.

Note. Values represent test statistics, p-values calculated with Bonferroni correction. Bold is significant at the 0.05 level.

*p < .05. **p < .01. ***p < .001.

Violin plots for LIWC categories split by discussion.

Engagement Within Conspiracy Discussions

Discriminant Function Analysis (DFA) allows for several predictor variables to be examined in their ability to predict a categorical outcome. DFA is used in this study as the technique is an analogue of multivariate one-way ANOVA where the outcome variable is a categorical variable, and the predictors are continuous. Among analyses designed to predict categorical outcomes, DFA is the appropriate analysis for this study. DFA shows increased accuracy in small sample sizes when underlying assumptions are met (Diekhoff, 1992). The data collected met these underlying assumptions.

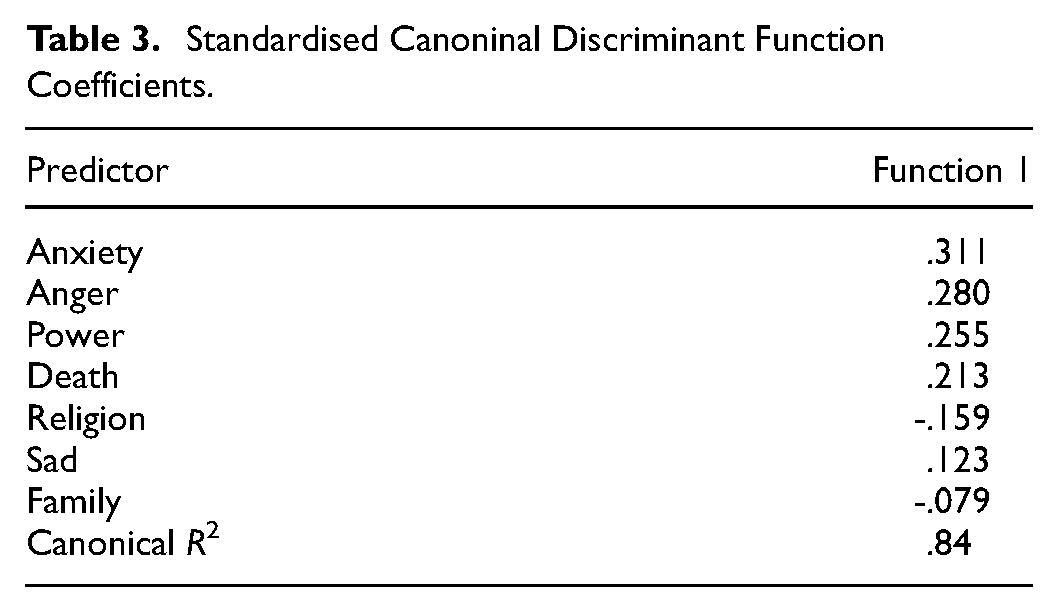

In the current study, the categorical outcomes were high engagement (n = 10) and low engagement (n = 10), determined by number of upvotes, likes etc. DFA results showed that the combined predictor variables of anxiety, anger, sadness, power, death, religion and family correctly predicted group membership with 90% accuracy, χ2(7) = 17.34, Wilks’ λ = .302, p = .015. Cross-validated classification produced an accuracy of 80% and therefore the model was considered reliable. See Table 3 for category discriminant loadings. As expected, the group centroid for high engagement was located at 1.44 and the group centroid for low engagement was located at −1.44, representative of their relation to function 1 below. This indicates that the variables collectively predicted engagement in conspiratorial content; however, religion, family and sadness were the least helpful in predicting engagement, as shown in Table 3. Anxiety, anger, power and death were positively associated with higher engagement.

Standardised Canonical Discriminant Function Coefficients.

To determine whether this was an effect of emotionality in general, rather than valence, a further discriminant function analysis was utilized to examine whether positive emotions and overall tone would predict a higher level of engagement, as hypothesized. “Tone” is a composite score of overall emotional tone, with higher scores representing a more positive emotional tone. It is one of “the only non-transparent” dimensions of the LIWC output, although these dimensions are derived from previously published findings from the LIWC lab. Utilizing the “positive emotion” and “tone” categories as predictors, correct classification of high and low engagement occurred with 75% accuracy, χ2(2) = 7.71, Wilks’ λ = .635, p = .021, canonical R2 = .60. Cross-validated accuracy remained at 75%. Tone was positively associated with function 1 (R2 = .93), as was positive emotion (R2 = .72). Contrary to the hypothesized effects, the group centroid for high engagement was located at −.72 and the group centroid for low engagement was located at .72, indicating that increased positive emotions and overall tone were associated with lower engagement in conspiratorial discussions.
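The reported centroids, Wilks’ λ values and chi-square statistics are linked arithmetically, which the following sketch illustrates. It assumes two equal-sized groups (n = 10 each) and discriminant scores scaled so that the pooled within-group variance is 1; both function names are hypothetical. Under these assumptions, the centroids of ±1.44 and ±.72 give values close to the reported λ and χ2, with small differences attributable to rounding of the centroids.

```python
import math


def wilks_lambda_from_centroids(centroid: float, n_per_group: int) -> float:
    """Wilks' lambda for two equal-sized groups with centroids at +/- `centroid`.

    Assumes discriminant scores are scaled so that the pooled
    within-group variance equals 1.
    """
    n_total = 2 * n_per_group
    between_ss = n_total * centroid ** 2  # sum over groups of n * centroid^2
    within_ss = n_total - 2               # pooled variance of 1 times its df
    eigenvalue = between_ss / within_ss
    return 1.0 / (1.0 + eigenvalue)


def bartlett_chi_square(wilks: float, n_total: int, n_predictors: int,
                        n_groups: int = 2) -> float:
    """Bartlett's chi-square approximation for testing Wilks' lambda."""
    return -(n_total - 1 - (n_predictors + n_groups) / 2) * math.log(wilks)


analyses = [
    ("negative emotions and themes", 1.44, 7),
    ("positive emotion and tone", 0.72, 2),
]
for label, centroid, n_predictors in analyses:
    lam = wilks_lambda_from_centroids(centroid, n_per_group=10)
    chi2 = bartlett_chi_square(lam, n_total=20, n_predictors=n_predictors)
    print(f"{label}: lambda = {lam:.3f}, chi-square = {chi2:.2f}")
```

The degrees of freedom for each test equal the number of predictors multiplied by the number of groups minus one, which gives 7 and 2 for the two analyses.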

Discussion

The purpose of the study was to examine the emotional and thematic differences between conspiratorial, scientific, and general discussions on social media, and to determine which emotions and themes would positively predict engagement within conspiratorial discussions. To investigate the emotional and thematic differences across the three discussion types, a dictionary approach was taken using the LIWC tool. The frequency of words was analyzed, finding partial support for the first hypothesis; conspiracy discussions showed significantly higher use of anxiety, anger, power, and death language when compared to both scientific and general discussion across Twitter and Reddit. It was also found that, combined, the topics of anxiety, anger, power, death, religion, sadness, and family significantly predicted engagement in conspiratorial discussions. Anxiety, anger, power, and death were most useful in positively predicting engagement in the model, partially supporting the second hypothesis. Contrary to expectations, positive emotional language negatively predicted engagement in conspiratorial discussions, in contrast to its positive association with engagement in non-conspiratorial content (Berger & Milkman, 2012; Nelson-Field et al., 2013; Stieglitz & Dang-Xuan, 2014). This finding suggests that emotionality alone does not result in higher engagement in conspiratorial discussions as it does in other contexts, providing evidence that conspiracy theories indeed thrive solely on negative emotions and themes.

The results show support for the notion that conspiracy theories and discussions around them elicit strong negative emotional responses in the audience to gain their attention and incite motivation, from sharing information with networks to forming groups and engaging in collective action (Berger & Milkman, 2012; Sylwester & Purver, 2015). As humans, we respond acutely to perceived threats, a cognitive function which is likely responsible for our success as a species but disadvantages us when assessing the credibility of information (Martel et al., 2020). Considering inherent negativity bias (the propensity to place importance on negative information over equal, positive information), it appears that successful conspiracy theories leverage this to diffuse through online communities. This is consistent with personality correlates of conspiracy belief including anxiety, schizotypy, and paranoia (Dagnall et al., 2015; Hart & Graether, 2018), with individuals higher in these traits more likely to respond to negative valence and perceived existential threats.

The results have implications regarding the effects of conspiracy theories on adopters and how best to mitigate their spread. Evidence of strong negative emotional content dominating conspiracy discussions is likely to have consequences for those who accept it as truth. Conspiracy beliefs are often viewed via the gateway model; that belief in one significantly predicts belief in many more (Douglas & Leite, 2017). This suggests that endorsers adopt a “conspiratorial worldview” in which they begin to see conspiracies as ubiquitous. A sincere belief in a shadowy, faceless out-group posing constant threats to health and autonomy is likely to result in a negative feedback loop, particularly considering the audience’s established predisposition for anxiety and paranoia (Bowes et al., 2021). It is also likely to result in decreased trust of others and feelings of isolation, both of which have been identified as both antecedents and consequences of conspiracy theory endorsement (Bierwiaczonek et al., 2024; Dyrendal et al., 2021; Liekefett et al., 2022).

The higher levels of anger, anxiety and conflict in conspiracy discussions might also help to explain the finding that engaging in such online communities tends to lead to polarization (Zollo, 2019). Conspiracy theories have often fueled discord and have been linked to willingness to engage in violence (Vegetti & Littvay, 2022). Negative themes and emotions in conspiracy theories may also increase the likelihood of adopters withdrawing from society and increase the allure of engaging with online communities, as has been previously suggested in research on online conspiracy forums (Klein et al., 2019). It is recommended that experimental research be pursued to investigate interventions based on these findings. Priming an audience as to how negative emotions are leveraged by conspiracy theories, and informing them of the associated negative outcomes, may decrease the perceived credibility of conspiracy theories, associated sharing behaviors and likelihood of adoption. Such experimental findings would provide support for the use of this protective strategy against the spread of conspiracy theories online. Measurement of physiological arousal during consumption of conspiratorial content, and its relationship to sharing intentions, is also an area for future research to better understand motivations behind diffusion and subsequently inform intervention.

The lack of a significant difference in sadness between conspiratorial and control discussion was unexpected. This suggests that sadness is not a salient emotion in such discussions. It is widely accepted that sadness is a more muted emotion than anger or anxiety and is associated with decreased motivation and action. As such, sadness may not incite people in the same way as anxiety, anger, power, or existential threat do and thus does not provoke them to engage in or share conspiratorial content in the same way. Again, this outlines the importance of threat and concern as mobilizing aspects of conspiratorial content.

Conspiracy theories are widely thought of as a sense-making reaction to uncertainty, as outlined by their proliferation during widespread anxiety and the increased willingness to endorse conspiracy beliefs in high stress situations (Grzesiak-Feldman, 2013). Interestingly, it appears that conspiracy theories also aim to cause anxiety and rouse other negative emotional states, for which they then provide an actionable response. Although the adoption of conspiracy theories is widely viewed as a way of quelling anxiety via the reduction of uncertainty, evidence suggests that negative emotions increase as a result of conspiracy theory adoption. Considering this, the mechanism by which conspiracy beliefs are reinforced is intriguing and appears likely to revolve around cognitive processes including feelings of uniqueness, control, and intellectual superiority (Cichocka et al., 2022), rather than classical positive emotions or rewards. It is recommended researchers and policymakers remain cognizant of this when designing and implementing interventions.

The importance of these findings in the context of current societal and political discourse should be considered. Although a general predisposition to conspiracy theories remains relatively stable among the population over longer periods of time (Uscinski et al., 2022), the ability to widely and rapidly disseminate new conspiracy theories via social media is undeniable. The propensity for conspiracy theories to spread online has been well documented (Lee et al., 2021; Samory & Mitra, 2018) and has led to outcomes such as harassment, increased polarization between groups, and distrust of science during health crises (Kou et al., 2017; Zollo, 2019). The finding that negativity drives engagement on social media, particularly for conspiratorial content, suggests a need not only to educate users on how emotional content spreads and captures attention, but also to apply public pressure on social media platforms to reduce rewards for emotional, divisive, and conspiratorial content.

There are several intervention types that aim to reduce widespread adoption of conspiracy theories and misinformation via social media. These include educating individuals about common strategies prior to exposure (“pre-bunking”; van der Linden et al., 2023), adjusting digital environments to discourage adoption of unfounded or conspiratorial content (“nudging”; Pennycook et al., 2021; Rathje et al., 2023), and providing accurate, verified information in response to false or misleading content (“fact-checking”; Ziemer & Rothmund, 2024). The findings of this study highlight the importance of educating individuals on how negative emotions and themes influence the diffusion of conspiratorial ideas online. This includes explaining why individuals are more likely to see a conspiratorial idea repeatedly if it contains a stronger negative emotional message, which is particularly important because repeated exposure strongly predicts endorsement of an idea (Ecker et al., 2022; Fazio et al., 2019). It is important for the public to understand that repeatedly seeing a conspiratorial post is not a reflection of its credibility, but more likely a reflection of its strong negative emotional and thematic content. Interventions should also aim to educate individuals on the effects that strong emotional responses and “activation” have on reasoning abilities: we are more susceptible to false information when we are in a state of emotional arousal (Martel et al., 2020), and conspiracy theories often rely on this for engagement.

Limitations

Although steps were taken to make the study as robust as possible, including the use of control groups, systematic data collection, and established tools, some limitations remain. The collection of source text from a restricted set of social media sites may limit the generalizability of the study. A replication with a broader sample, including forms of media beyond text, may improve the generalizability of the findings. However, we note that the same emotions and themes are likely to apply to images and videos on social media platforms. Future studies may wish to confirm this across a variety of multimedia types. It would also be prudent for future researchers to use experimental studies to measure the effect that negative emotional language and negative themes have on an individual’s willingness to endorse conspiratorial beliefs and, more specifically, to explore ways of mitigating emotion-driven attitude and behavior change within the conspiracy sphere.

We also assume in this paper that a causal relationship exists between emotions and engagement, as supported by experimental studies (Martel et al., 2020). We acknowledge that there may be a feedback loop, whereby content creators intentionally create more emotional and divisive content to gain greater engagement. This is considered plausible; however, our findings still apply to the initial relationship between emotion and engagement that would start such a feedback loop, rendering them highly relevant in addressing this ongoing issue. As mentioned in the method, we also acknowledge that the choice of conspiracy theory keywords may influence results. Choosing a variety of conspiracy keywords spanning several topics increases the external validity of the findings; however, future researchers may wish to explore a variety of other conspiratorial topics. Of particular importance is the topic of ideologically driven conspiratorial beliefs. These were not targeted in the current study but have the potential to show differing effects based on type of ideology or group (e.g., political affiliation, gender/sexual orientation, ethnicity).

Conclusion

This study aimed to explore the emotional and thematic features of conspiracy theories on social media, and which of these features drive engagement. The findings were partially aligned with previous findings about social media engagement broadly, and conspiratorial content specifically. We find that negative emotions (anxiety, anger) and themes (death, power) are significantly more present in conspiratorial discussions than in scientific and general discussions across two platforms, Twitter and Reddit. As expected, we find that negative emotions and themes drive engagement on both platforms, with higher concentrations of such language predictive of an increased number of likes, upvotes, etc. Unexpectedly, we find that a higher concentration of positive emotions and tone is predictive of lower engagement. This contradicts other findings, in which positive emotions are equally, or in some cases more, predictive of increased engagement. These results highlight specific characteristics of conspiracy-related discussions on social media platforms and hold implications for how to understand them, educate individuals, and intervene in their spread.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Data Availability Statement

Data will be made available for any reasonable request: