Abstract

Task design is widely viewed as essential in language assessment. This study investigated whether increasing task complexity affects learners’ writing performance. It employed three writing tasks with different levels of complexity based on Robinson’s Componential Framework. A cohort of 278 participants was selected using a simple random sampling technique, and a corpus of 603 compositions was used for data analysis. The results showed that learners achieved higher scores and higher levels of coherence in the compositions written for more complex tasks. The findings of this study contribute to the growing field of cognitive research by exploring the effects of task complexity on learners’ writing production. The results have implications for online automated writing assessment, writing test development, and the design of high-stakes language tests. The findings also provide recommendations on how tasks should be designed to develop learners’ language production skills in writing instruction.

Introduction

Writing tasks provided in language exams require special consideration because they originate from and guide the writing process, which results in samples for language assessment and evaluation (Liu, 2022; Xu et al., 2021). Thus, task design in language tests has been assigned a central role in language instruction and assessment (Allaw, 2021; An & Lee, 2021; Bläsing & Bornewasser, 2021). Skehan and Robinson predicted how task design elements affect learning by placing various levels of cognitive demand on learners’ cognitive resources and focusing their attention on aspects of language usage, production, and learning. Long (2017) argued that in a task-based syllabus, pedagogic problems should be used as the unit of analysis, with challenges progressing from easy to complicated and gradually approaching the demands of real-world activities. When designing a task and syllabus, it is therefore essential to consider task complexity as a criterion for determining validity. The design of the college English-testing syllabus was intended to facilitate maximum learning and effective teaching with appropriate task complexity. A task-based language syllabus should accordingly become more demanding as the tasks become more complex.

However, in real writing situations, the influence of task complexity on college students’ writing performance remains uncertain. Instructors may therefore fail to provide students with an appropriate level of task complexity in classroom teaching, which may result in a poor ability to handle different types of tasks (Golparvar & Rashidi, 2021). Writing instructors are expected to design diverse writing tasks with different levels of complexity across semesters. More challenging tasks for senior students might encourage them to practice and write more, instead of reciting sample essays or memorizing sentence patterns. In practice, however, teachers do not follow any guidelines for designing writing tasks for students in different semesters.

Previous research suggests that tasks with different levels of complexity may result in varying levels of writing performance (Adams et al., 2015; Wang & Hu, 2021). Golparvar and Rashidi’s (2021) study also provides evidence of a discrepancy between task complexity in pedagogical design and actual performance outcomes. A notable niche, namely the direct impact of task complexity on writing performance, especially within the nuanced context of college English assessments in Southern China, remains under-investigated and needs more empirical support from diverse contexts.

To this end, the current study investigates how task complexity, particularly the reasoning demands dimension, influences writing scores and text features in student compositions. By operationalizing a gradient of task complexity, the study examines whether and how varying complexity within the College English Test (CET) writing section correlates with differences in students’ writing performance. The CET is a high-stakes standardized assessment of English language proficiency, with approximately 18 million tertiary-level undergraduates participating annually. This investigation is warranted because the results can shed light on pedagogical design and language assessment (Rahimi & Zhang, 2018).

Literature Review

Task complexity is theoretically grounded in Robinson’s (2011) “Triadic Componential Framework Model” (or “Cognition Hypothesis”). In this framework, task complexity is a cognitive factor that is manipulated along two categories of dimensions, resource-directing and resource-dispersing, each of which comprises several dimensions that can be used in designing tasks. These two categories identify important differences in how the dimensions affect resource allocation during task performance. The last decade has seen an increasing empirical interest in the effects of attentional resource allocation on language production (Adams et al., 2015; Ismail & Samad, 2017; Salimi & Dadashpour, 2012); these empirical studies investigated the two categories of resource-directing and resource-dispersing and compared the effects of these variables on task performance.

Task Complexity and Writing Scores

The relationship between task complexity and writing scores has been the subject of considerable research interest in the field of second-language writing. Robinson (2001) defines task complexity as the result of the attentional, memory, reasoning, and other information-processing demands imposed on language learners by the task structure. He argues that several dimensions, such as working memory, reasoning demand, and prior knowledge, can affect the complexity level of a task, resulting in differences in learners’ task performance. Previous literature likewise suggests that simple tasks will always be less demanding than complex tasks (Wang et al., 2024) and that different task complexity levels can be achieved through different task designs. According to Robinson, task complexity and attentional resources are correlated: fewer attentional resources are needed to process simple tasks, whereas more complex tasks require a more demanding allocation of attention. Robinson’s argument suggests that when generating writing output in their second language, the writer must devote a portion of their cognitive capacity to the language, preventing other functions, such as higher-order functions for organizing and conversation, from being fully utilized.

Ruiz-Funes (2015) conducted a study on task complexity and writing performance, and the findings revealed a close connection between task complexity and writing performance in second-language writing. Task complexity was manipulated using topic familiarity, writing genre, and task type. Writing performance was assessed using complexity, accuracy, and fluency (Ruiz-Funes, 2015). An increased reasoning demand for tasks led to an increased language output.

Task classification, manipulating the cognitive demands of tasks (i.e., task complexity), has been the primary issue in the classroom context, among other task variables, as advocates of task-based language teaching have assumed that carrying out tasks ordered from simple to complex could promote L2 production and development (An & Lee, 2021). This study was motivated by Robinson’s Cognition Hypothesis and aimed to investigate the impact of task grading along the reasoning-demands dimension on students’ writing scores and text features.

Studies have indicated that learners’ writing performance is influenced by many factors (Darong, 2021; Golparvar & Rashidi, 2021; Li & Huang, 2022), such as writing task design in classroom instruction and task complexity in language assessment. Tasks are widely viewed as significant factors in the design of language tests; task characteristics, testing methods, testing production, and language assessment are important components of validation, language testing, and evaluation. This study was conducted in the context of language assessment. The researcher operationalized task complexity through reasoning demand and prior knowledge, based on Robinson’s triadic componential framework. The differentiation of writing tasks by task complexity not only makes writing tasks comparable but also contributes to their design, especially for task sequences (Lawrence, 2017; Robinson, 2011). Accordingly, the tasks were graded as least complex, mid complex, or most complex.

To fill the existing research gap concerning the effect of task complexity manipulations within writing tasks on EFL college students’ writing scores and text features, this study examines the task-generated cognitive burden manipulated through prior knowledge, reasoning demand, and writing genre, and its impact on learners’ writing performance. This study is expected to contribute to writing task designs in classroom instruction, college English tests, and high-stakes language testing and assessment. This study is an initial effort to use a multitask technique.

Task Complexity and Text Feature

The question of whether increasing task complexity affects learners’ written output has attracted considerable attention from scholars and educators. Several studies have explored the relationship between the complexity of writing tasks and textual features. For example, Tavakoli and Skehan (2005) examined the effects of task complexity on text features such as lexical diversity, syntactic complexity, and fluency. The results indicated that more complex tasks led to greater lexical diversity and syntactic complexity, as well as improved fluency in written output. Similarly, Pae (2012) investigated the effects of task complexity on using cohesive devices in written output. The findings showed that more complex tasks led to a greater use of cohesive devices, which are linguistic features that signal connections between ideas and help create coherent texts.

However, traditional studies rely mainly on surface measures, such as text and sentence lengths, and text-based measures, such as lexical diversity and word frequency, to predict or explain writing production (Connor, 1990; Ferris, 1994). Few studies have investigated the deep level of linguistic features, such as the meaning and intention of discourse (Engber, 1995). In contrast to previous studies, this study used Coh-Metrix to measure both surface and deep levels of discourse. McNamara et al. (2010) analyzed linguistic features and identified Coh-Metrix indices that predict writing quality. According to their study, syntactic complexity, lexical diversity, and word frequency are the three most significant predictors of writing quality.

The assessment of text features was based on three constructs generated using Coh-Metrix 3.0. The three constructs are text fluency, lexical diversity, and grammatical complexity. These were the most relevant measures for discourse analysis. Learning to use articles, for example, is resource-directing, as learners are directed to produce specific aspects of language with increased complexity. Previous investigations of text features in English writing mainly focused on three dimensions: complexity, accuracy, and fluency. The Coh-Metrix effectively measures overall text difficulty using a readability formula suitable for L2 learners. A more detailed analysis of text difficulty is also identified along eight functional dimensions (narrativity, syntactic simplicity, word concreteness, referential cohesion, deep cohesion, verb cohesion, connectivity, and temporality) through calculating 53 lexical-grammatical and semantic features (Jiang & Han, 2018).

In task-related research, performance is often measured based on language complexity, accuracy, and fluency (Wang & Skehan, 2014). Text feature analysis was conducted on three major dimensions of the linguistic features of texts: complexity, accuracy, and fluency.

In this study, text fluency, lexical diversity, and grammatical complexity are highlighted as measures of writing quality. Measures of text features were generated using Coh-Metrix 3.0 (Graesser et al., 2004), which was employed to assess the text features of students’ writing performance. It is a computer-based automated tool grounded in computational science and corpus linguistics. Coh-Metrix (www.cohmetrix.com) is one of the most comprehensive and sophisticated automatic tools currently available for assessing texts; it can evaluate text and discourse automatically, encompassing a range of language and discourse measures (Petchprasert, 2021). A major advantage of this tool is its latent semantic analysis (LSA) technology, which goes beyond the superficial features of analysis. LSA is a technique for creating vector-based representations of texts that are claimed to capture their semantic content (Dumais, 2004). Coh-Metrix establishes a latent textual semantic space and then calculates the cosine of the angle between the vectors representing two linguistic units, which is taken as their semantic similarity (Landauer et al., 2013).
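The cosine measure described above can be illustrated with a brief sketch. It is not the Coh-Metrix implementation: the three-dimensional vectors are hypothetical stand-ins for LSA representations of two sentences, and the function name is chosen here only for illustration.

```python
import math


def cosine_similarity(u, v):
    """Return the cosine of the angle between two equal-length vectors."""
    if len(u) != len(v):
        raise ValueError("vectors must have the same length")
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0 or norm_v == 0:
        # Convention assumed here: an all-zero vector is similar to nothing.
        return 0.0
    return dot / (norm_u * norm_v)


# Hypothetical vectors for two adjacent sentences in a small semantic space.
sentence_a = [0.2, 0.7, 0.1]
sentence_b = [0.3, 0.6, 0.2]
similarity = cosine_similarity(sentence_a, sentence_b)
```

For vectors with non-negative components, as in this toy example, the value falls between 0 and 1, and values closer to 1 indicate greater semantic overlap between the two units.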

Methodology

Data Collection Procedures

This study employed three writing tasks selected from the Authentic CET-4 Writing Section (see Table 1). Writing tests were conducted over three consecutive weeks. Learners’ task performance was evaluated based on three independent raters’ assessments, and text features were assessed using three constructs (text fluency, lexical diversity, and grammatical complexity) generated by Coh-Metrix 3.0, a text analysis tool.

Information About Writing Tasks in the College English Test.

The participants were students from a comprehensive, open-enrollment college in Jiangxi Province, China. They were undergraduate test-takers who passed the College English Test Band 4 in 2019. This study employed a simple random sampling technique to select the participants. A cohort of 278 participants was selected as the research sample. Owing to missing or incomplete data, 77 cases were removed from the dataset. The final research sample comprised 201 students who voluntarily agreed to take part in the study.

Sampling Technique

The study utilized a simple random sampling technique to create a sample that is representative of the population with previous experience in taking the College English Test (CET). The inclusion criteria were specifically designed to select participants who had a direct and practical understanding of the CET, thus ensuring the study’s relevance to real-world language assessment scenarios. Following Morgan’s (2011) criteria for determining sample size in research, a minimum sample size of 201 was deemed necessary for a population of 420 eligible test-takers. Initially, 278 participants were selected to account for potential data attrition. After screening for completeness and relevance, the final sample comprised 201 individuals. This methodical approach to sampling was taken to ensure statistical validity and reliability, as well as to support the generalization of the study’s findings to the broader population of CET test-takers.
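The minimum sample size reported above can be checked with a short calculation. The sketch below assumes that the cited criteria correspond to the widely used Krejcie and Morgan formula, with a chi-square value of 3.841 (one degree of freedom, 95% confidence), a population proportion of 0.5, and a 5% margin of error. The source does not state the formula, so these parameters, the rounding rule, and the function name are assumptions.

```python
import math


def minimum_sample_size(population, chi_sq=3.841, proportion=0.5, margin=0.05):
    """Krejcie-Morgan style sample size (assumed formula, see lead-in)."""
    variability = proportion * (1 - proportion)
    numerator = chi_sq * population * variability
    denominator = margin ** 2 * (population - 1) + chi_sq * variability
    # Rounding up is an assumption; it guarantees at least the computed size.
    return math.ceil(numerator / denominator)


required = minimum_sample_size(420)  # 420 eligible test-takers, as reported
```

Under these assumptions the calculation yields 201 for a population of 420, which matches the minimum sample size reported in the text.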

Statistical Analysis

Upon collection, the data were analyzed using SPSS version 26 for statistical computations and Coh-Metrix 3.0 for text analysis. The analysis focused on evaluating the writing compositions based on a standardized rubric. To bolster the validity of the evaluation, three raters with substantial experience in language assessment were selected. These raters underwent a training session to align their marking strategies and ensure a consistent application of the assessment criteria across all samples. The high internal consistency coefficient of 0.917 for their evaluations points to strong inter-rater reliability, an essential aspect of trustworthy research.
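How an internal consistency coefficient of this kind can be obtained from three raters’ scores is sketched below. The source does not name the coefficient, so Cronbach’s alpha is assumed here, with each rater treated as an “item”. The scores are invented for illustration and are not the study’s data.

```python
from statistics import variance


def cronbach_alpha(ratings_by_rater):
    """Cronbach's alpha, treating each rater's score list as one item."""
    k = len(ratings_by_rater)
    if k < 2:
        raise ValueError("at least two raters are required")
    # Total score per composition, summed across raters.
    totals = [sum(scores) for scores in zip(*ratings_by_rater)]
    item_variance = sum(variance(scores) for scores in ratings_by_rater)
    return k / (k - 1) * (1 - item_variance / variance(totals))


# Hypothetical scores given by three raters to the same five compositions.
rater_a = [9, 10, 11, 12, 8]
rater_b = [9, 11, 11, 13, 8]
rater_c = [10, 10, 12, 12, 9]
alpha = cronbach_alpha([rater_a, rater_b, rater_c])
```

For these invented scores the coefficient is about 0.96. Values close to 1 indicate that the raters rank the compositions very similarly.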

Statistical comparison of writing scores across the three tasks was carried out using one-way ANOVA. This analysis method was chosen for its ability to determine whether there are any statistically significant differences between the means of independent (unrelated) groups. The writing scores were stratified into four distinct performance levels to facilitate a more nuanced understanding of the impact of task complexity on writing quality. Moreover, text features extracted through Coh-Metrix were also compared using one-way ANOVA to determine if task complexity influenced linguistic characteristics such as fluency, diversity, and grammatical complexity within the written texts. These methodological choices—rooted in robust statistical theory—were intended to distill clear insights into the relationship between task complexity and language production, thereby contributing to the development of effective language assessment tools.
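The F statistic underlying the one-way ANOVA described above can be sketched as follows. The study itself used SPSS; this plain-Python version only shows the computation, the scores are hypothetical, and the p-value (which requires the F distribution) is not calculated.

```python
def one_way_anova_f(groups):
    """Return (F, df_between, df_within) for a list of score groups."""
    k = len(groups)
    n_total = sum(len(g) for g in groups)
    grand_mean = sum(sum(g) for g in groups) / n_total
    means = [sum(g) / len(g) for g in groups]
    # Variation of group means around the grand mean.
    ss_between = sum(len(g) * (m - grand_mean) ** 2 for g, m in zip(groups, means))
    # Variation of individual scores around their own group mean.
    ss_within = sum((x - m) ** 2 for g, m in zip(groups, means) for x in g)
    df_between = k - 1
    df_within = n_total - k
    f_value = (ss_between / df_between) / (ss_within / df_within)
    return f_value, df_between, df_within


# Hypothetical writing scores for the three tasks (four compositions each).
least_complex = [9, 10, 10, 11]
mid_complex = [10, 11, 11, 12]
most_complex = [10, 11, 12, 11]
f_value, df_b, df_w = one_way_anova_f([least_complex, mid_complex, most_complex])
```

With three tasks and 603 compositions, the corresponding degrees of freedom would be 2 and 600.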

Results

Student Writing Scores

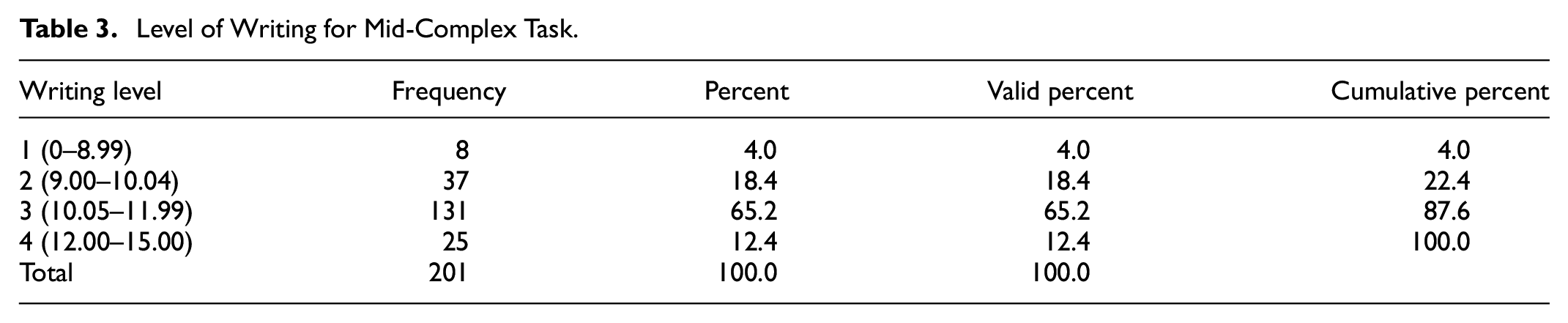

The researcher classified the students’ writing scores into four levels (0–8.99; 9.00–10.04; 10.05–11.99; 12.00–15.00). Detailed information on the students’ writing performance levels across the three writing tasks is shown in the following tables.
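The four-level banding can be expressed as a small function. This is a minimal sketch: the treatment of scores that fall between the printed band limits (for example, between 8.99 and 9.00) is an assumption, handled here with half-open intervals, and the function name is hypothetical.

```python
def writing_level(score):
    """Map a writing score (0-15) to the four performance levels."""
    if not 0 <= score <= 15:
        raise ValueError("score must lie between 0 and 15")
    if score < 9.0:
        return 1  # 0-8.99
    if score < 10.05:
        return 2  # 9.00-10.04
    if score < 12.0:
        return 3  # 10.05-11.99
    return 4  # 12.00-15.00


# Example: the minimum and maximum scores reported for the least-complex task.
levels = [writing_level(s) for s in (5.33, 12.67)]
```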

The writing levels for the three writing tasks are summarized in Tables 2 to 4. Students’ writing levels on the College English Test differed according to task complexity. Most students who passed the test demonstrated moderately good writing levels and obtained a total score of more than 70%. More specifically, 10.4% of the participants failed the least-complex writing task, compared with 4% in the mid-complex writing task and only 3% in the most-complex writing task. Students were therefore most likely to fail (writing score below nine points) in the least-complex writing task, less likely in the mid-complex task, and least likely in the most-complex task. In contrast, only three students (1.5%) performed excellently (writing scores ≥ 12) on the least-complex writing task. This figure increased to 25 (12.4%) in the mid-complex writing task and 27 (13.4%) in the most-complex writing task. Students who scored at level 2 (score between 9.00 and 10.04) and level 3 (score between 10.05 and 11.99) accounted for the largest proportions in the least-complex writing task, at 44.3% and 43.8%, respectively. In comparison, 65.2% (131) and 60.7% (122) of the participants performed moderately well (level 3) in the mid- and most-complex writing tasks, respectively, suggesting that students performed much better in the mid- and most-complex tasks than in the least-complex task.

Level of Writing for Least-Complex Task.

Level of Writing for Mid-Complex Task.

Level of Writing for Most-Complex Task.

Differences in Writing Scores

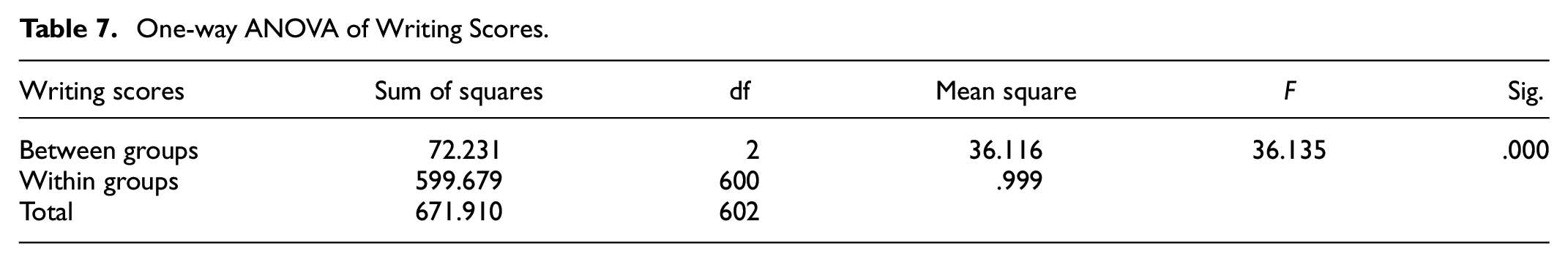

Descriptive analysis, Levene’s test, one-way ANOVA, and post-hoc tests were performed to assess the significance of the students’ writing scores on the three writing tasks. The results are shown in the following tables.

The descriptive statistics of the writing scores for the three writing tasks (Table 5), as assessed by the three raters, showed that the average score for the least-complex writing task was relatively low compared with the mid-complex and most-complex tasks. The mean value for the least-complex task was 10.03, and the minimum and maximum values for this task were 5.33 and 12.67, respectively. The mid-complex task had the highest mean of the three tasks, at 10.80, with minimum and maximum scores of 8.00 and 13.67, respectively. The second-highest mean was for the most-complex task, at 10.72, with a standard deviation of 0.98, a minimum value of 8.00, and a maximum value of 13.67.

Descriptive Statistics of Writing Scores Among Three Writing Tasks.

The results of Levene’s Test for Equality of Variance in Table 6 show that p = .626 (p > .05), indicating that the error variance of the writing performance was equal across the three writing tasks, and the data met the hypothesis of homogeneity of variance.
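The logic of Levene’s test can be sketched in a few lines: each score is replaced by its absolute deviation from its group mean, and a one-way ANOVA F ratio is computed on those deviations. Centering on the mean is an assumption here, since SPSS also reports median-based variants. The data are hypothetical, and the p-value is not calculated.

```python
from statistics import mean


def levene_statistic(groups):
    """Levene's W: an ANOVA F ratio on absolute deviations from group means."""
    deviations = [[abs(x - mean(g)) for x in g] for g in groups]
    k = len(deviations)
    n_total = sum(len(z) for z in deviations)
    grand = sum(sum(z) for z in deviations) / n_total
    between = sum(len(z) * (mean(z) - grand) ** 2 for z in deviations)
    within = sum((v - mean(z)) ** 2 for z in deviations for v in z)
    return (n_total - k) / (k - 1) * between / within


# Hypothetical groups: the second has twice the spread of the first.
narrow = [1, 2, 3, 4, 5]
wide = [2, 4, 6, 8, 10]
w_unequal = levene_statistic([narrow, wide])

# Groups with identical spread but different means give W = 0.
w_equal = levene_statistic([narrow, [x + 10 for x in narrow]])
```

A small W (and hence a large p-value, such as the .626 reported above) is consistent with equal variances across the tasks.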

Test of Homogeneity of Variances (Writing Scores).

The bold indicates the P-value from Levene’s test is .626, which is greater than the commonly used significance level of .05, meeting the condition for conducting an ANOVA.

When the means were compared using one-way ANOVA (Table 7), a significant difference in mean writing performance was detected among the three writing tasks at the 1% level (SPSS reported p = .000, i.e., p < .001). Thus, we rejected the null hypothesis and concluded that writing performance differed significantly across the three writing tasks.

One-way ANOVA of Writing Scores.

Multiple comparisons of writing scores among the three tasks were analyzed using a post-hoc Tukey test, which showed that the writing scores of the least-complex task differed significantly from those of the mid-complex task and the most-complex task. However, the difference between the mid-complex and most-complex tasks was not significant (p = .735, above the threshold of .05). Similarly, the LSD (least significant difference) results showed that the difference between the least-complex and mid-complex writing tasks was significant at p < .001, as was the difference between the least-complex and most-complex tasks. In contrast, the scores of the mid-complex and most-complex tasks did not differ significantly (p = .455).

Different Performance of Text Features

To compare the text features across the three tasks, the researchers employed a corpus of 603 compositions written by EFL college students. Each composition was analyzed using Coh-Metrix to generate the text features of text fluency, lexical diversity, and grammatical complexity, and these features were then compared across the three tasks using one-way ANOVA. The data were generated using Coh-Metrix 3.0, an online computational analysis tool that is theoretically based on computational and corpus linguistics. The six most relevant indices were employed to assess the text features with respect to text fluency (text length and LSA), lexical diversity (type-token ratio [TTR] of all words and the VOCD lexical diversity index of all words), and grammatical complexity (average sentence length [ASL] and sentence syntax similarity of all sentences across paragraphs). Detailed information is provided in Table 8.

Six Measures of Text Features.

Text fluency was assessed using two measures: text length and LSA. Research has shown that the longer the composition, the more skillful the writer. Thus, text length was used to measure writing fluency, with a longer text indicating a higher level of fluency in students’ compositions. Furthermore, LSA provides measures of semantic overlap between sentences or between paragraphs (Landauer, 2015). The LSA value varies from 0 (low cohesion) to 1 (high cohesion).

Lexical diversity is the range of distinct word types present in a text relative to the total number of words (or tokens). When the total number of words (tokens) and the number of word types are equal, all words are distinct; lexical diversity is then at its highest level, and the text is likely to have a very low level of cohesion. When a text contains many different words, new words must be integrated into the context of the discourse. In contrast, when more terms are used repeatedly throughout the text, lexical diversity is lower (and cohesion is higher). In other words, a high TTR score is obtained for texts with high lexical diversity and low cohesion, and vice versa. We used the TTR of all words and the VOCD index to measure lexical diversity.
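The type-token ratio can be illustrated with a brief sketch. The tokenization below (lower-cased runs of letters and apostrophes) is a simplifying assumption and does not reproduce the Coh-Metrix tokenizer; the VOCD index is not computed here.

```python
import re


def type_token_ratio(text):
    """Number of distinct word types divided by the number of word tokens."""
    tokens = re.findall(r"[a-z']+", text.lower())
    if not tokens:
        raise ValueError("text contains no word tokens")
    return len(set(tokens)) / len(tokens)


# "the" occurs twice, so there are 4 types among 5 tokens.
repetitive = type_token_ratio("The cat saw the dog")
# Every word is distinct, so types equal tokens.
all_distinct = type_token_ratio("Students write argumentative essays")
```

The first sentence yields a ratio of 0.8 and the second a ratio of 1.0, showing that repetition lowers the TTR.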

We utilized ASL and STRUT (syntactic sentence similarity, all sentences across paragraphs) to assess grammatical complexity. Previous researchers synthesized studies on syntactic complexity in L2 writing and showed that it varies significantly according to L2 skill level; therefore, ASL was employed as a grammatical complexity metric. By comparing syntactic trees for each sentence pair, STRUT assesses the level of similarity in syntactic structures within a passage. Lower STRUT values may indicate greater grammatical complexity. Crossley and McNamara (2012) discovered that STRUT was one of the three factors that contributed to EFL learners’ writing readability, though they did not analyze L2 writers’ writing.
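Average sentence length can be approximated as shown below. This is a rough sketch: splitting sentences on terminal punctuation and counting alphabetic word tokens are assumptions that only approximate the Coh-Metrix procedure, and the syntactic similarity index, which requires parse trees, is not reproduced.

```python
import re


def average_sentence_length(text):
    """Mean number of word tokens per sentence."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    if not sentences:
        raise ValueError("text contains no sentences")
    word_counts = [len(re.findall(r"[A-Za-z']+", s)) for s in sentences]
    return sum(word_counts) / len(sentences)


# Two sentences with 3 and 6 words respectively.
asl = average_sentence_length("I like tea. She likes green tea very much.")
```

For this two-sentence example the function returns 4.5 words per sentence.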

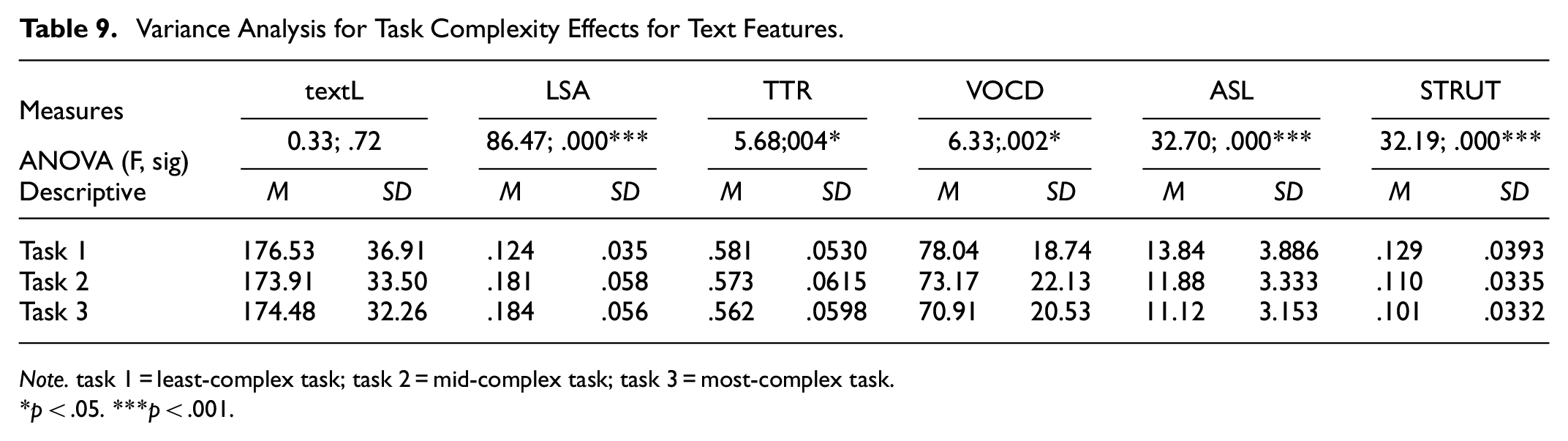

A one-way ANOVA was conducted to assess whether the six text features differed significantly across the three task types. ANOVA was used to compare 603 (201 × 3) compositions written by the same participants over three successive weeks. Significant differences were found in five measures across the three task types, whereas no significant difference was found in text length. Thus, the results indicate a significant effect of task type on text features. The details are presented in Table 9.

Variance Analysis for Task Complexity Effects for Text Features.

Note. task 1 = least-complex task; task 2 = mid-complex task; task 3 = most-complex task.

*p < .05. ***p < .001.

As shown in the ANOVA results, the text features differed significantly across the three tasks on five text measures: LSA, TTR, VOCD, ASL, and syntactic similarity of all sentences across paragraphs.

Specifically, in terms of text fluency, text length did not differ significantly among the three task types, F = 0.33, p = .72. In contrast, a significant difference was found in the LSA measure, and LSA was used to measure the texts’ cohesion. The LSA measure varies from 0 (low cohesion) to 1 (high cohesion), suggesting that the higher the value, the higher the level of cohesion. The results demonstrate that the compositions of the mid-complex and most-complex tasks were more coherent. The LSA values were 0.181 and 0.184, respectively, compared to 0.124 in the least-complex writing task.

Additionally, lexical diversity differed among the three tasks. The TTR values for the three tasks were 0.581, 0.573, and 0.562, respectively. As mentioned above, when more words are used multiple times across the text, TTR is lower and cohesion is higher; a lower TTR value therefore represents a lower level of lexical diversity, suggesting that cohesion in the most-complex writing task was the highest, followed by the mid-complex and least-complex tasks. Moreover, this pattern was supported by the VOCD lexical diversity values. The figures for the three tasks were 78.04, 73.17, and 70.91, respectively, indicating that writing in the most-complex task was more cohesive than in the mid-complex and least-complex tasks: lexical diversity was lower, indicating a higher level of cohesion.

Finally, grammatical complexity was assessed using the ASL and sentence syntax similarity of all sentences across paragraphs. The ASL values for the three tasks were 13.84, 11.88, and 11.12, respectively, suggesting that sentences in the least-complex task compositions were longer than those in the mid-complex and most-complex task compositions. Moreover, lower STRUT values may indicate greater grammatical complexity (Zhang et al., 2023). As indicated in Table 9, STRUT values for the three task types were 0.129, 0.110, and 0.101, suggesting that the compositions for the most-complex task were grammatically the most complex.

In conclusion, text features differed significantly across the three tasks regarding text fluency, lexical diversity, and grammatical complexity. The results showed that writing requires latent coherence in thinking and concepts, not just surface discourse linkages. For lexical diversity, students tended to repeat words more often in the most-complex and mid-complex writing tasks than in the least-complex task, as reflected in the lower TTR and VOCD values. This result confirms that the compositions for the most-complex and mid-complex tasks were more cohesive. Finally, in terms of grammatical complexity, the compositions of the most-complex task tended to be the most complex, followed by the mid- and least-complex tasks. Significant differences were observed across all three tasks. The results of text feature analysis generated by Coh-Metrix 3.0 showed a high quality of essays in the mid-complex and most-complex tasks, with better coherence, more lexical repetition supporting cohesion, and more complex grammatical patterns.

Discussion and Conclusion

Learners demonstrated a higher writing performance on reasoning-demanding tasks (mid- and most-complex tasks). They displayed no significant differences in the tasks with respect to prior knowledge. The study found that an increased level of task complexity pushed students to generate higher writing scores, suggesting that learners’ attention was not limited by writing tasks requiring multiple working memories. They were equipped with a working memory capacity to process information that required multiple attentional capacities. Learners coordinate their attention during writing tasks. This study also found that prior knowledge of the topic did not significantly impact writing scores. Familiarity with the topic did not improve writing test outcomes.

The study found that the same learners (test-takers) performed differently when assigned different tasks on the College English Test. This study suggests that reasoning-demanding tasks can activate test-takers’ language skills and help them fully express their ideas. Learners were found to be more interested in writing argumentative essays (tasks with reasoning demands) than in narrative writing (tasks without reasoning demands). Tasks with different complexity levels in the College English Test resulted in significant variations in test-takers’ writing scores.

Enhanced Writing Performance on Reasoning Demanding Tasks

The first major focus of this study was to investigate the level of learners’ writing scores on the College English Test and compare the three writing tasks, as well as the impact of task complexity on EFL learners’ writing scores.

The analysis results indicated that a larger proportion of high-level scores was obtained on the mid-complex and most-complex tasks. These findings are supported by Robinson’s (2010) finding that more complex tasks can elicit students’ full attention to all aspects of the tasks. According to Robinson’s (2001) argument, if the cognitive degree of task complexity increases, learners can improve their performance and complete tasks successfully. He argued that human attentional resources are unlimited, so that learners can attend to and process multiple linguistic aspects without trade-off effects in their language production (Cho, 2015). In a simple activity, learners do not attend entirely to all components of language performance simultaneously, whereas in a complicated assignment they must pay full attention to all aspects of the task to accomplish it.

According to Robinson’s cognition hypothesis (2001), increases in task complexity along the so-called resource-directing variables may not decrease language output but may contribute to higher structural complexity and greater accuracy of learner output if the cognitive task complexity dimensions belong to separate attentional resource pools. College learners have a mechanism to process reasoning-demanding writing tasks. Their attentional capacities can become coordinated and cooperative rather than disjointed and oppositional. Tasks manipulated according to reasoning demands push learners to release their potential abilities and coordinate their attention. Participants in this study had a strong working memory capacity to process information. Working memory refers to the storage and processing mechanism of complex cognitive activities such as reading and writing (Lu, 2010). This is why the students in this study demonstrated a higher level of performance with a lower degree of familiarity with the topics. This finding is of great significance for language instruction and assessment, as well as cognitive psychology.

Variations of Writing Scores Among Tasks

This study’s second research objective was to determine whether there was any significant difference in students’ writing performance (writing scores) among the three tasks with different complexity levels. Students’ writing scores were analyzed and compared using one-way ANOVA to determine whether the differences between group means were statistically significant. The study found that learners generated high-scoring essays on reasoning-demanding tasks (mid- and most-complex tasks). In line with previous research, task complexity may result in variations in learners’ writing performance (Abdi Tabari & Ivey, 2015; Adams et al., 2015; Jiaxin, 2015; Kuiken & Vedder, 2007; Simin & Farahman, 2017).
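The one-way ANOVA comparison described above can be illustrated with a minimal sketch. It assumes that the three sets of scores are treated as independent groups, and the score lists are hypothetical values invented for illustration, not data from this study. The function name `one_way_anova_f` is likewise an assumption; the study's own analysis software is not specified here.

```python
from statistics import mean


def one_way_anova_f(groups):
    """Return (F, df_between, df_within) for a one-way ANOVA.

    `groups` is a list of lists of numeric scores, one list per task.
    """
    k = len(groups)
    n_total = sum(len(g) for g in groups)
    grand_mean = sum(sum(g) for g in groups) / n_total

    # Variation of the group means around the grand mean.
    ss_between = sum(len(g) * (mean(g) - grand_mean) ** 2 for g in groups)
    # Variation of individual scores around their own group mean.
    ss_within = sum((x - mean(g)) ** 2 for g in groups for x in g)

    df_between = k - 1
    df_within = n_total - k
    f_stat = (ss_between / df_between) / (ss_within / df_within)
    return f_stat, df_between, df_within


# Hypothetical writing scores for the three tasks (illustration only).
simple_task = [8, 9, 7, 8, 9]
mid_complex_task = [10, 11, 10, 12, 11]
most_complex_task = [11, 12, 12, 13, 11]

f_stat, df_b, df_w = one_way_anova_f(
    [simple_task, mid_complex_task, most_complex_task]
)
print(f"F({df_b}, {df_w}) = {f_stat:.2f}")
```

A large F value relative to the critical value for the given degrees of freedom would indicate that at least one task mean differs from the others; a post hoc test would then be needed to identify which pairs of tasks differ.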

Learners perform better on writing tasks that require reasoning. This is because such tasks push them to generate higher-level language output. According to Robinson (2005), students focus more on complex tasks, improving their performance. Tasks with moderate reasoning demands help learners articulate their thoughts better, matching their writing habits and language proficiency. As learners perform tasks with a higher cognitive load, their language proficiency increases, resulting in better essays and higher writing scores. Another reason for students’ varying writing levels across tasks could be their past practice. Argumentative essay writing is the most prevalent essay genre encountered by college students across the curriculum and in large-scale writing assessments (Lu, 2010). This popularity leads students to invest more time in preparing for it. Practice with this type of writing familiarizes students with reasoning-demanding tasks. Writing teachers should use a variety of task types to train students in handling diverse content.

In conclusion, the reasoning demand of a task largely determines how output is processed and how well the task is ultimately performed. From a cognitive perspective, students demonstrated a higher level of writing performance on reasoning-demanding tasks.

Variations of Text Features Among Tasks

The results indicated that the students’ essays displayed different text features across the three tasks; increasing task complexity resulted in gains in coherence, lexical diversity, and syntactic complexity. Complex tasks are more likely to engage multiple attentional faculties and so generate a higher level of coherence, a more diverse lexicon, and more complex grammatical patterns. This finding is supported by Robinson’s (2010) argument that humans have multiple attentional capacities for processing information, meaning that as task complexity increases, so do accuracy, complexity, and fluency.

Previous studies (Lawrence, 2017; Yang et al., 2015) claimed that increasing task complexity along reasoning demands resulted in higher gains in lexical and syntactic complexity. In contrast, no significant effect was detected on accuracy (Sattarpour & Farrokhi, 2017). More specifically, Lawrence (2017) found that as task complexity increased, syntactic complexity and lexical length increased significantly, whereas lexical diversity showed no significant change. The current finding was also partially consistent with Ismail et al. (2012), who claimed that task reasoning demands and task conditions significantly affected grammatical accuracy. A similar study by Faruji and Kharaghani (2019) examined the impact of task grading along reasoning demand on syntactic complexity among 90 intermediate Iranian EFL learners. The results indicated that sequencing tasks from simple to complex had a significant positive impact on the learners’ syntactic complexity.

The variation in text features among the three tasks again confirmed the robustness of Coh-Metrix in analyzing texts on the basis of various linguistic features. Coh-Metrix was found to be capable of meeting the discourse analysis needs of test designers, textbook compilers, and instructors. It has been used to detect a wide range of textual variations, and previous studies have found variations in written discourse using it (Graesser et al., 2004; McCarthy et al., 2006). These investigations show that Coh-Metrix is a powerful text analysis tool capable of assessing and distinguishing a wide range of text types from the chapter level to the sentence level.

The reasons learners displayed a higher level of coherence, lexical diversity, and grammatical complexity of text features in reasoning-demanding tasks (mid-complex and most-complex tasks) can be explained as follows. One explanation is that argumentative tasks, manipulated along reasoning demands, push learners to support their ideas using conjunctions, which contributes to a high level of coherence in essays (Cho, 2015). Another possible explanation could be that learners’ previous practice and the accumulation of argumentative writing led them to apply the samples they memorized, which resulted in more accurate language output.

In conclusion, this study found that different task complexities resulted in variations in text features in students’ written compositions, suggesting that task complexity is an important factor affecting students’ productive abilities. The study also found that increasing task complexity was associated with gains in coherence, lexical diversity, and grammatical complexity, suggesting that more complex tasks may improve linguistic performance and stretch learners’ L2 understanding. This finding is supported by Robinson’s argument that humans possess multiple attentional capacities for processing writing tasks. In this study, greater task complexity did not come at the expense of task performance or attention to language.

Implications

The theoretical underpinnings of this study bolster Robinson’s Cognition Hypothesis, which posits that enhanced task complexity catalyzes cognitive engagement, thereby facilitating improved language production. The findings elucidate the dynamic nature of learners’ cognitive resources, suggesting that higher reasoning demands associated with task complexity can activate deeper language processing abilities without overtaxing the learners. This runs counter to the prevailing notion of a zero-sum game between task difficulty and cognitive load. Additionally, the study reveals an intriguing paradigm where prior knowledge of a subject matter is less influential on performance than the cognitive demands of a task. This sheds light on the potential for a more nuanced understanding of how language learning tasks can be structured to accentuate cognitive engagement over content familiarity, thereby enriching the academic discourse on second language acquisition.

From a practical standpoint, the implications of this study resonate with pedagogical strategies, assessment practices, and educational policy in English as a Foreign Language (EFL) contexts. The research advocates for the incorporation of incrementally complex tasks in the curriculum, which can engender substantial gains in language proficiency. This aligns with a pedagogical shift toward enhancing cognitive competencies over mere content retention, suggesting a need for teachers to diversify writing tasks that promote reasoning skills. Assessment-wise, the study signals a need to realign language evaluation metrics, like the College English Test, to mirror the cognitive capabilities of learners, rather than merely testing content knowledge. Moreover, the validation of Coh-Metrix 3.0 for text analysis reaffirms its utility in designing and assessing EFL materials, thereby supporting language educators in their quest to cultivate a richer linguistic output from learners. These practical insights carve a pathway for future educational initiatives to foster a cognitive-centric approach in language education, transcending traditional methodologies that prioritize rote learning.

The findings of this study have implications for stakeholders in task-based language testing, language assessment, and classroom writing instruction. The findings on task complexity and writing performance contribute to knowledge in second language acquisition, including the cognitive complexity frameworks of Robinson and Skehan, learners’ writing processes, and the linguistic performance reflected in text features. The findings also have practical implications for task design and writing test development, in which the cognitive complexity of tasks should be taken into consideration. Finally, the findings have instructional implications: learners need to be trained with diverse task types, such as tasks with different degrees of topic familiarity, different levels of cognitive complexity, and different writing genres. In this way, students can be equipped with the writing competence to face tasks that pose different challenges. Because the world has become increasingly text-oriented, writing has come to be regarded as one of the most important abilities.

Limitations and Recommendations for Future Research

This study has some limitations. First, the nature of the study sample limits the generalizability of the findings. Second, the research instrument, Coh-Metrix 3.0, did not detect grammatical errors in the written compositions, so researchers must correct such errors before analyzing text features. Finally, the research design was limited; parallel groups should be established in future work so that data can be collected more effectively.

Developing theoretically motivated, empirically substantial, and pedagogically feasible sequencing criteria has long been acknowledged as a major research goal for operationalizing task-based approaches to syllabus design. Future studies should further explore the cognitive and affective aspects of writing. Furthermore, a qualitative study may be needed to examine how learners process writing tasks, to probe why their writing differed across task types, and to identify which task types best elicit the high-quality text features that contribute to high-scoring essays.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The Impact of Task Complexity and Task Difficulty on Learners’ Writing Performance in College English Tests, funded by Jiangxi Agricultural University Teaching Reform and Research Project, Grant Number 2023B2ZZ55.

Data Availability Statement

The data that support the findings of this study are available from the corresponding author, Yumei Zou, upon reasonable request.