Abstract

As artificial intelligence (AI) permeates almost all aspects of our lives, university students need to acquire relevant knowledge, skills, and attitudes to adapt to the challenges it poses. This study reports the development and validation of a scale called the Artificial Intelligence Learning Intention Scale (AILIS). AILIS was designed to measure the different factors that shape university students’ behavioral intentions to learn about AI and their AI learning. We recruited 907 Chinese university students who answered the survey. The scale comprises nine factors that are categorized into various dimensions pertaining to epistemic capacity (AI basic knowledge, programming efficacy, designing AI for social good), facilitating environments (actual use of AI systems, subjective norms, access to support and technology), psychological attitudes (resilience, optimism, personal relevance), and focal outcomes (behavioral intention to learn AI, actual learning of AI). Reliability analyses and confirmatory factor analyses indicated that the scale has acceptable reliability and construct validity. Structural equation modeling results demonstrated the critical role played by epistemic capacity, facilitating environments, and psychological attitudes in promoting students’ behavioral intentions and actual learning of AI. Overall, the findings revealed that university students express a strong intention to learn about AI, and this behavioral intention is positively associated with actual learning. The study contextualizes the theory of planned behavior for university AI education, provides guidelines on the design of AI curriculum courses, and proposes a possible tool to evaluate university AI curriculum.

Plain Language Summary

Purpose: The purpose of this study was to develop and validate a scale called the Artificial Intelligence Learning Intention Scale (AILIS). AILIS was designed to measure the different factors that shape university students’ behavioral intentions to learn about AI and their AI learning. Methods: We recruited 907 Chinese university students to answer the AILIS survey. Conclusions: The scale comprises nine factors that are categorized into various dimensions pertaining to epistemic capacity (AI basic knowledge, programming efficacy, designing AI for social good), facilitating environments (actual use of AI systems, subjective norms, access to support and technology), psychological attitudes (resilience, optimism, personal relevance), and focal outcomes (behavioral intentions to learn AI, actual learning of AI). Reliability analyses and confirmatory factor analyses indicated that the scale has acceptable reliability and construct validity. Structural equation modeling results demonstrated the critical role played by epistemic capacity, facilitating environments, and psychological attitudes in promoting students’ behavioral intentions and actual learning of AI. Implications: Overall, the findings revealed that university students express a strong intention to learn about AI, and this behavioral intention is positively associated with actual learning. The study contextualizes the theory of planned behavior for university AI education, provides guidelines on the design of AI curriculum courses, and proposes a possible tool to evaluate university AI curriculum. Limitations: One key limitation is the adoption of convenience sampling. In addition, this study could be further enriched by the collection of qualitative data. A third limitation is the cross-sectional nature of the data.

Introduction

With its capacity to learn continuously, respond intelligently, and automate work, artificial intelligence (AI) is very likely to be a powerful and disruptive form of technology. Researchers have suggested that recent AI advancements have brought about the fourth evolution of education, which is pushing toward personalized learning and demands that individuals be more creative than robots (Hu, 2019). Educators need to be open-minded and acquire new technological, ethical, and data literacies to facilitate the change processes (Ng et al., 2023). Students also need to upskill themselves, learn about AI, and develop competencies to interact with it effectively (Dwivedi et al., 2021).

While AI holds promise in solving some of humanity’s most persistent problems (Chowdhury et al., 2022; Tomašev et al., 2020), concerns such as job security, AI surveillance, and abuse of personal data have emerged. Hence, people may develop both positive and negative attitudes toward AI depending on its application (Chowdhury et al., 2022; Schepman & Rodway, 2020). Studies have reported that AI can instigate anxiety among people (Y. Y. Wang & Wang, 2022). In education, teachers are facing challenges because they do not have actionable guidelines about how to deal with AI and harness it to improve teaching and learning (Zhang & Aslan, 2021).

In response to the emerging affordances and uncertainties, education authorities have begun delineating and implementing plans to educate students about AI and cultivate their competencies to work with AI (Huang, 2021; Knox, 2020). Hence, research pertaining to K-12 students’ motivation and confidence to learn about AI has emerged in recent years (Chiu et al., 2022; Lin et al., 2021).

These past studies are mostly concerned with AI education. Most AI education curricula equip students with basic scientific and technological knowledge associated with AI (e.g., machine learning; sensors; AI strengths and weaknesses) and discuss the advantages or disadvantages of AI for social development (Casal-Otero et al., 2023; Long & Magerko, 2020). In other words, AI education equips students with AI literacy (Long & Magerko, 2020; Ng et al., 2021).

As AI education programs are implemented in the K-12 context, various studies have been conducted to study students’ self-reported AI literacy and their psychological responses (e.g., confidence in learning AI; optimism, etc.) toward the newly created curriculum (Chiu et al., 2022; Lin et al., 2021; Ng et al., 2023). AI education has also been explored in the higher education sector (Zawacki-Richter et al., 2019), and the studies are more focused on individual system evaluation (e.g., Seo et al., 2021) or on student evaluations of small-scale interventions designed to equip university students with AI literacy (e.g., Kong et al., 2023). The importance of AI education has also given rise to recent publications that are focused on surveying or testing university students’ levels of AI literacy (Hornberger et al., 2023; Laupichler et al., 2023). These instruments are appropriate for university students who have attended introductory AI literacy programs as they are content-focused, but they may be too lengthy for baseline studies.

This study investigates factors associated with university students’ intention to learn AI and includes socio-technological and psychological factors, which typical AI literacy scales do not measure. Fostering university graduates’ AI literacy is dependent on their continuous intention to learn about AI and the formation of such intention is multifaceted. Hence, investigating students’ behavioral intentions from the perspective of the theory of planned behavior (Ajzen, 2020) is an important endeavor for the advancement of AI education and higher education more broadly.

Within the context of higher education, AI is emerging as a powerful tool that can learn from data and produce new understanding. University students from different majors should acquire AI literacy and explore its potential applications for their respective subject matter and its implications for their future careers. However, large-scale theory-driven studies on university students’ perceptions of learning AI seem to be lacking (Hornberger et al., 2023; Wang et al., 2023). Identifying factors that are positively associated with university students’ intention to learn AI and their actual learning of AI can facilitate the planning and design of AI education for university students.

There is a need to specifically focus on measuring AI-related constructs among university students as they are different from K-12 students in several ways. University students are typically more academically successful given that entrance into higher education is competitive. They are educated within specialized fields, with most of them entering the workforce within a few years. The learning environment is also somewhat different as universities allow more autonomy to their students and entail more self-regulation (King et al., 2024; Xie et al., 2023). It therefore seems beneficial for the higher education sector to create survey instruments to assess university students’ intentions to learn about AI as an important form of technological literacy. In addition, modeling the relationships between the identified factors would help educators to understand the possible relationships and hence work with an empirically supported theoretical model for curriculum design.

In this study, we created the AI Learning Intention Scale (AILIS) for university students by reviewing existing research. We aimed to identify important factors that may shape university students’ learning intention for AI. The AILIS highlights the learning environmental factors, epistemic capacities, and psychological attitudes needed for the cultivation of an AI-ready generation based on recent studies (Chiu et al., 2022). Validating a parsimonious yet comprehensive scale and then investigating the hypothesized relationships among these factors can facilitate future curriculum design and implementation by providing a means to measure the progress of AI education among university students. Therefore, our study aims to address the two research questions: (1) Is the AILIS a valid and reliable scale? (2) Are the hypothesized relationships supported?

The rest of this paper is structured as follows. Section 2 reviews the relevant literature to identify the factors and proposes a structural equation model with 17 hypotheses. Section 3 presents the research methods including the participants, development of AILIS, and the data analysis approach. The results of the research are shown in Section 4, and they are analyzed and discussed in Section 5. Finally, Section 6 concludes the paper.

Literature Review and Hypotheses

AI Technology and Education

AI technology encompasses the collection of techniques, skills, methods, and processes (Martínez-Plumed et al., 2021) that create AI systems or machines which may replace and/or augment humans in performing intelligent tasks. AI is usually defined as “a system’s ability to correctly interpret external data, to learn from such data, and to use those learnings to achieve specific goals and tasks through flexible adaptation” (Kaplan & Haenlein, 2019, p. 15). Most people encounter AI in automated intelligent tasks. Common intelligent tasks include knowledge representation and reasoning (e.g., knowledge-based chatbots), learning (e.g., recommendation systems), communication (e.g., machine translation and automated speech recognition), perception (e.g., facial and text recognition), planning (e.g., timetabling), physical interaction (e.g., robots, self-driving cars), and social and collaborative intelligence (e.g., stock market agents) (Martínez-Plumed et al., 2021).

The list of intelligent tasks is growing fast, with the boundaries between the roles of machines and humans shifting toward machine automation. AI technology is profoundly changing work in almost all fields including health, mechanics, finance, and even art. For example, the Chat Generative Pre-trained Transformer (ChatGPT) has attracted great attention recently and is considered likely to change writing, programming, and other kinds of work (Cooper, 2023). University students should quickly understand and adapt to the existing circumstances as the world of work changes. Therefore, universities should provide undergraduates with AI education, which can equip university graduates with basic AI knowledge.

To achieve better learning, the curriculum design for general AI education should foster learners’ continuous intentions to learn AI since advancements in the field are likely to continue. Hence, university students’ perceptions of AI need to be examined. Most current studies in the field of AI education focus on the K-12 settings, indicating an emerging consensus about the topics students need to learn (Touretzky et al., 2019; Xu et al., 2021). These include key concepts such as machine learning, the multiple applications of AI (Martínez-Plumed et al., 2021), and ethical issues related to the use of AI. However, teaching students about AI has only recently begun, and there seems to be a lack of research on general AI education in universities (Hornberger et al., 2023). This is a critical gap as university students might be the ones most affected by AI. After their university studies, they will enter a world of work that is experiencing rapid changes due to AI (Charlwood & Guenole, 2022; Devagiri et al., 2022).

Theoretical Underpinnings of the AILIS Scale

This study aimed to investigate university students’ behavioral intentions to learn AI, which forms the foundation of their readiness for an AI-infused world. Readiness describes the psychological state of mind in which an individual assesses whether s/he possesses some qualities and conditions to handle what is upcoming (Lokuge et al., 2019; Parasuraman, 2000). Attitude, skills, and knowledge are common factors widely used in curriculum documents to guide teachers’ work in cultivating students’ readiness for the AI future world (Chiu et al., 2022).

With reference to AI education, this study employed the Theory of Planned Behavior (TPB) (Ajzen, 2020) supplemented by the Technology Readiness Index (TRI) (Parasuraman, 2000) to provide the theoretical framework to identify the relevant factors that shape university students’ learning intentions, and to hypothesize the possible interrelationships among the factors. TPB has been applied in many domains to understand people’s intentions and actions, specifically in the domain of emerging technologies (Ajzen, 2020). In general, Ajzen proposed that people’s intentions are positively associated with their behaviors/actions, and their intentions are shaped by three categorical factors: perceived behavioral control, subjective norms, and attitudes toward the behavior. How these factors can be represented for specific phenomena needs to be contextually defined. In this study, we have contextualized these three aspects into epistemic capacity, facilitating environments, and psychological attitudes. Based on the TPB, Figure 1 provides an overview of the dimensions and factors and their hypothesized interrelationships based on past research for the proposed AILIS. The following sections elaborate on the identification of the factors and the 17 hypothesized relationships for this study.

Figure 1. The hypothesized interrelationships among the dimensions and factors of AI Learning Intention.

Epistemic Capacity

Reasoned action is undergirded by knowledge or beliefs people hold to inform their decision for the intended behavior (Fishbein & Ajzen, 2009). In the case of AI, people need to acquire epistemic capacity that includes Basic Content Knowledge about AI (AICK). In addition, technical skills (i.e., programming ability, indicated by Programming Efficacy, ProE) and design thinking that can create socially good artifacts (Design for Social Good, SG) have been identified as important factors that maintain or enhance secondary students’ intrinsic and extrinsic motivation, and in turn shape their behavioral intentions (Y. M. Wang et al., 2022).

The basic knowledge needed for one to make sound cognitive and emotive responses to AI has been delineated (Martínez-Plumed et al., 2021). AICK enables learners to understand how AI works before they can carry out other work like designing AI products (H1) and programming (H2), while programming efficacy enables learners to realize the design (i.e., H3). Programming skills are essential for the creation of AI models and are playing an increasingly important role in today’s AI education (Sun et al., 2023). These interrelationships (H1–H3) are supported by recent studies in K-12 settings (Chai et al., 2021; Chiu et al., 2022). Among the three epistemic factors, earlier studies have indicated that SG (H4) and ProE (H5) are positively associated with behavioral intentions to learn AI (BI) (Tellhed et al., 2022; Y. M. Wang et al., 2022). Furthermore, AICK is not directly associated with BI; rather, its association is mediated through SG (Chai et al., 2021). SG denotes students’ active and creative synthesis of their knowledge, skills, perceived social desirability, and ethical considerations associated with the making of AI applications for the common good. Designing for the common good has been a key aim of computer engineering education (Goldweber et al., 2011). SG is defined as the innovative “use of AI knowledge to solve problems and improve people’s lives” (Foffano et al., 2022), and it is a significant predictor of students’ BI (Chai et al., 2020) (H4). In addition, programming skills are complex skills to acquire (Tsai et al., 2019), and an existing study supports ProE as being predictive of BI (Tellhed et al., 2022) (H5). In sum, the epistemic capacity represented by these factors expands and contextualizes the TPB for AI education, corresponding generally to the perceived behavioral control dimension of the TPB.

Based on the TPB and past research (Chai et al., 2020, 2021), the following hypotheses were proposed:

H1: Students’ mastery of Basic Content Knowledge of AI (AICK) is positively associated with Design for Social Good (SG).

H2: Students’ mastery of Basic Content Knowledge of AI (AICK) is positively associated with Programming Efficacy (ProE).

H3: Students’ Programming efficacy (ProE) is positively associated with Design for Social Good (SG).

H4: Students’ Design for Social Good (SG) is positively associated with Behavioral Intentions to Learn AI (BI).

H5: Students’ Programming Efficacy (ProE) is positively associated with Behavioral Intentions to Learn AI (BI).

Facilitating Environments

Intention and actual behaviors are influenced by individuals’ environments (Ajzen, 1991). In an organization like a university, people’s adoption or rejection of AI technology could determine the opportunities that the organization may have in sustaining or expanding itself (Jöhnk et al., 2021). Assessing acceptance identifies the gaps organizations face with reference to the organization’s technological assets, staff capabilities, and management commitment (Alsheibani et al., 2018), which have implications for the education programs universities can provide. The study of an organization’s technology-rich facilitating environments can be undergirded by theoretical frameworks including the TPB (Ajzen, 2020), the Technology Acceptance Model (TAM) (Granic & Marangunic, 2019), and the Technology Organization Environment (TOE) framework (Rjab et al., 2023).

Based on these theories, facilitating environments like innovation’s characteristics, management’s commitment, and organizational characteristics are factors that need assessment to examine their influence on learners’ learning intentions. These factors correspond generally to the subjective norms of TPB. These factors have also been reported as significant factors that shape school technology integration (Zhao et al., 2002). Access to Support and Technology (Aces) is a key factor that determines one’s intentions. One could hardly receive AI education without interacting with adequate learning resources that support learning (e.g., instructional materials or forums) and using AI technology. Currently, AI technologies are easily accessible via many applications or systems such as online translation, image-searching functions (e.g., Google Images), and generative chatbots. The wide access to AI technology and actual use coupled with its ease of use are likely to create a social norm for people to expect others to learn and use AI technology. Hence, this study hypothesizes that the Actual Use of AI Systems (AU) and wide Access to Support and Technology (Aces) are contributing factors to subjective norms (H6, H7).

Currently, various advances in the programming environment (e.g., block-based programming tools, tangible programming education applications, gamification) have been widely adopted in school programming education. Access to these supportive technological environments and learning resources has been reported to be effective in programming education and may contribute to students’ ProE (H8) (Chiu et al., 2022).

Subjective norms, or Supportive Social Norms (SN), refer to the normative beliefs about significant others’ (e.g., leaders, teachers, peers, parents) approval or disapproval of certain behaviors (Ajzen, 2020). This general social expectation has been translated into school provision of programming lessons, and technological environments facilitate students’ acquisition of programming skills (Chai et al., 2021); hence, SN should predict students’ ProE (Su et al., 2022; Wu, 2019) (i.e., H9). SN is closely related to students’ daily experiences and should therefore be another important factor that can predict students’ BI (Chai et al., 2020) (H10). This hypothesis is also supported by Hossain et al.’s (2023) study, which pointed to a positive and significant relationship between subjective entrepreneurial norms and the entrepreneurial intention of Gen Z university students.

In sum, the following hypotheses were proposed based on the socio-technological environmental factors:

H6: Students’ Actual Use of AI Systems (AU) is positively associated with Supportive Social Norms (SN).

H7: Students’ Access to Support and Technology (Aces) is positively associated with Supportive Social Norms (SN).

H8: Students’ Access to Support and Technology (Aces) is positively associated with Programming Efficacy (ProE).

H9: Supportive Social Norms (SN) are positively associated with students’ Programming Efficacy (ProE).

H10: Supportive Social Norms (SN) are positively associated with Behavioral Intentions to Learn AI (BI).

Psychological Attitudes

Individual intentions to learn and use technology are determined by key psychological factors (Parasuraman, 2000). Parasuraman’s work on the Technology Readiness Index (TRI) highlighted the important role of optimism. Building on TRI, Lin et al. (2021) proposed that optimism reflects an individual’s belief that various advanced technologies afford individuals more control and flexibility, and increase their efficiency. The TRI has been widely used in business settings. It views optimism as a personal trait (see also Zhao et al., 2002) that forms part of the underlying source for technology adoption.

The contextual differences between business and education settings imply that the factors and items will need recontextualization. One study on individuals’ growth or fixed mindset in relation to adopting AI revealed that adopting a growth mindset energized the participants, while a fixed mindset reflected a fatalistic outlook, which undermined the desire to adopt AI (Farrow, 2020). To foster educational development, Optimism (Op) was adapted from a positive psychology perspective (King et al., 2020; King & Caleon, 2021) and was hypothesized to be positively associated with students’ BI (H16). Optimism has been reported to be positively associated with the intention to learn about genome sequencing (Taber et al., 2015). In addition, negative emotions are viewed as barriers to AI readiness that require educational efforts to develop students’ resilience to overcome obstacles. Many studies have simultaneously confirmed the positive effects of resilience and optimism on work outcomes (e.g., Miteva, 2023) and found that they were the best predictors of work engagement (Nieto et al., 2022), which provides some support for H16.

Resilience (Res) is defined as the attitude to counter adversity with adaptive actions which facilitate healthy adjustment among students in a school context (Borman & Overman, 2004; Smith et al., 2008; Wang et al., 2022, 2024). In the context of AI advancements, students need to be resilient in pursuing adaptive actions to strengthen their thinking and interpersonal skills. Hence, resilience might be positively linked to optimism, as it helps students cope with stress and maintain their Optimism (Op) (H12). While most prior studies reveal that optimism predicts resilience (e.g., Martínez-Martí & Ruch, 2017), this study challenges the existing literature and argues that university students may need to exert effortful learning before they can be optimistic about their future in an AI-infused world.

Personal Relevance (Rel), which denotes students’ positive view of AI as a means to help them meet their personal goals (Keller, 2010), was added as part of the psychological attitudes; it can also lead to students’ Op or BI (H11, H15). Past studies have found that personal goal relevance is positively associated with optimism (Madrigal, 2003) (H11). In addition, the relevance of AI technology can be interpreted as AI being useful in facilitating work and achievement, which TAM research has consistently reported as a predictor of behavioral intention (H15) (Marangunic & Granic, 2015). These three psychological factors correspond generally to the dimension of attitude toward the behavior in TPB (Ajzen, 2020).

Students’ orientation toward designing and using AI for social good would contribute to optimism (H14) (Chai et al., 2020, 2021). In addition, convenient and useful programming tools or platforms designed for students can contribute to stimulating their interest and improving their emotions (Alulema & Paredes-Velasco, 2021; Xinogalos & Tryfou, 2021), and reduce the barriers to learning AI. Without access to AI technology and support to learn about it, university students would be deprived of the opportunities to develop relevant AI literacy to get ready for the workplace. Access and support have been identified as a key enabler of ICT competence (Boulton & Hramiak, 2014). Hence, Access to Support and Technology (Aces) is hypothesized to be positively associated with students’ Optimism (Op) (H13).

Hence, the following hypotheses were proposed:

H11: Students’ Personal Relevance (Rel) is positively associated with Optimism (Op).

H12: Students’ Resilience (Res) is positively associated with Optimism (Op).

H13: Students’ Access to Support and Technology (Aces) is positively associated with Optimism (Op).

H14: Students’ Design for Social Good (SG) is positively associated with Optimism (Op).

H15: Students’ Personal Relevance (Rel) is positively associated with Behavioral Intention to Learn AI (BI).

H16: Students’ Optimism (Op) is positively associated with Behavioral Intention to Learn AI (BI).

Focal Outcomes

People’s Behavioral Intentions to Learn AI (BI) and their Actual Learning of AI (AL) are the targeted outcomes of this study. Learning intention leading to actual learning is the main means for people to be ready for the AI-infused world. Studies based on TPB have confirmed that people’s BI can lead to their AL directly and significantly (T. Wang & Cheng, 2021); H17 was thus formulated.

H17: Students’ Behavioral Intention to Learn AI (BI) is positively associated with Actual Learning of AI (AL).

After hypothesizing the relationships among these factors, we used Structural Equation Modeling (SEM) to examine the predictive relations among the factors, as reflected in our research questions.
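For readers who wish to inspect the hypothesized model programmatically, the 17 paths can be written down as a directed edge list. The following Python fragment is a minimal sketch rather than part of the study's analysis (which relied on AMOS): the edges are transcribed from H1 to H17 above, while the helper names `build_graph` and `paths` are our own illustrative choices. It enumerates every directed route from one factor to another, for example all routes by which AICK could reach BI.

```python
from collections import defaultdict

# The 17 hypothesized paths, transcribed from H1-H17 in the text.
# Each entry is (hypothesis label, predictor, outcome).
HYPOTHESES = [
    ("H1", "AICK", "SG"), ("H2", "AICK", "ProE"), ("H3", "ProE", "SG"),
    ("H4", "SG", "BI"), ("H5", "ProE", "BI"), ("H6", "AU", "SN"),
    ("H7", "Aces", "SN"), ("H8", "Aces", "ProE"), ("H9", "SN", "ProE"),
    ("H10", "SN", "BI"), ("H11", "Rel", "Op"), ("H12", "Res", "Op"),
    ("H13", "Aces", "Op"), ("H14", "SG", "Op"), ("H15", "Rel", "BI"),
    ("H16", "Op", "BI"), ("H17", "BI", "AL"),
]


def build_graph(hypotheses):
    """Map each predictor to its list of (outcome, hypothesis label)."""
    graph = defaultdict(list)
    for label, src, dst in hypotheses:
        graph[src].append((dst, label))
    return graph


def paths(graph, start, goal, prefix=()):
    """Yield every directed path from start to goal as a tuple of labels.

    The hypothesized model has no cycles, so the recursion terminates.
    """
    if start == goal:
        yield prefix
        return
    for nxt, label in graph.get(start, []):
        yield from paths(graph, nxt, goal, prefix + (label,))


graph = build_graph(HYPOTHESES)
factors = {f for _, src, dst in HYPOTHESES for f in (src, dst)}
outcomes = {dst for _, _, dst in HYPOTHESES}
exogenous = sorted(factors - outcomes)  # factors with no hypothesized predictor
aick_to_bi = list(paths(graph, "AICK", "BI"))

print(len(factors), "factors;", "exogenous:", exogenous)
for route in aick_to_bi:
    print(" -> ".join(route))
```

Because no hypothesis links AICK to BI directly, every route printed here passes through SG or ProE, which is consistent with the mediated relationship described in the Epistemic Capacity section.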

Research Methods

Participants

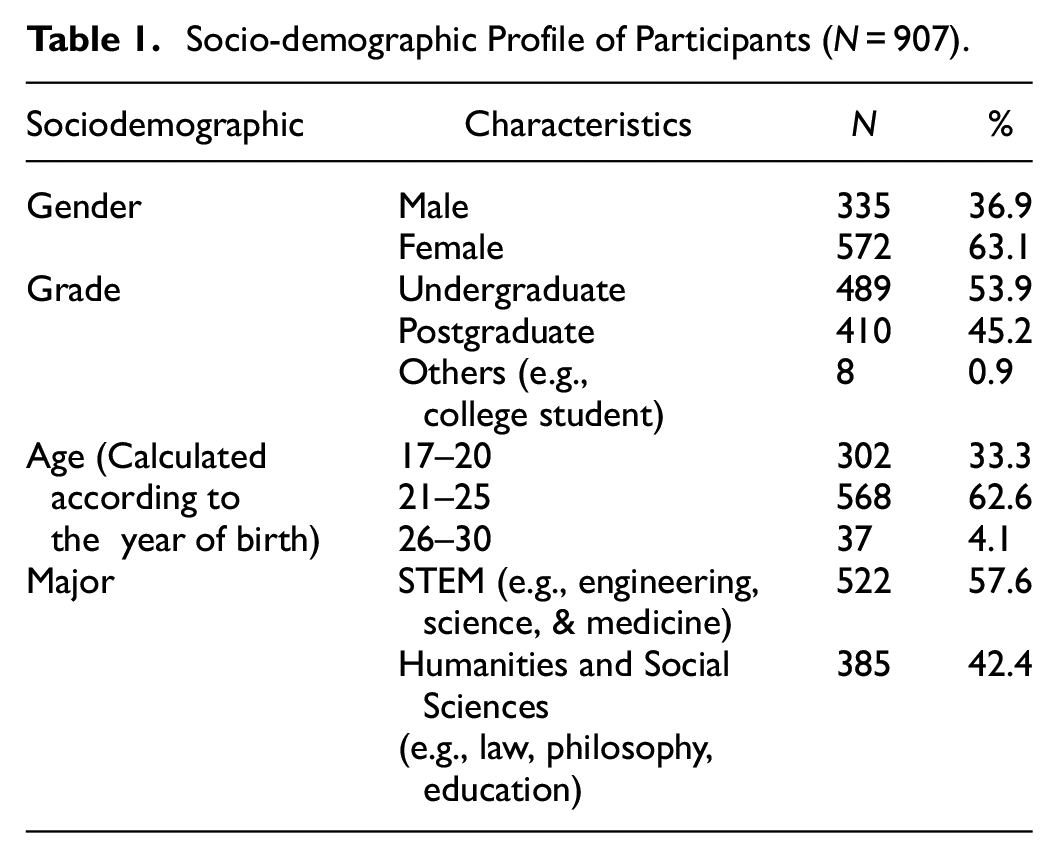

To collect data, the survey was distributed in the form of an online questionnaire during the 2022 fall semester. We explained to the participants in the questionnaire guide that the questionnaire was anonymous and that they could withdraw at any time. The questionnaire comprised two parts. The first part collected demographic data including gender, age, university, grade level, and discipline. A total of 907 university students from various universities in Beijing, Shanghai, Guangzhou, and Shandong participated in the survey. The descriptive statistics of the participants’ demographic data are shown in Table 1. Most of the participants were female students (63.1%). The mean age was 21.3 (SD = 1.5); 53.9% were undergraduate students (n = 489) while the rest were postgraduate and others. In terms of major, many students belonged to STEM disciplines including engineering (29.7%), science (19.1%), and medicine (8.8%), while others were from humanities and social sciences, for example, law, philosophy, and education. As students needed to have some basic knowledge to understand the questionnaire, only students who indicated that they had a preliminary understanding of AI were included in the study. This research was approved by the Research Ethics Review Committee of the Faculty of Education at Beijing Normal University (IRB: BNU202203100017).

Table 1. Socio-demographic Profile of Participants (N = 907).

Instrument

The second part of the questionnaire is the main body of AILIS, which comprises 11 factors organized within the four categories of (1) Epistemic Capacity, (2) Psychological Attitudes, (3) Facilitating Environments and (4) Psychological and Behavioral Outcomes. A 5-point Likert scale that ranged from 1 (strongly disagree) to 5 (strongly agree) was employed. Such 5-point Likert scales are quite common in educational and social science research and have been found to be appropriate for measuring attitudes across a wide range of domains (Croasmun & Ostrom, 2011).

Each category consisted of several factors, and each factor consisted of more than four items adopted or adapted from previous studies (see Table 2), with the exception of Aces (Access to Support and Technology), AU (Actual Use of AI Systems), and AL (Actual Learning of AI). The self-constructed items were based on commonly available resources and support for the use and learning of AI that the authors gathered in previous interactions with users (Chai et al., 2021). All items were subjected to two rounds of expert review by three professors (two in educational technology and one in educational psychology) to establish content validity. Twenty-five university students were invited to participate in the pilot study, in which they completed the questionnaire and raised questions about unclear items. Table 2 shows the definitions of the factors and also provides sample items with sources of items referenced.

Table 2. The Definitions and Sample Items of Each Factor.

Data Analysis

This research used SPSS 26.0 and AMOS 26.0 to conduct the data analysis. Before data analyses were performed, normality was tested. A Confirmatory Factor Analysis (CFA) was executed to examine the construct validity of the questionnaire. Pearson correlations were obtained to examine relationships among the variables. Afterward, Structural Equation Modeling (SEM) using AMOS 26.0 was adopted to test the proposed model (Figure 1). These methods are often used together to validate the factor structure of a questionnaire and explore the relationships between variables (e.g., DiStefano & Hess, 2005).

SEM is a powerful statistical technique that allows the examination of complex relationships among multiple variables simultaneously, allowing researchers to examine direct and indirect effects within a single model. It provides different fit indices that allow researchers to assess the fit between the hypothesized model and the observed data. This can be used to evaluate the accuracy of the model and to evaluate the hypotheses posited by the researchers. Furthermore, SEM accounts for measurement error by explicitly modeling latent (unobserved) variables and their observed indicators. This increases the accuracy and reliability of the analysis (Byrne, 2010).
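To make the distinction between direct and indirect effects concrete, the fragment below gives a minimal Python sketch for a small piece of the hypothesized model (H3, H4, and H5 only). The path coefficients are hypothetical values chosen purely for illustration; they are not estimates from this study, and the function name `path_effect` is our own. Under the usual path-tracing rule, the effect carried along a route is the product of its standardized coefficients, and the total effect is the sum over routes.

```python
from math import prod

# HYPOTHETICAL standardized path coefficients, for illustration only.
# They are NOT results reported in this study.
coef = {
    ("ProE", "SG"): 0.50,  # H3
    ("SG", "BI"): 0.40,    # H4
    ("ProE", "BI"): 0.20,  # H5
}


def path_effect(path, coef):
    """Effect carried along one route: product of its path coefficients."""
    return prod(coef[(a, b)] for a, b in zip(path, path[1:]))


direct = path_effect(["ProE", "BI"], coef)          # ProE -> BI
indirect = path_effect(["ProE", "SG", "BI"], coef)  # ProE -> SG -> BI
total = direct + indirect

print(f"direct={direct:.2f} indirect={indirect:.2f} total={total:.2f}")
```

In the full model, ProE would also reach BI through SG and Op (H3, H14, H16); that route is omitted here to keep the sketch short.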

Results

The results of the Confirmatory Factor Analysis (CFA) and the correlations are reported first to provide the foundation for SEM. This is followed by the testing of the hypotheses.

Preliminary Analyses

All the measured items had appropriate skewness (ranging from −1.096 to 0.651) and kurtosis (ranging from −1.261 to 1.893), far smaller than the requisite maximum value of |3|, indicating that the data of all items were close to the normal distribution (Farooq, 2016).
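The normality screen described above can be sketched as follows. This is a minimal illustration that assumes population (moment-based) skewness and excess kurtosis; SPSS reports sample-adjusted versions, so its values differ slightly on small samples. The responses and function names are hypothetical.

```python
from statistics import fmean


def central_moment(values, order):
    """Central moment of the given order, dividing by n (population form)."""
    mean = fmean(values)
    return sum((v - mean) ** order for v in values) / len(values)


def skewness(values):
    """Third standardized moment."""
    return central_moment(values, 3) / central_moment(values, 2) ** 1.5


def excess_kurtosis(values):
    """Fourth standardized moment minus 3, so a normal distribution scores 0."""
    return central_moment(values, 4) / central_moment(values, 2) ** 2 - 3.0


def within_normality_bounds(values, limit=3.0):
    """Apply the |3| cutoff used in the text to both statistics."""
    return abs(skewness(values)) < limit and abs(excess_kurtosis(values)) < limit


# Hypothetical responses to one 5-point Likert item (illustration only).
responses = [1, 2, 2, 3, 3, 3, 4, 4, 4, 4, 5, 5]
print(round(skewness(responses), 3), round(excess_kurtosis(responses), 3))
print(within_normality_bounds(responses))
```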

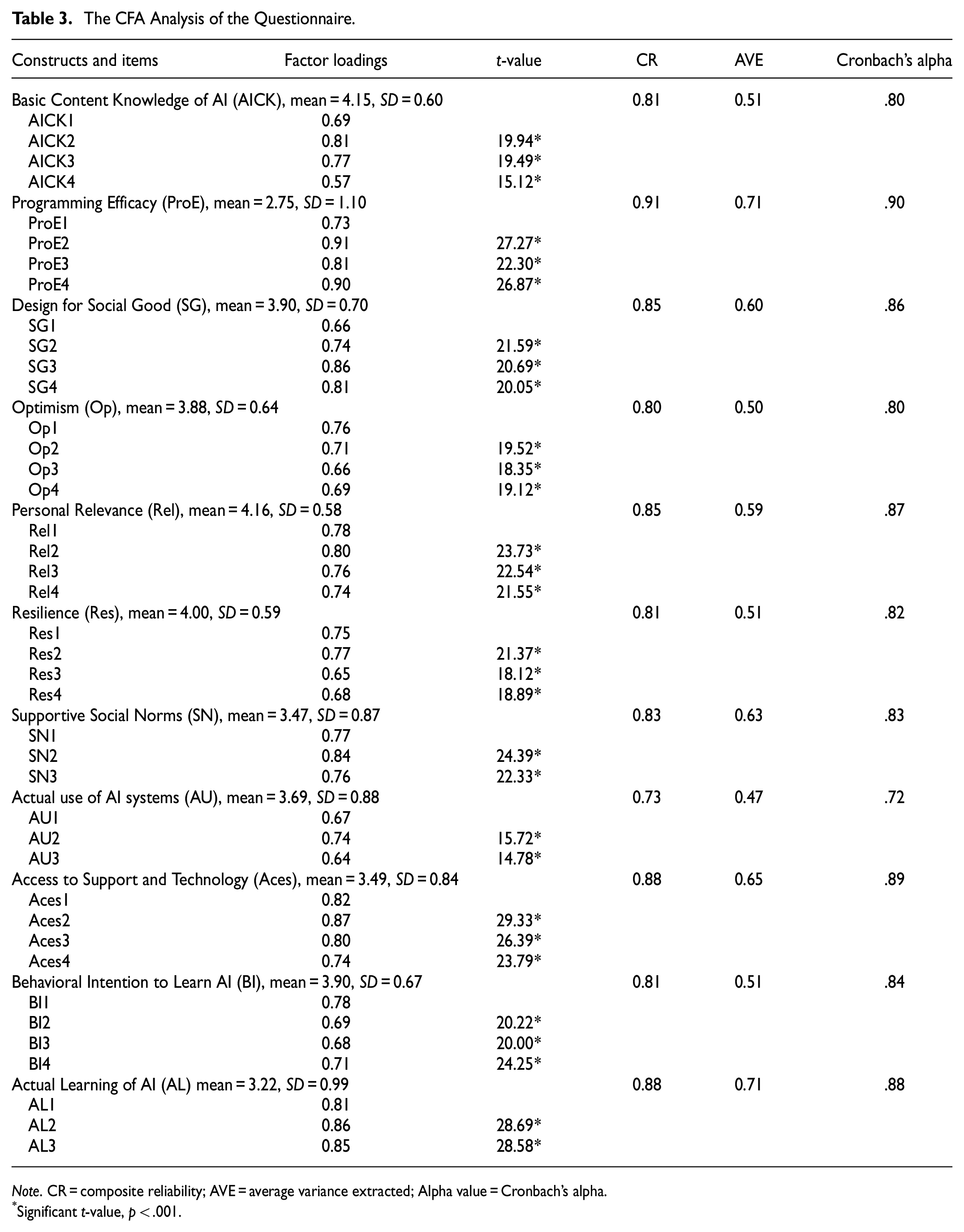

Confirmatory Factor Analyses

Table 3 shows the CFA results and the descriptive statistics of the factors after some items were removed from the analysis. The removed items were phrased too generally or were not sufficiently related to the corresponding factor. For example, one item of Actual Learning of AI was “I have found out how AI applications work through using them,” but this kind of activity is more typical of professional programmers or developers than of learners.

The CFA Analysis of the Questionnaire.

Note. CR = composite reliability; AVE = average variance extracted; Alpha value = Cronbach’s alpha.

Significant t-value, p < .001.

The factor loadings of all the remaining items were statistically significant (p < .001) and higher than the threshold value of 0.50 (ranging from 0.57 to 0.90). The reliability (Cronbach’s alpha) coefficients for all these factors ranged from .72 to .90, exceeding the required .70 (Fornell & Larcker, 1981), indicating sufficient internal consistency. In addition, the Composite Reliability (CR) coefficients ranged from 0.73 to 0.91, all exceeding 0.70, and the Average Variance Extracted (AVE) values ranged from 0.47 to 0.71, exceeding 0.40 (Fornell & Larcker, 1981).
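For readers unfamiliar with how CR and AVE are derived from standardized loadings, the sketch below uses the usual Fornell and Larcker formulas: CR = (Σλ)² / ((Σλ)² + Σ(1 − λ²)) and AVE = Σλ² / n. The loadings are hypothetical and the function names are illustrative; the study itself obtained these values from its AMOS output.

```python
def composite_reliability(loadings):
    """CR from standardized loadings, with error variance 1 - loading**2."""
    total = sum(loadings)
    error_variance = sum(1 - loading ** 2 for loading in loadings)
    return total ** 2 / (total ** 2 + error_variance)


def average_variance_extracted(loadings):
    """AVE: mean of the squared standardized loadings."""
    return sum(loading ** 2 for loading in loadings) / len(loadings)


# Hypothetical loadings for one four-item factor (illustration only).
hypothetical_loadings = [0.70, 0.80, 0.75, 0.65]

cr = composite_reliability(hypothetical_loadings)
ave = average_variance_extracted(hypothetical_loadings)

# Thresholds as applied in the text: CR above 0.70, AVE above 0.40.
print(f"CR = {cr:.2f}, meets threshold: {cr > 0.70}")
print(f"AVE = {ave:.2f}, meets threshold: {ave > 0.40}")
```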

Regarding the model fit, the corresponding standards were adopted (Hair et al., 2014): χ²/df (<5.0), Root Mean Square Error of Approximation (RMSEA) (<0.10), Incremental Fit Index (IFI) (>0.90), Comparative Fit Index (CFI) (>0.90), and the Tucker-Lewis Index (TLI) (>0.90). In addition, the Goodness of Fit Index (GFI) (>0.80) and the Adjusted Goodness of Fit Index (AGFI) (>0.80) were also used (Donald & Peter, 2000). The following fit indices were obtained: χ²/df = 2.24 (<5.0), GFI = 0.92 (>0.80), AGFI = 0.90 (>0.80), IFI = 0.96 (>0.90), TLI = 0.95 (>0.90), CFI = 0.96 (>0.90), RMSEA = 0.04 (<0.10), indicating that the fit was acceptable. Pearson’s correlation coefficients among the factors in the hypothesized model (Figure 1) were then calculated; all were significant and are presented in Table 4. The square root of the AVE of each factor was higher than the correlations between that factor and the other factors, indicating good discriminant validity. Therefore, the structural validity and reliability of the questionnaire were confirmed.

Correlations Among the Factors and AVE of the Factors.

Note. The bold numbers on the diagonal are the square root values of the AVE of each factor. The off-diagonal values are the correlation coefficients between factors. All the correlations are significant (p < .001). AICK = Basic Knowledge; ProE = Programming Efficacy; SG = Design for Social Good; Op = Optimism; Rel = Personal Relevance; Res = Resilience; Aces = Access to Support and Technology; AU = Actual Use of AI Systems; SN = Supportive Social Norms; BI = Behavioral Intention to Learn AI; AL = Actual Learning of AI.
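The discriminant validity comparison summarized in this table (square root of AVE against inter-factor correlations) can be sketched as below. The factor abbreviations follow the note above, but the AVE and correlation values are hypothetical and deliberately include one failing pair; the function name is illustrative.

```python
from math import sqrt


def fornell_larcker_violations(ave, correlations):
    """Return factor pairs whose |r| is not below sqrt(AVE) of both factors."""
    violations = []
    for (factor_a, factor_b), r in correlations.items():
        smaller_root = min(sqrt(ave[factor_a]), sqrt(ave[factor_b]))
        if abs(r) >= smaller_root:
            violations.append((factor_a, factor_b))
    return violations


# Hypothetical values (illustration only, not the study's Table 4).
ave = {"BI": 0.64, "Op": 0.49, "Rel": 0.55}
correlations = {
    ("BI", "Op"): 0.60,
    ("BI", "Rel"): 0.58,
    ("Op", "Rel"): 0.72,  # exceeds sqrt(0.49) = 0.70, so it is flagged
}

print(fornell_larcker_violations(ave, correlations))
```

An empty list would correspond to the situation reported in the text, where every square root of AVE exceeds the related correlations.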

Structural Equation Modelling (SEM)

Based on the correlation results, SEM was employed to explore the structural relationships among the factors of AI readiness. The fit indices of the structural model show that the model had an acceptable fit: χ²/df = 3.13 (<5.0), GFI = 0.88 (>0.80), AGFI = 0.86 (>0.80), IFI = 0.92 (>0.90), TLI = 0.92 (>0.90), CFI = 0.92 (>0.90), RMSEA = 0.05 (<0.10). Table 5 reveals that 16 of the 17 hypotheses were supported; the only exception was H2. The final structural model is shown in Figure 2.
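The cutoff comparison applied to the measurement and structural models can be sketched as follows. The fit values and cutoffs are those reported in the text; the dictionary keys and the helper name `failed_indices` are illustrative choices and not part of the original analysis, which was run in AMOS.

```python
import operator

# Cutoffs as stated in the text: (comparison, threshold) per index.
CUTOFFS = {
    "chi2_df": (operator.lt, 5.0),
    "GFI": (operator.gt, 0.80),
    "AGFI": (operator.gt, 0.80),
    "IFI": (operator.gt, 0.90),
    "TLI": (operator.gt, 0.90),
    "CFI": (operator.gt, 0.90),
    "RMSEA": (operator.lt, 0.10),
}


def failed_indices(fit):
    """Return the names of the indices that do not meet their cutoff."""
    return [
        name
        for name, (compare, threshold) in CUTOFFS.items()
        if not compare(fit[name], threshold)
    ]


# Values reported for the CFA (measurement) model and the SEM (structural) model.
cfa_fit = {"chi2_df": 2.24, "GFI": 0.92, "AGFI": 0.90, "IFI": 0.96,
           "TLI": 0.95, "CFI": 0.96, "RMSEA": 0.04}
sem_fit = {"chi2_df": 3.13, "GFI": 0.88, "AGFI": 0.86, "IFI": 0.92,
           "TLI": 0.92, "CFI": 0.92, "RMSEA": 0.05}

for label, fit in (("CFA", cfa_fit), ("SEM", sem_fit)):
    print(label, "failed indices:", failed_indices(fit))
```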

Findings of Hypotheses Testing With SEM.

Note. **p < .01; ***p < .001. AICK = Basic Knowledge; ProE = Programming Efficacy; SG = Design for Social Good; Op = Optimism; Rel = Personal Relevance; Res = Resilience; Aces = Access to Support and Technology; AU = Actual Use of AI Systems; SN = Supportive Social Norms; BI = Behavioral Intention to Learn AI; AL = Actual Learning of AI.

The path coefficients of the structural model.

R² is used to judge how much variance in the outcome variable is accounted for by the predictors. In this study, the R² values of ProE, SG, Op, SN, BI, and AL were .35, .21, .47, .45, .73, and .59, respectively. According to Cohen (1988), R² values between .13 and .26 depict a moderate effect size, and those above .26 depict a strong or substantial effect size. Based on these criteria, the relationships among the variables were moderate to strong.
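Applying the thresholds quoted above to the reported R² values gives the classification sketched below. The function name and the label for values under .13 are illustrative assumptions, since the text only states the moderate and strong bands.

```python
def effect_size_label(r_squared):
    """Classify R-squared with the bands quoted in the text (Cohen, 1988)."""
    if r_squared > 0.26:
        return "strong"
    if r_squared >= 0.13:
        return "moderate"
    return "below moderate"  # assumed label; this band is not named in the text


# R-squared values reported in this study.
reported_r_squared = {
    "ProE": 0.35, "SG": 0.21, "Op": 0.47,
    "SN": 0.45, "BI": 0.73, "AL": 0.59,
}

labels = {factor: effect_size_label(value)
          for factor, value in reported_r_squared.items()}
for factor, label in labels.items():
    print(f"{factor}: R2 = {reported_r_squared[factor]:.2f} ({label})")
```

Under these bands only SG falls in the moderate range; the other five outcomes are classified as strong.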

Discussion

In response to the need to educate learners about AI (Dwivedi et al., 2021; Mogaji et al., 2022) because of its rapid proliferation, this study investigated university students’ behavioral intentions to learn and their actual learning of AI. A survey entitled AILIS was created with eight factors adapted from earlier studies and three self-constructed factors. The survey was validated with 907 university students using CFA. SEM results indicated that 16 out of the 17 hypotheses were supported. Overall, the survey could contribute to AI education by validating scales that could provide means to measure how newly designed AI curricula are facilitating epistemic growth, psychological development and environmental provisions (Zhai et al., 2021).

The survey indicated university students’ strong behavioral intentions to learn AI (M = 3.90, SD = 0.67), with actual learning just above the neutral score of 3 (M = 3.22, SD = 0.99). The only dimension below 3 was programming efficacy (M = 2.75, SD = 1.10); all other dimensions ranged from 3.47 to 4.16. The findings may indicate that university courses that introduce AI literacy with some experience in programming intelligent machines could be useful in addressing university students’ need to develop AI literacy. Based on TPB (Ajzen, 2020), the study was able to identify the knowledge, skills, and attitude factors that are important in AI education at the university level (Chiu et al., 2022).

The Epistemic Capacities Needed for AI Education in Universities

To upskill learners for the AI age, it is necessary to create an AI curriculum with appropriate content and processes (Chiu et al., 2022). The epistemic factors identified in this study comprise basic AI knowledge, programming efficacy, and designing for social good. Contrary to a previous study (Chao et al., 2021), university students do not perceive basic AI knowledge as contributing to programming efficacy (H2) even though they are positively correlated. H2 is the only unsupported hypothesis. The contrary finding may be due to contextual differences as Chao et al.’s (2021) study was among students pursuing information technology (IT) or computer-related degrees.

Otherwise, AI basic knowledge is positively associated with social good (H1), and programming efficacy is positively associated with social good (H3). While programming was not identified explicitly as part of AI education, especially for K-12 classrooms (Touretzky et al., 2019; Xu et al., 2021), this study indicated that programming efficacy is positively associated with students’ design for social good and behavioral intentions to learn AI (Chai et al., 2021; Tellhed et al., 2022). The importance of designing for social good as part of the AI curriculum is recognized by university students as a significant predictor of their behavioral intentions to learn AI (Goldweber et al., 2011). It suggests that university students may have stronger intentions to participate in AI courses if the courses can empower them with programming skills and design tasks targeted at social good. The perception that AI education necessarily involves programming could be debated, especially for general literacy courses. Regardless, the significant association indicates that course design needs to address the close connections between programming skills and AI (T. Wang & Cheng, 2021).

The Facilitating Environments Needed for AI Education in Universities

As hypothesized, the present study shows that Subjective Norms (SN) are a significant predictor of students’ behavioral intention to learn AI (H10), which is consistent with past theories and research (Ajzen, 2020). The current proliferation of many AI applications in mobile devices allows students easy access and hence they can experience the actual use of AI (H6, H7), which has positively shaped social expectations manifested as schools supplying AI courses (e.g., Chiu et al., 2022).

As mentioned, programming is perceived to have a close relationship with AI (Wu, 2019). Students’ responses to social expectations are positively shaping their programming efficacy (H9) as hypothesized. In addition, the easy access to AI resources and the close link between the AI curriculum and learner-friendly programming activities (Su et al., 2022; T. Wang & Cheng, 2021) seem to also be shaping students’ programming efficacy (H8). Another positive association between access and optimism has been confirmed (H13). These findings indicate the importance of facilitating environments (Jöhnk et al., 2021), and extend the current understanding of the possible relations to the epistemic and psychological dimensions.

The Psychological Attitudes Needed for AI Education in Universities

Optimism and personal relevance were identified as important psychological factors that can directly impact students’ behavioral intentions to learn AI. This indicates that if students perceive the relevance of understanding AI technologies and remain hopeful about the emerging AI world, they are more likely to learn AI (H15, H16). The supported hypotheses were congruent with previous studies about psychological factors in AI education (Lin et al., 2021). The importance of establishing relevance for learners for any subject matter is well understood by educators (e.g., Keller, 2010). Relevance motivates one to acquire the skills and knowledge needed, which forms the basis of Optimism (H11).

Resilience helps students face the stress and challenges of learning (Caleon & King, 2021; King & Caleon, 2021; Smith et al., 2008), and hence contributes to students’ optimism (H12). The finding challenges the commonly reported association in which optimism is the predictor of relevance (Martínez-Martí & Ruch, 2017) and indicates that there could be contextual differences in terms of the direction of influence. Optimism deters fatalistic outlooks and enables people to adopt positive action (H16) (Farrow, 2020; King et al., 2020). This is translated into the intention to learn and actual learning (H17), which is well documented (Ajzen, 2020). In this study, the students’ perceptions of designing AI use for social good were positively associated with optimism (H14). Amid uncertainties brought about by AI technologies, designing for social good addresses the many fears people are facing (Wang & Wang, 2022) and can steer the course of technological development toward a more hopeful future. The finding that students’ orientation toward designing and using AI for social good could shape their intention to learn AI has been consistently reported in K-12 contexts (Chiu et al., 2022; Lin et al., 2021), and this study extends it to university students. AI educators and designers may need to pay attention to helping students create socially good AI products (Casal-Otero et al., 2023).

Conclusions and Limitations

This study validated a psychometrically sound instrument that could be helpful in future research pertaining to AI education for university students. In addition, it revealed how epistemic, environmental, and psychological factors facilitate students’ intentions to learn AI and their actual AI learning. As AI has the potential to empower various fields, it is becoming increasingly common in the higher education sector, and a tool that can measure students’ attitudes to AI would be of significant value to university students, teachers, and administrators. As AI technologies may be applied differently across diverse subject matters, one possible direction for future research is to conduct subject-specific investigations among university students to examine their intentions to learn AI across disciplines. We conjecture that students with a deep understanding of their subject matter may develop a more contextualized understanding of how to use AI. In other words, students’ mastery of the subject matter could be an important covariate to measure.

Despite its strengths, this study also has some limitations. One key limitation is the adoption of convenience sampling. Hence, the findings may need to be further tested with more representative samples, perhaps through stratified sampling. In addition, this study could be further enriched by the collection of qualitative data to understand and further unearth other relevant factors that could shape university students’ intention and learning of AI. A third limitation is the cross-sectional nature of the data. Hence, the relationships among the variables may need to be further verified using longitudinal approaches. As students learn more about AI over time, the relationships among the variables could also evolve. Future research may also further test the effects of different pedagogical approaches (e.g., design-based learning) on these measured factors.

Footnotes

Acknowledgements

None.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This project was funded by the Beijing Social Science Foundation Project (22JYA005).

Ethics

This research has been approved by the Research Ethics Review Committee of the Faculty of Education at Beijing Normal University (IRB: BNU202203100017).