Abstract

Innovation capability has become a necessary requirement for qualified teachers in the context of informatization. However, the validity and objectivity of existing assessments are unclear. Therefore, this study selected nine researchers to evaluate the instructional innovation capabilities of 60 pre-service teachers from a Chinese normal university. The Many-Faceted Rasch Model (MFRM) was used to measure and analyze the rater severity, difficulty of evaluation criteria, and instructional innovation capability of pre-service teachers. Combined with qualitative analysis, the results showed that most participants had moderate instructional innovation capability, and only certain individuals demonstrated a high level. In addition, innovation related to teaching content and learning activities was easier than innovation related to instructional and evaluation strategies. These results suggest directions for fostering pre-service teachers’ instructional innovation capabilities.

Plain Language Summary

Purpose: To use the Many-Faceted Rasch Model (MFRM) to create a profile of pre-service teachers’ instructional innovation capability. Methods: This study selected nine researchers to evaluate the instructional innovation capabilities of 60 pre-service teachers from a Chinese normal university. The MFRM was used to measure and analyze the rater severity, difficulty of evaluation criteria, and instructional innovation capability of pre-service teachers. Conclusions: Most participants had moderate instructional innovation capability, and only certain individuals demonstrated a high level. In addition, innovation related to teaching content and learning activities was easier than innovation related to instructional and evaluation strategies. Implications: Differences among instructional plans, how strictly the plans were followed, and rater severity might have caused differences in the evaluation results.

Introduction

The information age has spurred an increasing demand for the ability to innovate (Jing et al., 2022; Tams & Dulipovici, 2022; Xu, 2022). Pre-service teachers, as practitioners of educational reform responsible for developing their students’ ability to innovate, should pay attention to the development of their own instructional innovation capability (Avsec & Savec, 2021). In recent years, organizations and institutions in many countries have instituted policies requiring this ability of pre-service teachers, particularly the ability to transform traditional teaching and set up a new type of classroom. For example, in 2010, the Australian Institute for Teaching and School Leadership Limited (AITSL) issued the Australian Professional Standards for Teachers, which requires pre-service teachers to possess (a) interdisciplinary knowledge and the ability to integrate it innovatively; (b) the ability to use modern information technology to change traditional teaching; and (c) the ability to diversify instructional activities to enhance students’ personalized learning (AITSL, 2010). The National Council for Accreditation in Teacher Education (NCATE) in the United States has stated that pre-service teachers need to adjust teaching content to the characteristics of students and evaluate students’ achievement based on data (NCATE, 2008). The Action Plan for Revitalizing Teacher Education (2018–2022), which was issued by the Ministry of Education in 2011, indicated that the instructional innovation capability of teacher-education students at normal universities should be improved (Ministry of Education of the People’s Republic of China, 2011).

Many scholars have investigated instructional innovation capability, which has provided impetus and expectations for the development of this capability among pre-service teachers (Hervás-Gómez, 2022; Umamah et al., 2021; Walczyk et al., 2010). Some researchers have indicated that instructional innovation capability must include the ability to use and integrate information technology in innovative ways (Artacho et al., 2020; Ottenbreit-Leftwich et al., 2015). Petek and Bedir (2018) pointed out that in a technology-supported classroom, the ability to innovate is reflected in critical thinking and creativity. Other researchers have proposed that the ability to innovate in terms of teaching patterns (e.g., online collaborative learning and flipped classrooms) and instructional strategies is necessary for pre-service teachers (Brodahl et al., 2011; H. J. Tang, 2019; van Garderen et al., 2021).

In summary, the goal of enhancing pre-service teachers’ instructional innovation capabilities has been promoted and supported by both educational policies and researchers. This has brought new challenges and development opportunities to teacher education in normal colleges and universities, and the fostering of pre-service teachers’ instructional innovation capabilities has become a subject worthy of attention (Chen et al., 2022; Zhang, 2022).

As an important way to enhance teaching innovation, evaluation can provide data and guidance for enhancing pre-service teachers’ capabilities (Kong, 2020; Yi et al., 2022). Researchers have focused on assessing the general instructional capability of pre-service teachers. For example, Gutiérrez-Anguiano and Chaparro Caso-López (2020) designed and developed a self-assessment scale to measure teachers’ instructional practice capabilities. Memduhoğlu and Keleş (2016) preferred to assess students’ critical thinking and problem-solving abilities. Ansyari (2018) assessed teachers’ competence in instructional plans according to the components of the curriculum. With the growth in importance of innovative teaching, scholars have begun to study the behaviors associated with such teaching. For example, Pollard et al. (2018) investigated the creative teaching experience of STEM educators, and the Canadian Innovative Teaching Group has discussed cases of innovative teaching (Reilly et al., 2011). However, these evaluations of teachers’ innovative teaching are mostly based on surveys or interviews, and the validity and objectivity of such self-reported data have yet to be confirmed (Nisbett & Wilson, 1977).

Considering that pre-service teachers do not have a long-term, stable teaching placement, what kinds of data or tools can effectively demonstrate their instructional practice capability? According to E. C. K. Cheng (2014), instructional plans reflect the process by which teachers understand how students learn, develop and integrate their own knowledge and abilities, so as to acquire the teaching skills required for the implementation of teaching. Moreover, studies have evaluated aspects of teachers’ abilities by analyzing instructional plans (Hughes, 2014; Lyublinskaya & Tournaki, 2014; Vekiri, 2014). Accordingly, we analyze instructional plans as a measure of instructional innovation capability. However, the evaluation of these instructional plans may be influenced by the subjectivity of the evaluator and the scientificity of the evaluation criteria. Some raters may be too harsh, others may be too lenient, and the criteria may be too complex or too easy (Hamp-Lyons & Davies, 2008; Johnson & Lim, 2009). As these factors may affect the objectivity and authenticity of the evaluation, an objective evaluation method is needed.

The Many-Faceted Rasch Model (MFRM) constitutes a new approach to evaluating pre-service teachers’ instructional innovation capability because it can measure and verify evaluation results for multiple aspects (Kaliski et al., 2012). According to Engelhard (1992) and Linacre (1989), MFRM has the following advantages over classical test theory. First, Rasch measurements locate each aspect on a common underlying linear scale. This not only allows for traditional data measurements but also accounts for the severity of ratings and the difficulty of the criteria. Second, the Rasch technique removes the influence of sampling variability from the measurements. That is, the sampling method and whether the sample is normally distributed do not affect Rasch assessment results. Finally, the Rasch model can reveal the consistency of raters and evaluation criteria with the model by calculating the fitness of each level to the measurement model (Basturk, 2008). Engelhard (1992) points out that the Rasch model can be applied to any experimental setting to help researchers compare certain attributes or characteristics of raters and criteria. At present, MFRM has been widely used to evaluate referee scoring in international competitions, students’ performance in school subjects, professional interviews, medical nursing, and other fields (Boone et al., 2016; Chapman et al., 2013; Fischl et al., 2021; Han, 2015). Hence, it is appropriate to apply MFRM to evaluate pre-service teachers’ ability to innovate in their teaching.

The purpose of this study was to use MFRM to create a profile of pre-service teachers’ instructional innovation capability. This overcomes the shortcomings of existing methods and yields a more objective and precise understanding of pre-service teachers’ instructional innovation capability. We expect this study to provide guidance for fostering this ability. The next section reviews the literature on instructional innovation capability, the assessment of instructional innovation capability, and the Many-Faceted Rasch Model.

Instructional Innovation Capability

Although instructional innovation capability has been widely discussed, a standard definition is lacking. For example, Rincon-Ussa et al. (2020) posited that innovative teachers acquire new roles and actions to conduct the teaching process, apply and transfer knowledge, and use new multimedia tools or resources to effectively innovate students’ learning. Y.-C. Cheng and Tsai (2012) argued that innovative teaching behaviors are reflected in evaluation methods, course materials, teaching ideas, teaching methods (strategies), learning resources, and class management. Similarly, Yao (2009) pointed out that, compared with general instructional capability, instructional innovation capability is reflected in the updating of teaching content, teaching methods, evaluation methods, and other elements of the teaching process. There is a consensus in the above studies that innovation in the various teaching elements can occur with information technology such as multimedia tools and digital resources. This is consistent with other scholars’ views that technology-infused teaching belongs to the category of innovative teaching (Artacho et al., 2020; Jang & Chen, 2010; Tondeur et al., 2012). However, other studies have found that the effect of using new technology to innovate in teaching is uncertain and may be influenced by the teaching environment, learner characteristics, and educator experience (Blasco-Arcas et al., 2013; Lantz, 2010; Moreno-Guerrero et al., 2021; Rana et al., 2016).

Based on existing research, instructional innovation capability in this study refers to the ability to design innovative teaching resources, tools, activities, and evaluation in order to create innovative and effective classes. Instructional innovation capability therefore involves not only introducing new methods or technologies, but also using them flexibly to transform and promote effective instruction. In summary, this review shows that instructional innovation capability is reflected in the innovation of various teaching elements. More importantly, it is the flexible integration and use of resources, tools, and technologies to produce innovative and effective classrooms that support students’ learning and development.

Assessment of Instructional Innovation Capability

Researchers have mainly assessed teachers’ instructional innovation using self-report techniques such as questionnaire surveys and interviews. For example, Fernandez-Cruz and Rodriguez-Legendre (2022) used a questionnaire to design a capability framework for university instructors to innovate in teaching. Other scholars have collected data on innovation capability in classroom teaching using questionnaire surveys (Mao & Zhang, 2018; Wang et al., 2010). Y.-C. Cheng and Tsai (2012) developed a questionnaire scale that included teaching content, instructional strategies, and instructional evaluation to evaluate teacher behaviors. In contrast, Fang et al. (2010) explored the impact of personal digital assistants on teachers’ instructional innovation capability through focus group interviews. They found that an increasing number of science and technology teachers innovated in teaching and consistently enhanced this ability by sharing professional knowledge and teaching experiences.

Although the analysis of self-reported data is the most common method for evaluating instructional innovation, the validity of such data has been questioned (Nisbett & Wilson, 1977). Other scholars have researched the reliability of self-reported data. Cohen and Lemish (2003) and Parslow et al. (2003) compared self-reported cell phone use with actual use, but their findings were inconsistent. Therefore, evaluating pre-service teachers’ instructional innovation capabilities only through their self-reported data may not yield accurate results, because differences in their understanding of the associated concepts and self-serving bias may affect the accuracy of the assessment. Obviously, more effective and objective methods of evaluating teachers’ instructional innovation capabilities should receive close attention.

The Many-Faceted Rasch Model

The basic two-faceted Rasch model is a relatively simple model that describes the relation between item difficulty and subject ability. After calibration, both items and subjects are placed on the same metric in logits, providing greater independence and objectivity (Linacre, 1989). The two-faceted Rasch model can be extended to the Many-Faceted Rasch Model (MFRM; Linacre, 1989). For example, if the raters of instructional innovation capability are included in the model, the two-faceted model becomes a three-faceted model (i.e., item, rater, and subject). In contrast to classical test theory, MFRM, based on item response theory, has some advantages in measurement, as shown in Table 1. Specifically, MFRM can not only provide the measured values of every facet (e.g., item difficulty, rater severity, and subject ability), but also analyze other indicators such as their fit with the model and consistency of the score (Myford & Wolfe, 2003). In addition, MFRM can detect whether raters are biased and can locate specific variables and biases, allowing evaluators to control these biases to achieve more accurate results (Downing, 2010; Weigle, 1998). According to Engelhard (1992), MFRM improves the objectivity and fairness of evaluation because raw scores may be inaccurate if raters of different severities grade students of the same ability. In addition, other factors related to a particular study may influence the results of the assessment, and MFRM as a multi-faceted assessment method allows for a more comprehensive analysis.

Similarities and Differences Between CTT and IRT.

MFRM to some extent compensates for certain deficiencies of traditional measurement methods. Therefore, it is widely used in performance evaluation. For example, He et al. (2013) established a Rasch model with four aspects—raters, items, students, and English-language writing topic—to explore the possible bias generated by different raters when evaluating essays on different topics. In another example, Tavakol and Pinner (2019) established four facets (students, examiners, fields, and stations) using MFRM to perform a more complex analysis of the quality of structured clinical examinations. Currently, MFRM is considered a more effective assessment method and is used in structured interviews, language-proficiency assessment and evaluation-scale development and verification, and for other purposes (Aryadoust et al., 2021; Govindasamy et al., 2019; McLaughlin et al., 2017; Young, 2019).

Methods

Procedure

MFRM, based on item response theory, was used to evaluate and analyze the instructional innovation capability of pre-service teachers in China. The study was conducted in four stages. In the first stage, 60 pre-service teachers from a normal university were invited to write instructional plans for information technology subjects in combination with innovative teaching ideas. After completion of the instructional plans, the second stage of pre-evaluation and training was conducted. Nine professionals with instructional design experience were invited to be raters at this stage. Before the formal evaluation, pre-evaluation, training, and discussions were conducted to ensure that the nine raters accurately understood the evaluation criteria. In the third stage, all raters independently evaluated the pre-service teachers’ instructional plans, providing both scores and qualitative descriptions. In the final stage, the collected scoring data were analyzed using FACETS software and the pre-service teachers’ instructional plans were qualitatively analyzed.

Participants

The study participants comprised 60 pre-service teachers majoring in educational technology at a normal university in Beijing, China. They had undergone systematic learning in three areas: teaching theory, instructional techniques, and micro-simulations and short educational apprenticeships. They did not have long-term, stable teaching placements, but their instructional plans could reflect the teaching skills needed to innovate in teaching. Therefore, the instructional plans completed by pre-service teachers were used to evaluate their instructional innovation capability. They selected a lesson from an information-technology textbook for primary and secondary schools published by various publishing houses in China as the teaching content on which to base their teaching plan. This plan generally included basic information about the topic, learning objectives, learning needs, important and difficult points of learning, instructional procedure, and possible instructional courseware. They tried their best to integrate innovative content, strategies, and evaluation methods based on their learning needs. Nine researchers in the field of instructional design were selected to use evaluation criteria to anonymously rate these pre-service teachers’ instructional innovation capabilities.

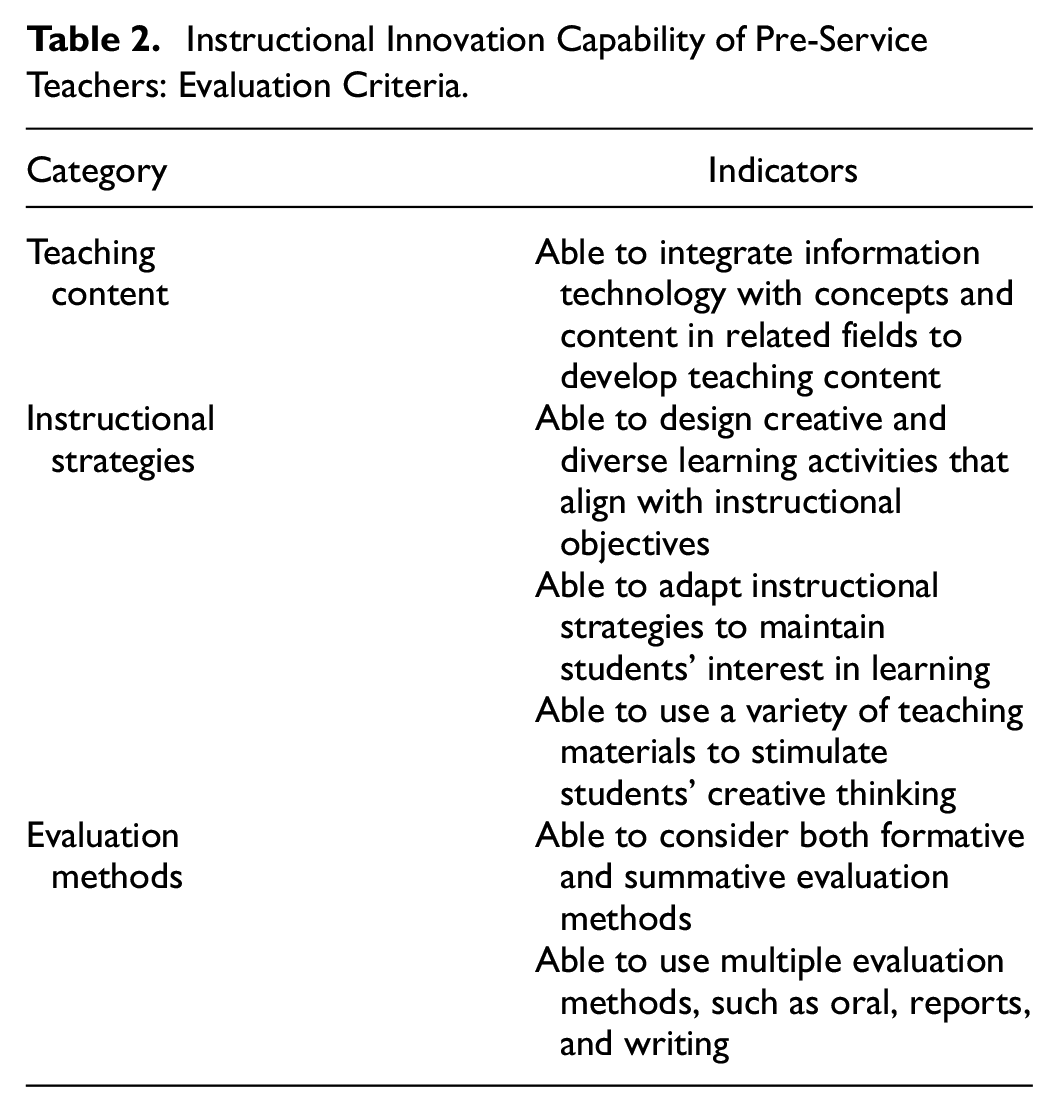

Evaluation Criteria

The scale used in this study for evaluating instructional innovation capability was adapted from the scale designed by Y.-C. Cheng and Tsai (2012). As shown in Table 2, the scale examines three aspects of instructional innovation capability: teaching content, instructional strategies, and evaluation methods. Innovation with regard to teaching content requires teachers to integrate information technology as well as concepts or content from related fields, for example by using interdisciplinary knowledge and technology to complete teaching. In terms of instructional strategies, indicators of innovation include teachers’ ability to design creative and diverse learning activities that align with instructional objectives. Additionally, instructional strategies should be adjusted to maintain students’ interest in learning and creative thinking. With regard to evaluation methods, indicators of innovation include the use of both formative and summative evaluations, as well as multiple assessment methods. These criteria are consistent with the aforementioned aspects of innovative teaching. The cumulative explanatory variance of the scale was 70.953%, and Cronbach’s α was .810. Raters scored the instructional plans on a five-point Likert scale from strongly agree (five points) to strongly disagree (one point).

Instructional Innovation Capability of Pre-Service Teachers: Evaluation Criteria.

Rater Training

Before raters scored the instructional plans, the research team first explained the purpose of the study, the evaluation criteria, and the importance of scoring according to the indicators. For training purposes, raters then pre-evaluated three randomly selected instructional plans according to the scoring criteria. Following this, indicators with widely varied scoring were discussed, along with aspects that the raters did not understand well. Finally, all the raters independently evaluated the instructional plans.

Data Analysis

FACETS (Version 3.83.6) software was used to conduct MFRM and perform data analysis. FACETS software is commonly used to analyze MFRM, and since the rater, item, and instructional plan constitute three separate facets, it is suitable for this study (Linacre, 2010). Three facets were established: rater, item, and a pre-service teacher’s instructional innovation capability. Specifically, the nine raters were coded as Raters 1 to 9; the 60 pre-service teachers’ instructional plans were coded from ID 1 to ID 60; and the six evaluation indicators were coded as Item 1 to Item 6.

The three-facet model can be described as follows:

$$\ln\left(\frac{P_{nijk}}{P_{nij(k-1)}}\right) = B_n - D_i - C_j - F_k$$

where:

$P_{nijk}$ is the probability that subject n is rated k by rater j on item i

$P_{nij(k-1)}$ is the probability that subject n is rated k − 1 by rater j on item i

$B_n$ is the measure value of subject n

$D_i$ is the difficulty of item i

$C_j$ is the severity of rater j

$F_k$ is the difficulty of the step up from rating category k − 1 to rating category k, with k = 1, …, M
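As a minimal sketch of how such a model turns facet measures into rating probabilities, the Python function below assumes the usual rating-scale form, ln(P_nijk / P_nij(k−1)) = B_n − D_i − C_j − F_k, and accumulates these adjacent-category logits into one probability per rating category. The function name and every numeric value (including the four step difficulties for a five-point scale) are hypothetical and chosen only for illustration; they are not estimates from this study.

```python
import math

def category_probabilities(b_n, d_i, c_j, thresholds):
    """Return the probability of each rating category under a rating-scale MFRM.

    thresholds holds the step difficulties F_1..F_M (hypothetical values here).
    The result has M + 1 entries, from the lowest to the highest category.
    """
    # Running sums of (B_n - D_i - C_j - F_k) give the log-numerator of each category;
    # the lowest category is the reference and has log-numerator 0.
    log_numerators = [0.0]
    for f_k in thresholds:
        log_numerators.append(log_numerators[-1] + (b_n - d_i - c_j - f_k))
    # Subtract the maximum before exponentiating for numerical stability.
    peak = max(log_numerators)
    weights = [math.exp(value - peak) for value in log_numerators]
    total = sum(weights)
    return [w / total for w in weights]

# Illustrative values only: an able subject, an easy item, a fairly severe rater.
probs = category_probabilities(b_n=0.5, d_i=-1.0, c_j=0.7,
                               thresholds=[-1.5, -0.5, 0.5, 1.5])
for score, p in enumerate(probs, start=1):
    print(f"score {score}: {p:.3f}")
```

Raising the subject measure or lowering rater severity shifts probability toward the higher categories, which is the behavior the model equation describes.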

Infit MS and Outfit MS were used as metrics to evaluate the model fit. According to Linacre (2002), the closer the fit is to 1, the more consistent it is with the model, and a range between 0.5 and 1.5 is acceptable. In this study, Infit MS and Outfit MS were between 0.6 and 1.3, indicating that the research data were suitable for MFRM analysis. If the value had been greater than 1.3, it would have meant that there were many disturbances and instabilities in the data, whereas if it were less than 0.6, the data might have included dependent samples and so were insufficiently independent. The reliability calculated by the model can also be used to represent the stability of the data, such that the closer the reliability is to 1, the more stable the data are.
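To make the two fit statistics concrete, the following sketch computes them in the standard way: Outfit MS as the unweighted mean of squared standardized residuals, and Infit MS as the sum of squared residuals divided by the sum of model variances. The observed ratings, model-expected ratings, and variances below are invented for illustration, and the helper names are hypothetical; in practice FACETS reports these statistics directly.

```python
def fit_mean_squares(observed, expected, variance):
    """Return (infit_ms, outfit_ms) for one element, e.g. one rater or one item."""
    squared_residuals = [(x - e) ** 2 for x, e in zip(observed, expected)]
    # Outfit: unweighted mean of squared standardized residuals (sensitive to outliers).
    outfit = sum(r / w for r, w in zip(squared_residuals, variance)) / len(observed)
    # Infit: information-weighted, i.e. total squared residual over total model variance.
    infit = sum(squared_residuals) / sum(variance)
    return infit, outfit

def within_range(value, low=0.5, high=1.5):
    """Check a mean square against the acceptable range cited from Linacre (2002)."""
    return low <= value <= high

# Invented data: four ratings given by one rater, with model expectations and variances.
observed = [4, 3, 5, 2]
expected = [3.5, 3.5, 4.0, 2.5]
variance = [0.5, 1.0, 1.0, 0.5]

infit_ms, outfit_ms = fit_mean_squares(observed, expected, variance)
print(infit_ms, outfit_ms, within_range(infit_ms), within_range(outfit_ms))
```

Values near 1 indicate that the observed ratings vary about as much as the model predicts; values well above 1 indicate unexpected noise, and values well below 1 indicate ratings that are more predictable than expected.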

Results

Model Estimation and Fit

This study used MFRM to analyze raters, items, and the instructional innovation capability of pre-service teachers. By considering the difficulty of the evaluation criteria and the severity of raters, differences in the instructional innovation capability of pre-service teachers were comprehensively investigated. Figure 1 shows the model-fitting results for each facet. According to the average parameters, the severity of raters was less than the difficulty of items and instructional innovation capability, indicating that the raters were relatively lenient in scoring. In terms of fit, both Infit and Outfit were close to 1, indicating a good fit for MFRM analysis. Meanwhile, the reliability of the three facets was greater than .8, and the p-value was less than .001.

Model estimation and fit for each facet.

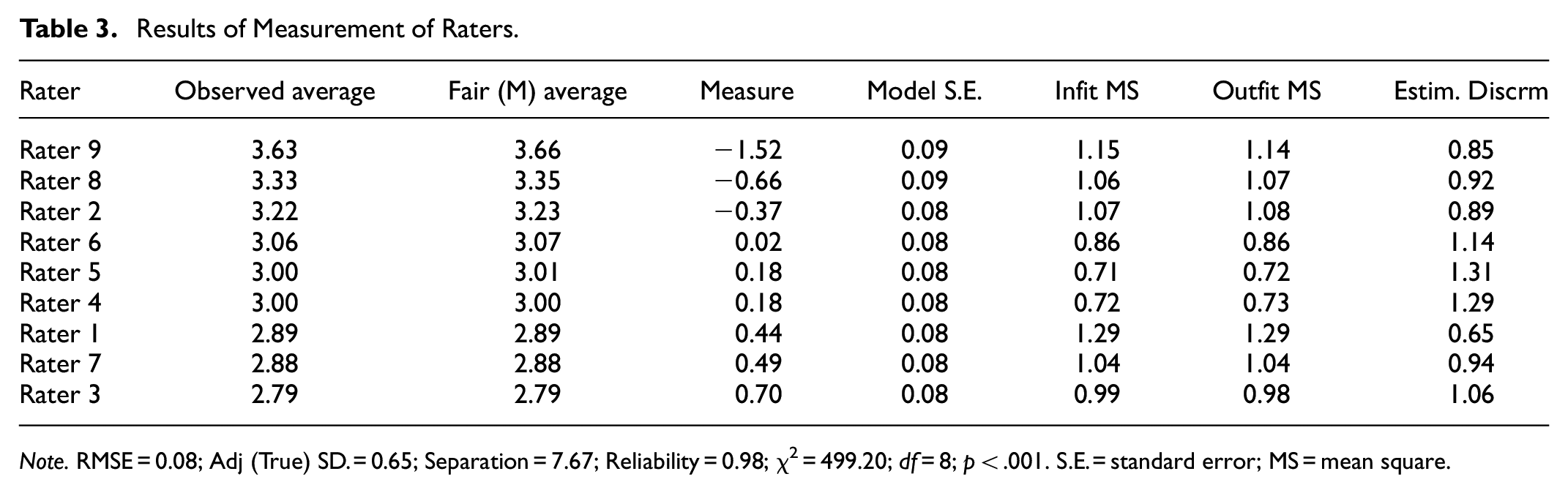

Analysis of Rater Severity

Table 3 reports the measures of rater severity, including the mean, logit measure, and degree of fit and separation. First, the Infit and Outfit of the nine raters are within the acceptable range. Second, the results show that the severity was appropriate for most of the raters, among whom Rater 9 was the most lenient (−1.52 logits) and Rater 3 the strictest (0.70 logits). FACETS software also provides the separation, chi-square test result, and other data. The separation between the raters was 7.67, which exceeded the acceptable minimum of 2.0. This means that rater severity can be divided into seven levels, indicating the independence of raters. Chi-square test results (χ2 = 499.20; df = 8; p < .001) also showed significant differences among the raters.
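To give a sense of scale for the gap between the strictest and the most lenient rater, the worked arithmetic below assumes the usual MFRM form in which rater severity is subtracted in the adjacent-category log-odds, so that for the same pre-service teacher and item the difference in log-odds between two raters equals their difference in severity. It is an illustrative reading of the reported measures, not an additional result.

$$
C_3 - C_9 = 0.70 - (-1.52) = 2.22 \text{ logits}, \qquad e^{2.22} \approx 9.2
$$

Under that assumption, the odds of receiving the higher of two adjacent rating categories would be roughly nine times greater from Rater 9 than from Rater 3 for the same plan and criterion.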

Results of Measurement of Raters.

Note. RMSE = 0.08; Adj (True) SD = 0.65; Separation = 7.67; Reliability = 0.98; χ2 = 499.20; df = 8; p < .001. S.E. = standard error; MS = mean square.

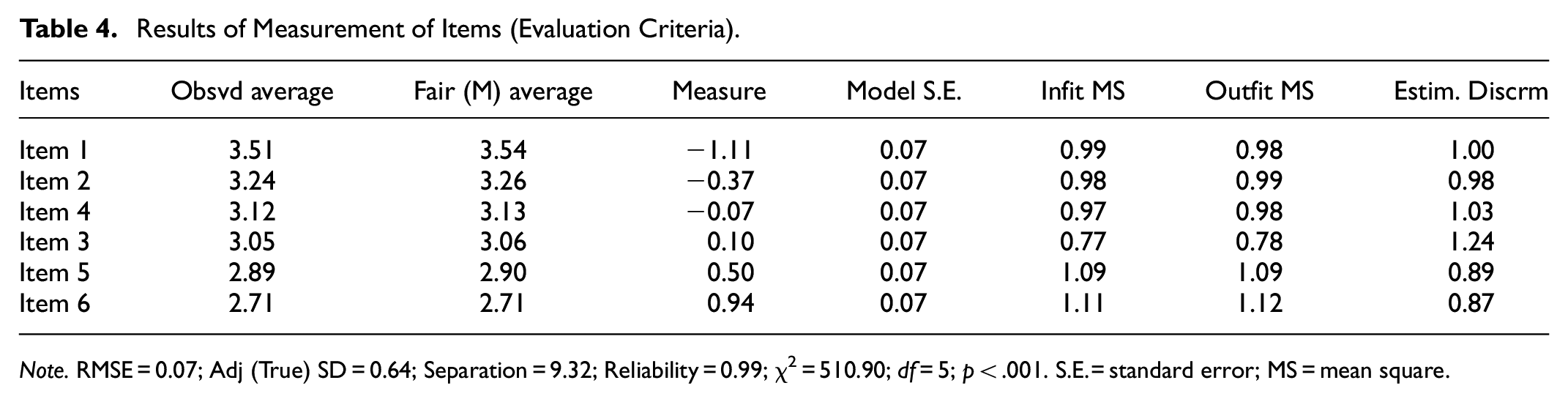

Analysis of the Difficulty of the Evaluation Criteria

Table 4 reports the measurement of the difficulty of items (evaluation criteria) where the higher the value (Measure), the more difficult the criterion is to achieve. Items 1 (Able to integrate information technology with concepts and content in related fields to develop teaching content) and 2 (Able to design creative and diverse learning activities that align with instructional objectives) were the least difficult to achieve. Items 5 (Able to consider both formative and summative evaluation) and 6 (Able to use multiple evaluation methods, such as oral, reports, and writing) were more difficult. This shows that most participating pre-service teachers could integrate information technology and related knowledge to develop innovative teaching content and learning activities that aligned with instructional objectives. However, using diverse and innovative assessment methods was difficult for them. The results for separation (9.32) and of chi-square tests show that there were significant differences among the evaluation criteria.

Results of Measurement of Items (Evaluation Criteria).

Note. RMSE = 0.07; Adj (True) SD = 0.64; Separation = 9.32; Reliability = 0.99; χ2 = 510.90; df = 5; p < .001. S.E. = standard error; MS = mean square.

Pre-Service Teachers’ Instructional Innovation Capabilities

According to the analysis of rater severity and the difficulty of the items presented above, the quality of the raters selected for this study was acceptable and the evaluation criteria were appropriate. Therefore, their application in the evaluation of instructional plans is effective. Table 5 reports the results for the 10 pre-service teachers who had the highest scores for instructional innovation. Among them, ID5 and ID23 received the highest scores, indicating that these two pre-service teachers demonstrated a high level of instructional innovation capability. The scores for all 10 were above 0.5 logits, indicating an above-average capability to innovate. The results for separation and of chi-square tests revealed significant differences in pre-service teachers’ instructional innovation capabilities.

Results for the 10 Highest-Scoring Instructional Plans.

Note. RMSE = 0.22; Adj (True) SD = 0.63; Separation = 2.90; Reliability = 0.89; χ2 = 549.70; df = 59; p < .001. S.E. = standard error; MS = mean square.
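The separation and reliability values reported in the table notes are linked by a standard Rasch relationship, reliability = G² / (1 + G²), where G is the separation index. The short sketch below applies that formula to the three reported separations and compares the result with the reported reliabilities; the function and variable names are hypothetical.

```python
def reliability_from_separation(separation):
    """Convert a Rasch separation index G into separation reliability G^2 / (1 + G^2)."""
    g_squared = separation ** 2
    return g_squared / (1.0 + g_squared)

# (separation, reliability) pairs as reported in the table notes of this study.
reported = {
    "raters": (7.67, 0.98),
    "items": (9.32, 0.99),
    "pre-service teachers": (2.90, 0.89),
}

computed = {facet: reliability_from_separation(sep) for facet, (sep, _) in reported.items()}
for facet, (sep, rel) in reported.items():
    print(f"{facet}: separation {sep}, computed {computed[facet]:.2f}, reported {rel}")
```

All three computed values round to the reliabilities given in the notes, which suggests that the reported statistics are internally consistent.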

In addition to the quantitative analysis of instructional innovation capability, this study also required raters to write qualitative descriptions of instructional plans according to the evaluation criteria, including highlights and shortcomings. The researchers identified the highlights and innovations of the instructional plans in the high group by comparing the qualitative descriptions of the high and low groups. The design highlights show that pre-service teachers with strong instructional innovation capability could integrate knowledge from various disciplines. They also actively used information-based teaching tools and strategies to design interesting teaching activities, paid more attention to the process, and applied more diverse teaching evaluations. The instructional plans of pre-service teachers in the low group lacked such highlights.

Visualization of Measurement Results

Figure 2 presents a vertical scale of raters, items, and pre-service teachers’ instructional innovation capability, showing their distribution. The first column shows the logit measure values. The second column shows the severity of the raters, and it can be seen that the raters were roughly evenly distributed between 1 and −1 logits. Only Rater 9 fell below −1 logit, indicating that this rater was relatively lenient. The third column is the logit measure value of instructional innovation capability. The instructional innovation capability of these pre-service teachers is relatively scattered, although that of many of them is near 0 logits. This indicates certain individual differences in pre-service teachers’ instructional innovation capabilities. The fourth column is the logit measure value of items. The difficulty level of the six items was scattered along the measurement line. Items 3 and 4 are closest to 0 logits; Item 6, which is close to 1 logit, is the most difficult; Item 1, which is below −1 logit, is the least difficult. Overall, the measures for the three facets lie between −2 and 2 logits, and most are scattered around 0 logits. In addition, according to the scores for instructional innovation capability, most pre-service teachers exhibited a moderate level of innovation capability. Only two pre-service teachers demonstrated outstanding capability and five demonstrated a relatively weak capability to innovate.

Vertical rulers.

Figure 3 presents the probability curves of the data, which show the thresholds at which a participating pre-service teacher becomes likely to achieve a higher score. Note that as the level of instructional innovation capability on the logit scale increases, the likelihood of the participant attaining the next highest score also increases, thus forming a series of peaks (Schaefer, 2008).

Probability curves for participating pre-service teachers.

Discussion

Discussion of Results

This study explored the use of MFRM as an objective evaluation method to determine the profile of pre-service teachers with regard to their instructional innovation capability. We found the following: First, MFRM is an effective and innovative method of evaluating the instructional innovation capability of pre-service teachers. Second, using MFRM, we comprehensively and objectively analyzed the level of pre-service teachers’ instructional innovation. Finally, the empirical findings of this study provide guidance on how to foster pre-service teachers’ instructional innovation capability. These findings were confirmed as follows.

First, as a method for evaluating pre-service teachers’ instructional innovation capability, MFRM is objective, effective, and innovative. MFRM can evaluate at least three aspects of performance (level of innovation in teaching, rater severity, and difficulty of evaluation criteria). It can make assessment more objective and accurate by providing information such as the degree of fit, separation, and reliability (Linacre & Wright, 2002; Magdalena et al., 2013). The fitness and reliability of all levels met the requirements outlined by Linacre and Wright (2002), which means that the data in this study were effectively analyzed using MFRM. Specifically, the severity of the nine raters varied, and the instructional innovation capability of all pre-service teachers was scored differently, allowing for a deeper analysis. Furthermore, using MFRM to evaluate teachers’ instructional innovation capabilities is innovative. As mentioned above, instructional innovation capability was generally measured via a questionnaire survey (Fernandez-Cruz & Rodriguez-Legendre, 2022; Mao & Zhang, 2018), which might be affected by factors such as the severity of the raters and could lack objectivity. A literature search found that MFRM has been applied in many fields and has proven to be an operational and effective method. Therefore, this study adapted MFRM to evaluate the instructional innovation capability of pre-service teachers, which has produced meaningful results.

Second, by using MFRM, our analysis of the instructional innovation capability of pre-service teachers participating in this study was more objective and comprehensive. The results show differences in the level and distribution of instructional innovation capabilities. First, most pre-service teachers were able to develop teaching content based on technology and related knowledge, and to design activities that aligned with specific teaching objectives. As scholars have noted, teaching is not just about transferring knowledge but also about understanding teaching knowledge and the subject content and integrating relevant content and technology (Jang & Chen, 2010; Nilsson, 2008). For instance, the instructional plan of pre-service teacher ID6, which was evaluated as exhibiting a moderate level of instructional innovation capability, addressed “The Technology and Application of Big Data” (the topic of a lesson in a Chinese information-technology textbook). The plan covered diversified content on population migration, the calculation of birth dates, and shopping. Another example comes from the instructional plan of pre-service teacher ID2, which explains the Euclidean algorithm. He incorporated independent learning and cooperative inquiry activities to guide student engagement. As most pre-service teachers have experienced a long period of learning pedagogical content knowledge and teaching theory (Hao, 2016; Jeschke et al., 2021), it was relatively easy for them to design teaching content and learning objectives. However, the results showed that many pre-service teachers experienced difficulties in selecting flexible instructional strategies and evaluation techniques; only a few pre-service teachers could do so.
These innovative teachers not only combine information technology with subject knowledge but also adopt diversified instructional strategies and evaluation methods that meet the new teaching requirements of the information age (Romero & Proulx, 2016; Tunjera, 2019). For example, ID5 and ID23, who obtained high scores in instructional innovation, chose a variety of student-centered teaching strategies and evaluation methods, including game-based learning, blended learning, mind mapping, and scales assessing the learning process. Studies have shown that gamified learning, classroom games, and blended learning are innovations that can improve students’ learning and support their personalized evaluation (Hasan et al., 2017; Radley et al., 2016; Wu et al., 2023). Moreover, many evaluation methods, such as performance evaluation and mind mapping, are student-centered and can be used to monitor students’ learning processes and performance. Thus, they can be considered innovative teaching strategies (Guerra-Menendez et al., 2020; Kruit et al., 2020). This leads to the conclusion that innovative teaching and evaluation strategies are worthy of attention and offer a starting point for fostering pre-service teachers’ instructional innovation capabilities.

Third, the empirical findings of this study permit a deep analysis and reflection on the instructional innovation capability of pre-service teachers. The results show that this ability did not significantly improve during their teacher-education program, especially with regard to their choice of instructional strategies and evaluation methods, suggesting that current teacher training and practice cannot enable pre-service teachers to fully master innovative skills despite their need for strengthened innovative thinking in the context of information education (Hofer & Grandgenett, 2012; Wan et al., 2021). There is a consensus that teacher education programs in normal universities should be practice-oriented and provide opportunities for future teachers to accumulate educational practice and teaching experience (Darling-Hammond, 2017; Janssen et al., 2015; Sang, 2011). However, pre-service teachers still encounter many problems during their internships, which ultimately leave them unable to combine theory and practice or to gain more experience in innovating in their teaching (Al-Amin et al., 2021). For example, studies have indicated that pre-service teachers do not have opportunities to apply new teaching methods, because their instructors always use traditional teaching strategies (Boz & Boz, 2006; Rhoads et al., 2011). Moreover, they seldom receive guidance and feedback from instructors (Richert, 2002). S. Tang (2003) also found that pre-service teachers rarely had in-depth communication with their tutors during their internships, only exchanging information about teaching content and learning tasks. Therefore, it is of great importance that pre-service teachers learn from mentor teachers how to innovate in their teaching, so that they can apply the new teaching methods they have learned in real classrooms.

Another aspect to consider is that teacher education in normal universities is generally a standardized process that begins with theoretical learning and teaching simulations, followed by an internship (Liu & Zhang, 2013; Xiang & Xie, 2011). It is worth considering whether such a program structure with distinct stages is conducive to developing the instructional innovation capability of pre-service teachers. According to the literature, theoretical learning in the university and teaching practice in a real classroom are two major parts of pre-service teacher education, and these two aspects need to be integrated with each other (Fosnot, 1996; Samaras & Gismondi, 1998). Nevertheless, there has always been a disconnect between theory and practice in teacher education (Buchanan, 2017). Perhaps the current structure of teacher education does not provide adequate opportunities for pre-service teachers to learn about teaching methods and practice them in innovative ways. Teacher education could consider a more flexible arrangement of the sequence and frequency of university learning and internships in classrooms (Qin & Zhai, 2022; Zheng, 2006). This could afford pre-service teachers the opportunity to observe and learn about various innovative instructional activities and apply them in practice during successive internships. This will help them test and reflect on the instructional strategies they have learned (Korthagen et al., 2006), so as to accumulate teaching experience, find innovative and effective teaching methods, and improve their instructional innovation capabilities.

Implications, Limitations, and Future Studies

This study is both innovative and significant. First, it demonstrates a more authentic and objective method to evaluate pre-service teachers’ instructional innovation capabilities by using MFRM based on item response theory, which integrates the subject, rater, and evaluation criteria. Second, this study provides a way of evaluating the individual performance of raters by using MFRM to select reliable raters in order to ensure the fairness of the assessment. Third, this study indicates that pre-service teachers are more adept at innovations in teaching content and learning activities than at innovations in instructional and evaluation strategies, providing a scientific basis for improving the curricula and teaching practice of pre-service teachers. Finally, this study identified excellent teaching innovators and teaching conformists by rating each pre-service teacher’s instructional innovation capability, which provides a reference for identifying pre-service teachers and training them individually. In conclusion, this study not only demonstrates an innovation in methods for evaluating pre-service teachers’ instructional innovation capabilities, but also provides guidance for fostering those capabilities.

Our study has some limitations that should be addressed in the future. First, the 60 pre-service teachers who participated in this study chose to prepare instructional plans for different types of courses (theoretical and applied learning), which might have caused differences in the evaluation results. In the future, course type could be added to MFRM as another facet when evaluating teachers’ capability to innovate. Second, an instructional plan captures the teacher’s intention to innovate, but the teacher may not always follow the plan exactly. One possibility for future research is to observe a certain number of pre-service teachers in their classrooms to see whether they carry out the plan or change it, and why. Third, the severity of a rater may vary by the type of instructional plan and evaluation criteria used. We expect to conduct a study on this interaction in the future. Moreover, we expect to identify reliable raters and form a pool of experts who can objectively and effectively conduct multiple rater analyses.

Conclusion

The use of the MFRM in this study allowed us to consider the influence of rater severity and difficulty of evaluation criteria on the results, leading to an objective evaluation of pre-service teachers’ instructional innovation capabilities. First, the study demonstrates the effectiveness of MFRM in evaluating instructional innovation capability, which can guide those responsible for designing evaluations to select raters and evaluation criteria that lead to increased fairness and accuracy in the evaluation process. Second, through MFRM analysis, the real abilities of the participating pre-service teachers were determined. The results showed that the instructional innovation capability of participating pre-service teachers was mostly average, and only a few participants demonstrated outstanding capability. Finally, we found that it is difficult for pre-service teachers to innovate with regard to the choices of instructional and evaluation strategies, which provides direction for fostering their instructional innovation capabilities.

Footnotes

Acknowledgements

The authors would like to thank those pre-service teachers and raters who provided support for this article.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the 2023 Open Research Project “Artificial Intelligence in Education” in the College of Education in Capital Normal University.

Data Availability Statement

The datasets used and/or analyzed during the current study are not publicly available. They may be obtained from the corresponding author on reasonable request.