Abstract

Online assessment was newly introduced in many developing countries during the COVID-19 pandemic, including Malaysia, Lithuania, and Spain. The current study used a phenomenological design to probe insights about fairness in online assessment. The interview data were interpreted from the perspective of social psychology theory, which emphasizes distributional, procedural, and interactional justice. Participants were selected through purposive sampling. Regarding distributional justice, students perceived grading as fair when they experienced multiple assessments, clear rubrics, and effective feedback from lecturers, and they experienced injustice when assessment expectations were unclear. Regarding procedural justice, students’ perceptions of unfairness were related to limited time, technical problems, and unclear assessment expectations. Interactional justice was related to privacy in communicating grades and weak interaction during the assessment. These results emphasize the importance of understanding the elements that influence students’ views of fairness and methods to improve online assessment in a university setting.

Introduction

Assessment is an important part of educational practice. Assessment practices are the foundation of students’ judgments of what is important to learn and where they should focus their learning efforts (Harlen & Crick, 2003). Students value assessment and see it as an indicator of their progress, certifying the skills acquired and opening the gates for employment. It also indicates the extent to which students have retained the required knowledge, which may guide teachers’ plans for teaching in the future (Pereira et al., 2016). According to Bloxham et al. (2011), assessment “shapes the experiences of students and influences their behaviour more than the teaching they receive” (p. 3). As a result, it can potentially enhance or impede students’ success.

Many assessment-related criteria have been linked to fairness, including equitable, consistent, balanced, beneficial, and ethically possible (Valentine et al., 2022). Previous research on fairness in higher education assessment has investigated students’ views on fairness across different areas. These areas include assessment methods (Burger et al., 2017), grading (Nesbit & Burton, 2006), and test fairness (Bazvand & Rasooli, 2022; Buckley et al., 2021; Zlatkin-Troitschanskaia et al., 2019). Among these domains, the perceived fairness of assessment methods has been the focus of more studies, indicating the main emphasis of fairness research in higher education assessment (Rasooli et al., 2019). Fairness in online assessment in higher education is needed now more than ever, since its use was amplified during the COVID-19 pandemic. The challenge became more pronounced when online assessment appeared to be the only option, and educators scrambled to adapt the existing on-campus assessment to online delivery. The COVID-19 pandemic has intensified pre-existing social, financial, and mental problems (Son et al., 2020). The change to online assessment has contributed to high stress, anxiety, and low mood among students (Kecojevic et al., 2020). The negative impact was related to unfamiliar assessment types (Jones et al., 2021), lack of a conducive environment (Adedoyin & Soykan, 2020), increased workload (Fatoni et al., 2020), and lack of motivation (Sahu, 2020). Previous research has also shown that students remained silent and chose passivity in the face of unethical and undemocratic exams owing to power imbalances (Ahmadi, 2021). If students’ experiences remain elusive, online assessment may remain “sub optimal” (Laurillard, 2008).

The ongoing global pandemic has certainly affected assessment practices, which may never revert to the pre-COVID normality (Khan & Jawaid, 2020). This implies that new assessment approaches must be initiated, since face-to-face pedagogical practices may not be adequate during a tragic or pandemic situation. According to Fuller et al. (2020), TINA (there is no alternative) has paved the way for innovative assessments. It is pertinent to investigate fairness in assessment so that a smooth and successful transition can be designed (Rasooli et al., 2018). Tam (2022) recommended that more qualitative research be performed to understand assessment from the perspective of students.

Thus, the present study aimed to capture students’ lived experiences of online assessments during the COVID-19 pandemic within the university infrastructure when adjustments were made to the on-campus assessment format. This study adds to the current literature by providing empirical evidence for understanding students’ lived experiences during their online assessments in Malaysia, Lithuania, and Spain. Fairness in this study refers to summative assessment.

Investigating fairness in assessment in higher education will provide fresh perspectives or solutions for students as well as educators who were forced to rely only on online assessment in a tragic situation. When learners’ concerns are identified, educators can adapt their assessment methods effectively and ease the online assessment. Higher education institutions must plan attainable assessment practices, since empirical data related to online assessment are now emerging from higher education institutions (Slade et al., 2021). This study adds to the body of research in online assessment. The insights gained from this study can guide policymakers and educators to reduce fear and unfairness, eventually leading to higher retention rates in an online learning environment. The findings of this study have important implications for higher education institutions and can be extended to other countries around the globe.

The research questions for this study are:

What are the students’ experiences of fairness in online assessment during the early COVID-19 pandemic?

What are the students’ experiences of unfairness during the early COVID-19 pandemic?

Context of the Study

Since March 2020, Malaysia, Lithuania, and Spain have halted face-to-face classroom teaching, which has been substituted by online teaching and learning (OTL). University L (MRU) is an esteemed establishment situated in Vilnius, Lithuania, with a student community exceeding 7,500 individuals. The university attracts students from both within the country and internationally, thereby cultivating an inclusive and multicultural atmosphere conducive to learning. It provides an extensive array of academic programs at the undergraduate, graduate, and doctoral levels, covering diverse fields of study. University S holds a long-standing academic heritage as one of Spain’s oldest universities. It appeals to a wide range of students, both domestic and international, and has a student population of 14,099. University M (USM), situated in Penang, Malaysia, is a well-known public research university with around 30,000 students enrolled. It provides a wide variety of academic programs spanning multiple fields of study. The three universities engage actively in research projects and partnerships, collaborating to enrich the academic environment and create more opportunities for both students and faculty members.

In Malaysia, University M has defined three methods of evaluation: (a) 70% coursework and 30% final examination; (b) 80% coursework and 20% final examination; and (c) 100% coursework. All courses at University M must now be given and assessed online. In Lithuania, University L switched entirely to distance mode. Moodle has been the primary repository for teaching resources. BigBlueButton or MS Teams is used for video conferencing and seminars (though teachers can use other tools as well). All examinations (interim and final) are conducted remotely in line with the university guidelines for online assessment.

At University L, the assessment is divided into two parts: the cumulative mark (all marks received by a student during the semester for class activities, project work, progress tests, and so on) accounts for 60% of the final mark, and the examination mark accounts for 40% of the final mark for the subjects taken. In Spain (University S), lecturers were encouraged to use Moodle, a video conferencing programme, and BB Collaborate. The lecturers were required to submit weekly progress reports on each subject to the degree coordinator. University S determined that the final test for all subjects should not exceed 40% of the total grade. The remaining 60% of the grade was allocated among practical assignments, projects, and oral presentations.
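To make the weighting schemes described above concrete, the following is a minimal illustrative sketch of how a final mark would be computed under each split. The function name, the scheme labels, and the sample marks are hypothetical and are not taken from the universities’ regulations; University S is shown at its stated upper bound of 40% for the final test.

```python
# Illustrative only: names and sample marks are hypothetical.
# Weights are coursework percentages; the examination takes the remainder.
SCHEMES = {
    "University M (a)": 70,
    "University M (b)": 80,
    "University M (c)": 100,
    "University L": 60,
    "University S (exam at 40% cap)": 60,
}


def final_mark(coursework: float, exam: float, coursework_pct: int) -> float:
    """Combine coursework and examination marks (0-100 scale) by percentage weight."""
    if not 0 <= coursework_pct <= 100:
        raise ValueError("coursework_pct must be between 0 and 100")
    return (coursework * coursework_pct + exam * (100 - coursework_pct)) / 100


if __name__ == "__main__":
    # A hypothetical student with 80 for coursework and 70 in the examination.
    for label, pct in SCHEMES.items():
        print(f"{label}: {final_mark(80, 70, pct):.1f}")
```

Under these assumptions, the same pair of marks yields a different final mark at each institution, which is one reason the weighting itself can shape perceptions of distributional fairness.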

As educational institutions in Lithuania, Spain, and Malaysia faced the challenges of transitioning to online education, it became crucial to understand the fairness of online assessment in these diverse settings. Indeed, evidence from international studies has shown that changes in assessment practices during the COVID-19 pandemic have led to heightened levels of stress, anxiety, and low mood among students (Kecojevic et al., 2020). This can be attributed to the combination of stressors commonly associated with academic transitions (Macaskill, 2018) and unfamiliar assessment formats (Jones et al., 2021). By examining fairness in the specific contexts of Lithuania, Spain, and Malaysia, this study aims to provide valuable insights related to the strengths and challenges faced by students in each country during the online assessment, thereby contributing to the development of interventions and support systems to alleviate academic unfairness and promote student well-being. The findings can contribute to the development of best practices and recommendations for educational institutions and policymakers to enhance the fairness, validity, and reliability of online assessment methods in these specific contexts and beyond.

Theoretical Framework and Literature Review

The following section will discuss the theory that guides this study and the past studies related to assessment.

The Social Psychology Theory

The social psychology theory is a framework grounded in empirical evidence that outlines the fundamental principles, procedures, and interactions influencing how educators and students perceive fairness regarding the distribution of resources, such as grades, within educational settings (Chory, 2007; Rasooli et al., 2018). The theory holds that learners’ views of fairness are influenced by three aspects: distributive, procedural, and interactional justice. Distributive justice explains the idea of deservingness, entitlement, and deprivation (Kazemi, 2016). It refers to outcomes such as grades, praise, and attention. Procedural justice concerns transparency, voice, and consistency (Leventhal, 1980). Through procedural justice, learners can infer how other members treat and value them (Tyler & Lind, 1992). According to Greenberg and Cropanzano (1993), interactional justice comprises interpersonal and informational justice. Interactional justice relates to the volume and quality of information communicated and the quality of interactions. It outlines how decision-making and assessment principles are conveyed to affected people.

A few studies have also applied the distributive, procedural, and interactional justice framework. For example, Estaji and Zhaleh (2021) examined the viewpoints of 31 Iranian language teachers working in private language institutes. Their analysis revealed that while the teachers had different perspectives on classroom fairness, they emphasized the importance of distributive justice (equality, equity, and need), procedural justice (bias suppression, consistency, and voice), and interactional justice (exceptionally caring) in their understanding.

A study by Rasooli et al. (2019) investigated how university students perceive fairness and unfairness, and their emotional and behavioral reactions to these experiences. The results revealed that students’ understanding of fairness consisted of three principles: distributive, procedural, and interactional justice. They considered the distribution of outcomes, the procedures used for distribution, interpersonal relationships, and communication procedures when evaluating fairness. When describing fair incidents, students reported positive emotions like happiness, satisfaction, feeling valued, and hopefulness. On the other hand, they tended to experience negative emotions such as anger, upset, disappointment, and embarrassment in response to unfair incidents.

According to a systematic review of fairness in education and assessment literature by Rasooli et al. (2018), the majority of quantitative studies examining the social psychology theory of justice in classroom settings have primarily relied on the principles of distributive, procedural, and interactional justice derived from the workplace and legal environments. The authors further add that although there is some evidence supporting the prevalence of these principles in legal, organizational, and health contexts, theoretical studies have advocated for the empirical and qualitative exploration of fairness in instructional and assessment contexts employing these principles. Hence, there is a demand for further investigations in assessment employing qualitative methodologies to redefine social psychology theory. The combination of the three dimensions will shape how students perceive fairness or unfairness, which then influences their positive and negative emotional and behavioral responses.

Previous Studies Related to Online Assessment

This literature review section aims to investigate the current body of research and academic discussions regarding fairness in online assessment and studies related to online assessment during the COVID-19 pandemic.

Zlatkin-Troitschanskaia et al. (2019) presented findings from an assessment of 7,664 business and economics students from 46 universities across Germany. The assessment utilized a domain-specific higher education entry test and followed the internationally recognized validation standards outlined by AERA (2014). The study identified that the students had difficulty completing the test, taking into account gender and language-related influence factors in assessing their test performance. One of the challenges reported by the study is finding a suitable way to address the disadvantages experienced by various groups of students to guarantee fairness and ethical integrity when developing and administering tests.

Bazvand and Rasooli (2022) conducted a study exploring how postgraduate university students perceive fairness in summative assessments in Iran’s higher education context. Through analyzing qualitative responses, two themes represented students’ views on unfairness: “equity” and “interactional fairness.” Moreover, students provided suggestions to improve fairness in assessments, including the importance of balancing power and collective knowledge in assessment practices and creating an advocacy body to protect students’ rights for fair assessments. These findings emphasize the factors influencing students’ perception of unfairness and propose methods to enhance fair assessment practices within a university setting.

During the COVID-19 pandemic, attention and priority shifted to exploring in depth how assessment can be conducted effectively in the virtual environment. For example, Krou et al. (2021) conducted a meta-analysis of 79 publications and discovered that students are motivated and convinced that online assessments are meaningful, and cheating is less common. Buckley et al. (2021) found that students experienced stress because of time constraints, anxiety during open-book assessments, and technical problems during submission. A mixed-methods study by Slade et al. (2021) discovered that educators did not adjust the composition or relative weighting of their evaluation when they pivoted online during the COVID-19 pandemic. The most common technique was to convert existing on-campus evaluation to an online version. A more recent study by Slack and Priestley (2022) observed that students considered online assessment to demand more effort than on-campus assessment.

Further, Alavi et al. (2022) used a quasi-experimental design to compare student academic achievement in online and classroom assessments during the COVID-19 pandemic. The findings showed that the advantages of technology-enhanced assessment include the flexibility of online assessment, quick feedback, and fostering of self-regulated learning, while the drawbacks include technical issues, technology dependence, and technology literacy. The authors concluded that learners’ views related to online assessment vary, which needs to be addressed in future research.

Al Khalaf et al. (2022) implemented an online assessment with final-year dental students in the UAE. The study reported that students who had experienced online assessment were more satisfied than those with less experience. The main issues highlighted by the students were limited time, inability to backtrack on MCQ questions, and technical issues.

A cross-sectional study by Abd Elgalil et al. (2020) explored the perceptions of undergraduate pre-clinical medical students and reported that online exams created a sense of security. However, the students preferred campus-format assessment to online assessment. In addition, high cost, lack of ability to focus, and the fact that only knowledge-based examinations can be assessed online were some of the limitations highlighted by the students. Gamage et al. (2020) examined the security of online assessments and gave recommendations for practice.

The above-reviewed literature provided initial evidence mapping out students’ experiences and views about assessment before and during the COVID-19 pandemic in higher education institutions. Taken together, these initial findings support the need for more research into online assessment experience during the COVID-19 pandemic. Moreover, online assessment is known to be context-dependent, and there is still a lack of in-depth studies that address students’ attitudes, experiences, and views of online assessment during the COVID-19 pandemic.

There is a void in the literature concerning a deeper understanding of students’ underlying experiences related to fairness and online assessments during the COVID-19 pandemic. Recent studies have highlighted that more research is needed to understand technology-enhanced assessment (Ninković et al., 2021; Sayyed et al., 2022). Through implementing a phenomenological study, researchers can acquire a profound comprehension of the first-hand experiences of individuals engaged in online assessment during the COVID-19 pandemic. This qualitative methodology enables a comprehensive investigation of the participants’ viewpoints, interpretations, and significance assigned to online assessment, offering valuable insights into the intricacies of fairness in online assessment.

Methodology

Research Design

The current study employed an interpretivist and critical-realist philosophy (Findlay et al., 2006). A descriptive phenomenological technique was used to thoroughly explain what participants experienced in the online assessment. Phenomenology is well-suited for investigating individuals’ subjective experiences and viewpoints. It offers a framework to understand how people comprehend and attribute significance to their lived experiences, unveiling the intricacies and fundamental essences within those experiences. This research involved an in-depth case study of online assessment. It illustrated how students construct their lived experiences of online assessment and attribute meaning to their experiences in the context of Malaysian, Lithuanian, and Spanish universities. The social psychology framework was used, and inductive analysis was also employed to identify the emerging themes. Inductive analysis was used in this study to move from the concrete to the abstract, emphasizing non-linear processes that occurred in a natural environment (Lichtman, 2014).

Participants

The study recruited participants using purposeful sampling. This sampling strategy involves selecting individuals who can fulfil a specific purpose in relation to the research question and offer valuable insights into a phenomenon (Creswell & Creswell, 2017). The inclusion criterion was having experienced online assessment during the COVID-19 pandemic. Individuals who did not possess the language proficiency required for data collection and analysis were excluded. The participants were aged 21–27 years and were third-year students who had experienced both traditional campus assessment and online assessment. To enlist students, three researchers shared details about the study with their students. Once the participants were briefed about the purpose and design of the study and had their queries addressed, they provided verbal consent.

In qualitative research, the sample size is not determined using the statistical power calculations typically used in quantitative research. Instead, the sample size is determined by considering the principles of data saturation and theoretical saturation. Data saturation was reached at six participants in each country. Therefore, the total number of participants in this study was 18.

Participants’ names and identities were withheld and replaced with pseudonyms to preserve their privacy and encourage them to speak freely and openly. The pseudonyms were CP1, CP2, CP3, … The participants were informed of the purpose of the study before the data collection. They were made aware that they could discontinue participation in the study at any time and without providing a reason. To gather participants’ experiences while they were still fresh in their minds, the interviews took place in the week following their online assessment.

Trustworthiness of Qualitative Data

The present study was guided by the four criteria for qualitative research proposed by Guba and Lincoln (1994): confirmability, credibility, dependability, and transferability. Member checking was used to establish credibility (Creswell, 2009): the transcripts of the interviews were returned to the participants so that the information provided during the interview could be compared with the processed data. Transferability was addressed through a description of the environment and participants. Investigator triangulation was applied by having three lecturers code the themes; the coders reached a 90% consensus. As a result, the conclusions are trustworthy, compelling, and accurately reflect the participants’ lived experiences.

Data Collection

A semi-structured interview protocol was developed based on the Social Psychology Theory and on previous studies related to assessment. Ono (2020) contends that an in-depth qualitative approach is preferable to statistical studies for examining online classroom experiences, such as online assessments. Semi-structured interviews were conducted with the participants during the COVID-19 pandemic via Webex or MS Teams.

The interviews lasted 30 to 60 minutes for each participant. The semi-structured nature of the discussions allowed the interviewer and participants to explore and discuss issues related to online assessment. As Gilbert et al. (2007) highlight, interviews might provide richer insights into the phenomenon being investigated and answers to “why and how questions” (p. 571). The interviews took place in the students’ native languages of Malay, Lithuanian, and Spanish and were transcribed verbatim by researchers in this study who are native speakers of each language.

The participants were prompted to specify or detail events in which they had personally encountered the phenomenon during the online assessment. The purpose was to give students a voice and shed light on specific ideas and events. They replied by providing first-person accounts detailing their resources and involvement in online assessment during the COVID-19 pandemic. When additional clarification was required from the educators, follow-up inquiries were sent to them.

Data Analysis

Inductive reasoning, as proposed by Creswell and Poth (2016), was used to thematically analyze the data in accordance with the method advised by Braun and Clarke (2006). The six-step thematic analysis process includes the following steps: (a) familiarizing oneself with the data and transcribing it all; (b) generating codes; (c) classifying codes into themes; (d) reviewing and refining themes; (e) succinctly defining and naming themes; and (f) producing a report from the emerging themes that is a descriptive, analytical, and argumentative narrative. To clarify important ideas, participants’ direct quotes were used.

To become familiar with the data, the most common words used were identified. Participants’ keywords and their ideas were then considered to generate initial codes. For example, “Most of the subjects distributed the percentage among the assignments” (S2), “accumulation of assessed assignment has an important share…” (L2) and “multiple assessments because of the questions are flexible and versatile” (M6). These keywords were then converted to the code of “multiple assessments.” The initial codes were examined repeatedly and categorized according to similar characteristics to search for themes. The themes were reviewed to check whether the initial codes belonged to the classification category. The classification categories were defined and given names indicating their unique characteristics. The code “multiple assessments” was further categorized under the distributional justice theme.
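The bookkeeping behind this excerpt-to-code-to-theme step can be sketched as follows. This is only an illustration of how coded excerpts might be tallied, assuming the code assignments themselves are made manually by the researchers; the variable names are hypothetical, and the three excerpts are the ones quoted above.

```python
from collections import Counter

# Excerpts quoted in the text, keyed by participant label.
excerpts = {
    "S2": "Most of the subjects distributed the percentage among the assignments",
    "L2": "accumulation of assessed assignment has an important share",
    "M6": "multiple assessments because of the questions are flexible and versatile",
}

# Code assigned to each excerpt. These are human judgments, not computed values.
excerpt_codes = {
    "S2": "multiple assessments",
    "L2": "multiple assessments",
    "M6": "multiple assessments",
}

# Mapping from code to theme, following the categorization described above.
code_to_theme = {"multiple assessments": "distributional justice"}

# Frequency of each code, and of each theme once codes are grouped.
code_counts = Counter(excerpt_codes.values())
theme_counts = Counter(code_to_theme[code] for code in excerpt_codes.values())

if __name__ == "__main__":
    for participant, code in excerpt_codes.items():
        theme = code_to_theme[code]
        print(f"{participant}: '{excerpts[participant]}' -> {code} -> {theme}")
    print(dict(code_counts))
    print(dict(theme_counts))
```

Keeping the code assignments separate from the code-to-theme mapping makes it straightforward to revise a theme label during review without re-coding individual excerpts.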

Six questions guided the interviews:

What are your experiences related to fairness during the online assessment?

What are your experiences related to unfairness during the online assessment?

How do you personally view the fairness of allocating resources, grades, or rewards in educational environments?

What specific elements of assessment procedures do you believe are crucial for ensuring fairness?

Have you encountered any instances where you believed the interactions during assessments were characterized by inequity or lack of fairness?

Can you explain your experience with examples?

The following section illustrates the interview guidelines suggested by Gay et al. (2006, p. 420):

During the interview, the interviewer actively listened to participants, allowing them to express their thoughts and experiences fully and without interruption before interjecting with additional questions or comments. The interviewer prioritized listening, as it is crucial for gathering valuable information. When participants shared their thoughts, the interviewer ensured understanding by asking relevant follow-up questions. The questions were framed neutrally, avoiding any influence that might lead participants to answer in a specific way. This allowed for unbiased responses and a more accurate understanding of their perspectives.

Findings

Findings of the overall themes and sub-themes relevant to distributional, procedural, and interactional justice are presented in Table 1.

Table 1. Themes and Frequency Related to Distributional, Procedural, and Interactional Justice.

Due to the richness of the qualitative data, some themes tend to overlap. The excerpts are presented verbatim, including the language errors made by the participants. The following section discusses the themes using excerpts from the interviews.

Distributional Justice

Participants voiced fairness when multiple assessment opportunities were considered for the summative assessment. They were able to accumulate marks and good grades when several assessments were given to students. They argued that multiple assessments allowed the opportunity for a more equitable portrait of their achievement. The following are excerpts that are related to distributional justice from S2:

Most of the subjects distributed the percentage among the assignments that one had to do and afterwards everything was summed up

A similar idea was expressed by L2:

And I like what is called cumulative marks at my university. It is about the formative assessment. Accumulation of the assessed assignment has an important share in the final mark. It is 60 percent and the exam is 40. So hard-working students know before their examinations that they have accumulated a substantial part of the final mark. This is why I liked the possibility of improving drafts before Sending final versions to our teachers (L2)

M5 expressed that multiple assessments allowed him to be more critical, creative, and analytical, since students were given sufficient time to complete the assessment.

In my opinion, I like multiple assessments because most of the questions are flexible and versatile. It allows students to take their own time answering and figuring the questions out. It creates a different environment and exposure for students to learn on their own. I do realise that I like to do research on questions and do my analytical thinking based on it so it helps me to get an idea of what I want to answer. For example, a lecturer asks students to draw a mind map or short slides to represent their understanding. This not only enhances students’ creativity in explaining what they understand of the subjects, but it helps other students to understand it too.

Another category related to distributional justice is the rubrics set by the lecturers. The rubrics allowed students to understand why they were given specific marks. This element was not evident in University M and University L. University S used rubrics, and students found them worthwhile because they guided them to complete their assessments effectively.

rubric as a guide because there were many assignments. The rubrics are very useful because it helps students to understand the reasons behind assessment and marks (S4).

Students were particularly pleased when they “got comments from their teachers and were given the opportunity to change and continue working for better scores” (S3). The feedback from the teachers also assisted them in “completing their subsequent task according to their explanations” (L7) and “being able to understand the issue much better” (M8). However, several participants highlighted unfairness related to distributional justice when the grades differed with no clear instructions. The following is the elaboration from L5:

when we sent assignments via Moodle, in our case, in our group, we were the first to send our work, and we received back our drafts, but when the professor corrected our work, we were given lower marks because we were the first in our group to submit the work. Those who improved their drafts later than us got higher marks. I think that the assessment was done carelessly. (L5)

One participant highlighted unfairness when the platform was ineffective and led to inequitable performance. S4 lamented that:

In the subject called Practical English, we only had a final oral examination and nothing else, in fact, we did not have any classes. I do not know what was wrong with the system, whether the professor did something wrong or it was the fault of the platform, but when you finished doing it, you could see your correct answers. And you could try answering mistaken questions once again until you got the right answer

Similarly, M5 pointed out that:

We were required to write our names against the paragraphs we contributed to the essay. Therefore, when one of our group members INSISTED not to be corrected and pointed out to the fact that the paragraphs were named and therefore would be marked individually, the rest of the group members gave in. When the marks came in, it turned out that all the group members received the same mark and I believe the mark has been affected by us not being adamant in correcting the mistake(s) done by the said group member.

Figure 1 illustrates the categories and frequency related to distributional justice.

Categories and frequency related to distributional justice.

Procedural Justice

Several students raised procedural justice concerns when instructions were not transparent or consistent and when they experienced a mismatch in how grades were allocated. S2 reported unfairness when the instructions given were unclear and when the marks and timing were changed during the assessment. The lecturer concerned gave inconsistent instructions, which led to dissatisfaction and frustration because some students were unaware of the changes. S2 detailed that:

When the assessment criteria were changed, they were published in Moodle and, even though we knew the new criteria, it was not clear in many of the subjects what assignments exactly we had to do, for instance, in Music.

The lack of transparency in grades was highlighted by M2 when the lecturers asked them to work in a group. M2 described how the group’s working pattern changed because of ineffective instructions about grading. The students experienced unfairness because all group members received the same mark. Such grading undermines transparency and contributes to unfairness.

I am always willing to help out a fellow student either in studies or who wishes to do the best for the assignments. But we were under the impression that the written assignment was to be marked individually but it was marked as a group. No proper instructions were given.

L6 experienced unfairness when the lecturer did not follow the guidelines that had been set:

One technique that I disliked the most was the assessment of an assignment that every student had to submit in the same folder on Moodle. The deadline was set and the teacher pointed out that they would review the works if submitted in advance and tell what could be improved before the deadline. Some students submitted their papers in advance and were expecting some reflection. However, the teacher reviewed the works only after the deadline date passed and expressed their dissatisfaction with some of the works. The students were upset because they could have worked on their papers had the teacher reviewed them earlier. (L6)

Another overriding concern is the ineffective assessment via chat. Such interactions were viewed as unfair since students tend to repeat the answers mentioned earlier. S4 detailed this:

I think in one subject they used a chat in the forum as a type of participation, but I don’t think it is a good form of assessment, because people repeat what the first or second student has written and write something like “I agree with xxxx or xxxx” without adding anything new. I don’t think this is a good assessment tool. But I have no doubts that I did not like the examination.

Similarly, M1 emphasized the technical difficulties that arise when all students log in at the same time, which lead to poor performance and frustration. In her words:

The idea of fixed date and time to answer on assessment with many student logging in from various places may cause system problem I believe the university management is aware about the issues faced by student in previous semester and have worked on system errors so it will not hang or lag while we attempt to answer this semester.

L4 reported unfair experience when:

Some software simply does not allow students to go over the questions they have already answered. I might receive low marks if my finger slipped or typed in a rush.

Lecturers were more concerned with assessment strategies and exam challenges, and they limited the time given in order to stop students from cheating. This indirectly led to unfairness, as students felt nervous and could not focus on answering the questions. The following are excerpts from participants:

I understand that the professors wanted to prevent us from cheating. But the thing is that when you have limited time for answering questions, you get nervous and you cannot read the questions attentively. (S1)

The idea of a fixed date and time to answer an assessment with many students logging in from various places may cause system problem I believe University M management is aware of the issues faced by student in the previous semester and have worked on system errors so it will not hang or lag while we attempt to answer this semester. (M2)

However, in some cases, the time of tests that we were given, was too short, and sometimes I got lower grades only because I felt too nervous to think critically. (L6)

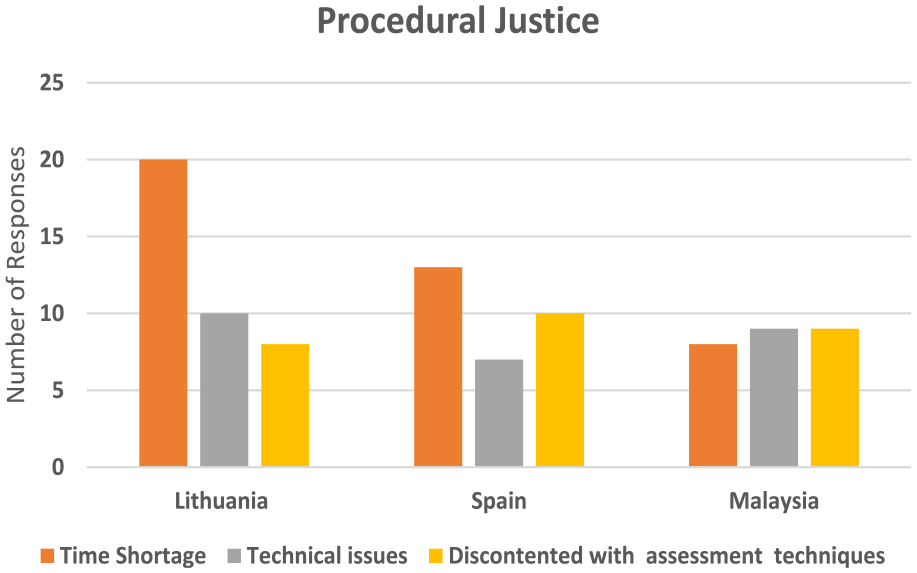

Figure 2 illustrates the categories and frequency related to procedural justice.

Categories and frequency related to procedural justice.

Interactional Justice

Participants elaborated on issues of interactional injustice, in which their lecturer did not treat them respectfully or did not respect students’ privacy when communicating grades. L1 expressed dissatisfaction when their assignments were criticised and the lecturer then added instructions that had not been stated earlier. The students experienced a power imbalance, which left them dissatisfied and demotivated to work further on their assignments. According to L1:

I think it is important for students to know what is expected of them. One of the teachers posted the requirements for written projects at the beginning of remote studies. My classmates and I followed them, but the teacher expected more from our projects. The teacher then explained to us what they meant during an online class, and we completed our subsequent assignment according to the explanations. After the assignments were submitted, the teacher criticised most of the work once again adding new requirements that were not specified either initially or after the first written assignment. Naturally, the students were frustrated. We decided to just accept it and do what we could. In my opinion, if there are some initial instructions that the students must follow to complete their assignments successfully, they should not alter them throughout the semester.

L4 opined that:

Also, the disclosure of the marks/points of each student in front of the class, even online, was not a very pleasant experience, especially since the results of the submitted tests were only visible to the students to whom they belong.

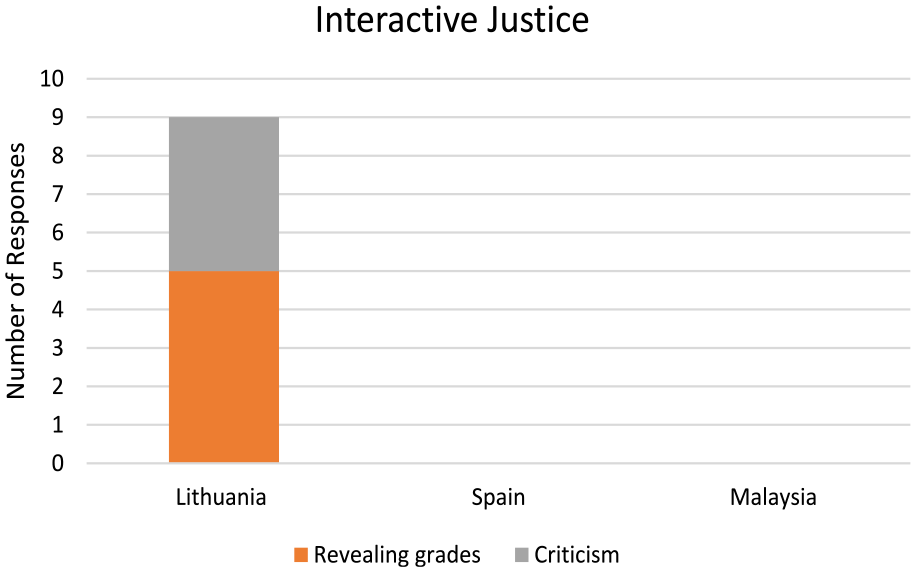

This unfairness was not found in University S and University L. Figure 3 illustrates the category and frequency related to interactional justice.

Category and frequency related to interactional justice.

Discussion

The current study drew on social psychology theory to provide evidence of how students experienced fairness in assessment during the COVID-19 pandemic. The findings point to fairness concerns across three countries. Students’ lived experiences in the three countries seem similar, since all three were forced into a transition to multi-format online assessment. However, rubrics were used only in University S and not in the other universities. Procedural, distributional, and interactional justice were all evident in this study. Interpreted through distributional justice, grading seemed fair when students were given opportunities to accumulate marks by attempting many assessments. Students were also content when lecturers understood their physical and mental stress and gave more suitable assignments. Previous studies have indicated that multiple assessment opportunities are an important criterion of fair assessment (Gipps & Stobart, 2009). According to Johnson et al. (2021), carefully planned assessment throughout the semester during the pandemic leads to improved student satisfaction and performance. Students were also guided by clear rubrics that allowed them to achieve good grades. Students therefore seem convinced that online assessment is fair because it increases flexibility during a pandemic. This is in line with Krou et al. (2021), who highlighted that students are motivated when they are convinced that online assessments have value. The findings suggest that multiple assessments, rubrics, and effective feedback from instructors can contribute to effective online assessment.

Regarding procedural justice, students’ perceptions of unfairness were related to limited time, technical problems, and unclear expectations of assessment. The findings support the view of Hjelsvold et al. (2020) and Khalaf et al. (2022) that limited time is one of the important barriers to implementing online assessment. Furthermore, technological restrictions place excessive pressure on students instead of encouraging them to engage fully with the assessment process (Tam, 2022).

Several researchers have reported student dissatisfaction arising from teachers employing synchronous and asynchronous assessment without training (Mirza, 2021; Shahrill et al., 2021). Hodges et al. (2020) made a similar point, noting that a well-planned online assessment is very different from switching to online assessment in the middle of a crisis, since the pace of the switch may shock educators and students. The findings confirm the claim of other studies that unclear expectations related to online assessments increased students’ stress and anxiety levels (Buckley et al., 2021; Robertson & de Silva, 2020; Tam, 2022). Prior social psychology studies found that inconsistent and misaligned interpretation of grading is a theme of unfairness and injustice for students (Cheng et al., 2020).

The challenges related to interactional justice were privacy in communicating grades and weak interaction during the assessment. Participants in this study, like students in previous studies, reported disrespectful treatment (Tierney, 2015), being accused of wrongdoing (Čiuladienė & Račelytė, 2016), and public disclosure of grades (Tierney, 2015) as crucial components of unfairness in assessment. However, this form of injustice was found only in University S.

The findings of this study are consistent with Goldstein and Behuniak’s (2012) statement that technology-enhanced assessment differs fundamentally from traditional classroom assessment. Instructors have new roles and responsibilities, such as acquiring the literacy required to work in online settings and the ability to deal with problems.

Implication of the Study

The authors of this study hold that higher education institutions should respect students’ experiences and implement more liberal regulations during challenging times, or whenever students are given online assessments, in order to improve learning and protect students’ academic achievement and psychological well-being. This study serves as a wake-up call for higher education institutions to provide their instructors with relevant pedagogical and technological knowledge and skills related to assessment so that instructors can engage in “educational change toward more flexible models and practises” (Rapanta et al., 2020, p. 942).

The pandemic has highlighted the necessity of spreading technology literacy throughout society and across borders so that technology and e-assessment can effectively improve learners’ and educators’ knowledge. Accordingly, all countries should make full use of their potential to explore and apply such technology during a crisis. This entails developing comprehensive policies and guidelines for online assessments. We concur with Dawson’s (2021) suggestion that humans should be involved in the decision-making process in academic integrity. We contend that adequate policy, guidelines, and support systems can achieve fairness in online assessment. This study yields a number of recommendations for improving online assessment practices.

First, educators should consider multiple assignments with clear rubrics as suggested by the participants in this study.

Criteria of online assessment should be communicated clearly to the students before the assessment. There should not be any changes in the criteria during the assessment.

Effective feedback from the instructor should be introduced in task-based assignments.

Standardized, evidence-based practice should be implemented for cases in which students have technical difficulties (slow Internet, inability to download and upload).

Higher education institutions should work to reduce technical challenges.

Technology training courses should be designed for instructors to reduce technology and technical failure. Technology literacy knowledge is important for students as well as educators.

Teachers must have a checklist of dos and don’ts to follow during an online assessment.

Instructors should interact with students to gauge how well they understand their rights to fairer assignments.

Institutions should create a framework that calls for collective effort and the use of various collaborative tools and interactive methods for practical assessment.

Limitations and Conclusions

The findings of the present study shed light on important aspects of fairness in online assessment within the higher education systems of Malaysia, Lithuania, and Spain during a crisis. The main emphasis was placed on three elements of social psychology theory: procedural justice, distributional justice, and interactional justice. The findings can guide the development of a deeper understanding of what fair human judgments look like and how they can be optimized for learning. According to Gipps and Stobart (2009, p. 116) “we will never achieve the fair assessment, but we can make it fairer.” Openness about the format, scores, and grades will make the online examination process transparent and offer an opportunity for instructors and students to debate online assessment. In summary, the researchers recommend that higher education institutions acknowledge the experiences of learners and establish useful protocols for online assessments during difficult periods. This approach will enhance learning, safeguard students’ academic accomplishments, and promote their overall physical and mental well-being.

The limited sample size of only 18 students may not capture the full range of experiences and perspectives concerning online assessment during the COVID-19 pandemic. Therefore, the study’s findings may not be generalizable to a larger student population or transferable to contexts outside the specific countries examined. Nevertheless, this small-scale study can contribute valuable knowledge and serve as a foundation for further research in the field. Future research should consider a mixed-methods design for studying online assessment. Including more universities across the three countries could provide a fuller picture of fairness in online assessment. Future studies should also include participants from other countries and more diverse participant samples to increase the generalizability of the findings.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the publication of this article: This work was supported by the Deanship of Research and Graduate Studies, Ajman University.

Data Availability Statement

Data sharing not applicable to this article as no datasets were generated or analyzed during the current study.