Abstract

Examining how language proficiency scales (LPS) are applied in education helps validate their function and reveals their value in depth. However, little systematic research on the application of LPS has been conducted, owing to the complex intertwining of stakeholders and the lack of a theoretical framework and practical approaches. Adopting the framework proposed by Y. Jin and Jie (2020), this study explored how the Common European Framework of Reference (CEFR) and China’s Standards of English (CSE) were used by, and affected, various stakeholders in the education context. Literature published from 2018 to 2022 was retrieved from WoSCC, Scopus, and CNKI, and qualitative content analysis was used for the systematic review. Results showed that policymakers used LPS in education policy guidance; teachers applied them as benchmarks for diagnostic assessment to obtain accurate language profiles of students and to create new approaches to teaching; students used them as goal-setting guidance and as self- or peer-assessment criteria to track progress; test developers aligned them with tests to obtain reliable results; curriculum designers tailored descriptors and scales from CEFR to develop new curricula or to align or revise existing ones; and researchers used LPS as references to develop new rubrics, frameworks, and assessment models. This study could provide insight into the scientific application of LPS. However, it focused mainly on the CEFR and CSE and used a framework for exploring the impact of language testing. Studies covering more scales and theorizing a framework for the aftereffects of LPS should be encouraged.

Keywords

Introduction

Language proficiency scales (LPS) are extensively used for different purposes, such as course, syllabus, and materials design (Nikolaeva, 2019) and language learning, teaching, and assessment (J. Liu & Yang, 2021). The application value of an LPS is established when it is designed, whether it is learning-oriented, assessor-oriented, or constructor-oriented (Jones, 2014). It is therefore important to study the application of LPS in the educational context. Evidence collected within the first few years after a scale’s publication is of prime importance and a key basis for decisions about its further implementation (Zhu, 2016), because such evidence can reveal the value of the LPS in depth and uncover its positive role and impact on different stakeholders.

Exploring the application of LPS is a validation of its usefulness. Feedback from stakeholders can support the scale’s validity and provide evidence for its revision or adaptation (Y. Jin & Jie, 2020). For example, the Common European Framework of Reference (CEFR) is the most widely used LPS for planning and evaluating curricula, certifications, examinations, and textbooks (Byram, 2020). After its publication, studies on its impact accumulated (Brunfaut & Harding, 2020; Byram, 2020; Green, 2018; Sahib & Stapa, 2022), revealing its strengths and weaknesses in education. This feedback contributed to the release of the Companion Volume (CV) in 2020 (Council of Europe, 2020). Exploring the application of LPS can also offer a better understanding of its usefulness in teaching, learning, testing, and curriculum design, informing teachers, students, testers, and policymakers, as the exploration can demonstrate how challenges are addressed and offer new perspectives on moving the field further (Harsch, 2014).

Several influential language proficiency scales have been widely used for many years in international language education, such as ILR (Interagency Language Roundtable), ACTFL (American Council on the Teaching of Foreign Languages), CLB (Canadian Language Benchmarks), and CEFR (Common European Framework of Reference) (Zhou & Liu, 2021). CEFR is the most influential officially published scale worldwide and is widely used for curriculum planning and evaluation, assessment, textbook development, teaching, and learning.

China’s Standards of English Ability (CSE), released in 2018, is a new scale developed to coordinate teaching, learning, and assessment (J. Liu, 2017). It is the first officially published scale in China, which has the world’s largest population of English learners (Bolton & Bacon-Shone, 2020; H. Liu, 2016). Since its publication, CSE has been widely applied from primary schools to colleges (M. Liu & Huang, 2019; M. Liu & Liu, 2022; J. Liu & Yang, 2021; Peng & Liu, 2021; Xiong & Liu, 2020).

Considering their influence and number of users, this study selects CEFR and CSE as representatives of LPS to explore their application and impact on education. Research exploring the use of CEFR and CSE has covered a wide range of stakeholders and approaches. However, up to now, no systematic literature review has been conducted to collect evidence of their usefulness from different stakeholders. To bridge this gap, this study aims to reveal the value of LPS in the educational domain by systematically reviewing related papers and providing a solid foundation for future academic research in education.

Framework of Systematic Review

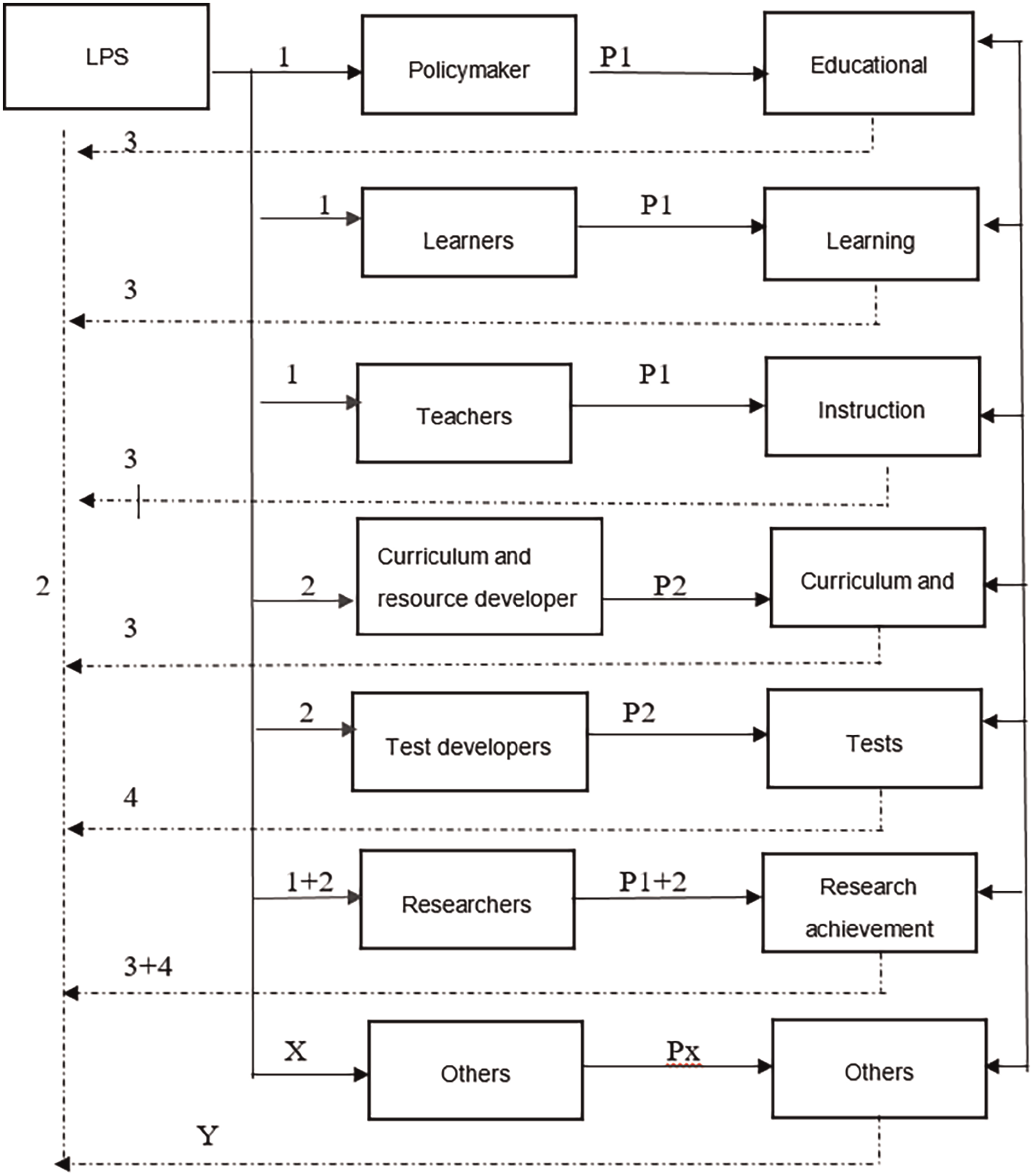

It is challenging to learn how LPS is used in the educational domain because there is a lack of theoretical frameworks and practical approaches. The general method is adopted from language testing because the two fields share much in common. Bailey (1996) proposed a basic model that identified participants, processes, and products (3Ps) which may influence or be influenced by washback in language testing. Based on this 3Ps theory, Y. Jin and Jie (2020) constructed a model to study the application and impact of the CSE speaking scale, as shown in Figure 1.

Framework for exploring the application of LPS.

This model illustrates seven types of stakeholders and the impact of LPS in the educational and social domains. The solid arrows (numbered 1 and 2) represent the impact of scales on stakeholders and their educational practice; the dotted ones (numbered 3 and 4) indicate the washback of applied research on the scale, while X and Y refer to impacts outside the education domain. According to this model, the impact of LPS in education can be explored through six different stakeholders, as shown in Figure 1: stakeholders 1 to 6, from top to bottom, are in the education domain, and the seventh stakeholder concerns how societies select talent by using scales.

Although Jin’s framework was designed for a speaking scale, it contains the core elements of the mechanism by which impacts are generated: how stakeholders use LPS in teaching, learning, testing, and selecting talent, and the effects of that implementation. Hence, this study adopted this framework, and the research route is outlined in Table 1.

Method of Exploring the Impact of LPS.

Note. This table is adapted from Y. Jin and Jie (2020). Copyright 2020. Adapted with permission.

Since this study focused on implementing LPS in the educational domain, it explored the application of LPS by stakeholders in education and the effects of that application. The social effect was excluded. The questions guiding this study were:

How is LPS used in the educational domain?

What is the effect of using LPS?

Method

Resources and Database

To answer these questions, this study followed the Preferred Reporting Items for Systematic Reviews and Meta-Analyses (PRISMA) protocol, and three databases were selected: China National Knowledge Infrastructure (CNKI) core journals, Web of Science Core Collection (WoSCC), and Scopus. Since CSE is a scale applied in China, literature on it is likely to be better covered and indexed by CNKI. To ensure the quality of articles, only core collections were selected. WoS, the majority of which is a core collection (Carloni et al., 2018), is one of the two most important and comprehensive sources of publications and impact indicators worldwide (Pranckutė, 2021); the other is Scopus. WoSCC and Scopus are trusted, publisher-independent global citation databases (Baas et al., 2020; Birkle et al., 2020) containing many peer-reviewed, high-caliber academic journals published worldwide. These databases could provide useful tools for a systematic literature review.

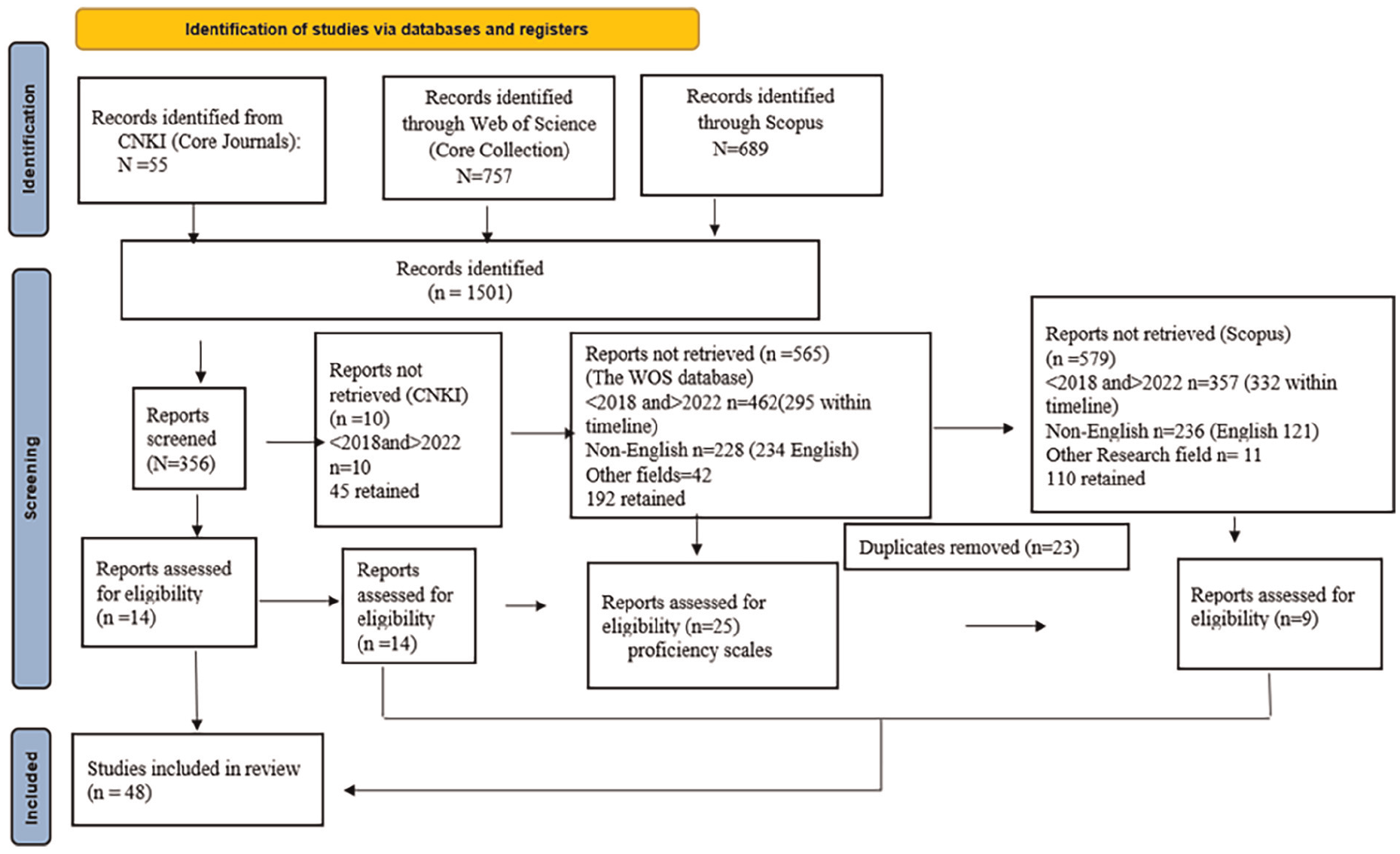

PRISMA

PRISMA (Preferred Reporting Items for Systematic Reviews and Meta-Analyses) is the most commonly used reporting guideline for systematic reviews (Page et al., 2021). Under this guideline, methods and results are reported in sufficient detail to enable users to evaluate the applicability and credibility of the review findings. The PRISMA statement also makes systematic review reporting more transparent, comprehensive, and accurate. Hence, it enables a thorough search for information and scientific techniques relevant to the use of LPS in education. The retrieval process is illustrated in Figure 2.

An overview of the search protocol based on the PRISMA statement.

Systematic Review Process

Identification

This systematic review was initiated in 2022, and the identification stage mainly involved choosing keywords for the information search. In the CNKI database, “CSE,” “China’s Standard of English Proficiency Scales,” and “application” were used as keywords. In WoSCC and Scopus, “Common European Framework of Reference for Languages,” “application,” or “CEFR,” or “China’s Standard of English Proficiency Scales” were used as keywords. Because this review focused on the application of language proficiency scales in the education context, “validation” was excluded (see Table 2). As a result, 1,501 papers in which the keywords appeared were identified: 55 from CNKI, 689 from Scopus, and 757 from WoSCC, as seen in Figure 2.
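The identification counts reported above can be checked with a short tally. The following is a minimal sketch in Python; the variable names are illustrative assumptions, and only the three per-database figures come from the text.

```python
# Records identified per database, as reported in the Identification step.
# The dictionary and variable names are illustrative, not from the study.
identified = {"CNKI": 55, "Scopus": 689, "WoSCC": 757}

# Sum the per-database counts to obtain the overall number of records.
total_identified = sum(identified.values())

for database, count in identified.items():
    print(f"{database}: {count}")
print(f"Total identified: {total_identified}")
```

The computed total matches the 1,501 papers stated in the text.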

Keywords and Information Search Strategy.

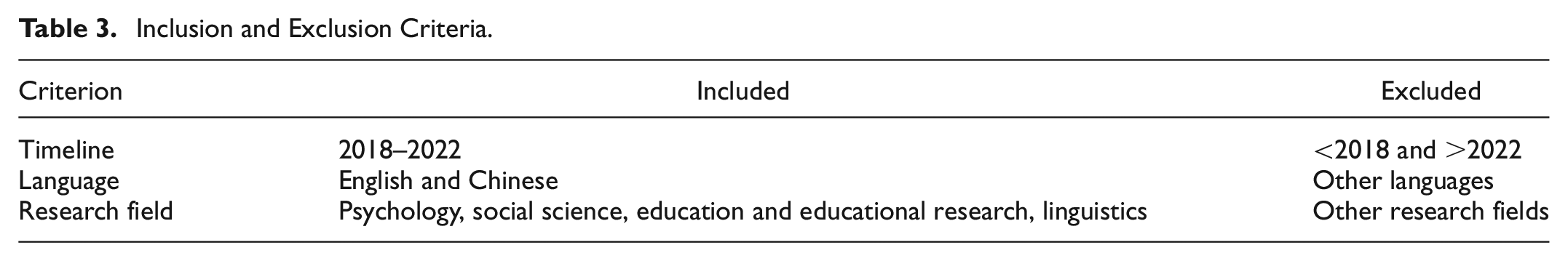

Screening (Inclusion and Exclusion Criteria)

Following the inclusion and exclusion criteria in Table 3, the second stage of the systematic literature review involved screening. The first criterion was time, which was restricted to a 5-year window (from 2018 to April 2022) for the following reasons: limiting the search to 5 years ensures that the literature is recent; CSE was released in 2018, and its application followed its publication; and the finalized ‘CEFR Companion Volume with New Descriptors’ was also published in 2018 (Eaquals, 2018).

Inclusion and Exclusion Criteria.

The second criterion was language. Only English and Chinese publications in the Scopus, WoSCC, and CNKI databases were included, to avoid the distortion of meaning caused by translation. Third, the search was restricted to the fields of psychology, education, and linguistics. Other research fields were excluded because of their limited relevance. As a result, 45 articles remained from the CNKI core journal database, 110 from Scopus, and 192 from WoSCC, as seen in Figure 2.

Eligibility

Eligibility refers to the authors’ manual inclusion or exclusion of literature according to criteria in line with the research questions and the study objectives. Among these 356 items, 23 papers from Scopus that duplicated WoSCC records were removed. Therefore, 333 papers were retained for manual appraisal. In this process, the authors reviewed the abstracts and full texts to confirm their relevance: all the research had to focus on applying LPS in education. Studies focusing on the development, validation, perception, and semantic analysis of CEFR and CSE were excluded. Reviews were also removed. Finally, 48 papers (14 articles from CNKI core journals, 9 from Scopus, and 25 from WoSCC) were retained for review. Sixteen of them focused on the application of CSE, and 32 on CEFR.

Categorizations

The 48 remaining articles were categorized by the authors following the framework presented in Figure 1 and Table 1. Through qualitative content analysis of the abstracts and full texts, these articles were categorized into six groups according to stakeholders: policymakers, teachers, learners, curriculum and resource developers, testers, and researchers.

Synthesis and Findings

This section aims to unveil how different stakeholders apply LPS. After synthesis, results indicated that the application of CEFR covered five groups of stakeholders, all except students, whereas that of CSE covered four groups, excluding policymakers and curriculum and resource developers. Among the reviewed articles, 8 papers concentrated on policymakers (CEFR only); 12 on teachers (8 on CEFR, 4 on CSE); 17 on testers (11 on CEFR, 3 on CSE, and 3 on both); 3 on curriculum and resource developers (CEFR); 3 on students (CSE); and 5 on researchers (2 each on CEFR and CSE, and 1 covering both), as seen in Figure 3. The effects of their application are also described in the following paragraphs.
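The distribution of articles across stakeholder groups can be verified against the 48 retained papers. The sketch below is written in Python; the data structure and names are illustrative assumptions, and the counts are those reported in the paragraph above.

```python
# Article counts per stakeholder group, split by the scale(s) studied.
# Keys and structure are illustrative; the numbers come from the text.
articles_by_group = {
    "policymakers": {"CEFR": 8},
    "teachers": {"CEFR": 8, "CSE": 4},
    "testers": {"CEFR": 11, "CSE": 3, "both": 3},
    "curriculum and resource developers": {"CEFR": 3},
    "students": {"CSE": 3},
    "researchers": {"CEFR": 2, "CSE": 2, "both": 1},
}

# Total per group, then the grand total across all groups.
group_totals = {group: sum(counts.values())
                for group, counts in articles_by_group.items()}
grand_total = sum(group_totals.values())

for group, total in group_totals.items():
    print(f"{group}: {total}")
print(f"All groups: {grand_total}")
```

The group totals (8, 12, 17, 3, 3, and 5) sum to the 48 articles retained after the eligibility stage.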

Number of grouped articles.

Policymakers

CEFR is claimed to provide a common basis for elaborating language syllabuses, curriculum guidelines, and teacher development. The studies in Table 4 showed the adaptation of CEFR in different countries. Nishimura-Sahi (2020) analyzed the educational trends and domestic needs for practical communicative proficiency in English to increase Japan’s economic competitiveness on the global stage. The CEFR-Japan was developed and successfully implemented by assembling various actors: government officers, researchers, commercial actors, administrators, and teachers. The author suggested that CEFR should be borrowed selectively to serve as a viable solution to further long-term educational and political agendas. To ensure its viability, all actors, including different stakeholders, publishing houses, and materials (such as guidelines and books), should be brought together.

LPS Were Used by Policymakers.

Savski (2019) discussed how CEFR could be used in Thailand and Malaysia. The communicative orientation had been unsuccessful in these two countries, and a post-communicative philosophy should be advocated. He proposed that content and activities should be developed for learners to reflect on their identities as individuals and members of society. The content-based instruction (CBI) approach matched closely with the action-based concept of CEFR, thus having great potential as an alternative to communicative language teaching (CLT). Besides, policymakers should also consider how to empower students with CEFR criteria.

Nguyen and Hamid (2021) explained the historical and social context of adopting CEFR in Vietnam. They claimed that the following factors accelerated the adoption of CEFR in local milieus: English language policy changes, the need for economic and political innovations, the initiatives to reform higher education, and administrators’ tendency to solve domestic issues by looking outward. Their study demonstrated how the CEFR unfolded on the ground and interacted with the local educational context. It also highlighted the importance that educational actors at different levels attached to global standards. Piccardo et al. (2019) probed into the successful strategies for introducing CEFR in Canada and Switzerland. Results demonstrated that teacher education and CEFR-based examinations were the most important practices.

However, linking to CEFR is not always smooth or successful. Luxembourg is a critical case presenting the conflicts between international language proficiency standards and local realities (Brunfaut & Harding, 2020). The distinct language ecology, streamed schooling, national curriculum, and ongoing exam reform project constrained standard setting with the CEFR. A dogmatic approach to CEFR as a common currency proved costly in this country. Hence, a better way of theorizing should be proposed to incorporate local knowledge into the standard-setting process without compromising procedural validity when international standards collide with local educational cultures.

Another example is the introduction of CEFR in Thailand (Franz & Teo, 2018). CEFR failed to achieve its stated aims of improving teaching in basic education and teachers’ linguistic and instructional skills. Most instructors felt that CEFR was introduced as a tool for evaluating their own proficiency, not for classroom teaching and assessment. Additionally, they claimed this tool was suitable for Europeans, not for them, as they failed to meet the targeted B2 level, causing them to lose face.

Aziz et al. (2018) revealed problems with implementing CEFR in pre-primary and secondary schools in Malaysia, where teacher training still needed improvement. They stated that all stakeholders must be adequately coordinated, aware of their roles, and informed of recent developments. Superficial training should be complemented by more support from the government. Deygers et al. (2018) explored the impact of CEFR on European university admission exams. Their findings indicated that B2 is the level most commonly adopted for university entrance. However, the CEFR levels are frequently misused for marketing purposes or to restrict university entrance.

Teachers

Teachers are the primary users of LPS, as seen in Table 5. Generally, they use LPS in assessment and teaching.

Application of LPS by Teachers.

For Assessment

Mazlaveckienė (2018) used the CEFR grammar scales to assess Lithuanian English Philology students. Results indicated that these students had a limited repertoire of grammatical structures ranging from level B1 to B2. The study shed light on important trends in developing English Philology students’ foreign language competency in Lithuania. Zhao and Zhao (2023) explained how teachers and students in China co-constructed writing assessment criteria based on CEFR. The findings supported the efficacy and significance of developing these criteria for improving learners’ cognitive and metacognitive knowledge of writing and assessing. They highlighted the importance of learners’ competence in developing assessment criteria and implementing a future-driven self-assessment using the CEFR or LPS in local settings.

Shi and Zheng (2021) developed an intelligent diagnostic learning app based on CSE, in which sports majors practice English adaptively. T-tests and questionnaires revealed its effectiveness in motivating students and improving their learning outcomes. He et al. (2021) used CSE-based cognitive diagnosis models (CDM) to assess the writing abilities of Chinese undergraduates. The linear logistic model analysis demonstrated that diagnostic results could distinguish masters from non-masters and facilitate learning by increasing students’ competency through feedback and remedial activities. As the authors stated, using CSE for diagnostic purposes could provide methodological support for a CDM-based approach in diagnostic assessment; it could also provide diagnostic feedback for L2 learners to improve learning.

For Teaching

The above articles concern how LPS were used in assessment, while the following articles focused on teaching practice. Zhong (2019) applied CSE in a listening and speaking course in a vocational college by constructing a model combining self-assessment, peer assessment, AI assessment, and teachers’ assessment. This model successfully enhanced students’ sense of learning responsibility and produced customized learning objectives and strategies. It stressed the importance of applying CSE as guidance in teaching planning and instruction. Xiong and Liu (2020) emulated the reform of ESP teaching for open universities in China based on CSE. The teaching content adapted from CSE, with references to students’ work backgrounds and assessment criteria based on CSE descriptors, made this course suitable for learners in distance education. This method proved effective in stimulating their interest and enhancing learning outcomes.

Rehner et al. (2021) showed how K-12 teachers’ planning, classroom delivery, and assessment practices changed after CEFR-related professional learning. Teachers prioritized speaking and listening, with less time allotted to writing and reading, after learning about CEFR; they also shifted their focus away from language structure and error correction toward real-life situations; besides, they focused more on students’ sociolinguistic and pragmatic competencies. As for assessment, teachers prioritized functional competence and pragmatic and sociolinguistic appropriateness, in contrast to their initial focus on grammatical accuracy and orthographic control. These shifts in teachers’ planning, classroom delivery, and assessment practices after CEFR-related training signaled a clear change from a grammar-based model to an action-oriented approach in which language learning took place through genuine communication in authentic everyday situations.

Likewise, the study of Choong et al. (2021) illustrated how CEFR affected primary school English teachers’ behaviors in Japan. Before the introduction of the CEFR, not all teachers conducted speaking assessments and teaching. Their concepts of instruction and evaluation changed with the incorporation of CEFR in the elementary English curricula. Thus, speaking was highlighted in teaching and assessment.

In Thailand, Poonpon et al. (2022) reported a model named TIGA based on CEFR and core curriculum for low English proficiency students in rural secondary schools. Results from their quasi-experiment found a significant improvement in the experimental group. This model emphasized the importance of teaching strategy in engaging low-proficient students, especially the authenticity of learning tasks.

Infante Mora et al. (2019) reported how the CEFR was used as a methodological and evaluative tool to chart students’ progress and give feedback at a university in Seville. Students’ language proficiency was greatly improved, and they were more confident in speaking. This report indicated that feedback based on standards is crucial in learning and that teachers’ role as facilitators should be amplified.

As with the linking of CEFR to local policies, not all cases were successful. Despite the introduction of CEFR in education policy, some ESL teachers in Malaysia (Sidhu et al., 2018) and Turkey (Yüce & Mirici, 2022) had limited comprehension of the CEFR-aligned curriculum and could not use CEFR properly. Moreover, students were discouraged from reflecting on their work based on the tasks. More work should be done to overcome ESL teachers’ constraints and help them bridge the gap between policy and practice.

Learners

Table 6 reveals how students used CSE. Zhang and Wang (2022) explored the scaffolding role of the CSE writing scale for college students. Results showed that with the intervention of CSE, students’ assessing ability, writing skills, and learning confidence were significantly improved. Li (2022) examined the effect of CSE-based peer assessment and task value on Chinese undergraduates’ self-regulated learning (SRL). Results indicated that learners’ SRL was significantly improved. Another study, by He and Zhang (2021), incorporated the CSE in diagnostic assessment, goal setting, and remedial instruction to facilitate learning. The quasi-experiment indicated significant improvement in listening skills. Students’ self-reports demonstrated that they held positive attitudes toward this approach to learning, especially the function of CSE in setting SMART (specific, measurable, attainable, realistic, and time-bound) goals.

Application of LPS by Learners.

Course Designers and Resource Developers

Table 7 shows how curriculum and resource developers use LPS. The study by Mohamed (2021) offers a practical model for constructing a CEFR-aligned curriculum. First, it should be essentially action-oriented and concentrate on supporting students in putting their competence into practice. Second, branching each CEFR level into two sub-levels (e.g., A2 into A2.1 and A2.2) would be useful; courses designed to help learners track and monitor their progress could improve their sense of achievement and motivate them. Third, introducing different themes and integrating similar functions that produce equivalent results can be more successful. Fourth, a grammar syllabus should be practical and accessible for classroom learning. Mohamed provided an example of options and modifications that teachers may need to consider in implementing CEFR in their contexts. Kalnberzina (2018) compared the intercultural component in secondary and tertiary education curricula. The comparison revealed the compatibility of these documents despite their differences in terminology, context, and level of impact. Little (2018) explored how the CEFR was adopted in designing a curriculum framework for Irish immigrant primary schools. Part of the CEFR scales and descriptors were tailored to the Irish context. Moreover, the mediation skills in the CEFR can compensate for the deficiency of analytical thinking and problem-solving abilities in secondary education.

How Curriculum and Resource Developer Use LPS.

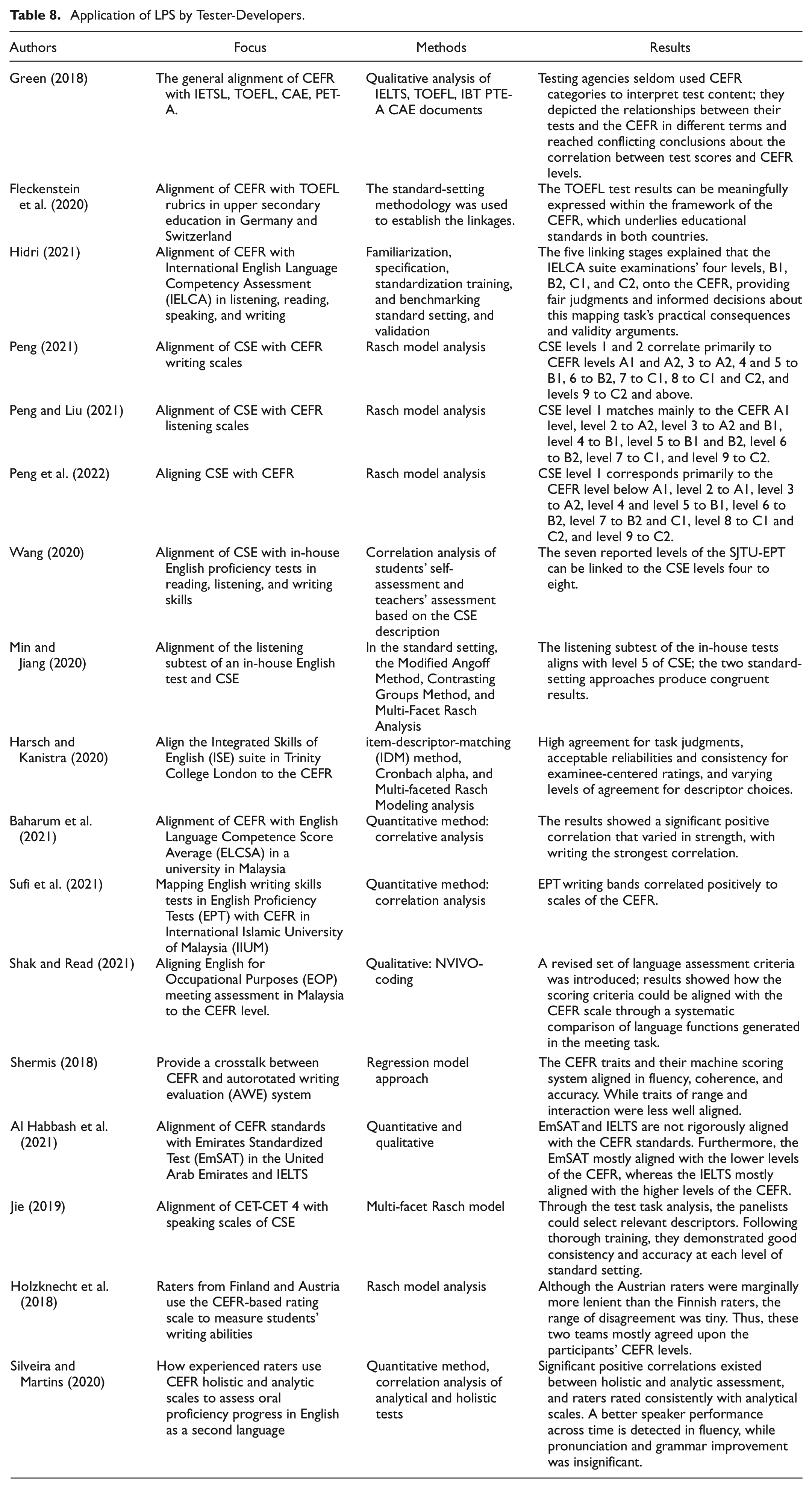

Test Developers

Test developers generally use LPS to align tests and different frameworks and as a criterion for rating.

Alignment

Table 8 indicates that test developers mainly use LPS to align international tests such as IELTS and TOEFL, large-scale tests in specific contexts, and in-house tests. Alignments between different LPS were also conducted.

Application of LPS by Test Developers.

Alignment With International Tests

Fleckenstein et al. (2020) aligned the writing rubric of TOEFL with CEFR using a standard-setting methodology in Germany and Switzerland. Results indicated that the TOEFL test could be meaningfully expressed within the framework of the CEFR. However, the study by Green (2018) showed that the IELTS, TOEFL, CAE, and PTE-A test agencies made little use of CEFR categories to explain test content and arrived at conflicting conclusions about the relationship between test scores and CEFR levels. Among these tests, PTE-A was the only one that defined being “at” a level in terms of the likelihood of success in relation to “Can Do” descriptors for users; the other tests’ band boundaries did not correspond directly to CEFR levels. He highlighted the importance of the content and quality of assessment procedures. Hidri (2021) aligned the CEFR with the International English Language Competency Assessment (IELCA) in listening, reading, speaking, and writing. He also demonstrated that alignment through five major stages (familiarization, specification, standardization training and benchmarking, standard setting, and validation) could provide abundant evidence of dependable results and make the skills and items in the test more specific so that they reflect the CEFR descriptors.

Hidri prioritized using CEFR to map tests by addressing different mapping stages. It could help teachers effectively use the CEFR descriptors to align IELCA tests and empower them to implement curriculum activities in class transparently and coherently.

Alignment With In-House Tests

Wang (2020) showed how CSE levels 4 to 7 aligned with the SJTU-EPT (Shanghai Jiao Tong University English Proficiency Test). Teachers’ and students’ ratings against the CSE descriptors indicated that the B and B+ levels in SJTU-EPT corresponded to level 6 in CSE, and the C and C+ levels to level 5. Most of the A-level students corresponded to level 7, and D-level students to level 4 in CSE. Min and Jiang (2020) aligned a subtest of an in-house English test at Zhejiang University in China with CSE using the Modified Angoff Method and the Contrasting Groups Method. The congruent results indicated that the test aligned with level 5 of CSE.

Harsch and Kanistra (2020) aligned the ISE suite of Trinity College London, in Britain, to the CEFR with an item-descriptor-matching (IDM) method and a complementary benchmarking approach. Results showed high agreement for task judgments, acceptable reliabilities and consistency for examinee-centered ratings, and varying levels of agreement for descriptor choices. In Malaysia, scholars aligned CEFR with Competence Score Average (ELCSA), English Proficiency Tests (EPT) in universities, and English for Occupational Purposes (EOP) meeting assessments (Baharum et al., 2021; Shak & Read, 2021; Sufi et al., 2021). Results showed an overall positive correlation between CEFR and these in-house tests, supporting the acceptability and credibility of these tests. Shermis (2018) established a crosswalk between CEFR and one automated writing evaluation (AWE) system in America. The CEFR traits and the machine scoring system were most clearly aligned in fluency, followed by coherence and accuracy, while the traits of range and interaction were less well aligned. The author highlighted that operationalizing “good writing” and advocating the traits in CEFR could help machines score accurately.

Alignment With Large-Scale National Tests

In China, CET-4 is the test with the largest number of test takers; most college students take it. Jie (2019) aligned CET-4 with the speaking scales of CSE, demonstrating good consistency and accuracy at each standard-setting level. Another alignment, made by Al Habbash et al. (2021), revealed that a large-scale test in the United Arab Emirates, the Emirates Standardized Test (EmSAT), was not rigorously aligned with the CEFR standards.

Alignment Between CEFR and CSE

A series of alignments between these scales were made by the same author, indicating that CSE level 1 corresponds primarily to the CEFR level below A1, level 2 to A1, level 3 to A2, levels 4 and 5 to B1, level 6 to B2, level 7 to B2 and C1, level 8 to C1 and C2, and level 9 to C2 (Peng, 2021; Peng & Liu, 2021; Peng et al., 2022). As the author stated, these studies contributed to the internationalization of the Chinese assessment system and provided references for the alignment of language standards and language education in China.
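The reported correspondence between the two scales can be expressed as a simple lookup table. The following Python sketch is illustrative only: the function names and data structure are assumptions made here, and the mapping encodes the primary correspondences summarized above rather than any official conversion.

```python
# Primary CSE-to-CEFR correspondences as summarized in the text
# (Peng, 2021; Peng & Liu, 2021; Peng et al., 2022).
# The names below are hypothetical and used for illustration.
CSE_TO_CEFR = {
    1: ("below A1",),
    2: ("A1",),
    3: ("A2",),
    4: ("B1",),
    5: ("B1",),
    6: ("B2",),
    7: ("B2", "C1"),
    8: ("C1", "C2"),
    9: ("C2",),
}


def cefr_levels_for(cse_level):
    """Return the CEFR level(s) to which a CSE level primarily corresponds."""
    if cse_level not in CSE_TO_CEFR:
        raise ValueError(f"CSE level must be 1-9, got {cse_level!r}")
    return CSE_TO_CEFR[cse_level]


def cse_levels_for(cefr_level):
    """Return the CSE levels that correspond to a given CEFR level."""
    return [cse for cse, cefr in CSE_TO_CEFR.items() if cefr_level in cefr]


print(cefr_levels_for(7))
print(cse_levels_for("B1"))
```

Because some CSE levels span two CEFR levels, the lookup returns a tuple rather than a single value, and the reverse lookup returns every CSE level that touches the requested CEFR level.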

Rating

Another type of application concerns how raters use CEFR scales to assess learners’ proficiency levels. Silveira and Martins (2020) explored how experienced raters used the CEFR holistic scale and four analytic scales (vocabulary, grammar, fluency, and pronunciation) to measure students’ oral proficiency progress. The results demonstrated that the five scales were positively correlated and that raters were consistent in using these criteria. However, the subscales detected significant progress in fluency only; improvement in grammar and pronunciation was hardly seen. The authors claimed that even in a communicative teaching context, grammar and pronunciation should be emphasized to coordinate the development of the subcomponents of oral proficiency. The research by Holzknecht et al. (2018) also showed that, although raters in Finland and Austria might differ in leniency, they agreed to a large extent on the CEFR levels of the participants if they were trained and experienced in the CEFR-based rubric.

Researchers

Researchers are concerned with the development and improvement of scales (Y. Jin & Jie, 2020). As seen in Table 9, a study by Y. Liu et al. (2021) demonstrated how the English proficiency standard for adult learners in open universities (OUSE) was developed based on CSE and CEFR and how this scale is used to certify their learning outcomes. The authors reported the specific steps of designing and applying this scale, showing that the OUSE provided a benchmark for assessing adult learners in open universities. Ma and Chen (2021) constructed a pragmatic competence assessment model and standard based on the CSE, complementing the current assessment. Yang et al. (2021) developed Typical Interpreting Activity Scales (TIAS) based on CSE.

Application of LPS by Researchers.

Yannakoudakis et al. (2018) developed an automated writing placement system for ESL learners based on the full CEFR scales. This model was incorporated into the Cambridge English Write & Improve system to offer diagnostic feedback for learners, facilitating self-assessment, tutoring, and improvement in learning. Schmidt et al. (2019) developed a guidebook and tools for implementing the CEFR in course design, simplifying its implementation and supporting its use by novices.

These studies operationalized standards in LPS and guided language teaching and assessment from theoretical to practical levels.

Discussion

This paper reviewed and summarized the application of LPS in education, focusing on the CEFR and CSE. After eligibility screening, 48 articles met our inclusion criteria; 16 studies were about the CSE, and 32 were on the CEFR. These studies showed how LPS were applied by policymakers, curriculum designers, researchers, test developers, teachers, and students.

Policymakers

Mohamed (2021) stated that the CEFR had been used more frequently at the macro level, that is, for policy-making. The results of this review, as seen in Figure 3, confirmed this finding. The CEFR is often criticized by scholars for its openness and vagueness. However, these same qualities have made it flexible enough to be adopted globally and adapted to local contexts (Savski, 2019). The reviewed papers demonstrated how the CEFR was adopted selectively in different backgrounds. The interaction of the CEFR with the local setting can be influenced both positively and negatively by local realities. Social needs necessitated the adoption of global standards, and governments or policymakers selectively borrowed criteria from the CEFR (Nguyen & Hamid, 2021; Nishimura-Sahi, 2022).

However, foreign LPS should be introduced with great caution and tailored to the specific context. Otherwise, conflicts may arise. The study of Brunfaut and Harding (2020) served as an extreme case of the tension between the CEFR and local realities. The standard-setting process for the Luxembourg Épreuve Commune for English was highly influenced by local realities, such as the multilingual learning ecology, the streamed schooling system, the national curriculum, and exam reform. The lessons from this contextualization of the CEFR shed some light on policy-making in other countries. When global criteria and local context collide, a better way of theorizing how to integrate local knowledge and international standards should be proposed without compromising standard-setting procedures (Brunfaut & Harding, 2020).

In addition, the introduction of LPS in policy should be accompanied by an update of teaching philosophy. As Savski (2019) claimed, the old version of communicative teaching cannot match the concepts advocated by the CEFR in Thailand and Malaysia. New agendas were proposed for policymakers adapting to the practice of the CEFR: alter teaching philosophy to a post-communicative concept, devitalize the teaching process, and empower students with criteria.

Furthermore, introducing foreign standards should also prioritize the training of teachers. The unsuccessful adoption and implementation of the CEFR in Thailand, Malaysia, and European universities (Aziz et al., 2018; Deygers et al., 2018; Franz & Teo, 2018) underscored the importance of teacher training and the synchronization of all stakeholders. Otherwise, the CEFR would be misused or abused.

Teachers

Teachers adopted LPS as a means of assessment for learning. LPS serve as benchmarks for assessments that evaluate students’ language proficiency more accurately and pinpoint essential trends in the development of students’ language competency (Mazlaveckienė, 2018). They could also serve as references for diagnostic assessments, from which the feedback could inform students what remedial work should be performed to improve their language proficiency. This type of application highlighted the merits of advanced psychometric techniques in providing diagnostic feedback for L2 learners (He et al., 2021; Shi & Zheng, 2021).

The application of LPS could also lead to new approaches to teaching. Zhong (2019) constructed a model combining self-assessment, peer assessment, AI assessment, and teachers’ assessment to facilitate learning. Zhong’s study exemplified the function of the CSE in goal-setting during the teaching process. Shi and Zheng (2021) designed an adapted smart testing system based on the CSE to meet the objective and subjective needs of practicing an integrated “learning, teaching, and testing” teaching model. This model highlighted how diagnostic assessment could be used to facilitate learning. Xiong and Liu (2020) explored using rubrics and contents adapted from the CSE to assess and improve English proficiency in open universities. The study of Zhao and Zhao (2023) demonstrated that the collaborative process improved the feasibility and usefulness of the CEFR descriptors and developed students’ cognitive and metacognitive knowledge and skills for setting up assessment criteria. These studies demonstrated the effectiveness of LPS as a tool for improving learning outcomes and stimulating interest in learning.

Teachers practised LPS creatively to facilitate students’ learning, and in turn, their concepts and teaching philosophies were affected by LPS. As the CEFR promoted an action-oriented approach to language teaching, it shifted teachers’ concepts from grammar-oriented learning to authentic-task-based learning and from input-focused to output-focused teaching (Poonpon et al., 2022; Rehner et al., 2021). It also gave birth to the student-centered concept, emphasising the collaborative learning process of teachers and students (Zhao & Zhao, 2023).

Students

Generally, students used LPS as self- or peer-assessment tools and as a goal-setting benchmark. Self-assessment based on LPS plays a scaffolding role in learning and improves students’ language proficiency, assessment literacy, and confidence (Zhang & Wang, 2022). When integrated into learning, LPS improve the efficacy of self- and peer-evaluation, enhance students’ self-regulation, and boost the value of assessment for learning (Li, 2022). Moreover, LPS could guide goal-setting and offer students a benchmark against which to analyze and reflect on their learning progress critically and actively and to remedy their learning (He & Zhang, 2021). In the long term, LPS could be an essential learning guidance and assessment tool for cultivating independent lifelong learners.

Curriculum and Resource Developers

Adapting descriptors from LPS to the local context and adding them to an existing curriculum document should be encouraged. Compiling descriptors from the CEFR, Mohamed (2021) developed a generic, concise Arabic curriculum of salient features and aligned it with the CEFR. This curriculum conformed to the CEFR’s philosophy of being transparent, coherent, and flexible. Kalnberzina (2018) added some CEFR cultural components and standards to the secondary school curriculum to develop learners’ intercultural decision-making abilities. These two studies set good examples of complementing existing curricula by aligning them with CEFR standards, and both highlighted the importance of adapting to the local context and of content alignment. However, the small number of retained papers indicates that studies of this type need to be fleshed out by further exploration.

Test Developers

Test scores alone are insufficient to support administrators or teachers in making meaningful decisions, nor can the test takers be well-informed of their proficiency levels. Aligning the scores with the “can do” descriptors in LPS is significant in teaching, learning, and assessment (Wang, 2020).

The alignment of tests to LPS levels could provide learners with a valuable sense of their current language ability and a more detailed and comprehensive view of their linguistic profiles (Sufi et al., 2021). With the help of a competent teacher, this alignment might form the basis for further study or remedial learning (Fleckenstein et al., 2020). It could also show how unified standards can be used to interpret students’ authentic language proficiency, provide feedback for teaching, and back up learning plans and objectives (Wang, 2020).

Alignment with tests also accentuates the importance of using LPS to ensure the credibility and reliability of testing results (Hidri, 2021), especially for in-house tests, which differ from school to school. The same score in different schools does not indicate the same level of proficiency. Aligning in-house tests with LPS can bridge this gap by measuring students’ ability more accurately against a common benchmark and can promote the accreditation of academic scores across schools (Min & Jiang, 2020); it could also provide evidence for the further improvement of language tests (Baharum et al., 2021).

Studies on alignment between different LPS are also crucial, as such alignments could promote the recognition of standards from other areas and cultures, highlight the significance of language scales in use and construction, and support the mutual recognition of different standards (Peng & Liu, 2021; Peng et al., 2022).

Furthermore, LPS serve as a benchmark or tool for language assessment. Slightly modified LPS can be a valid and reliable tool for assessing language proficiency. However, training in LPS standards and raters’ own language proficiency should be reinforced (Holzknecht et al., 2018; Silveira & Martins, 2020). Under these premises, using the LPS descriptors for rating can ideally lead to the same results across different contexts and achieve high congruence for all scales.

Researchers

LPS can provide a theoretical framework, methodology, and source of descriptors for developing new tools. The CEFR is well known as a benchmark for designing contextualized language assessment frameworks or systems. The CSE also proved to be a practical reference for constructing new scales and assessment models (Y. Liu et al., 2021; Ma & Chen, 2021; Yang et al., 2021). The new tools help to operationalize the practice of LPS (Yang et al., 2021), simplify their implementation, and usher in novice use of LPS (Schmidt et al., 2019). Finally, the new tools could help students reflect on their errors, track their progress, and learn more effectively (Yannakoudakis et al., 2018).

Conclusion

This research adopted the PRISMA systematic review method for an in-depth review of 48 articles regarding how different stakeholders use LPS in the education domain and the effect of applying LPS. The findings revealed that policymakers used LPS selectively to support their education decisions and reforms (Nishimura-Sahi, 2020). The adoption of the CEFR should consider specific social contexts, such as language ecology, streamed schooling, the national curriculum, ongoing exam reform, and the concrete economic and political situation (Nguyen & Hamid, 2021). Teachers used LPS to assess students’ language proficiency, gain an overall profile of students’ competence, and diagnose their problems, achieving the goals of assessment for learning (He et al., 2022; Shi & Zheng, 2021). New teaching models based on LPS were also adopted to improve learning confidence and outcomes (Shi & Zheng, 2021; Xiong & Liu, 2020; Zhong, 2019). Students used LPS as a benchmark to obtain feedback from their self-assessments, track their progress, and set learning goals (He & Zhang, 2021; Li, 2022; Zhang & Wang, 2022). The intervention of LPS in learning could help cultivate self-regulated learners and enhance students’ motivation and learning outcomes. Curriculum designers tailored descriptors and scales from the CEFR to develop new curricula, align the existing ones, and make revisions where necessary (Kalnberzina, 2018; Mohamed, 2021). Test developers aligned LPS with tests to make the results more reliable and credible, measure students’ ability more accurately, and provide evidence for improving language tests (Hidri, 2021; Sufi et al., 2021). Raters used LPS to assess more accurately (Holzknecht et al., 2018; Silveira & Martins, 2020). With their methodology and theoretical framework, LPS could offer researchers references for developing new rubrics, frameworks, and assessment models (Ma & Chen, 2021; Yang et al., 2021).

Despite this potential in education, the successful implementation of LPS requires all stakeholders, including trainers, teachers, testers, and raters, to update their knowledge and information, improve their language skills, and take on their responsibilities; the government should also make more effort (Aziz et al., 2018). The application of LPS in education is a systematic project supported by wide-ranging stakeholders and updated concepts and behaviors; it calls for capturing political interests, developing contextually relevant resources, and providing sufficient teacher training (Nishimura-Sahi, 2020).

This review offers a panoramic view of how LPS are used in education, providing evidence for the application validity of LPS. However, this study has some limitations, as it mainly concerns the CEFR and CSE. In addition, because of the paucity of theory on LPS application, it explored the application and impact of LPS by adopting a model based on language testing. Studies theorizing the validation of the aftereffects of other LPS should be encouraged.

Footnotes

Acknowledgements

I would first like to thank my supervisor Dr Samah Ali Mohsen Mofreh, whose expertise was invaluable in formulating the research questions and methodology. Her insightful suggestions pushed me to sharpen my thinking and brought my work to a higher level. I would also like to thank Dr Zhong for helping appraise the articles and the anonymous peer reviewers, whose feedback offered insightful points for revising this article.

Author contributions

AZ wrote the original draft and revised the article. SM conceived the initial idea, supervised the literature and methodology, and revised the first complete draft. SS helped in conceiving the idea, provided guidance and supervision on the theoretical and empirical sides, and offered suggestions on the final revision.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.