Abstract

Quality assurance of higher education has been carried out across the world over the past few decades, which makes it important to set up an effective internal quality assurance (IQA) system that fosters improvement in teaching and learning. This research examined whether the IQA system was associated with continuous improvement in the quality of academic programs in Vietnamese higher education institutions (HEIs). The study looked into the infrastructure, instruments, and continuous quality improvement efforts of the IQA system for academic programs across different types of HEIs. A total of 715 survey responses and 46 interviews were analyzed for this paper. The findings showed that the IQA of academic programs in Vietnamese higher education had built the fundamental infrastructure and used indirect instruments but did not frequently use IQA results to continuously improve educational quality. On the basis of these findings, recommendations are made to improve the IQA system of academic programs.

Introduction

Quality assurance (QA) of higher education has been carried out in most higher education systems across the world over the past few decades. External quality assurance (EQA) and internal quality assurance (IQA) play an important role in monitoring, managing, and enhancing the quality of academic programs. In Vietnam, IQA implementation was initiated in higher education institutions (HEIs) in the early 21st century. Over the past 20 years, much progress has been made in the IQA system as a result of updated policies from the Ministry of Education and Training (MOET) on compulsory institutional and programmatic accreditation. Every university has established a unit specializing in QA to oversee IQA activities (Nguyen et al., 2017). In addition, international and national programmatic accreditation have significantly shaped the IQA system in Vietnam. As a result, academic programs seeking accreditation have tended to use the Plan-Do-Check-Act (PDCA) model as a fundamental framework for IQA (Cao, 2020; Huynh & Nguyen, 2020; Nguyen, 2020); this model is similar to the assessment cycle commonly used in US HEIs (Allen, 2004; Suskie, 2009). Both frameworks facilitate the use of IQA results for the continuous quality improvement of academic programs. In addition to programmatic accreditation, some Vietnamese HEIs have used the International Organization for Standardization's ISO 9000:2015 standards to monitor all their institutional activities and improve their materials and processes (Trinh, 2020). When building their IQA systems, Vietnamese HEIs were also mindful of aligning program learning outcomes (PLOs) with the Vietnamese Qualifications Framework (VQF) (Tran et al., 2020). Some researchers shared their experiences of updating PLOs in conference proceedings (Le, 2020; Nguyen, Nguyen et al., 2020; Vo, 2020), while others discussed the challenges of the alignment process (Thi Hoai et al., 2018).

The IQA system of academic programs continues to receive attention in Vietnamese higher education. First, to facilitate the IQA process, Vietnamese HEIs built technology systems designed to support the export of self-study reports for programmatic accreditation (Cao, 2020; Nguyen, 2020); integrate data among offices in the institution (Huynh & Nguyen, 2020); facilitate data sharing with internal entities such as the Board of Trustees, President, Deans, and QA and academic affairs offices (Ho et al., 2020); and make IQA activities consistent and effective institution-wide while saving costs and human resources (Ho et al., 2020). This fundamental IQA infrastructure supported IQA implementation in Vietnam. Second, to implement the IQA system effectively, academic programs engage stakeholders such as alumni (Pham & Doan, 2020), enterprises (Tran, 2020), and students (Nguyen, Tran et al., 2020; Trinh, 2020) to provide more feedback to institutions through surveys on program outcomes, course outcomes, and academic program support services. In addition to surveys, Vietnamese academic programs commonly use course grades and GPA as the major instruments to demonstrate evidence of student learning. Assessment of PLOs is still a new approach to providing evidence of student learning in the Vietnamese context.

The most important step in the IQA system for academic programs is the use of assessment results to improve program quality. Both MOET policy and programmatic accreditation require academic programs to make continuous improvements. Feedback from employer and alumni surveys has been used to update PLOs and curricula, and feedback from student surveys has been used to improve teaching and student support (Nguyen, 2020; Nguyen, Bui et al., 2020). However, Cao (2020) and Vo (2020) argued that institutions did not have a system to monitor the use of assessment results for quality improvement. Most Vietnamese IQA research has consisted of single case studies sharing experiences of implementing one IQA component of academic programs. There appears to be no research on the national IQA system of academic programs in Vietnamese higher education that shows how the system has progressed over the past 20 years. To fill this gap, this paper examines how the IQA system of academic programs is implemented across different types of Vietnamese HEIs.

Literature Review

IQA Infrastructure of Academic Programs Around the World

IQA of academic programs is a central objective for HEIs across the world. In UNESCO's analysis of eight case studies of IQA implementation worldwide, the universities described three major IQA components: structure, instruments, and assessment. The IQA structure included official guidance to promote IQA activities across the institution, such as quality assurance manuals (Kuria & Marwa, 2017; Lamagna et al., 2017), quality handbooks/manuals (AlHamad & Aladwan, 2017; Mcghee, 2021), a quality assurance policy (Chu & Westerheijden, 2018), and a quality assurance framework (Daguang et al., 2017; Lange & Kriel, 2017; Vettori et al., 2017). Although the institutions named these documents differently, the handbooks/manuals mainly covered the definition of quality or QA, the principles of quality and QA, and how the institutions integrated the requirements of quality, QA, and accreditation standards with national, regional, and international trends in higher education, such as the INQAAHE Guidelines of Good Practice (International Network for Quality Assurance Agencies in Higher Education [INQAAHE], 2018).

QA offices and QA committees were the second component of the IQA structure at the institution, college, and department levels. The institutions gave their QA offices different names, such as the Center of Higher Education Development and Quality Enhancement (Ganseuer & Pistor, 2017), the Center for Quality Assurance (CQA) (Lange & Kriel, 2017), institutional research (IR) and academic planning (Lange & Kriel, 2017), or the Quality Assurance and Accreditation Center (AlHamad & Aladwan, 2017). Similarly, US institutions name these offices according to their specific responsibilities. Normally, the IR office supports institutional decision-making with quantitative evidence, while the office of assessment is in charge of IQA activities. To facilitate IQA activities, a good practice is to establish an IQA committee responsible for IQA tasks such as providing feedback on IQA activities and discussing IQA results with multiple stakeholders. The committee should include administrators, faculty, and staff from across the institution. Notably, the UNESCO cases emphasized that institutional and specialized accreditation are the external drivers determining the effectiveness of the IQA system. Similarly, research by Jankowski et al. (2018) based on provost surveys revealed that accreditation was a significant driver for providing accountability evidence. Ultimately, however, the purpose of IQA activities is to foster quality improvement.

Regarding building an IQA culture, Martin (2018) indicated that adequate financial incentives and leadership support better engaged administrative staff and lecturers in the IQA process. Teacher engagement in IQA activities and teacher leadership in the IQA committee also contributed to effective IQA activities.

The ASEAN University Network - Quality Assurance (AUN-QA) manual proposed a quality model for an IQA system to improve higher education quality. AUN-QA also suggested clarifying the definition of IQA, choosing appropriate IQA instruments, embedding institutions’ strategies in the instruments of choice, and tuning into external requirements. These recommendations aligned with the UNESCO research findings. According to their proposal, four elements of an IQA system are the monitoring instruments, the evaluation instruments, the IQA processes for specific activities and specific IQA instruments (AUN-QA, 2020) (Figure 1). Monitoring instruments are used to keep track of student success, such as student progress, pass/dropout rate, and feedback from employers and alumni. The suggested evaluation instruments are student evaluations, course evaluations, and curriculum evaluations. AUN-QA monitoring and evaluation instruments shared similarities with UNESCO findings in relation to IQA for teaching and learning.

Figure 1. Quality model for IQA (AUN-QA, 2020, p. 9).

IQA Instruments of Academic Programs

To promote IQA for academic programs, institutions need to choose suitable IQA instruments. Martin's (2018) analysis of the UNESCO research revealed that good IQA instruments for teaching and learning include course and program evaluations through stakeholder satisfaction surveys, in-depth interviews with stakeholders such as students, alumni, and employers, and job market analyses. In the UNESCO research, the institutions did not specify whether the surveys were local or national. In the US, to obtain reliable outcome assessment results that can be benchmarked, institutions use both national exams administered by private testing centers and local assessments collected by faculty within the academic program. Examples of direct national exams of learning outcomes in general education programs are the General Education Assessment (GE), the Collegiate Learning Assessment (CLA+), and the Collegiate Assessment of Academic Proficiency (CAAP) (Pham, 2016). One popular indirect assessment that most US universities use to triangulate with direct assessment measures is the National Survey of Student Engagement (NSSE), administered by Indiana University; a comparable instrument in Australia is the Student Experience Survey (SES). UNESCO's eight case study institutions evaluated their academic programs through student workload assessments, course evaluations, program evaluations, and supervision of teachers. Tracer studies of graduates, employer satisfaction surveys, and labor market analyses are common tools for assessing program employability. Notably, in both teaching and learning and employability, the institutions used multiple tools to triangulate IQA results, an approach that aligns with the recommendations of US assessment experts.

In addition, Suskie (2009) advises that when multiple IQA instruments are used, direct measures of student performance, such as assignments, quizzes, or portfolios, should be prioritized and supplemented with indirect measures, such as student perceptions in surveys, to provide evidence of student learning.
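The triangulation principle described above can be illustrated with a short Python sketch. It is a hypothetical example, not a procedure taken from UNESCO, Suskie (2009), or this study: the outcome codes, the proportions, and the 0.15 disagreement threshold are invented for illustration. Direct evidence is treated as primary, indirect evidence is used only to corroborate it, and an outcome supported only by survey data is flagged.

```python
# Hypothetical sketch: compare direct and indirect evidence per learning outcome.
# Values are shares of students (0.0-1.0); outcome codes and threshold are invented.


def triangulate(direct: dict[str, float],
                indirect: dict[str, float],
                max_gap: float = 0.15) -> dict[str, str]:
    """Return a status for each outcome, treating direct evidence as primary."""
    report = {}
    for outcome in sorted(set(direct) | set(indirect)):
        d = direct.get(outcome)
        i = indirect.get(outcome)
        if d is None:
            # Only survey-type (indirect) evidence exists for this outcome.
            report[outcome] = "indirect only: collect direct evidence"
        elif i is None:
            report[outcome] = "direct only"
        elif abs(d - i) > max_gap:
            # Students' perceptions diverge from measured performance.
            report[outcome] = "measures disagree: investigate"
        else:
            report[outcome] = "measures agree"
    return report


if __name__ == "__main__":
    direct = {"PLO1": 0.62, "PLO2": 0.80}                  # e.g. scored assignments
    indirect = {"PLO1": 0.91, "PLO2": 0.85, "PLO3": 0.90}  # e.g. survey self-ratings
    for outcome, status in triangulate(direct, indirect).items():
        print(outcome, status)
```

With these invented figures, PLO1 is flagged because students rate themselves far higher than their measured performance, and PLO3 is flagged because it has only survey evidence. Both cases illustrate why indirect measures serve as a supplement rather than a substitute.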

Methods

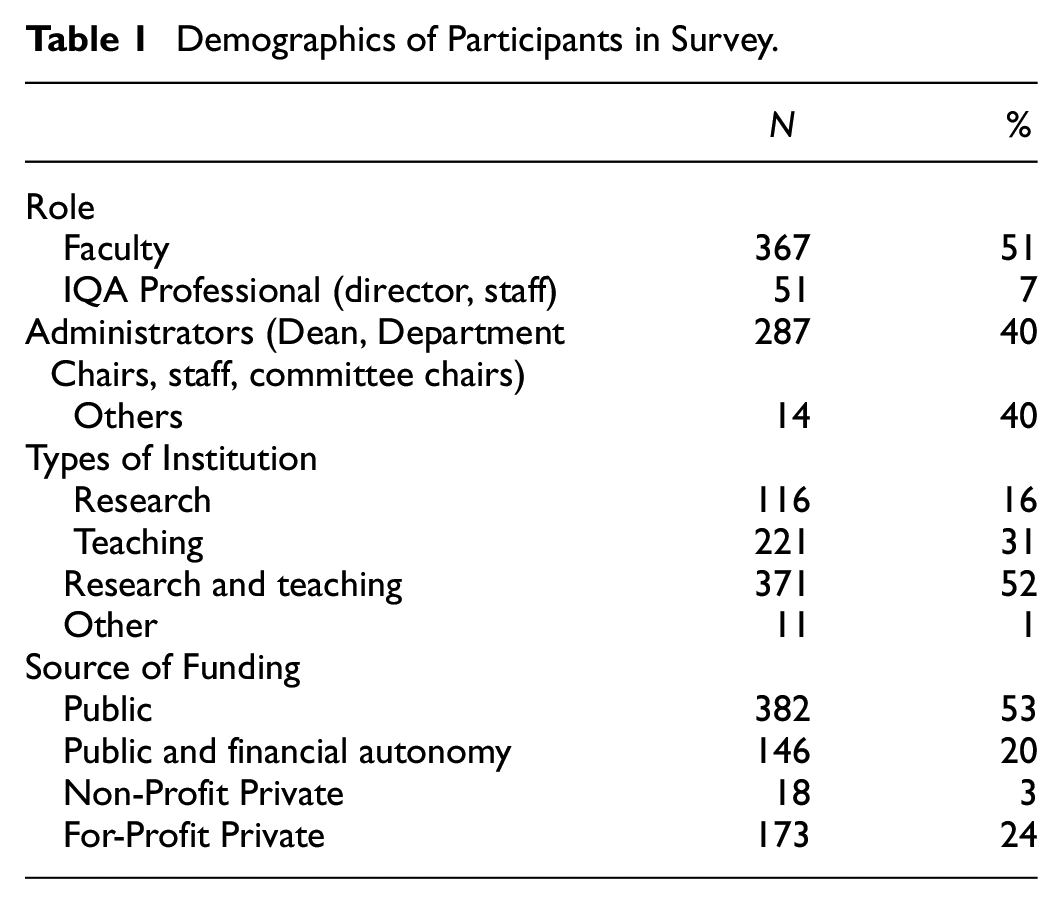

This research used both quantitative and qualitative approaches to collect data, with a survey and interviews as the two primary data collection tools. The survey used items from the international survey of IQA administered by the UNESCO International Institute for Educational Planning (IIEP) (Martin & Parikh, 2017). The researchers contacted the authors and obtained permission to adapt the survey items for research purposes. Members of the research group translated the survey, and other members reviewed the translation for accuracy. The survey was then distributed via Google Forms and on paper to Vietnamese universities in the three main geographic regions of Vietnam (the North, the Central region, and the South). The researchers received 736 responses, some of which were incomplete. Because the incomplete responses made up less than 5% of all responses received (21 of 736), they were deleted (see Table 1), leaving 715 responses for analysis. The researchers checked the internal consistency reliability of the IQA infrastructure and IQA instruments sections of the survey; Cronbach's alpha was .78 and .76, respectively, both above the commonly accepted threshold of .70.
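The two data checks reported in this paragraph can be reproduced with a short Python sketch. The share of incomplete responses follows directly from the reported counts (736 received, 715 retained, so 21 deleted). Cronbach's alpha uses the standard formula alpha = k/(k - 1) * (1 - sum of item variances / variance of total scores), where k is the number of items in a scale. The study's item-level data are not reproduced here, so the ratings below are invented, and the functions are an illustration rather than the authors' analysis script.

```python
from statistics import variance


def incomplete_share(received: int, retained: int) -> float:
    """Proportion of received responses that were dropped as incomplete."""
    return (received - retained) / received


def cronbach_alpha(items: list[list[float]]) -> float:
    """Cronbach's alpha for one scale.

    `items` holds one list of scores per item, each in the same respondent
    order. Sample variances are used throughout, so their n-1 denominators
    cancel in the variance ratio.
    """
    k = len(items)
    if k < 2:
        raise ValueError("alpha needs at least two items")
    n = len(items[0])
    if any(len(item) != n for item in items):
        raise ValueError("every item needs a score from every respondent")
    # Each respondent's total score across all items of the scale.
    totals = [sum(item[r] for item in items) for r in range(n)]
    total_var = variance(totals)
    if total_var == 0:
        raise ValueError("total scores have zero variance")
    item_var_sum = sum(variance(item) for item in items)
    return (k / (k - 1)) * (1 - item_var_sum / total_var)


if __name__ == "__main__":
    share = incomplete_share(736, 715)
    print(f"incomplete: {share:.1%}, under 5%: {share < 0.05}")

    # Invented 5-point ratings: 3 items x 5 respondents.
    toy_items = [
        [3, 4, 5, 2, 4],
        [2, 4, 5, 3, 4],
        [3, 5, 4, 2, 5],
    ]
    print(f"alpha = {cronbach_alpha(toy_items):.2f}")
```

With the reported counts, 21 of 736 responses (about 2.9%) were dropped, which is below the 5% cut-off. The invented toy ratings give an alpha of about 0.89. A real check would apply the function separately to the items of each scale, here IQA infrastructure and IQA instruments.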

Table 1. Demographics of Participants in Survey.

This research sought to answer two questions:

What IQA infrastructure are academic programs in Vietnamese HEIs building?

What IQA instruments are academic programs in Vietnamese HEIs implementing?

The researchers also interviewed 46 participants: 3 provosts/vice provosts, 9 directors/vice directors of QA, 7 deans/vice deans, 8 QA staff, 3 independent experts, 11 students, and 5 faculty members. Interviews lasted 30 minutes on average and were recorded and transcribed by the researchers. Thematic analysis was the principal method used to analyze the interview transcripts (Creswell, 2012). In addition, peer debriefing was used to ensure the trustworthiness and credibility of the qualitative analysis (Janesick, 2015). Specifically, an independent researcher who was experienced in qualitative research and bilingual in Vietnamese and English was invited to review the interview transcripts, coding process, and final themes. This peer debriefer also checked the translations of quotations from Vietnamese into English.

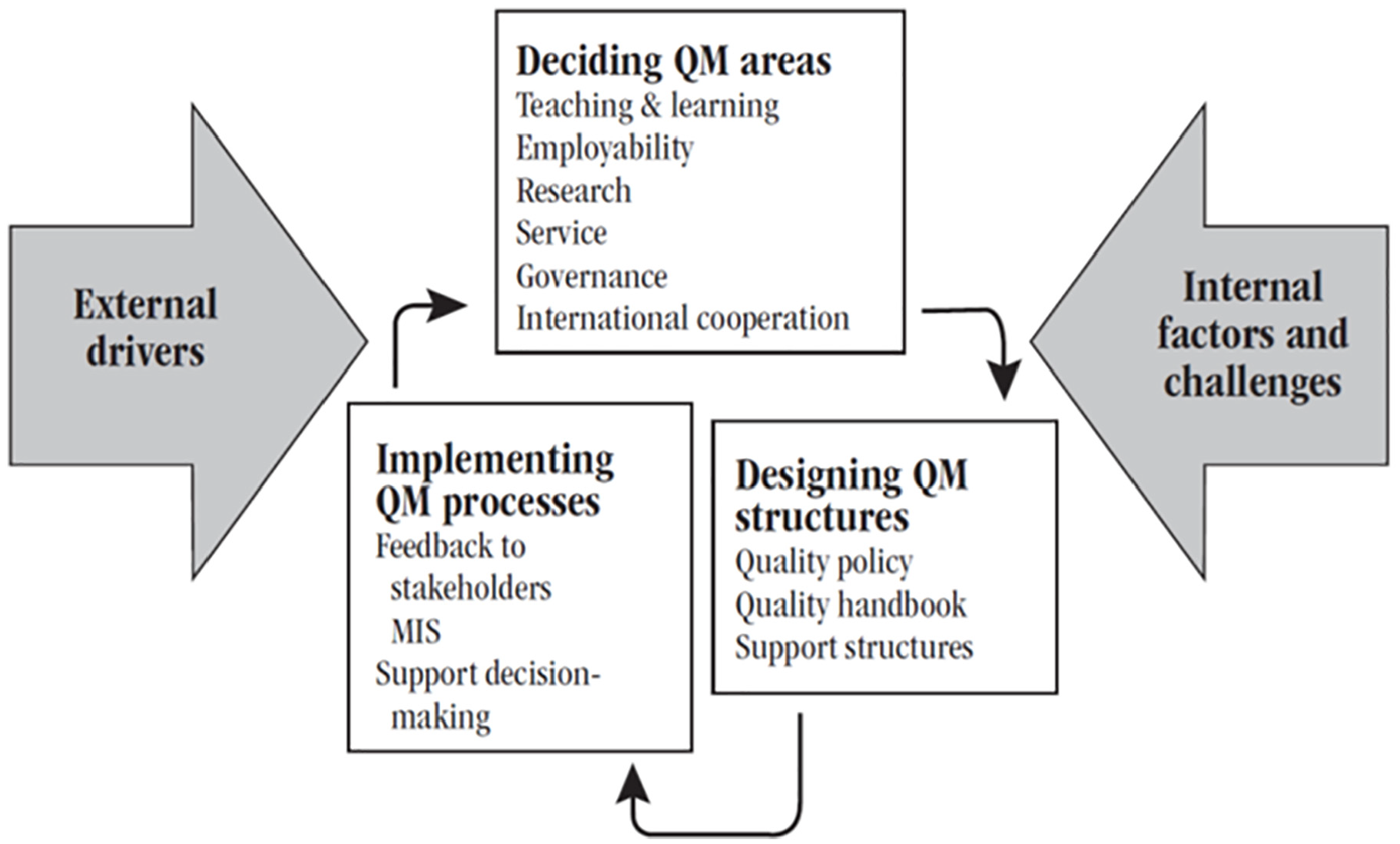

The systemic view of quality management (QM) by Martin and Parikh (2017) was the conceptual framework for this research (Figure 2). Within this framework, the study focused only on teaching and learning among the QM areas.

Figure 2. A systemic view of quality management for the survey (Martin & Parikh, 2017, p. 20).

Results

IQA Infrastructure of Academic Programs

Most HEIs in Vietnam have a fundamental infrastructure for conducting IQA activities, such as an established IQA model, a handbook for conducting IQA activities, and IQA committees responsible for IQA activities. Most participants identified IQA models based on MOET Circular 07/2017 on academic programs, national and regional programmatic accreditation, and ISO 9000:2015. Participants from HEIs with financial autonomy said their institutions used ISO 9000:2015 as an additional procedure to manage IQA activities across the whole institution, since their objectives were not only to meet accreditation requirements but also to compete in international rankings. QA staff from these institutions showed commitment to the ISO-based organizational procedure, but some faculty members indicated that the ISO procedure was applied institution-wide and left little flexibility in implementation to accommodate differences among programs. One faculty member from this type of institution also noted that each department and academic program had disciplinary differences and suggested that institutions give departments some flexibility so that they could implement quality procedures successfully.

One QA director from a public HEI participating in an innovative national project in a specialized discipline emphasized that the institution also used ISO to meet stakeholders' accountability requirements for funding. Half of the QA staff and directors indicated that they simply followed MOET policy to "check off the list." All participants mentioned programmatic accreditation as a major driver in building IQA models. However, some QA staff, QA directors, and chairs commented that their institutions did not have a consistent IQA system for the whole institution. Institutions tended to have different IQA models for different programs, following different types of programmatic accreditation, such as international programmatic accreditation (AACSB, ABET, ASIIN, and FIBAA), regional accreditation (AUN-QA), and national accreditation. Academic programs that did not plan to pursue programmatic accreditation met only MOET's requirements rather than going through a formal IQA process.

To support the IQA infrastructure, QA offices often have a quality policy statement and a strategic document to guide present and future decisions on quality issues (Martin, 2018). In this study's survey, 50% of participants chose "A quality policy statement is very important to my institution," while the other 50% indicated that it was less important or not important to their institution. To support the implementation of the IQA process, a QA office often develops a QA handbook. Almost 90% of participants indicated "my institution has an institutional quality management handbook," and 86% stated "the practical activities of QM are clearly described in other institutional documents." Meanwhile, 80% of participants checked "Yes" for "we are developing an institutional quality management handbook" (see Table 2). The interviews provided additional information. Institutions whose QA office had operated for 5 years or more had largely implemented IQA activities. One QA director shared, "we used the guidelines in the handbook to improve the IQA activities for institutions annually." Institutions whose QA office had operated for 3 to 5 years agreed that it was important to have a handbook for IQA activities but recommended expert consultation to develop it. Institutions whose QA office had operated for less than 3 years did not have an IQA system for all academic programs and did not have a handbook. These newer offices mostly focused on meeting MOET's basic criteria for opening an academic program.

Table 2. Survey Responses Regarding IQA Handbook.

Information from the interviews reinforced the survey results. When asked about the functions of QA committees, most QA staff and directors said that their institutions had such a committee in place but that it did not function regularly; in practice, the QA office was in charge of IQA activities. When asked about their engagement in the QA committee, faculty members were not aware that it existed.

To facilitate IQA activities, in addition to a quality policy, most institutions had developed a technology system to store data institution-wide. Participants shared their experiences of collecting data for quality assurance activities. Some universities commissioned a technology company to develop a local system to store data, primarily from course evaluation surveys, employer surveys, and faculty surveys. Students had to log in to the online survey with their ID and complete it before they could see their grades and register for another class. Faculty members could also log in to see the survey results in the system. The local system was also used for accreditation purposes. If a university participated in different types of programmatic accreditation, it set up other systems to store data for those specific purposes. Three QA directors commented that the system was structured to give different access privileges to academic staff in different roles. For example, deans said they had privileges to log in to different programs, while faculty members could only log in to their own program. However, QA staff argued that the technology functioned mainly for data storage and was not linked with other offices' systems, so it could not export multiple data files. One QA staff member explained, "whenever we needed data for self-study accreditation report, the staff 'ran around' to ask for data from different offices, so it was not convenient at all." In addition, the system could not export data analyses to facilitate communication with university committees. At emerging QA offices with less than 3 years of operation, participants indicated limited use of technology for IQA activities.
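The role-based access that the QA directors described, where deans can open several programs and faculty members only their own, can be sketched as a simple permission check. This is a hypothetical Python illustration. The account structure, role names, and program codes are invented, and the sketch does not describe the design of any system used by the participating universities.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Account:
    """Hypothetical user account in an institution's IQA data system."""
    username: str
    role: str                 # "dean" or "faculty" in this sketch
    programs: frozenset[str]  # program codes the account is attached to


def can_view(account: Account, program: str) -> bool:
    """Return True if the account may open IQA data for `program`."""
    if account.role == "faculty":
        # Faculty members are tied to exactly one program: their own.
        if len(account.programs) != 1:
            raise ValueError("a faculty account must belong to one program")
        return program in account.programs
    if account.role == "dean":
        # Deans may open every program attached to their account.
        return program in account.programs
    return False  # unknown roles get no access by default


if __name__ == "__main__":
    dean = Account("dean_fba", "dean", frozenset({"BBA", "ACC", "FIN"}))
    lecturer = Account("lecturer_01", "faculty", frozenset({"ACC"}))
    print(can_view(dean, "FIN"), can_view(lecturer, "FIN"))
```

Denying unknown roles by default keeps the sketch conservative. A real system would also need roles for QA staff and other offices, which the interviews did not describe in detail.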

IQA Instruments of Academic Programs

Data from the survey indicated that course evaluations by students and student satisfaction surveys were the two most popular IQA instruments used to collect data for program evaluation (see Table 3). Of the seven IQA instruments in the survey, student workload assessment was reported as the least frequently used. Interview data also reinforced this result. QA administrators in the interviews shared strategies for collecting reliable survey data, such as asking for feedback before courses ended and requiring students to complete the survey in order to see their grades and register for a new course. For paper surveys, QA staff used 5 to 10 minutes of class time to collect information. QA staff commented that brief online surveys received a higher response rate than paper ones and made it easier to export the data as evidence for internal stakeholders. Students in the interviews also found it more convenient to complete the survey online. Faculty members were all aware of the importance of surveys in IQA activities and encouraged students to participate.

Table 3. Survey Responses on IQA Instruments.

To improve program learning outcomes and curricula, universities often conducted surveys to gather feedback from employers, alumni, students, and faculty members. However, participants described several challenges. Five QA staff and directors complained that although the university received feedback from the surveys, the process did not work systematically across the whole university. QA staff pointed out the difficulty of engaging students and faculty members in the process, since these groups did not see its value. Some QA directors said that to get a higher response rate, they sometimes had to remind faculty members to participate in the IQA process, since participation was included in the faculty annual report. In the interviews, students and faculty members agreed that the universities often asked for their feedback through course and program evaluations. Faculty members at private universities shared that, since their departments held regular meetings, they completed the survey during those meetings. Another QA staff member shared, "University also asked for employers and alumni feedback, but the data is not big and representative." Deans and faculty members added that they often asked for employers' feedback when sending senior students to companies for field trips. Most participants stated that universities did not have a formal process for getting regular input from stakeholders; universities solicited feedback only when they needed evidence for programmatic accreditation. Faculty members commented that departments had no specific funding for stakeholder engagement, which made it challenging to collect regular and representative feedback from employers and alumni to improve academic programs. Students knew about the course evaluation and academic support service surveys, which they completed every semester, 2 weeks before the final exam; students who did not complete them could not see their course grades.

Some students said the survey was too long and that they simply checked "very good" for the survey items to unlock the registration function in the system. They liked the convenience of the online survey but suggested shortening it. QA staff noted that they received a higher response rate after switching to online surveys.
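The gating rule that participants described, where grades and course registration stay locked until the student submits the course evaluation, can be sketched as follows, together with one simple screen for the "very good for every item" pattern that some students admitted to. Both functions are hypothetical Python illustrations. The study does not report that any institution used such a screen, and the course codes and ratings are invented.

```python
def pending_evaluations(enrolled: set[str], submitted: set[str]) -> set[str]:
    """Courses the student still has to evaluate this term."""
    return enrolled - submitted


def grades_unlocked(enrolled: set[str], submitted: set[str]) -> bool:
    """Grades and registration open only when no evaluation is pending."""
    return not pending_evaluations(enrolled, submitted)


def is_straightlined(ratings: list[int]) -> bool:
    """Flag a response that gives the same rating to every item.

    Such responses may come from students clicking through to unlock the
    system, so they could be reviewed or reported separately.
    """
    return len(ratings) > 1 and len(set(ratings)) == 1


if __name__ == "__main__":
    enrolled = {"ECON201", "MATH101"}
    print(grades_unlocked(enrolled, {"MATH101"}))  # ECON201 is still pending
    print(is_straightlined([5, 5, 5, 5, 5, 5]))
```

A uniform response is not proof of careless answering, since a satisfied student may rate everything highly. A flag like this would therefore prompt a closer look rather than automatic deletion.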

Course grades and GPA were the two major IQA instruments that academic programs in Vietnam used to provide evidence of student learning to stakeholders. Almost 90% of survey participants checked "Yes" for program outcomes assessment. However, when the researchers explained the procedures for collecting program outcomes assessment data during the interviews, all interviewees thought their institutions had not implemented this process to collect evidence of student learning at the program level. Some QA staff and directors said they would like to collect these data to provide evidence of student learning, since this was an updated requirement of the AUN-QA programmatic assessment, version 4.0. A QA director from a regional university commented, "if the programs assessed the program outcomes, it would have a significant impact on the continuous quality improvement of academic programs." QA staff and directors added that testing was a major function of the QA office and created a heavy workload for the office. These participants argued that the current tests did not match the program learning outcomes, so it was challenging to collect outcome assessment data as evidence of student learning for the whole program. Some faculty members shared similar opinions about testing. Institutions required faculty members to state program learning outcomes in the course syllabus and to demonstrate alignment among program and course outcomes, teaching materials, and assessment measures. However, faculty members were not sure whether the final test matched any program learning outcome.
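The gap the interviewees described, between a course grade and evidence for each program learning outcome, can be illustrated with a short Python sketch. It assumes that each assessment item is tagged with the PLOs it is meant to measure, which amounts to a curriculum map. Under that assumption, item scores can be pooled per PLO instead of being collapsed into one grade, and PLOs with no aligned item become visible. The item names, point values, and 70% target are hypothetical.

```python
from collections import defaultdict


def plo_attainment(item_map: dict[str, set[str]],
                   scores: dict[str, dict[str, float]],
                   max_points: dict[str, float],
                   target: float = 0.7) -> dict[str, float]:
    """Share of students who reach `target` on the items aligned to each PLO.

    item_map:   assessment item -> PLO codes it is aligned to
    scores:     student -> {item: points earned}; missing items count as 0
    max_points: assessment item -> maximum points available
    """
    if not scores:
        raise ValueError("no student scores supplied")
    items_per_plo = defaultdict(list)
    for item, plos in item_map.items():
        for plo in plos:
            items_per_plo[plo].append(item)

    attainment = {}
    for plo, items in items_per_plo.items():
        possible = sum(max_points[i] for i in items)
        met = sum(
            1 for earned in scores.values()
            if sum(earned.get(i, 0) for i in items) / possible >= target
        )
        attainment[plo] = met / len(scores)
    return attainment


def unassessed_plos(plos: set[str], item_map: dict[str, set[str]]) -> set[str]:
    """PLOs that no assessment item is aligned to."""
    return plos - set().union(*item_map.values())


if __name__ == "__main__":
    item_map = {"Q1": {"PLO1"}, "Q2": {"PLO1", "PLO2"}, "Q3": {"PLO2"}}
    max_points = {"Q1": 10, "Q2": 10, "Q3": 10}
    scores = {
        "s1": {"Q1": 9, "Q2": 8, "Q3": 7},
        "s2": {"Q1": 5, "Q2": 6, "Q3": 9},
    }
    print(plo_attainment(item_map, scores, max_points))
    print(unassessed_plos({"PLO1", "PLO2", "PLO3"}, item_map))
```

Unlike a single course grade, this output reports attainment separately for each PLO and exposes PLO3 as unassessed. This is the kind of program-level evidence that the interviewees said their institutions were not yet collecting.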

Discussion

IQA Infrastructure of Academic Programs

This research provides a broad picture of the IQA system for academic programs in Vietnamese HEIs, using an approach similar to Martin's (2018) eight IQA case studies around the world. Most Vietnamese HEIs had developed a fundamental infrastructure for conducting IQA activities, such as setting up a QA office (Nguyen, Tran et al., 2020), having an IQA handbook to guide IQA activities, and developing an IQA model to meet external requirements (Cao, 2020; Nguyen, 2020). These practices aligned with research findings on international experiences of implementing IQA systems (Martin & Parikh, 2017). Depending on the maturity of the QA office at each institution, the IQA models were based on the MOET policy on academic programs; national, regional, and international accreditation (AUN, 2020); and ISO 9000:2015 (Huynh & Nguyen, 2020). The Vietnamese institutions that used ISO as an additional IQA model mostly had financial autonomy or needed to provide more accountability information to receive project funding from stakeholders. The findings confirmed that IQA models following MOET policy and ISO mainly focused on inputs and processes, so these IQA systems were largely compliance-based. Meanwhile, IQA models based on accreditation not only met compliance requirements but also encouraged academic programs to use IQA results for quality improvement. These accreditation-based IQA systems in Vietnamese HEIs are aligned with AUN-QA and UNESCO quality management practices. However, this study found that Vietnamese HEIs had not implemented some elements of IQA infrastructure as effectively as international studies suggest, such as a quality assurance policy (Kuria & Marwa, 2017) and an IQA manual/handbook (AlHamad & Aladwan, 2017; Ganseuer & Pistor, 2017).

International experience has demonstrated that an IQA committee can make a significant contribution to overseeing institutional IQA activities and thus to implementing IQA infrastructure more effectively (Jankowski et al., 2018; Martin, 2018). However, this study shows that the role of the IQA committee in the IQA process in Vietnam is still limited.

Technology can play a significant role in facilitating IQA implementation (Martin, 2018). In this study's interviews, all participants mentioned that their institution had a system to store IQA data for the whole institution. However, they also noted that the system was not connected across offices and had limited functions for exporting analyzed information to communicate IQA results to internal and external stakeholders. Upgrading the system to fully meet institutional needs would require more resources, which can be a major challenge for IQA activities. Internationally, one trend has been to use an external assessment management system (AMS), paid for through an annual membership, to collect and analyze IQA data (Pham, 2021). This practice reduces the institution's own investment in IQA technology while giving it access to the latest and best version of the AMS to support IQA activities.

IQA Instruments of Academic Programs

Data from the quantitative survey on IQA instruments showed that surveys were the most commonly used IQA tool for collecting data from stakeholders (e.g., employers, alumni, students, and faculty) for program evaluation and program outcomes assessment in Vietnamese HEIs. These indirect measures, surveys and program evaluations, are also used in many other institutions worldwide (Kuh et al., 2015; Martin, 2018). However, Vietnamese HEIs did not use other, direct IQA instruments (Huynh & Nguyen, 2020; Martin, 2018; Nguyen, 2020; Trinh, 2020). Even though surveys were frequently used in IQA activities, they were locally developed, and there was no way to check the reliability of the tools or their administration. When the researchers asked interviewees how the reliability of this information was established, few QA staff addressed the matter, and faculty members and deans were unaware of it. To facilitate internal and external IQA benchmarking, institutions in Europe (Martin, 2018) and the US (Kuh et al., 2015) also used highly reliable national surveys to provide evidence of student learning to stakeholders. Kuh et al. (2015) emphasized that higher education leaders responded to feedback from national surveys more quickly and effectively than to feedback from local surveys, since national survey results were used not only for internal quality improvement but also for external benchmarking. A national survey with demonstrated reliability has yet to be developed as an IQA instrument for Vietnamese HEIs.

In addition to surveys, participants reported program outcomes assessment, a direct instrument, as one of the instruments most commonly used to evaluate the quality of academic programs; 80% of survey participants chose it. However, the interviews revealed that course grades and GPA were being treated as program outcomes assessment. By contrast, international practice follows a distinct process that may include choosing appropriate assessment measures, collecting data on student learning across the whole program, and, most importantly, using the assessment results for continuous quality improvement (Allen, 2004). Program outcomes assessment is still new in Vietnam (Dinh & Tran, 2020). Some institutions made efforts to follow international practices and provided training for QA staff, middle administrators such as deans and program directors, and faculty members, but implementation was still at the pilot stage. This result indicates that most academic programs used indirect IQA instruments to evaluate programs. This finding is consistent with the fact that most Vietnamese HEIs developed their IQA systems based on MOET Circular 07/2017/TT-BGDĐT, which only required institutions to declare program learning outcomes and did not address conducting assessment or using program assessment results for continuous quality improvement (Dinh & Tran, 2020; Nguyen, 2020). Therefore, academic programs in Vietnam should consider using multiple assessment measures, both direct and indirect, to triangulate evidence and strengthen the IQA system for academic programs (Suskie, 2009).

Conclusion

For the past 20 years, IQA activities have received much attention from administrators and leaders and have been addressed in the Higher Education Act and in national policy in Vietnam. Each year, many case studies are conducted to share experiences of implementing IQA activities. However, these single case studies have mostly addressed one specific component of the IQA system and therefore have not provided a big picture of IQA in academic programs nationwide. This paper has examined the IQA system of academic programs implemented across different types of institutions in Vietnam. The findings confirmed that Vietnamese HEIs had built a fundamental infrastructure, including IQA models, quality management policies, quality assurance handbooks, a technology system, and an IQA committee, to implement IQA activities. However, more action is needed to engage a wider range of stakeholders in the IQA committee, especially students and employers. It is time for Vietnamese HEIs to consider adopting an external AMS to support IQA activities, since the current local technology systems do not work effectively. Regarding IQA instruments, most academic programs in HEIs use surveys as an indirect IQA instrument to collect data for the IQA process. However, if the IQA process relies mainly on indirect instruments, it will not provide administrators with reliable data for improving teaching and learning in academic programs.

Based on the key findings of this research, the researchers have drawn several implications for improving the IQA of academic programs in Vietnamese HEIs. First, the effectiveness of IQA committees should be increased by encouraging multiple internal and external stakeholders to take part in them. This would help avoid centralizing the IQA system in the QA office. Second, Vietnamese HEIs with strong technological capacity could consider developing an AMS that meets the requirements of the Vietnamese higher education context and selling it to other institutions to support IQA data storage. Third, more direct IQA instruments should be used in the IQA process to guide the improvement of teaching and learning in academic programs. More training and workshops on program outcomes assessment should also be provided to encourage academic programs to use multiple IQA instruments, so that they can triangulate and provide better evidence of student learning in the IQA process. In addition to local surveys, national surveys should be developed, and HEIs should be encouraged to integrate them into the IQA process. A national IQA instrument would enable HEIs to conduct external benchmarking and, as an indicator, to provide stakeholders with more accountability information. Most importantly, more consultation should be offered to HEIs on developing a systematic IQA model focused on continuous quality improvement for all academic programs. Because IQA infrastructure and instruments are the first two fundamental steps in the IQA process, strengthening them paves the way toward continuous quality improvement.

This study has certain limitations regarding the sample of respondents. The sample may not be representative of the types and numbers of institutions in Vietnam; therefore, the results may not be generalizable to all HEIs in the country. Nevertheless, this research provides a case study from a developing country that has made efforts to evaluate its current IQA system and to use the results to improve IQA activities nationally. The research findings and recommendations are valuable to international educators, especially those in developing countries seeking to strengthen their IQA systems. Future research should examine good practices for engaging stakeholders in IQA committees, experiences in developing and administering a national IQA survey and an AMS, and approaches to program outcomes assessment that provide more reliable results for continuous quality improvement.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research is funded by Vietnam National Foundation for Science and Technology Development (NAFOSTED) under grant number 503.01-2019.305.