Abstract

Although numerous mental health applications (apps) are available to the public, the process behind their development is unclear. Many researchers doubt the validity of the mental health content in existing apps and have criticized the lack of evidence-based content regarding the targeted mental health issues, such as trauma, anxiety, and depression. The purpose of this study is to systematically describe the entire process of designing, developing, and evaluating a mental health app in South Korea. Using an instructional systems model, we describe the entire mental health app design and development process through five phases: analysis, design, development, implementation, and evaluation (ADDIE). Within those phases, we conducted expert reviews and usability tests and examined whether the mental health app helped to alleviate users’ emotional and psychological symptoms. Overall, participants were highly satisfied with the mental health app, which was designed for and tested with users in low- and medium-risk conditions. Our mental health app demonstrated its potential to cultivate mental health literacy and reach a large Korean audience. Further implications are discussed.

Mobile applications (apps) are widely available to the public either free or for a small charge (Lorca-Cabrera et al., 2021; Torous et al., 2018). According to Torous and Roberts (2017), more than 10,000 mental health apps are available for immediate download from iTunes and Google Play. The number of mental health apps was expected to exceed 20,000 in 2021. Because of their potential to reach the public, mental health apps are of great interest to researchers and practitioners (Inal et al., 2020; Kuhn et al., 2014; Ng et al., 2019).

Mental health apps offer several advantages. First, they can support early intervention. When in-person counseling is unavailable or takes too long to obtain, mental health apps can serve as an alternative before individuals develop severe symptoms (Greeson et al., 2020; Lorca-Cabrera et al., 2021). Leigh and Flatt (2015) found that not everyone in the UK has access to in-person services because of long waiting lists, and they proposed that mental health apps be used to provide immediate assistance to people in need. Second, the global accessibility of mobile apps has improved significantly (Inal et al., 2020; Taylor & Silver, 2019). According to Taylor and Silver (2019), smartphone ownership is growing rapidly around the world. For example, 81% of adults (≥18 years old) in the US owned smartphones in 2018, which is 35% more of the population than owned smartphones in 2011. In addition, 95% of teens (ages 13–17) in the US have smartphone access (Anderson & Jiang, 2018). Those reports indicate that mental health apps on smartphones are highly accessible to the public. Third, mobile apps can reach a diverse audience, particularly vulnerable populations such as young adolescents (Greeson et al., 2020). Because many people carry a mobile phone and use mobile apps in public places, individuals can use mental health apps inconspicuously without drawing unwanted attention (Kuhn et al., 2014). Fourth, mental health apps are cost-effective (Anastasiadou et al., 2019; Luxton et al., 2014; Olff, 2015). Olff (2015) argued that mental health apps can reduce waiting lists and reach more people globally than traditional care. Luxton et al. (2014) compared the costs of a stress reduction app and in-person care and found that at 1,000 sessions, in-person care was more cost-effective than the app because of the app’s high initial development cost. Beyond 1,600 sessions, however, the app became more cost-effective.
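The break-even logic behind the Luxton et al. (2014) comparison can be sketched in a few lines of Python. The cost figures below are hypothetical placeholders chosen only to illustrate the arithmetic; they are not the values reported by Luxton et al. The sketch assumes a simple model in which the app has a one-time development cost plus a small per-session cost, whereas in-person care has only a per-session cost.

```python
def break_even_sessions(app_fixed_cost: float,
                        app_cost_per_session: float,
                        in_person_cost_per_session: float) -> float:
    """Return the session count at which total app cost equals total in-person cost.

    Assumed model: app total = fixed cost + per-session cost * n;
    in-person total = per-session cost * n.
    """
    saving_per_session = in_person_cost_per_session - app_cost_per_session
    if saving_per_session <= 0:
        raise ValueError("the app never breaks even unless each session is cheaper than in-person care")
    return app_fixed_cost / saving_per_session


def cheaper_option(n_sessions: int,
                   app_fixed_cost: float,
                   app_cost_per_session: float,
                   in_person_cost_per_session: float) -> str:
    """Compare total costs of the two options for a given number of sessions."""
    app_total = app_fixed_cost + app_cost_per_session * n_sessions
    in_person_total = in_person_cost_per_session * n_sessions
    return "app" if app_total < in_person_total else "in-person"


# Hypothetical inputs for illustration only (not Luxton et al.'s figures).
FIXED, APP_PER_SESSION, IN_PERSON_PER_SESSION = 120_000, 20, 100

print(break_even_sessions(FIXED, APP_PER_SESSION, IN_PERSON_PER_SESSION))  # 1500.0
print(cheaper_option(1_000, FIXED, APP_PER_SESSION, IN_PERSON_PER_SESSION))  # in-person
print(cheaper_option(1_600, FIXED, APP_PER_SESSION, IN_PERSON_PER_SESSION))  # app
```

With these illustrative numbers, in-person care is cheaper at 1,000 sessions and the app is cheaper at 1,600 sessions, mirroring the pattern described above; the actual break-even point depends entirely on the real cost inputs.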

Despite the great potential of mobile applications as psychological aids, a few concerns have been raised by researchers (Alyami et al., 2017; Lorca-Cabrera et al., 2021). For example, although many free or commercial mobile apps are available, the content of only a few apps has been validated. Alyami et al. (2017) searched the iTunes, Google Play, and Windows stores and then selected and reviewed 38 social anxiety-related apps that claimed to provide psychoeducation, symptom management, treatment self-assessment, or supportive resources. Only three of the apps provided information about the source of their content or organizational affiliations. Similar findings were reported by Lorca-Cabrera et al. (2021), who reviewed 43 mobile apps for caregivers of individuals with chronic conditions and disease. They found that authoritative sources were not incorporated in the design of the mobile apps, and they expressed concern that the apps posed potential health hazards to users because insufficient scientific evidence supported their claims.

Another concern is that usability information is not reported for most mental health apps (Alessa et al., 2019; Inal et al., 2020; Lorca-Cabrera et al., 2021). Because user engagement is critical to helping users improve their emotional and psychological well-being, the end-user experience with a mental health app is very important (Ng et al., 2019; Torous et al., 2018). Torous et al. (2018) criticized current mental health apps because they are not designed with users in mind (e.g., poor usability) and do not address the problems users care most about, which leads to low user engagement. They emphasized the importance of usability and user-centric design for enhancing user engagement with mental health apps.

In addition, researchers have expressed concern that most available mental health apps have not been tested with even a small number of participants (Alessa et al., 2019; Inal et al., 2020). Leigh and Flatt (2015) reviewed the apps accredited by the UK’s National Health Service (NHS) in the NHS health app library and found that nine of the thirteen apps did not provide evidence to substantiate their specific benefits to users and did not use NHS-accredited performance metrics. Those authors emphasized the importance of using evidence-based guidelines for publicly available mental health apps.

Carefully designed and developed mental health apps are important for improving users’ emotional and psychological well-being (Alessa et al., 2019; Inal et al., 2020; Ng et al., 2019; Torous et al., 2018). Unfortunately, the process of developing mental health apps is rarely shared among researchers and practitioners, and even small-scale formative evaluation studies of mental health apps are rarely conducted (Alessa et al., 2019; Baumel & Muench, 2016). It is thus unclear how mental health apps are developed; we found very little published research describing the entire process of designing and developing a mental health app. Therefore, our purpose in this study is to systematically describe the entire process we used to design, develop, and evaluate a mental health app. We used the analysis, design, development, implementation, and evaluation (ADDIE) instructional design model to both conduct and describe our work systematically (Brown & Green, 2018; Carey et al., 2021).

Mental Health Issues in Korea

This study was conducted in South Korea. Over the past 10 years, Koreans have been exposed to many natural (e.g., earthquakes) and man-made (e.g., the Sewol ferry sinking) disasters, as well as epidemics (e.g., MERS) and pandemics (e.g., COVID-19). The Sewol ferry sank off the southern coast of South Korea in the Yellow Sea. Of the 304 people dead or missing in this disaster, 250 were high school students on a field trip. Yang et al. (2015) studied 7,076 adults living in an affected area to investigate their mental health 6 months after the Sewol ferry disaster. Their results showed that many adults in the affected area reported higher rates of depression, stress, somatic symptoms, anxiety, and even suicidal ideation than adults living in a non-affected area. Unfortunately, in Korea, the availability and accessibility of counseling services are inadequate (Roh et al., 2016). Kim and Kim (2018) reported that many Koreans experienced fear, anxiety, and vulnerability when Middle East Respiratory Syndrome (MERS) broke out in Korea in May 2015. During the MERS epidemic in Korea, 38 people died and 16,752 were quarantined. Lee et al. (2019) reported that among 148 MERS survivors, 72 (48.65%) had chronic fatigue, depressive symptoms, and post-traumatic stress disorder (PTSD) even 12 months later. More recently, COVID-19 has affected public mental health not only in Korea but also worldwide. According to Torales et al. (2020), the COVID-19 outbreak has produced stress, anxiety, depressive symptoms, insomnia, denial, anger, and fear throughout the world. Despite great concern about public mental health in Korea, no mental health app was available to help people understand and improve their symptoms after difficult and stressful events.

Project Overview

The current project was intended to enhance public mental health in Korea. Our goal in this project was to design and develop a robust mental health app for general public use in South Korea. This study was a multidisciplinary collaboration among counseling psychologists, mental health professionals, instructional designers, and app developers. To create a content-valid, evidence-based, practical mental health app, collaboration among experts in a multidisciplinary team is critical (Olff, 2015). The counseling psychologists created evidence-based content; the mental health professionals checked its validity and practicality; the instructional designers managed the project, designed storyboards, and worked closely with the app developers; and the app developers built the prototype and the final mental health app. The present study was approved by the Institutional Review Board (IRB) of Sungkyunkwan University (2014-10-004). Written and verbal informed consent was obtained from all individual participants included in the study.

Design, Development, and Evaluation of the Mental Health App

Our purpose here is to systematically describe the entire process we used in developing our mental health app. We used ADDIE, an instructional systems design model, to both develop and describe the entire process of designing, developing, and evaluating our mental health app (Brown & Green, 2018; Carey et al., 2021). ADDIE is one of the models most widely used in the field of instructional design, and it is increasingly being adopted in the medical field (Lafleur et al., 2021; Patel et al., 2018).

The analysis phase involves defining the learning goals; the design phase involves designing the content to address those learning goals; the development phase involves developing the learning content into the format of the target technology, such as a mobile app; the implementation phase tests the developed mobile app with the target audience; and finally, the evaluation phase evaluates user experiences and the effects of the mobile app. ADDIE describes the development of effective learning material, including mobile apps, as a linear sequence; however, each phase of ADDIE dynamically and recursively interacts with all the other phases to accommodate specific contextual needs or situations, such as time constraints (Brown & Green, 2018; Carey et al., 2021). Because the ADDIE model allows us to systematically describe the entire process of mental health app development, it is an appropriate model for our research. We illustrate the ADDIE process in Figure 1.

Overview of the ADDIE phases used to design, develop, and evaluate a mental health app.

Analysis Phase

The analysis phase involved focus group meetings with mental health professionals (e.g., counselors and directors of mental health centers in Korea), researchers, and graduate students. The main purposes of the focus groups were to understand the needs of health professionals in the field, determine the goals for the app, and identify the core information needed to support those goals. In the focus groups, we used a loosely structured set of open-ended questions, including (a) what are the common mental health issues you encounter in your center? (b) could a mobile app help to alleviate the mental health issues your clients have? (c) what types of information would need to be included to address your clients’ mental health issues? and (d) what could be the advantages or disadvantages of using a mobile mental health app? Based on the focus group results, we chose three overarching goals for the mental health app: (a) provide users with basic information about mental health issues that commonly occur after extreme events, (b) help users develop insight or self-awareness about their mental health conditions, and (c) help users find available resources they can use to improve their mental health. In addition, we analyzed existing mental health apps, such as PTSD Coach, developed by the Veterans Affairs National Center for PTSD in the United States (Kuhn et al., 2014), and Psychological First Aid (PFA), to identify the types of menus, information structures, and interfaces used. PFA is a structured intervention for disaster survivors designed to reduce distress caused by traumatic events (e.g., disasters, terrorism, and emergencies) that affect everyday lives. PFA is recommended in U.S. federal guidelines, as specified in the 2008 National Response Framework (U.S. Department of Homeland Security, 2008), to help with painful emotions and promote hope and healing. PFA has been widely endorsed by expert consensus and integrated into guidelines for mental health and psychological support in disasters and extreme events.

Based on the results of the focus group meetings with health professionals and a literature review, we decided to develop a general mental health app for a broad Korean audience that would address both short- and long-term mental health symptoms, offer self-assessment tools with feedback based on self-reported data, and provide information about available resources for in-person assistance. We identified five main goals for our Korean mental health app:

To help users develop an understanding of the natural reactions to extreme events, such as natural and man-made disasters, pandemics, car accidents, or extreme stress from social or family relationships.

To help users self-assess both short-term and long-term mental health symptoms.

To help users develop awareness about their mental health symptoms.

To help users assist others who have mental health issues.

To raise user awareness about available certified resources for support in dealing with mental health symptoms.

Design Phase

To achieve those five goals, we designed five menus: (a) understanding stress reactions, (b) self-assessment, (c) self-care practices, (d) other-care practices, and (e) resources. The “Understanding stress reactions” menu was designed to provide information about the physical and social-emotional reactions that might be experienced after stress.

The “Self-assessment” menu allows users to assess their own emotional, physical, and psychological health. Two types of self-assessment tools are provided: one for symptoms caused by stress that occurred within the past month (Acute Stress Disorder: ASD) and the other for symptoms caused by stress that occurred more than a month ago (PTSD). Users can choose to complete either the ASD self-assessment or the PTSD self-assessment. Three types of feedback are provided based on the score calculated from the user’s responses. For ASD, if the calculated score is less than 13, the user is categorized as low-risk and offered written feedback with choices for self-care practices and other-care practices. If the calculated score ranges from 14 to 18, the user is categorized as medium-risk and given written feedback with a help-seeking menu. If the calculated score is 19 or higher, the user is categorized as high-risk and given written feedback with an assistance-seeking menu. The same mechanism is applied to the PTSD assessment, with users categorized as low-risk (scores less than 32), medium-risk (scores from 33 to 38), or high-risk (scores of 39 or higher). Low-risk users receive options for self-care and other-care practices along with text feedback, and medium- and high-risk users are strongly encouraged to seek in-person assistance.
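The scoring rule in the “Self-assessment” menu amounts to a small lookup, sketched below in Python. The cutoffs come from the text; the function names and feedback labels are hypothetical. Note that the stated bands leave a score of exactly 13 (ASD) or 32 (PTSD) unassigned; this sketch assumes those scores are treated as low risk, which may not match the app’s actual behavior.

```python
from typing import Dict, List, Literal, Tuple

RiskLevel = Literal["low", "medium", "high"]

# (highest low-risk score, lowest high-risk score) for each self-assessment,
# taken from the cutoffs described in the text. The text does not say where a
# score of exactly 13 (ASD) or 32 (PTSD) falls; this sketch ASSUMES low risk.
CUTOFFS: Dict[str, Tuple[int, int]] = {
    "ASD": (13, 19),
    "PTSD": (32, 39),
}

# Hypothetical labels for the feedback shown with each risk level.
FEEDBACK: Dict[str, List[str]] = {
    "low": ["written feedback", "self-care practices", "other-care practices"],
    "medium": ["written feedback", "help-seeking menu"],
    "high": ["written feedback", "assistance-seeking menu"],
}


def categorize(assessment: str, score: int) -> RiskLevel:
    """Map a total self-assessment score to a risk level."""
    low_max, high_min = CUTOFFS[assessment]  # raises KeyError for an unknown assessment
    if score <= low_max:
        return "low"
    if score >= high_min:
        return "high"
    return "medium"


def feedback_for(assessment: str, score: int) -> List[str]:
    """Return the (hypothetical) feedback components for a score."""
    return FEEDBACK[categorize(assessment, score)]


print(categorize("ASD", 16))    # medium
print(feedback_for("PTSD", 40))  # ['written feedback', 'assistance-seeking menu']
```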

The “Self-care practices” menu is designed to help users apply self-care practices. Basic and advanced self-care strategies are provided, as shown in Figure 2.

Self-care practices.

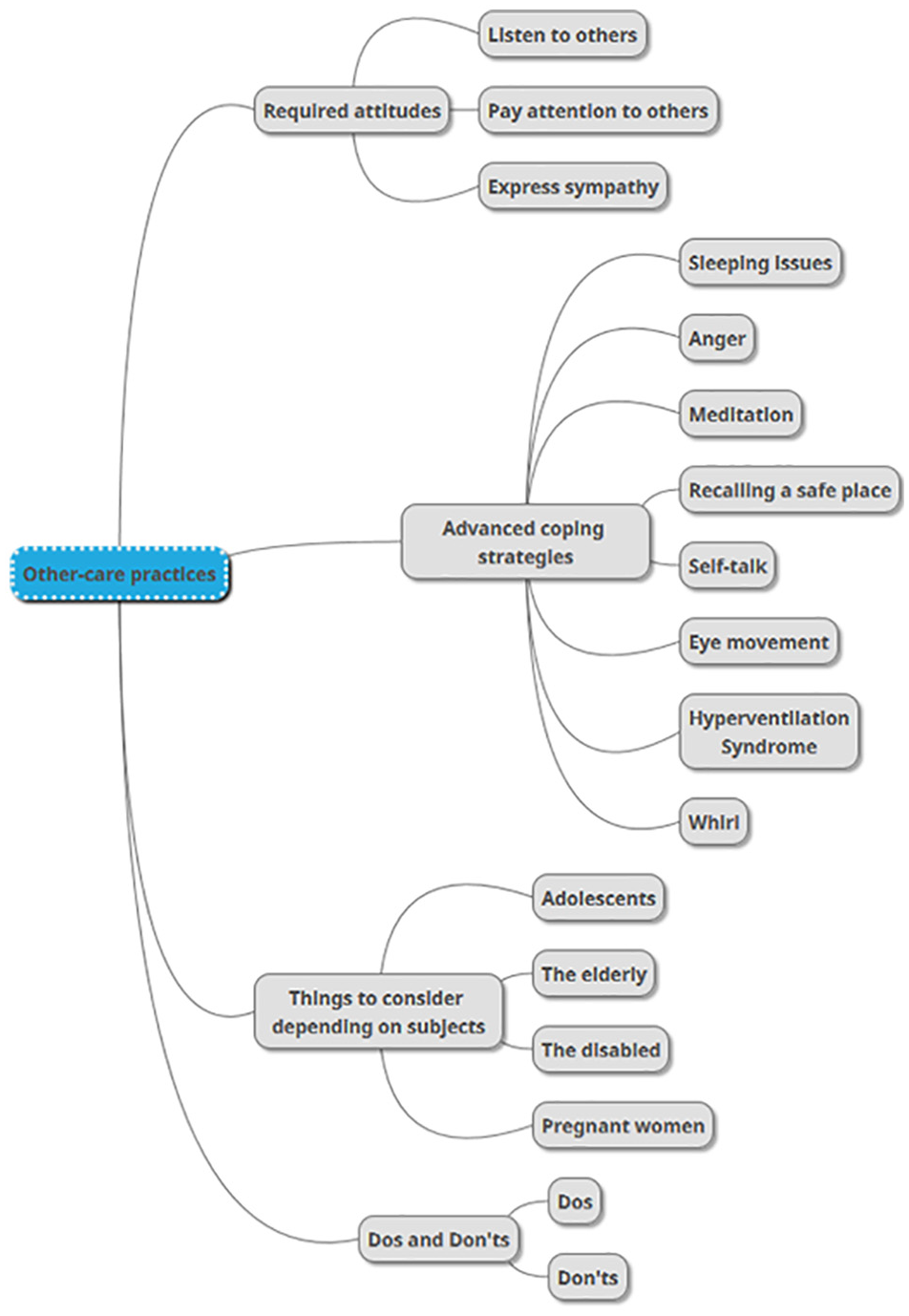

The “Other-care practices” menu offers information about how to take care of others who have emotional and psychological symptoms. Four submenus are presented (Figure 3): (a) attitudes required for taking care of those who have symptoms, (b) advanced coping strategies, (c) things to consider depending on the subjects, and (d) dos and don’ts.

Other-care practices.

Last, the “Resources” menu provides contact information (email, center address, and phone number) for support centers in 17 different regions in Korea. The email and phone links are automatically activated by touch, and the location information is linked with map services that show the distance and route to the center nearest the user’s location.
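The “Resources” menu relies on external map services for distance and routing. As a rough illustration of how the nearest center could be chosen, the Python sketch below ranks centers by straight-line (great-circle) distance using the haversine formula. The `Center` type, the function names, and the coordinates are hypothetical; the coordinates are approximate city-center locations for Seoul and Busan, not actual support-center addresses, and road routes may rank centers differently.

```python
import math
from dataclasses import dataclass
from typing import List

EARTH_RADIUS_KM = 6371.0  # mean Earth radius


@dataclass
class Center:
    name: str
    lat: float  # degrees
    lon: float  # degrees


def haversine_km(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance between two points, in kilometers."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    d_phi = math.radians(lat2 - lat1)
    d_lambda = math.radians(lon2 - lon1)
    a = (math.sin(d_phi / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(d_lambda / 2) ** 2)
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))


def nearest_center(user_lat: float, user_lon: float, centers: List[Center]) -> Center:
    """Return the center with the smallest straight-line distance to the user."""
    return min(centers, key=lambda c: haversine_km(user_lat, user_lon, c.lat, c.lon))


# Illustrative coordinates only (not real support-center locations).
centers = [
    Center("Seoul (illustrative)", 37.5665, 126.9780),
    Center("Busan (illustrative)", 35.1796, 129.0756),
]
closest = nearest_center(36.35, 127.38, centers)  # hypothetical user location
print(closest.name)  # Seoul (illustrative)
```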

Development Phase

We developed and programmed our mental health app for use on Android devices. The app includes text, graphics, audio and video files, and hyperlinks to support mental health. The content for each menu was provided as storyboards to the developers, who were funded by the grant for this study. The developers produced a mobile app prototype and made it available to a panel of six mental health professionals with more than 10 years of counseling experience in national counseling centers. The experts received a temporary URL that allowed them to install the mental health app on their own mobile devices. The purposes of this expert review were to (a) validate the content presented by the mental health app and (b) check the usability of the app. Expert reviews were conducted three times. In the first expert review, the six experts were invited to respond to three open-ended prompts: (a) please provide the strengths and weaknesses of the mental health app; (b) please provide suggestions to improve the mental health app; and (c) please provide any opinions or comments on the mental health app. In addition, the experts were given worksheets containing all the menus and submenus and asked to comment on each of them after thoroughly exploring the app. The results of the first expert review are presented in Table 1. They were delivered to the developers in storyboard format, and the developers then revised the app design and content accordingly. For example, after the first expert review, the “System information” menu became “Reactions after stress,” and the “Mental first-aid guidelines” menu was split into “Self-care” and “Behavioral know-how.”

First, Second, and Third Expert Reviews of the Mental Health App.

The second expert review was conducted after the prototype was revised. Five of the six experts from the first review participated, and the same three open-ended questions and worksheets were provided to facilitate the review. The results of the second expert review are presented in Table 1. After the second expert review, the term “Behavioral know-how” was changed to “Other-care practices.” Expert comments and survey results were again delivered to the developers in storyboard format.

The last expert review was conducted after the revision of the second prototype. Four experts participated in that review. The same three open-ended questions and worksheets were used. Suggestions were delivered to the developers in storyboard format and are described in Table 1. Changes were made accordingly. After the three rounds of expert review, the mental health app was finalized (Figure 4).

First page of the developed mental health app.

Implementation Phase

We conducted two implementations. The first was a usability test. A recruitment email was sent to counselors working at seven randomly selected counseling centers in Seoul, South Korea, and 21 counselors volunteered to participate. They attended an hour-long orientation during which they were asked to download the mental health app onto their mobile devices, and they were asked to use it at least three times per week for a month. After using the app for a month, they were invited to participate in the evaluation, as described below (see Evaluation Phase).

The second implementation examined the effects of the mental health app on the symptoms that users reported. Potential users of the mental health app were recruited from three undergraduate courses and one graduate course. With the instructors’ permission, one of the authors visited the classes and provided an orientation. The first 1.5 hours of class time were spent screening for users who could benefit from the mental health app. Using the Impact of Event Scale-Revised-Korean version (IES-R-K), we selected those who were in a low- or medium-risk condition. After that screening, 50 users were chosen to participate in the study: 40 undergraduates and 10 graduate students. Most of the participants were female (n = 37), and their ages ranged from 20 to 37 years (M = 25.82, SD = 3.84). The selected students completed three surveys: (a) ASD, (b) PTSD, and (c) PTSD Risk Factors. The participants were not told which risk group they had been assigned to. The remaining hour was used to help users understand how to use the mental health app. They were asked to use the mental health app at least three times per week for 2 months.

Evaluation Phase

Three evaluation questions were posed to the people who participated in the two implementations. The first question was addressed to the counselors through a survey, and the second and third questions were addressed to the student participants through research instruments.

How do experts evaluate the mental health app?

Do participants’ mental health symptoms differ significantly before and after using the mental health app?

What are the users’ opinions of the mental health app?

Evaluation procedures

The evaluation was approved by the institutional review board on the campus where this study was conducted. To address the first evaluation question, we surveyed the 21 counselors who participated in the usability test. To address the second evaluation question, we returned to the classrooms after 2 months and administered the same three surveys, (a) ASD, (b) PTSD, and (c) PTSD Risk Factors, to assess symptom changes. To address the third question, we administered an open-ended survey. All participants were compensated with $10 gift cards.

Expert evaluation of the mental health app

For the usability test, 18 of the 21 counselors completed the survey. The 25-item survey was adapted from M.-H. Cho (2012) and addressed six dimensions of user experience: (a) navigation, (b) content, (c) accessibility, (d) design and development, (e) understanding, and (f) satisfaction. Surveys are among the methods most commonly used in mental health app research to measure user engagement (Ng et al., 2019). Participants answered the questions on a 5-point Likert scale on which 1 denoted “strongly disagree” and 5 denoted “strongly agree.” The results are presented in Table 2. Overall, the counselors evaluated the mental health app positively in terms of navigation (M = 4.32, SD = 0.73), content (M = 4.10, SD = 0.75), accessibility (M = 4.48, SD = 0.58), design and development (M = 4.34, SD = 0.71), understanding (M = 4.02, SD = 0.66), and satisfaction (M = 4.27, SD = 0.62).

Expert Review of Mental Health App Usability.

Note. A 5-point Likert scale was used: 1 = “strongly disagree” and 5 = “strongly agree.”
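The dimension-level means and standard deviations in Table 2 could be computed along the lines of the following Python sketch. The item-to-dimension mapping and the toy responses are hypothetical, and the sketch assumes that each respondent’s dimension score is the mean of that respondent’s ratings on the dimension’s items; the original analysis may have aggregated responses differently.

```python
from statistics import mean, stdev
from typing import Dict, List, Tuple


def dimension_summary(responses: List[Dict[str, int]],
                      dimensions: Dict[str, List[str]]) -> Dict[str, Tuple[float, float]]:
    """Return (M, SD) per dimension, rounded to two decimals.

    responses: one dict per respondent, mapping item id -> 1-5 Likert rating.
    dimensions: dimension name -> item ids (hypothetical mapping).
    """
    summary = {}
    for name, items in dimensions.items():
        # Each respondent's dimension score = mean of that respondent's ratings on the dimension's items.
        person_scores = [mean(r[item] for item in items) for r in responses]
        # Sample SD across respondents (requires at least two respondents).
        summary[name] = (round(mean(person_scores), 2), round(stdev(person_scores), 2))
    return summary


# Toy data: three hypothetical respondents, two hypothetical navigation items.
toy_responses = [{"nav1": 5, "nav2": 4}, {"nav1": 4, "nav2": 4}, {"nav1": 3, "nav2": 5}]
print(dimension_summary(toy_responses, {"navigation": ["nav1", "nav2"]}))
# {'navigation': (4.17, 0.29)}
```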

Comparison of mental health before and after use of the mental health app

We used four instruments to measure the effects of the mental health app on the users’ symptom levels: (a) the IES-R-K, (b) National Stressful Events Survey Acute Stress Disorder Short Scale (NSESSS-ASD), (c) National Stressful Events Survey PTSD (NSESSS-PTSD), and (d) PTSD Risk Factors (Table 3).

Scales Measuring IES-R-K, ASD, PTSD, and PTSD Risk Factors.

IES-R-K

The IES, originally developed by Horowitz et al. (1979), assesses the current degree of subjective distress experienced as a result of a specific event. We used a Korean translation (Eun et al., 2005) of the revised version, the IES-R, which has been widely used as a psychological instrument in the field of traumatic stress (Creamer et al., 2003). Its 22 items measure three subscales: intrusion (8 items), avoidance (8 items), and hyperarousal (6 items). Higher scores indicate higher levels of subjective distress. Example questions are listed in Table 3. Reliability for the overall scale in the present study was .94, and Cronbach’s alpha for intrusion, avoidance, and hyperarousal was .90, .84, and .88, respectively. Items were rated on a 5-point Likert scale on which 0 indicates not at all and 4 indicates very often.
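The internal-consistency coefficients reported for these instruments (Cronbach’s alpha) follow the standard formula α = k/(k − 1) × (1 − Σσᵢ²/σₓ²), where k is the number of items, σᵢ² is the variance of item i, and σₓ² is the variance of respondents’ total scores. A minimal Python sketch, using made-up toy data rather than study data:

```python
from statistics import pvariance
from typing import List, Sequence


def cronbach_alpha(scores: Sequence[Sequence[float]]) -> float:
    """Cronbach's alpha for a respondents x items score matrix.

    alpha = k / (k - 1) * (1 - sum(item variances) / variance(total scores))
    Population variances are used throughout; the ratio is identical with
    sample variances because the n / (n - 1) factors cancel.
    """
    k = len(scores[0])
    if k < 2 or any(len(row) != k for row in scores):
        raise ValueError("need a rectangular matrix with at least two items")
    item_columns: List[Sequence[float]] = list(zip(*scores))  # one tuple per item
    sum_item_var = sum(pvariance(col) for col in item_columns)
    total_var = pvariance([sum(row) for row in scores])
    if total_var == 0:
        raise ValueError("total scores have zero variance")
    return k / (k - 1) * (1 - sum_item_var / total_var)


# Toy matrix: 3 hypothetical respondents x 2 items (not study data).
print(round(cronbach_alpha([[1, 2], [2, 1], [3, 3]]), 3))  # 0.667
```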

NSESSS-ASD

The severity of acute stress symptoms was measured with a version of the Acute Stress Disorder Scale developed by Kilpatrick et al. (2013) and validated in a Korean context by Y. Cho and You (2016). The scale is based on the diagnostic criteria of the Diagnostic and Statistical Manual of Mental Disorders (DSM-5), which classifies mental disorders with associated criteria to support more reliable diagnosis (American Psychiatric Association [APA], 2013). The NSESSS-ASD is a 7-item self-report tool that includes the DSM-5 field trial measurement for identifying ASD and predicting the risk of subsequent PTSD. Higher scores indicate higher levels of ASD symptoms. Reliability for the pre-test and post-test scales was .79 and .70, respectively. Items were rated on a 5-point Likert scale on which 0 denotes not experienced at all and 4 denotes extremely often.

NSESSS-PTSD

To measure PTSD symptom severity, we used a brief self-report measure (NSESSS-PTSD; Kilpatrick et al., 2013) developed based on the DSM-5 diagnostic criteria (APA, 2013). The original 20 items were reduced to nine items, and the shortened version was validated in a Korean context (Y. Cho & You, 2016). Higher scores indicate higher levels of PTSD symptoms. Reliability was .85 at pre-test and .81 at post-test. Items were rated on a 5-point Likert scale on which 0 denotes not experienced at all and 4 denotes extremely often.

PTSD risk factors

PTSD risk factors were measured with 11 items derived from Cho and Yang (2013). The instrument includes three subfactors: (a) peri-traumatic dissociation (n = 3 items), (b) post-traumatic negative beliefs (n = 5 items), and (c) poor social support (n = 3 items). The items of each construct were adapted and selected to fit the context of the current study, measuring dissociative symptoms at the time of stressful events, the degree of negative beliefs, and the degree of social support received from others. Higher scores indicate higher levels of each subfactor. Overall, pre- and post-test Cronbach’s alphas ranged from .85 to .98. Items were rated on a 5-point Likert scale on which 1 indicates strongly disagree and 5 indicates strongly agree.

Effect of the app on symptoms

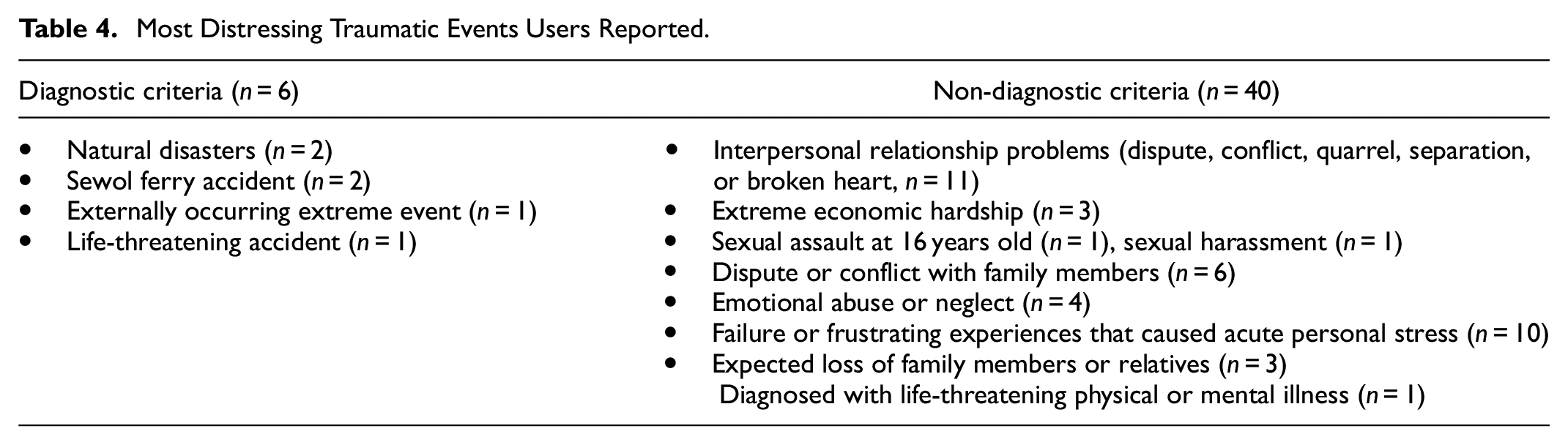

When a researcher asked the 50 participants recruited from the undergraduate and graduate courses to list their most distressing traumatic event, 46 participants reported the events listed in Table 4. The reported events were described using diagnostic and non-diagnostic criteria.

Most Distressing Traumatic Events Users Reported.

To assess the current degree of subjective distress experienced as a result of a specific extreme event, the IES-R-K was administered. The average overall IES-R-K score of the 50 participants was 1.24 (SD = 0.78), and the averages for intrusion, avoidance, and hyperarousal were 1.14 (SD = 1.00), 1.12 (SD = 0.83), and 1.44 (SD = 0.90), respectively. On this basis, the participants as a group could be categorized as being in a medium-risk condition, suggesting that the mental health app could be helpful for all 50 participants.

Paired-sample t tests were used to investigate whether participants’ NSESSS-ASD, NSESSS-PTSD, and PTSD risk factor scores changed after they used the mental health app for 2 months (Table 5). The results show statistically significant improvement on all three measures. Specifically, NSESSS-ASD scores improved significantly, t(49) = 5.51, p < .001, between time 1 (M = 1.03, SD = 0.74) and time 2 (M = 0.66, SD = 0.55). NSESSS-PTSD scores improved significantly, t(49) = 6.22, p < .001, between time 1 (M = 1.28, SD = 0.81) and time 2 (M = 0.79, SD = 0.61). PTSD risk factors also improved significantly. Peri-traumatic dissociation improved, t(49) = 4.77, p < .001, between time 1 (M = 1.89, SD = 1.10) and time 2 (M = 1.54, SD = 0.91). Post-traumatic negative beliefs improved, t(49) = 3.79, p < .001, between time 1 (M = 1.76, SD = 0.83) and time 2 (M = 1.43, SD = 0.65), and poor social support improved, t(49) = 3.12, p = .003, between time 1 (M = 1.65, SD = 1.14) and time 2 (M = 1.37, SD = 0.86).

Comparison of PTSD Changes Between Time 1 and Time 2 (N = 50).
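Each comparison in Table 5 is a paired-sample t test on the same participants’ scores at time 1 and time 2, with df = N − 1 = 49. A minimal Python sketch of the statistic follows; the function name and toy numbers are illustrative, not study data.

```python
import math
from statistics import mean, stdev
from typing import Sequence, Tuple


def paired_t(time1: Sequence[float], time2: Sequence[float]) -> Tuple[float, int]:
    """Paired-sample t statistic and degrees of freedom.

    Differences are time1 - time2, so a positive t means scores decreased
    (on these symptom scales, lower scores indicate fewer symptoms).
    """
    if len(time1) != len(time2) or len(time1) < 2:
        raise ValueError("need two equal-length samples with at least two pairs")
    diffs = [a - b for a, b in zip(time1, time2)]
    n = len(diffs)
    standard_error = stdev(diffs) / math.sqrt(n)  # SD of differences / sqrt(n)
    return mean(diffs) / standard_error, n - 1


# Toy pre/post scores for four hypothetical participants (not study data).
t, df = paired_t([3, 4, 5, 6], [2, 2, 4, 4])
print(round(t, 3), df)  # 5.196 3
```

In practice, `scipy.stats.ttest_rel(time1, time2)` returns the same t statistic together with a two-sided p-value; very small p-values are conventionally reported as p < .001 rather than p = .000.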

User opinion of the mental health app

Open-ended questions

We provided two open-ended questions to allow users to evaluate the mental health app: “What do you like best in the mental health app?” and “If one thing could be improved in the mental health app, what would you suggest?” We then conducted a qualitative content analysis of the 97 responses we received from the 50 participants. After reviewing the responses, one author trained two research assistants to code them. The unit of analysis was the meaning unit, so a sentence containing two meanings was coded with two themes. To create the coding scheme, the two research assistants each reviewed 40% of the responses and generated coding schemes independently. Then, one of the authors and the two research assistants discussed, synthesized, and finalized the coding scheme. Using the finalized coding scheme, the two research assistants conducted blind coding of all responses. The initial agreement between the two coders was 87.3%. Discrepancies were resolved through discussion among the author and the two research assistants. The answers to the first and second questions are presented in Tables 6 and 7, respectively.

Most Liked Aspects of the New Mental Health App.

Suggestions to Improve the Mental Health App.
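The 87.3% intercoder figure reported above is simple percent agreement: the share of coded units to which both coders assigned the same theme. A minimal Python sketch with hypothetical codes (not the study’s data):

```python
from typing import Sequence


def percent_agreement(coder_a: Sequence[str], coder_b: Sequence[str]) -> float:
    """Share of meaning units (in %) to which both coders assigned the same theme."""
    if len(coder_a) != len(coder_b) or not coder_a:
        raise ValueError("coders must label the same, non-empty set of units")
    matches = sum(a == b for a, b in zip(coder_a, coder_b))
    return 100.0 * matches / len(coder_a)


# Hypothetical codes for four meaning units.
a = ["self-care", "other-care", "resources", "menus"]
b = ["self-care", "other-care", "menus", "menus"]
print(percent_agreement(a, b))  # 75.0
```

Percent agreement does not correct for agreement expected by chance; a chance-corrected index such as Cohen’s kappa is a common complement.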

Aspects users liked best in the new mental health app

Below is a summary of the content analyses for the two open-ended questions. Users reported that what they liked best in the new mental health app was the content. Based on the frequencies of each coding theme, 25 meanings were related to the interface, and 93 meanings were related to the content, as shown in Table 6. Among the 25 interface related meanings, users were most satisfied with the hyperlink function (n = 12), menus (n = 8), and aesthetics (n = 5). Among the 93 content related meanings, users most liked taking care of others (n = 34), self-care (n = 31), resources (n = 12), self-assessment (n = 8), and understanding stress reactions (n = 8). Users most valued the specific methods in the other-care and self-care menus.
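The category totals reported above (25 interface-related and 93 content-related meaning units) are roll-ups of the theme frequencies. The following Python sketch reproduces them from the reported counts; the data structures and function name are illustrative assumptions, not the coding tool the authors used.

```python
from collections import Counter
from typing import Dict, List

# Theme -> category, using the themes reported for the first open-ended question.
THEME_CATEGORY: Dict[str, str] = {
    "hyperlink function": "interface",
    "menus": "interface",
    "aesthetics": "interface",
    "taking care of others": "content",
    "self-care": "content",
    "resources": "content",
    "self-assessment": "content",
    "understanding stress reactions": "content",
}


def category_totals(coded_units: List[str]) -> Dict[str, int]:
    """Count coded meaning units per theme, then roll the counts up into categories."""
    theme_counts = Counter(coded_units)
    totals: Counter = Counter()
    for theme, n in theme_counts.items():
        totals[THEME_CATEGORY[theme]] += n
    return dict(totals)


# Rebuild one label per coded unit from the frequencies reported in the text.
reported = {
    "hyperlink function": 12, "menus": 8, "aesthetics": 5,
    "taking care of others": 34, "self-care": 31, "resources": 12,
    "self-assessment": 8, "understanding stress reactions": 8,
}
units = [theme for theme, n in reported.items() for _ in range(n)]
print(category_totals(units))  # {'interface': 25, 'content': 93}
```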

Further suggestions to improve the mental health app

For the second question, users suggested improvements in the interface and content, as shown in Table 7. Fewer users responded to this question than to the first. For the interface, users suggested improvements to hyperlinks (n = 4) and aesthetics (n = 2). Regarding content, users suggested improvements to self-care (n = 20), other-care (n = 29), understanding stress reactions (n = 8), resources (n = 2), and self-assessment (n = 2). Overall, most of the content-related suggestions focused on providing more detailed information. The users were actively looking for information and methods for self-care and other-care.

Discussion

The purpose of this study was to describe the entire process of designing and developing a mental health app. Using the ADDIE model, we described the process in five phases: analysis, design, development, implementation, and evaluation. Eighteen counselors participated in the usability test and rated the newly developed app highly. In addition, 50 users reported significant improvement in their mental health, suggesting that the app helped with emotional and psychological symptoms. The responses to the open-ended questions showed that users viewed the app positively.

Our research contributes to the mental health app literature and to the Korean community in several ways. In the literature, few studies have elucidated the entire process of designing and developing a mental health app (Alessa et al., 2019; Anastasiadou et al., 2019; Inal et al., 2020). In contrast to these studies, we detailed the approaches we adopted in each phase of design and development with ADDIE. Our research is significant because it transparently illustrates the entire design and development process, and this article should be helpful to those who plan to develop a mental health app for a specific target audience. Furthermore, we described how we validated the content with experts, conducted a usability test, and evaluated the effects of the app on symptoms following extreme events and stress. Few studies have reported the usability and content validity of mental health apps (Alessa et al., 2019; Inal et al., 2020). This study demonstrates how to conduct usability testing, check content validity, and examine an app's effectiveness for mental health through formative evaluation. Other researchers, developers, and practitioners can adopt the process and approaches used in this study, in part or in full, when developing their own apps.

Our research also contributes to the literature on usability and formative evaluation of mental health apps (Alessa et al., 2019; Inal et al., 2020; Ng et al., 2019; Torous et al., 2018). Clough and Casey (2015) criticized the slow pace at which mental health innovations have traditionally moved from conceptualization to community implementation, and Mohr et al. (2017) estimated that this transition takes 15 years on average. Clough and Casey argued that small-sample designs, collaboration, research, and empirical investigation during program development are essential to make the most of technological advances such as mental health apps. Our research responds to their call. Our work is an interdisciplinary collaboration of experts, and we conducted an empirical investigation using pre- and post-testing of the app. Together, these approaches contribute to the literature on mental health app development, usability, and formative evaluation.

In addition to its contributions to the mental health app literature, this study contributes to the Korean community in several ways. This is the first mental health app to be disseminated to a South Korean community, and it can enhance public mental health literacy in Korea. The app provides four components:

- information about common emotional, physical, and psychological reactions to extreme events and stress;
- a diagnostic self-assessment tool with personalized, evidence-based feedback;
- methods for self-care and other-care, presented through text, audio, and video;
- resources.

Some people may feel that they alone experience certain emotional, physical, and psychological symptoms, a perception that can lead to depression, insomnia, or anxiety. By explaining common reactions to extreme events and stress, the app can help people understand and cope with them. The app we developed is the only such app that is publicly available for free in Korea.

Over the past 10 years, Koreans have been exposed to many natural disasters (e.g., earthquakes), man-made disasters (e.g., the Sewol ferry disaster), epidemics (e.g., SARS and MERS), and pandemics (e.g., COVID-19). Yet public resources to help people understand the emotional, physical, and psychological symptoms to expect after such extreme events and stress have been lacking. Korea has a well-developed Internet and Wi-Fi infrastructure. Approximately 95% of Korean adults (≥18 years old) own a smartphone (Taylor & Silver, 2019), and most teens (aged 13 to 17) have access to a smartphone that can run mobile apps. The newly developed app can therefore be a useful resource to help people better understand their symptoms and deal with them more actively to improve and maintain their mental health.

Our mental health app also improves accessibility for diverse age groups, particularly adolescents and young adults (Greeson et al., 2020; Punukollu & Marques, 2019). Young people may not feel comfortable obtaining counseling services because they often need to visit counseling centers accompanied by adults. Traditionally, seeking counseling in Korea has not been viewed positively, and many people mistakenly believe that counseling is only for those with extreme symptoms. As a result, some people still hesitate to seek in-person services, and their mental health may worsen. Although the app is not a substitute for in-person counseling, it provides accessible information about symptoms. This information can help the Korean public understand the symptoms and stress that follow extreme events and cope with them better, ultimately contributing to public mental health.

Limitations and Future Research

Despite the significance of the study, readers should be cautious when interpreting our results and applying our approaches in their own contexts, for several reasons.

First, our user evaluation was conducted with a small number of mental health professionals and potential users. With more funding and time, we could have conducted the usability test with a larger audience spanning different age groups, which would have helped us understand user engagement across those groups.

Second, the effects of the app were tested with volunteer participants recruited from undergraduate and graduate courses. Because our participants ranged from their early 20s to mid-30s, examining the app's effects in other age groups, such as teenagers, people older than 40, and seniors, would improve generalizability.

Third, in the usability test, many users suggested that we embed more multimedia resources, such as audio and video clips, in the app. Current generations, particularly people in their early 20s to mid-30s, may be inclined to use multimedia-based materials. However, due to budget and time constraints, we were not able to fully accommodate those suggestions. Continuously maintaining and updating the mental health information will be critical to the app's sustainability, and if our budget allows, we will continue to integrate new audio and video content.

Last, our analysis relied heavily on self-reported data. Although we asked users to engage with the app on a regular basis, we cannot confirm whether they actually did so or how long each session lasted. Collecting log data from the app would give us a better understanding of how participants used it.

Although we have incorporated “tipped scales” to direct “users to seek in-person assistance,” we also recommend the use of a disclaimer, such as the following:

Before using our mental health app, please keep in mind:

If you or someone you know needs immediate help, call the national center for suicide prevention at 1393 or 1577-0199.

Users are responsible for using their own judgment and consulting their doctor or health care professional to ensure that the app is appropriate for their treatment. We also recommend using the helpline phone numbers and resources provided in this app.

Mental health apps are not a replacement for mental health treatment from a qualified professional.

This mental health app has no official clinical or financial affiliation with any product or company.

This mental health app is available to the public free of charge.
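The limitations above note that log data would show how participants actually used the app, which self-report cannot reliably capture. The Python sketch that follows shows one way session logs could be summarized into per-user session counts and mean session lengths. It assumes a hypothetical log format of one record per session, consisting of a user ID plus ISO 8601 start and end times. The app described in this article is not known to record data in this form, and the records and the helper name `summarize_sessions` are invented for illustration.

```python
from collections import defaultdict
from datetime import datetime
from statistics import mean


def summarize_sessions(records):
    """Return {user_id: (session_count, mean_minutes)} from session records.

    Assumes a hypothetical format: (user_id, start_iso, end_iso) per session.
    """
    durations = defaultdict(list)
    for user_id, start, end in records:
        t0 = datetime.fromisoformat(start)
        t1 = datetime.fromisoformat(end)
        if t1 < t0:
            raise ValueError(f"session for {user_id} ends before it starts")
        durations[user_id].append((t1 - t0).total_seconds() / 60)
    return {user: (len(mins), mean(mins)) for user, mins in durations.items()}


# Illustrative records only; not data collected in this study.
logs = [
    ("p01", "2021-05-03T09:00:00", "2021-05-03T09:12:00"),
    ("p01", "2021-05-05T21:30:00", "2021-05-05T21:38:00"),
    ("p02", "2021-05-04T13:00:00", "2021-05-04T13:03:00"),
]
print(summarize_sessions(logs))  # {'p01': (2, 10.0), 'p02': (1, 3.0)}
```

Summaries like these could be compared against participants' self-reported frequency of use.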

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was supported by a grant, “Development of Psychological Modeling based on Disaster Analysis,” [MPSS SD-2014-40] from the Social Disaster Prevention Research Center, Ministry of Public Safety and Security, Republic of Korea. This work was supported by the Ministry of Education of the Republic of Korea and the National Research Foundation of Korea (NRF-2021S1A3A2A02089682).