Abstract

This study investigates the dynamic pattern of learners’ evaluation of their learning experiences on a MOOC platform at different stages of a course. The data comprise 364 evaluative comments collected over 15 weeks from a large MOOC, Consecutive Interpreting. The results of MANOVA tests suggest that MOOC learners left significantly more comments on four areas at the later stage than at the early stage: learning resources, learning community, learning opportunities, and student voice. This suggests that MOOC learners at a later stage are looking for a learning environment that supports self-assessment, peer feedback, and coaching to fulfil their personal needs and expectations. Moreover, qualitative thematic analysis suggests that learning resources address three areas: the design of the tasks, and the helpfulness and diversity of the resources. Learning community largely refers to peer feedback and co-instruction by peers and instructors. Learning opportunities refer to tasks that improve professional knowledge, self-regulated learning strategies, and language proficiency. Last, student voice indicates three types of tension: the need for pre-existing knowledge to study MOOCs; the gap between learners’ diversified personal goals and the teaching objectives of MOOCs; and differences in preferred learning strategies between MOOC designers and learners.

Introduction

With the advancement of modern information and communication technology, distance learning has evolved from correspondence courses to various forms of teaching and learning on the Internet. As an important form of online education, massive open online courses (MOOCs) break the temporal and spatial constraints on learning and offer a large audience around the world access to knowledge in various disciplines, regardless of culture or background. With the deepening integration of technology and education, both the number of MOOCs and the number of registered learners keep growing year after year. Also on the rise is the amount of research on MOOC evaluation. As a revolutionary form of education, MOOCs offer almost complete freedom of participation and withdrawal to learners with varying levels of intention and motivation. Their effectiveness or success is therefore complex to evaluate (Al-Imarah & Shields, 2019). While there is a large volume of studies on course evaluation practice in classroom settings, learners’ expectations and evaluation of their experiences with MOOCs have rarely been investigated, with researchers more often using a single quantified index, such as dropout rate or completion rate, as the sole basis for evaluation (Zhu et al., 2020). Using both quantitative and qualitative analyses of learners’ evaluations during a 15-week interpreter training MOOC, Consecutive Interpreting, this study investigated learners’ expectations of their MOOC learning experience along seven dimensions (instructions, learning outcomes, learning resources, learning opportunities, learning communities, organization and management, and student voice) and revealed how these expectations changed throughout the course. Students’ satisfaction with the course, an important evaluation indicator, relies on the fulfillment of these seven dimensions.

Unlike conventional offline courses, where teachers can receive real-time feedback directly from students and adjust their teaching accordingly, a MOOC with pre-made teaching videos and learning activities cannot respond to changes in its learners’ expectations unless those changes have been anticipated. One solution is therefore to track learners’ expectations throughout the entire learning process and to prepare for the changes when designing the course. This study contributes to the knowledge of course evaluation practice from at least two perspectives. First, instead of collecting evidence at the end of the semester, it investigates the dynamics of students’ learning experience with a MOOC and their changing expectations of their instructors over the whole course. Second, instead of collecting numerical data through a course evaluation survey, it analyzes online learners’ qualitative comments and evaluates their course experiences from multiple perspectives.

Literature Review

Learners’ satisfaction with their MOOC experience has recently been recommended as a more important indicator of MOOC success than other indicators (Hew et al., 2019; Joo et al., 2018; Osuna-Acedo et al., 2017). Nevertheless, it is very challenging for MOOC instructors and designers to achieve a high level of learner satisfaction, owing to learners’ varied backgrounds and different expectations of their learning experiences (S. Watson, Loizzo, et al., 2016). Studies have shown that completion can predict satisfaction, as learners who dropped out of courses reported lower MOOC satisfaction than those who had completed or were still taking courses (Hew et al., 2020). At the same time, intention fulfillment correlates highly with satisfaction (Rabin et al., 2019). Researchers have argued that a high-quality MOOC should let learners achieve or learn what they wanted (Smith-Lickess et al., 2019). In addition, satisfaction can improve learners’ motivation (e.g., Dai et al., 2020; Smith-Lickess et al., 2019), academic performance (e.g., C. Chen et al., 2018), and intention to continue (e.g., C. Chen et al., 2018; Joo et al., 2018; B. Li et al., 2018), as well as the likelihood of attracting new learners when satisfied learners share their experience with other people (e.g., Ali et al., 2016; W. S. Chen & Yao, 2016; Hew et al., 2020). Studies have explored the factors affecting satisfaction mainly from three aspects: instructors, learners, and course design or platforms.

The link between instructor-related factors and MOOC learners’ satisfaction with courses and their learning outcomes remains unclear because the evidence is contradictory. On the one hand, some studies suggested that instructors are very important in shaping learners’ perception of the course and their learning experience (Deng et al., 2019; Evans & Myrick, 2015). In summary, the instructor’s presence (Pi et al., 2017; W. Watson, Kim, et al., 2016), knowledge of the subject matter, manner of content presentation, enthusiasm, and humor positively affect learners’ satisfaction (Hew et al., 2019; S. Watson, Watson, et al., 2016). For example, research by Tawafak et al. (2018) indicated that the effect of teachers’ subject knowledge on the effectiveness of the MOOC increased with the students’ perceived complexity of the course content. Good presentation by the teacher in a MOOC was reported to help students understand difficult content (Tawafak et al., 2018) and experience lower cognitive load (Yi et al., 2018), while poor presentation did just the opposite. An instructor who merely reads from PowerPoint slides, rarely smiles, presents irrelevant content, and avoids looking straight into the camera tends to lower learners’ satisfaction (Hew et al., 2019). Last, some other features related to instructors have also been identified as influential. For example, the design of MOOCs and the services and tools provided by MOOC platforms affect learners’ perception of the course. Technological support from instructors also enhances learners’ performance and satisfaction, and therefore positively affects their continued intention to use MOOCs (Tawafak et al., 2018). In addition, assessments that challenge learners’ understanding of the course content and allow them to apply what they have learned can improve learners’ satisfaction (Badali et al., 2022; Bali, 2014; Hew et al., 2020).

On the other hand, the role of MOOC instructors and their effect on the learning experience have also been found to be fairly limited, as MOOC learners’ autonomy and the use of collaborative learning are reported as the driving forces behind the learning process. For example, Haavind and Sistek-Chandler (2015) took a qualitative approach and found that the role of instructors was largely pedagogical: preparing and planning the MOOC experience. Thus, their real-time engagement in the course could yield only a limited effect on students. This finding echoed the work of Deng et al. (2018), who reviewed the existing literature on how MOOC instructor characteristics and teaching context variables relate to learners’ engagement, and found that teaching variables had only a limited impact on learners’ engagement and learning outcomes. However, this result was not supported by L. Li et al. (2022), who investigated different categories of MOOCs, namely knowledge-seeking and skill-seeking ones, and found that learners’ positive experience of the latter was “driven by the instructor and their ability to present lectures and integrate course materials and assignments”. Other studies identified design features of MOOCs that may increase learners’ satisfaction as well as their learning gains: setting clear learning goals, designing interactive learning activities, introducing clear rules for discussion engagement, and clearly dividing responsibilities between instructor teams and students (S. Watson, 2017).

Although MOOC instructors’ impact on learners’ satisfaction and learning gains remains complicated, an increasing number of studies have concluded that learners’ satisfaction is determined not only by the course or instructors, but also by the learners themselves (Y. Chen et al., 2019). This link has been addressed from two perspectives: psychology and learning behavior. Studies on the former focus on areas such as learners’ motivation, emotion, and perception of their MOOC experience. Of these, motivation has been mentioned more frequently than other factors in the literature (Zhu et al., 2018), which has revealed its positive correlation with completion rate (Joo et al., 2018; Jung & Lee, 2020; B. Li et al., 2018; Rieber, 2017), retention or continuance intention (B. Li et al., 2018; Martin et al., 2018), and satisfaction (Durksen et al., 2016; Vázquez et al., 2018). Meanwhile, different types of motivation and their effects on satisfaction and learning outcomes have also been explored. Y. Chen et al. (2019) found that social learners who scored low in connection and recognition motivation were as satisfied with their learning experience as high-motivation learners and perceived that experience as equally positive. Interest in knowledge was found to have the strongest effect on satisfaction. Researchers also found that the fulfillment of learners’ psychological needs was closely connected to motivation and, in turn, contributed to their level of satisfaction. Such needs include autonomy, competence, and relatedness (Buhr et al., 2019; Durksen et al., 2016; Fang et al., 2019; Martin et al., 2018). Moreover, perception of the course or learning experience is another widely studied topic. From general perceptions such as “the importance of the benefits of participating in the MOOC” (Rabin et al., 2019) to specific ones such as perceived usefulness and ease of use (or usability; e.g., Joo et al., 2018; Tao et al., 2019; Tawafak et al., 2018), researchers found that positive perceptions enhance satisfaction and retention. Negative perceptions, on the other hand, such as “the importance of the disadvantages of participating in the MOOC” (Rabin et al., 2019) and perceived workload and difficulty (Hew et al., 2020), have not been found to be associated with satisfaction. These results indicate that what really matters to learners is whatever facilitates the learning experience, and that they are willing to invest effort.

Finally, learners’ negative emotions or sentiments have reportedly been linked with their levels of satisfaction, motivation, and intention to continue engaging with MOOC materials (Agonacs et al., 2020). The emotional states examined include the sentiment expressed in positive and negative comments (e.g., Wang et al., 2018; Zou et al., 2020), perceived boredom, and perceived enjoyment (e.g., Buhr et al., 2019; Tao et al., 2019). Wang et al. (2018) even pointed out that emotion might change at different stages of the course, and collected real-time comments and reviews for analysis. However, they did not specify the stages, and their results reflected no change during the course.

As for the link between learning behaviors and satisfaction, general participation patterns, such as auditing, completing, touring, and sampling, are reported to be closely associated with the course graduation rate. Since these patterns are affected by learners’ emotional tendencies, intervention measures are necessary to secure the sustainable development of the MOOC (Wang et al., 2018). Researchers further compared learners who successfully graduated from the course with those who did not, and found that the former used better self-regulated learning strategies and reported higher levels of satisfaction and intention fulfillment (Rabin et al., 2019). Meanwhile, a positive correlation of engagement with satisfaction (Fang et al., 2019; Rabin et al., 2019; Wang et al., 2018) and with retention (Martin et al., 2018) has also been found. However, researchers disagreed on the direction of the effect: some believed that satisfaction was affected by engagement (e.g., Rabin et al., 2019), while others stated that satisfaction enhanced engagement (e.g., Fang et al., 2019). Moreover, this correlation has been found to be mediated by interaction, particularly interaction among learners (or social interaction), because learners tend to feel capable when they use what they have learned to help others solve learning problems (Fang et al., 2019). On the other hand, Tawfik et al. (2017) pointed out that this learner-learner interaction did not involve “high degrees of co-construction of knowledge” and that the amount of discussion decreased as the course progressed.

Finally, instead of treating the influential factors as either instructor-related or learner-related, some recent studies have argued that the interplay between them, or the feature of interactivity, may have greater predictive power over learners’ satisfaction and learning outcomes on MOOC platforms. For example, C. Chen et al. (2018) identified three types of interaction on the MOOC platform, namely human-system interaction, human-message interaction, and human-human interaction (through platform functions). Among them, human-message interaction influenced learners’ satisfaction most, which in turn had a strong direct effect on learners’ continuance intention, whilst human-human interaction had the least influence.

In summary, two research gaps can be identified in the existing literature on learning experience and course evaluation on MOOC platforms. First, studies on learners’ satisfaction were mostly carried out through surveys or interviews, with only a small number of studies looking at learners’ comments on the MOOCs (e.g., Hew et al., 2020). Surveys conveniently provide large-scale data, but their pre-set questions and answer options usually limit learners’ free expression of opinions, while interviews allow more flexibility in expression but can only generate a small amount of data. Learners’ comments on MOOCs overcome the limitations of both methods: learners are free to comment on any aspect of their learning experience, and researchers can easily collect a large number of comments through the MOOC platform. Second, these studies mainly collected comments from those who completed the MOOCs, ignoring the majority who participated only partially. In addition, existing investigations into learners’ evaluation, whether through surveys, interviews, or comment analysis, were carried out at the end of the MOOC, presuming that learners’ expectations of their learning experience do not change during the course. However, some researchers noted that it takes learners several months to complete a MOOC and that their attitudes or perceptions might not stay unchanged during the process (Wang et al., 2018), and thus suggested measuring the course at different stages (Smith-Lickess et al., 2019). This study addresses these gaps by collecting learners’ positive and negative comments on their learning experiences in a MOOC from the first week until the end of the course, aiming to capture the dynamic and evolving patterns of learners’ expectations. Two research questions were asked: (1) What aspects of their learning experience do MOOC learners evaluate? (2) Do learners evaluate their learning experiences from various perspectives at different stages of the MOOCs?

Research Design

Research Context

The current research is a case study of the MOOC Consecutive Interpreting, which is China’s first interpreting MOOC. Produced and operated by a team of five interpreter trainers and interpreting practitioners from Guangdong University of Foreign Studies, the MOOC was launched in February 2018 on iCourse163.org, the country’s largest MOOC platform. Focusing on skill training for consecutive interpreting, a form of language interpreting, the course consists of 52 English-language lecture videos in 13 units and takes 15 weeks to run a full round. The course was available to all internet users without any restriction on educational or social background. Yet, to complete the course, learners had to register in the first week and pass, on a weekly basis, all the quizzes and interpreting tests specially designed for the course. Discussions on specific learning topics were organized by the lecturers, and learners were free to participate. Meanwhile, learners were also encouraged to evaluate the course before they left by rating it on a 5-star scale and leaving comments, both of which were shown in the Course Evaluation box on the front page of the MOOC. Learners’ evaluative comments to the instructors were collected from the MOOC platform between April and July 2019. Though all learners registered and started the course in the first week, they could rate and comment at any time during the 15-week MOOC semester. This arrangement provides a unique opportunity to detect possible differences in MOOC learners’ expectations at different stages of the course. In total, 40,381 learners had registered on this course by the end of the first week, and roughly 0.90% of them (n = 364) voluntarily left comments on their learning experience for subsequent reviewers to read. This low proportion is in line with other studies that report consistently low completion rates and low levels of intention to complete a MOOC.

This study is exempt from ethics review by the faculty research committee for two reasons. First, the data downloaded from the MOOC platform do not contain learners’ personal information, such as their real names, gender, email addresses, occupations, or phone numbers. Second, all the information is publicly available on the platform, where it is intended to attract prospective learners to enroll on the course. All the names that appeared in the comment area of the MOOC were chosen by the learners themselves.

Data Analysis

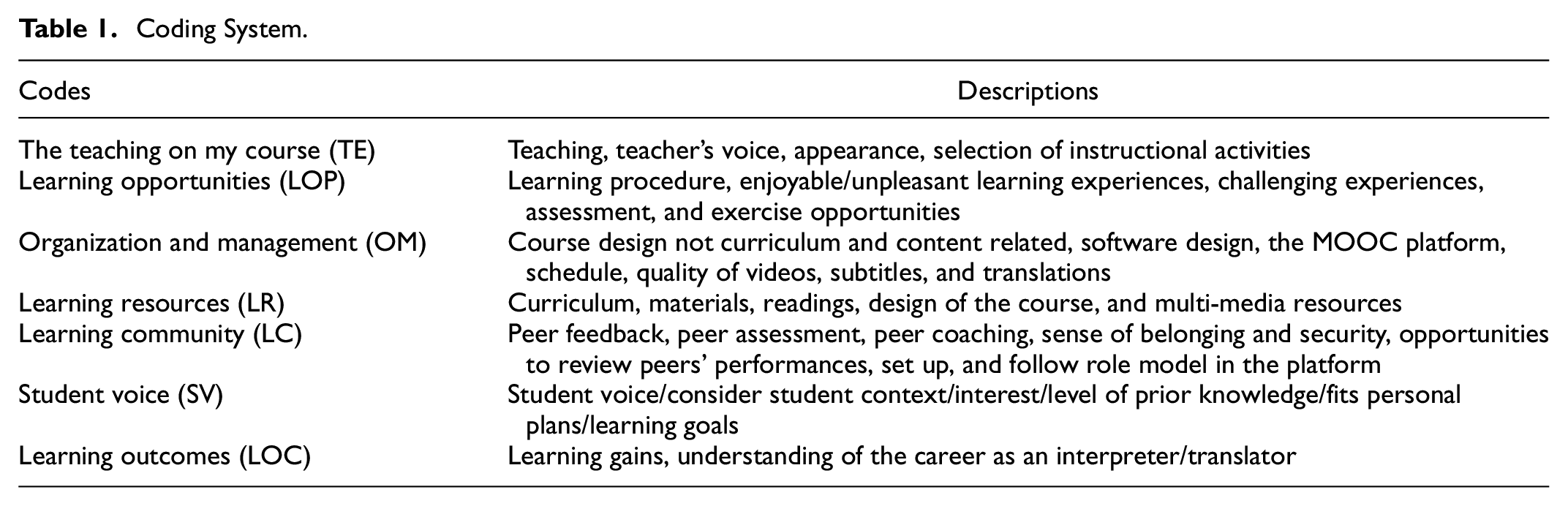

The initial coding system was developed based on the eight aspects of student learning experience from the UK National Student Survey, one of the most widely used and standardized instruments for measuring students’ learning experience at higher-education level: (1) the teaching on my course, (2) learning opportunities, (3) assessment and feedback, (4) academic support, (5) organization and management, (6) learning resources, (7) learning community, and (8) student voice. Since these eight aspects were designed to measure campus-based learning experience, applying them to online learning experience proved challenging. Two research assistants were invited to code 30 comments independently to test their understanding of the coding system. Their disagreements and other problems can be summarized in the following four points, and the coding system was revised accordingly to ensure the reliability of coding.

First of all, two types of comments were excluded from further analysis. The first type is vague and short posts, which are too general to match any of the codes. For example, the comment “oh, my god” confused the reviewers as it shows neither a clear attitude of the learner nor a specific aspect of the learning experience. The post “good, awesome”, though showing the learner’s positive attitude, likewise relates to none of the eight aspects. The second type of excluded post mainly served as a reminder for self-motivation, self-encouragement, or self-regulation. The intended readers are the learners themselves (not instructors). For example, one learner commented that “the (MOOC) teachers teach me online, but I need to pay real efforts offline.” It looks like a meta-cognitive strategy that reminds the learner of the importance of combining online and offline learning strategies to achieve the learning goals.

Secondly, some posts look like self-reflections rather than a direct evaluation of either teaching or course design. They provide more insight into what MOOC learners expect from course designers and providers. For example, one post suggests that MOOC instructors may include more post-video tasks or exercises to help learners revise or apply what they learn on the MOOC platform. In other words, it can be coded as “learning opportunity” in a negative sense. Therefore, we added the polarity of comments (positive or negative) as another perspective. The negative comments, or “recommendations for further improvements”, are particularly interesting. The learners tended to tone down their suggestions or recommendations in order to appear more acceptable or less threatening. In other words, negative comments here do not necessarily refer to criticisms; they may also be pedagogical suggestions to teachers or expectations that teachers will make pedagogical changes. For example, one learner commented: “Teacher’s instruction is clear and vivid. I can understand better if more details are included in the teaching of note-taking skills.”

Thirdly, taking into consideration or accommodating students’ individual needs is another popular demand or suggestion mentioned by the learners. This study labeled such comments as “student voice”. For example, one post stated “it guides learners from the easiest to very difficult skills and therefore it helps to lay a solid foundation for beginners, like me. Otherwise, I cannot catch up with the content”. It highlights that beginners’ weak foundations in interpreting and translation practice are well accommodated by the course design and instructions. MOOC learners’ diversified needs are evidenced by the fact that some learners enrolled on this course to improve their general English proficiency (using the multimedia resources as listening materials). Finally, the coding system of the data is presented in Table 1. Following the recommendations of similar studies on coding qualitative data from online discussion forums (Anderson et al., 2001; Goshtasbpour et al., 2020), most of the posts were assigned more than one code. Each post was assigned a post ID, and two reviewers were trained to understand the proposed seven aspects of the learning experience on the MOOC platform.
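
The coding scheme described above, in which each post carries a post ID and may receive more than one code, each with a positive or negative polarity, can be pictured as a small data structure. The following Python sketch is illustrative only: the class and field names (`Code`, `CodedPost`, `polarity`) and the example post are hypothetical, the aspect labels follow the seven dimensions listed in the Introduction, and the coding shown is not the authors’ actual coding of any comment.

```python
from dataclasses import dataclass, field
from typing import Set

# The seven aspects named in the Introduction (labels as used in this sketch).
ASPECTS = (
    "instructions",
    "learning outcomes",
    "learning resources",
    "learning opportunities",
    "learning community",
    "organization and management",
    "student voice",
)


@dataclass(frozen=True)
class Code:
    """One code attached to a post: an aspect plus a polarity."""
    aspect: str
    polarity: str  # "P" = positive comment, "N" = negative comment or suggestion

    def __post_init__(self):
        if self.aspect not in ASPECTS:
            raise ValueError(f"unknown aspect: {self.aspect!r}")
        if self.polarity not in ("P", "N"):
            raise ValueError(f"polarity must be 'P' or 'N', got {self.polarity!r}")


@dataclass
class CodedPost:
    """A learner comment identified by a post ID; it may hold several codes."""
    post_id: str
    codes: Set[Code] = field(default_factory=set)

    def add_code(self, aspect: str, polarity: str) -> None:
        # A set keeps each (aspect, polarity) pair at most once per post.
        self.codes.add(Code(aspect, polarity))


# Hypothetical post that praises the teaching and also asks for more exercises.
post = CodedPost(post_id="post-001")
post.add_code("instructions", "P")
post.add_code("learning opportunities", "N")
print(sorted((c.aspect, c.polarity) for c in post.codes))
```

Storing the codes as a set reflects the multi-code assignment described above while preventing the same aspect and polarity from being counted twice for a single post.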

Table 1. Coding System.

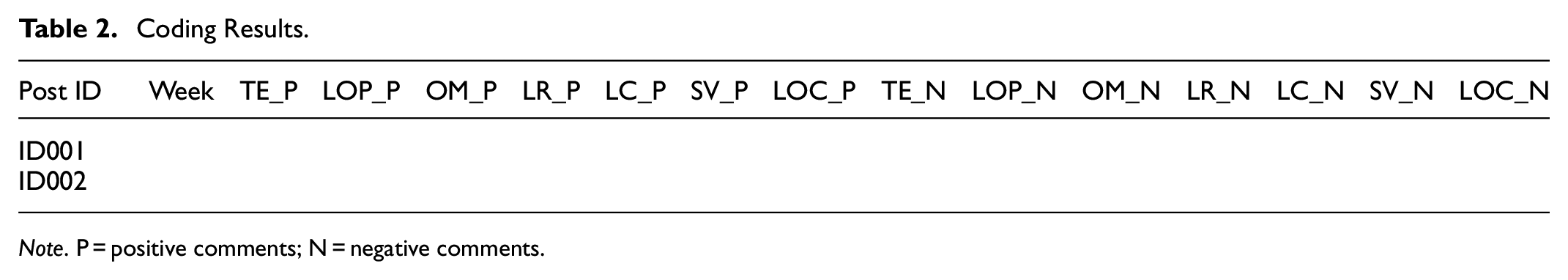

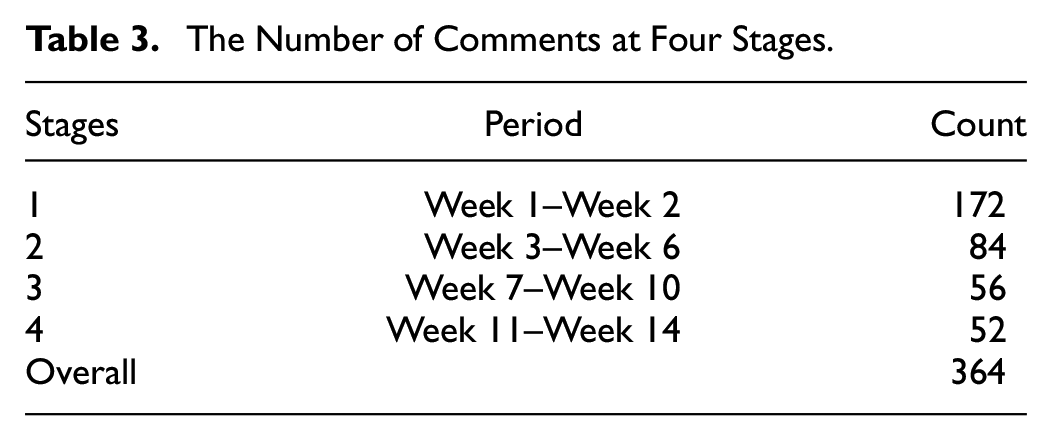

The results of the coding work, after the two research assistants had approved each other’s coding, are presented in Table 2. To answer the first research question, the frequency of each code was calculated to identify the positive and negative areas most frequently mentioned by MOOC learners. Two MANOVA tests were conducted to test the associations between comments (positive and negative) and the four periods of time. Each period consists of roughly 3 weeks (Table 3). A significant association between comments and the four periods of time may suggest that learners with a longer engagement with the MOOC pay attention to, or give suggestions on, different areas of their learning experience.

Table 2. Coding Results.

Note. P = positive comments; N = negative comments.

Table 3. The Number of Comments at Four Stages.

After the areas with significant changes were identified, the first author and one research assistant collaborated to analyze the comments relating to those areas. Both inductive and deductive methods were used to develop the coding scheme. The original items under each category served as the initial coding scheme, and phrases that emerged repeatedly in the comments were also flagged as possible sub-scales. Taking student voice as an example, the initial coding system includes: give feedback to instructors, staff values my opinion, staff acted on my feedback, student union, and student community. The coding of the comments was later shared with another research assistant for double checking, with any discrepancies resolved by the corresponding author.

Findings

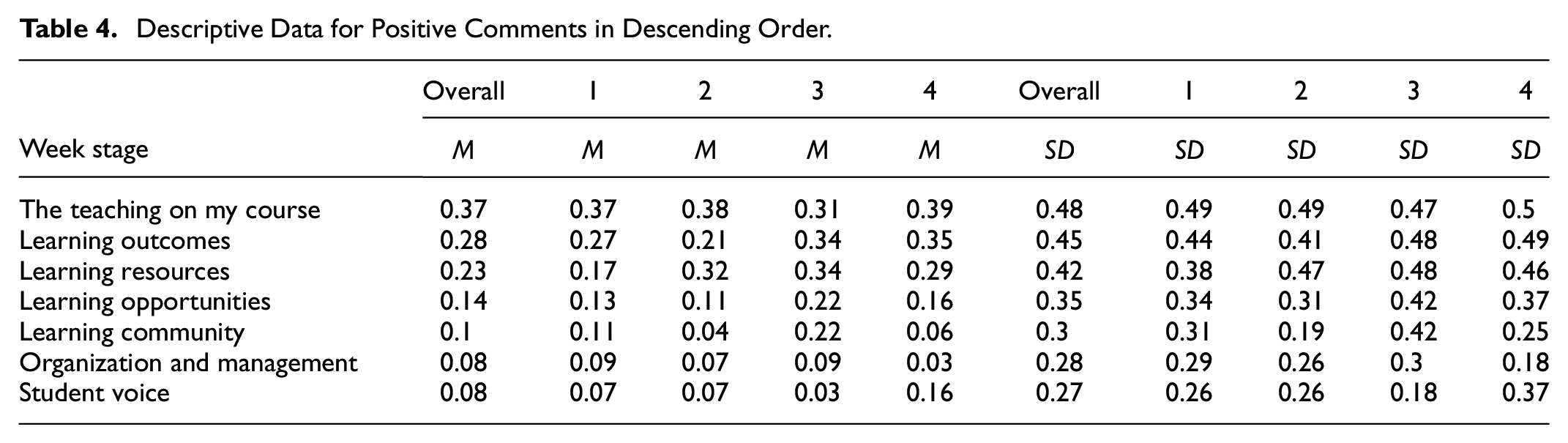

Overall, MOOC participants left more positive comments on three areas of their learning experience (the teaching on my course, learning outcomes, and learning resources), while they left more negative comments or suggestions on learning opportunities, student voice, and organization and management. This may indicate that they were more satisfied with the instructional side of the MOOC, and asked for more guidance and opportunities to develop their self-directed learning strategies, with assistance in terms of course design and the difficulty level of learning materials (Tables 4 and 5).

Table 4. Descriptive Data for Positive Comments in Descending Order.

Table 5. Descriptive Data for Negative Comments in Descending Order.

To answer the second research question, two follow-up MANOVA tests were conducted, with course stage (four stages) as the independent variable and comments in the seven positive areas and the seven negative areas as the dependent variables (Table 6). The association between positive comments on the seven areas of the MOOC learning experience and the four stages was significant (F = 1.716, df = 21, Pillai’s Trace = 0.115, p < .05, partial η² = .038), indicating that learners’ compliments on their MOOC experience varied across the stages of the course. The univariate F tests showed a significant difference among the four stages for positive comments on two specific areas: learning resources (F = 3.319, df = 3, p < .05, partial η² = .032) and learning community (F = 2.721, df = 3, p < .05, partial η² = .045), with a moderate effect size. This suggests that the longer learners stayed with the MOOC, the more likely they were to comment positively on the availability of a diverse and wide range of learning and assessment opportunities. It can be interpreted that learners at the later stage of the MOOC are more willing to spend time engaging, independently and collaboratively, with the wide range of resources and peers on the MOOC platform.

Table 6. Multiple Comparisons.

Moreover, the association between negative comments on the seven areas of the MOOC learning experience and the four stages was also significant (F = 1.661, df = 21, Pillai’s Trace = 0.112, p < .05, partial η² = .031), indicating that learners’ complaints, suggestions, or worries about their MOOC experience varied across the stages of the course. The univariate F tests also showed a significant difference among the four stages for negative comments on two specific areas: learning opportunities (F = 6.033, df = 3, p < .01, partial η² = .001) and student voice (F = 3.439, df = 3, p < .05, partial η² = .033), with a relatively small effect size. This finding indicates that learners at the later stage had more concerns over the design of tasks and exercises on the MOOC platform.
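
For readers less familiar with the statistics reported in the two preceding paragraphs, the standard textbook definitions are sketched below. These are general formulas, not a reconstruction of the authors’ computation; the symbols (the hypothesis and error sums-of-squares-and-cross-products matrices H and E, the number of dependent variables p, and the degrees of freedom) are notation introduced here for illustration.

```latex
% Pillai's trace for a MANOVA effect, with hypothesis SSCP matrix H
% and error SSCP matrix E:
V = \operatorname{tr}\!\left[\mathbf{H}\,(\mathbf{H} + \mathbf{E})^{-1}\right]

% A commonly reported multivariate partial eta squared based on Pillai's trace,
% where s = \min(p,\ df_{\text{effect}}):
\eta_p^2 = \frac{V}{s}

% Partial eta squared for a univariate F test:
\eta_p^2
  = \frac{SS_{\text{effect}}}{SS_{\text{effect}} + SS_{\text{error}}}
  = \frac{F \cdot df_{\text{effect}}}{F \cdot df_{\text{effect}} + df_{\text{error}}}
```

As an illustration of the second formula, with seven dependent variables and three degrees of freedom for stage, s = 3, so a Pillai’s trace of 0.115 corresponds to 0.115 / 3 ≈ .038.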

Apart from the analysis of the changing patterns among the seven investigated areas of expectations on their MOOC learning experiences, content analysis of MOOC learners’ comments offers more insight on these areas and the relationships among them. This part addresses the sub-areas in MOOC learners’ expectations on learning resources, learning opportunities, learning community, and student voices.

In relation to learning resources, apart from general comments, three themes emerge: (1) task design or course design (15 counts), (2) attractiveness and helpfulness of the content (11 counts), and (3) the quantity and diversity of the resources (6 counts). As for task or course design, positive comments address four areas: the gradual weekly increase in difficulty, the inclusion of interactive components (i.e., discussion forums and Q&A sessions), the presentation of the structure and outline of the course, and videos with high-quality solutions. The most frequently mentioned negative comments or suggestions concerned the small number of exercises supervised by the instructors. As for the attractiveness and relevance of the content, most of the positive comments stated that the tasks and examples in the MOOC resemble professional practice and are therefore very relevant to learners’ future career development. Helpfulness here refers to two things: animations and subtitles in the videos, and teachers’ instructions on learning strategies in this MOOC. Finally, the quantity and diversity of the learning resources refer to (1) the systematic review of interpreting techniques and knowledge and (2) detailed explanations of the techniques and knowledge with examples.

Although the number of comments on the learning community is small, this area was mentioned significantly more frequently in the later stage of the MOOC. The comments on the learning community mainly address two areas: (1) co-instruction between instructors and classmates (5 counts) and (2) changes in the level of motivation and goal setting as a result of peer feedback and sharing work with each other (5 counts). Interestingly, the idea of co-instruction between teachers and learners was proposed by three learners as a solution to the limited number of explanations and examples in the online learning resources. Examples submitted and explanations offered by peers are highly appreciated by MOOC learners at a later stage. Moreover, the performances and presentations of peers serve as exemplars for other learners to study and follow.

Thirdly, learning opportunities do not simply refer to opportunities to study and practice interpreting skills. They include four themes: learning opportunities for improving (1) interpreting skills and proficiency (21 counts), (2) general knowledge and skills without mention of specific areas (12 counts), (3) learning skills or self-regulated learning strategies in a MOOC environment (10 counts), and (4) academic language skills, for example, English speaking and listening skills (5 counts). Apparently, some MOOC learners treated this interpreting MOOC as a way to practice their academic English skills and self-regulated learning skills. Interestingly, most of the negative comments or suggestions on learning opportunities address the same issue: the lack of instructions and guidelines to help MOOC learners study in the MOOC environment. For example, one learner complained that the units in the second half of the course presented very challenging tasks, which were too difficult for him or her to follow at home. Another learner suggested that the instructors should show him or her where and how to find extra exercises aligned with each week’s curriculum, as he or she wanted to practice the skills taught in the course after watching the videos on the platform.

Last, student voice in classroom instruction refers to teachers or administrative team members taking into account students’ concerns and suggestions when designing and delivering their courses. However, it means something slightly different in the context of MOOCs, where it concerns the extent to which the design and delivery of the course match students’ expectations or preferences. The comments in relation to student voice refer to four themes. Firstly, it refers to the need for pre-existing knowledge to understand the courses (12 counts). For example, one learner commented that “It is a great course for me to study after work and I think I can do translation work later better and it spends less time on explaining the theoretical part”. Thirdly, it refers to the degree of match between the design of the MOOC and learners’ preferred way of learning in the MOOC environment (11 counts). For example, one comment stated “I am slowing in learning and taking notes of online materials and teachers’ instructions. This MOOC is too fast and I cannot catch with it”. Lastly, it refers to the degree of match between the difficulty level of instructions and learners’ language proficiency (11 counts).

Discussion

To answer the research questions, overall, MOOC learners complimented the teaching on the course, learning outcomes, and learning resources more frequently than other aspects of their learning experiences, while their negative comments and suggestions for improvement focused on learning opportunities and student voice more than on other aspects. In addition, this study found that MOOC learners left significantly more comments on the following four areas at the later stage than at the early stage of the MOOC: learning resources, learning community, learning opportunity, and student voice. This suggests that learners at a later stage of a MOOC are looking for a learning environment which supports self-assessment and peer feedback practice, and which matches their personal needs and expectations. Moreover, qualitative thematic analysis suggests that learning resources mainly addresses three areas: the design of the tasks, the helpfulness of the resources, and the quantity and diversity of the resources. Learning community largely refers to two areas: co-instructions, and peer feedback and assessment. Learning opportunities refers to the tasks which improve professional knowledge, self-regulated learning strategies, and language proficiency. Last, student voice here indicates four types of mismatches between learners and course features: the need for pre-existing knowledge to study a MOOC, learners’ personal goals and the teaching objectives of a MOOC, the learning strategies preferred by MOOC designers and by learners, and the difficulty level.

To start, although these identified differences cannot simply be interpreted as evidence for a change in preferred instructional strategies in MOOCs, this study confirms that MOOC learners hold different expectations of their instructors and instructional strategies at different stages of a course. Dynamic patterns in student evaluation of teaching practice have not been widely reported in the context of MOOCs; however, they have been discussed in face-to-face settings. For example, Wei et al. (2021) reported that students’ changing expectations of teachers’ feedback and feedforward practice may be the result of students’ increasing level of feedback literacy as well as other contextual factors, such as the decreasing level of input from their teachers. Consequently, the interaction pattern between instructors and students moves slightly away from an information transmission model toward a model in which shared responsibility is emphasized (Winstone et al., 2020), and learners’ continued intention to learn with MOOCs is more likely to be sustained (Joo et al., 2018). This study offers an explanation for the contradictory findings of previous studies: some suggested that a strong instructor presence has a positive impact on learning outcomes and retention rates (Pi et al., 2017), while others advocated greater use of learning communities and collaborative learning tasks to maintain MOOC learners’ engagement and their intention to stay with e-learning platforms (S. Watson, 2017).

Secondly, the four identified areas reflect students’ demand for more guidance on self-directed learning and more support for developing learner autonomy strategies, a finding that looks promising even though the effect sizes are only small to moderate. This can be interpreted from at least two perspectives. On the one hand, it demonstrates MOOC learners’ lack of metacognitive skills in managing their learning process alone, just as D. Zhang and Perez-Paredes (2021) found in their study, where only a small number of learners were capable of evaluating and selecting online learning materials and the rest therefore relied on recommendations from instructors and social media. On the other hand, a small number of learners may develop their self-directed learning skills, self-efficacy in learning with MOOCs, and internet navigation skills in managing their learning journeys (N. Li et al., 2014). In other words, MOOCs provide an opportunity for some learners to develop, especially those who are strongly self-motivated to stay until the later stage or even the end of the course. This finding echoes the conclusion of previous MOOC literature that users’ accumulated learning experience with online courses is a significant predictor of their use of metacognitive learning strategies, such as goal setting and environment structuring (K. Li, 2019). However, two issues have been reported: the proportion of such learners can be as low as 4.3% (Agonacs et al., 2020), and among those learners who are willing to experiment with learning autonomy strategies, a large degree of variation can be observed (Ding & Shen, 2022; Meek et al., 2017).

Finally, as evidenced by the large number of comments in relation to MOOC instructors and instructional strategies, the role of instructors in MOOCs should not be underestimated. This may be explained by learners’ reluctance to become more active and self-regulated: a majority of students may rely heavily on instructors’ guidance and supervision in a MOOC, and most of them can be described as viewers with very low levels of engagement and interaction with online materials (Martin-Monje et al., 2018). This finding extends researchers’ conclusions about technology-assisted language learning to the context of language MOOCs. For example, Lai’s (2015, 2017) studies depicted the role of instructors in training, regulating, and supporting language learners’ motivation and use of learning strategies, and in establishing learning communities in and outside the classroom. This study argues that, with sustained support and guidance from instructors, MOOC learners may start to build up the confidence to play a more active role in planning and evaluating their own learning process and outcomes.

Conclusion

To conclude, this study suggests that MOOC learners at a later stage are looking for a learning environment which supports self-assessment, peer feedback, and coaching to fulfil their personal needs and expectations. MOOC learners left significantly more comments on four areas at the later stage compared with those at the early stage: learning resources, learning community, learning opportunity, and student voice. Learning resources addresses three areas: the design of the tasks, the helpfulness of the resources, and the diversity of the resources. Learning community largely refers to peer feedback and co-instructions of peers and instructors. Learning opportunities refers to the tasks which improve professional knowledge, self-regulated learning strategies, and language proficiency. Last, student voice indicates three types of tensions: the need for pre-existing knowledge to study MOOCs, learners’ diversified personal goals and the teaching objectives of MOOCs, and the learning strategies preferred by MOOC designers and by learners.

This study’s contributions to the MOOC literature can be summarized from the following three perspectives. First of all, most MOOC evaluation studies have relied on data from end-of-course student evaluation surveys, which presumes that learners’ satisfaction with and evaluations of a MOOC rarely change over the duration of the course. This study, however, collected students’ evaluative comments at different stages of the course and therefore captured the dynamics of their evaluations. Secondly, this study treated the MOOC experience as a multidimensional construct. Instead of using one single item to measure learners’ satisfaction level, this study borrowed the UK National Student Survey to evaluate their learning experience from seven dimensions. Last, MOOCs in translation and interpreting have rarely been investigated before. However, research on conventional translation and interpreting courses has demonstrated the effect of metacognition on interpreting performance and suggested the use of metacognitive tools, such as self-assessment and peer feedback, to facilitate students’ self-monitoring and self-regulation of their learning process (Dogan et al., 2009). The conclusion also applies to online interpreting training. Our study suggests that the designers and instructors of translation MOOCs need to consider the changes in students’ expectations over the course, better accommodate their personal needs, and provide suitable tools to help develop their metacognitive learning strategies.

The limitations of this study cannot be ignored. First of all, we only collected data from the largest cohort of students in this MOOC; therefore, the findings need to be tested with more cohorts of learners in the future. Secondly, to better understand the changes in MOOC learners’ expectations of instructors and courses, a longitudinal study could be conducted to track a small number of learners over a long period of time, drawing on a wide range of cultural backgrounds or contexts rather than only the currently popular ones such as the USA, China, and Spain (Zhu et al., 2020). Thirdly, the effect of changing expectations on learning outcomes was not discussed. For ethical reasons, we did not have access to learners’ performance in the course. Thus, we are unable to explore how changing expectations affected the development of learners’ translation and interpreting competence.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research is funded by the Higher Education Department of the Ministry of Education, China (Certificate No. 2020110576, Online Open Course Project, Jiaogaohan (2020) No.8).

Ethical Approval

This study does not include any experiments with human participants.