Abstract

Little is still known about how teachers organize classroom dialog to foster students’ intellectual contributions to classroom talks. The current study developed a coding scheme to clarify science teacher questions and identify how different questions affect students’ talk productivity. The participants were 28 fifth-grade students and a science teacher who conducted argument-based implementations. The verbatim transcriptions were analyzed through a systematic observation approach. The teacher elaborated on the students’ background reasoning in a metacognitive learning setting. In addition, the teacher assigned the students as co-evaluators of the credibility of the presented ideas. The teacher invited the students to re-consider their ideas’ explanatory power by posing discrepant questions. Moreover, the students had to propose justified claims once they were requested to support their propositions with ample and proper data. The teacher used his questions to guide the students to handle basic process skills and make inferences regarding the natural phenomena under consideration. The legitimating, discrepant, and justified talk questions fostered the students’ talk productivity more than the eliciting, metatalk, process skills, and inference questions. Recommendations, framed around the notion of teacher noticing, are offered regarding the relationship between questions asked in science classrooms and students’ talk productivity.

Introduction

Teachers ask questions for different instructional purposes (e.g., scaffolding students to form concepts, improving their reasoning and communicative capabilities in science classrooms; Chin, 2007; Kawalkar & Vijapurkar, 2013; Molinari & Mameli, 2013; Oliveira, 2010; Smart & Marshall, 2013). Teacher questions may be used as a monitoring mechanism. Teacher questions can guide students to be aware of on-the-fly situations of classroom talks (Alexander, 2005, 2006). Some specific typologies of teacher questions may support or hinder students’ intellectual contributions to classroom talks (Lee & Kinzie, 2012). Science teachers may use particular questioning strategies to create meaningful learning opportunities for students (Chin, 2006, 2007). This link between how teachers talk and how students think is called the discourse-cognition relation (Gee & Green, 1998), which is explored in depth in the present study.

Theoretical Framework: Teacher Questions and Students’ Talk Productivity

Teacher questions can be classified along three dimensions: structural, functional, and cognitive demand. In terms of structural qualities, teacher questions can be open-ended or close-ended. Open-ended questions trigger alternative interpretations and require conceptual variations in responses, resulting in students’ higher-order reasoning. Open-ended questions are not posed in an evaluative manner and mostly ask for elicited (Martin & Hand, 2009) or justified responses (McNeill & Pimentel, 2010). Close-ended questions are displayed to receive predetermined answers. Pervasive usage of close-ended questions legitimates a conventional classroom game: “know what that in my mind is!” (Oliveira, 2010). Close-ended questions may not be cognitively demanding since science teachers call for prescriptive responses favoring a specific thinking and talking system as school science social language (Soysal, 2018a). As van Booven (2015) showed, open-ended questions permit dialogical verbal exchanges where alternative/contradictory views are contrasted. Close-ended questions lead to monological classroom talks that are mostly teacher-introduced and impose a single point of view.

In terms of the discourse-cognition relation, open-ended questions have been found to scaffold students in constructing sophisticated arguments and achieving reasoned discourse (Martin & Hand, 2009; McNeill & Pimentel, 2010; Pimentel & McNeill, 2013). On the other hand, close-ended questions may not create a dialogic space for students to externalize their ideas, and their responses’ duration can be less than five minutes in the presence of frequent close-ended questions (Lefstein et al., 2015). Boyd and Rubin (2006) disagree with the above-stated arguments regarding the divergence between open-ended and close-ended questions in fostering cognitive contribution. They showed that students’ talk productivity depends more on contingent questions, which are posed based on the content of a student-led response. If a follow-up question aims to consider and use a respondent’s primary conceptual intention, this would stimulate deeper thinking and result in higher talk productivity. The open-endedness or close-endedness of question-asking behavior is essential, but questions should also be contingent. Molinari et al. (2013) observed that contingent questions are one of the essential indicators of sophisticated talk productivity.

Science teachers may ask low (clarification and elaboration; Kawalkar & Vijapurkar, 2013; van Booven, 2015) and high (requesting students to comment on others’ propositions; Chin & Osborne, 2008) cognitively demanding questions. A science teacher may urge students to criticize a presenter group’s experimental inferences (Christodoulou & Osborne, 2014). This request would necessitate a higher cognitive operation to analyze experimental inductions, compose criteria and standards for an evaluation, and decide whether experimental outcomes are credible and acceptable (Soysal, 2018b).

For science teachers, question-asking may be twofold. On the one hand, science teachers have to ask highly intellectually demanding questions for higher-order reasoning and concept formation. On the other hand, science teachers should display low cognitively demanding questions to keep students away from an excessive cognitive load (Kirschner et al., 2006) that may hinder science learning. In this context, Chin (2006) proposed the cognitive ladder, which implies that science teachers should first ask low cognitively demanding questions. Then, after elaboration sessions in which students’ alternative meanings regarding science concepts are discussed, science teachers may ask high cognitively demanding questions.

Science teachers may enact revoicing questions by rephrasing/re-formulating a student utterance to make it more understandable. Revoicing supports students’ talk productivity (Chapin et al., 2003). Once a teacher enacts revoicing questions, students are charged to modify, revise, or change their speeches to make them public/common. This may create a discursive space for interthinking that precedes classroom talk productivity (Mercer, 2019). A revoicing question may decrease the cognitive load of students with weak verbal abilities; in turn, they contribute to classroom talks intellectually (Chapin et al., 2003). Science teacher questions should trigger and sustain a student-student interaction pattern in which students are assigned as primary evaluators of presented ideas (Pimentel & McNeill, 2013; van Zee & Minstrell, 1997a, 1997b). In addition, science teacher questions should pose discrepancies to students, who then resolve conceptual, epistemological, or ontological dilemmas regarding science topics considered in the science talks (Brown & Kennedy, 2011; Christodoulou & Osborne, 2014; Oh & Campbell, 2013). Science teachers also use their questions to guide students to make rigorous critiques of classmates’ ideas (McMahon, 2012; Soysal, 2018a).

Justification for the Study

The current study is important in several respects for explicating the discourse-cognition relation. This study presents a research-based typology of teacher questions that may be useful for professional learning and future research analysis. Describing the typology of questions and estimating their influence on talk productivity would move the field forward because documenting and clarifying a phenomenon to be explained is the first step in theory building (Borsboom et al., 2021). Previous research mostly used observations of a few classroom practices or performed classroom discourse analyses through video-based datasets obtained from short-term in-class courses. The current study accepts that science learning occurs over time (Mercer, 2008; Soysal & Yilmaz-Tuzun, 2021) and recommends a longitudinal perspective (e.g., Chen et al., 2017). Thus, the questions asked by an experienced science teacher were analyzed from the video-based data obtained during a school term with more than one in-class implementation.

In the systematic review of Howe and Abedin (2013), none of the reported studies was conducted in the Turkish context in which the current study was conducted. This suggests that science classroom discourse analysis is a rare practice in Turkey, although it is crucial for fostering the quality of science discourse. The critical message of Howe and Abedin (2013) is that much more should be known about which specific modes of organization are more beneficial than others. This requires examining science teacher questions with elaborated coding catalogs in a longitudinal manner, as adopted in the current study. The majority of science teachers may have restricted knowledge regarding question types and their effects on the productivity of student talk. Science teachers and educators can use outcomes obtained from the current study for professional development processes. Therefore, in terms of launching teacher awareness/noticing (Barnhart & van Es, 2015; Erickson, 2011), the outcomes of the current study about the discourse-cognition relation may be guiding. Two research questions are addressed:

What types of questions did the teacher ask in the science classroom during the argument-based inquiry activities?

How and to what extent did the teacher questions affect the talk productivity of the students?

Methods

Research Approach

The current case study presents a science classroom discourse analysis (Mercer, 2008). A case study portrays an in-depth qualitative picture of an individual, program, group, or practice (Stake, 2011). In the current study, each in-class implementation was a case since each had its thematic scope and discursive context where diversifying verbal interactions occurred. Each in-class activity observed in the current study had its science content that shaped the context of the social negotiations. Therefore, with the case study approach, it was observed how verbal interactions between the teacher and students, which reflected a linguistic orientation and were driven by social exchanges, affected the students’ talk productivity.

Participants, Research Context, and Role of the Researchers

The participants were 28 (Girl = 19; Boy = 9) fifth-grade students and a teacher with 22 years of experience. The students were 11 to 12 years old. The school was located in a district of one of the metropolitan cities of Turkey, which was at a middle socioeconomic level. For example, since the school had no dedicated facility for laboratory activities, the experiments were carried out in the classroom. The students had socio-cultural-economic status ranging from middle to low. The teacher had been employed for 19 years in the school. The teacher was involved in an international project, in which the researchers were also involved, to increase the adaptation of science teachers to student-centered classroom practices. The teacher participated in the in-service training processes that lasted for 1 year and were repeated six times in depth at the university that hosted the project. The primary purpose of in-service training was to contribute to the teacher’s adoption of the Argumentation-based Inquiry (ABI; explained in the following section) approach. The researcher worked closely with all the participating teachers (n = 11), had the opportunity to observe them systematically, and decided that the participant teacher could be a contributing co-researcher to investigate the discourse-cognition relation in asking questions. The participant teacher showed greater motivation to perform ABI practices effectively. The participating teacher and researchers established close interaction, and the teacher received professional-pedagogical support from other researchers in the project.

Selection of the ABI Implementations

The ABI is a learner-centered instructional tool accompanied by writing activities, in which learners create their arguments in science practices by collecting, analyzing, and interpreting data. In the ABI, learners conduct reasoning processes intensively and derive their evidence from the data while analyzing the data sets (Weiss et al., 2021). The primary purpose is to guide learners to compose and test their claims in the question-claim-evidence cycle (Weiss et al., 2021). As the learners add their reasoning to the data sets, they produce the evidence, which is the epistemology of ABI, formulated as “data + reasoning = evidence” (Cavagnetto & Hand, 2012; Cavagnetto, 2010). In the current study, it is accepted that producing evidence should not be considered independently of reasoning processes (e.g., “claim-evidence-reasoning”; Sampson, Grooms, & Walker, 2011); the students were, therefore, asked to create evidence. The presumable relationships between the teacher questions and student talk productivity were explored in the ABI settings.

Five ABI implementations were observed (Heat and temperature (189 minutes); Substances (113 minutes); Shadow formation (145 minutes); Force and motion (188 minutes); Change of state (198 minutes)) and conducted by considering the intended elementary science curriculum’s objectives. Before the implementations, the teacher and researchers planned and conducted preliminary ABI sessions around various science subjects and grade levels. These pilot studies were carried out before the main implementations that formed the study’s verbal data corpus. Observations obtained from the pilot implementations (n = 8; 946 minutes) constituted a feedback source for further implementations. The researchers were in the classroom during a school year. However, only five implementations were included in the classroom discourse analysis processes because, in these implementations, there were more teacher-student and student-student patterns of interaction, or the teacher displayed more questions than in the others. In addition, the researchers’ in-class pedagogical support to the teacher was at the lowest level or absent in the selected five ABI implementations.

In-Class Implementations

The ABI implementations were carried out in three phases.

Phase-1: Introducing alternative ways of thinking and talking about a natural phenomenon

In this phase, the teacher initiated the discussions, stated the focus and scope of the negotiations, and expanded the learners’ ideas about the subject. The main instructional purpose was to invite the students to notice that their (common-sense) reasoning may be incomplete in illustrating nature’s mechanics. The teacher showed the contradictory points in the students’ utterances by asking specific questions and constantly reconstructing his questions based on the answers provided by the students. The teacher persuaded the students that other explanation systems (scientific language and thought) could be an alternative to their everyday understanding (Mortimer & Scott, 2003; Soysal, 2018a). The teacher and the researchers decided that three types of the students’ alternative conceptions, conceptual, epistemological, and ontological, could occur. The teacher finally reminded the students that data collection, analysis, and interpretation could eliminate the conceptual contradictions.

Phase-2: Experimenting

In the previous phase, the students wrote a researchable question whose presumable answer they were curious about. The students were engaged in data collection, analysis, and interpretation at the experimenting stage to respond to their conceptual, epistemological, and ontological contradictions. The primary purpose was to execute original research by creating valid and reliable data sets and drawing inferences. The teacher checked the relevance of the research questions by visiting each group. Some groups had problems forming good research questions and placed the same variables in their research questions. The research variables that vary in a typical ABI practice are of great value in creating more profound science learning opportunities for students (Weiss et al., 2021). Therefore, the teacher provided group-based support to enable the students to deal with different questions. However, the students faced some difficulties in terms of how to handle an experiment. The students measured one variable and moved on to the other variables. Therefore, the teacher often reminded the groups that their results could lose credibility due to the limited number of trials.

Phase-3: The whole group presenting and discussing

After experimental processes, the students shared their evidence-based inferences. Audiences evaluated, criticized, or corrected each presenter group’s inferences. The groups investigating similar variables but reaching different results were invited to make presentations one after another. The groups that addressed more than one research variable were invited to present their findings last. Although some groups examined similar data sets, they reached different interpretations. The differentiation increased the depth of the negotiations. When the groups presented their research questions, claims, and evidence, the teacher demanded the audience evaluate the results, criticize, if necessary, and make suggestions to develop the experiments. The students made comments, elaborations, and evaluations on the relevance of the research questions, validity/reliability of data collection processes, and congruity between the claims and evidence.

Data Collection and Analysis

The ABI implementations were video recorded. The parents of the students and the teacher were informed before the video-based data collection. The parents signed consent forms on behalf of each student. The audio-visual data were transcribed verbatim. If needed, non-verbal interactions (gestures, intonations, and body language) were embedded in transcriptions. The transcribed speeches were analyzed through systematic observation (Mercer, 2010), incorporating coding and quantifying. Each teacher question and its corresponding student-led responses were individually coded. The teacher’s questions were coded regarding their discursive functions. The student utterances were coded to reveal the cognitive level (cognitive contribution) at which they were pitched and how this might be related to the discursive functions of the teacher questions. Then, analytically coded question types (discursive functions) and the students’ cognitive contributions were placed into higher-order categories. The quantities of the questions asked by the teacher and the cognitive contributions of the students were determined for each ABI implementation. Then, the relationships between discourse and cognition were estimated by constantly comparing the frequencies across the ABI implementations.

Two catalogs were used: “Teacher Questions Coding Catalog” (TQCC; Table 1) and “The Revised Bloom Taxonomy” (RBT). The TQCC incorporates 7 categories and 21 analytical codes. The TQCC was developed in both a data-driven and theory-laden manner (Mercer, 2010). While developing the TQCC, earlier studies on teacher questions were analyzed, and emerging codes obtained during the data analysis were added until the verbal data were saturated.

Teacher Questions Coding Catalog.

The RBT is an effective tool to determine instructional outcomes (Anderson et al., 2001; Krathwohl, 2002; Table 2). With the RBT, the students’ talk productivity was coded from the lowest to the highest level. The levels of the RBT have a hierarchy regarding students’ cognitive processing as follows: remember (low), understand (low), apply (medium), analyze (medium), evaluate (high), and create (high; Krathwohl, 2002). Science educators use the RBT to determine, for instance, the cognitive level of teacher questions (e.g., Kayima, 2016). The RBT incorporates some aspects of higher-order reasoning in general (identification, decision making, inference, explanation, interpretation, analysis, evaluation; Ennis, 2011; Facione, 1990) and is used in the context of science education in particular (Hand & Grimberg, 2009). The cognitive processes indicated in the RBT are consistent with science process skills such as observation, measurement, comparison, analysis, explanation, establishing cause-effect relationships, induction, and deduction (Hand & Grimberg, 2009; Soysal, 2018b). The cognitive process levels were pragmatically re-categorized for more representative systematic observations in the present study. More aggregated cognitive processes were presented as perception (“remember” + “understand”), conception (“apply” + “analyze”), and abstraction (“evaluate” + “create”).
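
The aggregation of the six RBT levels into three broader categories can be sketched as follows. This is only an illustration of the re-categorization described above, not the authors’ analysis procedure: the names `RBT_TO_AGGREGATE` and `productivity_shares` and the list of coded utterances are hypothetical.

```python
from collections import Counter

# Mapping of the six RBT levels to the three aggregated categories
# named in the text (perception, conception, abstraction).
RBT_TO_AGGREGATE = {
    "remember": "perception",
    "understand": "perception",
    "apply": "conception",
    "analyze": "conception",
    "evaluate": "abstraction",
    "create": "abstraction",
}

AGGREGATE_ORDER = ("perception", "conception", "abstraction")


def productivity_shares(coded_utterances):
    """Return the percentage of utterances in each aggregated category.

    coded_utterances is a list of RBT level labels, one per student utterance.
    """
    if not coded_utterances:
        raise ValueError("no coded utterances were supplied")
    counts = Counter(RBT_TO_AGGREGATE[level] for level in coded_utterances)
    total = sum(counts.values())
    return {cat: 100.0 * counts.get(cat, 0) / total for cat in AGGREGATE_ORDER}


# Hypothetical codes for four student utterances (not data from the study).
example_codes = ["remember", "understand", "apply", "evaluate"]
print(productivity_shares(example_codes))
```

Computing such shares separately for each implementation would give the kind of percentage distribution that is later compared across implementations.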

The Revised Bloom for Identifying the Students’ Talk Productivity.

For more rigorous statistical inferences, z-scores were used for each variable (question type and talk productivity) by taking observed values, subtracting the mean of all observations, and dividing the result by the standard deviation of all observations. Z-score is instrumental in identifying tendencies between two or more means (Field, 2013). Numeric distributions used for the question types and talk productivity were translated into z-scores as a new distribution with a mean of “0” and a standard deviation of “1.”
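
The standardization described above can be sketched in a few lines of Python. This is a minimal illustration rather than the authors’ analysis script: the function name `z_scores` and the input values are hypothetical, and the default use of the sample standard deviation (n − 1 denominator) is an assumption, since the text does not state which estimator was applied.

```python
from statistics import mean, pstdev, stdev


def z_scores(values, sample=True):
    """Standardize values: subtract the mean, divide by the standard deviation.

    sample=True uses the n - 1 denominator (an assumption; the study does not
    say which estimator was applied); sample=False uses the population form.
    """
    if len(values) < 2:
        raise ValueError("at least two observations are required")
    center = mean(values)
    spread = stdev(values) if sample else pstdev(values)
    if spread == 0:
        raise ValueError("all observations are identical; z-scores are undefined")
    return [(v - center) / spread for v in values]


# Hypothetical percentages of one question type across five implementations
# (illustrative values only, not data from the study).
hypothetical_shares = [30.0, 45.0, 25.0, 40.0, 35.0]
for share, z in zip(hypothetical_shares, z_scores(hypothetical_shares)):
    print(f"{share:5.1f}% -> z = {z:+.2f}")
```

A value with a z-score above +1 or below −1 lies more than one standard deviation from the mean of the five implementations, which is how the z-scores are read in the findings.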

Trustworthiness of the Study

Two researchers participated in the coding procedures. For the coding conducted with the TQCC, the initial interrater reliability was .74, as the coders assigned some discordant codes. Through discussion, the researchers reached agreement on the inconsistent codes. Secondary intercoder reliability was .88 for identifying the types of teacher questions. The same procedures were conducted to code the students’ talk productivity. While using the RBT, there was inconsistent coding, particularly in distinguishing a student utterance at the analyze level from another pitched at the evaluate level. The initial reliability coefficient was .78 for the RBT-based coding; then, the researchers constantly compared discordant codes. The final reliability coefficient found for the RBT-based coding was .91. For validity, a peer debriefing strategy was used in which three external auditors, all classroom discourse analysts, intensely scrutinized the data collection, analysis, interpretation, and reporting processes. The external auditors had no connection to the current study. A member-checking strategy was also used by sharing and re-interpreting the initial outcomes of the data analysis and research outcomes obtained from the systematic observations with the participant teacher.
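
The text does not name the reliability statistic behind the reported coefficients. As one common option for two coders assigning categorical codes, Cohen’s kappa could be computed as sketched below. The choice of kappa is an assumption made here for illustration, and the function name `cohens_kappa` and the example codes are hypothetical.

```python
from collections import Counter


def cohens_kappa(coder_a, coder_b):
    """Cohen's kappa for two coders who labeled the same sequence of items."""
    if len(coder_a) != len(coder_b) or not coder_a:
        raise ValueError("both coders must label the same non-empty set of items")
    n = len(coder_a)
    # Observed agreement: share of items given the same code by both coders.
    observed = sum(a == b for a, b in zip(coder_a, coder_b)) / n
    # Chance agreement: product of the coders' marginal proportions per code.
    freq_a, freq_b = Counter(coder_a), Counter(coder_b)
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a.keys() & freq_b.keys()) / n**2
    if expected == 1.0:
        return 1.0  # both coders used a single identical code throughout
    return (observed - expected) / (1.0 - expected)


# Hypothetical codes assigned by two coders to four teacher questions.
first_coder = ["eliciting", "eliciting", "discrepant", "discrepant"]
second_coder = ["eliciting", "eliciting", "discrepant", "eliciting"]
print(cohens_kappa(first_coder, second_coder))
```

Unlike simple percent agreement, kappa discounts the agreement expected by chance, so it is usually lower than the raw proportion of matching codes.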

Findings

Types of the Teacher Questions

As clarified and exemplified in Table 1, the teacher enacted diversifying question types (7 higher-order categories and 21 sub-categories). The systematic observations showed that, on average, the teacher used the eliciting questions (36.14%) dominantly among others. Secondly, nearly 2 out of the 10 questions were allocated to the process skills category (18.44%). Moreover, about 1 out of 10 questions of the teacher were displayed under the legitimating (10.42%), discrepant (11.48%), or justified talk (10.74%) categories. The teacher questions under two types, the metatalk (7%) and inference (5.78%), were less enacted.

The Presumable Patterns Observed for the Discourse-Cognition Relation

As seen in Figure 1, the students’ talk productivity differed across the ABI implementations. The students’ talk productivity stayed mainly at the perception level. In particular, many student utterances were pitched at the perception level in the substances (91.4%; z-score: +1.35, more than 1 SD above the mean) and force and motion (72%; z-score: +0.51) implementations. This implies that there might be less talk productivity in these two implementations. In terms of the abstraction level, two ABI implementations (shadow formation: 31%; z-score: +0.80; and change of state: 35.9%; z-score: +1.14, more than 1 SD above the mean) stood out. In these two implementations, the students had more discursive space to achieve higher-order reasoning compared to the others. Even though the heat and temperature implementation seemed to be more instrumental in triggering the students’ talk productivity than the substances and force and motion implementations, it was not better than the change of state and shadow formation implementations in terms of sustaining dialogic space for the students’ higher talk productivity. Figure 2 displays detailed comparative representations of the discourse-cognition relations across the implementations. A decreasing trendline (low talk productivity) was observed in the substances and force and motion implementations. On the other hand, an increasing trendline (high talk productivity) was detected for the shadow formation and change of state implementations. Finally, a flatter trendline (moderate talk productivity) was observed for the heat and temperature implementation.

Effects of the types of questions on the student talk productivity.

The presumable effects of the different teacher questions on the students’ talk productivity across the ABI implementations.

Eliciting questions

It was expected that the eliciting questions might be influential in fostering student talk productivity. The teacher tried to invite the students to make more sophisticated explanations regarding their propositions (e.g., see turn-6 and turn-10 in Table 4 or turn-3, turn-9, and turn-11 in Table 5). However, occurrence frequencies (Figure 2) and z-scores show that the eliciting questions’ effects on talk productivity were restricted and mixed. The teacher used the eliciting questions more in the substances implementation (low talk productivity; z-score: +1.11; more than 1 SD above the mean) than in the shadow formation (z-score: −1.11; more than 1 SD below the mean) or change of state (z-score: −0.99; nearly 1 SD below the mean) implementations, where the students achieved higher talk productivity. This mixed result implies that not only the individual effect of the eliciting questions but also the combined influences of other question types could be in action in fluctuating the students’ talk productivity.

Metatalk questions

A similar mixed result was also observed for the metatalk questions. As seen in Figure 2, the metatalk questions were displayed less in the shadow formation implementation (z-score: −1.64; more than 1 SD below the mean) than in the substances (z-score: +0.82), force and motion (z-score: +0.78), or heat and temperature (z-score: −0.04) implementations. The metatalk questions were also rarely used in the shadow formation implementation compared to the change of state implementation (z-score: +0.08), where higher talk productivity was observed, as in the shadow formation implementation. The metatalk questions might create a higher cognitive demand on the side of the students, and that might hinder other types of mental functions needed for an in-class science inquiry. As exemplified in the excerpt below from the force and motion implementation, the teacher forced the students to re-consider what was occurring in the classroom through consecutive metatalk questions. When the teacher invited the students to focus on one idea over another by selecting-eliminating questions, they had to ponder why the teacher made the idea prominent, the relation of the selected idea to the whole of the classroom talks, or the inappropriateness of the eliminated idea for the general flow of the classroom discourse. This might require a secondary/parallel cognitive activity on the side of the students, which might overshadow other basic cognitive processing. As exemplified below, the students were already loaded with epistemic and content-based discussions. Even though metacognitive activity fosters an understanding of concepts and skills for science learning, ABI implementations overloaded by parallel metacognitive activity might hinder basic cognitive activity.

Process skills and inference questions

The process skills and inference questions presented mixed results similar to the eliciting and metatalk questions. As seen in Figure 2, the teacher displayed considerably more process skills questions in the force and motion (z-score: +1.27; more than 1 SD above the mean) and substances (z-score: +0.52) implementations than in the shadow formation (z-score: −1.02; more than 1 SD below the mean) and change of state (z-score: −0.99; nearly 1 SD below the mean) implementations. This tendency was also valid for the inference questions (e.g., the z-score of the substances implementation: +1.67, more than 1 SD above the mean; or the z-score of the change of state: −0.91, nearly 1 SD below the mean). The process skills and inference questions were presumably in action in the ABI implementations where the students conducted in-class science inquiry requiring basic science process skills: observations, comparisons, predictions, and rough inductions in the form of inferencing (e.g., see turn-4 and turn-10 in Table 3; or turn-1 and turn-16 in Table 4; or turn-1 and turn-7 in Table 5). However, in the shadow formation implementation, the inference and process skills questions were replaced with other question types that might be more instrumental in scaffolding the students’ talk productivity. A more homogeneous distribution of the inference and process skills categories in addition to the legitimating, discrepant, and justified talk categories might foster the students’ talk productivity, especially in the substances and shadow formation implementations.

The Examples of the Legitimating Questions Enacted in the Shadow Formation Implementation.

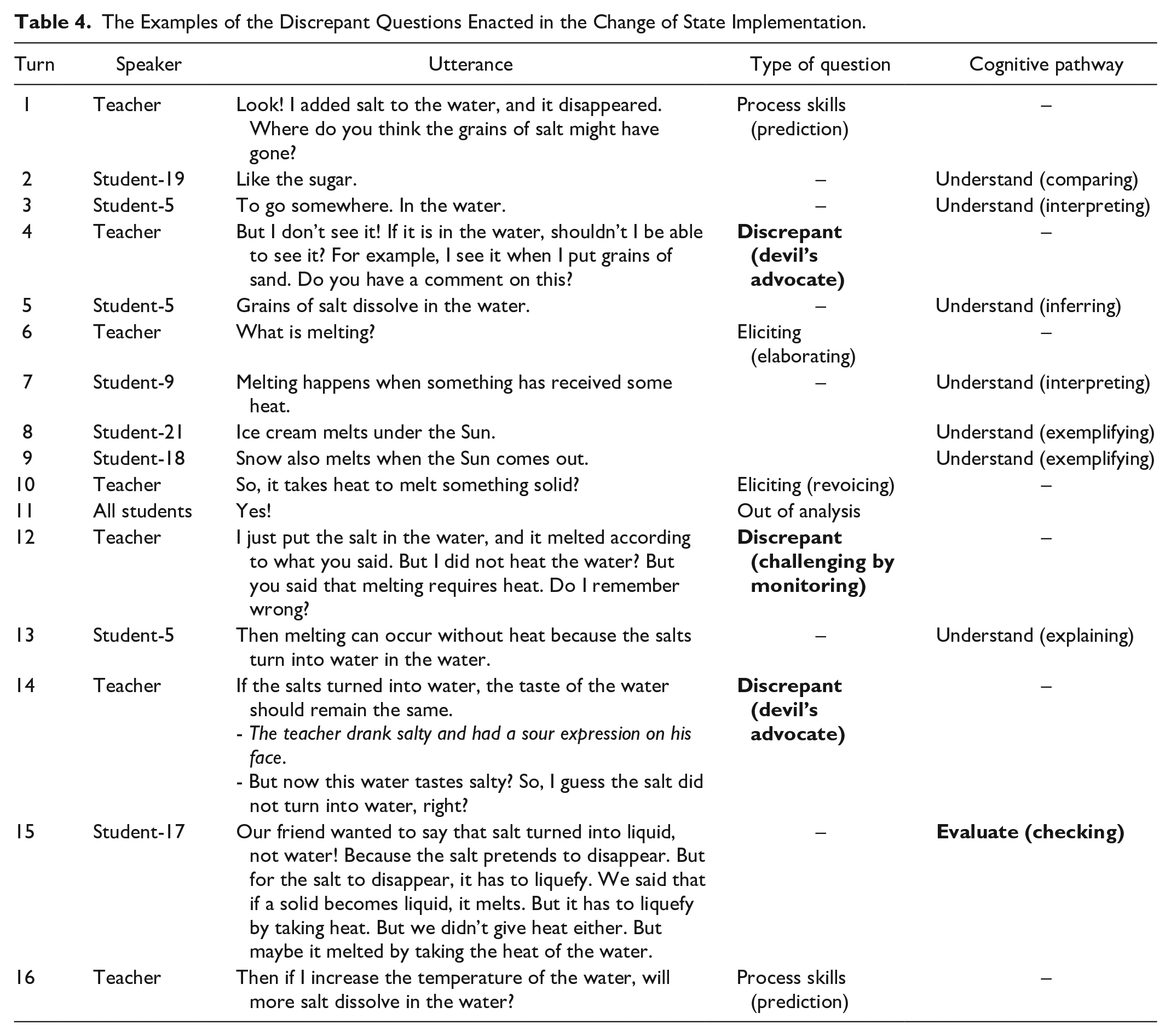

The Examples of the Discrepant Questions Enacted in the Change of State Implementation.

The Examples of the Justified Talk Questions Enacted in the Heat and Temperature Implementation.

Legitimating questions

As seen in Figure 2, the legitimating questions occurred considerably more often in the shadow formation (z-score: +1.12; more than 1 SD above the mean) and change of state (z-score: +0.96; nearly 1 SD above the mean) implementations than in the heat and temperature implementation (z-score: −0.21) and, especially, the substances (z-score: −1.02; more than 1 SD below the mean) and force and motion (z-score: −0.84) implementations. As exemplified in Table 3, through the legitimating questions (e.g., turn-2), the teacher permitted more student-student interactions in which the students challenged, evaluated, or criticized peers. In the presence of the legitimating questions (e.g., turn-6 or turn-13), the students had to test the logical inconsistency of their own and classmates’ ideas. Through the legitimating questions, the students were required to detect the fallacies within the proposed ideas or determine whether the idea had internal consistency or whether the idea was relevant for the context of the on-the-fly classroom talks (e.g., turn-15 or turn-16).

Discrepant questions

In the ABI implementations where lower talk productivity was observed, the teacher rarely used the discrepant questions (the substances, z-score: −1.31; more than 1 SD below the mean; or the force and motion, z-score: −0.96; nearly 1 SD below the mean). On the other hand, when the teacher enacted the discrepant questions frequently, as observed in the shadow formation (z-score: +0.8) and change of state (z-score: +1.14; more than 1 SD above the mean) implementations, the students’ talk productivity was pitched at higher levels. As exemplified in Table 4, through the discrepant questions (e.g., turn-4), the teacher required the students to notice their ideas’ inconsistencies in conceptual, epistemological, or ontological aspects. In responding to the teacher’s discrepant questions (e.g., turn-12 or turn-14), the students had to judge or defend their propositions, which demanded higher-order cognitive processing (e.g., turn-15).

Justified talk

In the shadow formation (z-score: +1.17; more than 1 SD above the mean) and change of state (z-score: +0.79) implementations, the teacher frequently demanded a version of warranted reasoning by directly guiding the students to present a piece of evidence to revise their baseless claims. However, this was not the case in the implementations where low talk productivity was detected (the substances, z-score: −0.95, nearly 1 SD below the mean; the force and motion, z-score: −1.12, more than 1 SD below the mean). As exemplified in Table 5, the justified talk questions might have boosted talk productivity since the students had to expand their claims. In the presence of the justified talk questions (e.g., turn-13 or turn-15), the students had to show the coordination between the claim they proposed and the data they offered to support it. The justified talk questions seemed to guide the students to construct a well-supported argument, which requires higher-order cognitive processing (e.g., turn-16).
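As a reading aid for the z-scores reported in the preceding subsections, the following minimal sketch shows how the frequency of one question type could be standardized across the five ABI implementations. It is an illustration under stated assumptions, not the study’s analysis procedure: the counts are hypothetical, and the use of the population standard deviation is an assumption (the sample standard deviation would give somewhat smaller absolute z-scores).

```python
from statistics import mean, pstdev


def z_scores(counts):
    """Standardize per-implementation frequencies: z = (x - mean) / SD."""
    values = list(counts.values())
    mu = mean(values)
    # Population SD is an assumption; the study may have used the sample SD.
    sigma = pstdev(values)
    return {name: (x - mu) / sigma for name, x in counts.items()}


# Hypothetical counts of one question type per implementation
# (illustrative only; these are NOT the study's data).
hypothetical_counts = {
    "shadow formation": 42,
    "change of state": 40,
    "heat and temperature": 30,
    "substances": 20,
    "force and motion": 23,
}

scores = z_scores(hypothetical_counts)
for name, z in scores.items():
    print(f"{name}: z = {z:+.2f}")
```

A positive z-score means the question type occurred more often in that implementation than its average across implementations, and an absolute value above 1 means the frequency lay more than 1 SD from that average.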

Discussion

In the current study, two aspects of science classroom discourse are revealed. First, the teacher displayed varied questions to handle the ABI activities. Second, the observed typologies had differing effects on the students’ talk productivity. The teacher’s question typology might vary since the ABI approach, in which the teacher tried to make the student-led ideas prominent (Weiss et al., 2021), is a student-centered science teaching strategy. However, once the students’ ideas were featured, there was a discursive tension for the teacher since some of the student ideas were invalid for the context of the science classroom discourse. Therefore, the teacher had to handle a well-tuned version of open-ended instruction where alternative points of view had to be welcomed and discussed. The students’ alternative ideas included a spontaneous mixture of the school science social languages and common-sense reasoning that might be far from formalized school science. The teacher focused on considering the alternative social languages; therefore, he had to diversify his questions to respond to the heterogeneity (Mortimer et al., 2012) that emerged in terms of social languages.

The eliciting questions encourage students to intellectually contribute to classroom discourse (VanLehn et al., 2021). The eliciting questions have an instructional function in increasing students’ speaking time (Shaughnessy et al., 2021) or creating a dialogic space for students who may dominate classroom talks. O’Connor et al. (2017) found that academically productive talk is possible when verbally participating students are in the classroom, which is attainable through eliciting questions. However, as the current study showed, the eliciting questions might not ensure higher-order talk productivity. Forman et al. (2017) indicated that for orchestrating productive classroom talks in a healthy communicative atmosphere with eliciting questions, teachers should share their epistemic authority with students in a specific learning environment where they propose context-based challenges. In the context of the current study, Forman et al.’s (2017) comments imply that, in addition to the eliciting questions, a challenging, argumentative, or legitimating learning environment should be sustained for higher-order student talk productivity. This was more tangible when the teacher used the eliciting, legitimating, and challenging questions together in a pragmatic manner in the ABI implementations where the students’ cognitive pathways reached the highest levels.

Particularly in the shadow formation and change of state implementations, once the teacher’s eliciting, legitimating, and discrepant questions were used together, the students seemed to reach higher talk productivity. The German thinker Hans-Georg Gadamer (2004) commented that evaluating, criticizing, or judging someone’s ideas is to try to understand their genuine conceptual intentions better. Therefore, when the teacher frequently used the questions for communication (the eliciting category) and peer-led evaluation (the legitimating category), the students’ utterances became apparent in the public plane of classroom talks. The students who understood the utterances of others might have made cognitive contributions to negotiations at higher levels (e.g., evaluation: criticizing and checking the relevance of an utterance) by evaluating classmates’ propositions. In the shadow formation and change of state implementations, when the teacher frequently used the questions for the argumentative and communicative aims, unlike in other implementations, the students not only made evaluative reflections on the ideas of others but also understood the meanings of the claims of others (Gadamer, 2004, p. 271).

Gallardo-Virgen and DeVillar (2011) and Sinha et al. (2015) reported that when students intentionally share their ideas (the eliciting category) and reflect on the ideas of others (the legitimating category), their cognitive commitment and academic achievement may increase significantly. In the context of the current study, for instance, through the legitimating questions, the teacher invited the students to determine which claims should be accepted and why others should be eliminated. Furthermore, as observed and exemplified above, the legitimating questions encouraged the students to act as co-critiquers. Therefore, in the presence of the legitimating questions, the students were assigned, in addition to the teacher, as the quality controllers (Reznitskaya & Gregory, 2013; Soysal & Radmard, 2018; van der Veen et al., 2015) of the uttered claims. As is known, evaluating, judging, reviewing, and legitimating demand the highest cognitive processing from students; in the context of the current study, the pervasive usage of the legitimating questions might have boosted the students’ talk productivity.

Previous studies (e.g., Mercer et al., 2017; Mercer & Dawes, 2014; Wegerif et al., 2017) reported that teachers should encourage student-student verbal exchanges in the sense of collaborative or joint-thinking/interthinking. The current study showed how interthinking could be supported and enacted in the science classroom discourse through teacher questions. In addition, the present study described the concrete effects of the legitimating questions, as a way of sustaining joint-thinking, on the students’ talk productivity. As observed in the current study, while the students were thinking and talking together, they had to consider the content of their classmates’ claims since the teacher deliberately wanted the students to evaluate and criticize them. The students could engage in productive classroom talks once they were guided to benefit from the content of their classmates’ ideas by evaluating and criticizing them. Boyd and Rubin (2006) claimed that contingent teacher questions are the most functional ones in promoting intellectually productive classroom interactions. The contingency of a question is achievable when science teachers enact the legitimating questions. For instance, in the shadow formation and change of state implementations, the students had to take their classmates’ ideas seriously to make cognitive contributions to the classroom talks. Therefore, while commenting on the alternative thinking of others that did not make sense to them, the students crossed the boundaries of their intellectual models as an indicator of talk productivity (van der Veen et al., 2017).

As previous research showed, when teachers make students’ conceptual inconsistencies public, students engage in deeper thinking processes and acquire science topics more profoundly (e.g., Lee & Kinzie, 2012; Soysal, 2021a). In addition, students operate higher-order reasoning and construct sophisticated arguments while responding to the science teacher, who is a negotiator of alternative explanations (Forman et al., 2017). Resnick et al. (2010) stated that if teachers want their students to engage in productive classroom talks, they have to use the discrepant questions intentionally. In a large-scale study, Gillies and Khan (2008) found that the discrepant questions are worthwhile in terms of productive disciplinary engagement of students, who may become involved in profound problem-solving and reasoning processes in the presence of deliberately and properly enacted discrepant questions. In the context of the current study, in the shadow formation and change of state implementations, the teacher increased the frequency of the discrepant questions; thus, he adopted a constructively critical stance. In responding to the discussant-teacher or debater-teacher, the students had to protect or expand their claims, which is accepted as one of the significant indicators of talk productivity (Khong et al., 2019). As observed by the researchers, the teacher’s aim was not to prove the students wrong. The discursive purpose of the teacher was to avoid simplified and unelaborated student claims. The teacher tried to scaffold the students to strengthen their (baseless) claims in the presence of the discrepant/challenging questions. This occurred frequently in all ABI implementations observed herein. However, in the shadow formation and change of state implementations in particular, the students’ baseless claims were continuously tested and tested again, and their common-sense reasoning was constantly reviewed.
In the negotiation cycles that were deepened by the discrepant questions, the students had to evaluate, criticize, judge, and modify their claims. In other words, knowledge construction and idea critiquing (Chen et al., 2017; Ford, 2008; 2012) were at the core of the classroom talks, especially in the shadow formation and change of state implementations. Therefore, while the students responded to the teacher’s discrepant questions, they could operate the highest cognitive processes (self-evaluation, i.e., checking the suitability of their ideas, and self-criticism).

The teacher enacted the justified talk questions to sustain an ABI setting where the accountable talk was centralized. Once the teacher displayed the justified talk questions, the students were accountable to accepted standards of reasoning and accountable to knowledge (Michaels et al., 2008). In the present study, the students had to display logical thinking and propose warranted arguments or rely on evidence that had to be appropriate to the science concepts involved in the classroom discourse. Thus, the current study contributes to the accountable talk literature (e.g., Wolf et al., 2006) by exemplifying how explicit justified talk questions encourage the students to perform evidence-based elaborations as an indicator of productive classroom talk (Resnick et al., 2007).

In the science classroom, metacognitive activity motivates students to guide, monitor, regulate, and control their minds. These supra-cognitive processes are needed for reflective thinking (Berland & Hammer, 2012; Tang, 2017). However, in the present study, the metacognitive tasks demanded from the students through the metatalk questions were not simple, especially in the science classroom (Soysal, 2021b). In the presence of the metatalk questions, the students were required to monitor their statements, reflect on in-class explorations, and modify their reasoning strategies (see Table 1). Students in the science classroom have severe difficulties engaging in a version of metacognitive activity (Zohar & Ben David, 2008). In terms of the cognitive load theory, in-class science inquiry activities incorporate higher cognitive demands of problem-solving procedures; therefore, students often experience exhausting loads of cognitive work (Kirschner et al., 2006). Due to the above-stated nature of metacognitive activity in the science classroom, the metatalk questions might not have positively affected the students’ talk productivity. Hypothetically, the metatalk questions would be expected to contribute to the students’ talk productivity. However, as Berkovich (2016) indicates, having an on-the-fly conscious awareness regarding classroom talks requires more time and effort on the side of students and teachers than expected. Therefore, the current study concluded that once a dialogic classroom discourse culture is established by automating or fossilizing the metacognitive activity through the metatalk questions, students would be able to execute both cognitive and metacognitive processes simultaneously.

Conclusions

The current study has two important conclusions. First, open-ended in-class science inquiry may press science teachers to diversify their question types. This justifies the need for teachers to ask complex and varied questions, as exemplified herein, when they give importance to the different forms of student-led understandings of science concepts that inherently appear in classroom conversations. The differentiation of the types of teachers’ questions is also related to the tolerance of students’ ideas, which may sometimes be invalid. Instead of asking only open-ended or closed-ended questions, teachers should open up a considerable space for students to increase their speaking time during science classroom discourse. As shown herein, creating more discourse space for students is mostly possible by varying the question types through which learners can express themselves in the classroom discourse. Second, all teacher question types observed in the current study have a relationship with students’ cognitive activity. However, as observed, some teacher questions (e.g., challenging, legitimating, and evidencing) can enable students to exhibit higher levels of reasoning. The observed questions that demand more cognitive work from students paved the way for deeper cognitive contributions to classroom discourse. Nevertheless, subjecting students to too much cognitive load can hinder their intellectual contribution to classroom discourse, for instance, in the presence of intense metatalk questions.

Educational Implications

A significant number of science teachers do not have an instructional noticing of question types and their impacts on students’ talk productivity (Oliveira, 2010; Soysal, 2018a). Therefore, one of the main elements of teachers’ professional development should be to bring a version of academic awareness to science teachers. One of the most influential ways of involving a teacher in a professional development program where in-class questioning techniques are centralized is to persuade teachers that change in questioning tactics may have higher impacts on student talk productivity (Cochran-Smith, 2005, 2006; Guskey, 2002). As teacher educators agree, the professional changes of teachers follow a sequence: teachers develop in terms of, for instance, the questioning strategies described above; students develop cognitively; teachers witness the students’ intellectual development; and teachers then believe in the effectiveness of enacting certain types of questions (Orland-Barak & Wand, 2021). Therefore, if the presumable influences of question types on student talk productivity, detailed in the current study, can be demonstrated or proved to teachers through longitudinal professional development programs in an evidence-based manner, teachers would be eager to engage in professional development processes and develop adaptive strategies to use effective questioning (Oliveira, 2010). In support of this, in the new era of teacher education, science teachers in particular are seen as reflective practitioners. A teacher as a reflective practitioner should look into and critically comment on the discursive events happening in his/her classroom (Sherin et al., 2011). Science teachers should be able to systematically observe, analyze, and interpret the important events selectively to assess their questions’ impact on student talk productivity.
It is recognized that the recent line of teacher education research guides teacher educators to persuade teachers to see and make sense of what they see in classrooms (Sherin et al., 2011). Teacher educators may use the current study’s outcomes or coding catalogs to design and conduct professional support programs to make science teachers reflective practitioners regarding academically productive talk via questions.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.