Abstract

In Finland, the discussion surrounding the impact of research on society has continued for two decades without a consensus on how impact should be part of research evaluation. In this paper, I examine the conceptions of social impact in the field of social sciences from the perspectives of policy authors and academics by reviewing policy documents and conducting semi-structured interviews with 14 policy advisors, evaluation experts, and faculty professors. The policy data sets are from 2003 to 2018, the period in which the Finnish discussion on research impact has been the most active. Interpretivist notions on the social impact of the social sciences argue that representations of social impact derive from juxtapositional conceptions of how social science knowledge is part of society. Grounded theory techniques were used to analyze policy artifacts and stakeholder interviews. I identified five interpretative frames. These are impact governance, operationalization of impact, politicization of research utilization, guiding arrangements, and social impact of the social sciences. A key finding is that policy advisors and academic experts tend to integrate divergent vocabularies of research utilization and its evaluation, resulting in uncertainty about the conceptualization and operationalization of impact. Integrity of research utilization in research policy and research evaluation could increase the social capacity of the social sciences by helping to understand the conceptual contribution of these fields to public policies and public discussion.

Introduction

During the past 20 years of European science policy discussion, the term “social impact” (sometimes societal impact) has become a formalized way to highlight the beneficial outcomes of research. At the European level, impact has become a steering mechanism and a criterion for representing the social relevance and ability of scholarly work to address challenges in different societal sectors (e.g., European Commission [EC], 2010, 2014, 2017a, 2017b). In this sense, the Finnish research evaluation system has followed European examples of the social impact criterion for research assessment, allocation, and strategy while cultivating its own public discussion and the formal rationality of impact evaluation. The Nordic perspective on social impact can provide an understanding of how policy advisors and academic experts represent impact under moderate impact policies and assessment practices, which differ significantly from those of countries with rigorous frameworks, such as the UK.

Various assessment styles have attempted to correspond to the rationality of political accountability and administrative cost efficiency while concurrently pursuing sophistication (Martin, 2011). Research evaluators have struggled under the burden of theoretical and managerial challenges to provide evidence of the abstract impact of the social sciences (Lauronen, 2020). Both research policy advisors and academic experts have debated what social impact means and which activities belong under the term (Bornmann, 2013). Instead of providing clear conceptual definitions of social impact, research evaluation scholars have often claimed that impact is contextual and can mean divergent activities and outcomes beyond academia (e.g., Penfield et al., 2014). This constructive perspective permits a wide range of disciplinary approaches, such as in the fields of the social sciences. The focus of policies and theoretical conceptualizations has shifted from direct social outcomes toward interaction and pathway schemes (Kearnes & Wienroth, 2011; Muhonen et al., 2020; Spaapen & van Drooge, 2011). Impact has become a general term to mean stakeholder engagement, research use, and accountability of research.

Purpose

Rather than pondering the correct definition or method for showing the impact of social sciences, in this paper I concentrate on the conceptions of impact represented by policy advisors and social scientists in the context of Finland. The aim is to identify the conceptions policy advisors and academic experts employ when they represent social impact within various interpretation contexts and how they employ these conceptions. The paper focuses on which research ideals in policy and academia constitute the conceptual repertoire of impact, a repertoire that, inversely, the academic community can use to maintain control over social science endeavors in a multitude of social settings, as Calvert (2006) has identified in the case of basic research. In this paper, I argue that the formal and informal understanding of the social impact of the social sciences relies on integrated juxtapositional conceptions of research utilization to justify the public value of research in various contexts.

The paper examines the innate conceptual tensions between divergent conceptions through documentary and interview material and identifies five interpretative frames for research impact derived from two different accounts of research utilization. The contribution of the paper is to understand representations of the social impact of social science in policies and academia. The remainder of the paper is organized as follows. First, I discuss the divergent conceptualizations of impact and their relation to audit principles. Thereafter, the data and the method used are introduced. The analysis section outlines the policy material and the interviews. Finally, the conclusion summarizes the main findings and implications of the work.

Conceptual Framework

This section introduces the conceptual understanding of social impact that research evaluators have integrated into their theoretical understandings and practical implementations of assessment. Impact assessment methods are founded upon basic arguments of summative (outcome-oriented) and formative (process-oriented) assessment styles (Molas-Gallart, 2015); these define how impact ought to be operationalized under each assessment framework. Particularly, the summative approaches merge seamlessly into quality management principles. However, this section furnishes an alternative interpretivist understanding for knowledge use and impact characterized by dialogue and collective awareness.

Audit Principles and Impact Assessment

As the sciences have begun to lose ground in holding the monopoly of legitimate knowledge in public debates on the multiplicity of rationalities and uncertainty, extra-scientific justifications have increased dramatically (Beck et al., 2003). Funding systems have adopted a new public management style of governance that relies on the devolution of research goals using heavy indicator-based evaluations (Hansson, 2006; Hicks, 2012). Power (2008) argued that research evaluation has transformed into the scrutiny of an audit society whereby evaluation is professionalized and self-audited to meet the effectiveness of research according to the standards of financial performance. Academia has encountered a science of accounts in the form of economic calculations for greater institutional transparency. The pursuit of greater openness, transparency, and accountability of academic endeavors has the downside of excluding crucial aspects of social structures, networks, and collective organizational knowledge in academic communities (Hansson, 2006).

Whereas research evaluation has become formalized under the rationale of the best practices of indicating academic effects, accountability by impact assessment has been constituted under similar audit principles of having evidence of social outcomes by knowledge use. As Nowotny et al. (2001) stated, knowledge governance has begun to highlight contextual problem-driven research in non-regulatory collaborations defined by the metaphor of the agora, where social sectors and their contextual knowledge interests and expertise co-mingle. Universities have attempted to manage the problem-driven interests of knowledge using overheated internalized research evaluation, which yields intense negotiations over the meaning and evaluation of impact and exhausting refinements of evaluation methods, yet remains unsatisfactory because it takes an enormous amount of time and resources (Martin, 2011). Some scholars have considered the changes in knowledge governance as a reason for developing better research impact indicators for research evaluation (Ernø-Kjølhede & Hansson, 2011). However, this pursuit of connecting the ideas of research utilization in formal research evaluation has caused conceptual tension in knowledge ideals (Hansson et al., 2014). This tension can be understood by the logical differences between research management and disciplinary practice (Benneworth et al., 2016). These differences can be located in conceptualizations of impact and how they correspond with the legitimation problem of academic research.

Conceptualizations of Research Utilization and Impact

Weiss (1979) argued that the “utilization” of the social sciences can be categorized into six models: knowledge-driven, problem-solving, interactive, political, tactical, and enlightenment. The models represent the basic understanding of knowledge processes and their use in policymaking and institutions. Knowledge-driven and problem-solving models represent the intuitive understanding of social science research as a promise of sociotechnical applications and rationales in decision-making. The knowledge-driven model follows the assumption of a linear model of innovation. Godin (2006) illustrated three sequences from basic research toward applications and development in this model derived from statistical conceptualizations. The problem-solving model comprises similar linear argumentation emphasizing knowledge needs. This innovation model was known as the demand-pull model, and it was assimilated into more complex models (Godin & Lane, 2013). Knowledge needs appear in various research markets, such as in policymaking: policymakers have a problem, which requires identification of missing knowledge to be pursued by researchers (Ylijoki et al., 2011).

These sociotechnical and instrumental approaches are conceptually close to macroeconomic accounting of impact (Muhonen et al., 2020). They conceptualize research impact by countable applications and research outcomes for policies. Many impact assessment frameworks have emphasized the characteristics of social outcomes (Molas-Gallart, 2015). They have features from the econometric and sociometric approaches of impact evaluation, which later have resulted in reduced understanding of formative aspects of social wellbeing (Bozeman & Sarewitz, 2011). The payback framework (Hanney et al., 2004) applied to the social sciences (Klautzer et al., 2011) follows similar outcome-based logic, in which divergent impacts are distinguished in their own areas, such as policy outcomes. The payback framework develops the idea further by the logic model, which differentiates epistemic, discursive, and policy outcomes from each other. However, it lacks an understanding of various individual, collective, and institutional levels of knowledge processes in society. Social science and science endeavors have cyclical patterns rather than direct practical solutions (Miettinen et al., 2015).

The interactive model takes the social sciences closer to a contextual and cyclical understanding of knowledge processes. The interactional approach underlines the trans-institutional and contextual determination of research problems and processes in what Nowotny et al. (2001) called Mode 2 knowledge production. Leydesdorff (2012) located this idea in a system-level communication between institutions to produce economic and social innovations. Benneworth and Olmos-Peñuela (2018) criticized the naïve assumption of knowledge transformers in the existing literature on the relationship between researchers and society. Social impact is cognate with knowledge users’ contextual understanding, and knowledge use is dependent on prior knowledge production and established networks. The interactive approach saw further formalized development under a Dutch project on productive interactions, which distinguishes direct personal interactions, indirect interactions via artifacts such as policy recommendations, and financial interactions (Spaapen & van Drooge, 2011). Molas-Gallart and Tang (2011) have developed the idea by reducing the measurement aspects of the framework, and they have considered this approach to be the most appropriate regarding the social sciences, as it avoids a simplistic outcome orientation. Muhonen et al. (2020) developed a comprehensive framework of impact pathways based on productive interactions. Their framework considers a wide range of research and other activities based on academic expertise. These are dissemination, co-creation, and reacting/driving social change. Thus, research impact is built by a whole range of activities and operations, which comprise dissemination of knowledge and engagement of stakeholders by synthesizing, comparing, and translating knowledge.

The political and tactical models consider people’s and groups’ interests and manipulations. Researchers and other stakeholders of knowledge may have an interest in scholarly endeavors and their further reconfigurations in local and global networks (Callon, 1994). The social and political interests in explaining social phenomena and interpreting knowledge have had a minor role in understanding the interaction between researchers and nonacademic agencies. To understand the knowledge processes behind research impact, evaluators need to set knowledge in a context and frame divergent interests of social phenomena (Esko & Miettinen, 2019; Esko & Tuunainen, 2019). Disciplinary research agendas work vis-à-vis academic and societal contexts that determine the development of the research tradition and its relationship with other institutions (Miettinen et al., 2015).

Weiss’ (1979) enlightenment model of research utilization can be elaborated further by the cyclical, contextual, and both multi-leveled and multi-operational understanding of research impact. Sigurðarson (2020) argued that the possible enlightenment of research can be understood as social capacity, which means sustainable social improvements over time. Disciplines’ capabilities to provide useful knowledge are based on their goals and established practices to have functioning interactions with nonacademic institutions. In social science endeavors, the interaction often means criticism and raising social/political contradictions. This approach is interpretivist and considers both the operational and structural dimensions of research utilization. Consequently, the interpretivist approach has similarities with the idea of appreciative inquiry by underlining the constructivist reality of problems and avoiding mechanistic/naturalistic assumptions of interactions (e.g., Bushe, 2011). The paper contributes to understanding how policy advisors and social research experts from divergent positions construct their understanding of social impact through these conceptualizations.

Method

Data

This study is the second part of a larger project investigating social impact in Finland. The first part focused on the methodological grounds for impact assessment (Lauronen, 2020). The current study utilized policy documents and interviews with research policy advisors, research evaluation scholars, and academic professors in the social sciences to identify the conceptions of impact in contextually constructed statements. The analysis aimed to provide interpretations, re-interpretations, and idiosyncratic ideas in relation to policy and formal conceptualizations. The documentary material was considered a formal presentation of impact discourse as enmeshed with governmental policies.

The main empirical material in the present study included 14 semi-structured interviews (13 hours 8 minutes, 94 pages) combining face-to-face, telephone, and email communication. The interviews were conducted in Finnish or English between September and October 2016. They were recorded and transcribed for further analysis. The experts represented their academic field and expertise in terms of practical research evaluation, evaluation studies, and the social sciences in the areas of sociological, public policy, and cultural research (see Table 1). The interviewees were categorized into three groups: policy advisors, evaluation experts, and professors. These were analytical categories to ensure diverse representation in the study. Direct conclusions were not solely drawn from these. Many of the informants had overlapping experience from varied positions. Two of the informants came from outside Finland, providing a wider cross-national perspective on social impact and its policies. They also discussed Finnish policy and assessment. The discussions mainly considered the social sciences, but within the wider perspective of impact and research policy.

Table 1. Background Information of the Interviewees.

The Finnish field of research evaluation and impact assessment is small, which required interviewing the people who were in touch with the question of impact on a regular basis and who had a professional point of view on the matter. Due to the small number of people with in-depth information on the topic, the number was limited to its saturation point (n = 14). Selection was based on recommendations of other experts in the fields. The informants were asked to describe their professional interpretations of the impact of the social sciences. The interview questions were semi-structured and had thematic prompts (e.g., definitions, mechanisms, and operationalization). The limited conceptual context of impact ensured that the informants discussed the same phenomenon. Because the answers had ambiguity and a variety of polar perspectives, the working hypothesis emphasized the discursive/interpretative approach. The informants’ opinions and arguments were considered constructions from diverse sources.

Documentary data (see references in this paper) were also utilized to examine the progressive and chronological development of interpretations of impact by Finnish research policy agencies. The policy material was based on reports from the Ministry of Education and Culture (MEC), the Finnish Higher Education Evaluation Centre (FINEEC), and the Academy of Finland (AF), which were chosen for the purpose of understanding the discourses of the main Finnish agencies of higher education and research policy. They play a decisive role in defining university and research evaluation directions. The documentary data represented a formal perspective by policy authors. The period to which the material related ranged from 2003 to 2018 and included a total of 12 documents. The material was collected using search engines and the repositories of the MEC, FINEEC, the AF, Finland’s government, and Google by searching for material discussing research impact and social impact.

Design

The study applied grounded theory methodology to understand the rationales behind research impact. Grounded theory provided conceptual clarity and allowed for interaction with previous conceptualizations (Timonen et al., 2018). The documentary and interview material were thematically analyzed on the methodological premises of Gilbert and Mulkay (1984) and their notions on discourses. According to Mulkay and Gilbert (1982), one should ask how academic people construct their interpretations of impact regardless of why these interpretations exist. Policy authors and interviewees produced social contexts (Gilbert & Mulkay, 1984) through their discourse, which could be evaluated against standardized verbal formulations (Mulkay, 1976). In this case, these formulations refer to the ideas of the social impact of the social sciences, which were discussed in the context of research practice and management.

The interest in the present investigation was in how these discourses thematically construct various interpretations and contexts, as Jennifer Attride-Stirling (2001) illustrated in great detail. Thematic analysis can reveal the explicit rationalizations and implicit significations of text that have been broken into smaller units. Here, thematic analysis was used to identify the interpretation repertoire of the policy authors and interviewees (global themes), their contextual frameworks (organizing themes), and the polarized conceptualizations within these frameworks (basic themes) constituted by key vocabularies comprising these formulations. The global themes were identified as accounts, through which the informants justified their interpretations via select vocabulary within certain frameworks. These frameworks provided a contextual repertoire with which the policy authors and informants could emphasize various conceptualizations when interpreting the meaning, purpose, and implementation of social impact in the case of the social sciences. The frameworks were clearly divided into formal policy formulations and informal discussions, reflecting impact policies and social science practices.

Data Analysis

First, the data were descriptively coded to categorize rudimentary topics (e.g., accountability, relevance). Second, the interpretations were conceptually examined to locate basic-level themes (e.g., the enlightenment purpose of the social sciences). Third, these conceptualizations were merged into organizing frameworks (e.g., impact governance), which indicated re-interpretations of similar formulations. Finally, these frameworks were examined for whether they constituted global themes based on common reasoning.
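The first three coding steps above can be read as building a nested hierarchy, from descriptive codes up to organizing frameworks. The following is a minimal sketch of that structure, not the procedure actually used in the study: the function name `build_thematic_network` and the `CODED_SEGMENTS` list are hypothetical, and the pairings of codes, themes, and frameworks are illustrative assumptions that merely reuse labels mentioned in the text.

```python
from collections import defaultdict

# Hypothetical coded segments: (descriptive code, basic theme, organizing framework).
# The labels reuse examples from the text; the pairings are illustrative assumptions.
CODED_SEGMENTS = [
    ("accountability", "financial rationalization of research", "impact governance"),
    ("relevance", "financial rationalization of research", "impact governance"),
    ("relevance", "enlightenment purpose of the social sciences",
     "social impact of the social sciences"),
]


def build_thematic_network(segments):
    """Group descriptive codes under basic themes, and basic themes under frameworks."""
    network = defaultdict(lambda: defaultdict(set))
    for code, basic_theme, framework in segments:
        network[framework][basic_theme].add(code)
    # Convert to plain dicts with sorted code lists so the result is stable to inspect.
    return {
        framework: {theme: sorted(codes) for theme, codes in themes.items()}
        for framework, themes in network.items()
    }


if __name__ == "__main__":
    for framework, themes in build_thematic_network(CODED_SEGMENTS).items():
        print(framework)
        for theme, codes in themes.items():
            print("  ", theme, "<-", ", ".join(codes))
```

The fourth step, judging whether the frameworks share a common reasoning and thus form a global theme, is an interpretive decision and is deliberately left out of the sketch.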

Results

The findings are placed together in Table 2 to concisely demonstrate the resultant vocabularies, conceptualizations, and frameworks. Table 2 illustrates five interpretative frames (main themes) for the impact of the social sciences within formal research policies and informal discussion among policy advisors, evaluation experts, and faculty professors. These are impact governance, operationalization of impact, politicization of research utilization, guiding arrangements, and social impact of the social sciences. Each interpretative frame incorporated the main conceptions (subthemes) and key vocabularies that policy advisors and academics used to emphasize divergent rationales for social impact in various contexts. These conceptions were contradictory, except in the formal policies for impact governance highlighting financial rationalization of research. The conceptions formed divergent ways of understanding the policy, evaluation, organization, and disciplinary aspects connected to the idea of impact. The interpretative frames showed the possibilities and limitations of using the concept of impact to understand the social capacity of the social sciences.

Table 2. Frames for the Impact of the Social Sciences.

Formal Policies of Social Impact

The policy and evaluation documents illuminated how policy authors have defined social impact in relation to the social sciences, the purpose of the concepts, and how policies have attempted to rationalize impact assessment in research evaluation. This section includes analysis of how the main agencies of research policy (FINEEC, MEC, and the AF) have interpreted and used the concept of social impact.

This section includes two distinct frameworks. The first illuminates how policy authors have defined impact as legitimation for institutional higher education and research funding and as a concept to rationalize the productivity and effectiveness of research vis-à-vis public policy goals (impact governance). The second shows how the operationalization of impact struggles between a mechanical interpretation of the outcomes and the wider civilizing effects in society in competitive research funding (the Academy of Finland: operationalization of impact).

FINEEC is mainly interested in universities’ quality assurance systems and their educational excellence, but it has also been active in promoting higher education impact assessment. The MEC evaluates universities’ eligibility for government funding. The AF is a public funding body under the government and has several funding programs. It also acts as a research council when developing evaluation recommendations. The MEC and FINEEC are closely related, but FINEEC is an autonomous organization. The AF is closest to the operational level of research assessment, as it directly designs and implements assessments in research funding.

Impact Governance

Since the early 2000s, FINEEC and the MEC have conceptualized impact as a form of regional interaction between higher education institutes and social sectors (Kinnunen, 2001; MEC, 2002). According to one of FINEEC’s reports, higher education institutes can approach regional interaction through their basic tasks (research, education, and public engagement) that provide a multitude of ways to impact society (Kinnunen, 2001). The MEC has emphasized university profiles for research in relation to their impact policies. These profiles are supposed to be defined in university strategies in relation to societal collaboration (MEC, 2002).

A later MEC report by Jari Ritsilä et al. (2007) specified several forms of societal interaction. They concluded that the assessment of societal interactions should show how well universities’ responsibilities are integrated into the labor market, socio-ecological development, regional development, and policymaking or societal discussion (social development and decision-making). This approach improves the basic idea of research, adding a multitude of routes for influencing society.

FINEEC’s and the MEC’s interpretations of impact have been comprehensive, decontextualized, and unspecific, describing a wide range of social activities and collaborations with other social sectors. As an institution for audit control, FINEEC has also emphasized the impact (which can also be read as effectiveness) of evaluation itself. Universities should utilize evaluation information to improve their impact through social activities (Moitus & Saari, 2004). FINEEC’s (2005, 2007, 2010) manuals have not indicated a change in this discourse since the early 2000s. Their latest auditing summary (FINEEC, 2019) highlights the same comprehensive understanding of impact in academic work as well as in collaboration between academia and research associates from diverse social sectors.

The MEC’s policies have not undergone significant change, but they more strongly emphasize strategic priorities for research and profiles for universities’ development (MEC, 2011, 2015a). In the MEC’s funding model proposal for 2013 (MEC, 2011), there is no explicit indication of social impact in research evaluation. The section on impact considers rates of student graduation as the main source of societal influence from universities, measuring research impact by numbers of publications. In the latest proposal for a funding model, social impact has been given greater emphasis by adding strategic funding (10%) in the section on universities’ education and science policy goals (MEC, 2015a). The MEC hopes to see social impact in the independent strategic planning of universities (i.e., profiles of research fields and closer collaboration with other sectors of society). In 2015, the MEC published a report (MEC, 2015b) focusing specifically on the social impact of academic research and higher education. The report greatly expanded its emphasis on society to contain any forms of interaction, strategies, and dissemination pathways. The report highlights the importance of interaction for achieving social and technological innovations.

FINEEC and MEC policies on social impact have remained at the comprehensive level—referring, in principle, to any kind of collaboration and network locally or nationally. Instead of specifying impact, FINEEC and the MEC have reinforced their recommendations and demands for impact. They have placed very little effort in operationalizing the assessment forms and procedures, as it is unclear how they indicate impact within the pathway scheme and what kinds of indices and assessment forms can show impact. Universities have received more formalized frameworks for how to describe their impact in the context of strategic development and socio-economic utility (e.g., Saari & Moilanen, 2012).

In this sense, impact means the societal relevance of research and higher education in relation to public policy goals. The substance or forms of impact do not seem to matter as much as whether impact assessment uses an appropriate vocabulary to legitimize research and higher education as an integral part of wider social collaboration, strategic choices, and the development of universities in the context of national wellbeing and economy. The interaction and pathway models have a significant role in the exchange of ideas between universities and societal sectors. However, the conception of impact pathways has traits of the demand-pull innovation model, in which research is supposed to respond to sociotechnical needs (cf. Godin & Lane, 2013).

The ideas of interaction focused on regional socio-economic development in their earliest formulations (cf. Esko, 2020, p. 28) and later changed to present universities as comprehensive producers of innovations through strategic partnerships. Impact emerges as an aggregating concept for coupling the institutional legitimization of higher education by emphasizing socio-economic utility and increasing the rationality of productivity and effectiveness of research outcomes. It does this by creating connections between public policy goals and research orientation as defined by research profiles. Thus, the concept of impact incorporates the ideals of various research needs outside of academia, but it emphasizes a market-driven research model through technology, products, and socio-economic legitimation of publicly funded research (cf. Ylijoki et al., 2011). Bozeman and Sarewitz (2011) noticed that science policies have had a strong tendency to rely on economic thinking when evaluating research impact. Public value failure has often meant market failure.

Operationalization of Impact

The development of the AF’s discourse provides a more operational perspective on impact than do the discourses of FINEEC and the MEC. In its report (AF, 2003), it considered social impact to be a part of increased collaboration with various research associates, which is a distinct criterion from academic research results. The report explicitly prefers an interpretation of diverse impacts from differentiated academic fields, as it points out complex impact processes of the social sciences and humanities. It builds on the premise that the impact of the social sciences and the humanities on policymaking is not currently satisfactory. Contradictorily, the report states that impact is not a mechanical process while also claiming that impact mechanisms can be examined. The report states that impact is out of the reach of political control and assessment, but at the same time, it is subject to the directions of research policy. It states that in the long term, cultural and educational impact materializes through people’s awareness and identity formation; this means influence through higher education, popular publications, and collaborative workshops. However, the report also stresses social innovations, such as new ways to organize work and technology. The report finds it difficult to define such innovations, but states that they are explicitly related to economic and technological arrangements that are constituted in multidisciplinary work together with service and product users (e.g., linguistic technology).

The AF’s further investigations into proposals for social impact processes have continued with contradictory policy recommendations. Its report on the social sciences and humanities impact, “Education and culture cannot be produced” (AF, 2006), underlined the civilizing role of the social sciences and humanities and criticized the mechanistic measuring logic of impact. The report emphasized that impact is an essential and traditional part of the social sciences and humanities because research can be interpreted as an active intervention in society. Further, the impact of intellectual endeavor cannot be reduced to utility, not even in its broadest societal sense. In the same year, a report on the impact of basic research (Kanninen & Lemola, 2006) took a significantly more linear approach to impact by describing causal utilization transfers and writing asides about the idiosyncrasy of the social sciences. However, the report concludes with the idea of research collaborations and networks as sophisticated proxies for social impact, anticipating their emphasis in future impact frameworks.

Alongside these educational and cultural interpretations, the AF has promoted an idea of planned impact through systematic research programs and impact assessments. In its assessment report on the Centre of Excellence (Hjelt, 2009), the AF referred to impact as a research program’s limited collaboration and influence on people who immediately used its new knowledge; it also contained the wider perspective of a program’s policy goals and influence on policymaking. Although the report drew a picture of wide social impact, impact was rationalized as research outcomes that policymakers utilized. The Strategic Research Council of the AF took this one step further. Its framework operates solely upon the idea that research is organized around specific themes and problems by multidisciplinary and inter-sectorial collaborative partnerships (Mickwitz & Maijala, 2015). The government ratifies these themes, and although the social sciences play a significant role in strategic research programs, this role is reduced in the sociotechnical perspective on thematized problems. Impact simply means effectiveness in relation to meeting the targets of collaboration and interaction schemes.

The AF’s research policy has turned toward a rationale like that of the MEC. Social impact is related to profiles for universities and research units in the sense of rewarding and strengthening research fields that gather similar knowledge across disciplines to address specific societal problems (AF, 2016). The pathway framework has become a tool to comprehend the multitude of ways in which research knowledge can impact society. In its latest report (AF, 2018), the AF considered altmetrics to be a rational method for assessing social impact regardless of its earlier criticism of measuring impact and having a congruent metric-based approach to impact assessment. This contradiction occurs tangibly in juxtaposed employment of impact narratives and metrics within the same funding programs, such as strategic research.

The AF has clearly attempted to operationalize strategic problem-solving and enhanced societal collaborations, but it has had an implicit struggle between a mechanistic outcome-oriented approach and a more sophisticated perspective on the culture and education contained in the social sciences and humanities. This tension has resulted in discussing the social sciences as socio-economic and technological arrangements alongside the diminishing critical and emancipatory aspects of the social sciences emphasizing socio-economic wellbeing (cf. Bozeman & Sarewitz, 2011) and attempts to increase control over research agendas by convincing ways to represent knowledge to society and use knowledge (cf. Smith et al., 2011) in the guise of pathway and interaction frameworks. The discursive ambiguity of interactions and pathways can also be interpreted as reframing academic research as a strategic national capacity and as a way to conceptualize research value through new frameworks (Kearnes & Wienroth, 2011). The AF’s operationalization of these frameworks underlines guided collaborations and targeted knowledge use via policymaking evaluated both by formalized narratives and impact metrics. The formalized problem-oriented approach conforms to the socio-economic legitimation and financial rationalization of the MEC and FINEEC.

Politicization of Research Utilization

This section outlines a framework—the politicization of research utilization—for addressing the contradictory recognitions of the value of the social sciences regarding problematized relationships with social relevance and outcomes. The informants, particularly the policy advisors, highlighted the rationality of impact, which describes political accountability to the public. They interpreted impact as a conceptual apparatus for valuing the worth of academic endeavors and for defending public research funding. They also used the political impact demands to occupy space from traditional research evaluation concepts by redefining them in terms of research relevance and societal interaction. At the same time, the interviewees pointed out the political misconceptions of impact policies regarding the value of the social sciences.

Public Accountability

According to the informants, impact policies correspond to the external demands of accountability to public interests in academic research due to diminishing expectations for academic self-guidance. The informants showed real concern about research not contributing anything to society. The worry mainly concerns the problem of the irrelevance of the social sciences and their role in the public sphere. They pointed out how legitimizing research requires more visibility and transparency. However, their perspectives on the rationality of impact differ radically. The policy advisors pointed out that the representation of impact is crucial because it provides evidence of allocated funding having value beyond academic curiosity. One informant described the fear of becoming labeled as esoteric and useless:

Perhaps, I don’t quite understand the transparency of science regarding impact, but we can talk about the legitimacy of science so that people could appreciate it more. Therefore, we could show some positive impacts, in the sense that science is not merely something locked up in an ivory tower. (Policy Advisor 1)

Several of the informants addressed their concern with the concept of the ivory tower by describing a problem with academic self-interest. In the informants’ discourse, the ivory tower of academia means intellectual seclusion from social contexts of research problems. Academic endeavor and its claim of originality and quality are not enough to maintain the production of societally relevant social science research. They argued that academia has maintained research traditions that have gone off course for long periods of time because they only followed academic discussions and interests:

Academia can create research traditions in the social sciences that have an imaginary world and that distance themselves from all socially relevant matters for decades. . . In these cases, the internal academic impact does not reveal the problem from having a tradition that has been in a bad way for a long time. (Professor 2)

However, regarding the implementations and assessment of social impact, the policy advisors give ambiguous explanations balancing policymaker wishes and academic research interests. They highlight research programs designed to tackle “strategic” problems in society, but they also emphasize the role of basic research in a two-fold manner. According to policy advisors, research funders can design basic research to evidence impact within a flexible framework. Academic research in general has an impact in one way or another, as several of the informants claim. One policy advisor expressed both perspectives:

It is clear that the social sciences and humanities are having an impact one way or another. They create discussions. In a sense, it is intrinsic to these academic fields. . . Even though we have new instruments, our intention is that basic research should be bottom-up. We do not control it in any specific direction but encourage the search for wider societal connections. (Policy Advisor 3)

The interviewees tended to politicize research utilization by creating internal and external threats and requirements for academic research. In particular, the concept of research quality is at the center of the debate. Policy advisors tend to relate the concept of academic quality, in the sense of intellectual significance, and basic research to impact in a supplementary way. The policy advisors feel that impact complements research quality. Hence, the policy advisors extend the meaning of quality and basic research to include social relevance/utility while assuring the necessity of independent basic research. The center of politicization of research utilization is public accountability through transparency, which balances between control over research agendas and self-organized capability of the social science research agendas having socially significant substance. The idea of transparency combines the ideal of basic research as priority (Godin, 2006) with the transformation thesis of knowledge production changing toward socially contextual research (Nowotny et al., 2001). Behind the concern of public accountability, there is the old doubt of social responsibility of research requiring more formal accounting of utility (Martin, 2011). Politicization of research utilization does not seem to displace the key conceptions of science policy. On the contrary, it incorporates the old symbols in the new discourses of social impact.

Political Misconceptions

The informants illuminated the political and conceptual misconceptions that policymakers have about social science research. Due to these misconceptions, the informants find it difficult to operationalize impact in research evaluation. This section presents the opposite interpretation of impact policies and formal assessment based on recording detectable outcomes.

The main problem with impact assessment seems to be showing positive changes or interactions and attributing the change to a research project. Several informants explained that the premises of impact assessment are flawed because of the limited perspective and rationalization within socio-economic utility. Particularly, they claimed that the concept itself is political and that political purposes define the quality judgments under the impact criterion. A policy advisor, who also blames researchers for the lack of socially sensible research questions, argues:

Everyone can define impact as they want. That is the problem. It becomes a problem when using it as a criterion for scores, which are used to compare funding applications. Does a piece of research have a positive or negative impact? That is a political question regarding the social sciences, which is political and a politicizing activity toward decision-making. (Policy Advisor 1)

Most of the informants consider the social sciences to be socially relevant per se. They think the problem is that policymakers find it difficult to see the impact of the social sciences because they are considering misleading aspects of knowledge use. Instead of projects, departments and faculties, assessors should also comprehensively examine other participants’ actions in their collaboration and use of research knowledge.

If our gaze is only on socially relevant knowledge, social science research can look useless. We have political processes that provide us with decisions and legislation and that involve many agencies, including political interests. Thus, the question about impact is set rather the wrong way around. (Expert 3)

Although the policy advisors specifically tended to underline the importance of legitimizing research through incorporating criteria for social relevance in traditional academic research assessment, many of the informants are uncertain or critical about the logic of impact rationality. They are aware of the political and social reasons for legitimizing the impacts of academic endeavors, such as increasing research and its funding. At the same time, most of them display profound contradictions between social science research and impact policies. They pointed out that social scientists find it difficult to set their endeavors in impact policies because it is problematic to indicate social scientific outcomes in the assessment frameworks that represent influence through positive (meaning beneficial for public policy) interactions or effects and changes in policies. Politics are biased, and interpretations of research findings can be diverse (Esko & Tuunainen, 2019). They explained that the value of the social sciences lies in a different perspective than what formal assessment designs offer. Rather, the social sciences contribute to social disputes via theoretical and analytical discussion; they are not providers of undisputed solutions, as the opposite perspective argues:

The balance between critical social sciences and decision-making has been interesting. But how does one indicate the utility of critical social sciences? A researcher has a responsibility to enlighten people by her knowledge, but that is wishful thinking because politics do not work like that. Ultimately, it is a matter of societal atmosphere whether we appreciate critical research or whether it is regarded as shouting from an ivory tower. (Expert 1)

The informants’ interpretations of the purpose and form of impact criteria in research evaluation show tendencies to conform to impact policies due to diminishing trust in academic research; they simultaneously show the pretentious characteristics of impact policies as lacking competence in understanding social scientific practices. The informants’ understanding of the role of impact assessment regarding social science utilization is fundamentally divided. The informants represent the impact of the social sciences as a matter of political trends rather than a question of relevance in the sense of the value the social sciences can provide. Benneworth et al. (2016) reported this dissonance between policymakers and scholars, but it also seems to occur in contextual discourses between scholars and policy advisors depending on whether they happen to emphasize knowledge-driven models of socio-economic research applications for public policies or critical traditions of social science research focusing on intellectual dialogue with society—what Sigurðarson (2020) addressed as increasing the social capacity of society. In this approach, the vocabulary of applications and solutions becomes irrelevant, and the conceptions of interaction and pathways have dubious grounds.

Guiding Arrangements

This section presents a frame for guiding arrangements that includes two divergent conceptions of guiding research to have social implications (guidance for research design/support for knowledge usage). Alongside the perceptions of socio-political accountability, the question of research guidance by impact policies polarizes the informants’ interpretations. They do not support direct allocation through criteria for impact. The policy advisors have contradictory interpretations of allocation and do not favor university funding allocation through an impact criterion, as it could lead to spurious competition between universities and research units, contradicting the idea of collaboration. Instead, the idea of competition appears only within the format of research funding, which has an impact criterion for quality judgments.

There is a subtle contradiction between how the informants perceive the demands for impact being overly controlling and how the academic policy advisors present them as flexible via self-organizing frameworks. Regardless of this tension, both perspectives underline the enforcement of social collaborations very similarly and without heavily controlling policy priorities. However, their understanding of self-organizing is different in the sense that many of the informants see it as futile to set external incentives for social interaction. They argue that social scientists do not need additional control because they are intrinsically oriented toward social problems and because their academic quality and work ethics are already high. They highlight the serendipity of social scientific ideas and academically defined interpretations of social problems, as in the following extract. Theorizations on impact can find it difficult to consider serendipity (e.g., Penfield et al., 2014). Assessments based on audit mechanisms can hardly recognize the matter of serendipity (Power, 2008).

In the social sciences, it is not always the case that one has a societal problem, and then we have research related to the specific problem. . . I don’t oppose research priorities, since we have always had those. But questions of social sciences are tricky in nature, in the sense that if we try to achieve significant results, they appear to be a bit accidental. It is difficult for anyone to plan those. . . At the same time, one should let it develop organically. (Professor 5)

The informants struggle between the ideals of a responsible researcher and supporting structures for research. According to them, researchers should understand basic policymaking processes as having an impact, but research findings need supporting dissemination structures such as research advisors. As much as the interviewed experts represent researchers who are too “lazy” to attempt to comprehend policymaking, they also represent public and private organizations reluctant to adopt social scientific knowledge. Their arguments about responsibility fluctuate between orienting arrangements (programs, assessments) for researchers and supporting structures for knowledge and societal dialogue. This polarized frame of responsibility shows the contradictory relationship between the conception of impact assessment, in which a research unit is under inspection, and the conception of social research, which reflects on social phenomena as well as the reflexivity of a researcher. The tension in responsibility of knowledge dissemination represents different logics of knowledge. Hansson et al. (2014) described this contradiction between the linear problem-solving/evaluation approach and organizational learning. The learning approach underlines organizational structures for dialogue instead of control by indicators for pre-set goals. The interpretations conforming to impact policies are not likely to accept the uncertainty of research, whereas the ideas deriving from social research also underline the culture of criticism within academia and societal sectors.

The Social Impact of the Social Sciences

This section outlines a frame for the core conceptions of social impact (enlightenment/instrumental utility). The informants had several claims regarding the epistemic and social characteristics of the impact of the social sciences. They considered both how societal participation should be pursued in academic work and how the process of this participation is arranged through academic endeavors. The informants emphasized how the social sciences are inherently socially oriented and how formalized frames for impact assessment can make it difficult to indicate such impact. This section illuminates the interpretations of what makes the impact of the social sciences.

In this context, the informants refer to the practical usefulness of knowledge. This means the impacts that change people’s behavior through changes in policy, institutions, or organizational structures. The informants regard social changes as being implicitly or explicitly positive and rational in relation to the common good. However, intellectual orientation is also enlightening in the sense of providing a better insight into and awareness of complex phenomena via scholarly interpretation. One professor presented the basic misconception between enlightenment and instrumental utility of the social sciences as follows:

Interaction in working groups has a discursive impact. I can also imagine a sort of ritualistic impact when we have working groups between researchers and other experts. These working groups are sort of a ritual. One must have these. It has elements of political performance when researchers encounter the public sector. As if they would exchange valuable knowledge and strategies. However, the actual impacts happen in a completely different setting. (Professor 3)

The interviewees understand research impact as being collective and indirect. They think that research impact always accumulates in relation to previous research pursuits, related research fields, and collaboration with other researchers and social sectors. They claim that impact is always based on accumulating effects in wide networks. These networks also refer to networks between and within academic fields. This notion resembles the idea of local collectives where knowledge extends and where actors make knowledge useful by constantly reconfiguring and redefining ideas (Benneworth & Olmos-Peñuela, 2018; Miettinen et al., 2015). A few of the informants noted that a large part of social impact is the theoretical and methodological work across disciplines contributing to social science research. One professor sees poorly designed impact policies as a dangerous risk to theoretical work. The main argument is that the pathway to social impact is long, complex, and unpredictable:

The process from [social scientific] research outputs to policymaking and people’s understanding is tremendously long. . . In some cases, we can trace this process. It is not an individual research project but a collective result of an academic community. And after that, there are political decisions and factors relating to people’s understanding and behavior. (Professor 1)

The interviewees underline that impact cannot be limited to assessed outcomes. Several informants argued that the social sciences not only correspond to policy priorities, but they also create social movements and political agendas of their own. This notion moderately refers to the critical research traditions with emancipatory endeavors.

Research can collectively create an agenda. For example, no one was discussing the contamination of the Baltic Sea 30–40 years ago. But step by step, research has improved the discussion. This is the feature [of the impact of the social sciences] that we must mention. In the same vein, the social sciences can place topics on the agenda, for example, the equality of the education system. (Expert 4)

Several of the interviewees mentioned higher education as the most important pathway for research and how universities can have impact. They stressed that the social sciences rarely have a significant direct impact on other social sectors because knowledge dissemination is complex and understanding it happens slowly. According to them, students are the most significant group to adopt new knowledge, as it possibly shapes their understanding of society when entering working life. Education can be understood as having an enlightening impact on people’s understanding and on innovation.

Because most people leave academia, one could ask them about impact since they must have some understanding of useful knowledge. Universities educate all people in the public sector and most in the private sector. Yet somehow, the Ministry and politicians tend to think that our societal relations are poor and that now we should do something about this. (Professor 5)

The informants demonstrated varying interpretations of the research practices of the social sciences and the logic in those practices constituting the social capabilities for social impact. A common baseline for their interpretations was that the impact of the social sciences is like its study objects: subtle cultural meanings, discourses, and the reconfiguration of problems and interpretations. It does not seem possible to separate social science research from discussion in other academic fields or social, political, and economic sectors, as it defines and reconfigures social conceptions vis-à-vis other sciences and institutions. Although their interpretations portray the conception of enlightenment and knowledge creep, their reasoning has traits of the linear problem-solving logic entangled with ideals of common good and rational development in public policy. In this regard, the representations merge several logics of knowledge utilization simultaneously from mechanistic/naturalistic ideals of research use to critical/interpretivist conceptions of knowledge and expertise in society, although the disciplinary considerations clearly emphasized the latter one. This tension considers basically what constitutes knowledge in society and how it can be used in policymaking and social life (Hansson et al., 2014).

Discussion

Thematic analysis of the interpretations of the impact of the social sciences revealed how academic experts construct various contextual meanings of research impact from governance to research practices. However, the findings from this paper indicated that academic experts constructed contextual formulations by using divergent and even polar interpretations depending on whether they wanted to underline formal accountability demands by impact policies or disciplinary aspects of knowledge processes. Weiss’ (1979) categorization proved to be a useful approach for understanding the premises of conceptualizing research utilization. The analysis showed signs of conceptual dissonance of research utilization by merging traditional innovation thinking and priority of basic research with ideas of comprehensive interaction with public and private agencies. The policy documents and interviewed experts constructed a dual vocabulary between a linear problem-solving approach and a multi-leveled and multi-operational understanding of knowledge processes and impact. This investigation clarified how policy advisors and social scientists walked a tightrope between these rationales for impact governance and disciplinary understanding of social opportunities for knowledge and how they merged these accounts in contextual frames.

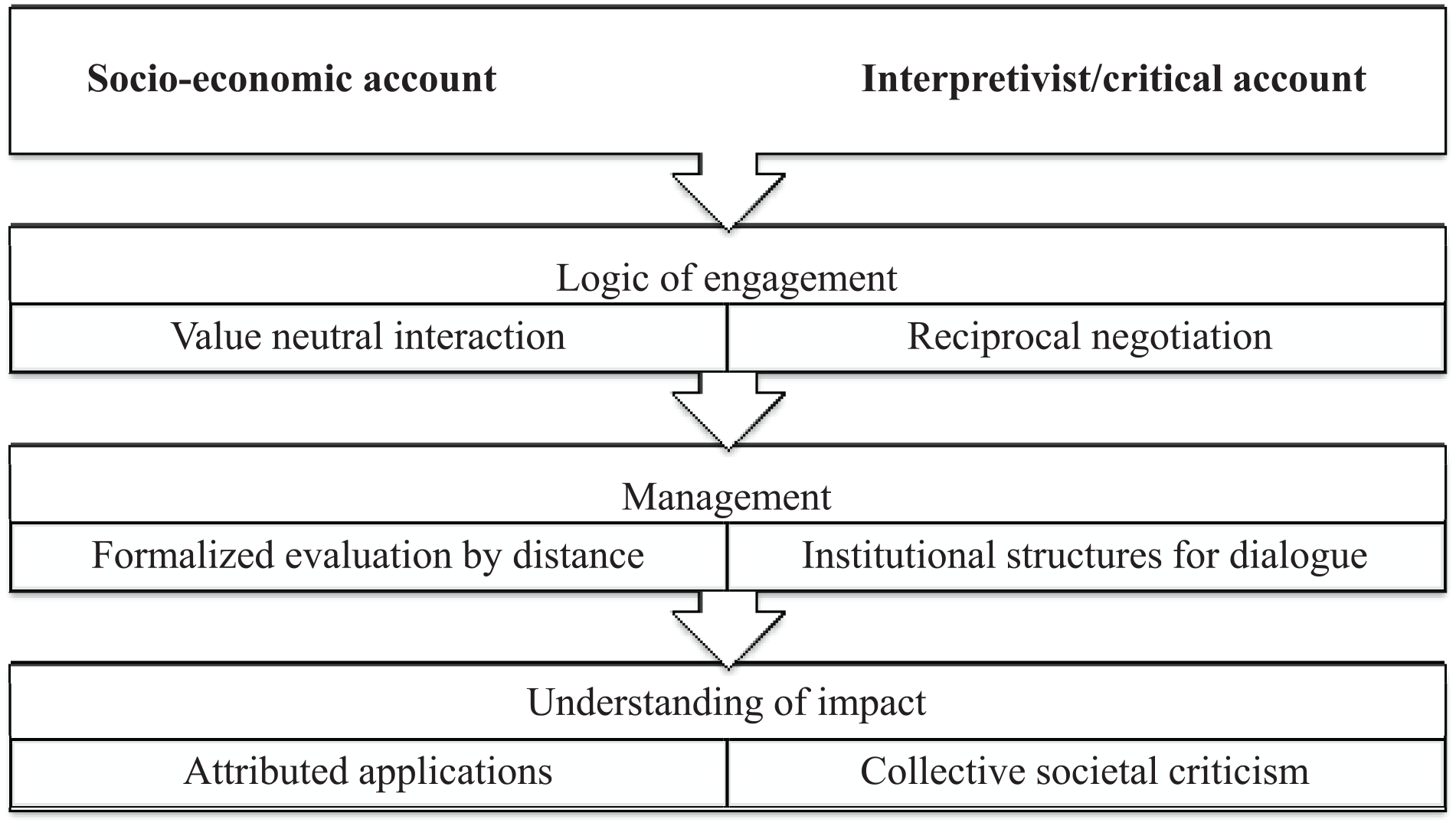

The study identified five frames by which the policy authors and the informants formulated their interpretations of social impact (Table 2). These frameworks were derived from two-fold accounts of research utilization (Figure 1). To a great extent, conceptualizations of impact policies derived from socio-economic legitimation of higher education. In this account, conceptualizations of impact aggregated various national political goals for social wellbeing and economic activity through knowledge governance and through the institutional legitimization of higher education and research funding. Impact meant the promise of positive solutions and progress by careful strategic planning under national policy interests. It resembled the linear problem-solving model but also had characteristics from the linear innovation model of academic research, which follows steps from relevant questions to applications (cf. Godin, 2006). In the socio-economic approach, the roadmap to “common wellbeing” lies in collaborations that integrate scholarly endeavors with public policy interests. Unlike the metaphor of the agora (Nowotny et al., 2001), socio-economic arrangements appeared to be bureaucratic interventions via the devolution of knowledge governance down to universities and competing research funding under the rationality of productivity. In this sense, the socio-economic account relies on the principles of new public management and formalized indicators in its strategy to organize societal knowledge processes (Hansson et al., 2014; Hicks, 2012).

Figure 1. Accounts of research utilization.

The policy authors considered social science research to be useful from the perspective of sociotechnical solutions. The socio-economic rationale manifested a reasoning based on national flourishing defined in economic and financial terms, much like Benneworth et al.’s (2016) notions on the economic discourse of impact. Whereas the discourse of the MEC and FINEEC has focused on aggregating conceptualizations, the actual operationalization of impact has been transferred to universities and competitive funding by criteria for impact and social relevance. The AF’s policies and operationalization have been equivocal. On the one hand, it has been obliged to follow the socio-economic rationale by developing direct utility indicators that ideally show useful effects and outcomes. On the other hand, the AF’s Research Council for Culture and Society has promoted broader human flourishing alongside problem-oriented approaches.

Whereas socio-economic reasons drove impact policies, the informal discussions emphasized the internal struggle between socio-economic legitimation of research funding and disciplinary endeavors of the social sciences. The common theme between policy recommendations and the informants was the responsibility of the academic community to engage in the problems of societal sectors. According to the account of socio-economic legitimation, researchers are responsible for showing their engagement through audited research outcomes. Socio-economic accountability emerges as an extension of the economization of research accounts (Bozeman & Sarewitz, 2011) and internal formalization of research performance (Hansson, 2006; Hansson et al., 2014) via impact evaluation. Linear innovation and problem-solving approaches apply seamlessly in socio-economic impact rationality, in which it is preferable for societal knowledge processes to be audited. Consequently, these models seem to dominate the formal discussion on the operationalization of impact, although the new public management style of decentralized evaluation (Hicks, 2012) down to competitive funding and universities provides opportunities for divergent implementations within the interaction and pathway frameworks.

The second account from which the policy authors and the interviewed experts constructed their interpretations was the interpretivist/critical perspective. In the policy documents, these interpretations took place when the policy authors had recommendations from social scientific perspectives. Research policy agencies have taken little notice of this account. The AF has been unable to reach any conclusive solution when operationalizing an impact criterion for accountability purposes, instead conforming to the MEC’s policies. Similarly, the interviewees struggled with these two accounts when considering research guidance in public policies. This tension led to uncertainty over the formal policy conceptualizations of impact. The informants tended to rely on contradictory interpretations. The main thread of the interpretivist account was that there were misconceptions about the formalization of the impact of social science research.

From this perspective, impact assessments have overlooked the knowledge processes of the social sciences and thus are based on conceptual fallacies of governing research priorities and processes by outcome-based evaluations. The value of the social sciences and their contribution to social life are misunderstood. Benneworth et al. (2016) described this tension as a structural (and intellectual) dissonance between impact policies defined by economic terms and cultural studies focusing on human flourishing. The critical account turns the operationalization of formal impact assessment upside down since it focuses on how institutional structures and organizations can support the ideas of social science research. It focuses on arranging supporting structures and dialogue to increase awareness. Benneworth and Olmos-Peñuela (2018) called this the coupling of academic and user knowledge. However, in the critical account, knowledge dissemination is rarely direct. In societal change, concepts percolate and creep slowly into people’s awareness (Muhonen et al., 2020). These concepts challenge, but do not necessarily invalidate, people’s previous experiences and common sense. According to this understanding, social impact means the collective criticism between the social sciences and social institutions either through local engagement or through indeterminate wider networks (cf. Callon, 1994).

These polarized accounts cause uncertainty over how impact should be rationalized, operationalized, and managed. The uncertainty is due to the limitations of socio-economic vocabulary in relation to social science endeavors and the integration of these juxtaposed vocabularies in formal and informal contexts. The accounts become entangled with each other in contextually constructed frames that merge the audit principles of new public management and disciplinary ideals of knowledge processes in society. In particular, the conceptions of interactions (collaborations) and pathways have had a significant meaning for understanding research use in impact policies within the socio-economic accountability of the higher education sector and simultaneously provided discursive space for the interpretivist paradigm. Refinements of this framework (Molas-Gallart & Tang, 2011; Muhonen et al., 2020) by social scientists have brought the conceptions closer to the enlightenment ideals of utilization (e.g., Sigurðarson, 2020). The interpretivist assessment relies on organizational learning (Bushe, 2011; Hansson et al., 2014). However, social scientists should be aware of these divergent accounts when justifying their intellectual endeavors because disciplinary goals could easily become displaced when attempting to defend them by a logic derived from risk management and auditing (Hansson, 2006; Power, 2008). The Finnish context shows how subtle negotiations over research utilization become a tightrope walk between juxtaposed accounts and how sophisticatedly they merge into each other, regardless of the obvious tensions.

Limitations and Future Research Directions

This study limited its inspection to representations of impact, and the concepts incorporated in them, within a national context. Comprehending the international isomorphism of impact policies requires wider material than this paper provides. To furnish the impact of the social sciences with a deeper theoretical understanding, one should apply more sophisticated and in-depth interview methods to study long-term changes in research traditions and their relationship to social structures and politics. In addition, one should consider the differences between fields that are intertwined with the natural and technical sciences and the traditional social sciences. Thus, arrangements for interacting with nonacademic organizations should be considered more comprehensively than just within the framework of research impact assessment and criteria for judging social outcomes and interactions. This comprehensive understanding should guide future studies on the social opportunities of research.

Conclusion

Policy advisors and academics relied on conflicting accounts of research utilization in their interpretations of the concept of impact. They were often unaware of the contradictory vocabularies within their overall arguments. This latent disharmony between the contradictory interpretations of impact means that the divergent conceptions of research utilization were entangled with each other in policy and academic discourses of impact. One way to understand this disharmony is that researchers have adopted and blended the idea of impact into their scholarly vocabularies, as it is needed to gain funding and resources. However, at the same time, the concept of impact was logically alien to disciplinary thinking about research utilization, causing problems in the relationship between disciplinary integrity, research evaluation, and policy discourses. Greater awareness of the disciplinary integrity of research utilization in research evaluation and policies could increase the social capacity of the social sciences by helping funders and research policymakers to see the potential of social science research for public policies and social life.

Footnotes

Acknowledgements

I would like to thank the interviewees for their contribution, Heikki Hiilamo and Risto Eräsaari for their comments, and the participants of the Totemi seminar at the University of Helsinki, particularly Petri Ylikoski, for their comments. I would also like to thank Ian Dobson and Ziyu Wang for their comments. Special thanks to the reviewers of the journal.

Declaration of Conflicting Interests

The author declared the following potential conflicts of interest with respect to the research, authorship, and/or publication of this article: This project was conducted impartially without any affiliations to the parties and organizations under investigation in the project. The funding of the project is impartial without any political associations.

Funding

The author disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research project has received funding from the Kone Foundation and financial support from the University of Helsinki. The Kone Foundation is a funder of social science research, humanities, and arts that supports impartial research endeavors and venturesome ideas.

Ethical Approval

Review by the University of Helsinki Ethical Review Board in the Humanities and Social and Behavioural Sciences was not applicable to this project.