Abstract

When an observer perceives and judges two persons next to each other, different types of social cues simultaneously arise from both perceived faces. Using a controlled stimulus set depicting this scenario (with two persons identified respectively as “target face” and “looking face”), we explored how emotional expressions, gaze, and head direction of the looking face affect the observers’ eye movements and judgments of the target face. The target face always displayed a neutral expression, gazing directly at the observer (“direct gaze”). The looking face either showed a direct gaze, looked toward the target face, or looked away from it. A total of 52 undergraduate students (25 males) freely viewed these scenes for 5 s while their eye movements were recorded; afterward, they rated the faces for attractiveness and trustworthiness. Dwell times on target faces were longer when accompanied by a looking face with direct gaze, regardless of its emotional expression. However, participants looked longer at faces looking toward the target in the approach condition and fixated more often on target faces that were next to either an angry-looking face directly looking at them or a happy-looking averted face. We found no gaze effect on faces that were looked at by another face and no significant correlation between observers’ dwell time and attractiveness or trustworthiness ratings of the target and looking face, indicating dissociated perception and judgment processes. Irrespective of the gaze direction, as expected, happy faces were judged as more attractive and trustworthy than angry faces. Future studies will need to examine this dynamic interplay of social cues in triadic scenes.

Introduction

In real-life situations, people sometimes make very fast judgments based on social cues (Willis & Todorov, 2006). In triadic scenes, when an observer is exposed to two other persons, many cues arise from the two perceived faces simultaneously (Wieser & Brosch, 2012). For example, the two faces might differ in their emotional expressions and look either toward or away from each other. Presenting two people in a scene (i.e., a sender and a receiver) may imply a social interaction and a perceived relationship between them. In a situational context in which two persons are involved, it is still unclear how the different facial cues affect how both persons are perceived and judged by a third person, the observer.

Previous research showed a clear impact of facial features on person judgments (Bayliss & Tipper, 2006; Krumhuber et al., 2007). Todorov and colleagues (2015) demonstrated that social attributions to faces are linked with perceptual and nonperceptual determinants of faces that modulate observers’ person judgments. For example, facial attractiveness relies on facial features, such as symmetry (Perrett et al., 1999) and averageness related to mating preferences (Langlois & Roggman, 1990), whereas perceived trustworthiness shows weaker relations with these features (Todorov, 2008) but might be more sensitive to social evaluations and to the presence and looking behavior of others.

However, in the current study we did not manipulate morphological face features, but we were especially interested in how the emotional context indicated by positive or negative emotional expressions of the “looking person” modulates observers’ judgments of the “target person.” Therefore, we used judgments of facial attractiveness as an aesthetic evaluation and judgments of perceived trustworthiness as a social evaluation. We examined whether the looked-at target face would be considered more trustworthy or attractive, an effect previously shown in triadic scenes where the gaze direction and head direction were manipulated (Kaisler & Leder, 2016, 2017). We introduced an additional facial cue, namely emotional expression, and embedded two faces showing different gaze, head directions, and emotional expressions (a and b) into 24 different natural scenes: (a) a “looking face,” looking either toward (three-quarter view) or away from (profile view) the “target face,” and showing either a happy or angry facial expression, and (b) a target face, always showing a neutral facial expression and direct gaze in frontal view. We combined these two faces displaying different gaze, head, and emotional expressions in the following three conditions: (a) control condition, both faces look directly at the observer, (b) approach condition, the looking face looks toward the target face, and (c) avoidance condition, where the looking face looks away from the target face. We investigated the visual exploration pattern by measuring observers’ eye movements while the scene was perceived (Block 1), as well as judgments of attractiveness and trustworthiness of both persons depicted in the scene (Block 2).

With this design we attempted to create ecologically valid stimuli (e.g., Kingstone, 2009) that allowed us to test observers’ visual behavior and preference toward two other persons, which might be difficult to study with isolated faces presented without a social context (e.g., without showing their bodies embedded in a scene). In particular, when the target face is being looked-at by another face indicating social approach, gaze, head, and emotional expression might influence observers’ judgments of the target person.

Effects of Face Gaze and Head Directions on Person Judgments

In social interactions, humans use the face, especially the eye region, to signal social intentions to others (Kleinke, 1986). Combined facial cues, such as gaze and head orientation, can be used to detect another person’s direction of attention especially when these cues are congruent. This implies that the gaze and head orientation play a large role in creating social attention and interaction (Langton, 2000; Langton et al., 2000).

A person’s gaze direction modulates the observers’ person judgments of the perceived target face. For example, single faces that directly looked at the observer were judged to be more trustworthy (Bayliss & Tipper, 2006) and attractive (Conway et al., 2008; Ewing et al., 2010; Mason et al., 2005) than faces with averted gaze. When engaging with another person, direct gaze seems to be a salient signal of social relevance for the observer (for a review, see Bayliss & Tipper, 2006). Even in triadic social scenes with only neutral expressions, faces showing direct gaze were considered as more attractive and trustworthy than the looking face showing averted gaze and neutral expression. Moreover, target faces are judged to be more trustworthy when looked at by another face (Kaisler & Leder, 2016). Gaze may also indicate the current attentional state of a person. To be specific, the direction of gaze indicates what object a person is currently interested in (Bayliss et al., 2006): observers rate objects as more likable when they have seen a face looking toward rather than away from the object (gaze-liking effect, or more generally, gaze-cueing effect; see also Tipples & Pecchinenda, 2019, for additional, mixed results). Gaze-cueing effects occur when observed gaze shifts, such as attentional cues, produce orienting responses in people (for a review, see Frischen et al., 2007). We are interested in the interaction of gaze cueing and emotion because the impact of gaze cues on person judgments and liking might be modulated by the emotional expression, especially by positive emotions.

A recent study suggests that intact gaze cueing is important for social functioning: Hayward and Ristic (2017) showed that alterations in gaze cueing are associated with reduced social competence. Larger effects of gaze cueing were observed with fearful compared with neutral expressions (Fichtenholtz et al., 2009; Holmes et al., 2010; Lassalle & Itier, 2013; Neath et al., 2013), and a similar effect with surprise (Bayless et al., 2011; Lassalle & Itier, 2013; Neath et al., 2013) and anger (Holmes et al., 2010; Lassalle & Itier, 2013) has been reported in studies measuring neural activity. Most of these studies did not find a modulation of gaze cueing by happy expressions; therefore, it can be argued that emotional modulation of gaze cueing may reflect attentional orienting to (potential) threat. However, recently McCrackin and Itier (2018) showed that happy expressions can also increase gaze cueing compared with neutral expressions, but to a lesser extent than fearful faces, using dynamic sequences in which gaze shifts occurred prior to the expression. The smaller effect sizes might be the reason why previous studies failed to report this effect. In another study, McCrackin and Itier (2019a) supported the idea of a gradient in the modulation of gaze cueing by facial expressions rather than the threat/nonthreat hypothesis. The authors reported stronger overall gaze cueing in women than in men, which was not related to self-reported anxiety or depression.

Previous research explored the interplay between gaze directions and viewing angles. Faces were given consistent attractiveness ratings across viewing angles: judgments of trustworthiness were correlated more positively between frontal and three-quarter views compared with profile views of isolated faces (Rule et al., 2009). Also in triadic scenes displaying two faces with different gaze and head directions, three-quarter views of faces were considered as more attractive than frontal and profile views (Kaisler & Leder, 2017). The disadvantage of profile views might be due to the lack of full information availability of facial features such as eyes, nose, and mouth (Bruce et al., 1987; McKone, 2008; Stephan & Caine, 2007).

Effects of Emotional Expressions of Faces on Person Judgments

The speed of recognizing expressed facial emotions depends on the joint contribution of both gaze direction and emotional expression. For example, angry expressions were better categorized with direct gaze and fearful expressions with averted gaze in a speeded categorization task than vice versa (Adam et al., 2003). The authors reported that gaze and emotional information were processed together; however, this could only be found in this specific stimulus set. In contrast, other studies showed a stimulus- and task-dependent discrimination between emotional expression and gaze directions (Bindemann et al., 2008; Graham & LaBar, 2007). The categorization of happy and sad expressions, and angry and fearful expressions, was impaired when gaze was averted compared with the direct gaze condition (Bindemann et al., 2008); however, a combination of all four expressions in the same design did not support previous findings (Adam et al., 2003; Adams & Kleck, 2005). Graham and LaBar (2007) argued that emotional expressions generally interfere with gaze judgments, but gaze direction modulates expression processing only when facial emotion is difficult to discriminate. In a recent event-related potential (ERP) study, participants had more accurate emotion discrimination for direct gaze faces compared with averted gaze faces, while the opposite was seen for attention discrimination, and gender discrimination was not affected by gaze direction (McCrackin & Itier, 2019b). These findings indicate that perceived gaze direction modulates neural activity differently depending on task demands. Thus, the emotional expression of faces partly determines how we engage or disengage with others in social situations, and also how we perceive and evaluate them. For example, Main et al. (2010) showed that observers indicated social interest for frontal views when judging happy attractive faces, but they preferred three-quarter views relative to frontal views when judging happy unattractive faces or faces with disgust expression.

Effects of the emotional context in a scene may be reflected not only in observers’ judgments but also in the differences in the amount of time the target face is being looked at, as previous studies (Leder et al., 2016, 2010) reported longer looks at highly attractive faces when paired with a less attractive face in natural scenes. This interaction between participants’ visual behavior and judgments was also seen in a decision-making task. Bayliss et al. (2013) showed that participants’ evaluative behavior and judgments changed when participants’ saccades were mirrored by the image of a face on the computer screen compared with when participants’ eye movements caused the face on the screen to orient in the opposite direction. Further evidence from a free-viewing eye tracking task showed that the social context and emotional valence modulate gaze fixations in dynamic scenes (e.g., YouTube video clips) and that people orient their attention toward social features (heads and bodies, people walking in the streets or playing ball games) compared with nonsocial features in natural scenes (Bayliss et al., 2013). However, these studies (Bayliss et al., 2013) did not systematically control and introduce different facial cues and analyze approach and avoidance situations in triadic scenes. Therefore, in our study we expected that a social interaction between two people, in which joint attention is directed to another face, would affect observers’ eye movements and judgments of the target face.

To investigate the effects of the emotional context in a social situation, Bayliss et al. (2010) presented happy and disgust faces as primes and neutral, pleasant and unpleasant International Affective Picture System (IAPS) pictures as targets (Lang et al., 2008). The faces were used to cue toward the IAPS images. The targets’ evaluations were affected by the facial expression of the faces: gaze-cueing effects were larger when the emotion of the gazing face (happy) matched that of the target (pleasant). Similar effects were observed when emotionally valenced words were used as targets, indicating context-based gaze cueing when attention is directed to the emotional content of the target (Pecchinenda et al., 2008). Faces expressing disgust and fearful faces elicited stronger gaze effects than happy and neutral faces. Also, Bayliss et al. (2009) showed that positive evaluations of faces when looking at someone were only found when faces “created a positive social context by smiling, but not in the negative context when all the faces held angry or neutral expressions” (p. 1072) suggesting “that implicit processing of the reward contingencies associated with gaze cues relies on a positive emotional expression to maintain expectations of a favorable outcome of joint attention episodes” (p. 1072).

In a recent study, Landes et al. (2016) replicated Bayliss and colleagues’ (2007) findings that evaluations of household objects were modulated by emotional expressions of faces looking toward the target object. However, this effect was not found for faces as target objects (Landes et al., 2016). The authors used isolated faces presented next to each other, where models were asked to express liking and disliking instead of showing happy and disgusted expressions. These stimuli might have sent more ambiguous signals because liking and disliking are not well-defined emotional expressions and this may have led to smaller effects than in Bayliss and colleagues’ (2007) study. Importantly, presenting isolated faces looking toward a target is a different type of scenario than two people presented in a scene indicating a social interaction.

The Present Study

In the present study, we investigated how combined facial cues affect observers’ looking behavior (visual exploration pattern of eye movements) and whether this correlates with their subsequent judgments of attractiveness and trustworthiness. Therefore, we employed a within-subject design by measuring observers’ eye movements and person judgments of both persons depicted in the social scene. We examined how positive or negative emotional expressions of the face looking towards a target face (with direct gaze) modulate observers’ visual exploration and judgments of that target face in natural scenes. Because observers’ attention is directed towards the target face, we expected longer looks (total dwell time) and more fixations (total fixation counts) on the target face in comparison to the nontarget face looking towards or away from the target face, especially in a positive emotional context (Hypothesis 1). Regarding person judgments, if the observer additionally uses the emotional expression of the looking person to interpret the emotional context, then a positive expression of the looking face would enhance observers’ attractiveness and trustworthiness judgments of the target face in the approach condition (Hypothesis 2), in accordance with effects found with objects (Bayliss et al., 2007) and faces in scenes with neutral expressions (Kaisler & Leder, 2016, 2017). Furthermore, the dynamic interplay of gaze and emotion might lead to hierarchical ordering of effects: the strong positive emotional cue might override other subtle gaze and head direction effects on participants’ perception (eye movements). If observers’ perception guides their judgment formation, a correlation between eye-gaze behavior and ratings of attractiveness and trustworthiness would be predicted (Hypothesis 3). We expected a positive correlation between dwell time and judgments of the target face. However, this might only be relevant in situations in which the looking person smiles and looks toward the target face because previous studies (Bayliss et al., 2010; Pecchinenda et al., 2008) showed a strong relationship between a positive emotional context and observers’ judgments.

Experiment

Method

The study was approved by the Ethics Committee of the University of Vienna. The experiment consisted of a free-viewing eye tracking task followed by two rating blocks of attractiveness and trustworthiness of the previously seen scenes, lasting for about 60 min. Participants rated both faces in the scenes in a random order. Prior to the experiment, the experimenter explained the procedure to the participants and obtained written informed consent in accordance with the ethical guidelines of the university and the Declaration of Helsinki. All participants gave written informed consent to publish these case details.

Participants

A total of 52 Austrian undergraduate psychology students with Caucasian background of the University of Vienna participated in the experiment (25 males, 27 females, M = 23.2 years, SD = 5.0). The participants had normal or corrected-to-normal vision. The participants were recruited via the university’s recruiting platform, where all psychology students are registered to obtain course credits for their study. We applied two exclusion criteria, namely, participants who had participated in previous experiments about emotion and perception and those having participated in pre-experiments for this study, to avoid preconceived expectations and bias toward this experiment. Participants received €8 for their participation in the experiment.

Stimuli

The stimuli comprised 24 high-quality grayscale photographs of natural scenes containing pairs of young adult models (university students). The target face always showed a neutral facial expression. The second “looking” face in the scene looked either toward or away from the target and showed either a happy or angry facial expression. In total we employed 24 scenes, with eight male–male pairs, eight female–female pairs, and eight male–female pairs (counterbalancing the position of female and male models).

The photographs of the scenes were taken in indoor and outdoor locations on the university campus. Two models were always standing side by side with a lateral separation of 1 m from the midpoint of the torso in the scene (Kaisler & Leder, 2016). We manipulated the gaze and head directions of both models in the scene (the gaze direction always being congruent with the head direction, as in Kaisler & Leder, 2017) such that they could be frontal (0°, toward the observer/control condition), three-quarter (45°, toward the target face/approach condition), or profile (90°, away from the target face/avoidance condition).

In all conditions, the target face shows direct gaze with neutral expression while the looking face displays either a happy (Figure 1A to 1C) or an angry expression (Figure 1D to 1F): In the control condition both faces display direct gaze. In the approach condition (Figure 1B, 1E), the looking face looks toward the target face. In the avoidance condition (Figure 1C, 1F), the looking face looks away from the target face. A comparison of these gaze conditions (Figure 1B vs. 1E) should reveal the effect of emotional context on observers’ judgments (Bayliss et al., 2010), with positive emotion increasing attractiveness of the target face, and further, whether being looked at by another face modulates observers’ judgments of the target face.

Example of gaze and emotion conditions of the present study. In all conditions (in total 24 different scenes), the target face shows direct gaze with a neutral expression, while the looking face displays an emotional expression. Panel control condition: The looking face shows direct gaze with a happy expression (A) or angry expression (D). Panel approach condition: The looking person looks toward the target person with a happy (B) or angry (E) expression. Panel avoidance condition: The looking person looks away from the target face showing a happy (C) or angry (F) expression. The individuals in this manuscript have given written informed consent to publish these case details.

In addition, we employed two other control conditions (not shown in Figure 1) as follows: (a) neutral expressions, where the target (frontal view) and the looking face (frontal view) directly look at the observer and show neutral expressions. To be able to analyze the pure effects of “being looked-at,” without the effect of direct gaze at the observer of the scene (Kaisler & Leder, 2016, 2017), we also included (b) an “ambiguous condition,” where the target face looks away from the looking face. The looking face looks toward the target face, signaling social approach through a positive emotion (Figure 1B) or avoidance through a negative emotion (Figure 1E). Results of this ambiguous condition are not reported, as we found no effects of gaze and emotion on the target face (data shown in Supplemental Material S1 Table).

The position (left–right) of the looking and target faces in the stimulus set was counterbalanced across the 24 indoor and outdoor scenes displaying four gaze and emotion conditions (control, approach, avoidance, and ambiguous, each with happy and angry expressions), with six trials per condition using different scenes (4 conditions × 6 trials, 24 stimuli). We counterbalanced the gaze and emotion conditions across three gender pairs (female–female, male–male, female–male; 24 scenes × 3 gender pairs, 72 stimuli). We also included 12 filler scenes without faces (background only). In total, we thus presented 84 stimuli in the experiment. We included the control, approach, and avoidance conditions for analyzing the effects of emotion and gaze on target faces. Analyses of the ambiguous gaze and emotion condition can be found in the Supplemental Material (S1 Table, S2 Table).
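The stimulus counts in this paragraph follow from simple multiplication. The short Python sketch below only restates the numbers given in the text; the constant and function names are illustrative and not part of the original materials.

```python
# Bookkeeping of the stimulus counts stated in the text.
# All names are illustrative; only the numbers come from the text.
N_CONDITIONS = 4            # control, approach, avoidance, ambiguous
TRIALS_PER_CONDITION = 6    # different scenes per condition
N_GENDER_PAIRS = 3          # female-female, male-male, female-male
N_FILLER_SCENES = 12        # background-only scenes without faces


def stimulus_counts():
    """Return (scenes, face stimuli, total stimuli presented)."""
    scenes = N_CONDITIONS * TRIALS_PER_CONDITION
    face_stimuli = scenes * N_GENDER_PAIRS
    total = face_stimuli + N_FILLER_SCENES
    return scenes, face_stimuli, total


if __name__ == "__main__":
    scenes, face_stimuli, total = stimulus_counts()
    print(f"{scenes} scenes, {face_stimuli} face stimuli, {total} stimuli in total")
```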

Eye Tracking Apparatus

Eye movements were recorded with an EyeLink 1000 desktop-mounted eye tracker (SR Research Ltd.). Ocular dominance was determined by means of a dominant eye test card, and the dominant eye was tracked during eye movement measurements. A combined chin and headrest was used to keep the participants’ head stationary. Following a 9-point calibration, eye position errors were less than 1°. Detection of a saccade was set to a minimum motion of 0.1°, with a minimum velocity of 30°/s, and a minimum acceleration of 8,000°/s².

Software programming, randomization, and stimulus delivery were performed with Experiment Builder 1.6.121, and initial data conditioning with Eye Data Viewer 1.10.123 (SR Research Ltd.). Stimulus delivery was performed on a Windows XP computer (1,024 × 768 pixel) at a viewing distance of 57 cm from the screen. This computer was interfaced to a second computer, which in turn recorded all eye movement data. In addition to eye movement recordings, participants also made subjective rating responses on a standard computer keyboard located in front of them in a position that did not require them to move their head to respond.
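A viewing distance of 57 cm is convenient because 1 cm on the screen then subtends approximately 1° of visual angle, so the calibration error (less than 1°) and the saccade motion threshold (0.1°) translate directly into on-screen distances. The following Python sketch illustrates this standard conversion; the function names are illustrative, and because the physical screen dimensions are not reported here, no conversion to pixels is attempted.

```python
import math

VIEWING_DISTANCE_CM = 57.0  # viewing distance reported in the text


def visual_angle_deg(size_cm, distance_cm=VIEWING_DISTANCE_CM):
    """Visual angle (degrees) subtended by an on-screen extent of size_cm."""
    return math.degrees(2 * math.atan(size_cm / (2 * distance_cm)))


def size_for_angle_cm(angle_deg, distance_cm=VIEWING_DISTANCE_CM):
    """On-screen extent (cm) that subtends angle_deg at the given distance."""
    return 2 * distance_cm * math.tan(math.radians(angle_deg) / 2)


if __name__ == "__main__":
    print(f"1 cm at 57 cm subtends {visual_angle_deg(1.0):.3f} deg")
    print(f"0.1 deg at 57 cm corresponds to {size_for_angle_cm(0.1):.3f} cm")
```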

Procedure

Participants first signed the informed consent form. As a standard procedure, we employed a Multidimensional Mood Questionnaire (MDBF, short version A; Steyer et al., 2004) to assess participants’ positive/negative mood (M = 17.20, SD = 0.26), alertness/fatigue (M = 14.38, SD = 0.40), and quietude/disquietude (M = 15.08, SD = 0.41) prior to the experiment. We considered checking participants’ mood important because, for example, increased fatigue and disquietude might bias participants’ performance exploring scenes and making person judgments. Analyzing these data we found that no participants displayed extreme mood scores (outliers in boxplot showing the interquartile range); therefore, we included all participants in the analyses.
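The exact boxplot criterion used for this check is not specified above. As a minimal sketch, assuming the conventional rule that flags values more than 1.5 interquartile ranges beyond the quartiles, an outlier screen could look as follows in Python. The scores in the usage example are hypothetical, and quartile conventions differ between software packages, so the fences may not match those of a given statistics program.

```python
from statistics import quantiles


def iqr_outliers(scores, k=1.5):
    """Return the scores lying outside [Q1 - k*IQR, Q3 + k*IQR].

    k = 1.5 is the conventional boxplot rule and is an assumption here.
    """
    q1, _median, q3 = quantiles(scores, n=4, method="inclusive")
    iqr = q3 - q1
    lower, upper = q1 - k * iqr, q3 + k * iqr
    return [s for s in scores if s < lower or s > upper]


if __name__ == "__main__":
    # Hypothetical mood scale scores, for illustration only.
    example = [14, 15, 16, 17, 17, 18, 18, 19, 20, 40]
    print(iqr_outliers(example))
```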

Eye Tracking Task

In a dimly lit room, participants were seated in front of a computer screen with their heads stabilized by a chin and forehead rest. Participants were instructed to freely view the scene. In a within-subject design, participants started with the free-viewing block, in which each trial showed a fixation cross (drift check) followed by a stimulus presented for 5,000 ms. The inter-trial interval was set to 5 s. Re-calibration was applied whenever the drift check failed. Participants first practiced with four trials showing filler scenes before the actual experiment with 84 trials started. The experiment lasted about 30 min.

Rating Block

We decided to conduct the rating blocks after the free-viewing eye tracking block so that participants’ eye movement behavior was not biased by the rating tasks or by knowledge of the purpose of the study. After the eye tracking block, participants rated both target and looking faces embedded in scenes previously shown in the free-viewing task, randomized for the left and right position, in two blocks, once for attractiveness and once for trustworthiness, presented in a random order. A rating scale was presented toward the bottom of the screen while the scene was shown, and participants used a computer mouse to select a number on a 7-point Likert-type scale ranging from “1” (“very unattractive” or “not at all trustworthy”) to “7” (“very attractive” or “very trustworthy”). The rating scale remained onscreen until a response was given. In total, participants gave 84 attractiveness and 84 trustworthiness ratings. Stimulus presentation, trial randomization and data collection were conducted with E-Prime (v.2, Psychology Software Tools). Participants completed the rating block during a single testing session lasting approximately 30 min. At the end of this block, participants were debriefed, thanked, and dismissed.

Statistical Analyses

In this study, we report all measures, manipulations, and exclusions. Statistical analyses were conducted using IBM SPSS Statistics 20. The normality of distribution of variables was tested by one-sample Kolmogorov–Smirnov tests (S2 Table [Supplemental Material]). All variables were distributed normally. Unless otherwise indicated, all assumptions for the statistical tests were fulfilled and the alpha level was set to .05. All statistical tests were run as two-tailed tests and the Bonferroni–Holm correction was applied in order to control for multiple comparisons in the correlation analysis.
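For readers unfamiliar with the Bonferroni–Holm procedure, the Python sketch below illustrates its step-down logic: the p values are sorted in ascending order, the smallest is compared with α/m, the next with α/(m − 1), and so on, and testing stops at the first nonsignificant comparison. This is a generic illustration rather than the SPSS routine used in the analyses, and the p values in the usage example are hypothetical.

```python
def holm_bonferroni(p_values, alpha=0.05):
    """Return one reject/retain decision per p value (Holm step-down)."""
    m = len(p_values)
    # Indices of the p values, ordered from smallest to largest p.
    order = sorted(range(m), key=lambda i: p_values[i])
    reject = [False] * m
    for rank, idx in enumerate(order):
        # The k-th smallest p value (k = rank + 1) is tested at alpha / (m - rank).
        if p_values[idx] <= alpha / (m - rank):
            reject[idx] = True
        else:
            break  # all remaining (larger) p values are retained as well
    return reject


if __name__ == "__main__":
    # Hypothetical p values, for illustration only.
    print(holm_bonferroni([0.01, 0.04, 0.03]))
```

In the example, only the first test survives the correction: .01 is below .05/3, but .03 exceeds .05/2, so the procedure stops there.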

In repeated-measures ANOVAs (M and SD in text, MSE shown in S3 Table [Supplemental Material]) and mixed-design ANOVAs, Greenhouse–Geisser corrections were applied as appropriate. Furthermore, 3 × 2 × 2 mixed-design ANOVAs with gaze (control, looking toward, looking away) and emotion (happy, angry) as within-subject factors and gender (male, female) as between-subject factor were conducted to compare the control, approach and avoidance conditions (M, SD, and MSE shown in S3 Table [Supplemental Material]). The dependent variable considered in these analyses was dwell time, fixation count, or the judgment of attractiveness or trustworthiness of the target face. Eye movements were analyzed for two areas of interest (AOIs), namely the two faces, including hair. AOIs were manually assigned to each scene. We excluded the ambiguous control condition because here the target face did not show direct gaze. Overall, there was no main effect of gender in the ANOVAs (eye movements and behavioral judgments), nor did gender enter into any two-way or three-way interaction; therefore, we generally report the ANOVA results without considering gender as a factor in this Results section. Gender only appeared to play a role in one analysis, for which we give the exact statistics.
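To make the two eye movement measures concrete, the following Python sketch shows how total dwell time (the summed duration of all fixations within an AOI) and fixation count could be derived from a list of fixations. It is an illustration only, not the Data Viewer implementation: the rectangular AOI shape, the class and function names, and all coordinates in the usage example are assumptions, since the AOIs in this study were drawn manually around each face.

```python
from dataclasses import dataclass


@dataclass
class Fixation:
    x: float            # horizontal screen position in pixels
    y: float            # vertical screen position in pixels
    duration_ms: float


@dataclass
class RectAOI:
    """Rectangular area of interest (an assumed, simplified shape)."""
    name: str
    left: float
    top: float
    right: float
    bottom: float

    def contains(self, fix):
        return self.left <= fix.x <= self.right and self.top <= fix.y <= self.bottom


def summarize_aois(fixations, aois):
    """Return total dwell time (ms) and fixation count for each AOI."""
    summary = {aoi.name: {"dwell_ms": 0.0, "fixations": 0} for aoi in aois}
    for fix in fixations:
        for aoi in aois:
            if aoi.contains(fix):
                summary[aoi.name]["dwell_ms"] += fix.duration_ms
                summary[aoi.name]["fixations"] += 1
                break  # the two face AOIs are assumed not to overlap
    return summary


if __name__ == "__main__":
    # Hypothetical AOIs and fixations on a 1,024 x 768 display.
    aois = [RectAOI("target", 200, 150, 400, 400),
            RectAOI("looking", 620, 150, 820, 400)]
    fixations = [Fixation(300, 250, 420), Fixation(700, 300, 380),
                 Fixation(310, 260, 250), Fixation(512, 600, 200)]
    print(summarize_aois(fixations, aois))
```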

Results

All eye tracking and behavioral data files are available at the permanent database PHAIDRA (accession no. 603202) of the University of Vienna via the link: http://phaidra.univie.ac.at/o:603202.

Emotional Context in Social Scenes

Analysis of Eye Movements

To test whether the positive and the negative emotional context modulates observers’ eye movement patterns, we calculated mean values (M) and standard deviations (SD) of the eye movement parameters for the target and looking face in each gaze and emotion condition (Table 1).

Mean Values of Eye Movement Parameters of Target Faces and Looking Faces in Three Gaze and Emotion Conditions, Given for a Presentation Duration of 5 s.

Note. SD = standard deviation.

Eye-Behavior to Target Face

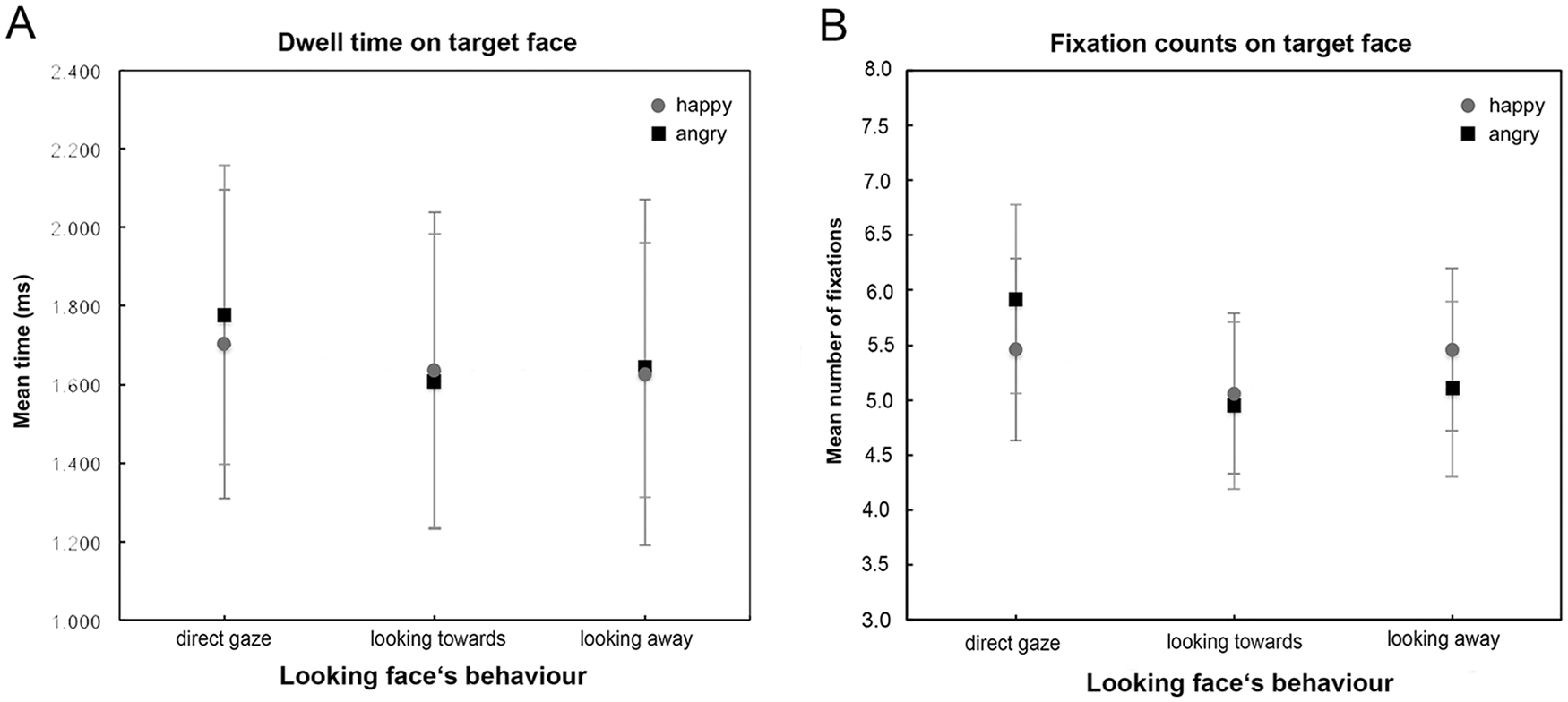

For dwell time, the ANOVA revealed a main effect of gaze (Figure 2A), F(2, 100) = 5.77, p = .004,

Eye movement data, dwell time and fixation counts, of different gaze directions and emotional facial expression conditions of the looking face on the target face. Black squares indicate positive facial expressions (happy) and gray dots indicate negative facial expressions (angry). Gaze directions: Direct gaze (frontal view), looking toward (three-quarter view), and looking away (profile view).

We found a similar pattern of results for mean fixation counts (Figure 2B). A strong main effect of gaze, F(2, 100) = 13.49, p = .001,

Eye-Behavior to Looking Face

The ANOVA revealed a main effect of gaze, F(2, 50) = 3.63, p = .040,

Comparison Between Eye-Behavior to Target and Looking Face

To further test our first hypothesis, we conducted three paired t-tests for dwell time, comparing the dwell time on the target face with the dwell time on the looking face in each of the three gaze conditions (control, approach, and avoidance). As we found no effect of emotion on dwell times in our previous analyses, we calculated the mean of the happy and angry values for each face and compared the target face with the looking face. In the approach condition, the t-test with Bonferroni correction revealed a significant difference: participants spent more time on faces looking toward (M = 1,754.80, SD = 334.11) than on the target faces (M = 1,621.44, SD = 345.98), t(51) = 3.69, p = .001. We found gaze effects in neither the control nor the avoidance condition (S5 Table [Supplemental Material]).
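The logic of these comparisons can be sketched in a few lines of Python: the paired t statistic is the mean of the within-participant differences divided by its standard error, with n − 1 degrees of freedom (51 for 52 participants), and with three comparisons the Bonferroni-corrected alpha level is .05/3. The function names and the data in the usage example are illustrative; obtaining the p value additionally requires the t distribution from a statistics package, which is omitted here.

```python
import math
from statistics import mean, stdev


def paired_t(a, b):
    """Return (t, df) for paired samples a and b of equal length."""
    diffs = [x - y for x, y in zip(a, b)]
    n = len(diffs)
    standard_error = stdev(diffs) / math.sqrt(n)
    return mean(diffs) / standard_error, n - 1


def bonferroni_alpha(alpha=0.05, n_tests=3):
    """Per-comparison alpha level after Bonferroni correction."""
    return alpha / n_tests


if __name__ == "__main__":
    # Hypothetical dwell times (ms) of four observers, for illustration only.
    looking = [1800.0, 1650.0, 1900.0, 1700.0]
    target = [1600.0, 1640.0, 1750.0, 1580.0]
    t, df = paired_t(looking, target)
    print(f"t({df}) = {t:.2f}, corrected alpha = {bonferroni_alpha():.4f}")
```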

Analysis of Attractiveness and Trustworthiness Judgments

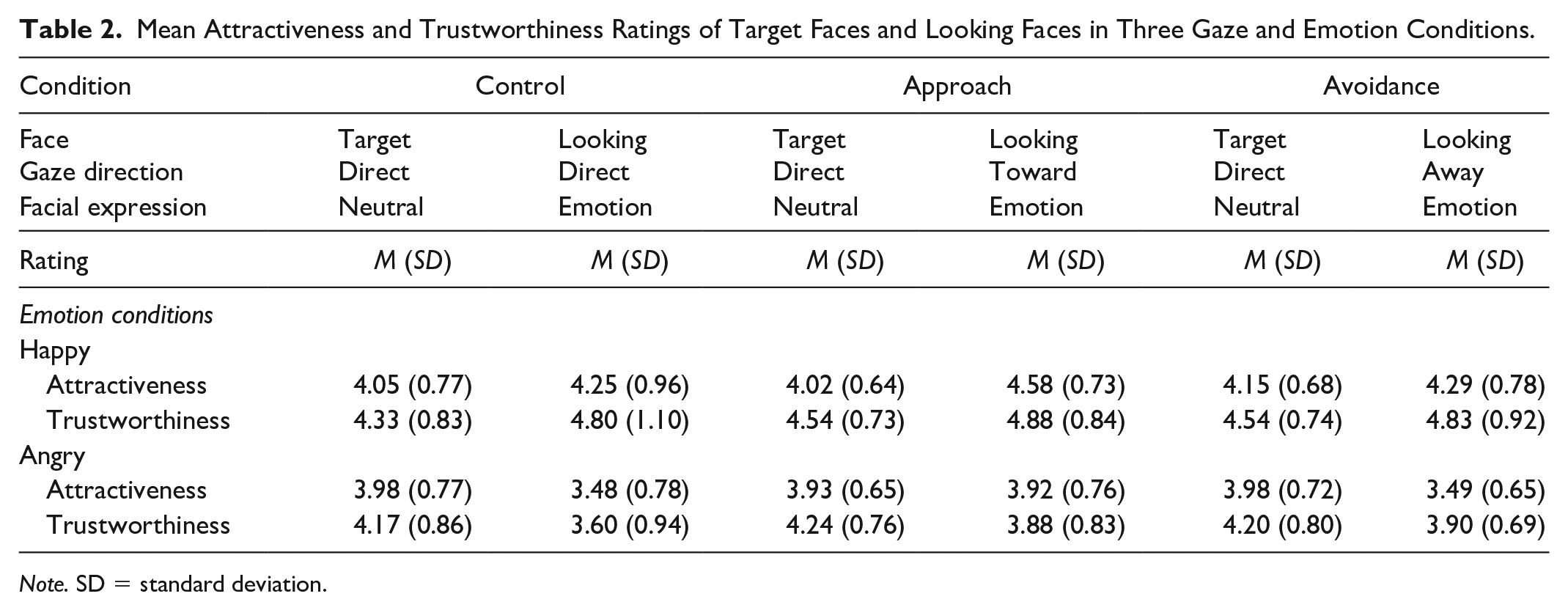

To test whether the emotional context and gaze and head orientation modulate observers’ judgments, we calculated M and SD of the target and looking face in each gaze condition (Table 2).

Mean Attractiveness and Trustworthiness Ratings of Target Faces and Looking Faces in Three Gaze and Emotion Conditions.

Note. SD = standard deviation.

Ratings of the Target Face

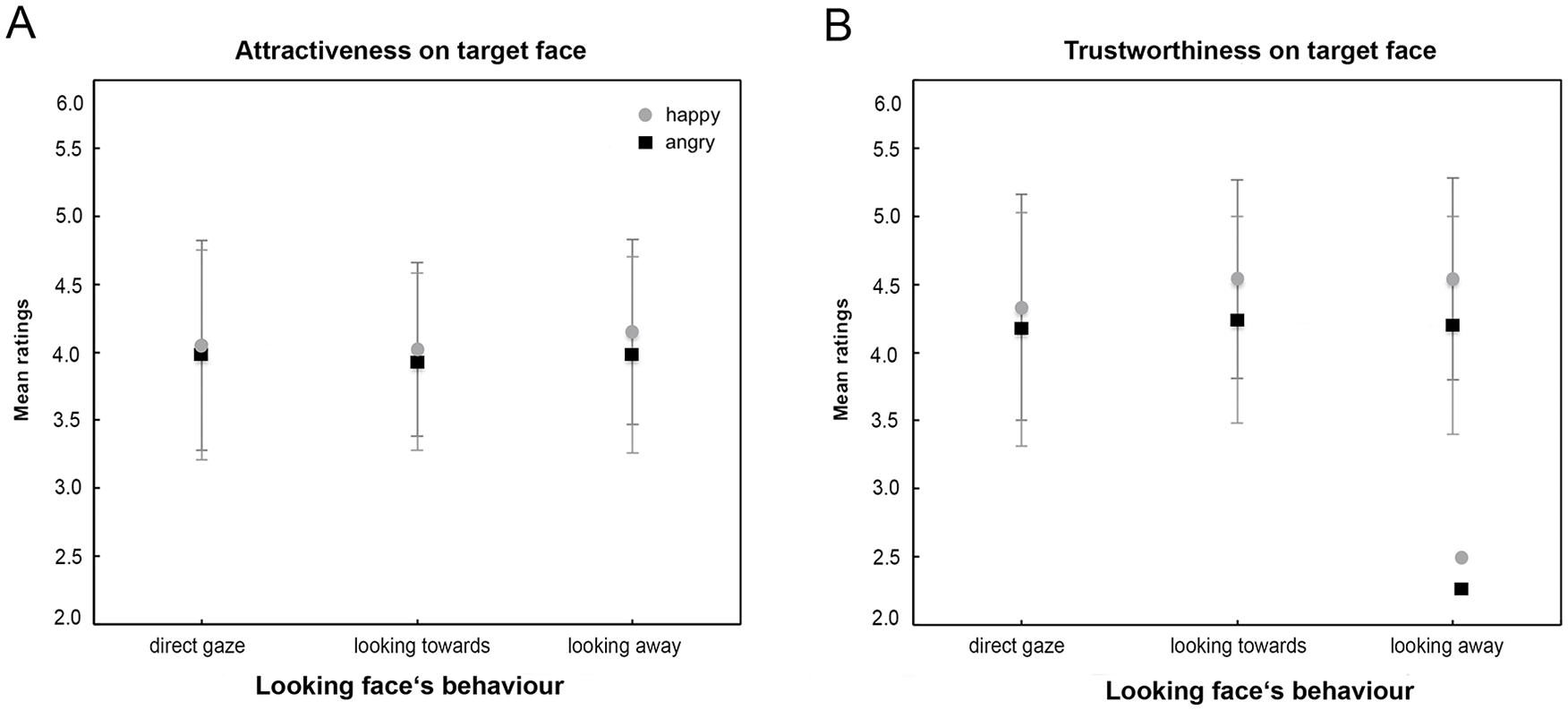

For attractiveness ratings (Figure 3A), the ANOVA revealed a main effect of emotion, F(1, 50) = 6.95, p = .01,

Mean attractiveness and trustworthiness judgments of different gaze directions and emotional expression conditions of the looking face on the target face. Black squares indicate positive facial expressions (happy), and gray dots indicate negative facial expressions (angry). Gaze directions: Direct gaze (full frontal view), looking toward (three-quarter view), and looking away (profile view).

For trustworthiness ratings (Figure 3B), the ANOVA revealed a main effect for emotion, F(1.00, 50.00) = 13.28, p = .001,

Ratings of the Looking Face

For attractiveness ratings of the looking face, the ANOVA revealed a main effect of gaze, F(2, 50) = 17.59, p = .001,

For trustworthiness ratings, the ANOVA revealed a main effect of gaze, F(2, 50) = 3.60, p = .031,

Comparison Between Rating-Behavior to Target and Looking Face

To further test our first hypothesis, we conducted three paired t-tests each for attractiveness and trustworthiness ratings, comparing the ratings of the target face with the ratings of the looking face in each of the three gaze and emotion conditions (control, approach, and avoidance). The t-tests with Bonferroni correction revealed a significant difference (M, SD, t-, and p-values shown in S7 Table [Supplemental Material]): participants rated happy faces looking toward the target face as more attractive than the target face. We found gaze and emotion effects in neither the control nor the avoidance condition. However, participants rated target faces in the control and avoidance conditions as more attractive when displayed with an angry face. We found a similar pattern for trustworthiness ratings. Participants rated happy faces as more trustworthy independent of gaze direction, while rating target faces as more trustworthy when shown with an angry face.

Correlation Between Eye Movement and Behavioral Data

To test our third hypothesis, whether observers’ visual exploration correlates with their subjective judgments, we conducted a set of two-tailed Pearson correlations between corresponding measures: mean dwell time on target faces was correlated with mean attractiveness and trustworthiness judgments of target faces, and dwell time on looking faces was correlated with ratings of looking faces, in each gaze and emotion condition (Table 3). Corresponding pairs revealed no significant correlations between dwell time and person judgments. Only in the approach condition did we find a trend, p = .06, for a correlation between dwell time and attractiveness judgments of the face looking toward the target face. Overall, these findings indicate that the time observers spend looking at faces does not inform their judgments of these faces, at least not in a condition in which the participants were already familiar with the faces from the free viewing eye tracking task, and in which more than one facial cue was manipulated.

Bivariate Correlation (Pearson Correlation Coefficient r) Between Person Judgments of Attractiveness and Trustworthiness, and Dwell Time of the Target and Looking Face in Three Gaze and Emotion Conditions.

Note. After Bonferroni–Holm correction, no correlation reached significance (df = 51). r = Pearson correlation coefficient. p = p value.

To further analyze whether the perceived information of the looking face modulated participants’ judgments on the target face, we correlated participants’ dwell time on the looking face with attractiveness and trustworthiness ratings on the target face. The two-tailed Pearson correlations revealed no significant association between the pairs in the control, approach, and avoidance condition (S8 Table [Supplemental Material]).
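The correlation analyses and the Bonferroni–Holm correction mentioned in the note to Table 3 can be sketched as follows. This is a minimal illustration, not the authors' analysis code: `pearson_r` and `holm_reject` are hypothetical helper names, the p values are invented for demonstration, and a nominal alpha of .05 is assumed.

```python
import math
from statistics import mean


def pearson_r(x, y):
    """Pearson correlation coefficient of two equal-length samples."""
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def holm_reject(p_values, alpha=0.05):
    """Holm step-down procedure: one reject/retain flag per p value."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    reject = [False] * m
    for rank, idx in enumerate(order):
        # The smallest p is tested at alpha/m, the next at alpha/(m-1), ...
        if p_values[idx] <= alpha / (m - rank):
            reject[idx] = True
        else:
            break  # stop at the first p value that fails its threshold
    return reject


# Invented p values for six correlations (not the study's data).
flags = holm_reject([0.06, 0.20, 0.45, 0.01, 0.70, 0.33])
print(flags)  # every entry is False: no test survives the correction
```

Because the procedure stops at the first p value that exceeds its threshold, a single uncorrected trend such as p = .06 cannot reach significance once several correlations are tested together.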

Discussion

Social situations offer a variety of simultaneously available facial cues. In this study, we investigated how the combination of different facial cues of two people depicted in a social interaction influences the observers’ eye movements and judgments of the two people. For this purpose, we used triadic social scenes to study effects of combined gaze and head orientation cues while at the same time varying the emotional context (positive or negative facial expression) in the scene. We were particularly interested in whether being looked at by another person showing emotional expressions would influence observers’ person judgments, as it has been shown for objects (Bayliss et al., 2007) and in social scenes with neutral facial expressions (Kaisler & Leder, 2016, 2017).

Overall, our data revealed a consistent and clear pattern of results. First, mean dwell time on the target face was longer when it was shown together with a direct gaze face instead of a face looking at or away from it, regardless of emotional expression. We observed the same main effect for fixation counts; in this case, however, there was an additional significant interaction between gaze and emotion, suggesting that the target face was looked at more if it was accompanied by a direct gaze face with anger, compared with a direct gaze face with happiness, and by an averted gaze face with happiness compared with an averted face with anger. In the approach condition, fixation counts were similar for both emotions. Second, the gaze behavior of the accompanying face did not affect attractiveness and trustworthiness ratings of the target face, but its emotional expression did. Regardless of where the accompanying face was looking, a happy-looking face led to increased attractiveness and trustworthiness ratings of the target face compared with an angry-looking face. The gender of the observer had no effect on these measures. Third, correlations between eye movement measures (dwell time) and subjective judgments for all individual faces in different conditions of gaze and emotion revealed no significant effects.

Our first hypothesis that the emotional context modulates observers’ eye movements was partly confirmed when analyzing eye movement parameters regarding the target face. During the 5 s duration, observers’ visual exploration patterns were modulated by the gaze direction of the looking face only. Observers looked longer and fixated more often on target faces accompanied by a direct gaze face, indicating that direct gaze is a strong and salient social signal that captures observers’ attention, as has been shown in previous studies using eye tracking in social settings (Palanica & Itier, 2011, 2012), with isolated faces (e.g., Ewing et al., 2010; Mason et al., 2005) and in triadic scenes (Kaisler & Leder, 2016, 2017).

The additional analysis of dwell time on the looking faces showed a main effect of gaze: participants looked longer at faces looking toward the target faces compared with the direct gaze condition and faces looking away from the target face. This is also in line with the results of a direct comparison between dwell times on the target and looking faces. A trend regarding a correlation between happy-looking faces and attractiveness judgments was found. Again, we found no effects of emotion on dwell time on looking faces, even though these faces displayed the positive or negative emotion, and no gaze or emotion effects on fixation counts.

In the current study, the emotional context created by the looking person in the scene modulated observers’ judgments. Target faces that were shown together with a happy face were judged as more attractive and trustworthy than target faces accompanied by an angry face. This finding is in line with research on face–observer interaction (Adam et al., 2003; Adams & Kleck, 2005) and on positive emotional context expressed toward objects (Bayliss et al., 2010). In contrast to Bayliss and colleagues’ (2007) findings on objects, participants’ judgments were influenced only by the emotion expressed by the looking face and not by its gaze direction. This positive effect of emotional context is probably linked to the affiliative behavior of the observer and might be due to the activation of the reward system of the human brain during joint attention and social interaction (Schilbach et al., 2008, 2010). For example, trustworthiness judgments of faces during social interaction are modulated more strongly when faces are smiling as compared with neutral and angry faces or objects (Bayliss et al., 2007, 2009).

Contrary to our expectations, our second hypothesis, which predicted that the interaction between gaze and happy expressions would lead to increased attractiveness and trustworthiness ratings of the target face, was not fully supported. As mentioned above, this might be due to the strong signal of direct gaze and longer looks at faces looking toward the target face. We found no effect of the looking face’s gaze direction on the person judgment of the target faces, an effect that was previously reported for faces with neutral expressions gazing at objects (Bayliss et al., 2006). Nor did we find a correlation between dwell time on looking faces and judgments of target faces, which suggests that the perception and judgment of faces might be dissociated. In general, this might be due to participants’ time spent on the looking face as well as the enhanced attractiveness of three-quarter views (Kaisler & Leder, 2017), which would explain the trend regarding the correlation between dwell time and attractiveness ratings, presumably due to the recognition advantage of three-quarter views (Bruce et al., 1987; Kaisler & Leder, 2017). However, our behavioral findings are in line with Landes and colleagues (2016), who did not find a gaze effect with faces as target stimuli (instead of objects) that were looked at, even though the faces expressed “liking and happiness” and “disliking and mistrust.” The authors presented the neutral cue faces for 1,500 ms, then the face turned to the left or right with an emotional expression for 250 ms before the target face appeared. The authors found no effect of happy or disgusted expressions on objects that are not looked at. The emotional expression of a person might convey only little information about an object that is not looked at; however, it leaves room for many interpretations in a situation where another person is involved.

To improve our understanding of the role of the emotional context in triadic situations, future studies may explore the interaction between the looking face expressing emotions and the observer when providing more information about the potential consequences for the target person. Considering that in the current study the positive emotional context itself was the main factor for enhanced attractiveness ratings (and not gaze and head orientation), gaze direction effects might have been overridden. Additional analyses within gaze and emotion conditions showed that a positive emotional context (happiness) increased participants’ judgments of looking faces, whereas a negative emotional context (anger) increased participants’ judgments of target faces. These results show that gaze and emotion indeed play a role in the judgment of attractiveness and trustworthiness and modulate them differently, supporting previous findings (Kaisler & Leder, 2016, 2017). Further evidence is needed to support these conclusions in triadic scenes; it is possible that our results apply only to a specific type of stimuli and paradigm, which we address in the limitations of this study.

Because people look longer at highly attractive faces than at less attractive faces in a free viewing eye tracking task (Leder et al., 2010, 2016; Mitrovic et al., 2016), we expected that other facial cues, such as emotion and gaze/head orientation, would enhance observers’ visual exploration pattern, especially when attention is directed toward the target face. Thus, looking longer at target faces would in this case lead to more positive evaluations of the target face. However, we did not find significant correlations between participants’ eye movement data and person judgments; thus, our third hypothesis was not supported. This might be due to differences in focus and attention between the conditions. Observers were instructed to view the scene freely without focusing on a specific item, whereas in the rating block attention was directed toward the face to be rated, indicated by text appearing above the image. Moreover, the looking and smiling face might be a social signal indicating a tendency toward social approach; thus, the observer might be attracted by the positive emotion and consider this face as more attractive and trustworthy. The observer might interpret the positively valenced looking face to decide on his or her potential behavioral intentions with respect to the other person (target). In the case of a positive emotional context, it might not be socially relevant whether the target face shows a neutral expression because in this situation there is no social threat. However, in the case of a negative emotional context, such as anger and fear indicating a threat (Adams & Kleck, 2005), the observer might explore both faces perceived in the scene equally, to draw conclusions about potential behavior in this situation. This is similar to the effect of threat priming (Leder et al., 2010).

Alternatively, it is possible that there was no correlation between the eye movement patterns and the subsequent subjective ratings due to task differences. In Block 1, participants were unaware of the subsequent rating block, and it can be assumed that their eye movements followed a natural behavior. However, it has been known since Yarbus’s (1967) seminal study that eye movements are determined by tasks and goals, and thus might be very sensitive to the given instructions. This opens up a range of possibilities for further experiments such as manipulating the instructions for the eye movement task.

Our findings, together with previous studies of social triadic scenes (Kaisler & Leder, 2016, 2017), suggest an interplay of gaze and emotion in early perception processes of faces in social scenes and a strong effect of positive emotional context on preference judgment formation. When perceiving two other people who display different gaze/head orientations and emotional expressions in a natural scene, observers’ visual orientation is guided by the gaze/head direction. However, when observers are asked to judge these familiar faces regarding their attractiveness and trustworthiness, their evaluation of others is modulated by the emotional context expressed by a face. Therefore, emotional expressions may have a strong influence on attractiveness and trustworthiness judgments. Effects of gaze direction (direct gaze as a strong signal of social interaction) are subtle and can probably be superseded or concealed by the former effects. In addition, our results indicate that eye movements during a free viewing task (perception) and subsequent person judgments (preference) may be dissociated.

Limitations and Implications for Future Work

In the present study, we presented female, male, and mixed-gender pairs in natural scenes to move toward more ecologically valid stimuli of real-world scenes, which may have influenced participants’ judgments and eye movements. A recent study (McCrackin & Itier, 2019a) showed that female participants show stronger gaze cueing effects than male participants; however, Bayliss and colleagues (2005) showed no modulation of gaze cueing by the gender of the cued face. Similarly, in triadic natural scenes, previous studies found no gender effects of male and female participants perceiving female face pairs (Kaisler & Leder, 2017) or female and male face pairs in scenes (Kaisler & Leder, 2016). However, in the present study, it was not investigated whether the faces’ gender may have interacted with participants’ gender to impact eye movements and ratings. At present we can only report that the gender of the observer did not show any effects overall. Only when comparing looking faces in the three gaze and emotion conditions did we find that female observers, in comparison to male observers, rated happy faces as more attractive than angry faces. However, due to a limited number of trials we could not perform further analyses on possible interaction effects between participants’ gender and the gender of the people shown in the scenes.

Previous studies reported larger gaze cueing with fearful, surprised, and angry compared with neutral expressions (Bayless et al., 2011; Fichtenholtz et al., 2009; Holmes et al., 2010; Lassalle & Itier, 2013; Neath et al., 2013) and with happy expressions (McCrackin & Itier, 2018, 2019a). These mixed findings could be due to the task- and stimulus-dependent discrimination of emotional expressions and gaze directions (Bindemann et al., 2008; Graham & LaBar, 2007). In the present study, the lack of specific contextual information might have weakened gaze effects in the approach condition. For example, Jones and colleagues (2006) found gaze effects in the context of mate selection. The authors asked participants to indicate how attractive they found one target face compared with another. In the present study, participants were instructed to rate the attractiveness and trustworthiness of the target and looking face, and these ratings might have been based on their initial impression rather than on more careful thought while comparing faces. A comparison of faces may integrate multiple sources of information, such as the gaze direction, in the decision-making process. This process might differ from the concept of preference (e.g., Bayliss & Tipper, 2006; Conway et al., 2008; Ewing et al., 2010; Mason et al., 2005) and may not generalize beyond that context.

We found no effect of the looking face’s gaze direction on the target face, which might be due to the specific type of stimuli and the paradigm used. We chose head orientations and viewing angles that have been used in previous studies (McKone, 2008; Rule et al., 2009; Stephan & Caine, 2007) to compare between different directions of gaze. For example, Rule and colleagues (2009) demonstrated consistency in judgments of faces in all three orientations (frontal, three-quarter, and profile view), accuracy when time is limited to 50 ms (Ballew & Todorov, 2007; Rule & Ambady, 2008), and consistency of attractiveness and trustworthiness judgments among viewing angles given unrestricted viewing time (Rule et al., 2009). Our stimuli comprised three-quarter views in the approach conditions and profile views in the avoidance conditions, the latter having limited recognition availability because some parts of the face (e.g., eyes) are not fully visible (McKone, 2008; Stephan & Caine, 2007). We built on findings of previous studies (Kaisler & Leder, 2016, 2017) using the same head orientation and viewing angles. We chose these different viewing angles to clearly distinguish between the avoidance (looking away) and approach condition (looking toward), where participants should be able to fully recognize the emotional expression (happy, angry) and engage with the looking face guiding their attention toward the target face. As Kaisler and Leder (2017) showed, subtle effects of gaze direction were found when both viewing angles, three-quarter and profile, were applied in social triadic scenes: looked-at target faces were considered more attractive than target faces that were looked away from. In the present study, we expected to replicate these gaze effects even after the introduction of another facial cue, namely emotion.

Interestingly, we found no effect of the looking face’s gaze direction, which might be due to the strong salient signal of direct gaze when comparing the control and the approach condition. Another limitation could relate to the viewing angle of 45° head orientations. Potentially, the looking face could appear to look slightly to the left or right of the target face, and thus not exactly at the target face. However, to assure the ecological validity of the stimuli, we asked the models during stimulus production whether they could see the target face when turning the head 45°, which was the case. In addition, heat map analyses of the scenes showed that participants looked at the target and looking face (areas of interest) and less at the middle of the screen, where gaze was adjusted at the beginning of each trial (fixation cross).

Again, task differences might have influenced our findings. We chose a free-viewing eye tracking task to explore observers’ natural eye movement behavior, which informs about their perception of such social scenes. The subsequent affective judgment tasks served a different purpose, namely measuring the attractiveness and trustworthiness of faces in social scenes. The two tasks involved different levels of observers’ cognition and attention. However, social cognition involves more than understanding other people’s behavior and interpreting their responses to our actions: Future studies would benefit from interactive responses as in Bayliss et al. (2013), influencing decision-making after social interaction occurred. Responses from the gaze follower would give insights into the initial behavioral tendency of the observer influencing their social engagement and interaction. Furthermore, future studies should explore the dynamics of gaze and emotion during presentation time, which might inform about cognitive processes and their interaction in decision-making.

In general, our findings might be limited by the participants’ background and lack of diversity. We recruited Caucasian psychology students in their early twenties who are familiar with experimental procedures and may speculate about the experiment’s purpose or cater to the experimenter’s attitude. To avoid any priming, we told participants to freely view the scenes without giving them a specific task in the eye tracking experiment or further background information on the aims of the study.

Exploring real-life scenes in a social context is the first step toward studying complex social interactions and dynamics in groups. Our findings not only contribute to an understanding of perceptual mechanisms but might also inform practical applications or considerations when working and interacting with people. For example, in consultation sessions, pair therapy, or group psychotherapy, nonverbal communication plays an important role. The seating position of therapists or other clients, such as opposite or next to clients, might influence their perception and behavior. Furthermore, first impressions or introductions of new consumers or work colleagues might be influenced by gazing behavior, which may shape person judgments. Our findings could also be applied to advertising, for example in TV or print advertisements that involve faces.

To conclude, the present study shows that positive emotional context influences observers’ preference in triadic scenes and suggests an interplay of gaze and emotion effects on the perception of faces and a strong effect of a positive emotional context on a person’s evaluation. Future work may investigate personality judgments in addition to social and aesthetic judgments of trustworthiness and attractiveness in order to understand observers’ behavior and intentions toward other people. Research will have to continue elaborating on these complex cues in social interactions and decision-making processes in triadic scenes.

Supplemental Material

S1_Table – Supplemental material for Effects of Emotional Expressions, Gaze, and Head Orientation on Person Perception in Social Situations

Supplemental material, S1_Table for Effects of Emotional Expressions, Gaze, and Head Orientation on Person Perception in Social Situations by Raphaela E. Kaisler, Manuela M. Marin and Helmut Leder in SAGE Open

S2_Table – Supplemental material for Effects of Emotional Expressions, Gaze, and Head Orientation on Person Perception in Social Situations

S3_Table – Supplemental material for Effects of Emotional Expressions, Gaze, and Head Orientation on Person Perception in Social Situations

S4_Table – Supplemental material for Effects of Emotional Expressions, Gaze, and Head Orientation on Person Perception in Social Situations

S5_Table – Supplemental material for Effects of Emotional Expressions, Gaze, and Head Orientation on Person Perception in Social Situations

S6_Table – Supplemental material for Effects of Emotional Expressions, Gaze, and Head Orientation on Person Perception in Social Situations

S7_Table – Supplemental material for Effects of Emotional Expressions, Gaze, and Head Orientation on Person Perception in Social Situations

S8_Table – Supplemental material for Effects of Emotional Expressions, Gaze, and Head Orientation on Person Perception in Social Situations

Footnotes

Acknowledgements

The authors thank Sandra Deringer for her contributions to the preparation of the stimuli and Katrin Mair and Denise Ramsperger for assistance with data collection. The authors are indebted to Bruno Gingras for comments on an earlier version of the manuscript.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was supported in part by the FWF Austrian Research Fund (grant no. P27355) and the WWTF Vienna Science and Technology Fund (grant no. CS18-021) to HL. There was no additional external funding received for this study.

Supplemental Material

Supplemental material for this article is available online.

References
