Abstract

Connected speech produced by native speakers poses a challenge to second language learners. Video subtitles have been found to assist the decoding of English connected speech for learners of English as a foreign language (EFL). However, the presence of subtitles may divert the listeners’ attention to the visual cues while paying less attention to the speech signals. To test this proposal, we employed a bi-modal audio-visual listening test and examined whether EFL listeners were able to correctly identify the connected speech when misleading subtitles were present. We further tested whether connected speech with words of lower frequency further reduced the accuracy rate. Twenty-eight adolescent EFL learners, all with more than 10 years of experiences in learning English in schools, were tested with three major types of connected speech phonological processes, namely assimilation, elision, and juncture. The results of statistical analyses showed that matched and mismatched subtitles facilitated the comprehension of both familiar and unfamiliar connected speech. Error analyses revealed the degree of item-specific variations across the three types of connected speech processes as well as across the three subtitling conditions. This research provides insights on the immediate and long-term impact of subtitles on the decoding of English connected speech.

Introduction

Learners of English as a foreign language (EFL) have been found to experience difficulties in comprehending English speech uttered by native speakers even after prolonged listening training in schools (Shockey, 2003). One reason is that most of the English speech (presented by non-native English-speaking teachers or pedagogically designed audios spoken by native speakers) appears in EFL classrooms is uttered at a slower rate to accommodate the EFL learners’ abilities. To ensure English words are properly learned, they are presented to EFL students in the citation form. Citation form, also known as dictionary form, is the way English words are presented in audio dictionary, that is, each word is presented in isolation and the pronunciation of the consonants and vowels are unaltered by surrounding phonological environment. According to Johnson (2004), approximately 60% of words in a corpus of 88,000 American English word tokens were spoken in reduced forms (i.e., speech signals are altered or reduced as compared with the utterance of a word in isolation). The more reduction and alternation of speech signal, the harder it is for EFL learners to perceive, as more demanding phonological reconstruction is required (Ernestus et al., 2002).

Of the numerous ways to improve listening comprehension, one of the most commonly adopted means is through the use of subtitles, which are frequently displayed in movies as closed captions and can be understood as a textual form of visual aid shown on screen. The usefulness of subtitles in video clips has attracted some scholarly attention in recent decades, and it appears researchers have reached the consensus that they can benefit different cohorts of learners, including normal-hearing children and adults, as well as those with hearing impairments (Gernsbacher, 2015). In some empirical studies, subtitles are further classified as standard/inter-language (audio in a second language (L2) + subtitles in the first language (L1)), intra-language (audio in L2 + subtitles in L2), or reversed (dubbed audio in L1 + subtitles in L2). Through comparing performances between various subtitled conditions, studies on the effects of subtitling generally report a positive influence of intra-lingual (L2) subtitles in English (or other language) comprehension among second language learners (e.g., Bird & Williams, 2002). Similar findings of the positive effect of subtitles on English comprehension in animations, cartoons, movies, and TV series have been reported (Yang & Chang, 2014). Empirical studies on subtitling conducted so far mainly focused on the beneficial effects of subtitles on listening comprehension performance (e.g., Huang & Eskey, 2000), vocabulary acquisition, and recall of materials (e.g., Chun & Plass, 1996). Despite the presence of benefits provided by subtitles to EFL learners, subtitles are viewed differently by different EFL learners. Generally, lower-intermediate and intermediate learners do not have a choice by relying more on subtitles to comprehend the connected speech (Winke et al., 2010). It was revealed in an eye tracking study that the learners fixated on the subtitles 68% of the time. The interview data further suggested that these learners found reading subtitles easier than listening for extracting meaning and that reading also helped them more readily segment words from the stream of speech. These learners did not employ progressively fewer subtitles during the course of movie viewing (Pujola, 2002) and their learning goal was more oriented to improving reading rather than listening skills (Caimi, 2006; Chai & Erlam, 2008). In the long-run, without engagement with both the spoken and the subtitles signals, these less proficient EFL learners will hardly develop the ability to map phonological characteristics of sounds and the semantic meaning of words uttered in connected speech. Even worse, these groups of learners are less confident about their listening ability (Vanderplank, 2016).

Although general English language proficiency of the listeners plays a critical role in the use of subtitles (Yeldham, 2018), what awaits to be addressed is the large individual variations within the specific proficiency group. We speculate whether the nature of connected speech has an effect on listener’s performances. The two salient features about connected speech are the types of connected speech phonological processes as well as the familiarity of words within the connected speech. An introduction of the two features and relevant research findings is provided below.

Processing Efficiency of Familiar and Unfamiliar Connected Speech

As individual words are represented differently in connected speech, multiple phonological representations for individual words exist. A large body of research has shown a strong positive correlation between word frequency and the efficiency of auditory lexical access during spoken word recognition (e.g., Cleland et al., 2006; Connine et al., 1990, 1993; Goldinger, 1998; Marslen-Wilson, 1990), with the most frequently occurring words (high-production frequency) having the highest activation strength. In particular, the frequency at which the phonological variants are perceived in the context of continuous speech is shown to determine the efficiency of connected speech processing (Connine et al., 2008; Ernestus, 2014; Pitt & Samuel, 1995). For example, words that are frequently heard are recognized faster and more accurately in lexical decision tasks, as compared with those that are infrequently heard. Studies have also shown a reduced priming effect for low production frequency, compared with high production frequency, connected speech (Ranbom & Connine, 2007). Similarly, Mitterer and Russell (2013) demonstrated that higher production frequency is beneficial to the comprehension of reduced variants. Although the above studies examined the impact of word frequency on spoken word recognition in a uni-modal listening environment, it remains uncertain whether a similar word frequency effect can be observed in a bi-modal audio-visual listening context. The present study thus aims to fill this research gap.

The Consistency of Performances Across Phonological Processes of Connected Speech

For EFL learners, their first language (L1) background and L1 phonological system has been found to restrict the acquisition of L2 connected speech (Wong et al., 2017a). Among Chinese learners of English in particular, three connected speech phonological processes, namely assimilation, elision, and juncture, are largely affected by the cross-linguistic differences between Chinese and English sound systems. Assimilation is defined as the process in which phonemes assimilate to the place of the neighboring consonant while retaining their original voicing characteristics (Cruttenden, 2014). For example, the pronunciation of the word phrase /ten players/ is changed from [tεn ‘pleɪɚz] to [tem ‘pleɪɚz] in running speech. Assimilation is absent in Chinese utterances and therefore poses a challenge for Chinese EFL learners in English speech processing (Mao & Chen, 2013). In Liang’s (2015) study, 50 Chinese university sophomores majoring in English exhibited below-chance performances when identifying cases of assimilation. Another connected speech process that poses a challenge to Chinese EFL learners is (contextual) elision, in which a vowel or consonant occurring within either the body of a word or at a junction of word boundaries is lost (Cruttenden, 2014). This process is often evidenced in word phrases such as /iced tea/ (its citation form is /aɪst ‘ti/), which is pronounced as [aɪs ‘ti] (similar to /ice tea/). The process of elision is also absent in Chinese utterances (Mao & Chen, 2013). In a study conducted in Taiwan, Chinese EFL sophomores with low, mid, and high English proficiency levels correctly identified only 44%, 69%, and 77% of elision cases, respectively (Kuo, 2012). Finally, juncture is the connected speech process referring to the removal of a clear boundary between two syllables. For example, /a name/ is transformed from its citation form [ə neɪm] to its reduced form [ən eɪm] (like /an aim/). The obligatory gap between words to demarcate information is often absent in connected speech. In order to resolve juncture, a combination of contextual information and subtle cues in the speech signal is utilized by the listener to discern individual words (Setter et al., 2014). Moreover, it is suggested that English connected speech is like “singing in a legato way,” whereas Chinese connected speech is “articulated in a staccato way” (Duanmu, 2007, p. 71; Roach, 2008, p. 144). Junctures in the Chinese language can be clearly perceived due to the language’s syllable-timed pattern and the fact that the majority of words share the same degree of emphasis. As a result, Chinese EFL learners generally find it hard to identify junctures in English. When junctures are located before unstressed words or between words whose onsets or codas can be joined by the preceding or following words, they are almost unperceivable (Liang, 2015). Setter et al.’s (2014) empirical study indicated that Hong Kong listeners were only able to correctly identify 60% of junctures presented in British English speech. Given the suboptimal performances in Chinese EFL learners when identifying these three connected speech processes, it is therefore crucial to focus on these aspects in the present study.

The Use of Subtitles in Non-immersive English Environments

When learning English in a non-immersive English environment, EFL learners receive limited exposure to native English (Bradlow & Bent, 2002). Thus, these EFL learners have to rely heavily on listening materials in school settings as well as mass media, such as movies and TV channels (Vandergrift, 2011). As a common practice in Hong Kong, classroom materials are usually presented alongside a transcript, and the English-speaking movies are required to show Chinese subtitles (J. Y. H. Chan, 2013). Ironically, even if native English speech is readily available in non-English-speaking countries, listening skill is still not as good as expected (Wong et al., in press). This situation has motivated us to question the exact influences of subtitles on the decoding of connected speech.

In addition to the availability of listening materials, other factors such as language proficiency (e.g., Maleki & Rad, 2011), cognitive load of the multimedia (e.g., Winke et al., 2010), and design of subtitles (e.g., Chung, 1999) have also been investigated to understand the usefulness of subtitles. Still, a beneficial role of subtitles was assumed in these studies and the investigative focus remained on their degree of facilitation. However, as implied by the poor listening comprehension performances in EFL learners with various language backgrounds who can readily access subtitles (e.g., Chinese: Chung, 1999; French: Guillory, 1998; Spanish: Markham & Peter, 2003; Russian: Winke et al., 2010), we suspect that subtitles may not facilitate the acquisition of connected speech under all circumstances and reducing any anxieties triggered when listening to non-L1 speech (Behroozizad & Majidi, 2015), the negative aspects of subtitles require further investigation.

In an audio-visual environment, it is well known that the visual modality is dominant, as visual information is processed significantly faster than auditory information; hence, auditory processing is often subdued (Lukas et al., 2010). Moreover, if subtitles are treated as obligatory rather than supplementary during listening, EFL learners are likely to experience listening difficulties when no subtitles are provided (Grgurović & Hegelheimer, 2007; Pujola, 2002). Due to the more enduring nature on the screen of the subtitles than the connected speech (Hulstijn, 2003), ESL learners may develop the tendency to read subtitles for extracting meaning and/or segmenting words in the stream of connected speech (Winke et al., 2010). One negative effect we speculate is “subtitle-dependency,” whereby learners are more likely to trust what they read from subtitles over what they hear from the corresponding audio when they are presented with mismatched subtitles, that is, when the content of the subtitles does not match the content of the audio. Hence, we predict that EFL learners are more prone to listening errors when mismatched subtitles are provided.

The Present Study

In this section, we shift our focus and provide a brief description of the context of the present study. In Hong Kong, English language is a compulsory subject and is introduced in the curriculum as early as 3 years of age. Despite the official status of the language, it is not commonly spoken in daily life conversation. Students are, however, highly motivated to get good results in English, as proficiency in English directly affects the results of public examination and thus their chance of university admission. Apart from academic use, students in Hong Kong are also exposed to spoken native English through various forms of media such as movies and songs, as well as having lessons with their Native English-speaking teachers in school.

Despite the rich environment available for English learning, it is surprising to note that Hong Kong undergraduates who have been learning English for over 15 years were still unable to fully decode connected speech spoken by native English speakers (Wong et al., 2017b); furthermore, in an earlier study, Shockey (2003) found that Hong Kong Cantonese speakers who were English language teacher trainees made multiple perceptual errors when decoding English connected speech. It is essential to understand whether high school EFL students in Hong Kong are dependent on subtitles during listening comprehension. Although this enhances immediate decoding of speech, the use of subtitles may hinder auditory perceptual learning of connected speech, causing long-term suboptimal performances in connected speech processing. This potential negative effect motivates us to study how connected speech is processed when subtitles are presented. Do listeners trust their ears or their eyes more? In addition, we compare listeners’ accuracy in decoding speech across three types of connected speech (assimilation, elision and juncture) in order to reveal their unique characteristics and the level of difficulties they represent to listeners.

In light of the aforementioned research gaps, the four research questions of the present study are listed as follows:

Method

Participants

A total of 28 Cantonese-speaking EFL learners (16 females; 12 males) aged between 15 and 16 years were recruited for the current study. The students had no reported difficulties in learning or language acquisition. All participants were 10th graders from a local secondary school in which Cantonese is used as the medium-of-instruction for non-English subjects. These students were recruited by the second author, who was their English teacher. Based on the benchmark against the territory-wide English standard specified by the Hong Kong Education Bureau (2004) as well as via a medium-level listening test (Davis, 1998), the participants were rated “average” in terms of English proficiency.

Procedure

This work was conducted with the formal approval of Human Research Ethics Committee at (The Education University of Hong Kong). After obtaining informed consent from participants and their parents, respectively, the experiment was carried out in a classroom in the participants’ school. All students were presented with the same set of testing materials in a group-testing situation. The uni-modal connected speech decoding task (presented to the participants as an unseen dictation task) was administered first, followed by the bi-modal connected speech decoding test. The test administration order was designed in such a way to avoid the influence of prior exposure to the subtitles in the bi-modal task on the uni-modal task.

Preparation of Audio

We first generated a list of minimal pairs of connected speech while taking the English proficiency levels of the participants into consideration. The list was developed by our research team and was only adopted in the current study. The stimuli were recorded in a soundproof room using a high-quality Roland R-09HR recorder and digitized at a sample rate of 44.1 kHz with a 16-bit amplitude resolution. The set of stimuli was spoken aloud by a 25-year-old native female speaker who was born and raised in New York. This speaker also advised on the suitability of the test items for the use in this study. A General American (GA) accent was chosen for this study as Hollywood movies and TV programs are popular among Hong Kong Chinese adolescents and they are commonly exposed to this accent. Furthermore, as shown in J. Y. H. Chan’s (2013) study, young adults in Hong Kong regard the GA accent as native accent.

Measures

Bi-modal audio-visual connected speech comprehension test

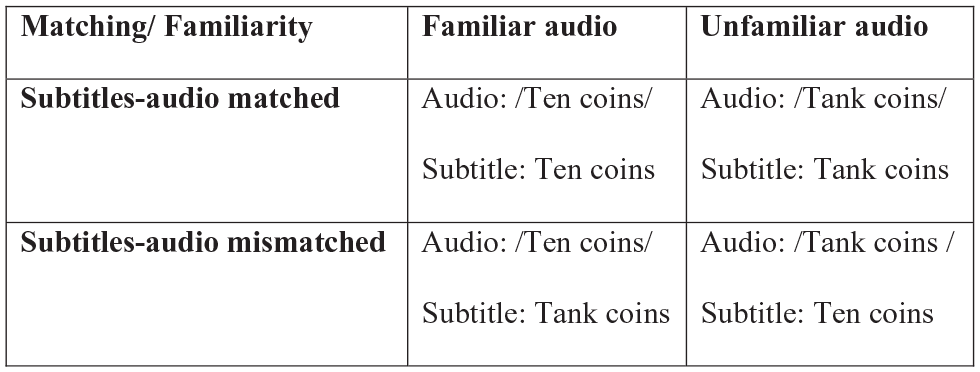

This test assesses listeners’ ability to decode minimal pairs of connected speech, distinguished by one differing segment within the whole phrase. By manipulating two parameters, namely the link between the content of the spoken word phrases and the subtitles (matched vs. mismatched) and the degree of word phrase frequency or “familiarity” (familiar vs. unfamiliar), four listening conditions were created: (a) a familiar phrase with matched subtitles, (b) a familiar phrase with mismatched subtitles, (c) an unfamiliar phrase with matched subtitles, and (d) an unfamiliar phrase with mismatched subtitles. The familiar items were connected speech phrases comprising high-frequency words or formulaic phrases (e.g., not at all). Conversely, the resulting unfamiliar items in the minimal pairs contained unfamiliar lexical items and/or non-formulaic phrases. It should be noted that “unfamiliar” phrases such as /hot takes/ may not make sense under normal circumstances. Yet, we cannot rule out the possibility that the phrase may be articulated in a playful situation. Thus, these phrases are regarded as unfamiliar instead of illegitimate.

The recordings were deliberately designed to include connected speech processes that have been shown to be difficult for Chinese EFL learners, namely assimilation, elision, and juncture (A. Y. Chan & Li, 2000; Liang, 2015; Setter et al., 2014; Yang & Chang, 2014). The stimuli were short phrases without filler sentences. As shown in Table 1, the number of words in canonical/citation form was limited to 5 to minimize the working memory load. They also did not carry contextual cues for top-down, meaning-driven predictions. The same set of stimuli was presented in the subtitles-matched and subtitles-mismatched conditions. The number of words was therefore the same across the two conditions. There were altogether 36 minimal pairs of target connected speech patterns (see Table 1 for details). As the whole battery of test can be potentially be used for language assessment, scale reliability was examined. We computed Cronbach’s α that inform the degree of relatedness among a set of test items in a battery of tests. Reliability of the whole test (k = 72) was .73 (Cronbach’s α) which was commonly recognized as acceptable to good reliability (Cortina, 1993).

The Connected Speech Minimal Pairs Speech Stimuli and Their IPA Transcription.

IPA = International Phonetic Alphabet.

Familiar and unfamiliar word phrases in each pair are presented as “A” and “B,” respectively. b This is just one of the possible utterances that confuse the perception of the minimal pairs.

Participants were told that the recordings would be played with either matched or mismatched subtitles. They were therefore encouraged to focus on aural, as opposed to visual, information when answering the multiple-choice questions. For each trial, the student participants were presented with audio and subtitles concurrently through a speaker and PowerPoint slides (Figure 1). As the experiment was run in a group setting, the experimenters had to make sure that the participants completed each item before proceeding to the next item. Therefore, the bi-modal listening test was controlled and paced by the experimenter.

An illustration showing the experimental setup.

For half of the trials, the audio stimuli did not match the content of the subtitles, that is, they were presented in the “mismatched” condition. For example, the audio of [I dome know] was played together with the subtitle /I don’t know/. The remaining half of the trials presented matched audio and subtitles, that is, the “matched” condition. For example, /I don’t know/ was presented in both auditory and visual forms (Figure 2). Students were given two choices on the answer sheet, for this example the choices would be “I dome know” and ‘I don’t know.’ They were instructed to listen and select the phrase that they heard from the two choices printed on the test paper. These 72 items were randomized for presentation. On the randomization list, 64% of the items were presented with the subtitles-matched condition first and 36% of them were presented with the subtitles-mismatched condition first. In addition, the familiar and unfamiliar items in the phrase pairs were presented first in 47% and 53% of the items. These percentages could minimize the possibility that listeners’ decision was conditioned by their decision of the previous items. Each correct response yields one mark, and the number of correct responses in each of the listening conditions was summed to yield a mean score for further statistical analyses.

The two independent variables and the resulting four conditions.

Uni-modal connected speech comprehension test

In the “no-subtitles” condition, the experimenter played the audio stimuli in a fixed order and asked listeners to dictate what they heard. No subtitles were provided within this condition. The total number of test items was 36. An all-or-none scoring criterion was adopted such that an answer is considered correct only if it matches exactly the same as our answer key (Table 1). The marking was carried out by the first and second authors and a 100% agreement was achieved. Each correct response yields one mark, and summing the correct responses would produce a mean score for comparing the listening performances across conditions.

Results

A mix of inferential and descriptive statistical analyses were performed to investigate the influence of subtitles on decoding three types of English connected speech in Chinese EFL learners. The data analyses were conducted on data obtained from the 28 participants who finished all the tests. With a 3 (type of connected speech: assimilation vs. elision vs. juncture) × 2 (familiarity: familiar vs. unfamiliar) × 3 (subtitling: without subtitles vs. with matched subtitles vs. with mismatched subtitles) design, yielding a total of eighteen listening conditions. The means and standard deviation of these conditions are listed in Table 2.

Descriptive Statistics for Perception Accuracy Across the Nine Listening Conditions.

Repeated-measures analysis of variance (ANOVA) with two within-subject factors was used to compare the performances across various listening conditions. Before the analysis, we tested the assumption using Mauchly’s test of sphericity. If this assumption is violated (p < .05), a correction of degrees of freedom by Huynh–Feldt estimates of sphericity was carried out (Field, 2013, p. 474). When a significant interaction was detected, contrast analysis was conducted to examine where the difference lied.

Connected Speech Perception Performance in the No-Subtitles Condition

First, we assessed the performance of connected speech decoding in the “no-subtitles” condition, by comparing the three types of connected speech and examined the effect of familiarity. A 3 (types: assimilation vs. elision vs. juncture) × 2 (familiarity: familiar vs. unfamiliar) repeated-measures ANOVA was computed. The main effect of types was significant, F(2, 54) = 29.96, p < .001,

The Influence of Subtitles on Decoding Assimilation, Elision, and Juncture

Next, we examined the interplay between subtitling and familiarity. We conducted a 3 (subtitling: without subtitles vs. with matched subtitles vs. with mismatched subtitles) × 2 (familiarity: familiar vs. unfamiliar) repeated-measures ANOVA for each of the three types of connected speech, namely assimilation, elision, and juncture. The results will allow us to evaluate whether the two hypothesized variables have the same effect on different types of connected speech.

Assimilation

The main effect of subtitling was significant, F(2, 54) = 301.717, p < .001,

Elision

Mauchly’s test indicated that the assumption of sphericity for the main effect of subtitling had been violated, χ2(2) = 17.06, p < .001; therefore, degrees of freedom were corrected using Huynh-Feldt estimate of sphericity (ε = .69). The main effect of subtitling was significant, F(1.13, 36.45) = 455.55, p < .001,

Juncture

Mauchly’s test indicated that the assumption of sphericity for the main effect of subtitling and the interaction effect between subtitling and familiarity had been violated, χ2(2) = 6.52, p = .038 and χ2(2) = 11.29, p = .004, respectively. Therefore, degrees of freedom were corrected using Huynh-Feldt estimate of sphericity (ε = .86 and ε = .77). The main effect of subtitling was significant, F(1.72, 46.65) = 780.91, p < .001,

In summary, decoding of speech embedded with assimilation, elision, and juncture was poor when no subtitles were provided. Interestingly, the matched subtitles did not always serve as better subtitles than mismatched subtitles as a significant difference was found only among the assimilation items. Accuracy in decoding familiar connected speech was higher than unfamiliar connected speech in assimilation and elision only.

Item Analysis Within Each of the Three Types of Connected Speech

Subsequently, we performed item analysis to further compare listeners’ decoding performances across the three subtitling conditions for each individual test item. Specifically, we examined whether speech tokens under the same category (e.g., assimilation) elicited similar levels of accuracy. As shown in Tables 3 to 5, the range of percentage accuracy in speech identification was large across the three types of connected speech as well as across the three subtitling conditions, suggesting large item-specific variations.

Error Analysis for Assimilation Test Items (N = 28).

F = familiar; U = unfamiliar.

Error Analysis for Elision Test Items (N = 28).

F = familiar; U = unfamiliar.

Error Analysis for Juncture Test Items (N = 28).

F = familiar; U = unfamiliar.

As noted in the no-subtitles condition, the maximum percentage accuracy in speech identification for assimilation was 100% and the minimum was 0%, yielding a range of 100%. The ranges for elision and juncture in the same condition were 64% and 57.1%, respectively. In both matched-subtitles and mismatched-subtitles conditions, a 100% accuracy rate could be obtained for some items across the three types of connected speech. Although the ranges of percentage correct were similar for elision and juncture (about 30%), the range for assimilation was almost double that of the former two types (about 60%). As shown in Table 3, a number of inaccurate regressive assimilation errors was recorded, particularly for unfamiliar items. For instance, participants commonly misinterpret “tank coins” /ˈtæŋk ˌkɔɪnz/ as “ten coins” /ˈtæŋ ˌkɔɪnz/. For speech items embedded with juncture, a constant pattern of mis-segmentation was observed, such as misinterpreting “not at all” as “not that tall.”

In general, the performances of decoding assimilation, elision, and juncture were enhanced with the presence of either matched or mismatched subtitles. However, it is worth noting one exception. The accuracy rate of perceiving “I don’t know” is in fact lower in the conditions with subtitles (82.1%) compared with the no-subtitles condition (100%). Based on the above results, it is suggested that individual items vary in terms of level of difficulties as well as the sensitivity to subtitles.

Discussion

The present study aims to examine the role of subtitles in connected speech decoding in Chinese EFL learners. We used both matched and mismatched subtitles to test how listeners process visual and audio information in the face of conflicting situations. Our results showed that the performances of decoding connected speech in both matched and mismatch subtitles could significantly facilitate the processing of the three types of connected speech examined, which is generally in line with previous studies about the usefulness of matched subtitles in listening. These findings can strengthen the claim that provision of subtitles is crucial for decoding native English connected speech for Chinese EFL learners. More importantly, the data in the mismatched condition provide new insight to the use of subtitles in listening comprehension among EFL learners, suggesting that EFL adolescent learners who have not yet attained native-like English listening skills still attempted to link visual information with audio information, rather than disregard the later completely in a bi-modal listening environment. Given that the experimental stimuli used in the present study were phrases of connected speech that differed only by one segment, the participants demonstrated their abilities to identify the critical segments that distinguished the minimal pairs of connected speech.

The findings of significant facilitation by mismatched subtitles further suggest that the potential detrimental effect of visual-dominance is minimal in a bi-modal listening environment (Lukas et al., 2010). Listeners were found to be able to process the auditory signals even in the presence of misleading visual information. Furthermore, our results suggest that the listeners had sufficient executive functioning to inhibit irrelevant information as well as to selectively attend to audio information (Leon-Carrion et al., 2004). However, it is important to note that our participants were only exposed to subtitles and audio. Taking the limited capacity of cognitive resources into consideration (Drijvers et al., 2016), a trade-off between auditory and visual information processing occurs in a bi-modal listening environment. If video is present as in movies and TV programs, listeners’ cognitive load may be further increased, leaving little attentional resources to process audio information. Therefore, the use of multimedia in training connected speech decoding needs to take into account the nature and amount of visual information presented to the listeners.

As evidenced across the three subtitling conditions, familiar word phrases were better recognized than unfamiliar word phrases. This implies that novel connected speech processes and signals are challenging to EFL listeners, whose phonological repertoire for decoding connected speech may not be versatile enough. We speculate that the immediate success of decoding connected speech with the aid of subtitles may hinder long-term perceptual training. As previously described by Schnotz and Kurschner (2007), cognitive task performance and learning are different. Cognitive task performances are those actions that operate on mental structures in working memory, whereas learning operates on mental structures in long-term memory. In other words, learning takes place only if the content in long-term memory is transformed and results in an increase in expertise. If EFL learners are used to processing connected speech in working memory for immediate outcome without activating the phonological repertoire in long-term memory, their listening skills cannot be improved even after constant exposure to native English speech. As a result, when encountering novel connected speech without subtitles, subtitle-dependent EFL listeners are more likely to struggle in decoding the connected speech signal, though this claim has yet to be verified in future studies. The ingrained “performing without learning” situation is further reinforced by the use of (or reliance on) subtitles. Thus, it is important to prevent the development of this vicious circle within connected speech acquisition.

The Influence of Frequency on Connected Speech Perception

The current study examines perception of speech embedded with three types of connected speech processes (assimilation, elision, and juncture). When considering word frequency within the no-subtitles condition, the identification of high familiarity words was higher than those of low familiarity. In other words, these three connected speech processes are frequency-sensitive: The higher the frequency, the higher the accuracy, and vice versa. However, when subtitling was considered, the three types of connected speech processes display varying degrees of frequency-sensitivity when presented without subtitles. Yet, both assimilation and elision were found to be more sensitive to familiarity effects than juncture. One possible explanation for this finding is the perceptual differences between various connected speech processes. For assimilation and elision, it may be that the neighboring phonemes serve as cues which contribute to the identification of modified segments. This hypothesis is consistent with the view of probabilistic phonotactics (see Vitevitch & Luce, 1999). When viewed in conjunction with the theory that these processes are segmental “overlaps” with gradient variations (e.g., Browman & Goldstein, 1992), residual speech sounds between neighboring phonemes’ transitions may be perceived. If this is the case, the frequency effect will become more beneficial as listeners become more experienced. This is due to the shorter phonetic distance and less effortful lexical activation that this entails. In contrast, for juncture, word boundaries are ambiguous. For this process, non-native listeners tend to rely more on sentential and lexical context to aid segmentation as opposed to acoustic-phonetic clues (Altenberg, 2005; Mattys & Melhorn, 2007). As only non-contextual minimal pairs were used in the study, the stimuli lacked supporting top-down information. Therefore, participants relied on limited speech decoding abilities for word segmentation. For these cases, frequency would impose a minimal effect on juncture, as acoustic-phonetic cues may not be the major route utilized for comprehension. Furthermore, the phonetic distance may not be as short as that exhibited for assimilation and elision. These assumptions call for further research to investigate whether EFL learners adopt different speech-decoding strategies for different connected speech processes.

Last but not least, it should be noted that listening performances were not perfect even when accurate subtitles were provided. It is possible that some EFL learners may not be able to decode the subtitles because of inadequate reading skill. Although we did not measure reading ability in the current study, our speculation is partly supported by several previous studies showing a strong link between overall language proficiency and the efficiency of subtitle use during listening tasks in EFL learners (e.g., Lwo & Lin, 2012; Maleki & Rad, 2011). Still, further research is needed to verify the specific links between reading skills and connected speech processing skills.

Pedagogical Implications

In line with other studies examining connected speech in EFL learners, suboptimal connected speech comprehension skills were observed in the current sample. This result again pinpoints the need for connected speech listening training in EFL classrooms. As implied in the current study, using onscreen subtitles for educational purposes should be promoted but with greater caution. Pedagogically speaking, the ideal situation is that EFL learners continuously attempt to connect the content of the subtitles and audio when being exposed to them. This is key to ensuring that characteristics of English speech are learned, such as phonotactic properties, novel vocabulary, and grammar of English sounds (Brown, 2012). However, multimedia may only be used for entertainment purposes and the motivation to learn connected speech may not be consistently high across EFL learners. In reality, EFL learners may devote most of their attention to the subtitles and very little attention would be allocated to the audio input. Teachers are advised to remind students of both the positive and negative effects of subtitles on listening training. The focus of English listening comprehension should be placed on training students to comprehend native English connected speech without relying on subtitles. Thus, upon the use of subtitles (or other visual cues) to facilitate the learning of English lexical knowledge, teachers should gradually remove the visual cues so that students can comprehend English connected speech eventually in a subtitles-free listening environment. In addition, the significant familiarity effect obtained in the present study suggests that there is a need to consider this linguistic parameter when planning the listening curriculum. Teachers may design a variety of learning activities to consolidate the lexical familiarity of their students, so as to facilitate listening comprehension. It is interesting to note that assimilation was more sensitive to familiarity than elision. Teachers can tell students this characteristic explicitly and should be more alert to the familiarity effect when training different types of connected speech. Teachers should also choose captioned videos that are of appropriate level to the students

However, the familiarity effect should not be over-interpreted as having to pre-teach all difficult vocabulary/phrases from a text prior to the introduction of a listening task (see Liao & Yeldham, 2015). Even though this is believed to scaffold their comprehension and to heighten their awareness of new items in the text (e.g., Chai & Erlam, 2008), doing so removes the chance for learners to practice inferring the meaning of such items from the context. Hence, teachers should strike a balance between giving vocabulary input and nourishing students’ skills on drawing inferences from the speech.

Limitations and Future Research Directions

The first limitation concerns the number of items for each of the listening conditions. In order to create minimal pairs for the selected connected speech processes, the script was written with a focus on phrases with similar pronunciation. Such constraint, together with another parameter we manipulated (i.e., word familiarity) had limited the number of minimal pairs we could generate. Although the number of items could be increased, the effect size obtained from this study was large enough to substantiate our results. In future studies, the inclusion of additional items is recommended.

Another limitation is the presentation of subtitles during the test. The participants were provided with a list of minimal pairs and were requested to listen and choose to make the test procedure easier to understand. However, the option of force-choice questions may provide additional visual cues for participants as they were not required to provide the answers themselves. Given the provision of differential visual cues and the difference in response format, a direct comparison between the performances under the subtitled conditions and no-subtitles condition is deemed to be unfair. Future studies could therefore include a dictation test in the subtitled conditions.

Conclusion

The usefulness of subtitles for the immediate decoding of connected speech in a bi-modal listening setting is well documented in the literature. However, it is worth reconsidering the impact subtitles have on the acquisition of acoustic, phonetic, and phonological aspects of connected speech in the long run. Consistent with previous research on subtitles, subtitles are shown to be beneficial to listening comprehension in the current study. Hence, we do not advocate to abandon them, especially as EFL learners were found to favor the use of subtitles in their daily lives (Dallas et al., 2016). Still, EFL learners are reminded to equip themselves to become visual-aid-free competent listeners. Moreover, instructors or self-taught learners should be mindful about the exact function of subtitles for various listening tasks and be able to shift their focus back and forth between audio and visual information.

Footnotes

Acknowledgements

We would like to thank all the participants.

Data Availability

The data that support the findings of this study are available from the corresponding author (S.W.L.W.), upon reasonable request.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This study was funded by Early Career Scheme of the Research Grants Council (RGC) of Hong Kong (ECS 846212).