Abstract

The article discusses the incorporation of individuals’ assessments regarding the effect of an intervention program on themselves as a source of information in commonly used quantitative program evaluation methods. The incorporation of Self-Assessment Variables (SAV) into the evaluation process enables the researcher to utilize the information contained in SAV alongside other available sources of information (such as administrative data). The analysis is based on the assumption that individuals possess valuable and unique information which they employ before self-selection into a program. The theory of planned behavior is used as a framework for examining different aspects of integrating SAV into program evaluation. The article elaborates on the integration of SAV into the matching method and on the possible advantages of that approach. In addition, the article discusses different aspects of the process of eliciting SAV from individuals. Finally, the article outlines possible directions for future research.

Introduction

Public social intervention programs are a major policy tool used in many fields, such as economics, education, public health, and criminology. To engage in comprehensive policy planning, it is essential to evaluate the impact of these intervention programs on the participants. The fundamental difficulty encountered in quantitative evaluation of these intervention programs is the lack of information about the outcomes of individuals based on their participation status. Notably, information is lacking because there is no way of observing an individual as both a participant and a nonparticipant in the same intervention program at a given point in time. Heckman et al. (1999) and Imbens and Wooldridge (2009) reviewed this fundamental evaluation difficulty and a variety of empirical methods developed to cope with it, which rely on the use of experimental and nonexperimental data sets. The reviews demonstrate that no one method will always be optimal for achieving a reliable evaluation of the intervention programs.

This article analyses the use of individuals’ assessments regarding the effect of the program on their own outcomes as a source of information to alleviate the fundamental evaluation problem. The individuals’ self-assessments are relevant in any social intervention program designed to change certain aspects of the participants’ lives: for example, self-assessments of the unemployed about the impact of vocational training on their employment prospects, self-assessments of college students about the impact of a program designed to reduce binge drinking, or self-assessments of youth at risk about the impact of a program designed to reduce dropping out of school. The analysis examines the theoretical justification for incorporating Self-Assessment Variables (SAV) into program evaluations and the methodological implications of that approach. In the analysis, the use of SAV was based on the assumption that individuals possess valuable and unique information about the program’s impact on their own outcomes, and that they use this information to decide whether or not to enroll in the program, as described by Heckman (1997). The analysis also explores the use of the theory of planned behavior (TPB; Ajzen, 1991, 2012) as a framework for examining different aspects of integrating SAV into program evaluation. In the context of the use of mixed methods in program evaluation, the integration of SAV into a quantitative evaluation method allows the researcher to integrate the personal “story” of each individual into the evaluation process. Thus, the integration of SAV is complementary to the use of mixed methods in program evaluation. (The reader is referred to Burch and Heinrich (2015) regarding the use of mixed methods in program evaluation.)

Usually, researchers who use quantitative methods to evaluate the effect of intervention programs do not integrate SAV as a source of information. SAV is unique because it refers to the impact of the intervention program on the individuals, whose estimation is the goal of the evaluation process itself. Moreover, SAV is the outcome of the assessment by individuals of the program’s effect on themselves. The uniqueness of SAV has implications for its elicitation, its integration into the estimation model, and the interpretation of the evaluation results.

SAV can be incorporated into a variety of estimation methods. However, due to methodological considerations, the present analysis focuses on integrating SAV into the matching method. To conduct comprehensive empirical research on the contribution of SAV to program evaluation, it is necessary to have an exceptionally rich and carefully designed data set. The article lists the necessary characteristics of the data set and deals extensively with various aspects of the process of eliciting SAV from individuals. Furthermore, the article outlines possible directions and topics for future research on the use of SAV in program evaluation.

SAV as a Source of Information

In 1997, James Heckman defined a research environment in which the effect of intervention programs is heterogeneous and in which “individuals possess and act on private information about gains from the program that cannot be fully predicted by variables in the outcome equation” (Heckman, 1997, in the Abstract). Four assumptions establish the prevalence of Heckman’s Research Environment (HRE; Eyal, 2010):

A1. The impact of the intervention program is heterogeneous.

A2. Individuals have an assessment about the expected impact of the program on themselves.

A3. The self-assessments of individuals are based on valuable information. At least some of that information is unique (i.e., not available to the researcher).

A4. Individuals take the information at their disposal into account when deciding whether or not to enroll in the program.

The prevalence of HRE in a given research environment justifies the integration of SAV into program evaluation. If individuals possess valuable and unique information about the impact of an intervention program and if they use that information when they decide whether to enroll in a program, SAV will be a useful source of information for estimating the program’s effect.

The TPB offers another perspective regarding the use of SAV in program evaluation. For a description of the theory, see Ajzen (1991, 2012, 2018). Figure 1 (Ajzen, 2018) depicts the TPB. According to the TPB, the intention to act (e.g., to participate in an intervention program) is influenced by attitude toward the behavior, subjective norm, and perceived behavioral control. Attitude toward the behavior refers to the individual’s evaluation of the behavior as favorable or unfavorable; subjective norm refers to perceived social pressure to engage in the behavior or refrain from engaging in it; and perceived behavioral control refers to the individual’s perceived ability to act. According to the TPB, the actual behavior of an individual is a function of intention and actual behavioral control. The determinants of intention—that is, attitude, subjective norm, and perceived behavioral control—are, respectively, based on beliefs about the probability that the behavior will lead to specified outcomes (behavioral beliefs), beliefs about the normative expectations of significant others (normative beliefs), and beliefs about the presence of factors that may affect the performance of behavior (control beliefs). The attitudes, subjective norms, and perceived behavioral control are conceptually independent, but still empirically interrelated. Armitage and Conner (2001) conducted a meta-analysis of research that used the TPB and found that the TPB accounted for 39% and 27% of the variance in intention and behavior, respectively. A number of factors that may affect the predictability of the TPB regarding the future behavior of individuals are reviewed by Ajzen and Dasgupta (2015).

Figure 1. The theory of planned behavior.

HRE and the TPB are related in that SAV is a behavioral belief about the probability that participating in an intervention program will lead to a specified outcome. According to the TPB, SAV will affect the individual’s attitudes toward the program, which in turn will affect the individual’s intentions and ultimately the probability of participation in the program. In terms of A2, A3, and A4 (the three behavioral assumptions), the TPB refers to assumptions A2 and A4, that is, it refers to the assumptions that individuals have an assessment about the expected effect of the program on themselves, and that they use this assessment when deciding whether to enroll in a program. However, the TPB has no bearing on the prevalence of A3, that is, the assumption that SAV contains valuable and unique information about the program outcome (Ajzen, 2011, 2012). Thus, TPB itself cannot justify the use of SAV in program evaluation.

The following discussion of SAV as a source of information for program evaluation uses the two potential outcomes model (Roy, 1951), where Y1 and Y0 represent the outcomes of participants and nonparticipants in the program, respectively. The subscript i, which denotes individuals, has been omitted to simplify the expressions. XR=k,I=k are individual and aggregate variables; R (Researcher) and I (Individual) indicate whether the specified variables are observed (k = 1) or not observed (k = 0) by the researcher or by the individual, respectively. Thus, the variables XR=1,I=1 are observed by the individual as well as by the researcher (e.g., gender), the variables XR=1,I=0 are observed only by the researcher (e.g., aggregate variables such as unemployment rate), and the variables XR=0,I=1 are observed only by the individual (e.g., SAT scores). Neither the researcher nor the individual observes the last group of variables XR=0,I=0 (e.g., local demand for a specified vocation such as computer programmers). The classification of a specified variable by the above categories might change according to the specific research environment. For example, SAT scores may be available to the researcher in one research environment but not in another.

T is a binary variable: 1/0 for participation/nonparticipation in the program, respectively.

U0, U1, UT are errors, and α0, α1, β are the coefficients of the model.

The following parametric estimation model is used by the researcher:
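A form consistent with the definitions above can be written as follows. The linear specification and the threshold-crossing participation rule are assumptions of this sketch; only the symbols Y0, Y1, T, α0, α1, β, U0, U1, and UT are taken from the text.

```latex
\begin{align}
Y_0 &= \alpha_0 \,\bigl(X_{R=1,I=1},\, X_{R=1,I=0}\bigr) + U_0 \tag{1}\\
Y_1 &= \alpha_1 \,\bigl(X_{R=1,I=1},\, X_{R=1,I=0}\bigr) + U_1 \tag{2}\\
T   &= \mathbf{1}\bigl\{\beta \,\bigl(X_{R=1,I=1},\, X_{R=1,I=0}\bigr) + U_T > 0\bigr\} \tag{3}
\end{align}
```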

The first two equations (Equations 1 and 2) refer to the two potential outcomes Y0 and Y1, respectively. Naturally, only the variables observed by the researcher are used (XR=1,I=1, XR=1,I=0). The third equation refers to the selection process that determines who will actually participate in the program and whether Y0 or Y1 will be observed by the researcher for a specific individual. The errors (U0, U1, UT) refer either to unobserved variables (i.e., not observed by the researcher) or to measurement errors (Marschak, 1953).

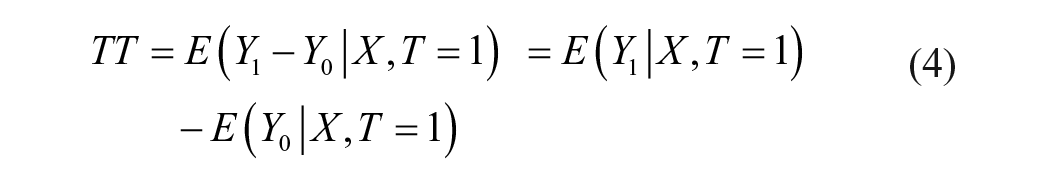

The treatment effect on the treated (TT) is a commonly used parameter for measuring the treatment effect:
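In standard notation, and in line with the verbal definition that follows, the parameter can be written as:

```latex
\begin{equation}
TT(X) = E(Y_1 - Y_0 \mid X, T = 1)
      = E(Y_1 \mid X, T = 1) - E(Y_0 \mid X, T = 1) \tag{4}
\end{equation}
```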

where X represents conditioning variables, and TT is defined as the difference between the observed outcome (Y1) that the participants (T = 1) attain in the program and the counterfactual outcome (Y0) that they would have attained had they not enrolled in the program. The lack of information needed to identify the effect of the intervention program stems from the fundamental inability to observe the counterfactual outcome for the participants (Y0| X, T = 1).

The following equation describes how individuals derive SAV:
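Using the notation SAV(spJ, XR=1,I=1, XR=0,I=1) that appears later in the article, the relation can plausibly be written as below; the exact layout of the original display is an assumption.

```latex
\begin{equation}
SAV_J = SAV\bigl(sp_J,\; X_{R=1,I=1},\; X_{R=0,I=1}\bigr) \tag{5}
\end{equation}
```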

where J = 1, 0 for participation or nonparticipation in the program, respectively.

For example, SAV1 and SAV0 may refer to the individuals’ self-assessments of their earnings after they have either participated or not participated in a vocational training program. In this case, ∆ SAV = SAV1 − SAV0 denotes the individuals’ calculated assessments of the program’s effect on their future earnings based on SAV1 and SAV0. The process of deriving SAVJ will usually vary depending on the specific process (spJ) and on the specific data (XR=1,I=1, XR=0, I=1) used by each individual. For example, Dominitz et al. (2003) found that some individuals may simply rely on the opinions of acquaintances when formulating their expectations about receiving social security benefits.

Both the individuals and the researcher(s) have an interest in estimating the effect of the program. Individuals are in an advantageous position in that they possess a broader set of data, at least regarding issues related to personal abilities, possibilities, and plans (XR=0,I=1). Furthermore, individuals can choose the most appropriate assessment process for themselves (Equation 5), whereas researchers encounter an inherent difficulty in their attempt to construct a uniform quantitative model for the entire population (Equations 1 and 2). However, researchers possess theoretical and methodological knowledge, which may facilitate successful estimation of the program’s effect. Moreover, researchers have access to information (e.g., panel data) that is not available to individuals.

To alleviate the fundamental difficulty caused by the lack of information needed for program evaluations, researchers can utilize SAV as capsules of information that are elicited directly from individuals. As such, even though the researchers do not have full knowledge about the process or about the specific data that individuals use to derive SAV (Equation 5), these variables can still be a useful source of information.

The Value and Uniqueness of Self-Assessment Variables: Empirical Findings From the Literature

Unfortunately, literature on the use of SAV in program evaluation is scarce. Furthermore, it is limited in that it examines SAV as a criterion for evaluating the program effect, as a possible substitute for conventional estimation methods (experimental or nonexperimental). In contrast, the present analysis seeks a way to integrate SAV into the conventional estimation methods as a supplementary source of information to improve their performance. The currently available empirical studies in the literature use an experimental data set to estimate the “real” program effect as a benchmark and compare it with the program effect derived directly from SAV. These studies are important in that they examine the cognitive ability of individuals to make meaningful assessments of the program’s effect on themselves. It should be noted, though, that this cognitive ability has no direct bearing on the usefulness of SAV as a source of information in program evaluation. For example, SAV may be accurate but still not informative, given other information (variables) available in the program estimation model; conversely, it may be informative though inaccurate.

The comparison of SAV to program impact yielded mixed results. Heckman and Smith (1998) and Smith et al. (2013) used the JTPA (U.S. Job Training Partnership Act) experimental data set to compare SAV to the impact of the JTPA on the participants’ outcomes in the labor market. The authors did not find evidence of a consistent relationship between the participants’ self-assessments and the estimations of program outcomes. Smith et al. noted that these findings should be interpreted with caution because the participants did not base their assessments on a well-posed question.

Mueller et al. (2014) and Mueller and Gaus (2015, 2018) conducted a series of studies of short-term interventions (surfing at an Internet portal, watching a televised documentary or an educational video) whose objectives were to bring about a change in a certain aspect of the participants’ behavior. Mueller et al. (2014) used experimental data on an intervention that aimed to change the motivation of consumers to engage in climate-friendly behavior. Six of the 12 intention variables that were examined using the participants’ self-assessments yielded an estimated treatment effect that was comparable to the one yielded by the experimental data. It was also found that gender and age were related to the precision of the participants’ self-assessments. A similar research design was used by Mueller and Gaus (2015) to examine an intervention that dealt with consumption of organic food. The authors examined the program impact on intentions and attitudes and on self-reported behavior. SAV was found to be comparatively reliable regarding intentions and attitudes, but the results were inconclusive in regard to self-reported behavior. Mueller and Gaus (2018) studied an intervention that informed the participants about organ donation and encouraged them to get an organ donor card. The study used a series of randomized controlled trials (RCTs) to create an experimental data set to explore the accuracy of SAV under different conditions. The examined conditions were individual characteristics (education level), the examined outcome variables (attitudes vs. knowledge), and the way the data were collected (the placement of the rating of SAV relative to the rating of the current situation in the questionnaire). The study results indicated that SAV is a reliable indicator of program impact. These results were unaffected by changes in the examined conditions.

The Integration of SAV in Program Evaluation

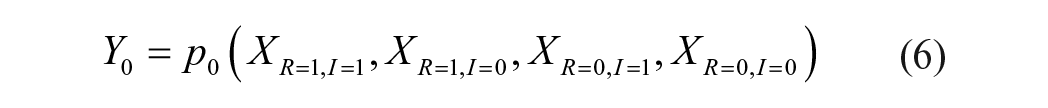

Although the prevalence of HRE implies that SAV would be a useful source of information in the evaluation process, it has no bearing on the causation between SAV and the potential outcomes (Y0, Y1). To clarify this point, Freedman’s (2006) approach was adopted for the Neyman–Rubin–Holland model (Holland, 1986; Neyman, 1923; Rubin, 1974). For a translation into English and discussion of Neyman (1923), see Splawa-Neyman et al. (1990). According to this model, to establish the causality of SAV, it is necessary to examine whether manipulation of SAV alone is related to a change in Y0/Y1. To further explore the causality of SAV, Equations 6 and 7 describe how Y0 and Y1 are determined:
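Given the discussion that follows, in which p0 and p1 together with all four groups of variables fully determine the potential outcomes, the two relations can be sketched as below. Treating p0 and p1 as unrestricted functions is an assumption of this sketch.

```latex
\begin{align}
Y_0 &= p_0\bigl(X_{R=1,I=1},\, X_{R=1,I=0},\, X_{R=0,I=1},\, X_{R=0,I=0}\bigr) \tag{6}\\
Y_1 &= p_1\bigl(X_{R=1,I=1},\, X_{R=1,I=0},\, X_{R=0,I=1},\, X_{R=0,I=0}\bigr) \tag{7}
\end{align}
```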

Had we known p0, p1, and the values of XR=1,I=1, XR=1,I=0, XR=0,I=1, and XR=0,I=0, we could have fully predicted Y0/Y1 for each individual. Because a change in XR=1,I=1, XR=1,I=0, XR=0,I=1, or XR=0,I=0 included in Equations 6 and 7 will affect Y0 or Y1, these variables have a causal effect on the individual’s potential outcomes. In the framework of HRE, a change in SAVJ alone either will or will not affect Y0/Y1, depending on the specific research environment. If SAVJ does not affect Y0/Y1, it must not be included either in XR=1,I=1 or in XR=0,I=1 in Equations 6 and 7. In that case,

However, according to A3 (the assumption that SAV contains valuable and unique information), SAVJ is at least partially based on XR=1,I=1 and XR=0,I=1, which are included in Equations 6 and 7 and have a causal relationship with Y0/Y1. Hence, HRE implies an associational inference between SAVJ and Y0/Y1 (Holland, 1986). Yet, a possible path that creates causality between SAVJ and Y0/Y1 is suggested by the TPB. That possibility is based on the assumption that behavioral beliefs will not only affect the probability of program participation but will also affect the probability of behaviors that affect the participants’ outcomes. For example, high expectations of a vocational training program (high behavioral belief) will lead to a positive attitude, high intention, and finally to high prevalence of behaviors that improve the participants’ outcomes in the labor market (Y1). These kinds of behaviors will be evident during the training program itself (e.g., completing homework assignments, attendance in classes) and also at the program’s end (e.g., intensive job search in the field of training).

If a causal relationship between SAVJ and Y0/Y1 prevails, it will strengthen the value of SAV as a source of information for program evaluation. In any case, the researcher may include SAV in the estimation model to obtain better predictions of Y0 and Y1:

s0, s1—coefficients of SAV.

The inclusion of SAV1 to estimate Y0 in Equation 1′ and SAV0 to estimate Y1 in Equation 2′ is due to the possibility that both SAV0 and SAV1 will affect the probability of engaging in behaviors that may affect Y0 and/or Y1. Based on Equation 5, it is assumed that SAV0 and SAV1 correlate with XR=0,I=1, which is included in the error terms of Equations 1′ and 2′. In that case, SAV0 and SAV1 may add valuable and unique information to the estimation process. However, it is assumed that SAV0 and SAV1 correlate with XR=1,I=1 as well (Equation 5). Because XR=1,I=1 is already used in the estimation, the correlation between these variables and SAV0/SAV1 may bias the estimates of α0 and α1. Either way, as mentioned, caution should be exercised when interpreting the relationship between SAV0 /SAV1 and Y0/Y1 in terms of causality.

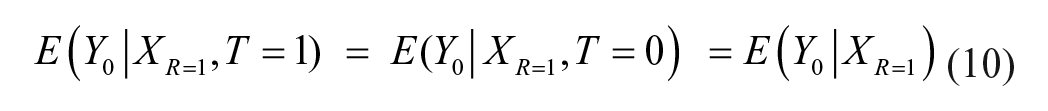

The integration of SAV into the nonparametric matching method is an appealing option for using SAV in the evaluation process, which circumvents the difficulties in interpreting the outcomes of the parametric estimation model. According to this method, each participant in the program is matched with one or more nonparticipants who have identical or similar observed characteristics to attain a balanced group for comparison with the treated individuals. The matching method is based on the conditional independence assumption (CIA):
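In the notation the article uses later for the same condition, with XR=1 denoting all variables observed by the researcher, the assumption reads:

```latex
\begin{equation}
(Y_0, Y_1) \perp T \mid X_{R=1} \tag{9}
\end{equation}
```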

If Equation 9 holds, then given XR=1, the individual’s outcomes are independent of participation or nonparticipation in the program. In this case,
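The implication most relevant for what follows, stated here in the form the article later equates with the CIA, is that the untreated outcome has the same conditional mean in both groups (the exact content of the original display is assumed):

```latex
\begin{equation}
E(Y_0 \mid X_{R=1}, T = 1) = E(Y_0 \mid X_{R=1}, T = 0) \tag{10}
\end{equation}
```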

In light of the need to find individuals in the untreated group who match each individual in the treated group, the treated and untreated groups must have common support:
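A common way to state this requirement, offered here as an assumed form rather than the article's exact expression, is in terms of the participation probability:

```latex
\begin{equation}
0 < P(T = 1 \mid X_{R=1}) < 1 \tag{11}
\end{equation}
```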

In practice, instead of matching the variables observed by the researcher (XR=1), the matching procedure can be reduced to one dimension by matching the propensity score, which is defined as P(T=1| XR=1) (Rosenbaum & Rubin, 1983).

Given Equations 9 and 11, it is possible to estimate TT by comparing the outcomes of the treated group with those of the matched comparison group:
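Consistent with the two conditional means named in the next paragraph, the comparison can be written as:

```latex
\begin{equation}
TT = E(Y_1 \mid X_{R=1}, T = 1) - E(Y_0 \mid X_{R=1}, T = 0) \tag{12}
\end{equation}
```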

Because both E(Y1 | XR=1, T = 1) and E(Y0 | XR=1, T = 0) can be directly estimated by means of the treated and matched comparison groups, TT can be identified.
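The identification argument above can be illustrated with a small simulation. The following is a minimal sketch, not the procedure of any cited study: the data-generating process, the function name `tt_exact_matching`, and all parameter values are hypothetical, and the single discrete covariate `x` stands in for XR=1. Selection depends only on `x`, so the CIA holds by construction.

```python
import random
from collections import defaultdict


def tt_exact_matching(y, t, x):
    """Estimate TT by exact matching on a discrete covariate x.

    Within each value of x, the mean outcome of the treated is compared with
    the mean outcome of the untreated; cells are weighted by their number of
    treated units.
    """
    cells = defaultdict(lambda: {1: [], 0: []})
    for yi, ti, xi in zip(y, t, x):
        cells[xi][ti].append(yi)
    total, n_treated = 0.0, 0
    for groups in cells.values():
        treated, control = groups[1], groups[0]
        if not treated or not control:
            continue  # cell lies outside the common support
        gap = sum(treated) / len(treated) - sum(control) / len(control)
        total += len(treated) * gap
        n_treated += len(treated)
    return total / n_treated


rng = random.Random(0)
n = 20_000
x = [rng.randrange(3) for _ in range(n)]                # observed covariate
t = [int(rng.random() < 0.2 + 0.2 * xi) for xi in x]    # selection on x only
y0 = [xi + rng.gauss(0, 1) for xi in x]                 # untreated outcome
y1 = [y0i + 1.0 + 0.5 * xi for y0i, xi in zip(y0, x)]   # heterogeneous effect
y = [y1i if ti else y0i for y1i, y0i, ti in zip(y1, y0, t)]  # observed outcome

# The true TT is computable only because the simulation reveals both outcomes.
true_tt = sum(a - b for a, b, ti in zip(y1, y0, t) if ti) / sum(t)
tt_hat = tt_exact_matching(y, t, x)
print(f"true TT = {true_tt:.3f}, matching estimate = {tt_hat:.3f}")
```

Because participation here is driven only by the matched covariate, the matching estimate should lie close to the true TT; the discussion that follows concerns what happens when that condition fails.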

The main advantage of the matching method is that it does not impose any structural constraints on the potential outcomes (Y0/Y1). In addition, the matching method is intuitively appealing, making it relatively easy for policy makers to interpret and utilize the evaluation outcomes. Nevertheless, the CIA is not a trivial precondition, and it holds in two situations (Heckman et al., 1997):

a. There is no individual or institutional selection into the program based on potential outcomes.

b. There are no unobserved variables (by the researcher, XR=0, I=1 or XR=0,I=0) that affect selection into the program as well as potential outcomes (Y0/Y1).

Assumption (a) is not consistent with Roy’s (1951) model and is implausible in most, if not all, relevant research environments; and assumption (b) requires a rich data set, which includes all the variables that affect selection into the program as well as the potential outcomes (Y0/Y1). The question regarding the actual prevalence of the CIA is an empirical one, and the answer may vary depending on the specific research environment. For a discussion on the use of the matching method, including the prevalence of the CIA and the data required to use that method, see Caliendo et al. (2017), Cook et al. (2008), Dehejia and Wahba (1999), Heckman et al. (1997, 1998), Lechner and Wunsch (2013), and Smith and Todd (2005). All these researchers except Cook et al. dealt exclusively with the evaluation of active labor market programs.

The main weakness of the matching method lies in its inherent inability to cope with selection into the program deriving from unobserved variables that also affect the program outcome. This selection process contravenes the CIA. Because SAV carries part of that unobserved information, incorporating SAV into the data set used for the evaluation may reduce the estimation bias. Equation 13 presents the estimation bias in the matching method without incorporating SAV:
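Following the usual definition of selection bias in matching, and consistent with the condition under which the article says this bias vanishes, the expression can be sketched as below (the sign convention is an assumption):

```latex
\begin{equation}
B_{Match}(X_{R=1}) = E(Y_0 \mid X_{R=1}, T = 1) - E(Y_0 \mid X_{R=1}, T = 0) \tag{13}
\end{equation}
```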

If E(Y0 | XR=1, T = 0) = E(Y0 | XR=1, T = 1), or in other words, if the CIA holds, then BMatch(XR=1)= 0. Nevertheless, given XR=1, XR=0,I=1, and XR=0,I=0 and assuming that p0 and p1 are identical for all the individuals, the CIA holds, that is, (Y0, Y1) ⊥ T | XR=1, XR=0,I=1, XR=0,I=0 (see Equations 6 and 7). Thus,

Unfortunately, researchers have no access to XR=0,I=1 or to XR=0,I=0. Yet, if HRE prevails, the researcher may use SAV(spJ, XR=1,I=1, XR=0,I=1) as an additional source of information, which gives access, though indirectly, to the information contained in XR=0,I=1. In that case, the bias of the matching method will be
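By analogy with the bias defined without SAV, and under the same assumed sign convention, conditioning additionally on SAV0 and SAV1 gives:

```latex
\begin{equation}
B_{Match}(X_{R=1}, SAV_0, SAV_1)
  = E(Y_0 \mid X_{R=1}, SAV_0, SAV_1, T = 1)
  - E(Y_0 \mid X_{R=1}, SAV_0, SAV_1, T = 0) \tag{15}
\end{equation}
```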

If (Y0, Y1) ⊥ T | XR=1, SAV0, SAV1, the CIA holds and BMatch(XR=1, SAV0, SAV1) = 0. The actual effect of integrating SAV into the evaluation process on BMatch(XR=1, SAV0, SAV1) compared with BMatch(XR=1) depends on the specific research environment. In general, if a significant estimation bias remains, the researcher may employ an additional estimation method which uses the matched comparison group as a basis for further adjustments. For example, Ho et al. (2007) used parametric methods, and Heckman et al. (1997) used the “difference in difference” method.
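The comparison between the two bias terms can be made concrete with a simulation. This is a minimal sketch under loudly stated assumptions: the data-generating process, the helper `matching_bias`, and every parameter value are hypothetical. The variable `z` plays the role of XR=0,I=1, and `sav` is a deliberately inaccurate self-assessment (wrong 20% of the time) on which participation depends. Y0 is observed for everyone only because the data are simulated.

```python
import random
from collections import defaultdict


def matching_bias(y0, t, strata):
    """Treated-weighted average of E[Y0 | s, T=1] - E[Y0 | s, T=0]."""
    cells = defaultdict(lambda: {1: [], 0: []})
    for yi, ti, si in zip(y0, t, strata):
        cells[si][ti].append(yi)
    total, n_treated = 0.0, 0
    for groups in cells.values():
        treated, control = groups[1], groups[0]
        if not treated or not control:
            continue  # stratum lies outside the common support
        gap = sum(treated) / len(treated) - sum(control) / len(control)
        total += len(treated) * gap
        n_treated += len(treated)
    return total / n_treated


rng = random.Random(0)
n = 20_000
x = [rng.randrange(2) for _ in range(n)]   # observed by the researcher
z = [rng.randrange(2) for _ in range(n)]   # observed only by the individual
# Self-assessment: a noisy report of z, so it is informative but inaccurate.
sav = [zi if rng.random() < 0.8 else 1 - zi for zi in z]
# Participation depends on x and on the self-assessment (assumed rule).
t = [int(rng.random() < 0.2 + 0.1 * xi + 0.5 * si) for xi, si in zip(x, sav)]
# The untreated outcome depends on x and on the privately known z.
y0 = [1.0 + xi + 2.0 * zi + rng.gauss(0, 1) for xi, zi in zip(x, z)]

bias_x = matching_bias(y0, t, x)                          # match on x only
bias_x_sav = matching_bias(y0, t, list(zip(x, sav)))      # match on x and SAV
print(f"bias without SAV = {bias_x:.3f}, bias with SAV = {bias_x_sav:.3f}")
```

In this design participation is linked to `z` only through `sav`, so the CIA holds once SAV is added to the matching variables and the remaining bias reflects sampling noise alone. In an actual research environment the reduction would depend on how much of the individuals' private information SAV captures.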

Eliciting Self-Assessment Variables

As an output of a cognitive process, SAV must be elicited directly from the individuals themselves, including participants in the program as well as nonparticipants. Furthermore, SAV must be elicited from the participants before the intervention takes place. Notably, changes in the participants’ SAV are expected to occur during the program as they gather information and update SAV accordingly (Eyal, 2010). Thus, SAV elicited from participants after the intervention has begun is incomparable to the participants’ SAV before the intervention or to nonparticipants’ SAV. Moreover, SAV should be obtained by asking well-posed questions that measure a clearly defined, relevant aspect of the individual’s performance after the intervention has taken place. For example, a question such as “If you do not attend the vocational training program, how would you predict your chances of being employed a year from now?” would yield much more useful information than a question such as “If you do not attend the vocational training program, how would you predict your chances of being successful in the job market a year from now?” The scale of responses also needs to be constructed carefully. Notably, SAV may be based on a verbal scale (e.g., very high, high, neither high nor low, low, very low) or a quantitative scale (e.g., 0%–100%). Another concern is whether to add the don’t know option to the possible responses. The advantage of adding the don’t know option is that it enables interviewees who do not have an assessment (because they are either unable to make an assessment or unwilling to invest the effort in doing so) to give a precise answer to the question. Furthermore, the rate of respondents who choose that option may be applied toward the empirical examination of whether HRE prevails in the specific research environment (Eyal, 2010). 
The disadvantage of providing the don’t know option is that some of the respondents may use it to avoid the cognitive burden of making an assessment. Finally, to elicit valuable and unique self-assessments, interviewees must have a comprehensive picture of the relevant intervention program. In addition, they need the ability to fully comprehend the assessment question as well as the answers. Thus, it would be useful to provide the interviewees (participants and nonparticipants) with information about the program (e.g., the target population, the length, and the contents) before they are asked about their assessments. However, eliciting SAV from people with low literacy levels might be challenging, even when they have comprehensive information about the program. For a study on eliciting probabilistic assessments in developing countries, in which a significant portion of the population is illiterate, see Delavande (2014).

Discussion

The TPB and HRE as a Framework for Program Evaluation

The current analysis used the HRE and the TPB as a framework for examining the use of SAV in program evaluation. It is worth noting that the TPB framework may be beneficial for program evaluation in other ways as well. First, the TPB could be used to construct a model of self-selection into the program (Equation 3), as a component of the overall program evaluation. The ability to appropriately model the process of selection into the program is especially important when using a nonexperimental database (Burch & Heinrich, 2015). Still, the TPB focuses on predicting a specific possible behavior rather than a choice between several behavioral options (i.e., self-selection). Thus, on the face of it, according to the TPB, SAV1 alone should be considered when predicting the probability of attending a program, whereas SAV0, which refers to the option of not attending a program, should not be included. However, it is possible to adjust the model by adding other predictors (Ajzen, 2011). Furthermore, as mentioned above, attitudes toward the behavior may directly affect program outcome. Similarly, by the same rationale, subjective norms and perceived behavioral control may affect program outcome as well. It should be noted that findings in the literature support the notion that perceived behavioral control influences the amount of effort expended and the extent of perseverance in applying the intended behavior (Ajzen, 2012). In that case, the researcher may use attitudes, subjective norms, and perceived behavioral control to obtain better predictions of Y0 and Y1.

Finally, to conduct a reliable and useful program evaluation, it is important to portray the broad picture of the program and its mechanisms (Deaton, 2010; Deaton & Cartwright, 2018; Heckman & Smith, 1995; Kabeer, 2019; White, 2009). Thus, exploring the process of selection into the program and the relationship of this process to the individuals’ program outcomes in the framework of both HRE and TPB will enhance the evaluation and the usefulness of its outcomes for policy makers. In fact, the TPB is already being used as a basis for planning interventions aimed at changing behavior and is often used to gain insight into the mechanisms through which these programs affect (or do not affect) the participant’s relevant behavior. See, for example, the review by Hardeman et al. (2002) on the application of TPB in program planning and evaluation; Van Ryn and Vinokur (1992) on job search behavior; Elliott and Armitage (2009) and Rosenbloom et al. (2009) on road safety; Todd and Mullan (2011) on reducing binge drinking; Kothe et al. (2012) and Lv and Brown (2011) on eating habits; and Aarø et al. (2006), Schmiege et al. (2009), and Tyson et al. (2014) on promoting healthy sexual behavior. In a meta-analysis conducted by Sheeran et al. (2016), modifying attitudes, norms, or self-efficacy was shown to be effective in changing health behavior.

The Usefulness of SAV in a Specific Research Environment

Examination of the prevalence of HRE is essential in assessing the potential for using SAV in a specific research environment. As a first step, it will be useful to assess whether the assumption that HRE prevails is plausible. For example, if the program is mandatory, SAV will not affect selection into the program, contradicting A4 and implying that HRE does not prevail. One should also observe whether the participants have the knowledge and cognitive abilities required to make informative assessments (A3). If the assumption that HRE prevails is plausible, one can further follow the empirical method proposed by Eyal (2010), which examines each of the assumptions (A1–A4) to empirically establish the prevalence of HRE.

One of the key findings of the analysis is that the value of SAV as a source of information in program evaluation stems from its predictive power given XR=1, not from its accuracy (Equation 15). Notably, there are systematic and predictable cognitive biases in individuals’ assessments (Kahneman & Tversky, 1979; Tversky & Kahneman, 1974), which would make SAV inaccurate in many cases. When SAV is integrated with commonly used evaluation methods to complement other sources of information (i.e., other variables), researchers can utilize the information inherent in SAV even when SAV itself is biased. Juster (1966), for example, found that although the average assessments that individuals made regarding their purchasing probabilities were lower than the actual probabilities, their assessments were still a significant predictor of future purchasing. Dominitz (1998) and Eyal (2010) obtained similar findings regarding earning expectations and working in the field of training after vocational training, respectively.
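The distinction between accuracy and predictive power can be illustrated with a small simulation. The sketch below is a toy example and does not reproduce Equation 15; the data-generating process, the function names, and all numerical parameters are assumptions made purely for illustration. It generates assessments that systematically understate the true individual gain and then reports both the average bias and the correlation with the true gain.

```python
import random


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length lists."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / (var_x * var_y) ** 0.5


def simulate_biased_sav(n=5000, seed=0):
    """Return (mean bias, correlation) for a hypothetical biased SAV."""
    rng = random.Random(seed)
    # Assumed true individual gains from the program.
    true_gain = [rng.gauss(2.0, 1.0) for _ in range(n)]
    # Assumed elicitation process: assessments are compressed and shifted
    # downward (a systematic bias), with some idiosyncratic noise.
    sav = [0.5 * g - 1.0 + rng.gauss(0.0, 0.3) for g in true_gain]
    mean_bias = sum(s - g for s, g in zip(sav, true_gain)) / n
    return mean_bias, pearson(sav, true_gain)


if __name__ == "__main__":
    bias, corr = simulate_biased_sav()
    print(f"mean bias of SAV: {bias:.2f}")
    print(f"correlation of SAV with true gain: {corr:.2f}")
```

Under these assumed parameters the assessments are far from accurate on average, yet they remain strongly correlated with the true gain, which is the property that matters when SAV is used alongside other variables.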

Future Directions

To empirically examine the contribution of SAV as a source of information in the evaluation process, there is a need to conduct within-studies that rely on a combined experimental and nonexperimental data set. This data set should include a measure of SAV that relates to the treatment under examination and that is elicited appropriately. Applying nonexperimental methods with and without SAV, and comparing their results with those of the experimental estimation (the “real” program effect), allows for examination of the contribution of SAV as a source of information. For examples of the use of within-studies, see Cook et al. (2008), Dehejia and Wahba (1999), Heckman et al. (1997, 1998), Heckman and Hotz (1989), LaLonde (1986), Smith and Todd (2005), and Steiner and Wong (2018), who dealt with possible criteria for determining whether the experimental and nonexperimental outcomes correspond. For a comprehensive discussion of the design and implementation of within-studies, see Wong and Steiner (2018).

To obtain the data sets required for studies of this nature, necessary steps need to be taken in the early stages of program planning and data collection. Evaluations of behavior-changing interventions based on the TPB may provide the infrastructure necessary to collect data and conduct these studies. Another opportunity for data collection may arise when using mixed methods to evaluate the intervention program. If a survey among the program target population is carried out during the course of the mixed methods study, it will create an opportunity to elicit SAV for later use when estimating the program impact. The proposed approach is consistent with the concept underlying the use of mixed methods, namely collecting information from a variety of sources by using qualitative and quantitative methods and integrating it into the overall program evaluation. For more information on mixed methods in general, see Creswell et al. (2011), Fetters et al. (2013), Greene et al. (1989), and Pluye and Hong (2014). For the use of mixed methods in program evaluation, see Burch and Heinrich (2015).

When a variety of suitable data sets are available, they will be useful in mapping the settings and conditions under which SAV will contribute most substantially to program evaluation. One possible direction is to examine the usefulness of SAV in different areas of intervention (e.g., vocational training and treatment of drug abusers). Another possible direction is to explore the usefulness of SAV by various characteristics of the target population (e.g., age, education level, and cognitive abilities). A different direction would be to look at the impact of factors relating to the process of eliciting SAV (e.g., using verbal vs. quantitative scale assessments and using the option of don’t know).
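The logic of the within-study comparison discussed in this section can be sketched in code. The following is a simulated toy example, not an implementation of any of the cited studies. It assumes a constant program effect (`TAU`), a single binary observed covariate, an unobserved factor that drives both self-selection and outcomes, and a SAV that is a biased, noisy signal of that factor. Exact stratification stands in for the matching method, and all names and parameter values are hypothetical.

```python
import random
from collections import defaultdict
from statistics import fmean

TAU = 1.0  # assumed constant program effect in this toy world


def draw_population(n, rng):
    """Each person: observed x, unobserved u, and a biased, noisy SAV."""
    people = []
    for _ in range(n):
        x = rng.randint(0, 1)                      # observed covariate
        u = rng.gauss(0.0, 1.0)                    # unobserved by evaluator
        sav = 0.5 * u - 1.0 + rng.gauss(0.0, 0.3)  # assumed elicited SAV
        people.append((x, u, sav))
    return people


def outcome(x, u, d, rng):
    """Assumed outcome equation; u affects the outcome directly."""
    return 1.0 * x + 2.0 * u + TAU * d + rng.gauss(0.0, 1.0)


def experimental_estimate(people, rng):
    """Benchmark: difference in means under random assignment."""
    groups = ([], [])  # index 0 = control, index 1 = treated
    for x, u, _ in people:
        d = rng.randint(0, 1)
        groups[d].append(outcome(x, u, d, rng))
    return fmean(groups[1]) - fmean(groups[0])


def self_selected_sample(people, rng):
    """Nonexperimental data: participation depends on x and on u."""
    rows = []
    for x, u, sav in people:
        d = 1 if 0.5 * x + u + rng.gauss(0.0, 1.0) > 0 else 0
        rows.append((x, sav, d, outcome(x, u, d, rng)))
    return rows


def stratified_att(rows, use_sav, n_bins=20):
    """Stratify on x (and on SAV quantile bins if use_sav); average the
    within-cell treated-minus-control gaps, weighted by treated counts."""
    savs = sorted(r[1] for r in rows)
    cuts = [savs[len(savs) * k // n_bins] for k in range(1, n_bins)]
    cells = defaultdict(lambda: ([], []))
    for x, sav, d, y in rows:
        sav_bin = sum(sav > c for c in cuts) if use_sav else 0
        cells[(x, sav_bin)][d].append(y)
    num = den = 0.0
    for control, treated in cells.values():
        if control and treated:  # keep cells with common support only
            num += (fmean(treated) - fmean(control)) * len(treated)
            den += len(treated)
    return num / den


if __name__ == "__main__":
    rng = random.Random(1)
    people = draw_population(20000, rng)
    bench = experimental_estimate(people, rng)
    rows = self_selected_sample(people, rng)
    print(f"experimental benchmark:  {bench:.2f}")
    print(f"matching on x only:      {stratified_att(rows, False):.2f}")
    print(f"matching on x and SAV:   {stratified_att(rows, True):.2f}")
```

In this assumed setting, adding SAV to the stratification should move the nonexperimental estimate closer to the experimental benchmark without reaching it, because SAV is only a noisy proxy for the unobserved factor. With real data, the size and even the direction of this improvement is exactly what a within-study would need to establish.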

Another possible direction for future research is to explore the use of assessments made by people involved in the institutional selection process (e.g., caseworkers) regarding the effect of the program on the outcomes of individuals (participants and nonparticipants). The same rationale that justifies the use of SAV also justifies the use of Institutional Assessment Variables (IAV). The use of assessments made by the individuals themselves (i.e., SAV) as well as by the people involved in the institutional selection process (i.e., IAV) can provide researchers with a wide range of information possessed by all parties involved in the selection process.

Summary and Conclusion

HRE and TPB were used to explore the theoretical and methodological aspects of integrating SAV as a source of information in the evaluation of social intervention programs. The analysis focused on using the matching method to integrate SAV into the evaluation process to enable researchers to utilize the information contained in the self-assessments while utilizing other available sources of information (variables) as well. SAV may allow researchers to benefit from the advantages of the matching method while at least partially overcoming the inherent inability of that method to control for unobserved variables which affect both selection into the program and program outcomes.

To shed further light on the possible contribution of SAV to program evaluation, there is a need for unique data sets that enable a within-study design. The article described the required data sets and expanded on various issues that should be considered to elicit useful SAV. A variety of suitable data sets can be used to map the conditions under which SAV contributes most substantially to program evaluation, with emphasis on different fields of research and different target populations, as well as on the process of eliciting SAV. Information about the different aspects of employing SAV will provide a comprehensive view of the empirical value of SAV and the proper way to use it so that the full potential of SAV in program evaluation can be realized.

Footnotes

Acknowledgements

I would like to thank Andrew Clark, Carolyn Heinrich, and Jeffery Smith for commenting on earlier drafts of the paper. I would also like to thank Mimi Schneiderman for her tremendous help in editing the manuscript. Judy Dotan and my son, Omer, assisted with the editing as well. Last, but certainly not least, I would like to thank my wife Sara for reviewing the paper and for her help in editing and organizing it.

Author’s Note

The views expressed in this paper do not necessarily reflect those of Myers-JDC-Brookdale Institute. Any errors are my sole responsibility.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.