Abstract

This essay considers the History of Psychology (its interests and boundaries) using the data behind the Journal Impact Factor system. Advice is provided regarding what journals to follow, which broad frames to consider in presenting research findings, and where to publish the resulting studies to reach different audiences. The essay has also been written for those with only passing familiarity with its methods. It is therefore not necessary to be an expert in network analysis to engage in “virtual witnessing” while considering methods or results: Everything is clearly explained and carefully illustrated. A further consequence is that those who are new to the History of Psychology as a specialty, distinct from its subject matter, are introduced to the myriad historical perspectives within and related to psychology from the broadest possible vantage point. In addition to the cited references, a supplemental set of exemplary readings is provided, drawn from sources identified beyond the primary journals.

The history of psychology has two aspects: the content and the activity. The stuff and the doing. Textbooks are mostly full of stuff, and light on doing (Flis, 2016; Thomas, 2007). So, unfortunately, are most teachers. Most have never done any history at all.¹ As a result, these teachers cannot authentically guide their students toward their own doings (Barnes & Greer, 2014; Bhatt & Tonks, 2002; Brock & Harvey, 2015; Fuchs & Viney, 2002; Henderson, 2006). And thus, the growth of the specialty has been stunted by an overabundance of the wrong kind of fertilizer: the history of psychology, rather than the History of Psychology (see Barnes & Greer, 2016; Capshew, 2014).² This essay therefore attempts to redress the imbalance by using tools from the Digital Humanities to begin to describe the latter, and its doings, from a new perspective.

Defining the Doing

Specialists have discussed the doing of the History of Psychology at some length (e.g., Danziger, 1994, 2013; Furumoto, 1989, 2003; Teo, 2013a). But not all of these discussions have been straightforward or easy to follow (see commentary by, for example, Brock, 2014, 2017; Burman, 2017; Danziger, 1997, 1998; Green, 2016; Pettit & Davidson, 2014; Weidman, 2016). Fortunately, the issue can be simplified with a single observation. The doing is concentrated in three “primary” journals: The Journal of the History of the Behavioral Sciences, History of the Human Sciences (HHS), and History of Psychology (quoting Pickren, 2012, p. 25; see also Capshew, 2014, pp. 151-152, 171-172; Teo, 2013a, p. 843; Weidman, 2016, p. 248). These venues are where the History that has been done in Psychology is most often reported, when it is not reported directly in books, and thus we need only examine them to observe the evidence of its doing.

That is the goal here. The primary journals were used to “seed” a citation analysis (following Park & Leydesdorff, 2009). Taking this seeding as representative of the History of Psychology’s “center,” I then appealed to quantitative tools like network analysis to identify its “periphery” (following Danziger, 2006; Pickren, 2009; Teo, 2013b). In this way, it was possible to test the specialists’ intuition regarding the relative importance and position of those three journals specifically. I also looked beyond the journals to identify disciplinary boundaries and more distant frontiers, thereby describing some of the recent “institutional ecology” that gives contemporary History of Psychology its shape (following Star & Griesemer, 1989).

To take such an approach is not, of course, to “do” a history of the History of Psychology. What follows is something entirely different, taking advantage of resources and tools that enable a higher-level perspective. Whereas the approach typically used by specialist historians to do their work is micro-level “close reading” (usually of primary sources and often in archives of unpublished documents and correspondence), the methodological inspiration here is the macro-level “distant reading” that characterizes contemporary work in the Digital Humanities (following Moretti, 1994-2009/2013). The approach is thus consistent with importations recently made into Psychology generally (by, for example, Borsboom & Cramer, 2013; Burman, Green, & Shanker, 2015; Greenfield, 2013; but see also Pettit, 2016) and, more specifically, into the History of Psychology (by, for example, Burman, 2018; Green, Feinerer, & Burman, 2015a, 2015b; Pettit, Serykh, & Green, 2015).

That said, however, the goal here is somewhat different from what both Psychologists and Historians will expect. This is not a study of the content of the discipline, or of its trends. (The data to which I refer are much too limited for that.) Rather, it is a study of possibilities in presenting psychological knowledge in a particular domain.

The activity called the History of Psychology is therefore not represented here by what its members have said. Instead, I focus on the venues where this speech finds its audience. To the extent that these are closely tied to each other, and not to different outside areas, those venues can then in turn be understood to reflect the different discourses in which specialist authors are presently engaged: Different journals have different norms and standards, defended by different editorial teams who have been vetted in advance by disciplinary gatekeepers, reinforced by a variety of reviewers selected for their relevant expertise, and empowered by scholarly societies (as well as their publishers) that seek to reach particular audiences. And it’s the interests of these mostly invisible actors, ultimately, that I seek to identify (following Callon & Law, 1982; to provide an empirical answer to Richards, 1987: “of what is History of Psychology a history?”).

From Citations to Networks

Studies of a discipline’s literature typically focus on content and thereby articulate the what of a body of work. Or they focus on people, and so examine the who behind the what. Here, though, I have looked at the places where those people have published: the institutional black boxes to which articles are sent when specialist authors intend to contribute to their professional discipline. In other words, I have sought to identify the where (see Shapin, 1988-2007/2010). And, in this way, the what can be examined from a new angle.

In considering the citations that locate these wheres relative to each other, it is necessary to look in two directions: journals cited by articles published in the primary journals (outgoing) and journals publishing articles citing material from the primary journals (incoming). These relations then collectively define a “directed network” (see Newman, 2010, for a gentle but comprehensive introduction). And that in turn enables the application of a set of specific quantitative tools. The strengths of connections are thus empirically demonstrable, clusters identifiable, and importance calculable.

It was in this way that I modeled the recent History of Psychology as a collective or organized doing. This has been illustrated in network terms as the tight and coherent grouping of centrally interconnected parts: The doing’s center is represented by the journals that are highly cited by the group as a whole—and which cite each other frequently—even as they are separated from peripheral others that are cited less often. That focus on the journals is then also what affords the wide-angle look at the doing as a discipline: The interests I’ve illustrated are taken to be generally representative, including of the interests of those authors who publish their histories in books (because there is no reason, in principle, for the interests examined in-depth in books to be different from those discussed more superficially in journals).

The data come from a widely used third-party source: the Journal Citation Reports, Social Science edition (hereafter simply JCR), published at the time of writing by Thomson Reuters and subsequently acquired by Clarivate Analytics. In particular, I examined two different facets of this database: the journal-level citation summaries (in Studies 1 and 2) and the subject classifications (in Study 3). That in turn afforded certain strengths and weaknesses.

The data reported on here are identical with those behind the Journal Impact Factors (JIFs) that inform so many decisions in academia. As a result, I was able to take advantage of the controls implemented at the source to ensure that those metrics reflect real and substantive uses (see Hubbard & McVeigh, 2011). In other words, I treated the data as if the relations defined between journals were akin to meanings represented by a “controlled vocabulary” (following Burman et al., 2015). This is also what afforded my confidence in the results: Although JIFs are sometimes dismissed as a flawed measure of productivity or importance, their calculation requires access to carefully vetted citation data. And it is these data that I examined, not data gathered from the wild and filtered according to my own interpretation of what ought to count. (For criticisms of the use of JIFs in psychology, see Hegarty & Walton, 2012, and, more generally, Braun, 2012.)

To format the data for analysis, I used Excel. Then I constructed and analyzed the networks using Gephi (Bastian, Heymann, & Jacomy, 2009). Similar procedures can now also be performed directly in R (see Costantini et al., 2015). The effect, however, is the same: Relational data are gathered or reconstructed from an existing database, formatted in relational terms and uploaded into an analysis program, and then visualized and analyzed as networks. It’s these that are usually presented as results, interpreted—sometimes using other quantitative tools—and discussed. That was my approach here too.

In what follows, a single investigation is presented through three connected empirical studies. This allows the narrative to build simply and incrementally. The parts are then discussed collectively, with new challenges, questions, and opportunities identified in conclusion.

Study 1. The Discipline as a Network of Influences

The first study looked specifically, and solely, at citation patterns—outgoing and incoming—at the level of the journals themselves. To do this, I relied on citation data from the summary reports provided by the JCR for each of the 7 years for which data exist for all three of the primary journals (2009-2015 inclusive).

The purpose, in this first study, was to use the primary journals to identify the main influences on the discipline: not just where its doings are done, but also where its methods come from and where its results find their audiences. The discussion then demonstrates some of the power of network analysis, while highlighting a major pitfall into which the unwary traveler might easily stumble. The second study adds depth and detail, using the same data set, while focusing specifically on the doing of the History of Psychology. And the third study goes a step further, to address the question of what the History of Psychology is actually about: its ecology of interests, and how these can be grouped into more meaningful superordinate categories.

Method

To begin, I accepted as given (as a premise) that specialist-insiders recognize three journals as primary. That enabled me to use the citations within and to these journals as evidence of reach and influence. I took note of every journal cited by a primary journal and marked it in Excel as an outgoing citation. I did the same for every journal that cited a primary journal, marking each of these as an incoming citation. The relations thus defined have strengths quantifiable by the number of these journal-to-journal citations.

To construct the data set, I first created a series of spreadsheets. Each journal’s incoming journal-level citations are reported annually in the JCR across several pages, and the citation data from each of these reports were imported and merged into a single spreadsheet page. The result, in my own work product, was a commonplace booklet for each journal: one page per year, together covering all of the years considered.

From these booklets, I created summaries. The year-by-year details provided in the annual reports were collapsed into annual totals. I then consolidated those totals, so that the annual citation counts to each journal could be read horizontally across a single table: Each column gave the count for 1 year’s citations, and the sum along each row gave the total number of citations for all the years listed. Finally, using these consolidated workbooks, I created a single comma-separated values (CSV) file with just three columns: the originating journal (labeled “source”), the target journal (labeled “target”), and the total number of citations reported by the JCR over the full period of study (labeled “weight”).
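The consolidation above was done by hand in Excel. As a minimal sketch of the same bookkeeping, assuming the year-by-year figures have already been transcribed as (citing journal, cited journal, year, count) rows, the Python below collapses them into the three-column source/target/weight table and also keeps the number of years with nonzero citations. The rows and counts are hypothetical placeholders, not JCR data, and the function names are illustrative.

```python
import csv
import io
from collections import defaultdict

# Hypothetical transcribed rows: (citing journal, cited journal, year, count).
# These numbers are placeholders for illustration, not values from the JCR.
yearly_rows = [
    ("JHBS", "Isis", 2009, 12),
    ("JHBS", "Isis", 2010, 9),
    ("JHBS", "Isis", 2011, 0),
    ("HoP", "JHBS", 2009, 20),
    ("HoP", "JHBS", 2015, 18),
]


def consolidate(rows):
    """Sum counts per (source, target) pair and count the years with citations."""
    totals = defaultdict(int)
    years = defaultdict(set)
    for source, target, year, count in rows:
        totals[(source, target)] += count
        if count > 0:
            years[(source, target)].add(year)
    return dict(totals), {pair: len(ys) for pair, ys in years.items()}


def to_edge_csv(totals):
    """Render the edge table with the columns source, target, weight."""
    buffer = io.StringIO()
    writer = csv.writer(buffer, lineterminator="\n")
    writer.writerow(["source", "target", "weight"])
    for (source, target), weight in sorted(totals.items()):
        writer.writerow([source, target, weight])
    return buffer.getvalue()


totals, year_counts = consolidate(yearly_rows)
print(to_edge_csv(totals))
print(year_counts)
```

Keeping the year counts alongside the weights preserves the second number reported for each journal in the Results (citations and years with citations).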

The CSV file is the output from Excel, but it is the input for Gephi. I then imported this file directly into Gephi’s data laboratory as an “edge” table, allowing the software to automatically create the individual “nodes” for each of the identified journals. (Edges and nodes are the meat-and-potatoes of network analysis: the connections and the things-being-connected.) Note, however, that the directions of these edge tables are reversed relative to each other when considering inbound and outbound citations: Inbound citations have the citing journal as their source and the primary journal as their target, and outbound citations have the primary journal as their source and the cited journal as their target. This distinction is crucial, too, because Gephi will not receive the data properly otherwise.
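Both edge tables follow one convention (the source column cites the target column), so it can help to generate rows for either report from a single rule. The helper below is a hypothetical sketch, not part of Gephi. It is exercised with the HoP/JHBS count reported in the Results that follow, which appears once among HoP’s outbound citations and once among JHBS’s inbound citations.

```python
def edge_for(primary, other, direction, weight):
    """Return a (source, target, weight) row in which `source` cites `target`.

    direction is "outbound" when the primary journal cites the other journal,
    and "inbound" when the other journal cites the primary journal.
    """
    if direction == "outbound":
        return (primary, other, weight)
    if direction == "inbound":
        return (other, primary, weight)
    raise ValueError(f"unknown direction: {direction!r}")


# HoP citing JHBS, read from HoP's outbound report and from JHBS's inbound report.
from_hop_report = edge_for("HoP", "JHBS", "outbound", 137)
from_jhbs_report = edge_for("JHBS", "HoP", "inbound", 137)
print(from_hop_report, from_jhbs_report)
```

Whichever report a pair is read from, the row comes out the same, which is what keeps the edge directions consistent once both tables are imported.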

In the results that follow, I have reported two numbers for each journal: the citation counts and the number of years in which these citations were made over the study period. I did this because it was not initially obvious which of the two is more important for assessing the resulting network. Citation counts are the usual means of assessing productivity, and thus in this case present an obvious choice for defining strength of relation. But the consistency of citation could also be important for assessing connectedness between defining disciplinary features. And because that is what we are primarily interested in understanding, I chose to report both (with standard deviations calculated from citations but order-of-presentation influenced by consistency).

Results

Between 2009 and 2015, the three primary journals of the History of Psychology cited 357 different journals and were in turn cited by 247 different journals. In total, this reflects 5,245 outbound journal-to-journal citations and 2,257 inbound journal-to-journal citations. That said, however, each journal is also always its own biggest fan: self-citations, at the journal-to-journal level, are typical (and account for 557 of each of the two totals). But aside from suggesting that the authors themselves see a coherent discourse being presented at the journal level, and thus that a certain amount of self-citation is to be expected,³ these are not in themselves meaningful for our purposes. Journals are obviously related to themselves.

Outbound citations

Outbound citations are a measure of the value and esteem in which sources are held by members of a discipline. They therefore afford a sense of what the History of Psychology is about, according to those who define it by their activities, as well as how it’s done: cited sources reflect importations of both content and method (with no distinction).

Examining the means and standard deviations of the journal-to-journal citation counts to derive a basic guide (while also controlling for the different number of articles published by each of the three journals), we see that authors published in the Journal of the History of the Behavioral Sciences (JHBS) regularly cited the American Journal of Sociology (137 citations over the full 7 years of citations examined), American Psychologist (96 citations over 7 years), Isis (70 citations/7 years), American Sociological Review (69/7), History of Psychology (56/7), and the American Journal of Psychology (59/6). These venues were cited more frequently than two standard deviations above the mean number of journal-to-journal citations. Other consistently popular sources, cited between one and two standard deviations above the journal mean, included Psychological Review (50/7), HHS (24/6), American Economic Review (35/5), American Journal of Psychiatry (34/5), Social Studies of Science (25/5), American Political Science Review (45/4), and Social Forces (26/4).
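The tiers used in these lists (more than two standard deviations above a primary journal’s mean citation count, or between one and two) can be sketched as below, computed separately for each primary journal. The text does not say whether a population or a sample standard deviation was used; this sketch assumes the population form (`statistics.pstdev`), and the journal names and counts are hypothetical.

```python
from statistics import mean, pstdev


def tier_by_sd(counts):
    """Label each cited journal by how far its count sits above the mean."""
    values = list(counts.values())
    mu = mean(values)
    sd = pstdev(values)  # population SD: an assumption, not stated in the text
    tiers = {}
    for journal, n in counts.items():
        if n > mu + 2 * sd:
            tiers[journal] = "more than 2 SD above the mean"
        elif n > mu + sd:
            tiers[journal] = "1 to 2 SD above the mean"
        else:
            tiers[journal] = "below 1 SD"
    return tiers


# Hypothetical outbound counts from one primary journal to ten cited journals.
counts = {"Journal A": 60, "Journal B": 35}
counts.update({f"Journal {c}": 10 for c in "CDEFGHIJ"})
print(tier_by_sd(counts))
```

Here the mean is 17.5 and the standard deviation is about 16.0, so Journal A clears the two-SD line (about 49.5) and Journal B falls between one and two SDs.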

Authors published in HHS regularly cited JHBS (77 citations over 7 years), American Journal of Sociology (61 over 7), American Sociological Review (40/7), Isis (43/6), American Journal of Psychiatry (45/5), and Social Studies of Science (35/5). Other consistently popular sources included Theory & Psychology (34/7), American Psychologist (28/7), Theory, Culture, & Society (39/6), British Journal of Sociology (19/6), Science in Context (16/6), History of Psychiatry (28/5), Economy and Society (24/5), Sociology (22/5), Political Theory (14/5), BioSocieties (25/4), History of Science (23/4), Psychological Review (19/4), International Journal of Psychoanalysis (16/4), Archives of General Psychiatry (15/4), Social Science & Medicine (15/4), British Journal of Psychiatry (33/3), Sociological Review (27/3), Sociological Theory (21/3), Bulletin of the History of Medicine (17/3), Medical History (16/3), Journal of Child Psychology and Psychiatry (16/2), Journal of Consciousness Studies (15/2), and the Journal of the History of Biology (15/2).

Authors published in History of Psychology (HoP) regularly cited the Psychological Review (147/7), American Psychologist (139/7), JHBS (137/7), and the American Journal of Psychology (109/7). Other consistently popular sources included Theory & Psychology (49/7), Psychological Bulletin (47/7), HHS (36/7), American Journal of Psychiatry (32/5), Isis (30/5), Journal of Social Issues (28/5), the French-language journal Année Psychologique (60/4), and Psychology of Women Quarterly (31/3).

This then implies that, in addition to the three primary journals, scholars interested in the History of Psychology ought to consider following four other journals regularly: American Psychologist (263 citations/7 years), Psychological Review (216/6), Isis (143/6), and the American Journal of Psychiatry (111/5). Other key nonprimary journals worth considering on this basis, in the sense that they’re cited at significant levels by two of the primary journals, include the American Journal of Sociology (198/7), American Sociological Review (109/7), Theory & Psychology (83/7), American Journal of Psychology (168/6.5), and Social Studies of Science (60/5).
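The combined figures in this paragraph appear to be the sums of the per-journal counts and the means of the per-journal year totals listed above. For example, American Psychologist gives 96 + 28 + 139 = 263 citations over (7 + 7 + 7) / 3 = 7 years, and the American Journal of Psychology gives 59 + 109 = 168 over (6 + 7) / 2 = 6.5 years. That rule is my reading of the numbers rather than a stated procedure; the sketch below reproduces it using figures copied from the lists above.

```python
from statistics import mean

# (citations, years with citations) from each citing primary journal,
# copied from the outbound lists above.
outbound = {
    "American Psychologist": {"JHBS": (96, 7), "HHS": (28, 7), "HoP": (139, 7)},
    "Psychological Review": {"JHBS": (50, 7), "HHS": (19, 4), "HoP": (147, 7)},
    "American Journal of Psychology": {"JHBS": (59, 6), "HoP": (109, 7)},
}


def combine(per_primary):
    """Sum citation counts and average the year totals across primary journals."""
    counts = [n for n, _ in per_primary.values()]
    years = [y for _, y in per_primary.values()]
    return sum(counts), mean(years)


for journal, per_primary in outbound.items():
    total, avg_years = combine(per_primary)
    print(f"{journal}: {total}/{avg_years:g}")
```

Averaging the years, rather than summing them, keeps the second figure on the same 0 to 7 scale as the per-journal lists.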

Inbound citations

Inbound citations are a measure of the uses to which work produced by members of a discipline is being put. They therefore give a sense of what the History of Psychology is good for: citing sources reflect use and interest, and so provide a glimpse of different audiences.

Again using journal means and standard deviations as a guide, we see that JHBS’s inbound nonself citations came primarily from HoP (137 citations over the full 7 years of citations) and HHS (77 citations over 7 years). Less significant, but still noteworthy, are History of Psychiatry (37 over 6), Isis (28/6), and Theory & Psychology (32/5).

HHS’s inbound citations came primarily from HoP (36/7 years) and Theory & Psychology (36/7). I also found BioSocieties (30/6), JHBS (24/6), Sociology of Health & Illness (20/6), Social Studies of Science (13/4), Isis (16/3), Social Science & Medicine (14/3), and Theory, Culture, & Society (13/3).

HoP is the heaviest self-citer in the group: 217 citations of 574 inbound (almost 38%). This is double the rate in HHS (18.2%) and nearly double that of JHBS (21.7%). Indeed, the journal’s proclivity for self-reference skews the distribution of its citations so severely that no other journal rises to two standard deviations above the mean. Aside from itself, though, its main inbound sources are JHBS (46/7) and Theory & Psychology (27/6).

Again, we can look at overlap to identify the key journals to follow. This time, though, only two nonprimary journals are indicated: Theory & Psychology (95/6) and Isis (44/4).

Mutual citations

Even without looking at a network visualization, it’s clear from this that a small number of journals are both cited by and cite one or more of the primary journals at a significant enough level to consider them part of the “distant center” of the discipline. And only one is connected in both directions to all three: Theory & Psychology. But the question of how closely it’s related to them can only be answered quantitatively. We therefore turn to that examination next.

Discussion

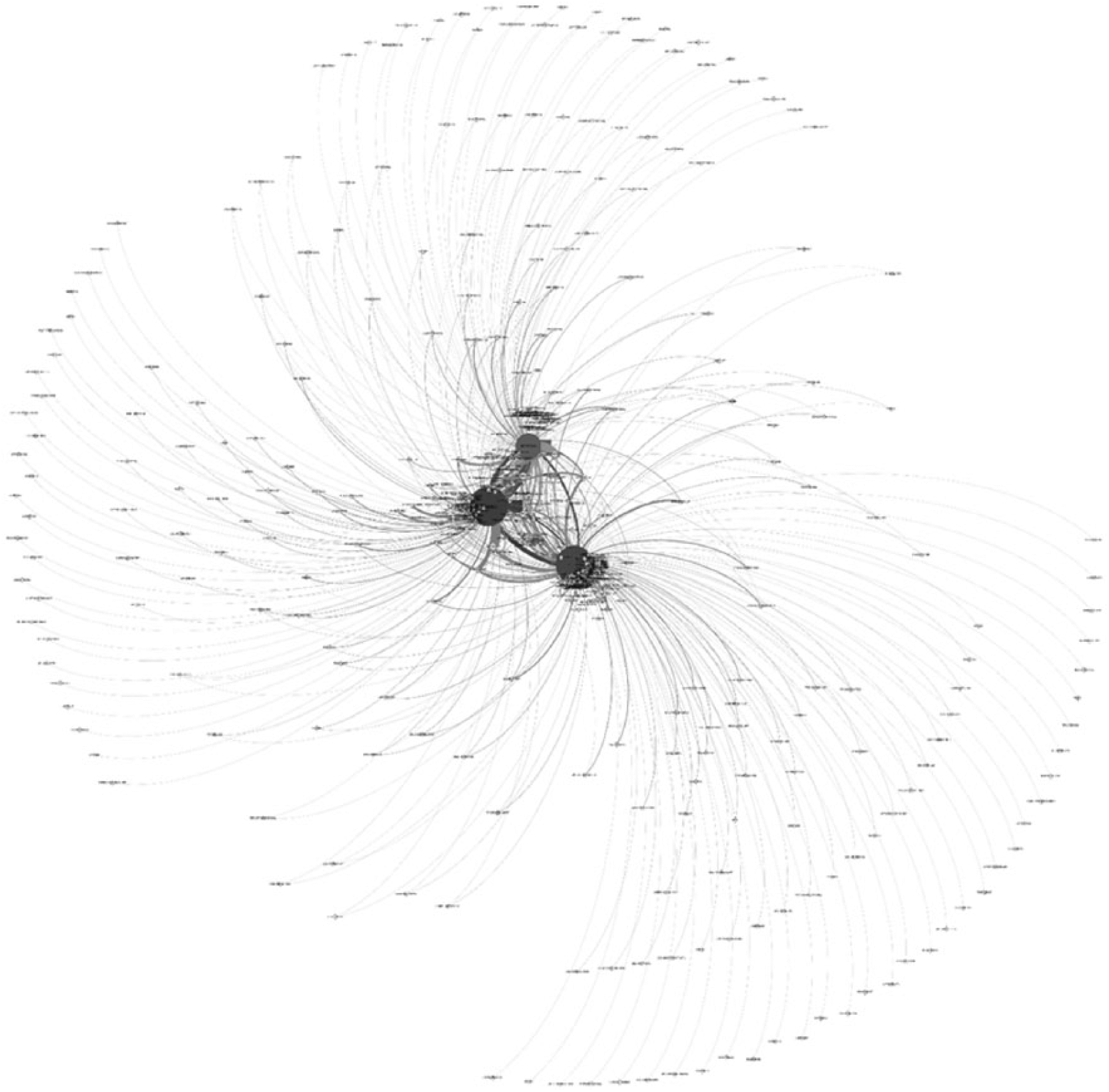

Two figures are presented to simplify these results, each illustrating one of the two aspects of the relational data reported in the JCR. Figure 1 presents a network using citation counts to set the strength of the connections between journals, and Figure 2 uses the number of years in which citations were made. Two technical elements are also illustrated: Node size is a function of PageRank in both figures, and shade is a function of Eigenvector Centrality.⁴ These are different measures of “importance” in the network (bigger and darker imply greater influence), and they are consistent here both with each other and between images. Although the lists above might therefore have been reordered slightly by reversing this focus, I calculated that the difference would have been insignificant.⁵

Figure 1. Network of citations.

Figure 2. Network of years in which citations were made.
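The importance measures in these figures were computed in Gephi. To show what eigenvector centrality rewards, here is a minimal power-iteration sketch in which a journal scores highly when it is cited by journals that themselves score highly. It is a generic textbook version, not Gephi’s implementation; the identity shift (starting each round from a node’s own score) is a choice made here so the iteration settles on small examples, and the four journals are hypothetical.

```python
def eigenvector_centrality(edges, iterations=200):
    """Score each node by the weighted scores of the nodes that cite it.

    edges: (source, target, weight) tuples in which source cites target.
    Scores are rescaled each round so the top node has a score of 1.
    """
    nodes = sorted({n for s, t, _ in edges for n in (s, t)})
    score = {n: 1.0 for n in nodes}
    for _ in range(iterations):
        # Start from each node's current score (an identity shift that keeps
        # the iteration from oscillating), then add weighted incoming scores.
        nxt = dict(score)
        for source, target, weight in edges:
            nxt[target] += weight * score[source]
        top = max(nxt.values())
        score = {n: v / top for n, v in nxt.items()}
    return score


# Hypothetical journals: A, B, and C cite one another; D cites A but is never cited.
edges = [
    ("B", "A", 2), ("C", "A", 2),
    ("A", "B", 1), ("C", "B", 1),
    ("A", "C", 1), ("B", "C", 1),
    ("D", "A", 1),
]
scores = eigenvector_centrality(edges)
print({n: round(v, 3) for n, v in scores.items()})
```

Journal D cites an important journal but is cited by no one, so its score decays toward zero: on this measure, importance flows only through incoming citations.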

My preferred layout algorithm is ForceAtlas2 (Jacomy, Venturini, Heymann, & Bastian, 2014). This is a force-directed 2D spatial organizer that takes advantage of the multithreaded processing of modern computers, and thereby reduces the time required to produce an accurate and intuitively useful layout. Its primary weakness is also shared by its competitors: The resulting illustration is a projection of a multidimensional object onto a two-dimensional surface, so positions are often underdetermined for weakly connected nodes (the map could have multiple configurations) or even misleading (unconnected nodes that would appear distant in three dimensions are sometimes shown close to each other in two dimensions). Indeed, that very thing has happened between Figures 1 and 2: Weakly connected nodes vary widely in position along the outer edges of the network, even while strongly connected central nodes move very little. For this reason, the output from such analyses cannot simply be accepted as shown (cf. Burman, 2018).⁶

The result that matters most for our purposes, however, is straightforward: These analyses suggest that this doing is influenced primarily by 12 key journals. Again, the ordering is slightly different depending on the metric used, but following standard deviations provides a useful guide: HHS and JHBS are further than two standard deviations above the mean on all four centrality metrics, and HoP is further than one standard deviation above the mean for both measures of Eigenvector Centrality but not for PageRanks. The other nine journals are then all positioned at or above average importance for the discipline, but by less than HoP.

Still, from my perspective, PageRank is the better metric given my intent: It is very widely used, its calculations reflect a recursive process in which global connectedness plays an important role, and its outcome always sums to 1 across a data set (enabling the simple rescaling of subsets). It then follows that the most important nonprimary journal for specialist Historians of Psychology, in terms of its overall impact (but not its JIF), is the nonprimary journal with the highest PageRank score: American Journal of Sociology. However, this journal’s influence on the discipline is only a little greater than the others’. Indeed, all nine are well within one standard deviation of HoP on the global metric of influence (PageRank of citations). Thus, I suggest that the key nonprimary influences ought to be considered collectively. They are American Journal of Sociology, American Psychologist, Isis, American Sociological Review, Psychological Review, American Journal of Psychiatry, American Journal of Psychology, Social Studies of Science, and Theory & Psychology.⁷
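The property that makes PageRank convenient here, scores that always sum to 1, falls out of how it is computed. Below is a minimal weighted PageRank by power iteration. It assumes a damping factor of 0.85 (a common default) and spreads the score of any journal with no outgoing citations evenly across all journals; Gephi’s own implementation may differ in these details, and the journals are hypothetical.

```python
def pagerank(edges, damping=0.85, iterations=100):
    """Weighted PageRank for (source, target, weight) edges, source citing target."""
    nodes = sorted({n for s, t, _ in edges for n in (s, t)})
    n = len(nodes)
    out_weight = {v: 0.0 for v in nodes}
    for source, _, weight in edges:
        out_weight[source] += weight
    rank = {v: 1.0 / n for v in nodes}
    for _ in range(iterations):
        # Score held by journals that cite nothing is redistributed evenly,
        # so no probability mass is lost between rounds.
        dangling = sum(rank[v] for v in nodes if out_weight[v] == 0)
        nxt = {v: (1 - damping) / n + damping * dangling / n for v in nodes}
        for source, target, weight in edges:
            # Each journal passes its score along in proportion to edge weight.
            nxt[target] += damping * rank[source] * weight / out_weight[source]
        rank = nxt
    return rank


# Hypothetical journals: A, B, and C cite one another; D cites A but is never cited.
edges = [
    ("B", "A", 2), ("C", "A", 2),
    ("A", "B", 1), ("C", "B", 1),
    ("A", "C", 1), ("B", "C", 1),
    ("D", "A", 1),
]
ranks = pagerank(edges)
print({v: round(r, 4) for v, r in ranks.items()})
print(round(sum(ranks.values()), 10))
```

Because every round hands out exactly the score it received, the total stays at 1, so any subset of journals can be compared by its share of that total.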

Yet it is curious that HoP, a primary journal, is by this analysis itself insignificantly different from the next-closest near-peripheral journals. This could perhaps be a side effect of its relative youth. (It was founded in 1998 and first received a JIF in 2009.) Yet it could also be a side effect of the way the data themselves were treated before the textual summary above was visualized: Using a network to illustrate relational lists derived using standard deviations is easy to understand, but it is also potentially misleading when it comes to calculating the strengths of the underlying relations. For this reason, Study 2 considers the influence of all of the connected journals prior to the use of any filters. But the easiest way to make sense of this requires a brief digression.

Study 2. Close Friends and Distant Acquaintances

In life, we all routinely make a distinction between close friends and distant acquaintances. Yet no one would deny knowing somebody on the basis of the application of a statistical tool. Furthermore, in mathematical sociology, the statistically insignificant relations omitted from Study 1 are considered the primary means by which information flows through a network. Thus, in Study 2, my intent is to examine the full “strength of [the] weak ties” that bind the discipline together.

This expression, the strength of weak ties, is due to Mark Granovetter (1973, 1983).⁸ He argues that the power of network analysis is that it enables the synthesis of micro- and macro-level perspectives. This is also a perennial problem in the History of Science (see Galison, 2008). And, indeed, methods similar to those used here are now being used by Historians of Psychology to address it (e.g., Green et al., 2015a, 2015b; Pettit et al., 2015). That said, however, one of Granovetter’s other insights is more germane to our particular interests: namely, the degree of overlap between two networks is directly proportional to the strength of their connection. In other words, to reach new audiences, specialist Historians of Psychology ought to target friendly journals that are nonetheless distant in the disciplinary network from the center and from each other. Of course, doing this requires that we know where the internal boundaries are. That’s what we examine in Studies 2 and 3.

In presenting these concepts, Granovetter (1973) distinguished between ties of different relational strengths: “strong, weak, or absent” (p. 1361). In his case, this reflects the quality of a relationship between two people. Thus, a strongly connected pair can be called friends; a weakly connected pair, acquaintances.⁹ To operationalize these definitions in a way that was useful for the purposes of this study, I further defined the first two types of connection as involving mutual citation of greater or lesser strength and the last as involving a one-way citation that may nonetheless be influential. That then enabled the reuse of the data set from Study 1, which this time was not pretreated in any way prior to the network analysis. A filter was instead applied from within the analysis program to focus specifically on journals with mutual citations and thereby identify the network-of-doings.

Method

The initial import of data from Excel into Gephi produces a disorganized cloud, rather than a recognizable network. Nodes are distributed randomly in conceptual space, attached by their edges but otherwise without identifiable shape or form. Although analyses can still be performed on the underlying relations, it is helpful to use the software’s visualization tools as an interpretive guide. This serves as a check against error and as an aid to understanding.

When using the ForceAtlas2 layout algorithm, it is often useful to increase the separation between nodes and provide some additional spacing between clusters. For this reason, I like to turn on “Dissuade hubs.” This has the effect of pushing noncentral subnetworks toward the outer edges of the network, and reserves the center for the primary network.¹⁰ The “expansion” layout algorithm is also both useful and straightforward in its effects: It increases the distances between nodes by changing the scale of the network. (No substantive changes are made to the underlying geometry.)

Labels can be attached to nodes by selecting the Nodes table, in the data laboratory screen, and copying the ID column into the Label column. In the overview screen, the “show node labels” toggle must then be turned on. Font size will also inevitably need to be altered in order for labels to be legible.

In the visualizations prepared for Study 1, node size was set as a function of PageRank. This was done again in Study 2, so that the size of each node reflects its position in the overall network. In the statistics panel, this calculation is performed for a Directed Network with “Use edge weight” turned on. The results are then reflected in the visualization using the Appearance panel: node size must be changed using the PageRank attribute. I usually select a range of 10 to 100, but only so that there is a useful amount of visual difference between the smallest node and the largest.

For Study 1, node color was set as a function of Eigenvector Centrality. The same thing has been done again. The resulting images were then converted to grayscale for publication because only the relative brightness is meaningful here.
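Gephi’s Appearance panel handles the mapping from a statistic to node size and shade. To illustrate what such a mapping involves, the sketch below assumes a simple linear interpolation. PageRank is mapped onto the 10 to 100 size range mentioned above, and eigenvector centrality onto a gray level in which higher scores come out darker (0 is black, 255 is white). The linear form, the gray scale, and the example values are assumptions for illustration, not Gephi settings.

```python
def rescale(values, low, high):
    """Linearly map each value onto [low, high]; equal values get the midpoint."""
    vmin, vmax = min(values.values()), max(values.values())
    if vmax == vmin:
        return {k: (low + high) / 2 for k in values}
    span = vmax - vmin
    return {k: low + (v - vmin) * (high - low) / span for k, v in values.items()}


def node_sizes(pagerank):
    """Map PageRank scores onto node sizes between 10 and 100."""
    return rescale(pagerank, 10, 100)


def gray_levels(eigenvector):
    """Map centrality onto 0-255 gray, darker meaning more central."""
    return {k: round(255 - g) for k, g in rescale(eigenvector, 0, 255).items()}


sizes = node_sizes({"A": 0.40, "B": 0.25, "C": 0.10})
shades = gray_levels({"A": 1.0, "B": 0.4, "C": 0.0})
print(sizes, shades)
```

Only relative position matters under this kind of mapping, which is consistent with converting the images to grayscale: the brightest and darkest nodes mark the ends of the range, not absolute values.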

After making these various changes, it is useful to reapply the layout algorithm(s). Afterward, I applied the Expansion algorithm until I was happy with the way the resulting network looked. (It should be easy to read when zoomed-in.) The unfiltered results of this process are shown in Figure 3, which are obviously uninterpretable at this scale except for one feature: There are three large circles at the center of the network and they are highly interconnected. These are, unsurprisingly, the primary journals.

Figure 3. The collective, organized doing called History of Psychology according to its journal-to-journal citations (2009-2015).

Results

This network takes into account both the inbound and outbound citations provided by the JCR. The consequence is that 494 journals are represented, accounting for 7,502 citations. (The journal total is smaller than the 604 obtained by adding Study 1’s 357 cited and 247 citing journals because of overlap: About one fifth of the journals identified have citations going in both directions.) This then represents the entirety of the History of Psychology, for the years studied, insofar as its doings can be represented by examining journal-to-journal citations (and where the data from all of the represented journals are controlled by the JIF system).
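The overlap follows from counting each journal once. If 357 journals were cited by the primary journals, 247 cited them, and the combined network has 494 nodes, then by inclusion-exclusion 357 + 247 − 494 = 110 journals appear on both lists, about 22% of the total. A minimal sketch of the same bookkeeping on sets of journal names follows; the short lists in the example are illustrative, not the actual JCR lists.

```python
def combined_nodes(cited, citing):
    """Return the union of both journal lists and the journals on both lists."""
    cited, citing = set(cited), set(citing)
    return cited | citing, cited & citing


def implied_overlap(n_cited, n_citing, n_combined):
    """Inclusion-exclusion: |A and B| = |A| + |B| - |A or B|."""
    return n_cited + n_citing - n_combined


# Illustrative lists only; not the journals actually reported by the JCR.
union, both = combined_nodes(
    cited=["Isis", "Psychological Review", "Theory & Psychology"],
    citing=["Isis", "Theory & Psychology", "BioSocieties"],
)
print(len(union), sorted(both))

overlap = implied_overlap(357, 247, 494)
print(overlap, round(overlap / 494, 2))
```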

Taking this full network into account, the specialists’ intuition that there are three primary journals is confirmed: JHBS’s PageRank score is 14.6 standard deviations above the mean, HHS’s is 13.9σ above the mean, and HoP’s 8.9σ. No other journal scores higher than two standard deviations above the mean on this global measure of influence, although both the American Journal of Sociology and American Psychologist do score higher than one standard deviation above the mean. But note too that this is also an “influence network,” and what is needed for our purposes is more akin to a “social network.”

To make this shift requires the elimination of Granovetter’s (1973) “absent” ties. I did this by applying a “mutual degree” filter, set to hide all of the journals that do not have citations in both directions. In other words, all of the journals without both inbound and outbound connections to the primary journals are omitted from Figure 4. That then highlights the journals where most of the History of Psychology is done in its broadest interpretation: The journals shown are those that both cite the primary journals and which are cited by the primary journals.

The History of Psychology’s journals, from center to near-periphery (2009-2015), according to mutual citation (mutual degree ≥ 1).

From this perspective, the discipline’s social network includes 97 journals (20% of the total number identified in the influence network), which account for 4,830 citations (64.4% of the previous total). Beyond them lie ties in one direction or the other, but, following Granovetter’s typology, these connections are unimportant given our goal. Even so, we can err on the side of inclusiveness: The highlighted journals can be understood to represent the History of Psychology from its center to its periphery. Not everything that could be included has been, of course, in part because journals without JIFs have been omitted (and books are absent too). Moreover, even journals on the near-periphery can be fairly distant: some are more “historical” than they are History, others are merely contextual, and still others publish only commentaries or book reviews discussing historical or contextual interests.
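As a quick check, both proportions follow from the counts reported for the full influence network (494 journals, 7,502 citations):

```latex
\frac{97}{494} \approx 0.196 \approx 20\%,
\qquad
\frac{4{,}830}{7{,}502} \approx 0.644 = 64.4\%
```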

Discussion

Changing the parameters of the mutual degree filter makes it possible to determine the discipline’s internal boundaries quickly. This is another measure of centrality, and it can be understood to reflect the strength of a journal’s shared connection to the three primary journals.

As a function of method, the primary journals alone are visible above 4 mutual degrees: They are cited by all three of the primary journals, plus at least one other, and they have links going in both directions. 11 This in turn affords several levels of proximity, using Granovetter’s (1973) typology, in treating the History of Psychology as a discipline: primary (>4 degrees), strong (2-3), weak (1), and absent (<1). To make a potentially useful distinction, I have split the strong group in two: we can refer to the 3-degree journals as the discipline’s “outer center” and the 2-degree journals as its “near periphery.”
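The resulting typology amounts to a simple mapping from mutual degree to proximity level, sketched below with a hypothetical helper. The text gives the primary level as >4. Because a nonprimary journal can have at most three mutual ties (one with each primary journal), the sketch groups a degree of exactly 4 with the primary level; that boundary choice is an assumption and affects no nonprimary journal.

```python
def proximity_level(mutual_degree: int) -> str:
    """Map a journal's mutual degree to the proximity levels in the text.

    Assumption: 4 is grouped with 'primary' (the text gives >4); nonprimary
    journals cannot exceed 3 mutual ties, so no "friend" is affected.
    """
    if mutual_degree >= 4:
        return "primary"
    if mutual_degree == 3:
        return "strong: outer center"
    if mutual_degree == 2:
        return "strong: near periphery"
    if mutual_degree == 1:
        return "weak"
    return "absent"


if __name__ == "__main__":
    for d in (5, 3, 2, 1, 0):
        print(d, proximity_level(d))
```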

The full range of associated journals, the discipline’s strong and weak ties, is shown in Figure 4. But because networks can be difficult to interpret in close-up detail, I will proceed through the different layers in order of their PageRank scores. In the supplemental bibliography, I have also provided examples of articles that struck me as especially interesting or relevant. For these, I set an arbitrary cutoff of 1975, and I used the number of citations since publication as a guide in choosing among them. I did, however, allow a slight bias toward more recent and highly relevant works, because older high-citation articles are easier to find in a normal search (leading in turn to a self-reinforcing Matthew Effect; see Merton, 1968). Collectively, these found sources illustrate the kinds of readings to be found outside the easily discoverable material published in the primary journals. 12

The discipline’s best friends, or outer-central nonprimary journals by greatest mutual degree, include several surprises. But the top two are to be expected from the preceding analyses: American Psychologist (e.g., Baker & Benjamin, 2000; Capshew, 1992; Furumoto & Scarborough, 1986; Harris, 1979; O’Donnell, 1979; Shields, 1975) and Isis (e.g., Carson, 1993; Dehue, 1997; Fancher, 1983; Green, 2010; Morawski, 1986; Todes, 1997). These are followed more distantly by History of Psychiatry (e.g., Engstrom & Weber, 2007; Mayes & Rafalovich, 2007; Scull, 1991; Sierra & Berrios, 1997), Science in Context (e.g., Curtis, 2011; Hayward, 2001; Rose, 1992; Schaffer, 1988), the British Journal for the History of Science (e.g., Canales, 2001; Cohen-Cole, 2007; Richards, 1987; Vicedo, 2011), the journal formerly known as The Pavlovian Journal of Biological Science and recently rebranded as Integrative Psychological and Behavioral Science (e.g., Suomi, van der Horst, & van der Veer, 2008; Toomela, 2007; Van der Veer & Yasnitsky, 2011; Zittoun, Gillespie, & Cornish, 2009), and The Psychologist (the British Psychological Society’s professional trade journal, which regularly features a column entitled “Looking back”). These references, and the others below, are included in the supplemental bibliography.

The discipline’s cohort of strong friends, in the near-periphery, is led by the American Journal of Psychology (e.g., Dehue, 2001; Vaughn-Blount, Rutherford, Baker, & Johnson, 2009; Winston, 1990; Winston & Blais, 1996). This is followed more distantly by Social Studies of Science (e.g., Bowker, 1993; Solovey, 2001; Viner, 1999), Theory & Psychology (e.g., Danziger, 1994; Michell, 2000; Richards, 2002), the French-language journal Année Psychologique (e.g., Nicolas, 1994, 2000; Vermès, 1996), Journal of Social Issues (e.g., Capshew & Laszlo, 1986; Finison, 1986; Torre & Fine, 2011), International Journal of Psychoanalysis (e.g., Angelini, 2008; Gyimesi, 2015; Wallerstein, 2009), Medical History (e.g., Jansson, 2011; Scull, 2011; Sommer, 2011), Bulletin of the History of Medicine (e.g., Casper, 2008; Grob, 1998; Mazumdar, 1996), Osiris (e.g., Dror, Hitzer, Laukötter, & León-Sanz, 2016; Nersessian, 1995; Rutherford, 2015), American Historical Review (e.g., Pettit, 2013; Sokal, 1984; Steedman, 2001), Social Science & Medicine (e.g., Crossley, 1998; O’Connor & Joffe, 2013; Väänänen, Anttila, Turtiainen, & Varje, 2012), Child Development (e.g., Jordan, 1985; also Burman et al., 2015, as an importation of history-derived methods for general use; but most recent works are more similar to Gelman, Manczak, Was, & Noles, 2016; Kiang, Tseng, & Yip, 2016), International Journal of Psychology (e.g., Aleksandrova-Howell, Abramson, & Craig, 2012; Oyama, Sato, & Suzuki, 2001; Sinha, 1994), History of Political Economy (e.g., Moscati, 2007; Pooley & Solovey, 2010; Sent, 2004), New Ideas in Psychology (e.g., Bunge, 1990; Goertzen & Smythe, 2010; Michell, 2013; Suppe, 1981), British Journal of Social Psychology (e.g., Danziger, 1983; Farr, 1983; Rudmin, Trimpop, Kryl, & Boski, 1987), and Feminism & Psychology (e.g., Lafrance & McKenzie-Mohr, 2013; Lazard, Boratav, & Clegg, 2016; Tavris, 1993).

Other journals identified by PageRank for their influence on the History of Psychology, but marked only as acquaintances with merely mutual recognition (weak friends, and thus part of the discipline’s far-periphery), are led by Psychological Bulletin (e.g., Rucci & Tweney, 1980; Wagemans et al., 2012). There are many other journals in this group too, of course, but the list quickly becomes unwieldy; merely naming them would push this essay over the journal’s strict word limit. (Note that they are all still visible in Figure 4, and that an example from each has been included in the supplemental bibliography.)

It’s clear from this examination that a wide variety of interests is reflected: more, in fact, than any individual could ever hope to engage with. Indeed, it doesn’t quite seem as though a unitary discipline is reflected; the boundaries of the History of Psychology appear to be quite porous. But what of specific topical interests? To specify more clearly what this collective is doing, separately and together, it would be useful to examine how the journals group together.

Study 3. From Friends to Interests

The usual way to group the members of a network is to conduct a “modularity analysis.” This detects higher order clusters: groups of nodes that are more densely connected to each other than to the rest of the network. In this case, however, the usual way won’t achieve the desired goal: We are interested less in the emergent properties of the network than in how its different parts connect to categories that have known meanings external to the network. Those connections are the focus of Study 3.
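For readers new to the technique, the quantity a modularity analysis maximizes can be computed directly. The sketch below scores a given partition using the standard (Newman) modularity, Q = Σ_c [L_c/m − (d_c/2m)²], where m is the total edge weight, L_c is the weight of edges inside community c, and d_c is the summed weighted degree of c's members. Gephi's Modularity statistic searches for a high-scoring partition (using the Louvain method); this sketch only evaluates a partition it is given, treats edges as undirected, and uses hypothetical names and toy data.

```python
from collections import defaultdict
from typing import Dict, Tuple

Edge = Tuple[str, str]


def modularity(edges: Dict[Edge, float], community: Dict[str, int]) -> float:
    """Newman modularity of a partition of an undirected, weighted graph.

    Each undirected edge should appear exactly once in `edges`.
    """
    m = sum(edges.values())
    internal = defaultdict(float)  # L_c: edge weight inside community c
    degree = defaultdict(float)    # d_c: summed weighted degree of c's nodes
    for (u, v), w in edges.items():
        degree[community[u]] += w
        degree[community[v]] += w
        if community[u] == community[v]:
            internal[community[u]] += w
    return sum(internal[c] / m - (degree[c] / (2 * m)) ** 2 for c in degree)


if __name__ == "__main__":
    # Two triangles joined by a single bridge (toy data).
    edges = {("a", "b"): 1, ("b", "c"): 1, ("c", "a"): 1,
             ("d", "e"): 1, ("e", "f"): 1, ("f", "d"): 1, ("c", "d"): 1}
    split = {"a": 0, "b": 0, "c": 0, "d": 1, "e": 1, "f": 1}
    print(round(modularity(edges, split), 4))  # 0.3571
```

Placing every node in one community always scores zero, so positive values indicate grouping beyond what the degree distribution alone would produce.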

To gain access to these external meanings, I added a new layer to the data set. The resulting examination takes advantage of all of the citation data gathered for Study 1 and used in Study 2, but it also incorporates the topical categories provided in the JCR. I then used their meanings to provide a way to answer the big question that inspired this project: “What is History of Psychology?”

Method

Every JCR entry for each journal from Study 1 (those cited by a primary journal, or which cite a primary journal) was reexamined from a new perspective. In all cases, the JCR provided at least one category associating the journal with a topical interest. For example, the three primary journals all share one category, “History of social sciences,” even as HoP is also categorized as “Psychology, multidisciplinary” and HHS as “History & philosophy of science.”

Constructing the new data layer was simply a matter of creating a new spreadsheet from the JCR, with one row for each journal-category pair. The choice of direction was also important, because it makes a single filter sufficient: The journal was set as the source and the category as the target. No weight column is required, as Gephi assigns a weight of 1 by default. Because these unit weights are small relative to the citation counts on the journal-to-journal edges, the categories have only negligible effects on network geometry, while still allowing the extant associations to be examined.
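The new layer amounts to an edge list with one journal-to-category row per assignment. A minimal sketch using Python's standard `csv` module follows. The “Source” and “Target” headers are assumed to match what Gephi's spreadsheet importer expects, which is worth checking against the Gephi version in use. The journal-to-category examples are taken from the preceding paragraph; category names containing commas are quoted automatically.

```python
import csv
import io
from typing import Dict, List


def category_edge_list(categories: Dict[str, List[str]]) -> str:
    """Write journal -> category edges as CSV text with Source/Target headers.

    No Weight column is written, so each edge keeps the importer's default weight.
    """
    buffer = io.StringIO()
    writer = csv.writer(buffer, lineterminator="\n")
    writer.writerow(["Source", "Target"])
    for journal in sorted(categories):
        for category in categories[journal]:
            writer.writerow([journal, category])  # journal is source, category is target
    return buffer.getvalue()


if __name__ == "__main__":
    jcr_categories = {
        "HoP": ["History of social sciences", "Psychology, multidisciplinary"],
        "HHS": ["History of social sciences", "History & philosophy of science"],
    }
    print(category_edge_list(jcr_categories), end="")
```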

With these new data added, I reapplied the layout algorithms and recalculated the PageRanks. I also performed a new modularity analysis and color-coded the nodes by cluster membership. 13 To do this, I selected “color” in the Appearance panel and assigned “modularity class” as the attribute.

To focus on the categories rather than the journals, I used a new filter: “indegree.” Setting it to 4 or greater eliminated all of the nonprimary journals from the visualization. (As a function of method, even the most important among the “friends” can receive only three inbound links: one from each of the primary journals.) What remained were the primary journals and the associated categories, color-coded by the group memberships provided by the modularity analysis. Because importance had already been calculated using PageRank, these scores can also be normalized within this subgroup (without recalculating on the filtered geometry) and given as percentages.
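The filter-then-normalize step can be sketched as follows: keep only nodes with an indegree of at least 4, then rescale their existing PageRank scores so that the visible nodes sum to 100%, without rerunning PageRank on the filtered graph. The function name and toy values are hypothetical, and every node is assumed to already have a score from the unfiltered network.

```python
from typing import Dict, Set, Tuple

Edge = Tuple[str, str]  # (source, target)


def visible_shares(edges: Set[Edge], pagerank: Dict[str, float],
                   min_indegree: int = 4) -> Dict[str, float]:
    """Percent share of PageRank among nodes passing an indegree filter.

    Scores come from the unfiltered network; they are only rescaled here.
    """
    indegree = dict.fromkeys(pagerank, 0)
    for _, target in edges:
        indegree[target] += 1  # assumes every target has a PageRank score
    visible = [node for node, d in indegree.items() if d >= min_indegree]
    total = sum(pagerank[node] for node in visible)
    return {node: 100 * pagerank[node] / total for node in visible}


if __name__ == "__main__":
    journals = ["J1", "J2", "J3", "J4"]
    edges = {(j, "Category A") for j in journals} | {(j, "Primary P") for j in journals}
    edges.add(("J1", "Category B"))  # indegree 1: filtered out
    scores = {"J1": 0.05, "J2": 0.05, "J3": 0.05, "J4": 0.05,
              "Primary P": 0.1, "Category A": 0.3, "Category B": 0.1}
    print(visible_shares(edges, scores))  # Primary P ~25%, Category A ~75%
```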

Results

After filtering, only 45 nodes are visible (see Figure 5). Three of these are the primary journals, and the remaining 42 are categories: what the History of Psychology is mainly about. Each category is associated with one of three calculated clusters, and each cluster attaches to a journal according to the strength of the underlying relations. The cluster analysis is discussed separately below, building on the results that follow.

Categories of interest (in-degree ≥ 4), with color-coding according to unfiltered modularity analysis.

These analyses suggest that the largest single contributor to the doing is “Sociology” (as was recently observed by Araujo, 2017). Two other categories also rise more than two standard deviations above the mean: “Psychology, Multidisciplinary” and “Psychiatry.” Between one and two standard deviations, we also find “Political Science” and “Social Sciences, Interdisciplinary.” It is surprising, however, that “History” ranks only half a standard deviation above the mean. This is perhaps because “History & Philosophy of Science” also ranks about half a standard deviation above the mean, dividing between two categories what might otherwise be counted as one.

Removing this confound by combining categories according to their external meanings is not straightforward. The Psychology categories are easy to bring together under one banner, of course, because they are all explicitly marked as “Psychology.” And the Health-related categories are only slightly less obvious. But the others required some interpretation. The resulting higher order clusters are debatable, but it seems to me that there are seven groups, and these make good sense from the point of view of a specialist in this field. Sorting them by their share of the network gives the following breakdown: Psychology (28.1%), 14 Social Control and Intervention (18.1%), 15 Money and Power (14.8%), 16 People and Places (13.7%), 17 Health (11.3%), 18 Historiography of Science (8.1%), 19 and general Social Sciences (5.9%), 20 the specifics of which could be associated with various other groups according to the contents of each article.
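Once each category has been assigned to a higher order group, the breakdown above is a matter of summing shares within each group. The sketch below assigns every category whose name begins with “Psychology” to that group automatically, as the text describes; all other assignments are supplied by hand. The specific assignments and share values in the example are hypothetical and do not reproduce the groupings reported above.

```python
from collections import defaultdict
from typing import Dict


def group_shares(category_share: Dict[str, float],
                 manual_groups: Dict[str, str]) -> Dict[str, float]:
    """Sum category shares (in %) into higher order groups, largest first."""
    totals: Dict[str, float] = defaultdict(float)
    for category, share in category_share.items():
        if category.startswith("Psychology"):
            group = "Psychology"  # explicitly marked categories
        else:
            group = manual_groups.get(category, "Unassigned")  # interpretive step
        totals[group] += share
    return dict(sorted(totals.items(), key=lambda item: item[1], reverse=True))


if __name__ == "__main__":
    shares = {"Psychology, Multidisciplinary": 12.0, "Psychology, Clinical": 3.0,
              "Psychiatry": 9.0, "Sociology": 20.0}
    print(group_shares(shares, {"Psychiatry": "Health"}))
    # {'Unassigned': 20.0, 'Psychology': 15.0, 'Health': 9.0}
```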

Discussion

The cluster analysis shows topical connections by journal. Following the convention of naming groupings after their top-ranked member, Figure 5 shows that “sociological” articles will find their best fit with HHS (pink), “psychological” articles with HoP (green), and “political” articles with JHBS (yellow). To get a sense of the specific interests of each primary journal, we can then perform the same operation as before, this time following the cluster analysis.

JHBS covers nine categories, accounting for 19.8% of the network. HoP covers 12 categories, accounting for 32.2% of the network. And HHS covers 21 categories, accounting for 48% of the network. These percentages suggest different degrees of topical focus at each journal. But a second grouping can also be made—referring to the external meanings of categories—to give a more precise assessment. This can then serve as guidance to authors.

To construct this second grouping, the category-groups from the “Results” section can be combined with the associations provided by the modularity analysis. From this perspective, and rescaling the PageRanks, the ideal HoP article would target the following interests in an historical way: Psychology (81.1%), People and Places (10.6%), Money and Power (3.7%), Health (2.5%), and Social Control and Intervention (2.2%). The interests of JHBS can be defined in similar terms: Money and Power (47.7%), Historiography of Science (21.1%), Social Control and Intervention (18.4%), People and Places (4.4%), Health (4.3%), and Psychology (4.1%). And HHS: Social Control and Intervention (29.1%), People and Places (19.2%), Health (18.4%), Social Sciences (12.4%), Money and Power (9.1%), Historiography of Science (8.4%), and Psychology (3.3%).
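The per-journal figures in the preceding two paragraphs combine two labels for each category: the modularity cluster it belongs to and the higher order group it was assigned to. A minimal sketch of that cross-tabulation follows. Each cluster's share of the network is its members' summed PageRank over the total, and each group's share within a cluster is rescaled so that the cluster sums to 100%. The data structure, names, and values are hypothetical.

```python
from collections import defaultdict
from typing import Dict, Tuple

# category name -> (PageRank score, modularity cluster, higher order group)
Categories = Dict[str, Tuple[float, str, str]]


def cluster_shares(categories: Categories) -> Dict[str, float]:
    """Each cluster's percentage of the total PageRank of all categories."""
    totals: Dict[str, float] = defaultdict(float)
    for score, cluster, _ in categories.values():
        totals[cluster] += score
    grand_total = sum(totals.values())
    return {cluster: 100 * t / grand_total for cluster, t in totals.items()}


def cluster_profiles(categories: Categories) -> Dict[str, Dict[str, float]]:
    """Within each cluster, each group's percentage of that cluster's PageRank."""
    by_group: Dict[str, Dict[str, float]] = defaultdict(lambda: defaultdict(float))
    for score, cluster, group in categories.values():
        by_group[cluster][group] += score
    return {
        cluster: {g: 100 * s / sum(groups.values()) for g, s in groups.items()}
        for cluster, groups in by_group.items()
    }


if __name__ == "__main__":
    toy = {"Category 1": (0.3, "HoP", "Psychology"),
           "Category 2": (0.1, "HoP", "Health"),
           "Category 3": (0.2, "JHBS", "Money and Power")}
    print(cluster_shares(toy))    # HoP ~66.7%, JHBS ~33.3%
    print(cluster_profiles(toy))  # HoP: Psychology ~75%, Health ~25%
```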

These are quite different reflections of the same basic set of interests. It’s also clear that the three journals seek to publish very different kinds of articles. Thus, for example, we might expect that historical discussions of psychological theory or psychological findings (or significant people and their labs) would be more likely to find a home in HoP. Institutional histories and examinations of the internal politics of the discipline would be more likely to be accepted at JHBS. And discussions of control and power with implications for individuals and their mental health (which we might more simply refer to as issues of governmentality and subjectivity) would be more likely to appear in HHS.

This could be interpreted to mean that the primary journals have, in a sense, institutionalized the turn toward a “polycentric” approach to the doing of the History of Psychology and together reflect a polycentric historiography (cf. Danziger, 1991, 2006). But it could also be interpreted less charitably. Indeed, one might wonder about the strength of the disciplinarity represented: Only one of the three primary journals in the History of Psychology shows significant interest in Psychology as an explicit subject. So while the primary journals are the main outlets for the History of Psychology, the analyses presented here suggest that the work published in them could also serve other disciplinary concerns (cf. Weidman, 2016).

General Discussion

There has never been a comprehensive, quantitative, wide-angle look at the current state of History of Psychology as if it were a discipline in its own right (cf. Capshew, 2014; Hilgard, Leary, & McGuire, 1991). Methods now exist to examine similarities in language use in a corpus of text, and these have been used to examine the early history of psychological publishing (e.g., Green et al., 2015a, 2015b). But the present approach to the quantitative examination of possibilities in the use of language (that is, of a discipline’s discourses) arose within Psychology itself (Burman et al., 2015, 2018).

Still, this earlier work did not look at how possible meanings are reflected in actual use. The contribution of this article is thus an extension of those efforts into this new area, using a new data source. The novelty of the source then also enabled other innovations, such as the use of known-categories to identify interests at the level of journals according to the discourses they represent (rather than by the stated intent of the editors). And thus, we gain new insight into how the History of Psychology is actually done by those who do it.

That said, the investigation has also led me to reflect on issues that I had not previously considered. For example, what if the History of Psychology is not a discipline but an interdiscipline (a coming together of different groups with relatable interests and a plurality of disciplinary allegiances, norms, and values)? Such a configuration is potentially very unstable, and that instability is not conducive to placing students in a supply chain of talent that leads from the start of undergraduate training through to a tenured professorship. Nor is it especially well suited to serving the training needs of the interdiscipline’s largest audience (viz., psychologists with history requirements under accreditation). But the notion itself is, at least, testable.

Performing a further modularity analysis using the external meanings of the categories from Study 3 shows that the primary journals do indeed cluster together when considered relative to those meanings. We can therefore say that, relative to these primary interests (Psychology, Social Control and Intervention, Money and Power, People and Places, Health, and the Historiography of Science; see Figure 6), 21 these three journals are indeed the primary publishing venues for historical scholarship as it is done in Psychology (or relating to psychology). But this is an emergence from the bottom up, not a disciplining from the top down. One is therefore led to wonder whether a different administrative approach might be required at the level of the governing disciplinary institutions, such as graduate programs and scholarly associations, 22 if the collective doing represented in these journals is to thrive.

The dominant interests of the History of Psychology, leading the three primary journals to cluster together relative to the external meanings of those interests.

Conclusion

There is a relatively small number of ways of contributing to the primary journals in the History of Psychology, but looking outward toward the periphery reveals a much more diverse and open field than I expected. I have attempted to represent these interests by including in the supplemental bibliography some of the articles that struck me as the most interesting at each level of the circle of relations, but those choices undoubtedly reflect my own tastes. Because I did not simply follow raw citation counts, and instead tried to balance impact against recency (subject also to limits of space), there is room for debate. My hope, though, is that the list will be taken as an invitation to explore: Every journal identified has much more to offer that would be of lasting interest to this community.

By no means, in other words, should the list be taken as exhaustive. Some of what is missing, too, results from coverage gaps between the different products in the JCR family of databases. It is known that materials published in omitted sources continue to be cited within the interdiscipline (e.g., Brush, 1974; in the supplemental bibliography). But further research is required to reconcile the different sources in such a way as to reflect the same level of control. Indeed, the intermingling apparent in the raw citation data leads one to question why, aside from commercial considerations, there are multiple database products for JIFs at all: Journals in the Social Sciences cite journals in the Natural Sciences and vice versa, so why are these useful metrics missing for journals that are indexed only in the other database? In light of this structural problem, one might think it simpler to replace the JCR with a more transparent source of information in future research. Unfortunately, however, there are few alternatives.

The cited references made accessible through PsycNET afford an interesting possibility for recent material, especially following the launch of the PsycINFO Data Solutions service (http://www.apa.org/pubs/psycinfodatasolutions/). What this would lose in giving up the JCR’s categories, it would make up for in access to the American Psychological Association’s (APA) own controlled vocabulary (see Burman et al., 2015). But the resulting analyses would then have access only to articles published in areas the APA considers close enough to Psychology to merit inclusion in the database. Studies would therefore be blind in a different way. Every choice has its consequence.

Finally, but perhaps most importantly, I need to reiterate that the History of Psychology is not a journals-only discipline. Certain large-scale arguments can only be made by reflecting on a series of smaller demonstrations (“microhistories”), and the book is still the best tool for this job. The major limitation of examining journal publishing is that we are blind, from the outset, to that very important aspect of the doing. Here, though, that weakness has been turned to a strength: There is no reason to believe that journal publishing and book publishing would diverge substantially in the interests guiding their doings, so the perspective derived by leveraging one can help to remedy our blindness to the other.

In short, there are discoveries here, to be sure, but the lessons are incomplete. Still, it could be worse. At least the guidance is positive: where the maps err, they err on the side of conservatism. Thus, when something has been identified that would never have previously been considered, we can trust that we have learned something new. We just need to go looking for what was missed, from where, and why (see Burman, 2018; Green, 2016; Pettit, 2016). Onward!

Supplemental Material


Supplemental material, R1_-_Supplemental_readings_-_final_(1) for What Is History of Psychology? Network Analysis of Journal Citation Reports, 2009-2015 by Jeremy Trevelyan Burman in SAGE Open

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Notes

Author Biography

References
