Abstract

Pediatric resident trauma education is suboptimal because of the lack of a curriculum and limited trauma experience and educational resources. The objective of the study was to test knowledge retention and acceptability of interactive spaced education (ISE) in pediatric trauma. We conducted a prospective, randomized trial involving 40 physicians in a pediatric emergency department. The instrument comprised 48 multiple-choice questions (evaluative component) and answer critiques (educational component) on pediatric trauma, divided into two modules. The instrument was assessed for test–retest reliability, item difficulty, and construct validity. The intervention consisted of online administration of each module as eight spaced emails (3 questions each) over 4 weeks, repeated after 2 and 4 months. Participants received an answer critique after committing to an answer. The primary outcome was the difference in mean percentage of correct answers at 2 and 4 months versus baseline, analyzed with paired t tests and effect sizes (d). The secondary outcome was ISE acceptability, assessed by exit survey. There was significant improvement at 2 months (8.0, 95% confidence interval [CI] = [3.6, 12.5], d = 0.75), but the improvement at 4 months (1.6, 95% CI = [−4.5, 7.7], d = 0.18) was not significant. Sixty percent of participants would retake ISE and recommend it to others. Interactive, spaced education improves knowledge in pediatric trauma and is well accepted. Studies are required to determine the optimal spacing interval for this form of education.

Introduction

Injuries are the leading cause of morbidity and mortality among children in the United States (Borse et al., 2008; Child Maltreatment, 2010). Although the well-known Advanced Trauma Life Support (ATLS; 2013) course and other trauma education resources exist (Ali, Adam, Sammy, Ali, & Williams, 2007; Block, Lottenberg, Flint, Jakobsen, & Liebnitzky, 2002; Carley & Driscoll, 2001; Cherry & Ali, 2008; Cherry, Williams, George, & Ali, 2007; Jacobs et al., 2003; Lee et al., 2003; Marshall et al., 2001), educational resources for pediatric trauma are limited (Bevan, Officer, & Babl, 2008; Falcone et al., 2008; Mikrogianakis et al., 2008). ATLS has one chapter devoted to pediatrics, the Advanced Pediatric Life Support course (APLS; 2014) has a single section on pediatric trauma assessment and management, and Pediatric Advanced Life Support (PALS; 2014) focuses mainly on cardiopulmonary resuscitation.

Barriers to imparting trauma education in children’s hospitals include the lack of a trauma curriculum (Demorest, Bernhardt, Best, & Landry, 2005; Valani, Yanchar, Grant, & Hancock, 2010) and limited trauma experience (Lieberman & Hilliard, 2006; Trainor & Krug, 2000). As a result, pediatric residents may receive inconsistent and incomplete education on trauma. Indeed, in a survey of all accredited pediatric residency program directors in the United States and Puerto Rico regarding the educational experience of pediatric residents, 37% of respondents were not confident in their residents’ training in major trauma (Trainor & Krug, 2000). Moreover, experts in residency education have identified the need for a national curriculum for pediatric trauma (Valani et al., 2010). In an unpublished survey of our pediatric residents on the state of their trauma education, 68% of residents were uncomfortable managing pediatric trauma patients and 57% rated their trauma education as below average. Besides pediatric emergency medicine (PEM) specialists, U.S. children’s hospital emergency departments (EDs) are also staffed by general pediatricians and residents. For the reasons stated above, these latter groups would most likely benefit from education on pediatric trauma.

Providing educational opportunities to learners in short bursts over a period of time has been found to achieve superior knowledge retention (Larsen, Butler, & Roediger, 2009; Price Kerfoot, 2008, 2009; Price Kerfoot, Armstrong, & O’Sullivan, 2008b; Price Kerfoot, Kearney, Connelly, & Ritchey, 2009). The term spaced education has been coined to refer to online educational programs that are structured to take advantage of the pedagogical benefits of the spacing effect, in which periodically repeated, educational encounters lead to improved knowledge attainment and retention compared with a single “bolus” educational opportunity. It has been successfully utilized in training urology residents in clinical practice guidelines (Price Kerfoot et al., 2009) and teaching physical examination (Price Kerfoot et al., 2008b), teaching anatomy among medical students (Evans, Zeun, & Stanier, 2014), and promoting patient comprehension about acne (Wang, Wu, Tuong, Schupp, & Armstrong, 2015).

To address the lack of educational resources in pediatric trauma, while also considering time constraints, we designed a spaced education curriculum. We hypothesized that learners would demonstrate superior knowledge attainment and retention of pediatric trauma, compared with baseline, when they were taught through interactive, spaced education (ISE). The objectives of this study were to evaluate knowledge retention and acceptability of ISE for teaching pediatric trauma.

Method

Instrument Development and Validation

Instrument

The course content was developed using trauma assessment and management principles from the ATLS textbook (ATLS for Doctors, 2008) and a suggested trauma curriculum (Valani et al., 2010). The instrument consisted of an evaluative component (multiple-choice questions) and an educational component (answer critiques). A panel of four pediatric emergency medicine (PEM) physicians and two pediatric trauma surgeons constructed and content-validated 63 questions, from which 48 were selected using a modified Delphi method. These questions were divided into two modules (Module A and Module B), each with 24 questions. Each module had eight subgroups of three questions (Figure 1). The questions involved case scenarios in pediatric trauma, trauma life-support interventions, and selection of appropriate equipment, medications, and procedures. Questions were piloted for readability, clarity, and potential learner comprehension among 10 pediatric residents and PEM fellows at the same center and revised based on feedback. Each correct answer was assigned one point, and unanswered questions were counted as incorrect. The final score was calculated as the percentage of correct answers. The educational components were content-validated by three PEM physicians and one trauma surgeon and were produced in a variety of formats: PowerPoint slides and audio and/or video presentations.
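The scoring rule described above (one point per correct answer, unanswered items counted as incorrect, final score as a percentage) can be sketched as follows. This is a minimal illustration, assuming responses are stored as option letters with `None` for a skipped item; the function name and the example data are hypothetical, not the study's Moodle configuration.

```python
from typing import Optional, Sequence


def score_module(responses: Sequence[Optional[str]], answer_key: Sequence[str]) -> float:
    """Percentage of correct answers for one learner on one module.

    A correct answer earns one point; an unanswered item (None) or a wrong
    answer earns nothing.
    """
    if len(responses) != len(answer_key):
        raise ValueError("responses and answer_key must have the same length")
    points = sum(1 for given, correct in zip(responses, answer_key) if given == correct)
    return 100.0 * points / len(answer_key)


# Hypothetical 24-item module: 18 correct, 4 wrong, 2 left blank.
key = ["A", "B", "C", "D"] * 6
answers = key[:18] + ["D", "A", "B", "C"] + [None, None]
print(score_module(answers, key))  # 75.0
```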

Figure 1. Interactive online education in pediatric trauma.

Procedure

Acceptable qualities of a test instrument include (a) item difficulty: a wide range of difficulties, so that the test can be used with both expert and novice groups; (b) content validity: coverage of all the main aspects of pediatric trauma; (c) construct validity: a significant difference in scores between expert and novice groups; and (d) test–retest reliability: no significant difference in participants’ test scores at entry and after 1 month. We assessed these qualities among novices (PGY-3 and PGY-4 [postgraduate year] pediatric residents and first- and second-year PEM fellows) and experts (third-year PEM fellows). Subjects were individually required to log into the web-based education platform Moodle and answer the questions in one sitting. This session was not proctored, and no feedback was given. Analyses were performed using SAS 9.2 (SAS Institute, Cary, NC).

Item difficulty was assessed using the proportion of correct answers across all subjects. The proportions of correct answers for Modules A and B were 0.63 (0.58, 0.68) and 0.69 (0.64, 0.73), respectively, and 0.66 (0.63, 0.69) for both modules combined. No floor or ceiling effects were observed in Module A. In Module B, 2 of 24 questions were answered correctly by all participants.
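Item difficulty as defined above is simply the proportion of subjects answering each item correctly; an item answered correctly by everyone signals a ceiling effect, and one answered by no one a floor effect. A minimal sketch, assuming results are held as a subjects-by-items matrix of booleans (the matrix below is hypothetical):

```python
from typing import Dict, List, Sequence


def item_difficulty(correct: Sequence[Sequence[bool]]) -> List[float]:
    """Proportion of subjects answering each item correctly.

    correct[s][i] is True if subject s answered item i correctly.
    """
    n_subjects = len(correct)
    n_items = len(correct[0])
    return [sum(row[i] for row in correct) / n_subjects for i in range(n_items)]


def flag_floor_ceiling(p_values: Sequence[float]) -> Dict[str, List[int]]:
    """Indices of items nobody answered correctly (floor) or everybody did (ceiling)."""
    return {
        "floor": [i for i, p in enumerate(p_values) if p == 0.0],
        "ceiling": [i for i, p in enumerate(p_values) if p == 1.0],
    }


# Hypothetical results: 3 subjects x 4 items.
matrix = [
    [True, False, True, True],
    [True, False, False, True],
    [True, True, False, False],
]
p = item_difficulty(matrix)
print(flag_floor_ceiling(p))  # {'floor': [], 'ceiling': [0]}
```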

Content validity was assessed by a panel of four PEM physicians and three pediatric trauma surgeons, who agreed that the items were accurate and applicable and covered all aspects of pediatric trauma.

Construct validity was assessed by testing for a significant difference in scores between expert and novice groups using the unpaired t test, with p < .05 considered significant. Scores from both modules were combined and expressed as a percentage of correct answers. The mean percentage of correct answers was significantly higher among experts (n = 7; M = 71.4%, SD = 8.13) than among novices (n = 9; M = 55.4%, SD = 16.01; p = .036).
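The expert-versus-novice comparison can be illustrated from the summary statistics alone. This sketch assumes the classic Student's t test with pooled variance (the text says only "unpaired t test") and compares the statistic with the two-sided 5% critical value for 14 degrees of freedom taken from a standard t table. Because the variant used and the unrounded data are not reported, the sketch need not reproduce p = .036 exactly; it only shows the calculation.

```python
import math


def pooled_t(m1: float, sd1: float, n1: int, m2: float, sd2: float, n2: int):
    """Student's two-sample t statistic (equal variances assumed) and its df."""
    df = n1 + n2 - 2
    pooled_var = ((n1 - 1) * sd1 ** 2 + (n2 - 1) * sd2 ** 2) / df
    se = math.sqrt(pooled_var * (1 / n1 + 1 / n2))
    return (m1 - m2) / se, df


# Expert vs novice summary statistics as reported above.
t, df = pooled_t(71.4, 8.13, 7, 55.4, 16.01, 9)
T_CRIT_DF14 = 2.145  # two-sided alpha = .05, df = 14 (standard t table)
print(round(t, 2), df, t > T_CRIT_DF14)  # 2.4 14 True
```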

Written test–retest reliability was assessed by comparing each learner’s test score at entry and after 1 month using the paired t test. Modules A and B were administered to eight residents and fellows. There was no significant change in mean scores between entry and 1 month for Module A (M difference = 2.8, 95% confidence interval [CI] = [−5.7, 11.25], p = .47) or Module B (M difference = −5.2, 95% CI = [−18.34, 7.93], p = .38).
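The test–retest check rests on the mean within-learner difference and its 95% CI: if the interval spans zero, there is no significant change between administrations. A minimal sketch with hypothetical scores for eight learners; the t quantile (2.365 for df = 7) is passed in from a t table because Python's standard library has no t distribution.

```python
import math
from statistics import mean, stdev


def paired_difference_ci(first, second, t_crit):
    """Mean within-learner difference (second - first) and its two-sided CI.

    t_crit is the t quantile for df = n - 1; for n = 8 and a 95% CI it is 2.365.
    """
    if len(first) != len(second):
        raise ValueError("paired samples must have the same length")
    diffs = [b - a for a, b in zip(first, second)]
    d_bar = mean(diffs)
    se = stdev(diffs) / math.sqrt(len(diffs))
    return d_bar, (d_bar - t_crit * se, d_bar + t_crit * se)


# Hypothetical percentage scores for eight learners at entry and after 1 month.
entry = [50, 60, 55, 70, 65, 45, 75, 60]
retest = [52, 58, 60, 72, 63, 50, 74, 65]
d_bar, (low, high) = paired_difference_ci(entry, retest, t_crit=2.365)
print(d_bar, low < 0 < high)  # 1.75 True (interval spans 0: no significant change)
```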

Study Setting and Participants

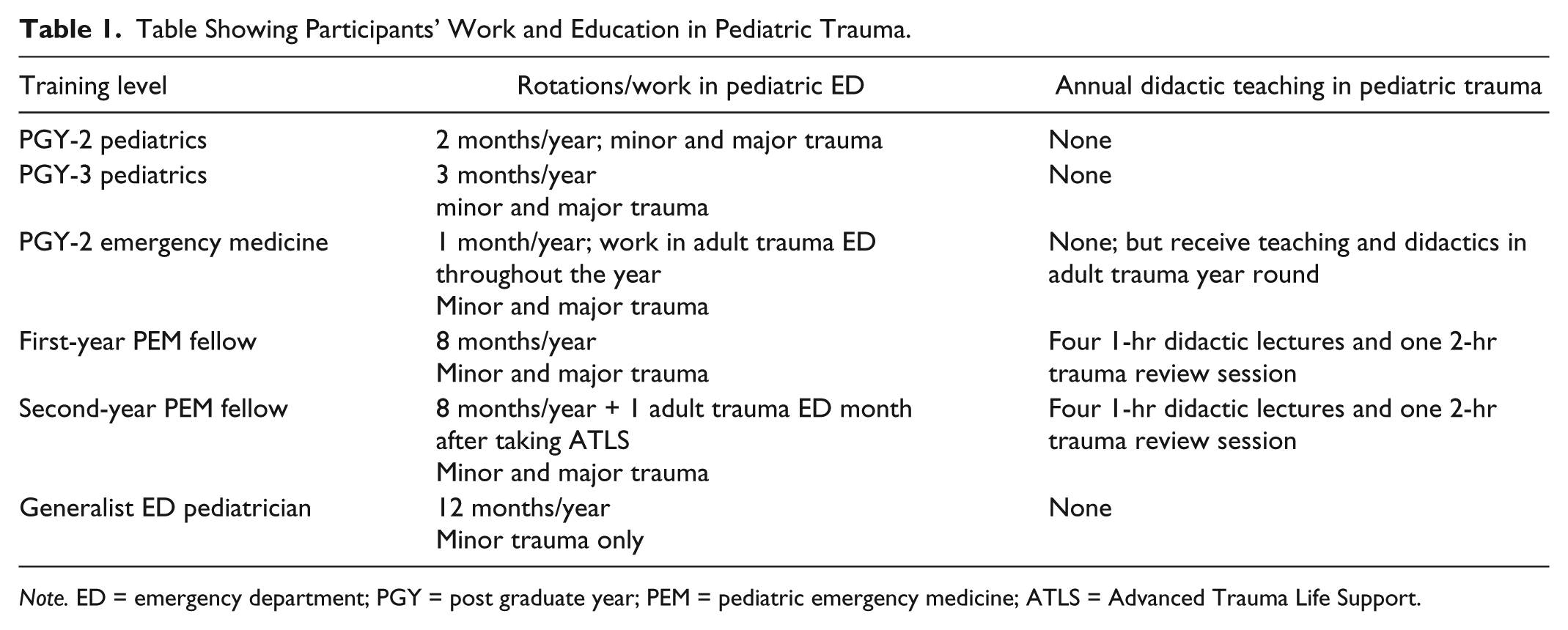

This study was conducted from October 2011 through May 2012 in the ED of an urban, Level 1 trauma-designated children’s hospital in Houston, Texas. The annual ED census is 84,000 patients. The study participants included second- and third-year pediatric residents, second-year EM residents, first- and second-year PEM fellows, and general pediatricians without emergency medicine subspecialty board certification (“generalist” pediatricians) who worked in the ED. Pediatric residents rotate in the ED for 2 months during their second year and 3 months during their third year of training. PEM fellows work in the pediatric ED for 8 months per year during their first and second years of training. Second-year PEM fellows rotate for an additional month in an adult trauma ED and are required to take the ATLS course before commencing this rotation. Additional trauma education for PEM fellows includes four 1-hr didactic lectures and one 2-hr trauma review session annually. EM residents rotate for 1 month in the pediatric ED during their second year of residency; throughout residency, they regularly work adult trauma ED shifts and receive frequent didactics on trauma. Residents and fellows assist in the management of pediatric trauma patients of all degrees of severity. Generalist pediatricians who work in the ED do not routinely manage patients with severe traumatic injuries, although they may care for children with minor injuries (Table 1). The study was approved by the Baylor College of Medicine institutional review board.

Table 1. Participants’ Work and Education in Pediatric Trauma.

Note. ED = emergency department; PGY = post graduate year; PEM = pediatric emergency medicine; ATLS = Advanced Trauma Life Support.

Study Design

In this prospective randomized study, emails were sent to eligible subjects; 40 individuals agreed to participate. These are the physicians who provide care to trauma patients in the ED. We used the previously validated instrument (described above). The intervention consisted of online administration of each module as eight spaced emails (three questions each) over 4 weeks, repeated after 2 or 4 months. After written consent was obtained, participants were stratified by training level and randomized to two cohorts using a random number table. Participants and investigators were blinded to cohort assignment. As displayed in Figure 1, participants in Cohort 1 received the items in Modules A and B over the course of 8 weeks, followed by a 4-week rest period, and then received the same items in Modules B and A in the final 8 weeks of the study. Participants in Cohort 2 received the items in Modules B and A over the course of 8 weeks, followed by a 4-week rest period, and then received the same items in Modules A and B in the final 8 weeks. Because the two cohorts worked through the modules at the same time but in reverse order, each module was repeated after 2 months in one cohort and after 4 months in the other, so that short-term (2-month) and long-term (4-month) learning gains could be compared with baseline. To decrease the possibility of question familiarity and recall in Cycle 2, we relabeled Module A and Module B as Module C and Module D, respectively (Figure 1).
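Stratified randomization as described above can be sketched as follows. The study used a random number table; this sketch substitutes Python's seeded pseudo-random generator, and the roster, stratum labels, and seed are hypothetical. Within each training-level stratum, members are shuffled and assigned alternately from a randomly chosen starting cohort, which keeps the cohorts balanced within each stratum.

```python
import random
from collections import defaultdict


def stratified_randomize(participants, seed=None):
    """Randomize participants to Cohort 1 or 2 within each training-level stratum.

    participants: iterable of (participant_id, training_level) pairs.
    Each stratum is shuffled and assigned alternately from a random starting
    cohort, so the two cohorts differ by at most one member per stratum.
    """
    rng = random.Random(seed)
    strata = defaultdict(list)
    for pid, level in participants:
        strata[level].append(pid)
    assignment = {}
    for ids in strata.values():
        rng.shuffle(ids)
        start = rng.randint(0, 1)
        for k, pid in enumerate(ids):
            assignment[pid] = 1 + (k + start) % 2
    return assignment


# Hypothetical roster of 39 participants in four training-level strata.
levels = ["PGY-2"] * 12 + ["PGY-3"] * 10 + ["PEM fellow"] * 9 + ["generalist"] * 8
roster = [(f"P{i:02d}", level) for i, level in enumerate(levels)]
cohorts = stratified_randomize(roster, seed=2011)
```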

ISE online delivery system

The spaced education items were delivered through the online platform Moodle. Participants received ISE emails with a hyperlink to Moodle twice weekly. On logging in, each participant could access three questions from the current module and submit answers. On submission, the correct answers were presented with an explanation of the learning point(s). Because participants had to commit to a specific response before receiving the correct answer, the process was interactive. All submitted answers were scored and stored automatically. To accommodate participants with scheduling conflicts (night shifts, vacations, and away electives), access to all questions in a module was kept open for the entire month in which it was administered; thereafter, access to that module was closed. A program manager (PM) checked the participants’ Moodle accounts weekly to ensure that the questions were being answered on time and helped resolve problems with account access. Delinquent participants were contacted weekly by email or telephone.
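The delivery rhythm (eight twice-weekly emails per module, each module open for about a month, in 4-week blocks of first module, second module, rest, second module repeated, first module repeated) determines the roughly 2-month and 4-month retest intervals. A minimal sketch, assuming hypothetical send days (Monday and Thursday) and a hypothetical start date; module relabeling (C, D) is ignored here.

```python
from datetime import date, timedelta

BLOCK_WEEKS = 4           # each module is open for about one month
EMAILS_PER_MODULE = 8     # 8 emails x 3 questions = 24 items
SEND_DAYS = (0, 3)        # hypothetical: twice weekly, e.g. Monday and Thursday


def module_email_dates(start: date) -> list:
    """Delivery dates of the eight twice-weekly emails for one module."""
    return [start + timedelta(weeks=k // 2, days=SEND_DAYS[k % 2])
            for k in range(EMAILS_PER_MODULE)]


def cohort_blocks(first: str, second: str) -> list:
    """Four-week blocks: first, second, rest (None), second again, first again."""
    return [first, second, None, second, first]


def retest_interval_weeks(blocks: list) -> dict:
    """Weeks between a module's first delivery and its repeat."""
    first_seen, intervals = {}, {}
    for index, module in enumerate(blocks):
        if module is None:
            continue  # rest period
        week = index * BLOCK_WEEKS
        if module in first_seen:
            intervals[module] = week - first_seen[module]
        else:
            first_seen[module] = week
    return intervals


print(retest_interval_weeks(cohort_blocks("A", "B")))  # B after 8 weeks, A after 16
dates = module_email_dates(date(2011, 10, 3))  # hypothetical start date
print(dates[0], dates[-1])  # 2011-10-03 2011-10-27
```

Running the same function on Cohort 2's order, `cohort_blocks("B", "A")`, swaps the intervals, which is what lets each module contribute both 2-month (8-week) and 4-month (16-week) data.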

At course completion, participants were asked to complete an online exit survey about the educational program. The survey consisted of five-point, Likert-type questions on participant comfort in performing certain pediatric trauma-related procedures. In addition, participants were asked whether they would recommend ISE to others and whether they would participate in this form of education again. The survey was constructed and administered using SurveyMonkey (www.surveymonkey.com; Palo Alto, CA). Participants who completed the survey and submitted answers to more than 80% of test items received a US$50 bookstore gift certificate.

Outcomes

The primary outcome was the difference in mean scores at 2 and 4 months, compared with baseline, for combined modules for both cohorts among participants who completed the course. A secondary outcome measure was the acceptability of ISE to physicians as an online education method.

Sample size

Assuming that the education program would improve learners’ knowledge of trauma, we chose an increase of 6.3% (1.5 out of 24 points) in test scores as a clinically useful improvement in knowledge, based on pilot sample data. With an SD of 1.73, this improvement corresponds to an effect size (ES; Cohen’s d; Cohen, 1992) of 0.87, which is considered large. A sample size of 15 participants per group for pre- and post-tests would achieve 95% power to detect an improvement of 1.5 points (6.3%) in test scores (SD = 1.73; M = 13.5) at an alpha of .05 using a one-sided t test.
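The stated effect size and sample size can be checked with one standard normal approximation for a one-sided pre/post comparison, n = ((z₁₋α + z_power) / d)², with d = 1.5 / 1.73. The text does not say which formula or software was used, so this is a sketch under that assumption. The approximation ignores the small-sample t correction, so exact t-based software can return a slightly larger n.

```python
import math
from statistics import NormalDist


def paired_sample_size(delta: float, sd: float, alpha: float = 0.05, power: float = 0.95):
    """Approximate n for a one-sided pre/post (paired) comparison.

    Normal approximation: n = ((z_(1-alpha) + z_power) / d) ** 2, with d = delta / sd.
    """
    z = NormalDist().inv_cdf
    d = delta / sd
    n = ((z(1 - alpha) + z(power)) / d) ** 2
    return math.ceil(n), d


n, d = paired_sample_size(delta=1.5, sd=1.73)
print(n, round(d, 2))  # 15 0.87
```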

Statistical analysis

Completed tests were tabulated and scored using predetermined answer keys. Differences in mean pre- and post-intervention test scores were calculated and compared. Paired t tests and Cohen’s d (ES) were used to examine the effect of education on participants’ knowledge. ES was calculated by dividing the mean difference between pre- and post-scores by the pooled standard deviation. An ES of 0.8 or more is considered large, 0.5 moderate, and 0.2 small (Spencer, 1991).
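The ES calculation described above (mean post-minus-pre difference divided by the pooled SD of the two score sets) and the conventional size labels can be sketched as follows. The scores are hypothetical, and the exact cutoffs (0.2, 0.5, 0.8, with "negligible" below 0.2) are an assumption based on the thresholds quoted in the text.

```python
import math
from statistics import mean, stdev


def cohens_d(pre, post):
    """(mean(post) - mean(pre)) divided by the pooled SD of the two score sets."""
    n1, n2 = len(pre), len(post)
    pooled_var = ((n1 - 1) * stdev(pre) ** 2 + (n2 - 1) * stdev(post) ** 2) / (n1 + n2 - 2)
    return (mean(post) - mean(pre)) / math.sqrt(pooled_var)


def label_effect(d):
    """Conventional labels: 0.2 small, 0.5 moderate, 0.8 large."""
    size = abs(d)
    if size >= 0.8:
        return "large"
    if size >= 0.5:
        return "moderate"
    if size >= 0.2:
        return "small"
    return "negligible"


# Hypothetical percentage scores for five learners before and after the course.
pre = [60, 65, 70, 55, 62]
post = [68, 70, 75, 62, 70]
d = cohens_d(pre, post)
print(round(d, 2), label_effect(d))  # 1.28 large
```

With these cutoffs, the reported 2-month ES of 0.75 falls in the moderate band.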

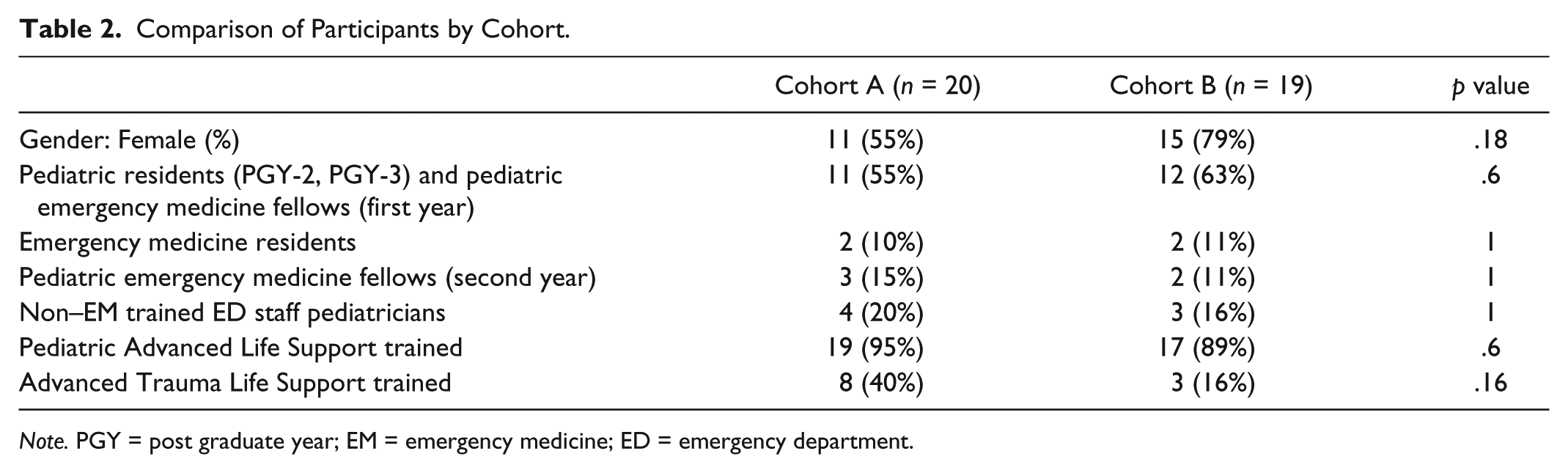

Results

Of the 40 enrollees, one dropped out before the course began. Thirty-nine participants were randomized to two cohorts; 29 completed follow-up modules at 2 months, and 25 completed follow-up modules at 4 months (Figure 2). The cohorts did not differ in level of training or prior training in life support (Table 2). Loss to follow-up was due to non-participation. A significant improvement in mean scores was observed at 2 months (8.0, 95% CI = [3.6, 12.5]; ES = 0.75), but the improvement at 4 months (1.6, 95% CI = [−4.5, 7.7]; ES = 0.18) was not significant. ATLS-certified participants had higher baseline scores (M = 75.5; SD = 9.7) than noncertified participants (M = 64.7; SD = 11.3; p = .016). Their scores improved at 2 months (4.36, 95% CI = [−5.86, 14.59]) and dropped slightly at 4 months (−7.64, 95% CI = [−22.38, 7.10]); neither change was significant. Participants without ATLS certification showed significantly improved scores at 2 months (9.71, 95% CI = [4.62, 14.80]) and a non-significant improvement at 4 months (4.48, 95% CI = [−2.33, 11.29]).

Figure 2. Flowchart of participants in the online trauma education program.

Table 2. Comparison of Participants by Cohort.

Note. PGY = post graduate year; EM = emergency medicine; ED = emergency department.

We performed a sensitivity analysis in which participants who did not complete follow-up were assigned their baseline knowledge scores as their 2- and 4-month scores. Assuming that the missing values were randomly distributed, the mean improvement in scores was significant at 2 months (9.5, 95% CI = [6, 13]; ES = 0.88) but not at 4 months (3.5, 95% CI = [−0.7, 7.7]; ES = 0.27).
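The imputation used in the sensitivity analysis, sometimes called baseline observation carried forward, replaces each missing follow-up score with that learner's own baseline score, so non-completers contribute zero change. A minimal sketch with hypothetical scores, using `None` to mark a missing follow-up:

```python
from statistics import mean
from typing import List, Optional, Sequence


def impute_baseline(baseline: Sequence[float], follow_up: Sequence[Optional[float]]) -> List[float]:
    """Replace each missing follow-up score (None) with that learner's baseline score."""
    return [b if f is None else f for b, f in zip(baseline, follow_up)]


def mean_improvement(baseline: Sequence[float], follow_up: Sequence[Optional[float]]) -> float:
    """Mean follow-up minus baseline difference after imputation."""
    completed = impute_baseline(baseline, follow_up)
    return mean(f - b for b, f in zip(baseline, completed))


baseline = [60.0, 55.0, 70.0, 65.0]
month2 = [70.0, None, 78.0, None]  # two hypothetical non-completers
print(impute_baseline(baseline, month2))   # [70.0, 55.0, 78.0, 65.0]
print(mean_improvement(baseline, month2))  # 4.5
```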

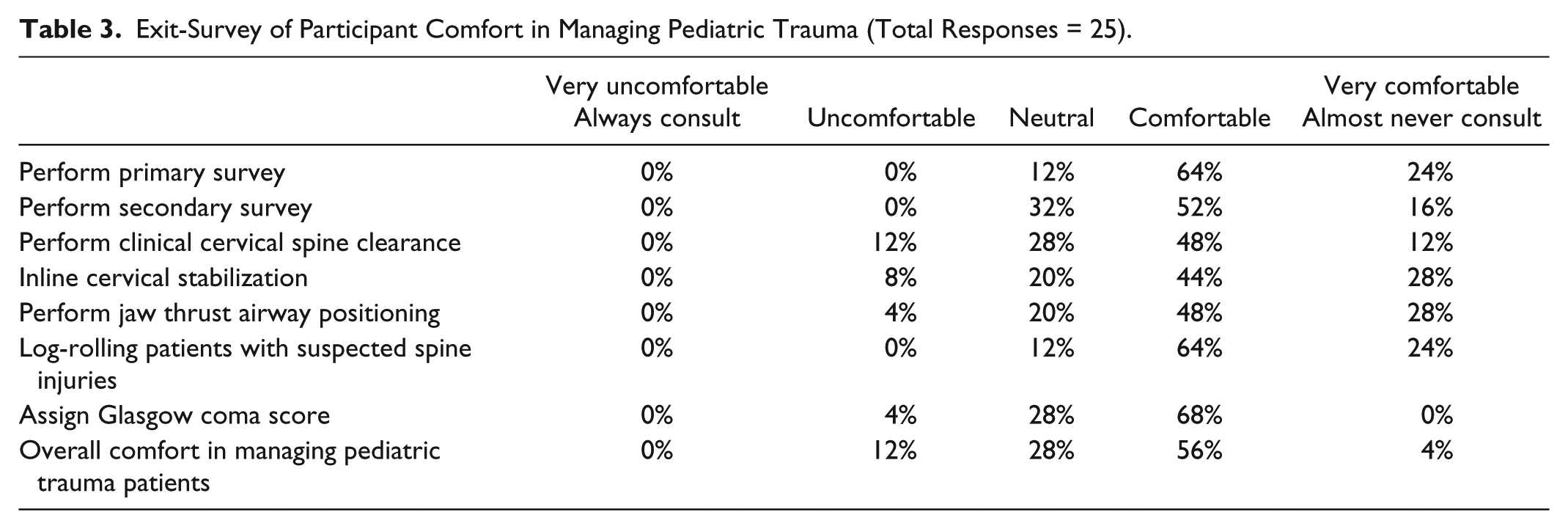

Table 3 shows the participants’ comfort in managing pediatric trauma at course completion. At that point, 60% of the participants stated that they would undertake the ISE program again and recommend it to others.

Table 3. Exit Survey of Participant Comfort in Managing Pediatric Trauma (Total Responses = 25).

Discussion

In this prospective, randomized study of spaced education in pediatric trauma, we observed a significant improvement in knowledge scores at 2 months. Knowledge scores also improved at 4 months, but not significantly. This form of education was moderately well accepted among physicians, with 60% of participants stating that they would recommend this program to others or participate in it again. The results agree with earlier studies that have demonstrated the success of spaced education in improving short- and long-term knowledge in medical trainees and its acceptability as a form of education (Price Kerfoot, 2008, 2009; Price Kerfoot, Armstrong, & O’Sullivan, 2008a, 2008b; Price Kerfoot et al., 2009). We had, however, hoped that the improvement in 4-month scores and overall acceptability would be greater. Several factors may explain the scores. Condensing the entire pediatric trauma curriculum into 48 items and administering them over 8 weeks may require learners to absorb considerable information in a short time. Alternatively, trauma information may not be closely linked or directly relevant to trainees’ day-to-day practice: trauma is a relatively small part of the pediatric curriculum, and our pediatric residents encounter trauma patients in only a few rotations (ED or intensive care). This is consistent with the modest enrollment despite a sizable number of eligible participants. Finally, baseline knowledge of trauma was relatively low in most of our learners. Because the education was delivered in small increments over an extended time, demonstrating a large improvement in knowledge scores at follow-up may be more difficult.

We also faced some problems during rollout of the education program. Initially, we planned to restrict access to module items to 3 days at a time. However, during the pilot phase, we realized that residents’ varying schedules (consecutive night shifts, vacations, and away electives) limited their access to the educational items and contributed to decreased participation. Therefore, we extended access to all items in a module to 1 month. The trade-off was that some participants may have answered most or all of a module’s questions in one sitting, or, because the course was not proctored, may have discussed the answers with colleagues; either could have diminished the effect of spaced education.

We also faced some technological problems. Some learners had problems logging in. We appointed a PM (0.10 full-time equivalent [FTE]) to supervise learners’ participation and to assist with troubleshooting; she sent periodic email reminders and telephoned delinquent learners. In addition, some items were large electronic files housed on the departmental server, which some learners could not access from home because of slow Internet connections. These learners were instructed to access the modules at work.

Still, we are hopeful about the program’s benefits. It could fulfill a general need for pediatric trauma education: ATLS is primarily meant for surgeons and health providers in EM and critical care, whereas our program is suited to delivering comprehensive pediatric trauma education to pediatric residents in a way that accommodates their time constraints. The course is likely to benefit large pediatric residency programs that have several non–ED-based clinical care lines and rotating residents from other facilities, as well as smaller programs that may not have enough faculty to teach pediatric trauma. The course may also be useful for non–EM-trained ED staff pediatricians.

There were several limitations in our study. First, the education program did not measure performance in the management of actual pediatric trauma patients. This would be difficult to assess in this group of learners, given the sporadic nature of moderate and major pediatric trauma. A more convenient and useful way to test performance would be simulated case scenarios; however, this was beyond the scope of our study. Second, despite input from PEM physicians and trauma surgeons, the test questions may not be a representative sample of the curriculum. Third, we were unable to control for the number of ED rotations and the prior clinical trauma experience of learners. Fourth, because participation was voluntary, we were unable to enroll a sufficiently large number of pediatric residents, and we also enrolled other trainees and generalist pediatricians. Pediatric residents are the group most likely to benefit from the education. To address this issue, we repeated the analysis by participant ATLS certification status. The results in non–ATLS-certified participants were similar to the overall results. However, the small number of learners who were not pediatric trainees precluded sub-group analyses. Fifth, there was learner attrition during the course (2 months = 26%; 4 months = 36%), and some participants expressed concern that the education program dragged on. Pediatric trainees were more likely than other learners to be lost to follow-up. Conflicting professional and personal priorities, busy residency schedules, and away rotations may have contributed to attrition. Attrition also may have biased the results of the post-program survey on acceptability. Unfortunately, we are unable to determine whether loss to follow-up was due to competing work responsibilities or to lack of interest in the program per se. It is worth noting that the program could not be made mandatory. Nonetheless, a sensitivity analysis that imputed baseline scores as the follow-up scores of participants who failed to complete the course yielded results similar to the overall results. Sixth, selection bias may have occurred: because the course was voluntary, only the more motivated learners may have chosen to participate and complete both cycles, which could have biased the results favorably. Finally, this study was conducted at a single institution, and the results may not be generalizable to other institutions.

Despite these limitations, we are encouraged to think about “next steps.” Building on what we learned from this study, we may schedule a “bolus” didactic session on pediatric trauma at the start of the program, followed by spaced education. Alternatively, we may condense the course into a 1-month program scheduled during a 1-month ED rotation, make it mandatory, and use a PM, with the aim of comparing these methods of learning with more traditional didactic education.

Conclusion

Interactive, spaced education improves knowledge in pediatric trauma and is well accepted. Studies are required to determine the optimal spacing interval for this form of education.

Footnotes

Acknowledgements

The authors thank Dr. B. Lee Ligon, Center for Research, Innovation and Scholarship, Baylor College of Medicine, for editorial assistance.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research and/or authorship of this article: The education program received grant support from Texas Children’s Hospital.