Abstract

In the wake of the COVID-19 pandemic, media pundits and government officials have raised concerns about whether a rise in self-reported distrust in scientists might shape the public's willingness to comply with science-based regulations, both in relation to COVID-19 and in other areas. This concern is based on the assumption that distrust expressed in surveys can also affect real-world behaviour. However, existing research has not examined the extent to which expressed trust in scientists has a causal effect on the public's adherence to scientific regulations more generally. Using a between-groups experimental design, this study tests the hypothesis that increasing or decreasing trust in scientists causes a corresponding change in compliance with their recommendations across a range of domains. The results show that decreasing trust reduces compliance, whereas an attempt to raise trust failed to shift it, suggesting that public trust in scientists is difficult to raise.

Introduction

The coronavirus pandemic has heightened anxiety about the public's waning faith in scientists. This anxiety has manifested in media headlines across a wide range of publications. For example, Cross (2021) asks, ‘Will public trust in science survive the pandemic?’ This headline exemplifies the increased salience of public trust in scientific advice because of the reluctance of many Americans to be vaccinated against COVID-19. This trend coincides with survey findings showing that an increasing number of people say they distrust science. Pew Research found that trust in scientists dropped 14 percentage points from the start of the pandemic through the end of 2024 (Tyson and Kennedy, 2024). Additionally, self-identified Republicans and racial minorities all report declines in trust in scientists (Funk et al., 2020a). This simultaneous rise in distrust in scientists and public reluctance towards vaccination is concerning, because it is reasonable to worry that an individual who says they distrust science might manifest such distrust through a lack of compliance with scientific recommendations. However, it is unknown whether these expressed levels of distrust actually cause people not to comply with scientific recommendations.

This study tests the causal effects of individuals’ expressed distrust in scientists on their voluntary compliance intentions in response to a scientific message, drawing from decades of research that demonstrates how message source effects can affect individuals’ intended behaviour (McEwen and Greenberg, 1970; Wilson and Sherrell, 1993; Yoon et al., 1998). The goal of this study is to extend this scholarship to study experimentally induced scientific source effects; specifically, to understand individuals’ intentions towards voluntary compliance with scientific protocols. This work is important because voluntary compliance with such protocols is crucial to public safety, and threats to this compliance are potentially deadly. Vaccines do not eradicate disease if the public does not agree to take them. Mammograms do not detect breast cancer if women do not follow through with recommended doctor visits. Weather alerts do not save lives if the public does not listen to their warnings. If scientists are not credible sources of advice, citizens might not comply with guidelines or other laws and might thereby trigger widespread withdrawal from collective endeavours (Nye et al., 1997). Evidence of this trend has already entered public discourse. The World Health Organization (2023) warned of a measles resurgence in heavily unvaccinated communities, which has manifested in a West Texas outbreak that recently claimed the life of one school-aged child (Mukherjee, 2025). It is therefore critical to understand the causal relationship between trust and compliance in order to stem this rising tide of distrust and, ultimately, to protect public welfare.

Using a survey experiment that seeks to manipulate participants’ level of trust in scientists, followed by measuring their likelihood of compliance, this study investigates whether this causal relationship exists and whether it carries over into other scientific domains; that is, whether distrust in COVID-19 scientists affects individuals’ compliance with environment-related regulations or safety recommendations. This analysis is an application of the notion of lateral attitude change, described by Glaser et al. (2015) as individuals’ attitude change towards focal objects tangential to the focus of a persuasive message. Any lateral attitude change effects are significant in predicting the behavioural effects of distrust in scientists in contexts beyond COVID-19.

Literature review

Previous scholarship establishes a strong empirical link between individuals’ trust in scientists and those individuals’ compliance behaviour, yet most of this evidence is correlational. What remains unclear is whether trust causes compliance, or whether both arise from shared underlying factors such as individuals’ partisanship or education level. The present study addresses this gap by experimentally manipulating trust in scientists to test its downstream causal effects on individuals’ compliance intentions. This literature review synthesizes prior research on trust and behaviour to justify this design.

Conceptualizing trust

Trust is fundamentally an individual's belief that another will operate with integrity while acting in the individual's best interest (Citrin and Stoker, 2018; Levi and Stoker, 2000). It is a multidimensional construct encompassing several interrelated dimensions (Bromme et al., 2022; Hendriks et al., 2016; Pfänder, 2025). Scholars distinguish among cognitive trust—confidence in scientists’ competence and expertise; affective trust—emotional security or comfort with scientists; and social trust—belief that scientists act in the public's interest and share societal values. Trust is both relational and conditional, as it is given to others only within certain domains. The domain in which an individual extends cognitive trust is usually within the recipient's occupation or area of expertise (Levi and Stoker, 2000). For example, an individual might trust their therapist for relationship advice but not for medical treatment or landscaping guidance. This study concerns individuals’ cognitive trust in scientists, as the experimental intervention primarily manipulates perceptions of scientists’ competence during the early stages of the COVID-19 pandemic.

Individuals’ cognitive trust in another is strongly associated with their perceptions of expertise and competence (Cologna, 2021; Hartman et al., 2017; Henkel et al., 2023). ‘Perceived expertise’ refers to ‘the extent to which a speaker is perceived to be capable of making correct assertions’ (Pornpitakpan, 2004: 244), while ‘perceived competence’ refers to the extent to which the speaker is ‘perceived to be able to act on their intentions’ (Linne et al., 2022: 2). Science-communication scholars similarly conceptualize cognitive trust as a function of perceived expertise, integrity and benevolence (Hartman et al., 2017; Hautea et al., 2024; Hendriks et al., 2015; Bromme et al., 2022). These dimensions parallel definitions of cognitive trust from the social-psychology literature and are often operationalized through experimental manipulations of source credibility. There is ongoing debate on whether trust or expertise is more important in driving individuals’ assessments of source credibility, as well as whether the COVID-19 pandemic has changed the landscape of trust and expertise research (Barnett White, 2005; McGinnies and Ward, 1980). Regardless, previous political-science and science-communication scholarship shows that trust and expertise are heavily intertwined in both experimental and non-experimental contexts (Hendriks et al., 2016; Stauffer et al., 2023). Thus, persuasive messages seeking to affect individuals’ level of trust in scientists are also likely to leverage perceptions of scientists’ expertise and competence.

This study concerns individuals’ trust in scientists as a professional group and in science as a social institution. Lenard (2008: 329) argues that, when placing trust in institutions, citizens are in fact placing trust in the individuals in charge of those institutions to ‘operate effectively and honestly’. There is a certain vulnerability that a person accepts when placing trust in others, as there is always a chance that the trusted party will turn out to betray the individual's interests. Van de Walle and Six (2014: 159) note the difficulty in the practical application of trust data because it cannot ‘ever provide certainty about the trusted party's future actions’. Even scholars who fundamentally define trust as a process or transaction between individuals acknowledge difficulty in extrapolating the behavioural effects of trust (Mollering, 2006). This study, in part, resolves this difficulty by experimentally manipulating trust as the independent variable to predict individuals’ likelihood of compliance with recommendations.

Current trends in public trust in scientists

This study adds to existing literature on public trust in the scientific community. Overall public trust in science has remained relatively high and stable over the past 50 years. At least seven out of 10 Americans have maintained at least a fair amount of trust in scientists during this period (Funk, 2017), but this percentage dropped by 14 points from the start of the pandemic through to the end of 2024 (Tyson and Kennedy, 2024). Six out of 10 US adults say that scientists should take a more active role in policy decisions (Funk, 2020; Funk et al., 2019).

The demographic divide in trust in scientists is also complex. Nearly twice as many black Americans as white Americans say they have little to no confidence in scientists (Funk et al., 2020a). There is also a substantial partisan discrepancy between self-identified Democrats and Republicans (Li and Qian, 2022). Just over six in 10 Democrats say they have a great deal of trust in scientists, compared to two in 10 Republicans who say the same (Funk et al., 2020a). Partisans rely on party cues to signal support for or opposition to specific issues. Thus, both Democrats and Republicans report distrust of science when the scientific information threatens their partisan beliefs (Nisbet et al., 2015). Altenmüller et al. (2024) confirmed this finding in a more recent study, showing that partisans’ trust in scientists can be explained by the degree to which they perceive themselves and scientists to be ideologically congruent. Partisans may also respond to scientific messages with a ‘backlash’ effect, whereby they react adversely to information that contradicts their partisan beliefs (Carter et al., 2016; Lewandowsky et al., 2012). It is worth investigating whether this effect occurs in the context of COVID-19 compliance. Additionally, college-educated individuals reported higher levels of trust in scientists compared to those without college experience, both before and during the COVID-19 pandemic (Funk et al., 2019; Funk et al., 2020b).

Correlation to causation: Evidence of the trust–compliance link

This section of the literature review discusses existing observational research linking trust and compliance. While these findings confirm the robustness of the trust–compliance correlation, they leave open the causal direction of this relationship.

Observational research

A range of studies shows a positive correlation between trust in scientists and public adoption of their recommendations (Dodd and Rife, 2024; Dohle et al., 2020; Sailer et al., 2021; Sehgal et al., 2023; Plohl and Musil, 2021), both in the United States and elsewhere. This correlation exists in numerous areas of science, including medicine, volcanology and meteorology. Within the health domain, individuals’ trust in public-facing medical practitioners during a crisis is crucial to securing the consumption of medications or the public adoption of protection guidelines (Bicchieri et al., 2021; Cologna et al., 2025; Krentel et al., 2013).

Recent literature further demonstrates this positive correlation during the COVID-19 pandemic. In a panel survey across 12 countries, trust in science was a strong predictor of the adoption of COVID-19 protection measures (Algan et al., 2021). Bicchieri et al. (2021) found that those with high trust in science were 23% more likely to comply with social distancing in all nine countries included in their study. Also in an international context, Dohle et al. (2020) found that trust in science and trust in politics were observed to have the strongest correlation with both the acceptance and the adoption of COVID-19 guidelines. In Germany, political orientation was observed to have a small to medium effect on the adoption of guidelines, which differs from research in the United States, where political differences play a larger role in dictating COVID-19 behaviours (Gadarian et al., 2021). Plohl and Musil (2021) likewise found that individuals’ perception of COVID-19 as a threat and trust in science correlated with an increased likelihood of following scientist-issued guidelines. Lastly, Han's (2022) systematic review found that individuals’ trust in scientists was strongly associated with the adoption of healthy behaviours across 35 countries during the initial COVID-19 outbreak.

The well-established positive correlation between trust and compliance may indicate a potential causal link between the two variables. Collectively, existing studies show that trust and compliance consistently co-occur across diverse scientific domains (Dodd and Rife, 2024; Dohle et al., 2020; Sailer et al., 2021; Sehgal et al., 2023; Plohl and Musil, 2021). However, their reliance on cross-sectional or self-reported data limits causal inference. It remains uncertain whether trust drives compliance, or whether compliant individuals retrospectively rationalize their behaviour through trust in scientists. Additionally, other factors could also affect both trust in scientists and compliance. For example, the theory of expressive responding suggests that factors other than their actual level of trust in scientists may lead people to report low trust in surveys. The following section outlines some of the reasons to be cautious about interpreting the relationship between trust and compliance as causal.

Rationale for the current study

Despite the agreement outlined above that trust predicts compliance, few studies have directly manipulated trust to test its behavioural consequences. Observational studies showing a correlation between trust and compliance are not evidence that trust causes a change in compliance. For example, Caplanova et al. (2021) found that people who are employed are more likely to comply with social distancing. This correlation does not indicate that being employed causes social distancing. There are a number of plausible reasons for the correlation. Perhaps employed individuals are more likely to find themselves in work environments that mandate social distancing. Employment status is often tied to variables such as education level and income, so perhaps a potential causal link lies within these other variables rather than employment status itself. Similarly, low trust in scientists is associated with conservative political partisanship (Gadarian et al., 2021), low risk perception (Plohl and Musil, 2021), lack of prosocial behaviour and low educational attainment (Caplanova et al., 2021), which suggests that another variable may be driving both low trust in scientists and low compliance with scientists’ advice.

Another limitation of existing research is the inability to extrapolate the effects of individuals’ level of distrust into their daily lives. Observational survey research can only ascertain respondents’ behavioural intentions, which may be influenced by social desirability bias. In this situation, the research is ‘likely to measure responders’ views about how one should act in the situation, not how one will in fact act’ (Bicchieri et al., 2021: 13). Mollering (2006) and Van de Walle and Six (2014) echo this sentiment. Through no design flaw, existing research cannot offer a true representation of individual behaviour. While this study likewise cannot directly measure participants’ compliance, its manipulation of individuals’ trust in scientists lends greater credibility to causal claims supported by the data.

A behaviour called ‘expressive responding’ could also be responsible for the link between trust and compliance. Expressive responding occurs when individuals align their survey responses with the attitudes held by their political party (Jakesch et al., 2019). Thus, their reported opinions are really reflections of partisanship. This tendency is well documented across numerous political issues and ideologies (Jakesch et al., 2019; Nisbet et al., 2015). Conservatives and liberals are equally likely to show bias in evaluating scientific information when that information runs contrary to their pre-existing beliefs (Nisbet et al., 2015). The COVID-19 pandemic triggered expressed distrust in scientists among Republicans (Funk, 2017). This suggests that partisanship drives expressed distrust and, in turn, adherence to scientists’ guidelines. In this case, partisanship is a confounding variable that is controlled for in this experiment. By accounting for expressive responding and partisan alignment, this study isolates the unique effect of experimentally induced trust from confounding ideological factors.

In conclusion, existing research has documented a consistent correlation between trust and compliance across health domains, but the causal nature of this relationship remains unclear. Experimental studies within science communication have manipulated scientist cues in persuasive messages (Hendriks and Jucks, 2020; Bromme et al., 2022); however, these typically assess perceived trustworthiness rather than behavioural compliance. Thus, while they establish causal antecedents of trust, they rarely test trust itself as a causal determinant of compliance. Moreover, confounding influences such as partisanship and expressive responding complicate our understanding of individuals’ self-reported levels of trust in scientists. Taken together, research in political science highlights the role of partisanship in shaping trust in scientists, while science-communication studies identify how message and source characteristics foster or erode that trust. By integrating these perspectives, the current study tests whether trust, once experimentally induced, predicts compliance behaviour, thereby bridging disciplinary divides and advancing causal understanding of the trust–compliance relationship.

Research questions and hypotheses

This study uses a between-groups survey experiment to explore the following research questions and accompanying hypotheses. RQ1: What is the nature of the causal relationship between individuals’ level of trust in scientists and their protocol compliance intentions?

The first research question investigates how individuals’ trust in scientists and compliance intentions are causally related. Given the preponderance of observational research, I hypothesize that there is a statistically significant relationship between trust in scientists and overall compliance with recommendations. Specifically, I posit that increasing trust in scientists increases overall compliance compared to the control group (H1a), and decreasing trust in scientists reduces overall compliance compared to the control group (H1b).

Second, it is unclear whether this relationship persists outside of the health context to manifest as lateral attitude change. This spillover effect has yet to be explored in the literature on scientific trust and compliance. Therefore, the second research question is as follows: RQ2: Does altering trust in scientists in the health context affect compliance with scientific recommendations in other domains?

Third, Cintron et al. (2022) highlight the need for greater analysis of heterogeneous treatment effects within health-related social-science studies. Thus, the third research question asks: RQ3: How do individual-level characteristics (i.e., work experience in health care, education level and partisanship) moderate the relationship between trust and compliance?

This study hypothesizes that individuals’ health-care work experience, educational attainment and partisanship will moderate the relationship between trust and compliance. Those who work in health care are likely to have personal experiences with science and their colleagues that might insulate them from the effects of scientific messages in this study. Thus, participants with work experience in health-care professions will have a weaker treatment effect compared to those without work experience in health care (H3a).

In addition, individuals with at least some college education are likely to have greater scientific knowledge and could also be more insulated from the treatment messages. Survey data on the relationship between educational attainment and trust in science leads me to expect that they will be less influenced by a single treatment article in this study. I hypothesize that participants with college education will have a weaker treatment effect compared to those without college education (H3b).

Lastly, this study examines the effect of partisanship on participants’ compliance intentions. Recent surveys show that Democrats consistently report higher favourability towards scientists compared to Independents, while the inverse is true for Republicans (Funk, 2017). Thus, I hypothesize that self-identifying Democrats will report higher levels of compliance likelihood compared to Independents (H3c), and that self-identifying Republicans will report lower levels of compliance likelihood compared to Independents (H3d). Additionally, partisans tend to report greater distrust in scientists when they perceive the science to conflict with their partisan beliefs (Nisbet et al., 2015). If this finding holds in this study, it would manifest through stronger treatment effects for partisans exposed to an article that opposes their party's position towards COVID-19. Therefore, the final hypotheses predict that self-identifying Democrats will have a stronger treatment effect in the low-trust condition compared to Democrats in the control group (H3e), and self-identifying Republicans will have a stronger treatment effect in the high-trust condition compared to Republicans in the control group (H3f).

Methods

This study experimentally manipulated participants’ trust in scientists. Study approval was granted by the researcher's institutional review board. Participants were presented with a written consent form prior to the survey, and all methods were carried out ethically in accordance with the Declaration of Helsinki. After consenting to the survey, participants were randomly assigned to one of three groups (high trust, low trust, or control), constituting a between-subjects design. Random assignment ensures that observed differences in the outcome variables can be causally attributed to the manipulation of trust in scientists rather than to pre-existing differences among participants. The ‘high trust’ group received a ‘high trust’ article containing information depicting scientists as highly competent and trustworthy during the pandemic, emphasizing the accuracy and success of their scientific work. As discussed in later sections, this manipulation was unsuccessful at raising these participants’ level of trust in scientists. The ‘low trust’ group received a ‘low trust’ article describing scientists’ uncertainty and error (for instance, early misjudgements in casualty projections or mask advice), thereby reducing participants’ cognitive trust in scientists. Exposing participants to single treatment articles similar to those crafted in this study can alter science attitudes (Hameleers and Van der Meer, 2021). The treatment articles maintain informational equivalence by highlighting the same coronavirus protection measures (vaccines, masks, etc.), including similar quotes from citizens and medical experts, and maintaining the same approximate length and reading level for each article. The third group served as the control and read a television review article. The full text of each article is available in the Appendix. Following the treatment, all participants were asked to rate how likely they were to comply with various acts recommended by scientists using a novel scale.

The survey experiment was distributed via Qualtrics to 2571 participants from a sample gathered from Lucid between 29 March and 1 April 2022. This sample size well exceeds the minimum required (n = 400) for three groups with interaction effects, according to G*Power. The sample was demographically similar to the United States population, as shown in Table D2. After excluding participants who did not consent to the survey (n = 103), did not pass the pre-treatment attention check (n = 459) or did not complete the survey (n = 105), the final sample consisted of 1904 participants. The attention check consisted of one question administered prior to treatment. Participants were asked how much they use social media and were instructed to select two of the four answer choices to prove that they read the full question. Participants excluded by the attention check were, on average, eight years younger than the rest of the sample. However, this was the only difference between groups, and their exclusion did not change any experimental outcomes.
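The exclusion arithmetic above can be checked directly. The short Python sketch below is illustrative only: the counts are those reported in this paragraph, while the variable names are hypothetical and are not drawn from the study's replication code.

```python
# Participant flow as reported in the text; names are illustrative only.
recruited = 2571
exclusions = {
    "did not consent": 103,
    "failed pre-treatment attention check": 459,
    "did not complete the survey": 105,
}

# Subtract every exclusion category from the recruited sample.
final_n = recruited - sum(exclusions.values())
retention = final_n / recruited

print(f"final sample: {final_n}")  # final sample: 1904
print(f"share of recruited participants retained: {retention:.1%}")
```

The result agrees with the final sample of 1904 reported above.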

Outcome variables

Compliance likelihood was operationalized using a novel scale. The eight-question scale asked participants how likely they would be to comply with guidelines recommended by scientists in a variety of contexts. A pilot study asked participants to choose the scientist most likely to issue a specific protocol, informing the selection of both types of featured scientists and recommendations familiar to respondents. In the full study, participants were asked how likely they would be to comply with three recommendations from doctors (receiving a vaccination, taking an antibiotic drug and following nutritional advice), three from environmental scientists (evacuating from a hurricane, following air-quality warnings and recycling), and two from engineers (replacing lead pipes and updating in-home smoke detectors). The order of these questions was randomized for all participants. Compliance with each of the eight items was measured on a 5-point scale ranging from 1 (extremely unlikely to comply) to 5 (extremely likely to comply). These scores were averaged into an aggregate compliance variable (M = 4.13, SD = 0.627, Cronbach's alpha = 0.82) and into a separate score for each of the three fields of science represented in the data: health (M = 4.07, SD = 0.743), environment (M = 4.16, SD = 0.722), and engineering (M = 4.18, SD = 0.775). Likert scales can be treated as continuous scales when the study sample size is large and the data follows a normal distribution, both of which are satisfied in this dataset (Jamieson, 2004; Norman, 2010).
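For readers unfamiliar with how such composites are built, the following Python sketch averages item responses into aggregate and domain scores and computes Cronbach's alpha from the standard formula, alpha = k/(k − 1) × (1 − sum of item variances / variance of the total score). The responses are invented toy values, not study data, and the item ordering (three health, three environment, two engineering) and all function names are assumptions made for illustration.

```python
from statistics import mean, variance

# Assumed column order (hypothetical): 3 health, 3 environment, 2 engineering items.
DOMAINS = {
    "health": range(0, 3),
    "environment": range(3, 6),
    "engineering": range(6, 8),
}

def cronbach_alpha(rows):
    """rows: one list of k item scores per respondent."""
    k = len(rows[0])
    # Sample variance of each item across respondents.
    item_vars = [variance([r[i] for r in rows]) for i in range(k)]
    # Sample variance of each respondent's summed score.
    total_var = variance([sum(r) for r in rows])
    return (k / (k - 1)) * (1 - sum(item_vars) / total_var)

def composite_scores(row):
    """Aggregate and per-domain mean scores for one respondent."""
    scores = {"overall": mean(row)}
    for name, idx in DOMAINS.items():
        scores[name] = mean(row[i] for i in idx)
    return scores

# Toy responses on the 1-5 scale (NOT study data).
toy = [
    [5, 4, 5, 5, 4, 5, 5, 4],
    [4, 4, 3, 4, 4, 3, 4, 4],
    [2, 3, 2, 2, 3, 2, 3, 2],
    [5, 5, 5, 4, 5, 5, 5, 5],
    [3, 3, 4, 3, 3, 4, 3, 3],
]

alpha_toy = cronbach_alpha(toy)
first_respondent = composite_scores(toy[0])
print(round(alpha_toy, 2), first_respondent)
```

The toy alpha is far higher than the 0.82 reported here because the invented responses are nearly parallel across items; the sketch shows the calculation, not the study's result.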

This scale offers a new way for scholars to understand the public's intentions towards compliance. Scholars face difficulty in measuring individuals’ compliance behaviour in the real world, but the compliance likelihood variable circumvents these challenges by assessing participants’ willingness to comply.

Manipulation check

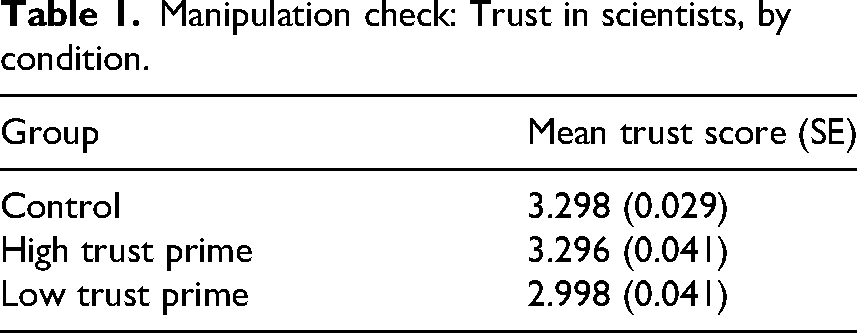

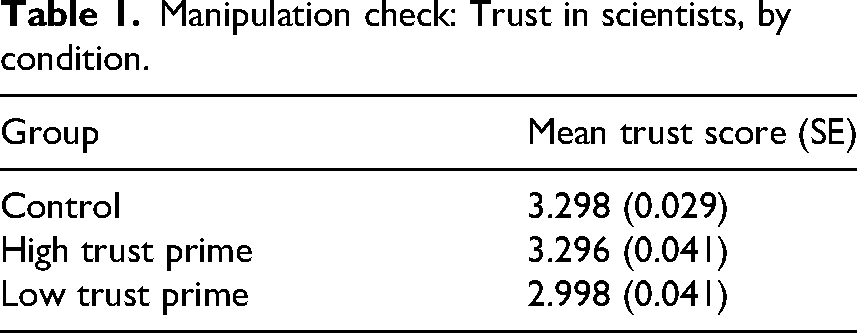

Manipulation checks are valuable components of experimental designs but have not been adequately utilized in previous research (Ejelöv and Luke, 2020). The purpose of a manipulation check is to assess whether a treatment (article) causes a change in the independent variable (trust in scientists) in the study. Researchers’ failure to include a manipulation check means it is not possible to determine whether the independent variable differs across groups, posing serious validity issues for causal claims. Prior to the main analyses in this study, a difference-in-means comparison assessed individuals’ post-treatment self-reported trust (measured on a 1–4 scale) across each condition to determine whether trust was manipulated by the treatment. As shown in Table 1, the low-trust group's mean trust score was significantly lower than the control group's by 0.3 points. This confirms that participants in the low-trust condition reported lower levels of trust in scientists as a result of the treatment article. However, the high-trust group's level of reported trust in scientists was not significantly higher than the control group's. This limitation means that causal claims can only assess the relationship between declining trust and compliance intentions, given the lack of independent variable manipulation in the high-trust condition.
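A difference-in-means comparison of this kind can be sketched as follows. The text does not state which test statistic was used, so the Welch (unequal-variance) t statistic below is an assumption, and the trust scores are invented toy values on the 1–4 scale rather than study data.

```python
from math import sqrt
from statistics import mean, variance

def welch_t(treated, control):
    """Return (difference in means, Welch t statistic) for treated minus control."""
    diff = mean(treated) - mean(control)
    # Standard error without assuming equal group variances.
    se = sqrt(variance(treated) / len(treated) + variance(control) / len(control))
    return diff, diff / se

# Toy post-treatment trust scores (NOT study data).
low_trust_scores = [2, 3, 2, 3, 3, 2, 3, 2]
control_scores = [3, 3, 4, 3, 2, 3, 4, 3]

diff, t_stat = welch_t(low_trust_scores, control_scores)
print(f"difference in means: {diff:.3f}, t = {t_stat:.2f}")
```

A negative difference means that the treated group reports lower trust than the control group, which is the pattern a successful low-trust manipulation should produce.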

Manipulation check: Trust in scientists, by condition.

RQ1: What is the nature of the causal relationship between individuals’ level of trust in scientists and their protocol compliance intentions?

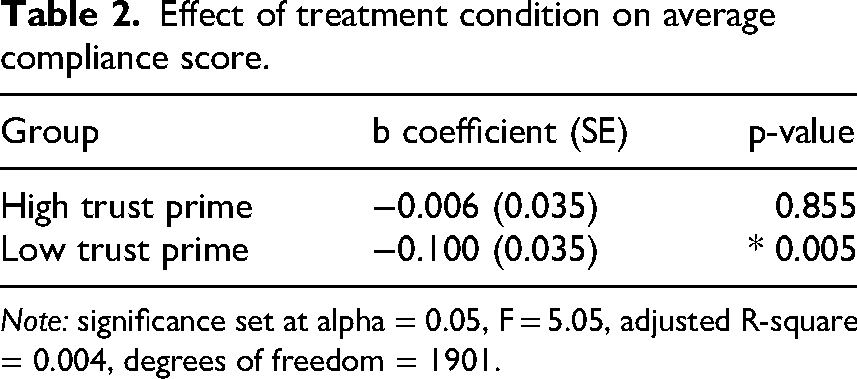

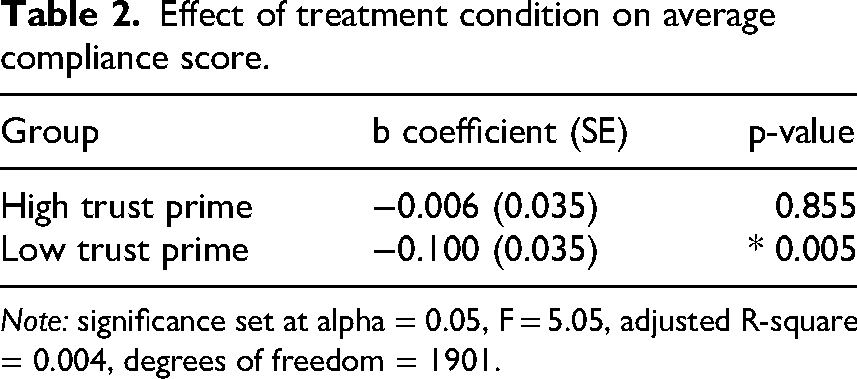

I find evidence that decreasing trust in scientists caused a decrease in overall compliance compared to the control group (see Table 2). This is a statistically significant effect with a small effect size (Gignac and Szodorai, 2016). High

Effect of treatment condition on average compliance score.

Note: significance set at alpha = 0.05, F = 5.05, adjusted R-square = 0.004, degrees of freedom = 1901.
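As a minimal sketch of a regression of this form, assume a specification containing only condition dummies with the control group as the reference category; the study's exact specification is not restated here, so this is an assumption. In such a model each coefficient equals that group's mean minus the control mean, and the residual degrees of freedom equal the sample size minus the number of estimated parameters, which for the final sample gives 1904 − 3 = 1901, matching the note above. The scores and function name below are hypothetical.

```python
from statistics import mean

def dummy_regression(groups, reference="control"):
    """groups: dict mapping condition -> list of outcome scores.

    For a model with only condition dummies, the OLS intercept is the
    reference-group mean and each coefficient is a difference in means.
    """
    intercept = mean(groups[reference])
    coefs = {g: mean(y) - intercept for g, y in groups.items() if g != reference}

    n = sum(len(y) for y in groups.values())
    k = len(groups)  # intercept plus (k - 1) dummies
    grand = mean([v for y in groups.values() for v in y])
    ss_between = sum(len(y) * (mean(y) - grand) ** 2 for y in groups.values())
    ss_within = sum((v - mean(y)) ** 2 for y in groups.values() for v in y)

    df_model, df_resid = k - 1, n - k
    f_stat = (ss_between / df_model) / (ss_within / df_resid)
    return intercept, coefs, f_stat, df_resid

# Toy compliance scores (NOT study data).
toy_groups = {
    "control": [4, 5, 4, 4],
    "low_trust": [3, 4, 3, 4],
    "high_trust": [4, 4, 5, 4],
}

intercept, coefs, f_stat, df_resid = dummy_regression(toy_groups)
print(intercept, coefs, round(f_stat, 2), df_resid)

# Residual degrees of freedom for the reported final sample.
reported_df = 1904 - len(toy_groups)
```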

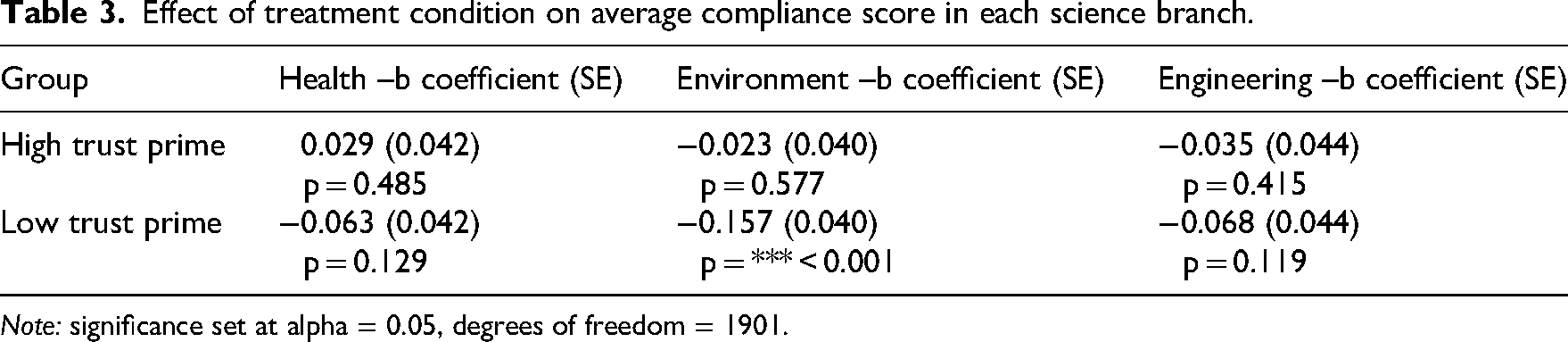

RQ2: Does altering trust in scientists in the health context affect compliance with scientific recommendations in other domains?

There is compelling evidence that the negative causal effect spills over into other science contexts, as shown in Table 3. Despite the treatment articles’ focus on COVID-19, low-trust group participants showed a decline in compliance likelihood with environmental protocols compared to the control group. Primed distrust in COVID-19 scientists thus caused a decline in compliance in a different science context.

Effect of treatment condition on average compliance score in each science branch.

Note: significance set at alpha = 0.05, degrees of freedom = 1901.

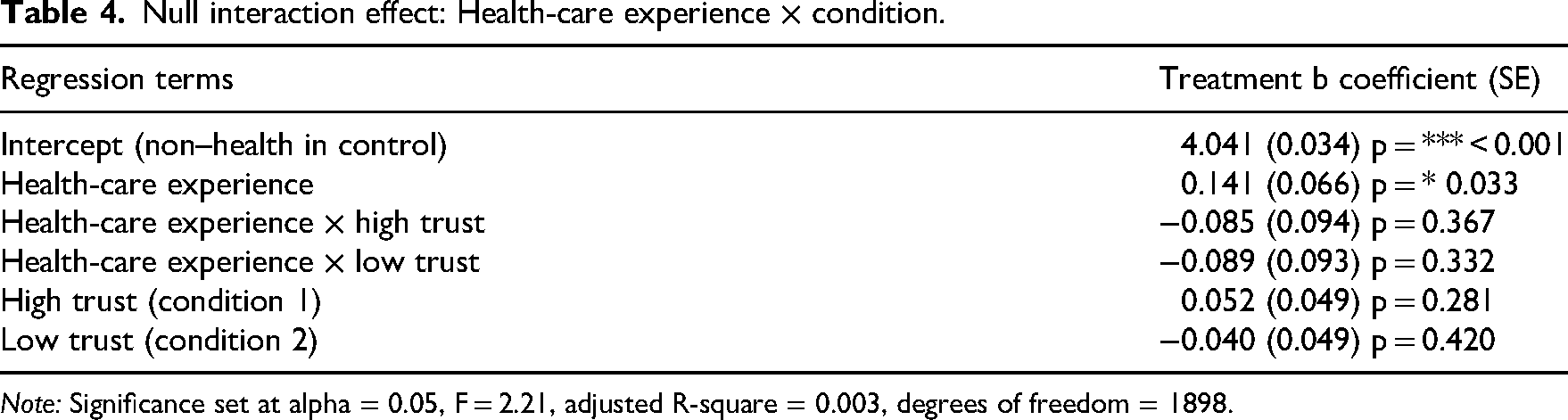

RQ3: How do individual-level characteristics (i.e., work experience in health care, education level and partisanship) moderate the relationship between trust and compliance?

I find that participants with health-care experience exhibited greater scientific compliance intentions than their peers. To examine H3a, I ran separate regressions using a dummy variable equal to 1 for experience in health care (n = 527) and 0 for no experience in health care (n = 1377). Those with health-care experience reported a statistically significantly higher health compliance likelihood score than those without health-care experience; however, they experienced homogeneous treatment effects (see Table 4). This leads me to reject H3a.

Null interaction effect: Health-care experience × condition.

Note: Significance set at alpha = 0.05, F = 2.21, adjusted R-square = 0.003, degrees of freedom = 1898.
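The dummy coding behind a condition × moderator model can be sketched as follows. This assumes a fully interacted specification with the control group and the first moderator level as reference categories, which is an assumption rather than the confirmed model; the function and level names are hypothetical. Under that assumption the parameter count reproduces the residual degrees of freedom reported for the health-care model (1904 − 6 = 1898) and for the partisanship model (1904 − 9 = 1895).

```python
CONDITIONS = ["control", "low_trust", "high_trust"]  # control is the reference

def design_row(condition, moderator, moderator_levels):
    """One dummy-coded design-matrix row with condition x moderator interactions.

    The first entry of moderator_levels is treated as the reference category.
    """
    cond = [1 if condition == c else 0 for c in CONDITIONS[1:]]
    mod = [1 if moderator == m else 0 for m in moderator_levels[1:]]
    # Every condition dummy multiplied by every moderator dummy.
    inter = [c * m for c in cond for m in mod]
    return [1] + cond + mod + inter

def residual_df(n, moderator_levels):
    """Sample size minus the number of estimated parameters."""
    k = len(design_row("control", moderator_levels[0], moderator_levels))
    return n - k

health_levels = ["no_experience", "health_care"]
party_levels = ["independent", "democrat", "republican"]

row = design_row("low_trust", "health_care", health_levels)
print(row)  # [1, 1, 0, 1, 1, 0]
print(residual_df(1904, health_levels), residual_df(1904, party_levels))
```

A null interaction, as in Table 4, corresponds to interaction coefficients that are statistically indistinguishable from zero, meaning the treatment effect does not differ by health-care experience.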

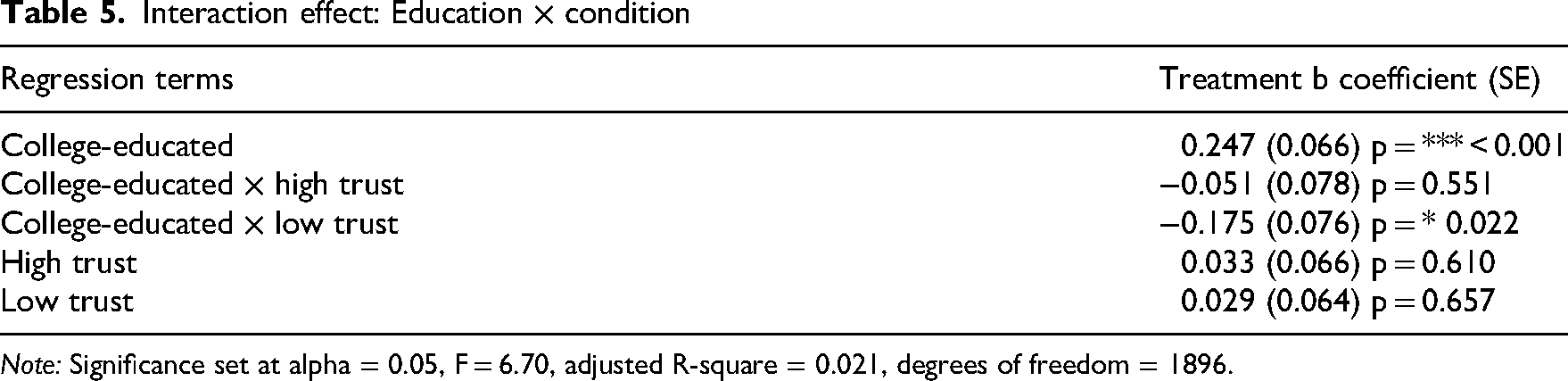

Similarly, college-educated participants reported higher compliance scores than those with a high-school education or less. In this regression, I dummy coded 1 for college education and above (n = 1344) and 0 for high school education or less (n = 555). The college-education × low-trust interaction term was significant at the 0.05 level, indicating partial support for H3b (see Table 5).

Interaction effect: Education × condition.

Note: Significance set at alpha = 0.05, F = 6.70, adjusted R-square = 0.021, degrees of freedom = 1896.

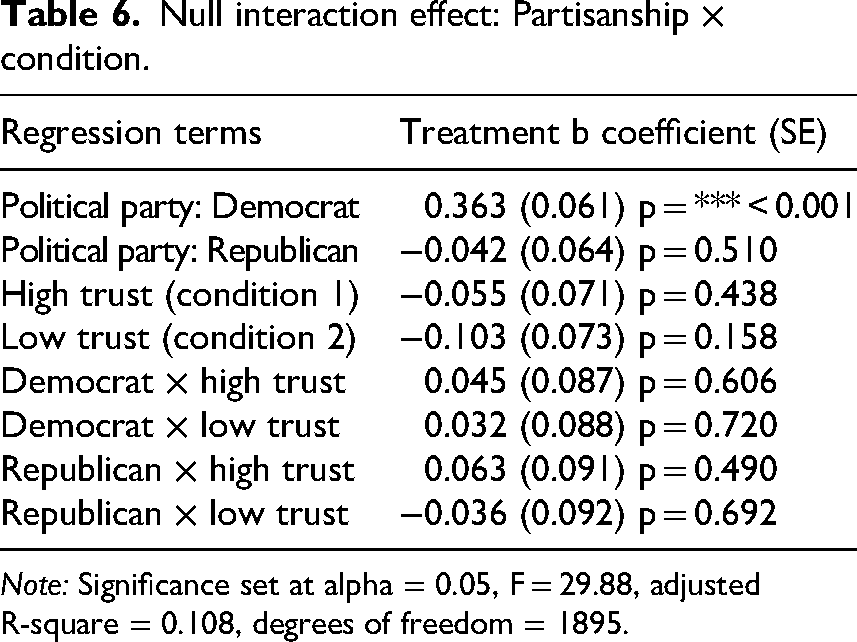
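To make concrete what an interaction term such as college education × low trust captures, the following minimal sketch computes it as a difference between two treatment effects. The records, scores and function names are invented for illustration and are not the study's data or code. The sketch relies on a general property of dummy-coded regression: when the model is saturated, the coefficient on the product term equals this difference in cell-mean differences.

```python
from statistics import mean

# Synthetic, illustrative records: (condition, college dummy, compliance score).
# These values are invented for the sketch and are NOT the study's data.
records = [
    ("control", 0, 3.0), ("control", 0, 3.4),
    ("control", 1, 4.0), ("control", 1, 4.4),
    ("low_trust", 0, 2.9), ("low_trust", 0, 3.1),
    ("low_trust", 1, 3.4), ("low_trust", 1, 3.6),
]


def cell_mean(rows, condition, college):
    """Average compliance score for one condition x education cell."""
    return mean(
        score
        for cond, col, score in rows
        if cond == condition and col == college
    )


def treatment_effect(rows, college):
    """Low-trust mean minus control mean within one education group."""
    return cell_mean(rows, "low_trust", college) - cell_mean(rows, "control", college)


def interaction(rows):
    """Difference between the two education groups' treatment effects.

    In a saturated dummy-coded regression, this equals the coefficient
    on the college x low-trust product term.
    """
    return treatment_effect(rows, 1) - treatment_effect(rows, 0)


if __name__ == "__main__":
    print("Effect, high school or less:", round(treatment_effect(records, 0), 3))
    print("Effect, college and above:", round(treatment_effect(records, 1), 3))
    print("Interaction:", round(interaction(records), 3))
```

In this toy data a negative interaction means that the low-trust article lowered scores more among college-educated respondents, even though that group starts from a higher baseline.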

Partisanship is another important factor (F = 29.88) in shaping compliance with scientific protocols. Participants’ partisanship information was provided by the survey vendor on a nine-point scale capturing both party affiliation (Republican, Democrat, Independent) and strength of partisanship (very strong to not strong). Prior to analysis, this scale was collapsed into three partisan categories, with Independents serving as the baseline group. In support of H3c, self-identified Democrats (n = 842) reported greater compliance likelihood than Independents (n = 402). However, Republicans (n = 660) did not demonstrate lower compliance intentions compared to Independents. This study documents homogeneous treatment effects across party identification (Table 6), which refutes H3e and H3f. All results persist in regressions with covariates, which can be found in the Appendix. Additionally, all raw data and code needed to reproduce these findings are available online at: https://doi.org/10.3886/E208147V1.

Table 6. Null interaction effect: Partisanship × condition.

Note: Significance set at alpha = 0.05, F = 29.88, adjusted R-square = 0.108, degrees of freedom = 1895.

This study set out to examine whether there is a causal relationship between individuals’ trust in scientists and the likelihood that those individuals would comply with scientific guidelines. Reducing participants’ trust in scientists did cause a decrease in compliance likelihood. These results were robust to the inclusion of controls for party affiliation, education level and gender, which helps to rule out the effects of such confounding variables. Although the effect size was small, it was statistically significant after participants read only one article that emphasized scepticism about scientists. Individuals’ consumption of many similar articles in various media (e.g., news articles, television segments, social-media posts) over prolonged periods would be likely to magnify this effect (Lee et al., 2024). Conversely, the high-trust article, with its emphasis on the trustworthiness and expertise of scientists, was ineffective at altering individuals’ trust in scientists and, by extension, their compliance likelihood. This finding is attributable either to ceiling effects on the compliance-likelihood measures or to a lack of trust manipulation among high-trust participants. Regardless, no causal claims can be made in this study about the impact of raising trust in scientists on compliance likelihood. However, this null effect does indicate that it is difficult to substantially raise individuals’ trust in scientists through the consumption of only one high-trust news article.

Based on these results, it appears difficult for scientists and media alike to rely solely on communicating the trustworthiness of scientists to improve individuals’ compliance intentions. However, individuals’ compliance likelihood will decrease when scientists are perceived as untrustworthy, which is documented after individuals consume even one news article sceptical of scientists. This finding parallels results from the science-communication literature demonstrating that exposure to anti-scientist messages during the COVID-19 pandemic adversely affected evaluations of scientists (Hameleers and Van der Meer, 2021; Plohl and Musil, 2023). However, it diverges from Hendriks and Jucks (2020), as this study finds that individuals’ evaluations of scientists did decrease after a low-trust intervention.

These findings extend previous trust research showing that individuals’ trust in others builds over prolonged periods but can erode after even brief exposure to questionable behaviour (Ma et al., 2019). If this effect persists beyond the survey context and across media, it could have adverse consequences for public welfare. It is likely to result in further public hesitancy to follow through with life-saving measures, especially if public trust in scientists continues to decline (Funk, 2017; McCright et al., 2013). Not only does this pattern harm individuals who refuse to adopt these measures, but it also weakens the effectiveness of protocols for others who do comply. For example, the efficacy of a vaccine and the effectiveness of mask-wearing are partially dependent on the percentage of the public that follows such recommendations (Brooks and Butler, 2021; Van Boven et al., 2010). Individuals’ personal compliance decisions have public consequences. This is especially true during a crisis, because some people are more vulnerable to harmful consequences due to low socio-economic status, higher age or other factors (Gurtner and King, 2021; Khan et al., 2022). Declining compliance with recommendations during a pandemic may trigger a downward spiral in which the public withdraws from other safety recommendations and increases scepticism towards future guidelines. The results of this study underscore the urgency of addressing the erosion of trust in scientists to prevent such outcomes.

This study also finds a greater decline in participants’ compliance intentions towards environment-based recommendations (e.g., weather evacuations, recycling) than towards health-based recommendations (e.g., vaccinations, nutritional advice), even though the treatment article focused solely on the health domain. This finding is evidence of lateral attitude change (Glaser et al., 2015). These effects have been documented previously in relation to attitudes towards demographic out-groups (Tausch et al., 2010; Ranganath and Nosek, 2008; Van Laar et al., 2005) and broader social policies (Linne, 2021), yet evidence for their effects in the scientific compliance domain remained limited prior to this study. Not only does this study show a spillover effect of distrust into other science domains, but it also shows that the public is more easily convinced to withdraw compliance from environmental safety recommendations, as indicated by an indirect effect that was larger than the direct effect. This surprising finding warrants additional investigation.

This result is likely to be explained by several factors that lead individuals to perceive environmental threats as less risky than health threats. Individuals’ risk assessment of a threat depends on familiarity, proximity and personal experience with similar threats, among other factors (Carmi and Kimhi, 2015; Cerami et al., 2021; Chu, 2022). Prior to the study, participants may have experienced fewer environmental threats than health threats, leading them to assess environmental threats as less likely to occur and thus less severe. Additionally, individuals are less willing to risk harm to their personal health (Hahn et al., 2000), a belief that may have been cognitively activated when participants responded to prompts about health recommendations (e.g., taking a vaccination) but not environmental recommendations (e.g., recycling). In short, participants probably viewed the consequences of environmental non-compliance as milder than the consequences of non-compliance with health recommendations. Thus, a lower perceived risk of environmental threats may be driving the spillover effect. It is also possible that fewer people tend to follow environmental recommendations in daily life, so there is less social pressure against reporting non-compliance in a survey (Milfont, 2009). Future research should parse why individuals seem more willing to forgo compliance in this area. Regardless of the mechanism, this finding poses challenges for climate scientists and others who seek widespread public commitment to stop climate change. It is more difficult to obtain universal compliance with these recommendations, because the adverse effects of climate change are experienced unequally across geographical regions, socio-economic classes and other demographics. However, climate change poses an existential threat that arguably exceeds and exacerbates the harmful impacts of many health threats worldwide (Gould and Higgs, 2009; Stollberg and Jonas, 2021; Tjaden et al., 2018). Thus, it is critical for environmental scientists to engage in the work of promoting pro-environmental compliance.

Further analysis provides insight into the role of individual-level characteristics in determining compliance intentions. Participants with health-care experience reported higher levels of both trust in scientists and compliance intentions, but their compliance intentions declined similarly to those of their peers. This finding aligns with previous research showing that even a majority of health-care workers during the pandemic reported some level of non-compliance with COVID-19 protection measures (Daba et al., 2023; El-Sokkary et al., 2021). When examining educational attainment as a possible moderator, I found a significant low-trust × college-education interaction term, suggesting that college-educated individuals reading the low-trust article were more easily swayed to non-compliance. These results indicate that individuals, even among seemingly reliably compliant groups, such as those who work in health care, can exhibit declines in compliance as a result of sceptical COVID-19 messages.

This study finds that Independents and Republicans are significantly less likely to comply with recommendations compared to Democrats. However, Republicans and Independents in the high-trust group do exhibit greater compliance intentions than their co-partisans in the low-trust group. This is somewhat counterintuitive, given that partisans are more easily persuaded by like-minded partisan content (Benegal and Scruggs, 2024). It is encouraging for scientists seeking to improve public compliance that individuals may increase compliance intentions even after exposure to messages that appear to contradict their partisan beliefs. As partisan debate enshrouds scientists’ recommendations, opposing partisans will be likely to comply with scientific guidelines at varying levels. However, there is no evidence in this study to suggest that opposing partisans experience a backlash against public-health informational messages even when information contradicts their partisan beliefs (Nyhan, 2021; Wood and Porter, 2019). Scientists will still have to contend with declining baseline levels of trust in efforts to communicate health and safety guidelines effectively.

Conclusion

Using a novel compliance-likelihood scale, this study found that reducing individuals’ trust in scientists does reduce their voluntary compliance intentions; however, it is much more difficult to raise trust in scientists in the context of an experimental survey. There is some evidence of lateral attitude change as manifested through a spillover effect in which individuals’ expressed distrust in COVID-19 scientists led to declines in intended compliance with environmental recommendations. The study also found that self-identified Democrats, college-educated individuals and those with experience in health-care professions report higher overall levels of trust in scientists and compliance with their recommendations. This study's experimental treatment was unable to raise individuals’ trust in scientists or compliance intentions. These findings present a challenging scenario for scientists and science communicators moving forward: how to build individuals’ trust in scientists in the long term while contending with a tendency for trust to erode easily? Scientists involved in mass communication could benefit from employing communication strategies that increase interpersonal trust on a smaller scale, such as between patients and doctors (Chandra et al., 2018). These techniques, such as more frequent and transparent communication in non-technical language, can guide scientists’ broader communication strategies with the media and the public. Additionally, individuals seem more willing to forgo compliance with environmental recommendations, an effect that I expect is driven by milder perceptions of the severity of environmental threats. However, this study also finds encouraging results for science communicators in that Republicans and Independents did not exhibit a significant backlash to the high-trust treatment article.

This study has limitations and opportunities for future research. First, trust in scientists serves as the primary independent variable, which is only one of many factors that affect compliance with scientific guidelines. The treatment articles contained a range of pro- or anti-trust messaging to induce effects, but it is unclear which mechanism led to the observed effects among participants. Future research can build on this work to investigate the specific mediator in the treatment article that resulted in declines in individuals’ trust in scientists and compliance intentions. There was also a lack of trust manipulation in the high-trust condition relative to the control, which suggests that a single treatment article is insufficient to raise trust in scientists. It is unclear whether the null results in the positive direction reflect a lack of effects in the real world or insufficient manipulation in the experiment. However, this null effect is still an empirical finding in that it reinforces existing research showing the difficulty of bolstering trust in scientists compared to the relative ease with which such trust is diminished (Ma et al., 2019). Moreover, the treatment uses the ongoing coronavirus pandemic as its backdrop. There is some risk that this highly politicized issue will not trigger a representative measure of the public's predicted likelihood of compliance with other recommendations. However, the benefits of highlighting COVID-19 in the experiment outweigh such risks. The pandemic has made scientists and officials more focused on securing voluntary compliance with recommendations than before. By using COVID-19 as a backdrop for this experiment, we can predict the behavioural effects of declining public trust in the scientific community and whether such effects are likely to occur during other crises, even if researchers cannot directly measure individual behaviour. Existing research has not investigated such implications, but this study advances scholarship in this area by concluding that declining trust in scientists is likely to have a negative downstream effect on compliance behaviour. Future work can assess the generalizability of these findings in other science contexts, for example during natural disasters, other medical emergencies or non-crisis times. By investigating what factors dictate individual decision-making in these events, we can determine whether this causal relationship persists when the salience of COVID-19 has diminished. Additionally, field research can serve the valuable function of measuring individuals’ adherence to protocols in the real world.

Overall, this research serves the important function of translating measures of public trust in scientists into predicted behavioural outcomes. It is concerning for public welfare that decreasing trust in scientists causes decreasing compliance likelihood, even after individuals consume a single news article with an anti-science slant. Going forward, scientists will be forced to contend with countless obstacles, from public hesitancy to legislative gridlock, that hinder compliance with their recommendations. Distrust appears to be one of those obstacles. Future interventions will need to address public distrust in scientists to design successful campaigns that boost compliance behaviour and, ultimately, protect public welfare.

Footnotes

Acknowledgements

The author acknowledges and appreciates the support of Dr Emily Thorson, Dr Mark Brockway, Dr Simon Weschle and Dr Daniel McDowell of the Syracuse University Department of Political Science in completing this manuscript.

Ethical approval and informed consent statements

This research was approved by the Syracuse University Institutional Review Board (approval number: 21–364). Electronic written consent was secured from all participants, and no identifying information was collected.

Funding

The author received no financial support for the research, authorship, and/or publication of this article.

Declaration of conflicting interests

The author declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data availability statement

All data is fully available without restriction. All anonymized participant data and code required to reproduce these findings are available at https://doi.org/10.3886/E208147V1.

Author biography

Ava Breitbeck is a PhD candidate in the Department of Science Teaching at Syracuse University. She also teaches in the Department of Physics at Syracuse University. Her research interests include the study of science attitudes and behaviours, high-impact STEM education and scientific civic engagement.