Abstract

Purpose

There is limited scholarship on artificial intelligence (AI) in higher education governance, despite the growing prevalence of AI-powered technologies in many fields, including education. However, as the technology is still nascent and has yet to reach its full potential, ideas and arguments abound, championing or cautioning against the use of these technologies.

Design/Approach/Methods

To fill this gap in research on policy networks and AI in British higher education, this article employs network ethnography and discourse analysis to study how ideas about AI-powered technologies in higher education circulate in policy networks in the United Kingdom.

Findings

The findings evidence a policy network showing signs of a heterarchy permeated by neoliberal rationales and populated by policy actors actively promoting artificial intelligence technologies to be used in education.

Originality/Value

This paper builds on existing research by looking at the university and not-for-profit sectors, in addition to the governmental and educational technology sectors. Using network ethnography, this article expands our understanding of the policy actors involved and critically analyzes ideas regarding the use of AI in education.

Keywords

Introduction

Artificial intelligence (AI) has been heralded as a cybernetic panacea able to improve and extend educational provisions. Although there have been significant strides in the development of this technology, which has started to gain space in higher educational management (Fischer et al., 2020; Francisco-Javier et al., 2019), it is still in its infancy and has yet to reach its full potential. Nonetheless, proponents of AI are actively advocating and imagining what an educational system built with AI might entail (e.g., Larsson, 2019; Luckin, 2018). Technology evangelists and their arguments abound, and it seems that they are being heard in universities, conferences, and parliament, with relatively few dissenting voices (Bostrom, 2019). In all cases, AI is treated as an inevitable element of the present and future. However, the public understanding of the policy implications of AI is poor (Feldstein, 2019), while current research on AI in higher education lacks critical reflection and theory (Zawacki-Richter et al., 2019), and scholarship on AI and education governance is limited (Sharma et al., 2020). Thus, Selwyn (2015) has called for critical studies of data and the digital in education.

Beyond the scholarly merits of this technology, the debates are timely and important, with AI touted as being able to change work, the world, and education. UNESCO has endorsed this technology (Pedro et al., 2019), and the European Union's prognosis is that AI will transform education and teaching drastically (Tuomi, 2018). It is altering how educational decisions are made through “new cognitive infrastructures” (Sellar & Gulson, 2019). Beyond the potential perils this technology might bring (Wang, 2020), this is an opportune time to understand what kind of futures policy actors envision, as the technology is still in its infancy. In fact, digitalization has been argued to be a hegemonic discourse within education (Vivitsou, 2019). According to Zdenek (2003), discourses around AI not only describe these technologies but also create them. Thus, studying the circulating ideas regarding AI in education will help uncover critical insights into and potential disadvantages of this technology.

In fact, Williamson (2019) has started exploring the policy networks behind data infrastructures of higher education in the United Kingdom. Building on their work, this research asks the question: How does AI policy move and transform in the United Kingdom through the influence of different actors? As part of this investigation, I look at who is part of the policy network, what ideas circulate, and what policy actors are trying to achieve. I also look at the practices of these actors and what strategies they are employing to achieve their goals. In order to answer the main research question of how ideas circulate, I conduct a network ethnography. Network ethnography helps uncover the people, organizations, and ideas involved in creating AI futures in education. I also employ critical discourse analysis to scrutinize the ideas employed by this network.

The nascent and growing policy field of AI is showing signs of a heterarchy in which academics have a central role. AI is positioned as a solution to educational problems, and the policy documents are permeated by neoliberal rationales advancing posthuman futures and technology to fulfill the efficiency and productivity needs of the neoliberal paradigm. Business and knowledge production are deeply entangled. Academics are simultaneously entrepreneurs and business people. Indeed, universities themselves serve as incubators and accelerators of educational technology companies, while businesses also act as knowledge producers.

Artificial intelligence, policy, and education

Since the 1950s, when AI was defined (McCarthy et al., 1955) and Turing proposed his test (Russell et al., 2016), computational power has expanded exponentially at lower costs; similarly, technologies and definitions have expanded as well. There is considerable debate among various disciplines regarding whether this technology could be called intelligent, whether it is artificial, and whether computational thinking would be a better term (Russell et al., 2016). Popenici and Kerr (2017), following a thorough review of the literature, define AI in the context of education as “computing systems that are able to engage in human-like processes such as learning, adapting, synthesizing, self-correction and use of data for complex processing tasks” (p. 2). Beyond business uses, this technology is gaining space in the public sphere as well. There are applications of AI in the military (Johnson, 2019), such as highly contested autonomous weapon systems (Jones, 2018), intelligence and surveillance (Landon-Murray, 2016), and the judicial system (Nissan, 2013). AI has also been implemented in health care (Habli et al., 2020; Ho et al., 2020) and counseling (Fulmer, 2019), among other sectors. AI technologies are also used in bureaucracy and governance (Bullock, 2019) and sustainable development (Goralski & Tan, 2020).

There are applications of AI in education as well, though the technology has yet to reach its full potential. Although Dede (1992) was pessimistic about education failing to take advantage of the new intelligence tools, since the 1990s there has been a boom in AI applications in higher education and in research on the topic. Fischer et al.'s (2020) synthetic review shows that the availability of, and the capability to process, big data affords micro-, meso-, and macro-level applications in educational systems, including student-, department-, or university-level uses. Hernandez-de-Menendez et al.'s (2020) state-of-the-art review of educational technology in engineering education reveals that intelligent tutoring systems (that is, systems that support learning by interpreting student responses and learning) are the most widely used technology. Such systems can measure how well a student has acquired a skill and personalize educational content accordingly (Hernandez-de-Menendez et al., 2020). Learning analytics is part of EdTech companies’ business models, but it is not yet fully adopted and developed due to questions about data and “data sovereignty” (Renz & Hilbig, 2020). Intelligent tutoring systems can identify a student's learning style and personalize the learning content accordingly (Bajaj & Sharma, 2018) and predict student outcomes (Xiao & Yi, 2020). They can also use deep neural networks as part of data mining and predictive analysis (Kim & Kim, 2020) to accurately detect potentially failing students as early as three weeks into a semester (Gray & Perkins, 2019), even through semi-supervised machine learning models (Livieris et al., 2019). Spector et al. (2016) show that machine learning technology can enhance, and is already enhancing, formative assessment to help individual teachers. When coupled with AI, the same technology can improve curriculum design (Ball et al., 2019) and help create educational applications for phones and tablets (Sánchez-Morales et al., 2020).

Although these technologies promise immense benefits to learners and teachers alike, criticism and calls for more transparency are growing. At the intersection of higher education and AI, lively research is burgeoning (Francisco-Javier et al., 2019). Although data mining tools for the prediction of academic success proliferate, these technologies are not yet accessible to non-tech-savvy educators (Alyahyan & Düştegör, 2020). Educators and learning theories are missing from AI in education, and the ethical questions are seldom investigated (Zawacki-Richter et al., 2019). Academics are calling for increased transparency of open learner modeling (OLM), so that every step of machine learning and AI programs can be scrutinized and questioned (Conati et al., 2018), especially when AI systems are making decisions (Desouza et al., 2020). Wang (2020) argues that there are considerable perils in AI implementation in educational decision-making: It could exacerbate existing biases, algorithmic decision-making could clash with our morals (of fairness, equity, etc.), and careless handling of data could have long-lasting negative consequences. Feldstein (2019) points out the actuality and possibility of political repression in AI, highlighting that this technology can enhance already existing technologies of surveillance, among others, in unforeseen ways. Feldstein also warns of a worrying phenomenon accompanying this, namely, the lack of public understanding of the policy implications of AI.

The critical analysis of digital data and education (Selwyn, 2015), and the importance of paying attention to the discourse around AI, points to the need for detailed research on policy and policymaking. The prominence of data in education coincides with a shift to governance in the United Kingdom (Ozga, 2009). Data are a “policy instrument” (Ozga, 2009, p. 150) that the government uses for control, and traditional bureaucracies are being replaced by networks (Ozga, 2012), where the use of data as a policy instrument is constantly negotiated. The proliferation of data and data platforms in education necessitates the study of “computational educational policy” (Gulson & Webb, 2017). Policies focused on prediction, transparency, and data are enabling AI to proliferate, reinforcing these policies in turn (Gulson & Webb, 2017). Voices are pushing for the adoption of AI in the public sector and in education (Desouza et al., 2020), going into specific detail about how this might be achieved (Chatterjee & Bhattacharjee, 2020). Writing about AI and policy, Webb et al. (2020) argue that, in fact, “most educational futures are already delineated, and machinic expressions of time are the chronologies, habits, and memories that the educated subject inhabits rather than produces” (p. 1). In this future building, digital data are at the center of education governance (Rowe, 2019; Williamson, 2016); this is not merely a question of data infrastructure, but a policy as well (Williamson, 2018).

There is a common theoretical element of current scholarship on AI and policy networks: They both use infrastructure studies (Gulson & Sellar, 2019; Williamson, 2019). Williamson (2019) argues that using infrastructure studies as a lens allows for uncovering the commercial connections in policymaking, as data infrastructure projects have become a prominent part of educational reform. In fact, data infrastructures have such a powerful influence that, according to Gulson and Sellar (2019), they can reshape power relations in policy and create new private–public partnerships. Education governance is changing, and technical standards have a new role in education policy data infrastructures (Gulson & Sellar, 2019). Although Gulson and Sellar (2019) focus on Australia, Knox (2020) notes that, unlike previous conceptions, AI in China is not a unified field, but one in which public and private sectors and international companies are heavily involved, similar to the heterarchies described by Ball and Junemann (2012) that exist in the United Kingdom and by Ball et al. (2017) in respect to global education networks.

Although there has been research on data and educational policy, there has only been a limited number of studies on AI and educational policy, with even fewer set in the United Kingdom. Sharma et al.'s review (2020) notes that scholarship on AI and governance is lacking and that there is potential for further research. Gulson and Witzenberger (2020) argue, in the context of Australia, that education technology is an important policy space in educational governance as AI is gaining importance. Although the United Kingdom is advanced in educational data production compared to European countries (Ozga, 2009), there has been limited scholarship on AI in educational policy. To add to the scholarship on AI and educational policy in the United Kingdom, this article builds on and departs from Williamson's (2019) article on policy networks and data infrastructures in higher education in the United Kingdom. This research expands on the policy networks that Williamson (2019) studies by looking at universities and not-for-profit organizations, in addition to the governmental and educational technology companies that Williamson scrutinizes. This article departs from his research by adopting a network ethnography approach, which allows for an expanded understanding of the policy actors involved and a critical analysis of the ideas employed.

Methods

To study the policy networks and the ideas for AI in higher education, I employed a qualitative method (Bryman & Burgess, 1994), network ethnography, primarily using the Internet. As policymaking is shifting and “new global spatialities and new governance structures in education” (Hogan, 2016, p. 390) appear, this research embraced the Internet as a locus of study (Wittel, 2000). Specifically, network ethnography uses networks as an analytical tool to uncover how policy communities take shape and understand the relationships involved (Ball, 2012, 2016; Ball et al., 2017), digging deeper to look at the nuances of interactions by investigating electronic communications, among other sources (Howard, 2002). This network ethnography used a hybrid method of cyber ethnography (Hogan, 2016), multisited ethnography, and social network analysis to capture policymaking as a system as the local and global are being reconfigured in the current capitalist system (Marcus, 1995).

Thus, this network ethnography started by identifying prominent voices arguing for and against AI in higher education in the United Kingdom, inspired by participation in educational technology conferences before the research started. Data were collected from online sources between April and July 2020 by building a network of the people and organizations identified in initial searches; examining organization-commissioned reports, their collaborators, and the people and organizations mentioned in these reports; and identifying technologies at the micro-, meso-, and macro-levels (Fischer et al., 2020). As global education policy networks involve social media (Adhikary et al., 2018), data were collected from relevant websites, Facebook and LinkedIn pages, Twitter posts and replies, blogs, and YouTube channels. This snowball sampling (von der Fehr et al., 2018) via the Internet (Baltar & Brunet, 2012) included think tanks, universities and academics, companies, and governmental organizations. To make sure that the sampling did not exclude dissenting voices, a second sampling was conducted to collect dissenting voices and map governmental organizations and frameworks independent from the previous two sampling strategies. The scope was spatially limited to people, organizations, and companies operating in the United Kingdom during 2015–2020.
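The snowball procedure described above can be pictured as a breadth-first expansion from a set of seed actors. The following is only an illustrative sketch, not the procedure used in this study: the actor names, the `mentions` mapping, and the `max_waves` cut-off are all hypothetical and stand in for the manual reading of reports and web pages.

```python
from collections import deque


def snowball(seeds, mentions, max_waves):
    """Expand a sample wave by wave from seed actors.

    seeds     -- actors identified in the initial searches (hypothetical)
    mentions  -- dict mapping an actor to the actors it names in its
                 reports or pages (hypothetical stand-in for manual reading)
    max_waves -- how many waves of referrals to follow
    Returns a dict mapping each sampled actor to the wave in which it was found.
    """
    found = {seed: 0 for seed in seeds}
    queue = deque(seeds)
    while queue:
        actor = queue.popleft()
        wave = found[actor]
        if wave == max_waves:
            continue  # do not follow referrals beyond the last wave
        for other in mentions.get(actor, ()):
            if other not in found:
                found[other] = wave + 1
                queue.append(other)
    return found


# Hypothetical toy data, not drawn from the study.
toy_mentions = {
    "seed_academic": ["think_tank", "edtech_firm"],
    "think_tank": ["ministry"],
    "ministry": ["council"],
}
sample = snowball(["seed_academic"], toy_mentions, max_waves=2)
print(sample)
```

With two waves, the toy sample stops at `ministry` and never reaches `council`, which illustrates why a separate sampling pass for dissenting or otherwise unconnected voices can be useful.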

Overall, 67 actors were identified and studied. Systematic searches were conducted on their websites to capture data about AI, partnerships, board members and executive teams, and social media links. On blogs, posts related to AI were captured; on LinkedIn, I captured CVs and company descriptions; from Facebook, I downloaded all posts with visual media; and from Twitter, due to the limitations of the API server, I could only download 3000 posts from each account. 1 All data, from reports to social media posts, are publicly available and not restricted by paywalls or privacy settings. Twitter and Facebook posts are publicly available, even to unregistered users. 2

As only publicly available data were collected, other documents—for example, internal memos and notes from meetings—would enrich the analysis and strengthen the claims. Additionally, the data are a cross section of the current network and thus lack historicity. Interviews would potentially enhance our understanding of what exists “behind the scenes.” This research only collected pieces of traditional media if a network actor published them, and thus a more robust collection of print media could enrich the analysis.

To analyze how ideas of AI in higher education circulate, I used methods of network analysis (Gruzd & Haythornthwaite, 2011; Hanneman & Riddle, 2011; Hollstein, 2011; Knoke, 2011) and critical discourse analysis (Fairclough, 2001; Howarth, 2010; Jäger, 2001; Meyer, 2001). In addition, analysis of posts by cybercommunities was employed, particularly on Twitter, Facebook, Instagram, and LinkedIn (Gruzd & Haythornthwaite, 2011). In analyzing the networks, geodesic distance, clustering, number of connections, and betweenness were used as empirical evidence for the influence of policy actors (Hanneman & Riddle, 2011). Betweenness, a measure of the relative power of an actor in a network, was calculated with the Brandes algorithm using the Stanford Network Analysis Platform in NVivo (NVivo, 2020b). The sociograms (network maps) used in this research were laid out with the Yifan Hu algorithm (Hu, 2005) and created in the open-source program Gephi. All sociograms in this research used spring embedders, showing local connections (Krempel, 2011).
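To make the betweenness measure concrete, the sketch below implements the Brandes approach for an unweighted, undirected network in plain Python. It is an illustration only and not the NVivo implementation used in this research; the toy network and its actor names are hypothetical. The breadth-first search inside it also yields the geodesic (shortest-path) distances mentioned above.

```python
from collections import deque


def betweenness(adj):
    """Unnormalized betweenness for an unweighted, undirected graph.

    adj -- dict mapping each node to a list of its neighbours.
    """
    score = {v: 0.0 for v in adj}
    for source in adj:
        order = []                      # nodes in order of discovery
        preds = {v: [] for v in adj}    # predecessors on shortest paths
        sigma = {v: 0 for v in adj}     # number of shortest paths from source
        dist = {v: -1 for v in adj}     # geodesic distance from source
        sigma[source] = 1
        dist[source] = 0
        queue = deque([source])
        while queue:
            v = queue.popleft()
            order.append(v)
            for w in adj[v]:
                if dist[w] < 0:         # first time w is reached
                    dist[w] = dist[v] + 1
                    queue.append(w)
                if dist[w] == dist[v] + 1:
                    sigma[w] += sigma[v]
                    preds[w].append(v)
        # Accumulate dependencies from the farthest nodes back to the source.
        delta = {v: 0.0 for v in adj}
        while order:
            w = order.pop()
            for v in preds[w]:
                delta[v] += sigma[v] / sigma[w] * (1 + delta[w])
            if w != source:
                score[w] += delta[w]
    # Each undirected pair was counted from both endpoints.
    return {v: total / 2 for v, total in score.items()}


# Hypothetical toy network, not drawn from the study.
toy_network = {
    "academic_hub": ["ministry", "edtech_firm", "think_tank"],
    "ministry": ["academic_hub"],
    "edtech_firm": ["academic_hub"],
    "think_tank": ["academic_hub", "charity"],
    "charity": ["think_tank"],
}
scores = betweenness(toy_network)
print(scores)
```

In this toy network the hub lies on five of the shortest paths between other pairs and the think tank on three, so they receive scores of 5.0 and 3.0, while the remaining actors score 0.0.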

All data were read and manually coded except for social media posts. To ensure nuanced coding, all coded data were recoded with more fine-grained codes. Close attention was paid primarily to the content, but also to the structure of discourse (Jäger, 2001) and to the visual images (Fairclough, 2001). Automated coding was only used on social media posts on Facebook and Twitter using NVivo. There were two kinds of automated coding: thematic and sentiment analyses. Thematic coding analyzes the posts and detects significant noun phrases to identify the most frequently occurring themes. Sentiment analysis looks at words relating to sentiment expressions and codes to positive, moderately positive, very positive, negative, moderately negative, very negative, and neutral sentiments. Analytical tools such as visualization (hierarchy charts, word clouds, word trees) and matrices (of codes, sentiments, themes) were employed as well. There are some limitations to the automatic coding using NVivo. Sentiment analysis looks for sentiment expressions and does not measure the documents on a Likert scale. Additionally, it cannot detect sarcasm, double negatives, slang, dialect variations, idioms, or ambiguity (NVivo, 2020a). Manual coding also has limitations insofar as it was done by a single researcher. Two or more people coding the same texts could ensure more robust coding, especially if validated through Cohen's Kappa coefficient, a coefficient of interjudge agreement (Cohen, 1960). The next section outlines the heterarchical network and its characteristics of self-organization, vested interests, knowledge sharing, and the neoliberal ideas that posit AI as an efficient solution.
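As an illustration of the interjudge agreement check mentioned above, the following sketch computes Cohen's kappa for two coders as (observed agreement minus chance agreement) divided by (1 minus chance agreement). No second coder was used in this research, so the code labels and both coders' decisions below are entirely hypothetical.

```python
from collections import Counter


def cohens_kappa(coder_a, coder_b):
    """Cohen's (1960) kappa for two coders labelling the same items."""
    if len(coder_a) != len(coder_b) or not coder_a:
        raise ValueError("both coders must label the same non-empty set of items")
    n = len(coder_a)
    # Proportion of items on which the two coders agree.
    observed = sum(a == b for a, b in zip(coder_a, coder_b)) / n
    # Agreement expected by chance, from each coder's marginal frequencies.
    freq_a = Counter(coder_a)
    freq_b = Counter(coder_b)
    expected = sum(freq_a[code] * freq_b[code] for code in freq_a) / (n * n)
    if expected == 1:
        return 1.0  # both coders used a single identical code throughout
    return (observed - expected) / (1 - expected)


# Hypothetical codes assigned to four text excerpts by two coders.
coder_1 = ["efficiency", "efficiency", "ethics", "ethics"]
coder_2 = ["efficiency", "ethics", "ethics", "ethics"]
kappa = cohens_kappa(coder_1, coder_2)
print(round(kappa, 2))
```

Here the coders agree on three of four excerpts (0.75), chance agreement is 0.5, and kappa is therefore 0.5.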

Spinning of(f) the network: Heterarchical policymaking centering academics and universities

The policy network of AI in higher education in the United Kingdom shows signs of a heterarchy. Heterarchy is a form of governance that involves “self-organized steering of multiple agencies, institutions, and systems which are operationally autonomous from one another yet structurally coupled due to their mutual interdependence” (Jessop, 1998, p. 29). Besides governmental bodies, it is populated by universities, academics, and for- and not-for-profit organizations. It is not surprising that universities and academics are present in the networks; however, their involvement goes beyond simply that of (future) users of artificially intelligent technologies. In fact, they are involved with all actors of the network in various functions: as knowledge producers and “legitimizers,” as tech incubators, and as influencers of policy and public opinion. Additionally, business permeates all levels that are often associated with academics. Conferences serve as primary platforms for sharing resources and connecting actors in the network, creating a burgeoning heterarchy of self-organizing actors connected through mutual interests, resource sharing, and dialogue.

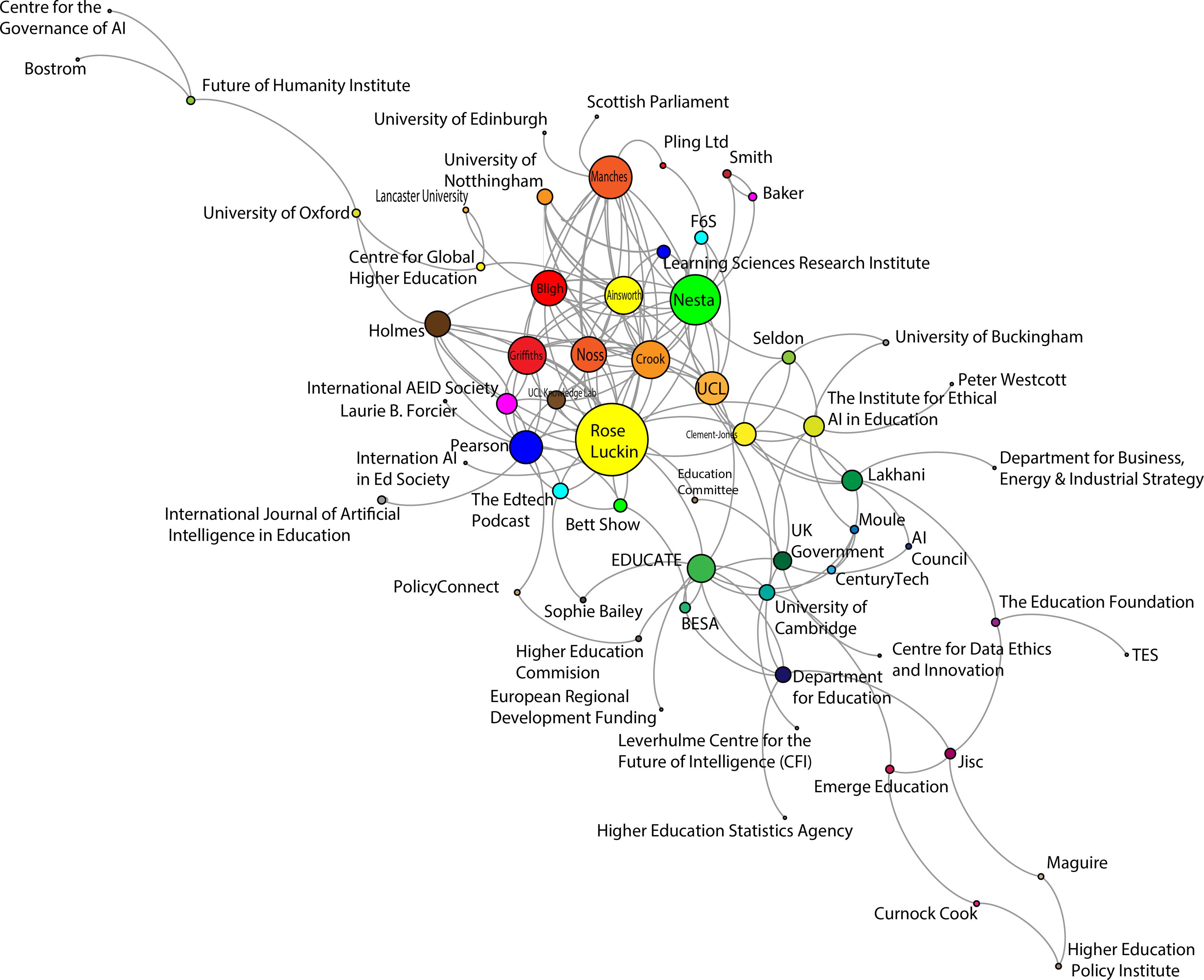

The policy actors involved in influencing how AI is envisioned are not limited to politicians and governmental bodies (see Figure 1); in fact, governmental bodies only make up a fraction of the network. In addition to the 10 governmental bodies, this research revealed that there were at least four companies, two conferences, 23 organizations (non- and for-profit, charities, research centers), 20 people (academics, researchers, entrepreneurs and so on), and seven universities active in the higher education policy space of AI.

Figure 1. Policy network of AI in higher education in the United Kingdom.

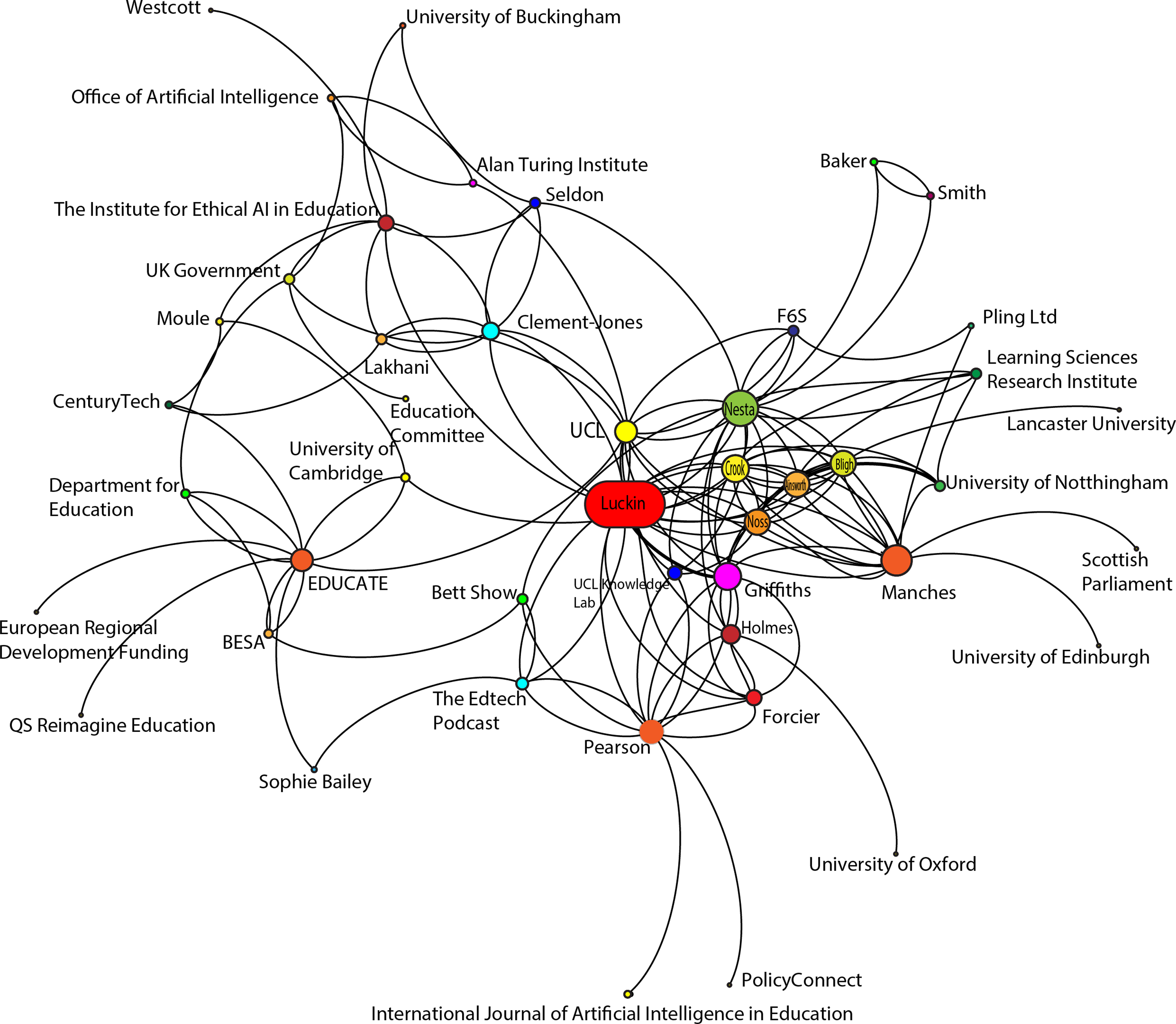

Figure 2. Immediate network of Rose Luckin.

Universities and academics serve as very important elements of the network. Out of the 67 nodes identified, 22 (about 32.8%) were academics, universities, or university-affiliated organizations (such as research centers or tech incubators in universities). Moreover, when the nodes are ordered by the number of connections, of the top three of the network, approximately two-thirds are universities, academics, or university-affiliated organizations. This means that this category has the most direct connections, which is a proxy for influence. It is important to note that some of those connections are understandably between academics, but there are also plenty of connections to government, businesses, and other organizations. In the network, one node stands out as the most influential because it has both the highest betweenness and the highest number of connections: Rose Luckin, a professor at University College London (UCL).
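The two descriptive measures used in this paragraph, the share of a category among all nodes and the ordering of nodes by number of connections, are simple to compute. The sketch below uses the counts reported above (22 of 67 nodes) together with a hypothetical toy edge list; it is not the study's data or tooling.

```python
def degree_ranking(edges):
    """Rank nodes by number of direct connections (highest first).

    edges -- iterable of (node, node) pairs for an undirected network.
    Ties are broken alphabetically so the ordering is reproducible.
    """
    degree = {}
    for a, b in edges:
        degree[a] = degree.get(a, 0) + 1
        degree[b] = degree.get(b, 0) + 1
    return sorted(degree.items(), key=lambda item: (-item[1], item[0]))


# Share of academic, university, and university-affiliated nodes,
# using the counts reported in the text.
academic_share = 22 / 67
print(f"{academic_share:.1%}")

# Hypothetical toy edge list, not drawn from the study.
toy_edges = [
    ("academic_hub", "ministry"),
    ("academic_hub", "edtech_firm"),
    ("academic_hub", "think_tank"),
    ("think_tank", "charity"),
]
ranking = degree_ranking(toy_edges)
print(ranking)
```

The number of direct connections is only a proxy for influence, which is why it is read alongside betweenness when a single most influential node is identified.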

Governmental and self-organization

Signs of a heterarchical policy network are visible in how the governmental and independent actors organize policy work via “self-organizing interpersonal networks, negotiated inter-organizational co-ordination, and decentered, context-mediated inter-systemic steering” (Jessop, 1998, p. 29) and “interorganizational linkages” (Rhodes, 1997, p. 51). Beyond the Department for Education, which is involved in all education-related policies, the policy around AI is generally divided among multiple ministries, namely, the Department for Business, Energy, and Industrial Strategy and the Department for Digital, Culture, Media, and Sport, which created an interdepartmental organization for policy work: the Office for Artificial Intelligence. The government also created a Centre for Data Ethics and Innovation and an AI Council tasked with “[p]roviding an open dialogue and exchange of ideas between industry, academia and government.” 3

The AI Council is a good example of the heterarchical nature of the network, where actors from the public and private sectors coordinate and steer policy. It is a consultative body of the government with members from businesses such as DeepMind, owned by Google's parent company Alphabet Inc., and Ocado, an online grocery store. 4 Similar to data infrastructures in higher education (Williamson, 2019), there are synergies between commercial and governmental actors. Self-organization of independent actors (Jessop, 1998) and network governance (Rhodes, 1997) also characterize the AI Council and how it operates within the government. In the document titled AI Council Terms of Reference, 5 a section entitled “Ways of Working” describes how the council draws on its members and the wider AI community to “ensure the government is well placed to respond” and “maintain a dialogue with the Office for AI throughout any projects including likely findings, recommendations or advice.” 6 The council is an intergovernmental organization (Rhodes, 1997) characterized by a strong private–public partnership (Robertson & Verger, 2012). The council acts as a coordinating platform between multiple governmental organizations and private sector players. In fact, in their first meeting, the council members discussed how they could support the work of the government. 7

In addition to the government working to coordinate the network, the actors themselves are organizing to influence policy (Jessop, 2002). The Institute for Ethical Artificial Intelligence in Education (IEAIE) is a prime example of the advisory function of academics transforming into a policy influencing one imbued with business interests. Housed in the University of Buckingham, the IEAIE was founded by Sir Anthony Seldon, Rose Luckin, Priya Lakhani, and Baron Timothy Francis Clement-Jones. 8 Seldon and Luckin are professors at the University of Buckingham and UCL, respectively, Clement-Jones is a member of the House of Lords, and Lakhani is an entrepreneur and the founder of CenturyTech, an educational technology company. Lakhani is also a member of the AI Council discussed earlier. The institute “will work to develop frameworks and mechanisms to help ensure that the use of AI across education is designed and deployed ethically. We are achieving this by engaging with a wide range of stakeholders to develop a code of practice that protects the vulnerable and disadvantaged and maximizes the benefits of AI across society.” 9

This highlights the coordination characteristic of heterarchies (Jessop, 2002) that the IEAIE's interim report advocates for: “[C]onversation has to be opened up to learners, educators, policymakers, software developers, academics, professional bodies, advocacy groups, and industry. […] Only a shared vision of ethical AI in Education will have sufficient gravitas to enable learners to experience optimal benefits from AI in Education whilst being safeguarded against the risks posed.” 10

This call is echoed by other reports, which are not only aimed at the government, such as Educ-AI-tion Rebooted?, a report by Nesta, an innovation charity in the UK. 11 Policy mobilization involves both governmental and private sector actors, who share common interests and goals.

Vested interests: Direct and indirect business connections

In addition to independent actors’ self-organizing, mutual dependence is a characteristic of heterarchies (Jessop, 1998, 2002), and organizations in policy networks are resource-dependent on one another. Rhodes (1997) adds that the actors in heterarchies are not only resource-dependent but share common interests. They recognize that cooperation is needed to successfully pursue these common interests and are thus bound by future goals (Börzel, 1998).

From one perspective, governmental bodies show a vested interest in artificial intelligence technologies and data. A stated and reiterated goal of the United Kingdom is to be at the forefront of AI and data technologies in the world. 12 Governmental bodies are also expressly interested in education, beyond the societal good it brings to the United Kingdom. In fact, the International Education Strategy: Global potential, global growth policy paper states the desire to maintain the status of the country's educational institutions as world-renowned and prestigious and keep the “UK brand […] one that is earmarked by quality, excellence and pioneering thought leadership.” 13 Heterarchical governance is explicit in that the government is taking a step back in driving policy, giving it over to “the sector.” 14 Education is thought of as a product for global export driving financial and diplomatic benefits. 15

Additionally, the United Kingdom government is expressly invested in educational technology, as it would contribute to its profits from “educational exports.” The government is supportive of technologies that “reduce workloads, increase efficiencies, engage students and communities, and provide tools to support excellent teaching and raise student attainment.” 16 Similarly, in terms of international education strategy, the government is entangled with the business sector, 17 which is further encouraged by reports of network actors such as Nesta, an innovation charity. 18

The AI Council has 22 members in addition to officials who take part in the council's meetings from the Office for AI, the Department for Digital, Culture, Media and Sport, and the Department for Business, Energy, and Industrial Strategy. 19 Of the 22 members, ten are academics or researchers while eight represent businesses such as DeepMind (owned by Alphabet Inc., the parent company of Google), Microsoft, and Mastercard. The presence of these companies in the AI Council is indicative of how businesses, particularly multinational companies, have an impact on policy. Additionally, a philanthropic organization, Luminate, and an investment organization, Entrepreneur First, are also present.

The pervasiveness of business interests is highlighted by the only philanthropic organization in the council. Luminate was founded by the Omidyar Group, the philanthropic organization of the founder of eBay, Pierre Omidyar. 20 Luminate “fund[s] and support[s] non-profit and for-profit organizations and advocate[s] for policies and actions that can drive change.” 21 Junemann et al. (2016) point out that in a different context (global education policy), the Omidyar Group, along with other philanthropic organizations, operates with the idea of philanthrocapitalism, that is, “philanthropy to become more like for-profit markets” with “investors” and “social returns” (Ramdas et al., 2011). This further underscores the business interests present at the AI Council, where the only philanthropy present is operating with business rationales.

Looking beyond the council, business interest is also present among academics in the network. “Research spin-offs” are the primary way academics become entrepreneurs and business people in the network. Research spin-offs are companies that have sprung up from research done at universities, which are often publicly funded. These companies have proliferated in recent decades (Lockett et al., 2005). In the network, Andrew Manches set up two companies (Pling Ltd. and PlayTalkLearn) that translate his early years education research into Internet of Things products. Pling is funded through Pitch@Palace, 22 which was also one of the funders of CenturyTech. 23 Manches also advised the Scottish Parliament in 2019, via the Education and Skills Committee's inquiry into STEM in early years education. 24 Universities UK, an advocacy organization for universities in the United Kingdom, actively encourages businesses to collaborate with universities, which are “innovators and investors in places and people” and able to support businesses with a skilled workforce and ongoing professional development. 25

Education technology and its interests also permeate the network. In particular, a single company, Pearson, is a prominent and well-connected actor. Pearson is an international education company that has offices in the United Kingdom, and it specializes in educational technology. Pearson is the largest educational technology company in the world (Ball et al., 2017), and is actively working to affect policy (Hogan cited in Ball et al., 2017). Williamson (2019) noted that Pearson is an important actor in the policy network of data infrastructures in the United Kingdom. Pearson, not surprisingly, is also important in the policy network for AI. The company has business interests in educational uses of AI, as exemplified by AIDA, its AI-powered mathematics teaching application, and one of the ways it influences policy is through knowledge creation via the commissioning of reports on AI. Intelligence Unleashed, 26 published in 2016, is a research report commissioned by Pearson and authored by Rose Luckin, Wayne Holmes, Mark Griffiths, and Laurie B. Forcier.

In addition to commissioning academics, businesses, particularly Pearson, are directly involved in knowledge creation in the network. The International Journal of Artificial Intelligence in Education is a journal of the International Artificial Intelligence in Education Society (IAIED), which is headed by Rose Luckin, the hub of the network. The journal has a long list of editors, all but three of whom are affiliated with universities. Of those three, two list Pearson as their affiliation, showing that the educational technology company has a strong foothold in the academic journal. Additionally, Pearson's chair, Sir David Melville, is a board member of the think tank Policy Connect, which houses the Higher Education Commission. The think tank's report on AI-powered tools, From Bricks to Clicks: The Potential of Data and Analytics in Higher Education, echoes the government's neoliberal ideas of market advantage and profitability. The following passage illustrates that profit-oriented thinking is present in the network: “The HE sector has always been a data-rich sector, and universities generate and use enormous volumes of data each day. However, the sector has not yet capitalized on the enormous opportunities presented by the data revolution, and is lagging behind other sectors in this area.” 27

Tied to Pearson through multiple relations is Rose Luckin, the hub of the network (see Figure 2). Luckin is active via multiple avenues, connecting educational technology, research and universities, and government. Luckin was appointed an advisor to the UK Parliament's Education Committee's Fourth Industrial Revolution inquiry 28 and advised on the UK Government's Industrial Strategy Green Paper on AI. 29 Luckin's functions in the network may also reveal how CenturyTech and Priya Lakhani became prominent voices affecting policy vis-à-vis AI.

CenturyTech is a company that appears in multiple locations of the network. Notably, CenturyTech, via Priya Lakhani, is on the AI Council even though, compared to the other companies, it is a relatively small and new organization (incorporated in 2013, with 83 employees in 2019 30 ). In addition to the AI Council, Priya Lakhani is involved with the Institute for Ethical AI in Education. CenturyTech's product, Century, is a “tried and tested intelligent intervention tool that combines learning science, AI and neuroscience” that helps teachers “[s]upercharge [their] teaching.” 31 As of 2018, Century had been used in multiple countries, for purposes ranging from teaching Syrian refugees to aiding professional development. 32 Documents circulating in the network also highlight and praise CenturyTech. 33

Priya Lakhani's CenturyTech was part of one of the cohorts of EDUCATE, 34 the “UK's leading research accelerator program for education technology.” 35 Rose Luckin founded EDUCATE in 2017 in partnership with F6S (a start-up incubator) and Nesta, 36 aiming to create and employ the best research in education technology. The business interest that springs from this involvement in educational technology becomes clear in the way that alumni of the EDUCATE program are promoted in reports (e.g., CenturyTech in Nesta's report 37 ) and are invited to influence policy (e.g., Priya Lakhani, founder of CenturyTech, is a co-founder of the Institute for Ethical AI in Education with Rose Luckin). Business interest does not stop there, as Pearson is also indirectly involved with EDUCATE. Rose Luckin was commissioned by Pearson to co-write a report (Intelligence Unleashed 38 ), and Pearson is collaborating on Sophie Bailey's EdTech podcast (the Pearson Future Tech for Education Podcast Series). 39 Sophie Bailey is also an advisor and mentor at Rose Luckin's EDUCATE. 40

Mutual interests also manifest indirectly in career mobility and alumni hiring in the network. In particular, there is mobility between not-for-profit and for-profit organizations, further highlighting converging interests. Larner and Laurie (2010) argue that both policymakers and “traveling technocrats” are important for policy. Priya Lakhani studied at UCL, 41 where Rose Luckin teaches, 42 her company went through Rose Luckin's EDUCATE program, 43 and the two recently co-founded the Institute for Ethical AI in Education. 44 The Institute's executive lead, Tom Moule, 45 used to work at CenturyTech. 46 Another example of career mobility, and particularly of movement between not-for-profit and for-profit organizations, is Mark Griffiths. Griffiths supported the writing of Nesta's Decoding Learning report, which was co-written by Rose Luckin. 47 Griffiths then went on to work for Pearson, 48 co-authored the Intelligence Unleashed report with Luckin, 49 and now works at The Queen Rania Foundation, 50 a Jordanian nongovernmental organization aiming to improve education.

What emerges is an intricate web of relations and interests that closely connects government, universities, academics, and businesses. The line between academics and business people is frequently blurred, mirroring the “institutional fuzziness” (Jessop, 2002, p. 229) of the heterarchical networks of policy for AI in higher education. Business and knowledge production are deeply entangled. Academics are simultaneously entrepreneurs and business people, while universities themselves serve as incubators and accelerators of educational technology companies and businesses act as knowledge producers.

Dialogue through conferences and reports

Jessop (2002) argues that because heterarchies are resource interdependent and have mutual interests, actors take part in the coordination of activities and resource-sharing. “The key to [heterarchy's] success is continued commitment to dialogue to generate and exchange more information (thereby reducing, without ever eliminating, the problem of bounded rationality)” (Jessop, 2002, p. 229). Börzel (1998) concurs that in pursuit of shared interests, the actors also share resources.

As discussed earlier, the AI Council is one of the prominent ways that dialogue is pursued in the network. In fact, one of the roles of the council is to “[p]rovide an open dialogue and exchange of ideas between industry, academia and government.” 51 In addition to the council, conferences are used as platforms for dialogue and networking, similar to how trade shows are becoming an important policy space (Gulson & Witzenberger, 2020). Networking, especially interpersonal networking, has become an important part of heterarchies, as Jessop (2002) notes. The chair of the AI Council, Tabitha Goldstaub, organizes a popular conference on AI: CogX. The list of speakers 52 at the conference includes many AI Council members (such as Ocado Chief Technology Officer Paul Clarke, CenturyTech founder Priya Lakhani, and Mastercard Executive Vice Chairperson Ann Cairns) and the hub of the network, Rose Luckin. Other prominent conferences attended by network members include Bett Show, Reimagine Education, and Future Edtech, 53 and other notable conferences include LearnIT and Digifest (Jisc's showcase). An important function of these conferences is to network, that is, to connect. For example, the Future Edtech conference was held online in 2020 due to the coronavirus pandemic, but even the virtual platform had a networking function: the VIP Team organized personalized networking for select participants. 54

Jessop (2002) adds that knowledge in heterarchical networks is strategically used to “modify the structural and strategic contexts in which different systems function so that compliance with shared projects follows from their own operating codes rather than from imperative coordination” (p. 228). Knowledge is a resource that is shared in the network. In fact, part of the mandate of the AI Council is to “[s]hare research and development expertise.” 55 Beyond the conferences mentioned earlier, the reports produced by and for the network that were referenced in the previous section also serve as context-creating devices. The reports often reference one another; for example, Pearson's Intelligence Unleashed report references Nesta's Decoding Learning report, and Nesta's Educ-AI-tion Rebooted? report references the Pearson report. 56 Additionally, EDUCATE, through its mandate of “[d]isseminating educational technology research and connecting enterprises to academia,” 57 is a prime example of resource and knowledge sharing in the network.

“Supercharge,” “efficient,” and “free to be human”: Neoliberal ideas

Laclau and Mouffe (2001) argue that discursive structures are not merely cognitive; they also organize social relations. Power and discourse are dynamically entangled (Foucault in Lynch, 2014), and “power and hegemony [are] constitutive of the practices of policy-making” (Howarth, 2010, p. 324). Thus, it is important to analyze the language used in policy, as the last decades have seen the “mediatisation” (Fairclough, 2000, p. 4) of politics. Jäger (2001) also points out that, through the interrogation of discourse, we can uncover the knowledge that it creates and how that knowledge is entangled in power. In particular, with respect to AI systems, Zdenek (2003) argues that discourse does not merely describe the technology but in fact produces it.

The policy network of AI in higher education in the United Kingdom is overwhelmingly in support of the use of AI in higher education, with only the Future of Humanity Institute at Oxford University writing about the dangers of this technology in any detail. From one perspective, actors in the network see AI as an inevitable technological leap. The UK Government posits AI as one of the four “Grand Challenges” that will shape our future. 58 Sir Anthony Seldon, co-founder of the Institute for Ethical Artificial Intelligence in Education (alongside Rose Luckin, the hub of the network, and Priya Lakhani, who sits on the AI Council), calls the proliferation of this technology in education the “Fourth Education Revolution,” 59 and the rhetoric of the Fourth Industrial Revolution (4IR) has also been picked up by the United Kingdom's Parliament. 60 The UK Government holds that we are at the cusp of this technological revolution, which is just getting started. 61

Policymakers and members of the network employ a positive discourse around AI, with assertions like “There is no doubt that machine learning and AI is already improving people's lives…” 62 and “The use of data and artificial intelligence (AI) is set to enhance our lives in powerful and positive ways.” 63 In addition to governmental actors, key documents in the network also argue that AI can change education for the better. Intelligence Unleashed's subtitle is “An Argument for AI in Education,” 64 and Nesta's Educ-AI-tion Rebooted? report argues that “[s]tudents, parents, teachers, government and regulators must wake up to the potential of artificial intelligence tools for education (AIEd).” 65 In addition to policy papers, consultations, and reports, the social media sites (Twitter and Facebook) of the network are overwhelmingly in favor of AI. A sentiment analysis of 9,652 social media posts about AI revealed that 72.25% of the posts had a positive tone. A common trope among these Tweets is that AI built with ethics in mind will improve education and help underprivileged students thrive. Social media posts and blogs have an important impact on popular opinion (Adhikary et al., 2018), especially when policy actors retweet and reply, that is, echo one another as is the case in the studied network.

Common concepts widely circulating in the network are efficiency, productivity, and performance, which are claimed to be the result of employing AI in education to solve the problem of teachers having a high workload. These concepts are, in fact, often used in neoliberal discourse (Shamir, 2008). Actors in the network argue that teacher-facing AI in education (AIED), including analytics, automation of tasks (such as grading), and predictive programs, “could free up a teacher's time to invest in other aspects of teaching. Insights gained about student's progress could enable teachers to target their attention more effectively.” 66 AI could also support measuring performance: “At a time when teaching quality in higher education institutions is increasingly coming under scrutiny, data about students’ learning behaviors are potentially a powerful measure for how well tutors are performing.” 67 However, the terms “effective” and “productive” are never defined in any of the documents; it is simply claimed that effectiveness and productivity increase when AI is used in education. Content analysis of the surrounding words through word trees also shows that the terms are employed in various ways that draw on neoliberal discourses (Shamir, 2008). They are primarily used to contrast AI-powered approaches with other approaches, as exemplified by “more,” “most,” and “very” preceding the words “effective” and “productive” in numerous contexts. The two terms are typically followed by “way” or “practice” and point to “improving student performance,” “student productivity,” and “institutional success.” These uses echo the dominant discourse in education, namely, performativity (Ball, 2001), yet the comparatives “most” and “more” never make explicit what AI is being compared with.

Even a policy document that is not primarily aimed at highlighting the benefits of AI does so with the empty signifiers employed. The interim report of the Institute for Ethical AI in Education highlights the benefits of AI in the language of productivity and scalability (e.g., “AI can increase capacity within education systems and increase the productivity of educators” 68 ) and positions this technology as a transformer of assessment, 69 which is a keystone technology of the neoliberal policies focusing on markets and performativity (Ball, 2003). “AI could continuously monitor and assess learning over a long period of time to build up a representative picture of a student's knowledge, skills, and abilities—and of how they learn.” 70 The neoliberal elements of increased productivity, scalability, and efficiency are employed in the potential benefits of AI—highlighting it as a necessary product for neoliberal governance.

This highlights how the network employs the “empty signifiers” (Laclau, 1996) of “effective” and “productive” in a political competition to secure a dominant position for AI technologies in education. It is likely that they are using these empty signifiers because they fit the dominant neoliberal ideology. As discussed earlier, government documents reveal that education is considered a product to be exported and profited from. 71 Ball (2001) argues that performativity has become a prominent feature of education under neoliberalism. Robertson and Verger (2012) corroborate that public policy is permeated by such neoliberal rationales and managerial discourse. The empty signifiers of the network position AI favorably in a language of performativity.

Artificial intelligence as a solution to educational issues

In the political competition, AI is positioned as a solution to multiple problems: high teacher workload and consequent retention issues, the “one-size-fits-all” model, and social justice questions of access, or “wicked problems” 72 as Nesta calls them. Robertson and Verger (2012) argue that Education Public–Private Partnerships (EPPPs) “promise to resolve some of the intractable problems facing development” (p. 31). Similarly, actors in the network argue that AI-powered technologies will solve these issues. CenturyTech's AI-powered product promises to “supercharge” 73 one's teaching and solve the issue of the “one-size-fits-all” 74 model of education by providing personalized learning to students. The “one-size-fits-all” model is “inadequate” and “leaves so many students struggling behind and so many underchallenged.” 75 Simultaneously, the actors argue that AI would also resolve the workload issue of teachers, in turn solving the problem of retaining skilled professionals. “Now that a cloud-based intelligent assistant for every teacher is a realistic possibility, we can provide support to reduce teacher stress and workload.” 76

These capabilities of AI systems are also marketed as solving social justice issues. Personalized tutoring systems are promised to close the education attainment gap: “For example students who need extra help can be offered one-to-one tutoring from adaptive AIEd tutors, both at school and at home, to improve their levels of success.” 77 Nesta's Educ-AI-tion Rebooted? report promises that AI could remove hindrances to social mobility. That said, the authors note in passing that “under certain circumstances AIEd can drive inequality and lack of social mobility,” 78 although they neither offer solutions nor clarify under what “certain circumstances” AIEd exacerbates inequalities. Although there is a strong language of social justice and equity, Ball (2003) warns us that such rhetoric does not always get translated into policy and that a concurrent discourse of meritocracy negates social mobility.

Conclusion

What emerges is a policy network of AI in higher education that shows signs of a heterarchy (Jessop, 2002) permeated by neoliberal rationales. Governance is extended to nonstate actors through institutions like the AI Council, while nonstate actors also seek out policy influence, such as by producing reports like The Institute for Ethical Artificial Intelligence in Education's interim report. Actors in the policy network are bound by mutual interests of monetizing education and educational technology directly and indirectly. The hub of the network, Rose Luckin, exemplifies how resource sharing and dialogue form an integral part of the network as they position the nascent technology and its future.

AI is positioned as a solution to educational problems, and the policy documents are permeated by neoliberal rationales, which further push for posthuman futures and technology to fulfill the efficiency and productivity needs of the neoliberal paradigm. Business and knowledge production are deeply entangled. Academics are simultaneously entrepreneurs and business people, and universities themselves serve as incubators and accelerators of educational technology companies, while businesses also act as knowledge producers. Although this research project focused on the United Kingdom, it also revealed the involvement of multinational companies and ties with international organizations.

With this technology being promoted and treated as an inevitable and salient feature of education, uncovering the vested interests shaping policy is becoming increasingly important. Through network ethnography, we can gain a greater understanding of how various actors attempt to shape the future of education through AI-powered technologies. It can deepen our understanding of how neoliberal rationales are employed to gain a favorable position for educational technology, entrenching performativity (Ball, 2001) and data governance (Ozga, 2009).

Footnotes

Declaration of conflicting interests

The author declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Ethical statement

This research involved no human participants. It is minimal-risk research and was registered as such with King's College London (reference number: MRSU-19/20-18174).

Funding

The author received no financial support for the research, authorship, and/or publication of this article.