Abstract

Purpose:

Universities assess and evaluate students concerning competence in essential disciplinary knowledge and skills. Those assessments impact learners’ attitudes, beliefs, and emotions. Negative impacts may be overcome if students regulate their responses to assessment and feedback.

Design/Approach/Methods:

This article systematically locates research studies that cite three key early papers around student conceptions of assessment (SCoA). A narrative synthesis is based on 22 papers.

Findings:

In addition to the SCoA, 11 different research inventories reveal a variety of regulatory responses that are enhanced when assessments are deliberately formative, fair, and trustworthy. There is broad interest in this phenomenon but little consistency in methods, and even the SCoA has little consistency in factor structure across jurisdictions. Only one study provided an objective behavioral measure to validate self-reports, which are the dominant form of research.

Originality/Value:

This review gives readers insights into how assessment influences student thinking and how student cognition can regulate success.

Introduction

A decade ago, it was remarked, in an early volume of studies on student conceptions and experiences of assessment, that student voice is “remarkably absent” in the literature on assessment (Brown, McInerney et al., 2009, p. 5). More recently, McMillan’s (2016) review of research into the student psychology of assessment identified three substantial lines of research on student experiences of assessment. These included (a) the student self-report inventory

Hence, there is a need to examine the psychology of assessment among higher education students, which may differ from that of students in compulsory schooling. Higher education may engender different conceptions or perceptions because (a) evaluation practices are largely summative (Panadero et al., 2019) and high stakes and (b) students are legally adults and able to exercise considerable autonomy around assessment to achieve their own life goals (Harris et al., 2018). Thus, this review focuses on higher education students and exploits McMillan’s (2016) summary by concentrating on just one of the three core lines of research he identified. To limit its scope, the review is restricted to work that cites or uses the SCoA inventory. With a sample of 22 higher education studies, this approach tests two claims developed in K–12 contexts: that student experiences and beliefs about assessment can be self-regulatory and that contextual factors matter to student perceptions/conceptions of assessment.

Assessment in Higher Education

Before describing the SCoA inventory, an overview of assessment practices and purposes in higher education is provided. Assessment is used throughout higher education to assure stakeholders that graduates have sufficiently mastered the material taught in the program and have been awarded a certificate that attests to this performance. Societies expect evidence that the rewards (e.g., employment, scholarships, entry to programs) attached to success are justified. The summative function (Scriven, 1967) of assessment is seen in the widespread and dominant practice of generating a final grade (i.e., a letter from A to F or a mark from 100 to 0) as the sum of the activities performed by the student. These grades allow student performances to be compared and organized hierarchically to select the “best” students. This summative use of assessment has always been the primary function of evaluative processes. Administrators have to decide who should be awarded scholarships, prizes, and honors or select candidates for elite or restricted programs and opportunities. Usually, a wide variety of assessment processes (e.g., examinations, tests, quizzes, coursework, projects, and theses) are used to assemble evidence that student scores, grades, or ranks are defensible. Without assessment, such decisions would be prone to nepotism, corruption, or collusion; hence, the global use of competitive, invigilated, time-constrained, high-stakes examinations since the Han dynasty some 2,000–3,000 years ago (China Civilisation Centre, 2007).

Consequences attached to assessment processes are not inherent to assessment but rather reflect how societies use scores and grades. Such consequences are generally external to the learning process; consequently, some higher education researchers are concerned about the long-term effects on learners if assessments are always externally imposed. Removal of external accountability mechanisms (e.g., assessments) may contribute to better, not worse, learning (Boud & Falchikov, 2007). However, external accountability mechanisms exist in life (Lerner & Tetlock, 1999), and in life, those processes may be more subjective and less structured (e.g., salary or wage review, recruitment, retention, and promotion discussions) than formal assessment mechanisms. There are few people whose lives are so self-contained that they are not under some sort of authority; hence, learning to cope with the accountability demands of higher education can be a valid preparation for the accountability demands and rewards of life. Indeed, self-regulation of learning requires coping with such expectations among other objectives.

A significant constraint on SCoA is the set of environmental factors controlled by instructors and systems external to the individual learner (Fulmer et al., 2015). In some societies (e.g., Chinese mainland, Hong Kong SAR, Singapore, India), access to higher education is strongly limited (perhaps to as few as 20% of candidates) by performance on high-stakes university entrance examinations. In other societies (e.g., New Zealand), there are multiple paths to university entrance: (a) entry to elite programs (e.g., medicine, law, engineering) depends very much on high school examination performance and (b) open entry to all programs is given once adults reach the age of 20. Furthermore, provision is made in higher and tertiary education for all New Zealand students who qualify rather than just an elite few, as is seen in Hong Kong SAR, for example. These differences in material conditions create very different ecologies in which student conceptions are formed. Given that different societies have different incentives and mechanisms, it should not be surprising that conceptions of assessment are ecologically rational (i.e., make sense within the reward system; Rieskamp & Reimer, 2007).

Contemporary efforts to modify the importance of summative examinations have influenced some higher education jurisdictions. For example, in Europe, the Bologna Process (High Level Group on the Modernisation of Higher Education, 2013) prioritizes the development of teaching and learning in higher education as a shared process, with responsibilities on both student and teacher so that students engage in learning far beyond simply getting through assessment or exams. Similarly, the UK Assessment Reform Group (2002), in reaction to the prevalence of K–12 summative examinations, advocated formative assessment as a better assessment for learning. Similar notions were advocated for higher education because “students themselves need to develop the capacity to make judgements about both their own work and that of others in order to become effective continuing learners and practitioners” (Boud & Associates, 2010, p. 1).

Despite these calls, university assessment practices have remained largely formal and terminal. In a recent analysis of 250 U.S. higher education syllabi, examinations accounted for 47% of grades, ranging from 16% in English to 63% in Psychology (Lipnevich et al., 2020). A survey of almost 1,700 university syllabi in Spain, a country participating in the Bologna Process, reported that final written examinations were present in 70% of all syllabi (Panadero et al., 2019), a state of affairs consistent with Spanish policy (i.e., Ley Orgánica de Educación [Organic Law for Education] 2006 and Ley Orgánica para la Mejora de la Calidad Educativa [Organic Law for the Improvement of Educational Quality] 2013). Earlier surveys of Spanish higher education instructors indicated that peer assessment was relatively infrequent (

This summary of European, American, and British studies fits well with descriptions of assessment practices in the Chinese mainland (Davey et al., 2007; Gan et al., 2019) and Brazil (Matos et al., 2009). Higher education assessment is largely summative, depends heavily on written examinations, and is consequential to students’ life chances. These circumstances are highly likely to color SCoA as being largely about student accountability and nonignorable because of the consequences attached to performance.

Understanding the psychology of learners under high-stakes and continuous assessment over a multiyear program of higher education has been a matter of considerable interest. McMillan (2016) provided an excellent overview of three major lines of research around student perceptions and experiences of assessment (i.e., Brown’s SCoA, Pekrun’s achievement emotions, and Dorman’s perceptions of assessment tasks). McMillan (2016) indicated that Brown’s work had been seminal, and so, to limit the scope of this review, it is restricted to empirical research in higher education that has either cited or made use of the SCoA. The goals are (a) to establish the breadth of research inventories and methods that cite the SCoA inventory, (b) to establish the generalizability of the SCoA across higher education contexts, and (c) to provide a narrative summary of research into student perceptions, attitudes, beliefs, and experiences of assessment.

SCoA Inventory: Self-Regulatory Beliefs

Through a series of iterative studies conducted over a number of years, Brown developed a self-report survey questionnaire (Brown, 2008). Research on New Zealand secondary school students' attitudes toward assessment has been reported in multiple studies that linked those beliefs to performance, interest, self-efficacy, assessment types, and levels of schooling (Brown & Harris, 2012; Brown & Hirschfeld, 2007, 2008; Brown, Irving et al., 2009; Brown, Peterson et al., 2009; Brown & Walton, 2017; Hirschfeld & Brown, 2009).

The SCoA has eight first-order factors that relate to four superordinate conceptions of assessment (i.e., assessment is for improved teaching and learning, assessment is irrelevant, assessment supports classroom climate and personal emotions, and assessment evaluates schools and individuals). These factors speak to the formative and summative purposes of assessment (i.e., improve learning and teaching vs. evaluate students and schools), as well as allowing students to identify a socio-emotional impact or even reject the validity of assessment.

Pekrun’s achievement emotions research (Vogl & Pekrun, 2016) has identified a wide range of emotional responses students experience around assessment events and processes. These emotions, whether positive or negative, can have activating or deactivating consequences for effort and behavior. This means that negative emotions (e.g., anxiety) sometimes contribute positively to performance, while positive emotions (e.g., contentment) can be deleterious to performance. Enjoyment of assessment may not be very high on average, but a lack of enjoyment may nonetheless have positive consequences for performance. The positive classroom climate factor speaks to the importance of collaboration and cooperation in contemporary higher education, which is associated with improved performance (Johnson, 1981). Nonetheless, the positive effects of peer collaboration can be undermined by free riding, social loafing, grandstanding, and other maladaptive interpersonal relational processes (Strijbos, 2016).

Originally, the SCoA was developed with a hierarchical structure (i.e., four correlated superordinate factors each predicting two subfactors; Brown, 2013), and when tested with New Zealand university students, it had marginal fit (Matos & Brown, 2015). After the removal of 2 items, a Farsi-language version of the SCoA had good fit to the hierarchical model (Brown et al., 2014). However, because multiple models can fit the same data, that hierarchical model has been evaluated with different samples.

Bifactor analysis (Weekers et al., 2009) suggested that many of the items shared a strong general factor along with four unique factors for the four superordinate factors. A comparative study of multiple SCoA models using data from New Zealand and Brazilian university students concluded that a bifactor model (i.e., general plus unique factors for improvement, socio-emotion, and external attributes) was the best approach to understanding the structure of the inventory (Matos et al., 2019). This dominant general factor was found in a Filipino college student study (Delfino & Magno, 2012). While Smith and ten Hove (2009) found multiple factors, the first factor (assessment is good and promotes accountability) took 8 items from five of the SCoA factors.
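As a reading aid, the bifactor structure described here can be written in a generic form. This is a sketch of the conventional bifactor measurement model, not the specification estimated in the cited studies; the symbols ($x_i$, $G$, $S_{k(i)}$, the loadings $\lambda$, and $\varepsilon_i$) are illustrative notation introduced only for this purpose.

```latex
% Response to item i loads on a general factor G and on the one
% specific factor S_{k(i)} to which item i is assigned.
x_i = \lambda_i^{G}\, G + \lambda_i^{S}\, S_{k(i)} + \varepsilon_i,
\qquad
\operatorname{Cov}(G, S_k) = 0 \quad \text{for all } k
```

Under this conventional assumption of orthogonality, a dominant general factor corresponds to general loadings $\lambda_i^{G}$ that are large relative to the specific loadings $\lambda_i^{S}$ for most items.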

By ignoring the first-order factors, Zaimoğlu (2013) reported a four-factor principal component result (i.e., improvement, irrelevance, accountability, and affect) among 400 Turkish young adults in a university preparatory program. In contrast, the eight first-order factors of the SCoA without the hierarchical structure had good fit to data from Brazilian and New Zealand students (Matos & Brown, 2015), and with the removal of 4 items, the model fit a survey of Portuguese students (Flores et al., 2020).

These analyses suggest that there are likely to be stable item sets or factors in the SCoA despite differences in jurisdictions. The widespread use of the SCoA internationally suggests that it has been influential and a useful starting point for researching this topic. Nonetheless, no claim is made that only these factors exist in how students experience, conceive of, or react to assessment.

Brown (2011) has argued that responses to the SCoA are aspects of self-regulation of learning. Boekaerts' (1996) self-regulation model has a growth pathway that would suggest students treat assessment as a mechanism for improvement. On the other hand, her model has an ego-protective or self-enhancement pathway in which the pain of learning is avoided to protect the “self.” Ignoring assessment because it is bad or seeing assessment as an indicator of school quality or a predictor of overall ability is construed as self-protective because these conceptions point to factors relatively outside the control of the student (i.e., a maladaptive external locus of control). Hence, a goal of this article is to establish if evidence of this claim has been reported.

Method

Because the scope of this review was to exploit research based on the SCoA, a systematic citation search was conducted for papers that cited three key publications:

Brown, Irving et al. (2009) reported Version 5 of the SCoA and showed that conceptions of assessment meaningfully predicted how students defined assessment.

Brown, Peterson et al. (2009) replicated the Version 5 SCoA study and extended it to Version 6 with linkages to mathematics performance and definitions of assessment.

Brown (2011) reviewed a raft of SCoA studies that showed the conceptions of assessment meaningfully related to self-regulated learning and academic performance in studies conducted in New Zealand, Brazil, Germany, and the U.S.

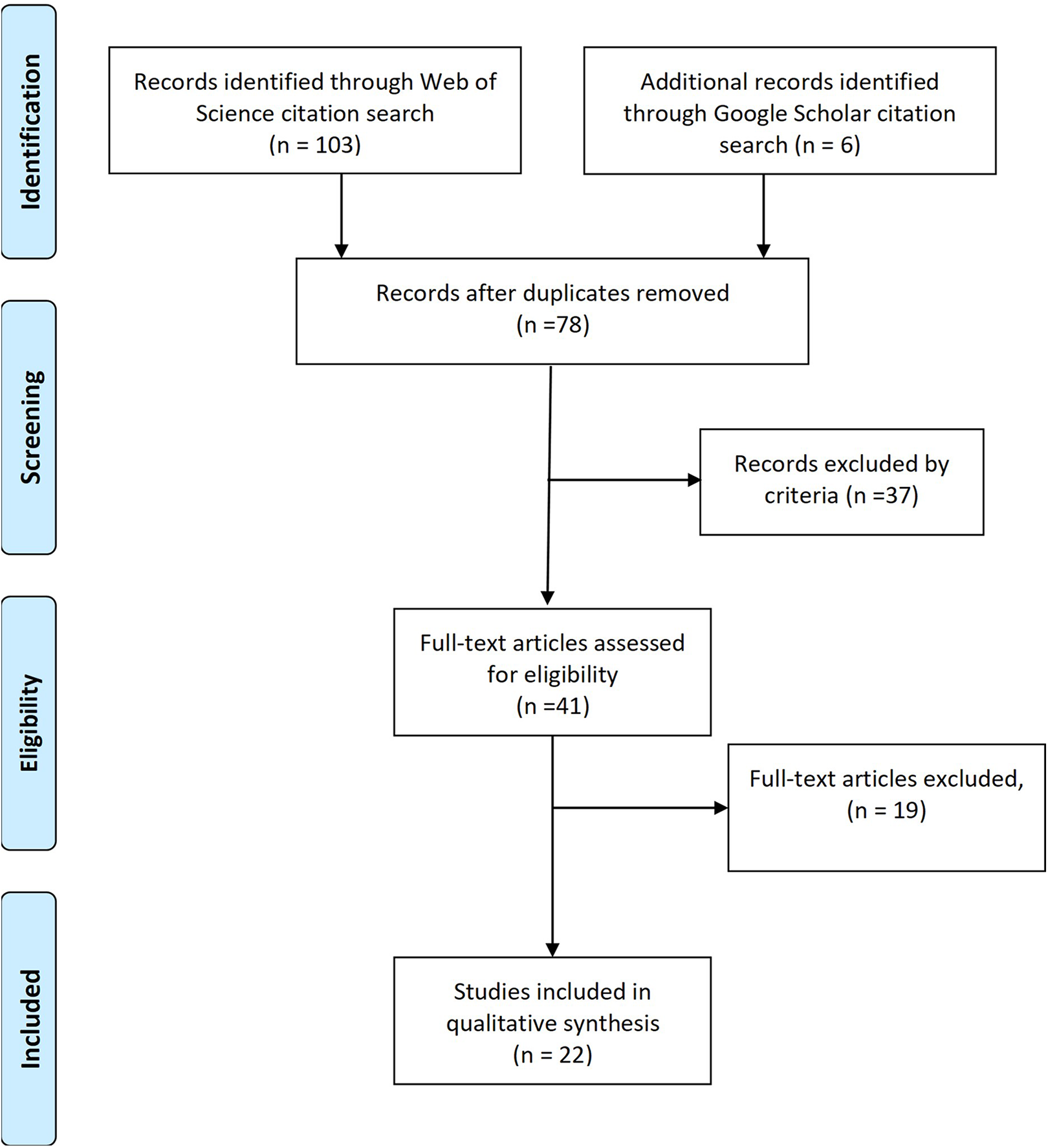

A citation search was conducted in the Web of Science database and supplemented with a hand search of citations for the same sources in Google Scholar. A total of 108 papers were located using these protocols, which after deletion of duplicates left 77 papers (Figure 1). Studies were excluded if (a) the language was not English (

Figure 1. PRISMA flow diagram of the literature search.

Reading of the papers led to the exclusion of seven reviews (Brown, 2011; Double et al., 2020; McMillan, 2016, 2019; Van der Kleij & Lipnevich, 2021; Vogl & Pekrun, 2016; Wise & Smith, 2016), five papers that had no measure of student perceptions of assessment (Babaii & Adeh, 2019; Chen & Lin, 2020; Gordienko, 2015; Natsis et al., 2018; Taghizadeh & Kazemzadeh, 2019), one content analysis of syllabi (Panadero et al., 2019), two qualitative analyses of student drawings (Brown & Wang, 2013; Wang & Brown, 2014), and two qualitative analyses of student comments (Cleland, & Walton, 2012; Murillo & Hidalgo, 2017). Unfortunately, two studies cited the SCoA but instead used the Teacher Conceptions of Assessment inventory (Brown, 2003) to do comparative studies between faculty and students (Fletcher et al., 2012; Hodgson & Garvey, 2020). This left 22 valid papers.
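The exclusions listed at the full-text reading stage can be tallied as a quick consistency check. The sketch below uses only the counts stated in the preceding paragraph; the number of papers read in full is inferred from those counts and is not reported in the text, and the variable names are illustrative.

```python
# Full-text exclusions as enumerated in the paragraph above.
full_text_exclusions = {
    "reviews": 7,
    "no measure of student perceptions of assessment": 5,
    "content analysis of syllabi": 1,
    "qualitative analysis of student drawings": 2,
    "qualitative analysis of student comments": 2,
    "used the Teacher Conceptions of Assessment inventory": 2,
}
retained = 22  # stated in the text

excluded_total = sum(full_text_exclusions.values())

# Inferred, NOT stated in the text: papers read in full before these exclusions.
read_in_full = retained + excluded_total

print(f"Excluded at full-text reading: {excluded_total}")
print(f"Implied number of papers read in full: {read_in_full}")
```

Under these counts, 19 papers were excluded at this stage, which implies that 41 papers were read in full.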

Results

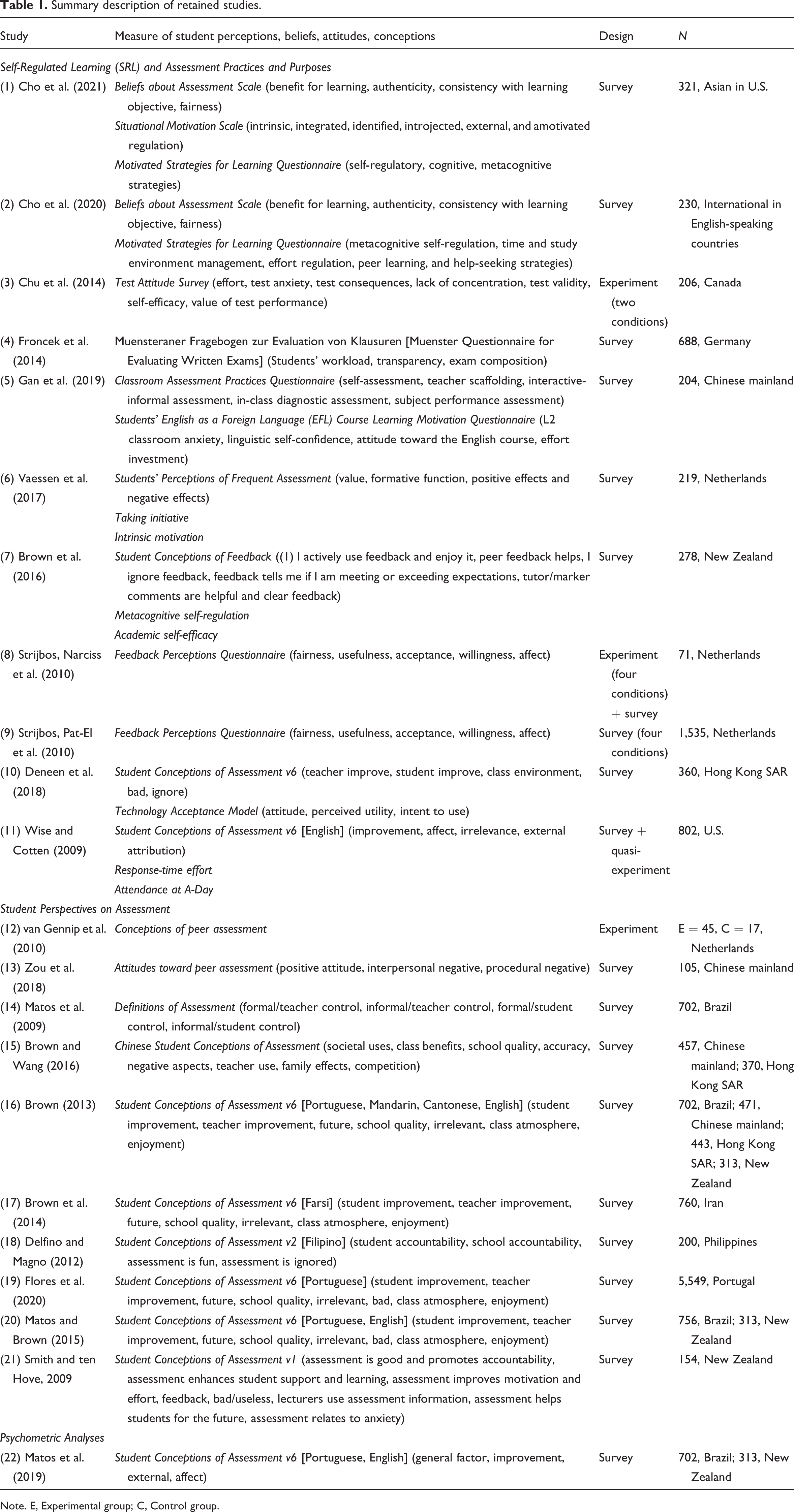

The 22 studies include those that used a version of Brown’s SCoA inventory (

To focus on how student perspectives on assessment function, the results are organized around (a) the relationship of assessment-related beliefs to self-regulatory processes, (b) how different assessment types affect students, and (c) student responses to assessment practices and purposes, as shown in Table 1.

Table 1. Summary description of retained studies.

Note. E, Experimental group; C, Control group.

Self-regulatory role

Because the consequences attached to assessment matter for the quality of life (Wang & Brown, 2014), learners generally respond in productive ways by (a) using assessments to guide learning of course material, (b) engaging in greater diligence and effort, and (c) seeking or exploiting the information in assessment feedback to inform their subsequent learning. Students who do these things are regulating their own learning, and, understandably, those with greater control of learning tend to do better (Boekaerts, 1996; Pintrich, 1995). Awareness of test consequences contributes to student value for doing well on a test (Chu et al., 2014).

Empirical evidence for the idea that ways of thinking about assessment relate to self-regulated learning (SRL) has been independently reported. Using the

Consistent with Pekrun’s theory, greater value for performance on a test regressed positively on effort in a test, while anxiety and lack of concentration were reduced with greater self-efficacy for the content being tested (Chu et al., 2014). Strongly linked to personal emotions is the idea that assessment can stimulate positive cooperation among peers or destructive competitive behaviors (e.g., hiding good material from classmates), which was found to be associated with improved performance (Chu et al., 2014).

Feedback, whether grades or comments, arises from assessment and is an essential facet of assessment processes. A study of New Zealand university students’ conceptions of feedback (Brown et al., 2016) found that greater agreement with enjoying and actively using feedback to improve was associated robustly with greater GPA and self-regulated learning and more weakly with academic self-efficacy. These results support the idea that self-regulating students are those who exploit the feedback they get for learning.

Assessment types

Unsurprisingly, given the introductory data on university assessment practices, assessment is seen in a narrow, traditional way as something controlled and marked by the lecturer (Smith & ten Hove, 2009). A Brazilian study (Matos et al., 2009) reported assessment activities falling into four types according to type of assessment and who controlled the assessment processes (i.e., formal, teacher controlled; informal, teacher controlled; formal, student controlled; and informal, student controlled). Nonetheless, 9 of the 12 assessment practices evaluated by students could be classified as formal, test-like practices.

In higher education, assessments carry weight toward a final summative grade. Where they tend to differ is in the mixture of tests, exams, coursework, group work, oral work, participation, tutorials and labs, self- and peer assessment activities, and so on that are present. A German study found that students positively evaluated written exams that were transparent and well organized and that did not impose too great a workload (Froncek et al., 2014). Strijbos, Narciss et al. (2010) reported from an experimental study of the

A study of student perceptions of frequent tests in an undergraduate statistics course (Vaessen et al., 2017) reported means above the mid-point on a 5-point Likert-type scale only for the value of frequent tests. The formative function mean was close to but below the midpoint, while positive and negative emotions both were half-way between disagree and midpoint. Interestingly, belief in the formative function of frequent tests and positive consequences of tests had positive paths to intrinsic motivation, while negative paths were seen for value of tests and negative consequences on SRL.

Another novel assessment practice is the use of eportfolios as a course-based assessment. A survey study in Hong Kong SAR (Deneen et al., 2018) found that a positive attitude to eportfolio technology for assessment was strongly associated with three self-regulating SCoA beliefs: (a) teachers use assessment to improve teaching, (b) students use assessment to improve their own learning, and (c) assessment improves class environments.

Consistent with prior research on the importance of interpersonal relations in peer assessment (Panadero, 2016), Zou et al. (2018) found that this factor was more important for English majors than for Engineering students. Greater concern for interpersonal relations contributed to doing more peer reviews and providing an evaluation of peer reviews received. They also reported that greater endorsement of positive attitudes toward peer assessment reduced the number of reviews completed, and, sensibly, fewer reviews or review evaluations were done when students endorsed negative views of peer assessment procedures.

Assessment purposes

With a moderate-sized sample of New Zealand university students (

Understandably, a weakness in self-report survey studies is the lack of control and behavioral measures. To address that, a study with students at one U.S. university that annually administers a low-stakes system evaluation test (Wise & Cotten, 2009) related SCoA responses to time taken to answer test questions on a computer-administered test (i.e., response time effort [RTE]) and attendance at the low-stakes testing day. Less guessing (i.e., longer response times) was associated with greater belief that assessment leads to improvement, while more guessing was predicted by lower affective benefit and greater irrelevance of assessment. Attendance on the day of the low-stakes test was considerably higher for those who endorsed improvement and affect and rejected irrelevance. In other words, self-regulating learners espouse adaptive conceptions of assessment that support growth over ego-protection, and it can be seen in their behavior. A similar SRL relationship between beliefs about assessment and performance was noted by Deneen et al. (2018) who reported that the more students agreed with ignoring assessment, the lower the self-reported GPA.
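To make the behavioral measure concrete, response time effort is commonly computed as the proportion of items on which a test taker's response time meets or exceeds an item-level threshold separating solution behavior from rapid guessing. The following is a minimal sketch under that assumption; the function name, the response times, and the 5-second thresholds are hypothetical values chosen for illustration and are not taken from Wise and Cotten (2009).

```python
from typing import Sequence


def response_time_effort(times: Sequence[float],
                         thresholds: Sequence[float]) -> float:
    """Proportion of items answered with solution behavior.

    An item counts as solution behavior when its response time is at
    least the item's threshold; shorter times are treated as rapid
    guesses. Thresholds are an assumption supplied by the analyst.
    """
    if len(times) != len(thresholds):
        raise ValueError("times and thresholds must have the same length")
    if not times:
        raise ValueError("at least one item is required")
    solution_behavior = [t >= th for t, th in zip(times, thresholds)]
    return sum(solution_behavior) / len(solution_behavior)


# Hypothetical response times (seconds) for one student on five items.
times = [12.0, 2.0, 30.5, 1.5, 18.0]
# Hypothetical item thresholds (seconds); real thresholds vary by item.
thresholds = [5.0, 5.0, 5.0, 5.0, 5.0]

rte = response_time_effort(times, thresholds)
print(f"RTE = {rte:.2f}")
```

In this illustration, three of the five responses exceed the threshold, so the index is 0.60; values closer to 1 indicate less rapid guessing.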

Extensive comparative research with the SCoA inventory has demonstrated that there are different structures in student thinking about assessment. Students in Hong Kong SAR agreed more than students in the Chinese mainland that assessment was irrelevant, bad, and to be ignored, while students in the Chinese mainland agreed more than students in Hong Kong SAR that assessment helped socially and affectively and that it contributed to improved teaching and learning (Brown, 2013). Brazilian students, in contrast, had mean scores for irrelevance, improvement, personal future, and personal enjoyment factors indistinguishable from those of students in the Chinese mainland, while having similar means to students in Hong Kong SAR for the social/affective construct. They differed from students in Hong Kong SAR, the Chinese mainland, and New Zealand in having much lower means for external school quality and class climate factors (Brown, 2013). These mean score differences suggest that jurisdictional norms matter.

Building on initial work with university students in Hong Kong SAR (Brown & Wang, 2013; Wang & Brown, 2014), Brown and Wang (2016) developed a new Chinese-SCoA inventory structured as a higher order factor (i.e., School Quality) with seven dependent factors (i.e., Societal Uses, Class Benefits, Accuracy, Negative Aspects, Teacher Use, Family Effects, and Competition). That model was found to be invariant between the two groups of students in the Chinese mainland (i.e., postgraduate and pre-bachelor degree) but nonequivalent for students in Hong Kong SAR. On all factors except family effects and class benefits, students in Hong Kong SAR had higher means, by moderate to large effect sizes, than students in the Chinese mainland. Given that entry to higher education is more restricted in Hong Kong SAR than in the Chinese mainland and, unlike in the Chinese mainland, is strongly based on examination results, it would seem that, despite the shared Confucian-heritage culture of Hong Kong SAR and the Chinese mainland, the jurisdictional or institutional factors of each society contribute more to understanding the differences in responses.

Discussion

While this study began with the SCoA inventory as a requirement, 11 other inventories were discovered. These included measures of the quality of assessments (i.e.,

This list indicates that there is a breadth of interest in terms of how a variety of assessment types and processes affect students. It also makes clear that there is no consensus or universal mechanism for studying this phenomenon. The SCoA itself has been reported in seven different languages or dialects (i.e., Brazilian Portuguese, Cantonese, English, Farsi, Filipino, Mandarin, and Portuguese) but with little consistency in factor structure, despite persistent consistency in item to factor relations. Understandably, a large number of the reviewed studies simply report psychometric statistical analyses of the SCoA so as to determine a factor structure and, consequently, do not report substantive results.

A second important result is how few of the studies report behavioral outcomes. Most rely on anonymous self-report surveys, a few provided an academic outcome measure (e.g., GPA or test score), and only one provided an objective behavioral measure (i.e., RTE; Wise & Cotten, 2009). Clearly, the various measures reviewed here need to be linked to behaviors that are independent of participants’ potential ego-protective self-portrayals to achieve validation evidence beyond psychometric properties of the various scales.

The substantive results of the review indicate that positive evaluations of the integrity, purpose, and function of assessment result in better performance and are associated with aspects of SRL. Adaptive beliefs about different kinds of assessment were associated with a number of positive self-regulatory behaviors and beliefs, such as greater self-efficacy, greater use of metacognitive strategies, stronger self-determined motivation, greater value for performance, greater effort, reduced anxiety, and less lack of concentration. These features of greater self-regulated learning interact with the type of assessment and the consequences attached to the assessment. Believing assessments and feedback are fair, honest, and trustworthy generates confidence in the process and information generated. When assessments are clearly formative, students seem to trust and exploit them more for their learning potential. As a bonus, the quality of classroom student–student interrelationships may be increased when assessment is formative rather than summative. Summative high-consequence assessments that rank students may reduce the incentive to be cooperative and supportive in classroom environments.

The overwhelming message of research with the SCoA points to the importance students place on assessment for improvement. When students believe assessment practices are educational, they seem to be much more proactive in using the information arising from the process to increase learning. Achieving these goals requires that the types of assessment are educational rather than simply summative. This will be difficult for higher education because the traditional written summative examination is still so entrenched in practice and policy. It is possible to imagine higher education that is completely assessment free (i.e., no grades, no scores, just feedback from tutors, with opportunities for resubmission) where the validity of student learning is determined by the external realities of professions, vocations, and employment postgraduation. This would still give a place for formative assessment that leaves open the possibility of failure to remember, understand, analyze, or synthesize so that appropriate regulatory responses by learners and teachers are required (Brown & Hattie, 2012). Hence, for learners to learn, they must become aware of what it is they do and don’t know or can/can’t do. That is what formative educational assessment is capable of doing. Thus, totally removing assessment from higher education is unlikely to support greater learning.

However, society benefits when students have demonstrated competence and excellence however that is assessed or evaluated. When we know that our graduates have the competencies expected of new graduates, this gives civil society confidence that its future is in good hands. An interesting consequence of attaching consequences to performance is that conforming to the expectations of the assessor is a recognized effect of accountability (Lerner & Tetlock, 1999). The expectation of higher education is not just performance but also real attested learning. This is the very situation in which self-regulation of learning is essential.

The major limitation of this review is that it was based solely on citations of three key articles (Brown, 2011; Brown, Irving et al., 2009; Brown, Peterson et al., 2009) related to the

Conclusion

This review has demonstrated that there is a global interest in the higher education student voice within assessment. Based on highly restrictive selection criteria, more than 20 studies were found, which either used a version of the

Equally important, this review establishes that there is consistency in results across methods, samples, and jurisdictions. It seems clear from this review that the best performing students are ones who use assessment and feedback to support improvement, no matter what the cost might be to their self-worth or self-perception. Strength in the face of adversity, the ability to overcome, and the skill of knowing how to regulate behaviors, emotions, and cognitive strategies to maximize desired outcomes are signs of self-regulated learning and successful performance.

It is hoped that this review inspires work to be done by some of these researchers to integrate the measures as a way of cross-validating their own work and to remove possible “jingle-jangle” (Kelley, 1927; Murphy & Alexander, 2000) in the field. By that, I mean that there is likely to be considerable content overlap among the various inventories designed to measure student perceptions of the quality of assessments, their attitudes toward specific assessment formats, and their attitudes toward feedback practices and possible implications. Having been developed in different contexts and languages means that the opportunity to seek universal results is handicapped by the battery of instruments that have not been calibrated one to another. It is hoped that greater coherence in the field will be motivated by this review.

Footnotes

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.