Abstract

The importance of good research questions is well recognised in the research community. The difficulty lies in actually formulating these research questions, an issue which both beginning and established researchers have expressed concerns about. This article reports on an innovative framing device which was developed for international trainers and teachers to help them ‘jump the first hurdle’ and write a ‘good’ research question to specifically drive a cycle of action research. Through a staged exploration of the thoughts and perceptions of Kazakhstani trainers who used the Ice Cream Cone Model, findings highlight strong support for the use of the framing device and the ‘logical steps’ it provides for producing small-scale action-based research questions. The value of this framing device for teacher researchers and those involved in supporting teachers to carry out action research will be considered following an exploration of model-generated research questions.

Introduction

Questions play a central role in the research process (White, 2013). Onwuegbuzie and Frels (2016) build on this claim, acknowledging the relevance, direction and coherence that questions provide to research activity, right from its conception to completion. Agee (2009), however, is quick to stress that it is the quality of the research questions that is of real importance. Given their clear significance, getting research questions right is crucial, especially if researchers want to collect data that pertain to their inextricably linked research aim and objective (Taylor and Martindale, 2014). Interestingly, Zhu (2015: 1) suggested that those who engage in research activity ‘have a hard time’ formulating research questions. Issues relating to the actual time needed to write a research question (Hine, 2013) and ensuring that they are ‘good’ help to fuel the perception that research questions are ‘not easy to write’ (Kimmond, 2012: 23). This article locates itself in the heart of these issues by reporting on the development and use of an innovative framing device to help teacher researchers and those involved in supporting teachers to carry out action research overcome the challenges of formulating research questions that drive a cycle of action research. The framing device, known as the Ice Cream Cone Model (ICCM), was devised in response to the professional needs of teacher trainers who were being trained to train teachers as part of the Center of Excellence (CoE) programme, a national in-service teacher development initiative in Kazakhstan led by teacher educators from a UK university (see Turner et al., 2014 for further details). The research reported in this article explores the thoughts and perceptions of teacher trainers who were introduced to the framing device as part of their training. 
Despite the research being seen as the first stage in evaluating the effectiveness of the framing device, findings suggest that the ICCM was regarded most favourably, with praise highlighting the way that the ICCM helped users to ‘really focus their research’ (Stage 1 findings). The value of the model for teacher researchers and those involved in supporting teachers to carry out action research will be considered following an exploration of model-generated action research questions.

Review of existing literature

Lewin (1952) is generally credited with introducing the phrase action research to describe a form of inquiry that would enable ‘the significantly established laws of social life to be tried and tested in practice’ (p. 564). In the context of education, Hine (2013) defined action research as ‘the process of studying a school situation to understand and improve the quality of the educative process’ (p. 152). The importance of educational action research is recognised by Johnson (2011), with Mills (2014) seeing action research as allowing teachers to ‘gain insight, develop reflective practice, effect positive changes in the school environment (and educational practices in general), and improv[e] student outcomes and the lives of those involved’ (p. 8). To facilitate these changes, Jones (2006) suggested that teachers needed to continuously ask themselves questions about ‘the focus of change … why we need to make changes, what sort of changes and how, and to consider the expected outcomes of such change’ (p. 36). Those familiar with the action research model (see Kemmis et al., 2014) will recognise the value of these questions in helping teacher researchers to initiate work on the first major step of the cycle, which is planning. In contrast to Jones’ numerous questions, McNiff and Whitehead (2011: 134) focused more on one key question, claiming that action research begins with the question: ‘How do I improve my work?’ The questions posed by Jones and by McNiff and Whitehead collectively serve as general or ‘overarching’ ones for teachers to ask themselves when thinking about their practice in the classroom/workplace. 
Stringer (2014) discouraged teacher researchers from trying to drive their action research with these kinds of questions given that they lack a specific focus on a problem, a core characteristic not only of action research questions (New South Wales Department of Education and Training (NSWDET), 2010) but also of good research questions in general (Wood and Smith, 2016). But what is meant by ‘good’ research questions?

‘Good’ (action) research questions

Put simply, good research questions are questions (not statements) that are researchable and directly ‘address … the research problem that you have identified’ (Rose et al., 2015: 40–41). Research questions are also considered good when they help to guide researchers in making decisions about study design and population and subsequently what data will be collected and analysed (Farrugia et al., 2010). Typically though, good research questions are so called because they exhibit certain characteristics. Burns and Grove (2011) build on the NSWDET’s (2010) assertion of good research questions being focused, stating that they must be feasible, ethical and relevant. These latter three characteristics mirror those recognised as desirable research question ‘properties’ proposed by Hulley et al. (2013) with novel and interesting being added to the growing list of good research question attributes (the five characteristics being arranged to form the mnemonic FINER). Wyatt and Guly (2002: 320) appreciated the importance of research questions being interesting, arguing that research questions should ‘be of interest [not only] to the researcher but also the outside world’, be it at a local, national or international level. Other features of good research questions identified by Wyatt and Guly (2002) include the following:

A hypothesis can be formulated and tested;

The study is viable in terms of time, money, materials and expertise;

The results are potentially important and may change current ideas and/or practice;

The question has the potential to develop further research with a similar theme (see p. 320).

In contrast, Rust and Clark (2007: 5) acknowledged a number of ways in which research questions might be considered ‘ungood’ (the phrasing of the authors of this article); these include:

Questions that can be answered with a Yes or No response;

Questions to which you already know the answer;

Questions that are full of educational jargon.

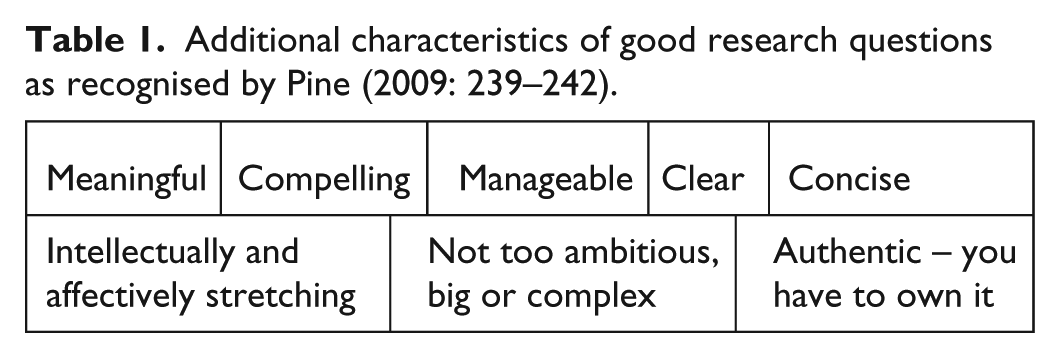

A couple of these ‘ungood’ characteristics listed above are echoed in the work of Pine (2009: 239–242) who offered, in contrast, a number of additional characteristics that help to shape good research questions (see Table 1).

Table 1. Additional characteristics of good research questions as recognised by Pine (2009: 239–242).

The authors of this article assert that these characteristics are relevant to the questions which can be used to drive a cycle of action research. While teacher researchers may be aware of some of these characteristics, the actual application of these characteristics to the questions that teacher researchers formulate is deemed problematic (Ellis and Levy, 2008). One way for this problem to be alleviated is by giving teacher researchers pre-formulated questions which have already been devised by experts or scholars, a practice noted in Research Quarterly for Exercise and Sport (Zhu, 2015). The authors of this article challenge this approach by arguing that this clearly disempowers teacher researchers by making them unnecessarily reliant on perceived authority figures to provide research questions that they think the education field needs to address. The authors of this article strongly believe that researchers should be guided by educational research (and the questions which steer this inquiry) that is driven by their own professional interests and enthusiasm. Cronin et al. (2015: 30) proposed another way of addressing the problem by recommending that researchers undertake a review of the literature to help them formulate their research question by identifying gaps in ‘what is already known’. Creswell (2014) supported this idea, claiming that the ‘first step in any project is to spend considerable time in the library examining the research on a topic’ (p. 59). However, Craig (2009) asserted that ‘given the nature of the action research process, many feel that a literature review is not necessary due to the fact that [action research] is prompted by a practitioner’s expertise and experience in a specific environment’ (p. 56). 
The authors of this article also argue that time constraints and having ready access to relevant quality literature are likely to prevent busy teacher researchers from engaging in this kind of activity (at least at the level suggested by Creswell), particularly if they do not have to report their action research for assessment purposes. Despite these suggestions, the issue of teacher researchers being able to formulate (write) good action research questions still remains. Kloda and Bartlett (2013: 56) recognised how this issue could be positively addressed through the use of formulation structures in the shape of frameworks that expose ‘the “anatomy” of answerable questions’. But how do these frameworks actually work?

Research question frameworks

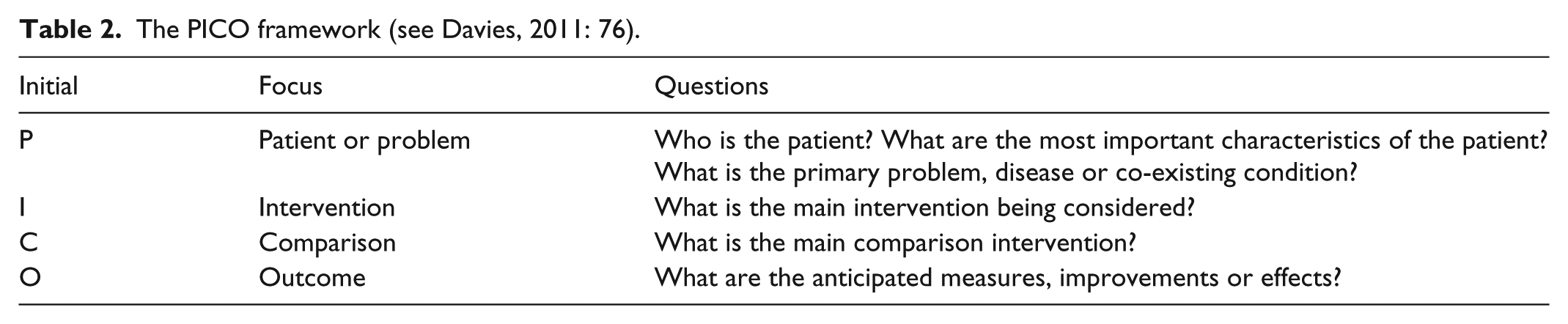

The first published framework is credited to Richardson et al. (1995). Using the acronym PICO, the framework (see Table 2) was developed to help those working in healthcare contexts to break down clinical questions.

Table 2. The PICO framework (see Davies, 2011: 76).

While Thabane et al. (2009) asserted that this framework was clear, concise and easy to use in terms of framing all of the components of a research question, there are recognised difficulties for teacher researchers to specifically make use of the framework due to its designated use within the medical research community. However, with slight modifications to each of the four foci, teacher researchers are able to use the framework, for example, as follows:

Population/people. Pupils/students, parents/carers, teacher trainees, teaching assistants, teachers (part-time/full-time), senior management members/teams.

Intervention. Innovative teaching methods, use of new online resources, focused daily input from learning mentors, re-organisation of learning spaces in the classroom/school.

Comparison. Investment in a whole school/setting library, team-teaching opportunities, planned coaching and mentoring, collaborative learning initiatives with local businesses in the community.

Outcome. Improvements to the quality of learning and teaching, increases in pupil/student attainment, growth in pupil/student attendance.

Mantzoukas (2008), however, expressed concerns about the ‘specificity’ of PICO, highlighting that qualitative research questions (which tend to drive action research) ‘seek to answer more abstract professional/practice issues and produce transferable knowledge [by] interpreting, understanding and explaining … wider phenomena’ (p. 373). Despite these concerns, numerous variations of the PICO framework have been proposed, with additional elements including the following:

Timeframe;

Duration;

Context;

Setting;

Environment;

Type of question;

Type of study design;

Professionals;

Exposure;

Results;

Stakeholders;

Situation.

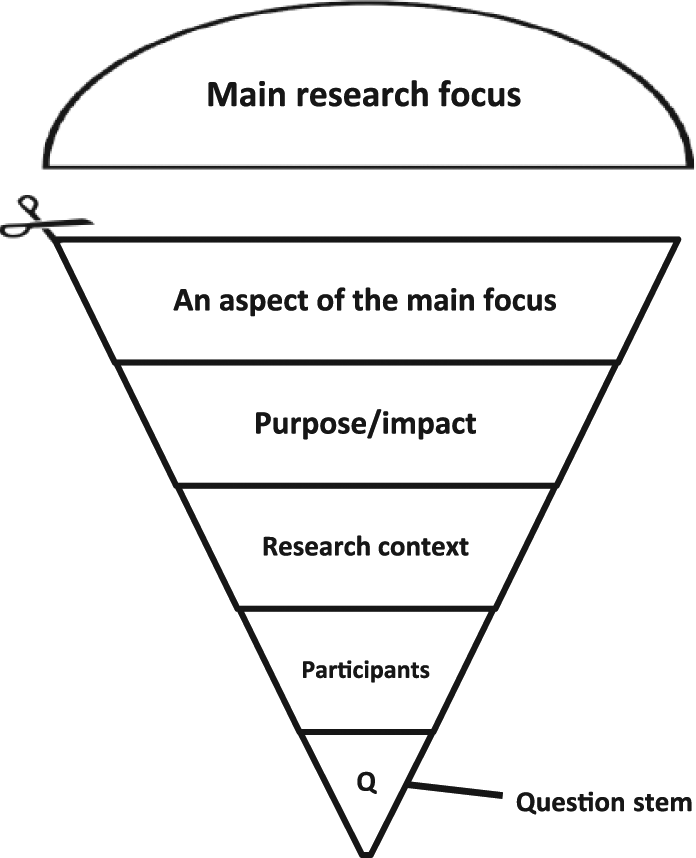

While an awareness of these different ‘elements’ may be known to teacher researchers, the enduring issue remains in them being able to use these to help them ‘shape’ their own action research questions. In response to this problem, a framing device was developed to specifically help teacher researchers actively construct action research questions, an explanation of which follows (see Figure 1).

Figure 1. The Ice Cream Cone Model (devised by Brownhill).

The ICCM: an explanation

The model design was inspired by the visual depiction of Maslow’s (1954) original five-stage hierarchy of needs. The rotation of the triangle onto its apex is purposeful in emphasising the notion of the model ‘funnelling-down’ (Barker, 2014: 61) to the specifics of the action research to be undertaken, the triangle thus metaphorically representing a drill bit or tip. The addition of the semi-circle at the top of the model (to represent the ‘ice cream’) not only helps to give the model its name but also serves as a useful starting point for teacher researchers to think about the main area of interest that they want or need to research. Separated into five parts, the ‘cone’ considers a number of key elements or ‘aspects’ that help to make up a good research question (as identified by Davies, 2011). A series of question prompts is offered below in an effort to explain each key aspect:

An aspect of the main focus. What aspect or ‘small part’ of the main research focus do you want/need to investigate? For example, if the main research focus is Assessment for Learning (AfL), what specific aspect of AfL would you like to explore, for example, peer assessment, self-assessment or questioning?

Purpose/impact. What is the purpose or impact of the action research that you want/need to undertake, for example, to explore, describe or explain something? Note that teacher researchers using the framing device are encouraged to think specifically about pupil/student learning and how this learning can be improved.

Research context. In what context is the action research being undertaken, for example, a subject area, a topic, a lesson/session or indoors/outdoors?

Participants. Who is the action research focusing on, for example, boys, girls, a specific year group, an age range of children/young people or those with a particular ability?

Question. Which question stem will be ‘opening’ your action research question, for example, Which …? What …? Why …? How …? The latter three question stems are recommended for teacher researchers to use as they ‘are usually broader and get at explanations, relationships, and reasons’ (Pine, 2009: 242).

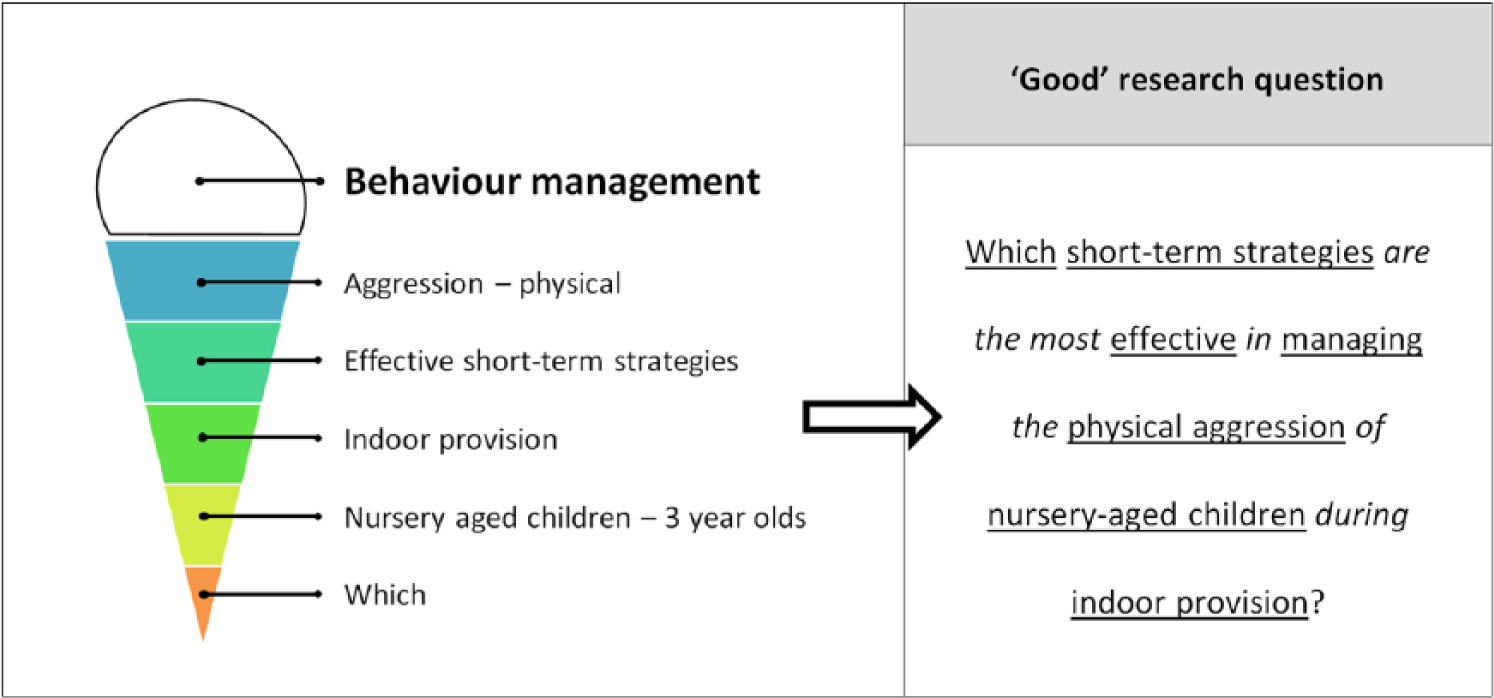

The framing device encourages teacher researchers to work their way sequentially through each of the five key aspects by completing a blank ice cream cone paper template. Once the cone is physically cut up into the separate key aspects, teacher researchers are then able to interact with the model, physically re-organising the key aspects to help them form the basis of a good research question. By then adding in suitable words, be they verbs, nouns, adjectives, definite articles or prepositions (the authors of this article consider these to be the mortar of the question), teacher researchers are able to ‘cement together’ the key aspects (which the authors of this article consider to be the building bricks of the question) to create a ‘complete’ action research question that exhibits good characteristics and avoids those that are considered ‘ungood’. Figure 2 illustrates an example of a good question generated by the framing device.

Figure 2. Key research aspects identified and ‘cemented together’ to form a good research question using the Ice Cream Cone Model.
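To illustrate how the five key aspects and the ‘mortar’ might combine, a minimal sketch in Python is offered here. The aspect values, the mortar template and the function name `cement_together` are hypothetical and chosen purely by way of example; they are not taken from Figure 2 or from the CoE training materials, and the template shows only one of many possible arrangements of the key aspects.

```python
# Hypothetical worked example of the Ice Cream Cone Model (ICCM).
# The main focus is the 'ice cream'; the five key aspects form the 'cone'.
main_focus = "Assessment for Learning (AfL)"

# The 'building bricks': one illustrative entry per key aspect.
aspects = {
    "question": "How",                    # question stem
    "aspect": "peer assessment",          # an aspect of the main focus
    "purpose": "improve the learning",    # purpose/impact
    "participants": "Year 5 pupils",      # participants
    "context": "mathematics lessons",     # research context
}

# The 'mortar': linking words that hold the re-ordered aspects together.
# This ordering is an assumption; users of the model may arrange the
# aspects differently.
mortar = "{question} does {aspect} {purpose} of {participants} in {context}?"


def cement_together(aspects: dict, mortar: str) -> str:
    """Fill the mortar template with the key aspects to form a question."""
    return mortar.format(**aspects)


question = cement_together(aspects, mortar)
print(question)
```

The resulting question opens with one of the recommended stems and cannot be answered with a simple Yes or No, which is consistent with the characteristics of good research questions discussed earlier.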

The research

The ICCM was developed by the lead author of this article in response to the professional needs of teacher trainers who were being trained to train teachers as part of the CoE programme, a national in-service teacher development initiative in Kazakhstan led by teacher educators from a UK university. The programme is described extensively elsewhere (see Turner et al., 2014), but brief details are offered below to support readers’ understanding of the context in which the research was undertaken. Originally set up by the Government of the Republic of Kazakhstan in 2011, the primary aim of the CoE programme is to equip teachers in Kazakhstan with the skills to develop citizens of the 21st century. The programme was initially developed at three levels:

Level 3 (basic) – teachers leading learning in the classroom;

Level 2 (intermediate) – teachers leading the learning of colleagues through coaching and mentoring;

Level 1 (advanced) – teachers leading the strategic development of the school with others through school development planning.

An additional level of training was subsequently developed, focusing specifically on the change led by principals (head teachers) in schools. At Levels 3, 2 and 1, the training for both the trainers and the teachers involved three phases which comprised a face-to-face series of workshops with theoretical input (referred to as Face-To-Face 1 or F2F1), followed by an extended practice-based period (known as the School-Based Period), and culminating in a further face-to-face period of reflection (referred to as Face-To-Face 2 or F2F2). The knowledge base which was central to these different levels of the programme focused on a number of key topics including learning to think critically, dialogic teaching and assessment for and of learning. The programme also required both trainers and teachers at all levels to ‘reflect systematically on their practice and to engage in small-scale action research work’ (Nazarbayev Intellectual Schools (NIS), 2012a: 223). This research was specifically focused on the impact of changes made to professional thinking and classroom practice in relation to aspects of the key topics highlighted above. The programme embraced a cascade model of delivery, with UK-based educators training Kazakhstani professionals as CoE trainers (their training was referred to as Training the Trainers) who would subsequently train Kazakhstani teachers in different regions across the country (referred to as Training the Teachers).

Reflections by UK-based educators on the training of CoE trainers at Level 3 highlighted how the Kazakhstani trainers had quickly developed an appreciation of action research as a way of promoting change but needed additional support to formulate good research questions that could instigate a cycle of action research related to aspects of one of the topics of the programme. The lead author of this article felt that this issue needed to be positively and swiftly addressed given that this was likely to be an area of need in which Kazakhstani teachers would require specific support from CoE trainers during their training (Training the Teachers). The ICCM was shared with CoE trainers as part of the taught input on action research led by UK-based educators during Level 2 F2F1. The lead author of this article was curious about the thoughts and perceptions of the CoE trainers regarding the framing device as the lead author hoped that the Kazakhstani trainers would use the model with those that they subsequently trained. Thus, the question ‘What are the thoughts and perceptions of CoE trainers with regard to the ICCM?’ was asked and used to focus a small-scale piece of exploratory research. An explanation of how relevant data were gathered and analysed to answer this question is offered below.

Research methodology

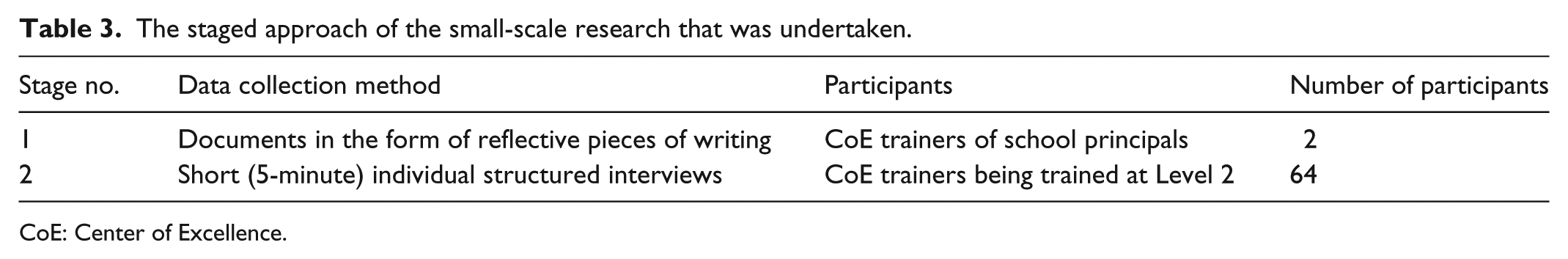

With a clear focus on exploring thoughts and perceptions, the research embraced a strong subscription to post-positivism (Denzin and Lincoln, 2018). This reflected the shared epistemological positioning of the authors of this article, who believed that reading and listening to the views and opinions of CoE trainers would help them to gain valuable insight about how the ICCM was perceived. To capture these views and opinions, the authors of this article were keen for the research to be carried out with people as opposed to on them (Sharp, 2012); as such, documents in the form of reflective pieces of writing and individual structured interviews were selected from a suite of data collection methods available (as identified by Burton et al. (2014)). Thus, in an effort to ‘reject the traditional dichotomy between “qualitative methods” and “quantitative methods”’ (Plowright, 2011: 2), the research adopted a mixed-methods approach to data collection (Plano Clark and Ivankova, 2016), drawing on the combined strengths of the different methods selected to gather rich and informative data. The research was conducted in two stages, a summary of which is presented in Table 3.

Table 3. The staged approach of the small-scale research that was undertaken.

CoE: Center of Excellence.

Permission to undertake the research was sought by the then Deputy Director of the CoE programme in Kazakhstan and one of the UK-based principal developers of the programme. At Stage 1 (S1), two participants who were available and willing to be part of the research were invited to produce a reflective piece of writing (approximately 600 words) about research questions in action research projects. The participants were experienced CoE trainers, working specifically with principals. In their reflective writing, the participants were encouraged to reflect on the following:

Their prior knowledge of research questions as part of the cycle of action research;

Their experiences of using and formulating research questions with educators in their own training;

Their thoughts and perceptions of the ICCM.

At Stage 2 (S2), the two co-authors of this article conducted short (5-minute) individual, structured interviews with 64 CoE trainers who were being trained at Level 2. In total, 43 (67%) interviewees described themselves as being teachers who were training to be CoE trainers; the remaining 21 (33%) identified themselves as CoE trainers who had either already been trained in a particular level of the programme or had delivered training to teachers. The co-authors actively sought the informed consent of willing interviewees through verbal means (British Educational Research Association, 2011), asking four questions about their thoughts and perceptions of the ICCM. These interviews were undertaken by the co-authors due to their ability to speak the native languages of the interviewees (Kazakh and Russian). The interviews were conducted in two training rooms where two individual groups of CoE trainers were being trained; the short duration of each interview was purposeful in ensuring that the research and the involvement of the interviewees did not interfere with the principal commitment of the UK-based educators in effectively training the trainers. The S2 data were gathered over two afternoons and were subsequently collated and presented in tabular and graphic form. These data, along with the two reflective pieces of writing from S1, were then translated into English by an experienced interpreter for the benefit of the lead author of this article to read, analyse and report on. Conventional content analysis (Hsieh and Shannon, 2005) was used in an effort to interrogate the data generated from S1; descriptive statistics (Cohen et al., 2011) were utilised at S2. Support from numerous colleagues in the form of peer-debriefing (Guba, 1981) was actively sought by the lead author of this article to provide scholarly guidance to improve the quality of the research findings and conclusions that are presented below.

Research findings

Stage 1 (S1)

When reflecting on their prior knowledge of research questions as part of a cycle of action research, both participants offered a number of points of interest. The importance of the research focus was clearly recognised, but difficulties were expressed in terms of either identifying what the focus actually was (Participant X) or how to ‘narrow [the focus] down’ (Participant Y). A slight misunderstanding was noted as to whether the research focus should be on student learning (Participant Y) or on school management (Participant X). The research focus was regarded as being more important than the research question; indeed, despite there being an understanding that the research question was ‘set’ from the research focus (Participant Y), the very ‘presence’ of the research question was challenged by Participant X: ‘… if we know our research [focus] then why would we need the research question?’

When they reflected sensitively on their experiences of formulating action research questions with principals in their own training, the S1 participants highlighted numerous difficulties. The questions that were generated either prompted a Yes/No response or were too ‘complex [or] hard-to-measure’ (Participant Y). In the light of this reflection, when conducting their next set of training, the S1 participants taught the principals specific questioning skills and strategies in an effort to help them sharpen their research questions. However, further difficulties were noted in the principals’ understanding of questioning skills and in ‘convinc[ing] them that complex issues [in school could] be dealt with one aspect at a time, step by step’ (Participant Y). The two participants were subsequently introduced to the ICCM as part of their continuing professional development (this involved them observing and taking part in the training led by UK-based educators at Level 2). In their reflective writing, they were both invited to comment on their engagement with and thoughts about the framing device. The authors of this article will present and explore these reflections as part of the ‘Discussion’ section.

Stage 2 (S2)

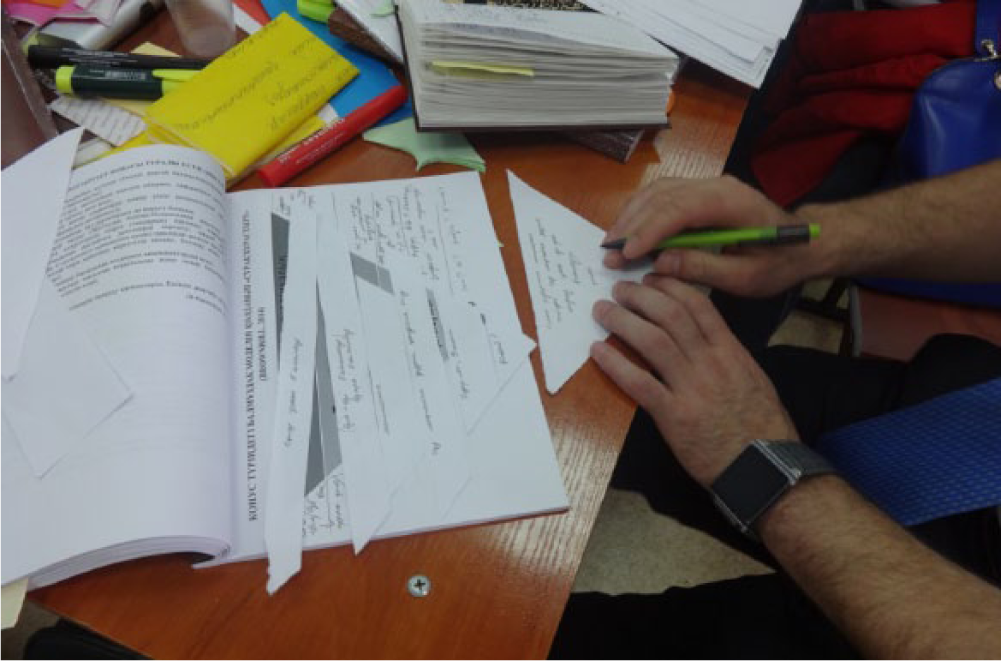

As has been previously mentioned, the ICCM was introduced by UK-based educators to CoE trainers as part of their Level 2 F2F1 training. Figure 3 shows a CoE trainer actively ‘cementing’ a good action research question together using key aspects that they had generated using the framing device.

Figure 3. Key aspects generated from the Ice Cream Cone Model being ‘cemented together’ to create a complete good research question to drive a cycle of action research (Brownhill).

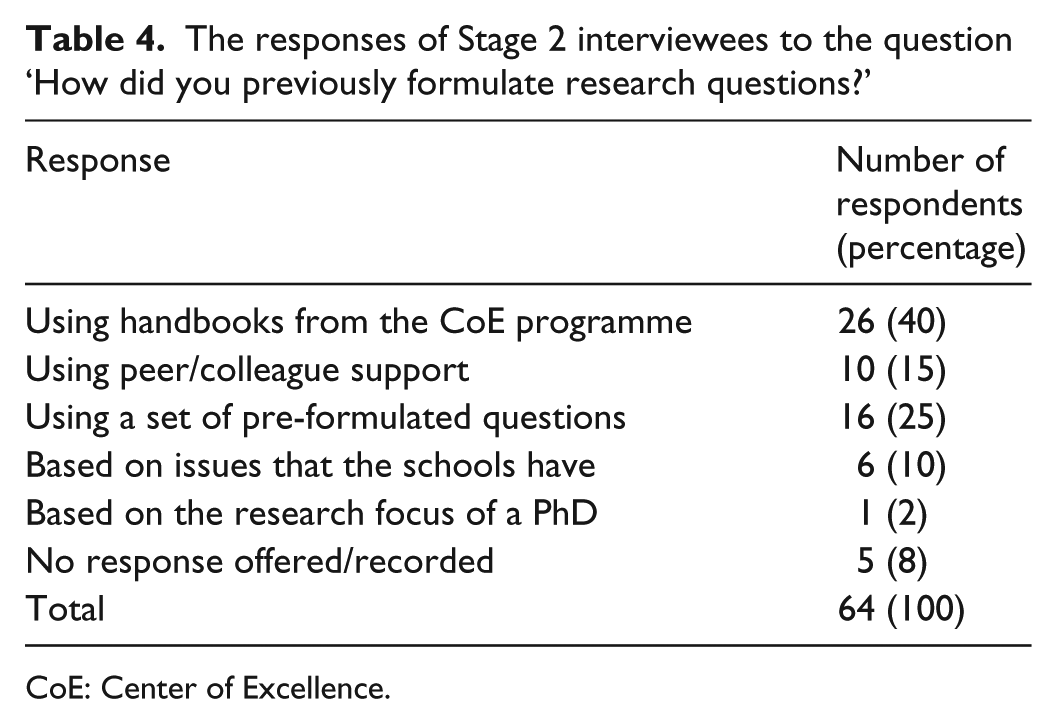

Following this input, two teaching groups were selected from the five groups of Level 2 CoE trainers that were being trained at the time. Members of each group were individually interviewed by one of the two co-authors to explore their thoughts and perceptions of the framing device. Interviewees were initially asked how they had previously formulated research questions in an effort to identify practices prior to the introduction of the ICCM. Five key responses were made (see Table 4).

Table 4. The responses of Stage 2 interviewees to the question ‘How did you previously formulate research questions?’

CoE: Center of Excellence.

A closer examination of the data highlighted that over three-quarters of those identifying themselves as CoE trainers (76%, n = 16) predominantly used a set of pre-formulated questions, whereas those who described themselves as teachers who were being trained as CoE trainers (60%, n = 26) were more likely to use the handbooks that served as an integral paper-based feature of the CoE programme for both trainers and teachers.

Interviewees were then asked whether their previous method of formulating research questions was easy or difficult. Only 19% (n = 12) of the interviewees considered their previous method to be easy, attributing this to either ‘having a good understanding of the concept during the … training’ (n = 11) or a ‘scientific background’ (n = 1). By contrast, 43 respondents (67%) stated that their previous method was ‘hard’; this was a result of them either not knowing (50%, n = 32) or not having learned (17%, n = 11) any strategies for formulating action research questions. No response was offered/recorded from the remaining nine respondents (14%).
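As a simple check on the descriptive statistics reported above, the following sketch recomputes each percentage from the counts given in the text and confirms that the three response categories account for all 64 interviewees. The variable and function names are assumptions made for illustration only; rounding to the nearest whole number is also assumed.

```python
# Counts as reported in the text for the 'easy or difficult' question.
TOTAL_INTERVIEWEES = 64
responses = {"easy": 12, "hard": 43, "no response": 9}


def percentage(count: int, total: int) -> int:
    """Return count as a percentage of total, rounded to a whole number."""
    return round(100 * count / total)


# Recompute the share of interviewees in each response category.
shares = {label: percentage(n, TOTAL_INTERVIEWEES) for label, n in responses.items()}

for label, share in shares.items():
    print(f"{label}: {share}% (n = {responses[label]})")

# The categories should account for every interviewee.
print("all interviewees accounted for:", sum(responses.values()) == TOTAL_INTERVIEWEES)
```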

When asked whether they thought the ICCM was effective in formulating action research questions, 98% (n = 63) of interviewees responded positively. The primary reason for this response was credited to the framing device being ‘specific and follow[ing] logical steps’ (88%, n = 57), with six respondents (10%) acknowledging how the model ‘helped to systemise one’s thinking’. Only one respondent (a teacher training to be a CoE trainer) did not consider the model to be effective; no explanation as to why they thought this was recorded.

Interviewees were asked whether they intended to use the ICCM in their future training with teachers; the response was unanimously positive (100%, n = 64). Three main reasons were offered to substantiate interviewees’ responses; these related to the framing device being perceived as being:

‘Helpful for teaching others’ (28%, n = 18);

Useful in assisting teachers to ‘select their research, the research focus and objective’ (23%, n = 15);

Valuable to aid professionals in ‘achiev[ing] the specific research objective’ (48%, n = 31).

Interviewees were finally asked whether they would adapt or change the ICCM. About 30% (n = 19) of interviewees said that they would not make any changes; 34% (n = 22) said that they had not thought about making any changes, and 36% (n = 23) said that they would change the model if necessary, but only after they had used the model with teachers that they trained.

Discussion

When analysing the documents from S1, the participants’ reflections not only mirrored a number of key points discussed in the ‘Review of existing literature’ section but also helped to support decisions made by the lead author of this article when developing the ICCM. Putman and Rock (2018) asserted that ‘determining what will be the focus of your action research project is the first step in the … action research process’ (p. 27) which justifies the positional location of the main research focus (the ‘ice cream’) at the top of the framing device (see Figure 1). The importance of the research focus as ‘the first step’ was clearly recognised by both S1 participants, but a greater emphasis was placed on the focus as opposed to the research question, thus challenging the thinking of Walshaw (2015: 24) who argued that research questions are ‘the most important part’ of any research. The S1 participants, in part, show support for Eriksson and Kovalainen (2015) who maintained that by establishing the research focus ‘you might refine and frame the original idea into [a] more precise research question’ (p. 29). Following their active engagement with the ICCM, Participant Y acknowledged the importance of setting clear action research questions, suggesting these to be ‘more important than the research [focus] itself’.

Difficulties experienced by the two S1 participants in relation to helping principals formulate action research questions mirror those that are recognised by Agee (2009) and Borg Debono et al. (2013). The authors of this article believe that the ICCM alleviates these difficulties by effectively supporting teacher researchers in formulating a specific, exploratory and measurable question that they can use to drive a cycle of action research. This view is supported by S2 findings where 98% (n = 63) of interviewees saw the model as being effective for formulating action research questions. The authors of this article believe this effectiveness relates not only to the amount of time needed to generate a question (as highlighted by Hine and Lavery, 2014) but also to the effort needed on the part of teacher researchers. Laws et al. (2013: 200) stated that researchers should ‘draft your question … early, and expect to redraft [it] many times’. While this thinking is supported by Hunter et al. (2013), the authors of this article challenge Laws et al.’s assertion, arguing that busy teacher researchers do not have the time to draft and redraft action research questions given the many demands already on their time. As will be discussed, the ICCM helps teacher researchers to generate good research questions with the minimum amount of both time and effort.

The approach to training on the CoE programme actively promotes collaboration between trainers and teachers, and between the teachers themselves, because ‘[w]hen teachers are able to work together then they are able to share good ideas and amplify the effects of all their teaching approaches’ (NIS, 2012b: 215). Surprisingly, only 15% (n = 10) of S2 interviewees sought peer/colleague support in formulating their action research questions (Table 4). Given that so many S2 interviewees found formulating research questions difficult (67%, n = 43), the authors of this article are surprised that more of them did not utilise the support that can be provided by fellow professionals to help them refine their action research questions, particularly as this practice is encouraged by Gilmore (2012): ‘When developing your questions, seek to establish content validity by sharing them with colleagues … [asking] them to critique the questions and suggest ways to improve the wording’ (p. 69). Instead, interviewees sought support from printed materials (handbooks; 40%, n = 26) or pre-formulated questions (25%, n = 16), a practice noted by Zhu (2015) but one which the authors of this article have strongly argued against (see page 2). The authors of this article believe that the ICCM effectively addresses this issue by encouraging CoE trainers and teachers to ‘take ownership’ of the research questions that they formulate, devising the question in response to known issues in school or professional areas of interest as opposed to those which experts in the field believe teacher researchers should investigate.

The authors of this article consider it to be important to reiterate Agee’s (2009) assertion that the quality of the research question is of real importance as ‘poorly conceived or constructed questions will likely create problems that affect all subsequent stages of a study’ (p. 431). As part of their reflection, the two S1 participants willingly offered examples of action research questions they had generated using the ICCM:

How can dialogic teaching increase the vocabulary of fifth grade (10–11 year olds) students in Kazakh Literature? (Participant Y);

How can I teach ninth grade (14–15 year olds) students to come up with effective solutions and provide evidence in Math using higher order questions? (Participant X).

Taking the latter question as an example, this question clearly demonstrates the different key aspects that are actively promoted by the ICCM (Figure 1):

Main research focus (the ‘ice cream’). Critical thinking;

Aspect of the main focus. Higher order questioning;

Purpose/impact. Generating effective solutions and providing evidence;

Research context. Mathematics;

Participants. Ninth grade pupils (14–15 year olds);

Question (stem). How?

The authors of this article feel that these two questions emulate some of the most significant elements of a good action research question, as recognised by Burns and Grove (2011) and Pine (2009), in terms of them being feasible, relevant, clear, concise, manageable, ‘easy to understand and achievable’, all characteristics which were also recognised as being important elements of a good action research question by both Participant X (S1) and interviewees at S2.

Conclusion

This article sought to report on the development and use of the ICCM as an innovative framing device to help teacher trainers (and subsequently teachers) overcome the known challenges of developing research questions to specifically drive a cycle of action research. The authors of this article believe that the ICCM is an empowering framework which helps teacher researchers to formulate good action research questions with relative ease. The authors have shown how the framing device helps users to establish a main research focus, narrow the focus down via a series of question prompts, concentrate the action research on pupils/students and their learning, and sharpen the action research question by making it specific and easy to measure, facilitating an exploratory response to develop critical thought and reflection. In its paper-based form, the ICCM presents a unique opportunity for teacher researchers to interact with key aspects of their research question, physically arranging and re-arranging them for cohesion purposes, something which the authors of this article believe established research question frameworks fail to offer.

The article also explored the thoughts and perceptions of Kazakhstani trainers with regard to the ICCM. Findings suggest that virtually all of the CoE trainers in the research saw the framing device as an effective way of formulating action research questions, with every one of them willing to use the model in their own training (S2). The validity of these findings may be questioned, given that the CoE trainers were asked whether they thought the device was effective before they had had a chance to use the model with teachers. The authors of this article stress that this research is just the first stage in evaluating the effectiveness of the model; while they appreciate the positivity of CoE trainers towards the framing device, they are keen to ascertain whether the trainers’ attitudes have altered in any way following their subsequent sharing of the model with teachers. The authors of this article would like not only to explore the views of CoE trainers but also to capture the thinking of the teachers who used the model to help them formulate research questions for their action research work, evaluating their thoughts about the perceived value and effectiveness of the framing device.

The authors of this article openly recognise the limitations of the research reported in terms of scope and generalisability. The research evidence presented is self-reported rather than observational in nature; CoE trainers at S2 believed the framing device to be useful, but no evidence has been presented that their research questions, or those devised by their teachers, are actually better than they would have been without the framing device. To address this limitation, participants from S1 were subsequently approached and invited to share with the lead author of this article examples of model-generated action research questions that had been devised by those whom they had recently trained. A total of 65 questions were offered that had been created by school leaders – principals (n = 50), deputy principals (n = 9) and those with senior positions for learning and teaching (n = 6) – as part of the F2F1 training the S1 participants had individually delivered in two separate regions of Kazakhstan. An exploration of the generated action research questions was made in relation to the ‘good’ characteristics that they exhibited. Initial observations highlighted the following:

About 92% (n = 60) of the action research questions facilitated an exploratory response using ‘How’ as the opening question stem (as recommended by Pine, 2009), for example, How do role play games played in self-knowledge lessons (8th Grade) influence students’ expressive speech skills? (Principal);

Virtually all of the research questions were focused (NSWDET, 2010) in terms of identifying the participants that the action research was ‘targeting’ and the purpose/impact of the action research, for example, How does formative assessment increase student interest in Math in the 5th grade? (Principal);

All of the questions had the potential to generate results that were ‘important and [could] change current ideas and/or practice’ (Wyatt and Guly, 2002: 320), for example, How can group work improve the motivation of 7th grade students to peer-teach? (Deputy Principal);

A good number of questions were considered to be interesting (Hulley et al., 2013) based on the level of intrigue on the part of the lead author of this article, for example, How does critical thinking improve the creativity of 6th grade students in Fine Arts lessons? (Principal).

While the authors of this article are able to show that the model actually works in practice, they recognise that further examination of these model-generated questions is needed to support their claims about the true efficacy of the ICCM. The authors of this article also aim to counter the limitations of the data that have been gathered to date by collecting more ‘objective’ evaluation evidence in future from CoE trainers and the teachers that they work with. At present, the authors of this article acknowledge that the framing device does not offer teacher researchers a ‘complete’ research question; further work is needed to consider how mortar words (see page 4) can be generated via the framing device to effectively produce ‘complete’ action research questions.

Freedman (2004: 100) argued that the future of research ‘rests in part on the quality of our research questions and their resulting investigations’. The authors of this article believe that the ICCM, with further development and research, could contribute positively to the future of action research. To that end, the authors of this article encourage teacher educators, and those involved in supporting teachers to carry out action research, to use and share the ICCM with professionals in an effort to help them ‘jump the first hurdle’ when engaging in action research.

Footnotes

Acknowledgements

The authors would like to express their deepest thanks to Fay Turner, Paul Warwick and Mary Christie, who willingly gave their valuable time to read and pass comment on earlier drafts of this paper. The lead author would also like to thank Rabiga Shokhan Rowell for her excellent translation of the research data gathered.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship and/or publication of this article.

Supplementary materials

Supplementary materials can be accessed by contacting