Abstract

Research on music psychology has increased exponentially over the past half century, providing insights on a wide range of topics underpinning the perception, cognition, and production of music. This wealth of research means we are now in a place to develop specific, testable theories on the psychology of music, with the potential to impact our wider understanding of human biology, culture, and communication. However, the development of more widely applicable and inclusive theories of human responses to music requires these theories to be informed by data that is representative of the global human population and its diverse range of music-making practices. The goal of the present paper is to survey the current state of the field of music psychology in terms of the participant samples and musical samples used. We reviewed and coded relevant details from all articles published in Music Perception, Musicae Scientiae, and Psychology of Music between 2010 and 2022. We found that music psychologists show a substantial tendency to collect data from young adults and university students in Western countries in response to Western music, replicating trends seen across psychology research as a whole. Even data collected in non-Western countries tends to come from a similar demographic to studies of Western participants (e.g., university students, young adults). Some positive trends toward increasing participant diversity have been evidenced over the past decade, although there is still much work to be done, and certain subtopics in the field appear to be more prone to these sampling biases than others. We discuss recent methodological developments in the field that promote further diversification of our research and highlight subsequent changes that will be needed at group or institutional levels.

Music psychology is a growing research field, with the number of published papers in this area increasing exponentially since the launch of its first dedicated journal, Psychology of Music, in 1973 (Anglada-Tort & Sanfilippo, 2019). Music psychology research has addressed a wide range of topics, from the perception of musical features to musical learning, memory, and development, from emotional and aesthetic responses to music to the psychological processes underpinning performing and composing music, and its rehabilitative potential (Hallam et al., 2014; Thompson, 2015). This work intersects with several other psychology subdisciplines, including cognitive, developmental, social, evolutionary, clinical, and performance psychology, whilst also drawing upon methods and theories from a range of other fields such as neuroscience, computer science, sociology, and musicology. Research in music psychology thereby has great potential to inform and impact broader theories of human evolution, perception, development, learning, emotion, creativity, and communication.

Given the now established and relatively mature state of this field, music psychologists should be adequately placed to develop specific, testable theories about how humans perceive, respond to, and make sense of music. However, the development of more widely applicable and inclusive theories on the psychology of music, which may subsequently shape broader theories about human cognition, requires a base of research from across the spectrum of human experiences with music. This includes, for instance, responses from participants across the lifespan, from varying geographic regions and cultural groups, and with a range of previous experiences with music. It also means “music” should be understood in a broad sense to encapsulate the diversity of musical styles and genres that humans engage with across the globe.

A range of research in psychology in general has demonstrated that theories and models based on responses from a limited participant demographic are biased or incomplete. That is, generalizations about human cognition that are made based on relatively demographically homogenous samples have in various cases failed to replicate in other participant groups. Perhaps the most highly cited example of such work is that of Joseph Henrich and colleagues on the “WEIRD” problem (Henrich et al., 2010). WEIRD stands for Western, Educated, Industrialized, Rich, and Democratic, and this acronym was developed in reference to the fact that most previous psychological research has been conducted with participants from societies where the majority of the population can be characterized as WEIRD, such as the US and UK. Specifically, “96% of psychological samples come from countries with only 12% of the world's population” (Henrich et al., 2010, p. 63). Henrich et al. (2010) go on to provide numerous examples of how research findings on a diverse range of topics including visual perception, spatial cognition, economic decision making, cooperation, conformity, and moral reasoning differ between more versus less WEIRD populations, thereby accentuating the potential pitfalls of developing theories about human cognition based solely on data from WEIRD samples. Henrich et al. (2010) also criticize the overreliance of psychology research on undergraduate student samples, mainly in relation to the “E” in WEIRD. It is furthermore worth noting the significant age biases introduced by such an approach, especially given the age differences known to exist in relation to various cognitive, emotional, and social processes (e.g., Defoe et al., 2015; Reed et al., 2014; Sparrow et al., 2021; Spencer & Raz, 1995). A follow-up article published 10 years after Henrich et al. (2010) summarizes many notable advances in the field since the introduction of the WEIRD concept, whilst also highlighting that the problem is far from solved (Apicella et al., 2020). For instance, a review of all papers published in Psychological Science in 2014 and 2017 showed that around 94% of studies still relied on participants sampled from Western countries (operationalized in this case as English-speaking countries, countries in Europe, or Israel; Rad et al., 2018).

Subsequent research has advocated for broadening or going beyond the WEIRD framework to consider other key demographic and cultural differences that predict behavioral diversity. For instance, differences in race and ethnicity, religion, community size, and cultures with strong versus weak social norms are not explicitly captured within the WEIRD framework, despite evidence that these variables can also have substantial impacts on human values and behaviors (Barrett, 2022; Clancy & Davis, 2019; Gelfand et al., 2011; Muthukrishna et al., 2020; Roberts et al., 2020). “WEIRDness” has also often been treated as a binary variable, whereby those living in certain countries are automatically classified as WEIRD or not without regard to their individual circumstances. Some recent research has gone beyond binary classification by computing “cultural distance from the US” as a continuous variable; this metric is still typically applied at a whole-country level, although this analysis technique can technically be extended to compare regional differences within a country, for instance (Muthukrishna et al., 2020). In general, there has been a prominent tendency within cross-cultural research to make comparisons between participants from different countries without capturing the wide cultural diversity that typically exists within a single country (Barrett, 2022; Hartmann et al., 2013; see Jacoby et al., 2024 for one recent music psychological study revealing rhythm perception differences between participant subgroups both across and within countries).

In music psychology, there is evidence of similar sampling biases, although a comprehensive summary of these is lacking. For instance, in a review of studies published in three music psychology journals from 1973 to 2017, Anglada-Tort and Sanfilippo (2019) found that the vast majority of the corresponding authors on these papers were from North America, Western Europe, or Australia. A review focused on the representation of authors from different European countries in articles published in Musicae Scientiae from 1997 to 2017 revealed a greater prevalence of authors from western and northern than eastern and southern Europe (Sloboda & Ginsborg, 2018). One might thereby reasonably assume that most research participants in these studies were likewise from these countries, although this was not examined in either of these two reviews. A preliminary study in our research group indicated that, within 90 studies on the effects of background music listening on concurrent task performance, 71% of the studies were from the US or UK, and 66% relied solely on university students, with a mean age across all the studies (weighted by sample size) of 22.6 years.¹ In addition, an analysis of articles published in Music Perception from 1983 to 2010 revealed that 75% of studies reviewed used musicians as at least one subgroup of their participants (Tirovolas & Levitin, 2011). This suggests a potential bias toward relying on participant samples with a higher level of domain-specific expertise than the general population, although the exact amount of musical training could not be definitively specified due to a lack of consistent reporting across studies. It remains to be seen whether such a trend has persisted in the field since 2010.

Another issue more specific to the music psychology field is how “music” is operationalized in research. The definition of what constitutes “music” varies widely across different countries and contexts, and thereby needs to be flexible and culturally informed (Jacoby et al., 2020). Beyond definitional issues, biases related to the background of the researchers and participant sample used can lead to biases in the stimuli selected for use in music psychological studies. For instance, 74% of the music collated in a review article on music stimuli previously used in emotion research by Warrenburg (2020) fell into the genre categories of (primarily Western) popular music (e.g., US chart-topping songs), Western art music (primarily by canonical composers such as Mozart and Beethoven), and Western film music. An analysis of the genres of music stimuli used in emotion research over time showed a heavy reliance on Western art (classical) music in the 1920s–1990s, followed by an upsurge of more studies using popular music in the 2000s–2010s (Warrenburg, 2020; see also similar results in Diaz & Silveira, 2014 and Tirovolas & Levitin, 2011). In a review of studies published in Music Perception from 1983 to 2010, a trend toward using more ecologically valid stimuli over time (i.e., sequential music, rather than “isolated sounds”) was also found (Tirovolas & Levitin, 2011).

However, large-scale studies focusing specifically on the demographic makeup and participant and musical sampling methods within music psychology as a whole are lacking. Given that previous research has found that perceptual, emotional, aesthetic, and imaginative responses to music may vary significantly across participants from different countries and cultural groups (Jacoby et al., 2019; Jacoby & McDermott, 2017; Jakubowski et al., 2022; Laukka et al., 2013; Margulis et al., 2022; McDermott et al., 2016), there is a need to provide appropriate contextualization and acknowledge the generalizability constraints on the conclusions drawn in our field to date. Similarly, theories about how music is perceived or responded to that are developed solely in relation to Western (art) music may not be applicable to groups of people whose music is organized in a different way (for example, in terms of its harmonic structure; Athanasopoulos et al., 2021; Lahdelma et al., 2021; Lahdelma & Eerola, 2023). Indeed, multiple recent position papers in our field have called for the inclusion of a greater diversity of musics, cultures, and collaborators in our research (Baker et al., 2020; Jacoby et al., 2020; Sauvé et al., 2023).

The aim of this article is to summarize the current state of the field of music psychology in relation to the participant samples and musical samples that are used. Specifically, we reviewed all articles published in Music Perception, Musicae Scientiae, and Psychology of Music between 2010 and 2022, and extracted details about both the participant samples and musical samples from each paper, as well as the keywords and bibliographical information. This allowed us to provide a comprehensive overview of the typical samples and music being employed in the field over the past 13 years, as well as within several subdomains in the field, with a view to making recommendations for future research priorities.

Method

Data Selection

We reviewed all articles published in Music Perception, Musicae Scientiae, and Psychology of Music between 2010 and 2022. These three journals were chosen as they represent the most prominent and longest-running journals in music psychology; our approach also follows other bibliometric reviews in this field (Anglada-Tort & Sanfilippo, 2019).² We note that there exist many other articles that could be classified within the field of “music psychology” that have been published in general psychology journals, journals on music education, neuroscience journals, and so on. However, we chose this approach to allow us to provide a comprehensive review of three journals that fit squarely within this field rather than, for instance, searching all existing journals in the fields of music and psychology, to avoid potentially missing particular relevant articles on account of our selected search terms, and to avoid having to make a subjective judgment on each individual article identified as to whether it should be considered a “music psychology” article. We excluded from consideration all book reviews, editorials, articles that were purely theoretical in nature, and review articles that did not report any new data. Our dataset thereby comprises 1,360 articles out of a total of 1,668 items (81.5%) published in these three journals between 2010 and 2022: 738 articles from Psychology of Music, 348 from Music Perception, and 274 from Musicae Scientiae.

Data Coding

For each included article, we coded details from each reported study into a spreadsheet. Most articles (n = 1,158; 85%) reported only one study, but some articles reported as many as eight separate studies. For each study, the paper title, year published, journal, first author's name, and keywords of the paper were noted. To provide an overview of the geographic location of the work, the country of the first author's affiliated institution, country(s) the data were collected in, and the primary country(s) of origin of the participant sample(s) were also noted. If a first author reported two affiliated institutions in different countries, we retained only the first affiliation listed (this operation affected less than 1% of our dataset, specifically, 12 studies from 7 articles). We also coded the primary ethnicity of the participant sample, total sample size, sample age mean, sample age standard deviation, number of female participants, and number of male participants.³ In addition, the mean years of education of the participant sample, any musicianship description of the participant sample, and any other description (e.g., “undergraduate students”) were coded, as well as the recruitment method (i.e., “volunteers”, “course credit”, “paid”, “other”).

If any music was used (for instance, as stimuli or in corpus analysis), we coded the primary musical genre(s) used for each study and origin country(s) of the music. Musical genre labels were ascertained directly from musical stimulus descriptions given in each article. We also coded the compositional source of the music, specifically, whether it was pre-existing music that the researchers utilized as is (labeled in our dataset as “precomposed”), or whether the music was specifically composed for research purposes (labeled in our dataset as “experimenter-created”).

In cases where the word “primary” is used in the above variable descriptions, such variables were coded on the basis of the majority of a participant sample or musical stimulus set falling into a particular category. For example, “primary country of origin of the participant sample” was coded as the country where the majority of participants came from (e.g., if 60% of participants were from the US and 40% were from Canada, this was coded as “US”); we acknowledge that this removes some granularity from the dataset, but it simplified the further processing steps for expanding the dataset into subsamples by study. If samples were equally divided (e.g., 50% of participants were from the US and 50% were from Canada), both countries were coded. In the case of the “primary country of origin of the participant sample” variable, we also coded multiple countries if an explicit cross-cultural comparison was made by the authors, regardless of whether the split of participants was exactly equal (e.g., if a cross-cultural study was conducted comparing musical responses between 60% participants from the US and 40% from India, both “US” and “India” were coded).
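The coding rule for “primary” variables can be illustrated with a short sketch. This is a hypothetical Python helper written for illustration only; the coding itself was carried out by hand in a spreadsheet. The function name, the input format (one country label per participant), and the reading of “majority” as the largest share are all assumptions.

```python
from collections import Counter

def code_primary_countries(participant_countries, cross_cultural=False):
    """Return the country label(s) to code for one participant sample.

    participant_countries: one country name per participant
        (hypothetical input format, not the authors' spreadsheet layout).
    cross_cultural: True if the study makes an explicit cross-cultural
        comparison, in which case every compared country is coded.
    """
    if not participant_countries:
        return ["NA"]  # missing data are coded as "NA"
    counts = Counter(participant_countries)
    if cross_cultural:
        # Code all compared countries, regardless of the exact split.
        return sorted(counts)
    largest = max(counts.values())
    # One country with the largest share, or every country tied for it.
    return sorted(c for c, n in counts.items() if n == largest)

# Examples mirroring the cases described in the text.
print(code_primary_countries(["US"] * 60 + ["Canada"] * 40))
print(code_primary_countries(["US"] * 50 + ["Canada"] * 50))
print(code_primary_countries(["US"] * 60 + ["India"] * 40, cross_cultural=True))
```

The three calls correspond to the 60/40 majority case, the equal split, and the explicit cross-cultural comparison.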

All missing data were coded as “NA” values. For example, papers that reported only musical corpus studies (with no human participants) received “NA” entries for all participant-related columns, but the details of the music sample were still included.

Data Availability Statement

A full list of instructions provided to the coders who processed the data (all authors on this paper), the data from this coding process, and all analysis scripts are openly available here: https://tuomaseerola.github.io/WEIRD/.

Data Analysis

In the data cleaning stage, we collapsed some subcategories that emerged in the coding process. For the musicianship description variable, we included the following subcategories as “musicians” for our subsequent analyses: professional musicians, amateur musicians, music students, and music teachers. For the broader description of the participant sample (beyond musicianship), the following subcategories of participants emerged: adults, amusics, audience, caregivers, children, clinicians, infants, older adults, patients, undergraduate students, university students, and university+ (“university+” comprised samples of primarily university students supplemented by community members, academic staff, etc.). For our analyses we considered any studies that involved the undergraduate students, university students, and university+ subcategories as “university samples.”

For analyses related to the keywords of articles, we created a simplified list of all keywords used, reducing variant spellings and forms (e.g., expressiveness, expressivity, and expression were all categorized under “expression”) and grouping similar concepts together (e.g., health, well-being, and wellbeing were all categorized as “health”). We also omitted the keyword “music”, given its high prevalence across the dataset. A full list of these operations can be found in the accompanying analysis scripts on GitHub.
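A minimal sketch of this kind of keyword simplification is given below. It is illustrative only: the mapping contains just the two examples mentioned above, and the names `KEYWORD_MAP`, `OMITTED`, and `simplify_keywords` are hypothetical rather than taken from the analysis scripts.

```python
# Hypothetical excerpt of a variant-to-concept mapping; only the two
# examples given in the text are included here.
KEYWORD_MAP = {
    "expressiveness": "expression",
    "expressivity": "expression",
    "expression": "expression",
    "health": "health",
    "well-being": "health",
    "wellbeing": "health",
}

# Keywords dropped because they occur across almost the whole dataset.
OMITTED = {"music"}

def simplify_keywords(keywords):
    """Lower-case, drop omitted keywords, and collapse known variants."""
    simplified = []
    for keyword in keywords:
        key = keyword.strip().lower()
        if key in OMITTED:
            continue
        # Unknown keywords pass through unchanged (lower-cased).
        simplified.append(KEYWORD_MAP.get(key, key))
    return simplified

print(simplify_keywords(["Music", "Expressivity", "well-being", "rhythm"]))
```

Keywords without an entry in the mapping are kept as they are, so the mapping only needs to list variants that should be merged.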

We classified the country data (specifically, the first author's affiliation country, data collection country,⁴ and country of origin of participants) as WEIRD or non-WEIRD using a recent list of WEIRD countries by Krys et al. (2024), which classifies countries in the EU or EFTA, Australia, Canada, New Zealand, UK, US, and Israel as WEIRD countries. Alternative metrics were also initially explored, including classifying countries by membership in the Western European and Other States Group (WEOG) and a measure of cultural distance from the US developed by Muthukrishna et al. (2020); these resulted in minimal differences from the results reported here (see full results in online data repository). For the origin country of the musical stimuli, we also coded music that was from one of the countries within our list of WEIRD countries (e.g., Western European classical music, US chart-topping pop songs) as “Western”.
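The binary classification described above amounts to a set-membership lookup, sketched below. This is an illustration under assumptions: the EU set lists the 27 current member states, the country-name spellings are hypothetical, and the exact list used by Krys et al. (2024) and in the analysis scripts may differ in detail.

```python
# Assumed membership lists; spellings are illustrative, not the dataset's own.
EU = {
    "Austria", "Belgium", "Bulgaria", "Croatia", "Cyprus", "Czechia",
    "Denmark", "Estonia", "Finland", "France", "Germany", "Greece",
    "Hungary", "Ireland", "Italy", "Latvia", "Lithuania", "Luxembourg",
    "Malta", "Netherlands", "Poland", "Portugal", "Romania", "Slovakia",
    "Slovenia", "Spain", "Sweden",
}
EFTA = {"Iceland", "Liechtenstein", "Norway", "Switzerland"}
OTHER_WEIRD = {"Australia", "Canada", "New Zealand", "UK", "US", "Israel"}

WEIRD_COUNTRIES = EU | EFTA | OTHER_WEIRD

def classify_country(country):
    """Return "WEIRD", "non-WEIRD", or "NA" for a coded country label."""
    if country is None or country == "NA":
        return "NA"  # missing country information stays missing
    return "WEIRD" if country in WEIRD_COUNTRIES else "non-WEIRD"

for name in ["Finland", "Japan", "Brazil", "NA"]:
    print(name, classify_country(name))
```

A continuous alternative, such as the cultural distance measure mentioned above, would replace the set lookup with a numeric score per country.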

Three levels of analysis were utilized as appropriate. The first was at the level of individual articles (N = 1,360). The second was at the study level, since some articles comprised multiple studies (N = 1,622). For analyses of features of musical samples, we focused on the 1,047 of these studies that utilized music (either for corpus analysis or as stimuli for research participants). Of the 1,622 total studies, 1,532 (94%) involved human participants (the others were solely corpus studies). Some of these human studies involved participants from multiple countries (e.g., a study comparing participant groups in Finland and India); for such studies we expanded the dataset into a third level which we refer to as the sample level (N = 1,589). At this sample level, there were 1,411 samples for which the researchers provided information on the primary country of data collection (89% of all samples). In our subsequent analyses, we compared demographic differences of these 1,411 participant samples between WEIRD vs. non-WEIRD countries (according to the Krys et al., 2024 classification); specifically, 1,251 of these samples came from WEIRD countries and 160 samples from non-WEIRD countries.
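The step from the study level to the sample level can be sketched as follows. The record layout (`study_id`, `has_participants`, `countries`) and the function name are hypothetical, chosen only to illustrate the expansion; they are not the column names of the published dataset.

```python
def expand_to_samples(studies):
    """Expand study-level records into one record per participant sample.

    Each study is a dict with hypothetical keys:
      "study_id": identifier of the study,
      "has_participants": False for corpus-only studies,
      "countries": list of coded countries (may be empty if unreported).
    """
    samples = []
    for study in studies:
        if not study["has_participants"]:
            continue  # corpus-only studies contribute no participant sample
        # A study with unreported country still counts as one sample.
        countries = study["countries"] or ["NA"]
        for country in countries:
            samples.append({"study_id": study["study_id"], "country": country})
    return samples

studies = [
    {"study_id": 1, "has_participants": True, "countries": ["Finland", "India"]},
    {"study_id": 2, "has_participants": True, "countries": []},
    {"study_id": 3, "has_participants": False, "countries": []},
]
for sample in expand_to_samples(studies):
    print(sample)
```

In this toy input, three studies yield three samples: two from the cross-country study, one from the study with an unreported country, and none from the corpus-only study.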

Results

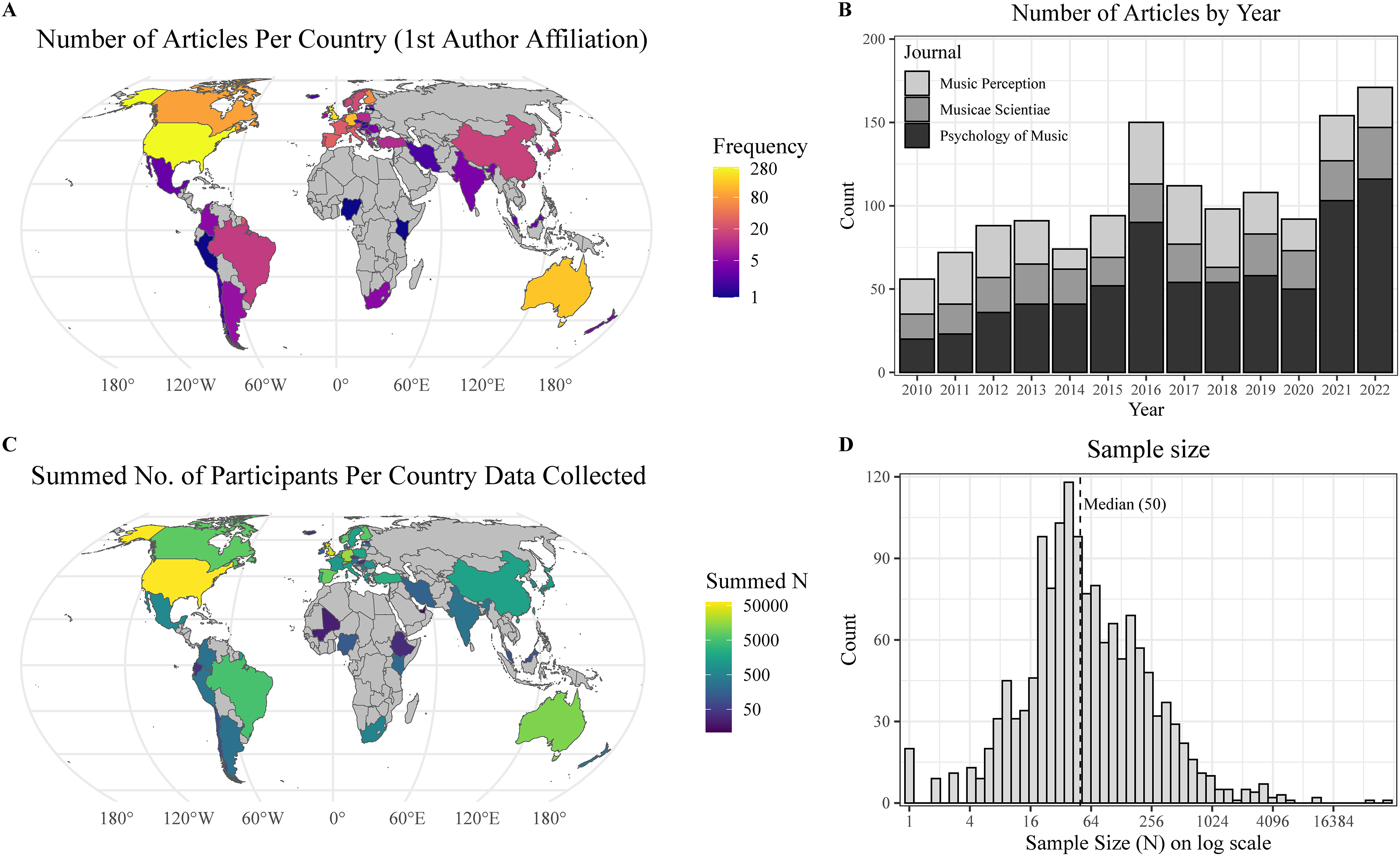

In total, 1,360 articles reporting 1,622 studies and 1,589 samples were included in our dataset. Over half of the articles were published in Psychology of Music (738), with 348 articles published in Music Perception and 274 in Musicae Scientiae (see Figure 1B for number of articles by year). In these 1,360 articles, the most frequently listed countries for the first author's affiliated institution were the US (22% of articles), UK (15%), Australia (11%), Germany (9%), Canada (6%), and Finland (5%); overall, 91% of first author affiliations were from WEIRD countries (see Figure 1A). Similarly, data for the studies reported in these articles were most frequently collected in the following countries: US (22% of studies), UK (11%), Australia (8%), Germany (7%), Canada (7%), and Finland (3%), while 9% of datasets were collected online. The non-WEIRD countries where data collection most frequently occurred were Japan (1.2%), China (1.0%), and Brazil (1.0%). Figure 1C shows the total number of participants tested across all studies by country of data collection. The primary country of origin of the participant sample was reported less frequently than the country where the data were collected (54% of the time versus 96% of the time, respectively), although we might presume that in cases where the former was not reported it is because the sample primarily originated from the country in which the data were collected. For studies where the origin country of participants was reported, the most frequently appearing six countries remain the same as the two lists above (although with Germany now listed third and Australia fourth in rank order).

Figure 1. Summary of dataset regarding A) number of articles per country based on first author affiliation, B) number of articles per year by journal, C) total number of participants collected per country across all participant samples, and D) distribution of sample size (log scale) across all samples. Country data prepared with countrynames (Arel-Bundock et al., 2018) and rnaturalearth (Massicotte & South, 2023) packages.

Overall, the sample size was reported for 96% of the 1,532 studies that used human participants. Sample size ranged from 1 to 56,626 participants (the largest being a study of Twitter users), with a median of 50, and 68% of these studies had a sample size of fewer than 100 participants (see Figure 1D). Sample age data was reported in 70% of the 1,532 studies (for 202,710 participants in total). The mean age of all participant samples was 26.5 years, and the mean age weighted by study sample size was 27.1 years; 64% of all participant samples had a mean age between 18 and 30 years. For studies that reported these details (76% of studies with 214,367 participants in total), the percentage of female participants, weighted by sample size, was 61%.
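The difference between the unweighted and the sample size-weighted mean age can be made concrete with a small sketch. The helper below is illustrative (the function name and the toy numbers are not from the dataset); it skips samples where either the mean age or the sample size is missing, which is an assumption about how missing values would be handled.

```python
def weighted_mean(values, weights):
    """Mean of values weighted by weights, skipping pairs with missing data."""
    pairs = [(v, w) for v, w in zip(values, weights)
             if v is not None and w is not None]
    total_weight = sum(w for _, w in pairs)
    if total_weight == 0:
        return None  # nothing to average
    return sum(v * w for v, w in pairs) / total_weight

# Toy example: two samples with reported ages, one with age missing.
mean_ages = [20.0, None, 30.0]
sample_sizes = [30, 50, 10]

reported = [a for a in mean_ages if a is not None]
print(sum(reported) / len(reported))           # unweighted mean of sample means
print(weighted_mean(mean_ages, sample_sizes))  # weighted by sample size
```

Weighting pulls the estimate toward the larger samples, so one very large sample can shift the weighted mean noticeably.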

Table 1 shows the participant and musical sample variables across studies with data collected in WEIRD versus non-WEIRD countries. Participant samples collected in non-WEIRD countries were somewhat larger but not significantly so (Mann–Whitney test, U = 103461, p = .486). However, samples collected in non-WEIRD countries were significantly younger (sample size-weighted, tw(203.7) = 9.65, p < .001) and showed less age variation (tw(151.2) = 8.26, p < .001) than samples collected in WEIRD countries. In both WEIRD (59%, 95% CI [57%, 61%]) and non-WEIRD (54%, 95% CI [49%, 56%]) countries there was a tendency to collect more data from female than male participants, but this bias was more exaggerated in WEIRD countries (tw(139.2) = 4.21, p < .001).

Table 1. Summary of key variables across samples from WEIRD and non-WEIRD countries.

Notes. Binary country classification into WEIRD versus non-WEIRD is based on the method of Krys et al., 2024. All participant-related summaries are based on individual samples within studies utilizing participants where primary country of data collection was given (N = 1,411). The descriptors relating to musical samples (experimenter-created music, Western music, and music origin country unspecified) are derived from a subset of studies utilizing music (N = 1,047). Sample sizes are compared statistically with a Mann–Whitney test. Age and gender balance t-tests have been weighted by the sample sizes. Proportions of musicians/non-musicians, university students, volunteers, experimenter-created music, Western music, and cases in which these variables were not specified have been analyzed with χ² tests. Values in brackets refer to 95% confidence intervals obtained through bootstrapping. Each row name listed in italics shows analysis of missing data for the variable listed directly above it.

In total, 30% of all samples were solely musicians (e.g., performers, composers, music teachers, music students), 10% were solely non-musicians, and 22% involved both musicians and non-musicians; the musicianship of participants was not reported for 37% of samples. Table 1 shows that the percentage of samples comprising these different musicianship categories did not significantly vary across WEIRD and non-WEIRD countries (for the purpose of this comparison, the 37% of samples not reporting this information were classified as comprising both musicians and non-musicians).

Overall, 21% of samples comprised solely university students, and a further 12% were solely undergraduates, while 9% of samples involved children or infants, and information on this aspect of the sample was not reported in 53% of cases. The percentage of samples using university populations did not significantly differ between studies conducted in WEIRD (41%) and non-WEIRD countries (43%; see Table 1). Given the large number of cases in which this aspect of the sample was not specified by the researchers, we also examined whether the percentage of samples with missing data differed between WEIRD and non-WEIRD countries. This is denoted by the row labeled “Sample description unspecified” in Table 1. A significantly lower percentage of missing data was found in non-WEIRD countries (χ² = 4.41, p = .039).
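For readers less familiar with this type of comparison, the sketch below shows a Pearson χ² test of independence on a 2×2 table of counts. It is an illustration, not the analysis script: the counts are invented, the function name is hypothetical, and it assumes 1 degree of freedom with no continuity correction, which may differ from the settings actually used.

```python
import math

def chi_square_2x2(table):
    """Pearson chi-square test of independence for a 2x2 table of counts.

    table: [[a, b], [c, d]], e.g. rows = WEIRD / non-WEIRD and
    columns = unspecified / specified (hypothetical layout).
    Returns (chi2, p) for 1 degree of freedom, no continuity correction.
    Assumes all row and column totals are non-zero.
    """
    (a, b), (c, d) = table
    n = a + b + c + d
    row_totals = (a + b, c + d)
    col_totals = (a + c, b + d)
    chi2 = 0.0
    for i, row in enumerate(table):
        for j, observed in enumerate(row):
            expected = row_totals[i] * col_totals[j] / n
            chi2 += (observed - expected) ** 2 / expected
    # With 1 degree of freedom the upper-tail probability is erfc(sqrt(chi2 / 2)).
    p = math.erfc(math.sqrt(chi2 / 2))
    return chi2, p

# Invented counts, for illustration only.
chi2, p = chi_square_2x2([[10, 20], [20, 10]])
print(round(chi2, 2), round(p, 4))
```

Using the closed-form tail probability keeps the example free of external dependencies; a statistics library would normally be used in practice.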

In terms of participant recruitment, 30% of samples were solely volunteers, 10% were solely paid participants, 9% comprised solely those recruited for course credit, and 9% used some combination of these strategies (or other rewards), while this factor was not reported for 41% of samples. The balance of volunteer versus non-volunteer participants did not significantly differ across samples collected in WEIRD (30%) versus non-WEIRD (34%) countries (see Table 1). The percentage of missing data in relation to this variable was significantly higher in non-WEIRD than WEIRD countries (“Recruitment method unspecified” in Table 1; χ² = 3.88, p = .049).

Only 9% of samples included ethnicity data (within this, 53% of samples were primarily white and 26% were primarily Asian) and years of education were reported for 6% of samples (within this, the mean number of years of education was 12.6). Due to the large amount of missing data, these two factors of ethnicity and years of education were not considered further in our analyses.

In total, 65% of all studies (N = 1,047) used some sort of music (the others were, for example, interview or questionnaire studies that did not explicitly present any musical stimuli to participants or analyze a corpus of music). From this set of studies, 56% used precomposed music (e.g., existing recordings or notated music), 33% used experimenter-created music, and 5% used semi-precomposed music (e.g., existing music that was reworked or edited for the purposes of the experiment); the other 6% of studies used some combination of these categories. The percentage of studies using experimenter-created music did not significantly differ between studies conducted in WEIRD versus non-WEIRD countries (see Table 1). Artificial sounds (e.g., sine waves, single tones) were used as stimuli in 21% of all studies.

Solely Western music was used in 71% of studies, and the most frequently used Western genres were classical (47% of studies using Western music), pop (15%), rock (9%), and jazz (9%). Western music stimuli were used significantly more often in WEIRD countries (73%, 95% CI [70%, 76%]) than non-WEIRD countries (χ² = 11.95, p < .001, see Table 1), although even in non-WEIRD countries Western music was used in the majority of studies (59%, 95% CI [53%, 67%]). The country of origin of the music used was not specified in 23% of studies overall, with no significant difference between the amount of missing data for studies in WEIRD versus non-WEIRD countries (“Music origin country unspecified” in Table 1).

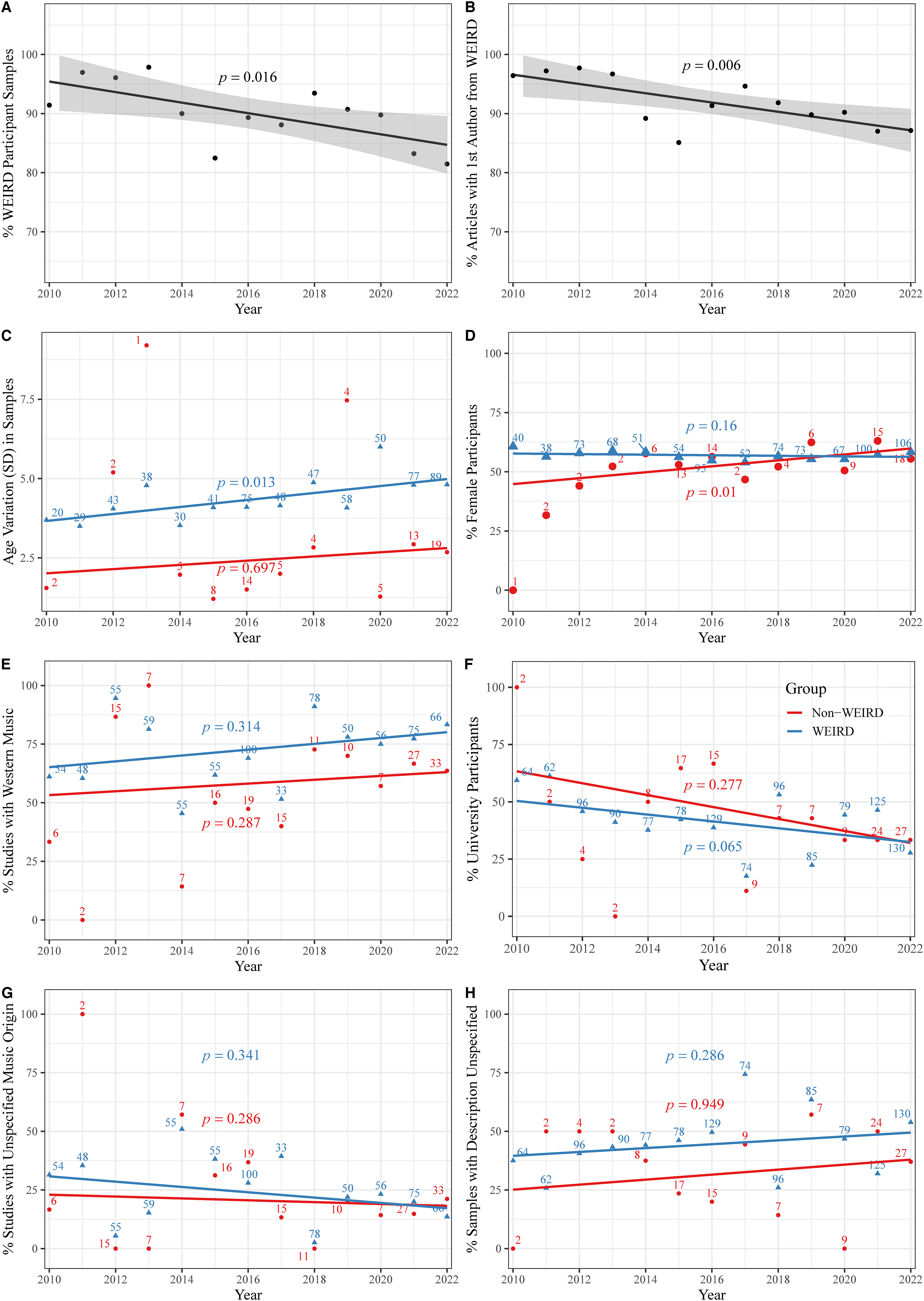

We also examined trends over time in relation to the year of publication of each study. Figure 2 (subplots A and B) shows that the percentage of participant samples from WEIRD countries is decreasing over time, as is the percentage of studies with first authors from WEIRD countries, although both at a relatively slow (but statistically significant) rate, with the number of samples and first authors from WEIRD countries in the final year of data collection (2022) still both above 80%. Samples from WEIRD countries are gradually becoming more varied in age; there is also a non-significant trend in this direction for samples from non-WEIRD countries, although it is difficult to make strong conclusions due to the low number of observations per year (Figure 2C). Studies conducted in both WEIRD and non-WEIRD countries are tending to use fewer university participant samples over time, although these trends are not statistically significant (Figure 2F). The percentage of missing data in relation to the sample description (Figure 2H) has not significantly changed over time in either WEIRD or non-WEIRD countries, though WEIRD countries show a greater percentage of missing data across the 13-year period (see also Table 1). The use of Western music samples and percentage of female participants show relatively little systematic change over time (Figure 2D & 2E). The percentage of missing data in relation to the country of origin for the music used does not show a significant change over time for either WEIRD or non-WEIRD countries (Figure 2G).

Figure 2. Trends across time for key metrics. The numbers next to the markers refer to the number of observations in the dataset. The p-values refer to statistical significance of frequency-weighted linear trends.
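A frequency-weighted linear trend of this kind can be sketched as a weighted least-squares fit of a yearly percentage on publication year. The sketch below is an assumption about the general approach rather than a reproduction of the analysis scripts: it takes the weights to be the number of observations per year, uses invented numbers, and does not compute the associated p-value.

```python
def weighted_linear_trend(years, values, weights):
    """Weighted least-squares line through (year, value) points.

    weights: number of observations behind each yearly value
    (assumed meaning of "frequency-weighted").
    Returns (slope, intercept).
    """
    total = sum(weights)
    mean_x = sum(w * x for x, w in zip(years, weights)) / total
    mean_y = sum(w * y for y, w in zip(values, weights)) / total
    sxy = sum(w * (x - mean_x) * (y - mean_y)
              for x, y, w in zip(years, values, weights))
    sxx = sum(w * (x - mean_x) ** 2 for x, w in zip(years, weights))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept

# Invented yearly percentages and observation counts, for illustration only.
years = [2010, 2011, 2012]
percent_weird = [90.0, 88.0, 86.0]
n_observations = [100, 120, 90]

slope, intercept = weighted_linear_trend(years, percent_weird, n_observations)
print(round(slope, 3))  # change in percentage points per year
```

Years with more observations pull the fitted line more strongly, which limits the influence of sparsely populated years.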

Finally, we conducted an initial exploration into whether particular subtopics within the field show more sampling biases than others. Figure 3 shows the simplified keywords, following the pre-processing steps described in the Method section, in relation to several key participant and musical sample variables. In this figure we focus specifically on the 25 most frequently occurring keywords in the dataset. As summarized above, many studies did not provide information about whether or not they utilized musicians, university samples, or Western music (e.g., for music stimuli this was often the case when the music was self-selected by participants); as such, for these variables we also categorize these cases as “Unspecified” in Figure 3.

Figure 3. Summaries of article keywords by A) data collection in WEIRD countries, B) musical expertise, C) music origin country, D) sample description, E) age (years from the median age), and F) gender balance. Numbers next to/on the bars refer to the counts of articles with the keyword.

Across all these keywords there was a consistent tendency to collect data more frequently in WEIRD than non-WEIRD countries; this bias tended to occur most often in relation to studies on musical performance and training (including learning and expertise), movement studies, studies using children or EEG, and (music) perception studies. Perhaps unsurprisingly, studies focused more on musician populations tended to be about anxiety, practice, performance, learning, and expertise. Studies on music preferences and music listening, as well as studies of children, seemed to be less targeted toward trained musicians, although the musicianship of samples was not described explicitly in more than half the studies on these topics. Studies on music perception and memory tended to utilize high percentages of both university students and female participants; these subtopics also fall fairly close to the median age of 24.1 years. Health-related studies tended to focus on older participant samples; with the exception of the keyword “children”, all other keywords fall within 5 years of the median age. Studies on harmony and movement showed the greatest tendency to explicitly utilize Western musical stimuli. Studies related to identity, anxiety, health, and personality were not often explicit about whether they used Western music (missing data in >75% of cases); studies of this nature often rely on personalized and self-selected music which may span across many different genres.

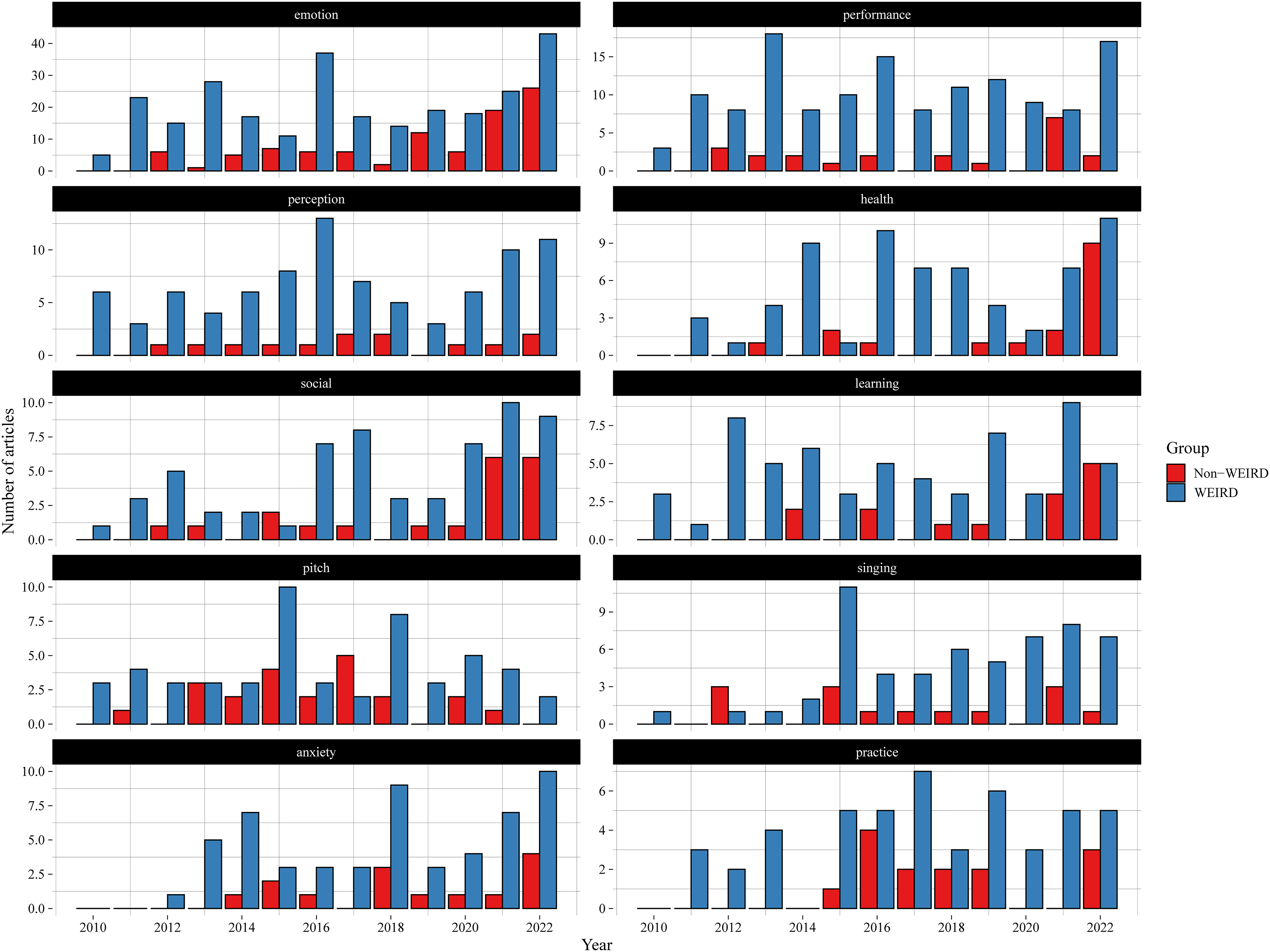

Figure 4 provides a summary of the 10 most frequently occurring keywords in the dataset and how their appearance has changed over time in studies using WEIRD and non-WEIRD samples. This shows that different subtopics have fluctuated in popularity over this period within articles published in these three journals. Some subtopics, like “perception” and “singing” show a consistently small number of non-WEIRD samples that do not vary much over time. For other subtopics, increases in interest in a topic seem to be linked to increased samples of both WEIRD and non-WEIRD participants. For example, more recent years have seen some increase in publications on “emotion”, “health”, “social” aspects, “learning”, and “anxiety” in both populations, though the number of WEIRD samples still outweighs the number of non-WEIRD samples on the whole.

Figure 4. The number of articles published using the 10 most frequently appearing keywords in the dataset, by year and country of data collection.

Discussion

The aim of this article was to survey the current state of the field of music psychology in terms of the diversity of its participant and musical samples. We have collected a range of measures of sampling practices from 1,360 articles published in three journals over 13 years, and considered how these practices vary across studies conducted in WEIRD versus non-WEIRD countries, over time, and in relation to frequently occurring article keywords. Our results indicate that sampling biases are present throughout several dimensions of music psychology research and provide new insights into areas where future work is most needed.

The bias toward recruiting participants within WEIRD countries that has been shown in psychology research as a whole is also prevalent throughout music psychology research, across a diverse range of subtopics in this field (see Figures 1 and 3). The percentages of music psychology studies conducted in WEIRD countries are roughly similar to other areas of psychology research (Apicella et al., 2020). For instance, around 94% of studies published in Psychological Science from 2014–2017 sampled their participants within Western countries (Rad et al., 2018). One positive point to note is that we have evidenced a gradual decrease over time in the number of studies conducted in WEIRD countries and the number of WEIRD first authors, although in both cases non-WEIRD groups are still consistently in the minority (see Figure 2). Although various recent calls have been made to increase the diversity of collaborators and participants in music psychology research (Baker et al., 2020; Jacoby et al., 2020; Sauvé et al., 2023) there is still much work to be done in practice, while the prevalence of first authors from WEIRD countries raises the need to carefully consider the inherent power dynamics within research collaborations and the privileged position of native English speakers in academic publishing practices (Amano et al., 2023).

It is notable that several sampling trends that are prevalent within data collected from WEIRD countries are also replicated across non-WEIRD samples in this dataset. For instance, the proportion of participants who were university students, volunteers, and musicians did not differ between samples from WEIRD versus non-WEIRD countries, and data collected in non-WEIRD countries was similarly skewed toward young adult samples (see Table 1). This suggests that even music psychological samples collected in non-WEIRD countries may be relatively more WEIRD than other subgroups from those countries. One recent large-scale, cross-cultural study that demonstrated the limitations of such an approach is the work of Jacoby et al. (2024), who showed that university students and/or online opportunity samples from non-WEIRD countries exhibit more similar rhythm priors to US participants than to other non-WEIRD samples. Such sampling methods may thus underestimate the true cross-cultural variability of participant responses.

Beyond consideration of the countries in which data is collected, there are several other biases inherent in the current dataset. The first is a notable age skew, with around two thirds of studies reporting a mean age between 18–30 years. As shown in Figure 3, very few subtopics showed much deviation from the median age of 24.1 years, although the age variation in samples does seem to be increasing gradually over time (see Figure 2). Over 40% of studies included university student samples; this proportion has also slightly (though not statistically significantly) decreased over the 13-year period of this dataset. This suggests that there has been some positive movement toward diversifying the age and educational background of music psychology participants, although there is still much work to be done. One potential explanation for this diversification is the increase of online studies in recent years; although they come with their own limitations, online recruitment services (e.g., Prolific, Qualtrics Online Sample, or Besample) allow for pre-screening and representative sampling in relation to a wide range of demographic variables, including age, socioeconomic and geographic factors, and even hobbies such as music-making (Eerola et al., 2021). Researchers within our field are also increasingly adopting web-based “citizen science” approaches, often combined with gamification to increase intrinsic motivation, to attract large samples (typically tens or hundreds of thousands) of participants from across the globe (Aljanaki et al., 2014; Burgoyne et al., 2013; Gutiérrez Páez et al., 2021; Hilton, 2024; Honing, 2021; Mehr et al., 2019).

In addition, certain subtopics within the field appear to be studied in less diverse populations than others. For example, studies on memory, (music) perception, and EEG tend to focus on relatively WEIRD, young adult populations, and studies on movement tend to use highly WEIRD participants and WEIRD music (see Figure 3). Studies on subtopics such as perception and singing also maintained a consistently low level of non-WEIRD samples over the 13-year timespan of this dataset (see Figure 4). This tendency is likely related to the paradigms and equipment used in such research, which have been traditionally less conducive to usages outside a “controlled lab” setting. Since many such lab settings, in particular those with costly facilities such as motion capture systems, tend to be based in WEIRD countries, this can perpetuate the bias to recruit WEIRD participants into studies on these topics. Some research hardware has also posed limitations on inclusive research; for instance, traditionally EEG systems have worked less well on Black or other thick hair, and eye tracking efficacy can vary in relation to iris color (which also correlates with race). However, technological advances continue to augment our ability to reach broader populations. Examples in music psychology include online adaptations of a range of behavioral tasks that have been traditionally constrained to lab-based implementations (e.g., sensorimotor synchronization, singing, eye tracking; Anglada-Tort et al., 2022, 2023; Saxena et al., 2023) and the development of more portable/flexible technologies for collecting and analyzing movement data, EEG data, etc. in fieldwork and naturalistic settings (e.g., Clayton et al., 2024; Jakubowski et al., 2017; Pearson et al., 2024; Pearson & Pouw, 2022; Zamm et al., 2019).

Around 30% of participant samples comprised solely musicians. This is perhaps to be expected for certain subtopics in the field, such as studies of expert performance routines and music performance anxiety, which may be chiefly of interest to people who make music. However, many other subtopics tended to include perhaps a disproportionate number of studies focused on musicians, if one considers the relative proportion of musicians to non-musicians in the general population. For instance, over 30% of articles containing the keywords “EEG”, “health”, “movement”, and “social” utilized samples of solely musicians (see Figure 3). This leaves questions as to whether certain theoretical conceptions about music cognition may be biased toward evidence from expert samples, although unpacking specific theories in this regard is beyond the scope of this article. In addition, the definition of what constitutes a “musician” varied widely across the papers we surveyed; this issue was highlighted in Tirovolas and Levitin's (2011) review and seems to have subsequently persisted in the field. Furthermore, what constitutes a “musician” is likely to vary widely across cultures. For instance, definitions specifying a certain number of years of “formal training” may not be globally relevant, while in some cultures there is no clear-cut differentiation between “musicians” and “non-musicians”, hence the definitions adopted across WEIRD and non-WEIRD countries may be necessarily varied.

Finally, our results indicate there is still a heavy focus on Western music throughout our field. This was most prominent in studies of participants sampled in WEIRD countries, and thus may partially reflect the desire to utilize musical stimuli of cultural relevance to one's research participants. But most studies (59%) within non-WEIRD countries also tended to employ at least some Western music, which could even be an underestimate if some of the 20% of missing data on this factor was Western music. This suggests there is still a strong tendency to use Western music as a baseline comparator from which cultural differences are sought, which propagates problematic contrasts between “the West and the rest” (Hall, 2018; Sauvé et al., 2023). Results from Figure 2 also indicate that this trend is not decreasing over time. In some ways this is surprising, given that music from non-WEIRD countries is arguably more easily accessible than participants from non-WEIRD countries. For instance, online streaming services and platforms such as YouTube host a diverse array of music from across the globe. Corpora that are specifically curated for research purposes are harder to come by; however, recent years have seen the compilation of research corpora of music from countries as wide-ranging as India, Uruguay, Mali, Cuba, Tunisia, and Georgia (Clayton et al., 2021; Rosenzweig et al., 2020; Srinivasamurthy et al., 2021). The Global Jukebox project provides a database of music and extracted features from 5,776 songs from across the globe (Wood et al., 2022) and a recent global comparison of speech and song from over 50 languages is accompanied by openly shared data and audio recordings (Ozaki et al., 2024).
Of course, simply having access to a diversity of music does not in itself enable meaningful research, and it is likely that music psychologists will need to continue to build collaborative links with researchers in a wider range of countries and disciplines (e.g., ethnomusicology, anthropology) to be able to fully exploit the possibilities afforded by these collections (Savage et al., 2023).

Limitations and Future Directions

There are several limitations to the current approach that should be noted. Due to substantial variation in reporting methods, it was not possible to break down our analyses into specific subpopulations within each country. It should be noted that within countries there are likely to exist many regional differences in demographic and socioeconomic factors, such that some regions/subpopulations of a “non-WEIRD country” may be better classified as WEIRD and vice versa. Furthermore, our classification of WEIRD and non-WEIRD countries in line with the method proposed by Krys et al. (2024) represents only one of myriad possible dimensions upon which these countries could be compared. Alternatives include postcolonial (or decolonial) distinctions between the Global North and South (Dussel, 2013; Grosfoguel, 2022; Mignolo, 2011; Quijano, 2000), as it is clear from our results that countries classified as being from the Global South are also highly underrepresented in music psychology research. In addition, broad cultural groupings of WEIRD versus non-WEIRD are likely to shift and change with time due to economic development and educational changes, industrialization/deindustrialization, climate migration from threatened zones, and so on.

Several dimensions which have proved useful in characterizing cultural variation in previous research were not readily available for analysis across our dataset. For example, we found that ethnicity and education data were rarely or inconsistently reported (interestingly, years of music education seemed to be reported relatively more often than general educational data). Only around half of samples (47%) were clearly described in terms of whether they were university students, older adults, children, or so on. This means it is entirely possible that the current dataset underestimates the true prevalence of university student samples used in our field and provides a strong impetus to include more specific descriptions of the communities from which we are sampling within our field. Interestingly, the detail with which the participant sample was described did not consistently vary depending on whether the data was collected in a WEIRD versus non-WEIRD country: in non-WEIRD countries researchers were more likely to explicitly report if a university sample was used, but were less likely to report whether the sample were volunteer or paid participants (or received some other incentive) than researchers collecting data in WEIRD countries (see Table 1). Other factors such as the religious background of participants and the degree to which they are sampled from urban versus rural populations are very rarely reported, despite previous evidence showing that these factors can have a substantial impact on behavioral diversity across different subpopulations (Barrett, 2022; Muthukrishna et al., 2020).

At the same time, it would be naïve for us to advocate that all researchers should or could collect data on these and all other potentially relevant demographic factors if they are not being considered as a factor of interest in the research design, given potential ethical concerns. For example, the data minimization principle of the European Union's General Data Protection Regulation states that “personal data shall be…adequate, relevant and limited to what is necessary in relation to the purposes for which they are processed (‘data minimisation’)” (Article 5(1)(c)). As such, the recommendation we make here is that some of these factors that have been relatively under-investigated (e.g., urban-rural comparisons) could be put at the forefront of future comparative music psychological studies of a wider range of populations from across this continuum. Furthermore, even if limited demographic information has been collected for ethical or practical reasons, researchers could strive to better characterize their samples by providing information about the general population from which their participant sample was drawn (e.g., average income, education level, and ethnicity breakdown of the region or university from which the sample was drawn). The inclusion of Constraints on Generality statements is another pragmatic option for increasing standardization in reporting and justification of participant and musical samples (Simons et al., 2017).

In this project we did not consider any articles published outside three specialist music psychology journals. We are aware that there exist many cross-cultural music psychological studies that are published in non-specialist journals, although arguably there are also a plethora of articles on WEIRD populations published in such journals as well, and attempts to identify all articles across any journal that may be classified as “music psychology” are likely to be biased themselves (e.g., in relation to the selection of keywords used to identify these, etc.). Furthermore, our focus on journals published in English means we have explicitly not included journals in which authors from countries where English is not the native language may be inclined to publish music psychology research (e.g., Germany: Jahrbuch Musikpsychologie; Japan: Journal of Music Perception and Cognition).

It should also be noted that the authors of this article are all affiliated to a university in a WEIRD country, and we thus acknowledge the biases that come with our own position within the field. We do, however, come from a diverse range of countries and disciplinary backgrounds. As such, the results of this work have benefited from reflection from researchers from music psychology, ethnomusicology, and music computing from both the Global North and Global South. We believe this variety of perspectives has strengthened and diversified the range of insights provided throughout this article.

What Next?

Our focus within this article has been to provide an empirical snapshot of the field of music psychology over the past 13 years, which highlights various sampling biases in both the participants and stimuli from which our conclusions about the nature of music perception and cognition are drawn. In discussing these results, we have focused primarily on highlighting approaches that individual researchers can take advantage of to expand the diversity of their own research, from using online platforms that allow for multinational representative sampling to methods that enable collection of multimodal and physiological data in fieldwork settings to curated corpora of non-Western music. However, it should be noted that a substantial shift of the needle toward diversification also requires initiatives at a larger group or institutional level. Such shifts will likely rely on multilab collaborations (Klein et al., 2014) that incorporate partners from across a range of geographic and socioeconomic backgrounds. Beyond the establishment of such networks, careful consideration and monitoring of the potentially disparate values of partners from different regional and disciplinary backgrounds and power dynamics within the group will be essential for meaningful collaboration (Savage et al., 2023). Other interventions require coordination at the level of publishers or institutions, such as strategic initiatives targeted at reducing the inherent inequities faced by non-native English speakers in academic publishing and researchers at institutions without access to costly journal subscriptions or funds to pay for open access publishing (Amano et al., 2023; Ayeni, 2023; Gray, 2020).

In sum, there are many actions that we as individual researchers can start to take to improve the diversity of our research, but these will need to be balanced with larger group and institutional initiatives. We also note that not every individual research study can or should aim to include highly diverse samples of participants or music to be of value to the field. For instance, some publications aim to document single case studies of unusual or exceptional abilities, while others aim to elucidate the existence of particular perceptual phenomena (e.g., Shepard tones, speech-to-song illusion; Deutsch et al., 2008; Shepard, 1964) rather than their generalizability (see discussion in Hilton, 2024). Our aims with this article are simply to document the substantial gaps in the field as a whole (and various subfields within this) and encourage individual researchers and teams to reflect on contributions they might feasibly make toward addressing these gaps.

Conclusion

In conclusion, our results show that music psychology research exhibits an overwhelming tendency to collect data from young adults and university students in Western countries in response to Western music. Some positive trends toward increasing participant diversity have been evidenced over the past decade, although there is still much work to be done, and certain subtopics in the field appear to be more prone to these sampling biases than others. The dataset and all code used to produce these summaries have been made openly available with the hope that they serve as a starting point for investigating possible biases in other dimensions of music psychological samples. Continued efforts to expand the diversity of participant and musical samples require dedicated, strategic effort, but come with the fundamental benefit of increasing our confidence in music psychological theories and their relevance to humans and music in a broader sense. Beyond our immediate field, inclusive theories of music psychology have the power to inform and catalyze change in related areas, such as Music Information Retrieval, music-related artificial intelligence, and music education (Bull et al., 2023; Campbell et al., 2005; Huang et al., 2023; Sturm et al., 2019).

Acknowledgments

Thanks to all members of the Durham University Music & Science Lab for their feedback and support.

Action Editors

Michelle Phillips, Royal Northern College of Music; Scott Bannister, University of Leeds, School of Music.

Peer Review

Courtney Hilton, University of Auckland, School of Psychology. Sarah Sauvé, University of Lincoln, School of Psychology.

Author Contributorship Statement

KJ conceived of the study, and all authors contributed to co-designing the study and coding the dataset. TE and KJ led the analyses, and all authors contributed to interpreting the results. KJ wrote the initial manuscript draft, TE generated the figures and analysis scripts, and all authors contributed to revising the manuscript.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Ethical Approval

No new data from human participants were collected in this project, and all analyses are based on secondary data available in previously published journal articles. As such, no institutional ethical approval was required.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Data Availability Statement

The data that support the findings of this study are openly available at: https://tuomaseerola.github.io/WEIRD/ (Eerola, 2025).