Abstract

Where does a listener's anticipation of the next note in an unfamiliar melody come from? One view is that expectancies reflect innate grouping biases; another is that expectancies reflect statistical learning through previous musical exposure. Listening experiments support both views but in limited contexts, e.g., using only instrumental renditions of melodies. Here we report replications of two previous experiments, but with additional manipulations of timbre (instrumental vs. sung renditions) and register (modal vs. upper). Following a proposal that melodic expectancy is vocally constrained, we predicted that sung renditions would encourage an expectation that the next tone will be a “singable” one, operationalized here as one having an absolute pitch height that falls within the modal register. Listeners heard melodic fragments and gave goodness-of-fit ratings on the final tone (Experiment 1) or rated how certain they were about what the next note would be (Experiment 2). Ratings in the instrumental conditions were consistent with the original findings, but differed significantly from ratings in the sung conditions, which were more consistent with the vocal constraints model. We discuss how a vocal constraints model could be extended to include expectations about duration and tonality.

Keywords

How is it that music engages? Familiar music can evoke extra-musical memories with strong associations (e.g., Jakubowski & Ghosh, 2021). But even unfamiliar music can engage in the absence of any obvious extra-musical meaning (e.g., a jazz trumpet solo). A prominent view is that listeners form expectancies about what will happen next, the fulfillment or denial of which can be emotionally and/or aesthetically engaging (Huron, 2006; Meyer, 1956). But where do these expectancies come from? Cognitive approaches assume that expectancies, whether innate or learned, are mediated by mental representations of musical structure (Thompson et al., 2022). Much research has focused on expectancies for local patterns in melody and has modelled listener responses on pitch structure. In the most commonly used task, listeners rate how well a final (probe) tone continues an unfamiliar melodic fragment. Pitch structure has been shown to explain a large proportion of the variance in probe-tone ratings, but only in limited contexts. For example, although the fragments used in studies of melodic expectancy are generally of vocal origin (e.g., Pearce & Wiggins, 2006; Schellenberg, 1996), to our knowledge all previous listening studies have presented listeners with instrumental rather than sung renditions.

Alternatively, Russo and Cuddy (1999) propose that melodic expectancies – whether manifested in composition, production, or perception – share a common origin in vocal constraints. They define a vocal constraint as “the physical difficulty the average singer experiences in the vocal production of sound” (Russo & Cuddy, 1999). As anecdotal evidence, consider the novelty of yodeling, which lies in its rapid alternation between vocal registers, flouting listeners’ expectations for what is singable. Intuitively, it seems unlikely that this novelty would extend to, for example, a piano transcription of a yodel, since the piano is not similarly constrained by register. In this sense, the instrumental renditions used in previous listening studies may be construed as impoverished in the extent to which they invoke expectations for what is singable. In what follows, we briefly review evidence for the cognitive and vocal constraints approaches, before proposing a novel implementation of the latter based on vocal register. We report two listening experiments that manipulated timbre and register to test two predictions: (1) that listeners should expect the absolute pitch height of the next note to be singable; and (2) that this expectation of singability should emerge more robustly in response to sung melodies than to instrumental ones.

Cognitive Approach

The cognitive approach has largely taken one of two perspectives. One view is that expectancies reflect both the top-down influence of enculturation to a musical style (e.g., tonality in Western melodies) and a bottom-up set of innate Gestalt principles, applying to successive surface-level tones or non-successive emergent-level tones, that may facilitate auditory grouping (Bregman, 1990; Narmour, 1990, 1992, 1999; Russo et al., 2015). The most parsimonious account of the bottom-up principles, Schellenberg's (1996) two-factor model, proposes that listeners expect the next tone to be close in pitch both to the previous tone (proximity) and to the next-to-previous tone (reversal), especially if the latter initiated a large leap (see Figure 2). The two-factor model has been shown to predict both listener ratings and sung continuations of melody fragments across a range of musical styles, age groups, and levels of musical training (Schellenberg, 1996; Schellenberg et al., 2002).

Figure 2. Sample fragment from Experiment 1. The fragment is from the Acadian folksong “La Lettre de Sang” with an A3 probe tone. For the two-factor model, the probe tone is coded as 8 for Proximity (distance from the previous tone in semitones) and 2.5 for Reversal because it forms a large interval (≥ 7 semitones) with the previous tone, and reverses to within 3 semitones of the next-to-previous tone (see Schellenberg et al., 2002 for a complete description of the coding procedure). For the linear version of the vocal constraints model, the probe tone is coded as 0 because it falls within the modal register; for the quadratic version described in note 1, the probe tone is coded as 57 (MIDI pitch).

A second view is that top-down and bottom-up expectancies can be subsumed by statistical learning through previous musical exposure (Pearce & Wiggins, 2006). For example, Pearce's (2005) computational model IDyOM makes predictions about upcoming musical events based on statistical regularities in music corpora. It has been shown to predict behavioral and neural responses to melodies across age groups and musical styles (e.g., Hansen & Pearce, 2014; Pearce et al., 2010). Another statistical learning approach has shown that listeners can learn regularities in novel melodies derived from an unfamiliar musical system (e.g., Loui, 2012, 2022; Rohrmeier & Cross, 2013; see Large & Kim's 2019 review for a more detailed discussion of cognitive approaches).

Vocal Constraints Approach

Support for the vocal constraints hypothesis has been limited to expectancies in production and composition. Russo and Cuddy (1999) showed that singing accuracy was correlated with listener expectancy ratings. Corpus studies suggest that expectancies for proximity and reversal in composition may be vocally constrained. For example, reversals tend to follow larger leaps in vocal melodies (Watt, 1924), but only when the leap is towards a pitch extreme (Von Hippel & Huron, 2001), suggesting that reversals accommodate a restricted vocal range. Comparative studies show that, relative to human vocal melodies, melodic intervals are larger in birdsong (Tierney et al., 2011) and larger intervals occur more often in instrumental melodies (Ammirante & Russo, 2015). One explanation may be the number of available effectors. A large leap produced by a single set of human vocal folds is constrained by a speed-accuracy trade-off (Huron, 2001). By contrast, leaps are facilitated for birds, which have two independently controlled sound sources, one on each side of the syrinx (Suthers, 2004), and for instrumentalists, who can use more than one finger, hand, or foot.

A Registral Implementation

Salomão and Sundberg (2008) define vocal registers as “regions of vocal quality that can be maintained over some ranges of pitch and loudness”. Vocal registers have distinct vibratory, muscular, and acoustic profiles (Keidar et al., 1987; Salomão & Sundberg, 2008). Yodeling exploits these differences, while other singing traditions train them out (Herbst et al., 2019). The low-to-mid vocal range, called the chest voice or modal register, is strongly favored among occasional singers (e.g., Moore, 1991) and in everyday speech (Berg et al., 2017). For example, Larrouy-Maestri (2011) had participants spontaneously sing “Happy Birthday” without a referent pitch. As shown in Figure 1, a secondary analysis of these data showed that 88% of the pitches produced by female singers fell within the female modal register, between F3 and F4 (Herbst et al., 2019). Yodeling notwithstanding, modal register singing is also a statistical universal across a wide range of musical cultures (Savage et al., 2015). This is thought to be due to its efficiency: because modal register singing involves the entire glottis, louder phonation can be achieved without additional expenditure of subglottal air pressure (Savage et al., 2015). “Economy of action” has been shown to affect perception in other domains; for example, Proffitt (2006) showed that the steepness of hills and the distance to targets are perceived in terms of their associated effort. Despite the ubiquity of the modal register in singing and speaking, its potential role in melodic expectancy has received little consideration.

Figure 1. Data from Larrouy-Maestri (2011) showing the distribution of 2,424 absolute pitch heights (rounded to the nearest semitone) produced by 104 females spontaneously singing “Happy Birthday” without a referent pitch. Red bars identify pitches that fell within the modal register of between F3 (MIDI note 53) and F4 (MIDI note 65; Herbst et al., 2019).

An exception is Ammirante and Russo's (2015) corpus study, which proposed that proximity is favored in vocal melodies because smaller intervals minimize the possibility of an abrupt change between vocal registers. As evidence, they showed that melodic leaps in the lower part of a melody's range (which are likely sung in the modal register) are more common in vocal melodies relative to instrumental, but leaps in the mid-to-high part of a melody's range (which may involve a change between modal and upper registers) are avoided in vocal melodies relative to instrumental.

Here we tested a novel registral implementation of the vocal constraints hypothesis in perception. We predicted that listeners should expect that the next tone in an unfamiliar melodic fragment will be a “singable” one, operationalized as one having an absolute pitch height that falls within the modal register. To the extent that a listener's expectation of singability might involve a (covert) mapping of perception onto vocal-motor action (Russo, 2020), previous evidence suggests that timbre should matter. For example, occasional singers are more accurate at singing a target pitch or sequences of pitches from a vocal model than an instrumental one (Granot et al., 2013; Watts & Hall, 2008), especially when the model's voice is similar to one's own (Hutchins & Peretz, 2012; Moore et al., 2008). Other behavioral studies show that sung stimuli uniquely engage the vocal-motor system (Lévêque et al., 2012; Wood et al., 2020). Neurophysiological evidence supports voice-selective (Belin et al., 2000) and even vocal song-selective (Norman-Haignere et al., 2022) areas in auditory cortex, and a voice-specific sensorimotor integration region that is active during covert humming with a target melody (Pa & Hickok, 2008). Taken together, these findings suggest that an expectation of singability should be encouraged by sung melodies.

The vocal constraints model proposed here does not assume that expectancies are mediated by a mental representation of pitch structure, whether available innately or acquired through statistical induction. It therefore predicts effects of timbre even where pitch structure has been held constant. The model also differs from others in its assumptions about the role of context in generating expectancies. For example, the two-factor model is a higher-order model because it assumes that expectations about the pitch height of the next tone are relative to representations of the previous two tones. However, Von Hippel and Huron (2001) have shown that proximity and reversal may be artifacts of range. A melody with a narrow range of normally distributed pitch heights will have a preponderance of small intervals (i.e., proximity), and leaps will tend towards pitch extremes and regress towards the middle of the distribution (i.e., reversal) because “they lack the space to do otherwise” (Von Hippel & Huron, 2001). Thus, a composer's bias towards proximity and/or reversal need not be influenced by the two previous tones, and it follows that the listener's expectations need not be either. Nevertheless, regression to the mean is still a higher-order account, because it assumes that expectations about the pitch height of the next tone are relative to a representation of the average pitch, inferred from the cumulative distribution of pitch heights heard in the melody up to that point (Huron, 2006, p. 125; Von Hippel, 2000).
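Von Hippel and Huron's range argument can be illustrated with a small simulation, offered as a sketch rather than a reproduction of their analysis. Pitches are drawn independently from a narrow normal distribution (the mean of MIDI 60 and SD of 3 semitones are arbitrary assumptions), so no tone depends on the tones before it; large ascending leaps are nonetheless mostly followed by a descent.

```python
import random

def reversal_rate_after_leaps(n_notes=100_000, mean=60.0, sd=3.0,
                              leap=7.0, seed=1):
    """Proportion of large ascending leaps followed by a change of direction,
    in a 'melody' of independent, normally distributed pitches (MIDI units).

    Because each pitch is drawn independently, any tendency to reverse after
    a leap comes purely from the narrow range (regression to the mean).
    """
    rng = random.Random(seed)
    pitches = [rng.gauss(mean, sd) for _ in range(n_notes)]
    leaps = reversals = 0
    for prev, cur, nxt in zip(pitches, pitches[1:], pitches[2:]):
        if cur - prev >= leap:      # large ascending leap
            leaps += 1
            if nxt < cur:           # next tone moves back down
                reversals += 1
    return reversals / leaps

print(round(reversal_rate_after_leaps(), 2))
```

A leap of 7 or more semitones usually lands well above the mean, so an independent next draw is likely to fall below it.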

The vocal constraints model proposed here is zeroth-order in that it predicts that listeners should expect the absolute pitch height of the next tone to fall within the modal register regardless of preceding context. Responses consistent with proximity and reversal should obtain when successive notes extend beyond the modal register (Ammirante & Russo, 2015). But, like a polar planimeter, which measures the higher-order variable of area directly by tracing a region's boundary rather than by computing it from measured lengths, singability is assumed to generate expectations about relations between successive tones directly and without computation (Runeson, 1977).

To compare the vocal constraints model with others, two previous listening experiments were replicated with additional manipulations of timbre (instrumental vs. sung) and register (modal vs. upper). Experiment 1 replicated a test of the two-factor model (Schellenberg et al., 2002). Listeners heard either instrumental or sung melodic fragments and made retrospective goodness-of-fit ratings on probe tones, half of which fell within the modal register and half within the upper register. It was predicted that if sung melodies encourage an expectation of singability, then ratings should be higher in the sung than in the instrumental conditions in the modal register, and lower in the sung than in the instrumental conditions in the upper register. Experiment 2 replicated a test of a statistical learning model (Hansen & Pearce, 2014). Listeners made prospective ratings of their uncertainty about how a melody might continue. It was predicted that uncertainty ratings would be lower for sung than for instrumental fragments because listeners should expect fewer possible melodic continuations due to vocal constraints. It was also predicted that this difference in uncertainty ratings would be exaggerated in the upper register, where vocal constraints would render most melodic continuations unsingable.

Experiment 1: Replication of Schellenberg et al.

Method

Participants

Participants were 48 undergraduate nonmusicians (Mage = 19.92, SD = 2.59; 38 females) from the University of Guelph-Humber and Toronto Metropolitan University (Ryerson University at the time of data collection). All self-reported normal vision and hearing. Number of years of private music lessons ranged from 0 (28 participants) to 10 (Myears = 1.71, SD = 2.75). Participants from the University of Guelph-Humber received a $10 gift card for participation; participants from Toronto Metropolitan University received course credit. The study received IRB approval from both institutions.

Stimuli

The stimuli were the four melodic fragments used in Schellenberg et al. (2002), each from a different Acadian (vocal) folksong. Fragments were 14 or 15 notes long, in a major or minor key, and in duple meter. One fragment was transposed from the key of C minor to D minor. Fragments started at the beginning of a phrase and ended mid-phrase. The interval formed by the two tones preceding the probe tone was always ascending. For each fragment, 15 probe-tone stimuli were created that continued the melody. For a given fragment, the 15 probe tones had the same duration and metric position as in the original melody but differed in pitch height. One probe tone repeated the tone that preceded it, seven were the in-key tones within the octave above the previous tone, and seven were the in-key tones within the octave below the previous tone. In absolute terms, 30 of the probe tones (ranging from F3 to F4) fell within the female modal register (Herbst et al., 2019) and 30 were above it (ranging from G4 to G5). A sample stimulus is shown in Figure 2.

Sung renditions were recorded with a Rode NT1-A microphone at a sampling rate of 44.1 kHz. The singer was a female college music student trained in jazz and Indian classical traditions. Her vocal range was E3 to B5, and she sang without vibrato. All tones were sung on the syllable “la.” The singer used some performance expression (variation in loudness and timing) but was instructed to keep that expression consistent across renditions of each fragment. To minimize inconsistencies between renditions, recordings of only 6 of the 15 probe tones were made for each fragment, including the lowest and highest probe tones and others approximately evenly spaced in between. The remaining probe-tone stimuli were generated from these recordings by pitch-shifting the final tone using Melodyne software. For example, to generate the A3 probe-tone stimulus in Figure 2, the recording with the G3 probe tone was duplicated and the probe tone was pitch-shifted up 200 cents. For all tones (probe and contextual), the software was also used to correct small deviations from their target frequencies (equal-tempered at a standard pitch of A4 = 440 Hz; mean absolute deviation = 14.6 cents, SD = 11.1 cents). To evaluate timing consistency between renditions, note onsets were identified using the Aubio onset detection tool in Audacity, and inter-onset intervals were computed from them. Correlations in inter-onset intervals between renditions exceeded .94 for each of the four melodies.
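The pitch and timing arithmetic in this paragraph can be checked with a few lines of Python. This sketch assumes MIDI note numbering (A4 = 69) and uses made-up onset times; it illustrates the calculations only and is not the Melodyne or Aubio processing itself.

```python
import math

A4_HZ = 440.0   # standard pitch used as the tuning reference
A4_MIDI = 69    # MIDI note number of A4

def equal_tempered_hz(midi_note: int) -> float:
    """Target frequency of a MIDI note in 12-tone equal temperament."""
    return A4_HZ * 2 ** ((midi_note - A4_MIDI) / 12)

def cents_deviation(measured_hz: float, midi_note: int) -> float:
    """Signed deviation (cents) of a measured frequency from its target."""
    return 1200 * math.log2(measured_hz / equal_tempered_hz(midi_note))

def shift_ratio(cents: float) -> float:
    """Frequency ratio produced by a pitch shift of the given size in cents."""
    return 2 ** (cents / 1200)

def inter_onset_intervals(onsets_s):
    """Differences between successive note onsets (seconds)."""
    return [b - a for a, b in zip(onsets_s, onsets_s[1:])]

def pearson_r(x, y):
    """Pearson correlation between two equal-length sequences."""
    mx, my = sum(x) / len(x), sum(y) / len(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)

# G3 (MIDI 55) shifted up 200 cents should land on A3 (MIDI 57, 220 Hz)
g3 = equal_tempered_hz(55)
a3 = g3 * shift_ratio(200)
print(round(a3, 2), round(equal_tempered_hz(57), 2))

# Hypothetical onset times (s) for two renditions of the same fragment
take_1 = [0.00, 0.41, 0.80, 1.22, 1.60, 2.43]
take_2 = [0.00, 0.39, 0.81, 1.20, 1.63, 2.40]
r = pearson_r(inter_onset_intervals(take_1), inter_onset_intervals(take_2))
print(round(r, 3))
```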

MIDI was used to create instrumental analogues that approximated the timing and loudness of the sung renditions. For timing, note onset times were converted from clock time to MIDI ticks. For loudness, mean amplitude estimates were obtained for each sung note using Praat and then converted to MIDI velocity values (in arbitrary units from 1 to 127). No established protocol exists for this procedure and conversion functions vary with hardware used (Bresin et al., 2002). As an approximation, MIDI velocity values were interpolated from recommendations in Bresin et al. (2002) and online forums. Two instrumental analogues were created from the MIDI data: piano (program number 1 in the General MIDI specification) and electric bass (program number 34). Because the latter sounded more like a generic electronic instrument than a bass at the pitch heights used in the experiment, it will hereafter be referred to as “electronic.”
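The two MIDI conversions can be sketched as follows. Every number here is an assumption: a constant tempo of 120 BPM, a resolution of 480 ticks per quarter note, and a made-up table of amplitude-to-velocity anchor points standing in for the interpolated recommendations, which the text does not list.

```python
from bisect import bisect_left

PPQ = 480          # assumed ticks per quarter note
TEMPO_BPM = 120.0  # assumed constant tempo

def seconds_to_ticks(t_s: float, bpm: float = TEMPO_BPM, ppq: int = PPQ) -> int:
    """Convert clock time (s) to MIDI ticks at a constant tempo."""
    return round(t_s * bpm / 60.0 * ppq)

# HYPOTHETICAL anchor points: mean amplitude (dBFS) -> MIDI velocity.
# Placeholders for illustration only, not values from Bresin et al. (2002).
ANCHORS = [(-40.0, 20), (-30.0, 50), (-20.0, 80), (-10.0, 110), (0.0, 127)]

def amplitude_to_velocity(db: float) -> int:
    """Piecewise-linear interpolation between anchors, clamped to 1..127."""
    xs = [x for x, _ in ANCHORS]
    if db <= xs[0]:
        v = ANCHORS[0][1]
    elif db >= xs[-1]:
        v = ANCHORS[-1][1]
    else:
        i = bisect_left(xs, db)              # first anchor with x >= db
        (x0, y0), (x1, y1) = ANCHORS[i - 1], ANCHORS[i]
        v = y0 + (y1 - y0) * (db - x0) / (x1 - x0)
    return max(1, min(127, round(v)))

print(seconds_to_ticks(0.5), amplitude_to_velocity(-25.0))
```

Clamping to the anchor range keeps very quiet or very loud notes within valid MIDI velocities; a curve fitted to a specific synthesizer would replace the placeholder table.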

As a final step, MIDI analogues and sung renditions were converted to mp3 and amplitude-normalized. The final 180 stimuli (4 fragments × 15 probe tones × 3 renditions [sung, piano, electronic]) are available at https://osf.io/y7zmt.

Procedure

Participants were randomly assigned to either the sung, piano, electronic, or singability condition. The first three conditions involved listening to either sung, piano, or electronic fragments over Scarlett HP60 headphones and providing goodness-of-fit ratings on the probe tone. Following each fragment, participants were asked “How well does the last tone continue the melody?” and gave ratings on a scale from 1 (extremely badly) to 7 (extremely well) on a computer keyboard. In the singability condition, participants heard the sung renditions with probe tones, and were asked “How hard does the last tone make it to continue the melody?” and gave ratings from 1 (extremely hard) to 7 (extremely easy) after each fragment. Since the melodies in the singability condition were sung, we assumed that listeners would provide ratings based on perceived vocal effort, although we acknowledge that the question may have been interpreted differently by listeners. All conditions were preceded by a practice session, in which it was stressed that the probe tones should be considered continuations of the melody rather than completions. Each participant completed 60 experimental trials (4 fragments × 15 probe tones). Trials were blocked by fragment (4 blocks) and the order of fragments as well as the order of trials within each block were randomized. The entire procedure lasted approximately 20 min.
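The blocked randomization described above can be sketched as follows. The function name and seed are hypothetical, and this is not the software used to run the experiment, which is not specified.

```python
import random

def trial_order(n_fragments=4, n_probes=15, seed=None):
    """Blocked, randomized trial list of (fragment, probe) pairs: one block
    per fragment, with block order and within-block order both shuffled."""
    rng = random.Random(seed)
    fragments = list(range(n_fragments))
    rng.shuffle(fragments)                  # randomize the order of blocks
    trials = []
    for frag in fragments:
        probes = list(range(n_probes))
        rng.shuffle(probes)                 # randomize trials within a block
        trials.extend((frag, probe) for probe in probes)
    return trials

order = trial_order(seed=7)
print(len(order), order[:3])
```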

Data Analysis

Due to experimenter error, 46 of 2160 trials (2.1%) were discarded. For the remaining trials, mean ratings were calculated for each probe tone and each condition. To allow meaningful comparison with Schellenberg et al.'s (2002) original findings, we largely adopted their data analysis strategy. Separate regression analyses were used to fit a number of models to mean goodness-of-fit ratings. Adjusted R² values were used to compare model fits, and squared semipartial correlations (sr²) were used to evaluate the unique contribution of each predictor variable. Whereas all stimuli in Schellenberg et al.'s (2002) study were presented in piano timbre, here timbre was entered into the regression analyses as an interaction term.
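For readers who want to reproduce this kind of model comparison, adjusted R² and squared semipartial correlations can be obtained from ordinary least squares fits. A minimal NumPy sketch, assuming sr² is computed as the drop in R² when a single predictor is removed from the full model; the data are synthetic and the timbre interaction terms are not included.

```python
import numpy as np

def r_squared(X, y):
    """Ordinary least squares R^2, with an intercept column added."""
    X1 = np.column_stack([np.ones(len(y)), X])
    beta, *_ = np.linalg.lstsq(X1, y, rcond=None)
    resid = y - X1 @ beta
    return 1 - (resid @ resid) / np.sum((y - y.mean()) ** 2)

def adjusted_r_squared(X, y):
    """R^2 penalized for the number of predictors k given n observations."""
    n, k = X.shape
    return 1 - (1 - r_squared(X, y)) * (n - 1) / (n - k - 1)

def squared_semipartials(X, y):
    """sr^2 per predictor: loss in R^2 when that predictor alone is dropped."""
    full = r_squared(X, y)
    return [full - r_squared(np.delete(X, j, axis=1), y)
            for j in range(X.shape[1])]

# Synthetic example: two predictors for 60 probe tones
rng = np.random.default_rng(0)
X = rng.normal(size=(60, 2))
y = 4 - 0.8 * X[:, 0] + 0.3 * X[:, 1] + rng.normal(scale=0.5, size=60)
print(round(adjusted_r_squared(X, y), 3), np.round(squared_semipartials(X, y), 3))
```

To include timbre as an interaction term, the design matrix would be extended with timbre dummy codes and their products with each predictor.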

For the two-factor model, Proximity and Reversal were coded as described in Schellenberg et al. (2002). For the vocal constraints model, we assumed that probe tones should be equally expected provided they fell within the female modal register but should be more unexpected the further they deviate from it. Since no probe tones fell below the lower bound of the female modal register (F3), deviations were coded as the distance of the probe tone in semitones from the upper boundary of the modal register (F4; range = 0–14 semitones, where probe tones coded as 0 fell within the modal register). 1
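The linear vocal constraints coding just described amounts to a clamp on MIDI pitch. A minimal sketch, assuming MIDI note numbers with F3 = 53 and F4 = 65 as in Figure 1; the function name is ours, and the branch for pitches below F3 is an assumed symmetric extension, since no probe tone fell there.

```python
MODAL_LOW = 53    # F3, lower bound of the female modal register (MIDI)
MODAL_HIGH = 65   # F4, upper bound of the female modal register (MIDI)

def vocal_constraint_code(midi_pitch: int) -> int:
    """Semitones outside the female modal register (0 = inside it)."""
    if midi_pitch > MODAL_HIGH:
        return midi_pitch - MODAL_HIGH
    if midi_pitch < MODAL_LOW:
        return MODAL_LOW - midi_pitch   # assumption: never needed in the study
    return 0

print([vocal_constraint_code(p) for p in (57, 65, 67, 79)])  # A3, F4, G4, G5
# → [0, 0, 2, 14]
```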

Because a tone's singability may be confounded with its frequency of occurrence (i.e., harder-to-sing tones occur less often), the computational model IDyOM (Pearce & Wiggins, 2006) was used to model statistical learning of absolute pitch heights through long-term musical exposure. IDyOM was trained separately on the distribution of absolute pitch heights in each of two training sets. The first was a canonical set of 903 tonal melodies (50,873 note events) of vocal origin, drawn from Canadian and German folksongs and Bach chorales, that has been used widely in previous studies (e.g., Gold et al., 2019; Hansen & Pearce, 2014; Pearce & Wiggins, 2006). The second contained vocal melodic transcriptions of 194 of the 200 songs in Temperley and de Clercq's (2011) Rolling Stone corpus (59,607 events; spoken word and rap songs excluded), which includes popular songs spanning 1953 to 2003. The latter training set was expected to be more representative of our participants’ listening histories. Based on each training set, and for each of the 60 probe tones, IDyOM estimated the Shannon information content (IC), the negative logarithm of the probability of the probe tone's absolute pitch height. IC values were used to model mean goodness-of-fit ratings, with a higher IC value indicating a more “surprising” event (Hansen & Pearce, 2014).
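Information content itself is simple to compute once a model supplies a probability. The sketch below uses a zeroth-order (unigram) pitch-frequency model with add-one smoothing as a stand-in; it is not IDyOM, which combines variable-order Markov models, and the toy corpus and function names are hypothetical. Base-2 logarithms are used, so IC is expressed in bits.

```python
import math
from collections import Counter

def pitch_probabilities(training_pitches, alphabet, alpha=1.0):
    """Zeroth-order (unigram) pitch model with additive smoothing.
    A deliberately simple stand-in, not IDyOM's variable-order model."""
    counts = Counter(training_pitches)
    total = len(training_pitches) + alpha * len(alphabet)
    return {p: (counts[p] + alpha) / total for p in alphabet}

def information_content(prob: float) -> float:
    """Shannon information content in bits: -log2 p."""
    return -math.log2(prob)

# Toy corpus of MIDI pitches (made up for illustration)
corpus = [60, 62, 64, 62, 60, 64, 65, 67, 65, 64, 62, 60, 72]
alphabet = range(53, 80)   # F3..G5, the span of the probe tones
probs = pitch_probabilities(corpus, alphabet)
for p in (60, 72, 79):
    print(p, round(information_content(probs[p]), 2))
```

Frequent pitches receive low IC and unseen pitches the highest, which is the sense in which IC can stand in for frequency of occurrence.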

Finally, empirically derived values reported in Krumhansl (1990) were used to control for the tonal stability of a given probe tone (Schellenberg, 1996; Schellenberg et al., 2002). Because tonality uniquely explained less than 5.3% of the variance in all models, these findings are omitted from the description below.

Results

Figure 3 shows mean ratings for sung and instrumental melodies in the modal and upper registers. These findings support the prediction that sung melodies should encourage listeners to expect probe tones in the singer's modal register. First, ratings on probe tones at or below F4 were highest in the sung condition. Planned comparisons showed that, while the difference between sung (M = 4.88) and piano (M = 4.31) ratings on probe tones in the modal register was significant, t = 2.41, p = .03, the difference between sung and electronic (M = 4.42) was not, t = 1.87, p = .078. Second, ratings on probe tones in the upper register were lowest in the sung condition. Planned comparisons showed that, while the difference between sung (M = 2.80) and piano (M = 3.76) was significant, t = −3.22, p = .005, the difference between sung and electronic (M = 3.37) was not, t = −1.94, p = .067. Mean ratings did not differ between piano and electronic timbres in either register (ps > .28).

Figure 3. Mean ratings as a function of timbre and register.

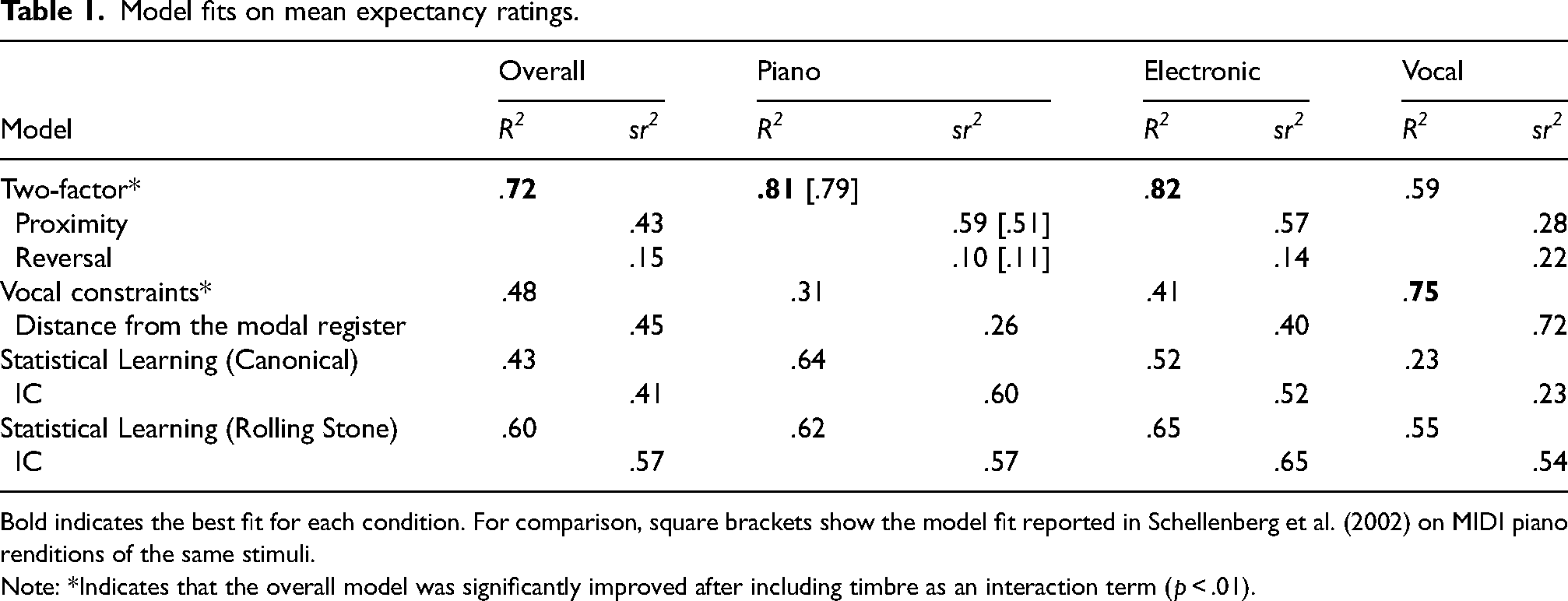

Next, separate regression analyses on the mean ratings were completed for the two-factor, vocal constraints, and statistical learning models. Model summaries are shown in Table 1.

Table 1. Model fits on mean expectancy ratings.

Bold indicates the best fit for each condition. For comparison, square brackets show the model fit reported in Schellenberg et al. (2002) on MIDI piano renditions of the same stimuli.

Note: *Indicates that the overall model was significantly improved after including timbre as an interaction term (p < .01).

The two-factor model provided the best fit for ratings across timbres (R² = .72), and the fit was significantly improved by including timbre as an interaction term, F(6, 167) = 2.98, p = .009. The fit was better for electronic ratings alone (R² = .83) and piano ratings alone (R² = .82); the latter closely approximated the explained variance reported by Schellenberg et al. (2002) for the same stimuli in the same timbre (see Table 1). By contrast, the fit was poorer for ratings of sung stimuli (R² = .59).

Next, the vocal constraints model was tested. Its fit across timbres was poorer than that of the two-factor model (R² = .47), but it was significantly improved by including the timbre interaction term, F(6, 167) = 6.84, p < .001. The vocal constraints model was a poorer fit than the two-factor model for piano ratings (R² = .31) and electronic ratings (R² = .41) but, as predicted, improved on the two-factor model for sung ratings (R² = .75). Model fits across timbres for the two statistical learning models did not exceed that of the two-factor model (Canonical R² = .43; Rolling Stone R² = .60), and neither was significantly improved by including timbre as an interaction term (ps ≥ .098).

To further compare the predictive value of each of the models, we followed Schellenberg et al. (2002) in fitting each model to each participant's individual responses. Adjusted R² values for each participant were then entered into a mixed ANOVA with Model (Two-Factor, Vocal Constraints, Statistical Learning – Canonical, Statistical Learning – Rolling Stone) as a repeated-measures factor and Timbre (Piano, Sung, Electronic) as a between-participants factor. The main effect of Timbre was significant, F(2, 33) = 3.296, p = .05, as were the main effect of Model, F(3, 99) = 22.172, p < .001, and the Timbre × Model interaction, F(6, 99) = 15.612, p < .001. Post-hoc comparisons showed that the effect of Model was significant for all three timbres (ps ≤ .001). In the sung condition, adjusted R² values were significantly higher for the vocal constraints model than for the other three models (ps ≤ .036). In the electronic condition, adjusted R² values were significantly higher for the two-factor model than for the other three models (ps ≤ .008). In the piano condition, adjusted R² values were significantly higher for the two-factor model than for the vocal constraints model (p ≤ .04) but did not differ between the two-factor model and either of the statistical learning models (ps ≥ .109). Thus, these findings for individual participants were consistent with the modelling of data aggregated across participants in supporting the two-factor model as the best fit in the instrumental conditions and the vocal constraints model as the best fit in the sung condition. 2

Finally, mean ratings on the explicit measure of singability were significantly correlated with goodness-of-fit ratings for all three timbres, but the relationship was stronger for sung renditions (r = .87) than for electronic (r = .67) or piano (r = .56) renditions. This supports the interpretation of expectancy ratings in the sung condition as an implicit measure of perceived singability.

Discussion

Findings from Experiment 1 supported both predictions: that listeners expect the next tone to fall within the modal register, and that this expectation is encouraged by sung melodies. In the sung condition, the absolute pitch height of the probe tone best predicted ratings.

Although these findings support a zeroth-order model under sung conditions, it is possible that this was an artifact of the task. Given repeated (and potentially monotonous) presentations of the same four melodies and explicit instructions to focus on the final tone, evaluating the singability of the final tone may have been an optimal strategy for “tuning out” the preceding context, one that was more readily available to listeners in the sung condition than to those in the instrumental conditions. Experiment 2 aimed to obtain further evidence for effects of timbre and register consistent with the vocal constraints model, but using melodies that were not repeated and task instructions that did not encourage a focus on the final tone. Another goal of Experiment 2 was to eliminate spectrotemporal modulation cues as a factor that might have contributed to melodic expectancies in the sung condition of Experiment 1. By spectrotemporal cues, we mean momentary changes in spectrum or pitch that may have been introduced inadvertently into the sung versions through coarticulation or expressive intent (see the concept of “scoops” in Larrouy-Maestri & Pfordresher, 2018). To this end, Experiment 2 used a synthesized singing voice.

Experiment 2: Replication of Hansen and Pearce

Background

Hansen and Pearce (2014) trained IDyOM on the statistical structure of the canonical set of Western tonal melodies described above, in order to model a typical listener's long-term exposure to tonal music. Melody fragments for a listening experiment were chosen from two sets of test melodies (isochronous English hymns and Schubert lieder). For each tone in each melody in the test sets, the model output a probability distribution over possible continuation pitches. Fragments were chosen whose final-tone distribution was either low or high in entropy. Low entropy indicates a peaked distribution in which the actual pitch of the final tone in the fragment clearly emerges as the most likely continuation. High entropy indicates a flat distribution in which the actual final tone does not stand out among other possible continuations. Importantly, these final tones were omitted from the fragments presented in the task replicated here. Listeners heard each fragment once in piano timbre and rated how certain they were about how the melody would continue. Results partially supported entropy as a model of listener uncertainty: for the English hymns but not the Schubert lieder, listener uncertainty ratings were significantly higher for high-entropy fragments.
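Entropy here is the Shannon entropy of the model's predictive distribution over continuation pitches. A minimal sketch with two made-up distributions over four candidate pitches shows how a peaked distribution yields lower entropy than a flat one; the distributions are illustrative, not IDyOM output.

```python
import math

def entropy_bits(dist):
    """Shannon entropy H = -sum p * log2 p of a continuation distribution."""
    return -sum(p * math.log2(p) for p in dist.values() if p > 0)

# Hypothetical predictive distributions (MIDI pitch -> probability)
peaked = {67: 0.85, 65: 0.05, 69: 0.05, 64: 0.05}   # one clear favourite
flat = {64: 0.25, 65: 0.25, 67: 0.25, 69: 0.25}     # no candidate stands out
print(round(entropy_bits(peaked), 2), round(entropy_bits(flat), 2))
```

With four candidates, the flat distribution reaches the maximum of log2(4) = 2 bits.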

Here low- and high-entropy English hymn fragments were again presented to listeners, but with additional between-subjects manipulations of timbre (piano vs. sung) and register (modal vs. upper). It was again predicted that an expectation of singability should emerge for sung stimuli. Specifically, uncertainty ratings were expected to be lower for the sung conditions (especially the upper register) regardless of entropy, because listeners should perceive fewer possible melodic continuations due to vocal constraints.

Method

Participants

A total of 102 undergraduate nonmusicians (Mage = 24.6, SD = 8.12; 82 females) enrolled in an introductory psychology course at Toronto Metropolitan University participated for course credit. All self-reported normal vision and hearing. Number of years of private music lessons ranged from 0 (58 participants) to 20 (Myears = 1.89). The study received ethics approval from the IRB.

Stimuli

MIDI renditions of each fragment were recreated at the original tempo of ∼160 BPM, but with two additional manipulations: Timbre (piano [as in the original] vs. sung) and Register (modal vs. upper). The singing synthesis software Synthesizer V (https://dreamtonics.com/en/synthesizerv/) was used to create the sung versions with a vibrato-less female voice. For the upper register manipulation, the original fragments were transposed by between −1 and +8 semitones so that they fell within reported norms for the female falsetto register (Herbst et al., 2019). The median pitch of each fragment was C5 (min: F4, max: B5). For the modal register manipulation, fragments were transposed by between −13 and −4 semitones so that the median pitch of each fragment was C4 (min: F3, max: B4). Fragments were in either a major or a minor key, had between 9 and 14 notes, and spanned between 7 and 14 semitones.

As a final step, MIDI files were converted to mp3 and amplitude-normalized. The final 48 stimuli (12 fragments [6 low entropy, 6 high] × 2 timbres × 2 registers) are available at https://osf.io/y7zmt.
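The register manipulation described above can be sketched as a transposition to a target median pitch. This is an illustration under stated assumptions, not the procedure actually used: it assumes MIDI note numbers with C4 = 60, assumes each fragment was shifted so that its median note lands on C5 (upper) or C4 (modal), and uses a hypothetical fragment and a hypothetical helper `transpose_to_median`.

```python
from statistics import median_low

# Assumed MIDI convention: middle C (C4) = 60, so C5 = 72.
C4, C5 = 60, 72


def transpose_to_median(pitches, target_median):
    """Shift a fragment (MIDI note numbers) so that its median note equals target_median.

    median_low is used so that the reference is an actual note of the
    fragment, even when the fragment has an even number of notes.
    """
    shift = target_median - median_low(pitches)
    return shift, [p + shift for p in pitches]


# Hypothetical 9-note fragment.
fragment = [67, 69, 71, 72, 71, 69, 67, 66, 67]
upper_shift, upper = transpose_to_median(fragment, C5)
modal_shift, modal = transpose_to_median(fragment, C4)
print(f"upper: {upper_shift:+d} semitones, modal: {modal_shift:+d} semitones")
```

Under this scheme the modal version is always exactly one octave (12 semitones) below the upper version, which agrees with the reported shift ranges (−1 to +8 vs. −13 to −4 semitones).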

Procedure

The study was completed online. Participants were randomly assigned to one of four conditions: Instrumental-modal (n = 27), Instrumental-upper (n = 25), Sung-modal (n = 25) or Sung-upper (n = 25). Low- and high-entropy fragments were presented in random order in a single block. After each fragment, participants were asked “How certain do you feel about how the melody would continue?” and gave a rating between 1 (highly certain) and 9 (highly uncertain). The entire procedure lasted approximately 10 min.

Results

A mixed-model ANOVA was completed, with Entropy as within-subjects factor and Timbre and Register as between-subjects factors. Effect sizes were estimated with generalized eta-squared, which allows for meaningful comparisons between within- and between-subjects factors (Olejnik & Algina, 2003).
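For readers unfamiliar with the measure, a minimal sketch of generalized eta-squared for a design in which all factors are manipulated (as Entropy, Timbre, and Register are here) follows. Its denominator adds every subject-related error term (the between-subjects error plus each within-subjects error term) rather than only the error term for the effect being tested, which is what makes values comparable across within- and between-subjects factors. The sums of squares are hypothetical, and the function names are introduced here for illustration.

```python
def partial_eta_squared(ss_effect: float, ss_error: float) -> float:
    """Partial eta-squared: the effect relative to its own error term only."""
    return ss_effect / (ss_effect + ss_error)


def generalized_eta_squared(ss_effect: float, ss_error_terms: list[float]) -> float:
    """Generalized eta-squared (Olejnik & Algina, 2003) when all factors are manipulated.

    ss_error_terms must contain every error term in the design: the
    between-subjects error (subjects within groups) plus the error term of
    each within-subjects effect (here, Entropy x subjects within groups).
    """
    return ss_effect / (ss_effect + sum(ss_error_terms))


# Hypothetical sums of squares for a 2 (Timbre) x 2 (Register) x 2 (Entropy) mixed design.
ss_entropy = 10.0
ss_subjects_within_groups = 400.0  # between-subjects error term
ss_entropy_by_subjects = 300.0     # within-subjects error term for Entropy

print(round(partial_eta_squared(ss_entropy, ss_entropy_by_subjects), 3))
print(round(generalized_eta_squared(ss_entropy, [ss_subjects_within_groups, ss_entropy_by_subjects]), 3))
```

With these numbers the generalized value is less than half the partial value, because the between-subjects error variance also enters its denominator.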

Significant findings are shown in Figure 4. There was a main effect of Entropy, F(1, 98) = 5.74, p = .01, η2G = .014. Consistent with the original study (Hansen & Pearce, 2014), uncertainty ratings were significantly higher for high-entropy fragments (M = 4.86) than for low-entropy fragments (M = 4.56). Main effects were not significant for Timbre, F(1, 98) = 1.7, p = .20, η2G = .013, or Register, F(1, 98) = .27, p = .60, η2G = .002. However, there was a significant Timbre × Register interaction, F(1, 98) = 3.92, p = .05, η2G = .029. Post-hoc tests showed that in the upper register condition, uncertainty ratings were significantly higher for piano fragments (M = 5.13) than for sung fragments (M = 4.39), t = 2.40, p = .02. These findings support the prediction that listeners should perceive fewer possible continuations due to vocal constraints. In the modal register condition, ratings did not significantly differ between piano (M = 4.56) and sung fragments (M = 4.72), t = −.47, p = .64.

Figure 4. (Left panel) Main effect of entropy; (right panel) Timbre × Register interaction.

Discussion

In Experiment 2, the effect of entropy originally reported by Hansen and Pearce (2014) was replicated. However, in the upper register condition, uncertainty ratings were significantly lower for sung melodies than for instrumental ones, and the effect size of timbre in the upper register (η2G = .078) was larger than that of entropy (η2G = .008), suggesting that it was the singability of the upcoming tone that largely contributed to listeners perceiving fewer possible continuations. For example, although this cannot be verified from the current task, listeners in the Sung-upper condition may have been biased towards expecting melodies to descend towards the modal register.

General Discussion

Two previous melodic expectancy experiments were replicated (Hansen & Pearce, 2014; Schellenberg et al., 2002), but neither set of findings was robust to novel manipulations of timbre and register. Ratings in the instrumental conditions were consistent with the original findings (and by extension with their cognitive theoretical underpinnings), but differed significantly from ratings in the sung conditions, which were more consistent with the vocal constraints model. Sung condition ratings were better modelled on the absolute height of the probe tone than on pitch relations. Because musical structure was held constant between timbres, findings from the sung conditions of the two experiments are inconsistent with the assumption that listener expectancies are mediated by a mental representation of either pitch structure (Thompson et al., 2022) or the central tendency of the pitch heights of the stimulus tones (Von Hippel & Huron, 2001).

The most reasonable interpretation of these findings is that sung melodies encouraged an expectation that the next tone should fall within the modal register. The modal register is favored among occasional singers, in everyday speech, and across a wide range of the world's singing traditions. This may reflect its relative efficiency and ease of production. Vocal-motor mapping may be a mechanism for generating an expectation of singability. Pitch-matching studies support the primacy of absolute pitch height in vocal-motor mapping. Even without explicit instruction, singers’ reproductions of sequences of tones tend to be centered on the tones' absolute pitch heights rather than on transpositions that preserve their pitch relations (Jacoby et al., 2019). Also, occasional singers are more accurate at matching the absolute pitch heights of target tones from a vocal model than from an instrumental one (Granot et al., 2013; Watts & Hall, 2008), especially when the model is one's own voice (Hutchins & Peretz, 2012; Moore et al., 2008). More broadly, a role for covert vocal-motor activity in auditory perception has been demonstrated in behavioral (e.g., Wood et al., 2020) as well as neurophysiological studies (e.g., Brown & Martinez, 2007; Bruder & Wöllner, 2021; D'Ausilio et al., 2011; Pruitt et al., 2019). Evidence for vocal-motor mapping here was indirect and modest: in Experiment 1, explained variance for the vocal constraints model on the subset of female listeners (who should have greater registral overlap with the sung stimuli) improved from 75% to 77%, and the effect size of timbre in the upper condition of Experiment 2 increased for female listeners (from η2G = .078 to .084). Further evidence of a role for vocal-motor mapping in melodic expectancy might be found using an individual differences approach. Measures could include listeners’ “comfort pitch” in singing and/or speech or surface EMG recordings of laryngeal activity.
Another possibility would be a “shadowing” task in which listeners sing along concurrently with a tone sequence that varies unpredictably in pitch and inter-onset interval. For example, Leonard et al. (1987) showed that the latency at which singing stabilized on a target pitch (on average ∼250 ms) scaled with its proximity to the previous tone, suggesting that latency could be a viable index of vocally constrained melodic expectancies.

Although singability may be partially confounded by familiarity (i.e., harder-to-sing pitches occur less frequently), findings from Experiment 1 did not support an alternative statistical learning account. Computational modelling of listener responses based on the distributions of pitch heights in two large corpora of vocal melodies did not improve on the vocal constraints model for sung melodies. Still, an expectation of singability based on familiarity rather than vocal-motor mapping remains possible. In this case, expectancies might just as easily be learned from long-term exposure to the distribution of pitch heights in singing and/or speech as from musical structure. Whether via vocal-motor mapping, passive exposure to singing and speech, or both, an expectation of singability could explain why some studies have failed to find differences between the responses of musically trained and untrained listeners (e.g., Cuddy & Lunney, 1995; Hansen & Pearce, 2014; Schellenberg, 1996). Such null effects of training are at odds with a statistical learning account, since a trained listener should have a richer internal model of musical structure. One explanation might be that trained participants are often instrumentalists who may have had no more singing or speech experience than untrained participants.
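The kind of pitch-height predictor discussed above can be sketched as follows: count how often each absolute pitch height occurs in a corpus of vocal melodies, convert the counts to relative frequencies, and compare those frequencies with listeners' mean probe-tone ratings. This is a simplified illustration, not the modelling reported in Experiment 1; the toy corpus, the ratings, and the helper names are hypothetical, and variance explained is approximated here by the squared Pearson correlation.

```python
from collections import Counter


def pitch_height_probabilities(corpus):
    """Relative frequency of each absolute pitch (MIDI number) across a corpus of melodies."""
    counts = Counter(pitch for melody in corpus for pitch in melody)
    total = sum(counts.values())
    return {pitch: n / total for pitch, n in counts.items()}


def pearson_r(xs, ys):
    """Pearson correlation between two equal-length sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sd_x = sum((x - mean_x) ** 2 for x in xs) ** 0.5
    sd_y = sum((y - mean_y) ** 2 for y in ys) ** 0.5
    return cov / (sd_x * sd_y)


# Hypothetical toy corpus (MIDI note numbers) and hypothetical mean ratings per probe pitch.
corpus = [[60, 62, 64, 65, 67], [64, 62, 60, 62, 64], [67, 65, 64, 62, 60]]
probes = [60, 62, 64, 65, 67, 72]
mean_ratings = [5.1, 5.4, 5.5, 4.6, 4.4, 2.0]

probs = pitch_height_probabilities(corpus)
# Pitches never seen in the corpus are assigned probability 0.
predictors = [probs.get(p, 0.0) for p in probes]
r = pearson_r(predictors, mean_ratings)
print(f"variance explained (r^2) = {r ** 2:.2f}")
```

A comparison of this kind would then ask whether such a frequency-based predictor explains more variance in sung-condition ratings than a predictor based on whether the probe falls within the modal register.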

It is also possible that listeners anticipate the next tone in a melody in a variety of ways depending on context (Von Hippel, 2002). For sung tonal melodies, the current findings suggest that listeners can draw on an expectation of singability that places little or no demand on working memory but is relatively insensitive to preceding context. For instrumental tonal melodies, the current findings suggest that listeners make use of Gestalt grouping principles (but see Pearce & Wiggins, 2006, exp. 2), and may thus be more sensitive to preceding context at the expense of greater working memory demands. Two predictions follow: 1) listeners should be less sensitive to longer-term dependencies in sung melodies than in instrumental ones, and 2) listeners should report the task of providing goodness-of-fit ratings to be more effortful for instrumental melodies than for sung ones. In still other contexts, such as learning an unfamiliar musical system from instrumental melodies, previous studies suggest that listeners use a domain-general statistical learning mechanism based on pitch relations. Interestingly, some of these studies have shown that learning is impaired when proximity and reversal are violated (Loui, 2012; Rohrmeier & Cross, 2013). The authors speculated that this may reflect the influence of innate Gestalt grouping rules and/or prior knowledge. The latter, however, is complicated by the fact that trained instrumentalists were as affected by such violations as untrained participants. A vocal constraints account predicts that learning should be more impaired when such violations occur in sung melodies than in instrumental ones, and that this effect should not interact with instrumental music training.

Potential Model Extensions

The vocal constraints model could be extended to include predictions about note duration. For example, an expectation for “leap lengthening,” i.e., that the duration of the first note in a large melodic interval should be extended, has been demonstrated in musical practice (Huron & Mondor, 1994; cited in Huron, 2001) and in listener evaluations of synthesized singing (Sundberg et al., 1983). Huron (2001) attributes these expectancies to Fitts’ Law, a constraint on muscular motion (including that of the vocal cords), which states that the time required to move from one target to another is a function of the distance separating the targets relative to the size of the target. This constraint can be at least partially circumvented on musical instruments, for example through the redundant distribution of pitches across the strings of stringed instruments or by recruiting different effectors. Therefore, leap lengthening should be more strongly expected for sung melodies than for instrumental ones.
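Fitts’ Law is commonly written in its original form as MT = a + b·log2(2D/W), where D is the distance to the target, W is the target width, and a and b are empirically fitted constants. A minimal sketch of how it would predict leap lengthening follows, under hypothetical assumptions: that pitch distance in semitones can stand in for D, that W is a fixed pitch tolerance of one semitone, and that a and b take placeholder values rather than any estimate for the voice.

```python
import math


def fitts_movement_time(distance: float, width: float, a: float = 0.05, b: float = 0.1) -> float:
    """Movement time predicted by Fitts' Law (original formulation): MT = a + b * log2(2D / W).

    The defaults for a and b are placeholders, not values fitted to singing.
    """
    if distance <= 0 or width <= 0:
        raise ValueError("distance and width must be positive")
    return a + b * math.log2(2 * distance / width)


# Hypothetical mapping: interval size in semitones as D, a 1-semitone tolerance as W.
step = fitts_movement_time(distance=2, width=1)  # major second
leap = fitts_movement_time(distance=7, width=1)  # perfect fifth
print(f"step: {step:.3f}, leap: {leap:.3f} (arbitrary time units)")
```

Because the predicted transition time grows with interval size, the note before a large leap would need extra time, which is the leap-lengthening pattern; an instrument that reaches both pitches without a large movement of a single effector escapes this constraint.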

The model could also be extended to include predictions about tonality. Effects of tonality were negligible in Experiment 1, but may have been minimized by the use of strongly tonal melodies with only in-key probe tones. As convergent evidence, there was a significant effect of entropy across timbres in Experiment 2, in which three of the six low-entropy melodies had an out-of-key augmented fourth as the final tone, implying a tonic resolution in the dominant key. Eitan et al. (2017) recently showed that absolute pitch and key height (G major vs. Db major) affected listeners’ judgments of tonal fit and tension. Thus, although not demonstrated here, a probe tone's perceived tonal stability might be predicted to covary with its perceived singability. It should be noted that scale systems based on interval ratios among scale degrees (such as those used here) are a relatively recent development in human history, tied to the tuning of instruments. Pfordresher and Brown (2017) proposed that certain regularities found across scale systems, such as their tendency to have 6 ± 1 degrees, may have ultimately evolved from vocal tuning limitations. As evidence, they showed that the fundamental frequencies of sung intervals often deviate from simple integer ratios (e.g., 2:1 for the octave) and even overlap with neighboring interval classes (e.g., an intended minor third sung closer to a major second), and that this has little impact on listener judgments of vocal quality. These findings suggest that a fruitful extension of the vocal constraints model might investigate whether intonation, rather than tonal stability, affects listener expectancies as a function of timbre and register.
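Deviations of sung intervals from simple integer ratios are usually expressed in cents, where an octave (2:1) spans 1200 cents. The sketch below converts a pair of frequencies to cents and labels the result with the nearest of a few just-intonation interval classes. The reference ratios, the nearest-class rule, and the example frequencies are assumptions chosen for illustration, not the analysis of Pfordresher and Brown (2017).

```python
import math


def cents(f_low: float, f_high: float) -> float:
    """Interval size in cents between two frequencies (1200 cents per octave)."""
    return 1200 * math.log2(f_high / f_low)


# Simple-integer (just-intonation) ratios for a few interval classes.
JUST_RATIOS = {
    "major second": 9 / 8,  # ~203.9 cents
    "minor third": 6 / 5,   # ~315.6 cents
    "major third": 5 / 4,   # ~386.3 cents
    "octave": 2 / 1,        # 1200 cents
}


def nearest_interval_class(size_in_cents: float) -> str:
    """Name of the reference interval whose size in cents is closest to size_in_cents."""
    return min(JUST_RATIOS, key=lambda name: abs(cents(1.0, JUST_RATIOS[name]) - size_in_cents))


# Hypothetical sung "minor third" above A3 (220 Hz) that comes out 250 cents wide.
sung_upper = 220.0 * 2 ** (250 / 1200)
size = cents(220.0, sung_upper)
print(f"{size:.1f} cents -> {nearest_interval_class(size)}")
```

In this example an intended minor third sung 250 cents wide lies closer to the major second (about 204 cents) than to the minor third (about 316 cents), the kind of overlap between neighboring interval classes described above.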

The explicit measure of singability in Experiment 1 provided indirect evidence for a role of perceived vocal effort in listener expectancies. However, aside from using pitch heights that fell within normative limits for singers reported in the literature, any other potential indicators of vocal effort in the sung stimuli were deliberately minimized. Of course, this is far removed from musical practice. Future research might consider how factors such as intonation, timbre, expressive timing, and facial expressions (Ceaser et al., 2009; Thompson & Russo, 2007) could potentially contribute to perceived vocal effort and impact melodic expectancies.

Footnotes

Action Editor

Ian Cross, University of Cambridge, Faculty of Music.

Peer Review

Richard Ashley, Northwestern University, Bienen School of Music.

Emily Morgan, University of California Davis, Department of Linguistics.

Contributorship

PA was involved in study design, participant recruitment and data analysis, and wrote the first draft of the manuscript. PA and FAR reviewed and edited the manuscript and approved the final version of the manuscript.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Ethical Approval

The ethics committee of Toronto Metropolitan University approved this study (REC number: 2019-350). Participants provided written and electronically signed consent in Experiments 1 and 2, respectively.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: Experiment 1 was supported by a University of Guelph-Humber Research Grant.