Abstract

Two behavioral studies are reported that ask whether listeners experience different emotions in response to melancholic and grieving musical passages. In the first study, listeners were asked to rate the extent to which musical passages made them feel positive and negative, as well as to identify which emotion(s) they felt from a list of 24 emotions. The results are consistent with the hypothesis that listeners experience different emotions when listening to melancholic and grieving music. The second study asked listeners to spontaneously describe their emotional states while listening to music. Content analysis was conducted in order to find any underlying dimensions of the identified responses. The analysis replicated the finding that melancholic and grieving music led to different feeling states, with melancholic music leading to feelings of Sad/Melancholy/Depressed, Reflective/Nostalgic, Rain/Dreary Weather, and Relaxed/Calm, while grieving music led to feelings of Anticipation/Uneasy, Tension/Intensity, Crying/Distraught/Turmoil, Death/Loss, and Epic/Dramatic/Cinematic.

Introduction

Sadness appears to have a special attraction for music researchers. Music-related sadness accounts for roughly 23% of all of the passages used in studies of music and emotion—more than any other emotion, including happiness and fear (Warrenburg, 2020a). Part of the appeal of sadness as a research topic is related to the paradox of enjoyable sadness—the fact that sad music is able to induce positive emotions—which has attracted philosophical commentary and speculation from ancient to modern times (Levinson, 2014).

When people listen to music, they can both perceive emotion in the music and experience emotion from the music. A person may listen to a cello suite and think that the music sounds melancholic, for example. In this case, they perceive the music to be expressing a certain emotion: melancholy. As they listen to this mournful cello, however, they may experience nostalgia, tenderness, peace, melancholy, or a combination of these emotions. Sometimes listeners may perceive and experience the same emotions from listening to a piece of music, but at other times, the perceived and experienced emotions may not match. The difference between perceived and experienced emotion is of special interest to researchers of sad music because, although many people may view the music as expressing sadness, people’s emotional reactions to the sad music vary widely. In fact, it is known that people experience both positive and negative emotions from sad music (Eerola & Peltola, 2016). Researchers often wonder, then, what it is exactly that makes sad music unique.

Musical sadness, as previously defined, may in fact be a synthesis of more than one emotional state (Eerola et al., 2017; Huron, 2015; Laukka et al., 2013; Quinto et al., 2014; Taruffi & Koelsch, 2014; van den Tol, 2016). In other words, rather than having a single broad category of sad music, it might be possible to identify multiple sad affects. This body of work has also indicated that the experience of listening to sad music may vary widely across people. Research by Tuomas Eerola, Henna-Riikka Peltola, and Jonna Vuoskoski, for example, has called attention to the fact that what researchers call musical sadness may be a combination of incompatible emotions (Eerola & Peltola, 2016; Eerola et al., 2015; Peltola & Eerola, 2016). These researchers conducted a large, systematic survey asking about people’s attitudes toward sad music. Through thematic content analysis of the survey responses, Eerola and his colleagues found that three different kinds of emotions are induced by listening to sad music: grief, melancholia, and sweet sorrow (Peltola & Eerola, 2016). A factor analysis conducted on this data found three slightly different emotions evoked from nominally sad music: grief-stricken sorrow, comforting sorrow, and sublime sorrow (Eerola & Peltola, 2016). Of these three musically induced emotions, only grief-stricken sorrow represents a negatively valenced experience. It is clear, therefore, that nominally sad music can evoke both negative and positive emotions.

In addition to this research, it appears that the way researchers define sadness varies widely. Listeners appear to offer a wide range of descriptions when characterizing nominally sad music. These terms include bitter, bittersweet, crying despair, dark, depressed-sad, depression, despair, desperate, doleful, frustrated, funeral, gloomy, gloomy-tired, grief, heavy, melancholy, miserable, mournful, pathetic, quiet sorrow, sad, sad-depressed, sadness, sadness-grief-crying, solitary, sorrowful, tearful, and tragic (Çano & Morisio, 2017; Hevner, 1936; Juslin & Laukka, 2003; Laukka et al., 2013; Quinto et al., 2014; Tilson Thomas, 2011; Zentner et al., 2008). Using operationalizations made by psychologists, not all of these terms even correspond to emotions. Researchers distinguish three categories of affective responses: emotions, moods, and personality traits (Scherer & Zentner, 2001). Emotions are described as brief affective episodes that occur in response to a significant internal or external event; moods are described as longer affective states characterized by a change in subjective feelings of low intensity; and temperaments are described as a combination of dispositional and behavioral tendencies. According to this classic taxonomy, sad and desperate would be deemed emotions, gloomy, listless, and depressed would be moods, and morose would be a temperament or personality trait. Without specifying which of these three affective classes (emotions, moods, personality traits) are being examined in a particular experiment, it becomes impossible to compare results across studies. One indication that researchers have (unknowingly) compared results across these three affective classes is that meta-analyses on musical sadness provide seemingly null results. 
Despite the fact that many studies have shown that participants can perceive and experience musical sadness, a review found that there is no association of nominally sad music with positive or negative valence (Eerola & Vuoskoski, 2011). The current paper tests whether the large variance in responses to sad music could be a consequence of the failure to distinguish multiple states of sadness.

Recent research is consistent with the idea that musical sadness may be a synthesis of (at least) two distinctive affective states: melancholy and grief (Huron, 2015, 2016; Warrenburg, 2020b). The distinction between melancholy and grief dates back to Darwin (1872), who suggested that these emotions may have separate motivations and physiological characteristics. In simple terms, it appears that melancholy is a negatively valenced emotion associated with low physiological arousal, while grief is a negatively valenced emotion associated with high physiological arousal (Andrews & Thomson, 2009; Frick, 1985; Kottler, 1996; Mazo, 1994; Nesse, 1991; Rosenblatt et al., 1976; Urban, 1988; Vingerhoets & Cornelius, 2012).

In a series of studies, Warrenburg has presented evidence consistent with the idea that melancholic and grieving music exhibit different structural features (Warrenburg, 2020b) and that listeners perceive different emotions in melancholic and grieving musical passages (Warrenburg, 2019). This research notwithstanding, there is currently no empirical evidence supporting the idea that people experience different types of emotion in response to melancholic and grieving musics.

There is more than one way that music can induce emotion in listeners. In particular, the BRECVEMA model of Juslin (2013) posits that music is able to evoke emotional responses through brain stem reflexes, rhythmic entrainment, evaluative conditioning, emotional contagion, visual imagery, episodic memory, musical expectancy, and aesthetic judgment. The combination of these various mechanisms suggests that music may be able to elicit multiple emotions in parallel, making it possible to experience mixed-valenced emotions such as nostalgia, wonder, and pleasurable sadness.

Disparate emotional reactions to nominally sad music can also be explained, in part, by differences in listeners’ personality characteristics and dispositional factors, and by the environmental situations during music listening (Dobrota & Ercegovac, 2015; McCrae, 2007; Nusbaum & Silvia, 2011; Rentfrow & Gosling, 2003; Silvia et al., 2015). Some of the reasons why people have listened to nominally sad music, for example, are to reminisce, to get comfort, to experience new emotions, and to share emotions with others (Eerola & Peltola, 2016). People may also listen to music as a means of escape, to experience transcendence, to produce pleasure, to regulate emotions, or to provide diversion (Juslin et al., 2008; Saarikallio & Erkkilä, 2007; Schäfer et al., 2013; Schäfer & Eerola, 2020).

By asking the same person to describe their experiences from listening to melancholic and grieving music, however, we may be able to limit some of the variance due to dispositional, personality, and situational factors. The central research question addressed in the current paper is whether a person experiences different patterns of emotions in response to melancholic and grieving music. The paper tests this conjecture directly using two separate methodologies.

Study 1

Hypothesis

The principal goal of the first study was to explore which emotions are evoked or induced in listeners from grieving and melancholic musical passages taken from extant research. Given the complicating factors surrounding induced emotion from music listening, there were no specific a priori hypotheses made about which individual emotion classes or categories would differ in response to the different music types. Accordingly, the main hypothesis was simply that:

Stimuli

A review conducted by Eerola and Vuoskoski (2013) suggests that passages around 30–60 seconds in duration are sufficient to induce emotion in listeners. As such, it was decided to make use of one-minute passages of experimenter-selected music—specifically 16 musical excerpts. The selected stimuli have been previously validated as representing their respective emotions to listeners of a similar age and musical background to those in the current experiment. The melancholy and grief stimuli came from Warrenburg (2019; 2020b) and the tender and happy passages were taken from Eerola and Vuoskoski (2011). The complete list of stimuli used in Study 1 can be found in Table 1.

List of musical stimuli used in Study 1.

Note: Items in bold were also used in Study 2.

Experimental Procedure

The protocols for Studies 1 and 2 were approved by the Ohio State University Institutional Review Board and were deemed compliant with state, federal, and university regulations. Study 1 was conducted online using the Qualtrics Research Suite and was 40–60 minutes in duration.

Participants were not told about the hypothesized differences between melancholy, grief, happiness, and tenderness, in order to minimize potential bias in favor of the experimenter’s theoretical perspective. The difference between induced and perceived emotion, however, was explained to participants. After learning about the difference between induced and perceived emotions, participants received the following instructions regarding the induced emotion tasks: This block is about experienced emotion. You will listen to some passages of music. During this time, we ask that you try to become absorbed in the music as much as you can. We would like you to pay attention to the passages and monitor how you feel when you listen to them. You may feel one or more emotions as you listen, or you might not feel any emotion. That is okay. We are just interested in how you feel as you listen to the music right now. Try not to think about how the music may have made you feel in the past, but rather focus on your experience as you listen to the music today.

After listening to each passage, participants were asked the following questions:

1. To what extent does this audio file make you feel a positive emotional reaction? (11-point Likert scale: 0 “I feel little or no positive emotion” to 10 “I feel an extreme amount of positive emotion”)

2. To what extent does this audio file make you feel a negative emotional reaction? (11-point Likert scale: 0 “I feel little or no negative emotion” to 10 “I feel an extreme amount of negative emotion”)

3. Identify which emotion(s) the audio file makes you feel by checking the appropriate emotion(s) from the following list. You may select as few or as many as you like: angry, bored, compassionate, disgusted, excited, fearful, grieved, happy, invigorated, joyful, melancholy, nostalgic, peaceful, power, relaxed, soft-hearted, surprised, sympathetic, tender, transcendent, tension, wonder, neutral/no emotion, other emotions.

Positivity and negativity have been shown to be separable in emotion experiences, which is why two unipolar scales were used instead of a single bipolar scale (Larsen & McGraw, 2011). The 24 emotions were selected because the terms are theoretically important to research on emotions possibly induced in music. Fourteen of the emotion terms were selected to replicate the list of emotion choices used in previous studies of perceived emotion (Warrenburg, 2019). Because the current study employed the same musical stimuli as the perceived emotion study, the use of the same emotion checklist allows there to be comparisons of perceived and induced emotions from the same musical stimulus.

In the perceived emotion studies, the terms melancholy, grieved, happy, tender, anger, fear, and disgust were chosen because the musical (and non-musical) stimuli were shown to express these emotions (Eerola & Vuoskoski, 2011; Warrenburg, 2019). As it is difficult for an observer to differentiate between people in melancholic, bored, relaxed, and sleepy states, despite the differences in valence in these states, the terms bored and relaxed were added in order to investigate whether melancholic or tender sounds would also give rise to experiences of relaxation or boredom (Andrews & Thomson, 2009; Nesse, 1991). The term surprised was used because music can be surprising when it differs from our expectations (Huron, 2006). Finally, the terms excited and invigorated were added to the list of emotions in order to balance the number of emotion terms in each quadrant (in the perceived emotion study).

In addition to these 14 terms, the emotions identified in the GEMS-9 were included because they have been shown to be commonly induced by music: wonder, transcendent, nostalgic, peaceful, power, joyful, tension (Zentner et al., 2008). The term anxious was added, since music can induce anxiety (Trevor et al., 2020). Finally, sympathetic and soft-hearted were used in order to investigate directly the amount of compassion or empathy a person might feel toward the sounds (Greitemeyer, 2009; Huron & Vuoskoski, 2020). This resulted in a final list of 24 items: angry, anxious, bored, disgusted, excited, fearful, grieved, happy, invigorated, joyful, nostalgic, peaceful, power, relaxed, sad, soft-hearted, surprised, sympathetic, tender, transcendent, tension, wonder, neutral/no emotion, other emotion(s).

These 24 emotions belonged to four a priori dimensional classes, based on emotional theories (e.g., Russell et al., 1989) and music research (e.g., Eerola & Vuoskoski, 2011, 2013; Warrenburg, 2020a). Angry, fearful, grieved, and tension were classified as negative valence and high arousal (NV HA); bored, disgusted, and melancholy were classified as negative valence and low arousal (NV LA); compassionate, nostalgic, peaceful, relaxed, soft-hearted, sympathetic, and tender were classified as positive valence and low arousal (PV LA); and excited, happy, invigorated, joyful, power, transcendent, and wonder were classified as positive valence and high arousal (PV HA).
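The four a priori classes can be written down as a simple lookup structure. The following is only an illustrative sketch: the variable names are hypothetical, and the term lists are copied from the classification described above.

```python
# Illustrative encoding of the four a priori dimensional classes.
# The names QUADRANTS, NEGATIVE_TERMS and POSITIVE_TERMS are hypothetical;
# the term lists are taken from the classification given in the text.
QUADRANTS = {
    "NV HA": ["angry", "fearful", "grieved", "tension"],
    "NV LA": ["bored", "disgusted", "melancholy"],
    "PV LA": ["compassionate", "nostalgic", "peaceful", "relaxed",
              "soft-hearted", "sympathetic", "tender"],
    "PV HA": ["excited", "happy", "invigorated", "joyful",
              "power", "transcendent", "wonder"],
}

# Collapse the quadrants by valence only.
NEGATIVE_TERMS = QUADRANTS["NV HA"] + QUADRANTS["NV LA"]
POSITIVE_TERMS = QUADRANTS["PV LA"] + QUADRANTS["PV HA"]

for label, terms in QUADRANTS.items():
    print(f"{label}: {len(terms)} terms")
print(f"negative: {len(NEGATIVE_TERMS)}, positive: {len(POSITIVE_TERMS)}")
```

Under this classification there are 7 negatively valenced and 14 positively valenced terms; surprised, neutral/no emotion, and other emotion(s) are not assigned to any of the four classes.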

After participants selected which emotions they experienced from the list of terms, they were presented with the list of the terms they selected. They were then asked to respond to the following question:

4. Given this list of emotion terms you chose, which one(s), if any, strongly apply?

Question 4 was asked so that a three-point gradient of emotional intensity (does not apply, applies, strongly applies) could be created. Participants were allowed to identify any other emotion(s) they experienced when listening to the musical excerpts.

Listeners were also given the option to write down experiences they felt or thoughts they had when listening to the music. The free response format was chosen so the answers could be analyzed using thematic content analysis to uncover common themes (see Study 2 for methodological and statistical details). The specific prompt was the following:

5. What kinds of thoughts went through your head as you listened to this music? It could be images, metaphors, moods, or anything else you can think of.

Finally, participants were also asked to indicate their degree of familiarity with the musical passages on a three-point scale:

6. How familiar are you with this audio file? (not familiar, somewhat familiar, very familiar)

Participants

A total of 63 participants from Ohio State University School of Music and Department of Psychology participated in Study 1, of which 45 answered demographic questions. Of these 45 participants, 33 were male and 12 were female. The age of the participants ranged from 18 to 47 (mean = 21.6). Participants started music lessons, on average, at the age of 10 years (range 5–19 years), had been taking music lessons for an average of 6.5 years (range 0–18 years), and had been involved in daily practice for 7.6 years (range 0–30 years). On average, the participants had completed about one year of full-time coursework in a Bachelor of Music degree program (or equivalent), although the number of college music courses participants had taken ranged from none through one or more graduate-level music courses or degrees.

Results

Positive and negative ratings

Recall that participants rated how positively and negatively the musical stimuli made them feel on a 0–10 Likert scale (0: I feel little or no positive/negative emotion; 10: I feel an extreme amount of positive/negative emotion). For the grieving stimuli, the mean score of experienced negative emotions was 5.923 (SD = 2.681), and for the melancholic stimuli, the mean score of experienced negative emotions was 4.624 (SD = 2.541). The mean score of experienced positive emotions from listening to grieving music was 2.491 (SD = 2.303), and the mean score of experienced positive emotions from listening to melancholic music was 2.889 (SD = 2.367). The mean score of experienced positive emotions from listening to happy music was 7.172 (SD = 2.220), and the mean score of experienced positive emotions from listening to tender music was 5.857 (SD = 2.390). For the happy stimuli, the mean score of experienced negative emotions was 0.916 (SD = 1.584), and for the tender stimuli, the mean score of experienced negative emotions was 2.067 (SD = 2.180).

Two ordinary least squares (OLS) regression analyses were conducted in order to investigate the effect of the stimulus types (melancholy, grief, happy, tender) and personality characteristics on positive and negative ratings of experienced emotions. An interaction term for stimulus valence and arousal was included in each regression. The adjusted R² of the regression predicting positive emotion ratings was 0.453, indicating that the variables accounted for about 45% of the variance in the positive rating scores. Because of the inclusion of the interaction term, the interpretation of the valence and arousal coefficients differs from that of the usual partial regression effects. In this case, the coefficients of valence (where negative valence, NV, was coded as 0 and positive valence, PV, was coded as 1) and arousal (where low arousal, LA, was coded as 0 and high arousal, HA, was coded as 1) are conditional effects.

The valence coefficient in the regression model predicting positive emotion ratings (coef. = 3.005, p < 0.01) shows how much two cases that differ in valence—negative and positive—differ in positive emotion ratings when the arousal is coded as 0 (low arousal). In other words, when the music exhibits low arousal, positively valenced stimuli should result in scores that are 3.0 points higher than negatively valenced stimuli (on the 0–10 scale of experienced positive emotion). For example, using the regression model, the expected rating of experienced positive emotions when listening to NV LA music is 2.9 and the expected rating of experienced positive emotions when listening to PV LA music is 5.9.

The arousal coefficient in the regression model predicting positive emotion ratings (coef. = -0.463, p = 0.031) indicates how much two cases that differ in arousal—low and high—differ in positive emotion ratings when the valence is coded as 0 (negative valence). When the music is negatively valenced, stimuli that exhibit high arousal should result in scores that are 0.5 points lower on experienced positive emotion than stimuli that exhibit low arousal. Using the regression model, the expected rating of experienced positive emotions when listening to NV LA music is 2.9 and the expected rating of experienced positive emotions when listening to NV HA music is 2.4.

The non-zero valence-arousal interaction term (coef. = 1.743, p < 0.01) indicates that the effect of the change in valence on positive emotion ratings depends on the arousal level of the music. The interaction term represents how the conditional effect of valence (negative to positive) changes as arousal changes by one unit (low to high). Equivalently, this interaction term is the amount by which the conditional effect of arousal (low to high) changes as valence changes by one unit (negative to positive).
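For reference, the valence and arousal part of such a model can be written out as follows. This is a sketch rather than the full fitted model: the symbols are introduced here for illustration only, and the personality covariates included in the regressions are omitted.

```latex
% V = valence (0 = negative, 1 = positive); A = arousal (0 = low, 1 = high).
% b_0, b_V, b_A, b_{VA} are illustrative names for the intercept and coefficients.
\hat{y} = b_0 + b_V V + b_A A + b_{VA}\,(V \times A), \qquad V, A \in \{0, 1\}

% Conditional effect of valence at a given arousal level:
\hat{y}(V{=}1, A) - \hat{y}(V{=}0, A) = b_V + b_{VA} A

% Conditional effect of arousal at a given valence level:
\hat{y}(V, A{=}1) - \hat{y}(V, A{=}0) = b_A + b_{VA} V
```

With the reported positive-rating coefficients, the conditional effect of valence is 3.005 for low-arousal music and 3.005 + 1.743 = 4.748 for high-arousal music.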

According to the regression model’s interaction term, the effect of valence (negative to positive) on experienced positive emotion ratings should change by 1.74 when listening to high-arousal music (compared with low-arousal music). For example, the regression model expects the difference in positive emotion ratings when listening to NV LA music versus PV LA music to be around 3.0 (expected NV LA rating = 2.9, expected PV LA rating = 5.9). However, the expected difference in positive emotion ratings when listening to NV HA music versus PV HA music is 4.8 (expected NV HA rating = 2.4, expected PV HA rating = 7.2). The interaction term captures the difference between 3.0 and 4.8, or (roughly) 1.74.

The interaction term also tells us that the effect of the music’s arousal (low to high) on experienced positive emotion ratings should change by 1.74 when listening to positively valenced stimuli, compared with negatively valenced stimuli. In this case, the expected difference in positive emotion ratings when listening to NV LA music versus NV HA music is around -0.5 (expected NV LA rating = 2.9, expected NV HA rating = 2.4). The expected difference in emotion ratings when listening to PV LA music versus PV HA music is 1.3 (expected PV LA rating = 5.9, expected PV HA rating = 7.2). The interaction term captures the difference between -0.5 and 1.3, or (roughly) 1.74. Table 4 in Appendix A summarizes the complete regression model of ratings of positive emotional experiences.

The adjusted R² of the regression predicting negative emotion ratings was 0.437, indicating that the variables accounted for about 44% of the variance in the negative rating scores (see Table 5 in Appendix A). Once again, the interpretations of the valence and arousal coefficients are conditional effects. The valence coefficient in the regression model predicting negative emotion ratings (coef. = -2.542, p < 0.01) shows how much two cases that differ in valence (negative or positive) differ in negative emotion ratings when the arousal is coded as 0 (low arousal). In other words, when the music exhibits low arousal, positively valenced music should result in negative emotion ratings that are 2.5 points lower than negatively valenced music. For example, using the regression model, the expected rating of experienced negative emotions when listening to NV LA music is 4.6 and the expected rating of experienced negative emotions when listening to PV LA music is 2.1.

The arousal coefficient in the regression model predicting negative emotion ratings (coef. = 1.280, p < 0.01) indicates how much two cases that differ in arousal—low or high—differ in negative emotion ratings when the valence is coded as 0 (negative valence). When the music is negatively valenced, music that exhibits high arousal should result in scores that are 1.3 points higher on experienced negative emotion than music that exhibits low arousal. The expected rating of experienced negative emotions when listening to NV LA music is 4.6 and the expected rating of experienced negative emotions when listening to NV HA music is 5.9.

Once again, the non-zero valence-arousal interaction term (coef. = -2.445, p < 0.01) indicates that the effect of the change in the music’s valence on negative emotion ratings depends on the arousal level of the stimulus. The interaction term indicates that the effect of valence (negative to positive) on experienced negative emotion ratings should change by -2.45 when listening to high-arousal music (compared with low-arousal music). That is, the expected difference in negative emotion ratings when listening to NV LA versus PV LA music is around -2.5 (expected NV LA rating = 4.6, expected PV LA rating = 2.1). However, the expected difference in negative emotion ratings when listening to NV HA versus PV HA music is -5.0 (expected NV HA rating = 5.9, expected PV HA rating = 0.9). The interaction term captures the difference between -2.5 and -5.0, or (roughly) -2.45.

The interaction term also tells us that the effect of arousal (low to high) on experienced negative emotion ratings should change by -2.45 when listening to positively valenced music, compared with negatively valenced music. In this case, the expected difference in negative emotion ratings when listening to NV LA music and NV HA music is around 1.3 (expected NV LA rating = 4.6, expected NV HA rating = 5.9). The expected difference in negative emotion ratings when listening to PV LA music versus PV HA music is -1.2 (expected PV LA rating = 2.1, expected PV HA rating = 0.9). The interaction term captures the difference between 1.3 and -1.2, or (roughly) -2.45.
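The expected ratings quoted for both models can be reproduced from the reported coefficients. The sketch below is illustrative only. It assumes that the baseline for each model equals the reported mean rating for melancholic (NV LA) music, with the personality predictors held fixed; the function and variable names are hypothetical.

```python
# Coefficients as reported in the text for the two regression models.
# ASSUMPTION: "baseline" is the reported mean rating for NV LA (melancholic)
# music (2.889 positive, 4.624 negative); other predictors are held fixed.
MODELS = {
    "positive": {"baseline": 2.889, "valence": 3.005,
                 "arousal": -0.463, "interaction": 1.743},
    "negative": {"baseline": 4.624, "valence": -2.542,
                 "arousal": 1.280, "interaction": -2.445},
}


def expected_rating(model, valence, arousal):
    """Expected rating for valence (0 = NV, 1 = PV) and arousal (0 = LA, 1 = HA)."""
    m = MODELS[model]
    return (m["baseline"]
            + m["valence"] * valence
            + m["arousal"] * arousal
            + m["interaction"] * valence * arousal)


def valence_effect(model, arousal):
    """Conditional effect of valence (NV to PV) at a given arousal level."""
    return expected_rating(model, 1, arousal) - expected_rating(model, 0, arousal)


for name in MODELS:
    for v, v_label in ((0, "NV"), (1, "PV")):
        for a, a_label in ((0, "LA"), (1, "HA")):
            rating = expected_rating(name, v, a)
            print(f"{name:8s} {v_label} {a_label}: {rating:.1f}")
    # The change in the valence effect from LA to HA equals the interaction term.
    change = valence_effect(name, 1) - valence_effect(name, 0)
    print(f"{name:8s} change in valence effect: {change:.3f}")
```

The difference between the two conditional valence effects recovers the interaction coefficient in each model, which is the relationship the paragraphs above describe in words.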

Specific Emotion Ratings

The distributions of the experiences of the 24 emotion terms across happy, tender, melancholic, and grieving stimuli are shown in Table 6 in Appendix A. These four distributions were examined through a series of chi-square tests. Rather than use the 3-point emotion scale (emotion does not apply, emotion applies, emotion strongly applies) for a person’s experienced affective state, a binary coding of emotion induction was created (does not apply vs. applies/strongly applies). The chi-square tests utilized the binary coding of induced emotion. The results of the omnibus chi-square test, which compared emotional experiences across all four stimulus types, were consistent with the idea that people experienced different emotional profiles when listening to the four music types, χ² (df: 69) = 2421.66, Bonferroni-corrected p < 0.01. Follow-up chi-square tests directly compared the distributions of experienced emotions across two stimulus groups. The results of these tests are consistent with the idea that people experienced different emotions when listening to grieving vs. melancholic music, χ² (df: 23) = 221.46, Bonferroni-corrected p < 0.01, happy vs. tender music, χ² (df: 23) = 731.85, Bonferroni-corrected p < 0.01, melancholic vs. tender music, χ² (df: 23) = 377.34, Bonferroni-corrected p < 0.01, and happy vs. grieving music, χ² (df: 23) = 905.31, Bonferroni-corrected p < 0.01.
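The binary coding and the degrees of freedom reported for these tests can be illustrated with a short sketch. It makes two assumptions: the three-point scale is coded numerically as 0, 1, and 2, and the chi-square statistic is the standard Pearson statistic on a terms-by-stimulus-type table of counts. The function names and the small example table are hypothetical and do not reproduce the study's data.

```python
def binarize(rating):
    """Collapse the 3-point scale to binary.

    ASSUMED numeric coding: 0 = does not apply, 1 = applies, 2 = strongly applies.
    """
    if rating not in (0, 1, 2):
        raise ValueError("rating must be 0, 1, or 2")
    return 1 if rating >= 1 else 0


def degrees_of_freedom(n_rows, n_cols):
    """Degrees of freedom for a chi-square test on an n_rows x n_cols table."""
    return (n_rows - 1) * (n_cols - 1)


def pearson_chi_square(table):
    """Pearson chi-square statistic for a table of counts (list of rows)."""
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    n = sum(row_totals)
    stat = 0.0
    for i, row in enumerate(table):
        for j, observed in enumerate(row):
            expected = row_totals[i] * col_totals[j] / n
            stat += (observed - expected) ** 2 / expected
    return stat


# 24 emotion terms across 4 stimulus types (omnibus) or 2 types (follow-up).
print(degrees_of_freedom(24, 4))  # 69
print(degrees_of_freedom(24, 2))  # 23

# HYPOTHETICAL 2 x 2 table of counts, for illustration only.
example = [[10, 20], [20, 10]]
print(round(pearson_chi_square(example), 3))
```

The degrees of freedom computed this way (69 for the omnibus test, 23 for each pairwise test) match the values reported above.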

Recall that the 24 emotion choices in Study 1 were not balanced for valence and arousal, in part because of previous findings that music listening induces more positive than negative emotions. In order to compare the relative amounts of positive and negative emotions felt when listening to music, a ratio of positive and negative emotions induced by each type of music was created. Once again, the binary coding of induced emotion was used. For each stimulus class—grieving music, melancholic music, happy music, and tender music—the proportions of positive and negative emotional experiences were calculated. To calculate negative emotion experiences, the number of times people reported feeling anger, fear, grief, tension, boredom, disgust, and melancholy was tallied and then divided by seven, because there were seven negative emotion choices. The sum of positive emotion experiences was divided by 14, for the 14 positive emotion choices.

As shown in Table 2, listening to grieving music resulted in negative emotional experiences 76% of the time and positive emotional experiences 24% of the time. After listening to melancholic music, participants felt negative emotions 65% of the time and positive emotions 35% of the time. In response to grieving music, then, people experienced approximately 3.17 times (76/24) more negative emotion than positive emotion. In response to melancholic music, people experienced about 1.86 times more negative emotion than positive emotion.

Percent of emotional experiences to grieving, melancholic, tender, and happy stimuli.

Negative valence, high-arousal emotions were defined as angry, fearful, grieved, and tension; negative valence, low-arousal emotions were defined as bored, disgusted, and melancholy. Positive valence, low-arousal emotions were defined as compassionate, nostalgic, peaceful, relaxed, soft-hearted, sympathetic, and tender; positive valence, high-arousal emotions were defined as excited, happy, invigorated, joyful, power, transcendent, and wonder.

Emotions experienced when listening to happy music were 94% positive and 6% negative, while induced emotions when listening to tender music were described as positive 84% of the time and negative 16% of the time. In response to happy music, then, people experienced approximately 15.67 times (94/6) more positive emotion than negative emotion. In response to tender music, people experienced about 5.25 times more positive emotion than negative emotion.
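The normalization and the reported ratios can be sketched as follows. How the two normalized rates were converted into percentages is not spelled out in the text, so the sketch assumes they were rescaled to sum to 100%. The function name and the example tallies are hypothetical; the tallies were chosen only so that the output matches the 76%/24% split reported for grieving music.

```python
def valence_shares(neg_tally, pos_tally, n_neg_terms=7, n_pos_terms=14):
    """Share of negative and positive experiences after normalizing each tally
    by the number of available terms.

    ASSUMPTION: the two per-term rates are rescaled so that they sum to 1.
    """
    neg_rate = neg_tally / n_neg_terms
    pos_rate = pos_tally / n_pos_terms
    total = neg_rate + pos_rate
    return neg_rate / total, pos_rate / total


# HYPOTHETICAL tallies, not taken from the study.
neg_share, pos_share = valence_shares(neg_tally=266, pos_tally=168)
print(f"negative: {neg_share:.0%}, positive: {pos_share:.0%}")

# Ratios computed from the percentages reported in the text.
reported = {
    "grieving (negative/positive)": (76, 24),
    "melancholic (negative/positive)": (65, 35),
    "happy (positive/negative)": (94, 6),
    "tender (positive/negative)": (84, 16),
}
for label, (larger, smaller) in reported.items():
    print(f"{label}: {larger / smaller:.2f}")
```

Dividing by the number of terms keeps the positive share from being inflated simply because twice as many positive terms were available to choose from.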

Finally, the utilization of specific emotion terms across stimulus types was examined using a series of Fisher’s exact tests. The use of the words melancholy and grief to describe experiences while listening to music differed when listening to melancholic and grieving stimuli (odds ratio = 1.703, Bonferroni-corrected p = 0.052). Comparing only the reports of experienced melancholy and grief (and ignoring the other 22 emotion terms), when listening to melancholic music, the word melancholy was used to describe experiences 61% of the time (112 times out of 185 descriptions), whereas the word grief was used to describe experiences 39% of the time (73 times out of 185 descriptions). For the grieving stimuli, grief was felt 53% of the time (101 times out of 192 descriptions) and melancholy was experienced 47% of the time (91 times out of 192 descriptions).

The use of the words happy and tender to describe experiences while listening to music differed when listening to happy and tender stimuli (odds ratio = 48.328, Bonferroni-corrected p < 0.01). Comparing only the reports of experienced happiness and tenderness, when listening to happy music, the word happy was used to describe experiences 96% of the time (134 times out of 139 descriptions), whereas the word tender was used to describe experiences 4% of the time (5 times out of 139 descriptions). For the tender musical stimuli, tenderness was felt 64% of the time (110 times out of 171 descriptions) and happiness was experienced 36% of the time (61 times out of 171 descriptions).

The use of the words melancholy and tender to describe experiences while listening to music differed when listening to melancholic and tender stimuli (odds ratio = 6.852, Bonferroni-corrected p < 0.01). Comparing only the reports of experienced melancholy and tenderness, when listening to melancholic music, the word melancholy was used to describe experiences 66% of the time (112 times out of 170 descriptions), whereas the word tender was used to describe experiences 34% of the time (58 times out of 170 descriptions). For the tender musical stimuli, tenderness was felt 78% of the time (110 times out of 141 descriptions) and melancholy was experienced 22% of the time (31 times out of 141 descriptions).

Finally, the use of the words happy and grief to describe experiences while listening to music differed when listening to happy and grieving stimuli (odds ratio = inf, Bonferroni-corrected p < 0.01). Comparing only the reports of experienced happiness and grief, when listening to happy music, the word happy was used to describe experiences 100% of the time (134 times out of 134 descriptions), whereas the word grieved was never used to describe experiences (0 times out of 134 descriptions). For the grieving musical stimuli, grief was felt 98% of the time (101 times out of 103 descriptions) and happiness was experienced 2% of the time (2 times out of 103 descriptions).
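The odds ratios reported for these four comparisons can be recomputed from the counts given in the text. The following is a minimal sketch, assuming that each reported value is the sample odds ratio (a × d)/(b × c) of a 2 × 2 table whose rows are the stimulus types and whose columns are the two emotion terms. The function name `odds_ratio` and the table labels are illustrative and do not come from the original analysis.

```python
import math

def odds_ratio(a, b, c, d):
    """Sample odds ratio (a*d)/(b*c) for the 2x2 table [[a, b], [c, d]]."""
    if b * c == 0:
        return math.inf  # an empty off-diagonal cell gives an infinite ratio
    return (a * d) / (b * c)

# Rows: the two stimulus types; columns: counts of the two emotion terms.
# All counts are copied from the text above.
tables = {
    "melancholy vs. grief": (112, 73, 91, 101),
    "happy vs. tender": (134, 5, 61, 110),
    "melancholy vs. tender": (112, 58, 31, 110),
    "happy vs. grief": (134, 0, 2, 101),
}

for name, cells in tables.items():
    print(f"{name}: odds ratio = {odds_ratio(*cells):.3f}")
```

Under this assumption, the four values agree with the reported odds ratios (1.703, 48.328, 6.852, and inf). The sketch does not reproduce the Bonferroni-corrected p-values, because the number of comparisons in the correction is not stated here.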

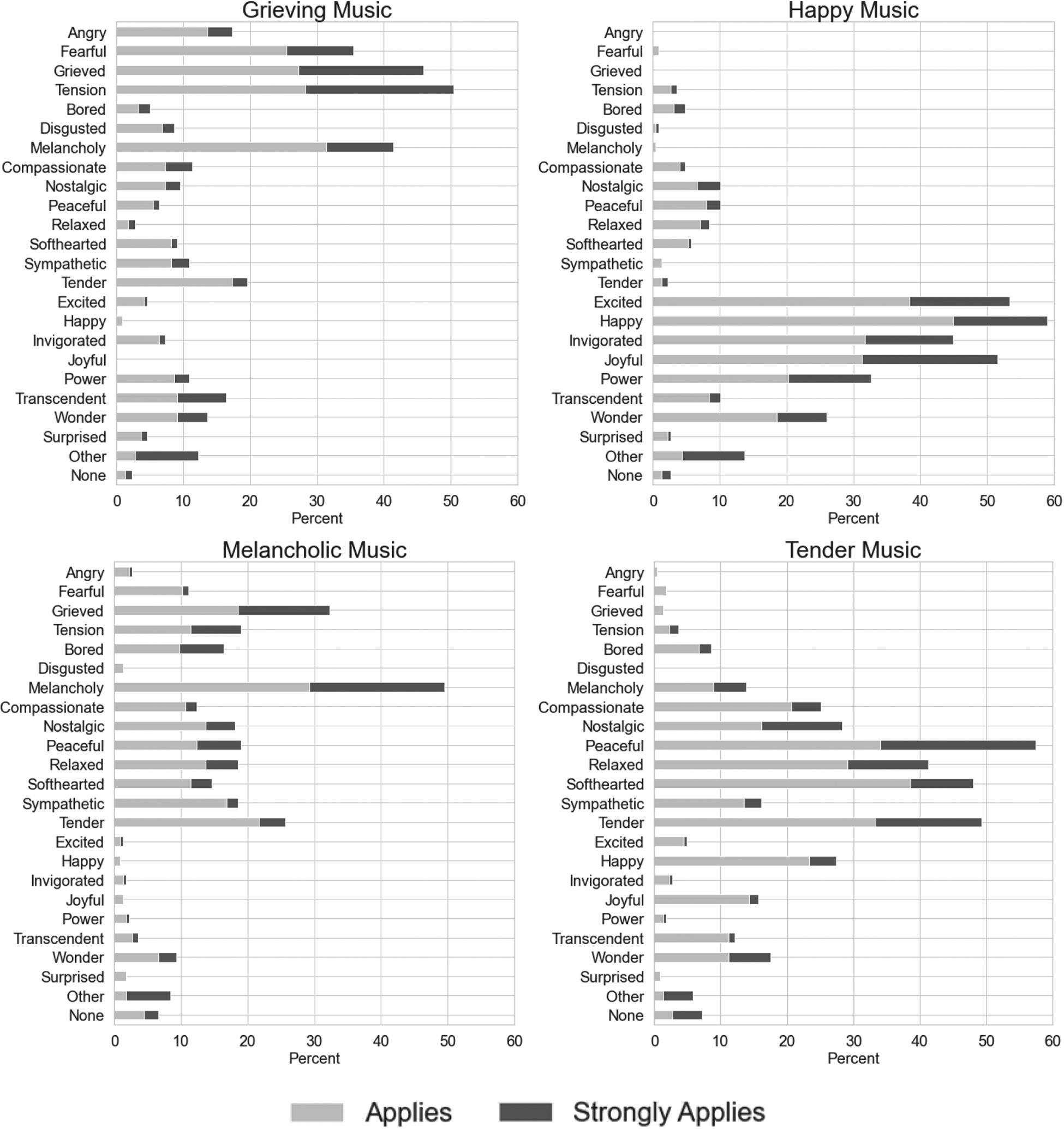

Further exploratory analysis revealed how all terms (angry, bored, compassionate, disgusted, excited, fearful, grieved, happy, invigorated, joyful, melancholy, neutral, nostalgic, peaceful, power, relaxed, soft-hearted, surprised, sympathetic, tender, transcendent, and wonder) were used across the four stimuli types (see Figure 1).

Figure 1. Induced emotions from the four stimulus types (melancholy, grief, tender, and happy) in Study 1.

Emotional experiences induced by grieving music were described best by the terms tension (applied or strongly applied 50% of the time), grieved (46%), melancholy (41%), and fearful (35%), followed by tender (20%), angry (17%), and transcendent (16%). The other terms identified by participants included the following: a mix-mash of indescribable emotion, angst, anguish (2 times), anxious (2 times), apathetic, creepy, crying, deathly, focused/expecting, hope/persistence, intrigued, lonely, mourning, painful, sad, sorrowful, spiteful, stressed (2 times), stressful, teary-eyed, unstable, wanting to stab my ex in the back, and worrisome.

For the a priori selected melancholic stimuli, the term melancholy was used to describe the listeners’ emotional experiences the most often (applied or strongly applied 50% of the time), followed by grieved (32%), tender (26%), tension (19%), peaceful (19%), sympathetic (19%), relaxed (19%), nostalgic (18%), and bored (16%). The other terms listed by participants included the following: coy, creepy, curious (4 times), intention, jumpy, lonely, longing (2 times), makes me think of rain, probing, sad, somber, spooky, unrest/uncanny, and wallowing.

When listening to nominally tender music, the word peaceful was used most often to describe people’s emotional experiences (applied or strongly applied 57% of the time), followed by tender (49%), soft-hearted (48%), relaxed (41%), nostalgic (28%), happy (27%), and compassionate (25%). The other terms listed by participants included: “annoyed. I don’t like oboe and this one sounded especially bad,” bittersweet, contemplative, content, longing, lost, love, playful, romantic (2 times), upset, and wistful.

Finally, participants reported most often feeling happy when listening to happy music (applied or strongly applied 59% of the time), followed by excited (53%), joyful (52%), and invigorated (45%), followed by power (33%) and wonder (26%). The other terms listed by participants included: alive, ambitious, anticipation, bold, curious, dancy, determination, doppie, eager (2 times), energetic (2 times), heroic, honor, hopeful, intoxicated, lively, nature, playful (2 times), pompous, pride, proud, triumph, and triumphant (7 times).

Summaries of the positive and negative ratings, the types of emotions felt, and the overall familiarity of participants with the musical clips are shown for each musical stimulus in Table 7 in Appendix A.

Categories of responses to melancholic and grieving stimuli from the content analysis in Study 2.

Discussion of Study 1

The results of Study 1 are consistent with the hypothesis that melancholic and grieving musical passages result in different kinds of emotional experiences. Among other emotions, listeners experienced more melancholy than grief when listening to the melancholic music and felt more grieved than melancholic when listening to the grieving music.

As might be expected, positively valenced music led to more reported feelings of positive emotions and negatively valenced music led to more reported feelings of negative emotion. Musical stimuli that exhibited high arousal led to higher ratings of positive and negative emotional experiences than musical samples that exhibited low arousal. In response to grieving music, people experienced approximately 3.17 times more negative emotion than positive emotion and in response to melancholic music, people experienced about 1.86 times more negative emotion than positive emotion. In response to happy music, people experienced approximately 15.67 times more positive emotion than negative emotion and in response to tender music, people experienced about 5.25 times more positive emotion than negative emotion. These ratios indicate that people experienced relatively more negative emotions in response to grieving music than melancholic music and experienced relatively more positive emotions in response to happy music than tender music.

The ratios also show that experiences of mixed emotion varied between the four music types (grieving, melancholic, tender, and happy). A ratio of 1 would mean that people experienced equal amounts of negative and positive emotions when listening to music. Large ratio scores (ratios much higher than 1) indicate that emotional experiences are dominated by either positive or negative emotions. Small ratio scores (ratios close to 1) indicate that emotional experiences are more mixed in valence. By comparing the ratios of responses to the four music types, then, we can examine the relative degrees of mixed emotional experiences.
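As an illustration of how these ratios are formed and read, the sketch below recomputes the two ratios whose underlying percentages are reported in the Results (happy: 94% positive, 6% negative; tender: 84% positive, 16% negative). It then orders the four music types by how close their reported ratios are to 1. The helper name `dominance_ratio` is hypothetical.

```python
def dominance_ratio(positive_pct, negative_pct):
    """Ratio of the dominant valence to the other; 1.0 means fully mixed."""
    hi = max(positive_pct, negative_pct)
    lo = min(positive_pct, negative_pct)
    return float("inf") if lo == 0 else hi / lo

# Percentages reported for Study 1
print(round(dominance_ratio(94, 6), 2))   # happy music
print(round(dominance_ratio(84, 16), 2))  # tender music

# Ratios as reported in the text, ordered from most mixed to least mixed
reported = {"melancholic": 1.86, "grieving": 3.17, "tender": 5.25, "happy": 15.67}
print(sorted(reported, key=reported.get))
```

The ordering that results (melancholic, grieving, tender, happy) is a restatement of the reported ratios, not a new analysis.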

In response to negatively valenced music, people seem to have experienced relatively more mixed emotions from the melancholic stimuli (negative to positive ratio = 1.86) than from the grieving stimuli (negative to positive ratio = 3.17). In response to positively valenced music, people may have experienced comparatively more mixed emotions in response to the tender stimuli (positive to negative ratio = 5.25) than to the happy stimuli (positive to negative ratio = 15.67). In both cases of positive and negative music, the arousal level of the music seems to have influenced induced emotions—low-arousal music (melancholic and tender) results in more mixed-valence emotions than does high-arousal music (grieving and happy).

Comparing music that exhibits high arousal, it seems that grieving music (negative to positive ratio = 3.17) results in higher amounts of mixed emotion than happy music (positive to negative ratio = 15.67). Examining music that displays low arousal, it seems that melancholic music (negative to positive ratio = 1.86) results in more mixed emotions than tender music (positive to negative ratio = 5.25). The ratio scores therefore show that when listening to music, people tend to experience relatively more positive emotions than negative emotions, in line with previous research (e.g., Zentner et al., 2008).

The results of Study 1 are also consistent with the idea that experiences of positive and negative emotions from music listening can be partially explained through self-reported personality characteristics. There are four components of trait empathy measured in the Interpersonal Reactivity Index (IRI): empathic concern, which is similar to sympathy or compassion; personal distress, which is similar to commiseration; perspective taking, which is similar to taking the point of view of another person; and fantasy, which can be related to being absorbed in one's imagination. In the current study, people who scored higher on empathic concern experienced more positive emotions and fewer negative emotions than those who scored lower on this trait. Listeners who scored higher on personal distress reported feeling relatively more negative emotions than, but the same amount of positive emotions as, those who scored lower on this trait. There was no effect of perspective taking or fantasy on ratings of positive or negative emotions.

In terms of the Big Five personality traits (extraversion, agreeableness, openness, conscientiousness, and neuroticism), people who scored higher on conscientiousness experienced more positive emotions than people who scored lower on this trait, while people who scored higher on extraversion and agreeableness experienced fewer positive emotions than did those who scored lower on these traits. There was no effect of neuroticism or openness on experiences of positive emotion. In ratings of negative emotional experiences, listeners who scored comparatively high on agreeableness experienced more negative emotions than did those who scored lower on this trait. There was no effect of extraversion, conscientiousness, openness, or neuroticism on ratings of experienced negative emotions.

People with higher musical sophistication scores, as defined by the Ollen Musical Sophistication Index, experienced more positive emotions than those with lower musical sophistication scores, but this effect was not present in ratings of negative emotion experiences. Similarly, familiarity with the musical stimuli led to higher ratings of experienced positive emotions, but did not affect ratings of experienced negative emotions. There was little effect of musical preferences or Absorption in Music Scores on ratings of positive or negative experienced emotions.

In summary, Study 1 provides initial support for the idea that melancholic and grieving music give rise to different emotional states. Although participants seem to have experienced different degrees of melancholy and grief while listening to melancholic and grieving musical stimuli, and different degrees of tenderness and happiness when listening to tender and happy stimuli, there was still a considerable overlap of emotional categories across all stimuli. In other words, participants did not only experience melancholy when listening to melancholic music, did not only experience grief when listening to grieving music, and so on. In addition, a limitation of Study 1 is that the list of 24 emotions used is not balanced along dimensions of valence and arousal—the checklist provided four terms that may be classified as negative valence and high arousal, three terms that may be labeled as containing negative valence and low arousal, seven terms that are related to positive valence and low arousal, and seven terms that can be described as exhibiting positive valence and high arousal. Study 2 provides a complementary approach to the methods of Study 1, as it uses open-ended free responses generated by participants, instead of using a checklist of emotions. The aim of Study 2 was to replicate the findings of Study 1 through the use of a different methodology and to explore differences in emotional experiences to melancholic, grieving, happy, and tender musics with more granularity.

Study 2

Hypothesis

The purpose of the second study was to determine whether listeners spontaneously make distinctions between experiences when listening to melancholic and grieving musical passages. Listeners were not provided with a checklist of emotional terms, but rather were asked to freely write about their emotional experiences while listening to music. In Study 2, listeners were not asked about their experiences while listening to happy or tender musical passages, but instead focused only on melancholic and grieving musical passages. The driving hypothesis was the same as in Study 1, namely, that listeners will experience different patterns of emotions in response to melancholic and grieving music.

Stimuli

Two versions of Study 2 were created, each with different musical stimuli (see Table 1). In Version 1, participants listened to the melancholic Fauré stimulus and the grieving Arnold & Price stimulus. In Version 2, participants listened to the melancholic Mozart stimulus and the grieving Marionelli stimulus. The order of the excerpts was randomized across participants.

Experimental Procedure

Study 2 was conducted online using the Qualtrics Research Suite and was between 5 and 10 minutes in duration. The study’s short duration was due to external time limit constraints and limited participant availability. For similar reasons as in Study 1, participants were not told about the hypothesized differences between melancholy and grief, in order to minimize potential bias in favor of the experimenter’s theoretical perspective. Once again, participants were asked to focus on experienced emotion and were given the following instructions: “We want to know what you are

Participants were not allowed to advance to the study questions until they listened to the entire identified musical passage. After the first listening, the musical passage appeared on a new page, along with a series of three questions (detailed below). The three questions prompted listeners to identify up to 30 words or phrases that described their experience while listening to the music. Specifically, participants were given the following three prompts: (1) Please list up to 10 emotion-related words or phrases that you feel while listening to this music. Some examples of emotional words are “ecstatic” and “tranquil.” (2) Please list up to 10 general phrases or metaphors that describe your experience while listening to this music. Some examples of a general phrase are “makes me feel like dancing” and “feels like a summer’s day.” (3) Please list up to 10 associational words or phrases that describe your experience while listening to this music. Some examples of associational words are “pastoral,” “sunny,” “yellow,” and “childhood.”

The intention of using three separate prompts was to promote flexible thinking. The study aim was to compare the descriptions provided by participants in response to melancholic and grieving music.

Participants

A total of 27 participants took part in Study 2. These listeners were primarily first- and second-year music theory students at Ohio State University, of whom 63% (17 participants) were female. The mean age was 24.2 years. Participants had an average of 1.6 years of music theory training (range 0–8 years) and an average of 4.4 years of instrument training (range 0–14 years).

Recall that one of the questions in Study 1 was the following: “What kinds of thoughts went through your head as you listened to this music? It could be images, metaphors, moods, or anything else you can think of.” This data was not analyzed in Study 1, but rather was analyzed along with the data from Study 2. Therefore, responses were collected from 90 participants (63 participants from Study 1 and 27 participants from Study 2).

Results

The 90 participants produced 849 responses (197 responses from the grieving music in Study 1, 195 responses from the melancholic music in Study 1, 241 responses from the grieving music in Study 2, and 216 responses from the melancholic music in Study 2). In order to decipher differences in experiences of melancholy and grief between the two music types, two independent assessors unfamiliar with the study were asked to classify the open-ended responses into three categories: grief-related words or phrases, melancholy-related words or phrases, and other words or phrases. Duplicate words or phrases were removed before the coders viewed the list, resulting in a list of 797 responses. The list of responses was presented in alphabetical order.

In describing the 375 responses to melancholic music, Coder 1 used the term melancholy 19% of the time, grief 17% of the time, and other 64% of the time. Coder 2 described the responses to melancholic music with the term melancholy 25% of the time, grief 9% of the time, and other 67% of the time. In describing the 403 responses to grieving music, Coder 1 used the term grief 18% of the time, melancholy 7% of the time, and other 74% of the time. Coder 2 described the responses to grieving music with the term grief 18% of the time, melancholy 12% of the time, and other 69% of the time. The agreement between the two coders is shown in Table 8 in Appendix B.

To test whether the distribution of participant responses categorized as melancholic and grieving was the same for the two music types, Fisher’s exact test was carried out. Only words or phrases on which both coders agreed were analyzed; that is, if one coder thought a phrase was melancholic and the other coder thought that phrase was grieved, this description was not analyzed. The responses to melancholic music were categorized as melancholic 32 times and as grieving 20 times, while the responses to grieving music were categorized as grieving 47 times and as melancholic 9 times. Fisher’s exact test revealed that there was a difference in participant responses across the two conditions (odds ratio = 0.12, p < 0.01). The results are therefore consistent with the hypothesis that listeners experienced more melancholy from the a priori deemed melancholic music and experienced more grief from the a priori deemed grieving music. The difference between experienced melancholy and grief, first reported in Study 1, was therefore replicated in Study 2. There was, however, also a large number of other responses (201 in response to melancholic music and 245 in response to grieving music), indicating that listeners experienced emotions other than melancholy and grief when listening to these passages.
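For readers who wish to check this comparison, the following is a minimal sketch of a two-sided Fisher's exact test written with the Python standard library only. It is not the authors' analysis code. The cell orientation (rows: melancholic music, grieving music; columns: responses coded as grief, responses coded as melancholy) is an assumption chosen because it reproduces the reported odds ratio of 0.12; the reciprocal orientation would give an odds ratio of about 8.36 with the same p-value. The two-sided rule used here, summing the probabilities of all tables no more likely than the observed one, is one common convention and is also an assumption.

```python
from math import comb

def fisher_exact_two_sided(a, b, c, d):
    """Two-sided Fisher's exact test for the 2x2 table [[a, b], [c, d]].

    Returns (sample odds ratio, p-value).
    """
    row1, row2, col1, n = a + b, c + d, a + c, a + b + c + d

    def prob(k):
        # Hypergeometric probability that the top-left cell equals k,
        # given fixed row and column totals
        return comb(row1, k) * comb(row2, col1 - k) / comb(n, col1)

    lo, hi = max(0, col1 - row2), min(row1, col1)
    p_obs = prob(a)
    # Sum the probabilities of all tables at most as likely as the observed one
    # (the small tolerance guards against floating-point ties)
    p_value = sum(
        prob(k) for k in range(lo, hi + 1) if prob(k) <= p_obs * (1 + 1e-7)
    )
    odds = (a * d) / (b * c) if b * c else float("inf")
    return odds, min(p_value, 1.0)

# Melancholic music: 20 grief-coded, 32 melancholy-coded responses
# Grieving music:    47 grief-coded,  9 melancholy-coded responses
odds, p = fisher_exact_two_sided(20, 32, 47, 9)
print(f"odds ratio = {odds:.2f}, p < 0.01: {p < 0.01}")
```

Under these assumptions, the sketch gives an odds ratio that rounds to 0.12 and a p-value below 0.01, in line with the values reported above.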

In order to learn more about the other emotional experiences that could not be described as melancholic or grieving, the words or phrases generated by participants were also subjected to a content analysis. The purpose of this analysis was to check for any underlying dimensions of the responses that were not summarized by the terms melancholy and grief. Two new assessors were asked to independently sort the 797 unique responses into as many categories as they saw fit. The assessors were unaware of whether each response described a feeling evoked by melancholic or grieving music. The first assessor classified the responses into 21 categories, while the second assessor classified the responses into 36 categories. The assessors then met to finalize the number and names of the categories. The result of the collaboration was 26 categories. After the assessors agreed on the final categories, they worked together to re-classify all descriptions under these 26 categories. Table 9 in Appendix B provides information regarding how many descriptions were classified under each of these categories.

After all of the descriptions were classified under the final 26 categories, the distribution of responses generated when listening to melancholic music versus the distribution of responses generated when listening to grieving music was examined. The percentages of open-ended responses assigned to the 26 categories in response to melancholic and grieving music are shown in Table 3.

Discussion of Study 2

In this second study, participants were asked to describe their experiences while listening to melancholic and grieving music using open-ended responses. The results of Study 2 are consistent with the claim that listeners experience different emotion profiles in response to a priori deemed melancholic and grieving passages. Responses to grieving music were dominated by feelings of Anticipation/Uneasy, Tension/Intensity, Crying/Distraught/Turmoil, Death/Loss, and Epic/Dramatic/Cinematic. Melancholic music resulted in feelings of Sad/Melancholy/Depressed, Reflective/Nostalgic, Rain/Dreary Weather, and Relaxed/Calm. Both melancholic and grieving stimuli resulted in a large number of responses categorized as Darkness/Dark Colors.

In Study 1, in response to grieving music, people reported feeling grieved 46% of the time and melancholic 41% of the time. The open-ended responses to grieving music in Study 2 were categorized as Grief 3% of the time and Sad/Melancholy/Depressed 6% of the time. Similarly, in Study 1, when listening to melancholic music, people reported feeling melancholic 50% of the time and grieved 32% of the time. The free responses to melancholic music in Study 2 were categorized as Sad/Melancholy/Depressed 12% of the time and Grief 2% of the time.

Therefore, when asked to freely describe emotional experiences, participants reported feeling melancholic or grieved less often than when presented with a checklist. In Study 1, participants were able to select as many emotions from the checklist as they wanted, whereas in Study 2, each response was placed into a single emotion-related category. The discrepancy in grieving responses between Studies 1 and 2 could indicate that, in Study 1, participants primarily felt an emotion like tension, but also checked other emotion terms that seemed similar to tension. As tension and grief can both be categorized as negatively valenced and high arousal, the high percentage of grieving emotions in Study 1 could simply be a spurious correlation. In other words, without prompting participants with the term grieved in a checklist, listeners may not tend to experience grief when listening to music.

A recent paper by Huron and Vuoskoski (2020) proposes another reason why descriptions of music-induced emotional experiences may not spontaneously be described as grieving. When engaging with the Arts, people recognize the fictitious aspect of music, drama, and dance—they understand that expressions of tragedy are not real. When listening to nominally grieving music, there are no negative real-life implications—it is “just music.” Rather than describe their emotional experiences as grieved, a term that is not usually associated with music, listeners may instead elect to describe their experiences as tense, crying, and turmoil, which are terms more commonly associated with the Arts. Indeed, descriptions of emotional experiences to grieving music were often categorized into similar terms in Study 2. Future research is needed to explore this idea further.

The results of Study 1 suggested that people tend to experience relatively more positive or mixed emotions in response to melancholic music than to grieving music. The results from Study 2 replicate these findings. Descriptions of emotional responses to melancholic music resulted in more phrases that were classified into positively valenced or mixed valenced categories, like Relaxed/Calm (7% of responses) and Reflective/Nostalgic (9%), than the responses generated from listening to grieving music. Responses to grieving music were dominated by negatively valenced categories and contained relatively low amounts of positive or mixed emotion categories (3% Transcendent, 2% Reflective/Nostalgic, 2% Relaxed/Calm). The results of Studies 1 and 2 suggest that the positive emotions often associated with sad music may, in fact, be driven by listening to melancholic music; grieving music may not lead to many feelings of positive emotions.

Recall that some of the open-ended responses were generated in Study 1 and other responses were generated in Study 2. The 63 participants from Study 1 were mainly from the School of Music and had taken music lessons for an average of 6.5 years. The 27 participants from Study 2 included a greater proportion of participants outside of the School of Music. The Study 2 participants had an average of 4.4 years of instrument training. Moreover, the participants in Study 1 listened to 16 musical stimuli, including nominally melancholic, grieving, happy, and tender musical clips. In Study 2, participants only listened to two musical stimuli, one that was nominally melancholic and one that was nominally grieving. In order to compare whether these differences in study design and participant characteristics affected the experienced emotions to music, we can look at the distribution of responses to melancholic and grieving music in Studies 1 and 2, focusing on the stimuli that were used in both studies.

The melancholic Fauré and Mozart passages were used in Study 1 and Study 2. Looking at the participant responses to these two stimuli, it seems as if the biggest difference in responses across the two studies occurs in phrases that were categorized as Darkness/Dark Colors, Rain/Dreary Weather, Sad/Melancholy/Depressed, and Reflective/Nostalgic. In Study 1, only 1% of the responses were classified as Darkness/Dark Colors and 4% of responses were classified as Rain/Dreary Weather, whereas in Study 2, 14% of the responses were classified as Darkness/Dark Colors and 12% were classified as Rain/Dreary Weather. On the other hand, in Study 1, 22% of responses were classified as Sad/Melancholy/Depressed and 14% of responses were classified as Reflective/Nostalgic. In Study 2, only 9% of responses were classified as Sad/Melancholy/Depressed and 8% of responses were classified as Reflective/Nostalgic. Table 10 in Appendix B shows how responses to melancholic music differed in Studies 1 and 2.

The grieving musical passages composed by Arnold & Price and Marionelli were used in both studies. The differences in responses to grieving music in Studies 1 and 2 are mostly found in the categories of Darkness/Dark Colors, Longing, Crying/Distraught/Turmoil, Epic/Dramatic/Cinematic, and Tension/Intensity. Darkness/Dark Colors represented 3% of the responses in Study 1 and 12% of the responses in Study 2. Responses to grieving music were never categorized as Longing in Study 1, but were categorized as Longing 5% of the time in Study 2. In Study 1, the Crying/Distraught/Turmoil category represented 10% of the responses, the Epic/Dramatic/Cinematic category represented 11% of the responses, and the Tension/Intensity category represented 13% of the responses. On the other hand, in Study 2, the Crying/Distraught/Turmoil category represented 5% of the responses, the Epic/Dramatic/Cinematic category represented 4% of the responses, and the Tension/Intensity category represented 4% of the responses. Table 10 in Appendix B shows how responses to grieving music differed in Studies 1 and 2.

It is possible that the inclusion or exclusion of happy and tender stimuli biased the responses to melancholic and grieving music in Study 1 versus Study 2. Another possibility for the differences in responses across the two studies is the wording of the questions: the open-ended responses in Study 1 were prompted by the question, “What kinds of thoughts went through your head as you listened to this music? It could be images, metaphors, moods, or anything else you can think of,” whereas the open-ended responses in Study 2 were prompted by the questions, “Please list up to 10 emotion-related words or phrases, general phrases or metaphors, associational words or phrases that you feel while listening to this music.” Future research is needed in order to assess the relative effects of participant characteristics, inclusion or exclusion of both negatively and positively valenced stimuli, and question wording on induced emotions from music listening.

General Discussion

As mentioned in the Introduction, researchers have made use of at least 2,000 musical passages that they have labeled as sad (Warrenburg, 2020c). This collection of sad music includes seemingly disparate musical selections, such as Barber’s Adagio for Strings, Tori Amos’s Icicle, and Miles Davis’s Summer Night. Inspired by the wide range of sad musical samples in the previously-used musical stimuli (PUMS) database (Warrenburg, in press), the current study explored the types of emotions listeners experience when they listen to nominally sad music.

The theory driving the current paper is that there are at least two kinds of music-related sadness—melancholy and grief—that are consistent with the distinction between the two terms in the psychological literature. The results of both Studies 1 and 2 are consistent with the idea that listeners experience different kinds of emotions when listening to melancholic and grieving musical passages.

The current study is part of a series of studies on the musical structure, emotion perception, and emotional experiences of melancholic and grieving music, all of which utilized the same musical stimuli. To select these musical examples, first, musicians of three levels of training (untrained participants, participants trained with facial expressions of melancholy and grief, and experts in emotional theory of psychology and music) were asked to provide suggestions of melancholic and grieving musical passages (Warrenburg, 2020b). A total of 278 participant-selected works were collected, which were then combined with 650 sad-related works from the PUMS database (Warrenburg, 2020c; in press), for a total of 928 candidate works. After a screening and rating process, a subset of 10 of these melancholic and grieving passages was selected to be explored in detail. Highly skilled musicians rated these musical selections on 18 structural parameters (Warrenburg, 2020b). The results of the analysis were consistent with the idea that music previously categorized as grieving tends to be louder and higher in pitch, to contain more wide pitch intervals, gliding pitches, and harsh timbres, and to contain fewer legato and staccato passages. Compared with grieving music, then, melancholic music tends to be quieter and lower in pitch, and to contain comparatively narrow pitch intervals and comparatively more legato passages.

Next, a combination of four behavioral studies asked listeners which emotions they perceived (Warrenburg, 2019) and experienced (the current paper) from these melancholic and grieving musical passages. The emotions people perceive in melancholic and grieving stimuli were examined using two methodological designs: a five-alternative forced-choice paradigm and a selection of emotion(s) from a list provided by the experimenter. The emphasis of the current paper was on exploring how listeners felt in response to melancholic and grieving stimuli. Again, two correlational studies were conducted; the first design employed an experimenter-provided list of 24 emotional terms, from which listeners could select emotion(s), while the second design asked participants to spontaneously describe their experiences while listening to music.

Combining the results of these studies, it appears that melancholic and grieving musical passages exhibit different structural characteristics (Warrenburg, 2020b), convey different emotions to listeners (Warrenburg, 2019), and result in distinctive emotional experiences (the current study). The latter two of these findings were each replicated with separate methodological designs.

Ultimately, the goal of this series of studies was to show that we cannot understand music’s influence on emotions by simply studying a few emotional states, such as happiness and sadness; instead, we may need to make subtle distinctions previously unrecognized in the music field. While there are consistent differences cited between tender music (which exhibits low arousal) and happy music (which exhibits high arousal), this arousal-based difference has not been recognized with regard to music-related sadness. The results of the current work on sad music suggest that there are also differences between melancholic music (which exhibits low arousal) and grieving music (which exhibits high arousal). Other research suggests that music previously classified as fear can be similarly broken down into categories of panic and anxiety (Trevor et al., 2020).

Underlying Theoretical Perspectives

There are several theoretical perspectives that could explain why melancholic music can be differentiated from grieving music. The current study does not provide evidence in favor of any of the following theoretical perspectives—the conclusions of the study are limited to the fact that people seem to experience different types of emotions from melancholic and grieving music.

One possible reason for the separation of emotional experiences in response to melancholic and grieving music can be related to the proposed theoretical foundations for melancholy and grief. The categories that best captured open-ended responses to grieving music, for example, can be related to grief’s purported psychological function. Experiences of grief often occur after a person suffers a significant loss, such as the death of a loved one, the end of a close personal relationship, or the loss of trust, safety, autonomy, or an identity (Archer, 1999; Epstein, 2019). Feelings of grief tend to be negatively valenced and are often associated with high physiological arousal (Andrews & Thomson, 2009; Darwin, 1872; Huron, 2016; Urban, 1988; Vingerhoets & Cornelius, 2012). Behaviors such as crying and wailing are often associated with experiences of grief (Frick, 1985; Gelstein et al., 2011; Mazo, 1994; Rosenblatt et al., 1976; Urban, 1988; Vingerhoets & Cornelius, 2012). In the current study, when participants listened to grieving music, they described their emotions as Crying/Distraught/Turmoil and Death/Loss, which are related to experiences of grief in nonaesthetic contexts. The reported feelings of Tension/Intensity and Anticipation/Uneasy when listening to music are also similar to nonaesthetic experiences of grief, as these emotions tend to be described as negatively valenced with high associated physiological arousal.

The categories that best described emotional responses to melancholic music included terms that could similarly be related to the purported function of melancholy. Psychological researchers like Paul Ekman and music researchers like David Huron have speculated that melancholy is an emotion that is usually experienced when a person needs to self-reflect about a failed goal (Ekman, 1992; Huron, 2015). This reflection tends to be a solitary activity that occurs when a person is alone. Melancholic individuals tend to have negatively valenced feelings that are accompanied by low physiological arousal (Andrews & Thomson, 2009; Darwin, 1872; Huron, 2016; Vingerhoets & Cornelius, 2012). A melancholic person does not usually exhibit any observable behavioral displays; rather, the behaviors we tend to associate with melancholy (muteness, relaxed facial expressions) are simply effects of low physiological arousal (Andrews & Thomson, 2009; Nesse, 1991). Some researchers have noted that expressions of relaxation, boredom, sleepiness, and melancholy are so similar that it is nearly impossible to differentiate these states in another person without directly asking them to label their emotion (Andrews & Thomson, 2009; Nesse, 1991). When participants listened to melancholic music, the descriptions of their experiences included terms like Sad/Melancholy/Depressed and Reflective/Nostalgic, both of which can be related to psychological experiences of melancholy. The use of words like Rain/Dreary Weather may have been a way to verbalize negatively valenced experiences with low physiological arousal. The results of both studies are consistent with the idea that listening to melancholic music results in higher amounts of mixed-valenced experiences than does listening to grieving, happy, or tender music. For example, when listening to melancholic music, participants often reported feeling Relaxed/Calm.

A second theory could also explain why people may experience different patterns of emotions in response to melancholic and grieving music. Work on emotional contagion suggests that people sometimes experience an emotion that is being expressed by another human being. Emotional contagion is also thought to be possible in response to non-human expressions, including music (Juslin, 2013). That is, an induced emotion could arise when a person hears musical features that emulate emotional features found in human vocal expressions (Juslin & Laukka, 2003). As melancholic music may mirror melancholic speech and grieving music may mirror grieving speech (Warrenburg, 2020b), it could be that when listening to melancholic and grieving music, listeners experience melancholic or grieving feelings through the mechanism of emotional contagion, in line with how people respond to other vocal homologues (Juslin, 2013). Since melancholic music may also exhibit similar features to tender music (Juslin & Laukka, 2003), this theory could also explain why listeners feel relaxed and calm when listening to melancholic music, as demonstrated in the current study. Similarly, grieving passages, which tend to be faster and more energetic, might increase a listener’s arousal levels and lead to feelings of passion, romance, or transcendence.

In a recent paper on the enjoyment of nominally sad music, David Huron and Jonna Vuoskoski propose a new theory for how sad music can induce emotions in listeners (Huron & Vuoskoski, 2020). In this theory, the Pleasurable Compassion Theory, they distinguish contagious emotions from repercussive emotions. When a listener feels an emotion similar in nature to the emotion being expressed in the music, the listener is experiencing a contagious emotion. In other words, if you listen to a piece of music that is representing grief—and you also feel grieved when listening to the music—you have “caught” the contagious grieving emotion from the music. When a listener feels an emotion that is different from the emotion expressed by the music, the listener is experiencing a repercussive emotion. If you listen to a grieving piece of music and it makes you feel compassionate, moved, or transcendent, your positively valenced experiences are repercussions of listening to the grieving music.

Most of the responses describing emotional experiences from listening to melancholic and grieving music resulted in what may be classified as contagious emotions. Although listeners experienced a wide range of emotions in response to both kinds of music, grieving music tended to lead to experiences of grief and other negatively valenced affective states with high arousal, whereas melancholic music tended to lead to experiences of melancholy and other negatively valenced affective states with low arousal. These findings could mean that the evoked emotions were induced by an associative process, such as emotional contagion.

Central to Huron and Vuoskoski’s theory, however, are the personality traits of the listeners, such as trait empathy. Citing previous studies, the authors note that those who score high on the empathic concern and fantasy components of empathy tend to most enjoy listening to sad music, which suggests that listeners with these traits may experience more positively valenced emotions than their peers who score lower on these traits (Eerola et al., 2016; Kawakami & Katahira, 2015; Sattmann & Parncutt, 2018; Vuoskoski & Eerola, 2017; Vuoskoski et al., 2012). Furthermore, Sattmann and Parncutt (2018) have shown that the differences in experienced emotion when listening to music that were thought to be due to openness to experience can be entirely explained by differences in trait empathy; the apparent effect of openness to experience was spurious, arising from the correlation between trait empathy and openness.

Huron and Vuoskoski’s Pleasurable Compassion Theory predicts that listeners with high levels of the personal distress component of empathy (similar to commiseration) will experience more negative emotions in response to sad music. In line with this theory, in the current study, people who scored higher on personal distress experienced more negative emotions, but not more positive emotions, in response to the musical stimuli than those who scored lower on this trait. The Pleasurable Compassion Theory also states that those with high levels of the empathic concern (similar to sympathy or compassion) and fantasy (similar to absorption in your imagination) components of empathy may experience more positive emotions in response to sad music. The current study is also consistent with the idea that people who score higher on empathic concern experience more positive emotions and fewer negative emotions than those who score lower on empathic concern. There was no effect of the fantasy component of empathy or openness to experience on positive or negative emotional experiences from music listening. The two studies presented here provide evidence consistent with the Pleasurable Compassion Theory: people who differ in empathy experience different emotions in response to music listening. An important caveat to these findings is that the two studies in the current paper asked listeners to respond to both negatively and positively valenced musical samples. In order to support the Pleasurable Compassion Theory, future work will need to replicate these findings with participants who only listened to negatively valenced music.

Finally, it is likely that melancholy and grief simply represent two kinds of sad emotional states out of, say, tens or hundreds of possible sad states. This idea would align with the emotional theory of psychological construction (Céspedes Guevara & Eerola, 2018; Warrenburg, 2020a). In other words, one could imagine a sadness spectrum that represents a continuum of emotional states, all of which have been previously combined under the label sadness. There has been little empirical work examining how to map various subjective feelings, behavioral characteristics, and physiological manifestations onto a continuous sadness spectrum, even in the psychological literature. In fact, the construction of such a spectrum may be impossible, as some theorists speculate that each instance of sadness may be incomparable (Barrett, 2017). It is not known how many types of sadness music can evoke or portray. It may be that music represents and elicits only a few distinct kinds of sadness, corresponding, say, to melancholy and grief, or to a total of five or ten specific sad categories. However, it is more probable that a collection of musical passages can be mapped onto an entire sadness continuum with an infinite number of categories. In order to test this theory, researchers would likely need to include methods like machine learning, statistical modeling, or other big data techniques, along the lines of the work of Alan Cowen and colleagues, who have created an interactive map showing how listeners’ responses to music can be represented as multi-dimensional continuous gradients (Cowen et al., 2020).

Emotional Granularity

One implication of the two studies presented here is that, in order to better understand emotional responses to music, it may be beneficial to change the way we use emotional terminology. The psychological phenomenon called emotional granularity can aid in this process (Barrett, 2004, 2017). Emotional granularity is a term most often used in psychological construction theories of emotion and refers to the degree of precision a person uses to describe their emotional states. Importantly, emotional granularity is both a trait personality characteristic and a skill that can be learned. As people’s emotional granularity increases, we may find that listeners differentially perceive and experience panic and anxiety or happiness and tenderness the same way that they differentially perceive and experience melancholy and grief.

The reliance of music and emotion studies on a small set of emotion words is likely due to the influence of basic emotions theory, with its focus on a small number of biologically grounded emotions (Warrenburg, 2020a). Using more emotionally granular terms can benefit research operating from multiple theoretical perspectives, including basic emotions, appraisal, and psychological construction theories of emotion. Whether musically expressed emotions are perceived as categorical or continuous, it can be helpful to make use of more precise terms in labeling emotions in the music domain.

The idea that nominally sad music leads to a variety of emotional experiences, including both negative and positive emotions, is well known. In addition to the effects of dispositional characteristics, personality traits, and situational differences, the current study adds a complementary perspective as to why there may be such a wide array of individual differences in response to sad music: people may respond differently to various subtypes of sad music, including melancholic and grieving music. As one example, the current studies suggest that the positive emotions often associated with sad music may be evoked primarily when listening to melancholic—but not grieving—music.

If melancholic and grieving musical passages have been conflated under a single term, like sadness, it could partially explain why studies report different effects of sad music on listeners’ emotions. In the future, a training program focused on emotional granularity could be offered to researchers and participants, so that both groups can acquire a reasonable degree of precision when it comes to describing emotional experiences in response to music. Emotional granularity provides researchers with the ability to re-define broad categories of emotions, such as sadness. Its incorporation in studies of music and emotion can result in future researchers finding additional subtle shades of emotion previously unrecognized in the literature.

Limitations

One limitation of the current study is that the distinction between melancholic and grieving musical passages could be interpreted as supporting either of two major emotional theories: basic emotions or psychological construction. In other words, melancholy and grief could represent two universal, distinct emotions with different phylogenetic origins (as suggested by basic emotions theorists) or two separate points on a possible continuum of sad emotions (as suggested by psychological constructionists). In order to compare these two theories empirically, one would need to use many more musical samples, examine examples of music that contain features of both melancholy and grief, utilize machine learning techniques, and require participants to listen to the same musical passages in different contexts.

A second limitation of the current paper is the focus on nominally sad music. Although the narrow focus on sad affective states represents an important first step in the investigation of more granular emotions, the ability to generalize beyond music-related sadness is hindered. An important avenue for future research is to investigate whether the findings presented here generalize to other emotion families, such as anger or fear.