Abstract

The fields of music, health, and technology have seen significant interactions in recent years in developing music technology for health care and well-being. In an effort to strengthen the collaboration between the involved disciplines, the workshop “Music, Computing, and Health” brought together researchers from music psychology and neuroscience, music therapy, music information retrieval, music technology, medical technology (medtech), and robotics to discuss best practices and the state of the art at the intersection of these areas. Following the discussions at the workshop, this article provides an overview of the methods of the involved disciplines and their potential contributions to developing music technology for health and well-being. Furthermore, the article summarizes the state of the art in music technology that can be applied in various health scenarios and provides a perspective on challenges and opportunities for developing music technology that (1) supports person-centered care and evidence-based treatments, and (2) contributes to developing standardized, large-scale research on music-based interventions in an interdisciplinary manner. The article provides a resource for those seeking to engage in interdisciplinary research using music-based computational methods to develop technology for health care, and aims to inspire future research directions by evaluating the state of the art with respect to the challenges facing each field.

Keywords

1. Introduction

Music is increasingly thought of as beneficial for health; however, the scientific research supporting this claim is not yet entirely robust. Furthermore, our society is calling for more standardized, cost-effective methods in medicine, leading to a surge of interest in e-health and computer-based interventions. Although music lends itself exquisitely to technological applications, and great strides are being made in the computational analysis of music that may facilitate an enormous range of computerized (but personalized) interventions, relatively little research has focused specifically on the potential of music technologies for health applications. Meanwhile, there is a shift to person-centered care, prevention, and emphasizing resources, strengths, and self-management of patients. Therapies using music and arts-based interventions are characterized by personalized methods focusing on the strengths and capabilities of patients, having the potential to motivate patients, change behavior, stimulate adaptability, and reduce symptoms. Such interventions are therefore expected to play a key role in the future of health and well-being.

In order to explore how state-of-the-art technology, machine learning, and computing may be used to develop novel, music-based applications for health care, the Lorentz workshop “Music, Computing, and Health” was convened in March 2019, bringing together researchers interested in the applications of music technology for health care. The workshop was attended by participants from the fields of music information retrieval (MIR), music psychology and neuroscience, and music therapy, along with neighboring areas such as robotics and human-computer interaction (HCI). These different fields use the term “music technology” with different nuances. For instance, in computer science, music technology is seen as “a technical discipline, analogous to computer graphics, that encompasses many aspects of the computer’s use in applications related to music” (Keislar, 2011). In music therapy, it is “the activation, playing, creation, amplification, and/or transcription of music through electronic and/or digital means” (Hahna et al., 2012, p. 456). In the context of this interdisciplinary workshop, and the current roadmap article that resulted from it, we use music technology as an umbrella term for software and hardware devices supporting digital means to analyze, process, generate, perform, edit, and interact with music, while we specifically focus on how these digital means support the many aspects of using music in therapeutic interventions. This encompasses, for instance, supporting the creation, playing, and recording of music; enabling feedback mechanisms through the use of sound and music; employing musical interfaces for musical expression and creation; and analyzing musical data produced within music therapy sessions. We focus on MIR as a research field for developing computational methods that enable novel music technology to be employed in the music therapy context.

The three main contributions of this article are, first, to discuss how these fields may interact in order to develop music technology for health care settings; second, to describe the state of the art in using music technology in music therapy; and, finally, to provide suggestions regarding possible future directions for interdisciplinary research in the use of music technology for health and well-being. The remainder of the Introduction introduces the involved disciplines (Section 1.1), discusses important aspects regarding their interaction for developing music technology (Section 1.2), and summarizes affordances of music that underpin the various therapeutic applications of music technology in health (Section 1.3). Section 2 provides a detailed overview of different use cases of music technology in health settings, and Section 3 covers general considerations and the road(map) ahead.

1.1. Introduction to the Different Disciplines

Music therapy, MIR, and music psychology and neuroscience are distinct fields that each afford valuable interdisciplinary research relevant to applications for health care and well-being. Specifically, MIR focuses on the development of efficient and effective methods and technology for extracting useful information from music data, while music psychology and neuroscience focus on advancing our understanding of the cognitive, perceptual and neural processes that underpin the perception, creation, and appreciation of music. Understanding these processes may play an important role in explaining the effect of music interventions used in music therapy with clinical populations. Before describing potential interdisciplinary collaborations between these fields for developing music technology for health care and well-being, we first briefly describe the constituent fields, emphasizing their distinct, but complementary, perspectives and approaches.

1.1.1. Music Therapy

Music therapy is the clinical and evidence-informed use of music interventions to accomplish individualized goals within a therapeutic relationship (American Music Therapy Association, 2014). Music therapy is applied in a wide spectrum of inpatient, outpatient, and community health care contexts, and in education. Interventions are conducted by professionals who are trained and licensed as music therapists. Interventions involve a therapeutic process developed between the patient (or client) and therapist through the use of personally tailored music experiences (Bradt & Dileo, 2014; De Witte et al., 2019). These experiences are accomplished through shared music listening or shared music playing, using methods such as composition, improvisation, or songwriting (Leubner & Hinterberger, 2017). Music-therapeutic interventions can be subdivided into the two broad categories of

Music therapists work with people across the entire lifespan, from neonates to the elderly, typically as part of an interdisciplinary team. The populations served include people with developmental and neuroatypical disorders, as well as medical, behavioral health, palliative, forensic, and other at-risk populations in crisis or trauma. There has been a recent trend in music therapy to incorporate music technology, which can be used across all of the music-therapeutic methods to facilitate patient/therapist interactions, to support data collection/analysis of these interactions, to create new opportunities for treatment, and more (Magee, 2014c, 2018). There is a rich body of research supporting the efficacy of music therapy across a range of medical, psychiatric, and subclinical populations, with theory drawing from medicine, neuroscience, psychology, and music (e.g., Aalbers et al., 2017; Bradt et al., 2016; Magee et al., 2017). In this article we speak of music

1.1.2. Music Information Retrieval

Computational approaches to the study of music emerged in the 1950s, and the broad range of methods in developing music technology today is reflected by the numerous research communities at the intersection of music and computer science, most notably MIR, computational and digital musicology, sound and music computing (SMC), and new interfaces for musical expression (NIME). Although there is significant overlap between these areas, we explicitly discuss MIR here.

MIR draws from research disciplines such as signal processing, information retrieval, machine learning, HCI, digital libraries, musicology, music cognition, and psychoacoustics, focusing on a wide range of computer-based music analysis, processing, and retrieval topics. The computational analysis of musical structures within and across musical pieces has formed a major research focus in MIR. This analysis takes into account both digitized symbolic representations, which include any kind of score representation with an explicit encoding of musical events (such as notes), and digitized audio representations (digital representations of sound waves). Developing methods to rigorously evaluate computational analyses, concepts, models, interfaces, and algorithms in MIR constitutes a second major effort in this field. Both the computational analysis of musical structures and the strategies for evaluating the outcomes of computational models in specific contexts can serve as promising starting points for applying computational methods to music in a range of health and well-being scenarios.

Computational music analysis (e.g., Anagnostopoulou & Buteau, 2010; Meredith, 2016) aims at extracting and describing musical structures such as melodies (Van Kranenburg et al., 2013), motives, patterns and segments (Conklin, 2010; Janssen et al., 2017; Lartillot, 2005; Meredith et al., 2002), chords, harmonies, and tonality (Chew, 2014), or rhythms and meter (Volk, 2008), employing and extending techniques originating in disciplines such as linguistics, information theory, geometry, algebra, and machine learning. The automatic extraction of musical structures from audio signals (Müller, 2015) complements methods in the symbolic domain. An audio waveform provides a detailed encoding of a specific performance of a piece, including any temporal, dynamic, and tonal micro-deviations present. Besides techniques from digital signal processing, an increasing number of MIR approaches employ machine learning techniques in both the symbolic and audio domain, such as deep learning (see Müller et al., 2019, for an overview of audio-related MIR).
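To make the symbolic side of this concrete, the following is a minimal sketch, assuming a melody is encoded as a list of note events (MIDI pitch number, onset, and duration in beats). It converts the melody into successive pitch intervals, a representation that is invariant under transposition, and counts interval n-grams that occur more than once. This naive repetition count is only an illustrative stand-in for the far more sophisticated pattern-discovery methods cited above; the names `Note`, `intervals`, and `repeated_patterns` are hypothetical and not taken from any of those works.

```python
from collections import Counter
from typing import List, NamedTuple, Tuple


class Note(NamedTuple):
    """Hypothetical symbolic note event: MIDI pitch, onset and duration in beats."""
    pitch: int
    onset: float
    duration: float


def intervals(melody: List[Note]) -> List[int]:
    """Successive pitch intervals in semitones (unchanged by transposition)."""
    return [b.pitch - a.pitch for a, b in zip(melody, melody[1:])]


def repeated_patterns(melody: List[Note], n: int = 3) -> List[Tuple[Tuple[int, ...], int]]:
    """Return interval n-grams that occur more than once, with their counts.

    A naive illustration of motif repetition, not a real pattern-discovery algorithm.
    """
    ivs = intervals(melody)
    grams = Counter(tuple(ivs[i:i + n]) for i in range(len(ivs) - n + 1))
    return [(gram, count) for gram, count in grams.most_common() if count > 1]


# Usage: a four-note figure (C D E C) followed by its transposition (G A B G).
pitches = [60, 62, 64, 60, 67, 69, 71, 67]
melody = [Note(p, float(i), 1.0) for i, p in enumerate(pitches)]
print(repeated_patterns(melody))  # [((2, 2, -4), 2)]
```

Because the pattern is expressed in intervals rather than absolute pitches, the transposed repetition is recognized as the same motif. This is one reason interval-based representations are common in symbolic melody analysis.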

Using computational techniques to allow users to interact with music in different contexts, such as those for music recommendation, listening, and creation (Knees et al., 2019), constitutes another important research focus of MIR, which is crucial for developing music technology in the health and well-being context. Designing interactive music technologies that can support therapeutic interventions ties into research in the field of HCI, and requires additional considerations from the music therapeutic perspective, such as patients’ needs and the therapeutic goals (see Section 2.2).

While the application of MIR technology has expanded beyond the original retrieval and recommendation domains to areas such as education, gaming, and automatic music composition, its application within the health context has been identified as one of the future challenges of MIR (Serra et al., 2013; Future of Music-IR Research Panel at ISMIR 2017).

1.1.3. Music Psychology and Neuroscience

Humans, musicians and non-musicians alike, have a natural tendency to react to music, produce it, and enjoy it. Across groups and cultures, from childhood to older age, people experience music on a daily basis through passive or active listening, playing an instrument, singing, and dancing. Understanding the cognitive and brain mechanisms supporting these widespread abilities, and musicality more generally, is the main goal of music psychology and of the neurosciences of music (Deutsch, 2012; Peretz & Zatorre, 2003; Tan et al., 2018). Knowledge about these mechanisms is acquired using a variety of methods drawn mostly from experimental psychology, cognitive neuroscience, and computational modelling. Musical abilities are examined in well-controlled laboratory experiments using behavioral methods, psychophysiological methods (e.g., electroencephalography (EEG) or electromyography (EMG)), neuroimaging techniques (e.g., functional magnetic resonance imaging (fMRI) or positron emission tomography (PET)), and brain stimulation (e.g., transcranial magnetic stimulation (TMS)). Ultimately, the findings from music psychology and neuroscience contribute to our understanding of the roots of musicality, and show the relevance of both innate (e.g., genetic) and environmental factors in shaping our musical brain (Zatorre, 2013). Example topics of interest in this field are pitch and rhythm perception, emotion and reward evoked by music listening, music performance, and music-specific disorders (e.g., acquired and congenital amusia, cf. Stewart et al., 2009).

Musical training and performance provide an ideal human model for examining the brain effects of multisensory stimulation and learning. These multisensory experiences engage our sensory, motor, and cognitive systems, implicating a wide array of brain structures. The idea that music stimulates plasticity in neuronal networks subserving more general functions (Herholz & Zatorre, 2012; Zatorre, 2013) is particularly relevant when devising targeted music-based health interventions for the purpose of rehabilitation in various neurological diseases, such as stroke, dementia, and Parkinson’s disease (PD) (cf. Särkämö, 2018; Sihvonen et al., 2017). Rather than focusing on therapeutic processes and complex clinical presentations, music-based interventions based on experimental findings in music psychology and neuroscience use validated, and sometimes standardized, procedures and measurements, and highly controlled protocols aimed at identifying the intervention’s underlying mechanisms. Many of these interventions have been evaluated through controlled studies focused on the neuronal networks underlying any beneficial effects (e.g., reward, plasticity of sensorimotor systems, arousal, affect regulation; for a recent review, see Sihvonen et al., 2017). Music has been argued to enhance well-being throughout the life span by activating multiple brain networks, showing high potential for supporting or aiding in the recovery of brain function (e.g., Särkämö et al., 2008), and by providing reward value (Salimpoor et al., 2015). Increasing understanding of the brain networks supporting music perception and performance allows theory-driven music interventions to be tested in clinical settings (Dalla Bella, 2016, 2018).
A more prominent focus on evidence-based procedures also creates a different set of affordances for applying music technologies in health interventions, for instance through gaming approaches that can be used independently of a therapist, or through MIR technologies that automatically select appropriate music for a particular patient.

1.2. Interactions Between the Disciplines for Developing Music Technology for Health Care and Well-Being

Compared with collaborative research

1.2.1. Potential Ways of Working Together and Finding Shared Goals

The combination of these different disciplines for developing music technology for health care and well-being requires bridging several gaps. Reciprocal relationships between the different disciplines may emerge based on their shared goals.

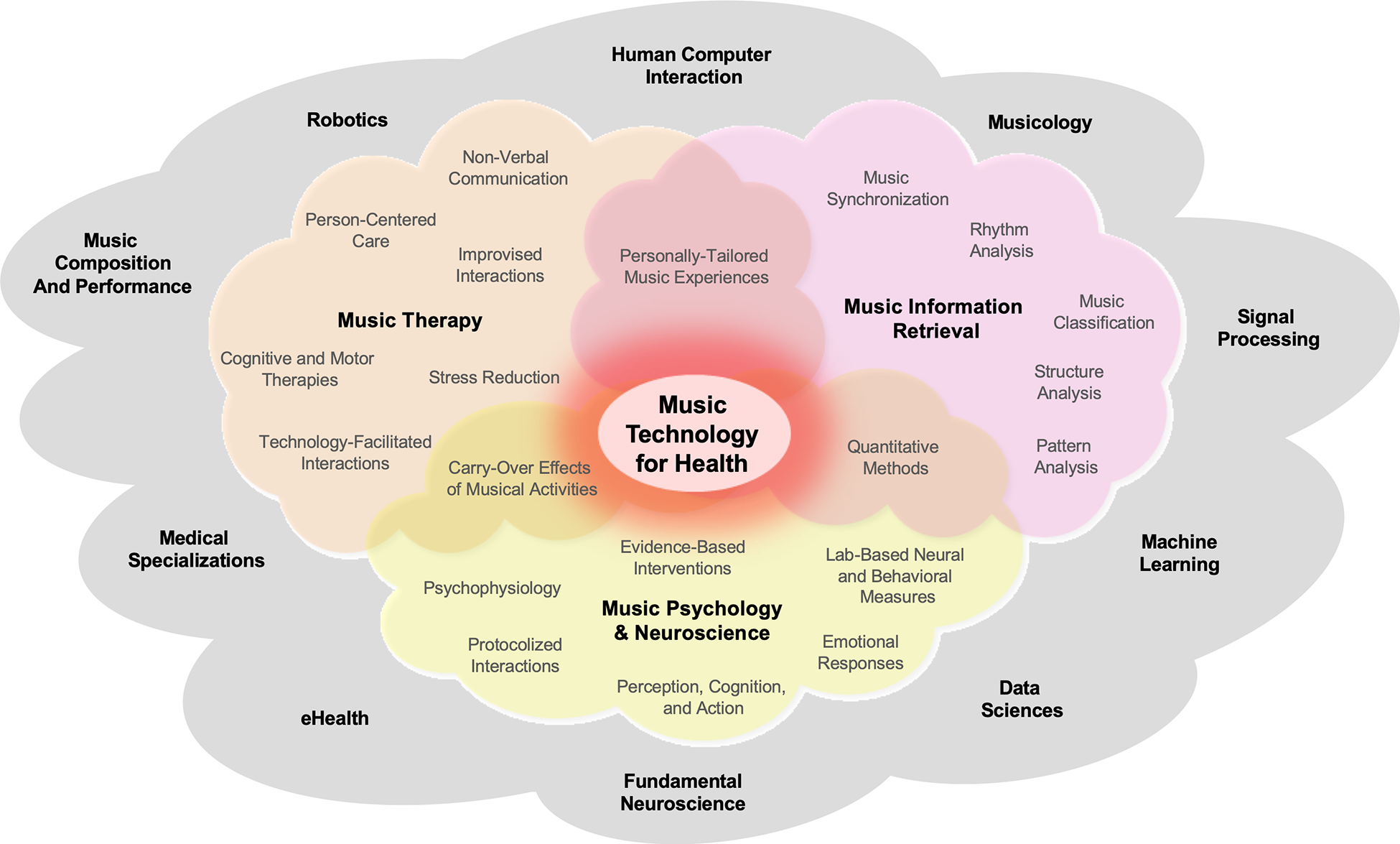

Cross-disciplinary approaches (i.e., when the methods from one domain are used to answer a question from another domain, but not addressing shared questions) may often be well suited for these purposes, and may be highly effective. For successful interdisciplinary collaboration, a shared problem space should be identified (i.e., specific questions that all the disciplines involved consider to be worth pursuing). The obstacles to collaboration between disciplines lie not only in their differences with respect to approach and methodology, but also in the need to establish a shared terminology. Although the overarching aim—creating applications that support patients or general wellness—binds the domains together, substantial effort is still necessary to create research initiatives that satisfy or at least do not reject the various disciplines’ more specific goals. Figure 1 depicts the (non-exhaustive) multidisciplinary space relevant to the current topic.

Figure 1. Multidisciplinary space relevant for developing music technology for health, with notable topics and methods from music therapy, MIR, and music psychology & neuroscience (in color), as highlighted in the current article. These terms are non-exhaustive, but rather display some of the concepts discussed in this article. The colored clouds encompass the concepts and approaches of the fields in bold font, and these fields naturally overlap to some extent. Other potentially relevant fields not discussed in depth here are represented in the larger gray cloud.

1.2.2. Reductionism and Reproducibility Versus the Holistic Approach of Music Therapy

There are significant gaps between the approaches common to the different research areas. The tensions between disciplines with different levels of analysis have been described by Wilson (1977) and arise in part from the use of different methods and approaches to construct knowledge. One notable difference is that music therapy aims to employ a holistic approach in which the therapeutic use of music is central to the goal of stimulating social, linguistic, and affective dimensions. Music therapy not only examines functional outcomes such as measurable behaviors and physiological indicators of health and well-being, but also relies extensively on subjective first-person accounts by patients and subjective second-person accounts by a significant other or the therapist, which often require self-reporting (e.g., of pain, mood, and psychological distress). Music therapy offered by a trained music therapist is characterized by the presence of a therapeutic process and the use of personal music experiences (Bradt & Dileo, 2014; Gold et al., 2011), focusing on how best to benefit the patient. This may lead to a certain amount of skepticism towards experimental research, because experiments in fundamental research studies are seen not as directly or immediately benefiting the involved patients themselves but as potentially benefiting future patients. Conversely, experimental researchers often see the holistic perspective as lacking methodological rigor or the ability to produce evidence comparable to medical standards. Fundamental research into the underlying mechanisms involves developing specific hypotheses, models, and methods to examine music perception, cognition, and behavior, typically in a controlled environment. Hypothesis testing involves reducing the number of variables present in natural (or “ecological”) contexts, in order to produce generalizable findings.
Applied research in this area tends to focus specifically on effectiveness and generalizability (cf. Sihvonen et al., 2017). This approach has the advantage of being theory-driven and based on the collection of quantitative data in clinical populations. Quantitative data are obtained by testing large samples of patients in randomized controlled trials (RCTs), employing standardized outcome measures ranging from behavioral tests to brain measurements (e.g., fMRI, EEG). RCTs usually include a (preferably active) non-music control condition, which allows researchers to pinpoint the benefits selectively associated with music perception or music activities (e.g., Särkämö et al., 2008, 2014; for a review, see Särkämö, 2018).

Reductionism in empirical sciences might be seen as a limitation from a holistic perspective, as it over-simplifies complex phenomena. Although holistic methods may preclude strong conclusions, they can generate specific hypotheses to be tested. Moreover, some studies find that methodological differences (such as not having an active control group) may lead to inflated results (for an example from education, see Sala & Gobet, 2020). Developments in signal processing, machine learning, and big data provide tools to find regularities in complex data, which can be collected in more ecologically valid contexts. Digital technologies, however, are themselves designed and developed with specific targets, and drastically reduce the multimodal complexity of the interaction between humans, and between humans and their environment. At the same time, new digital tools introduce variables with unpredictable outcomes and implications in the domain of health. Further, as smartphones and physiological sensors become ubiquitous, there are increasing opportunities to collect data from natural contexts, contributing to the integration of the above-mentioned disciplines. The need for varying levels of evidence is an ongoing discussion (e.g., Cheever et al., 2018), and collaborations between these fields require strategies to ensure that different levels of inquiry can be addressed while taking into account the limitations of translating findings between them. Ideally, integrating these approaches would mean that reductive, controlled experiments aim for maximal clinical relevance, while clinicians aim to use only methods that are supported by RCTs. The use of music technology may help to bridge this gap by quantifying and analyzing data from naturalistic settings.

1.3. Affordances of Music for Therapeutic Use

Music has certain characteristics and affordances that underpin various therapeutic applications in health care: for example, emotion regulation, motivation, perceptual entrainment and motor coordination, and social interaction. The potential to influence these processes should be considered when developing music technologies.

1.3.1. Emotion Regulation

Music and emotion are intrinsically connected (Meyer, 1956). The ability of music to induce affect and support emotion regulation in listeners has been the subject of extensive empirical investigation (Moore, 2013; Thoma et al., 2012; Van Goethem & Sloboda, 2011), and many approaches have used technology to leverage music to mediate affective states. For example, machine learning models for emotion and tension detection in music have become quite sophisticated (Herremans & Chew, 2016; Phuong et al., 2019; Yang & Chen, 2012), enabling specialized music recommendation (Han et al., 2010), playlist generation (Kabani et al., 2015), and affective automatic music generation (Scirea et al., 2017) that guide the listener to a target emotional state, as well as systems that allow listeners to self-regulate their emotions using neurofeedback (Ehrlich et al., 2019; Ramirez et al., 2015; see Sections 2.3.1 and 2.4.3). Additionally, music is known to be capable of reducing stress (and inducing calming states) in listeners (Chanda & Levitin, 2013; Gillen et al., 2008; Juslin & Västfjäll, 2008; Koelsch, 2015). The ability of music-assisted relaxation techniques to significantly decrease patients’ physiological arousal and improve stress reduction was confirmed in a meta-review by Pelletier (2004), and by more recent meta-analyses of RCTs focusing on the effects of music interventions and music therapy on stress-related outcomes (De Witte et al., 2019, 2020).

1.3.2. Motivation and Adherence

Patients’ compliance and adherence to prescribed therapies (for example, exercise adherence; Forkan et al., 2006; Fritz et al., 2007) is a notoriously difficult issue that can lead to less successful patient outcomes. Motivational benefits may be enhanced through the use of personally preferred music, activating general reward mechanisms (Salimpoor et al., 2011; Zatorre, 2015). A review by Ziv and Lidor (2011) reports that adding music to therapy programs can positively impact patients’ exercise capacity and motivation, improve adherence and overall function in those suffering from neurological diseases, and even lead to improved life satisfaction in elderly individuals. For example, there is evidence that musical feedback systems for exercising (e.g., the Jymmin’ system) provide a sense of musical agency that reduces perceived exertion (Fritz et al., 2013). Even between music therapy sessions, adherence to at-home training exercises might be higher through music-based technologies such as serious games (see Section 2.4.1). Moreover, music therapy may benefit patients with mental health problems who lack motivation for other therapies (Gold et al., 2013). The decreased dropout rate seen for music therapy compared to treatment as usual may also reflect increased motivation (Gold et al., 2013).

1.3.3. Perceptual Entrainment and Motor Coordination

One of the most salient aspects of music is its often-regular temporal structure, leading to the perception of an underlying beat or pulse. This beat allows the coupling of perceptual processes to the rhythmic structure of the music, by what is referred to as perceptual entrainment (cf. Large & Jones, 1999). This process, thought to drive attentional fluctuations (e.g., Nobre & Van Eden, 2018), can be captured with electrophysiological methods such as EEG (Fujioka et al., 2012; Nozaradan et al., 2011). Phase-locking of perceptual processes with this pulse facilitates a variety of entrained periodic movements (or motor entrainment) via auditory-motor coupling, from small finger movements to whole-body involvement seen in walking and dancing (see Section 2.2.2 for the analysis of such movement data). Perceptual entrainment to a musical beat thus generates temporal expectancies which foster the alignment of movements to the anticipated event times. This tight link between musical rhythm and movement is supported by a compelling body of evidence in music cognition and cognitive neuroscience (Grahn & Brett, 2007; Janata et al., 2012; Zatorre et al., 2007). Perceptual entrainment and auditory-motor coupling are core mechanisms underlying a range of responses to music, such as its beneficial effects in rehabilitation of motor disorders (e.g., Dalla Bella et al., 2015; Nombela et al., 2013), which are leveraged in some musical serious games for rehabilitation (see Section 2.4.1) and in technology focusing on rhythmic gait training (Magee et al., 2017).
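How consistently movements are phase-locked to a beat can be quantified with circular statistics. Each movement onset (e.g., a finger tap) is expressed as a phase on the beat cycle, the phases are averaged as unit vectors, and the length R of the resulting mean vector ranges from about 0 (no consistent phase relationship) to 1 (perfectly consistent phase). The following is a minimal sketch, assuming an isochronous beat with a known period and tap onset times in seconds. The function name `tap_phase_locking` and its inputs are hypothetical illustrations and are not methods taken from the studies cited above.

```python
import math
from typing import List, Tuple


def tap_phase_locking(taps: List[float], beat_period: float,
                      first_beat: float = 0.0) -> Tuple[float, float]:
    """Phase-locking of tap onsets (seconds) to an isochronous beat (hypothetical helper).

    Each tap is mapped to a phase on the beat cycle,
    theta = 2*pi * (((t - first_beat) / beat_period) mod 1),
    and the phases are averaged as unit vectors.

    Returns (R, mean_phase): R is the mean resultant vector length in [0, 1];
    mean_phase is in radians, in [-pi, pi], negative when taps tend to
    precede the beat (only meaningful when R is clearly above 0).
    """
    if not taps or beat_period <= 0:
        raise ValueError("need at least one tap and a positive beat period")
    phases = [2 * math.pi * (((t - first_beat) / beat_period) % 1.0) for t in taps]
    c = sum(math.cos(p) for p in phases) / len(phases)
    s = sum(math.sin(p) for p in phases) / len(phases)
    return math.hypot(c, s), math.atan2(s, c)


# Usage: 120 BPM beat (0.5 s period), taps consistently 20 ms early.
period = 0.5
r, mean_phase = tap_phase_locking([0.48, 0.98, 1.48, 1.98], beat_period=period)
asynchrony = mean_phase / (2 * math.pi) * period  # mean offset in seconds
print(f"R = {r:.3f}, mean asynchrony = {asynchrony * 1000:.1f} ms")
# prints: R = 1.000, mean asynchrony = -20.0 ms
```

The assumption of a fixed tempo keeps the sketch short. Music with tempo changes would instead require beat times from annotations or a beat tracker, with each tap's phase computed relative to the surrounding inter-beat interval.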

In a therapeutic context, entrainment is also used as a means towards attunement between patient and therapist, allowing them to engage in a more connected process, such as through interpersonal synchronization during improvisation therapy, as described in Section 2.2.3. Sharing a common rhythm is thought to be highly rewarding in various social contexts (McNeil, 1995); it provides a sense of togetherness or intimacy (Himberg et al., 2018; Noy et al., 2015) and has been proposed to play a major role in bonding (Tarr et al., 2014).

1.3.4. Social Interaction and Group Therapy

Although music-making can be highly individual, it is predominantly a social activity, triggering social effects and behaviors. Music arguably emerged from social interaction, has been proposed to be a communicative action (Cross, 2014), and plays a role in bonding (Schulkin & Raglan, 2014; Tarr et al., 2014). Music is an essential developmental tool in learning to relate to others and to express one’s internal emotional state (Geretsegger et al., 2015; Koelsch, 2015; Magee & Bowen, 2008), and we engage in music-making through rituals with others across the lifespan. Listening to music in the presence of others may strengthen the stress-reducing effect of the music intervention, which is believed to be caused by increased emotional well-being (Juslin et al., 2008), and increased feelings of social cohesion (Boer & Abubakar, 2014; Linnemann et al., 2016; Pearce et al., 2015). The aforementioned aspect of rhythmic entrainment, allowing people to synchronize their movements with each other, can also evoke positive feelings of togetherness and bonding, and decrease stress levels (Tarr et al., 2014). Virtual partners have been used to study social interaction in the visual (Dumas et al., 2014) and musical (Fairhurst et al., 2013, 2019) domains, and have provided insights into the ideal degree of self-other merging for successful performance (Fairhurst et al., 2019). These neuroscientific studies suggest potential technological applications for the therapeutic domain, such as aiming to increase feelings of cooperation and merging with others. Music technologies may create opportunities to engage in virtual music therapy for groups of individuals who cannot, for example, be in the same physical location, or who would not normally be able to participate in musical activities due to motor constraints (e.g., through new, enabling interfaces as discussed in Section 2.4.2).

2. Various Use Cases of Music Technology in Health Settings

2.1. Overview of Health Settings and the Purpose of Clinical Treatments

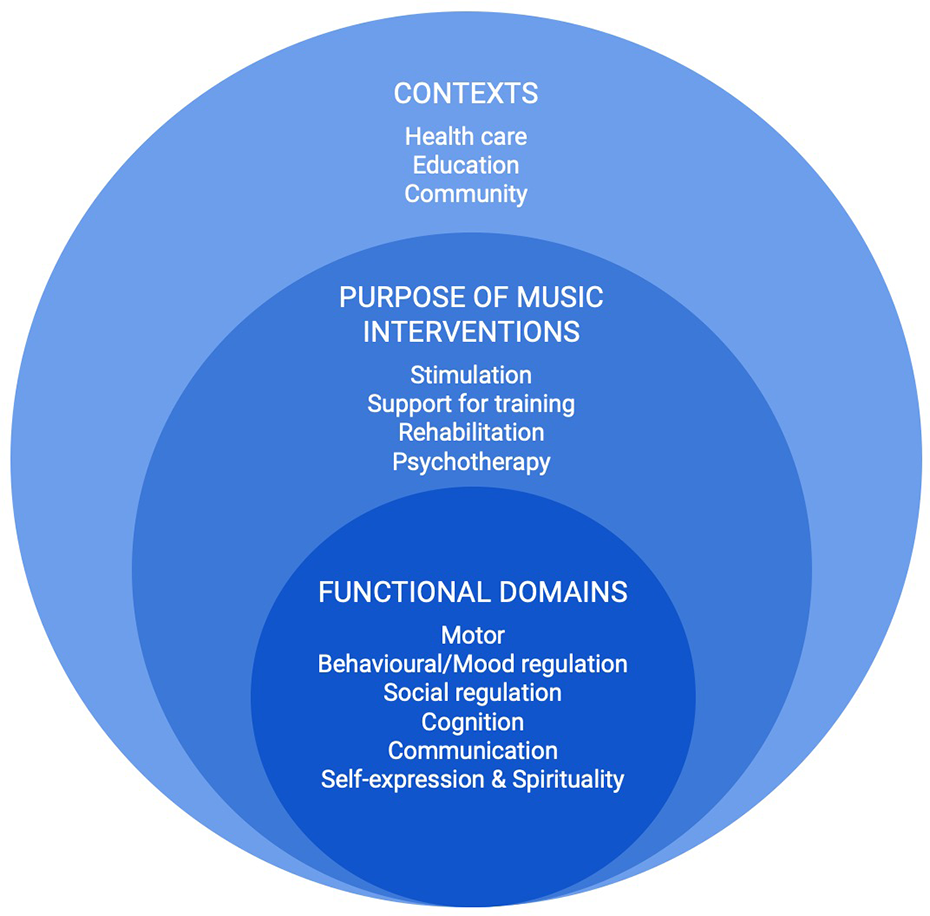

This section provides a short overview of the different settings and therapeutically oriented experiences in which music technology can be employed. This overview in turn structures the discussion of the different scenarios for developing music technology for health care and well-being in the remainder of Section 2, beginning with Section 2.2 on Data Analysis. Music interventions and music therapy are applied in health care, education, and community settings to address clinical goals in functional domains (Wheeler, 2015) (see Figure 2). Music therapy is offered only by a trained and qualified music therapist, whereas music interventions are broader and are offered by both music therapists and other professionals. Music interventions for health and well-being may have several distinctly different functions. Depending on the problem or symptom that needs addressing (e.g., pain management, communication disorders, motor function), as well as what is possible for a specific group of patients or users, music-based activities may serve the function of (a) stimulation, (b) supporting functional training, or (c) being part of a broader rehabilitation program (also depicted in Figure 2). This categorization is not entirely comprehensive. Stimulation can be thought of as an activity that may not necessarily have a specific clinical goal and is often aimed at general enhancement of well-being or cognitive function through sensory stimulation, physical activity, or cognitive and social activities to address understimulation (Croom, 2015). Functional training relates to guided practice on a set of standard tasks, for an individual or in a group, intended to increase functioning in a specific area (Ripollés et al., 2016). Finally, in rehabilitation models, individually tailored goals are identified and a personal plan is made to attain these goals, with an emphasis on reducing symptoms, promoting psychological development, and increasing everyday quality of life (Aalbers et al., 2017; De Witte et al., 2019).
Notable functional domains where music can be used to address specific goals include motor activity or movement, cognition, communication, social skills, emotion and sensory regulation (including pain), as well as creative expression and spirituality.

Figure 2. A visualization of the contexts, purposes, and domains in which music technology may be applied to support health and well-being. Clinical and non-clinical contexts are the broadest, most general level to consider, followed by the purpose of the music intervention, and at the most specific level are the functional domains addressed in clinical treatments. Treatments (including the appropriate choice of technology for health) are determined by the functional domain that needs to be addressed, and every treatment has a specific purpose and takes place within a broader context of health care or wellness. Taken together, the context, purpose, and functional domain help to determine the type(s) of therapy required.

The care setting and the patient’s needs will direct the type of clinical activity: not everyone needs rehabilitation, which is indicated mainly for people with active health problems; some may need only generalized training, and others only stimulation to maintain their current level of functioning. Musical activities can be varied to accommodate the level of need across each of these functional domains, and music technology can arguably be incorporated into all of these scenarios as well.

In the following sections, we discuss various scenarios and use cases showing how technology is currently used to enhance music therapy, or how music technologies are otherwise used for health and well-being: by applying computational data analysis methods for therapeutic goals, by providing support technologies for therapy sessions with the therapist, by providing support technologies for at-home training in between therapy sessions, and by supporting health and well-being outside the context of music therapy. While the technologies developed do not necessarily apply to only one clinical setting (i.e., during therapy vs. in between sessions or independently), we focus primarily on the practical ways in which they are used.

2.2. Data Analysis

Technology can assist in music (therapy) research and practice by measuring patient behaviors during interventions, as well as by providing measurable outcomes and diagnostics. In this section we discuss two types of signals relevant to music therapy whose collection and analysis technology can assist with, namely biomarkers (Section 2.2.1) and kinematic data (Section 2.2.2), as well as methods for identifying meaningful moments in the therapeutic process in support of therapists’ own awareness and record-keeping (Section 2.2.3), and for analyzing and visualizing musical structures created by a patient or patient–therapist dyad (Section 2.2.4).

2.2.1. Biomarkers

In recent years, various

2.2.2. Kinematic Analyses

Relevant outcome measures in music-based interventions often come from kinematic analyses of behavior. Data recorded via motion-capture technologies (optical sensors, inertial measurement units (IMUs), accelerometers, and so forth; see Section 2.3.1) allow the extraction of spatial and temporal motion features such as position, velocity, acceleration, movement smoothness, and joint angles. These measures play a critical role in assessing the efficacy of music-based treatments for upper- and lower-extremity movements in patients with movement disorders resulting from, for example, stroke (Rodriguez-Fornells et al., 2012; Scholz et al., 2016) or neurodegenerative diseases such as PD (Ghai et al., 2018; Spaulding et al., 2013; Thaut et al., 1996).
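As a concrete illustration of the features listed above, the following Python sketch derives speed, acceleration magnitude, and one common smoothness index, the log dimensionless jerk (LDLJ), from sampled marker positions using finite differences. It assumes a NumPy array of positions in metres at a fixed sampling rate; the function name and the particular normalization (by movement duration and peak speed) are our own choices, and other smoothness measures, such as spectral arc length, are also in use.

```python
import numpy as np


def kinematic_features(pos, fs):
    """Basic kinematic features from an (n_samples, n_dims) position array.

    pos: marker positions in metres, sampled at fs Hz (hypothetical input,
    e.g. one motion-capture marker on the wrist). The movement must not be
    static, since the smoothness index divides by peak speed.
    Returns (speed, acceleration magnitude, log dimensionless jerk).
    """
    dt = 1.0 / fs
    vel = np.gradient(pos, dt, axis=0)    # first derivative, m/s
    acc = np.gradient(vel, dt, axis=0)    # second derivative, m/s^2
    jerk = np.gradient(acc, dt, axis=0)   # third derivative, m/s^3
    speed = np.linalg.norm(vel, axis=1)
    acc_mag = np.linalg.norm(acc, axis=1)

    # Dimensionless jerk: squared jerk integrated over the movement and
    # normalised by duration^3 / peak_speed^2. LDLJ is its negative log,
    # so values closer to zero indicate smoother movement.
    duration = (len(pos) - 1) * dt
    jerk_sq_integral = np.sum(np.linalg.norm(jerk, axis=1) ** 2) * dt
    dlj = duration ** 3 / speed.max() ** 2 * jerk_sq_integral
    ldlj = -np.log(dlj)
    return speed, acc_mag, ldlj
```

Applied to a simulated minimum-jerk reach with and without a small superimposed 8 Hz tremor, the tremulous version yields a clearly lower (more negative) LDLJ, which is the kind of change a smoothness outcome measure is meant to capture.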

Kinematic measures are particularly relevant because they can drive dedicated music interventions that exploit innovative technological solutions. This approach has recently been applied to individualize rhythmic stimulation in the rehabilitation of motor disorders, for example in PD. It is well known that presenting a rhythmic stimulus, such as a metronome or a musical excerpt with a salient beat, has an immediate effect on patients’ gait kinematics, increasing walking speed and stride length. This beneficial effect can be extended after several sessions of training with rhythmic stimuli (for reviews, see Dalla Bella et al., 2015; Nombela et al., 2013). Although positive effects of rhythmic auditory stimulation have been demonstrated (Arias & Cudeiro, 2010; McIntosh et al., 1997), individuals’ responses to rhythmic stimuli vary considerably; some patients show a clear benefit, while others may even experience deleterious effects of the stimulation (Dalla Bella et al., 2017, 2018). Thus, individualizing the treatment appears necessary to maximize its benefits and avoid potential deleterious effects. Mobile technologies coupled with sensors (e.g., IMUs) capable of monitoring patients’ motor behavior provide an ideal opportunity for implementing individualized rhythmic stimulation (Dalla Bella, 2018). Adapting musical features (e.g., the music’s beat) to movement kinematics (e.g., step time) in real time can help patients synchronize their footsteps to the beat. Achieving step-to-beat synchronization via mobile technologies would allow targeting the neuronal networks underlying audio-motor coupling, which are thought to play a compensatory role in rhythm-based interventions (Dalla Bella et al., 2015; Nombela et al., 2013; Schaefer, 2014).
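The real-time adaptation described above can be sketched, under simplifying assumptions, as a controller that estimates cadence from recent step timestamps (for instance, steps detected from an IMU) and moves the music tempo toward it. This is a hypothetical illustration, not the algorithm of any system cited here: the class name, the one-beat-per-step assumption, the median window, the smoothing factor `alpha`, and the tempo limits are all placeholders.

```python
from statistics import median


class StepTempoAdapter:
    """Hypothetical controller adapting music tempo (BPM) to step times.

    Assumes one musical beat per step. The target tempo is the cadence
    implied by the median of the last `window` inter-step intervals; the
    current tempo moves toward it by a fraction `alpha` per detected step.
    """

    def __init__(self, start_bpm=100.0, window=6, alpha=0.3,
                 min_bpm=60.0, max_bpm=140.0):
        self.bpm = start_bpm
        self.window = window
        self.alpha = alpha
        self.min_bpm = min_bpm
        self.max_bpm = max_bpm
        self.step_times = []

    def on_step(self, t):
        """Register a step at time t (seconds) and return the updated tempo."""
        self.step_times.append(t)
        # Keep window + 1 timestamps, i.e. `window` intervals.
        self.step_times = self.step_times[-(self.window + 1):]
        if len(self.step_times) < 3:
            return self.bpm  # need at least two intervals before adapting
        intervals = [b - a for a, b in zip(self.step_times, self.step_times[1:])]
        cadence_bpm = 60.0 / median(intervals)
        target = min(max(cadence_bpm, self.min_bpm), self.max_bpm)
        self.bpm += self.alpha * (target - self.bpm)
        return self.bpm
```

The median makes the estimate robust to a single missed or spurious step, clamping keeps the tempo within a range the music can plausibly be time-stretched to, and smoothing avoids abrupt tempo jumps. Whether the music should follow the patient exactly or lead slightly is a clinical design decision that this sketch leaves open.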
This idea has been implemented in different technological solutions building on bidirectional coupling (WalkMate: Hove et al., 2012; DJogger: Moens et al., 2014), which leads to mutual coordination: the cues are dynamic, and the two systems (the rhythmic cue and the user) adapt to each other. This approach is particularly appealing because it makes predictions about the conditions under which spontaneous synchronization of gait is more likely (Dotov et al., 2019). As such, it is expected to encourage spontaneous step-to-beat synchronization while taking patients’ individual motor skills into account. Other technological solutions for rhythmic and sensorimotor training, inspired by serious games for health, are also under development (Bégel et al., 2018; Dauvergne et al., 2018). Given the increasing availability of different kinds of movement sensors, immediate future directions include benchmarking sensors for the temporal and spatial precision of their measurements and, potentially, aligning their inputs with music delivery. For systems that make use of kinematic measures, the primary concerns are whether the sensors are fast enough, precise enough, and sufficiently synchronized with the music for auditory-motor coupling to emerge successfully.
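The principle of bidirectional coupling can be illustrated with a textbook model of two mutually coupled phase oscillators (a two-oscillator Kuramoto model), one standing for the rhythmic cue and one for the walker’s gait. This is a generic sketch for intuition, not the specific model implemented in WalkMate or DJogger; the frequencies and coupling strengths are arbitrary assumptions. For the relative phase phi = theta_walker - theta_cue, the model gives d(phi)/dt = (omega_walker - omega_cue) - (k_cue + k_walker) sin(phi), so the two rhythms lock to a common frequency only when the total coupling exceeds the difference between their natural angular frequencies.

```python
import math


def simulate_mutual_coupling(f_cue, f_walker, k_cue, k_walker,
                             duration=60.0, dt=0.001):
    """Euler simulation of two mutually coupled phase oscillators.

    f_cue, f_walker: natural frequencies in Hz.
    k_cue, k_walker: coupling strengths (rad/s); each oscillator is pulled
    toward the phase of the other, so both adapt (bidirectional coupling).
    Returns the final relative phase (walker minus cue, wrapped to
    [-pi, pi]) and the final instantaneous frequencies (Hz) of cue and walker.
    """
    th_c, th_w = 0.0, 1.0
    w_c, w_w = 2 * math.pi * f_cue, 2 * math.pi * f_walker
    d_c, d_w = w_c, w_w
    for _ in range(int(duration / dt)):
        d_c = w_c + k_cue * math.sin(th_w - th_c)     # cue adapts to walker
        d_w = w_w + k_walker * math.sin(th_c - th_w)  # walker adapts to cue
        th_c += d_c * dt
        th_w += d_w * dt
    phi = math.atan2(math.sin(th_w - th_c), math.cos(th_w - th_c))
    return phi, d_c / (2 * math.pi), d_w / (2 * math.pi)
```

With a 1.8 Hz cue, a 2.0 Hz walker, and a coupling of 1.0 on each side, both oscillators settle at about 1.9 Hz with the walker leading by a constant phase; with a coupling of 0.2 on each side, they never lock and keep drifting apart.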

2.2.3. Identification of Meaningful Moments Using Techniques from Information Theory and Automatic Pattern Discovery

A novel suggestion for the evaluation of therapeutic interventions is to develop approaches to automatically identify moments within therapy sessions that mark noticeable and significant change in a patient’s behavior (Fachner et al., 2019). Defining “moments of interest” (MOIs) during music therapy sessions is a non-trivial task for various reasons. As a first step, therapists need to specify how they identify such moments in recordings of therapy sessions, and annotate a collection of such recordings. This requires a solid definition and operationalization of an MOI from a clinical perspective, including an examination of (1) when music therapists observe MOIs during therapy and which musical features might be related; (2) whether music therapists agree on where an MOI occurs; (3) whether observed MOIs match patient reports (e.g., MOIs identified by therapists correspond with patients’ feedback); and (4) whether therapists’ annotations of MOIs relate to functional improvements in behavioral/neural states, and are able to predict clinical outcomes.

It remains to be explored whether an operationalization of MOIs (in terms of when a significant moment of change occurs and whether it relates to a change in musical features) can be achieved through discussion and agreement between therapists alone, or whether the use of machine learning models to extract those factors from a large corpus of expert annotations can help. Without sufficient agreement between therapists about what constitutes an MOI, computational models would have to be tuned to individual therapists or raters.
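If therapists annotate MOIs as time intervals, their agreement can be quantified, for example, by discretizing each annotation into fixed-length frames and computing Cohen’s kappa, which corrects raw agreement for the agreement expected by chance. The following sketch assumes binary frame labels and a one-second hop; both the frame representation and the helper names are illustrative choices, and interval-based or tolerance-window measures may suit MOIs better in practice.

```python
def intervals_to_frames(intervals, duration, hop=1.0):
    """Binary frame labels (1 = inside an annotated MOI) for a session.

    intervals: list of (start, end) times in seconds (hypothetical format).
    """
    n = int(duration / hop)
    return [int(any(s <= i * hop < e for s, e in intervals)) for i in range(n)]


def cohens_kappa(a, b):
    """Cohen's kappa for two raters' categorical labels of the same items."""
    if len(a) != len(b) or not a:
        raise ValueError("label sequences must be non-empty and equally long")
    n = len(a)
    categories = set(a) | set(b)
    p_observed = sum(x == y for x, y in zip(a, b)) / n
    # Chance agreement from each rater's marginal label frequencies.
    p_expected = sum((a.count(c) / n) * (b.count(c) / n) for c in categories)
    if p_expected == 1.0:
        return 1.0  # convention: both raters used a single identical label
    return (p_observed - p_expected) / (1 - p_expected)
```

For two therapists who mark a 10-second MOI in a 60-second excerpt, offset from each other by 2 seconds, raw frame agreement is about 93%, but kappa is 0.76, because most of the agreement concerns the frames both left unmarked.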

To develop computational methods that support the automatic detection of MOIs, a database of MOIs must be compiled from clinical observations, initially focusing on particular pathologies for method development. If a sufficiently large collection of therapists’ annotations of sessions is gathered, deep learning models could derive potential characteristics of MOIs. Video recordings annotated with detected MOIs may help users navigate recordings of therapy sessions, serving as a resource for practice and theory development, as well as for including patient perspectives on MOIs.

In order to then investigate whether therapists’ reports on MOIs are associated with systematic changes in musical structure, it is necessary to adapt computational approaches, such as change-point detection in time series (Eckley et al., 2011), information theory (to identify moments with high “Information Content,” or surprise; e.g., Pearce & Wiggins, 2012), and automatic pattern detection methods, to examine where distributions over certain measured variables change significantly. These variables may relate to musical sound (e.g., event density, regularity, sound level, structural salience), interaction dynamics, and/or physiological measures (e.g., heart or breathing rate).
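To make change-point detection concrete, the following sketch implements binary segmentation with a squared-error cost, one of the simplest offline methods: it recursively splits a series (e.g., event density per second of an improvisation) at the point that most reduces within-segment variance, as long as that reduction exceeds a penalty. The penalty and minimum segment length are hypothetical tuning parameters; more principled penalized-likelihood methods exist, and this is not claimed to be the procedure of any study cited here.

```python
import numpy as np


def _sse(x):
    """Sum of squared deviations from the segment mean."""
    return float(((x - x.mean()) ** 2).sum()) if len(x) else 0.0


def _best_split(x, min_size):
    """Split index that most reduces total squared error, and that gain."""
    total = _sse(x)
    best_k, best_gain = None, 0.0
    for k in range(min_size, len(x) - min_size + 1):
        gain = total - _sse(x[:k]) - _sse(x[k:])
        if gain > best_gain:
            best_k, best_gain = k, gain
    return best_k, best_gain


def binary_segmentation(x, penalty, min_size=5, offset=0):
    """Sorted change-point indices of a 1-D series (mean-shift model)."""
    x = np.asarray(x, dtype=float)
    k, gain = _best_split(x, min_size)
    if k is None or gain <= penalty:
        return []
    return (binary_segmentation(x[:k], penalty, min_size, offset)
            + [offset + k]
            + binary_segmentation(x[k:], penalty, min_size, offset + k))
```

On a synthetic series whose level changes twice, from 2 to 6 events per second and then to 3, with small fluctuations added, the sketch returns the two true change points; the penalty controls how large a shift must be before it is reported.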

In contexts where music therapists work with symbolic encodings, such as MIDI data produced during musical improvisations (Foubert et al., 2017), methods for detecting MOIs can be applied to the musical note events produced. Potential avenues include identifying emerging temporal regularities in the musical material (Volk, 2008); identifying moments of interpersonal synchronization during improvisations, such as a shared pulse (Foubert et al., 2017) achieved through perceptual entrainment (see Section 1.3.3); describing repetitiveness in the musical interaction through automatic discovery of repeated melodic, rhythmic, and/or harmonic patterns (Conklin, 2010; Melkonian et al., 2019; Meredith, 2015; Ren et al., 2018); and applying information-theoretic measures to the musical score (e.g., Abdallah & Plumbley, 2009; Agres et al., 2017; Pearce & Wiggins, 2012).
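As a simplified illustration of information-theoretic measures on symbolic data, the sketch below computes the information content (IC, in bits) of each note in a pitch sequence under a first-order Markov (bigram) model that is trained incrementally on the notes heard so far, so that unexpected continuations receive high IC. This is far simpler than the variable-order models used by Pearce and Wiggins (2012); the add-one smoothing and the use of MIDI pitch as the only feature are assumptions made for brevity.

```python
import math
from collections import Counter, defaultdict


def information_content(seq, alpha=1.0, alphabet=None):
    """IC (bits) of each event given the previous one.

    Uses a first-order Markov model with add-alpha smoothing, updated after
    each event, so every prediction relies only on the preceding material.
    The first event has no context and is scored with unigram counts.
    """
    alphabet = sorted(set(seq)) if alphabet is None else alphabet
    v = len(alphabet)
    bigrams = defaultdict(Counter)
    unigrams = Counter()
    ics = []
    prev = None
    for event in seq:
        counts = unigrams if prev is None else bigrams[prev]
        total = sum(counts.values())
        p = (counts[event] + alpha) / (total + alpha * v)
        ics.append(-math.log2(p))
        # Learn from the event only after it has been predicted.
        unigrams[event] += 1
        if prev is not None:
            bigrams[prev][event] += 1
        prev = event
    return ics
```

For a three-note figure (MIDI 60, 62, 64) repeated four times and followed by an unexpected 61, the IC of each repeated transition falls as the pattern is learned, while the final deviation receives the highest IC of the sequence (log2 7, about 2.8 bits), which marks it as a candidate moment of surprise.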

2.2.4. Analysis/Visualization of Musical Structures

The previous subsections explored data that may be collected from the user/patient and from the music created by the patient (or patient–therapist dyad). In this subsection we discuss MIR methods for visualizing such data, which may offer insights into the efficacy of interventions, give a clearer picture of the patient’s current state and progress, and guide future research and interventions.

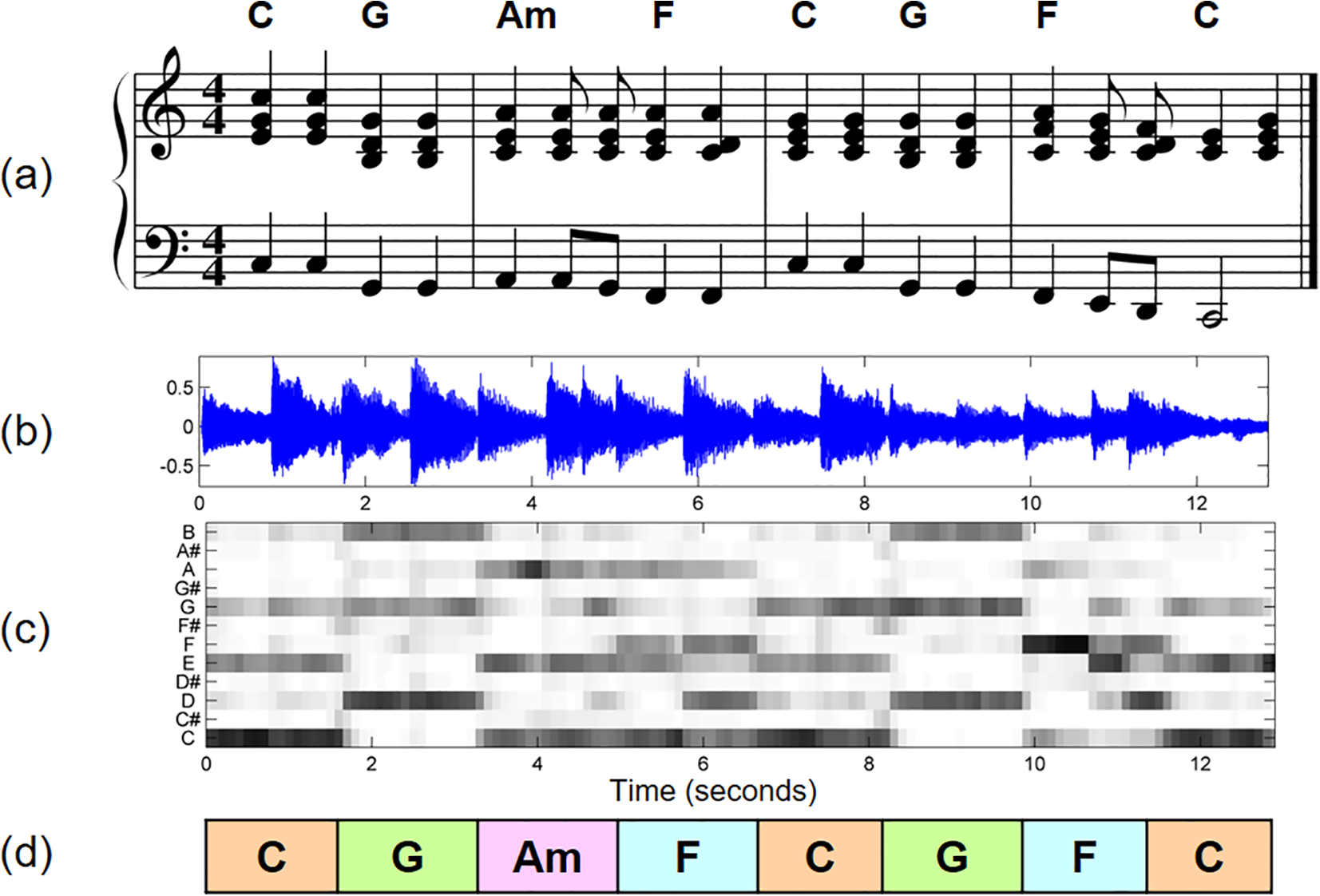

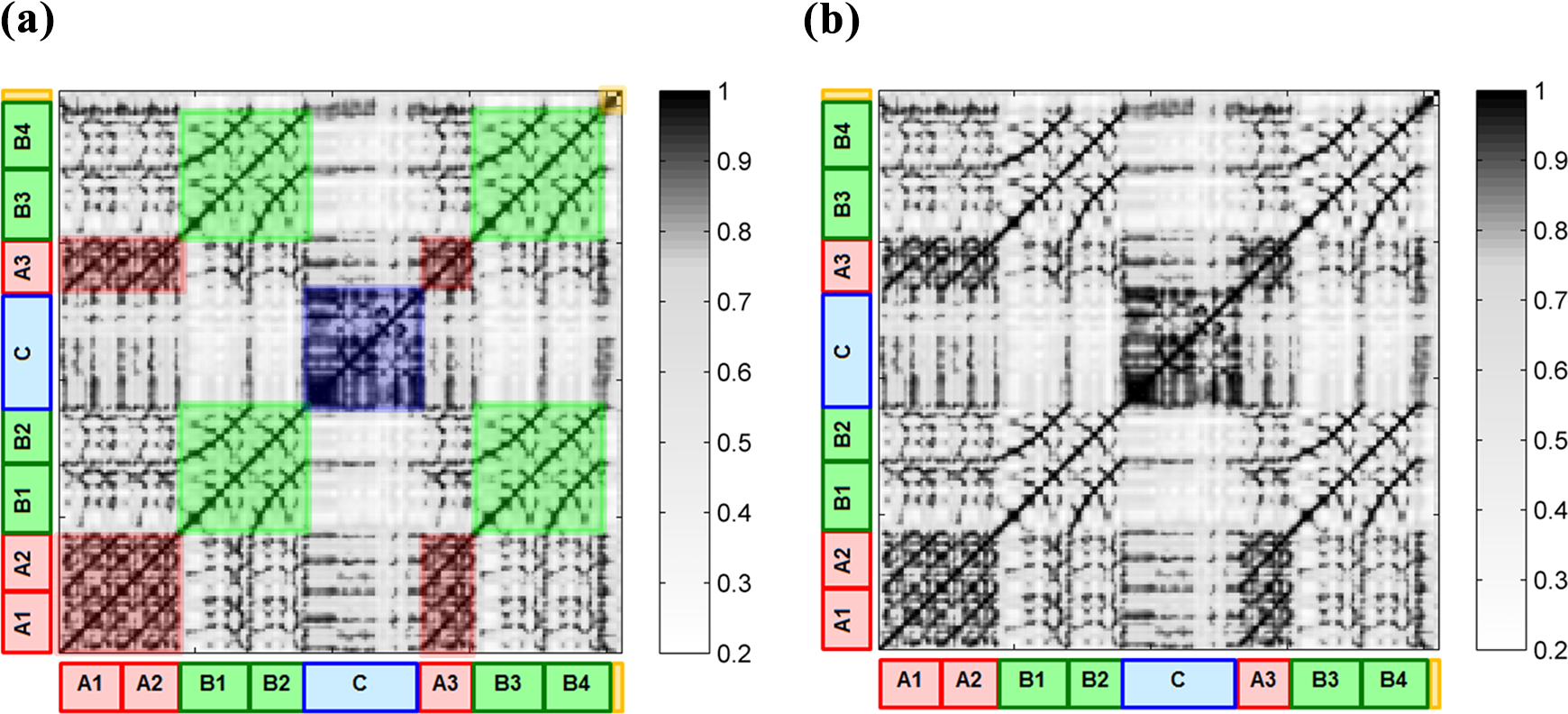

Typically, visualizations display the development of musical parameters over time. Whereas most MIR approaches have focused on the analysis of audio signals, visualizations can be obtained from other time series as well, such as MIDI and motion data. Arguably the most widely used visualization in the audio domain is the spectrogram, which displays the magnitude of a signal in a set of frequency bins over time and can, for instance, be used to highlight the main melody in a signal (see Figure 3 for an example). Derived from a spectrogram, a chromagram displays the energy of each of the 12 pitch classes of the Western equal-tempered system per time point and is often used to estimate the sequence of chords or modes (see Figure 4 for an example). Other visualizations are based on detecting changes in a signal, for instance in timbre, tonal characteristics, or energy level; these have been used to visualize the development of tempo-related processes over time (see Figure 5 for an example) and the organization of a piece of music into the structural units of a composition (see Figure 6 for an example). Research on the detection of repeated melodic and rhythmic patterns in music has also led to visualization approaches that focus on such patterns (e.g., Nikrang et al., 2014); yet, owing to the typically enormous amount of repetition in musical material, this is not a solved problem. For symbolic music data, visualizations of fine- and large-scale tonal structures in musical pieces (Sapp, 2005) as well as interactive systems for tonal visualization during musical performances (Chew & François, 2005) have been developed.
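The relation between spectrogram and chromagram described above can be sketched in a few lines of NumPy: a short-time Fourier transform yields a power spectrogram, and each frequency bin is then assigned to the nearest equal-tempered pitch class (with A4 = 440 Hz) and summed. The window size, hop size, and frequency range are assumptions, and established MIR toolkits offer considerably more refined chroma variants; this is only a minimal sketch of the principle.

```python
import numpy as np


def chromagram(signal, sr, n_fft=4096, hop=2048, fmin=27.5, fmax=4200.0):
    """12 x n_frames chromagram from a mono signal (pitch class 0 = C, 9 = A)."""
    signal = np.asarray(signal, dtype=float)
    if len(signal) < n_fft:
        raise ValueError("signal shorter than one analysis window")
    window = np.hanning(n_fft)
    n_frames = 1 + (len(signal) - n_fft) // hop
    frames = np.stack([signal[i * hop:i * hop + n_fft] * window
                       for i in range(n_frames)])
    # Power spectrogram: one row per frame, one column per frequency bin.
    spec = np.abs(np.fft.rfft(frames, axis=1)) ** 2
    freqs = np.fft.rfftfreq(n_fft, d=1.0 / sr)

    # Assign each bin in [fmin, fmax] to its nearest equal-tempered pitch
    # (MIDI numbering, A4 = 440 Hz = MIDI 69), then fold to 12 classes.
    valid = (freqs >= fmin) & (freqs <= fmax)
    midi = 69 + 12 * np.log2(freqs[valid] / 440.0)
    pitch_class = np.round(midi).astype(int) % 12

    chroma = np.zeros((12, n_frames))
    valid_spec = spec[:, valid]
    for pc in range(12):
        chroma[pc] = valid_spec[:, pitch_class == pc].sum(axis=1)
    # Normalise each frame so that its strongest pitch class equals 1.
    chroma /= chroma.max(axis=0, keepdims=True) + 1e-12
    return chroma
```

For a pure 440 Hz tone, every frame peaks at pitch class 9 (A); for a C major triad, the three strongest classes are C, E, and G, which is the information a chord-recognition step would then build on.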
Symbolic representations of musical works (e.g., in MusicXML, Lilypond, or MIDI format) afford further possibilities for automatically generating visualizations (and sonifications) of musical structure, such as detailed representations of the motivic and thematic structure of a piece (see Figure 7).
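Point-set methods such as COSIATEC start from the maximal translatable patterns (MTPs) computed by the SIA algorithm: for every translation vector between two notes in (onset, pitch) space, SIA collects all notes that can be shifted by that vector onto other notes of the piece. The sketch below computes MTPs in this straightforward quadratic way. It omits the further steps that COSIATEC adds (finding all occurrences of each pattern and greedily selecting a compact cover of the piece), and it assumes notes are given as integer onset and MIDI pitch pairs.

```python
from collections import defaultdict


def sia(points):
    """Maximal translatable patterns (MTPs) of a point set, as in SIA.

    points: iterable of (onset, pitch) pairs. Returns a dict mapping each
    translation vector to the sorted list of points that can be translated
    by that vector onto other points of the set.
    """
    pts = sorted(set(points))
    mtps = defaultdict(list)
    for i, p in enumerate(pts):
        # Only vectors to lexicographically later points are considered,
        # so each pattern is found once, with its earliest occurrence.
        for q in pts[i + 1:]:
            v = (q[0] - p[0], q[1] - p[1])
            mtps[v].append(p)
    return dict(mtps)
```

For a three-note motif restated four time units later and seven semitones higher, the MTP for the vector (4, 7) is exactly the original motif; the vector (1, 2) collects the stepwise ascents within both statements. Turning such vectors into a readable display of motivic structure is the visualization step discussed above.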

(a) Musical score of a short excerpt of a soprano singer performing an aria from the opera

Chord recognition task illustrated by the first measures of the Beatles song “Let It Be”: (a) Score of the first four measures; (b) Visualization of a waveform of a recorded performance of these measures; (c) Visualization of a time-chroma representation; (d) Chord recognition result (idealized). Adapted from Müller, 2015, Chapter 5 (Adapted by permission).

Excerpt (corresponding to measures 26 to 36) of an orchestra recording conducted by Ormandy of Brahms’

Self-similarity matrix and annotated musical structure of a recording of Brahms’

A visualization of the output automatically generated by a point-set pattern discovery algorithm, COSIATEC (Meredith, 2015), for the fugue from J. S. Bach’s Prelude and Fugue in C major, BWV 846, from The Well-Tempered Clavier, Book 1.

Visualizations that are useful for therapists and patients need to be developed collaboratively. The aforementioned MIR examples of visualizing musical structures such as chords, melodies, or repeated patterns can serve as starting points for adaptations to therapeutic scenarios, such as supporting the automatic detection of MOIs. Examples of visualizations from other interdisciplinary contexts may also provide useful directions. For instance, Zalkow et al. (2017) used chroma-based visualizations to explore the narrative in Wagner’s operas in collaboration with musicologists, and visualizations derived from MIR analyses have been incorporated in music education tools such as Yousician.³

In therapeutic contexts, visualizations can be computed from a collection of recordings of therapy sessions, which could inform the therapist’s reflection on the effects of an intervention, as discussed in the previous subsection. Visualizations may also provide useful visual feedback in real time during therapy sessions; real-time visualization of musical structure has, for example, been used to augment artistic performances (Schedl et al., 2016). A crucial question to be addressed is whether such real-time visualizations add something meaningful to therapeutic interventions, or whether they instead disturb or disrupt the immediate interaction between therapist and patient. Defining specific use cases must take the limitations of existing MIR approaches into account, ideally with the goal of mitigating them through collaborative development.

2.2.5. Data Analysis: Summary and Future Directions

Taken together, a range of data types is suitable for the kinds of analytical approaches that are common in MIR but applied to a lesser degree in music psychology and neuroscience, and very rarely in music therapy. Within the MIR community, biomarkers and their relation to the perception of various aspects of music have recently attracted attention (Cantisani et al., 2019; Stober et al., 2014). A collaboration between MIR, music cognition, and music therapy would open up a highly promising area for research and application development. Using biomarkers to provide insight into, for example, affective responses to music (Koelstra et al., 2011) would also have high potential as an additional perspective for discovering MOIs in therapy sessions. Furthermore, these methods could also incorporate behavioral measures such as kinematic and self-report measures. A major challenge facing the automatic identification of MOIs is to achieve a detailed understanding of how clinically observed MOIs correlate with computable structural descriptions of musical excerpts and with the musical actions of patients and therapists during a session. Another challenge is to understand how MOIs (particularly those that can be detected automatically) can be used to guide the diagnosis, monitoring, and treatment of patients. For the visualization of data, a major challenge is to develop computational tools for automatically analyzing, visualizing, and sonifying both digital music data and digital recordings of therapeutic sessions. Although such tools may help therapists understand their data and make clinical decisions, the technology must be sufficiently robust, fast, and easy to use for therapists to integrate it beneficially into their daily workflow.
Regarding the limitations of current MIR approaches, it is worth pointing out that synchronization between performers has been examined in a range of studies in music psychology and empirical musicology (Keller, 2014; Volpe et al., 2016), whereas the visualization of synchronization, which may be of value when analyzing therapy sessions, has been approached only recently (Maestre et al., 2017) and remains largely an open subject in MIR.
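Before synchronization can be visualized, it has to be quantified. A common starting point in the sensorimotor synchronization literature is the signed asynchrony between corresponding onsets of two players, summarized by its mean (which player tends to lead) and standard deviation (how stable the coupling is). The sketch below matches each onset of one player to the nearest onset of the other within a tolerance; this nearest-neighbour matching and the 100 ms tolerance are simplifying assumptions that would break down for freely improvised material with unequal numbers of notes.

```python
import statistics


def onset_asynchronies(onsets_a, onsets_b, max_gap=0.1):
    """Signed asynchronies (seconds, b minus a) between matched onsets.

    Each onset of player A is matched to the nearest onset of player B;
    pairs further apart than `max_gap` are treated as unmatched and skipped.
    """
    if not onsets_b:
        return []
    asyncs = []
    for t in onsets_a:
        nearest = min(onsets_b, key=lambda u: abs(u - t))
        if abs(nearest - t) <= max_gap:
            asyncs.append(nearest - t)
    return asyncs


def summarize(asyncs):
    """Mean asynchrony (bias) and its standard deviation (variability)."""
    return {
        "n": len(asyncs),
        "mean": statistics.fmean(asyncs),
        "sd": statistics.stdev(asyncs) if len(asyncs) > 1 else 0.0,
    }
```

Computed over a sliding window and plotted against session time, such summaries could form one simple basis for the visualizations of therapist–patient synchronization discussed above.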

Overall, therapists and technologists need to work together to develop such tools and to determine which techniques are most suitable for enhancing therapists’ effectiveness and efficiency in analyzing interventions and making clinical decisions. As organized datasets will be required for most of the above use cases, the first steps are to solidify the definition and design of case studies and to collect the data required for collaborative system development.

2.3. Providing Support for Music Therapy Sessions with Therapist

Technology can assist music therapy sessions in a multitude of ways: enabling a patient with motor impairments to become an active agent in music-making through devices; acting as a “co-therapist” by providing musical loops within sessions, thus freeing the therapist to engage in more interactive live musical dialogues with the patient; providing music as a basis for movement exercises; and more. The decision whether or not to use technology should rest primarily on whether it empowers and enables the patient to reach therapeutic goals. As discussed in Section 1.3.3, therapy sessions are examples of social interactions that involve processes of attunement between the affects and behaviors of therapists and patients. Therapists usually assess patients’ behavioral responses and adjust their strategies accordingly. However, some of these responses might be invisible or difficult to interpret, which might be addressed by new measuring technologies or real-time feedback. The aim of using technology in such a setting is to increase, or at the very least not decrease, this attunement; technology should not get in the way. In the following subsections we discuss the types of devices, technologies, and interfaces that are or can be used, as well as a specific functionality that can increase the quality of information received in the moment, namely real-time feedback.

2.3.1. Devices, Technologies, and Interfaces for Supporting Therapy Sessions

In applied settings involving people with clinical needs, music technologies can be loosely grouped into devices for creating music (e.g., electronic instruments, sonified wearable devices), devices for recording and listening to music, software for analyzing the music co-created in improvisations (e.g., audio software; MIDI Toolbox, see Eerola et al., 2004; Sonic Visualiser, see Cannam et al., 2010), and devices that target specific functions (such as brain–computer interfaces (BCIs) targeting emotion mediation, and serious games targeting specific cognitive or motor functions; Krout, 2014; Hadley et al., 2014). In addition to the standard musical instruments and musical interfaces that can be used, various new devices have been created specifically for use in music therapy. Music-creating devices include self-contained instruments (e.g., Theremin⁴; MIDIcreator, see Kirk et al., 1994; tablets; Soundbeam⁵) and music software, usually combined with assistive devices or specialist controllers, that can be used for improvisation, composition, and sequencing (e.g., MIDIgrid, see Kirk et al., 1994; Ableton Live⁶; Switch Ensemble⁷; AUMI⁸). These are distinct from apps developed for music generation that could also be adapted for therapeutic applications (see Section 2.4.3). Numerous other technologies have been developed or adapted to address specific clinical goals, for example, a pacifier that plays music when sucked, to stimulate non-nutritive sucking in premature infants (Standley & Whipple, 2003), and piezoelectric sensors combined with MIDI converters to engage patients and stimulate consistent motor performance during improvisation as part of motor rehabilitation after stroke (Ramsey, 2011).

Optical motion capture delivers highly accurate measurements of body position and movement that can be ideal for tracking music-induced movement (Burger et al., 2013), but it often relies on an extensive, costly, and barely portable laboratory setup. Less expensive, commercially available motion capture devices such as the Microsoft Kinect⁹ and Leap Motion¹⁰ sensors have been explored for their suitability for music-based health care solutions (e.g., Agres et al., 2019; Beveridge et al., 2018; Agres & Herremans, 2017), although these devices offer less accuracy. Inertia-based motion capture provides an alternative, in which the passive reflectors on the body are replaced by active movement sensors (for a comparison of both technologies in a musical context, see Solberg & Jensenius, 2016). Whereas high-end inertial systems are still costly, low-cost alternatives are also available, which might be sufficient for the many therapeutic applications in which highly accurate motion registration is not required. In particular, if the therapeutic intervention focuses only on the movements of particular limbs, single inertia-based accelerometers may be sufficient. The possibilities offered by the sensors built into commercially available mobile phones remain to be explored.
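To illustrate what a single low-cost accelerometer can already provide, the sketch below detects steps as peaks in the acceleration magnitude, which is largely independent of how the sensor is oriented. It assumes a three-axis accelerometer sampled at a fixed rate and a gait in which each step produces one clear peak; the threshold, refractory interval, and smoothing length are hypothetical values, and practical step detectors are considerably more robust to sensor placement and irregular gait.

```python
import numpy as np


def detect_steps(acc, fs, threshold=1.0, min_interval=0.3, smooth_s=0.05):
    """Step times (s) from an (n, 3) accelerometer array in m/s^2.

    A step is a local maximum of the smoothed, mean-removed acceleration
    magnitude that exceeds `threshold` and occurs at least `min_interval`
    seconds after the previous step.
    """
    mag = np.linalg.norm(acc, axis=1)
    mag = mag - mag.mean()                 # remove gravity and static offset
    k = max(1, int(smooth_s * fs))
    mag = np.convolve(mag, np.ones(k) / k, mode="same")  # moving average
    steps, last = [], -np.inf
    for i in range(1, len(mag) - 1):
        is_peak = mag[i] > mag[i - 1] and mag[i] >= mag[i + 1]
        t = i / fs
        if is_peak and mag[i] > threshold and t - last >= min_interval:
            steps.append(t)
            last = t
    return steps
```

The resulting step times are the kind of input that a step-synchronized music application, such as the tempo adaptation discussed in Section 2.2.2, would consume.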

Music-based BCIs, in which a measured brain signal is used as input to control a device or computer system, may offer an opportunity to support therapeutic processes addressing mental health, such as the treatment of depression (Ramirez et al., 2015) or the self-regulation of emotions more generally (Ehrlich et al., 2019), as well as cases in which patients’ motor abilities are severely impaired. EEG is the most commonly applied technology for recording brain signals. A variety of processing approaches and setups have been considered for BCIs (Lotte, 2014), and more specifically for brain–computer music interfaces (Miranda et al., 2011), but setting up meaningful interactions with the recorded signals remains challenging. Alternatives that do not require measuring movement may lie in more focused measurements of, for instance, pupil oscillations (Duchowski et al., 2018).
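A deliberately minimal sketch of the signal path in a music-based BCI: estimate power in the alpha (8 to 13 Hz) and beta (13 to 30 Hz) bands from a short single-channel EEG segment and map their balance onto a musical parameter, here tempo. The band limits follow common convention, but the mapping itself (relatively more alpha leading to slower music) is a hypothetical design choice, not the method of Ramirez et al. (2015) or Ehrlich et al. (2019); real systems also need artifact handling, spatial filtering, and calibration (cf. Lotte, 2014).

```python
import numpy as np


def band_power(x, fs, band):
    """Mean Hann-windowed periodogram power of x within [low, high) Hz."""
    x = np.asarray(x, dtype=float) - np.mean(x)
    spec = np.abs(np.fft.rfft(x * np.hanning(len(x)))) ** 2
    freqs = np.fft.rfftfreq(len(x), d=1.0 / fs)
    mask = (freqs >= band[0]) & (freqs < band[1])
    return spec[mask].mean()


def alpha_beta_to_tempo(x, fs, slow_bpm=60.0, fast_bpm=120.0):
    """Hypothetical mapping: relatively more alpha power gives a slower tempo."""
    alpha = band_power(x, fs, (8.0, 13.0))
    beta = band_power(x, fs, (13.0, 30.0))
    relaxed = alpha / (alpha + beta)      # in [0, 1]
    return fast_bpm - relaxed * (fast_bpm - slow_bpm)
```

Averaging power per bin rather than summing it keeps the two bands comparable despite their different widths; in an interactive setting, the function would be applied to successive short windows of the incoming signal.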

2.3.2. Feedback Mechanisms and Sonification

When creating sounds on traditional instruments, one typically receives immediate auditory feedback on the performance, as one can directly hear what one is playing. This feedback, provided in a modality other than the visual or proprioceptive (the most common channels through which we receive information about our movements), adds an information stream that supports motor learning (Effenberg et al., 2016; Sigrist et al., 2015). This mechanism underlies many uses of sounding instruments in motor rehabilitation (cf. Schneider et al., 2010), and it has also been used in reverse, by visualizing sound, to assist auditory perception in speech therapy (Schaefer et al., 2016). This additional perceptual modality can, of course, also be harnessed with technological devices, which afford a much larger range of sounds. One way to make implicit or latent processes more explicit is to translate them into a feedback signal meant for either the therapist or the patient, as already discussed for visualizations in Section 2.2.4. Feedback may also be provided in the auditory domain, by translating into sound (or sonifying) a signal that is not itself auditory, most often some aspect of a movement (location, acceleration, smoothness, etc.). Once a specific state or activity is identified as desirable, its presence or absence may be signaled by a sound that does not derail the therapeutic process but indicates where attention should be directed. Thus, a sonification can serve simply as an extra perceptual input that amplifies dimensions that are otherwise difficult to perceive, or it can give an additional indication of the quality of performance and take on the role of a reward during practice or rehabilitation.
In this way, an online monitoring system is created that resembles the identification of meaningful moments described above, except that it is defined beforehand, potentially specifically for an individual patient. Examples of sonified feedback during movement rehabilitation after stroke are provided by Scholz et al. (2016), where the location of an arm movement was translated into pitch height, offering extra awareness of reach distance, and by Kirk et al. (2016), who use percussive feedback sounds on bespoke instruments, allowing stroke patients to rehabilitate by drumming along to their own preferred music. These sonifications can also be extended to create new musical instruments, as described in the subsection “New Instruments for Musical Participation,” where the goal is to create music rather than to contribute to the rehabilitation process through direct feedback.
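A parameter-mapping sonification in the spirit of the reach-to-pitch example can be sketched as a linear mapping from a movement feature onto a MIDI pitch range, followed by conversion to frequency and synthesis of a short tone. The maximum reach, pitch range, tone duration, and 5 ms fades are hypothetical values, not those used by Scholz et al. (2016); in practice the mapping (linear or quantized to a scale, continuous or event-based) would be tuned to the individual patient.

```python
import math


def reach_to_pitch(distance, max_reach=0.6, low_midi=48, high_midi=84):
    """Map reach distance (m) linearly onto a MIDI pitch range, clamped."""
    frac = min(max(distance / max_reach, 0.0), 1.0)
    return low_midi + frac * (high_midi - low_midi)


def midi_to_hz(m):
    """Equal-tempered frequency of a (possibly fractional) MIDI pitch."""
    return 440.0 * 2 ** ((m - 69) / 12)


def tone(freq, dur=0.1, sr=44100, amp=0.3):
    """Sine tone as a list of samples, with short linear fades against clicks."""
    n = int(dur * sr)
    fade = int(0.005 * sr)
    out = []
    for i in range(n):
        env = min(1.0, i / fade, (n - 1 - i) / fade)
        out.append(amp * env * math.sin(2 * math.pi * freq * i / sr))
    return out
```

With these settings, a full 0.6 m reach sounds three octaves above the resting position, so small gains in reach distance become audible as changes in pitch; the samples would be passed to whatever audio output library the application uses.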

2.3.3. Providing Support for Music Therapy Sessions with Therapist: Summary and Future Directions

Taken together, various devices and technologies can support music therapy sessions, using input signals that range from standard manual manipulation of electronic instruments to adapted, sometimes completely bespoke, instruments that allow patients to use any remaining ability to take part in musical activities. Numerous factors influence the choice of technologies used in therapy, most commonly how efficiently a technology meets the range of needs frequently encountered in clinical settings. Other factors include the technology’s cost, portability, ease of use, consistency of performance, and durability under wear and tear, as well as whether therapists have the skills and knowledge to use it and whether there are clear clinical indications for its use in practice.

Future directions in the development of technology to be used during therapy sessions should take these factors into account, by focusing on practical aspects but also on versatility of the application. For instance, options could be included for users with different abilities by allowing different ways in which input is delivered to an instrument (varying from a button to a suck-switch to gaze direction to infrared motion detection, for example). By including multiple potential uses in an early stage of designing and developing new instruments, they can be opened up to a wider range of users. Also, more research on the influence of real-time feedback on learning paradigms will allow evidence-based application of this feature, to ensure optimal conditions for learning and/or re-gaining function.

2.4. At-Home Training In Between Music Therapy Sessions

In addition to approaches and applications developed specifically for music therapy or for therapists’ use with patients, several music-based systems, games, and tools allow patients to support their health and well-being outside strictly clinical settings. Moreover, many of the systems used during therapy may, with small adjustments, also be used in between sessions, sometimes with a little help. In the following subsections, we discuss several technologies that are often intended to be used without a music therapist present but are still meant to be part of a treatment program; that is, they are used toward specific, personalized clinical goals, often on the recommendation of a music therapist or other medical professional. We focus specifically on serious games, return in more detail to the adapted instruments mentioned above, and discuss music generation systems. While specially tailored instruments can of course be used as part of a therapy session, they are more often used in between sessions, with the more general goal of enabling musical expression rather than addressing a specific clinical goal. Such applications not only allow more independence in musical activities but also provide an excellent means for patients to practice specific skills in between music therapy sessions.

2.4.1. Serious Games

The term “serious games” denotes games whose primary purpose is something other than entertainment, such as education, advertisement, training, simulation, or collecting data for scientific research (so-called games with a purpose). Serious games in the health context are used to train patients’ skills for rehabilitation, for example in home-based training for patients with PD (Dauvergne et al., 2018), in the treatment of traumatic brain injury (Martínez-Pernía et al., 2017), or in training for patients with dementia (McCallum & Boletsis, 2013). Serious games offer rewards and different levels of challenge that help engage patients in the rehabilitation process and increase motivation during exercises. Moreover, serious games can be used to control training and to measure patients’ performance. Serious games for at-home scenarios can enable interactions between patients and family members, facilitating the improvements seen in rehabilitation when interaction with partners is involved (Takagi et al., 2019). In general, serious games for at-home training can complement therapy sessions with clinicians, continuing the rehabilitation process in between sessions with the therapist (especially if the game is introduced during a session but intended for practice in between sessions). Of course, many serious games also exist for subclinical problems (i.e., not in the context of a specific diagnosis or treatment) and are intended for use outside a personalized therapy program; we address these briefly in Section 2.5.4.
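The different levels of challenge that serious games offer are often adjusted automatically. One standard psychophysical rule that transfers well to games is the 2-down/1-up staircase, which makes the task harder after two consecutive successes and easier after each failure, so that performance converges near 71% success. The sketch applies this rule to a hypothetical tapping game in which a tap counts as on the beat if it falls within a tolerance window; the class name and all parameter values are illustrative assumptions.

```python
class Staircase:
    """2-down/1-up difficulty adaptation for a hypothetical tapping game.

    The controlled parameter is the tolerance window (ms) within which a tap
    counts as on the beat. Two consecutive hits shrink the window (harder);
    any miss widens it (easier). This rule converges near 70.7% hits.
    """

    def __init__(self, window_ms=150.0, step_ms=10.0,
                 min_ms=30.0, max_ms=300.0):
        self.window_ms = window_ms
        self.step_ms = step_ms
        self.min_ms = min_ms
        self.max_ms = max_ms
        self._streak = 0

    def update(self, tap_error_ms):
        """Score one tap (signed error from the beat) and adapt difficulty."""
        hit = abs(tap_error_ms) <= self.window_ms
        if hit:
            self._streak += 1
            if self._streak == 2:
                self.window_ms = max(self.min_ms, self.window_ms - self.step_ms)
                self._streak = 0
        else:
            self._streak = 0
            self.window_ms = min(self.max_ms, self.window_ms + self.step_ms)
        return hit
```

Keeping success around this level aims to balance challenge and reward, and the evolving window size doubles as a simple performance measure that a therapist could review between sessions.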

Music within these serious games can play many different roles in the rehabilitation process, depending on the context in which the games are used. Part of this relates directly to the features of music discussed above, such as emotional effects, reward and motivation, rhythmic entrainment, and social interaction. In gaming settings, however, music (or sound) is also often used to direct attention to a specific game element. These different elements may all be used in serious games, together or in isolation. While games themselves already have strong motivational elements, music may enhance this aspect. The rhythmic structure of music also lends itself to training rhythmic skills in various contexts, such as PD, dyslexia, and Attention Deficit Hyperactivity Disorder (ADHD) (see Bégel et al., 2017, for an overview). With specific regard to rhythmic entrainment and motor rehabilitation, a large number of game interfaces exist that register movement based on various sensors (see Section 2.2.2). The majority of these are directed at exercising, but newer games focus specifically on improving temporal skills in healthy or clinical groups (e.g., Bégel et al., 2018; Dauvergne et al., 2018). As music can play an important role in these exercise games, it does not take much adaptation to conceptualize their use in movement rehabilitation. Some examples of musical games for movement rehabilitation developed outside commercially available systems have been reported, such as the Music Glove (Friedman et al., 2011), for which tailored rehabilitation games have been designed. More recently, specific serious games have also been designed that use music-based motion capture systems for motor strengthening and rehabilitation (Agres & Herremans, 2017; Agres et al., 2019; Beveridge et al., 2018).

The attentional effects of music also offer various ways of promoting clinical goals. For instance, several mental disorders involve poor attentional focus, so serious games can be used to monitor focus and, with the help of music, to refocus patients when they lose attention. Music’s inherent alternation between expected and unexpected moments in its structure offers a means of sustaining attention. While a range of musical serious games have been developed for music education (cf. Mandanici et al., 2018), games that directly target specific clinical problems, and that use musical aspects as a main means of addressing them, are still relatively sparse.

2.4.2. New Instruments for Musical Participation

Several adaptive digital musical instruments (ADMI) have been developed that are generally intended for people with motor disabilities. As noted above, some of these instruments may be used during music therapy, but several are also meant to offer opportunities for musical expression without a clearly formulated clinical goal. In many cases, this entails creating a controller or other means of indicating one’s intentions, and connecting this controller to a musical output.

Different approaches to building ADMI have been taken for different degrees of motor disability. Solutions for people with partial motor disabilities (e.g., people with cerebral palsy who lack fine motor control but retain partial control of the upper limbs) have included a variety of motion sensors, which capture body movement and map it to musical output. Sensors used in ADMI include ultrasonic distance sensors, pressure-sensing foam (Kirk et al., 1994; Swingler, 1998), and a variety of touch sensors (Bhat, 2010). Systems based on low-cost web cameras are also widely used (Lamont et al., 2000; Oliveros et al., 2011; Stoykov & Corcos, 2006; Winkler, 1997). In such systems, the camera image is typically divided into distinct areas, and when movement is detected in a particular area, an event is triggered. All of the interfaces mentioned above are designed for people who retain a degree of limb movement. For people without adequate control of limb movements, interfaces have been developed based on breath control or small head or tongue movements, such as the Magic Flute. 11
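The web-camera approach described above can be sketched with simple frame differencing. The example below is a minimal illustration, not the method of any cited system: it assumes grayscale frames given as nested lists of pixel intensities, splits each frame into vertical zones, and triggers a hypothetical MIDI note for every zone whose average pixel change exceeds a threshold. The note mapping and threshold are illustrative assumptions.

```python
def zone_motion(prev, curr, n_cols=4):
    """Mean absolute pixel change in each of n_cols vertical zones.

    Assumes both frames have the same size and width >= n_cols; any leftover
    columns when width is not divisible by n_cols are ignored.
    """
    height, width = len(curr), len(curr[0])
    zone_width = width // n_cols
    energies = []
    for z in range(n_cols):
        x0, x1 = z * zone_width, (z + 1) * zone_width
        total = sum(abs(curr[y][x] - prev[y][x])
                    for y in range(height) for x in range(x0, x1))
        energies.append(total / (height * zone_width))
    return energies


def triggered_notes(prev, curr, notes=(60, 62, 64, 67), threshold=20.0):
    """Return the (hypothetical) MIDI note of every zone whose motion exceeds threshold."""
    energies = zone_motion(prev, curr, n_cols=len(notes))
    return [note for note, energy in zip(notes, energies) if energy > threshold]


# Two 4x8 frames in which only columns 2-3 (zone 1) change.
prev = [[0] * 8 for _ in range(4)]
curr = [[0] * 8 for _ in range(4)]
for y in range(4):
    curr[y][2] = curr[y][3] = 200
print(triggered_notes(prev, curr))  # -> [62]
```

In practice the zone layout, threshold, and sound mapping would be adapted to the user's range of movement, which is the main reason such low-cost setups suit people with partial motor control.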

In very severe cases of motor disability, in which a patient cannot move or communicate verbally because of complete paralysis of nearly all voluntary muscles except those that control the eyes, as in locked-in syndrome (Bauer et al., 1979; Smith & Delargy, 2005), eye-tracking technology may be the only alternative. Vamvakousis and Ramirez (2012) proposed the EyeHarp, 12 an interface that uses only gaze as input and allows interaction and expressiveness on a level similar to that afforded by traditional musical instruments. The EyeHarp allows the control of chords, arpeggios, melody, and loudness using gaze alone. Evaluations of the EyeHarp show that it supports expressive performance from both the performer’s and the audience’s perspective (Vamvakousis & Ramirez, 2016).

When no motor control whatsoever is preserved (including eye movement), playing music using a BCI may be the only possibility. Several BCIs have been proposed for music performance (Miranda & Castet, 2014); for example, Vamvakousis and Ramirez (Ramirez et al., 2015; Vamvakousis & Ramirez, 2013, 2014, 2015) proposed BCIs based on motor imagery, event-related potentials (ERPs), and emotion estimation. In addition, many brain-activity sonification applications have been proposed in which brain activity is mapped to sound (e.g., Gomez & Ramirez, 2011; Schmele & Gomez, 2014); however, these applications do not decode the user’s intention and simply produce output based on an arbitrary mapping from brain activity to sound.
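To make the distinction concrete, the sketch below shows what an arbitrary sonification mapping can look like. It is a hypothetical example, not taken from the cited work: it estimates the share of signal power in the alpha band (8 to 12 Hz) of a short single-channel window using a naive discrete Fourier transform, and maps that share linearly onto a pitch range. Nothing in it decodes what the user intends; the band choice and pitch range are arbitrary assumptions.

```python
import math


def power_spectrum(signal, fs):
    """Naive DFT power per frequency bin (adequate only for short illustrative windows)."""
    n = len(signal)
    spectrum = {}
    for k in range(1, n // 2):
        re = sum(x * math.cos(2 * math.pi * k * i / n) for i, x in enumerate(signal))
        im = sum(x * math.sin(2 * math.pi * k * i / n) for i, x in enumerate(signal))
        spectrum[k * fs / n] = (re * re + im * im) / n ** 2
    return spectrum


def alpha_to_pitch(signal, fs, low_midi=48, high_midi=72):
    """Map relative alpha power (8-12 Hz vs 1-40 Hz) linearly to a frequency in Hz."""
    spec = power_spectrum(signal, fs)
    alpha = sum(p for f, p in spec.items() if 8 <= f <= 12)
    broadband = sum(p for f, p in spec.items() if 1 <= f <= 40)
    ratio = alpha / broadband if broadband > 0 else 0.0
    midi = low_midi + ratio * (high_midi - low_midi)
    return 440.0 * 2 ** ((midi - 69) / 12)  # MIDI note number -> frequency


fs = 128  # assumed sampling rate (Hz) for a one-second window
alpha_like = [math.sin(2 * math.pi * 10 * i / fs) for i in range(fs)]
beta_like = [math.sin(2 * math.pi * 20 * i / fs) for i in range(fs)]
print(round(alpha_to_pitch(alpha_like, fs), 2), round(alpha_to_pitch(beta_like, fs), 2))
```

A 10 Hz test signal yields the top of the range and a 20 Hz signal the bottom; any other mapping would be equally valid, which is precisely why such systems cannot be used to express a deliberate musical intention.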

Additional overviews of ADMI have been provided by Larsen et al. (2016) and Frid (2018). Further developments in this area may focus on increasing treatment possibilities for a diverse group of users, and evaluating these applications in terms of their user experience. In addition, there is a need to better understand the effects of using these instruments, especially when they are used towards clinical goals or as part of a treatment protocol.

2.4.3. Music Generation and Recommendation for Therapy

Automatic music generation offers another route to bespoke music creation, sometimes in support of clinical goals and sometimes to stimulate expressivity as part of the therapeutic process outside of therapy sessions. Music generation systems compose music using a machine learning model or a set of rules, with minimal human input. The field of automatic music generation has matured significantly in recent years and includes systems for melody generation, harmonization, interactive improvisation, and narrative music for games and films, among others (Herremans et al., 2017). These advances bring new opportunities for health care.
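As a minimal illustration of a data-driven generator, the sketch below learns note-to-note transitions from an input melody (a first-order Markov chain) and samples a continuation in the same style. This is a deliberately simple stand-in chosen for clarity; interactive improvisation systems rely on considerably richer models of what the user has just played. Melodies are assumed to be lists of MIDI note numbers.

```python
import random
from collections import defaultdict


def learn_transitions(phrase):
    """Record, for each note, every note that followed it in the input phrase."""
    table = defaultdict(list)
    for current, following in zip(phrase, phrase[1:]):
        table[current].append(following)
    return table


def continue_phrase(phrase, length, seed=None):
    """Sample a continuation of the given length from a first-order Markov chain."""
    rng = random.Random(seed)
    table = learn_transitions(phrase)
    note = phrase[-1]
    continuation = []
    for _ in range(length):
        # At a dead end (a note never followed by anything), fall back to any played note.
        options = table.get(note) or phrase
        note = rng.choice(options)
        continuation.append(note)
    return continuation


print(continue_phrase([60, 62, 64, 60], 4))  # -> [62, 64, 60, 62]
print(continue_phrase([60, 62, 64, 62, 60, 67], 8, seed=1))
```

Because the model only reuses transitions it has heard, the output stays close to the user's material; a therapy-oriented system would add further controls, for instance over tempo, register, or how much novelty to introduce.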

Improvisation is an important tool for music therapists (Wigram, 2004). One type of music generation software focuses on interactive improvisation, such as The Continuator (Pachet, 2003). These systems can play together with an improvising user by learning from what has just been played. In the near future, there is an opportunity to customize music generation systems for therapy sessions. In this case, there are a number of requirements specific to music therapy. First, a one-on-one improvisation session requires

In addition to generating music with controllable affect, we could equally leverage existing musical pieces through playlist generation (music recommendation). For instance, the system of Hirve et al. (2016), which was trained on a large database of digital recordings for biomedical research, recommends music based on the emotion predicted by a face detection model. A similar system by Dureha (2014) creates both mood-uplifting and mood-stabilizing playlists. Music recommendation for therapeutic purposes can extend beyond mood mediation, however. Zhao et al. (2010) have taken the first steps towards a system that recommends music based on sleep quality as measured by EEG, and Brimmer (2019) has devised a system for automatic personalized playlist generation for dementia patients based on bio-sensor feedback. Ample opportunities exist for developing technology to support situations in which patients may benefit from listening to specific types of music or individually tailored playlists.
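The difference between mood-stabilizing and mood-uplifting playlists can be sketched in a valence-arousal space. The example below is hypothetical and does not reproduce the cited systems: it assumes each track is annotated with a (valence, arousal) pair in [-1, 1] and that the listener's current mood has already been estimated as such a pair (for example, by an emotion recognition model). A stabilizing playlist stays close to the current mood, while an uplifting one moves step by step from the current mood towards a target mood.

```python
import math

# Hypothetical catalogue: track id -> (valence, arousal), both in [-1, 1].
TRACKS = {
    "a": (-0.8, -0.4), "b": (-0.4, -0.2), "c": (0.0, 0.0),
    "d": (0.4, 0.2), "e": (0.8, 0.4),
}


def nearest(point, exclude=()):
    """Closest unused track to a point in valence-arousal space (assumes one remains)."""
    candidates = {t: va for t, va in TRACKS.items() if t not in exclude}
    return min(candidates, key=lambda t: math.dist(candidates[t], point))


def stabilizing_playlist(mood, n=3):
    """Tracks closest to the listener's current mood."""
    chosen = []
    for _ in range(n):
        chosen.append(nearest(mood, exclude=chosen))
    return chosen


def uplifting_playlist(mood, target=(0.8, 0.4), n=3):
    """Tracks along a straight path from the current mood to a target mood."""
    chosen = []
    for i in range(n):
        frac = i / (n - 1) if n > 1 else 1.0
        waypoint = tuple(m + frac * (t - m) for m, t in zip(mood, target))
        chosen.append(nearest(waypoint, exclude=chosen))
    return chosen


sad_mood = (-0.8, -0.4)
print(stabilizing_playlist(sad_mood))  # -> ['a', 'b', 'c']
print(uplifting_playlist(sad_mood))    # -> ['a', 'c', 'e']
```

Starting the uplifting sequence near the listener's current mood, rather than jumping straight to cheerful music, is a design assumption here; whether it is beneficial for a given person is an empirical question.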

2.4.4. At-Home Training in Between Music Therapy Sessions: Summary and Future Directions

Given the demonstrable benefit of continued practice in rehabilitation, there is a clear need for ways that allow patients to engage in these exercises between rehabilitation sessions. As described above, music technologies provide excellent opportunities to facilitate this: many can be operated alone, or at least without a music therapist present, yet still contain the therapeutic elements of an individual’s rehabilitation plan that work towards specific clinical goals. The previously mentioned factors that inform the choice of technology for a specific user and clinical goal still hold here: the practical aspects of use and adaptability to a specific user are of primary importance. Additionally, when a system or application is used as part of a therapeutic program designed for a specific patient, it has to be even easier to use, because it will be operated alone or with minimal assistance. This is a drawback of complicated methods, such as research-grade EEG systems, which require some expertise to administer. That said, some more widely accessible, commercially available systems, such as the Muse EEG headset, 13 are bridging this training/accessibility gap. Moreover, the application should not readily permit use other than as intended, because the user receives no direct feedback beyond the aspects the application measures. This might be remedied in the future by adding sensors that verify correct use and flag potentially maladaptive use, for instance by penalizing anything that would detract from attaining clinical goals (such as a wrong movement in a movement game or when manipulating an instrument). Finally, if such an application is made part of a therapeutic program, its effects should be supported by scientific evidence.

2.5. Technology for Health and Well-Being Outside the Context of Music Therapy

Many of the previously mentioned applications can also be used outside a specific therapeutic program, on the user’s own initiative. Often, these applications address subclinical complaints, such as difficulties regulating sleep or mood. Many commercial music services and apps claim to improve listeners’ emotional states or ability to focus using music (e.g., Brain.fm, 14 Relax Melodies, 15 Enophone 16), and technology is increasingly being developed for individuals to use at home. The following subsections describe technology that allows individuals to train or improve their wellness outside of traditional clinical contexts.

2.5.1. Music Technology Used by Health Professionals Other Than Music Therapists

Various medical technologies (medtech) are being developed for use with clinical populations; these require some training to administer but do not require a music therapist. For example, the previously mentioned BCI systems have been developed for emotion regulation in listeners (Ehrlich et al., 2019; Ramirez et al., 2015); such medtech requires someone with basic knowledge of setting up EEG caps, but no specialized knowledge of music or music therapy. Similarly, motion-capture systems for motor rehabilitation that incorporate music may be used with the help of physical or occupational therapists (see, for example, Agres & Herremans, 2017).

Other approaches use less specialized technology. One such program uses iPods to deliver personalized playlists of music from the youth of a person with dementia. This music listening program can be administered by nurses, carers, or activity coordinators; the music is played through an inexpensive mp3 player with headphones (Vinoo et al., 2017). This approach is attractive in part because of its accessibility; however, more robust research is needed to examine its outcomes, especially its potential risks and burdens, given the high level of dependency in this population. These are only a few examples of how modern medtech approaches or audio systems may allow some therapeutic aspects of music to reach patients who do not have access to a certified music therapist.

2.5.2. Robotics and HCI

Robots are another form of medical technology that is used outside of music therapy but can contain musical elements. By combining rapidly progressing technologies in robotics, artificial intelligence, conversational agents, and connectivity, social robots are entering society. In education, for example, such robots can take different roles: teacher (or assistant teacher), peer, or novice (Bagga et al., 2019; Belpaeme et al., 2018). In therapeutic settings, research focuses more on the roles of coach or companion, sometimes in long-term health care processes (Neerincx et al., 2019; Robinson et al., 2019). Research on the treatment of Autism Spectrum Disorder (ASD) in particular has shown promising results: robots can help therapists connect to people with ASD and support their learning of social skills (Pennisi et al., 2016). As active companions in performing or listening to music together, robots offer interesting potential to advance music-based autism therapy (e.g., see Hoffman et al., 2016; Taheri et al., 2016).

Increasingly, robots will incorporate knowledge of the social, cognitive, affective, and physical processes of human behavior, e.g., for harmonizing social and affective processes in educational child-robot sessions (Burger et al., 2017; Kaptein et al., 2016). A recent episodic memory model includes the role of music in the “ecphoric processing” (i.e., recording and retrieving) and “emotional appraisal” of situations (Dudzik et al., 2018). Based on such a model, a robot can engage in a social setting and play specific music that activates the memories of people with dementia in a desirable way. In this way, the robot can help caregivers provide a rich set of beneficial music-based activities for these people, through dyadic human–robot activities and robot-assisted group activities (De Kok et al., 2018; Psychoula, 2016). These robots learn over time to better adjust the music to the personal preferences and situation of the person involved. In general, the combination of social robots, music, and health care provides new opportunities for personalized, situated therapies. A robot may have, for example, sensors to monitor the patient’s state and progress, a knowledge base to guide the patient’s therapeutic actions with music towards his or her personal goals, communication capabilities to inform and consult the therapist, and methods to learn from the observed (interim) therapy outcomes. In this way, robots can augment therapists’ skills and, at the same time, reduce their workload. Current examples that demonstrate these ideas are robots that guide music-mediated activities to reminisce (e.g., people with dementia), to mitigate traumatic events (e.g., children with cancer), to rehabilitate (e.g., patients recovering from a fracture), or to socialize (e.g., persons with ASD).
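One simple way a robot could learn which music a person responds to is a multi-armed bandit. The sketch below is a hypothetical illustration, not the approach of the cited systems: it uses an epsilon-greedy strategy over a fixed set of songs and assumes each playback yields an engagement score between 0 and 1 (for instance, derived from the robot's sensors). Most of the time it plays the song with the best average score so far, and occasionally it explores another song.

```python
import random


class MusicPreferenceLearner:
    """Hypothetical epsilon-greedy bandit that learns song preferences from feedback."""

    def __init__(self, songs, epsilon=0.1, seed=None):
        self.songs = list(songs)
        self.epsilon = epsilon
        self.counts = {s: 0 for s in self.songs}
        self.values = {s: 0.0 for s in self.songs}  # running mean engagement per song
        self.rng = random.Random(seed)

    def choose(self):
        """Explore with probability epsilon, otherwise play the best-rated song so far."""
        if self.rng.random() < self.epsilon:
            return self.rng.choice(self.songs)
        return max(self.songs, key=lambda s: self.values[s])

    def update(self, song, engagement):
        """Incrementally update the mean engagement observed for a song."""
        self.counts[song] += 1
        self.values[song] += (engagement - self.values[song]) / self.counts[song]


# Simulated session: hidden (made-up) probabilities that the person engages with each song.
hidden = {"song_a": 0.2, "song_b": 0.8, "song_c": 0.5}
learner = MusicPreferenceLearner(hidden, epsilon=0.1, seed=42)
sim = random.Random(7)
for _ in range(500):
    song = learner.choose()
    learner.update(song, 1.0 if sim.random() < hidden[song] else 0.0)
print({s: round(v, 2) for s, v in learner.values.items()})
```

The exploration rate trades off playing familiar favorites against discovering new preferences; in a care setting, the reward signal and any exploration would need to be chosen together with caregivers and therapists.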

2.5.3. New Music Interfaces for Musical Expression and Creation