Abstract

Expert musicians use a number of expressive cues to communicate specific emotions in musical performance. In turn, listeners readily identify the intended emotions. Previous studies of cue utilization have studied the performances of expert or highly trained musicians, limiting the generalizability of the results. Here, we use a musical self-pacing paradigm to investigate expressive cue use by non-expert individuals with varying levels of formal music training. Participants controlled the onset and offset of each chord in a musical sequence by repeatedly pressing and lifting a single key on a MIDI piano, controlling

Introduction

Music is pervasive in our everyday lives. One particularly compelling aspect of music is that it can be used as a nonverbal medium for emotional communication. Caregivers use affective songs and song-like speech in interactions with infants (e.g. Fernald et al., 1989; Ilari, 2003; Trehub & Trainor, 1998; Young, 2008) and adults commonly report using music to regulate their own emotions (Lonsdale & North, 2011). Surveys indicate that affective value is a primary motivation for listening to music (Juslin & Laukka, 2004; Sloboda & O’Neill, 2001). Likewise, musicians attest that communicating emotions is a central goal in their performances (Lindström, Juslin, Bresin, & Williamon, 2003).

It is widely accepted that there is a distinction between emotions that are

In general, adults agree on the emotion communicated by musical excerpts (Bigand, Vieillard, Madurell, Marozeau, & Dacquet, 2005; Juslin & Laukka, 2003; Mohn, Argstatter, & Wilker, 2011; Vieillard et al., 2008). In childhood,

Cues for emotional expression are embedded to some extent in the score by the composer. For example, complex rhythms are often perceived to express

Researchers have considered how various cues relate to different dimensions of emotions. Russell’s (1980) circumplex model of emotions, which characterizes emotions on the dimensions of valence (negative to positive) and arousal (low to high), has been widely used in this regard. Performers seem to have particular control over cues that distinguish between high- and low-arousal emotions. For example, performances conveying high-arousal emotions, such as

Though these studies have been very informative, it is interesting to note that “encoders” and “decoders” are usually mismatched: encoders must be musically experienced in order to perform the music, but decoders may have had no experience with formally producing music at all. It is not clear that formal music training discernibly improves emotional decoding of professional performances (Bigand et al., 2005; Juslin, 1997), and these results are consistent with research demonstrating that day-to-day exposure to music confers a degree of sensitivity to many aspects of music, resulting in “musically experienced listeners” (for a review, see Bigand & Poulin-Charronnat, 2006). Nevertheless, there is reason to believe that the situation is different for musical production. While many societies have a

Technological advances have made it possible to examine musical production without using musical instruments. Several experiments have employed an apparatus that includes a series of sliders, each controlling a different musical feature. In the first such study, Bresin and Friberg (2011) allowed participants to systematically vary different musical features (including tempo, sound level, articulation, phrasing, register, timbre, and attack speed) to communicate

Two additional studies have reported using a similar apparatus with participants who were not musically trained. Saarikallio, Vuoskoski, and Luck (2014) examined adolescents’ emotional communication of

In the present study, we used a simple self-pacing apparatus to examine the use of expressive cues in musical production across non-expert undergraduate performers with different levels of formal music training. A similar apparatus has been used in previous self-pacing studies to examine expressive timing and musical phrase structure in nonmusicians and preschool children (Kragness & Trainor, 2016, 2018). In those studies, participants controlled the onset of each successive chord in a prescribed musical sequence. In the present study, this setup was adapted such that participants additionally controlled the offset and sound level of each chord. Thus, they could control the timing and loudness of each chord in the sequence. We predicted that nonmusician participants would use patterns of expressive cues similar to those of expert participants in previous studies, based on shared representations of musical expression from years of music listening, and that expressive cues differentiating emotions would be enhanced in participants in our sample who had relatively high levels of training.

Method

Participants

This research was approved by the university’s research ethics board. Twenty-four undergraduates (

Stimuli

We selected four excerpts from the chorales of J. S. Bach. Each excerpt contained three sub-phrases that were each eight chords in length, for a total of 24 chords, and began with an anacrusis (or “pick up” chord). Two of the excerpts (“Maj1” and “Maj2”) were originally composed in the major mode and two of the excerpts (“Min3” and “Min4”) were originally composed in the minor mode. Each excerpt was transposed to the key of F and small alterations were made to eliminate passing tones and ornamentations between each quarter-note-length chord. For each excerpt, a second version was created in the parallel major or minor mode (“Min1,” “Min2,” “Maj3,” and “Maj4”). Thus, there were eight excerpts in total.

Each participant “performed” the emotions (

Order number.

Apparatus

During the experiment, each chord was generated online in the default piano timbre in Max MSP (version 5). The interface used by participants was an M-AUDIO Oxygen-49 MIDI keyboard, and the sounds were presented through a pair of external speakers located to the left and right of the participant in the sound booth (WestSun Jackson Sound, model JSI P63 SN 0005). Participants were seated in a chair in front of a monitor at a distance of approximately 3.5 feet. In front of the participant was a desk on which the MIDI keyboard sat.

Using the MIDI keyboard, participants could control the onset and the offset of each chord in succession by pressing and releasing the key. Each chord was sustained until the key was released, which terminated the chord. Only the middle C key elicited a chord; the other keys did not respond if pressed. The middle C key was marked with an orange sticker to remind participants which key to use. Additionally, participants could control the sound level of each chord by pressing with more or less velocity (greater velocity produced louder chords).
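The control logic described above can be illustrated with a minimal sketch. It is not the Max MSP patch used in the study; the class name, the event log layout, and the use of MIDI note number 60 for middle C are assumptions made for illustration.

```python
from dataclasses import dataclass, field

MIDDLE_C = 60  # assumed MIDI note number for the marked key


@dataclass
class SelfPacedSequence:
    """Steps through a fixed chord sequence under control of a single key."""
    chords: list                 # chord labels (or pitch lists), in score order
    position: int = 0            # index of the next chord to sound
    sounding: bool = False       # True while a chord is being held
    log: list = field(default_factory=list)  # (event, chord_index, time_ms, velocity)

    def note_on(self, note, velocity, time_ms):
        # Ignore every key except middle C, and ignore presses once the excerpt is done.
        if note != MIDDLE_C or self.sounding or self.position >= len(self.chords):
            return None
        self.sounding = True
        self.log.append(("on", self.position, time_ms, velocity))
        return self.chords[self.position]  # chord to sound at this velocity

    def note_off(self, note, time_ms):
        # Releasing the key ends the current chord and advances the sequence.
        if note != MIDDLE_C or not self.sounding:
            return
        self.sounding = False
        self.log.append(("off", self.position, time_ms, 0))
        self.position += 1
```

Logging onset time, offset time, and velocity for every chord is sufficient to derive each of the expressive cues analyzed in the Results.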

Procedure

Training phase

After completing the questionnaires, participants were trained to use the MIDI keyboard apparatus. They were informed that only the key marked with a sticker (middle C) would elicit a note or chord, and instructed to use the index finger of their dominant hand to “perform” music. They were told to continue to press the key for each chord until pressing the key elicited no sound, which was an indication that the excerpt was complete. They were told that they did not control

Testing phase

Prior to the testing phase, we asked participants to jot down words or pictures to remind themselves of a time they felt each of the target emotions:

Next, there were four separate blocks, each containing a listening session, a mechanical performance, and four alternating practice and performance sessions (one practice and one performance session for each emotion). The experimenter informed the participant that they would be asked to perform each excerpt with the four different emotions, and that they should do their best to communicate each emotion, because a new set of participants would later be asked to guess their intended emotion. Then, the experimenter left the sound booth, and participants were guided through the rest of the experiment by instructions on the computer monitor.

In each block, participants first

After the testing phase was complete, participants were given a questionnaire. They were asked to indicate how easy they found it to play each of the emotions (1 =

Results

We investigated three expressive cues: tempo (time between consecutive onsets), key velocity (which was experienced as loudness), and articulation (for each interval between onsets, the proportion of that interval in which the chord was played). We additionally analyzed durational variability using the normalized pairwise variability index (nPVI; Grabe & Low, 2002). This measure of variability describes the degree of contrast between pairs of consecutive durations (e.g., Hannon, Lévêque, Nave, & Trehub, 2016; Huron & Ollen, 2003; Patel & Daniele, 2003; Quinto, Thompson, & Keating, 2013). Though rhythmic patterning is usually considered to be a compositional cue rather than an expressive cue, in the current study participants could use rhythmic patterns to communicate emotions if they desired.
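As an illustration of how these cues can be derived from logged key events, the following sketch computes tempo, articulation, and nPVI from lists of onset and offset times in milliseconds. The function names are hypothetical, and two choices are assumptions rather than details confirmed here: tempo is taken from the mean inter-onset interval, and nPVI is intended to be applied to inter-onset intervals.

```python
def inter_onset_intervals(onsets_ms):
    """Time between each onset and the next one."""
    return [b - a for a, b in zip(onsets_ms, onsets_ms[1:])]


def tempo_onsets_per_minute(onsets_ms):
    """Average number of onsets per minute, from the mean inter-onset interval."""
    iois = inter_onset_intervals(onsets_ms)
    return 60000.0 / (sum(iois) / len(iois))


def articulation(onsets_ms, offsets_ms):
    """Proportion of each inter-onset interval during which the chord sounded.

    The final chord has no following onset, so it yields no value.
    """
    return [(off - on) / (nxt - on)
            for on, off, nxt in zip(onsets_ms, offsets_ms, onsets_ms[1:])]


def npvi(durations):
    """Normalized pairwise variability index (Grabe & Low, 2002)."""
    pairs = list(zip(durations, durations[1:]))
    total = sum(abs((a - b) / ((a + b) / 2)) for a, b in pairs)
    return 100.0 * total / len(pairs)
```

A perfectly isochronous sequence gives an nPVI of 0, and larger values reflect greater contrast between neighboring durations.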

Because planned emotional performances were of particular interest, the “mechanical” trial and the practice trials were excluded in the present analyses. For each cue, a separate 2 × 2 within-subjects analysis of variance (ANOVA) was performed with factors arousal (high and low) and valence (positive and negative). All participants experienced all four performance blocks, except for one participant who completed only three performance blocks due to technical malfunction. Archived data are available at the link provided in the Supplemental Material.
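For readers who wish to reproduce this kind of analysis from the archived data, the sketch below shows one way to obtain the three F ratios of a 2 × 2 fully within-subjects design. It relies on the fact that each single-degree-of-freedom effect equals the squared one-sample t statistic of a per-participant contrast. The function names and the input layout (one dictionary of cell means per participant) are assumptions, and this is not presented as the software used by the authors.

```python
from math import sqrt
from statistics import mean, stdev


def contrast_f(scores):
    """F(1, n - 1) for a within-subject contrast, computed as the squared t."""
    n = len(scores)
    t = mean(scores) / (stdev(scores) / sqrt(n))
    return t * t, (1, n - 1)


def anova_2x2_within(cells):
    """cells: one dict per participant with mean cue values for
    'joy' (high arousal, positive), 'anger' (high, negative),
    'peacefulness' (low, positive), and 'sadness' (low, negative)."""
    arousal = [(p["joy"] + p["anger"]) / 2 - (p["peacefulness"] + p["sadness"]) / 2
               for p in cells]
    valence = [(p["joy"] + p["peacefulness"]) / 2 - (p["anger"] + p["sadness"]) / 2
               for p in cells]
    interaction = [(p["joy"] - p["anger"]) - (p["peacefulness"] - p["sadness"])
                   for p in cells]
    return {
        "arousal": contrast_f(arousal),
        "valence": contrast_f(valence),
        "interaction": contrast_f(interaction),
    }
```

Each entry of the returned dictionary holds the F ratio and its degrees of freedom; p values would require an F distribution from a statistics library.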

Tempo

Tempo was defined as the average number of onsets per minute. The ANOVA indicated a significant main effect of arousal (

Use of expressive cues for each emotion. (a) Average tempo used to convey each emotion. Higher values indicate more onsets per minute and faster tempi. (b) Average nPVI used to convey each emotion. Higher values indicate more pairwise durational contrast. (c) Average velocity used to convey each emotion. Higher values indicate greater velocity (and higher sound level). (d) Average articulation used to convey each emotion. Higher values indicate more connected chords.

Rhythmic variation

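Normalized pairwise variability was calculated using the formula developed by Grabe and Low (2002) for speech analysis and subsequently used in the context of music by Patel and Daniele (2003):

$$\mathrm{nPVI} = \frac{100}{m-1} \sum_{k=1}^{m-1} \left| \frac{d_k - d_{k+1}}{(d_k + d_{k+1})/2} \right|$$

where $m$ is the number of durations in the sequence and $d_k$ is the duration of the $k$th element.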

Sound level (key velocity)

Sound level was measured by the velocity with which the chord was pressed (recorded as MIDI velocity) on a scale of 0 (minimum velocity) to 127 (maximum velocity). The ANOVA revealed main effects of both valence (

Articulation

Articulation was defined as the proportion of the inter-onset interval in which the chord was played (e.g., if a chord was held for 300 ms and the next onset followed 300 ms after its release, the articulation value would be .5; if the next onset followed 900 ms after its release, the articulation value would be .25). The ANOVA revealed main effects of both valence (

Correlations between expressive cues

To examine relationships between expressive cues, Spearman’s rho was calculated for each pair of expressive cues (Table 2). Because six correlations were examined, the Bonferroni-corrected significance cut-off of

Correlation coefficients between expressive cues.
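The correlational analysis can be sketched as follows. Spearman’s rho is computed as the Pearson correlation of average ranks, and the Bonferroni cut-off is the family-wise alpha divided by the number of tests. The function names are hypothetical, and the family-wise alpha of .05 used in the note below is a conventional assumption rather than a value taken from the text.

```python
from math import sqrt


def average_ranks(values):
    """1-based ranks, with tied values sharing the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        shared = (i + j) / 2 + 1  # mean of ranks i+1 .. j+1
        for k in range(i, j + 1):
            ranks[order[k]] = shared
        i = j + 1
    return ranks


def pearson_r(x, y):
    mx, my = sum(x) / len(x), sum(y) / len(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)


def spearman_rho(x, y):
    """Spearman's rho as the Pearson correlation of the rank-transformed data."""
    return pearson_r(average_ranks(x), average_ranks(y))


def bonferroni_alpha(alpha, n_tests):
    """Per-test significance cut-off after Bonferroni correction."""
    return alpha / n_tests
```

With four cues there are six pairwise correlations, so `bonferroni_alpha(0.05, 6)` gives the per-test threshold under the assumed family-wise alpha.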

The role of musical training

Although all participants were undergraduates and non-experts musically, they represented a wide range of musical experiences, as revealed by their responses to the self-report inventory of the Gold-MSI. Specifically, eight participants reported no formal music training at all (0 years), while others reported upwards of 10 years of training. In order to examine whether those with formal music training used cues differently from those with no training, we separated participants into three equal-sized groups based on their scores on the Formal Musical Training subscale of the Gold-MSI (see Table 3), which combines information about years of formal music lessons, music practice, music theory training, and more. Participants in the “no training” group reported 0 years of formal lessons (

Participant demographics (Gold-MSI self-report questionnaire, percentile scores).

Next, all of the previous ANOVAs were rerun with the additional between-subjects variable of

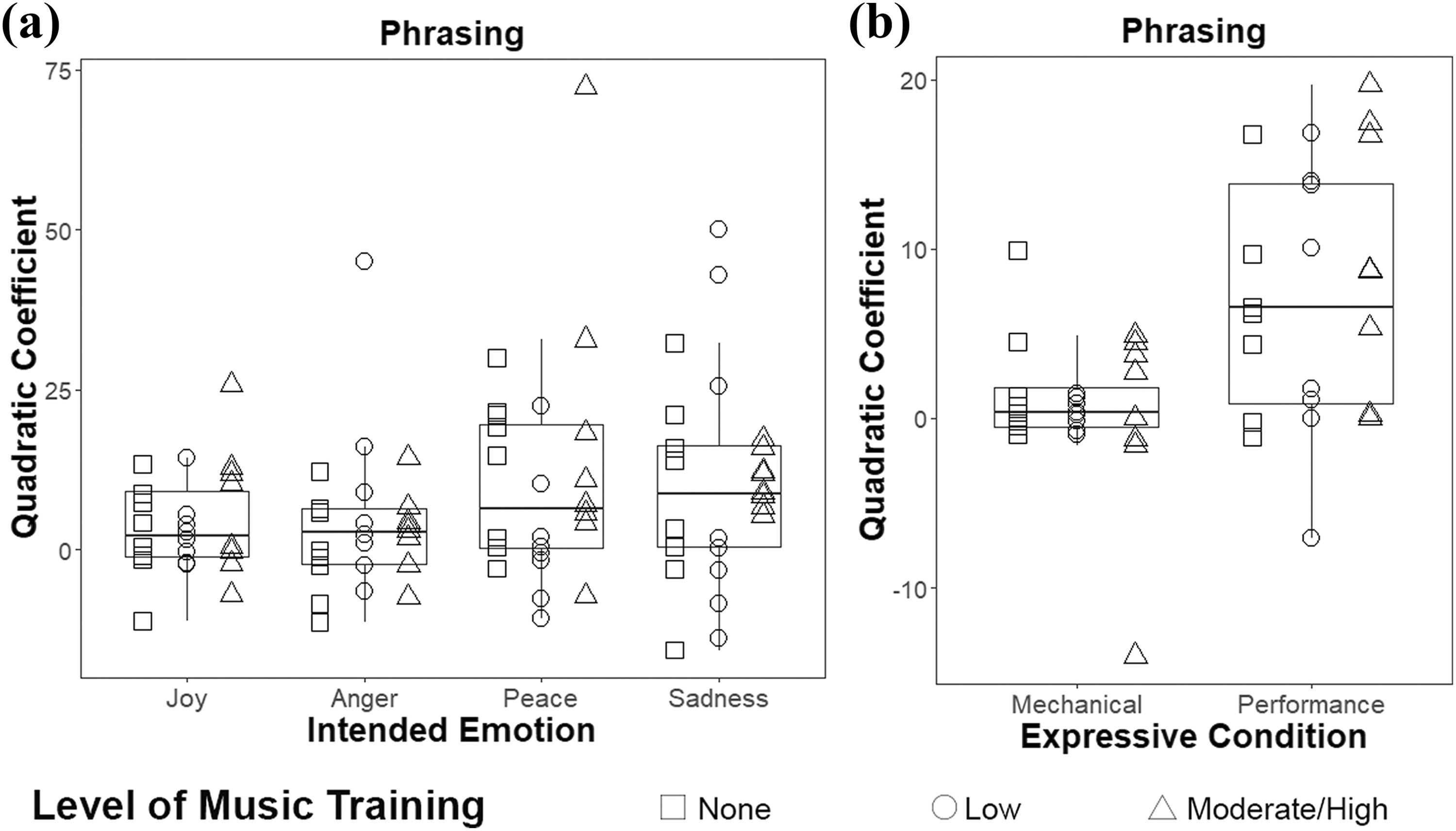

Phrasing

Although no differences were found between levels of music training for any of the expressive cues above, it was possible that differences would emerge in analyses of longer-term sequential aspects of the performances, such as occur at the phrase level. This idea was motivated by previous findings that musicians are more sensitive to longer-term musical structures than nonmusicians (Chiappe & Schmuckler, 1997; Drake, Penel, & Bigand, 2000). To examine the degree of expressive speeding and slowing in phrases, a quadratic equation was fit to the inter-onset intervals of each eight-chord phrase. Because the end of each trial was initiated by the offset of the final chord, no inter-onset interval was available for the final chord. Thus, the final phrase (chords 17–24) of each excerpt was discarded for this analysis. The quadratic coefficient (curvature) was compared across emotions. A larger quadratic coefficient indicates greater curvature (and thus more exaggerated demarcation of phrasing by time variation). An ANOVA with within-subject factors

Participants’ use of phrasing, operationalized by the curvature of a quadratic fit to participants’ inter-onset intervals across each eight-chord phrase. Higher values indicate greater curvature and more exaggerated phrasing. (a) Average phrasing used to convey each emotion. (b) Average phrasing across all performance trials (collapsed across emotion) compared to phrasing in mechanical trials.
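The phrasing measure can be illustrated with a small least-squares sketch that fits y = ax² + bx + c to the inter-onset intervals of one phrase and returns the quadratic coefficient a. Using chord position (0, 1, 2, ...) as the predictor and solving the normal equations directly are assumptions made for illustration; the fitting routine actually used is not specified here.

```python
def quadratic_coefficient(iois):
    """Least-squares fit of a*x^2 + b*x + c over positions x = 0..n-1; returns a."""
    xs = range(len(iois))
    # Power sums of x and cross-products with y for the normal equations.
    s = [sum(x ** k for x in xs) for k in range(5)]
    t = [sum((x ** k) * y for x, y in zip(xs, iois)) for k in range(3)]
    # Augmented 3x3 system for the unknowns (a, b, c).
    rows = [
        [s[4], s[3], s[2], t[2]],
        [s[3], s[2], s[1], t[1]],
        [s[2], s[1], s[0], t[0]],
    ]
    # Gaussian elimination with partial pivoting.
    for i in range(3):
        pivot = max(range(i, 3), key=lambda r: abs(rows[r][i]))
        rows[i], rows[pivot] = rows[pivot], rows[i]
        for r in range(i + 1, 3):
            factor = rows[r][i] / rows[i][i]
            rows[r] = [vr - factor * vi for vr, vi in zip(rows[r], rows[i])]
    # Back substitution.
    coef = [0.0, 0.0, 0.0]
    for i in (2, 1, 0):
        known = sum(rows[i][j] * coef[j] for j in range(i + 1, 3))
        coef[i] = (rows[i][3] - known) / rows[i][i]
    return coef[0]
```

A positive coefficient corresponds to intervals that lengthen toward both ends of the phrase, and a perfectly even performance yields a coefficient of zero.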

To examine whether participants used timing variation at the phrase level as part of their expressive performances at all, the quadratic coefficients across all performance conditions were collapsed and compared to the quadratic coefficients in mechanical conditions. An ANOVA with within-subject factor

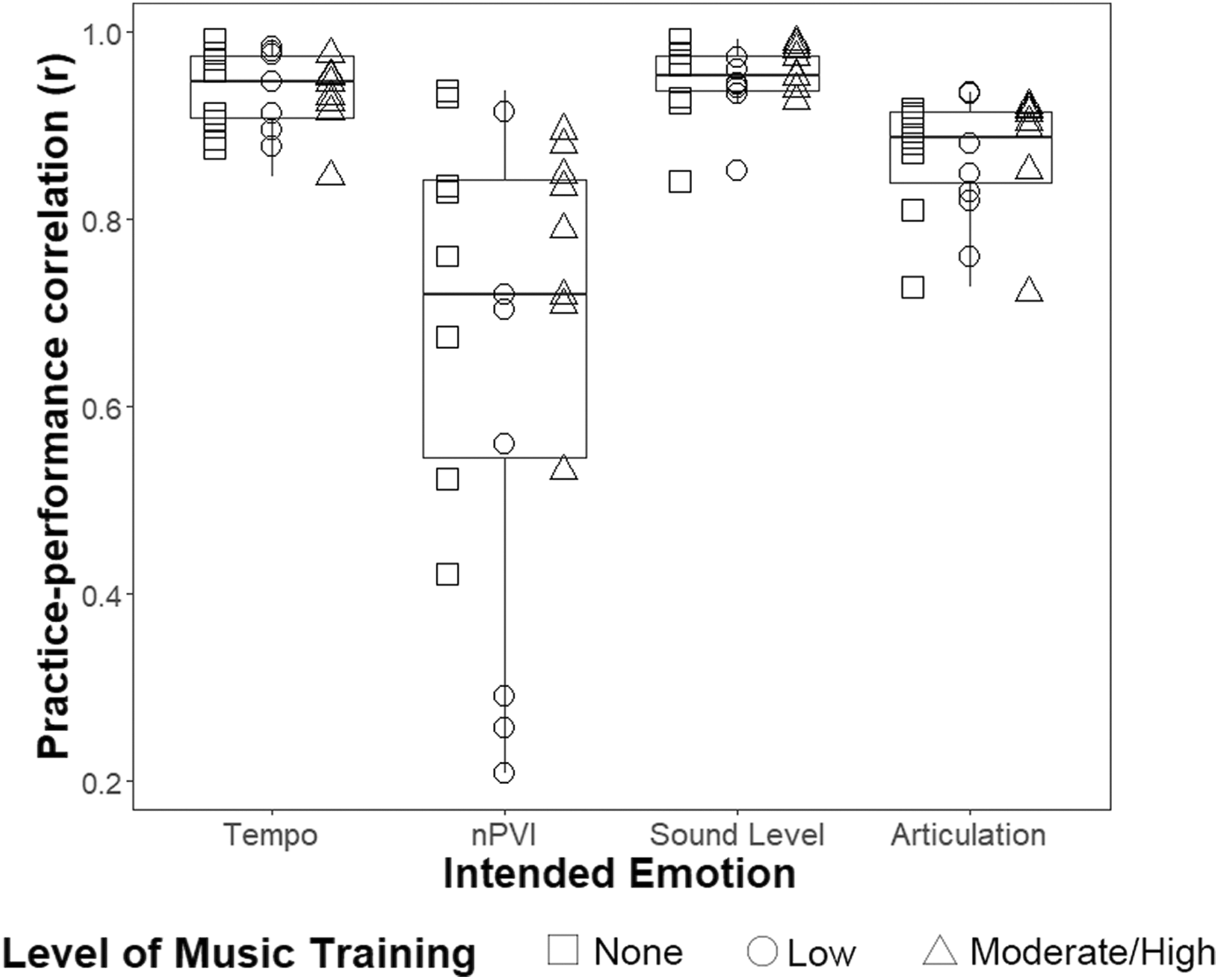

Associations between practice and performance

Because we were primarily interested in examining participants’ planned output, only performances were included in the previous analyses. As a post-hoc exploratory analysis, we examined participants’ degree of consistency for each expressive cue between practice trial and subsequent performance trial pairs. Pearson’s

In general, participants were highly consistent. One participant had correlations that were more than two standard deviations from the mean for each expressive cue and was therefore excluded from the analyses (see archived data for details).

The correlation data for each expressive cue (tempo, nPVI, sound level, and articulation) were submitted to an ANOVA with

Correlations between practice and the subsequent performance trials, separated by expressive cue. Higher values indicate greater consistency from practice to performance.

Ratings

After participants completed all four performance blocks of the experiment, they were asked to rate the difficulty of conveying each emotion (Figure 4(a)). Because a Shapiro–Wilk test indicated that the data were non-normally distributed (

Participants’ ratings after the experiment. Error bars represent within-subject standard error of the mean (Cousineau, 2005). (a) Participants’ average rating for difficulty to convey each emotion (1 =

Participants were also asked to rate the extent to which they felt the intended emotion while playing it (Figure 4(b)). Because the data were non-normally distributed (

Discussion

Although many studies have investigated how performers use expressive cues to communicate emotions in music, few previous experiments have examined nonmusicians’ expressive productions. This study demonstrated that those with little to no musical training use timing and loudness cues to differentiate musical emotions in a production setting. Moreover, musically untrained participants used the available cues in ways that were nearly identical to those used by musically trained individuals in our sample. Consistent with previous studies using highly trained musicians, differences in expression were most strongly associated with differences in the arousal of the intended emotion, such that performances of joy and anger were played with a faster tempo, more loudly, and with more disconnected chords than peacefulness and sadness (e.g., Gabrielsson & Juslin, 1996; Juslin, 1997). Valence was also represented, though to a lesser extent: anger was played more loudly than joy, and within each arousal level (anger vs. joy; sadness vs. peacefulness) negatively valenced emotions were played with more connected articulation than positively valenced emotions.

Finally, durational contrast as measured by the nPVI was used to portray sadness more than other emotions. This is broadly consistent with Quinto, Thompson, and Keating’s (2013) previous finding that high-level musicians used greater nPVI values in brief compositions intended to portray sadness than other emotions, though this contrast was not significant in their analysis. The finding is somewhat inconsistent, however, with previous reports that rhythms with durational contrast are often perceived to convey positive emotions (Keller & Schubert, 2011; Thompson & Robitaille, 1992). One possible explanation for this apparent inconsistency is that the chord sequences were drawn from chorales by J. S. Bach in which the chords are primarily equally spaced. Also, the task itself tended to encourage an isochronous interpretation, so rhythmic opportunities for the production of different categories of note length (e.g., eighth notes and quarter notes, the latter being twice as long as the former) were constrained. If this is the case, larger nPVI values may have been observed in

Although this is among the first studies to examine non-expert participants, multiple levels of music training were represented. Thus, as a secondary analysis, we explored whether participants with formal training used cues in a different way from musically untrained participants. The profile of expressive cues used by those with

Interestingly, a previous observational study of music lessons found that instructors spend surprisingly little time on expressive instruction, tending to focus more on technique (Karlsson & Juslin, 2008), despite the widespread belief that expressivity is central to music performance (Juslin & Laukka, 2004; Lindström et al., 2003). It has been proposed that this is because teachers often conceptualize expressivity as instinctual and difficult to verbally communicate (Hoffren, 1964; Lindström et al., 2003). This lack of expressive instruction is especially interesting considering that feedback from music teachers

The present results are consistent with previous perceptual studies that have found that nonmusicians are as adept as musicians at identifying emotions in music (Bigand et al., 2005; Juslin, 1997). In the present study, participants mainly controlled

We asked participants to report any strategies that they implemented while completing the task using a free response format (see Supplemental Material). Though no statistical analyses were conducted on these responses, they offer several interesting insights. First, it is clear that regardless of level of training, participants were often explicitly aware of the cues that they intended to use. For example, S22 (moderate/high training) reported, “I sustained the notes more to match the peaceful and sad emotion. I cut the notes short for angry,” and S09 (no training) wrote, “sad = slower/louder, angry = faster/louder, peaceful = slow/quiet, happy = loud/fast.” A number of strategies were reported, including “imagining movie scenes (as well as their soundtracks) to fit with each emotion,” and “thinking about what music provokes these emotions.” Together, these responses suggest that participants have some capacity to introspect about expressive cues and emotional musical production. Exploring the relationship between participants’ introspections and their expressive productions would be an interesting future direction.

Many participants reported that one primary strategy was to reflect on memories that incorporated the target emotions, as they were instructed. This is consistent with past studies with musicians, many of whom reported that they believed that feeling the intended emotion is important for expressive performance (Lindström et al., 2003) and that they recalled emotional memories as one strategy for inducing the intended mood (Persson, 2001). Interestingly, although differences in “difficulty” and “feeling” ratings were not significant, participants indicated greater mood induction for

The present results should not be taken as evidence that music training has no effect on expressivity. It is important to note that the target emotions each belonged to different quadrants of the two-factor model (Russell, 1980) and had been previously observed to elicit relatively high agreement across listeners in emotion recognition tasks (e.g., Gabrielsson & Juslin, 2003; Juslin & Laukka, 2003; Vieillard et al., 2008). Mixed emotions and aesthetic emotions (such as longing, love, awe, and humor), which generally have lower levels of agreement, are likely to be more difficult to express. Future studies could investigate whether music training alters expressive cues in the context of complex emotions. It is possible that there were features that differed across emotions or participants that were not captured by the measures included in the present analyses. Archived data are openly available for further exploratory analyses at a link available in the Supplemental Material.

Additionally, though our “no training” participants had no formal lessons at all, a post-hoc one-way ANOVA did not offer any evidence for differences between the three formal training groups in the Active Musical Engagement subscale of the Gold-MSI. Given that expressivity is not typically a focus in music lessons, it is possible that active engagement with music is more important for expressive production than formal training. The participants also did not differ significantly on their self-reported emotional engagement in music. However, one previous study has reported that people who score higher on a measure of emotional intelligence are better at recognizing emotions in music (Resnicow, Salovey, & Repp, 2004). Recent work has shifted toward more sophisticated and multidimensional conceptions of musical experience using subscales and composite measures of overall engagement (e.g., Chin & Rickard, 2012; Müllensiefen et al., 2014). Informal musicianship and emotional engagement with music may be important contributors to expressive production, although they were not investigated in the present study.

Overall, the present study demonstrated that participants with a variety of musical backgrounds can use the self-pacing apparatus to express emotions in music. Future work could use similar paradigms to investigate questions of expressive timing in a variety of other populations. The self-pacing method provides a complementary technique to the slider apparatus described in previous studies (Bresin & Friberg, 2011; Saarikallio et al., 2014; Sievers et al., 2013). Though the slider apparatus is relatively simple to use, Sievers and colleagues (2013) reported that participants in rural Cambodia found the continuous sliders to be uncomfortable to use, leading to decision paralysis. The self-pacing apparatus emulates many of the properties of instruments used world-wide, allowing intuitive control of onsets, offsets, and loudness. Using our self-pacing apparatus, it would be possible to examine amateur and expert performers’ expressive tendencies while performing either music congruent with their own musical system or music of a foreign musical system, which could illuminate questions about the universality of expressive cues for basic emotions. Similarly, whether the slider technique would be conducive to testing young children’s musical expression is an open question, but the self-pacing apparatus can be used with children as young as three years old. Previous studies examining children’s expressive productions have been limited to analyzing singing (Adachi & Trehub, 2000; Adachi, Trehub, & Abe, 2004), and we are presently using this apparatus to investigate children’s expressive musical production.

Supplemental material

Supplemental Material, AdultExpressiveCue_SuppMat_26Feb2019 for Nonmusicians Express Emotions in Musical Productions Using Conventional Cues by Haley E. Kragness, and Laurel J. Trainor in Music & Science

Footnotes

Author contribution

HEK and LJT jointly conceived and designed the study. HEK was responsible for participant recruitment, data analysis, and drafting the manuscript. Both authors revised the manuscript and approved the final version.

Acknowledgments

The authors thank Dr. Matthew Woolhouse for his guidance in stimulus creation and Dave Thompson for technical assistance. We additionally thank Farriyan Hossain and Mrinalini Sharma for their assistance running participants.

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was supported by grants to LJT from the Natural Sciences and Engineering Research Council of Canada (NSERC) and the Canadian Institutes of Health Research (CIHR).

Supplemental material

Supplemental material for this article is available online.

Notes

Peer review

Jonna Vuoskoski, University of Oslo, Department of Musicology & Department of Psychology.

Jean-Julien Aucouturier, IRCAM/CNRS/Sorbonne-Université, Science & Technology of Music and Sound Lab.

References
