Abstract

Expressive music performance and cardiac arrhythmia can be viewed as deformations of, or deviations from, an underlying pulse stream. I propose that the results of these pulse displacements can be treated as actual rhythms and represented accurately via a literal application of common music notation, which encodes proportional relations among duration categories, and figural and metric groupings. I apply the theory to recorded music containing extreme timing deviations and to electrocardiographic (ECG) recordings of cardiac arrhythmias. The rhythm transcriptions are based on rigorous computer-assisted quantitative measurements of onset timings and durations. The root-mean-square error ranges for the rhythm transcriptions were (19.1, 87.4) ms for the music samples and (24.8, 53.0) ms for the arrhythmia examples. For the performed music, the representation makes concrete the gap between the score and performance. For the arrhythmia ECGs, the transcriptions show rhythmic patterns evolving through time, progressions which are obscured by predominant individual beat morphology- and frequency-based representations. To make tangible the similarities between cardiac and music rhythms, I match the heart rhythms to music with similar rhythms to form assemblage pieces. The use of music notation leads to representations that enable formal comparisons and automated as well as human-readable analysis of the time structures of performed music and of arrhythmia ECG sequences beyond what is currently possible.

Keywords

Introduction

Expressive music performance and cardiac arrhythmia share many common traits. An important one is that each can be viewed as the result of deviations from, or deformations of, an underlying pulse. Their origins suggest that the rhythms of such data streams can be encoded effectively using common music notation. I propose to treat these time displacements as bona fide rhythms and to represent them via a literal application of music notation. Music notation encodes abstract concepts of proportional relations among duration categories, and higher-level organizing constructs such as figural and metric groupings, and is typically used for abstract or conceptual, rather than literal, representations of time. The literal application (and consequent reading) of common music notation is counter to its common use by composers or performers. I co-opt the existing notation system to create symbolic representations that can enable formal comparisons and computer and human analysis of time sequences.

This work was inspired by a transcription of sight-reading wherein the rhythm notation captured both musical and cognitive disfluencies. The transcription, created by ear with the aid of the original score and audio and Musical Instrument Digital Interface (MIDI) recordings, demonstrated the possibility of notating serendipitous rhythms. In this article, I will use computer tools to rigorously mark onset times and obtain quantitative measurements of durations. The tempi and quantizations are chosen to maximize transcription precision, and the accuracies of the rhythm transcriptions are evaluated quantitatively against the original timings. Figural and metric groupings and any metric modulations are chosen manually, although this too can be automated, as will be discussed.

The technique is first illustrated using contrasting music examples that contain extreme timing deviations introduced in performance. The results highlight the gap between the score and performance, made all the more obvious by the use of the same notation to communicate the difference between the rhythms. Next, I apply the same transcription process to electrocardiographic (ECG) recordings of cardiac arrhythmias. While such rhythm transcription can be applied to any arrhythmia, I demonstrate the method on three excerpts of ECG recordings of atrial fibrillation. The notation shows clearly the rhythmic groupings and patterns such as repetitions and transformations through time. These are patterns normally obscured in the predominant ECG analysis approaches, which mainly consider individual beat morphology or features aggregated over windows of time.

Similarities between cardiac and music rhythms are further made tangible by matching the heart rhythms to music with similar rhythms to form musical assemblages. I apply the notation and retrieval-and-assemblage process to the atrial fibrillation ECG recordings, leveraging the innate rhythmic similarities to music with mixed meters, a siciliane, and a tango to generate the mirror pieces.

The precise and symbolic representation of performers’ idiosyncratic timings and of atrial fibrillation’s capricious rhythms points to a host of new computational analysis approaches for characterizing and comparing such time sequences. Once a formal representation for time structure exists in symbolic form, any number of encoding schemes can be employed to transform the symbols to machine-readable formats. These encoding schemes facilitate fast searches for specific rhythms and repeated patterns, allowing for data summarization and comparisons between sequences. Further analyses of the transcribed rhythms could reveal hierarchical structure in the time series data. Quantitative techniques can be devised to measure the distance, for example, between transcriptions of different performances of the same work. Finally, the symbolic representation lends itself to large-scale pattern search and categorization, for inferring performance style, characterizing arrhythmia subtypes, or predicting diagnostic outcomes.

The remainder of the article is organized as follows: first I review some related work on transcription, music performance, and mappings of cardiac information to music; next, I briefly review the sight-reading transcription that inspired this work; then, I present the transcriptions of the three performed music cases, followed by the three arrhythmia ECG transcriptions, and conclusions and discussions. An appendix documents the evaluations of the various transcriptions.

Transcription

The practice of transcription has an illustrious history. Olivier Messiaen’s (1956) use of birdsong in his compositions, such as Oiseaux exotiques, Vingt Regards, and others, is well known. The tradition of transcribing birdsong pre-dates Messiaen by many centuries. Hold (1970) gives a detailed account of composers’ and naturalists’ attempts at music representations of birdsong from 1240 onwards; these include staff, orthochronic, and graph notation, and the sound spectrograph.

Transcription has been especially useful for the study and reproduction of extemporaneous performances. Early examples can be found in Vaclav Pichl’s transcriptions of Luigi Marchesi’s (1792) elaborate note embellishments in four performances of the aria “Cara negl’occhi tuoi” and the rondo “Mi dà consiglio” in Nicola Antonio Zingarelli’s opera Pirro re d’Epiro, as described in Berger (2016). The Charlie Parker Omnibook (1978), a collection of transcriptions of the jazz saxophonist’s compositions and improvised solos, remains a staple in jazz studies. Historic performances like Keith Jarrett’s Köln Concert (1975) have been painstakingly notated for re-performance. Other transcriptions include those of Coltrane (1999), Duke Ellington (1971), Bill Evans (1967), and Ferdinand “Jelly Roll” Morton (1986), as reviewed in Tucker (1982).

Ethnomusicologists and composers transcribe field recordings of folk songs for analysis and as seed material for new compositions, respectively. Béla Bartók (1942) carefully converted to music notation the melodies of recorded songs in the Milman Parry Collection for re-use in compositions; see the discussion in Frigyesi (1985).

More recently, the push toward an empirical study of vernacular music has led to the transcription of traditional Chinese clapper music and rap music for systematic analysis; see Sborgi Lawson (2012) and Ohriner (2016), respectively.

This work was motivated in part by Practicing Haydn (Grønli, Child, & Chew, 2013), in which a sight-reading of a Haydn sonata movement is transformed into a performable score via transcription, complete with all the repetitions, pauses, starts, and stops. The transcription of sight-reading raises the following question: If the blundering mishaps and accidental discontinuities of a first encounter with a score can be recorded using music notation, what else might be amenable to such treatment? Here, I show that expressive performance and arrhythmia sequences can also be subject to such transcription processes. The goal is not to produce a performable score, although that is a desirable side effect; rather, the transcription uses a computer-assisted process that aims to minimize discrepancies between original and transcribed sequences.

Related to transcription of expressive performance, there exists a long tradition of scholarly work on the representation of intonation, dynamics, and pacing in expressive speech, dating back to Steele (1775). Recent forays into the transcription of speech melody and timing have turned to the direct use of music notation in a literal fashion. Leveraging commonalities between expressive speech and music, Simões and Meireles (2016) and Meireles, Simões, Ribeiro, and de Medeiros (2017) explored the use of music notation to represent the melody and rhythms of spoken language. In this work, the transcriptions are treated as literal expressions of the spoken pitches and durations. They are uniformly presented in 4/4 time, without regard to meter, although the authors propose that they will obtain natural metrical groupings from the stresses in speech in future work.

This article applies music transcription to unconventional contexts of expressive performance and cardiac arrhythmia, with the goal of recording these temporal processes and experiences, which are not normally preserved in writing. Special attention will be given to precise representation of timing and durations, and to the figural and metrical groupings that may be inferred from the patterns.

Representing performed music

The first application addresses the representation of performed music. The increased emphasis on music as performance rather than music as writing or notation, combined with the growth of computer tools for analysis of performed music, brings to a head issues of representing performed music. Anyone who has tried to generate a sound file from a digital score soon discovers that a performance rendered from the digital score is vastly different from that realized in practice. Thus, the information encoded in the score is insufficient for creating or re-creating a convincing rendition of the music. This is because, as Frigyesi (1993, pp. 60–62) points out, notation was not conceived to be transcription; it represents only an abstraction of the temporal experience, and not the actual rhythms of a performance. It is worth noting that the view of transcription as literal and notation as abstract is not universal. For example, Busoni considers the transfer of a piece from one instrument to another, the transfer of music concept to notation (composition), and the transfer from notation to performance to be different forms of transcription, see Knyt (2010, p. 111). The transcriptions in this article will use notation literally to encode time and other structures.

Music as performed differs from the information denoted on the page for many reasons. In performed speech, the measure of syllables and words in a text falls far short of the timings of the delivery. In music playing that aspires to the rhetorical style of spoken language à la Adolph Kullak, see Cook (2013, p. 74), performed rhythms privilege the cadences and pacing of speech over the music script. Owing to the relative nature of loudness and other constraints, notated dynamics are also frequently not what they seem: Kosta, Bandtlow, and Chew (2014) showed that notes marked pianissimo can sound objectively louder than notes marked fortissimo, depending on context. Furthermore, music performance practice often requires that performers deviate from the written score in prescribed ways. For example, in the French tradition of notes inégales, notes of equal duration are deliberately lengthened or shortened in performance, see Houle (2000, p. 86). In many folk traditions, notated pitches may be lavishly ornamented in practice, as shown in a study of Yang, Chew, and Rajab (2013), which compares erhu and violin performances of a Chinese folk piece.

However, what if actual performed rhythms were transcribed literally and precisely using conventional notation so as to make the nuances of performance concrete through writing? The encoding would still be incomplete as the symbolic representation would not capture fine details, such as the exact shapes of note articulations, transitions, and within-note embellishments. Some degree of approximation of the precise timings will be inevitable to ensure the readability of the transcription; even if the transcription could provide notation to millisecond precision, there are limits to what the human ear can distinguish as two separate time instants, see Bartlette, Headlam, Bocko, and Velikic (2006), Chafe, Caceres, and Gurevich (2010), and Chew et al. (2004). Nonetheless, a faithful transcription would give a much more accurate (literal) representation of the temporal experience, and would allow for direct comparison with the original score as a measure of the distance between the abstract representation and actual experience.

Notation of and for performance has taken many forms. Philip (1992) has explored the notation of nuances in expressive timing in less quantitative ways. Moving away from conventional music notation, Bamberger’s (2000) Impromptu software uses a number of representations, such as pitch contours, rhythm bars, and piano roll notation to allow users to manipulate properties of tune blocks. Many other graphical notations exist, including those of Farbood (2004) and Hope and Vickery (2015). As an intermediary between conventional notation and digital sound, OpenMusic (Bresson & Assayag, 2011), a programming environment designed for composition and analysis, allows notes to be positioned on staff lines in continuous locations indicating the times at which they sound, with impact on the readability of the score. A goal here will be to explore the limits of using conventional music notation to represent continuous time, bearing in mind that not all locations on the continuous time axis are equally likely, given the pulse-based origin of the input.

The first set of examples takes on this challenge to transcribe the actual timings of recorded expressive performances. In so doing, it provides, in a sense, a written record of the performers’ creative work. The three cases span music from a variety of Western music traditions: the Vienna Philharmonic Orchestra’s performances of Johann Strauss II’s The Blue Danube, a traditional New Year’s concert encore piece; Maria Callas’ rendition of Giacomo Puccini’s operatic aria “O Mio Babbino Caro”; and Marilyn Monroe’s sultry rendition of “Happy Birthday” on the occasion of John F. Kennedy’s 45th birthday.

The body of scientific research on performance practice has expanded rapidly, aided by recent software tools, such as Sonic Visualiser by Cannam, Landone, and Sandler (2010). It is now possible for any motivated person with modest computer literacy to be able to extract beat and loudness information from a recorded performance. Because of the visual and compact nature of information portrayed on graphs, the scientific study of music performance and music expression predominantly uses graphs of timing, tempo, or loudness data extracted from audio recordings—see, for example, Todd (1992), Cheng and Chew (2008), and Chew (2016). There has scarcely been any attempt to notate these captured rhythms, owing partly to the gulf between event-based (notation) and signal-based (audio) representations, and partly to the difficulty of representing free rhythms, which will be discussed in upcoming paragraphs.

An exception can be found in the work of Beaudoin and Senn, described in Beaudoin and Kania (2012), in which the exact timings and intensity levels of Martha Argerich’s recording of Chopin’s “Prelude in E minor,” Op. 28/4, are transcribed in standard notation as the framework for Études d’un Prélude, a series of pieces based on transformations of Chopin’s original material. Another is the work of Grønli, Child, and Chew (2013), in which Chew’s sight-reading of the finale movement of Haydn’s Piano Sonata in E♭, Hob XVI:45 is meticulously transcribed by Child for re-performance. The resulting composition, Practicing Haydn, was created for and premiered at Grønli’s solo art show at the grand opening of the Kunsthall Stavanger, and at other venues.

Musica humana

The second application lies in the domain of cardiac arrhythmia. The belief that music is inherent in the beating of the pulse was widely held in the Middle Ages. This was a specific instance of the more general idea that music is inherent in the rhythms of the human body—Boethius’ Musica humana, which is complementary to Musica instrumentalis (music of sounding instruments) and Musica universalis (music of the spheres), see Chamberlain (1970). Siraisi (1975) provides a rich survey of academic physicians’ detailed writings on the nature of the music of pulse in the 14th and 15th centuries. Since then, arts and medicine have diverged and developed along separate paths. Today, with the parallel development of annotation and visualization tools like WFDB by Silva and Moody (2014) and LightWAVE by Moody (2013) for ECG data, there is ample evidence that the human pulse would be amenable to modern musical treatment and analysis, as the fields are poised to collide again.

As a step toward facilitating this reconnection, the second set of examples applies transcription to represent, using conventional music notation, the rhythms of arrhythmia. Music representation of cardiac information is not new, although it has been used primarily to describe heart sounds. René Laennec (1826), the inventor of the stethoscope, used mainly onomatopoeic words to depict sounds he heard in the process of auscultation; however, on one occasion in 1824, he resorted to music notation to augment his word description of a venous hum. Segall (1962) points to this as the first symbolic representation of the sound of a heart murmur, with many more graphical notations to follow. More recently, Field (2010) used music notation to systematically transcribe signature heart sounds and murmur patterns in the teaching of cardiac auscultation to medical students to aid the diagnosis of heart valve disorders.

I will also use music notation to represent heart rhythms, but now focusing on the transcription of recorded rhythmic sequences of abnormal electrical activity in the heart. Conditions resulting from abnormal electrical conduction differ from those due to valvular disorders; the input will also be the ECG trace instead of sound.

Conventional ways to represent ECG data tend to focus on individual beats: their morphology (features of the waveform) or categorical labels, such as N (normal) and V (ventricular activity); or, frequency-domain characteristics like heart rate variability that aggregate features over larger windows of time. Counter to this trend, Bettermann, Amponsah, Cysarz, and van Leeuwen (1999) used a binary symbol sequence from African music theory to represent elementary rhythm patterns in heart period tachograms. Syed, Stultz, Kellis, Indyk, and Guttag (2010) consider motific patterns based on short strings of the categorical labels, and Qiu, Li, Hong, and Li (2016) have studied the semantic structure of symbols labeling parts of the waveform.

In the context of the transcription exercise, the notated rhythms are next matched to existing music with similar rhythms, and new compositions generated by collaging together appropriate parts of the selected piece. Since the chosen music already has the same or a very similar rhythmic structure, the collage gives pitch to the rhythms in ways that reinforce and make more readily perceptible the inherent time structures. If the pitch structures are in dialog with the time structures, the collage can add a layer of complementary structures. In either case, the temporal experience of arrhythmia can be made visceral through the performance of the resulting music.

Composing with rhythm templates is not new. Composer Cheryl Frances-Hoad’s piano piece Stolen Rhythm (2009) takes the notated rhythms of the finale movement in Haydn’s Piano Sonata in E♭, Hob XVI:45, and assigns new pitches to them. The computer program MorpheuS also takes rhythms from existing pieces and sets new notes to them in ways that preserve the repetition patterns, and tonal tension profiles of the template piece, see Herremans and Chew (2017). Practicing Haydn, in effect, creates a collage by traversing Haydn’s piece through a series of repetitions and pauses.

Heartbeat data have been used as a source for music composition or synthesis. The most common approach is to use heart rate variability indices, which are based on statistical aggregation over longer time spans. There is also a tendency toward direct data sonification. In the Heartsongs CD by Davids (1995), produced as part of ReyLab’s Heartsongs Project, heartbeat intervals were averaged over 300 beats to remove local fluctuations and mapped to 18 notes on a diatonic scale to create a melody. Yokohama (2002) maps each heartbeat interval to MIDI notes, so that an intervallic change such as a premature beat triggers a more significant change in pitch. In Ballora, Pennycook, Ivanov, Glass, and Goldberger (2006), heart rate variability data is mapped to pitch, timbre, and pulses over a course of hours for medical diagnosis; in Orzessek and Falkner (2006), heartbeat intervals are passed through a bandpass filter and mapped to MIDI note onsets, pitch, or loudness. The Heart Chamber Orchestra (Votava & Berger, 2011) uses interpretations of its 12 musicians’ heartbeats, detected through ECG monitors; relationships between them influence a real-time score that is then read and performed by the musicians from a computer screen. All but one of these studies—Yokohama (2002)—have focused on heartbeat data from non-arrhythmic hearts.

In this article, transcription serves as a means to represent the rhythms of arrhythmia using conventional music notation. Current analyses of ECG data predominantly use representations based on beat morphology and representations in the frequency domain. The music notation captures local rhythmic patterns that are lost in single-beat and frequency-based approaches. Three different excerpts, short summaries, showing a heart in different states of atrial fibrillation are chosen from a continuous 18-hour recording from a three-lead Holter monitor. The rhythms of the different states of the arrhythmia are made apparent in the extracted musical rhythms and collage pieces.

Transcription process

Conventional music notation is the representation of choice for the transcriptions in this article. The examples addressed in this article tend toward the practice of free rhythm, which is common in both folk and art, religious and secular traditions. Free rhythm is “the rhythm of music without pulse-based periodic organization” (Clayton, 1996, p. 329). The analysis of free-rhythm music remains an open challenge, with a major hurdle being the difficulty of representing free rhythms in writing. Existing efforts to notate free rhythms typically avoid time signatures or bar lines, sometimes simply arranging note heads on a horizontal timeline so as to avoid the implications of pulse or meter in staff notation.

The reason regular staff notation is adopted here is that the examples are all pulse-based; this includes both the performed music and the cardiac arrhythmia time series. While they generally lack periodic structure and do not possess ordinary metrical organization, owing to pauses and pattern repetitions, local grouping structures do emerge, which allow for the assignment of changing time signatures and indications of figural groupings through note beaming or phrase markings. In line with notations used in contemporary compositions, the transcription of Practicing Haydn, and indeed those for the performed durations and arrhythmia sequences, make copious use of changing meters (as in Stravinsky’s compositions) and metric modulations (as in Elliott Carter’s works).

A main objective in the transcription process is to minimize the difference between the recorded and the transcribed sequence. When a design goal is to minimize transcription error, there exists the possibility of making the notation enormously complex to achieve the highest possible accuracy. To counter this, an important and competing aim is to ensure that the proportional durations in the notation are readily readable by human eyes, so that the transcription could serve as a source for visual analysis or performance. In this way, the notation is suitable as input for computer analysis as well as for visual inspection. Metric modulations are kept to a minimum, and utilized only when they lead to simpler proportional durations. To satisfy the second goal, when a human performer plays the notated transcription, it should be possible to reproduce the original rhythms without the aid of a click track, as in https://vimeo.com/226516952, although clearly it would be easy to choose to deviate significantly from the score. Thus, the labyrinthine density of notation like that employed by Brian Ferneyhough as a conduit for his complex composition process is avoided in favor of simpler forms. In the case of Ferneyhough’s intricate notation, designed to serve the purpose of lifting players beyond hackneyed readings of the score, some, such as Marsh (1994), have argued for simpler representations that directly reflect specific interpretations of the music. Distinct from Marsh’s goals, even though a secondary constraint of the transcription process here is human readability, the transcriptions do tend to make the score more complex in order to incorporate recorded nuances. Here, metric modulations that use irrational proportions, as in Conlon Nancarrow’s Study 33, are strictly avoided. One might argue that even Nancarrow’s irrational proportions can be closely approximated using conventional notation (rational durations) for playability, see Callender (2014).

Rhythmic disfluencies

The transcription of performed rhythms and the rhythms of cardiac arrhythmias draws inspiration from Practicing Haydn by Chew, Child, and Grønli (2013). Practicing Haydn originated as an idea by Grønli and Child to create a musical piece that sounds like musicians warming up and practicing before a concert. The result was a transcription of the serendipitous rhythms of a sight-reading of a Haydn sonata movement for re-performance, complete with all the repetitions, starts, and stops. The première of the piece took place concurrently at the grand opening of the Kunsthall Stavanger in Norway by Chew and at Performa13 in New York City by pianist Elaine Kang; see videos at https://kunsthallstavanger.no/en/exhibitions/practicing-haydn.

Three selections from the transcription are given in Figures 1 to 3. Each figure shows a snippet from the original Haydn score and a snippet from Child’s transcription of Chew’s sight-reading of the corresponding bars. The transcribed segments are inevitably longer than the original score, owing to repetitions, and to pauses and hesitations.

Figure 1. Chew’s sight-reading of Haydn’s Piano Sonata in E♭, Hob XVI:45 finale: pauses and repetitions.

Figure 2. Chew’s sight-reading of Haydn’s Piano Sonata in E♭, Hob XVI:45 finale: slow-downs, repetitions, and hesitations.

Figure 3. Chew’s sight-reading of Haydn’s Piano Sonata in E♭, Hob XVI:45 finale: repetitions and wrong note.

An interesting side effect of the exercise is that the transcription serves as a record of not only the musical but also the cognitive disfluencies. Unexpected events provide moments for pause. The transcribed sight-reading in Figure 1(b) shows a pause at the end of the second bar just before an unfamiliar turn in the 16th-note sequence; the excerpt in Figure 2(b) documents the hesitations just before the introduction of figural or directional changes; the pickup into the 2/4 bar re-starts an unexpected figure that was not fully apprehended on the first play in the preceding 3/8 bar; a similar re-start can be observed in Figure 3(b). Repetitions help refine harmonic direction: in Figure 1(b) the trill is repeated to reinforce its harmonic function; in Figure 2(b), the final tonic chord is elongated to balance the length (and emphasis) of the preceding dominant chord.

That sight-reading is associated with hesitation and fumbling is not particularly remarkable. What is interesting here is that these behaviors are clearly documented in the transcriptions. They may be obvious to a casual listener, but the fact that they show up clearly in the transcriptions means that it is possible to automate the detection process to enable large-scale analysis of disfluent or rhythmically irregular behavior.

Choreographed rhythms

When a score is interpreted by a human musician, the performed timings and durations are more often than not different, sometimes significantly so, from the notation in the score. Some of these deviations will be due to human inconsistency, but in skilled performance, the bulk of them can be ascribed to deliberate shaping of time, called rubato, either according to established convention or individual idiosyncrasy. Cook (1987) encodes rubato using note and bar durations, and percentage deviation from the norm. Repp (1992) represents rubato in melodies using eighth-note durations (longer-duration notes are subdivided equally into eighth notes) and shows that the durations frequently follow the shape of a quadratic curve. This method of representing tempo rubato persists today and can be found, for example, in the work of Spiro, Rink, and Gold (2016).

This section seeks to represent several different kinds of timing deviations in music performance. Curve fitting, where present, is done with the MATLAB spline function, and the precisely quantified durations are transcribed to common music notation.

Viennese waltz

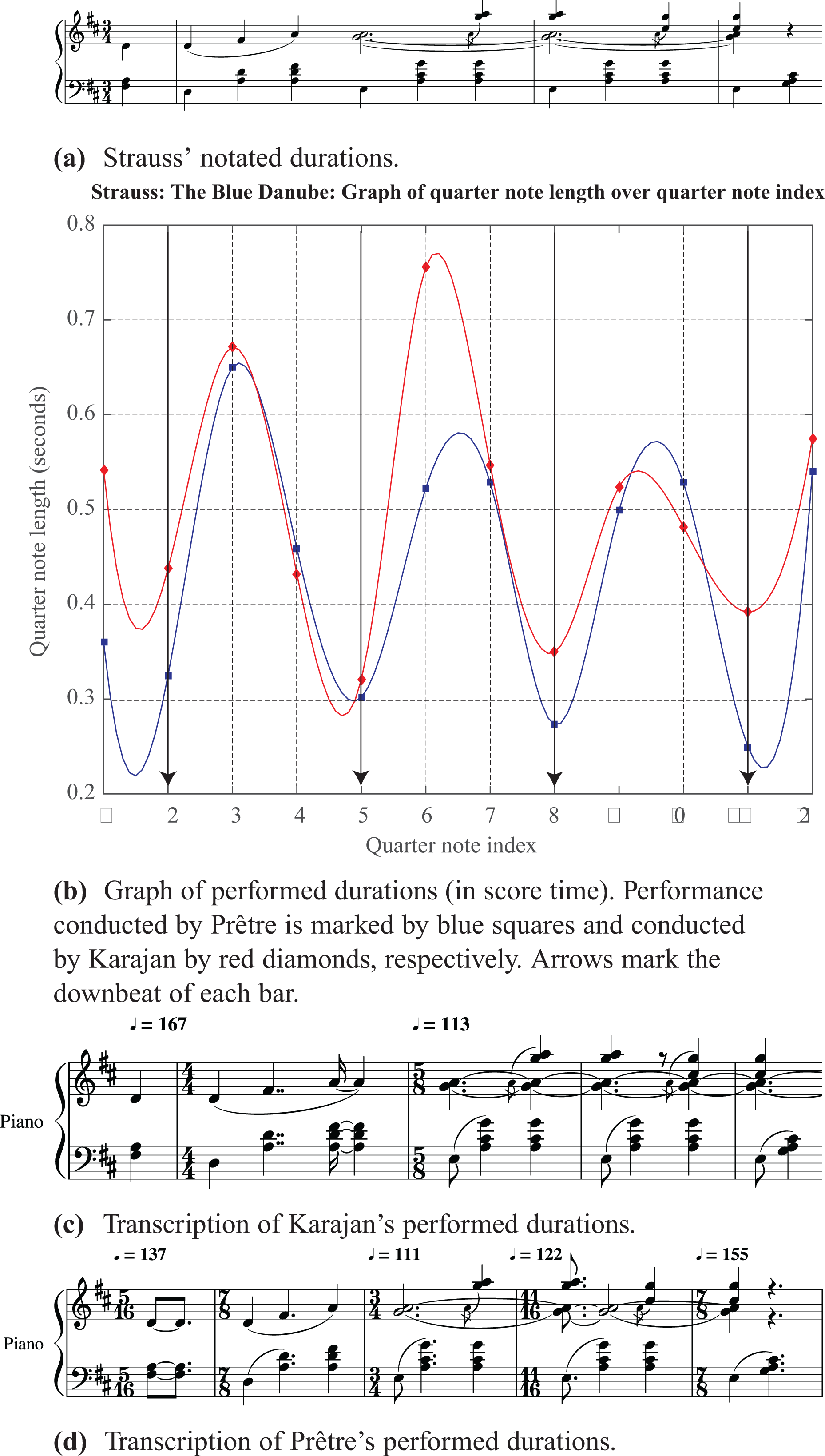

The Viennese waltz is a prototypical example of music in which there is systematic disparity between notated and performed rhythms. The social context and bodily movements (steps and twirls) behind this dance form are explored in McKee (2011). For the musicians performing, the three beats of a Viennese waltz are typically played unequally, normally with the first beat shortest followed by the third, and with the second beat longest, although exceptions exist. Assuming a steady pulse, this could be interpreted as the second beat being early, a deviation from its prescribed onset time, giving the impression that the third beat is late due to the resulting larger gap between the second and third beats. Figure 4 shows, using graphs and music notation, how the notated and performed rhythms differ in Johann Strauss II’s The Blue Danube.

Figure 4. Excerpt from The Blue Danube by Johann Strauss II and analysis and transcription of recorded performances by the Vienna Philharmonic Orchestra conducted by Herbert von Karajan (1987) and Georges Prêtre (2010).

Figure 4(a) shows Strauss’ original notated durations. To extract the performed durations from recorded performances, quarter-note beat onset times (in seconds) are marked, and each performed beat duration is taken as the interval between successive onsets.

The transcription of the performed durations can be described in the form of a heuristic. Suppose the second beat is considered to be early; then the third beat is the one that most closely resembles a full beat duration. Thus, we obtain the baseline beat duration from the third beat, which is assumed to be two or three eighth notes in length. The duration of the third beat serves as the reference from which proportional relationships are derived for the preceding beats. Simple ratios, such as 3:2, 2.5:2, 1.5:2, and 2:3, are preferred over more complicated ones. Whether the third beat is two or three eighth notes in length is determined by which interpretation most closely approximates the simpler ratios. Suppose that $e_i$ is the duration of the eighth note when the third beat is $i$ eighth notes long, that is, $e_i$ is the duration of the third beat divided by $i$.

After obtaining the duration ratios, the tempo for any contiguous set of beats, from i to j, is then computed from the total duration,

Any rhythm transcription necessarily requires some degree of quantization. The original rhythms exist on the real timeline, while the notation is categorical in nature. A transcription thus maps real numbers to duration categories. We are interested in the discrepancy between the real number and the categorical representation. We measure the accuracy of a transcription by the root-mean-square error (RMSE) between the transcribed inter-onset intervals and the inter-onset intervals in the recorded performances. If $d_k$ denotes the $k$-th performed inter-onset interval and $\hat{d}_k$ its transcribed counterpart, for $k = 1, \ldots, n$, then $\mathrm{RMSE} = \sqrt{\frac{1}{n}\sum_{k=1}^{n} (d_k - \hat{d}_k)^2}$.

For the examples shown in Figure 4, the RMSE is 19.1 ms for the Karajan excerpt and 24.9 ms for the Prêtre excerpt, respectively. The numbers are given in Table 1 and graphed in Figure 18 in Appendix A. The small errors show the very slight degree of approximation introduced in the transcription process.
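As a minimal sketch of this error measure, the RMSE between performed and transcribed inter-onset intervals could be computed as follows. The function name `rmse_ms` and the sample values are hypothetical illustrations and are not taken from Table 1.

```python
import math


def rmse_ms(performed_s, transcribed_s):
    """Root-mean-square error, in milliseconds, between two equal-length
    sequences of inter-onset intervals given in seconds."""
    if len(performed_s) != len(transcribed_s) or not performed_s:
        raise ValueError("need two non-empty sequences of equal length")
    # Squared difference for each pair of performed and transcribed intervals.
    squared = [(p - t) ** 2 for p, t in zip(performed_s, transcribed_s)]
    # Mean of the squared differences, square root, then seconds to milliseconds.
    return 1000.0 * math.sqrt(sum(squared) / len(squared))


# Hypothetical durations for illustration only (not measured data).
performed = [0.50, 0.70, 0.60]
transcribed = [0.52, 0.68, 0.60]
error_ms = rmse_ms(performed, transcribed)
```

The transcribed intervals would, in practice, be derived from the notated durations and the tempo markings of the transcription.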

Squared difference between performed and transcribed durations and root-mean-square error (RMSE) (in seconds) for The Blue Danube as performed by the Vienna Philharmonic Orchestra conducted by Herbert von Karajan (1987) and by Georges Prêtre (2010).

The difference between the heuristic described here and a more conventional approach, such as transcribing the rhythms by ear, is that the heuristic can be readily translated into a computer program to automate the transcription process. Manual transcription is possible and often desirable for small examples, but scalability is an important consideration for rhythm transcription to be deployable on a large scale for analysis. The method also has the advantage of providing rigorous control over and monitoring of the quantization error.
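One way such a heuristic might be automated is sketched below. This is not the implementation used for the transcriptions in this article; it rests on several assumptions: the third beat is tried as either two or three eighth notes, the two preceding beats are snapped to the nearest half eighth note (so that ratios such as 2.5:2 and 1.5:2 are representable), and the preference for simple ratios is approximated by choosing the candidate with the smaller quantization error. The function name `transcribe_bar` and the parameter `step` are hypothetical.

```python
def transcribe_bar(d1, d2, d3, step=0.5):
    """Return (i, eighth, units, err) for one three-beat waltz bar.

    d1, d2, d3: performed beat durations in seconds.
    i:          assumed length of the third beat in eighth notes (2 or 3).
    eighth:     implied eighth-note duration, d3 / i, in seconds.
    units:      notated beat lengths in eighth notes.
    err:        summed absolute quantization error in seconds.
    """
    best = None
    for i in (2, 3):
        eighth = d3 / i  # reference eighth note taken from the third beat
        units = []
        err = 0.0
        for d in (d1, d2):
            # Snap the beat to the nearest multiple of `step` eighth notes,
            # never shorter than one step (assumed quantization grid).
            q = max(step, round(d / eighth / step) * step)
            units.append(q)
            err += abs(d - q * eighth)
        units.append(float(i))
        # Keep the candidate with the smaller error; ties favor i = 2.
        if best is None or err < best[3]:
            best = (i, eighth, units, err)
    return best


# Hypothetical beat durations (seconds): short first beat, long second beat.
i, eighth, units, err = transcribe_bar(0.45, 0.75, 0.60)
```

For these illustrative durations, the sketch selects a third beat of two eighth notes and notates the bar as 1.5 : 2.5 : 2 eighth notes. The eighth-note rate for the bar would then be `60 / eighth` per minute.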

Many automatic or semi-automatic methods for rhythm transcription exist (see Ycart, Jacquemard, Bresson, and Staworko, 2016, and Nakamura, Yoshii, and Sagayama, 2017), as do algorithms for supporting tempo detection (Grosche & Müller, 2011) and tempo change (Quinton, 2017). However, frequency-based methods such as those described by Grosche and Müller (2011) and Quinton (2017) still lack the reactivity required for the frequent meter changes needed to encode free rhythms. The state-of-the-art methods described and evaluated in Nakamura et al. (2017) are designed to recover a score like the one originally written by the composer from the performance. They thus remove the temporal information that is inserted during performance. The work that comes closest to addressing the kind of transcription problem at hand is the interactive rhythm quantification software of Ycart et al. (2016), designed for use by composers as part of Open Music. At present, the software needs further optimization to make it comparable to human efficiency. The method given in this section has the advantage of having been optimized for the special case of the Viennese waltz but, as it stands, lacks generality. Adaptations tailored to other music styles will be described in the following sections.

In Figure 4, apart from knowing at a glance that the performances are not metronomic, the information embedded in a transcription gives not only the degree of variation, but also the metric modulations, proportional time relations, the precise timing of each note, the distribution of time across the pulses in each bar, and patterns of stress. Figure 4(b) shows Karajan’s steadier (from bar to bar) Viennese waltz rhythm compared with Prêtre’s more variable rhythm, which has the short-long gesture growing larger then shrinking. This observation is reinforced in the notation of Figures 4(c) and 4(d). Karajan’s performance could be captured with a constant 5/8 meter after the initial slow start; Prêtre’s performance had to be notated with many more metric modulations (with the beat rate slowing to make the larger gestures that then compress to speed up) and proportional duration category changes. In both cases, the performed durations show marked, and notatable, differences from the original score. The notation thus records the ways in which the score itself is changed and re-shaped by the performer; this is made more obvious by the fact that the original composition and the performed rendition are encoded using the same notational conventions.

Operatic aria

The performer has greatest latitude in creating free-sounding rhythms in solo performance. Among soloists, opera singers are well known for their flexible interpretation of notated rhythms. This second example examines the notation of extreme timing deviations or pulse elasticity in a solo performance of an operatic aria.

Figure 5 shows the duration of each eighth note in an excerpt of “O Mio Babbino Caro” from Giacomo Puccini’s opera, Gianni Schicchi, when performed by Kathleen Battle, Maria Callas, and Kiri te Kanawa. The performed timings of these three recordings have been graphed and analyzed in Chew (2016). The analysis is briefly described here before Callas’ performance is singled out for transcription.

Eighth-note durations in performances of an excerpt from Puccini’s “O Mio Babbino Caro” by Kathleen Battle, Maria Callas, and Kiri te Kanawa, with tipping points in Callas’ performance highlighted. Data are plotted in score time; vertical gridlines mark the first eighth note of each bar.

As before, the eighth-note beat onsets were annotated and overlaid on the audio signal for inspection in Sonic Visualiser and evaluated aurally for correctness; the eighth-note durations were then obtained from the annotated onsets. This was done for recordings by each of the three sopranos, and the durations plotted on the same graph. To allow for comparisons between the three recorded performances, the three sets of data were plotted in score time, that is, with eighth-note count as the x-axis. Again, interpolation between consecutive duration points was done using the Matlab spline function. The vertical dotted gridlines in the background mark the first eighth note of each bar. The corresponding solo melody is shown beneath the graph itself.

The first thing to note is the large degree of variation in eighth-note durations over the course of even this short excerpt. The baseline eighth-note duration hovers around 0.5 s, indicating that the underlying tempo is approximately 120 eighth notes per minute. In 6/8 time, with three eighth notes to a beat, this translates to a languid pulse of 40 beats per minute. The longest eighth-note duration, corresponding to the highest point in Kiri te Kanawa’s plot, exceeds 5 s, extending almost to 5.5 s. This is a remarkable increase of more than ten-fold over the baseline duration. It is such extreme timing deviations that challenge conductors and collaborative artists to virtuosic feats of prediction and adaptation.
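The arithmetic behind these figures can be checked with a short sketch. The variable and function names are hypothetical; the durations are the approximate values quoted in the text, with 5.5 s taken as an upper bound on the longest eighth note.

```python
def eighths_per_minute(eighth_duration_s):
    """Convert an eighth-note duration in seconds to eighth notes per minute."""
    return 60.0 / eighth_duration_s


baseline_s = 0.5                               # approximate baseline eighth-note duration
eighth_rate = eighths_per_minute(baseline_s)   # eighth notes per minute
beat_rate = eighth_rate / 3                    # 6/8 time: three eighth notes per beat
stretch = 5.5 / baseline_s                     # upper bound on the elongation factor
```

With these inputs the eighth-note rate is 120 per minute, the beat rate is 40 per minute, and the elongation factor is bounded above by 11, consistent with a more than ten-fold increase.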

While there is a fair degree of commonality in where each performer chooses to invoke the most significant of these excursions from the underlying pulse grid, the ways in which they navigate these and other transitions form unique and often recognizable signatures of the performer or a performance. These time perturbations are the result of practiced choreography to influence the perceived musical context and impose structure on the musical text, to create emphases, and to elicit the desired emotional response from the listener. Such timing variations form the core evidence of the work behind each performance. Thus, it is helpful to be able to see this work represented concretely using an encoding familiar to any musically literate viewer.

In the special case where the stretched pulses coincide with the music structures to elicit a feeling of a roller coaster at the crest of a hill, they are called tipping points (see Chew, 2016, 2017). In Figure 5, cue balls are perched atop each tipping point in Maria Callas’ performance. Not all extreme timing deviations are tipping points, and not all tipping points are signaled by a generous use of time. For an empirical study on what generates tipping points, see Naik and Chew (2017).

Figure 6 focuses on the excerpt within the rectangular box in Figure 5. Figure 6(a) shows the composer’s original notated durations. Figure 6(b) shows Maria Callas’ performed durations in performance (or real) time; listen to this excerpt at https://vimeo.com/127507105. Here, the durations of each eighth note are not plotted against an index of eighth-note counts, but are plotted at the time at which they occur to allow for synchronization with the audio. For a discussion on score time versus performance time, see Chew and Callender (2013). This plot is also interpolated using a spline function.

Excerpt from “O Mio Babbino Caro” by Giacomo Puccini and Maria Callas’ recorded performance.

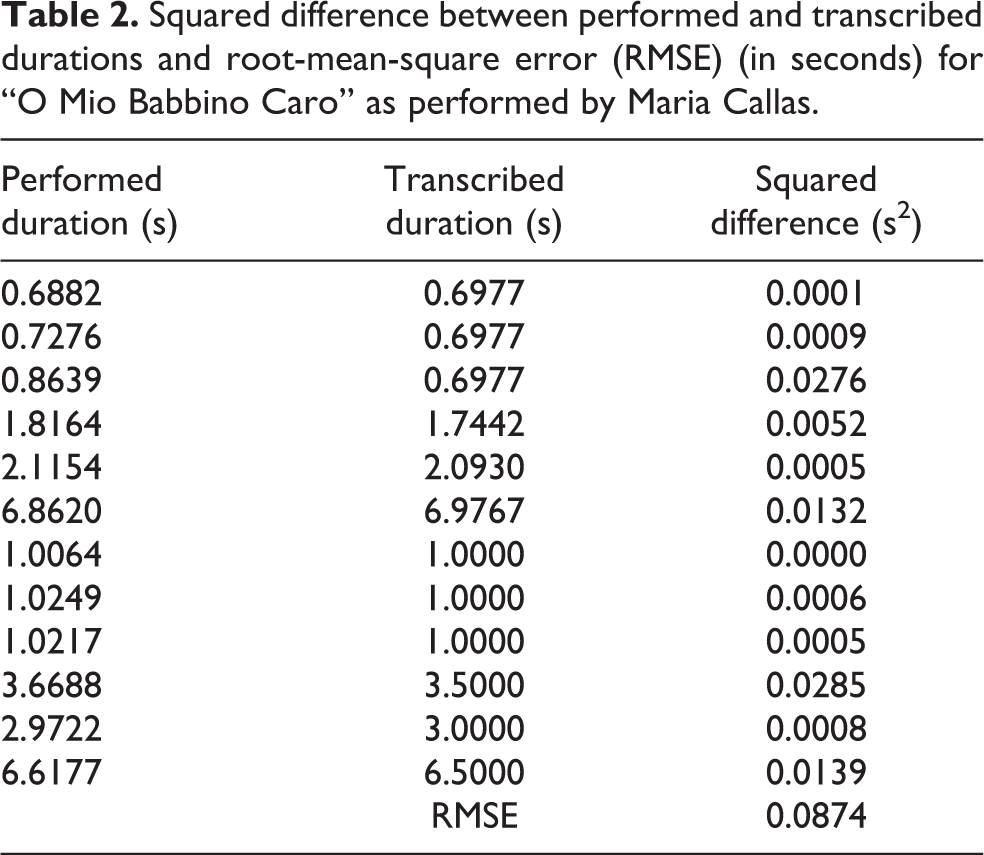

Figure 6(c) shows a transcription of Callas’ recorded performance. The transcription process is straightforward. Because the excerpt begins with a fairly steady (in performance) eighth-note sequence, an underlying pulse grid can be established quickly for the proportional durations. On the repeat of the phrase, the duration of the three-eighth-note sequence is longer. Hence, the notation was greatly simplified by invoking a metric modulation, from 86 eighth notes per minute to 60 eighth notes per minute, which provided a new unit pulse length rather than persisting with the same unit pulse. The RMSE between the performed and transcribed durations for the notes of the excerpt shown in Figure 6 is 87.4 ms; the precise details are shown in Table 2 and Figure 19 in Appendix A.

Squared difference between performed and transcribed durations and root-mean-square error (RMSE) (in seconds) for “O Mio Babbino Caro” as performed by Maria Callas.

From both the graph and the transcription, it is clear that many notes are elongated beyond their notated durations for emphasis and expressive effect. Additional time has been inserted for breaths and to segment the phrases and subphrases. Musically, the first big elongation (the tipping point marked by the leftmost red cue ball in Figure 5) is part of a big ritenuto at the end of the first four bars, the second tipping point stretches the octave leap up to the top A♭, and the third tipping point provides a pause (and breath) before the final ritardando at the last two bars. Unlike conventional ways of marking these expressive gestures, such as by using labels like ritenuto and ritardando, the graph (Figure 6(b)) and notation (Figure 6(c)) show the details of exactly which eighth notes are lengthened, by how much, and which ones are not. The notation additionally shows the glissandi, marked by distinct note pairs connected by small slurs in the second and fifth bars in Figure 6(b). As indicated by the metric modulation, the two subphrases are performed at different tempi, with the second one a step slower than the first. Visual inspection of Figures 6(c) and 6(a) makes apparent the marked difference between Callas’ performance and the score.

“Happy Birthday”

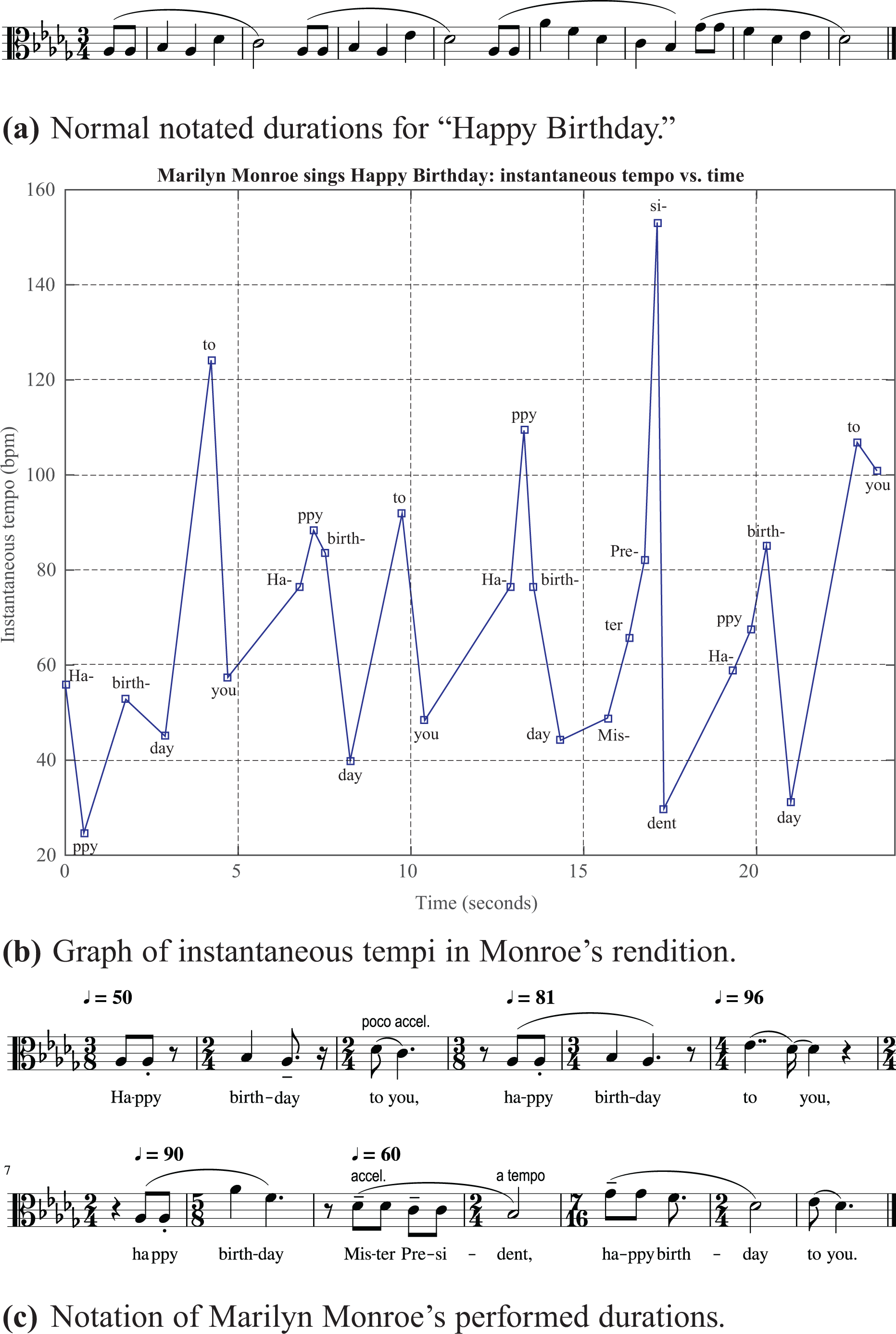

To show that the literal notation of expressive timing is not confined to classical singing but extends also to vernacular forms, we turn our attention to Marilyn Monroe’s (1962) sultry rendition of “Happy Birthday,” performed and recorded live at Madison Square Garden on the occasion of U.S. President John F. Kennedy’s 45th birthday.

Figure 7(a) shows the conventional notation for “Happy Birthday.” Figure 7(b) shows a graph of the instantaneous tempo at each syllable in Monroe’s rendition of the tune. For greatest precision, the onsets of every syllable were annotated using Sonic Visualiser and checked against the audio signal and spectrogram of the audio signal. Each “Happy” gave the approximate duration of an eighth note and tempo for each new subphrase; sometimes the tempo had to be changed at “to you,” depending on the rate at which the words were sung. The RMSE between the performed and transcribed durations for the notes shown in Figure 7 is 51.5 ms; the details are shown in Table 3 and Figure 20 in Appendix A.

“Happy Birthday” as performed by Marilyn Monroe.

Squared difference between performed and transcribed durations and root-mean-square error (RMSE) (in seconds) for “Happy Birthday” as performed by Marilyn Monroe (1962).

Because the syllables map to a variety of duration categories in the score, it is not straightforward to generate a graph of eighth-note durations in score or real time. Instead, the instantaneous tempo is plotted in real time (as opposed to score time) at the instant of the onset of each syllable. The instantaneous tempo at each syllable, Ts, is computed as a function of the onset times,

The breathlessness of Marilyn’s singing is marked by the many pauses she takes, which show up as local minima in the plot in Figure 7(b). The many pauses break the usual flow of the melody as well as the phrases in the text. For example, there is a short breath break after almost every instance of the words “Happy birthday.” These breath breaks register as rests in the notation of the performed durations. Portamenti in the sung melody are labeled with slurs in the third, sixth, and last bars. The tempo changes frequently, practically every time the voice re-enters following a breath break. The first “to you” pushes forward (poco accel.), the second “to you” holds back—the glissando arrives at the note before the “you” is vocalized, almost imperceptibly. In the next phrase, the octave leap builds to the climax, which is at a stately 60 beats/min but accelerates to the end of “Mister President” before the final “Happy birthday.”

These three examples demonstrate how performed durations, both carefully sculpted ones and those due to chance, can be captured through transcription using conventional music notation. The following section extends this practice to the transcription of heart rhythms in ECGs of cardiac arrhythmias.

Abnormal heart rhythms

This section considers the transcription of arrhythmic heartbeats using conventional music notation. When the normal electrical activity in the heart is disrupted or altered, arrhythmia results and the heart can beat irregularly, or excessively fast or slow. The ECGs of a heart in sinus (normal) rhythm can make for decidedly uninteresting transcriptions, but the abnormal heart rhythms of arrhythmia are much more varied, offering the potential for producing highly musical rhythm transcriptions.

Take, for example, the trigeminy rhythm, an abnormal heart rhythm in which every third beat is a premature ventricular contraction. Each premature ventricular contraction is followed by a full compensatory pause (a skipped beat) because the heart is still in its refractory period and cannot respond to a stimulus to initiate the next beat. Premature ventricular contractions tend to occur in repeated patterns, aptly named bigeminy (every other beat), trigeminy (every third beat), quadrigeminy (every fourth beat), and so on.

Figure 8 shows a trigeminy rhythm and its transcription. One can imagine extensions to the other premature ventricular contraction rhythms, such as the bigeminy and the quadrigeminy. Note the resemblance of the trigeminy rhythm (regular beat, early beat followed by a compensatory pause, regular beat) to a prototypical Viennese waltz rhythm. The onset of each beat is given by the peak, also known as the R, of each QRS complex in the signal of the upper graph. Given that the standard chart speed is 25 mm/s and a three-beat period is 56 mm on the chart, a beat is on average 56/(3 × 25) ≈ 0.747 s long, which corresponds to a rate of roughly 80 beats/min.

ECG and transcription of a trigeminy rhythm. (ECG of trigeminal premature ventricular contractions [Online image]. (2013). Retrieved April 16, 2017, from http://floatnurse-mike.blogspot.com/2013/05/ekg-rhythm-strip-quiz-123.html.)

ECG and transcription of atrial fibrillation excerpt Thu 20-07-45 VT 5 beats 210 beats/min (Summary of event) 1 min HR 109 beats/min.

Squared difference between ECG and transcribed durations and root-mean-square error (RMSE) (in seconds) for trigeminy example.

The next transcription examples are also derived from surface ECGs; they comprise short summaries from a continuous 18-hour recording taken using a three-lead Holter monitor and show interesting states of atrial fibrillation.

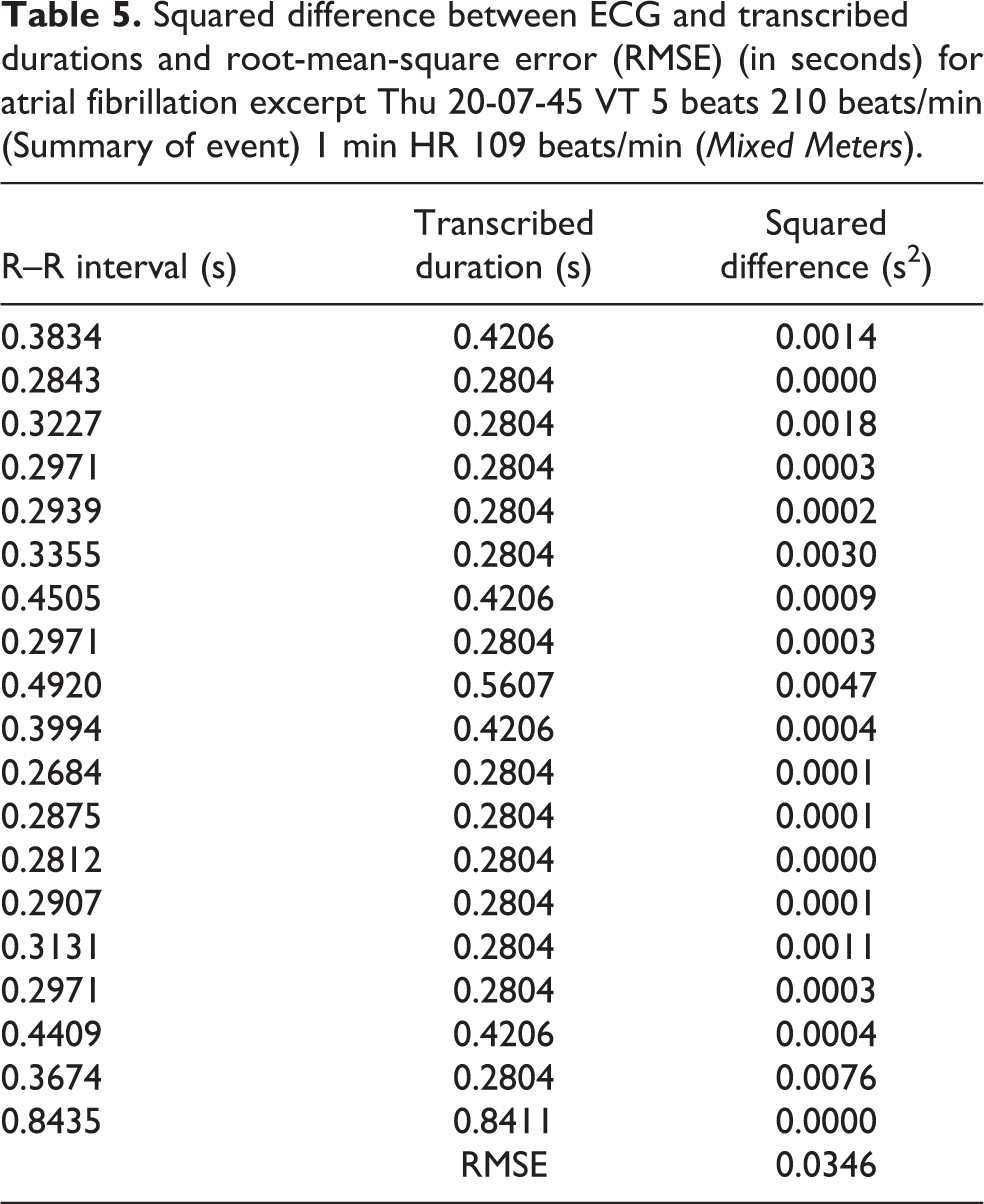

Mixed Meters

Figure 9 shows the first ECG excerpt and the corresponding transcription of the rhythm. In this example, beats that are slightly more prominent (having greater voltage change) in the ECG are given tenuto-staccato articulation markings; R–R intervals that are slightly short of the full value of a beat duration are marked staccato and R–R intervals that are slightly longer than the full beat value are marked tenuto. The meter changes are assigned to group beats with similar morphology, such as the six wide complex beats in the middle of the sequence, and repeated rhythm patterns, such as the 3 : 2 : 2 pattern. The RMSE between the R–R intervals in the ECG trace and the transcribed durations is 34.6 ms; the details are shown in Table 5 and Figure 22 in Appendix B.

Squared difference between ECG and transcribed durations and root-mean-square error (RMSE) (in seconds) for atrial fibrillation excerpt Thu 20-07-45 VT 5 beats 210 beats/min (Summary of event) 1 min HR 109 beats/min (Mixed Meters).

The toggle between the 7/8 meter, subdivided as 3 : 2 : 2, and the 5/8 meter, subdivided as 3 : 2, is reminiscent of the third movement in Libby Larsen’s Penta Metrics (2004). The composer describes the piece as a buoyant dance built around the 7/8 pattern: three beamed eighth notes, eighth note + eighth note rest, eighth note + eighth note rest. Highlighted in the transcription are the patterns of three onsets separated by three-eighth-note and two-eighth-note intervals that are part of the 3 : 2 : x rhythmic motif. Note that the notation makes these patterns readily discernible.
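Because the transcription is symbolic, locating such a motif can in principle be automated. The sketch below assumes the transcribed rhythm is available as a list of inter-onset intervals counted in eighth notes; the function name `find_motif` and the sample sequence are hypothetical and are not the values of the transcription in Figure 9.

```python
def find_motif(intervals, motif=(3, 2)):
    """Return the start indices at which `motif` occurs as a run of
    consecutive inter-onset intervals (counted in eighth notes)."""
    m = len(motif)
    return [
        k
        for k in range(len(intervals) - m + 1)
        if tuple(intervals[k:k + m]) == tuple(motif)
    ]


# Hypothetical sequence: a 7/8 bar (3, 2, 2), a 5/8 bar (3, 2), a 7/8 bar (3, 2, 2).
intervals = [3, 2, 2, 3, 2, 3, 2, 2]
starts = find_motif(intervals)
```

For this illustrative sequence, the 3 : 2 opening is found at the start of each of the three bars, that is, at indices 0, 3, and 5.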

Figure 10 shows the score of a short composition based (strictly) on the rhythm. It is made up of fragments cannibalized from Penta Metrics, movement III, sometimes transposed so as to fit with the local tonal context. For example, the first bar corresponds to the first bar of Penta Metrics III, the third bar corresponds to the second bar, and the ending chord is identical to that in Larsen’s piece. In between, Bars 2, 4, 5, and 6 are a mix of material, chords and descending octaves, from the sequences in Bars 57 to 60 and Bars 42 to 44, shown in Figures 11(b) and 11(a), respectively.

Composed fragment based on Thu 20-07-45 VT 5 beats 210 beats/min (Summary of event) 1 min HR 109 beats/min and Libby Larsen’s Penta Metrics, movement III.

Excerpts from Libby Larsen’s Penta Metrics, movement III.

A video comparing the ECG and rhythm transcription, and the collaged Mixed Meters can be viewed at https://vimeo.com/257248109.

Siciliane

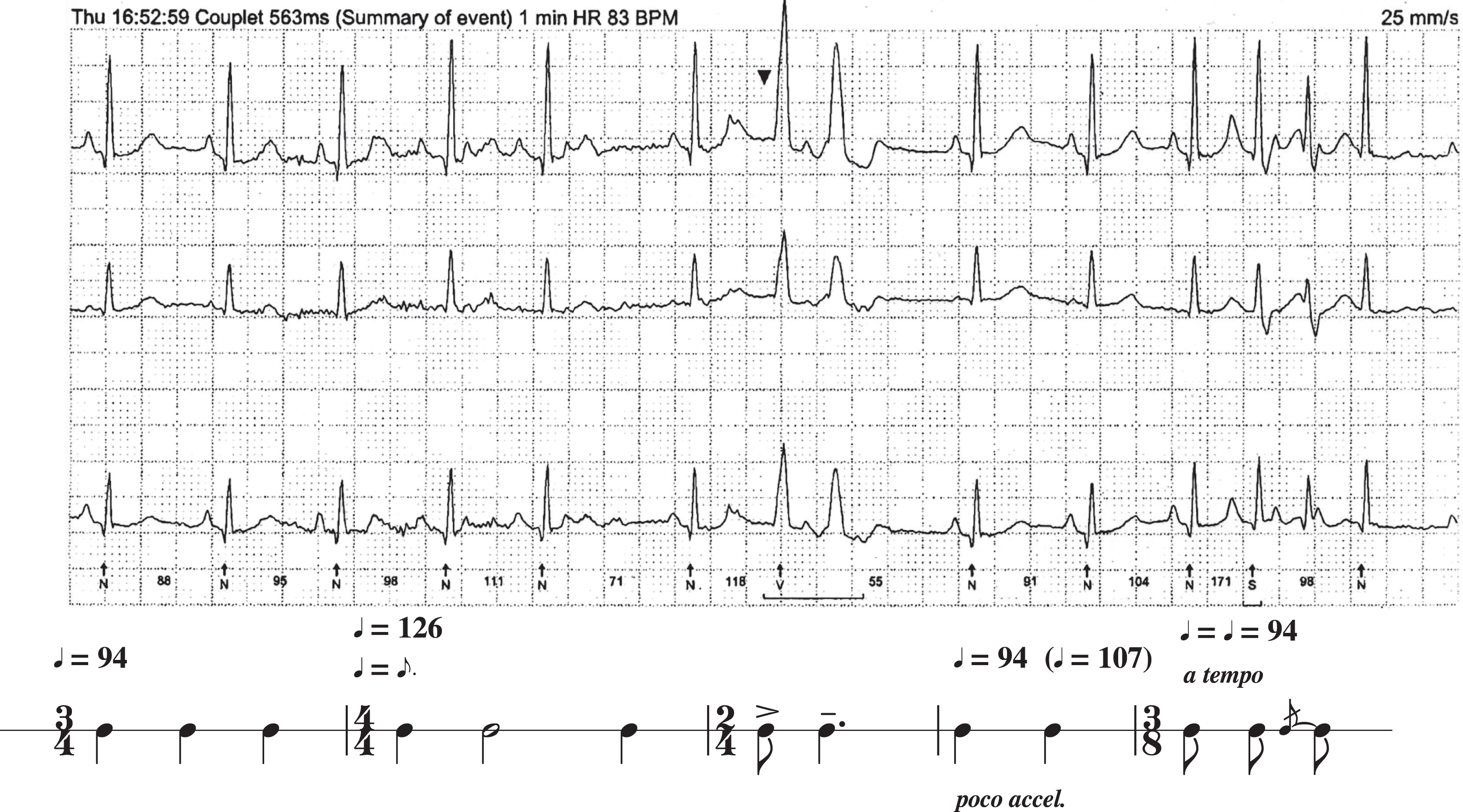

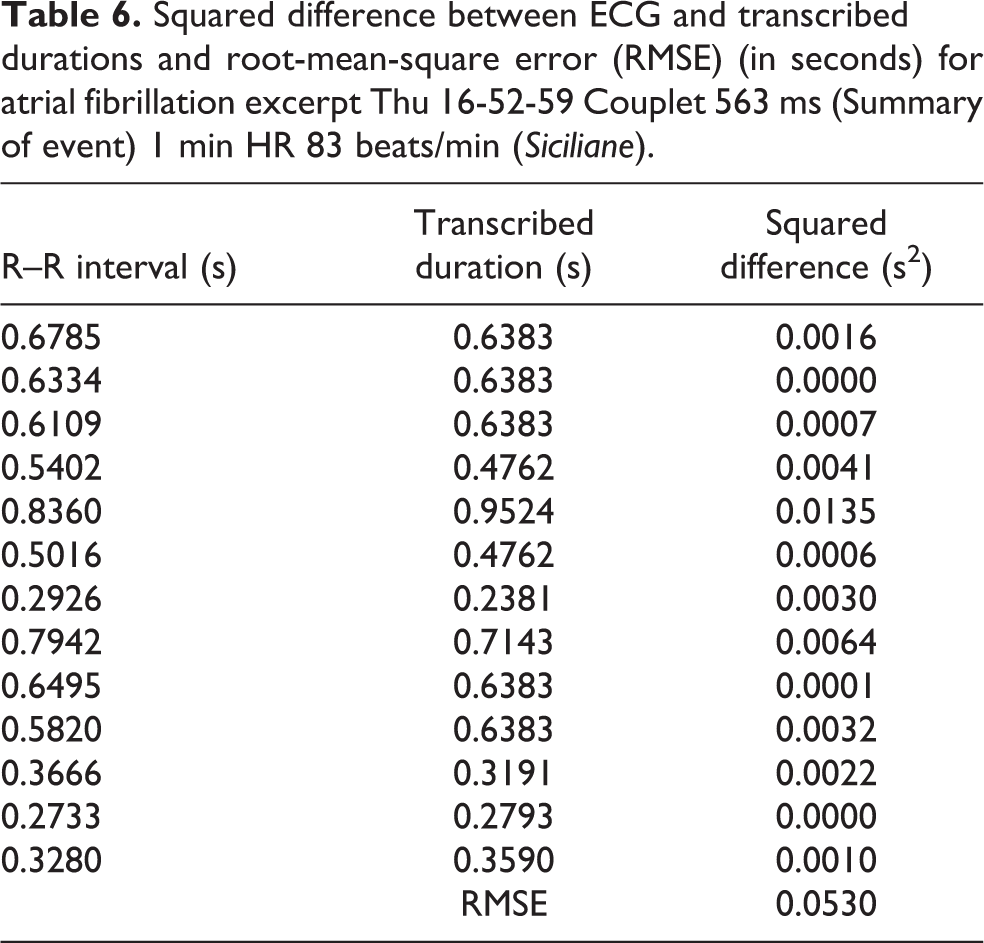

Figure 12 shows the second ECG excerpt and the corresponding transcription of the rhythm. For this example, the most prominent peak in the ECG sequence is highlighted with an accent on the corresponding note, and the wide complex beat with a tenuto mark; other ways to differentiate these waveform details are also possible. The notation in the middle section is simplified by invoking a metric modulation from 94 beats/min to 126 beats/min. This makes the new quarter note 3/4 the value of the previous quarter note, a 25% reduction in time for a beat or a 33% increase in tempo or beat rate. A slight acceleration (marked poco accel.) indicates that the duration of the second beat in the penultimate bar is slightly shorter than the tempo might suggest; the acciaccatura tied to the final note prompts the early onset of the final note, achieving the effect of shortening the penultimate note. The changing meters are chosen to accommodate the different grouping structures. The RMSE between the R–R intervals in the ECG trace and the transcribed durations for Figure 12 is 53.0 ms; the details are given in Table 6 and Figure 23 in Appendix B.
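The proportions implied by this metric modulation can be verified with a brief sketch; the variable names are hypothetical. It shows that the quoted 3/4 duration ratio and 33% tempo increase are close approximations of the exact ratios given by the two tempo markings.

```python
from fractions import Fraction

old_bpm, new_bpm = 94, 126

# Ratio of the new beat duration to the old one (duration is inversely
# proportional to tempo), reduced to lowest terms.
duration_ratio = Fraction(old_bpm, new_bpm)

# Ratio of the new tempo to the old one.
tempo_ratio = Fraction(new_bpm, old_bpm)

reduction_pct = 100 * (1 - float(duration_ratio))   # shortening of each beat
increase_pct = 100 * (float(tempo_ratio) - 1)       # speeding up of the beat rate
```

The exact duration ratio is 47/63, or about 0.746, so each beat is shortened by roughly 25%, and the beat rate rises by roughly 34%.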

ECG and transcription of atrial fibrillation excerpt Thu 16-52-59 Couplet 563 ms (Summary of event) 1 min HR 83 beats/min.

Squared difference between ECG and transcribed durations and root-mean-square error (RMSE) (in seconds) for atrial fibrillation excerpt Thu 16-52-59 Couplet 563 ms (Summary of event) 1 min HR 83 beats/min (Siciliane).

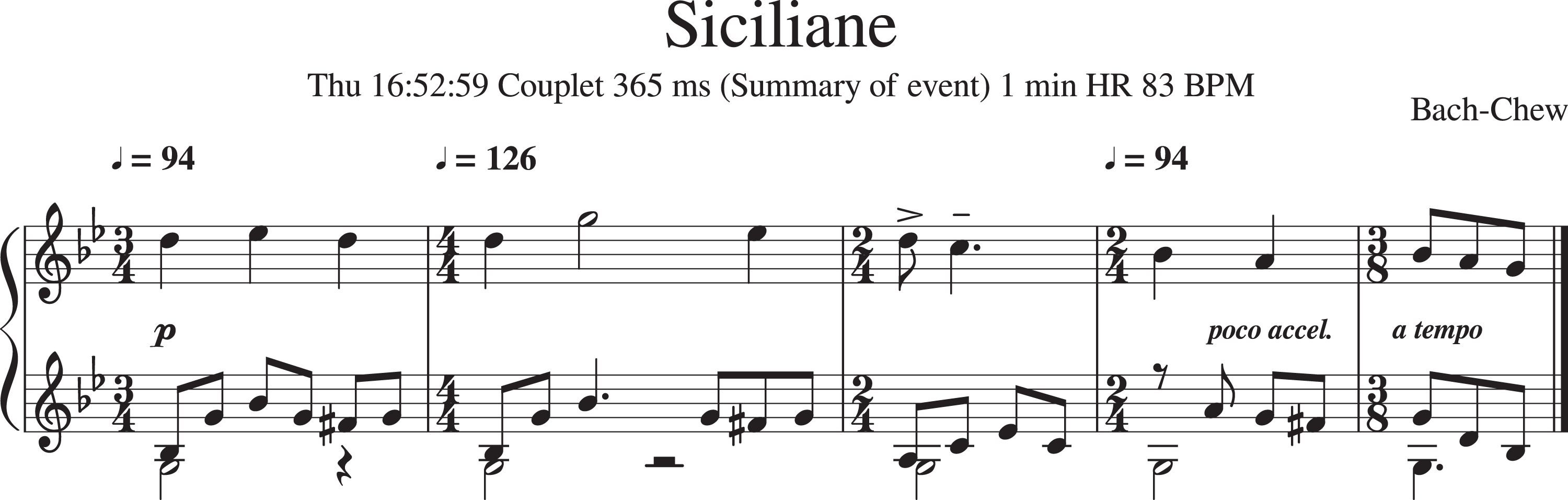

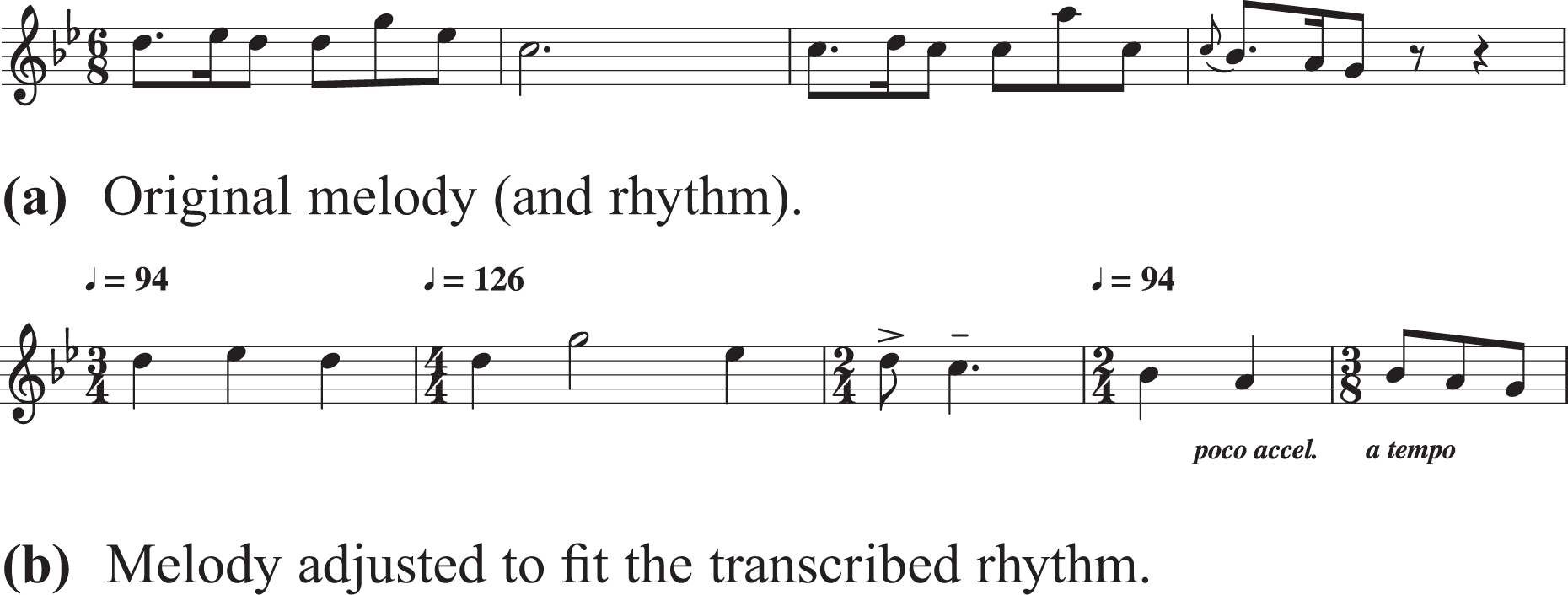

This excerpt is slower than the first one, and the long half note in the second bar requires a melodic profile that will fit with this temporal structure. The melody that comes to mind is that of the “Siciliane” in Johann Sebastian Bach’s Flute Sonata No. 2 in E♭ major, BWV 1031, and the piece provides the material for the short composition shown in Figure 13. The original rhythm of the “Siciliane” is lyrical and straightforward, and close to the atrial fibrillation rhythm, but not the same. The melodic profile fits the transcribed rhythm well. Small adjustments are made to the melody so that it fits the rhythm. Figure 14 shows the original melody and the one that has been tweaked to fit the transcribed rhythm. The new melody uses Bars 1, 2, and 4 of the original melody. A passing note was added in the third bar of the modified melody to fit the transcribed rhythm, and two notes from the last bar were inserted to provide a bridge to the concluding bar.

Composed fragment based on transcription of Thu 16-52-59 Couplet 563 ms (Summary of event) 1 min HR 83 beats/min and J. S. Bach’s “Siciliane” from his Flute Sonata No. 2 in E♭ major, BWV 1031.

Excerpt from Bach’s “Siciliane” and its modification to fit the transcribed atrial fibrillation rhythm.

An animation showing the correspondence between the ECG and the rhythm transcription, and between the modified Siciliane and the ECG can be viewed at https://vimeo.com/221351463.

Tango

Figure 15 shows the third and final ECG excerpt and the corresponding transcription of its rhythm. As before, the most prominent peaks in the ECG are assigned accent marks; the wide complex beats are given tenuto marks, as are notes of duration slightly longer than their notated values. The RMSE between the R–R intervals in the ECG and the transcribed durations is 40.1 ms; the details are given in Table 7 and Figure 24 in Appendix B.

ECG and transcription of atrial fibrillation excerpt Thu 17-38-26 VT 4 beats 200 beats/min (Summary of event) 1 min HR 105 beats/min.

Squared difference between R–R intervals in ECG and transcribed durations and root-mean-square error (RMSE) (in seconds) for atrial fibrillation excerpt Thu 17-38-26 VT 4 beats 200 beats/min (Summary of event) 1 min HR 105 beats/min (Tango).

Immediately apparent in the transcription are the 3 : 3 : 2 rhythmic pattern, characteristic of the tango, and variations on this pattern, 2 : 3 : x. Capitalizing on the tango reference, the material for the short composition draws from a cadenza-like piano solo in Astor Piazzolla’s Le Grand Tango for cello and piano (1982). The original excerpt from Piazzolla’s piece that provided material for the short composition in Figure 16 is given in Figure 17. In the modified score, the third iteration of the descending sequence is reduced to fit the 7/8 bar by removing the triplet figure. A bridge bar is inserted before material from the first and third bars is combined to reach the concluding bar, which also draws from material in the third bar but with a different finish.

Composed fragment based on transcription of Thu 17-38-26 VT 4 beats 200 beats/min (Summary of event) 1 min HR 105 beats/min and Astor Piazzolla’s Le Grand Tango.

Excerpt from Piazzolla’s Le Grand Tango used to fit the atrial fibrillation rhythm.

A video showing the ECG, rhythm transcription, and adapted Tango can be viewed at https://vimeo.com/257253528.

Conclusions and discussions

Having traversed a variety of transcription examples ranging from extreme rhythmic flexibility in performance and the natural flounderings of sight-reading (extreme in a different sense), to the dance-like rhythms of premature ventricular contractions and atrial fibrillation, it is time to reflect on what it means to be able to turn these rhythms accurately into music notation.

A symbolic representation can be used to encode knowledge that can serve as input to machine analysis of these time sequences, thus opening up new approaches for analyzing performed music and arrhythmia sequences. Further work needs to be done to gauge the stability of the transcriptions. Distance metrics can be devised to quantify distances between notations created by different transcribers for the same time sequence to determine consistency. Some key applications of the representation include large-scale deployment of motif detection, similarity assessment, and style classification. For example, after transforming heart period tachograms to elementary rhythm patterns, Bettermann et al. (1999) used a hierarchical pattern scheme to compute the predominance and stability of rhythm pattern classes. Further analyses of the transcribed rhythms could reveal hierarchical structure, like that in Lerdahl and Jackendoff (1996).

The main challenge, for both music performance and cardiac arrhythmia, lies in determining what it is we wish to represent. What are the essential structures of the information streams? What do they mean? Which of these structures are variable and subjective and which are fixed and invariant?

Ideally, transcription should reveal the essential background structure of the temporal experience… In that sense, transcription is a form of analysis in itself. The difficulty of transcribing free rhythm may result from the inadequate nature of the notational system but, at the same time, it signals a deeper analytical problem. Graphic signs can be easily invented once it is clear what we want to represent.

Frigyesi (1993, pp. 60–62)

The transcriptions of the atrial fibrillation excerpts reveal the vast differences between experiences of irregular heartbeats at different times of the day. Mixed Meters was recorded in the evening at 20:07:45, the Siciliane and the Tango in the late afternoon, at 16:52:59 and 17:38:26, respectively. The rhythms differ not only in rate but also in rhythmic content. Conventional ways of describing atrial fibrillation as simply a condition with irregular heartbeats due to fibrillation in the upper (atrial) chambers of the heart fail to capture the finer features of these time-varying rhythmic structures. It may be that, as for musical styles, information encoded in these rhythmic patterns can be used to distinguish between different forms or phenotypic subtypes of atrial fibrillation, which may be helpful for disease stratification with impact on medical diagnostics and therapeutics.

Acknowledgments

This article is inspired by personal experiences with music performance and cardiac arrhythmias. I am grateful to Dr. Edward Rowland and Professor Pier Lambiase and their respective clinical and catheterization laboratory teams for treating and curing my arrhythmias; Dr. Jem Lane for sharing the story of his Christmas party quiz where he made his colleagues guess arrhythmia types by playing them music of different tempi—this prompted me to create more precise and tangible connections between musical and abnormal cardiac rhythms; and Matron Carolyn Brennan who likens atrial fibrillation to free jazz. Dr. Zongbo Chen helped retrieve my data for the early transcription experiments. Last but not least, Professor Peter Child and Lina Viste Grønli concocted and included me in the Practicing Haydn project, which started me on this marvelous journey.

Declaration of conflicting interests

The author declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author received no financial support for the research, authorship, and/or publication of this article.

Peer review

Ian Pace, City, University of London, Department of Music. Jonathan Berger, Stanford University, Department of Music.

One anonymous reviewer.

Appendix A: Precision of performed music transcriptions

This section contains tables and graphs documenting the difference between the transcriptions and the original rhythms derived from the recorded performances. The differences are plotted as stem graphs and the tables provide the details of the RMSE calculations. Table 1 provides the numbers for the error calculations for Karajan’s and Prêtre’s recordings of The Blue Danube, with the corresponding stem plots in Figure 18. Table 2 gives the numbers for Maria Callas’ performance of “O Mio Babbino Caro,” with the stem plot in Figure 19; and Table 3 gives those for Marilyn Monroe’s rendition of “Happy Birthday,” with a stem plot in Figure 20.

Appendix B: Precision of ECG rhythm transcriptions

This section contains tables and graphs documenting the difference between the R–R intervals derived from the ECG traces and the transcribed durations. The squared errors between the two are given, as well as the RMSE, in the tables, and stem plots of the differences are given in the figures. Table 4 provides the numbers for the error calculations for the trigeminy example and Figure 21 the corresponding stem plot. Table 5 provides the numbers for the error calculations for the atrial fibrillation excerpt that formed the basis of Mixed Meters, with the corresponding stem plot in Figure 22. Table 6 gives the numbers for the atrial fibrillation excerpt that became Siciliane, with the corresponding stem plot in Figure 23. Table 7 gives the numbers for the atrial fibrillation excerpt for the Tango, with the corresponding stem plot in Figure 24. Not reflected in the numbers and graphs are the effects of the accents and articulation markings incorporated in the transcriptions that mark amplitude (voltage) changes, waveform morphology, or slightly elongated or shortened durations.