Abstract

Musical expertise can lead to neural plasticity in specific cognitive domains (e.g., in auditory music perception). However, not much is known about whether the visual perception of simple musical symbols (e.g., notes) already differs between musicians and non-musicians. This was the aim of the present study. Therefore, the Familiarity Effect (FE) – an effect which occurs quite early during visual processing and which is based on prior knowledge or expertise – was investigated. The FE describes the phenomenon that it is easier to find an unfamiliar element (e.g., a mirrored eighth note) in familiar elements (e.g., normally oriented eighth notes) than to find a familiar element in a background of unfamiliar elements. It was examined whether the strength of the FE for eighth notes differs between note readers and non-note readers. Furthermore, it was investigated at which component of the event-related brain potential (ERP) the FE occurs. Stimuli that consisted of either eighth notes or vertically mirrored eighth notes were presented to the participants (28 note readers, 19 non-note readers). A target element was embedded in half of the trials. Reaction times, sensitivity, and three ERP components (the N1, N2p, and P3) were recorded. For both the note readers and the non-note readers, strong FEs were found in the behavioral data. However, no differences in the strength of the FE between groups were found. Furthermore, for both groups, the FE was found for the same ERP components (target-absent trials – N1 latency; target-present trials – N2p latency, N2p amplitude, P3 amplitude). It is concluded that the early visual perception of eighth note symbols does not differ between note readers and non-note readers. However, future research is needed to verify this for more complex musical stimuli and for professional musicians.

Today, it is widely accepted that neural plasticity is possible in principle (e.g., Becker, Kutz, & Voelcker-Rehage, 2016; Bezzola, Mérillat, Gaser, & Jäncke, 2011; Herholz & Zatorre, 2012; Maguire et al., 2003; McEwen & Morrison, 2013). This assumption is based, inter alia, on the finding that experts have an altered brain anatomy in comparison to non-experts (e.g., Maguire et al., 2003; Nakata, Yoshie, Miura, & Kudo, 2010; Schmithorst & Wilke, 2002), that they use different brain regions than non-experts when performing a task from their area of expertise (e.g., Masson, Potvin, Riopel, & Foisy, 2014), and that they use specific brain regions more efficiently than non-experts (so-called neural efficiency; e.g., Amalric & Dehaene, 2016; Babiloni et al., 2009; Babiloni et al., 2010; Hänggi, Koeneke, Bezzola, & Jäncke, 2010). This is especially true for musicians (Han et al., 2009; Herholz & Zatorre, 2012; Jäncke, 2009a, 2009b; Münte, Altenmüller, & Jäncke, 2002; Zatorre, 2005). Furthermore, it was found that musicians show generally better cognitive abilities (e.g., attention, working memory performance, visual spatial abilities, speech processing) than non-musicians (Brochard, Dufour, & Despres, 2004; George & Coch, 2011; Jakobson, Lewycky, Kilgour, & Stoesz, 2008; Patston, Hogg, & Tippett, 2007; Strait, Kraus, Parbery-Clark, & Ashley, 2010; Strait, O’Connell, Parbery-Clark, & Kraus, 2014; Weiss, Biron, Lieder, Granot, & Ahissar, 2014) and that musicians process musical stimuli differentially (Bangert et al., 2006; Proverbio, Manfredi, Zani, & Adorni, 2013). However, these effects were not found for all cognitive domains (e.g., for visual memory for objects; Cohen, Evans, Horowitz, & Wolfe, 2011). 
So far, most of the studies that have compared cognitive abilities between musicians and non-musicians have investigated either auditory or higher-order (cognitive or motor) skills that are especially required for movement control, timing of the movement or perception of auditory rhythms in music (Zatorre, Chen, & Penhune, 2007). Nevertheless, although music is sometimes performed without visual material, in many cases at least two processing stages precede movement control: first, the perception of the musical notes and, second, the assignment of a meaning to the perceived note. To date, visual note perception has been sparsely investigated. Therefore, neural processing during the perception of note symbols was investigated and compared between note readers and non-note readers in the present study. The aim was to examine by means of event-related brain potentials (ERPs) whether the early visual processing of eighth notes already differs between note readers and non-note readers.

A phenomenon which occurs quite early during visual processing and which is based on prior knowledge or expertise, respectively, is the so-called Familiarity Effect (FE). The FE describes the observation that it is easier to find an unfamiliar element in a background of familiar elements than to find a familiar element in a background of unfamiliar elements (e.g., Frith, 1974; Meinecke & Meisel, 2014). It has been shown previously that the FE can show up quite early during visual processing (Becker, Smith, & Schenk, 2017; Zhaoping & Frith, 2011) and that it is, therefore, well suited to investigate the above-described research question. However, so far, the FE has mainly been examined for letters or numerical digits (Frith, 1974; Malinowski & Hübner, 2001; Meinecke & Meisel, 2014; Wang, Cavanagh, & Green, 1994). But first attempts were made to show that the FE can also be found for objects (e.g., animal silhouettes; Wolfe, 2001) or Chinese characters (Shen & Reingold, 2001). So far, it has not been investigated whether the FE can also be found for musical stimuli (e.g., notes or clefs). Therefore, the first goal of the present study was to investigate whether the FE can also be found for eighth notes at all. If so, the main research question was to investigate whether its strength differs between note readers and non-note readers. Furthermore, it was investigated by means of event-related potentials at which timepoint (i.e., at which ERP component) the FE shows up and whether this timepoint differs between groups. Event-related potentials were chosen because they can be assessed with a very high temporal resolution (in the range of milliseconds), making it possible to draw conclusions about the timing of the underlying processes. 
They have been used previously to detect differences between musicians and non-musicians when processing musical stimuli (e.g., Bouwer, Van Zuijen, & Honing, 2014; Patston, Kirk, Rolfe, Corballis, & Tippett, 2007; Seppänen, Brattico, & Tervaniemi, 2007; Tervaniemi, Just, Koelsch, Widmann, & Schröger, 2005; Yu, Liu, & Gao, 2015) or for investigating musical processing in general (e.g., Brattico, Jacobsen, De Baene, Glerean, & Tervaniemi, 2010; Istók, Brattico, Jacobsen, Ritter, & Tervaniemi, 2013; Koelsch, Gunter, Friederici, & Schröger, 2000; Koelsch, Gunter, Wittfoth, & Sammler, 2005; Nan, Knösche, & Friederici, 2006). Thus, ERPs seem to be a good means to investigate the present research question. If an ERP component occurs earlier in the note-readers group, this would indicate faster stimulus processing and, furthermore, altered physiological processing, compared to the non-note-readers group.

Three ERP components that show up at different time points and under different electrode positions were investigated. The first component was the N1 that occurs quite early (between 100 and 200 ms) in visual processing under occipital electrodes. The function of the N1 was assigned to automatic, stimulus-driven processing (Wijers, Lange, Mulder, & Mulder, 1997) and to the first orientation of attention (Luck, 1995; Luck, Heinze, Mangun, & Hillyard, 1990). The second ERP component was the posterior N2 (N2p) that can be found in the intermediate time range (between 200 and 300 ms) under posterior electrodes. The function of the N2p was assigned to target processing (e.g., Luck & Hillyard, 1994; Schubö, 2009). The last component that was investigated was the P3 that occurs later (between 300 and 600 ms) than the N1 and N2p component and that reflects higher cognitive processes as well as task difficulty (e.g., Kok, 2001). It has been found that musicians show stronger P3 amplitudes when performing an auditory discrimination task (Tervaniemi et al., 2005).

Recently, Becker and colleagues (2017) have shown that the FE for the letter ‘N’ or its mirrored version the ‘И’ can be found for the N1 and the N2p component for homogeneous textures and for the N2p and the P3 component for inhomogeneous textures. Thus, there seems to be a difference between the timing of the FE with respect to the stimulus’ difficulty. From this it can be concluded that depending on the complexity of the stimulus, different processing stages seem to be influenced, as indicated by an FE occurring at later components. This complexity could either be due to the physical stimulus properties (e.g., the stimulus homogeneity as in the study by Becker et al., 2017) or to inherent individual cognitive processes (e.g., the grade of expertise with the stimuli). Therefore, it was expected that the FE for eighth notes would show up later for non-note readers than for note readers.

Methods

Participants

Forty-seven participants (mean age 22.8 ± 4.0 years; 11 male, 36 female; 41 right-handed) took part in the experiment. Nineteen of the participants (40.4%; 23.0 ± 3.8 years; 3 male, 16 female) were assigned to the non-note-readers group. None of the non-note readers were able to read notes, and most of them (14, 77.8%) had never played an instrument or sung in a choir. The remaining 28 participants (59.6%; 22.6 ± 4.3 years; 8 male, 20 female) were assigned to the note-readers group. All note readers were able to read notes (therefore, it was expected that they were highly familiar with eighth notes) and most of them (22, 78.6%) were currently playing an instrument or singing in a choir. Note-reading ability was further controlled for by means of a self-developed note-reading test. Additionally, after the experiment, the participants were presented with an eighth note symbol and a mirrored eighth note symbol and had to state which symbol was a “real note”. Eleven (57.9%) of the non-note readers chose the wrong symbol. All participants had normal or corrected-to-normal visual acuity and reported no psychological or neurological diseases. Informed consent was obtained from all individual participants included in the study. The experiment was carried out in accordance with the Code of Ethics of the World Medical Association (Declaration of Helsinki) and was approved by the local ethics committee.

Stimuli

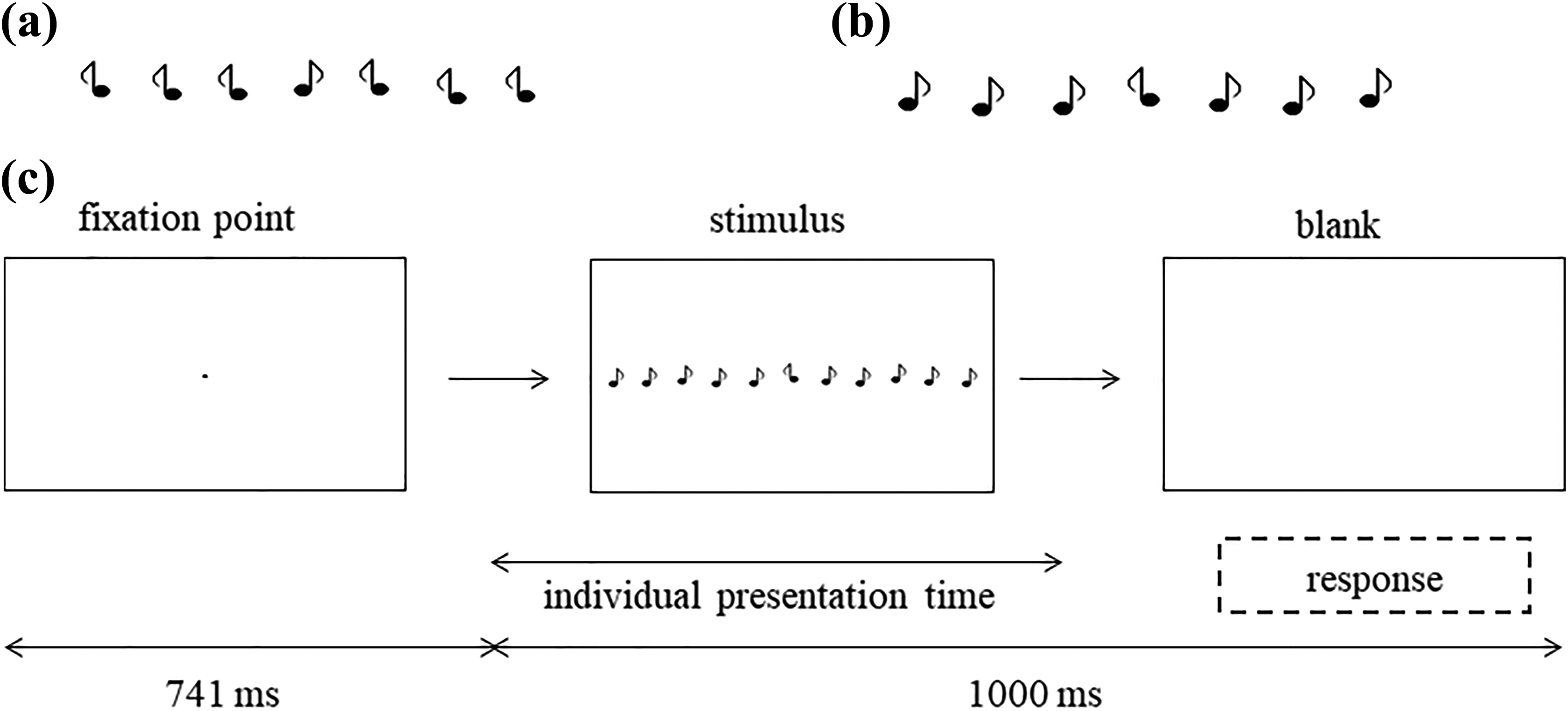

The display consisted of a row with 21 elements that were either eighth notes or vertically mirrored eighth notes (Figure 1a and b). The elements were black drawings (0.9 cd/m²) on a light-grey background (48.6 cd/m²). In half of the trials a target element was embedded. The target could occur at the ninth, 10th, 11th, 12th, or 13th position (at ± 1.8°, ± 0.9°, or 0°, respectively). Two conditions were investigated: (1) the “familiar target” condition in which the target element was a (familiarly oriented) eighth note and the background elements were (unfamiliarly oriented) mirrored eighth notes (Figure 1a), and (2) the “unfamiliar target” condition which consisted of an (unfamiliarly oriented) mirrored eighth note as the target element and familiar eighth notes as background elements (Figure 1b).

Part of the stimuli for the “familiar target” (a) and the “unfamiliar target” conditions (b). The whole stimulus consisted of 21 elements with target positions at the ninth, 10th, 11th, 12th, or 13th position. Half of the stimuli contained a target. In (c) the time course of the experiment is shown. The stimulus presentation time was adjusted individually for each participant between 47.1 and 94.1 ms, but was the same for the “familiar target” and the “unfamiliar target” condition. Responses were made by button presses.

Apparatus

The participants were seated in an electrically shielded and sound-attenuated booth in a comfortable chair with response buttons under their left and right index fingers. Stimuli were presented on a 21-inch computer monitor (Philips 201B4) with a refresh rate of 85 Hz and a resolution of 1600 × 1200 pixels. The distance between observers and the monitor was 1.10 m. Stimulus presentation was controlled by MATLAB programs using the Psychophysics Toolbox (Brainard, 1997; Pelli, 1997). Luminance measurements were conducted with a Minolta luminance meter (model LS110). Visual acuity was tested with a Rodenstock R22 vision tester (stimulus no. 212). The electroencephalogram (EEG) was recorded with 64 active electrodes (actiCap; amplifier: BrainVision actiCHamp, Brain Products, Germany) positioned on the participant’s scalp according to the international 10–20 system. All electrodes were referenced online to FCz and re-referenced offline to the average of the left and right mastoids. The sampling rate was 500 Hz. No online filters were used. Electrode impedances were kept below 20 kΩ. To control for blinks and eye movements, vertical and horizontal electrooculograms were recorded from above the right eyebrow, from below the right eye and from the outer canthi of the eyes. Data analysis was conducted with the Brain Vision Analyzer 2.0 (Brain Products, Germany).

Procedure

The two conditions (“familiar target” and “unfamiliar target”) were presented in counterbalanced blocks to the participants. The participants had to decide whether there was a target element embedded in the stimulus or not by a button press. A left finger press indicated that there was no target, and a right press that there was a target in the previously presented stimulus. The time course of the experiment is shown in Figure 1c. Each trial started with a fixation point presented for 741 ms, followed by the stimulus. The stimulus presentation times were adjusted individually for each participant so that the mean hit rates were between 30% and 85% and equal for both the “familiar target” and the “unfamiliar target” conditions. The mean presentation times were 63.3 ± 14.4 ms (min: 47.1 ms, max: 94.1 ms). A blank screen was presented after the stimulus presentation, allowing the participants to give their responses. The entire stimulus duration interval (stimulus and blank) lasted for 1000 ms. Each block consisted of 60 trials. Between seven (in the easier “unfamiliar target” condition) and 10 blocks (in the harder “familiar target” condition) were performed. This was done with the aim of having approximately the same number of trials with correct responses for averaging for both conditions (i.e., about 210 trials). The participants were instructed to keep the false alarm rates as low as possible. They were allowed to take as many breaks as they needed between the blocks. The whole session lasted about 90 minutes.
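The reported durations are consistent with whole numbers of screen refreshes at the 85 Hz refresh rate. The following minimal sketch illustrates this arithmetic; the frame counts (4, 8, and 63) are assumptions chosen to match the reported values and are not stated in the text, and the function name is hypothetical.

```python
REFRESH_RATE_HZ = 85  # monitor refresh rate reported in the Apparatus section


def frames_to_ms(n_frames: int, refresh_rate_hz: float = REFRESH_RATE_HZ) -> float:
    """Return the duration in milliseconds of n_frames screen refreshes."""
    return n_frames * 1000.0 / refresh_rate_hz


# Assumed frame counts (not given in the text) that reproduce the reported durations.
for label, n_frames in [
    ("shortest stimulus", 4),   # 47.1 ms
    ("longest stimulus", 8),    # 94.1 ms
    ("fixation point", 63),     # 741.2 ms, reported as 741 ms
]:
    print(f"{label}: {n_frames} frames = {frames_to_ms(n_frames):.1f} ms")
```

Under this assumption, the individually adjusted presentation times could only take values in steps of about 11.8 ms (one refresh).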

Data processing and analysis

Data analysis consisted of two parts: first, the analysis of the behavioral data and, second, the analysis of the EEG data. For the analysis of the behavioral data, mean hit rates, reaction times and false alarm rates were computed for the “familiar target” and the “unfamiliar target” conditions, averaged across all target positions (0°, ± 0.9°, ± 1.8°). From these mean hit rates and false alarm rates, sensitivities were computed.
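The text at this point does not specify which sensitivity measure was used. Assuming it is the signal-detection index d′ (an assumption, not confirmed by the source), the computation from a hit rate and a false alarm rate can be sketched as follows. The function name and the example rates are hypothetical.

```python
from statistics import NormalDist


def d_prime(hit_rate: float, false_alarm_rate: float) -> float:
    """Return d' = z(hit rate) - z(false alarm rate).

    Assumes the equal-variance signal detection model. Rates of exactly
    0 or 1 are not handled here and would need a correction first.
    """
    z = NormalDist().inv_cdf  # inverse of the standard normal CDF
    return z(hit_rate) - z(false_alarm_rate)


# Hypothetical rates for illustration only, not values from the study.
print(round(d_prime(0.80, 0.10), 2))  # prints 2.12
```

Because d′ combines hits and false alarms, it separates detection performance from a participant's tendency to respond "target present".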

For EEG analysis, the EEG was filtered with a 30 Hz low-pass filter (4th order) and a 0.1 Hz high-pass filter (time constant: 1.59 s, 4th order). After filtering, trials containing eye movements were excluded from further analysis. For this, an automatic algorithm implemented in Brain Vision Analyzer 2.0 was applied. Furthermore, trials containing blinks were corrected according to the procedure by Gratton, Coles, and Donchin (1983). After ocular correction, EEG data were averaged for epochs of 1100 ms, starting 200 ms prior to stimulus onset and ending 900 ms after stimulus onset, separately for target-present and target-absent trials. Target-present trials were averaged across all five target positions (0°, ± 0.9°, ± 1.8°). Trials with incorrect or no responses were excluded from further analysis. Trials with voltages exceeding ± 70 µV, voltage steps between two sampling points exceeding 50 µV/ms, voltage changes lower than 0.1 µV within 100 ms, or absolute voltage differences exceeding 300 µV within a segment were removed. After artifact rejection, up to 3% of the trials had been removed, leaving approximately 200 trials (of the previous 210 trials) for averaging, for each of the target-present and the target-absent trials, as well as for each condition. Baseline correction was performed after artifact rejection.
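The four rejection criteria can be illustrated with a minimal sketch for a single-channel segment. This is not the Brain Vision Analyzer implementation; the function name is hypothetical, and reading the 0.1 µV criterion as a low-activity check (maximum minus minimum within a sliding window of about 100 ms) is an assumption.

```python
import math

SAMPLING_RATE_HZ = 500                    # sampling rate from the Apparatus section
MS_PER_SAMPLE = 1000 / SAMPLING_RATE_HZ   # 2 ms between adjacent samples


def is_artifact(segment_uv):
    """Return True if a single-channel segment (list of µV values) violates any criterion."""
    # 1) Absolute voltage beyond ±70 µV.
    if any(abs(v) > 70 for v in segment_uv):
        return True
    # 2) Voltage step between adjacent samples steeper than 50 µV/ms.
    pairs = zip(segment_uv, segment_uv[1:])
    if any(abs(b - a) / MS_PER_SAMPLE > 50 for a, b in pairs):
        return True
    # 3) Low activity (assumed reading): range below 0.1 µV in any ~100 ms window.
    win = int(100 / MS_PER_SAMPLE)  # 50 samples
    for start in range(len(segment_uv) - win + 1):
        chunk = segment_uv[start:start + win]
        if max(chunk) - min(chunk) < 0.1:
            return True
    # 4) Overall voltage difference above 300 µV within the segment.
    return max(segment_uv) - min(segment_uv) > 300


# Synthetic 1100 ms segment (550 samples): a 10 Hz, 10 µV sine wave.
clean = [10 * math.sin(2 * math.pi * 10 * i / SAMPLING_RATE_HZ) for i in range(550)]
print(is_artifact(clean))          # False: no criterion is violated
print(is_artifact([0.0] * 550))    # True: flat line triggers the low-activity check
```

In a multi-channel recording, a trial would typically be dropped if any channel of interest is flagged.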

Event-related brain potential analysis was carried out in two steps: first, the ERP latencies were estimated for the peak maxima in pre-defined time windows in which the ERPs usually occur (120–180 ms for the N1, 240–330 ms for the N2p, and 350–520 ms for the P3; e.g., Eimer, 1993; Luck & Hillyard, 1994; Schaffer, Schubö, & Meinecke, 2011). Second, mean amplitudes were calculated in an interval around each participant’s individual peak maximum for the given ERP component. This interval was set to ± 10 ms around the peak maximum for the narrow N1 and N2p components, and to ± 30 ms for the broader P3 component. Peak detection was done semi-automatically: first, an automatic peak detection algorithm was applied (searching for the local peak in the pre-defined time interval); second, the detected peak was visually inspected and corrected if necessary. The ERPs (latencies and amplitudes) were each estimated under three electrodes under which they are usually found. The N1 was estimated under O1, Oz, and O2, the N2p under PO3, POz, and PO4, and the P3 under P1, Pz, and P2. For further statistical analysis, mean latencies and amplitudes over the three investigated electrodes were calculated for each ERP component. For ERP evaluation, repeated-measures ANOVAs with the within-subject factors “target type” (“familiar target” vs. “unfamiliar target”) and “target presence” (“target absent” vs. “target present”) and the between-subjects factor “group” (“non-note readers” vs. “note readers”) were computed, separately for the N1, N2p, and P3 components, and separately for the latencies and amplitudes. All statistical analyses were performed using IBM SPSS Statistics 24.
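The automatic part of the two-step procedure (peak latency in a pre-defined window, then mean amplitude around that peak) can be sketched as follows. This is an illustration under stated assumptions, not the software routine used in the study: it takes the most extreme value in the window as the peak, omits the visual inspection step, and uses hypothetical function names and a synthetic waveform.

```python
import math

SAMPLING_RATE_HZ = 500   # sampling rate from the Apparatus section
EPOCH_START_MS = -200    # epochs begin 200 ms before stimulus onset


def ms_to_index(t_ms):
    """Convert a time relative to stimulus onset into a sample index."""
    return round((t_ms - EPOCH_START_MS) * SAMPLING_RATE_HZ / 1000)


def index_to_ms(i):
    """Convert a sample index back into a time relative to stimulus onset."""
    return EPOCH_START_MS + i * 1000 / SAMPLING_RATE_HZ


def peak_and_mean_amplitude(erp_uv, window_ms, half_width_ms, polarity):
    """Return (peak latency in ms, mean amplitude in µV around the peak).

    polarity is -1 for negative components (N1, N2p) and +1 for the P3.
    """
    lo, hi = ms_to_index(window_ms[0]), ms_to_index(window_ms[1])
    # Most extreme sample of the requested polarity within the search window.
    peak_idx = max(range(lo, hi + 1), key=lambda i: polarity * erp_uv[i])
    half = round(half_width_ms * SAMPLING_RATE_HZ / 1000)
    around = erp_uv[max(0, peak_idx - half):peak_idx + half + 1]
    return index_to_ms(peak_idx), sum(around) / len(around)


# Synthetic averaged waveform: a negative deflection of -5 µV centered at 150 ms.
erp = [-5 * math.exp(-((index_to_ms(i) - 150) / 20) ** 2) for i in range(550)]
latency, amplitude = peak_and_mean_amplitude(erp, (120, 180), 10, polarity=-1)
print(latency, round(amplitude, 2))
```

For the P3, the same call would use the 350–520 ms window, a half-width of 30 ms, and `polarity=+1`.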

Results

Behavioral data

A comparison of the behavioral data (sensitivity

Comparison of the mean sensitivities

Sensitivity

For sensitivity, a main effect of target type (

Reaction times

For the reaction times, main effects of target type (

EEG data

As an example, the EEG data (averaged over all participants) for the Oz, POz, and Pz electrodes are shown in Figure 3, together with topographic maps for the target-present trials. Comparisons of the latencies and amplitudes of all the investigated ERP components between the note readers and the non-note readers are shown in Figures 4 and 5.

Event-related brain potentials under the Pz, POz, and Oz electrodes and topographic maps for the target-present trials for the “familiar target” (panels a, c, e) and the “unfamiliar target” (panels b, d, f) conditions.

Mean latencies for the “familiar target” and the “unfamiliar target” conditions, separately for the target-absent (a) and target-present trials (b), and for the N1, N2p, and P3 components.

Mean amplitudes for the “familiar target” and the “unfamiliar target” conditions, separately for the target-absent (a) and target-present trials (b), and for the N1, N2p, and P3 components.

N1

N2p

P3

Discussion

The aim of the present study was to investigate whether the visual perception of eighth notes differs between note readers and non-note readers. Therefore, the neural mechanisms that underlie the FE for eighth notes were compared between groups. As expected, a strong FE was found for the behavioral data for both groups. However, no difference was found in the strength of the FE between note readers and non-note readers. Furthermore, it was investigated whether the timepoint of the FE emergence would differ between groups. It has been shown previously that the timepoint of the FE differs with respect to the stimulus complexity (i.e., the heterogeneity of the stimuli; Becker et al., 2017). In the present study, an earlier onset of the FE would have indicated a faster processing in the note-readers compared to the non-note-readers group. However, this could not be confirmed by the present data. In none of the ERP components was a difference found between the note readers and the non-note readers. From this it can be concluded that the same processing mechanisms were involved in both groups and that, thus, differences in experience with notes do not influence visual perception of the symbols.

The present findings are in contrast to the findings of Ro, Friggel, and Lavie (2009) who found that musicians processed visual musical stimuli (images of instruments) with less cognitive load than non-musicians. However, the stimuli that were used in the present study were less complex than images of instruments and have probably been processed with low attentional load in both groups. Most previous research has focused on auditory processing of musical stimuli (e.g., Kumagai, Arvaneh, & Tanaka, 2017; Sanju & Kumar, 2016; Seppänen et al., 2007; Tervaniemi et al., 2009; Tervaniemi, Rytkönen, Schröger, Ilmoniemi, & Näätänen, 2001) or auditory (e.g., speech) processing in general (e.g., Musacchia, Strait, & Kraus, 2008; Strait, Kraus, Skoe, & Ashley, 2009a, 2009b). These previous studies found that the auditory system differs between musicians and non-musicians. Furthermore, it was found that other auditory, motor, and visual neuronal networks become more activated in musicians than in non-musicians when they watch others playing an instrument (Haslinger et al., 2005; Haueisen & Knösche, 2001; Huang et al., 2010; Patston et al., 2007; Proverbio, Calbi, Manfredi, & Zani, 2014). However, these processes are much more complex than those that were investigated in the present study. Furthermore, the results of the present study are in line with the findings of Strait and colleagues (2010) who found that musical experience led to improved auditory attention but did not improve visual attention. They are also in line with the findings of Cohen and colleagues (2011) who found better auditory but not visual memory in musicians than in non-musicians. Furthermore, Clayton and colleagues (2016) also found better auditory working memory and better performance in a spatial hearing task but not better visual attention in musicians than in non-musicians. 
In contrast, George and Coch (2011) found better auditory and better visual working memory in musicians than in non-musicians. To summarize, most studies support the view that musicians perform better in the auditory domain, but not necessarily in the visual domain – although there are some exceptions.

Besides the main research question, the present study offers interesting insights into the mechanisms underlying the FE. First, it was shown that the FE can also be found for symbols and not only for letter detection, numerical digits or the detection of animal silhouettes (e.g., Frith, 1974; Wolfe, 2001). Furthermore, there is an ongoing debate as to whether the FE is a target effect or a background effect. The first account stresses the processing of the context elements and assumes that familiar context elements are easier to group and to suppress (Richards & Reicher, 1978; Wang, Cavanagh, & Green, 1994). The second account emphasizes features of the target stimulus and argues that a non-familiar target stimulus creates a higher saliency and will, therefore, be more easily detected (Hawley, Johnston, & Farnham, 1994; Johnston, Hawley, & Farnham, 1993; Lubow, Kaplan, Abramovich, Rudnick, & Laor, 2000). The design of the present study might help to investigate this more closely. If the FE had been found only in the target-absent trials, this would mean that it was a background effect. If it had been found only in the target-present trials (and not additionally in the target-absent trials), this would mean that it was a target effect. If the FE had been found in both the target-absent and the target-present trials, the conclusion would be less clear and, probably, both processes would have been affected by familiarity. In this study, the FE was found for the latency of the early N1 component for the target-absent trials only, leading to the conclusion that the very early processing of the background elements was faster in the easier “unfamiliar target” condition, and that, thus, the familiar elements were processed faster than the unfamiliar elements at this processing stage. At later (mid-early and late) processing stages, the amplitude of the N2p (for the target-present trials) was affected by familiarity as well. 
From this, it is concluded that the FE is a target effect in the intermediate time range and that the familiar target elements were processed more intensively (indicated by stronger N2p amplitudes) than the unfamiliar target elements. At the latest processing stage that was investigated, the FE was also found only for the target-present trials and was, thus, again a target effect. The P3 occurred later and had a reduced amplitude in the more difficult “familiar target” condition. This is in line with the general assumption that the P3 amplitude is lower for more difficult tasks. Therefore, it is concluded that familiarity influences different processing stages differently (i.e., it affects background processing during the very early stage and target processing at the mid-early and late stages).

The present study is subject to some limitations. One is that the analysis of the P3 component was restricted to the parietal P3 (under the P1, Pz, and P2 electrodes). This component is often referred to as the P3b component, which is associated with attention that is related to memory processes (Polich, 2007). Therefore, the processes that are associated with the investigated P3b component fit well with the research question that was investigated. As expected, an effect of familiarity on this component was found. However, there is a further P3 component (often called the P3a component) which can be found under more frontal electrodes and which is mainly related to frontal-driven task processing (Polich, 2007). Since these frontal cognitive processes were not the subject of the present study (which deals with visual processing) and because no differences between note readers and non-note readers were expected, this component has not been investigated. If a group effect had been found, it would have been a memory (or learning) effect which should have been found in the P3b component.

One further limitation is that the number of participants in each group was not equal (approximately 60% note readers and 40% non-note readers). However, because this ratio is not very far from equal sample sizes, it was assumed that the results would remain the same with a 50/50 ratio of note readers to non-note readers.

Furthermore, one might argue that the time windows that were used for ERP analysis were relatively small in the present study. These were chosen with the aim of making the analysis comparable with the study by Becker and colleagues (2017), who also investigated the time course of the FE by means of ERPs. An additional analysis with longer time windows was performed, but the results remained the same (i.e., the same ERP components were affected by familiarity and no difference between note readers and non-note readers was found); thus, they are not reported here.

One more, and probably the most severe, limitation is that the note-readers group did not include professional musicians, but instead people who could read notes and who played instruments in their leisure time. It was expected that a high familiarization with the note symbols had been acquired during the process of learning the reading of notes. However, most people in Western societies might be familiar with note symbols to some degree, even when they do not know what each symbol musically exactly represents. Although this seems very plausible, there is a residual uncertainty that both groups were (almost) to the same degree familiar with the eighth notes used in this study. Therefore, it should be investigated in future research whether the findings of the present study can be generalized to the visual perception of other more complex stimuli than eighth notes (e.g., clefs or instruments). Furthermore, future research could investigate a sample of professional musicians with a higher degree of expertise than the note-readers group that was used in the present study.

A further variant of the present experiment could be to change the mirrored eighth notes to other notes (e.g., quarter notes) which have different connotations for note readers and non-note readers. For non-note readers, a change from eighth notes to quarter notes would lead to a simplification of the stimulus. For note readers, it would mean a prolongation of the auditory image related to the visual stimulus while playing or singing. It would be very interesting to investigate in future research whether this also leads to a prolongation in ERP components in the note-readers group compared to non-note readers.

Conclusion

It is concluded that the early visual perception of eighth note symbols does not differ between note readers and non-note readers. The present study thus supports the view that the very early and mid-early visual processing of very simple stimuli does not differ between these groups. Future research is needed to verify this for more complex musical stimuli (e.g., clefs) and for professional musicians with a very high degree of familiarization with eighth notes. These findings extend previous knowledge about brain plasticity with respect to expertise with musical stimuli.

Acknowledgements

The author thanks Carmen Lewa and Nikoletta Lippert for data collection and Axel Newe for helpful comments on the manuscript.

Declaration of conflicting interests

The author declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: Linda Becker was supported by the Bavarian Equal Opportunities Sponsorship – Förderung von Frauen in Forschung und Lehre (FFL) – Promoting Equal Opportunities for Women in Research and Teaching.

Peer review

Mari Tervaniemi, Cicero Learning.

One anonymous reviewer.