Abstract

This article introduces the analytical approach of practice mapping, using vector embeddings of network actions and interactions to map commonalities and disjunctures in the practices of social media users, as a framework for methodological advancement beyond the limitations of conventional network analysis and visualization. In particular, the methodological framework we outline here has the potential to incorporate multiple distinct modes of interaction into a single practice map; can be further enriched with account-level attributes such as information gleaned from textual analysis, profile information, available demographic details, and other features; and can be applied even to a cross-platform analysis of communicative patterns and practices. The article presents practice mapping as an analytical framework and outlines its key methodological considerations. Given its prominence in past social media research, we draw on examples and data from the platform formerly known as Twitter to enable experienced scholars to translate their approaches to a practice mapping paradigm more easily, but point out how data from other platforms may be used in equivalent ways in practice mapping studies. We illustrate the utility of the approach by applying it to a dataset where the application of conventional network analysis and visualization approaches has produced few meaningful insights.

Keywords

Introduction

Social network analysis has become a key tool for the study of user actions and interactions on contemporary social media platforms, and beyond. Often, however, such analyses remain somewhat superficial, merely presenting the standard network graphs produced by the key visualization algorithms implemented in popular tools like Gephi (Bastian et al., 2009), or identifying the clusters of tightly connected accounts highlighted by popular modularity algorithms like Louvain (Blondel et al., 2008), without sufficient consideration of the limitations of such approaches. At worst, and especially in the hands of inexperienced researchers, such attempts to make sense of networks result in network “furballs” of severely limited value (and the authors of this article readily admit to having produced such visualizations ourselves at times); even if the network analysis and visualization produces networks with more distinct features, however, they often fail to represent more than a handful of obvious patterns, reducing complex and multilayered action and interaction patterns into overly simplified graphs and statistics.

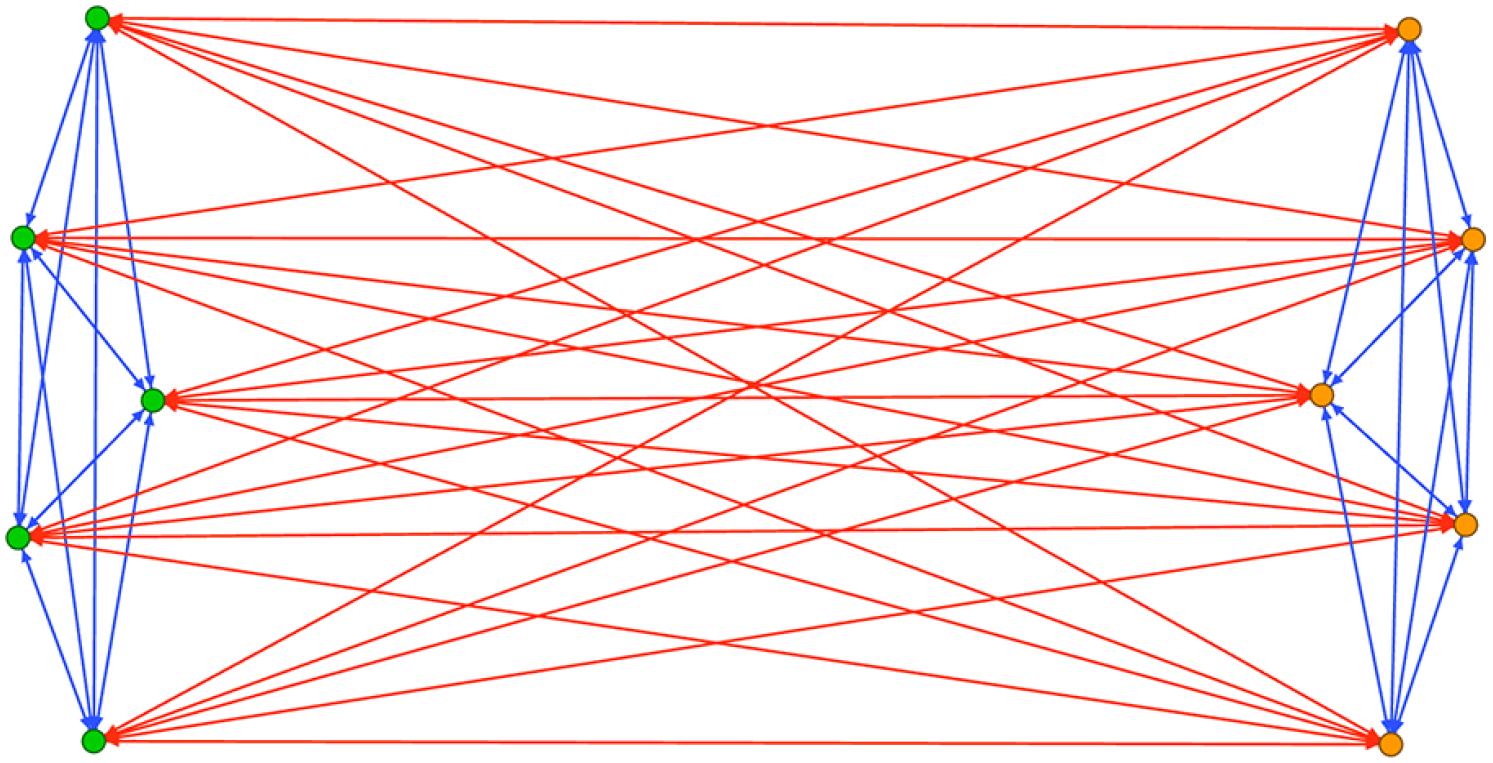

Figure 1, for instance, shows a typical network graph of Twitter interactions (@mentions and retweets) from a controversial political debate. At face value, the network visualization itself, as well as the interaction data underlying it, provide very limited insight into the structures and dynamics of interaction within the participating group of accounts; while the modularity algorithm appears to have identified a number of distinct network clusters (distinguished by color), their validity must be questioned since the visual network structure does not provide any strong evidence that supports their existence. It is possible to derive meaningful insights from such data, however, as we will show in the following.

A typical “furball” network of @mentions and retweets on a controversial topic on Twitter.

A number of key factors combine to produce such pragmatic but undesirable simplifications. First, in the context of social media platforms (as well as in many other comparable communicative situations), user actions and interactions are often multimodal: on Twitter, for instance, users were able to @mention, retweet, quote-tweet, follow, and direct-message each other, but in many publications drawing on Twitter data some or all of these interactions were combined to produce a single connection (i.e. network edge) between two accounts, whose strength (i.e. edge weight) represented the total number of @mentions, retweets, quote-tweets, and/or other forms of interaction from one account to another. Alternatively, some publications resorted to presenting multiple separate graphs of the different interaction types—Williams et al. (2015), for instance, show separate graphs of @mentions, retweets, and follower-followee relationships for each of the three Twitter hashtags they examined. As Howison et al. (2011) argue, the conceptual choice behind selecting one type of interaction over others or combining different types of interactions is rarely theorized, which affects the interpretability of such studies. Extra dimensions are often simplified into single edges between the nodes, which eliminates crucial distinctions in communication practices from the overall network.

Second, whether publications distinguish between different interaction types in this way or combine them into a single network edge to represent the connection between two accounts, such analyses also often struggle to fully consider (and visualize) directionality. Most social media interactions are not inherently reciprocal: while friendship relations between Facebook accounts are mutual, for instance, the same is not true for follower relations on Twitter, and communicative interactions between accounts (@mentions or retweets on Twitter, comments on Facebook or Reddit, etc.) are generally reciprocal only if the mentioned account chooses to respond. Standard network analysis tools like Gephi do provide the means to take the directionality of network edges into account in both analysis and visualization—but such details are often lost in the published research. (Gephi, for instance, uses curved edges to embed a clockwise connection logic, but even articles that draw on this functionality rarely explain how the curvature of an edge indicates which is its source and which is its target node.)

Third, even if the directionality of the underlying network has been reflected in the network data, common implementations of network modularity algorithms (colloquially known as “community detection” algorithms, though of course the clusters they detect may not represent true communities in the sense used by media, communication, and cultural studies scholars) must often necessarily ignore this directionality to produce meaningful results. The powerful Louvain algorithm (Blondel et al., 2008), for instance—available as a Gephi module and a stand-alone package for Python and other programming languages—does not take directionality into account as it identifies network clusters. This is problematic, since it will end up identifying, for instance, both the bots and the genuine users caught up in a mob of bot accounts trolling a number of target users through repeated @mentions as a tightly packed cluster (or even as a “community,” in the language Louvain itself uses), even if the targeted users at the center of the mobbing remain entirely passive; or assign politicians, journalists, and ordinary users to a large cluster of accounts discussing politics if those ordinary users tweet frequently enough at the accounts of politicians and journalists, even though the latter two types of accounts engage only with each other and never respond to members of the general public. Further, even when modularity algorithms take directionality into account, they will usually assign each account to one distinct network cluster, rather than also allowing for boundary cases: accounts whose communicative practices place them more or less equally in the affinity of two or more clusters. This does not sufficiently reflect patterns in most real-world social networks, where membership is rarely binary but instead expressed on a sliding scale from full commitment toward much more casual participation.

We provide this brief list of key concerns about the limitations of conventional social network analysis and visualization not as a fundamental critique of network analytics as a valuable component of the communication researcher’s toolkit—we have used many of these techniques ourselves, and at times struggled with their limitations, yet value the contributions they can make if used with the necessary care and rigor. However, we also suggest that social network analysis—or, more to the point, the analysis of participant practices in social networks—need not remain constrained by these limitations. Rather, in this article we present the concept of practice mapping, and the use of vector embeddings of network actions and interactions as a means to map such practices, as a framework for methodological advancement beyond the limitations of conventional network analysis and visualization. In particular, the methodological framework we outline here has the potential to incorporate multiple distinct modes of interaction into a single practice map; can be further enriched with account-level attributes such as information gleaned from textual analysis, profile information, available demographic details, and other features; and can be applied even to a cross-platform analysis of communicative patterns and practices.

This article, then, is designed to present practice mapping as an analytical framework and outline its key methodological considerations. Given its prominence in past social media research, we draw on examples and data from the platform formerly known as Twitter to enable experienced scholars to translate their approaches to a practice mapping paradigm more easily, but point out throughout how data from other platforms may be used in equivalent ways in practice mapping studies. We begin our discussion with an introduction to the concept of practice mapping, explaining why we have chosen this term; we then outline the key steps in its methodological implementation; this is followed by a brief example that illustrates its utility. We conclude with a discussion of the applicability and limitations of the practice mapping approach, and an outlook on further developments.

Why “practice mapping”?

As we have noted, conventional network analysis and visualization maps unidirectional and/or mutual connections between two accounts that might represent multiple modes of interaction between them, and often flattens them into a single network representation. This often oversimplifies the structure of the network, to be able to perform any network analysis at all.

Consider the example shown in Figure 2: here, two opposing sides of a polarized debate (shown in green and orange, respectively) engage in in-group support by retweeting each other’s posts (blue edges), as well as in out-group animosity by critically @mentioning members of the opposing side (red edges). A visualization of this network in a standard network analysis tool like Gephi would identify members of both groups as belonging to the same densely connected network cluster because of the strength of their connections both within and across the two sides (Figure 3). This is correct from a purely functional point of view, but any interpretation of such a cluster as a “community” in the colloquial as well as scholarly sense of the term—for example, as a community of practice (Lave & Wenger, 1991)—would be inherently incorrect.

Simplified representation of a highly polarized network—members of Group A (green, left) and Group B (orange, right) each only retweet within their own group (blue lines), and only @mention their opponents (red lines).

A Gephi visualization of the network between Group A (green nodes) and Group B (orange nodes), using the Force Atlas 2 algorithm. Both groups exclusively engage in reciprocal in-group retweeting (blue lines between members of the same group) and out-group @mentioning (red lines between members of opposing groups).

Instead of directly mapping the networks of connections between accounts, then, it would be preferable to understand the activity and interactivity patterns of each account as its distinct participation practice, and to assess how similar this practice is to the distinct practices of all other accounts. Accounts in Group A that are frequently retweeting their friends and frequently @mentioning their opponents would thus be identified to have distinctly different practices from accounts in Group B that are frequently retweeting their friends and frequently @mentioning their opponents (Figure 4).

Gephi visualization of the similarities in tweeting practices between members of the two groups, with gray lines representing the strength of similarity between the practices of any pair of two accounts.

We describe this transformation of the underlying connection network into a network showing similarities between account actions and interactions as practice mapping because we can understand the sum total of each account’s actions and interactions—including its patterns of engagement with other accounts, but also various other features from its use of language to its sharing of URLs, images, and videos—as its unique individual configuration of practices.

Scholars of sociology and media and communication understand “practices” as patterns of “doings and sayings” in a specific setting (Bakardjieva, 2020; Schatzki, 1996). Developed within fields of philosophy (Schatzki, 1996; Schatzki et al., 2000; Turner, 1994), education (Engeström, 2001), and organizational studies (Gherardi, 2000), the concept has been introduced to media and communication theory by Couldry (2004).

Couldry (2012) argued that the concept of practices is crucial for making sense of various phenomena ranging from everyday activities to political and social activism, as it allows studies to capture patterns of activity that display a level of regularity and sociality. While Couldry himself did not elaborate on the distinctions between the notions of action, activity, and practice, studies from the fields of practice and activity theory (Kuurti, 1995; Schatzki, 2012) distinguish between the more atomic doings, which are called actions or activities depending on the theorist, and the practices that arise from such doings being repeated by a group of people.

There is no scholarly consensus on how often or how many times an action needs to be performed to be considered a practice. What is more important is for practices to be informed by specific knowledge and values, directed toward specific objects, and undertaken in ways that make relevant others recognize them as practices (Nicolini, 2012). For actions using the affordances of social media platforms, such recognition may be achieved by tapping into a particular “texture of expression” (Papacharissi, 2015, p. 33). Drawing from Bourdieu’s (1977, 1992) articulation of practice, Papacharissi (2015) sees practices on social media as emerging organically through processes of socialization. Other social media scholars have increasingly adopted the notion of practice, though many have utilized the concept without deeper conceptualization. While this is justifiable for a notion common to everyday language (Bakardjieva, 2020), this complicates both the empirical identification of practices and the further theoretical synthesis of the results of such studies.

There are some recent efforts to operationalize practice from a media and communication perspective. Most notably, Mattoni (2020) has proposed an operational definition of practices that positions them as phenomena more general than actions, performed by social actors through their use of skills in interaction with material objects and systems of meaning. Building on this definition, she developed a method of interviewing that produces “media practice maps” which allowed her to explore political activists’ daily practices of media use. To examine the practices of larger groups of users engaging with transnational movements like MeToo, Mendes and colleagues (2023) have enhanced the definition of platform vernaculars (Gibbs et al., 2015, p. 257)—or “unique combinations of styles, grammars, and logics,” specific to each social media platform within its geographic, social, and political contexts—to introduce a notion of “vernacular practices” which can be identified through thematic analysis (Braun & Clarke, 2006). Building on the results of a cross-national interview study and systematic literature review, Trillò and colleagues (2022) produced a typology of social media rituals which they understand as “typified communicative practices” (p. 1). Finally, many studies of online communities, including seminal works such as Baym (1999), Cherny (1999), and Tepper (1997), used ethnographic methods to explore their doings and sayings, and these practices’ role in forming and sustaining such groups of users.

While these studies excel at capturing the social, symbolic, and material aspects of practices of smaller groups of users, qualitative approaches such as interviews or close reading are difficult to replicate at scale. This prevents us from understanding how practices are distributed within and across distinct groupings of users, how their prevalence changes with time, or whether and how they co-occur. Focussing on practices of knowledge-sharing on StackOverflow, Hillman and colleagues (2023) have demonstrated that “trace ethnography” (Geiger & Ribes, 2011), or statistical analysis of large-scale numerical and categorical data, complemented by interviews with high reputation scores and time series analysis of participants’ data, can provide insights on how trajectories of knowledge-sharing change as participants become more experienced. Following Howison and colleagues’ (2011) signposting of practice theory as a fruitful theoretical grounding for network analysis, we argue that constructing a map of practices can offer a view of the connections, ruptures, and overlaps between the doings and sayings of much larger groups of users (p. 790). A map of practices, constructed in the way we outline in the following, can also serve as a sampling frame for deeper contextual or interview analysis, which then adds the firsthand perspectives of practitioners to the birds-eye view offered by the map. Regardless of the method, such deeper analysis should aim for identifying the elements of practices that, in addition to action, support these collective patterns of doing and saying—namely, shared goals, values, knowledge, and material environments (Kasianenko et al., 2024; Reckwitz, 2002; Shove et al., 2012). The identified practices could be performed by members of a community of practice in its original definition, who share a sense of common identity, mutual accountability, and a repertoire (Wenger, 1998). However, as Gherardi and colleagues (1998) note, it is also valuable to identify sustained and collective activities where the community aspect is not as clear-cut. This means that practice mapping could be applied to a broader range of communicative formations, such as “issue publics” (Burgess & Matamoros-Fernández, 2016), or “hashtag publics” (Bruns & Burgess, 2015).

We note that the practice mapping approach we are outlining here is distinct from other well-known “mapping” approaches such as issue mapping (Burgess & Matamoros-Fernández, 2016; Marres, 2015) or controversy mapping (Venturini & Munk, 2021), for two reasons. First, these approaches continue to draw on conventional analysis and visualization of interaction networks between social media accounts, while practice mapping abstracts from these direct representations of communicative networks on social media platforms by mapping instead the patterns of similarity between the communicative actions—practices—of accounts more generally. This distinction also holds for advanced network mapping methods like the node2vec technique, which enhances conventional network mapping by conducting random walks through such network graphs to improve the classification of network neighborhoods (Grover & Leskovec, 2016): while it adds new network analysis opportunities, it does not inherently change the underlying network’s specific focus on interactions rather than the broader range of practices that our approach incorporates.

Second, issue and controversy mapping by definition focus on distinct topics and events, as identified for instance by a shared hashtag that is used by participating groups of accounts. While practice mapping can be applied to such datasets, too (as our discussion of the #robodebt example below will demonstrate), the existence of such a distinct issue or controversy is not required for its application: practice mapping can be used just as easily to identify distinct practices within a dataset of everyday activities within a given communicative context. The datasets our approach is applied to need not be determined by the existence of a thematic hashtag or other marker of a shared topic of discussion.

We also stress again that—in spite of our use of examples from Twitter as a convenient and well-understood platform example—the concept of practice mapping is by no means limited to this platform; indeed, we expect that the approach we outline here can be adapted for research tasks well outside the study of social networks in a narrow sense. As we have noted, an account’s practices can include how it interacts with other accounts (in other words, depending on a platform’s interactive affordances, how it views, clicks on, mentions, replies to, comments on, shares, likes, reacts to, up- and down votes, mutes, blocks, reports, and otherwise responds to other accounts’ posts); how it contributes original content of its own (e.g. what words, images, videos, URLs, hashtags, emoji, and other features it includes in its posts); where on the platform it does so (what pages, groups, hashtags, subreddits, discussion fora, group chats, channels, or other communicative spaces it posts to); what information about itself it or the platform provides (i.e. what profile details, activity metrics, or platform verification and participation badges are available for the account); or even when (during what hours of the day or days of the week) it is usually active. If available and ethically appropriate, such information might even be further enriched with other external information (for instance, party membership and roles for politicians’ accounts; news organization for journalists’ accounts; etc.).

This does not imply that all such features are or should be treated as equivalent and exchangeable; how they might be weighted in an application of the practice mapping approach will depend on the specific questions to be explored by the research. While we cannot provide universally applicable guidelines for every possible use case, in the following section we discuss and illustrate how to operationalize the practice mapping framework for a given dataset, and how to consider the implications of weighting different communicative features.

Practice mapping in practice

The central methodological innovation at the core of the practice mapping approach is to treat the interaction data that conventional network analysis would have used as a direct input, as well as any other activity patterns that can be derived from the data for each account, as input for an intermediary vector embedding stage of the data processing. Using the simple example of two polarized groups that we visualized in Figures 2–4, for instance, the first account in Group A—which we will name A1—engaged in the directed interactions shown in Table 1.

Retweet and @mention patterns for account A1.

In other words, A1 retweeted only the four other accounts in Group A (but not itself), and it @mentioned only the five accounts in Group B (but not any of the members of its own group). By treating every possible combination of account name and tweet type as a unique dimension, this means that we could represent A1’s interaction practice as the 20-dimensional practice vector < 0, 1, 1, 1, 1, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 1, 1, 1, 1, 1 > . If A2 similarly retweeted each account in Group A but itself, and @mentioned every account in Group B, its vector would be < 1, 0, 1, 1, 1, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 1, 1, 1, 1, 1 >, and so on. If accounts in Group B showed the opposite pattern, the comparison between vectors for A1, A2, B1, and B2 would look as shown in Table 2.

Comparison of interaction vectors for accounts A1, A2, B1, and B2.

Respectively, the pairs A1 and A2, and B1 and B2, each show considerably similar (but not entirely identical) practices, therefore, but there are no overlaps whatsoever between the practices of accounts in Group A and those of accounts in Group B.

In a second step, we can then apply a standard vector comparison approach to determine the similarity between the distinct practices of each pair of accounts. One common metric for doing so is cosine similarity, which is computed by taking the quotient of the dot-product of two vectors over the product of their magnitudes. In other words, cosine similarity parametrises the “pointing in the same direction”-ness of two vectors within a vector space as a comparative metric that produces real-valued outputs between 0 (not at all similar) and 1 (entirely identical). For the four sample accounts above, this results in cosine similarities of 0.89 between each pair of accounts in Group A and each pair of accounts in Group B, and cosine similarities of 0 for each pairing of an account of Group A with an account from Group B. (These cosine similarities never reach the maximum value of 1 because—at least in our theoretical example—none of the accounts ever retweets itself, so that retweet patterns even among accounts in the same group are never entirely identical.)

It is important to note here that these comparisons between the practice vectors for each pair of accounts are symmetrical: that is, the comparison of the vector for A1 with the vector for A2 produces the same value as the reverse comparison of A2 with A1. This halves the number of computational comparisons that need to be performed to compute these similarities; it also means that we can now analyze and visualize the set of pairwise comparisons between all accounts’ practice vectors as an undirected network. For our simple thought example, a visualization of the practice mapping network—where each account is a distinct network node, and the similarities between each pair of accounts’ practices are represented by an edge of an appropriate weight between 0 and 1—would result in a network map identical to that presented in Figure 4 above.

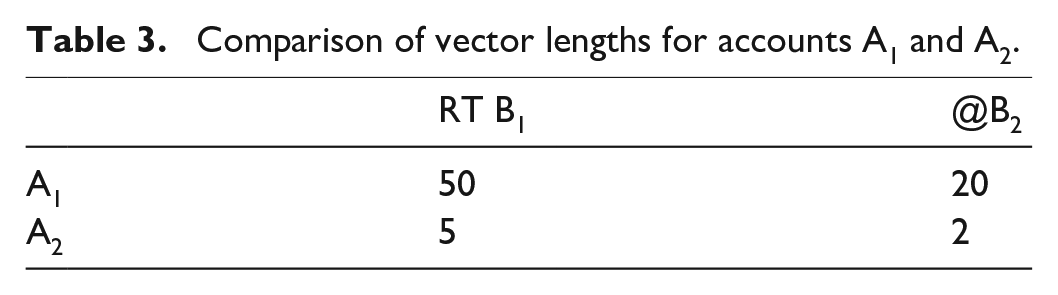

The example we have used here, however, represents an unusual case where all accounts directed exactly one retweet and/or one @mention toward those accounts they chose to interact with. This is highly unlikely to occur in everyday practice. Taking a practice mapping approach, then, how should we treat cases where the two accounts we intend to compare show similar overall patterns in their engagement with others in the network, but have different levels of activity? For instance, if A1 retweets B1 50 times and @mentions B2 20 times, and if A2 retweets B1 five times and @mentions B2 two times, the overall distribution of their interactions across the network is identical, yet the volume is different by a factor of 10—or in other words, the directionality of A1’s and A2’s vectors is identical, but their lengths are different, as shown in Table 3.

Comparison of vector lengths for accounts A1 and A2.

The various available measures of vector comparison can be affected by such differences. This may be welcome in contexts where activity volumes matter; elsewhere, however, we would still want to treat two accounts with the same interaction patterns as equivalent even if one account was somewhat more active than the other. Cosine similarity chiefly takes into account the angle between two vectors, rather than their lengths, and is therefore relatively robust; other similarity measures, such as Euclidean distance, are affected by differences in vector length considerably more strongly. Alternatively, to avoid impacts from different vector lengths, we can normalize the values for each dimension of an account’s vector by dividing them by their sum (in our example above, 50 + 20 = 70 for A1 and 5 + 2 = 7 for A2, as shown in Table 4.

Normalized vector lengths for accounts A1 and A2.

If we now apply cosine similarity to these normalized interaction vectors for A1 and A2, their practices will be regarded as identical—and in many applications of the practice mapping approach, this is likely to be the preferable outcome.

In spite of the eradication of activity volume differences that results from the normalization of vector lengths, it will usually still remain useful to filter the least active accounts from the dataset before the systematic comparisons between the accounts’ interaction vectors that practice mapping requires are applied. This has conceptual as well as practical reasons: at a conceptual level, the practices of accounts that show only very limited activity may well be similar to those of accounts that are highly active (for instance, after normalizing, the practices of an account A1 that @mentions each of B1 and B2 once can be considered to be identical to those of an account A2 that @mentions each of B1 and B2 1000 times), but for a highly active account we can safely assume that such a pattern represents clear intention, while for a largely inactive account the pattern may be merely accidental. To include such accidental matches in an analysis of the predominant practices that may be found in the dataset would be misleading; much as in many conventional network visualizations, then, it remains advisable in practice mapping, too, to define an appropriate threshold of minimum participation that accounts need to meet before they can be considered in the analysis. (The level of that threshold will depend on the nature of the dataset and the activities it describes; there is no universal solution for its selection.)

At a practical level, too, this reduction of the source dataset to those accounts which meet a minimum activity threshold is useful, as it reduces the computational load of the vector comparisons we must perform. Although, as we have noted, these cosine similarity calculations are symmetrical (so that we do not need to compare A1 to A2 and A2 to A1), for any given set of accounts A1 to An we still have to calculate the cosine similarity for each pair Ai and Aj where j is greater than i; this means that the computational load still grows at the rate of n2/2. Removing accounts with low levels of activity from the set beforehand can thus substantially reduce this load. Beyond this, more sophisticated approaches to reducing the load of performing pairwise comparisons for large-scale datasets could employ sparse matrices or non-linear dimensionality reduction (McInnes et al., 2018).

The systematic calculation of the cosine similarities for the normalized activity patterns of each pair of accounts that remain in our dataset after filtering out the least active accounts will thus finally result in a similarity value between a pair of accounts Ai and Aj that ranges between 0 (entirely dissimilar) and 1 (completely identical). As noted above, we can now treat this as the representation of an undirected network between these accounts, and apply the standard tools of network analysis to this similarity network. Note that this similarity network is substantially different from conventional interaction networks, however, in that there is an edge between every pair of nodes, even if the weight of many such edges (i.e. the similarity between their practice patterns) will in many cases be close to or even exactly zero—in other words, even a comparatively small set of 1000 accounts will have 10002/2 = 500,000 edges describing their pairwise similarities.

Since our practice mapping approach is predominantly interested in identifying groups of accounts with highly similar practices, it would therefore be appropriate to apply a further filter to the vector similarities network before attempting to analyze or visualize it: by setting a minimum threshold for cosine similarity values, we can filter out any edges that predominantly represent dissimilarity. Indeed, by retaining only those edges that point to considerable similarities in interaction patterns between accounts, it becomes even easier to identify those groups of accounts in our dataset that share common practices in their engagement with others. A possible cutoff value for filtering out predominantly dissimilar edges is the cosine of 45° (~0.707), since 45° represents the mid-point between entirely identical (0° angle between vectors) and entirely dissimilar (90° angle) practice vectors.

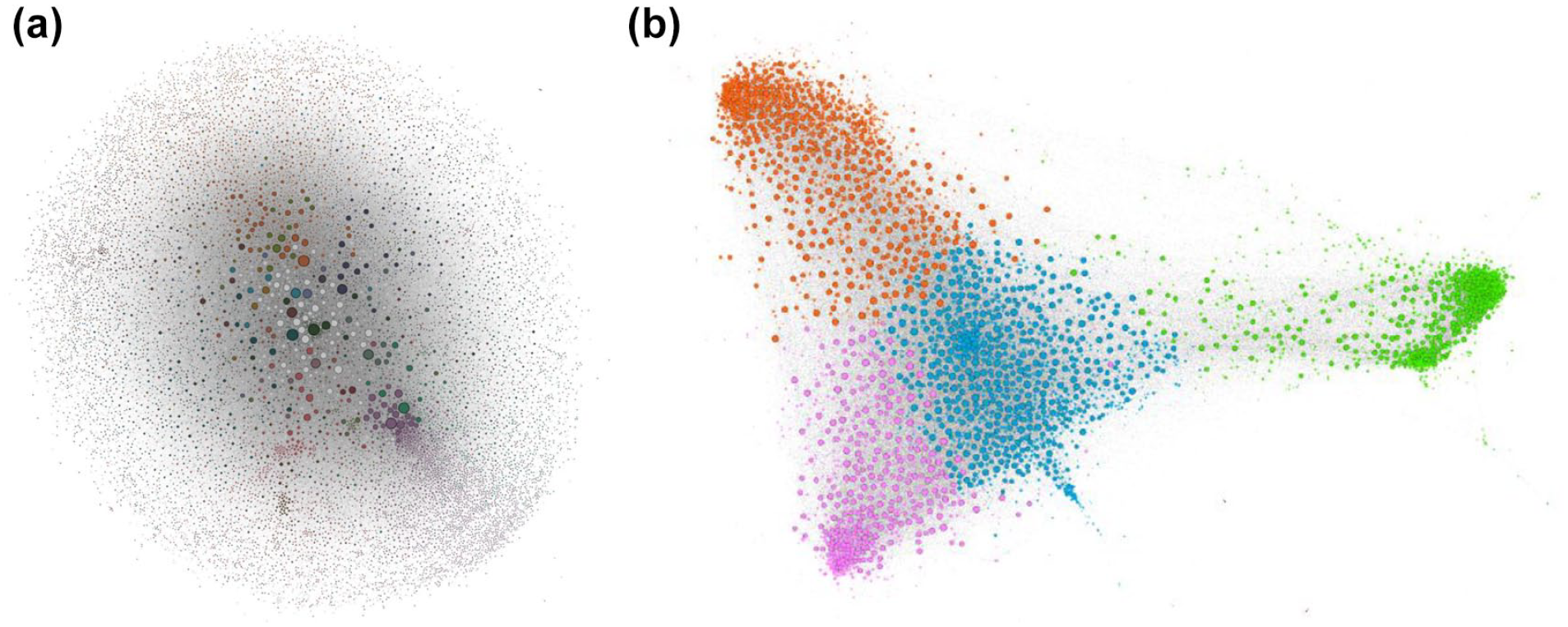

Practice mapping and its interpretation

We demonstrate the results of the application of our practice mapping approach with a real-life example in Figure 5. Here, Figure 5a (left) shows a conventional network map, with the network comprised of directed retweet and @mention interactions between a set of accounts discussing a controversial tax debt recovery scheme, dubbed “Robodebt,” using the hashtag #robodebt in Australia between late 2016 and mid-2023 (cf. Dehghan & Bruns, 2023). We included only accounts with a combined total of at least 100 retweets and @mentions, and visualized the network using the Force Atlas 2 algorithm (with Approximate Repulsion and LinLog mode switched on) in the network analysis software Gephi (Jacomy et al., 2014). The overall structure of this network remains largely amorphous, as a result of the various limitations of conventional network analysis and visualization that we have identified above. While network analysis and visualization is often exploratory and interactive, and popular tools like Gephi offer a wide range of visualization settings that can be used to enhance the visibility of a given network’s structural features (cf. Van Geenen and Wieringa, 2020), in a case like this no amount of (justifiable) experimentation with such settings could untangle this furball and produce more distinct internal network structures, simply because there are no distinct internal network structures in the data used for this conventional network analysis.

(a, b) Conventional interaction map (@mentions and retweets) for the #robodebt hashtag (left); practice mapping of account interaction similarities in the #robodebt hashtag (right). Nodes colored based on Louvain modularity algorithm clusters. Any color similarities between both maps are accidental.

Meanwhile, to generate Figure 5b we drew on the same interaction data, generated normalized interaction vectors for all accounts that posted a combined total of at least 100 retweets and @mentions, calculated the pairwise similarities between these vectors, and filtered the edges represented by those similarities so that we retained only those edges with a cosine similarity value of at least 0.6. Finally, we visualized the undirected similarity network created by those edges using the Force Atlas 2 algorithm (with the same settings as for Figure 5a) in Gephi, and used the Louvain modularity detection algorithm (Blondel et al., 2008) with a modularity threshold of 1.0 to detect and color clusters in the network. (Simplified Google BigQuery SQL code for producing these network data from the source Twitter data is included in the Appendix 1.)

The resulting map of practices in Figure 5b clearly shows considerably more defined clusters of accounts than the map of interactions in Figure 5a. Even though the various unidirectional or reciprocated interactions between accounts through retweeting and @mentioning that Figure 5a represents produced a graph that pulled participating accounts together into a tightly bound network with limited structural differentiation (or in other words, what network analysts have come to call a “furball”), it turns out that underneath this flurry of @mentions and retweets between participants there are some clearly distinct practices which are held in common by subsets of the overall population. (We acknowledge that this transformation from amorphous blob to differentiated structure represents an extreme—if not uncommon—case, and that in other cases conventional network maps certainly can contribute valuable insight. Even then, however, the analysis of both interaction and practice maps can substantially advance our ability to extract meaning from the data.)

Having identified—both visually through the practice mapping network and computationally through the modularity detection applied to that network—that these distinct practices are present, it is now also possible to determine what they represent. Approaches to doing so are necessarily dependent on the specific research context of the datasets being analyzed, and may range from qualitative close reading of account details and post content to further quantitative analysis of the various practice clusters and their activities; here we can outline only a selection of standard approaches that may produce useful insights, and show how they helped us understand the divergent practices in our Robodebt example dataset.

First, we examine the contribution of the four practice clusters identified by our practice mapping in Figure 5b to the overall volume of activity in our dataset. We pay particular attention here to their contribution over time, since the Robodebt dataset covers a period of more than 6 years, and we speculated that account participation practices may have evolved over time. Figure 6 shows the relative contribution of each of these four clusters, as well as of any other accounts in our Robodebt dataset that were not included in our practice mapping analysis because they did not meet the required threshold of a combined total of at least 100 retweets and/or @mentions; it retains the same cluster colors as shown in Figure 5b. It is clearly evident that the group of accounts in cluster A dominate the early stages of the Robodebt debate, at times contributing more than 50% of all weekly tweets, and that accounts in groups B, C, and D increase their posting activity only at various later stages (with B dominating the mid-period and C the late stages of the timeframe covered here, and D making a minor contribution especially between 2019 and 2022).

Second, we separately examine each cluster of the practice mapping network in turn, and for that cluster calculate the total weighted degree of each of its accounts (note that as the practice mapping network is undirected, there can be no distinction between weighted indegree and weighted outdegree here). The weighted degree measure is the sum total of the weight of all edges connecting an account to the rest of the network, and thus in a practice mapping network represents the sum of all similarity comparison values. Calculated only for the practice cluster that the account belongs to, weighted degree therefore helps us determine which accounts in the cluster are most similar to all other accounts in the same cluster. These accounts thus model the common practices that define a cluster in the practice map: their practices can be regarded as archetypal for the cluster as a whole. We suggest this approach rather than merely taking the sum or average of the practice patterns exhibited by all the accounts assigned to a given cluster because it is in the very nature of the practice similarity comparison framework we have outlined here that practice clusters consist of a central core of highly archetypal representatives surrounded by a periphery of less typical members; to interpret the common factors that make the cluster a cluster (and potentially a community) it is therefore most useful to examine its most typical representatives.

It may be possible to draw conclusions about the nature of each cluster already from the identities of these most typical accounts in the cluster; however, the practice mapping approach we have outlined here—at least if it chiefly takes into account active participation, for example, through @mentions and retweets—tends to identify the most active accounts as central members of each cluster, rather than those that are being addressed the most. In a third step, therefore, it might be useful for instance to examine whom these core members @mention or retweet most often, but perhaps also which hashtags they use most frequently, or what domains they share most regularly. This is likely to require some qualitative interpretation, and practice mapping is thus very clearly a mixed-methods rather than purely quantitative approach: while as part of the examination of these distinct practices we can quantitatively determine the most common interaction targets for the accounts representing each practice cluster, who or what these interaction targets represent (at a cultural, social, or political level) remains a qualitative question.

Combining these two steps for the Robodebt example, we first selected the 10 most central accounts in each of the four key clusters identified in Figure 5b; we then identified the 10 most frequent targets of these core representatives’ @mentions and retweets, respectively. This process produced some substantially different patterns of interaction and attention for each of the four clusters; combined with the temporal patterns in Figure 6 and interpreted against our further background knowledge about the Robodebt case it enabled us to formulate a preliminary interpretation of these clusters and their divergent practices:

Cluster A (green) represents early activists opposing the Robodebt scheme. They predominantly retweet their own community leaders, including a collective account (@not_my_debt) set up to protest the scheme, and @mention Australian government and opposition leaders as well as the Centrelink agency administering the scheme to raise awareness of its problems and demand action to remedy them.

Cluster B (blue), prominent especially during 2019 and 2022, represents the politicization of the Robodebt issue for party-political purposes. Members frequently @mention the then-Prime Minister Scott Morrison, as well as other ministers of the conservative government, but prominently retweet only Labor party and union movement accounts as well as the Twitter accounts of ordinary left-wing users. Notably, activity in this cluster stagnates following the change of government to the Labor party in 2022.

Cluster C (orange) is mostly active from 2022 onwards, and especially increases its posting volume from August 2022, as the Royal Commission into the Robodebt Scheme begins its public hearings. Its practices are somewhat similar to those of cluster B, yet feature more mentions of media accounts (and especially those related to the national public broadcaster, the ABC)—most likely because its members are referring to or critiquing the ABC’s live coverage of the Commission’s hearings.

Cluster D (purple) broadly follows the diachronic activity patterns of cluster B, if at a much lower volume (see Figure 6); Figure 5b shows it to be closely aligned with that larger cluster, too. Our preliminary analysis appears to indicate a somewhat stronger alignment with the Australian union movement rather than the Labor Party, and this may drive the small distinctions between these two clusters. Further analysis will need to confirm this assumption.

Contributions per week to the Robodebt debate by the four practice clusters identified in Figure 5b, and by all remaining accounts in the dataset.

We present these preliminary interpretations here not as a complete analysis of the distinctive participation practices in the Robodebt example, but to illustrate the analytical insights that the practice mapping approach can contribute. We note here, for example, that the brief practice mapping demonstration we have conducted is based solely on interaction data (@mentions and retweets), without taking into account any other of the potential attributes we could also have utilized to further describe each account’s distinct practice patterns (e.g. hashtags used, URLs shared, periods of activity, profile information, etc.)—and yet it has already managed to identify several groups of accounts that are distinct not only in their interaction practices, but also in when over the course of the total period covered by the dataset they were especially prominent. The addition of further account activity attributes to the practice vectors for each account would likely enable an even sharper picture of distinct practices to emerge, but this brief demonstration serves as a sufficient indication of the practice mapping framework’s utility. (These unexpected diachronic patterns also raise the question of whether and how temporality might be incorporated into the practice mapping process itself, of course; we return to this briefly in the Conclusion.)

It is important to note here that, unlike conventional network analysis, the most central accounts in a practice mapping cluster are not necessarily its most prominent or influential participants (as measured by the number of retweets received, or comparable metrics); instead, they are those accounts whose practices are most representative for the cluster’s practices overall—they may be part of the crowd rather than exceptional in any way, and it is this representativeness which practice mapping assesses. The most important or influential accounts, by contrast, are usually those that are most frequently addressed by a cluster’s distinct communicative practices: these may be identified by examining which accounts a cluster engages with most often, for instance. Such accounts may not themselves be depicted on the practice map, especially if they are mostly receiving attention but do not post especially actively; this is by design and avoids the “furball” issue that places universally targeted accounts (e.g. political leaders, celebrities, or institutional accounts) as common connectors at the center of a network map based on interactions. These accounts can instead be found by examining the defining practices of a practice cluster once it has been identified by the practice mapping.

In identifying these distinct practice clusters, we ought also to confront the question of whether these clusters can be said to represent genuine communities of practice (Lave & Wenger, 1991). As we have noted above, even though modularity detection approaches such as the Louvain algorithm we used in Figure 5a and b are often described as “community detection” tools, in the first place they can only identify network clusters; whether such groups of accounts—in the case of social media networks—are also genuine communities in a social science sense will depend on whether their participants are mutually aware of each other, and indeed consider themselves to be members of a community with shared beliefs, values, and ideas (cf. Baym, 1998). The study of such self-identification is likely to require further data beyond what is available in any given social media dataset (e.g. through interviews or other ethnographic work), but the question of mutual awareness can be addressed at least in part through the data we have.

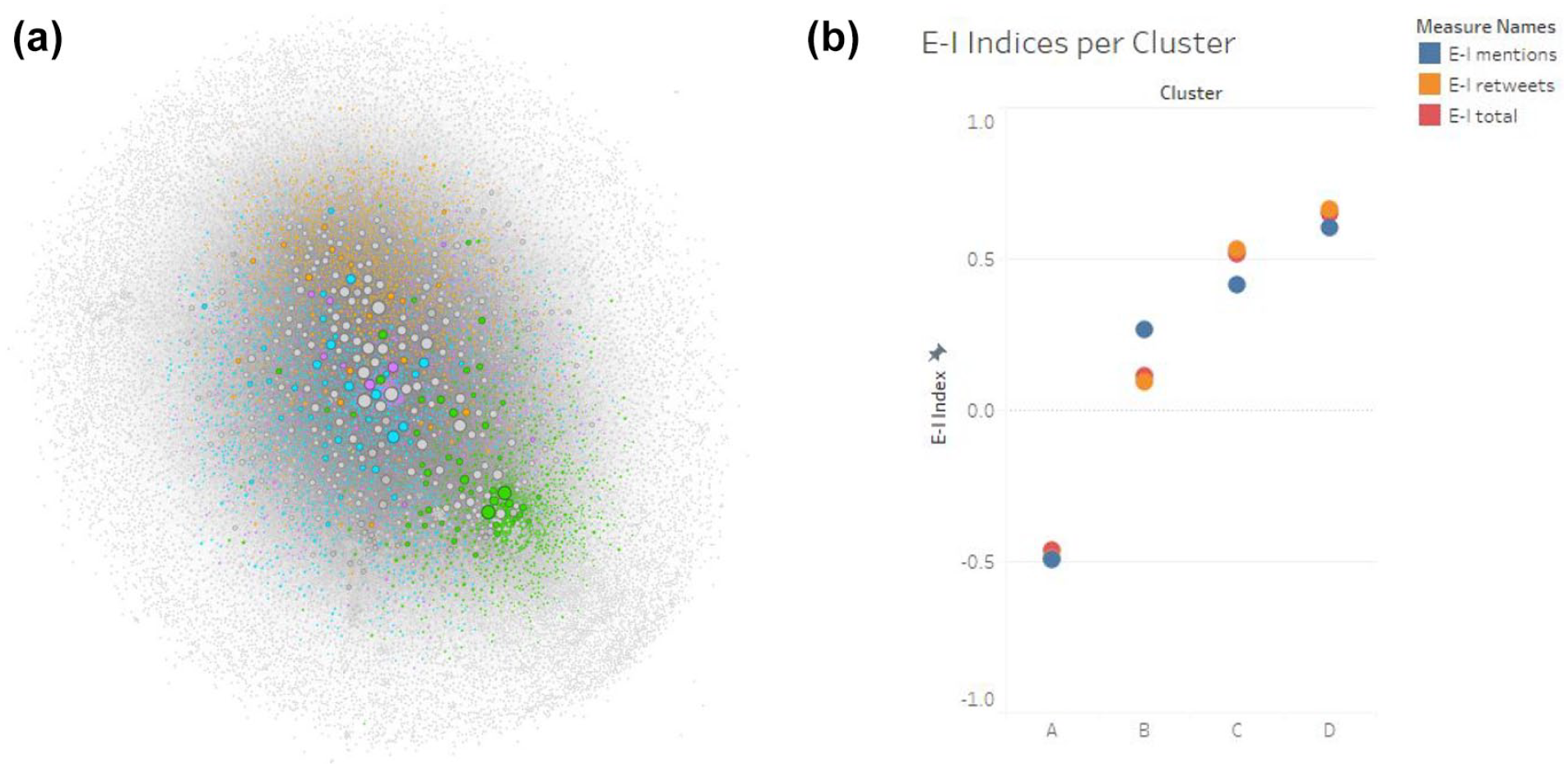

Several approaches may produce useful insights into this question, and two of these are illustrated by Figure 7. First, taking the example depicted in Figure 5, we transferred the coloring of clusters in the practice network (Figure 5b) back to the original interaction network (Figure 5a), to examine visually where in that network our practice clusters are located. The result of that process is shown in Figure 7a. Here, we can see that even amid the generally shapeless structure of the original interactions network, the accounts belonging to distinct practice clusters are unevenly distributed across the overall map. In light of the limitations of conventional network mapping that we have outlined, it is unsurprising that the practice clusters are not neatly arranged in distinct regions of the interaction network—otherwise there would be no need for the practice mapping approach in the first place—,but we can nonetheless see some concentrations of cluster members in specific parts of the interaction network. While the other practice clusters intermingle to a considerable degree, this is especially pronounced for the original activist accounts contained in Cluster A (in green), whom the practice map in Figure 5b also showed to be particularly distinct. This points to the fact that there is some degree of preferential interaction between practice cluster members that would suggest mutual awareness, especially in Cluster A.

(a) Original “furball” network map, with accounts belonging to the four major practice clusters highlighted in each cluster’s color; (b) E-I Index values for each of the four key clusters, divided by tweet types: @mentions (blue), retweets (orange), @mentions and retweets (red).

Further, it can also be instructive to calculate additional metrics that compare the engagement of practice cluster members among themselves and with other parts of the network. Of particular use here is the E-I Index (Krackhardt & Stern, 1988), which can be used to compute a value from +1 to −1 that describes the relative external or internal focus of a group’s interactions with others:

For any given practice cluster, a value of +1 for its combined interactions would indicate that members of the cluster engage only with outsiders, and never among themselves (implying no mutual awareness whatsoever), and a value of −1 would mean that members engage only among themselves, and never with outsiders (implying a strong awareness of and preference for in-group communication only). Importantly, the E-I Index can also be computed separately for retweets and @mentions, of course, and this may be useful where these and other communicative affordances are used in distinctly different ways (e.g. using retweets to amplify in-group views and using @mentions to attack outsiders). Figure 7b, then, presents the E-I Index values for each of the four practice clusters in Figure 5b, and again shows considerable differences between the clusters. Cluster A in particular is distinguished by its considerable inward focus: for all tweet types, it scores E-I Index values close to −0.5, indicating a strong inward focus. This aligns with our interpretation of this first cluster as representing early activists organizing and raising awareness about the Robodebt scandal, not least also by coordinating among themselves through @mentions and amplifying each other through retweets. Subsequent clusters, by contrast, are progressively more outward-focussed: while Cluster B shows relatively neutral E-I Index values for retweeting and (less so) for @mentions, Clusters C and especially D score E-I Index values near or above +0.5 for their tweets of various types. This accords with our interpretation of these clusters as later arrivals: coming to the topic later, these users have an increasingly large number of accounts from outside their own groups to choose from as they @mention and retweet participants in the Robodebt debate.

Setting strict requirements for mutual awareness and a preference for in-group engagement, then, we might consider only Cluster A to represent a true online community: its members show a clear and significant preference for engaging in the first place with each other, rather than with outsiders. With the benefit of hindsight, we can confirm this interpretation: a key actor in this early activist cluster has reported that they built a strong community of like-minded activists to perform their Robodebt-related activism (Topsfield, 2023). However, as the overall interaction network in Figures 5a and 7a shows, there also is considerable interaction between accounts throughout the entire Robodebt dataset; a more balanced reading of our findings here, therefore, might be that Cluster A, not least by virtue of including the earliest and most committed activists, represents the central core of the overall Robodebt community, and that the later-arriving Clusters B, C, and D constitute subsequent layers of that community that accumulated around that central core—and, at least in the case of Cluster B, also established an alternative, if less distinct, second center which (in our reading) focussed more on the political implications of the scandal than the flaws of the Robodebt scheme itself.

These two simple analyses are far from exhaustive, however: practice clusters may also be examined in a variety of other ways. In particular, it is possible that accounts with highly similar posting patterns may be coordinating their activities; it may therefore be useful to apply key tools like CooRNet (Giglietto et al., 2020) or the Coordination Network Toolkit (Graham et al., 2024) to the posts made by the members of each practice cluster to detect the presence of such (authentic or inauthentic) coordination. Whether such coordination is authentic or artificial, however, remains a matter for interpretation—prosocial activists may very legitimately coordinate their posting patterns to ensure greater visibility for their cause, for instance, while state-sponsored influence operations might engage in similar coordination for considerably more nefarious purposes.

Mapping practices beyond retweets and @mentions

We have used examples of the communicative affordances provided by Twitter in our discussion so far, since most scholars in our field will be familiar with these forms of interaction; however, subject to data availability it is just as easy to construct and compare practice vectors that represent an account’s interactions on other social media platforms. Retweets and @mentions on Twitter are equivalent to boosts and @mentions on Mastodon, for instance; on other, more forum-based platforms, we might distinguish between comments, replies, and even up- and down votes.

Indeed, even among the communicative affordances available on Twitter, we have focussed predominantly only on basic interaction practices, using retweets and @mentions. In applying the practice mapping approach to these kinds of interactions, we have focussed in the first place on the practices shared between accounts that actively contributed posts to the dataset; the resulting map of practice clusters therefore tends to highlight groups of those accounts that contributed most actively and (in terms of their interaction patterns) most consistently to the debate. (Usefully, this tends to exclude spam accounts, whose posting patterns are usually too idiosyncratic to be similar to genuine participants’ practices.) As we have shown, the key targets of these clusters’ interactions emerge only in a second step where we interpret the clusters that the practice mapping has identified. By contrast, at the center of conventional network maps we usually find the accounts of major news organizations, politicians, and other key stakeholders who are @mentioned and/or retweeted by all sides of a given issue, but who do not necessarily contribute particularly actively (or at all) to the debate.

However, in addition to the practice mapping of outgoing interactions from each account as we have described it so far, we could just as easily also construct and compare a second set of vectors describing the incoming interactions received by each account. We can do so in an entirely separate analysis—that is, considering and comparing only those incoming interaction vectors—or combine incoming and outgoing interactions into a single vector, to which we then apply our cosine similarity comparison (in the latter case, the outgoing and incoming components of the vector should be normalized separately, using the total sum of the account’s sent and received interactions, respectively).

The former approach—focussing only on incoming interactions—remains a form of practice mapping in the sense that it can be used to identify clusters of accounts that are frequently targeted by the same posters and their practices: similar to our thought experiment in Figure 2, for instance, this approach might identify distinct groups of political accounts that are commonly retweeted by their supporters and @mentioned by their opponents. The latter produces a combined map of similarities in both the accounts’ own practices and those of other accounts targetting them. This combination is likely to water down any clear distinctions in either form of practices if those outgoing and incoming practices do not clearly overlap with each other; however, in some cases it may also produce further insights. For instance, if a group of accounts is targetted in distinct ways by the various groups on both sides of a political debate, but chooses to engage only with one of those sides—practicing what Dehghan (2020) has called “active passivity” toward the other side (p. 228)—this interactive choice should stand out clearly.

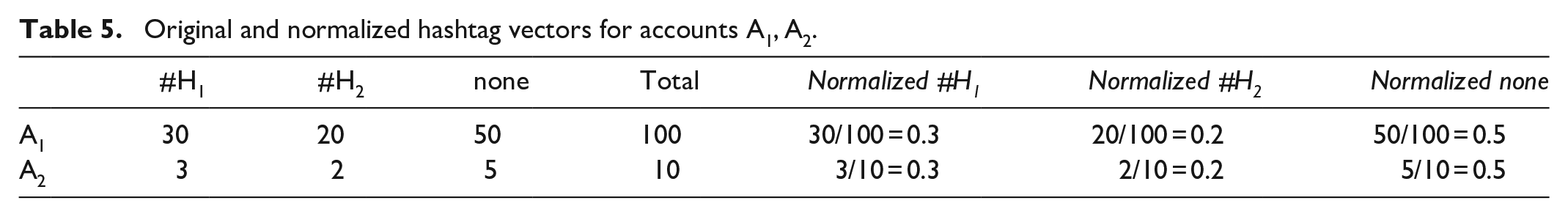

However, practice mapping does not need to limit itself to interaction practices in an explicit sense. In much the same way that we have constructed practice vectors that represent an account’s engagement with other accounts, we can also construct vectors that show the account’s practices in using hashtags, embedding images or videos, sharing external content (at the domain or URL level), or addressing certain topics. Indeed, the range of vectors we might consider here is limited only by what attributes of the account’s posts (or in fact any other information about the account) can be quantified: this also includes the results of any manual or computational coding efforts (e.g. of the account’s posts expressing support or opposition for specific political questions, or of the account profile’s self-identification in terms of location, interests, or political stance) and background information from other sources (e.g. the party affiliations of politicians’ accounts). In each case, these vectors can again be normalized against the total volume of the account’s posts or other relevant measures, to avoid interference from different underlying levels of platform activity for each account. For example, the normalized hashtag vectors for accounts A1 and A2, using hashtags #H1 and #H2 as well as posting some tweets without hashtags, might be calculated as shown in Table 5.

Original and normalized hashtag vectors for accounts A1, A2.

These extensions of interaction practice mapping to include other practice aspects are perhaps best demonstrated by the discursive practice of engaging with certain topics. Already, many topic modeling approaches identify a set of distinct topics from an overall corpus of data and then calculate the relative affinity of each unit of text with these various topics, expressed for instance as a value between 0 and 1. This approach could be applied separately to each individual post or, alternatively, to the combined total of all posts made by a given account, and would then show the account’s relative affinity with each one in the full list of identified topics. By converting these affinities into a vector and comparing these topic vectors for each pair of accounts, we can thus compute and map the similarity of topic choices for all accounts, producing either a practice mapping that shows these topical similarities in isolation (as a specific aspect of their practices), or a mapping that combines this aspect of the accounts’ practices with other aspects (such as their interactive practices, approaches to information sourcing, etc.).

In such a combined, multi-feature practice mapping approach, then, we must consider how best to integrate these different practice aspects. Several approaches are possible. First, we could construct separate vectors for each practice aspect, calculate the similarities between these various vectors for each pair of accounts, and add up the similarity assessment for each aspect to produce a combined similarity value. In doing so, it would also be possible to award different weightings to these similarity scores: for instance, we might consider a strong similarity in interactive practices to count for more than a strong similarity in hashtag practices, and a strong similarity in the use of external sources to count for even more than these. For example, given individual similarity values for a pair of accounts A1 and A2, then, their total similarity score would be calculated as:

The specific values for α, β, and γ will need to be carefully selected and justified, of course. Once the total similarities are calculated, however, the same analytical and interpretive processes as we have described them above for our interaction similarities network in the Robodebt case can be applied to the combined practice mapping outcome; the practice clusters identified from it will then be the result of shared practice patterns across the various component practices (interactions, hashtags, sources, . . .) that contribute to the total similarity assessment.

A second option, which constitutes a simplified version of this first approach, is to combine the various vector components directly into one composite vector for each account, and to calculate the cosine similarities for each pair of practice vectors as usual. This substantially reduces the number of cosine similarity comparisons that must be performed (again only one per pair of accounts, rather than one for each practice component and pair), and thereby also lessens the computational load required. It does remove the opportunity to weight the relative importance of the various practice components that contribute to the combined vector, however, which may remain desirable.

Beyond these simple approaches to the (weighted or unweighted) combination of practice similarities, however, vector mathematics (algebraic topology) offers several other approaches. For instance, practice manifolds are bundles of vectors that engage in similar practices in the higher-dimensional vector space; these can be mapped at scale as lower-dimensional spaces that embed and convey the vernacular of a practice. Similar to the non-linear dimensionality reduction algorithm Uniform-Manifold Approximation Projection (UMAP; McInnes et al., 2018), such practice manifolds are Reimann manifolds that use distance metrics rather than a O(n2) pairwise cosine similarity algorithm to compare vectors. This also addresses issues of computability and the Curse of Dimensionality one encounters when embedding practices at scale.

However the various practice components are combined in any given case, what results from this process is an output that integrates these distinct components into a single practice map. This enables the identification of clusters of accounts that share strongly similar practices, and the examination and interpretation of the distinct set of shared practices around which each cluster has formed. Especially for complex communicative contexts where networks of interaction alone fail to produce conclusive insights into the uses of social media for public debate, we argue that this practice mapping approach can offer considerably richer understandings.

While it is beyond the scope of this article to outline the full range of analytical possibilities, across the various communicative platforms available, that the practice mapping approach opens up, we suggest that its utility will be greatest when patterns of action and interaction on a given platform can be described by a rich set of features. Put simply, a practice mapping of @mentions alone may not provide particularly surprising new insights, compared to a conventional network map of such @mention interactions. A practice mapping that takes into account and combines data on accounts’ patterns of using @mentions, retweets, quote tweets, hashtags, shared URLs, embedded images, and more, however, may well show up considerable detail that the combination of all these features into a single interaction map might miss.

Further, practice mapping will also prove especially valuable where available platform data do not include substantial information on direct interactions between accounts. Here, conventional network mapping is either impossible or must resort to imperfect workarounds: data on public Facebook activities from Meta’s now defunct CrowdTangle service or its new Content Library, for instance, do not directly cover interactions between public pages or groups, for instance, but only posting activity on them; these data can be used to create bimodal networks (e.g. between Facebook pages and groups as one node type, and the domains, URLs, images, or videos they share as another), but such structures are considerably more difficult to interpret at face value. Using the same data as an input to practice mapping—that is, creating vectors per page and group that describe their use of these and other features, and then computing the pairwise similarities between these practices—enables new insights through the identification of clusters of Facebook spaces with distinct posting practices.

Conclusion and outlook

This article has introduced practice mapping as a novel and powerful new technique for the analysis of social media data; as we have shown, it is able to identify distinct patterns of activity where conventional network mapping fails to provide sufficient clarity. In demonstrating its utility, we have applied this approach to data from the now defunct Twitter API, since many scholars in our field will be familiar with the features of such datasets; however, we have noted that practice mapping excels especially also in situations where data on the structures of interaction networks between participants are limited: this is the case for instance for platforms that offer information on the text, image, and URL content of their communicative spaces, but where available information on connections between such spaces covers only the relatively uncommon practice of content crossposting or on-sharing from one space to another. By treating URL, image, and textual patterns as equally meaningful practice vectors alongside any observable interaction practices, our practice mapping approach makes it possible to draw connections between such spaces based on their shared practices, thereby substantially enhancing the insights we are able to generate from such data.

Indeed, while we have presented the practice mapping approach here chiefly as examining patterns of similarity between individual accounts, this application to public pages and groups already points to the fact that it is also possible to use this approach to study patterns of similarity between collective online spaces. It would be possible, for instance, to treat each individual subreddit on Reddit as an object of study: here, in addition to the overall textual and outlinking patterns observed in the subreddit, information on its participants and their respective volume of contributions could be converted into a further vector to describe the subreddit, and used to calculate a new similarity score that describes the similarity between two subreddits’ account populations and their posting patterns, for instance. (It would also be possible to conduct separate practice mapping analyses both within and across subreddits, of course, or at the level both of individual accounts and collective subreddits, to arrive at a hierarchical mapping that combines multiple levels of specificity.)

We note that while in this introductory article we have emphasized the analysis and visualization of practice mapping networks as networks, using well-known tools such as Gephi, the information on accounts’ individual and collective practices that the practice mapping workflow generates can also be productively utilized for other analytical purposes: there are approaches other than network modularity detection that enable the identification of clusters with similar practices within a given crowd of nodes.

Similarly, opportunities to generate additional data points from the available social media data are limited only by the researcher’s imagination. Using manual coding, computational processing, or AI-supported classification, it is possible to generate and extract a wide range of further attributes for each individual account or communicative space, which may then be systematically compared across the entire population as part of the practice mapping process. Such additional attributes could include assessments of the veracity, sentiment, or toxicity of the content posted by an account, for instance, or code for the distinct entities named, narrative frames used, or argumentative claims made.

In addition, as our brief case study of the Robodebt debate has shown, the role of temporality in practice mapping should also be further explored. In our example, practice communities that were predominantly active at different time periods emerged unexpectedly from a practice mapping process that did not explicitly incorporate temporal attributes; alternatively, the analysis of multi-year datasets could also incorporate practice mappings of distinct subset periods within the overall dataset (e.g. per month or quarter, or for periods as defined by external factors), and then trace the movement of accounts between clusters from one period to the next; or the clusters and their typical practice attributes (i.e. their centroids) might be identified for the entire timeframe covered by the dataset, but the affinity of each account to the various cluster centroids could be recalculated on a rolling basis for each distinct period in the dataset, to trace the trajectory of accounts through the overall cluster space.

We hope that our own future work as well as the extensions of this approach by other scholars will provide a wide range of methods for the extraction of practice attributes, and for their operationalisation in the practice mapping process. At the attribute extraction as well as at the network interpretation stage of the process, it is clearly evident that practice mapping is a thoroughly mixed-methods approach. While it involves a central computational component that facilitates the systematic comparison of available practice attributes across the entire population of accounts and produces a network map based on their similarities, we stress emphatically that the aim of this approach is not to simplistically quantify complex interactions between human and non-human participants in a communicative environment. Rather, practice mapping both enhances the systematic analysis and comparison of qualitative attributes across a large population of participants, and provides more rigorous foundations for the identification and interpretation of common patterns in their collective doings and sayings. Where the quantitative and qualitative analysis of these common patterns supports such an interpretation by suggesting that users share not only commonalities in their actions, but also goals, values, and knowledge, it enables us, finally, to state with significantly greater certainty than previously that we have identified the practices of communities, or communities of practice.

Footnotes

Appendix 1

In this appendix, we provide two brief queries that convert conventional social media data into a practice map. As in the article proper, we use Twitter data as an example here, and assume that such data are stored in the popular cloud data service Google BigQuery or a storage service with similar SQL functionality; the query below should also be easy enough to translate into Python, R, or other popular data processing languages, however.

While actual data formats will vary depending on the tool used to collect the data, we assume that at a minimum, researchers have or able to create a table that contains data in the following form shown in Table A1.

Here,

We use the following BigQuery SQL query to convert this interactions table into a table of interaction vectors:

This produces the following data structure, which we store in a new

Here, there is one row for each

Using the BigQuery function ML.DISTANCE, which calculates the distance between two vectors (i.e. the inverse of similarity), we then generate the practice mapping data which can be imported into Gephi or another network analysis tool for visualization. In the following query, we rename the output columns to

In this context, we also highlight that the most computationally intensive aspect of practice mapping is its pairwise comparison of practice vectors per account, which scales at a rate of n2/2. This is a common concern with vector embeddings, and may be addressed in one of a number of ways: first, deploying the computing power sufficient to manage the amount of pairwise comparisons in an acceptable timespan (which is why we rely on cloud-based data processing infrastructure in our own work); second, reducing the computational load by pre-filtering the full dataset for those accounts whose level of activity surpasses a relevant threshold (which we do by filtering for accounts with a total tweet count of at least 100 posts); and third, by employing more sophisticated comparison techniques—such as non-linear dimensionality reduction techniques like tSNE and UMAP—that scale better with larger datasets (McInnes, 2018).

This, then, finally produces a new table in the form

Acknowledgements

We would like to thank the anonymous reviewers of this article for their detailed and constructive comments and ideas for further extensions of the practice mapping approach, not all of which we have been able to address within the scope of this article.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was funded by the Australian Research Council through the Australian Laureate Fellowship project Determining the Drivers and Dynamics of Partisanship and Polarization in Online Public Debate and the Australian Future Fellowship Understanding Intermedia Information Flows in the Australian Online Public Sphere.