Abstract

The prevalence of the anti-vaccine movement in today’s society has become a pressing concern, largely amplified by the dissemination of vaccine skepticism. During the early stages of the COVID-19 pandemic, vaccination sparked controversial debates on social media platforms such as Twitter, with potentially serious consequences for public health. Understanding what determines anti-vax attitudes is important for identifying the sources of anti-vaccine campaigns and mitigating the spread of misinformation. Compared with other countries, Türkiye stands out for its high vaccination rates and the lack of political support for anti-vaxxers despite its highly polarized political system. Analyzing the Turkish Twittersphere, we explore several mechanisms capturing the content production and behavior of accounts within the pro- and anti-vax segments of online vaccine-related discussions. Our findings indicate no relation between political stance and anti-vaccine attitude: both supporters of vaccination (pro-vaxxers) and opponents (anti-vaxxers) can be found across the political spectrum. Moreover, linguistic differences reveal that anti-vaxxers employ more emotional language, while pro-vaxxers express more skepticism. Notably, automated accounts are less prevalent, which makes it difficult to assess genuine support for vaccines, while anti-vaccine bots produce slightly more content. These findings have crucial implications for vaccine policy, emphasizing the importance of understanding the diverse language patterns and beliefs of anti-vaxxers and pro-vaxxers in order to develop effective communication strategies at the national level.

Keywords

Introduction

During times of ambiguity concerning societal events, while reliable sources are busy gathering evidence, the public becomes vulnerable to manipulation. The COVID-19 pandemic was no exception. COVID-19-related misinformation on social media affected public perception and drove negative behavioral outcomes such as lower compliance with social distancing and mask-wearing (Bridgman et al., 2020; Roozenbeek et al., 2020), which resulted in increased transmission of the virus (Lyu & Wehby, 2020). During this period, one prominent topic of discussion was the COVID-19 vaccines, their efficacy, and their potential side effects. Social media platforms allowed individuals to express their opinions on vaccines and vaccination, including those who shared false narratives and conspiracies surrounding the vaccines (Brennen et al., 2020; Jiang et al., 2021). Consequently, social media platforms not only accommodated pre-existing sentiment toward vaccines but also gave vaccine skepticism a voice in the online sphere. Although not all vaccine-related content on Twitter contained misinformation, the existing literature shows that anti-vaccine propaganda relied mostly on false narratives and conspiracies, and that anti-vaccine misinformation was conspicuously prevalent on social media platforms (Cha et al., 2021; Ferrara, 2020a; Gallotti et al., 2020; Gruzd et al., 2021; S. K. Lee et al., 2022; Singh et al., 2022).

Studies have shown that anti-vaccine content on social media is one of the main drivers of vaccine hesitancy (Carrieri et al., 2019; Puri et al., 2020; Wilson & Wiysonge, 2020). Wilson and Wiysonge (2020) found that vaccine-related misinformation spread on social media has a significant negative impact on vaccination rates and that organized anti-vaccine propaganda on social media drives vaccine skepticism. Since effective vaccination is critical to achieving herd immunity and thus to containing the COVID-19 pandemic (Aguas et al., 2020), exploring the dynamics of the vaccine debate on social media platforms has become an important subject for the public good.

One country that provides an insightful case study of this phenomenon is Türkiye. In the Turkish context, both the incumbent and the main opposition parties advocated vaccination during the COVID-19 pandemic (English, 2021; Sabah, 2021). However, this united endorsement did not lead to high vaccination uptake. Only 62% of the population received a second dose of a COVID-19 vaccine, placing Türkiye’s vaccination rate notably below that of many other nations (Pivetti et al., 2023). This disparity may be explained by prevalent vaccine hesitancy among Turkish citizens (Lazarus et al., 2023), which might be fueled by online misinformation about vaccines.

In Türkiye, social media has become a popular alternative space for citizens to access news and voice opinions. Although television remains the primary news source, the use of social media platforms has risen significantly (Karatas & Saka, 2017; O’Donohue et al., 2020). The Turkish public is well aware of censorship in its media, and distrust in mainstream media intensified after 2011 (Tufekci, 2014), especially during the Gezi Park protests in 2013, when Twitter was used mainly for information sharing (Ogan & Varol, 2017). Given the strong pro-vaccination stance of both the opposition and the government, it is likely that anti-vaccine sentiment found its primary voice on social media platforms because of a lack of coverage by the mainstream media. Highlighting this phenomenon, a recent study by Furman et al. (2023) showed that the pro-Russian media outlet SputnikTR spread misinformation on Twitter about Western-developed vaccines to increase skepticism toward them and to promote the Russian vaccine.

In light of these considerations, our study aims to expand knowledge of the vaccine debate in the Turkish Twittersphere by mapping the sources and mechanisms behind it. Although opposition to vaccination has been documented on multiple social media platforms in other contexts (e.g., Gruzd et al., 2023), we chose Twitter for this study because it was a prominent source of information among Turkish citizens during the COVID-19 pandemic (Gülsoy et al., 2022) and because it carried a higher volume of COVID-19 content than other social media platforms (Cinelli et al., 2020).

In this study, we identified the accounts participating in vaccine-related conversations in the Turkish Twittersphere and inferred their stance on vaccines by applying label propagation to a partially labeled dataset. We then investigated the political alignment of these users to explore whether political affiliation is a factor behind vaccine stance; for this task, we developed a deep-learning model for political stance detection. Moreover, we explored two alternative mechanisms behind the vaccine debate by analyzing linguistic differences between pro-vaxxer and anti-vaxxer tweets and by comparing the levels of automated activity between the groups. This study is the first to integrate the mechanisms of political stance, linguistic patterns, and social bot activity in analyzing the online vaccine debate.

Related Work and Hypotheses

The primary question guiding our study is: “What mechanisms drove the vaccine debate in Türkiye during the COVID-19 pandemic?” To address this research question, we have reviewed relevant literature, examining various dimensions—political stance, linguistic patterns, and the influence of social bots—that potentially shaped the vaccine debate. Drawing from the insights gathered from the related studies, we formulated three hypotheses to guide our empirical examination. In the following sections, we discuss the literature underpinning each hypothesis and present the hypotheses themselves.

Vaccine Hesitancy and Political Stance

Vaccine hesitancy can stem from a variety of reasons, with demographic factors such as education (J. Lee & Huang, 2022) and socio-economic level playing a significant role (Truong et al., 2022). According to J. Lee and Huang (2022), areas with lower educational attainment and residents in geographically remote or transportation-challenged regions were more prone to COVID-19 vaccine hesitancy. While J. Lee and Huang’s (2022) findings are based on the context of the United States, Truong et al.’s (2022) research offers a broader perspective by reviewing research in various international contexts and finds that the influence of education and geographical factors on vaccine hesitancy is not limited to specific locales but is observed in different contexts.

However, the existing literature also shows that vaccine hesitancy can be a contextual phenomenon, in which people’s vaccination attitudes are determined by the availability of information, perceived risks of the vaccines, social norms (Mønsted & Lehmann, 2022), political views (Fournet et al., 2018), cognitive evaluations (Fridman et al., 2021), and trust in government institutions (Dal & Tokdemir, 2022). In a comprehensive review of 60 published studies on COVID-19 vaccine hesitancy from around the world, Majid et al. (2022) identified several factors that play a pivotal role in shaping COVID-19 vaccine hesitancy, including risk perceptions, trust in health care systems, solidarity, previous experiences with vaccines, misinformation, concerns about vaccine side effects, and political ideology. According to Dal and Tokdemir (2022), this pattern also holds for the COVID-19 vaccination campaigns in Türkiye.

Political affiliations also wield significant influence over vaccine hesitancy (e.g., J. Lee & Huang, 2022). In diverse political contexts, political actors have played a pivotal role in instigating and exacerbating vaccine hesitancy, thereby fueling the mobilization of the anti-vaccine movement. In Brazil, former president Jair Bolsonaro conveyed his skepticism of COVID-19 vaccines in various public speeches despite growing empirical evidence of their effectiveness (Fridman et al., 2021). Although vaccination rates in Brazil were arguably higher than in many countries (Mathieu et al., 2021), the political neglect of the pandemic and Bolsonaro’s leadership of the coronavirus-denial movement (Ricard & Medeiros, 2020) contributed to the human cost of COVID-19 in Brazil through inappropriate policy measures and a slow vaccine rollout (Malta et al., 2021). Similarly, former United States president Donald Trump referred to COVID-19 as a “new hoax” and downplayed its risks, resulting in delayed official action (Yamey & Gonsalves, 2020).

In European democracies, far-right political parties have exploited the discourse surrounding COVID-19 vaccines to mobilize supporters who perceive vaccination as a matter of personal liberty. The measures and regulations adopted during the pandemic, such as vaccine passports, curfews, and travel restrictions, were highly unpopular among far-right individuals and pushed the anti-vax movements beyond mere vaccine hesitancy. In Italy, the leaders of the extreme right-wing party Forza Nuova were arrested by the authorities amid an anti-vaccination riot (Reuters, 2021). In Austria, the vaccine-skeptic party MFG (Menschen-Freiheit-Grundrechte) won three seats in Austrian local elections (Jones, 2021). In Germany, the right-wing populist party AFD demonstrated support for anti-vaccine protests (Hecking & Maxwill, 2021).

Furthermore, political partisanship itself is closely linked to vaccine stances. Havey (2020) found that strong partisan affiliation significantly correlated with participation in COVID-19-related conspiracy theories online. In Germany, politically polarized users, particularly those associated with the AFD and SPD, actively engaged in discussions and contributed to the dissemination of COVID-19-related conspiracy theories on Twitter (Shahrezaye et al., 2021). Another study, focusing on European citizens, revealed that those holding ideologically extreme views, whether on the right or left of the ideological spectrum, were more likely to express negative attitudes toward vaccines (Debus & Tosun, 2021). In addition, in the United States, vaccine adoption rates were lower among Republican Party voters (Albrecht, 2022).

Unlike the examples above, Türkiye, a country with high partisan polarization (Aydın-Düzgit & Balta, 2019; Çakır, 2020; Keyman, 2014), follows a relatively different and noteworthy path. According to Erdoğan (2016), political polarization in Türkiye has vastly increased, leading to greater social distance and prejudice between the constituencies of different parties. Although polarization is not unique to Türkiye, societies with stronger institutional structures and democratic cultures may find it easier to manage this challenge. While current political debates are driven by the rivalry between the incumbent and opposition blocs, both sides actively promoted COVID-19 vaccination. As part of the comprehensive vaccination campaign against COVID-19, Health Minister Fahrettin Koca played a pivotal role by personally inviting the leaders of major political parties, illustrating a unified front in public health efforts. Leaders from AKP (Recep Tayyip Erdoğan), CHP (Kemal Kılıçdaroğlu), MHP (Devlet Bahçeli), and IYI Parti (Meral Akşener), as well as the co-chairs of HDP (Pervin Buldan and Mithat Sancar), not only accepted the invitation but also participated in the vaccination drive. Their acceptance and participation underlined the importance of the vaccine and marked a rare moment of cross-party cooperation in combating the public health crisis (BBC, 2021). This political consensus suggests that party identification in Türkiye might not be the primary mechanism of the vaccine debate. Although anti-vax rallies found support in the country (Hürriyet, 2021), political ties, partisan sorting, and affective polarization are not necessarily exacerbating factors of COVID-19 vaccine hesitancy. Instead, we presume that vaccine hesitancy in Türkiye stems more from issue polarization than from strict partisan divides. Building on this premise, we propose our first hypothesis:

Linguistic Patterns in the Vaccine Debate

The words people choose to express their opinions often offer profound insights into their beliefs, attitudes, and behaviors (Tausczik & Pennebaker, 2010). Recent studies showed that anti-vaxxers (Faasse et al., 2016; Memon & Carley, 2020; Wu et al., 2021) and conspiracy theorists (Fong et al., 2021; Giachanou et al., 2021) differ in the words they use on online platforms. A comprehensive longitudinal study by Mitra et al. (2016), which captured over 3 million tweets spanning 4 years, demonstrated that anti-vaxxers are linguistically similar to conspiracy theorists. Given the scarcity of research on the language of anti-vaxxers and this linguistic similarity between conspiracy theorists and anti-vaxxers, we compiled evidence from previous studies on both groups.

A study comparing the tweets of conspiracy theorists, science influencers, and their followers found that the conspiracy group adopted an out-group focus and used more past-oriented and emotionally negative language (Fong et al., 2021). Regarding the themes, they used more words about power, death, and religion. While the use of the causality words between the two groups was not different, the study found that the conspiracy group used a lower number of certainty words (e.g., “must” and “truth”) than science influencers.

In another study, Rains et al. (2021) found that conspiracy tweets about COVID-19 included more words about certainty, causality, negative emotions, anger, and anxiety, and fewer about achievement, reward, health, family, relativity, space, and work, compared with other COVID-19-related tweets. Another study, by Giachanou et al. (2021) on the detection of conspiracy propagators, examined both supporters and refuters of conspiracy theories and found that conspiracy supporters tend to use religion-related words more frequently. Thus, in addition to the heightened use of negative emotion and causation words identified in previous research, this study highlighted the prominence of religious language and agreement expressions among conspiracy theory supporters.

In a similar vein, studies that have directly examined the language of anti-vaxxers and pro-vaxxers have also revealed significant linguistic differences. Mitra et al. (2016) showed that anti-vaxxers, similar to conspiracy groups, tend to use more words related to anger, death, in-group, and certainty, whereas pro-vaxxers show more cognitive processing in their language through cause-and-effect words, expressions of insight, exclusion and tentative words, conjunctions, and interrogative sentences. In addition, pro-vaxxers place more emphasis on the present and use more words related to health, sex, and anxiety. Malagoli et al. (2021) found that tweets containing anti-vaccine keywords used death, anger, and negative emotion-related words more frequently, while pro-vaccine tweets included more words related to health, work, and religion. Furthermore, J. Shi et al. (2021) reported that anti-vaxxers used more negative emotion words and function words (e.g., pronouns, articles, and prepositions), but fewer positive emotion words and analytical words. The words most commonly used by anti-vaxxers were related to work and death, while pro-vaxxers primarily used words related to money, religion, and leisure. Thus, although the specific word categories that distinguish the two groups vary across studies, anti-vaxxers consistently used more negative emotion words. In addition to using negative emotions in their own tweets, anti-vaxxers also preferred to retweet negative and toxic replies that others wrote to neutral accounts’ tweets on Twitter (Miyazaki et al., 2022).

Given the established linguistic differences between anti-vaxxers and pro-vaxxers in previous research, we seek to determine whether these patterns are consistent within the Türkiye context. This leads us to our second hypothesis:

Bot Activity in Online Public Conversations

An important mechanism that potentially drives online debates is automated and coordinated activity through the use of social bots. Social bots have been shown to manipulate the social media agenda by amplifying or suppressing content, as well as by distracting and polarizing the audience (Bradshaw et al., 2020; Ferrara et al., 2016; Yang et al., 2019). Especially during critical political events such as elections and referendums, social bots have previously been used as a propaganda tool to target voters, promote certain narratives, and even influence gatekeepers (Kollanyi et al., 2016; Santini et al., 2021; Varol & Uluturk, 2020). A study by Ferrara et al. (2020) demonstrated how social bots were actively used to support false narratives during the 2020 U.S. presidential election. Focusing on the dominant conspiracy theories of the election period, the study found that a large portion of conspiracy-related content was produced by bot accounts. Similarly, according to Shao et al. (2018), content from low-credibility sources is more likely than fact-checked content to be shared by social bots. Their behavior is also strategic: they mention highly popular accounts and amplify content within seconds of its creation. Social bots were also an important subject during the acquisition of Twitter/X, when Elon Musk raised significant concerns about their prevalence (Varol, 2023).

Social bots were also observed in online discussions on COVID-19 (Uyheng et al., 2022; Xu & Sasahara, 2022). Ferrara (2020b) examined 43 million tweets in English about COVID-19 and found that Twitter accounts that have high bot scores are more active in “conspiratorial content of political nature” in COVID-19 discussion. Moreover, Himelein-Wachowiak et al. (2021) looked at the percentage of active bot accounts in COVID-19 tweets by using the open bot datasets and concluded that more than half of the bots in the open datasets were actively tweeting about COVID-19.

Regarding vaccination, a handful of studies investigated bot activity in online debates on vaccines. Yuan et al. (2019) examined bot activity in Twitter conversations about the MMR (measles, mumps, and rubella) vaccine after the 2015 California Disneyland measles outbreak and found that bots were almost equally present in pro-vaccine and anti-vaccine content. The authors also argued that bots display “hyper-social” behavior by interacting with human accounts within the same opinion group, which suggests that social bots might be one of the mechanisms driving the high clustering of the MMR vaccine debate. In the context of COVID-19 vaccines, Ruiz-Núñez et al. (2022) also found similar levels of bot activity in anti- and pro-vaccination networks, although they concluded that bots in the pro-vaccination networks had a bigger impact on interactions. Likewise, Zhang et al. (2022) argued that social bots were effective in promoting vaccines and even helped debunk false information about vaccines.

Based on the reviewed literature, we presumed that social bots might be active in the COVID-19 vaccine debate in the Turkish Twittersphere. Although previous work on social bot activity in vaccine debates suggests that bots can have a positive impact on vaccination, the finding that social bots were more active in conspiracy-related content during COVID-19 raises the possibility that they also served as a mechanism of anti-vaccine propaganda. We therefore posit the following hypothesis:

Methodology

Data Collection and Pre-Processing

To capture a holistic picture of the Turkish vaccine debate, we aimed for a large-scale Twitter dataset that reflects the overall opinion landscape concerning vaccines in Türkiye. We used a data-driven approach, supported by manual inspection, to compile a list of hashtags and keywords. Initially, we created a comprehensive list of keywords covering general vaccine-related discussions. To ensure inclusivity, we expanded this list with additional relevant keywords, including their variations, that frequently co-occurred with the initial set. This approach allowed us to capture the vaccine discussion in Türkiye with high recall, meaning that the collection encompassed most vaccine-related tweets, while the manual inspection of candidate keywords kept noise low, resulting in a dataset with high precision.
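The co-occurrence expansion step can be illustrated with a minimal Python sketch. It assumes each tweet has been reduced to a set of already-normalized hashtags; the function name `expand_keywords`, its `min_count` and `top_k` parameters, and the toy hashtags are hypothetical. The output is a ranked candidate list meant for manual inspection, not a final keyword list.

```python
from collections import Counter


def expand_keywords(tweet_hashtags, seeds, min_count=2, top_k=10):
    """Rank hashtags that co-occur with at least one seed hashtag.

    tweet_hashtags: iterable of sets, one set of hashtags per tweet.
    Hashtags are assumed to be normalized already (Python's str.lower()
    does not apply Turkish dotted/dotless i rules).
    Returns (hashtag, count) pairs as candidates for manual inspection.
    """
    seeds = set(seeds)
    counts = Counter()
    for tags in tweet_hashtags:
        if tags & seeds:
            # count every non-seed hashtag that appears alongside a seed
            counts.update(tags - seeds)
    return [(tag, n) for tag, n in counts.most_common(top_k) if n >= min_count]


tweets = [
    {"#aşı", "#covid19"},
    {"#aşı", "#biontech"},
    {"#aşı", "#covid19"},
    {"#futbol"},
]
print(expand_keywords(tweets, {"#aşı"}))  # → [('#covid19', 2)]
```

Ranking by raw co-occurrence counts favors very frequent hashtags; a normalized association score would be an alternative design choice.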

Using Twitter API V2 for Academic Research, we extracted tweets sent between September 11, 2021, and December 1, 2021, that contain at least one of the hashtags or keywords provided in Table 1. We deliberately chose the time frame spanning from September 2021, when the vaccination of children commenced, reigniting the debate surrounding vaccines, to December of the same year, just prior to the introduction of the third dose. This period was selected to encompass intense debates surrounding the advantages and disadvantages of vaccines (see Turcovid19, 2023 in Turkish). Our final dataset consists of 1,263,697 tweets sent by 177,542 publicly available accounts at the time of data collection.

Table 1. Hashtags and Keywords Used for Extracting Tweets.

In the pre-processing step, we first tokenized the tweets using the Zemberek library, a Natural Language Processing library for Turkish (Akın & Akın, 2007). Next, we removed emojis and URLs and lemmatized the words using the pre-trained Turkish Lemmatizer model of the John Snow Labs Natural Language Understanding library (Kocaman & Talby, 2021). These models are readily available in Python and run Natural Language Processing tasks efficiently by leveraging the Spark framework, which enables scalable processing of large volumes of text data through distributed computing.
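A minimal sketch of the emoji and URL removal step, using only Python's standard `re` module. The emoji ranges are an approximation chosen for illustration (an assumption), not the exact rules of the original pipeline, and the Zemberek tokenization and Spark-based lemmatization steps are not reproduced here; `clean_tweet` is a hypothetical name.

```python
import re

# URLs: http/https links and bare www. links
URL_RE = re.compile(r"https?://\S+|www\.\S+")
# Approximate emoji coverage, not exhaustive: pictographs, emoticons and
# transport symbols (U+1F300 to U+1FAFF), misc symbols and dingbats
# (U+2600 to U+27BF), regional-indicator flags, variation selector-16,
# and the zero-width joiner used in composite emojis.
EMOJI_RE = re.compile(
    "[\U0001F300-\U0001FAFF\u2600-\u27BF\U0001F1E6-\U0001F1FF\uFE0F\u200D]+"
)


def clean_tweet(text):
    """Remove URLs and emojis, then collapse repeated whitespace."""
    text = URL_RE.sub(" ", text)
    text = EMOJI_RE.sub(" ", text)
    return " ".join(text.split())


print(clean_tweet("Aşı oldum 💉 https://t.co/abc123"))  # → Aşı oldum
```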

Annotation Process and Label Propagation

Previous work showed that homophily can be observed on Twitter through retweet networks, which uncover homophilic ties within communities such as political groups (Colleoni et al., 2014; Darwish et al., 2020; Magdy et al., 2016) and conspiracy theorists (Del Vicario et al., 2016; Fong et al., 2021). To exploit homophily for inferring the COVID-19 vaccine stance of a large group of users, we created a list of representative users from which we could infer the stance of a larger sample. We used label propagation, a semi-supervised machine learning approach that infers a property of the nodes in a network, which in our case is vaccine stance. With this method, each unlabeled node in the retweet network adopts the pro- or anti-vaxxer label held by the largest number of its neighboring nodes.
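The neighbor-majority rule described above can be sketched as a generic iterative label propagation in plain Python. This is an illustration under stated assumptions, not the exact procedure used: the retweet network is treated as undirected, annotated seed labels are never overwritten, ties leave a node unlabeled, and the name `propagate_labels` and the toy graph are hypothetical.

```python
from collections import Counter


def propagate_labels(neighbors, seeds, max_iter=10):
    """Iterative label propagation with clamped (fixed) seed labels.

    neighbors: dict node -> list of adjacent nodes (treated as undirected).
    seeds: dict node -> annotated label, e.g. "pro" or "anti".
    Nodes with no labeled neighbors, or a tie among neighbor labels,
    stay unlabeled.
    """
    labels = dict(seeds)
    for _ in range(max_iter):
        updated = dict(labels)
        for node, adj in neighbors.items():
            if node in seeds:
                continue  # annotated users keep their label
            votes = Counter(labels[n] for n in adj if n in labels)
            if not votes:
                continue
            top = votes.most_common(2)
            # adopt the most common neighbor label only if it is not tied
            if len(top) == 1 or top[0][1] > top[1][1]:
                updated[node] = top[0][0]
        if updated == labels:
            break  # converged
        labels = updated
    return labels


graph = {"a": ["x", "y"], "b": ["y", "z"], "c": ["a", "b"],
         "x": ["a"], "y": ["a", "b"], "z": ["b"]}
seeds = {"x": "anti", "y": "anti", "z": "pro"}
result = propagate_labels(graph, seeds)
print(result["a"], result["c"], "b" in result)  # → anti anti False
```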

To compile the initial user list for annotation, we used a data-driven approach with the following steps. First, we applied the Louvain algorithm for modularity maximization, which revealed five distinct communities in the retweet network. Second, we employed stratified sampling and selected up to 600 users per community whose in-degree centrality exceeded the community median, prioritizing users who were more actively engaged and influential within their respective communities. This resulted in a list of 2,016 users in total. In addition, we included the 200 users with the highest in-degree centrality in the retweet network to establish a strong foundation of central and highly influential accounts. These users served as key connectors within the network, facilitating information diffusion between communities. Annotating these users, rather than a randomly selected group, allowed us to infer the vaccine stance of more accounts in the subsequent label propagation stage.
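A minimal sketch of the stratified selection, assuming community assignments are already available (for example from the `louvain_communities` function in NetworkX 2.8 or later). Random sampling among above-median users within each community, the fixed seed, and the name `select_annotation_pool` are illustrative assumptions, since the text does not specify how users were drawn within a community.

```python
import random
from statistics import median


def select_annotation_pool(in_degree, community, cap=600, top_n=200, seed=0):
    """Stratified selection of accounts to annotate.

    in_degree: dict user -> in-degree in the retweet network.
    community: dict user -> community id (e.g., from Louvain).
    In each community, users whose in-degree exceeds that community's
    median are eligible, and up to `cap` of them are sampled at random.
    The `top_n` users with the highest overall in-degree are then added.
    """
    rng = random.Random(seed)
    members = {}
    for user, comm in community.items():
        members.setdefault(comm, []).append(user)
    pool = set()
    for users in members.values():
        med = median(in_degree[u] for u in users)
        eligible = sorted(u for u in users if in_degree[u] > med)
        pool.update(rng.sample(eligible, min(cap, len(eligible))))
    # globally most central accounts (ties broken alphabetically)
    top = sorted(in_degree, key=lambda u: (-in_degree[u], u))[:top_n]
    return pool | set(top)


in_degree = {"u1": 10, "u2": 1, "u3": 5, "u4": 7, "u5": 0, "u6": 3}
community = {"u1": 0, "u2": 0, "u3": 0, "u4": 1, "u5": 1, "u6": 1}
print(sorted(select_annotation_pool(in_degree, community, top_n=1)))  # → ['u1', 'u4']
```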

Four authors annotated a total of 2,216 accounts as “pro-vaxxer,” “anti-vaxxer,” “neutral,” or “unknown” by checking each user’s Twitter handle, screen name, profile description, and 20 most recent vaccine-related tweets. In the initial annotation phase, 200 randomly selected accounts were shared among all annotators to assess inter-rater reliability. The Kappa score for this phase was 0.62, which indicates substantial agreement among the four annotators (Landis & Koch, 1977). After discussing their disagreements, the annotators proceeded to annotate equally distributed sets of accounts.
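The text does not name the Kappa variant; for four annotators, Fleiss' kappa is the standard multi-rater generalization, so the sketch below assumes it. It implements the textbook formula (mean observed per-item agreement compared with chance agreement from pooled category proportions) and assumes every item was labeled by all annotators; the toy ratings are hypothetical.

```python
def fleiss_kappa(ratings, categories):
    """Fleiss' kappa for items each labeled by the same number of annotators.

    ratings: list of items; each item is a list with one label per annotator.
    categories: all possible labels.
    """
    n_items = len(ratings)
    n_raters = len(ratings[0])
    # counts[i][j]: number of annotators who put item i in category j
    counts = [[item.count(c) for c in categories] for item in ratings]
    # observed agreement for each item, averaged over items
    p_bar = sum(
        (sum(x * x for x in row) - n_raters) / (n_raters * (n_raters - 1))
        for row in counts
    ) / n_items
    # chance agreement from pooled category proportions
    p_e = sum(
        (sum(row[j] for row in counts) / (n_items * n_raters)) ** 2
        for j in range(len(categories))
    )
    return (p_bar - p_e) / (1 - p_e)


items = [
    ["pro", "pro", "pro", "anti"],
    ["anti", "anti", "anti", "anti"],
    ["pro", "pro", "anti", "anti"],
]
print(round(fleiss_kappa(items, ["pro", "anti"]), 3))  # → 0.2
```

Under Landis and Koch's benchmarks, values from 0.61 to 0.80 count as substantial agreement.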

“Pro-vaxxer” and “anti-vaxxer” groups consist of users who implicitly or explicitly support and oppose COVID-19 vaccines, respectively. The “neutral” group comprises those who talk about vaccines without reflecting any standpoint, such as objective news channels. In contrast, users in the “unknown” group use the vaccine-related keywords and hashtags but tweet about unrelated matters (e.g., piggybacking on trending topics or promoting a product).

For label propagation, we used annotated data to categorize the unlabeled users. We first checked whether an unlabeled user retweeted content from any of the labeled user groups: “pro-vaxxer,” “anti-vaxxer,” “neutral,” and “unknown.” If an unlabeled user retweeted content from both the “pro-vaxxer” and “anti-vaxxer” groups more than five times, we assigned them the label “unknown.” To classify a user as either a “pro-vaxxer” or “anti-vaxxer,” we looked for a distinct preference: they needed to predominantly retweet content from one group while retweeting content from the other group at most once. This method allowed us to label a total of 95,188 users, including 57,931 anti-vaxxers, 27,908 pro-vaxxers, 9,207 unknowns, and 142 neutrals. This larger set of labeled users provided us with a more representative sample for the data analyses.
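The assignment rule can be written as a small function. The wording leaves two details open, so the sketch makes labeled assumptions: “more than five times” is read as more than five retweets from each camp, and “predominantly” as strictly more retweets of one camp with at most one of the other. Retweets of neutral or unknown accounts are ignored here, and the name `assign_stance` is hypothetical.

```python
def assign_stance(n_pro, n_anti, mixed_threshold=5):
    """Label an unannotated user from counts of retweets of annotated accounts.

    n_pro / n_anti: retweets of accounts labeled pro-vaxxer / anti-vaxxer.
    Returns "unknown", "pro-vaxxer", "anti-vaxxer", or None (left unlabeled).
    """
    # Assumption: "more than five times" means >5 retweets from EACH camp.
    if n_pro > mixed_threshold and n_anti > mixed_threshold:
        return "unknown"
    # Assumption: "predominantly" means strictly more retweets of one camp,
    # with at most one retweet of the other camp.
    if n_pro > n_anti and n_anti <= 1:
        return "pro-vaxxer"
    if n_anti > n_pro and n_pro <= 1:
        return "anti-vaxxer"
    return None  # no clear preference


for counts in [(6, 7), (4, 1), (0, 3), (5, 2)]:
    print(counts, assign_stance(*counts))
```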

Political Stance Detection

Using text data provided by Twitter, we built a supervised machine learning model to estimate the political positions of the users in our dataset. For this task, we used streaming data containing 10% of the Turkish tweets posted in December 2021 (approximately 11 million tweets). We then randomly selected 2,948 users and labeled their stance as “Pro-government” (1,178), “Neutral” (686), or “Anti-government” (1,084) by inspecting their profile descriptions, names, and last 20 tweets. Users who supported the ruling government were labeled “Pro-government,” whereas those who either opposed the ruling government or supported an opposition party were labeled “Anti-government.” Users who did not demonstrate a stance toward either side but used a keyword or hashtag that might be related to a political discussion were labeled “Neutral.”

We explored various architectures in which text and network embeddings, extracted with different models, were used in a downstream task to predict user stance. Among these trials, a fine-tuned BERTurk model achieved the highest performance in predicting tweet stances (F1-score 92.7%). We used this model to predict user stance through majority voting over each user’s tweets (e.g., if a user has five tweets supporting the opposition and two supporting the ruling government, the user is labeled “Anti-government,” since five is greater than two). A detailed explanation of the model is provided in the Supplementary Information. After training the model, we collected the timelines of users whose vaccine stance had been inferred and who had retweeted others at least once. This approach served three purposes: minimizing computational costs, ensuring a representative subset of our dataset, and yielding higher accuracy, since retweet information incorporates homophily. This procedure yielded a total of 21,744 users, making up 23% of the users with a vaccine stance. We then used this dataset to predict the political stance of these users from their timelines.
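The majority-voting step can be sketched as follows. The tie-breaking rule (returning “Neutral” when the two most frequent labels are tied) and the function name `user_stance` are assumptions, since the text does not say how ties were resolved.

```python
from collections import Counter


def user_stance(tweet_preds):
    """Aggregate tweet-level predictions into one user-level label by majority vote.

    tweet_preds: labels such as "Pro-government", "Anti-government", "Neutral".
    Ties between the two most frequent labels return "Neutral" (an assumption).
    """
    counts = Counter(tweet_preds)
    if not counts:
        return None  # no classified tweets for this user
    top = counts.most_common(2)
    if len(top) == 2 and top[0][1] == top[1][1]:
        return "Neutral"
    return top[0][0]


preds = ["Anti-government"] * 5 + ["Pro-government"] * 2
print(user_stance(preds))  # → Anti-government
```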

In addition, we examined how the language of profile descriptions differs between the two groups, which may shed light on how politicized the users are. For this task, we compared anti- and pro-vaxxers by computing the entropy shift of unigrams, bigrams, and trigrams extracted from their Twitter profile descriptions. We then removed stopwords and any n-grams that are subsets of other n-grams (e.g., “yeniden refah” and “refah partisi” are both subsets of “yeniden refah partisi”) and plotted the 20 n-grams with the highest entropy shift, as shown in Figure 3.
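A sketch of the description analysis, assuming the entropy shift is the Shannon entropy decomposition used in word-shift graphs (implemented, for example, by the `EntropyShift` class of the shifterator package). Each n-gram's contribution is its term of H(b) minus its term of H(a), so the contributions sum to the entropy difference between the two groups. Stopword removal is assumed to happen before counting, and the containment filter is applied within a pool of top-ranked candidates (the `pool_size` parameter is hypothetical), since filtering against all n-grams would discard nearly every unigram. All function names are illustrative.

```python
import math


def ngrams(tokens, n_max=3):
    """All contiguous 1- to n_max-grams of a token list, as tuples."""
    return [tuple(tokens[i:i + n])
            for n in range(1, n_max + 1)
            for i in range(len(tokens) - n + 1)]


def entropy_shift(counts_a, counts_b):
    """Per-n-gram contributions to H(b) - H(a), Shannon entropy in bits.

    Contribution of g: p_b(g) * log2(1/p_b(g)) - p_a(g) * log2(1/p_a(g)),
    with 0 * log(1/0) taken as 0; contributions sum to H(b) - H(a).
    """
    total_a, total_b = sum(counts_a.values()), sum(counts_b.values())

    def term(count, total):
        p = count / total
        return -p * math.log2(p) if p > 0 else 0.0

    return {g: term(counts_b.get(g, 0), total_b) - term(counts_a.get(g, 0), total_a)
            for g in set(counts_a) | set(counts_b)}


def is_contained(short, long):
    """True if n-gram `short` occurs contiguously inside the longer n-gram `long`."""
    k = len(short)
    return len(long) > k and any(long[i:i + k] == short
                                 for i in range(len(long) - k + 1))


def top_ngrams(contrib, k=20, pool_size=100):
    """Rank by absolute contribution; drop n-grams contained in another candidate."""
    pool = sorted(contrib, key=lambda g: -abs(contrib[g]))[:pool_size]
    kept = [g for g in pool if not any(is_contained(g, h) for h in pool)]
    return kept[:k]


contrib = {("yeniden", "refah", "partisi"): 0.30,
           ("refah", "partisi"): 0.25,
           ("aşı",): -0.20}
print(top_ngrams(contrib, k=2))  # → [('yeniden', 'refah', 'partisi'), ('aşı',)]
```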

Linguistic Styles

To understand the differences between anti- and pro-vaxxers, we analyzed each group’s use of words from various categories (e.g., Health, Power, and Risk). We employed the Turkish version of the Linguistic Inquiry and Word Count (LIWC) dictionary, a widely used tool for delineating the linguistic patterns of individuals (Pennebaker et al., 2015). LIWC is essentially a dictionary that assigns words to categories. For instance, the Health category consists of 294 different words such as “clinic,” “flu,” and “pill,” whereas the Certainty category includes words such as “always” and “never.” Using these categories, we calculate the ratio of words from a specific category by dividing the number of words from that category by the total number of words used by an individual. For instance, if someone tweets “I will always swim,” only one Certainty word (“always”) appears among a total of four words (I, will, always, and swim), so the “Certainty ratio” is 25% (1 divided by 4).
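The ratio computation can be sketched in a few lines of Python. The toy dictionary below is hypothetical and stands in for the LIWC dictionary; the trailing `*` prefix convention mimics LIWC stem entries, but the real tool's matching and tokenization rules may differ. Note that `str.lower()` is not Turkish-aware (it maps “I” to “i” rather than “ı”), so a Turkish pipeline would need locale-specific casing.

```python
import re


def category_ratios(text, dictionary):
    """Share of words in `text` that fall into each dictionary category.

    dictionary: category -> list of entries; an entry ending in "*" matches
    any word starting with that prefix (LIWC-style stem), otherwise the word
    must match exactly. Each word is counted at most once per category.
    """
    words = re.findall(r"\w+", text.lower())  # not Turkish-aware casing
    if not words:
        return {cat: 0.0 for cat in dictionary}
    ratios = {}
    for category, entries in dictionary.items():
        hits = sum(
            1 for w in words
            if any(w.startswith(e[:-1]) if e.endswith("*") else w == e
                   for e in entries)
        )
        ratios[category] = hits / len(words)
    return ratios


toy_dict = {"Certainty": ["always", "never"], "Health": ["clinic*", "flu", "pill*"]}
print(category_ratios("I will always swim", toy_dict))
# → {'Certainty': 0.25, 'Health': 0.0}
```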

Previous studies calculated LIWC scores to compare two groups of people or texts and point out their differences. Fong et al. (2021) divided U.K./U.S. Twitter users into “scientific” and “conspiracy” groups, computed LIWC ratios for each word category from each user’s timeline, and conducted a mean comparison analysis. Giachanou et al. (2021), working on English tweets, divided their users into “conspiracy propagators” and “anti-conspiracy propagators” and followed the same approach. Since we were interested in demonstrating the linguistic differences between “pro-vaxxers” and “anti-vaxxers” on a per-user basis, we examined the tweets of users who tweeted (

Bot Detection

We generated bot scores for the accounts in our dataset using Botometer, a supervised bot detection algorithm developed by the Observatory on Social Media at Indiana University (Sayyadiharikandeh et al., 2019). Botometer is extensively used in the literature to detect social bot activity on Twitter across various topics, including stance polarization (Aldayel & Magdy, 2022), political propaganda (Ferrara et al., 2020), and the vaccine debate (Broniatowski et al., 2018). It is important to note that multiple bot detection algorithms exist, adopting similar yet slightly different methods. Apart from the machine learning models that use many parameters to generate a bot score, bot detection methods also differ in their definitions of bots, which determine what kind of behavior the software needs to track. Botometer defines bots as “social media accounts controlled in part by software that can post content and interact with other accounts programmatically and possibly automatically” (Varol et al., 2017). We presumed that such automation can be practiced by bots and, in some cases, by humans demonstrating bot-like behavior.

We relied on BotometerLite, introduced in the Botometer V4 upgrade (Yang et al., 2020). Unlike Botometer V4, which generates bot scores based on a user’s 200 most recent tweets alongside the account metadata, BotometerLite considers only the information in the user profile. The advantage of BotometerLite is that it checks accounts in bulk and therefore scales efficiently to large numbers of users (Yang et al., 2020). In addition, since our data collection was carried out before the bot analysis, users’ last 200 tweets would no longer reflect the behavior observed during data collection, and some bot accounts could have been suspended by Twitter in the meantime.

BotometerLite provides a bot score for each account, ranging between 0 and 1. A score closer to 0 indicates more human-like behavior, whereas a score closer to 1 indicates a higher likelihood of being a bot. To avoid misclassification, we did not use a threshold to label accounts as bots or humans. Instead, we compared the distribution of bot scores for each group (anti-vaccine, pro-vaccine, government, opposition) and their combinations. This method also allowed us to detect users with both human-like and bot-like characteristics, that is, humans demonstrating bot-like behavior.
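Comparing distributions instead of thresholding can be sketched with normalized histograms per group, plus the share of scores falling in a chosen interval. The bin count, the `(group, score)` input format, and all function names are hypothetical choices made for illustration. The text does not specify how the distributions in Figure 4a were estimated.

```python
from collections import defaultdict


def score_histogram(scores, bins=10):
    """Normalized histogram of bot scores in [0, 1]; a score of exactly 1.0 goes in the last bin."""
    counts = [0] * bins
    for s in scores:
        if not 0.0 <= s <= 1.0:
            raise ValueError(f"bot score out of range: {s}")
        counts[min(int(s * bins), bins - 1)] += 1
    total = len(scores)
    return [c / total for c in counts] if total else [0.0] * bins


def histograms_by_group(accounts, bins=10):
    """accounts: iterable of (group_label, bot_score) pairs -> {group: normalized histogram}."""
    grouped = defaultdict(list)
    for group, score in accounts:
        grouped[group].append(score)
    return {g: score_histogram(s, bins) for g, s in grouped.items()}


def share_in_range(scores, lo, hi):
    """Fraction of scores in the closed interval [lo, hi], e.g. the mid-range [0.4, 0.6]."""
    return sum(lo <= s <= hi for s in scores) / len(scores) if scores else 0.0
```

Because no score is ever turned into a bot/human label, mid-range accounts remain visible as a mass in the middle bins instead of being split arbitrarily at a cutoff.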

Findings

User Characterization and Temporal Analysis

We started our analysis with descriptive findings on the activity rates and temporal changes of pro- and anti-vaxxers. We first constructed a retweet network of users and their assigned groups, as shown in Figure 1a. Anti-vaxxers form a more prominent and coherent cluster, while pro-vaxxers engage with them through more than one community. The number of anti-vaxxers in our dataset was over twice the number of pro-vaxxers, and anti-vaxxers sent seven times more tweets than pro-vaxxers (see Figure 1b and c). Considering the volume of activity, the number of unique hashtags used (Figure 1d), and the number of accounts participating in the discussion, we argue that the stance toward vaccines on Twitter was predominantly negative rather than positive.

Categorization of users. Retweet network of vaccine discussion (a). Total number of users annotated (b). Total number of tweets shared (c). Total number of unique hashtags used (d). Daily tweet counts by group (e).
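The retweet network and the per-group totals in Figure 1 can be sketched as follows. The tweet record format (keys `user`, `retweeted_user`, `hashtags`) and the function names are hypothetical. The text does not describe its data schema, its network layout method, or how hashtags were normalized. Here, hashtags are simply lowercased.

```python
from collections import Counter, defaultdict


def retweet_edges(tweets):
    """Weighted directed edges (retweeter -> original author) counted over all retweets."""
    edges = Counter()
    for t in tweets:
        if t.get("retweeted_user"):
            edges[(t["user"], t["retweeted_user"])] += 1
    return edges


def group_summary(tweets, stance):
    """Users, tweets, and unique hashtags per stance group (cf. Figure 1b-d).

    tweets: dicts with hypothetical keys 'user', 'retweeted_user', 'hashtags'.
    stance: dict user -> group label; users without a label are skipped.
    """
    users, n_tweets, tags = defaultdict(set), Counter(), defaultdict(set)
    for t in tweets:
        group = stance.get(t["user"])
        if group is None:
            continue
        users[group].add(t["user"])
        n_tweets[group] += 1
        tags[group].update(h.lower() for h in t.get("hashtags", []))
    return {g: {"users": len(users[g]), "tweets": n_tweets[g], "hashtags": len(tags[g])}
            for g in users}
```

The weighted edge list can then be handed to any graph library for layout and community detection.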

Exploring the activities of groups with different vaccine attitudes reveals when important events occurred during the vaccine debate. Vaccine-related discussions in our dataset peaked around various events, indicating that our dataset closely tracked events in the offline world. For instance, the rally organized by anti-vaxxers in Maltepe, “The Big Awakening Rally” (Büyük Uyanış Mitingi in Turkish), resulted in a peak of anti-vaccine tweets. Moreover, contentious issues related to vaccination and COVID-19, such as mandatory vaccinations for babies and compulsory online education, were discussed by both anti- and pro-vaxxers, leading to increased engagement from both sides (Figure 1e).
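The daily series in Figure 1e and the peak days used to match online activity against offline events can be sketched as below. The record fields (`user`, `created_at` as an ISO 8601 timestamp string) and function names are hypothetical assumptions. Only the first ten characters (the date) are parsed, so the sketch works with or without a trailing "Z".

```python
from collections import Counter, defaultdict
from datetime import date


def daily_counts(tweets, stance):
    """Tweets per calendar day for each stance group (cf. Figure 1e)."""
    per_group = defaultdict(Counter)
    for t in tweets:
        group = stance.get(t["user"])
        if group is not None:
            day = date.fromisoformat(t["created_at"][:10])  # 'YYYY-MM-DD...' assumed
            per_group[group][day] += 1
    return per_group


def peak_days(per_group, top=3):
    """The `top` busiest days per group, e.g. to compare against dates of offline events."""
    return {g: [d for d, _ in c.most_common(top)] for g, c in per_group.items()}
```

The detected peak dates can then be checked by hand against a timeline of events such as rallies or policy announcements.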

Political Stance

Anti- and pro-government groups do not form two visibly distinct clusters in Figure 2a, unlike the clear separation observed when users are compared by vaccine attitude in this debate (see Figure 1a). However, our political stance model suggests that the anti-vaxxer and pro-vaxxer groups have significantly different proportions of pro- and anti-government users (

Political stance of users. Retweet network of vaccine discussion, colored according to users’ political stances (a). Political stance of anti-vaxxers by percentage (b). Political stance of pro-vaxxers by percentage (c).
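The test statistic behind the "significantly different proportions" claim is not preserved in this section. A standard way to compare the pro-/anti-government split across the two vaccine groups is a Pearson chi-square test on a 2x2 contingency table, sketched below without continuity correction. This is an assumption for illustration, not necessarily the test the authors used. For one degree of freedom, the chi-square survival function equals erfc(sqrt(x/2)), so no statistics library is needed.

```python
import math


def chi2_2x2(table):
    """Pearson chi-square test (no continuity correction) for a 2x2 contingency table.

    table = [[a, b], [c, d]], e.g. rows = anti-/pro-vaxxers,
    columns = pro-/anti-government users.
    Returns (chi-square statistic, p-value with 1 degree of freedom).
    """
    (a, b), (c, d) = table
    n = a + b + c + d
    row_totals, col_totals = (a + b, c + d), (a + c, b + d)
    chi2 = 0.0
    for i, row in enumerate(table):
        for j, observed in enumerate(row):
            expected = row_totals[i] * col_totals[j] / n
            chi2 += (observed - expected) ** 2 / expected
    # For 1 df, P(X > x) = P(|Z| > sqrt(x)) = erfc(sqrt(x / 2)).
    return chi2, math.erfc(math.sqrt(chi2 / 2))
```

With very large samples, as in this dataset, even small differences in proportions reach significance. This is consistent with the text's caution that a significant difference in proportions does not by itself imply distinct political clusters.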

Although the ratio of pro- and anti-government users differs between groups, suggesting a potential link between being pro-government and pro-vaccine engagement, a deeper examination of linguistic cues in user descriptions reveals that an anti-vax stance is not primarily rooted in political orientation, except for a small subset of users affiliated with the “Yeniden Refah Partisi” (see Figure 3).

Top 20 n-grams in user descriptions with the highest entropy shift between anti- and pro-vaxxers. Note that “medeniyettasavvuruyolculugu” and “mto” refer to an Islamic education program.

When we identified the n-grams with the highest entropy shifts in the user descriptions of anti- and pro-vaxxers, we found that less than 1% (452 in total) of the anti-vaxxers mentioned a Turkish political party called the New Welfare Party (Turkish: Yeniden Refah Partisi). We assume that the New Welfare Party appears in anti-vaxxers’ descriptions because of party leader Fatih Erbakan’s anti-vaccine statements (BirGün, 2022); he also led the organization of anti-vax rallies in Türkiye. We also found that 2.5% (686 users) of the pro-vaxxers and 0.2% (142 users) of the anti-vaxxers mentioned the ruling Justice and Development Party (AKP) in their user descriptions. Although this finding raises the possibility of a stronger association between a pro-vaccine stance and support for the AKP, the overall numbers are too small to draw any conclusions.
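Counting how many descriptions mention a party requires Turkish-aware case folding. Python's `str.lower()` maps "I" to "i" rather than the Turkish dotless "ı", and it maps "İ" to "i" followed by a combining dot. The sketch below maps these two letters explicitly before lowercasing. The keyword list and function names are hypothetical. Plain substring matching is a simplification that can over-count: a short keyword such as "akp" could appear inside unrelated tokens.

```python
def turkish_lower(text):
    """Lowercase using Turkish dotted/dotless i rules before the generic lower()."""
    return text.replace("I", "ı").replace("İ", "i").lower()


def mention_share(descriptions, keywords):
    """Number and share of descriptions containing any keyword (substring match)."""
    keys = [turkish_lower(k) for k in keywords]
    hits = sum(1 for d in descriptions if any(k in turkish_lower(d) for k in keys))
    return hits, (hits / len(descriptions) if descriptions else 0.0)
```

For example, `mention_share(descriptions, ["yeniden refah", "yenidenrefah"])` would count profiles that mention the party in either spaced or hashtag-style form.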

We argue that the AKP appeared in the user descriptions of both pro-vaxxers and anti-vaxxers because of strong partisanship in Turkish politics. The fact that no other party, apart from the New Welfare Party among anti-vaxxers, appeared in user descriptions suggests that AKP supporters are more expressive about their political stance than supporters of other parties. Overall, our findings indicate that only a small number of users in our dataset explicitly mentioned their political affiliations, suggesting that the vaccine debate in Türkiye was not strongly linked to partisan politics and was instead a matter of single-issue politics. Rather than political patterns, we identified some non-political patterns in the descriptions of anti-vaxxers and pro-vaxxers. Words related to religion (e.g., “muslim,” “islam,” and “faith”) were prevalent in the user descriptions of anti-vaxxers, suggesting a link between anti-vaccine stance and religiosity. By contrast, pro-vaxxers used more words denoting socio-economic status (e.g., “university,” “board member,” “phd,” and “doctor”).

In summary, our findings support our first hypothesis (H1), demonstrating that political affiliation in Türkiye is not related to individuals’ anti-vaccine stance and that political groups do not form distinct clusters in the vaccine debate, although anti-vaxxers and pro-vaxxers have significantly different proportions of pro- and anti-government users. The analysis of user descriptions also shows that, except for the Yeniden Refah Partisi, political affiliations did not differ between anti- and pro-vaxxers, whereas differences in cultural and socio-economic identities were observed.

Psycholinguistic Differences of Anti-Vaxxers and Pro-Vaxxers

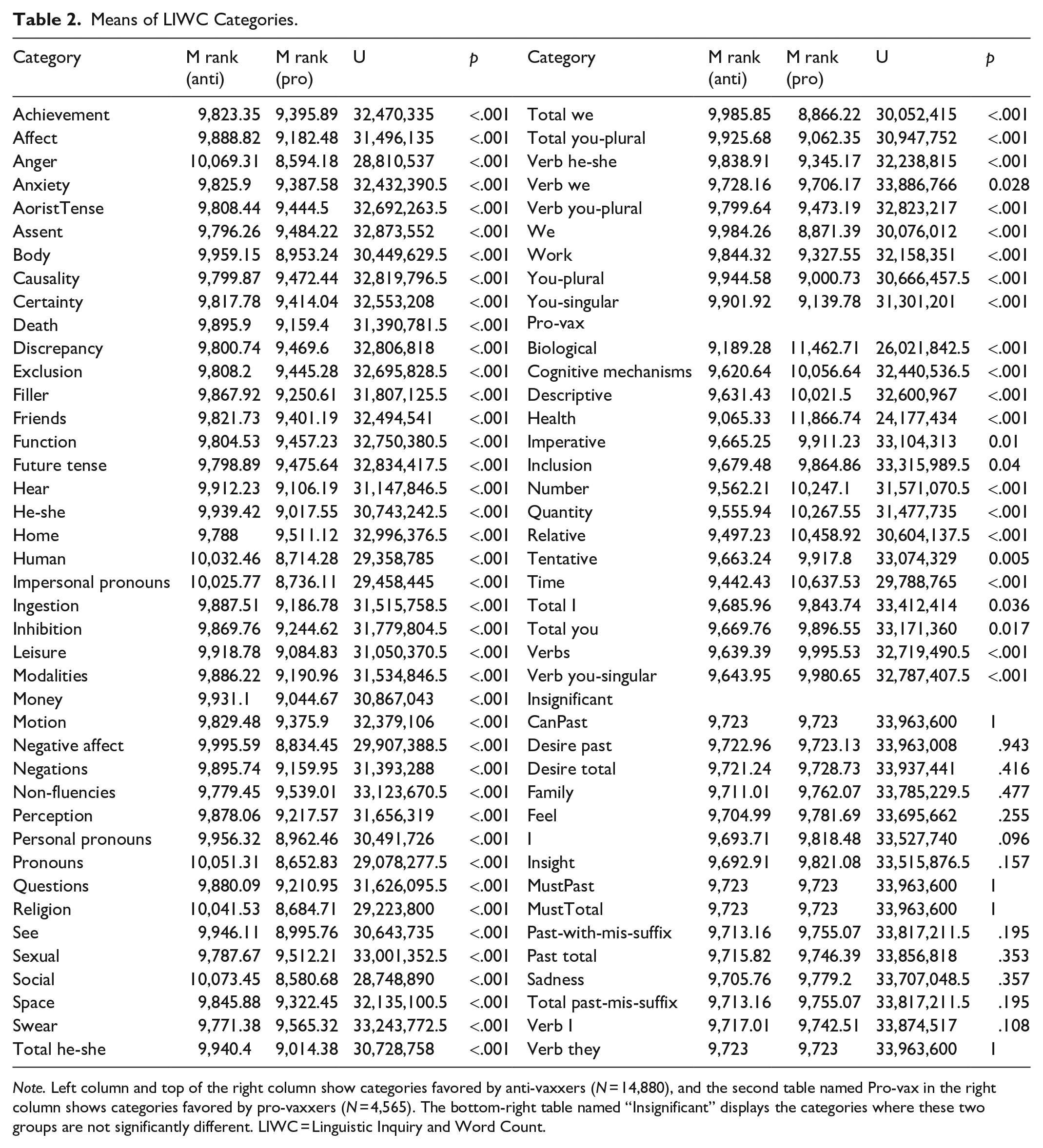

To examine the linguistic differences between anti- and pro-vaxxers, we conducted Mann-Whitney U tests and reported the mean rank of each group, as well as the non-significant differences (see Table 2). In line with previous literature, the language of the two groups diverges. Pro-vaxxers tended to define and cognitively reason about the situation, using more words from the Cognitive Mechanism (e.g., cause, know), Biological (e.g., blood, pain), and Health (e.g., flu, pill) categories. They also tried to quantify the situation with expressions from the Quantity (e.g., few, many), Number (e.g., second, thousand), Time (e.g., end, until), Relative (e.g., area, stop), and Descriptive (e.g., urgent, beautiful) categories. They also used more expressions from the Tentative (“maybe”), Imperative (e.g., work, talk), and Inclusion categories. In addition, pro-vaxxers used more verbs (Total Verbs and You-singular Verbs categories) and some personal pronouns (I and You categories). Together, these patterns suggest an intention to describe COVID in quantitative terms.

Means of LIWC Categories.

Note. The left column and the top of the right column show categories favored by anti-vaxxers (N = 14,880), and the second table in the right column, labeled “Pro-vax,” shows categories favored by pro-vaxxers (N = 4,565). The bottom-right table, labeled “Insignificant,” displays the categories in which the two groups do not differ significantly. LIWC = Linguistic Inquiry and Word Count.
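The mean ranks and U statistics reported in Table 2 can be illustrated with a dependency-free sketch of the Mann-Whitney U test. It uses average ranks for ties, the tie-corrected normal approximation, and no continuity correction. Ties matter here because many users have a ratio of exactly 0 for sparse LIWC categories. This is a hypothetical illustration of the test, not the authors' code. In practice, SciPy's `scipy.stats.mannwhitneyu` provides the same test.

```python
import math


def average_ranks(values):
    """1-based ranks with ties given their average rank; also returns tie-group sizes."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks, tie_sizes = [0.0] * len(values), []
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for k in range(i, j + 1):
            ranks[order[k]] = (i + j) / 2 + 1  # average of ranks i+1 .. j+1
        tie_sizes.append(j - i + 1)
        i = j + 1
    return ranks, tie_sizes


def mann_whitney(x, y):
    """Mean ranks, U for sample x, and a two-sided normal-approximation p-value."""
    ranks, ties = average_ranks(list(x) + list(y))
    n1, n2 = len(x), len(y)
    n = n1 + n2
    r1 = sum(ranks[:n1])
    u1 = r1 - n1 * (n1 + 1) / 2
    # Tie-corrected variance of U under the null hypothesis.
    tie_term = sum(t ** 3 - t for t in ties) / (n * (n - 1))
    var_u = n1 * n2 / 12 * ((n + 1) - tie_term)
    z = (u1 - n1 * n2 / 2) / math.sqrt(var_u) if var_u > 0 else 0.0
    return {"mean_rank_x": r1 / n1, "mean_rank_y": sum(ranks[n1:]) / n2,
            "U": u1, "p": math.erfc(abs(z) / math.sqrt(2))}
```

Applied per LIWC category, with `x` holding anti-vaxxers' ratios and `y` pro-vaxxers' ratios, the group with the higher mean rank is the one that "favors" that category in the sense of Table 2.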

By contrast, anti-vaxxers adopted a more emotional language focused on feelings. They used more words from the Negative Affect (e.g., hurt and ugly) and Affect categories in general, as well as from the Swear, Anger, and Anxiety categories and sensory categories (e.g., Perception, Body, Hear, and See). The number of words from the Death and Religion categories was also higher in the anti-vaccination group. Their use of expressions from the Certainty (e.g., always, never), Causation (e.g., because), Discrepancy (“would,” “could”), Questions, Negations, Inhibition, Exclusion, Non-fluencies (“um,” “oh”), and Assents (“ok,” “yes”) categories was higher. In addition to emotional words, they used more words related to social and daily life, including the Money, Human, Home, Friends, Work, Leisure, Achievement, Sexual, Social (e.g., talk, mate), Motion (e.g., car, arrive), Space (e.g., down, in), Function, and Ingestion categories. They used the future and aorist tenses more, as well as more words from the Filler (e.g., “I mean” and “you know”) and Modality categories. They also scored higher on Pronouns, Personal Pronouns, Total He-She, Total We, Total You-Plural, You-Plural, and You-Singular, as well as on verbs for We, He-She, and You-Plural. For a complete overview of all categories, see Table 2.

Overall, our findings support the second hypothesis (H2), indicating a discernible difference in language use between anti-vaccine and pro-vaccine communities. The results align with previous literature (Malagoli et al., 2021; Mitra et al., 2016; J. Shi et al., 2021) emphasizing the prevalence of negative language in anti-vaccine discourse and the health-centric, descriptive nature of pro-vaxxer communication. The anti-vaccine group tended to employ more emotion-focused language, often characterized by certainty, negative emotions, anger, anxiety, and expletives, while the pro-vaccine group adopted a more quantifying and descriptive language. Another noteworthy difference is the broader range of social and daily topics discussed in the anti-vaccine discourse, including leisure, money, and friends. These differences may reflect distinct communication strategies of anti-vaccine and pro-vaccine advocates. However, the difference in sample size between the groups should be considered when interpreting these results: the greater variety of language among anti-vaxxers may partly stem from their larger sample. Nevertheless, understanding these variations in Turkish could contribute to a broader understanding of universal linguistic patterns associated with vaccine hesitancy and support.

Bot Activity in the Vaccine Debate

We employed a label propagation approach to assign a vaccine stance to each account. Of the 95,189 unique users labeled as “pro-vaxxer,” “anti-vaxxer,” “neutral,” or “unknown,” we obtained bot scores for 92%. The remaining accounts had been suspended or made private. Suspensions by Twitter typically reflect its policy against spam, which often covers bot-like activity. Therefore, our estimate of bot prevalence is conservative.
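The text does not detail its label propagation procedure. The sketch below shows one simple variant under explicit assumptions: an undirected retweet graph, a set of seed accounts whose stance is already known (how seeds were obtained is not described here), and a majority vote over labeled neighbors, repeated until no label changes. Nodes with tied votes or no labeled neighbors stay "unknown". Function and parameter names are hypothetical.

```python
from collections import Counter, defaultdict


def propagate_labels(edges, seeds, max_iter=20):
    """Seeded majority-vote label propagation over an undirected graph.

    edges: iterable of (u, v) pairs, e.g. retweet ties.
    seeds: dict node -> stance label (e.g. 'anti', 'pro', 'neutral'); seeds never change.
    Unreached or tied nodes are reported as 'unknown'.
    """
    nbrs = defaultdict(set)
    for u, v in edges:
        if u != v:
            nbrs[u].add(v)
            nbrs[v].add(u)
    labels = dict(seeds)
    for _ in range(max_iter):
        updates = {}
        for node in nbrs:
            if node in seeds:
                continue
            votes = Counter(labels[n] for n in nbrs[node] if n in labels)
            if not votes:
                continue
            ranked = votes.most_common(2)
            if len(ranked) == 2 and ranked[0][1] == ranked[1][1]:
                continue  # tie between labels: leave undecided this round
            updates[node] = ranked[0][0]
        # Stop once a round produces no new or changed labels.
        if all(labels.get(n) == lab for n, lab in updates.items()):
            break
        labels.update(updates)
    return {n: labels.get(n, "unknown") for n in set(nbrs) | set(seeds)}
```

Updates within a round are computed from the previous round's labels, so the result does not depend on the order in which nodes are visited.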

A Mann-Whitney U test showed that anti-vaxxers have slightly higher bot scores (U = 664,836,924.0, p < .001). Yet, as Figure 4a shows, the bot score distributions of anti- and pro-vaxxers are very similar, suggesting that bots were not substantially more active on one side than the other. When we compared the accounts labeled as “neutral” with both pro- and anti-vaxxers, we found, interestingly, that accounts with a neutral stance on vaccines had more extreme bot scores at both ends of the spectrum, suggesting the presence of more clearly human and clearly bot accounts. By contrast, the bot scores of users categorized as pro- or anti-vaxxers are primarily concentrated in the [0.2, 0.6] range, suggesting that these accounts exhibit characteristics of both human and bot accounts. Notably, the density of scores in the [0.4, 0.6] range is higher for the anti-vaxxer group (Figure 4a). We hypothesize that this may indicate human users displaying bot-like behavior while engaging in vaccine-related discussions on Twitter.

Bot analysis. (a) Mean bot scores and score distributions of groups determined by their vaccine stance. (b) Accounts with different bot scores are analyzed by their retweeting activity. Pro-vaccine accounts tend to retweet more than anti-vaxxers for a given bot score. (c) Content creators and retweeting accounts that amplify original content are compared for pro- and anti-vaxxers. Accounts are stratified by their corresponding bot scores.

We also investigated retweet activity and its relation to users’ bot scores. Pro-vaxxers have a higher fraction of retweets than anti-vaxxers for a given bot score, indicating a higher level of amplification among pro-vaxxers (see Figure 4b). Furthermore, we examined how the bot scores of retweeters relate to those of the tweet originators. Our findings suggest that, regardless of the retweeter’s bot score, users tend to retweet tweets from accounts with lower bot scores; that is, more human-like accounts are retweeted more. However, for pro-vaxxers, this trend weakens as the originator’s bot score increases. In contrast, retweets are almost uniformly distributed for anti-vaxxers, as shown in Figure 4c.
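The two stratified analyses behind Figure 4b and 4c can be sketched as follows: the mean retweet fraction per bot-score bin for each group, and a matrix counting retweets by retweeter bin and originator bin. Account fields (`group`, `bot_score`, `n_retweets`, `n_tweets`), the bin count, and function names are hypothetical assumptions. The text does not specify its binning.

```python
from collections import defaultdict


def _bin(score, bins):
    """Bin index of a score in [0, 1]; a score of exactly 1.0 goes in the last bin."""
    return min(int(score * bins), bins - 1)


def retweet_fraction_by_bin(accounts, bins=5):
    """Mean retweet fraction per (group, bot-score bin), cf. Figure 4b.

    accounts: dicts with hypothetical keys 'group', 'bot_score',
    'n_retweets', and 'n_tweets' (all posts, including retweets).
    """
    sums, counts = defaultdict(float), defaultdict(int)
    for a in accounts:
        if a["n_tweets"] == 0:
            continue
        key = (a["group"], _bin(a["bot_score"], bins))
        sums[key] += a["n_retweets"] / a["n_tweets"]
        counts[key] += 1
    return {k: sums[k] / counts[k] for k in sums}


def originator_score_matrix(retweets, bot_score, bins=5):
    """Retweet counts by (retweeter bin, originator bin), cf. Figure 4c.

    retweets: iterable of (retweeter, originator) pairs; bot_score: dict account -> score.
    Pairs involving an account without a bot score are skipped.
    """
    matrix = [[0] * bins for _ in range(bins)]
    for retweeter, originator in retweets:
        if retweeter in bot_score and originator in bot_score:
            matrix[_bin(bot_score[retweeter], bins)][_bin(bot_score[originator], bins)] += 1
    return matrix
```

Computing the matrix separately for pro- and anti-vaxxer retweets would allow a row-by-row comparison of whether retweets concentrate on low-score originators or spread evenly across bins.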

In summary, our findings partially support our third hypothesis (H3). Although anti-vaxxers showed marginally higher bot activity, the overall landscape suggests that bots were not the primary driving force behind the vaccine debate in the Turkish Twittersphere. Yet, the evidence of bot-like behavior in both the anti-vaxxer and pro-vaxxer camps suggests that the discussions were not entirely organic.

Discussion

In this study, we examined the mechanisms behind pro- and anti-vaccination groups by comparing their dominant political alignment, linguistic styles, and level of automation. Overall, anti-vaccine discourse was more dominant on Twitter than pro-vaccine discourse: there were more than twice as many individuals holding anti-vaccine views, and they produced seven times as much content. Considering the unified pro-vaccine stance of political authorities, this result indicates that Twitter might have served as a platform for anti-vaxxers to spread vaccine skepticism.

We observed that anti-vaxxers formed a more unified and coherent cluster, whereas pro-vaxxers connected to each other through different sub-clusters. This structure suggests that anti-vaxxers may have been more organized and delivered more systematic anti-vaccine content. In addition, our temporal analysis showed similar tweeting patterns in both groups, with their activity rising and falling in parallel most of the time (see Figure 1e). This indicates that anti-vaxxers and pro-vaxxers were not isolated from each other in online discussions and might have reacted to the same events in conflicting ways.
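The parallelism of the two daily series is described visually. One hypothetical way to quantify it, not part of the original analysis, is to align both groups' daily counts on a common date range, filling missing days with zero, and compute the Pearson correlation. The input format (`{date: count}` dictionaries) and function names are assumptions. A high correlation of raw counts can also arise from a shared overall trend, so this is only a rough indicator.

```python
import math
from datetime import date, timedelta


def aligned_series(counts_a, counts_b):
    """Align two {date: count} dicts on their full date range, filling missing days with 0."""
    days = set(counts_a) | set(counts_b)
    start, end = min(days), max(days)
    span = [start + timedelta(days=i) for i in range((end - start).days + 1)]
    return [counts_a.get(d, 0) for d in span], [counts_b.get(d, 0) for d in span]


def pearson(x, y):
    """Pearson correlation coefficient of two equal-length, non-constant sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)


# Toy counts for illustration only (not data from the study).
anti = {date(2021, 11, 1): 120, date(2021, 11, 2): 300, date(2021, 11, 4): 90}
pro = {date(2021, 11, 1): 20, date(2021, 11, 2): 45, date(2021, 11, 3): 10}
x, y = aligned_series(anti, pro)
print(round(pearson(x, y), 3))
```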

We tested whether political stance is a mechanism behind anti-vaccine stance. In contrast to findings in other countries (Fridman et al., 2021; Shahrezaye et al., 2021; Yamey & Gonsalves, 2020), and as we expected, we found that political affiliation is not related to vaccine hesitancy in Türkiye. Both anti-vaxxers and pro-vaxxers received support online from users representing diverse political leanings.

We also did not observe any linguistic cues indicating active involvement in the anti-vaccine debate by either the pro- or anti-government groups, except for the “Yeniden Refah Partisi.” Therefore, we concluded that political stance did not play a significant role in pushing anti-vaccine views on COVID-19 vaccines. Considering that neither the government nor the opposition had incentives to polarize the vaccine debate, this finding is not surprising. It can be attributed to the widespread consensus among the Turkish political elite regarding the importance of vaccines and vaccination, which distinguishes Türkiye from countries such as Brazil. Despite the emergence of the New Welfare Party, which aligned itself with the anti-vaccine movement, both the ruling party and the main opposition parties in Türkiye consistently advocated for vaccines, leaving little ground for politicizing the vaccine debate.

The New Welfare Party, in contrast, adopted a distinct strategy to leverage the situation and increase its visibility among the broader public. The New Welfare Party is the successor of the Welfare Party, founded by former Prime Minister Necmettin Erbakan. Active during the 1990s, the Welfare Party faced numerous controversies and political bans. Following Necmettin Erbakan’s death, its influence diminished, and eventually, the party closed. His son, Fatih Erbakan, revived the once-prominent party in 2018, but it struggled to regain its former prominence. To address this, the New Welfare Party sought to take a leading role in a single-issue political debate through proactive engagement. Party leader Erbakan frequently amplified conspiracy theories about Bill Gates and global domination through the pandemic to attract new supporters (Demirhan & Bascoban, 2021). Among his statements were “We will liberate the Ministry of Agriculture from the hands of the Gates Foundation” (ErbakanFatih, 2022) and claims that mRNA vaccines would lead to the birth of “monkey-human hybrid creatures” (Cumhuriyet, 2021). These unconventional statements gained traction on Twitter, positioning him as a leading figure in the anti-vaccine movement. After the introduction of the Turkish-made COVID-19 vaccine “TURKOVAC,” which had strong government support, he suggested that TURKOVAC might be used as a “last resort” (Independent, 2021). Despite its disagreement with other parties on vaccination, the party formed an alliance with the AKP in the 2023 Turkish presidential elections. Future studies are encouraged to explore the role of the New Welfare Party’s vaccine attitudes in its political trajectory.

Moreover, we explored the linguistic differences between the two groups. Anti-vaxxers used more emotive language, whereas pro-vaxxers tended to adopt a more objective discourse focused on numbers and quantifiers (Faasse et al., 2016). As previously discussed, emotive language is embedded in persuasive communication and is used to target undecided and vulnerable audiences. In addition, emotional messages motivate individuals to share information (Chen et al., 2022). Based on this, our findings suggest that language use might have contributed to the spread of vaccine skepticism. Overall, our findings support previous evidence on the different language use of pro- and anti-vaxxers and suggest that language was a mechanism driving the vaccine debate in the Turkish Twittersphere.

The linguistic patterns and user descriptions also revealed that, compared with pro-vaxxers, anti-vaxxers more often stated their religious identity explicitly and used more religion-related words in their tweets. In contrast, pro-vaxxers preferred to identify themselves through their socio-economic status. Pro-vaxxers’ greater use of words related to cognitive mechanisms and descriptions of COVID (e.g., quantifiers, numbers, and descriptives) suggests an aim to approach the topic objectively, which is consistent with their socio-economic identity. Since cognitive mechanisms rely on causation, a strategy for rationalizing, and education level is related to rational decision-making (Goll & Rasheed, 2005), these results are coherent. Our findings shed light on a potential connection between social identity and vaccine stance. However, we lack sufficient evidence to establish a causal relationship between religiosity, education level, and vaccine stance, as the Twitter data did not provide comprehensive information on users’ socio-economic status and religious beliefs. Moreover, although our LIWC analysis revealed linguistic differences between anti-vaxxers and pro-vaxxers, further investigation is required to determine whether these discourses are indeed linked to these factors. Still, we believe that our results provide valuable insights by highlighting explicit differences in social identity between the two groups, and they lay the groundwork for future research on the connection between social identity and vaccine stance. Future work can examine the nuances of social identity and its impact on attitudes toward vaccines, yielding a more robust understanding of how social identity influences vaccine acceptance or resistance.

Finally, our findings on levels of automation suggest that full automation through bots was not a driving mechanism of the vaccine debate. In contrast to studies analyzing English-language content on Twitter (W. Shi et al., 2020; Zhang et al., 2022), we found no strong evidence that “bots,” that is, fully automated accounts, drove the vaccine debate in the Turkish Twittersphere. One possible reason may again be the lack of political incentives for the government and opposition parties to polarize the vaccine debate: since neither side found it advantageous to fuel strong divisions on this topic, the debate was not reflected in bot activity on Twitter. Instead, we observed that, among both pro-vaxxers and anti-vaxxers, a substantial proportion of users had non-extreme bot scores, suggesting that both groups exhibited characteristics of both human and bot behavior.

We argue that the frequent bot scores around 0.5 might indicate humans engaging in bot-like behavior. While anti-vaxxers showed slightly more bot-like activity, the bot score distributions were similar for both groups. The difference in bot-like behavior may reflect anti-vaxxers’ motivation to drive the vaccine debate because of the popularity and monetary benefits they obtained from it (Teyit.org, 2022). Although we conclude that fully automated accounts were not a mechanism driving the vaccine debate, comparing the level of automation in both groups with that of accounts labeled as “neutral” leads us to argue that this bot-like behavior is a characteristic of the online vaccine debate in Türkiye as a whole.

Limitations and Future Work

Like any study, this research has limitations that must be acknowledged to interpret the findings accurately. Our analysis focused on the early stage of vaccination, as we wanted to capture the most relevant conversations from both pro- and anti-vaccine communities. Therefore, our findings reflect a brief period of the vaccine debate on Twitter and may not fully capture the evolving nature of the discourse. Future studies should explore temporal changes in the debate through prospective research designs.

Regarding our dataset, data collection involved an archival search using the Twitter API rather than real-time streaming. Acquiring a complete social media stream is not possible without a massive collective effort for specific time intervals (Pfeffer et al., 2023). One notable limitation is the loss of data from deleted accounts, which could lead to an underestimation of automation levels in our dataset (Pasquetto et al., 2020). In addition, the Twitter API restricts access to tweets from private or protected accounts, allowing us to collect only tweets from public accounts, either in real time or at the time of the query. Consequently, our dataset consists exclusively of tweets from users whose accounts were public at the time of data collection. Furthermore, during our data collection, Twitter was actively implementing measures to combat vaccine misinformation, including removing tweets that promoted harmful false or misleading narratives about COVID-19 vaccination. We acknowledge that these content removals may have affected our dataset. Finally, owing to both computational costs and the capabilities of our political stance detection model, we used a sample of users who had at least one retweet. While this decision improves the accuracy of our predictions, it could introduce biases into our results. We therefore encourage future studies to consider users with less available information.

In our bot detection process, several accounts received mid-range scores from Botometer, indicating uncertainty about whether these accounts were bots or humans. We interpret these mid-scored accounts as potentially genuine human users exhibiting bot-like behavior. Such a prevalence of bot-like behavior could stem from issue polarization, which complicates the distinction between human and automated activity. Another possible explanation is the existence of coordinated activity. Most machine learning solutions designed to counter online abuse concentrate on identifying social bots, using techniques centered on individual account analysis. However, malicious groups might employ coordination strategies that seem benign when each account is assessed individually. The suspicious activities of such groups may only become evident when scrutinizing the network of interactions across multiple accounts (Pacheco et al., 2021; Zouzou & Varol, 2023). These issues limit our conclusions, especially regarding the mid-range scores. Future studies would do well to integrate manual annotation and network analysis to distinguish more clearly between genuine human users and automated accounts and to detect coordinated activity.
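As an illustration of the network-level view mentioned above, the sketch below flags pairs of accounts whose retweet behavior is nearly identical. It represents each account as a vector of retweeted tweet IDs and computes pairwise cosine similarity. This is a simplified, hypothetical take on co-retweet analysis in the spirit of coordination-detection work, not a reimplementation of Pacheco et al. (2021) or Zouzou and Varol (2023). The thresholds and function name are assumptions. The pairwise loop is quadratic, so large datasets would need sparse-matrix methods.

```python
import math
from collections import Counter, defaultdict
from itertools import combinations


def coretweet_pairs(retweets, min_sim=0.9, min_shared=2):
    """Account pairs with highly similar retweet vectors (possible coordination).

    retweets: iterable of (account, retweeted_tweet_id) pairs.
    Returns [(account_a, account_b, cosine)] for pairs that share at least
    `min_shared` retweeted tweets and have cosine similarity >= `min_sim`.
    """
    vectors = defaultdict(Counter)
    for account, tweet_id in retweets:
        vectors[account][tweet_id] += 1
    norms = {a: math.sqrt(sum(c * c for c in v.values())) for a, v in vectors.items()}
    pairs = []
    for a, b in combinations(sorted(vectors), 2):
        shared = vectors[a].keys() & vectors[b].keys()
        if len(shared) < min_shared:
            continue
        dot = sum(vectors[a][t] * vectors[b][t] for t in shared)
        sim = dot / (norms[a] * norms[b])
        if sim >= min_sim:
            pairs.append((a, b, sim))
    return pairs
```

Flagged pairs would still need manual inspection, because ordinary users who follow the same few popular accounts can also retweet very similar content.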

Finally, our findings are based exclusively on one social media platform. Although Twitter data may not accurately represent the entire population, they offer a substantial sample of individuals interested in the subject. Future studies can extend this research by considering other social media platforms, as Gruzd et al. (2023) did in comparing Facebook and YouTube content, and by incorporating additional types of data, such as surveys, to provide a more comprehensive understanding of the issue.

Conclusion

As is common in behavioral research, the literature on anti-vax campaigns and conspiracies predominantly revolves around WEIRD (Western, Educated, Industrialized, Rich, and Democratic) populations (Henrich et al., 2010). Although political ideology and vaccine attitudes are significantly correlated in most studies (Boberg et al., 2020; Shahrezaye et al., 2021), our findings highlight that this relationship may not hold universally and that anti-vax movements may have drivers beyond politics.

It is also crucial to recognize the global context of political polarization and its impact on public health issues, as exemplified by the unique case of Türkiye. Our study focuses on a scenario where, unlike in many other countries, the prevailing political dynamics do not conform to the expected patterns in vaccine debates. In Türkiye, the right-wing governing bloc, typically seen as a potential anti-vaccine proponent in other contexts, did not seek to politicize the vaccine issue for its own advantage. This situation presents a compelling example of how political leaders and their strategies can significantly influence the course of public health discussions and potentially other public emergencies and societal challenges.

Therefore, countries with high partisan polarization such as Türkiye pose a notable challenge to the current literature, calling for an understanding of the dimensions beyond politics that may determine vaccine hesitancy. Efforts to study political conversations in Türkiye can be further advanced by using domain-specific large language models (Najafi & Varol, 2023) and publicly accessible datasets (Najafi et al., 2022).

This study examined the political stance, language, and automation activity of anti- and pro-vaccine communities in the Turkish Twittersphere. To our knowledge, it is the first to investigate the diverse mechanisms underlying the online vaccine debate, and we hope it encourages further research that thoroughly explores online vaccine debates and their underlying motivations across diverse contexts.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: OV acknowledges support from the Science Academy, Türkiye, and TÜBİTAK (Grant #121C220). The authors thank Akin Unver and the participants of the Summer Institute in Computational Social Science, Istanbul, for their feedback, and Ezgi Akpinar for providing the Turkish LIWC lexicon.