Abstract

Recent right-wing extremist terrorists were active in online fringe communities connected to the alt-right movement. Although these are commonly considered as distinctly hateful, racist, and misogynistic, the prevalence of hate speech in these communities has not been comprehensively investigated yet, particularly regarding more implicit and covert forms of hate. This study exploratively investigates the extent, nature, and clusters of different forms of hate speech in political fringe communities on Reddit, 4chan, and 8chan. To do so, a manual quantitative content analysis of user comments (N = 6,000) was combined with an automated topic modeling approach. The findings of the study not only show that hate is prevalent in all three communities (24% of comments contained explicit or implicit hate speech), but also provide insights into common types of hate speech expression, targets, and differences between the studied communities.

On 15 March 2019, a right-wing extremist terrorist killed more than 50 people in mosques in Christchurch, New Zealand, and wounded numerous others while livestreaming his crimes on Facebook. Only 6 weeks later, on 27 April, another right-wing extremist attack occurred in a synagogue in Poway near San Diego, in which one person was killed and three more injured. The perpetrators were active in an online community within the imageboard 8chan, which is considered particularly hateful and rife with right-wing extremist, misanthropic, and White-supremacist ideas. Moreover, both the San Diego and Christchurch shooters used 8chan to post their manifestos, providing insights into their White nationalist hatred (Stewart, 2019). Following the attack in New Zealand, Internet service providers in Australia and New Zealand temporarily blocked access to 8chan and the similar, albeit less extreme, imageboard 4chan (Brodkin, 2019). After yet another shooting in El Paso was linked to activities on 8chan, the platform was removed from the Clearnet entirely, with one of 8chan’s network infrastructure providers citing the unique lawlessness of the site that “has contributed to multiple horrific tragedies” as the main reason for this decision (Prince, 2019).

Whether the perpetrators’ activities on 8chan and 4chan actually contributed to their radicalization or motivation can hardly be determined. However, especially the platforms’ politics boards (8chan/pol/ and 4chan/pol/, respectively) have repeatedly been linked to the so-called alt-right movement, “exhibiting characteristics of xenophobia, social conservatism, racism, and, generally speaking, hate” (Hine et al., 2017, p. 92; see also Hawley, 2017; Tuters & Hagen, 2020). 4chan/pol/, in particular, has attracted the broader public’s attention during Donald Trump’s 2016 presidential campaign, often being the birthplace of conservative or even outright hateful and racist memes that circulated during the campaign. In addition to the mentioned communities on 4chan and 8chan, the controversial subreddit “The_Donald” is often referenced as a popular and more “mainstreamy” outlet for alt-right ideas as well (e.g., Heikkilä, 2017).

Although these political fringe communities are considered as particularly hateful in the public debate, only few studies (Hine et al., 2017; Mittos, Zannettou, Blackburn, & De Cristofaro, 2019) have investigated these communities with regard to the extent of hate speech. Moreover, the mentioned studies are exclusively built on automated dictionary-based approaches focusing on explicit “hate terms,” thus being unable to account for more subtle or covert forms of hate. To better understand the different types of hate speech in these communities, it also seems advisable to cluster comments in which hate speech occurs.

Addressing these research gaps, we (a) provide a systematic investigation of the extent and nature of hate speech in alt-right fringe communities, (b) examine both explicit and implicit forms of hate speech, and (c) merge manual coding of hate speech with automated approaches. By combining a manual quantitative content analysis of user comments (N = 6,000) and unsupervised machine learning in the form of topic modeling, this study aims at understanding the extent and nature of different types of hate speech as well as the thematic clusters these occur in. We first investigate the extent and target groups of different forms of hate speech in the three mentioned alt-right fringe communities on Reddit (r/The_Donald), 4chan (4chan/pol/), and 8chan (8chan/pol/). Subsequently, by means of a topic modeling approach, the clusters in which hate speech occurs are analyzed in more detail.

Hate Speech in Online Environments

Hate speech was certainly not invented with the Internet. Being situated “in a complex nexus with freedom of expression, individual, group, and minority rights, as well as concepts of dignity, liberty, and equality” (Gagliardone, Gal, Alves, & Martínez, 2015, p. 10), it has been at the center of legislative discussion in many countries for many years. Hate speech is considered to be an elusive term, with extant definitions oscillating between strictly legal rationales and generic understandings that include almost all instances of incivility or expressions of anger (Gagliardone et al., 2015). For the context of this study, we deem both the content and the targets as crucial for conceptualizing hate speech. Accordingly, hate speech is defined here as the expression of “hatred or degrading attitudes toward a collective” (Hawdon, Oksanen, & Räsänen, 2017, p. 254), with people being devalued not based on individual traits, but on account of their race, ethnicity, religion, sexual orientation, or other group-defining characteristics (Hawdon et al., 2017; see also Kümpel & Rieger, 2019).

There are a number of factors—resulting from the overarching characteristics of online information environments—suggesting that hate speech is particularly problematic on the Internet. First, there is the problem of permanence (Gagliardone et al., 2015). Especially fringe communities are heavily centered on promoting users’ freedom of expression, making it unlikely that hate speech will be removed by moderators or platform operators. But even if hateful content is removed, it might have already been circulated to other platforms, or it could be reposted to the same site again shortly after deletion (Jardine, 2019). Second, the shareability and ease of disseminating content in online environments further facilitates the visibility of hate speech (Kümpel & Rieger, 2019). During the 2016 Trump campaign, hateful anti-immigration and anti-establishment memes were often spread beyond the borders of fringe communities, surfacing to mainstream social media and influencing discussions on these platforms (Heikkilä, 2017). Third, the (actual or perceived) anonymity in online environments can encourage people to “be more outrageous, obnoxious, or hateful in what they say” (Brown, 2018, p. 298), because they feel disinhibited and less accountable for their actions. Moreover, anonymity can also change the relative salience of one’s personal and social identity, thereby increasing conformity to perceived group norms (Reicher, Spears, & Postmes, 1995). Indeed, research has found that exposure to online comments with ethnic prejudices leads other users to post more prejudiced comments themselves (Hsueh, Yogeeswaran, & Malinen, 2015), suggesting that the communication behavior of others also influences one’s own behavior. 
Fourth, and closely related to anonymity, there is the problem of the full or partial invisibility of other users (Brown, 2018; Lapidot-Lefler & Barak, 2012): The absence of facial expressions and other visibility-based interpersonal communication cues makes hate speech appear less hurtful or damaging in an online setting, thus lowering inhibitions to discriminate against others. Last, one has to consider the community-building aspects that are particularly distinctive for online hate speech (Brown, 2018; McNamee, Peterson, & Peña, 2010). Not least in alt-right fringe communities, hate is often “meme-ified” and mixed with humor and domain-specific slang, creating a situation in which the use of hate speech can play a crucial role in strengthening bonds among members of the community and distinguishing one’s group from clueless outsiders (Tuters & Hagen, 2020). Taken together, the mentioned factors facilitate not only the creation and use of hate speech in online environments, but also its wider dissemination and visibility.

Implicit Forms of Hate Speech

While many types of online hate speech are relatively straightforward and “in your face” (Borgeson & Valeri, 2004), hate can also be expressed in a more implicit or covert form (see Ben-David & Matamoros-Fernández, 2016; Benikova, Wojatzki, & Zesch, 2018; ElSherief, Kulkarni, Nguyen, Wang, & Belding, 2018; Magu & Luo, 2018; Matamoros-Fernández, 2017), for example, by spreading negative stereotypes or strategically elevating one’s ingroup. Implicit hate speech shares characteristics with what Buyse (2014, p. 785) has labeled fear speech, which is “aimed at instilling (existential) fear of another group” by highlighting harmful actions the target group has allegedly engaged in or speculations about their goals to “take over and dominate in the future” (Saha, Mathew, Garimella, & Mukherjee, 2021, p. 1111). Indeed, one variety of implicit hate speech can be seen in the intentional spreading of “fake news,” in which deliberately false statements or conspiracy theories about social groups are circulated to marginalize them (Hajok & Selg, 2018). This could be observed in connection with the European migrant crisis, during which online disinformation often focused on the degradation of immigrants, for example, through associating them with crime and delinquency (Hajok & Selg, 2018; see also Humprecht, 2019).

Implicitness is a major problem for the automated detection of hate speech, as it “is invisible to automatic classifiers” (Benikova et al., 2018, p. 177). Using such implicit forms of hate speech is a common strategy to evade automatic detection systems and to cloak prejudices and resentments in “ordinary” statements (e.g., “My cleaning lady is really good, even though she is Turkish,” see Meibauer, 2013). Thus, implicit hate speech points to the importance of acknowledging the wider context of hate speech instead of just focusing on the occurrence of single (and often ambiguous) hate terms.

Extent of Hate Speech

Considering the mentioned problems with the (automated) detection of hate speech, it is hard to determine the overall prevalence of hate speech in online environments. To account for individual experiences, extant studies have often relied on surveys to estimate hate speech exposure. Across different populations around the globe, such self-reported exposure to online hate speech ranges from about 28% (New Zealanders 18+, see Pacheco & Melhuish, 2018), to 64% (13- to 17-year-old US Americans, see Common Sense, 2018), and up to 85% (14- to 24-year-old Germans, see Landesanstalt für Medien NRW, 2018). In studies focusing both on younger and older online users (Landesanstalt für Medien NRW, 2018; Pacheco & Melhuish, 2018), exposure to online hate was more commonly reported by younger age groups, which might be explained by different usage patterns and/or perceptual differences. However, while these survey figures suggest that many online users seem to have been exposed to hateful comments, they tell us only little about the overall amount of hate speech in online environments. In fact, even a single highly visible hate comment could be responsible for survey participants responding affirmatively to questions about their exposure to online hate. Thus, to determine the actual extent of hate speech, content analyses are needed—although the results are equally hard to generalize. Indeed, the amount of content labeled as hate speech seems to differ considerably, depending on the studied platforms and (sub-)communities, the topic of discussions, or the lexical resources and dictionaries used to determine what qualifies as hate speech (ElSherief et al., 2018; Hine et al., 2017; Meza, 2016). Considering our focus on alt-right fringe communities, we will thus aim our attention at the presumed and actual hatefulness of these discussion spaces.

The “Alt-Right” Movement and Fringe Communities

What Is the Alt-Right?

The alt-right (= abbreviated form of alternative right) is a rather loosely connected and largely online-based political movement, whose ideology centers around ideas of White supremacy, anti-establishmentarianism, and anti-immigration (see Hawley, 2017; Heikkilä, 2017; Nagle, 2017). Gaining momentum during Donald Trump’s 2016 presidential campaign, the alt-right “took an active role in cheerleading his candidacy and several of his controversial policy positions” (Forscher & Kteily, 2020, p. 90), particularly on the mentioned message boards on Reddit (r/The_Donald), 4chan, and 8chan (/pol/ on both platforms). Similar to other online communities, the alt-right uses a distinct verbal and visual language that is characterized by the use of memes, subcultural terms, and references to the wider web culture (Hawley, 2017; Tuters & Hagen, 2020; Wendling, 2018). Another common theme is “the cultivation of a position that sees white male identity as threatened” (Heikkilä, 2017, p. 4), which is connected both to strongly opposing policies related to “political correctness” (e.g., affirmative action) and to condemning social groups that are perceived to be profiting from these policies (Phillips & Yi, 2018). Openly expressing these ideas often culminates in the use of hate speech, particularly against people of color and women. However, while discussion spaces linked to the alt-right are routinely described as hateful, there is little published data on the quantitative amount of hate speech in these fringe communities.

Hate Speech in Alt-Right Fringe Communities

To our knowledge, empirical studies addressing the extent of hate speech in alt-right fringe communities have exclusively relied on automated dictionary-based approaches, estimating the amount of hate speech by identifying posts that contain hateful terms (Hine et al., 2017; Mittos et al., 2019). Focusing on 4chan/pol/, Hine and colleagues (2017) use the hatebase dictionary to assess the prevalence of hate speech in the “Politically Incorrect” board. They find that 12% of posts on 4chan/pol/ contain hateful terms, thus revealing a substantially higher share than the two examined “baseline” boards 4chan/sp/ (focusing on sports) with 6.3% and 4chan/int/ (focusing on international cultures/languages) with 7.3%. However, 4chan generally seems to be more hateful than other social media platforms: Analyzing a sample of Twitter posts for comparison, the authors find that only 2.2% of the analyzed tweets contained hateful terms. Looking at the most “popular” hate terms used in 4chan/pol/, it is also possible to draw cautious conclusions about the (main) target groups of hate speech. The hate terms appearing most—“nigger,” “faggot,” and “retard”—are indicative of racist, homophobic, and ableist sentiments and suggest that people of color, the lesbian, gay, bisexual, transgender and queer or questioning (LGBTQ) community, and people with disabilities might be recurrent victims of hate speech.

Utilizing a similar analytical approach, but exclusively focusing on discussions about genetic testing, Mittos and colleagues (2019) investigate both Reddit and 4chan/pol/ with regard to their levels of hate. For Reddit, their analysis shows that the most hateful subreddits alluding to the topic of genetic testing are associated with the alt-right (e.g., r/altright, r/TheDonald, r/DebateAltRight), with posts displaying “clear racist connotations, and of groups of users using genetic testing to push racist agendas” (Mittos et al., 2019, p. 9). These tendencies are even more amplified on 4chan/pol/, where discussions about genetic testing are routinely combined with content exhibiting racial and anti-Semitic hate speech. Reflecting the findings of Hine and colleagues (2017), racial and ethnic slurs are prevalent and illustrate the boards’ close association with White-supremacist ideologies.

While these studies offer some valuable insights into the hatefulness of alt-right fringe communities, the dictionary-based approaches are unable to account for more veiled and implicit forms of hate speech. Moreover, although the most “popular” terms hint at the targets of hate speech, a systematic investigation of the addressed social groups is missing. Based on the literature review and theoretical considerations, our study thus sought to answer three overarching research questions:

Research Question 1. What percentage of user comments in the three fringe communities contains explicit or implicit hate speech?

Research Question 2. (a) In which way is hate speech expressed and (b) against which persons/groups is it directed?

Research Question 3. What is the topical structure of the coded user comments?

Method

Our empirical analysis of alt-right fringe communities focuses on three discussion boards within the platforms Reddit (r/The_Donald), 4chan (4chan/pol/), and 8chan (8chan/pol/), thus spanning from central and highly used to more peripheral and less frequented communities. While Reddit, the self-proclaimed “front page of the Internet,” routinely ranks among the 20 most popular websites worldwide, 4chan and 8chan have (or had) considerably less reach. However, due to their connection with the perpetrators of Christchurch, Poway, and El Paso, 4chan and 8chan are nevertheless of high relevance for this investigation. All three platforms follow a similar structure and are divided into a number of different subforums (called “subreddits” on Reddit and “boards” on 4chan/8chan). While Reddit requires users to register to post or comment, both 4chan and 8chan do not have a registration system, thus allowing everyone to contribute anonymously. The specific discussion boards—r/The_Donald, 4chan/pol/, and 8chan/pol/—were chosen due to their association with alt-right ideas as well as their relative centrality within the three platforms. Moreover, all three boards have previously been discussed as important outlets of right-wing extremists’ online activities (Conway, Macnair, & Scrivens, 2019).

In the following sections, we will first describe the data collection process and then outline the two methodological/analytical approaches used in this study: (a) a manual quantitative content analysis of user comments in the three discussion boards and (b) an automated topic modeling approach. While 4chan and 8chan are indeed imageboards, (textual) comments play an important role on these platforms as well. On Reddit, pictures can easily be incorporated in the original post that constitutes the beginning of a thread, but comments are by default bound to text. Due to our two-pronged strategy, the nature of these communities, and to ensure comparability between the discussion boards, we focused our analyses on the textual content of comments and did not consider (audio-)visual materials such as images or videos. However, we refer to their importance in the context of hate speech in the discussion.

Data Collection

Since accessing and collecting content from the three discussion boards varies in complexity, we relied on different sampling strategies. Comments from r/The_Donald were obtained by querying the Pushshift Reddit data set (Baumgartner, Zannettou, Keegan, Squire, & Blackburn, 2020) via redditsearch.io. Between 21 April and 27 April 2019, we downloaded a total of 70,000 comments, of which 66,617 could be kept in the data set after removing duplicates and deleted/removed comments. Comments from 4chan/pol/ were obtained by using the independent archive page 4plebs.org and a web scraper. Between 14 April and 29 April 2019, a total of 16,000 comments were obtained, of which 15,407 remained after the cleaning process. Finally, comments from 8chan/pol/ were obtained by directly scraping the platform: All comments in threads that were active on 24 April 2019 were downloaded, resulting in a data set of 63,504 comments for this community. For the manual quantitative content analysis, 2,000 comments were randomly sampled from the data set of each of the three communities, thus leading to a combined sample size of 6,000 comments.
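The sampling step can be illustrated with a short sketch. It assumes simple random sampling without replacement within each community; the function name, the seed, and the placeholder comment IDs are hypothetical and only the data set sizes are taken from the description above.

```python
import random


def sample_per_community(comments_by_community, n_per_community, seed=0):
    """Draw a simple random sample (without replacement) from each community."""
    rng = random.Random(seed)  # fixed seed only to make the sketch reproducible
    sample = {}
    for community, comments in comments_by_community.items():
        if len(comments) < n_per_community:
            raise ValueError(f"not enough comments in {community}")
        sample[community] = rng.sample(comments, n_per_community)
    return sample


# Placeholder IDs standing in for the collected comments (sizes as reported above).
collected = {
    "r/The_Donald": [f"td_{i}" for i in range(66_617)],
    "4chan/pol/": [f"4c_{i}" for i in range(15_407)],
    "8chan/pol/": [f"8c_{i}" for i in range(63_504)],
}

drawn = sample_per_community(collected, n_per_community=2_000)
total = sum(len(comments) for comments in drawn.values())
```

Sampling the same number of comments per board keeps the three communities comparable in the manual coding, even though the underlying data sets differ considerably in size.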

Approach I: Manual Quantitative Content Analysis

As our first main category, we coded explicit hate speech in accordance with recurrent conceptualizations in the literature. Within this category, we defined insults (attacks on individuals/groups on the basis of their group-defining characteristics, e.g., Erjavec & Kovačič, 2012) as offensive, derogatory, or degrading expressions, including the use of ethnophaulisms (Kinney, 2008). Instead of coding insults in general, we distinguished between personal insults (i.e., attacks on a specific individual) and general insults (i.e., attacks on a collective), also coding the reference point of personal insults and the target of general insults. The specific reference points [(a) Ethnicity, (b) Religion, (c) Country of Origin, (d) Gender, (e) Gender Identity, (f) Sexual Orientation, (g) Disabilities, (h) Political Views/Attitudes] or targets [(a) Black People, (b) Muslims, (c) Jews, (d) LGBTQ, (e) Migrants, (f) People with Disabilities, (g) Social Elites/Media, (h) Political Opponents, (i) Latin Americans*, (j) Women, (k) Criminals*, (l) Asians] were compiled on the basis of research on frequently marginalized groups (Burn, Kadlec, & Rexer, 2005; Mondal, Silva, Correa, & Benevenuto, 2018), and inductively extended (targets marked with *) during the coding process. Furthermore, we coded violence threats as a form of explicit hate speech (Erjavec & Kovačič, 2012; Gagliardone et al., 2015), including both concrete threats of physical, psychological, or other types of violence and calls for violence to be inflicted on specific individuals or groups.

As our second main category, we coded implicit hate speech. To distinguish different subcategories of this type of hate speech, we relied more strongly on an explorative approach by focusing on communication forms that have been described in the literature as devices to cloak hate (see section “Implicit Forms of Hate Speech”). The first subcategory of implicit hate speech is labeled negative stereotyping and was coded when users expressed overly generalized and simplified beliefs about (negative) characteristics or behaviors of different target groups. The second subcategory—disinformation/conspiracy theories—reflects both “simple” disinformation and false statements about target groups and “advanced” conspiracy theories that represent target groups as maliciously working together toward greater ideological, political, or financial power (e.g., “the Jew media controls everything”). A third subcategory was labeled ingroup elevation and was coded when statements elevated or accentuated belonging to a certain (racial, demographic, etc.) group, oftentimes implicitly excluding and devaluing other groups. The last subcategory of implicit hate speech was labeled inhuman ideology. Here, it was coded whether a user comment supported or glorified hateful ideologies such as National Socialism or White supremacy, including the worshiping of prominent representatives of such ideologies.

In addition, a category spam was added to exclude comments containing irrelevant content such as random character combinations or advertisements. The entire coding scheme as well as an overview of the main content categories described in the previous paragraphs can be accessed via an open science framework (OSF) repository.

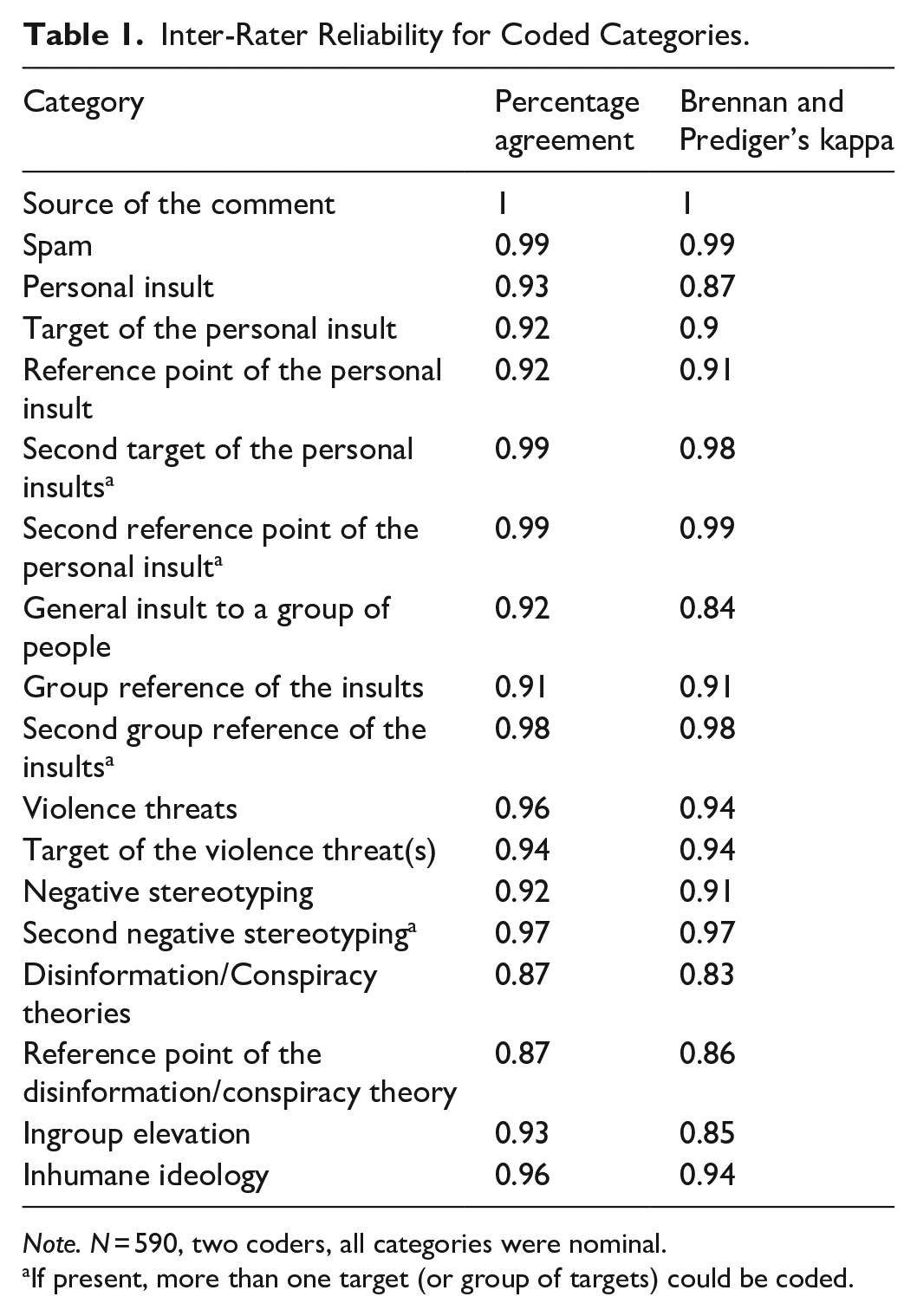

The manual quantitative content analysis was conducted by two independent coders. Both coders coded the same subsample of 10% from the full sample of comments to calculate inter-rater reliability with the help of the R package “tidycomm” (Unkel, 2021). Using both percent agreement and Brennan and Prediger’s Kappa, all reliability values were satisfactory (κ ⩾ 0.83, see also Table 1). Prior to the analyses, all comments coded as spam were removed, leading to a final sample size of 5,981 comments.

Table 1. Inter-Rater Reliability for Coded Categories.

Note. N = 590, two coders, all categories were nominal.

If present, more than one target (or group of targets) could be coded.
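For readers unfamiliar with the reliability coefficient, the following sketch shows how percent agreement and Brennan and Prediger’s Kappa can be computed for one nominal category. It is not the “tidycomm” implementation used in the study; the function names and the toy codings are assumptions made for illustration. The coefficient corrects the observed agreement for a chance agreement of 1/q, where q is the number of available categories.

```python
def percent_agreement(codes_a, codes_b):
    """Share of units on which both coders assigned the same code."""
    if len(codes_a) != len(codes_b) or not codes_a:
        raise ValueError("both coders must code the same non-empty set of units")
    matches = sum(a == b for a, b in zip(codes_a, codes_b))
    return matches / len(codes_a)


def brennan_prediger_kappa(codes_a, codes_b, n_categories):
    """Chance-corrected agreement with uniform chance agreement 1 / n_categories."""
    observed = percent_agreement(codes_a, codes_b)
    expected = 1 / n_categories
    return (observed - expected) / (1 - expected)


# Hypothetical codings of ten comments for a binary category (1 = present).
coder_a = [1, 0, 0, 1, 0, 0, 0, 1, 0, 0]
coder_b = [1, 0, 0, 1, 0, 0, 0, 0, 0, 0]

agreement = percent_agreement(coder_a, coder_b)
kappa = brennan_prediger_kappa(coder_a, coder_b, n_categories=2)
```

In this toy example the coders agree on nine of ten comments, so the agreement of 0.9 is corrected for a chance level of 0.5, giving a Kappa of 0.8.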

Approach II: Topic Modeling

Topic modeling is an unsupervised machine learning approach to identify topics within a collection of documents and to classify these documents into distinct topics. Günther and Domahidi (2017) generally describe a topic as “what is being talked/written about” (p. 3057). Each topic is thus represented by a cluster, and each cluster is assigned a set of words that are representative of the comments within the cluster. For our analysis, we first generated a topic model (TM1) for all 5,981 comments to gain an understanding of the topics within the entire data set. Combined with the manual coding, these results provide insights on which topics are more hateful than others. Second, another topic model (TM2) was created only for the comments identified as hateful (n = 1,438) to examine the clusters of the comments in which hate speech occurs. TM1 and TM2 were then compared by investigating the transitions between the models. In addition, TM2 was also combined with the manually coded data, allowing us to establish a connection between the cluster, type, and targets of hate speech.

CluWords, a state-of-the-art short-text topic modeling technique, was selected as the topic model algorithm (Viegas et al., 2019). More conventional techniques such as Latent Dirichlet Allocation (LDA) were not chosen because they rely on word co-occurrences and therefore do not perform well on shorter texts (Campbell, Hindle, & Stroulia, 2003; Cheng, Yan, Lan, & Guo, 2014; Quan, Kit, Ge, & Pan, 2015). CluWords overcomes this issue by combining non-probabilistic matrix factorization and pre-trained word embeddings (Viegas et al., 2019). Especially the latter allows enriching the comments with “syntactic and semantic information” (Viegas et al., 2019, p. 754). For this article, the fastText word vectors pre-trained on the English Common Crawl data set were used because they are trained on web data and thus provide an appropriate basis (Mikolov, Grave, Bojanowski, Puhrsch, & Joulin, 2019).
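The role of the word embeddings can be illustrated with a strongly simplified sketch. It only shows the general idea of enriching a short comment with semantically similar terms via thresholded cosine similarity; it is not the CluWords implementation, it omits the subsequent term weighting and matrix factorization, and the two-dimensional toy vectors, the threshold value, and all function names are assumptions standing in for the fastText vectors.

```python
import math


def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def similarity_matrix(vectors, alpha):
    """Keep similarities at or above the threshold alpha and zero out the rest."""
    sim = {}
    for term, vec_t in vectors.items():
        sim[term] = {}
        for other, vec_o in vectors.items():
            s = cosine(vec_t, vec_o)
            sim[term][other] = s if s >= alpha else 0.0
    return sim


def expand_counts(term_counts, sim):
    """Spread a comment's raw term counts over all sufficiently similar terms."""
    return {
        term: sum(count * sim[term][other] for other, count in term_counts.items())
        for term in sim
    }


# Toy embeddings (hypothetical): "tax" and "taxes" are close, "diet" is unrelated.
toy_vectors = {"tax": (1.0, 0.0), "taxes": (0.9, 0.1), "diet": (0.0, 1.0)}
sim = similarity_matrix(toy_vectors, alpha=0.4)

# A short comment that mentions only "tax" (twice).
expanded = expand_counts({"tax": 2}, sim)
```

After the expansion, the comment also carries weight for “taxes” although the word never occurs in it, while “diet” stays at zero. This is the property that makes embedding-based representations less dependent on literal word co-occurrence in short texts.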

One challenge of topic modeling is to find a meaningful number of clusters. Since topic modeling is an unsupervised learning approach, there is no single right solution. To cope with this problem, the following five criteria have been used to determine an appropriate number of clusters: (a) the same number of topics for TM1 and TM2, (b) a meaningful and manageable number of topics, (c) comprehensibility of the topics, (d) standard deviation of the topics’ sizes, and (e) (normalized) pointwise mutual information.
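Criterion (e) can be made concrete with a small sketch of normalized pointwise mutual information (NPMI) for the top words of a topic. The sketch assumes document-level co-occurrence counts and averages NPMI over all word pairs; the exact estimation used in the study is not specified here, and the function names, the handling of the degenerate cases, and the toy documents are assumptions.

```python
import math
from itertools import combinations


def npmi(word_a, word_b, documents):
    """NPMI of two words, based on co-occurrence within documents (token sets)."""
    n = len(documents)
    p_a = sum(word_a in doc for doc in documents) / n
    p_b = sum(word_b in doc for doc in documents) / n
    p_ab = sum(word_a in doc and word_b in doc for doc in documents) / n
    if p_ab == 0:
        return -1.0  # the words never co-occur
    if p_ab == 1:
        return 0.0  # degenerate case (log 1 = 0); treated as 0 here by convention
    pmi = math.log(p_ab / (p_a * p_b))
    return pmi / -math.log(p_ab)


def topic_coherence(top_words, documents):
    """Average NPMI over all pairs of a topic's top words."""
    pairs = list(combinations(top_words, 2))
    return sum(npmi(a, b, documents) for a, b in pairs) / len(pairs)


# Toy corpus (hypothetical): each document is a set of tokens.
docs = [
    {"tax", "money"},
    {"tax", "money"},
    {"diet", "meat"},
    {"diet", "meat"},
]

coherent = topic_coherence(["tax", "money"], docs)
incoherent = topic_coherence(["tax", "diet"], docs)
```

Values close to 1 indicate that a topic’s top words tend to appear together, whereas values close to -1 indicate words that rarely or never co-occur. Comparing the average coherence across candidate numbers of topics is one way to inform the choice alongside the more qualitative criteria.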

Results

Results of Manual Quantitative Content Analysis

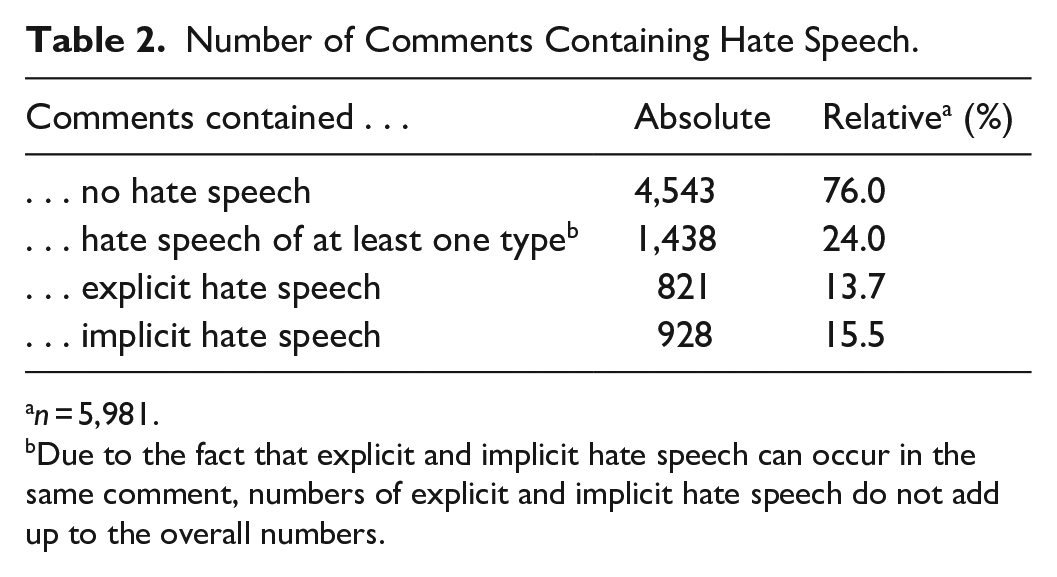

Addressing RQ1 (extent of explicit/implicit hate speech), we found that almost a quarter (24%, n = 1,438) of the analyzed 5,981 comments contained at least one instance of explicit or implicit hate speech (see Table 2). In 821 of the comments (13.7%), forms of explicit hate speech were identified (i.e., at least one of the categories personal insult, general insult, or violence threat was coded). Implicit hate speech (i.e., negative stereotyping, disinformation/conspiracy theories, ingroup elevation, and inhuman ideologies) occurred slightly more often and was observed in 928 comments (15.5%).

Table 2. Number of Comments Containing Hate Speech.

n = 5,981.

Due to the fact that explicit and implicit hate speech can occur in the same comment, numbers of explicit and implicit hate speech do not add up to the overall numbers.
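The overlap between explicit and implicit hate speech follows from the reported counts by inclusion-exclusion. The sketch below assumes that a comment counts as hateful exactly when at least one explicit or implicit category was coded; the variable names are illustrative and the derived overlap figures are arithmetic consequences of that assumption rather than values reported in the tables.

```python
# Counts reported for the final sample.
total_comments = 5_981
hateful = 1_438   # explicit or implicit hate speech
explicit = 821
implicit = 928

# Inclusion-exclusion: |E and I| = |E| + |I| - |E or I|
both = explicit + implicit - hateful
only_explicit = explicit - both
only_implicit = implicit - both


def share(count, base=total_comments):
    """Percentage of the base, rounded to one decimal place."""
    return round(100 * count / base, 1)


shares = {
    "hateful": share(hateful),
    "explicit": share(explicit),
    "implicit": share(implicit),
}
```

Under this assumption, 311 comments contain both explicit and implicit hate speech, which is why the two subtotals exceed the overall number of hateful comments.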

Focusing on RQ2a (forms of hate speech), general insults were the most common form of hate speech and were observed in 570 comments: they were included in almost every 10th comment of the entire sample (9.5%) and in more than one-third of all identified hateful comments (39.6%). Disinformation and conspiracy theories followed next and made up 31.8% of all comments with hate speech (n = 458). Within this category, conspiracy theories (n = 294) were observed almost twice as often as mere disinformation (n = 164). In over a quarter of all hateful comments (25.7%), inhuman ideologies were referenced or expressed (n = 369), with 10.8% relating to National Socialism and 14.9% to White-supremacist ideologies. Violence threats were observed in 221 comments (3.7% total, 15.4% of hateful comments), negative stereotyping in 192 comments (3.2% total, 13.4% of hateful comments), and ingroup elevation in 303 comments (5.1% total, 21.1% of hateful comments). Within our sample, personal insults emerged as the least common form of hate speech (n = 139), making up only 2.3% of all comments and 9.7% of all hateful comments.

Nevertheless, to answer RQ2b (reference points/targets of hate speech), we analyzed the reference points of these personal insults in more detail. Most personal insults attacked an individual’s sexual orientation (32.1%), their ethnicity (27%), their political attitude (10.9%), or referred to an actual or alleged disability (10.2%). Personal insults referring to one’s religion, country of origin, gender, or gender identity could only rarely be observed. For the categories general insults, violence threat, negative stereotyping, and disinformation/conspiracy theories, we further analyzed which groups were targeted with hateful sentiments (see Table 3). Jews were by far the most affected group and targets of explicit or implicit hate speech in 478 comments. When Jews were targeted, this happened most often in the context of disinformation/conspiracy theories and general insults. Black people were the second most targeted group in the sample (targeted in 277 comments), with attacks occurring primarily in the context of general insults. Other frequent targets were political opponents (targeted in 238 comments), Muslims (targeted in 148 comments), and the LGBTQ community (targeted in 127 comments).

Table 3. Targets of Hate Speech Across Different Types of Hate Speech.

LGBTQ: lesbian, gay, bisexual, transgender and queer or questioning.

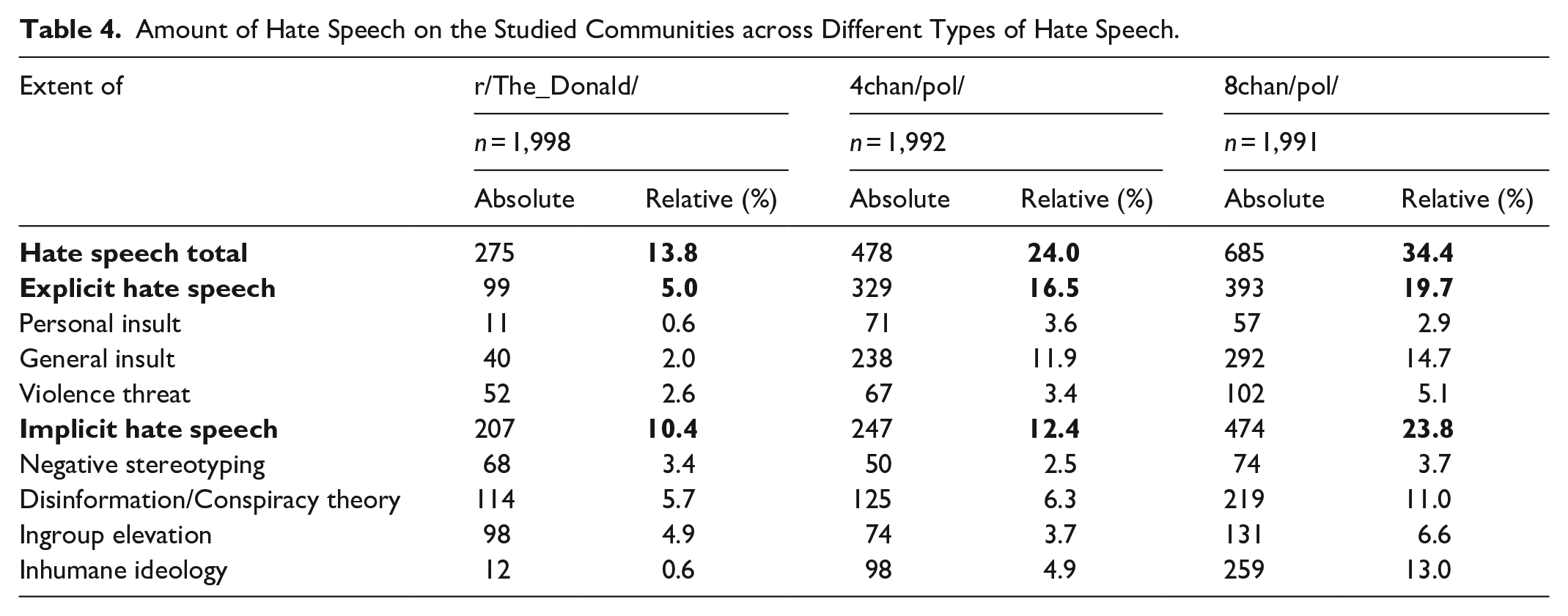

To identify differences between the three fringe communities, we also conducted the analyses separately for r/The_Donald, 4chan/pol/, and 8chan/pol/. Moving from the more “mainstreamy” r/The_Donald to the outermost 8chan/pol/, the amount of hate speech increases steadily: While 13.8% of all analyzed comments on r/The_Donald included at least one form of hate speech, we identified 24% of comments on 4chan/pol/ and even 34.4% of comments on 8chan/pol/ as containing hate speech. As can be inferred from Table 4, the amount of explicit and implicit hate speech also differed between the three communities: Particularly striking here is the low amount of explicit hate speech on r/The_Donald, which is mainly due to the fact that general insults are much less common than on 4chan/pol/ and 8chan/pol/. Looking more closely at implicit hate speech, we see that 8chan/pol/ emerged as the community with the highest share of such indirect, more veiled forms of hate speech, resulting mainly from the relatively high amount of comments featuring disinformation/conspiracy theories and inhuman ideologies.

Table 4. Amount of Hate Speech on the Studied Communities Across Different Types of Hate Speech.

Results of Topic Modeling

To answer RQ3 (topical structure of the coded comments), two topic models (TM1 and TM2) were generated and combined with the results of the manual quantitative content analysis. TM1 focuses on the entire data set, while TM2 is restricted to the comments that were identified as containing hate speech. Table 5 shows the topics of TM1, their relative distribution between the sources, the absolute number of comments, and the proportion of hate speech. After the evaluation of different numbers of topics, 12 topics turned out to be most appropriate. Overall, the topics can be considered meaningful, and their content meets the expectations for these fringe communities (e.g., focus on political affairs, conspiracy theories, anti-Semitism). A2–A8 have a thematic focus, while A9, A11, and A12 bundle foreign-language comments. As A9–A12 are relatively small compared to the total number of comments (and consequently less meaningful), they will be excluded from the following analyses.

Table 5. Topics From TM1 and Their Frequency Distribution.

In general, each topic is roughly equally distributed across the three sources, with some noticeable exceptions: 47.3% and 45.5% of the comments from the political topics A3 and A4, respectively, originate from r/The_Donald. Topic A2, consisting exclusively of swear words, can mostly be allocated to 4chan/pol/ (40.4%) and 8chan/pol/ (32.0%), which is in line with the results from the manual content analysis. The topic with a focus on anti-Semitism and Islam (A6) also exhibits an unequal distribution: r/The_Donald’s share is only 22.4%, while 4chan/pol/’s share is 30.8% and 8chan/pol/’s is 46.9%. In light of the observed hatefulness of 4chan/pol/ and 8chan/pol/, it is remarkable that both are the main origin of the identified topic focusing on nutrition (A5), which might be explained by their broader scope. Focusing on the occurrence of hate speech, the topics A2 (32.7%), A6 (59.4%), and A7 (32.0%) have to be highlighted due to their higher-than-average share of hate. This is not surprising, as the keywords from A2 only contain swear words, A6 covers (anti-)Semitic and Islamic comments, and A7 refers to foreign countries, which are often the target of hate due to the alt-right’s nationalist orientation.

To better understand the clusters/topics in which hate speech occurs, a second topic model (TM2) was generated based on the 1,438 hateful comments only (see Tables 6 and 7). Both models show a similar topical structure, and some topics from TM1 are reflected in TM2 as well: A1 is similar to H3 (generic topic), A2 to H1 (swear words), A6 to H2 (largely anti-Semitic), and A7 to H4 (foreign affairs). In contrast, other topics emerged as more fine-grained when only considering hate speech–related comments (TM2). A good example is topic A3, which focuses on the government, politics, and society. Hateful comments from this topic can be found, among others, in the topics about US Democrats and Republicans (H5), political ideology (H9), and finances and taxes (H10).

Table 6. Topics of TM2 Combined With Forms of Hate Speech From Manual Coding.

Table 7. Topics of TM2 Combined With Targets of Hate Speech From Manual Coding.

LGBTQ: lesbian, gay, bisexual, transgender and queer or questioning.

Tables 6 and 7 depict the topics of TM2 in combination with the manual analysis to get a deeper understanding of thematic clusters in which the different types of hate speech occur: The first one distinguishes between the different forms of explicit and implicit hate speech, the second one between the different targets of hate speech. Concerning the forms of hate speech, the comments from the topic with swear words (H1) tend to be explicit hate speech, particularly general insults (238 out of 398). In contrast, all other topics contain more implicit hate speech, a difference that should not be surprising given the nature of the topics. What is interesting is the difference between the two (anti-)religious topics H2 ([anti-]Semitism) and H7 ([anti-]Islam). While the first one contains many explicit general insults (138 out of 265), the second one has a stronger focus on implicit hate speech, in particular on disinformation (39 out of 80) and negative stereotyping (25 out of 80). Beyond that, H4 and H5 have to be mentioned. H4, the topic about foreign affairs, has its maximum in the category inhuman ideologies (51 out of 96). The topic about US Democrats and Republicans (H5) exhibits a relatively large number of comments featuring ingroup elevation (51 out of 86) and disinformation (40 out of 86).
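To make the reported counts easier to compare across topics of different sizes, they can be converted into within-topic shares. The sketch below uses only the counts quoted in the text; the category labels are shortened for readability.

```python
# (category count, topic total) pairs as quoted in the text for TM2.
reported = {
    ("H1", "general insults"): (238, 398),
    ("H2", "general insults"): (138, 265),
    ("H7", "disinformation"): (39, 80),
    ("H7", "negative stereotyping"): (25, 80),
    ("H4", "inhuman ideologies"): (51, 96),
    ("H5", "ingroup elevation"): (51, 86),
    ("H5", "disinformation"): (40, 86),
}

# Within-topic share of comments that carry the given form of hate speech.
shares = {key: count / total for key, (count, total) in reported.items()}

# List the shares from largest to smallest.
for (topic, form), share in sorted(shares.items(), key=lambda kv: -kv[1]):
    print(f"{topic} {form}: {share:.1%}")
```

Note that the two H5 counts add up to more than the topic total (51 + 40 > 86), so at least some comments must carry more than one code, and shares within a topic need not sum to 100%.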

Concerning the targets of hate speech, the automatically generated topics are in line with the manual coding, as shown in Table 7. The (anti-)Semitic and (anti-)Islamic topics (H2 and H7) have their maximum in the respective target groups (230 out of 265; 61 out of 80). H5, the topic about US Democrats and Republicans, mainly contains comments targeting political opponents (56 out of 86). The two more generic topics (H1 and H3) target a wider range of groups, and their distribution is in line with the overall distribution of all topics.

Discussion

Building on ongoing public debates about alt-right fringe communities—that have been described as “the home of some of the most vitriolic content on the Internet” (Stewart, 2019)—this study investigates whether these public perceptions withstand empirical scrutiny. Focusing on three central alt-right fringe communities on Reddit (r/The_Donald), 4chan (4chan/pol/), and 8chan (8chan/pol/), we provide a systematic investigation of the extent and nature of both explicit and implicit hate speech in these communities. To do so, we combine a manual quantitative content analysis of user comments (N = 6,000) with an automated topic modeling approach that offers additional insights into the clusters in which hate speech occurs.

The most obvious finding to emerge from our analysis is that hate speech is prevalent in all three studied communities: In almost a quarter of the sample (24%), at least one instance of explicit or implicit hate speech could be observed. Reflecting results from an automated dictionary-based approach by Hine and colleagues (2017), who identified 12% of comments on 4chan/pol/ to contain (explicitly) hateful terms, we found that 13.7% of all analyzed comments featured explicit hate speech. However, our manual quantitative content analysis allowed us to also examine the extent of more veiled, indirect forms of hate speech, which were found in 15.5% of all comments. Differences between platforms are in line with the expectations one might have when moving from the more moderate to the more extreme communities: r/The_Donald featured the lowest amount of hate speech, followed by 4chan/pol/ and 8chan/pol/, suggesting that the “fringier” communities are distinctly more hateful.

Looking more closely at hate speech expression and common targets of hate speech, the results show that general insults of groups, referencing or spreading disinformation/conspiracy theories, and the expression or glorification of inhuman ideologies such as National Socialism or White supremacy occurred most frequently. The high incidence of general insults might partly result from including ethnophaulisms and other derogatory terms such as “newfag” and “oldfag” that are regularly used on 4chan and 8chan to refer to new versus experienced users. The observed prevalence of disinformation and conspiracy theories might thus be even more alarming than the use of “plain” insults.

With regard to the social groups affected by hate speech in alt-right fringe communities, our analysis shows that Jews were targeted most often, followed by Black people and political opponents. While Jews were also frequent targets of general insults, they were most often referenced in the context of disinformation and conspiracy theories, which chimes in with the observed extent of National Socialist and White-supremacist ideologies in the studied communities. Political opponents are most often referenced within disinformation and conspiracy theories as well, thus reflecting the communities’ close connection to populist attitudes that are associated with the demonization of institutions and political others (see Fawzi, 2019).

The topic models generated on the basis of the sampled user comments are in line with the results of the manual quantitative content analysis and provide additional insights into discussion topics that are likely to feature hate speech. They reflect the extent of (group-related) insults, anti-Semitic and anti-Islamic sentiments, and the strong nationalist orientation of the studied communities. Furthermore, the analysis shows that hate speech—although this might come as no surprise considering our focus on political fringe communities—often occurs in discussions about the government, the (US) political system, religious and political ideologies, or foreign affairs. Subsequent (computational) analyses could take these insights as a starting point to use specific contexts (= topics) for hate speech detection and artificial intelligence (AI) training sets.

Turning to potential directions for future studies, it should be noted that hate and antidemocratic content is not only conveyed through text: In an analysis of German hate memes, Schmitt and colleagues (2020) found that memes often display symbols, persons, or slogans known from National Socialism and the Nazi regime. Relatedly, Askanius (2021) traced an adaptation of stylistic strategies and visual aesthetics of the alt-right in the online communication of a Swedish militant neo-Nazi organization. Considering “that the visual form is increasingly used for strategically masking bigoted and problematic arguments and messages” (Lobinger, Krämer, Venema, & Benecchi, 2020, p. 347), and that images and videos tend to develop more virality than mere text (Ling et al., 2021), future studies should focus more strongly on such visual hate speech, which would also more adequately reflect the communication routines of the studied alt-right fringe communities.

Under the guise of “insider jokes,” humor, or memes, it is possible that hate speech is not recognized as such or is perceived as less harmful. Oftentimes, it cannot be judged as unequivocally criminal and is thus not deleted by platforms. Content that, due to this “milder” perception, also finds favor in groups that do not in principle share the hostile ideas behind it is thus increasingly becoming the norm (Fang & Woodhouse, 2017). Accordingly, it can be assumed that the frequent confrontation with hate speech is loosening the boundaries of what can be said and thought, even among initially uninvolved Internet users. This mainstreaming process is described, for example, by Whitney Phillips (2015), who notes the historical transition of hateful, racist memes from fringe communities on the Internet to an increasingly broader public. Sanchez (2020), therefore, warns against a normalization of the “dark humor” that occurs in viral hate memes and calls for critical consideration and research of a possible desensitization to hate and incitement as a consequence. This study adds to this body of literature by providing first evidence that implicit hate speech is as prevalent as explicit hate speech and should thus be considered when analyzing both the extent and the potential harm of online hate. In addition, future studies should emphasize the long-term perspective and potential dangers of this development in which mainstreaming would contribute to hate becoming more and more “normal.”

This work has limitations that warrant discussion. First, due to difficulties with the data collection, the initial number of comments on the analyzed communities varied, with 4chan/pol/ having a considerably smaller base of comments to sample from than r/The_Donald and 8chan/pol/. Moreover, all comments were scraped in April 2019, which might have influenced the results due to specific (political) topics being more or less obtrusive during that time period, possibly also influencing the general amount of hate speech. Second, it should be noted that we did not explicitly exclude hate terms that are part of typical communication norms within the studied communities. Terms such as the mentioned “newfag” were coded as hate speech although they may simply reflect 4chan jargon and may not be used with malicious intentions. Nevertheless, we intentionally decided to code them as hate speech, as even “normalized” or unintended hate speech can have negative effects (e.g., Burn et al., 2005). Third, our methodology and analysis were focused on textual hate speech, which is why we are unable to account for the amount of hate speech that is transmitted via shared pictures, (visual) memes, or videos. As we have outlined above, it is nevertheless an important endeavor to include the analysis of visual hate speech, for which the results of our study might provide a fruitful starting point.

Notwithstanding its limitations, this study provides a first systematic investigation of the extent and nature of hate speech in alt-right fringe communities and shows how widespread verbal hate is on these discussion boards. Further research is needed to confirm and validate our findings, explore the effects of distinct forms of explicit and implicit hate speech on users, and assess the risks of virtual hate turning into real-life violence.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.