Abstract

Scholars often blame the occurrence of aggressive behavior in online discussions on the anonymity of the Internet; however, even on today’s less anonymous platforms, such as social networking sites, users write plenty of aggressive comments, which can elicit a whole wave of negative remarks. Drawing on the social identity and deindividuation effects (SIDE) model, this research conducts a laboratory experiment with a 2 (anonymity vs. no anonymity) × 2 (aggressive norm vs. non-aggressive norm) between-subjects design in order to disentangle the effects of anonymity, social group norms, and their interactions on aggressive language use in online comments. Results reveal that participants used more aggressive expressions in their comments when peer comments on a blog included aggressive wording (i.e., the social group norm was aggressive). Anonymity had no direct effect; however, we found a tendency that users’ conformity to an aggressive social norm of commenting is stronger in an anonymous environment.

Every day, millions of Internet users post aggressive online comments on participatory social media platforms, such as Facebook, YouTube, or weblogs, in order to voice public criticism, personal indignation, or to simply let off steam. In many cases, these comments include crude remarks and address companies, brands, or public figures like politicians or pop stars (Pfeffer, Zorbach, & Carley, 2013). The forms of aggression are manifold and vary from expressions of disgust and contempt, to threats, slander, insults, and hatred. If the aggression is met with approval by other users, it can escalate and elicit an “online firestorm,” which is described as a wave of negative and angry online comments in social media (Pfeffer et al., 2013).

Online aggression is often attributed to the anonymity of the Internet. This traces back to the theory of deindividuation (Festinger, Pepitone, & Newcomb, 1952), which states that people lose their inner constraints and feel less self-aware, inhibited, and responsible for their behavior when they are anonymous. Whenever users leave comments on a website, they are not physically present. Many platforms even enable users to comment without revealing personal information. In this anonymous online context, scholars have noticed that people experience greater feelings of disinhibition, which make them “say or do things that they would not say or do face-to-face” (Suler, 2004, p. 321). This was shown in early experimental studies, which found that people interacted in a less inhibited and more aggressive manner in anonymous computer settings than face-to-face (Siegel, Dubrovsky, Kiesler, & McGuire, 1986). Furthermore, more recent research found more aggressive language in anonymous computer-mediated communication (CMC) than in identifiable computer-mediated settings, for example, those in which communicators reveal their names and personal information or use webcams (cf. Lapidot-Lefler & Barak, 2012).

However, users experience verbal aggression and uncivil behavior even on less anonymous web platforms like social networking sites (cf. Rainie, Lenhart, & Smith, 2012), where most people are registered under their real name and share personal information. Since verbal aggression has great potential to escalate and set the tone in online discussions (Sood, Churchill, & Antin, 2012), the social influence exerted by other online users and the power of social norms might be another cause of aggressive language use in online communication. According to social influence theories, individuals affect each other’s opinions and behaviors in social contexts and tend to conform to prevalent social norms of a common social group, especially when they identify with the group (cf. Turner, 1982). In addition, research based on the SIDE model (Reicher, Spears, & Postmes, 1995) has found that (visual) anonymity of a group in CMC can foster group identification and conformity to social group norms (Postmes, Spears, Sakhel, & de Groot, 2001).

Given the multitude of aggressive online comments in social media, a key research aim of the present study is to identify the factors and to disentangle the mechanisms that lead users to comment online in an uncivil way. On the one hand, based on deindividuation theory, we would argue that anonymity is a key influencing factor for verbal aggression online. On the other hand, theories on social influence and social identity posit that people are affected by the social behavior and social norms of others, which leads us to the assumption that users can adopt an aggressive tone in their own comments when other users comment aggressively. Moreover, we expect that users are most prone to use aggressive language in their comments when the situation combines both anonymity and an aggressive commenting norm among the group of commenters.

Against this background, we conducted a laboratory experiment with a 2 × 2 between-subjects design in order to elucidate the effects of anonymity (commenting without registration vs. commenting with the private Facebook account), the effects of social group norms (represented by aggressive vs. non-aggressive peer comments), and their interaction effects on participants’ aggressive language use when they write their own comment on a weblog.

Verbal Aggression Online

Verbal aggression refers to any behavior that uses words rather than physical attacks to do harm, such as insults, defamation, or threats. It describes a destructive form of communication, which can take place face-to-face as well as computer-mediated. In CMC, verbal aggression can be open, for example, when an aggressor directly attacks another person via chat or any other kind of message, or covert, when the aggression is directed at an absent target (e.g., a hostile statement about a third party). The latter is sometimes also referred to as venting (Kayany, 1998).

Analyses of aggressive language use in online communication date back to the 1980s and early studies on the phenomenon of flaming in CMC, which has been defined as “hostile and aggressive interactions via text-based computer mediated-communication” (O’Sullivan & Flanagin, 2003, p. 69). More recent research, especially in the political context, investigates verbal aggression in online discussions under the name of incivility, which refers to “features of discussion that convey an unnecessarily disrespectful tone toward the discussion forum, its participants, or its topics” (Coe, Kenski, & Rains, 2014, p. 660). Although the definitions and the operationalization of aggressive verbal behavior in online interactions vary among studies, the criteria which are most common in the literature are uncivil language and attacks (Blom, Carpenter, Bowe, & Lange, 2014). In this regard, researchers have developed several category schemes in order to assess and explore the different expressions of aggression. Categories include, for example, “hostile words and expressions, swear words and derogatory names, direct and indirect threats, use of letters, symbols and punctuation marks conveying hostility or aggression, and insulting, sarcastic, teasing, negative, or cynical comments” (Lapidot-Lefler & Barak, 2012, p. 437).

In this study, we analyze the aggressive language use in participants’ comments including open and covert forms of verbal aggression directed at other users, persons who are not present in the discussion, or toward topics and ideas.

Anonymity, Deindividuation, and Aggression

According to Suler (2004), the anonymity experienced online may foster aggressive behavior in online communication because it makes people feel less inhibited in cyberspace than offline (online disinhibition effect). This feeling of disinhibition may lead to “benign” or “toxic” effects in CMC, with toxic consequences, such as uncivil language, harsh criticism, threats, or hate speech in online comments (Suler, 2004). From a psychological point of view, people in a deindividuated state feel less inhibited and less responsible for their behaviors, and, as a result, act more antisocially and aggressively (Festinger et al., 1952). Thus, the process of deindividuation describes how individuals lose their identities and, by that, control over their behaviors. This theory provides a useful framework for studying aggressive behavior in online communication because anonymity, reduced self-regulation, and reduced self-awareness—important conditions for deindividuation—are also common in CMC (Kiesler, Siegel, & McGuire, 1984).

And indeed, empirical research has shown that anonymity seems to affect the use of incivility in online commenting spaces: Comparing user comments from online newspaper forums that allow anonymity with those on news sites that require users to register by real name or to log in with their Facebook account, Santana (2014) found more incivility in anonymous comments. Similarly, Rowe (2015) conducted a comparative content analysis of user comments posted on the Washington Post website or the Washington Post Facebook page and found that the amount of incivility was higher in website comments than in Facebook comments, especially with regard to interpersonal aggression.

The current study builds on these findings and extends existing research by examining the influence of anonymity on aggressive language use in online comments in an experimentally controlled approach. More specifically, we analyze comments on a weblog, which were either posted anonymously without registration or via Facebook plug-in by using the personal Facebook account. In this respect, we hypothesize as follows:

H1. Language is more aggressive in anonymous comments than in identifiable comments.

Social Identity, Social Norms, and Social Influence

According to the social identity perspective, a social identity is defined by a person’s knowledge of being part of an emotionally relevant social group (Tajfel, 1972). This stems from the assumption that an individual’s self-concept includes not only his or her personal identity (which describes a person’s unique idiosyncratic personal attributes), but also several social identities (one for each group he or she belongs to; Tajfel, 1972). People assimilate themselves to their in-group (Hogg, 2001) and perceive greater consonance with other in-group members’ opinions and attitudes. If a social identity becomes salient, that is, a person perceives himself or herself more in terms of group membership rather than as a unique individual, this process is described as depersonalization. In contrast to the process of deindividuation, which describes the loss of identity, depersonalization is defined as a shift from the personal to the social identity. In this state, group members are perceived as highly similar to each other and have the greatest influence on the individual.

Concerning social influence processes in CMC, it is important to note that group-based influence can also affect individuals when the group is not physically present. Indeed, numerous studies in this context have shown that other users’ comments in online environments have substantial influence on readers’ attitudes and opinions (cf. Lee & Jang, 2010; Walther, DeAndrea, Kim, & Anthony, 2010). Based on social identity theory, this influence should be even stronger when a shared social identity is salient (e.g., when individuals visit a common interest website or a joint online community and interact with like-minded others about shared topics of interest). However, little is known about how language use in comments (or rather the social norm regarding which tone is appropriate and typically used in an online setting) affects users on a behavioral level in their own commenting style. First experimental findings show that participants who were exposed to other users’ comments with a high degree of thoughtfulness wrote longer comments, took more time for writing a comment, and mentioned more topic-related aspects than participants who were exposed to less thoughtful comments (Sukumaran, Vezich, McHugh, & Nass, 2011). Against the background of these findings and based on the theoretical considerations of the social identity theory, we argue that peer comments on a participatory website can be perceived as normative guidelines for typical social behavior in this context and affect readers’ own commenting behavior:

H2. Participants’ comments contain more aggressive expressions when the social group norm is aggressive (peer comments display aggressive wording) than when the social group norm is not aggressive.

In CMC, the SIDE model (Reicher et al., 1995; Spears, Postmes, Lea, & Watt, 2001) states that (visual) anonymity in online interaction does not necessarily lead to a loss of identity and anti-normative behavior. On the contrary, SIDE researchers assume that anonymity reduces the salience of inter-individual differences and fosters a salient social identity, which intensifies conformity to a prevalent social norm. In this regard, a recent experimental study provides first indications that exposure to aggressive user comments and an anonymous setting have a combined influence on readers to use aggressive expressions in their own comments (Zimmerman & Ybarra, 2016). Based on these findings and in line with the argument of the SIDE model, we argue that a salient social norm for aggressive behavior (salient due to the presence of other users’ aggressive comments) affects aggressive commenting behavior, especially in an anonymous environment.

H3. In anonymous conditions, the effect of the social group norm on aggressive language use is stronger than in identifiable conditions.

Moreover, we argue that identification with the commenters on the blog affects conformity to the prevailing social group norm as people who strongly identify with a group perceive themselves more as a part of the group and committed to its norms and standards. Therefore, we hypothesize as follows:

H4. Greater identification with commenters leads to more aggressive language use when the social norm is aggressive, but to less aggressive language use when the social norm is not aggressive.

In addition to that, we assume that the perceived anonymity as well as the perceived similarity of the group of commenters, that is, perceptions that are related to less perceived inter-individual differences among group members and linked to the state of depersonalization, reinforce conformity to the prevailing social group norm. Thus, we hypothesize as follows:

H5. (a) Greater perceived anonymity as well as (b) greater perceived similarity of commenters leads to more aggressive language use when the social norm is aggressive, but to less aggressive language use when the social norm is not aggressive.

Method

To test the hypotheses, we conducted a laboratory experiment with a 2 (aggressive vs. non-aggressive social group norm) × 2 (anonymity vs. non-anonymity) between-subjects design. Participants were exposed to a blog entry and four ostensible user comments. In order to manipulate the aggressive versus non-aggressive norm, these comments contained either aggressive or non-aggressive wording. The sense of anonymity in the commenting setting was manipulated by two measures: On one hand, we presented the user comments either with or without identifiable author information (name, profile picture); on the other hand, we manipulated the commenting function on the blog so that comments could be posted either without registration or by using a Facebook account.

In order to create a shared social identity context for our study participants, we recruited German soccer fans as participants and exposed them to a blog entry about a common interest topic (the [political] discussion on prohibiting the standing in German soccer stadiums for safety reasons). Moreover, they were told that the four user comments were written by other fans. The topic was chosen as the tradition of standing is deeply entrenched among European soccer fans and the discussion about the prohibition of standing terraces in soccer stadiums was a topical and hotly debated subject in Germany (due to violent incidents and riots) at the time the study was conducted.

Sample

Eighty-four soccer fans (22 females) took part in the experiment. Based on a manipulation check, 16 were excluded because they did not report the correct level of anonymity (anonymous or identifiable) of the user comments presented as stimuli. A further six participants, who had been exposed to non-aggressive comments, were excluded because they stated that the comments contained one to four or more aggressive expressions (chosen from the categories: none/1-4/5-8/9-12/more than 12). The remaining sample comprised 62 participants (18 female, 44 male) aged 20–42 (M = 23.35, SD = 3.26), who were almost equally distributed across conditions (anonymous/aggressive: n = 19; anonymous/non-aggressive: n = 13; identifiable/aggressive: n = 15; identifiable/non-aggressive: n = 15). Participants had at least a university entrance level of education; most of them were students (91.9%). Furthermore, 44 participants stated that they visit soccer stadiums at least “now and then,” 10 said “often,” and 11 said “on a regular basis.” Only three participants stated that they had “never” been to a stadium. The topic (violence in soccer stadiums and prohibition of the standing areas) was rated as moderately relevant on a scale from 1 = not at all to 7 = very much (M = 4.55, SD = 1.65). Regarding familiarity with online comments, participants indicated (on a scale from 1 = never to 5 = very often) that they sometimes read (M = 3.46, SD = 0.77) and seldom write online comments (M = 2.26, SD = 0.70). Moreover, the disposition toward verbal aggressiveness (assessed by the subscale “verbal aggression” of Buss and Perry’s (1992) aggression questionnaire from 1 = not at all to 7 = absolutely) was on a medium level (M = 3.40, SD = 0.63, α = .70).

Participants were recruited on the campus of a large German university and via postings in online forums and student Facebook groups of the university. The study was approved by the local ethics committee.

Stimulus Material and Pilot Study

Weblog

A fictitious weblog for soccer fans was created and manipulated in four versions with regard to the experimental conditions. The main part of the blog was identical across conditions: All participants saw a page containing a blog entry citing a news article about violence in soccer stadiums. This article was created for the study and included statements of the German Secretary of the Interior and the chief of the police union, who threatened to prohibit the standing in German soccer stadiums (which is for many soccer fans the preferred way to watch a game and has a long-lasting tradition in Germany). Underneath the article, a comment section was included, which was different across conditions and comprised four user comments as well as an input field for new comments.

Comments

We created 12 comments (six aggressive and six non-aggressive) arguing against the prohibition of standing in soccer stadiums. The contents of the aggressive and non-aggressive comments were matched, but in the aggressive comments, we used offensive and vulgar words, sarcasm, insults, slander, and punctuation, such as capitalization or many exclamation marks and question marks to express the arguments. To ensure that the aggressive comments were perceived as more aggressive than the comments with non-aggressive wording, that the arguments were convincing, and that the stance (against the prohibition of standing) was perceived correctly for all comments, we conducted a pretest with 20 additional participants. Results revealed that, in line with the manipulation, all comments were perceived as contra the prohibition of standing. Perceived argument strength varied from medium to high (M = 6.25–10.65, SD = 1.99–3.41). Based on this, we chose the four most convincing and plausible comments for each condition for the main study. A repeated-measures ANOVA revealed a significant difference, showing that aggressive comments were perceived to include more insults and slander, F(1, 19) = 1430.78, p < .001, ηp² = .99; offensive and vulgar language, F(1, 19) = 328.44, p < .001, ηp² = .95; and hostility, F(1, 19) = 402.65, p < .001, ηp² = .96, than the non-aggressive comments (see Table 1).

Table 1. Means (and Standard Deviations) for Perception of Stance, Aggressive Language, and Argument Strength of Pretested Comments.

Selected for the main study.

Manipulation of Anonymity

Besides the level of aggression, we manipulated comments’ level of anonymity in two ways: First, anonymous comments were displayed with the guest account “Anonymous” without revealing names or information about the commenters (Figure 1), while identifiable comments were displayed with names and profile pictures, like comments on Facebook (fictional persons with typical German names were used; Figure 2). Second, the commenting functionality was varied: In the conditions which displayed anonymous comments, we used the default WordPress function, which was adjusted so that participants were able to post comments with the guest account “Anonymous” without entering a name. In the conditions which displayed identifiable user comments, we implemented a social plug-in for Facebook comments (Facebook Developers, 2016). Thus, commenting was only possible via logging in to a Facebook account. Comments posted via this plug-in appeared on the blog with the name and profile picture of the user’s Facebook account.

Figure 1. Screenshot of the anonymous comments section on the weblog.

Figure 2. Screenshot of the identifiable comments section on the weblog.

Measures

Aggressive Language Use

In order to measure the aggressive language use in participants’ comments, a content analysis was conducted based on deductively derived categories from the literature. Each comment was coded, blind to the experimental conditions, with regard to the following four categories: offensive/crude wording (e.g., “shit,” “witless”), disparaging remarks (e.g., “antisocial people,” “this suit”), sarcasm (e.g., “what’s the point of watching a soccer match, if it’s like in an opera house?”), and punctuation (e.g., “!?,” “NOT GOOD”). Categories were not mutually exclusive; comments could comprise more than one manifestation of aggression. For each comment, we counted how many expressions for each category were included and generated a sum score for the overall number of aggressive expressions. Twenty-five percent of the comments were coded by a second independent rater. Percent agreement was calculated and revealed an agreement of 81%, which can be interpreted as acceptable (Neuendorf, 2002). Additionally, Krippendorff’s alpha was calculated as a more conservative index that accounts for agreement expected by chance (α = .703). The number of aggressive expressions per comment ranged from 0 to 13; about half of the comments (n = 29) contained at least one aggressive expression (see Table 2 for means and standard deviations).
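The two simplest quantities in this coding procedure, the per-comment sum score and the percent agreement between raters, can be illustrated with a minimal Python sketch. The function names, the category labels used as dictionary keys, and the example codes are hypothetical; the sketch also assumes that agreement is counted per coded unit, which the text does not specify, and it does not reproduce Krippendorff’s alpha.

```python
def aggression_sum_score(category_counts):
    """Sum the counts of aggressive expressions across the coding categories."""
    return sum(category_counts.values())


def percent_agreement(codes_rater_1, codes_rater_2):
    """Share of coded units on which two raters assigned exactly the same code."""
    if len(codes_rater_1) != len(codes_rater_2) or not codes_rater_1:
        raise ValueError("Both raters must code the same non-empty set of units.")
    matches = sum(a == b for a, b in zip(codes_rater_1, codes_rater_2))
    return matches / len(codes_rater_1)


# Hypothetical comment: counts per category (illustrative values only).
example_comment = {"offensive": 2, "disparaging": 1, "sarcasm": 0, "punctuation": 3}
total_expressions = aggression_sum_score(example_comment)

# Hypothetical codes from two raters for four units; they disagree on one unit.
agreement = percent_agreement([0, 2, 1, 0], [0, 2, 3, 0])
```

Percent agreement does not correct for chance agreement, which is why a chance-corrected index is reported alongside it.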

Table 2. Means (and Standard Deviations) for the Number of Words and Number of Aggressive Expressions in Participants’ Comments.

Identification

Participants’ identification with weblog commenters was measured using six items previously applied in SIDE research (cf. Doosje, Ellemers, & Spears, 1995; Postmes et al., 2001). The items were adjusted to the weblog scenario and rated on a 7-point scale with higher scores indicating greater identification. Sample items included, “It was easy for me to identify with the persons who had commented on the article” and “I had the feeling that I have a lot in common with the persons who had commented on the article” (α = .93, M = 4.03, SD = 1.38).

Perceived Anonymity and Similarity Among Group Members

We measured participants’ perceived anonymity as well as their perceived similarity of the group of people who commented on the blog as indicators for perceptions linked to depersonalization. Perceived anonymity was measured by three items (“The other persons who had commented on the article have been anonymous to me,” “I was not able to get an idea of the other persons who had commented on the article,” and “I was able to identify the other persons who had commented on the article” [reverse coded], α = .49, M = 4.94, SD = 1.36) and perceived similarity by two items (“The other persons who had commented on the article were similar to each other” and “the other persons who had commented on the article were all members of the same group,” α = .55, M = 4.81, SD = 1.19).

Attitude

Before and after the interaction on the weblog, we measured participants’ attitude toward the prohibition of standing in soccer stadiums among some filler items on other means to gain control over the violence in soccer stadiums. The three items were rated on a 10-point scale (1 = do not agree at all, 10 = strongly agree). Factor analysis revealed reliable factors for the pre and post measurement (pre: α = .79; post: α = .90), which explained a considerable amount of variance (pre: 23.31%, post: 29.99%). Descriptive values show that participants were clearly set against the prohibition of standing areas (pre: M = 3.76, SD = 2.41; post: M = 3.26, SD = 2.38).

Credibility of Commenters and Argument Strength

Perceived credibility of commenters was measured by means of the two subscales on trustworthiness and expertise by McCroskey and Teven (1999). Each subscale comprises six bipolar semantic differentials, such as “dishonest/honest” or “not intelligent/intelligent,” which were rated on a 7-point scale (α = .82, M = 4.63, SD = 0.67). Furthermore, participants rated the comments’ argument strength by indicating on two bipolar items on 7-point scales how persuasive and how reasonable they were (α = .83, M = 4.78, SD = 1.16).

Procedure

Participants completed a short pre-questionnaire asking about their attitude toward the issue of violence in soccer stadiums. Then, the weblog was presented and participants were instructed to read the article and the comments and to post a comment of their own on the page. There was no time limit. Afterward, participants filled out a post-questionnaire, which measured the dependent variables described above as well as individual characteristics, such as socio-demographics (gender, age, level of education). Participants were debriefed and had the opportunity to take part in a raffle for one of two coupons for a large e-commerce site (each €25). Moreover, the comment created on the weblog was saved in a separate document for the content analysis and deleted from the blog page in the presence of the participant.

Results

Descriptive analyses of participants’ comments reveal that the number of words per comment ranged from 11 to 414 (see Table 2 for means and standard deviations). Moreover, we conducted preliminary analyses with the socio-demographic variables gender and age in order to determine their potential influence on participants’ aggressive language use in online comments: In this regard, a t-test revealed no significant differences between female and male participants (t = −0.68, p = .499, d = .19). Furthermore, there was no significant correlation between age and aggressive language use (r = .006, p = .962). Therefore, we refrained from including these variables as covariates in the following analyses.

Language Use in Online Comments

To analyze effects of anonymity (H1), social group norm (H2), and their interaction (H3) on aggressive language use in online comments, we had to deal with heterogeneity of variance in our dependent variable, the number of aggressive expressions. To address this issue, a Scheirer–Ray–Hare test, which is a conservative non-parametric equivalent of a two-way ANOVA, was conducted as described by Dytham (2011, Chapter 7). This analysis is based on ranked data and uses the cumulative distribution function of chi-square to test for significance. Results reveal a significant effect of the group norm (H = 6.24, SS = 3,060.96, df = 1, p = .012), but no significant effect of anonymity on the number of aggressive expressions in participants’ comments. Thus, H1, which predicts that the language is more aggressive in anonymous than in identifiable comments, has to be rejected. Concerning the significant influence of the group norm, descriptive values show that participants who were exposed to aggressive comments (M = 1.5, SD = 2.45) used significantly more aggressive expressions than participants who saw comments with non-aggressive wording (M = 0.36, SD = 0.56). Thus, H2, which predicts that participants’ comments contain more aggressive expressions in a setting with an aggressive norm, is supported. Furthermore, our analysis reveals that the interaction effect of group norm and anonymity leans toward significance (on the 10% significance level: H = 3.43, SS = 1,682.92, df = 1, p = .064). Results show that participants who were exposed to the aggressive norm used more aggressive expressions when the anonymous WordPress account was installed on the blog than when comments were posted via Facebook plug-in (see Table 2). However, the effect is only significant on the 10% significance level; thus, H3 is partly supported.
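The logic of the Scheirer–Ray–Hare test can be sketched in a few lines of Python. This is a simplified illustration and not the procedure used in the study: it assumes a balanced 2 × 2 design (equal cell sizes, so that the interaction sum of squares can be obtained by subtraction), two-level factors (df = 1 for every effect), and toy data. All function and variable names are hypothetical. Each H statistic is the effect's sum of squares on the ranks divided by the total mean square of the ranks, and its p value comes from the chi-square distribution.

```python
import math
from collections import defaultdict


def average_ranks(values):
    """Rank values from 1 to N; tied values receive the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    start = 0
    while start < len(order):
        end = start
        while end + 1 < len(order) and values[order[end + 1]] == values[order[start]]:
            end += 1
        mean_rank = (start + end) / 2 + 1  # sorted positions are 0-based
        for pos in range(start, end + 1):
            ranks[order[pos]] = mean_rank
        start = end + 1
    return ranks


def between_groups_ss(ranks, labels):
    """Sum of squares of group mean ranks around the grand mean rank."""
    grand_mean = sum(ranks) / len(ranks)
    groups = defaultdict(list)
    for rank, label in zip(ranks, labels):
        groups[label].append(rank)
    return sum(len(g) * (sum(g) / len(g) - grand_mean) ** 2 for g in groups.values())


def chi2_sf_df1(h):
    """Upper-tail probability of a chi-square variable with df = 1."""
    return math.erfc(math.sqrt(max(h, 0.0) / 2.0))


def scheirer_ray_hare_2x2(values, factor_a, factor_b):
    """Return {effect: (H, p)} for a balanced design with two-level factors."""
    ranks = average_ranks(values)
    n = len(ranks)
    grand_mean = sum(ranks) / n
    ms_total = sum((r - grand_mean) ** 2 for r in ranks) / (n - 1)
    ss_a = between_groups_ss(ranks, factor_a)
    ss_b = between_groups_ss(ranks, factor_b)
    ss_cells = between_groups_ss(ranks, list(zip(factor_a, factor_b)))
    ss_ab = ss_cells - ss_a - ss_b  # valid by subtraction only when cells are balanced
    results = {}
    for name, ss in (("A", ss_a), ("B", ss_b), ("A:B", ss_ab)):
        h = max(ss / ms_total, 0.0)  # guard against tiny negative rounding error
        results[name] = (h, chi2_sf_df1(h))
    return results


# Toy data: two observations per cell (illustrative values only).
toy_values = [1, 2, 3, 4, 5, 6, 7, 8]
toy_anonymity = ["anonymous"] * 4 + ["identifiable"] * 4
toy_norm = ["aggressive", "aggressive", "non-aggressive", "non-aggressive"] * 2
toy_results = scheirer_ray_hare_2x2(toy_values, toy_anonymity, toy_norm)
```

Applied to the reported statistics, `chi2_sf_df1(6.24)` and `chi2_sf_df1(3.43)` give approximately .012 and .064, in line with the p values stated for the group norm and the interaction. The study's cells were not perfectly balanced, so its sums of squares cannot be reproduced by this simplified version.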

To test H4, which predicts that identification with the group moderates the effect of the group norm on aggressive language use, a moderated hierarchical regression analysis was calculated. The predictors were group norm, identification with the group of commenters, and the interaction of group norm and identification. The final model reveals that the group norm (β = .305, p = .023, R² = .092) is a significant predictor for the number of aggressive expressions (which has already been shown in the analysis above). However, neither identification nor the interaction explained further variance. Thus, H4 has to be rejected.

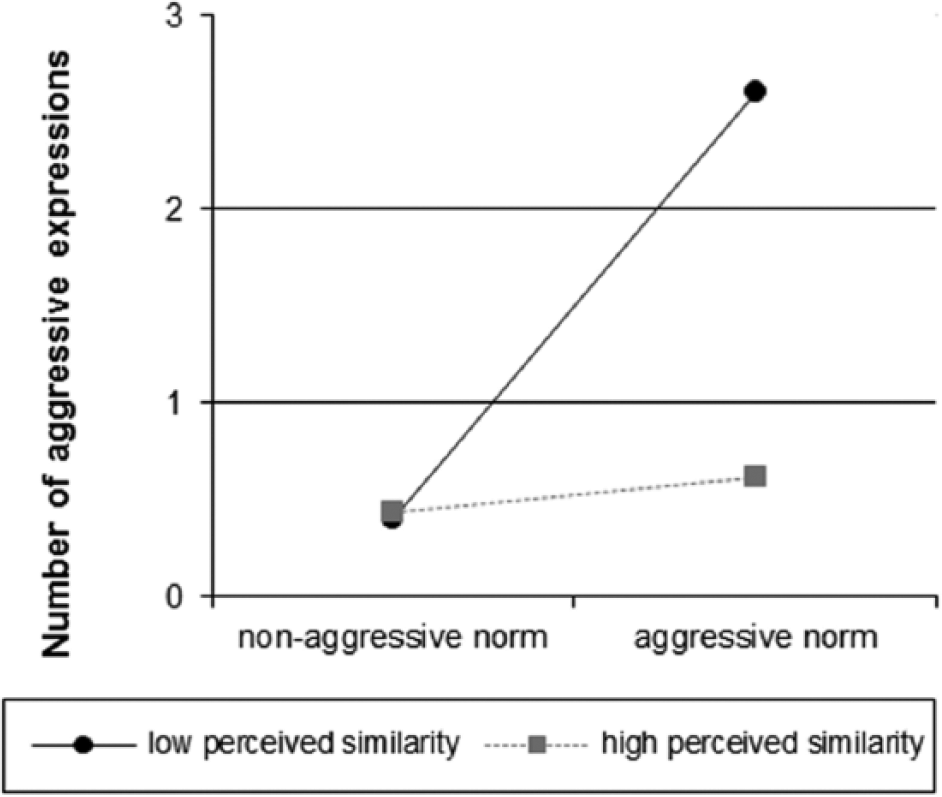

To test whether the group norm has a greater effect on aggressive language use when the commenters on the blog are perceived as highly anonymous (H5a) or highly similar (H5b), we calculated two moderated hierarchical regression analyses. The first analysis (for H5a) included the predictors group norm, perceived anonymity, and their interaction term. Results reveal a significant effect of the group norm (β = .274, p = .029) and a significant effect of the interaction (β = .249, p = .046) on aggressive language use in the final model (R² = .163). To investigate this interaction, we conducted simple slope analyses (Aiken & West, 1991), which showed that participants who reported higher perceived anonymity of commenters used more aggressive expressions in conditions with an aggressive group norm compared with a non-aggressive group norm (b = 2.02, standard error [SE] = 0.67, t = 3.00, p = .004). The difference was not significant for participants who reported lower perceived anonymity of commenters (Figure 3). This finding supports H5a.

Figure 3. Interaction of group norm and perceived anonymity of commenters.

The second regression analysis (for H5b), including the predictors group norm, perceived similarity of commenters, and their interaction term, revealed significant effects of all three predictors on aggressive language use in the final model (R² = .261): group norm (β = .309, p = .008), perceived similarity (β = −.254, p = .042), and the interaction (β = −.248, p = .046). However, the direction of the effect of perceived similarity was contrary to expectations: The less similar the commenters were perceived to be, the more aggressive expressions were used by participants. Furthermore, simple slope analyses revealed that participants who reported lower perceived similarity used more aggressive expressions in conditions with the aggressive group norm compared with conditions with the non-aggressive group norm (b = 2.19, SE = 0.65, t = 3.39, p = .001). There was no difference between conditions when perceived similarity of commenters was high (Figure 4). Since the perceived similarity of commenters was negatively related to aggressive language use and influenced the effect of the group norm on aggressive language use in a direction opposed to what was expected, H5b has to be rejected.

Figure 4. Interaction of group norm and perceived similarity of commenters.

Additional Results: Word Count, Attitude, and Evaluation of Comments/Commenters

Since the number of words per comment varied widely between conditions, we additionally tested whether our manipulations affected how much participants wrote in their comments. Because the assumption of homogeneity of variance was violated for the number of words, a Scheirer–Ray–Hare test was conducted, which revealed a significant effect of anonymity (H = 5.89, SS = 1,930.14, df = 1, p = .002) as well as of the group norm (H = 11.66, SS = 3,818.83, df = 1, p < .001) but no significant interaction effect.² Participants in the anonymous conditions as well as participants in the aggressive norm conditions wrote longer comments than their counterparts (see Table 2).
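For readers unfamiliar with the Scheirer–Ray–Hare test, the following minimal sketch shows the usual computation: the observations are rank-transformed, the sums of squares of a two-way ANOVA are computed on the ranks, and each H is the effect's SS divided by the total mean square of the ranks. This matches the reported values, for which SS / H is roughly 328 for both effects. The sketch assumes a balanced 2 × 2 design, so every effect has df = 1 and the interaction SS can be obtained by subtraction; the source does not say which software was used or how unequal cell sizes were handled. All function names are hypothetical.

```python
import math
from collections import defaultdict


def average_ranks(values):
    """Rank values from 1 to N, giving tied values the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1  # mean of the 1-based positions i+1 .. j+1
        for k in range(i, j + 1):
            ranks[order[k]] = avg
        i = j + 1
    return ranks


def between_ss(ranks, labels, grand):
    """Between-groups sum of squares of the ranks for one grouping."""
    groups = defaultdict(list)
    for r, g in zip(ranks, labels):
        groups[g].append(r)
    return sum(len(rs) * (sum(rs) / len(rs) - grand) ** 2 for rs in groups.values())


def scheirer_ray_hare(y, factor_a, factor_b):
    """Return {effect: (H, p)} for a balanced design with two levels per factor."""
    ranks = average_ranks(y)
    n = len(ranks)
    grand = sum(ranks) / n
    ms_total = sum((r - grand) ** 2 for r in ranks) / (n - 1)
    ss_a = between_ss(ranks, factor_a, grand)
    ss_b = between_ss(ranks, factor_b, grand)
    # Valid only for balanced cells: interaction SS = cell SS minus main effects.
    ss_ab = between_ss(ranks, list(zip(factor_a, factor_b)), grand) - ss_a - ss_b
    result = {}
    for name, ss in (("A", ss_a), ("B", ss_b), ("AxB", ss_ab)):
        h = max(ss, 0.0) / ms_total
        # Upper tail of the chi-square distribution with df = 1.
        p = math.erfc(math.sqrt(h / 2))
        result[name] = (h, p)
    return result


if __name__ == "__main__":
    # Hypothetical word counts, two per cell; not data from the study.
    words = [12, 30, 25, 41, 18, 35, 44, 60]
    anonymity = [0, 0, 0, 0, 1, 1, 1, 1]
    norm = [0, 0, 1, 1, 0, 0, 1, 1]
    for effect, (h, p) in scheirer_ray_hare(words, anonymity, norm).items():
        print(f"{effect}: H = {h:.2f}, p = {p:.3f}")
```

With more than two levels per factor or with unequal cell sizes, the degrees of freedom and the sums of squares would need to be handled differently, so this sketch should not be reused beyond the 2 × 2 balanced case.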

Concerning the influence of the comments on participants’ attitudes, a repeated-measures ANOVA revealed that participants’ attitudes changed in the direction advocated in the comments displayed on the blog: Participants were more opposed to the prohibition of standing, F(1, 58) = 7.71, p = .007, ηp² = .12, after they had read the comments. There was, however, no difference between the conditions with regard to attitude change.
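As a reading aid, the partial eta squared values reported alongside the F tests can be recovered from the F statistic and its degrees of freedom using the standard identity below. The worked line uses only the values reported for the attitude change; the symbols df_1 (effect) and df_2 (error) are notation introduced here, not the authors'.

```latex
\eta_p^2 \;=\; \frac{SS_{\text{effect}}}{SS_{\text{effect}} + SS_{\text{error}}}
        \;=\; \frac{F \cdot df_1}{F \cdot df_1 + df_2},
\qquad
\eta_p^2 \;=\; \frac{7.71 \cdot 1}{7.71 \cdot 1 + 58} \;=\; \frac{7.71}{65.71} \;\approx\; .12
```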

To gain further insight into (a) how strong participants perceived the arguments of the displayed comments to be and (b) how credible they judged the commenters to be, a MANOVA was conducted. The analysis revealed significant multivariate main effects of the group norm (Wilks’s λ = .88), F(2, 57) = 3.92, p = .025, ηp² = .12, and of anonymity (Wilks’s λ = .84), F(2, 57) = 5.64, p = .006, ηp² = .17, but no multivariate interaction effect. Tests of between-participants effects revealed a significant effect of group norm, F(1, 58) = 7.09, p = .010, ηp² = .11, and a marginally significant effect of anonymity, F(1, 58) = 3.98, p = .051, ηp² = .06, on comments’ argument strength. Arguments were perceived as weaker in comments containing aggressive expressions (M = 4.43, SD = 1.19) than in comments that did not contain aggressive expressions (M = 5.21, SD = 0.98). Moreover, arguments displayed in anonymous comments (M = 4.48, SD = 1.14) were perceived as weaker than arguments in identifiable comments (M = 5.10, SD = 1.11). A similar pattern was found for the evaluation of commenters’ credibility. Commenters were evaluated as significantly less credible when comments included aggressive expressions (M = 4.44, SD = 0.75) than when they did not (M = 4.85, SD = 0.48), F(1, 58) = 5.77, p = .020, ηp² = .09. Furthermore, anonymous commenters (M = 4.36, SD = 0.68) were perceived as significantly less credible than identifiable commenters (M = 4.92, SD = 0.52), F(1, 58) = 11.45, p = .001, ηp² = .17.

Discussion

This study aimed to investigate whether the use of aggressive expressions in online comments is affected by the anonymity of the environment, other users’ aggressive commenting behavior, or a combination of both. Moreover, we analyzed the role of identification and depersonalization in the social influence process. Our results reveal that anonymity (on its own) did not affect the use of aggressive expressions in online comments: There was no difference between participants who had commented with the anonymous WordPress guest account and participants who had used their Facebook account. Based on deindividuation theory (Festinger et al., 1952) and research on incivility in online discussions (Santana, 2014), we had expected anonymity to be a driving factor for verbal aggression in online comments. The method of this study could be one reason for not finding any effects of anonymity because, unlike most studies in the field of online aggression, we used a controlled experimental design and did not analyze existing data from web forums or online discussion groups. Anonymity might have been reduced by the laboratory setting and, thus, did not affect language use. Moreover, the setting itself (participants’ knowledge of taking part in a study) could have inhibited participants’ use of aggressive language and limited the variance in the data. However, another explanation is that public venting in asynchronous online comments on social media platforms might follow a different pattern than forms of direct verbal aggression toward other users, such as flaming. If aggression is directed at a third party, such as in an “online firestorm,” anonymity might not be the key factor for aggressive expressions of outrage.

Results concerning the influence of a prevalent norm of commenting revealed that participants were influenced by the tone of other users (ostensible other soccer fans) and used significantly more aggressive expressions when the group norm was aggressive (comments displayed aggressive wording) than when the norm was not aggressive (comments displayed non-aggressive wording). This finding emphasizes the substantial influence of descriptive social norms derived from other users’ comments on participatory websites in affecting the language used when writing a comment. Thus, our results show that online comments not only influence viewers’ opinions and attitudes, as shown in multiple studies (e.g., Lee & Jang, 2010; Walther et al., 2010), but also affect behaviors.

In summary, we found a significant main effect of the group norm, but no (direct) effect of anonymity on aggressive language use in online comments. Moreover, results show that anonymity interacted with the group norm and indirectly affected aggressive language use, although only at the 10% level of significance: Participants exposed to an aggressive norm used more aggressive expressions when they were anonymous. This interaction is in line with research reporting combined effects of anonymity and exposure to aggressive models (Zimmerman & Ybarra, 2016). However, while the authors argue that their “results suggest that social modeling moderated the effects of anonymity” (p. 190), our findings rather show that the aggressive behavior of others has a greater effect on commenting behavior than anonymity in the online setting. Furthermore, the marginally significant interaction effect found in our data suggests a tendency for users’ conformity to an aggressive social norm of commenting to be stronger in an anonymous environment, which is in line with the SIDE model.

With regard to the influence of socio-demographic factors, our analyses revealed that neither age nor gender affected participants’ aggressive language use. The finding that male and female participants used a similar number of aggressive expressions in their comments might be surprising considering prior work on gender differences in linguistic online behavior. Research shows, for example, that males engage more in flaming than females (Alonzo & Aiken, 2004). However, effects of gender on aggressive communication behavior in online settings have not been observed consistently (e.g., Huffaker & Calvert, 2005). In addition, the aggressive language use analyzed in this study cannot be equated with flaming since we also captured more covert and indirect forms of aggressive venting and incivility for which gender might not be a key predictor. Testing this, however, was not the focus of this study and has to be investigated in future work. Moreover, drawing on the SIDE model, we would argue that gender-specific commenting behavior could be expected if gender, as a social category, is made explicit and salient in an online environment. In this study, however, we focused on the category of soccer fans, which might have overridden other social identity cues based on socio-demographic characteristics such as gender.

Against the background of the SIDE model and in order to extend current research, we analyzed the effects of identification and depersonalization variables in the social influence process. According to Turner’s (1982) concept of referent informational influence, identification is a key factor for social influence processes, and empirical research has emphasized its importance in influencing attitudes in social media settings (Walther et al., 2010). In this study, however, identification with the commenters did not affect language use. This suggests that identification might be essential with regard to attitudes but not for adopting a behavioral social norm regarding the use of language. Concerning the perceived anonymity and similarity of the commenters, results show that both variables interacted with the group norm and indirectly affected aggressive language use, but in opposite directions: While participants used more aggressive language in the aggressive norm condition compared with the non-aggressive norm condition when perceived anonymity was high (i.e., the group was perceived as an anonymous union), there was no difference when perceived similarity was high (i.e., the group was perceived as a homogeneous union); instead, when perceived similarity was low, participants used more aggressive expressions in aggressive norm conditions. Based on the social identity approach, in which depersonalization is described as an identity shift from the personal level to a group level of identity due to reduced perception of inter-individual differences, and the SIDE model, which argues that (visual) anonymity of group members is a key factor for the process of depersonalization (Spears et al., 2001), we expected that both the perception of anonymity and the perception of homogeneity of the group of commenters would foster depersonalization and social influence. Our results, however, show that perceived anonymity and perceived homogeneity of group members seem to be independent constructs, which have different effects in social influence processes. In this study, high perceived anonymity but low perceived similarity of the group increased the effect of an aggressive group norm on aggressive language use.

With regard to the length of the comments, our additional analysis revealed significant effects of anonymity and of the group norm. Comments written in the anonymous conditions were significantly longer than comments written in the identifiable conditions. This finding is in line with research postulating disinhibiting effects of anonymity, which lead to increased willingness to express opinions and disclose information (Haines, Hough, Cao, & Haines, 2014). However, another explanation for the differences in comment length might be related to the different input forms: The space for Facebook comments is typically smaller than the standard input field on WordPress blogs. Concerning the group norm, results showed that participants used more words in their comments when an aggressive norm was salient. An explanation for this might be that expressions of aggression simply need more space.

In addition to the influence of peer comments on language use, our results emphasize the persuasive power of online comments on attitudes, as shown in other research (Lee & Jang, 2010; Walther et al., 2010). Before reading the comments of ostensible other soccer fans, participants already held a negative attitude toward the prohibition of standing in soccer stadiums, and this opposition was significantly strengthened by the comments. The effect was consistent across all conditions, which suggests that persuasive effects of online comments regarding attitudes are not affected by anonymity or aggressive language. However, the perception of the displayed comments’ argument quality as well as the evaluation of commenters’ credibility was significantly decreased by anonymity as well as by the aggressive group norm.

Limitations

The study was conducted in a laboratory using a forced exposure design. Participants were faced with a (fictitious) soccer weblog and were asked to comment on the article. Although the four presented user comments do not represent the richness of online comments typically found on participatory websites, we tried to simulate the typical online situation as realistically as possible, for example, by using authentic stimulus material (comments included original statements from online comments of soccer fans). Moreover, we recruited a specific group of participants (soccer fans), for whom the topic was relevant, in order to increase a shared social identity. Note, however, that our sample was homogeneous regarding socio-demographics (consisting of highly educated young adults), which can be seen as a limitation. We admit that our experimental approach limits the external validity of the study; however, we decided to use this method as it enables us to investigate causal relations by experimentally manipulating specific conditions while holding all other variables constant.

Concerning the coding of aggressive expressions, we found a relatively low level of aggression and coded for mild forms of aggression (e.g., insults were not worse than “idiot”). Moreover, the comments were analyzed with regard to four categories, and all categories were weighted equally.

Theoretical and Practical Implications

In this work, the SIDE model was used as a framework to analyze conformity effects on participatory websites with regard to language use in online comments. Results emphasize a substantial influence of peer comments not only on opinions but also on behavior, such as the use of aggressive expressions. Based on SIDE research, we expected that anonymity would foster identification processes and conformity to group norms. This was, however, not the case. Moreover, our results suggest that perceived anonymity and perceived similarity of group members have different effects on the influence of a social group norm and on normative behavior. Further research is needed to examine these effects in more detail. The SIDE model was originally developed to explain group processes and social influence in CMC, such as group discussions via chat. With the rise of Web 2.0, SIDE was adapted to the context of social media. However, research on online social influence in participatory environments, such as blogs or social networking sites, has just begun. Here, researchers have primarily focused on persuasive effects of user comments and shown that user-generated content has great influence in affecting and changing readers’ attitudes and opinions (cf. Lee & Jang, 2010; Walther et al., 2010). Thus, further studies are needed to examine SIDE effects in social media applications. More insight is needed especially with regard to normative effects on language use, since the effects of peer comments on behavioral outcomes might be different from the effects on attitudes.

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.