Abstract

Facebook facilitates more extensive dialogue between citizens and politicians. However, communicating via Facebook has also put pressure on political actors to administer and moderate online debates in order to deal with uncivil comments. Based on a platform analysis of Facebook’s comment moderation functions and interviews with the communication advisors of eight political parties, this study explored how political actors conduct comment moderation. The findings indicate that these actors acknowledge being responsible for moderating debates. Since the comment section cannot be turned off on Facebook pages, moderators can choose to delete or hide comments, and they tend to hide them in order to avoid escalating conflicts. The hide function makes a comment invisible to other participants in the comment section, but the hidden text remains visible to the commenter and their network. Thus, users are unaware that they have been moderated. In this paper, we argue that hiding problematic speech without users’ awareness has serious ramifications for public debates, and we examine the ethical challenges associated with the lack of transparency in comment sections and in the way moderation is conducted on Facebook.

Introduction

The presence of incivility and hate speech in digital debates has been identified as a challenge to democracy, and action plans have been launched internationally and nationally to deal with this issue (European Commission, 2016; Ministry of Children and Families, 2016; United Nations, 2019). As public debates have proliferated across a huge and complex array of platforms and services, questions concerning who has the power, control and responsibility to watch over debates have become increasingly important (Camaj and Santana, 2015; Gillespie, 2018). How digital debates and user-generated content should be moderated has been thoroughly addressed within the fields of communication and journalism. Studies have shown how different moderation practices have been tested and implemented, with varied outcomes, in newsrooms and online political discussion forums (Edwards, 2002; Frischlich et al., 2019; Hermida and Thurman, 2008; Ihlebæk and Krumsvik, 2015; Marchionni, 2013; Singer et al., 2011).

Following the rise of global platforms, calls for better monitoring measures on social media platforms have also intensified. Facebook, in particular, has received negative attention for performing both too little and too much moderation and for a lack of transparency in its decision-making processes (Gillespie, 2018; Van Dijck et al., 2018). The present study’s starting point was that, as social media platforms have become vital to structuring public dialogues, moderation has increasingly become a concern for new actors that operate in these arenas. Therefore, more knowledge is needed about how this kind of responsibility is understood and carried out by often inexperienced facilitators.

This research focused specifically on how political actors conduct moderation. That political actors use social media platforms such as Facebook and Twitter to communicate with citizens is well established (Graham et al., 2016; Lilleker et al., 2011). Facebook facilitates more extensive dialogue between citizens and politicians, and political candidates’ presence on this platform has the potential to enhance democratic processes by providing a new space for political interaction (Utz, 2009). The main incentive for candidates to join Facebook is to gain strategic advantages, especially during election campaigns, when Facebook has mainly served as a marketing platform for political candidates (Enli and Skogerbø, 2013; Ross et al., 2020; Schwartz, 2015).

While using social media, and Facebook in particular, as a communication platform to engage in dialogue with their followers, politicians and political parties also face difficult decisions concerning how to manage public debates. In Norway, there have been various examples of negative press coverage concerning the presence of hate speech on politicians’ Facebook pages, and there have been calls for more responsibility and better moderation practices in the political field. At the same time, moderation requires resources and skills that these actors do not necessarily have. A starting point for this article is that more emphasis must be placed on how moderation partly depends on the technological tools provided by powerful global platforms, among which Facebook has become a dominant player.

This study thus examined how political actors utilise Facebook as a platform for political communication and, more specifically, how they use Facebook’s moderation tools to manage debates. We sought to address the following research questions:

Which comment moderation functions are available on Facebook and how transparent are the different functions?

How do political actors understand their responsibility to moderate online debates and how do political actors conduct comment moderation to deal with incivility?

In terms of methodology, the study carried out a platform analysis based on interactive testing of Facebook’s comment moderation functions for page owners, focusing in particular on how moderation is made visible to users. In addition, we interviewed the heads of the communication departments of the eight political parties represented in the Norwegian Parliament. The informants were all in charge of their party’s Facebook page. The interviewees were asked how they viewed their responsibility regarding debates, about their views on and use of Facebook’s functionalities, and about their moderation routines and practices.

This research’s theoretical framework combined insights from political communication, journalism and platform studies to highlight how moderation has become a political and cultural practice embedded in the digital sphere. Transparency, in particular, is an important aspect of incivility and moderation in both a technological and a performative sense, because transparency provides users with the insight and knowledge they need to understand how boundaries are set in public debates. As a result, users are given the chance to learn from and adjust to – or resist and reject – this kind of control. The present study is important because its results shed light on fundamental challenges associated with Facebook as a powerful platform for public debates. This research’s main contribution is an analysis of how moderation practices, and their lack of transparency, affect the premises for participation in public debates.

Theoretical background

Moderators

The proliferation of digital platforms in which citizens can engage in public debates has generated important questions concerning who moderates debates and how moderation is conducted. Moderation and the moderators’ role have become more important as online incivility, harassment, threats and hate speech have become more prevalent and frequently identified as an issue within democratic processes. Many studies have explored what triggers this kind of problematic participation and why it occurs (Coe et al., 2014; Graham and Wright, 2015; Ksiazek, 2018; Ksiazek and Springer, 2018; Løvlie et al., 2018a; Su et al., 2018; Wright and Street, 2007; Ziegele et al., 2014). The current study, however, focused more closely on how incivility is dealt with by those who facilitate and moderate debates.

Various actors can handle moderation duties. Within the news sector, moderation has most often been performed by journalists, who somewhat reluctantly take on the task of engaging with audiences (Domingo, 2008; Graham and Wright, 2015; Hermida and Thurman, 2008; Ihlebæk and Krumsvik, 2015; Ihlebæk and Larsson, 2016; Ksiazek, 2018; Larsson, 2011; Lewis, 2012; Stroud et al., 2015). Moderation has also been conducted by specialised companies, such as Interactive Security in Scandinavia, or by often low-paid moderators hired specifically for this task, as is currently the case at Facebook (Gillespie, 2010). In addition, activist and citizen moderators have been an integral component in the moderation of user-driven forums (Gibson, 2019; Grimmelmann, 2015; Wright, 2006).

Thus, the proliferation of online platforms and spaces dedicated to dialogues has made everyone who facilitates debates in some measure responsible for moderating them according to specific rules and standards. The present study sought in particular to understand how politicians perform the task of moderation. Facebook has allowed politicians and citizens to interact outside of traditional party politics and without interference by critical journalists. Previous research has characterised politicians’ early presence online as mostly exhibiting a facade of interaction (Stromer-Galley, 2000). While social networks such as Facebook have been presented as platforms for participation and deliberation, their interactive features could be described as interaction-as-product rather than interaction-as-process (Schwartz, 2015; Stromer-Galley, 2004).

More recently, interactions have typically taken place through a range of modes, such as liking or following politicians’ Facebook pages or reacting to, sharing or commenting on politicians’ updates. Previous studies have shown that the most typical interaction between politicians and citizens is ‘liking’, while commenting is a more demanding form of interaction with a higher threshold of involvement, in which fewer individuals engage (Kalsnes et al., 2017). Nevertheless, commenting is a highly visible feature, and politicians can receive many comments on their posts.

This volume has prompted discussion of the degree to which political actors should moderate their Facebook pages to deal with uncivil comments. As the focus on hate speech and incivility has intensified, pressures and public expectations have increased for political actors to pay attention to and delete comments perceived as hateful or derogatory (Council of Europe, 2019). However, it is less clear how political actors view this responsibility and how they act as moderators on their own Facebook pages and profiles.

Moderation practices

Moderators’ presence is considered to have a potentially powerful impact on the quality of online discussions and to be one way to deal with incivility (Camaj and Santana, 2015; Frischlich et al., 2019). Edwards (2002: 5) observes that moderators can perform three crucial functions in online discussions. The first is a strategic function of shaping discussions’ boundaries by determining objectives. The second is a conditioning function whereby moderators enrich the discussion by providing information and attracting participants. The last is a process function in which moderators determine the rules to be followed and perform housekeeping tasks. Other studies have demonstrated that moderation can be done in various ways, for instance, by engaging in dialogue, conducting pre- or post-moderation, closing debates during certain hours, limiting the discussion topics, altering or deleting text or expelling users who break the rules (Ihlebæk and Krumsvik, 2015; Reich, 2011; Ruiz et al., 2011; Singer et al., 2011).

How moderation is conducted depends on a variety of preconditions. These include the resources available, legal boundaries set by hate speech legislation and questions about who can be held responsible. How moderation is done also depends on platforms’ affordances, that is, the technological possibilities and limitations shaping moderation, a point discussed in greater depth in the following section. In addition, normative, ethical and professional ideals shape how debates ideally should function (Frischlich et al., 2019; Ihlebæk and Krumsvik, 2015; Singer et al., 2011). Gillespie (2010) argues that no moderator is ever neutral and that all moderation requires value-based judgments. These values can be expressed in documents such as guidelines or rules, which typically provide moderators with a framework for implementing different forms of sanction or intervention. Previous studies have shown that internal guidelines’ form and content vary across organisations. While some have an extensive set of rules in terms of what is deemed acceptable or undesirable behaviour, others only have a few sentences that describe their policy, and some have none (Ihlebæk and Krumsvik, 2015; Schwartz, 2015). If users continue to break the guidelines, moderators can impose different sanctions, for instance issuing warnings, removing text, revoking the possibility to comment or excluding these participants. In sum, moderation can be seen as a cultural practice that occurs in particular contexts, in which specific rules and norms are developed to guide those who moderate.

A general dilemma faced by all moderators is deciding how strict or liberal they should be and where boundaries should be set. These standards vary greatly: while some moderators enforce strict rules, others favour a more liberal approach based on free speech (Ihlebæk and Krumsvik, 2015). Studies have also identified a lack of transparency in how moderation is performed as a challenge. Løvlie et al.’s (2018b) study highlighted how online commenters experience frustration over not knowing why or when they were being moderated and how they felt censored for no apparent reason. Boberg et al. (2018: 66) reached a similar conclusion, arguing that ‘the fact that moderation decision-making processes are often not fully comprehensible might unintendedly fuel censorship-critique among readers, thus damaging the image of participatory journalistic media in the long run’. Schwartz’s (2015) research on interactivity on political parties’ Facebook pages found evidence that the process of moderation, or what constitutes appropriate behaviour, is not always transparent to citizens participating in comment sections. A lack of transparency in moderation practices can therefore breed distrust and suspicion between facilitators and users and hinder potential learning among those who break the participation rules. Transparency depends on how moderation is practised in specific contexts, but it is also linked to the platform where the debate takes place and to the degree to which different forms of moderation are made visible to participants.

Moderation and platforms

Global platforms’ power has attracted massive attention in the last decade, especially with regard to tackling hate speech and incivility in public debates (Bossetta, 2018; Gillespie, 2010, 2018; Van Dijck et al., 2018). How platforms such as Facebook moderate debates and to what degree they can be held responsible for the distribution of hate speech has become a matter of great concern (Gillespie, 2017). The present study focused more closely on the tools Facebook provides for users to moderate comments.

Previous research has argued that digital environments are subject to rapid, transformative change. Studies have shown how platforms’ network structure, functionality, algorithmic filtering and datafication model affect political campaign strategy on social media (Schwartz, 2015), as well as how political discussions work (Wright and Street, 2007). Social media platforms are often described as open, public spaces, but in reality they are highly contested spaces with multiple stakeholders and a strong tension between the interests of commercial and private users.

Researchers have argued that the quality of deliberation in online forums depends on the way in which discussions are organised. Namely, the technology’s structure and design determine quality, rather than the technology itself (Edwards, 2002; Papacharissi, 2009). Schwartz (2015) conceptualises Facebook as a ‘dinner party for public debate’. The dinner party consists of a dinner table (i.e. the platform), a host (i.e. the page owner or politician) and the invited guests (i.e. citizens). Schwartz found that, during Denmark’s 2011 election campaign, Facebook pages favoured mass supportive behaviour over individual criticism, in line with politicians’ strategic goals.

According to Braun and Gillespie (2011), social media companies use the word ‘platform’ to obscure the complicated power relations between users and social media providers. Papacharissi (2009), in turn, argues that the underlying structure of social network spaces such as Facebook may set the tone for specific types of interaction.

A central term used to describe communication technology’s characteristics is ‘affordances’, that is, what ‘various platforms are actually capable of doing and perceptions of what they enable, along with the actual practices that emerge as people interact with platforms’ (Kreiss et al., 2018: 19). Thus, communication technology facilitates certain forms of use, but technological practices are also bounded by people’s perceptions of what can be done with that technology. In this context, restrictions can be imposed both by service providers that censor content and by platforms’ technological architecture, which favours certain actions. Moderators are, therefore, dependent on the technological tools available at any given time.

Norwegian context

Norway’s democracy can be characterised as a multiparty parliamentary political system, also described as a media welfare state (Syvertsen et al., 2014). Eight parties were represented in the Norwegian Parliament at the time of the current study, all of which were included in the dataset (i.e. the Labour Party, Conservative Party, Progress Party, Centre Party, Socialist Left Party, Christian Democratic Party, Green Party and Liberal Party). Since the early 2010s, Norwegian political parties and politicians have maintained profiles on social media platforms, especially Facebook but also Twitter, Instagram and YouTube (Kalsnes, 2016).

The parties’ social media presence has generated much media attention over the years, including negative press coverage. Politicians have been criticised for not moderating their comment sections. For example, the Minister of Immigration and Integration, Sylvi Listhaug of the Progress Party, was criticised for not deleting death threats from the comment section of her Facebook page in a timely manner (Tiller et al., 2017). Several news reports have further documented that Prime Minister Erna Solberg has had to delete posts and close her Facebook wall to new postings after hate speech appeared there (Norsk Telegrambyrå, 2018, 2019).

The problem of incivility and hate speech is currently perceived as a serious threat to democracy, both internationally and nationally (Council of Europe, 2019). In response, the Norwegian government implemented a political strategy to combat hate speech in 2015 (Ministry of Children and Families, 2016). Consequently, increasing pressure has been put on political actors to keep comment sections free of hateful content.

Methods

The present study combined insights from interviews and interactive testing of Facebook’s moderation tools. First, we conducted qualitative elite interviews (Harvey, 2011) with the communication advisors of all political parties represented in the Norwegian Parliament (n = 8). The semi-structured interviews took place in November and December 2017 in the informants’ offices and lasted 30 to 60 minutes. The interviewees were all in charge of their respective political parties’ Facebook pages. These communication advisors were asked about their use of Facebook for political communication. The parties’ comment moderation was the interviews’ overarching theme. More specifically, the focus was on the type of content the parties publish, the ways they have adapted to Facebook’s technological functionalities and the parties’ comment moderation routines and practices.

Based on the two research questions mentioned previously, the interview data were analysed and classified into three main categories: perceived responsibility, moderation practices and sanctions. These categories were defined based on previous research on moderation as outlined in the theoretical section and on an inductive reading of the interviews. Thus, the coding was a hermeneutic process that involved a close interplay between the empirical material and categories, in which the former set the latter’s premises.

Based on the insights gained from the interviews, we conducted an analysis of Facebook’s moderation tools and functionalities for pages, groups and individual profiles. We carried out a platform analysis (Bucher and Helmond, 2018) through interactive testing of the tools provided to page owners and group administrators, focusing particularly on which moderation options are made visible to which users. Facebook’s different moderation options for page or group administrators were outlined, including, among other affordances, the profanity filter and the option to delete or hide comments. The testing involved identifying differences and similarities between the pages and groups to which we personally had access.

This part of the research was done in January 2019, so later changes in Facebook’s comment moderation options were not captured in the data gathered. Based on the analysis, we developed a conceptual model of this platform’s different moderation functions. The model represents to what degree different moderation options give visible, transparent feedback – or lack thereof – to users who have overstepped the pages’ civility rules.

We began the analysis by mapping Facebook’s different comment moderation functions, focusing specifically on how transparent each tool is. This mapping provides a useful basis for the subsequent analysis of political actors and their moderation practices.

Results

Facebook’s comment moderation options

To address the first research question (i.e. which comment moderation functions are available on Facebook and how transparent the different functions are), we conducted a platform analysis to provide an overview of Facebook’s comment moderation functions on pages and groups. We tested different moderation options with a particular focus on which options are made visible or invisible to which users. The analysis involved mapping various options in comment sections, as well as settings for pages and groups.

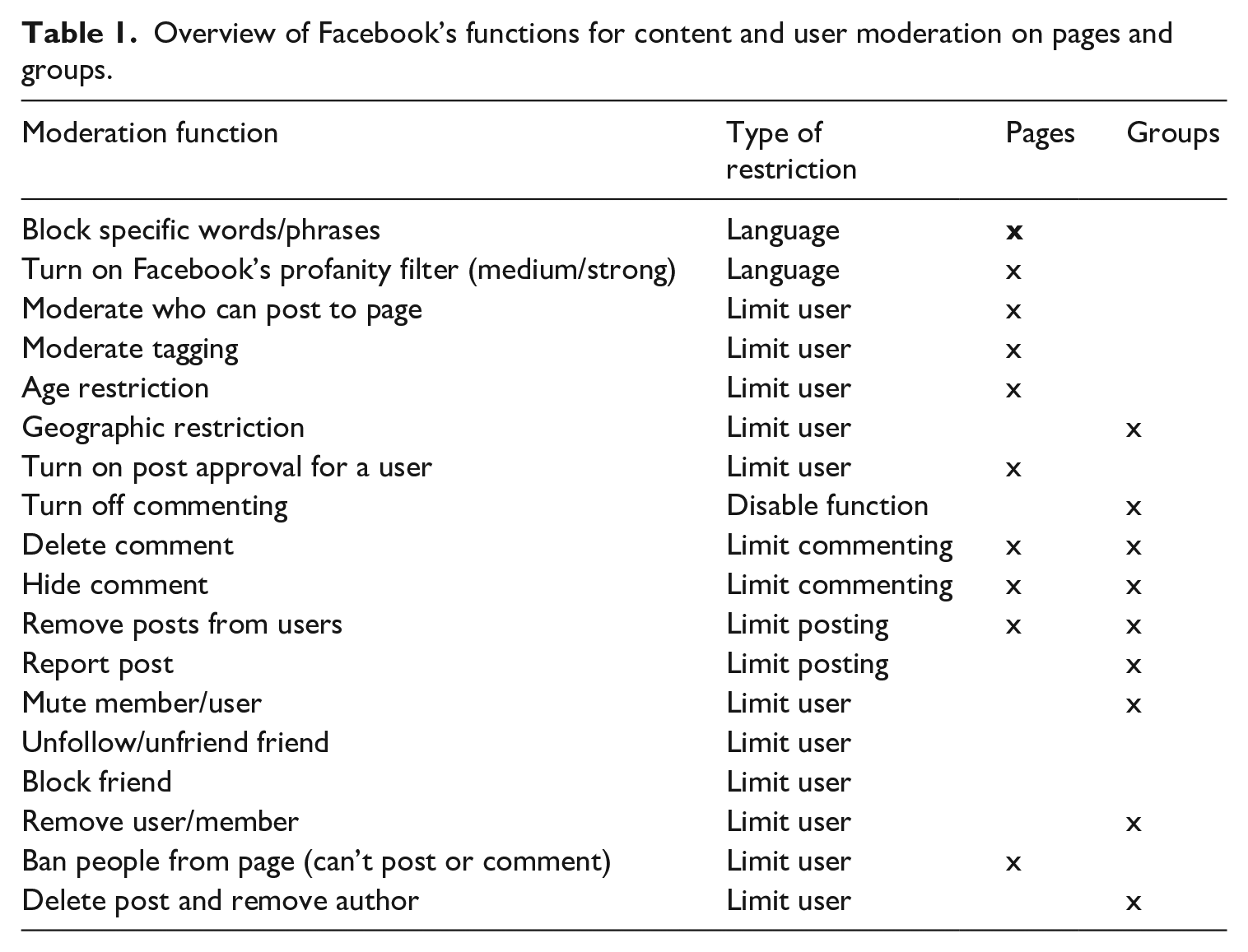

The analysis focused mainly on pages, since these are what political actors use and they offer Facebook’s most professional service, with a host of tools and functions such as statistics, targeted advertising and advanced publishing tools. By comparing the options available for pages and groups, we were able to analyse Facebook’s entire range of comment moderation options. As expected, pages offer more options than groups. Table 1 outlines which functions are available in which environments.

Table 1. Overview of Facebook’s functions for content and user moderation on pages and groups.

The overview revealed that Facebook’s comment moderation functions mainly focus on how to restrict specific users, filter uncivil language and disable certain functions (i.e. posting or commenting). Some functions allow page owners and group administrators to adjust settings in advance in order to prevent uncivil language or limit who can post on a page. Uncivil or hateful content can be targeted by using Facebook’s predefined profanity filter or by adding a list of relevant uncivil words that might appear in comment sections. Comments containing these words become invisible to other users.

A range of different functions target specific users based on age, geography or previous behaviour. These functions allow page owners to limit who can post or comment on specific pages or groups. The most crucial difference between pages and groups is that only groups can turn off the option to comment on posts, which can be crucial for political actors and other Facebook users if an online debate ‘gets out of control’.

The most prevalent options are to restrict content (i.e. delete or hide comments) and to restrict users (i.e. mute or block them). User-generated content can be restricted in three main ways: by deleting a comment, hiding a comment or reporting a post. Users can be prevented from interacting with a page or group in several ways: specific commentators can be muted, removed or banned. However, if a banned user creates a new profile, the restrictions do not apply.

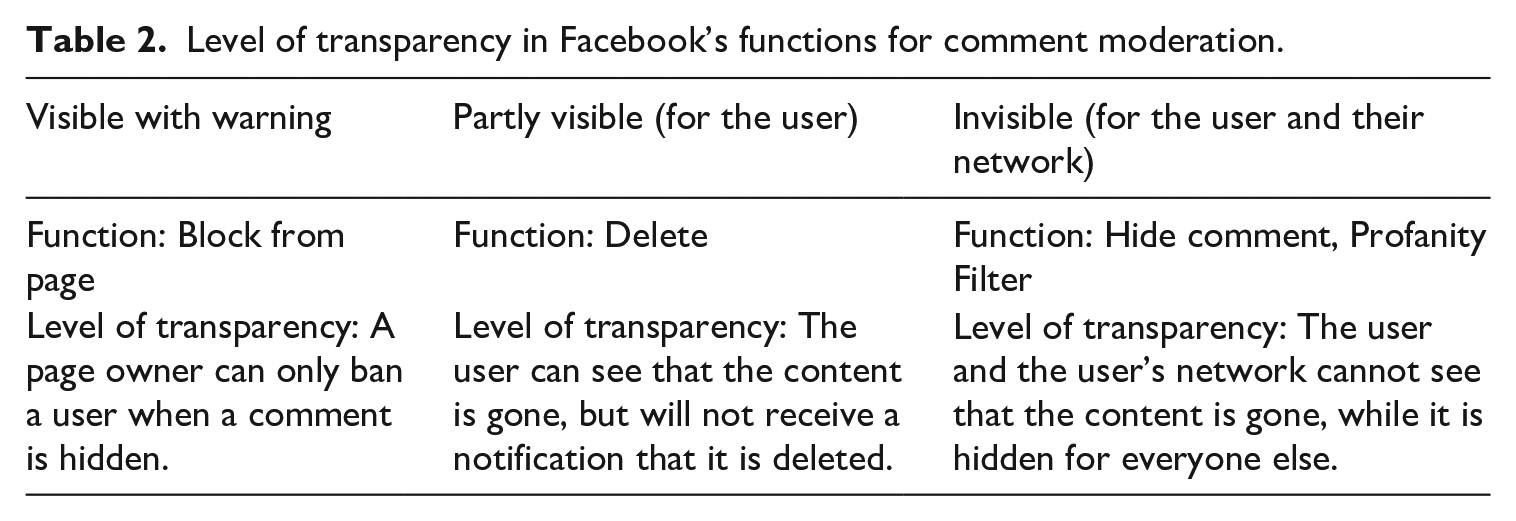

The results also address how transparent the different forms of moderation are. Table 2 shows how some of the most common types of sanctions used by political parties appear to users.

Table 2. Level of transparency in Facebook’s functions for comment moderation.

The analysis showed that the most visible repercussion for users is being blocked by a page or banned from a group. When such restrictions are imposed, users get a warning and become aware that their online behaviour has, for whatever reason, been deemed unacceptable. If comments are deleted, users can see that their content is gone, but they do not get a notification from Facebook that the comments were deleted. Thus, users must pay attention to their comments in order to notice that the moderator has taken them down.

Finally, if a page or group administrator decides to hide a comment, neither the commenter nor their network (i.e. friends) can see that it has been hidden, and the commenter is not notified about the moderation. In other words, the comment still appears in the comment section as seen by the commenter and their friends, but it is invisible to everyone else. The same is true of comments caught by the profanity filter.
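The visibility rules reported above can be condensed into a small model of who sees a moderated comment and whether the affected user can tell. The Python sketch below is a hypothetical illustration, not Facebook’s implementation; the names `Sanction`, `Comment`, `is_visible_to`, `NOTIFIES_USER` and `commenter_can_detect` are our own, and the rules encode only the behaviour observed in our January 2019 testing.

```python
from __future__ import annotations

from dataclasses import dataclass
from enum import Enum


class Sanction(Enum):
    """Sanctions observed in our platform test (names are our own, hypothetical)."""
    DELETE = "delete"
    HIDE = "hide"
    FILTER = "filter"  # comment caught by the profanity filter
    BLOCK = "block"    # user-level: blocked from a page / banned from a group


# Whether the affected user receives a notification, per our January 2019 test.
# Only blocking or banning produced a warning.
NOTIFIES_USER = {
    Sanction.DELETE: False,
    Sanction.HIDE: False,
    Sanction.FILTER: False,
    Sanction.BLOCK: True,
}


@dataclass(frozen=True)
class Comment:
    author: str
    author_friends: frozenset[str] = frozenset()
    sanction: Sanction | None = None


def is_visible_to(comment: Comment, viewer: str) -> bool:
    """Return True if `viewer` can see the comment's text."""
    if comment.sanction is Sanction.DELETE:
        return False  # deleted comments are gone for everyone, author included
    if comment.sanction in (Sanction.HIDE, Sanction.FILTER):
        # Hidden or filtered comments remain visible to the author and their friends.
        return viewer == comment.author or viewer in comment.author_friends
    if comment.sanction is Sanction.BLOCK:
        raise ValueError("BLOCK targets a user; comment visibility is not modelled here")
    return True  # unmoderated comments are visible to everyone


def commenter_can_detect(sanction: Sanction) -> bool:
    """Can the commenter notice the sanction at all, with or without a notification?"""
    # A deleted comment disappears for its author; a hidden or filtered one looks unchanged.
    return sanction in (Sanction.DELETE, Sanction.BLOCK)


if __name__ == "__main__":
    hidden = Comment(author="ola", author_friends=frozenset({"kari"}), sanction=Sanction.HIDE)
    for viewer in ("ola", "kari", "per"):
        print(viewer, is_visible_to(hidden, viewer))
```

Running the script prints `True` for the commenter (`ola`) and their friend (`kari`) and `False` for an unrelated participant (`per`), which is exactly the asymmetry that leaves hidden commenters unaware of being moderated.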

The analysis thus revealed that Facebook offers various tools that page owners can use to moderate debates. These functions are necessary because moderators cannot turn off the comment section on pages. The different sanctions offer users varying degrees of transparency, a point discussed more thoroughly in the following section.

Political parties’ moderation practices

To address the second research question (i.e. how political actors understand their responsibility to moderate online debates and how political actors conduct comment moderation to deal with incivility), we interviewed the communication advisors of the eight parties represented in the Norwegian Parliament at the time of the study. Three main topics are discussed below: perceived responsibility; rules and moderation; and sanctions.

Perceived responsibility

A general finding of the interview analysis is that all the political parties recognise that they are responsible for moderating their Facebook page’s comment section. The parties have prioritised resources, implemented routines and trained communication advisors to conduct moderation, particularly during elections, when Facebook activity is higher. The advisors of the Green Party and the Socialist Left Party called this ‘an editorial responsibility’, while others described this duty in more general terms. The Progress Party’s moderator said the party’s main concern is creating a ‘well-functioning debate’, rather than what to call this responsibility.

The informants all expressed a need to moderate public debates for ethical reasons rather than as a legal obligation. For example, the Labour Party’s advisor stated: If we don’t deal with the uncivil comments and the hate, then we let down those who want to be in a constructive dialogue with us and who want to talk to the Labour Party. That is even worse because then we let down the participants and ourselves.

Political actors thus acknowledge that communicating through social media entails a responsibility to monitor the debates that arise and moderate uncivil behaviour. This responsibility has become more natural to the parties over time as Facebook has become the main platform for disseminating political messages.

Rules and moderation

The study’s results reveal that all the parties have developed commenting rules. Ideally, these guidelines set parameters for what is allowed and make transparent how and why moderation occurs. Some parties’ rules are short and minimal, while others have published lengthier, more detailed guidelines. The Labour Party’s moderator said their debates have followed three main rules: Yes, we have very simple rules that say that you should respect your fellow debaters, be factual and tackle the ball, not the player. Those are our three simple rules, which we enforce in the comment section in a strict but fair manner.

Similarly, to promote constructive debate, the Conservative Party asks debaters to discuss the issue, not the person. Other political actors, such as the Liberal Party, describe their debate rules as a ‘customary’ understanding of what is acceptable behaviour.

In general, the parties’ debate rules are quite similar, echoing each other and those found in newspapers (Ihlebæk and Krumsvik, 2015). Finding the debate rules, however, can be cumbersome. Most parties publish them in the ‘About’ section of their Facebook page, which is not immediately visible to commenters and requires some searching. Consequently, most users are probably unaware of the rules until moderators refer to them directly when someone breaks them.

A closer look at how the informants view the problem of incivility and hate speech, and at how they practise moderation, showed that all the informants were careful to stress that they take a liberal approach to moderation and that they do not moderate comments because they disagree with their political content. This reflects a normative position, and we cannot confirm whether it always holds in practice. In addition, the informants emphasised that they regularly experience problems with unwanted and uncivil participation, particularly when debating controversial political topics, which makes following these debates closely important.

The communication advisors interviewed raised another crucial point, confirmed by the analysis of Facebook’s moderation tools: in contrast to Facebook groups, the comment section on Facebook pages cannot be turned off. Consequently, posting content without opening it up for comments is not an option. This technological restriction means that moderators need specific knowledge and skills to decide what to post and when, both to attract attention and to manage incivility. The informants pointed out that some topics, most typically immigration, toll roads, climate change and wolf hunting, can trigger a large volume of comments within a short timeframe and, consequently, these posts require more attention from moderators.

Nonetheless, the parties still need to post content about core political issues that their supporters care about, even if the issues are controversial. The Green Party’s moderator explained, ‘Actually, I have become almost allergic to wolves, so every time it is necessary to post something about it, I am a bit like: do we really have to post something about wolves? We hide comments quite often instead of deleting them. If you delete a comment, they will get a notice, and often, we experience that people become “hot-headed.” It is no use spending time on those who do not behave. So I hide things, but they are still visible to them and their friends. They can sit there and be “smug” about it, but no one else sees their comment and they are not notified’. To overcome the challenges presented by incivility, the informants pointed out that they often recruit volunteers and political supporters to follow debates, take part in discussions and engage in dialogue with users, especially during elections, when posting frequencies are higher. When dialogue does not help, the moderators can apply different forms of sanctions.

Sanctions

Of the moderation affordances and tools that Facebook provides, political actors most often use three to deal with incivility: deleting comments, hiding comments and blocking users. The political parties also take screenshots of threats made against their party leader or other politicians and report hate speech to the police. As mentioned previously, the interviewees were careful to stress that they tolerate a great deal of abuse, but measures to restrict debates are sometimes necessary since the moderators cannot turn off the commenting function.

If debates ‘explode’ with uncivil language and hate comments that the moderators cannot handle, they can delete the original post so that the associated comment section disappears. This option is naturally undesirable because the political parties must use the platform to communicate about core party issues. Facebook’s profanity filter, which can be turned on or off as needed, is a useful tool for detecting uncivil comments. The filter identifies posts that include swear words or racist language, and it can be set to medium or strong.

In addition, Facebook allows page owners to add to the filter specific words or phrases that moderators know, from experience, tend to appear in uncivil comments. The Centre Party’s advisor noted: ‘We have Facebook’s profanity filter, and then I have added maybe 10 words myself. This is because I am the only one moderating, and I know from experience that, if a particular word is in a comment, it is not a comment that we want on the page’.

When asked which words they have added to the profanity filter, informants mentioned words such as quisling, whore, crone, bitch, nigger, negro, shot, neck shot, national traitor, Nazi, Arab party, racist, monkey party, monkey, fuck, hell, Satan, cunt, Asia hell and clown. The moderators also reported that they sometimes add misspelled variants, since the filter does not otherwise catch them (e.g. ‘bietch’ for ‘bitch’). If a comment contains a word included in the profanity filter, it becomes invisible, a point discussed in more detail below.

When moderators have to deal with comments that break the rules, they can delete or hide them or block the users who posted them. The results show that deleting comments is relatively common across the political spectrum, sometimes occurring on a daily basis, but all the informants said that they seldom block users because they want people to participate. The Socialist Left Party’s advisor suggested that the party has become more liberal as it has gained more experience with managing the comment section. This moderator said, ‘Before, they used to block many people, which we have almost stopped doing. The only reason why we block now is if people publish direct threats, if they are repeatedly completely off topic or if they harass someone’.

In terms of transparency, Facebook notifies users when they are blocked. When a comment is deleted, however, users are not notified, but they can see that the comment has disappeared. The informants pointed out that these sanctions can trigger a string of negative reactions from users.

Several moderators explained that, for this reason, they prefer to use the hide function. Hiding comments makes them invisible to other people in the comment section, but the comments remain visible to the commentators and their network. In other words, users remain unaware of the moderator’s decision to hide their comments. The informants said that they use this function to avoid generating tension in the comment section. The Liberal Party’s moderator reported, with ‘hiding, . . . it [the comment] does not become invisible to those who posted the comments and their friends, but the comment is invisible to anyone outside their network. So, it is much more common than blocking’. The Green Party’s advisor similarly related: ‘We hide comments quite often, instead of deleting them. If you delete a comment, the person will get a notice, and often, we experience that people become “hot-headed”. It is no use to spend time on those [users] when they do not behave. So, I hide things, and it is still visible to them and their friends. They can sit there and be smug about it, but no one else sees it and they are not notified’.

While these responses clarify why the hide function is useful to moderators, they raise some questions about transparency, a point returned to in the next section.

Discussion

Moderation has become an important part of online public debates. According to Gillespie (2010), no moderator is ever neutral, and neither are the tools used to moderate public speech. The present study explored moderation practices on Facebook by investigating this platform’s technological tools and the ways that political actors understand and fulfil their responsibility to moderate debates. As this research demonstrated, Facebook offers a different set of functions depending on whether a page or a group is involved. An important difference is that page owners cannot turn off the comment section, so, if a political party wants to post a message, it has to allow comments.

This study also found that all the parties in question recognise that they are responsible for the comment section, and some informants even compared moderation to editorial responsibility. Notably, political parties’ acknowledgement of this responsibility has developed over time. In the early days of using Facebook as a platform, parties were less clear about whether and how this responsibility should be fulfilled. The current attitudes can be viewed as a sign that the political parties and their communication advisors have matured in their understanding of what communication on social media entails.

The results shed light on how political actors talk about these housekeeping practices, especially when unwanted comments appear. Even though all parties have developed rules for participation, moderators find it difficult to draw a line between harsh political disputes and uncivil speech. Moderating also draws on parties’ resources and requires their members to acquire new skills regarding when to post and how to use the technological tools available. The informants described how they seek to engage users in dialogue, but they must also delete comments and block users who continue to break the rules.

Communication advisors suggest that the hide function helps them to balance facilitating open debates with taking responsibility for controversial and sometimes uncivil comments. This tool is particularly important because Facebook does not allow page owners to close the comment section on posts, a restriction closely linked to the platform’s business model of user engagement. The hide function is effective because it conceals uncivil comments that might damage the dynamics of a debate while avoiding alienating the most ‘hot-headed’ commentators.

The interviewees’ view that hiding comments is a valuable moderation tool is understandable, yet it can be argued that this practice conceals the conditions for participating in Facebook debates from users. When commentators are not notified of sanctions, they are unaware of being moderated and silenced. Consequently, moderated users’ perception that they are participating in a public debate on a political Facebook page is an illusion, which is highly problematic from their perspective. This lack of transparency in Facebook’s comment moderation functions makes the limits set on civil debate much harder to understand, thereby constituting a disservice to users and an obstacle to deliberative democratic learning.

We argue, therefore, that political actors must strive for more transparency in their comment moderation practices and in their use of Facebook’s tools. Facebook has established an Oversight Board to advise on ‘the most difficult and significant decisions around content’ on the platform (Clegg, 2020). However, transparency also needs to be incorporated more thoroughly into the platform itself and into the tools for moderating comment sections.

The current study has some clear limitations in that it covered only one political system, represented by eight Norwegian parties, over a limited period. Comparative research is urgently needed to evaluate attitudes towards and practices of online debate moderation across political systems and parties. In addition, this study focused only on one platform, Facebook, so further research should outline and compare the moderation functions of different digital platforms, such as YouTube, Instagram and Reddit, to further clarify the technological premises shaping public debates.

The findings also rely on political actors’ own accounts of their moderation practices, so the results reflect their views and attitudes. Future studies should use other methodological approaches to gain more detailed insights into the gatekeeping function of political actors’ moderation practices. More specifically, researchers should identify which comments are deleted or hidden and why. Despite these limitations, this research’s findings demonstrate why greater awareness is needed regarding the dynamics of political communication and its dependence on global media platforms in an increasingly digitalised and hybridised public sphere.