Abstract

Trainee psychologists, such as those completing postgraduate master’s programmes, are vulnerable to experiencing impostorism. To help assess this risk and develop intervention strategies, there is a need for a brief validated measure of impostorism. The Leary Impostorism Scale (LIS) is a brief self-report measure that demonstrates satisfactory psychometric properties but has not been validated with trainees undertaking professional psychology training. The current study involves an exploratory factor analysis (EFA) of the LIS and describes levels of impostorism in a sample of Australian trainee psychologists. Participants were 161 postgraduate students enrolled in their first year of a psychology master’s programme. A maximum likelihood (ML) EFA revealed a single factor accounted for 73.67% of total variance. Parallel analysis verified the unidimensional model. A Cronbach’s α of .94 was obtained, indicating high internal consistency. Overall, the sample scored at ‘moderate’ levels, with 23% having high levels of impostorism. Further research is required to investigate whether the high internal consistency of the LIS permits further item reduction. In addition to clarifying factors that may account for differences in levels of impostorism between participants in different training programmes, there is a need to develop and assess interventions to reduce the negative effects of impostorism.

Keywords

Introduction

Impostorism is a psychological phenomenon of self-perceived fraudulence. Individuals experiencing impostorism perceive their abilities and accomplishments as overestimated by others, or achieved by means beyond their control (Clance & Imes, 1978). They lack internal experiences of success, believing their accomplishments are overvalued by others and perceiving themselves as fraudulent (Cisco, 2020). Further success perpetuates the perceived fraudulence, increasing self-doubt and fear of being exposed as dishonest (Clance, 1985) and leading to significant psychological distress.

Although impostorism was initially considered a phenomenon unique to high-achieving and professional women (Clance & Imes, 1978), recent evidence suggests the phenomenon can present in any individual who obtains high achievement and professional success (Rohrmann et al., 2016). Its development in professional contexts is thought to be exacerbated by a disconnect between one’s external presentation and internal perception (de Vries, 2005), particularly during stressful and uncertain transitional periods (e.g., during academic/career progression; Hutchins & Rainbolt, 2017). This pattern has been observed across health disciplines, including Medicine (Gottlieb et al., 2020; Rosenthal et al., 2021) and Nursing (Edwards-Maddox, 2022). The combination of rigorous academic training and demanding professional transitions (Gottlieb et al., 2020) may prime a perception of fraudulence.

This feeling of pervasive inauthenticity has been associated with negative outcomes for both professionals and students, such as burnout (Edwards-Maddox, 2022) and reduced psychological wellbeing (Rosenthal et al., 2021). Research conducted by Malouf et al. (2023) highlighted the link between impostorism and burnout specifically in mental health professionals. Impostorism was also found to be correlated with intolerance of uncertainty and psychological inflexibility, which were in turn associated with burnout. The authors speculated impostorism may mediate or moderate the relationship between these variables and burnout (Malouf et al., 2023). Comparable results were observed by Clark et al. (2022), with impostorism being positively associated with compassion fatigue and burnout in mental health professionals. Given the observable link between impostorism and negative psychological outcomes, there is a growing recognition that an effective method of assessing impostorism may aid early intervention and prevention.

A valid measure of impostorism may be particularly useful amongst trainee psychologists. As trainees can be particularly vulnerable to impostorism early in development, impostorism may contribute to inaccurate perceptions of competency development and pose risks to psychological wellbeing. Psychology students report experiences of impostorism (Maftei et al., 2021) comparable to those in related health fields (Freeman et al., 2022; Gottlieb et al., 2020) and postgraduate education (Cisco, 2020). The ability to accurately evaluate personal competencies is crucial to professional development (Wise et al., 2010), adapting to professional challenges and maintaining evidence-based practice (Creed et al., 2016). For example, impostorism-related underestimation of competence is associated with disengagement from professors and supervisors in postgraduate students (Cisco, 2020). Individuals with high self-rated scores of impostorism demonstrated reduced self-reflection capacity (Gadsby & Hohwy, 2024), underestimating their own ability and overestimating that of their peers. An impostorism-associated inability to accurately evaluate ability could hamper ongoing competency development. Furthermore, impostorism can potentially negatively impact clinical outcomes (Falender & Shafranske, 2017). The associated symptoms of impostorism, including persistent self-doubt and sensitivity to external evaluation (Neureiter & Traut-Mattausch, 2016), could disrupt the clinician-client relationship. Given impostorism’s potential impact, a well-validated measure would be beneficial for early identification of, and intervention for, these challenges (Clark et al., 2022; Rosenthal et al., 2021). While there is limited validation using trainee psychologists, there are several potential measures of impostorism currently utilised in the literature.

The most utilised measure of impostorism (Mak et al., 2019) is the 20-item Clance Impostor Phenomenon Scale (CIPS; Clance, 1985; Clance & Imes, 1978). The CIPS conceptualises impostorism as consisting of three factors: ‘Fake’: doubt of intelligence, abilities and/or achievements; ‘Luck’: attributing success to external circumstances; and ‘Discount’: propensity to downplay or not acknowledge success. Overall, the measure has well-established reliability and validity (Brauer & Wolf, 2016). The factor structure of the CIPS remains uncertain: while some evidence supports the three-factor interpretation (Brauer & Wolf, 2016; Chrisman et al., 1995), recent research suggests a unidimensional factor structure may be more appropriate (Erekson et al., 2024; Simon & Choi, 2018). In their review of impostorism measures, Mak et al. (2019) noted that while the CIPS and other measures conceptualise impostorism multidimensionally, in applied settings the measures are operationalised unidimensionally. In contexts where a unidimensional measure is suitable, the shorter 7-item Leary Impostorism Scale (LIS; Leary et al., 2000) may be satisfactory.

In developing the LIS, impostorism was conceptualised as a core feeling of inauthenticity (Leary et al., 2000). Internal consistency of the LIS is reported to be satisfactory (e.g., α’s ranging .87–.94; Freeman et al., 2022; Leary et al., 2000), and concurrent validity with other measures of impostorism has been established (e.g., Pearson’s r correlations ranging .60–.83; Freeman et al., 2022; Leary et al., 2000). However, the current pool of psychometric studies for this measure is limited to healthcare simulation educators (Freeman et al., 2022) and undergraduate students (Leary et al., 2000). Mak et al. (2019) state that the LIS along with other measures of impostorism ‘. . .require further validation to establish its strengths and utility as a potential gold standard measure’ (p. 12). Given the sample-dependent factor structure variation of several impostorism measures (Mak et al., 2019), there is a need to validate an impostorism measure specifically in a trainee psychologist sample. The LIS was selected because we could locate no studies that had validated the measure with a trainee psychologist sample, and because its brevity allows for multiple administrations over time with minimal respondent burden. Therefore, this study aims to examine the internal consistency and factor structure of the LIS and to provide descriptive data from a sample of trainee psychologists.

Method

Participants and procedure

Eligible participants were provisional psychologists enrolled in an accredited postgraduate psychology programme at an Australian university, either a Master of Professional Psychology (MPP), Master of School Psychology (MSP) or Master of Clinical Psychology (MCP). Participants had completed a 4-year degree in psychology and were enrolled in the current fifth-year programme to attain general psychologist registration. Participants enrolled in the MCP programme also complete a sixth year to attain an area of practice endorsement. All eligible participants were invited to participate in a larger study evaluating a curriculum-relevant mindfulness workshop programme, commencing in Week 2 of the first year of the programme. Of a total eligible sample of 174, 12 individuals were excluded for not providing consent and one participant was excluded for not providing enrolment information. This resulted in a final sample of n = 161, with 129 participants being female and 32 male. Age ranged between 21 and 60 years (M = 27.33, SD = 7.05). MPP students represented 18.6% (n = 30), MSP 44.1% (n = 71) and MCP 37.3% (n = 60) of the sample. The number of females in each programme was: MPP (n = 25); MSP (n = 53) and MCP (n = 51). The mean age for each programme was: MPP 26.43 (SD = 6.02); MSP 28.83 (SD = 8.86); and MCP 26.02 (SD = 4.39).

Participants completed the LIS alongside measures of mindfulness via an online survey before training commenced. We were interested in baseline levels of impostorism and whether levels changed over time with training. Participants were provided with an information sheet and the opportunity to ask questions regarding the purpose of the study. Tacit consent was attained through completion of the survey. No incentives were provided for research participation. Participants were asked to create a unique identifier after completing the survey to allow matching of future responses. All survey data was stored in a secure online database. To determine the effects of training, mindfulness was measured using the Five Facet Mindfulness Questionnaire - Short Form (FFMQ-SF; Bohlmeijer et al., 2011). These measures were repeated following completion of the mindfulness programme. Results relating to the effects of training and the mindfulness-impostorism relationship are described in a separate report (reference blinded for review).

The study was approved by the University of Wollongong Human Research Ethics Committee (Approval number 2019/012). Ethical considerations included ensuring sufficient freedom to decline participation. To address this, participants could participate in other online learning activities during the time allocated to completing the survey, and there was clear delineation between course-based learning expectations and voluntary research participation. Survey data was deidentified and was not accessed until the completion of trainees’ academic requirements.

Measures

The LIS (Leary et al., 2000) is a self-report, 7-item questionnaire utilising a 5-point Likert-type response scale (1 = Not at all characteristic of me, 2 = Slightly characteristic of me, 3 = Moderately characteristic of me, 4 = Very characteristic of me and 5 = Extremely characteristic of me). An example is: I tend to feel like a phony (Item 2). No items were reverse scored. Possible total scores range from 7 to 35, with higher scores representative of greater impostorism. The LIS has no subscales; the total score serves as a measure of impostorism.
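The scoring rule just described can be sketched in a few lines. This is an illustrative sketch only: the function name `score_lis` and the input checks are assumptions made here, not part of the published scale materials.

```python
# Hypothetical helper illustrating the scoring rule described in the text:
# seven items, each answered 1-5, no reverse scoring, total = simple sum.
LIS_ITEM_COUNT = 7
RESPONSE_MIN, RESPONSE_MAX = 1, 5


def score_lis(responses):
    """Return the LIS total score (7-35) for one respondent."""
    if len(responses) != LIS_ITEM_COUNT:
        raise ValueError(f"expected {LIS_ITEM_COUNT} responses, got {len(responses)}")
    for value in responses:
        if not isinstance(value, int) or not RESPONSE_MIN <= value <= RESPONSE_MAX:
            raise ValueError(f"response {value!r} is outside the 1-5 response scale")
    # No items are reverse scored, so the total is the plain sum.
    return sum(responses)
```

For example, a respondent answering 3, 2, 4, 3, 3, 2, 1 would receive a total of 18.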

Statistical analysis

Factor analysis was conducted using SPSS (IBM Corp, 2021). Suitability of data for factor analysis was assessed through Bartlett’s test of sphericity (Bartlett, 1954), Kaiser–Meyer–Olkin’s measure of sampling adequacy (Kaiser, 1974) and inspection of the correlation matrix for coefficients above 0.3 (Tabachnick & Fidell, 2013). Once suitability was determined, the seven items of the LIS were subjected to an ML factor analysis with (orthogonal) varimax rotation. Using Kline’s (2016) guidelines, in samples with a minimum of 100 participants, loadings of 0.3 and above were considered significant. The criteria used for factor retention included Kaiser-Guttman’s criterion of an eigenvalue over 1, visual inspection of the scree plot and Horn’s parallel analysis (Horn, 1965). Based on Hair et al. (2018), the minimum acceptable variance explained was set at 60%. Differences in age and gender between the study programmes (MCP, MSP and MPP) were explored initially with one-way ANOVA or nonparametric analyses.

Results

The data were screened following recommendations by Hills (2011). Screening revealed no univariate outliers (|z| ⩾ 3.29; Tabachnick & Fidell, 2013). Multivariate outliers were assessed using Mahalanobis distance. Using the χ2 distribution, only one case had a p-value less than .001 (Hair et al., 2018). Following case inspection, all values fell within the scale range and the total for all items was not extreme (M = 22). The EFA was conducted both with and without this multivariate outlier with no discernible difference in results, so results with the case included are reported. Multicollinearity was assessed through inspection of the item correlation matrix, Variance Inflation Factors (VIF) and Tolerance. Item correlations ranged from r = .60 to .76. The highest VIF value was 4.15, with only 4/21 VIF values above 4.0. All values above 4 occurred for item 2. The lowest tolerance was 0.24. Together these results suggested only moderate risk of multicollinearity (Kyriazos & Poga, 2023). Based on Mundfrom and Shaw’s (2005) recommendations for minimum sample size, the sample of 161 was considered sufficient to proceed with EFA.
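Tolerance and VIF are reciprocal quantities, which gives a quick consistency check on the collinearity figures reported here. In the short worked calculation below, $R_j^2$ is a symbol introduced for illustration; it denotes the proportion of variance in item $j$ explained by the remaining items.

```latex
\mathrm{Tolerance}_j \;=\; 1 - R_j^2 \;=\; \frac{1}{\mathrm{VIF}_j},
\qquad
\frac{1}{4.15} \approx 0.24,
\qquad
R_j^2 \approx 1 - 0.24 = 0.76 .
```

On this reading, the largest VIF (4.15) and the tolerance of 0.24 describe the same item, with roughly three quarters of its variance shared with the other items.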

Exploratory factor analysis

Scale development literature suggests an appropriate threshold to demonstrate internal item consistency is an item-total correlation of .3 or higher (DeVellis, 2003). All seven items on the LIS were above this threshold (0.76–0.85; M = 0.80), indicating that the LIS discriminates between those who score low and high on the scale (Miller et al., 2012).

Bartlett’s test of sphericity indicates the overall significance of the correlations amongst the items of the LIS. The results (χ2 = 929, df = 21, p < .001) further supported the matrix’s factorability. Kaiser–Meyer–Olkin’s (KMO) measure of sampling adequacy indicated that the sample was appropriate for factor analysis. The KMO index of sampling adequacy was 0.91, demonstrating sufficient common variance amongst observed variables (Kaiser, 1974). Internal consistency reliability (Cronbach’s α) for the LIS was 0.94. Deletion of any item produced negligible changes in α (range .93–.94). Corrected item-total correlations ranged .76–.85.
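For readers unfamiliar with how the reliability figures above are derived, the following is a minimal sketch of Cronbach’s α and the ‘α if item deleted’ statistic using only the Python standard library. The analyses in this study were run in SPSS; the function names and the row-per-respondent input layout are assumptions made for illustration.

```python
from statistics import variance


def cronbach_alpha(rows):
    """Cronbach's alpha for a list of respondents, each a list of item scores.

    alpha = k / (k - 1) * (1 - sum of item variances / variance of total scores)
    """
    k = len(rows[0])
    item_variances = [variance([row[j] for row in rows]) for j in range(k)]
    total_variance = variance([sum(row) for row in rows])
    return (k / (k - 1)) * (1 - sum(item_variances) / total_variance)


def alpha_if_item_deleted(rows):
    """Alpha recomputed with each item left out in turn (needs 3+ items)."""
    k = len(rows[0])
    return [
        cronbach_alpha([row[:j] + row[j + 1:] for row in rows])
        for j in range(k)
    ]
```

When deleting any single item leaves α essentially unchanged, as reported above, no individual item is driving or degrading the scale’s reliability.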

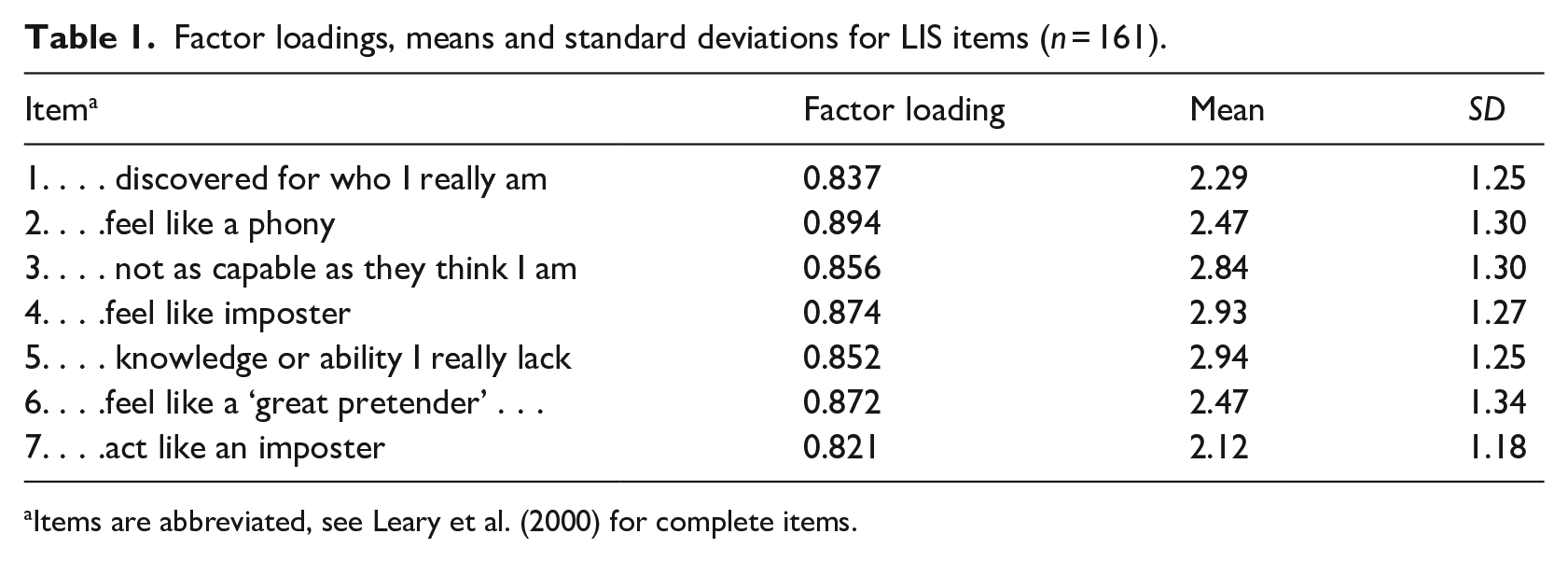

An ML EFA with varimax rotation was utilised. ML extraction derives each factor to explain the maximum possible variance within the population correlation matrix using successive factoring, and also provides statistical significance for the extracted factors. With the eigenvalue threshold set to 1.0 (Guttman, 1956; Horn, 1965), a single factor accounted for 73.67% of total variance. Examination of the scree plot revealed a probable single factor solution to the model. All items loaded onto a single factor, with item loadings ranging 0.82–0.89 (see Table 1).

Table 1. Factor loadings, means and standard deviations for LIS items (n = 161).

Items are abbreviated, see Leary et al. (2000) for complete items.

Parallel analysis utilised the procedures and SPSS syntax provided in Hayton et al. (2004). The original EFA eigenvalue for the first factor was 5.16 (73.67% of variance). The second eigenvalue was 0.48 (6.82% of variance). For the parallel analysis we extracted eigenvalues from 30 randomly generated datasets. The mean eigenvalue for the first factor from the random datasets was 1.19, which is below the eigenvalue for the first factor of the original data. The second and subsequent eigenvalues from the random datasets were all above those of the original dataset. Thus, the parallel analysis confirmed only one factor should be retained.
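The logic of parallel analysis can be illustrated with a small standard-library sketch. This is not the Hayton et al. (2004) SPSS syntax used in the study: the function names, the use of normally distributed random data, the Jacobi eigenvalue routine and the mean-eigenvalue comparison are all assumptions chosen to keep the example self-contained.

```python
import math
import random


def correlation_matrix(rows):
    """Pearson correlation matrix for a list of respondents (rows of scores)."""
    n, k = len(rows), len(rows[0])
    means = [sum(r[j] for r in rows) / n for j in range(k)]
    sds = [math.sqrt(sum((r[j] - means[j]) ** 2 for r in rows) / (n - 1))
           for j in range(k)]
    corr = [[0.0] * k for _ in range(k)]
    for a in range(k):
        for b in range(a, k):
            cov = sum((r[a] - means[a]) * (r[b] - means[b]) for r in rows) / (n - 1)
            corr[a][b] = corr[b][a] = cov / (sds[a] * sds[b])
    return corr


def symmetric_eigenvalues(matrix, sweeps=50, tol=1e-12):
    """Eigenvalues of a symmetric matrix via cyclic Jacobi rotations (descending)."""
    a = [row[:] for row in matrix]
    k = len(a)
    for _ in range(sweeps):
        off = sum(a[i][j] ** 2 for i in range(k) for j in range(k) if i != j)
        if off < tol:
            break
        for p in range(k - 1):
            for q in range(p + 1, k):
                if abs(a[p][q]) < 1e-15:
                    continue
                diff = a[q][q] - a[p][p]
                # Rotation angle that zeroes the (p, q) off-diagonal entry.
                theta = math.pi / 4 if diff == 0 else 0.5 * math.atan(2.0 * a[p][q] / diff)
                c, s = math.cos(theta), math.sin(theta)
                for i in range(k):  # update columns p and q
                    aip, aiq = a[i][p], a[i][q]
                    a[i][p], a[i][q] = c * aip - s * aiq, s * aip + c * aiq
                for j in range(k):  # update rows p and q
                    apj, aqj = a[p][j], a[q][j]
                    a[p][j], a[q][j] = c * apj - s * aqj, s * apj + c * aqj
    return sorted((a[i][i] for i in range(k)), reverse=True)


def parallel_analysis(data, n_random=30, seed=0):
    """Compare observed eigenvalues with mean eigenvalues from random datasets."""
    rng = random.Random(seed)
    n, k = len(data), len(data[0])
    observed = symmetric_eigenvalues(correlation_matrix(data))
    totals = [0.0] * k
    for _ in range(n_random):
        noise = [[rng.gauss(0.0, 1.0) for _ in range(k)] for _ in range(n)]
        for i, value in enumerate(symmetric_eigenvalues(correlation_matrix(noise))):
            totals[i] += value
    random_means = [t / n_random for t in totals]
    retained = 0
    for obs, rnd in zip(observed, random_means):
        if obs <= rnd:  # stop at the first factor that random data can match
            break
        retained += 1
    return observed, random_means, retained
```

Factors are retained only while the observed eigenvalue exceeds the corresponding random-data eigenvalue. Because the eigenvalues of a correlation matrix sum to the number of items, the first eigenvalue of 5.16 across seven items corresponds to the reported share of roughly 73.7% of variance.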

Comparisons between programmes of study groups

Age and gender

A one-way ANOVA was conducted to assess differences in age between programme of study groups. The homogeneity of variance assumption was violated (Levene statistic = 8.20, p < .001). Thus, a nonparametric Kruskal-Wallis test was conducted, which indicated that the difference in mean rank age between programmes of study was not significant (Kruskal-Wallis H = 1.92, p > .05).

A 2 (gender) x 3 (programme of study) Chi-square indicated there was no significant difference in the proportion of females and males between the programmes of study (χ2 = 2.43, p > .05). Given there were no between group differences for age and gender these variables were not analysed further.

Levels of impostorism

No LIS scoring guidelines for determining problematic levels of impostorism are available. The mean total score for the full sample was 18.04 (SD = 7.62). Using the response scale anchors as a guide, this mean is closest to the ‘Moderately characteristic of me’ descriptive anchor.

About 18.6% of respondents scored 10 or below reflecting ‘not at all characteristic of me’ or very low levels of impostorism. About 49.7% of respondents produced a total score of 18 or more (i.e., on average selecting 3 = moderately characteristic of me). About 23% of respondents scored 25 or more placing them in the ‘Very’ and ‘Extremely’ characteristic of me range.
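The anchor-based interpretation used above can be made explicit with a short sketch. The helper names are hypothetical, and dividing the total by seven to obtain a mean item response is the working assumption behind the cut-offs described in the text.

```python
# Response anchors from the LIS 5-point scale.
ANCHORS = {
    1: "Not at all characteristic of me",
    2: "Slightly characteristic of me",
    3: "Moderately characteristic of me",
    4: "Very characteristic of me",
    5: "Extremely characteristic of me",
}


def nearest_anchor(total_score, n_items=7):
    """Map a total score to the anchor closest to the mean item response."""
    mean_item = total_score / n_items
    closest = min(ANCHORS, key=lambda anchor: abs(anchor - mean_item))
    return ANCHORS[closest]


def percent_at_or_above(totals, cutoff):
    """Percentage of respondents whose total score is at or above a cut-off."""
    return 100.0 * sum(t >= cutoff for t in totals) / len(totals)
```

For instance, the sample mean of 18.04 gives a mean item response of about 2.58, which lies nearest to ‘Moderately characteristic of me’, while a total of 25 (about 3.57 per item) lies nearest to ‘Very characteristic of me’.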

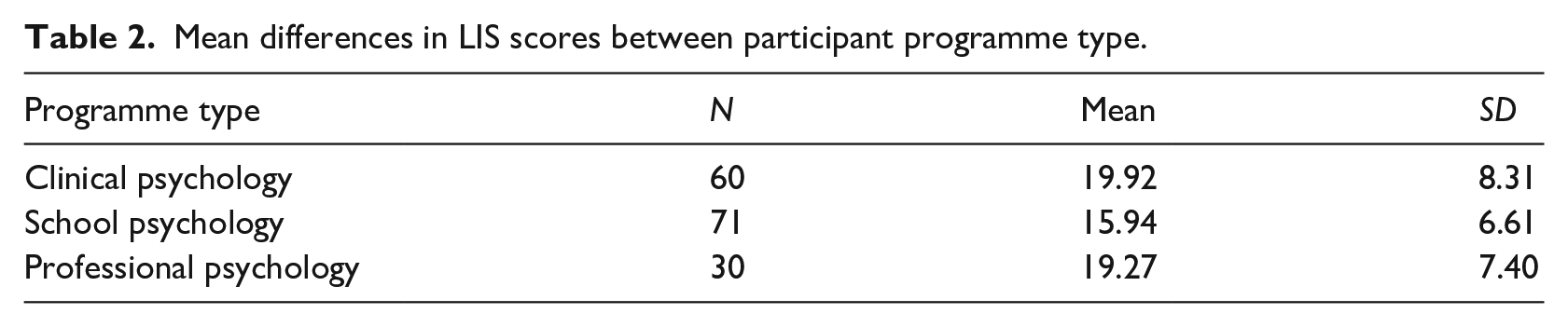

Mean scores for each course type are provided in Table 2. Descriptively, the MSP group scored lowest, with a mean score closest to ‘slightly characteristic of me’, whereas those in the MCP and MPP courses had means closest to ‘moderately characteristic of me’.

Table 2. Mean differences in LIS scores between participant programme type.

A one-way ANOVA was run to determine if there were differences in total impostorism scores between the MSP, MPP and MCP groups. There was a small positive skew in the data (skewness = 0.49), but ANOVA is robust to small deviations from normality. Thus, we proceeded with the ANOVA (conducting a non-parametric equivalent test as a precaution). Neither Levene’s test of homogeneity of error variances (Levene’s statistic = 2.94, p > .05) nor the Breusch-Pagan test of heteroskedasticity (χ2 = 2.99, p > .05) was significant. The ANOVA revealed a significant effect of course type on total impostorism score, F(2, 158) = 5.15, p = .007. Post-hoc analysis using Bonferroni adjustment indicated the mean difference between the MCP and the MSP group was significant (Mdiff = 3.97, p = .01), but the mean differences between MCP and MPP (Mdiff = 0.65, p = 1.00) and between MSP and MPP (Mdiff = 3.32, p = .13) were not significant.
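The omnibus test and the Bonferroni adjustment can be sketched as follows. This is a minimal illustration under stated assumptions, not the SPSS procedure used in the study: the function names are hypothetical, and the adjustment shown is the common form that multiplies each pairwise p-value by the number of comparisons and caps the result at 1.

```python
from statistics import mean


def one_way_anova(groups):
    """Return (F, df_between, df_within) for a list of groups of scores."""
    k = len(groups)
    n_total = sum(len(g) for g in groups)
    grand_mean = sum(sum(g) for g in groups) / n_total
    ss_between = sum(len(g) * (mean(g) - grand_mean) ** 2 for g in groups)
    ss_within = sum(sum((x - mean(g)) ** 2 for x in g) for g in groups)
    df_between, df_within = k - 1, n_total - k
    f_stat = (ss_between / df_between) / (ss_within / df_within)
    return f_stat, df_between, df_within


def bonferroni(p_values):
    """Bonferroni-adjusted p-values: multiply by the number of tests, cap at 1."""
    m = len(p_values)
    return [min(1.0, p * m) for p in p_values]
```

With three programmes and 161 participants the degrees of freedom are 2 and 158, matching the reported F(2, 158). The cap at 1 is why an adjusted pairwise p-value can be reported as exactly 1.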

A non-parametric Kruskal-Wallis H-test confirmed the ANOVA results, indicating there were differences in impostorism scores between the MSP, MPP and MCP groups, H(2, N = 161) = 8.58, p = .014. Pairwise comparisons (Mann-Whitney U-test) replicated the ANOVA results, indicating higher median impostorism scores for the MCP vs. MSP group (p < .01) and no significant differences for the MCP vs. MPP and MSP vs. MPP comparisons (p > .05).
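The p-value attached to the H statistic can be checked by hand. With three groups the statistic is referred to a χ2 distribution with 2 degrees of freedom, whose upper-tail probability has the closed form exp(−H/2). The sketch below assumes this standard large-sample approximation; the function name is hypothetical.

```python
import math


def chi2_upper_tail_df2(h):
    """Upper-tail probability of a chi-square variate with exactly 2 df."""
    return math.exp(-h / 2.0)


p_value = chi2_upper_tail_df2(8.58)
print(round(p_value, 3))  # 0.014
```

The result agrees with a p-value of approximately .014 for H = 8.58.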

Discussion

The current study aimed to assess the LIS factor structure and provide descriptive data for a sample of postgraduate psychology students undertaking training. The factor analysis revealed a single factor accounted for 73.67% of model variance. The LIS internal consistency reliability coefficient was high (α = .94), suggesting potential item redundancy. The current study outcomes resemble previous LIS validations, with comparable psychometric properties to undergraduate student populations (α = .87; Leary et al., 2000). Freeman et al.’s (2022) investigation of healthcare simulation educators obtained a single factor that explained 73.4% of the variance. Factor loadings ranged from 0.61 (item 7) to 0.91 (item 1). The factor loadings in the current study had a more restricted but comparable range, from 0.82 (item 7) to 0.89 (item 2). Freeman et al.’s Cronbach’s coefficient (α = .94) was identical to that of the current study. A notable difference was the range of individual item scores. Healthcare simulation educators had mean item scores ranging from 1.74 (item 7) to 2.47 (item 4). In the current study item means were higher overall, ranging from 2.12 (item 7) to 2.94 (item 5). Despite descriptive differences in overall levels of impostorism between the two studies, the psychometric results for the LIS are highly similar. This provides preliminary evidence that the LIS has consistent unidimensional properties across different samples and is likely to be valid for use amongst postgraduate professional psychology students.

The brief LIS may be used to screen trainees at risk of impostorism. This could be implemented in an individual or group format, discussing the phenomenon and its potential influence on trainees’ confidence, reflective practices and willingness to disclose vulnerabilities in supervision. Descriptive data on the LIS provides a reference point for screening, indicating that in the current sample almost 50% were experiencing at least ‘moderate’ levels and 23% were experiencing very high levels of impostorism. This indicates that impostorism is a significant phenomenon in professional psychology trainees. This highlights the need to assess and address impostorism in these cohorts. The current study provides validity data and preliminary normative information to help identify those at risk.

The LIS is a brief measure of impostorism, likely to have utility as a low-burden assessment. While early presence of impostorism may be expected, it is preferable that these experiences do not persist or worsen as training progresses. This is crucial in preventing the development of secondary concerns such as loss of confidence or burnout (Clark et al., 2022; Malouf et al., 2023; Rosenthal et al., 2021). It is also essential given the potential negative effects on accurate self-reflective capacity and perceptions of competence (Gadsby & Hohwy, 2024). The current study provides normative reference data to help identify students experiencing high levels of impostorism. This may support targeted responses by helping identify high impostorism as an issue to be addressed in supervision. In addition, more universal interventions may also be helpful. Such strategies might include highlighting the impostorism phenomenon as common in high-achieving professional cohorts, challenging the unhelpful thinking and associated responses, and providing skills to minimise the secondary effects of impostorism such as avoidance. For example, avoidance may manifest as a trainee’s reluctance to reveal struggles they are having in their clinical work with clients. This may occur in a misguided attempt to protect themselves from being exposed. Leary and colleagues’ early research with the LIS revealed that high impostors ‘. . . appeared to use a protective style that would avoid making a blatantly negative impression and, thereby, avoid disapproval’ (Leary et al., 2000, p. 750).

MCP enrolment is highly competitive, typically attracting three times the applicants and higher average grades compared to MSP programmes. This may inflate individuals’ perceptions of the capabilities required, or lead them to attribute their acceptance to circumstances beyond their abilities. Specific expectations about the different roles and types of client problems seen by Clinical/Professional Psychologists compared to School Psychologists could also explain the different impostorism levels. For example, school-based problems of young people may be viewed as less severe because they are on average of shorter duration due to lower ages. It is possible that the shared educational context between trainees and school students may mean trainees believe they better understand the types of problems that present. This could result in a greater sense of capability that contributes to lower impostorism in MSP trainees. Finally, the MSP programme was conducted online and, while the LIS was administered early in the programme, this possibly restricted opportunity for peer comparison compared to those in the MCP and MPP programmes. Although further research is needed to test the potential hypotheses explaining the differences between training programmes, the findings do have potential implications. Firstly, programme administrators must understand that those in the MCP and MPP programmes appear to be more susceptible to high levels of impostorism and require a focus on impact reduction strategies. Besides recognising some groups may be more vulnerable to such feelings, other differential responses based on training types require additional research.

Future research could test potential explanations for the differing impostorism levels observed between study programmes. If differences are due to variations in perceived competitiveness for entry into the programmes, then items exploring perceived competitiveness could be used as moderators in predicting impostorism. Qualitative research may help reveal additional factors explaining programme differences. As noted, the LIS’s high internal consistency coefficient suggests the measure could potentially be reduced further and still provide a valid global measure of impostorism.

A limitation of the current study was the mixed sample of participants across different training programmes. Finding differences in levels of impostorism was not expected and the small group sizes prevented separate factor analyses from being conducted within programmes. In addition, we did not collect additional data that might help explain these differences. If, as proposed, the brevity of the LIS provides the opportunity for repeated administrations over time, assessment of the test-retest reliability of the measure for varying time periods and different points in training is required. Finally, a second measure of impostorism would have allowed for the assessment of concurrent validity.

Conclusion

In conclusion, this study was able to identify a unidimensional factor structure of the LIS, replicating findings of previous research (Freeman et al., 2022; Leary et al., 2000). It was also able to provide descriptive data on the prevalence of impostorism amongst trainee psychologists, identifying it as a noticeable concern for a significant proportion of participants. Future research should consider exploring variations in impostorism across different programmes of study, as well as intervention strategies to address the presentation of impostorism in trainee psychologists.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The authors received financial support from the University of Wollongong Educational Strategies Development Fund.