Abstract

Large language model (LLM)-driven healthcare chatbots for preliminary medical consultation are a promising innovation to improve healthcare accessibility and efficiency. However, public acceptance of this technology in the Chinese context, especially the impact of users’ previous experience with relevant technologies on user behavior, remains underexplored. To address this gap, we extended the classical Unified Theory of Acceptance and Use of Technology (UTAUT) framework by examining the moderating effects of users’ previous experience with telemedicine and LLMs. Using a scenario-based survey, we collected 502 valid responses from general Chinese users and analyzed the data through Structural Equation Modeling (SEM). Our results demonstrated that performance expectancy, social influence, trust, and facilitating conditions were significant contributing factors, whereas effort expectancy was not, which contradicts previous literature. Moreover, users’ previous experience with LLMs exhibited significant moderating effects, whereas previous experience with telemedicine did not. These findings contribute to the literature by suggesting that as LLMs become more widely adopted, users’ familiarity with them may enhance trust and, consequently, increase the general acceptance of LLM-driven healthcare chatbots.

Keywords

Introduction

Given the rapid development of Artificial Intelligence (AI) across various sectors, healthcare providers are increasingly incorporating AI into healthcare services, for example through AI-assisted triage, enquiry, treatment planning, and CT report generation, to improve efficiency and parity.1,2 Among these applications, LLM-driven healthcare chatbots, intelligent conversational agents that use generative artificial intelligence to provide medical information, symptom assessment, and preliminary consultations through conversation, have achieved notable breakthroughs and are being promoted by healthcare providers, 3 which may save providers from repetitive labor and reduce users’ waiting time for consultation services.4,5 Tech-savvy users have embraced LLM-driven chatbots as their preferred method of medical consultation. However, the general public may perceive interacting with AI as cognitively demanding or may doubt the chatbot’s diagnostic accuracy compared to human doctors. 6 Unfamiliarity and complicated procedures may impede users’ willingness to use such services. 7 Moreover, users’ trust in LLM-driven chatbots may also affect their willingness to use them for healthcare purposes. 8 Considering these potential challenges, it is important to examine and understand the mechanism by which users choose to accept these LLM-driven healthcare chatbots. 9

Previous research has investigated the acceptance of LLM-driven chatbots from different orientations. 10 For example, researchers focused on individual-level determinants and found that perceived usefulness, subjective norms, and trust significantly influence users’ intention to use LLM-driven health chatbots. 11 Similarly, another study that explored seniors’ adoption of AI-driven healthcare services highlighted the importance of ease of use, perceived usefulness, and emotional support. 12 However, the mechanism by which users’ expectations and perceptions influence the adoption of LLM-driven healthcare chatbots, and how this influence is moderated by users’ previous experience with related technologies, remains unexplored.

Behavioral studies often adopt a technology-psychology-society framework to examine the acceptance of LLM-driven chatbots in healthcare and other domains. From a technological perspective, factors such as perceived usefulness, performance expectancy, and system quality are commonly identified as key drivers of user adoption. 13 From a psychological perspective, trust, privacy concerns, and perceived risk often shape users’ emotional and cognitive responses to chatbot use. 13 Meanwhile, the societal dimension captures the impact of subjective norms, peer influence, and organizational endorsement, which can significantly affect behavioral intention. 14 We also incorporate this framework in this study to investigate the contributing factors that affect users’ behavioral intention to adopt the LLM-driven healthcare chatbots.

Users’ previous experience has been identified as an important factor in the adoption of new technology. For instance, researchers grouped survey participants into three clusters based on their previous user experience to examine the factors affecting their acceptance of commercial healthcare apps. 15 Another study investigated the acceptance of AI health assistants and incorporated user experience as a control variable. 16 However, the moderation effects of user experience were not considered in these studies. To our knowledge, few studies have considered the impact of previous experience with LLMs and telemedicine on the acceptance of LLM-driven healthcare chatbots. Telemedicine has been increasingly used by patients. Its functions include online appointments, follow-up care, health education, and remote consultations. For remote consultations, text and graphic communication are most widely used, which is similar to talking with an LLM-driven healthcare chatbot. However, remote consultations in telemedicine involve communication with actual physicians, which fundamentally differs from engaging with LLM-driven healthcare chatbots. Consequently, the impact of telemedicine experience on public acceptance of healthcare chatbots may differ.

Investigating the effects of users’ previous experience on the adoption of healthcare chatbots would therefore extend our current understanding of users’ acceptance of these chatbots and could guide chatbot developers in fulfilling users’ expectations. In this study, we seek to address this gap by examining the moderation effects of users’ previous experience with LLMs and telemedicine on users’ behavioral intention to adopt healthcare chatbots. We propose the following research questions:

1. How do technological, psychological and societal contributing factors influence users’ behavioral intention to adopt LLM-driven healthcare chatbots?

2. How does previous user experience with telemedicine moderate the relationship between contributing factors and users’ behavioral intention to adopt LLM-driven healthcare chatbots?

3. How does previous user experience with LLMs moderate the relationship between contributing factors and users’ behavioral intention to adopt LLM-driven healthcare chatbots?

The Unified Theory of Acceptance and Use of Technology (UTAUT) framework, which comprehensively captures the technological, psychological, and social dimensions of user behavior, is applied for our analysis and discussion. In this study, we identified five key constructs: performance expectancy, effort expectancy, social influence, trust, and facilitating conditions, and we employed a survey-based design to address our proposed research questions. First, we conducted a survey to explore users’ underlying perceptions, expectations, and concerns regarding healthcare chatbots in relation to these constructs, as well as their previous experience with telemedicine and LLM chatbots. Second, we performed a Structural Equation Modeling (SEM) analysis of the questionnaire data and then conducted a multi-group analysis (MGA) to identify the moderation effects of users’ previous experience with telemedicine and LLM chatbots.

Our study makes several contributions to the existing literature. First, it integrates trust and previous experience with telemedicine and LLM chatbots into the well-established UTAUT framework to examine users’ acceptance of LLM-driven healthcare chatbots. To our knowledge, this is one of the first attempts to investigate the moderation effects of users’ previous experience on their behavioral intention to adopt LLM-driven healthcare chatbots. Furthermore, this research provides developers of LLM-driven healthcare chatbots with a comprehensive view of user adoption from a UTAUT perspective, which can help guide the design and implementation of LLM-based conversational agents in healthcare contexts.

Methods

LLMs and LLM-driven healthcare chatbots

Generative artificial intelligence is a class of machine learning models designed to produce content by learning patterns from large datasets. 17 It has increasingly been recognized as a transformative technology with profound implications across multiple domains, including education, journalism, and financial technology.18–20 Meanwhile, it has also been revolutionizing the way medical services are delivered and has become an integral component of the healthcare industry. 21 For example, emerging research shows that GPT-4 has demonstrated significant potential in clinical diagnostics, outperforming simulated human readers, 22 highlighting its capability as a powerful supportive tool in medical diagnostics.

Recently, LLM-driven healthcare chatbots have increasingly been adopted to enhance efficiency, accessibility, and accuracy in healthcare services.23–25 These chatbots have been used globally for managing chronic conditions and for providing health education, behavior change support, and self-care guidance, 26 which is expected to improve the efficiency of healthcare services. It is expected that they will soon also be integrated into primary care settings to assist with patient consultations, aiming to alleviate physicians’ workload and improve patient access to medical care. 27

In the context of China, the integration of technological advancements into healthcare services has been actively embraced by local governments, hospitals and the healthcare industry, driven by persistent systemic challenges such as the unequal distribution of medical resources, disparities in access to high-quality care, and the growing pressure of an aging population. Despite notable progress in expanding healthcare coverage and infrastructure in recent years, these structural issues continue to necessitate innovative, technology-driven solutions. For example, telemedicine has experienced rapid development in China, with the potential to provide high-quality healthcare services, especially for remote and rural populations, which have limited access to traditional healthcare services. The emergence of AI technologies and their integration with healthcare services, including chatbot doctors and others, has presented a novel and efficient solution to enhance healthcare accessibility and equity.25,28–31 However, the user acceptance of these LLM-driven healthcare chatbots in China has yet to be comprehensively examined. In this research, we apply the Unified Theory of Acceptance and Use of Technology (UTAUT) framework to investigate the key factors influencing Chinese users’ willingness to adopt LLM-driven healthcare chatbots, using a structured survey to assess constructs such as performance expectancy, effort expectancy, social influence, trust, facilitating conditions, and behavioral intention.

Theoretical lenses on user acceptance of LLM-driven healthcare chatbots

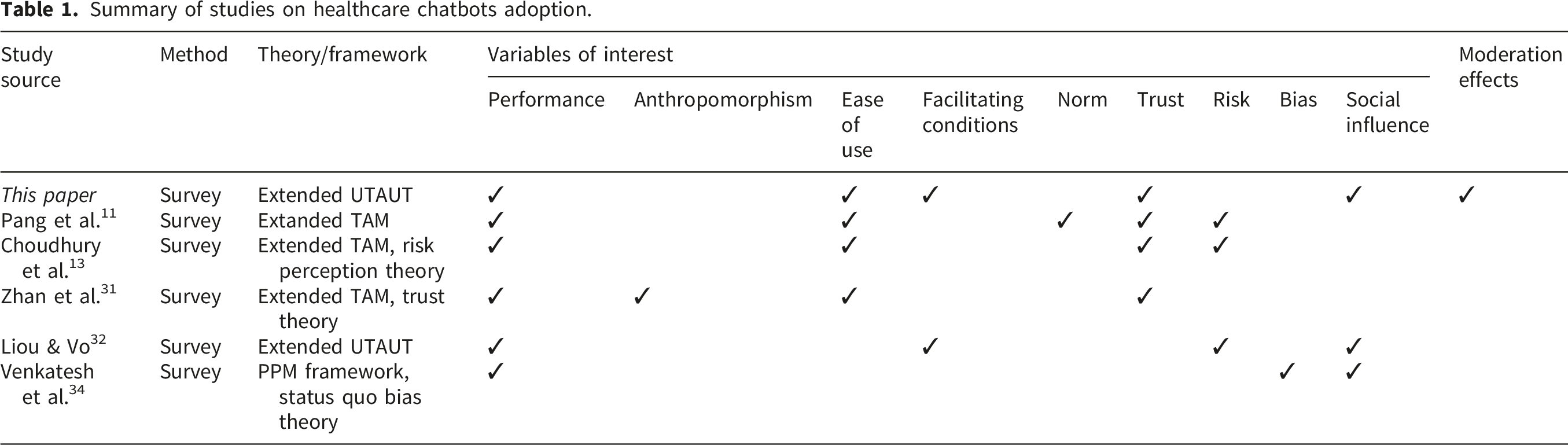

Summary of studies on healthcare chatbots adoption.

Direct effects

This study adopts the Unified Theory of Acceptance and Use of Technology (UTAUT) to examine the factors influencing users’ intention to adopt LLM-driven healthcare chatbots, including performance expectancy, effort expectancy, social influence, and facilitating conditions. 35 Moreover, trust, a commonly added construct in healthcare technology research, is also included as an additional predictor. 10

Performance expectancy refers to users’ belief that adopting an LLM-driven healthcare chatbot will enhance efficiency and effectiveness in healthcare-related tasks. Research indicates that it plays a crucial role in the adoption of LLM-driven healthcare chatbots. Researchers identified performance expectancy as a key factor influencing both patients’ and healthcare professionals’ willingness to adopt conversational agents. 14 Moreover, another study demonstrated that performance expectancy is a significant predictor of behavioral intention toward AI applications in healthcare. 36 Based on these results, we proposed the following hypothesis:

Performance Expectancy positively influences users’ behavioral intention to adopt LLM-driven healthcare chatbots.

Effort expectancy refers to users’ perception of how easy it is to interact with a chatbot. Existing studies have highlighted that the ease of use is a key determinant of AI chatbot adoption. For instance, researchers found that older users’ acceptance of healthcare chatbots is driven by the ease of use, suggesting that user-friendly chatbot interfaces can facilitate users’ adoption. 11 In addition, others also emphasized the role of effort expectancy in chatbot acceptance, particularly among patients with limited technical skills. 14 Based on these arguments, we proposed the following hypothesis:

Effort Expectancy positively influences users’ behavioral intention to adopt LLM-driven healthcare chatbots.

Social influence captures the impact of external opinions, such as those from healthcare professionals, peers, and social norms, on chatbot adoption. Previous research emphasized that social influence plays a crucial role in patients’ and healthcare professionals’ decisions to adopt chatbots. 14 Similarly, a study found that subjective norms significantly influence the intention to use LLM-driven health diagnostic chatbots, suggesting that recommendations from trusted individuals could shape users’ intention to adopt new technology. 10 Based on these findings, we proposed the following hypothesis:

Social Influence positively influences users’ behavioral intention to adopt LLM-driven healthcare chatbots.

Research suggests that trust in AI chatbots significantly impacts user acceptance, as concerns over accuracy, security, and reliability influence behavioral intention. One study found that trust in AI-led chatbot services is a key driver of acceptability, while concerns about cybersecurity remain a challenge. 37 Researchers also emphasized that trust in AI-driven chatbots is crucial for medical students’ acceptance, especially regarding data security and workplace monitoring. 38 Additionally, another study found that skepticism about AI chatbots’ ability to provide accurate medical advice limits overall adoption, indicating the necessity of trust-building measures. 39 Researchers further highlighted that trust in AI-based home care systems directly influences users’ perceived usefulness and willingness to adopt such technology. 40 Based on the arguments above, we proposed the following hypothesis:

Trust positively influences users’ behavioral intention to adopt LLM-driven healthcare chatbots.

Facilitating conditions refer to the availability of resources, technical support, and training that are necessary for the adoption of LLM-driven healthcare chatbots. Prior research suggests that these supportive environments can enhance AI chatbot usage in healthcare. For example, researchers noted that physicians indicated that better support systems could improve adoption of these healthcare chatbots. 41 Based on these findings, we proposed the following hypothesis:

Facilitating Conditions positively influence users’ behavioral intention to adopt LLM-driven healthcare chatbots.

Moderation effects

In addition to the direct effects, this study further examines how previous user experience with telemedicine and LLMs influences the strength and direction of the relationships between the five core predictors and users’ intention to adopt LLM-driven healthcare chatbots. In light of the current research gap, we propose that users’ previous experience with telemedicine and LLMs could act as moderators.

Individuals who have used online hospitals are more likely to perceive AI-based health chatbots as accessible, useful, and credible. For instance, researchers highlighted that users familiar with digital health tools, including online platforms, perceive AI chatbots as credible and effective for mental health support, 42 and another study found that individuals with prior experience of digital healthcare services (e.g., online hospitals) are more likely to adopt LLM-driven healthcare chatbots. 43 Based on these findings, we propose the following hypothesis:

Previous experience with telemedicine moderates the relationships between the contributing factors and users’ behavioral intention to adopt LLM-driven healthcare chatbots.

Previous experience with AI chatbots may also significantly influence users’ cognitive processing, trust, and acceptance of healthcare chatbots. Previous research indicated that experience with LLMs can reduce uncertainty, increase confidence, and enhance perceived ease of use. For instance, researchers highlighted that previous interaction experience with AI could reduce users’ uncertainty and increase their overall confidence in the technology, 43 and others demonstrated that familiarity with LLM tools would lower users’ risk perception in downstream applications, thereby increasing trust and behavioral intention. 44 Based on the research above, we propose the following hypothesis:

Previous experience with LLMs moderates the relationships between the contributing factors and users’ behavioral intention to adopt LLM-driven healthcare chatbots.

Based on the above hypotheses, the conceptual model is presented in Figure 1.

Figure 1. Conceptual model.

Research design

Our study employed a structured questionnaire survey with a vignette. The study was designed and reported in accordance with the STROBE guidelines. All relevant items for cross-sectional studies, including study design, participant selection, variables, data sources, bias, study size, statistical methods, and results, were addressed as recommended by STROBE.

Following the data collection process, reliability and validity were assessed through construct reliability, convergent validity, discriminant validity, and confirmatory factor analysis. Subsequently, direct effects were examined using covariance-based structural equation modeling (CB-SEM), and moderation effects were analyzed through multi-group analysis (MGA). The sequential analysis of the model is depicted in Figure 2.

Figure 2. Sequential approach for validation of the model.
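Reliability and convergent validity checks of this kind are commonly summarized with composite reliability (CR) and average variance extracted (AVE), both computed from standardized factor loadings. The following is a minimal sketch assuming the standard Fornell-Larcker formulas; the function names and the loadings are hypothetical illustrations, not values or code from this study.

```python
def composite_reliability(loadings):
    """CR = (sum of loadings)^2 / ((sum of loadings)^2 + sum of error variances).

    Assumes standardized loadings, so each item's error variance is 1 - loading^2.
    """
    total = sum(loadings)
    error = sum(1.0 - l * l for l in loadings)
    return total * total / (total * total + error)


def average_variance_extracted(loadings):
    """AVE = mean of the squared standardized loadings."""
    return sum(l * l for l in loadings) / len(loadings)


# Hypothetical standardized loadings for one three-item construct.
example_loadings = [0.70, 0.80, 0.75]
cr = composite_reliability(example_loadings)
ave = average_variance_extracted(example_loadings)
print(f"CR = {cr:.3f}, AVE = {ave:.3f}")
```

Conventional rules of thumb treat CR of at least 0.70 and AVE of at least 0.50 as acceptable; these cutoffs are general conventions and are not stated in this section.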

Survey

At the beginning of the questionnaire, a contextual example of an LLM-driven healthcare chatbot was presented through a sample medical consultation, as shown in Figure 3.

Figure 3. A contextual example of AI healthcare chatbots.

In the example, the LLM-driven healthcare chatbot collected information by asking about the user’s symptoms and medical background. At the end of the dialogue, the chatbot provided an assessment report suggesting the possible causes of the symptoms and treatment.

Secondly, questions were provided to the respondents to assess the factors influencing their willingness to use such LLM-driven healthcare chatbots. A total of 18 questions related to 5 model constructs were presented, with responses measured on a five-point Likert scale ranging from “strongly disagree” to “strongly agree.” The questions were based on previous research on the adoption of healthcare chatbots using the UTAUT framework.12,31,32

Thirdly, the survey also included questions related to respondents’ demographic characteristics, as well as measures of their previous experience with telemedicine and LLMs.

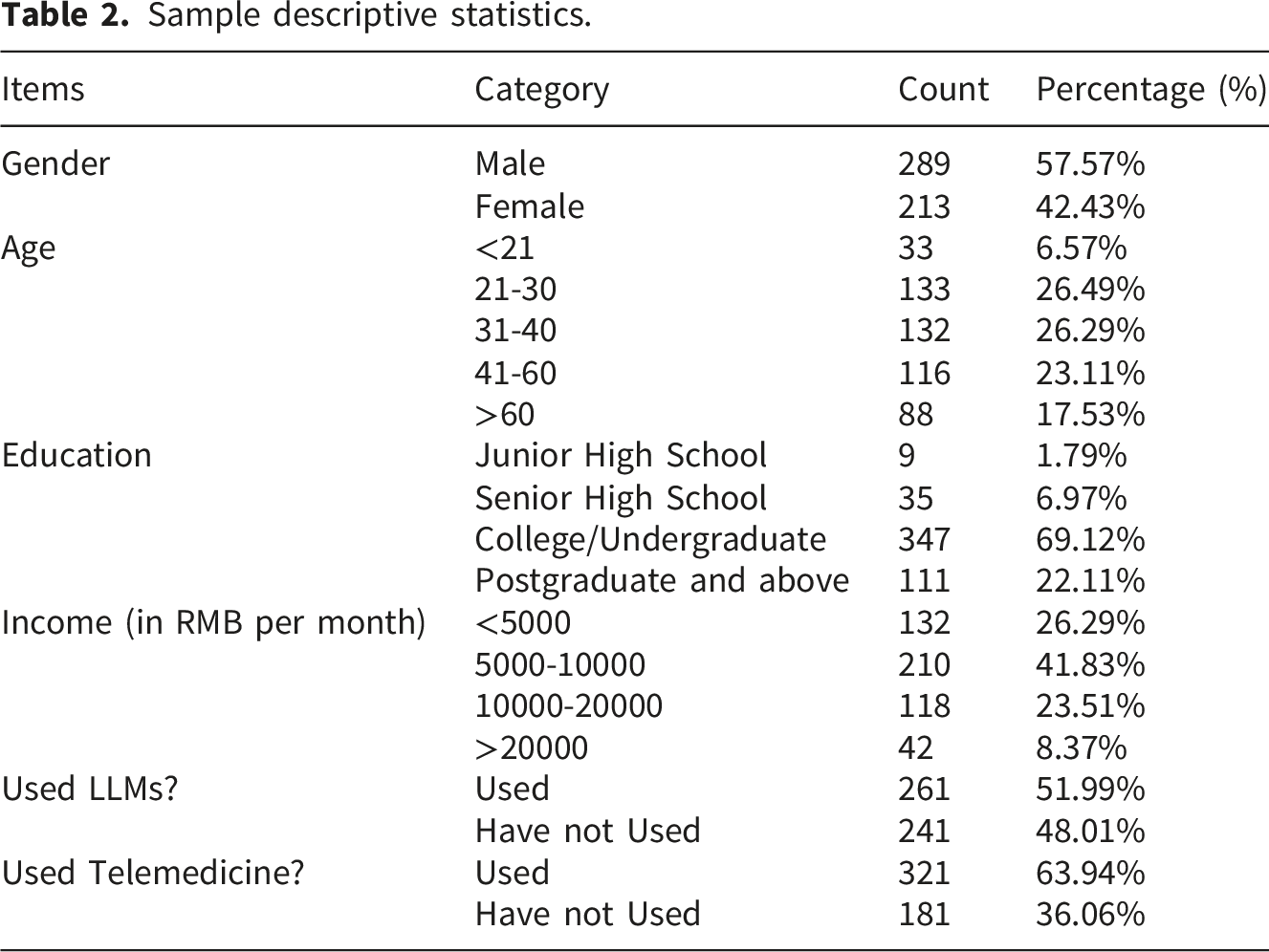

Samples and data collection

Sample descriptive statistics.

Results

Reliability and validity analysis

Convergent validity and reliability.

Note. ***p<0.001; Unstd.=Unstandardized Loading; S.E.=Standard Error; Std.=Standardized Loading; SMC = Squared Multiple Correlation.

HTMT analysis.
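For readers unfamiliar with the heterotrait-monotrait (HTMT) ratio, the sketch below shows how it can be computed for a pair of constructs from an item correlation matrix: the mean correlation between items of different constructs is divided by the geometric mean of the average within-construct item correlations. The function name, the item indices, and the correlation values are hypothetical and are not taken from this study; each construct is assumed to have at least two items.

```python
from itertools import combinations, product
from math import sqrt


def mean(values):
    values = list(values)
    return sum(values) / len(values)


def htmt(corr, items_a, items_b):
    """Heterotrait-monotrait ratio for two constructs.

    corr    : square item correlation matrix (list of lists)
    items_a : row/column indices of construct A's items
    items_b : row/column indices of construct B's items
    """
    # Average correlation between items of different constructs.
    hetero = mean(corr[i][j] for i, j in product(items_a, items_b))
    # Average correlation among items within each construct.
    mono_a = mean(corr[i][j] for i, j in combinations(items_a, 2))
    mono_b = mean(corr[i][j] for i, j in combinations(items_b, 2))
    return hetero / sqrt(mono_a * mono_b)


# Hypothetical correlations: items 0-1 measure construct A, items 2-3 construct B.
corr = [
    [1.00, 0.60, 0.30, 0.35],
    [0.60, 1.00, 0.25, 0.30],
    [0.30, 0.25, 1.00, 0.70],
    [0.35, 0.30, 0.70, 1.00],
]
ratio = htmt(corr, [0, 1], [2, 3])
print(f"HTMT = {ratio:.3f}")
```

Values well below commonly used cutoffs such as 0.85 or 0.90 are usually read as evidence of discriminant validity; which cutoff the authors applied is not stated in this section.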

The model has a GFI of 0.908, an AGFI of 0.869, a CFI of 0.912, a TLI of 0.888, an SRMR of 0.058 and an RMSEA of 0.071. Overall, these goodness-of-fit statistics indicate a satisfactory level of fit of the model.51,52
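As an illustration of how one of these indices is derived, the sketch below computes the RMSEA point estimate from a model chi-square, its degrees of freedom, and the sample size. The sample size of 502 comes from the abstract; the chi-square and degrees of freedom are hypothetical, since they are not reported in this section. Note also that some software divides by N rather than N − 1, so this is one common variant rather than necessarily the exact formula used by the authors’ software.

```python
from math import sqrt


def rmsea(chi_square, df, n):
    """Point estimate of RMSEA: sqrt(max(chi2 - df, 0) / (df * (n - 1)))."""
    return sqrt(max(chi_square - df, 0.0) / (df * (n - 1)))


# n = 502 is the reported sample size; chi_square and df are hypothetical
# values chosen only to illustrate the calculation.
value = rmsea(chi_square=300.0, df=120, n=502)
print(f"RMSEA = {value:.3f}")
```

When the chi-square does not exceed its degrees of freedom, the estimate is truncated at zero.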

Direct effects analysis

Direct effects analysis.

Note. ***p<0.001; **p<0.01; *p<0.05; Unstd.=Unstandardized Estimate; S.E.=Standard Error; C.R.=Critical Ratio; Std.=Standardized Estimate.

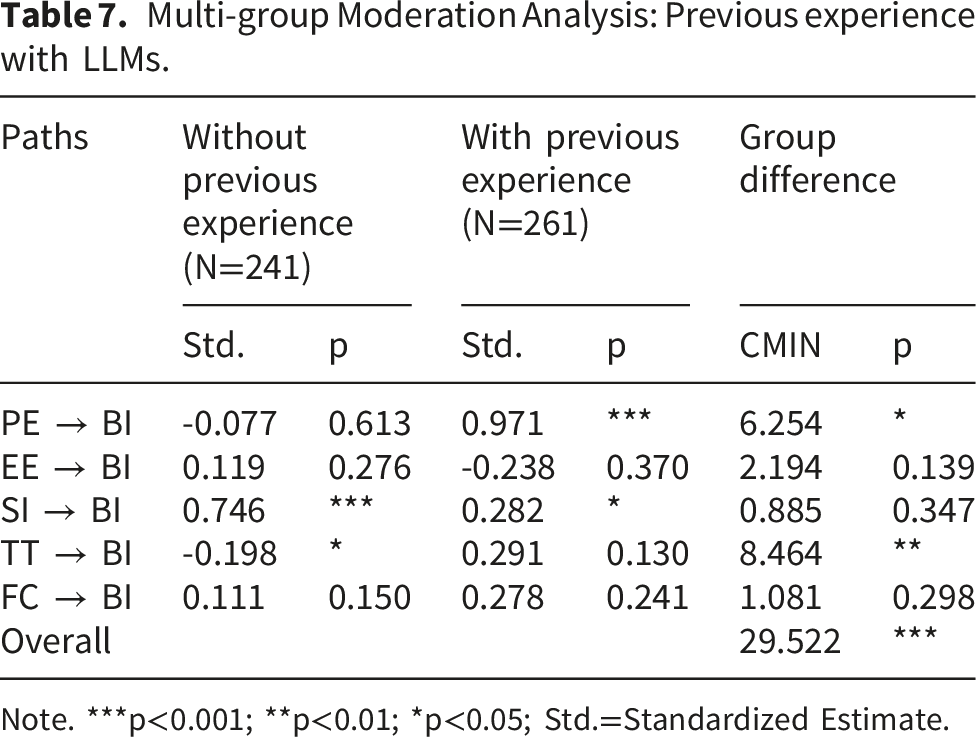

Moderation effects analysis

The moderating role of previous experience with telemedicine was examined using nested model comparisons in Amos with 2000 bootstrapped samples. This approach relies on chi-square difference tests to determine whether a moderator produces statistically significant differences in structural relationships.
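The logic of a nested model comparison can be sketched as a chi-square difference test between a model whose structural paths are constrained to be equal across groups and a model in which they are freely estimated. The code below is a standard-library illustration only: Amos performs this comparison internally, the function names are hypothetical, and the fit statistics are invented for the example rather than taken from this study.

```python
from math import exp, lgamma, log


def chi2_sf(x, df):
    """Upper-tail probability of a chi-square variable with df degrees of freedom."""
    if x <= 0:
        return 1.0
    a, z = df / 2.0, x / 2.0
    # Regularized lower incomplete gamma P(a, z) via its power series.
    term = 1.0 / a
    total = term
    k = a
    while term > total * 1e-15:
        k += 1.0
        term *= z / k
        total += term
    lower = total * exp(-z + a * log(z) - lgamma(a))
    return max(0.0, 1.0 - lower)


def chi_square_difference(chi2_constrained, df_constrained, chi2_free, df_free):
    """Compare a constrained (equal paths) model against a freely estimated one."""
    delta_chi2 = chi2_constrained - chi2_free
    delta_df = df_constrained - df_free
    return delta_chi2, delta_df, chi2_sf(delta_chi2, delta_df)


# Hypothetical fit statistics for a two-group model in which five structural
# paths are constrained to be equal across groups (not values from this study).
d_chi2, d_df, p = chi_square_difference(512.4, 245, 498.1, 240)
print(f"delta chi2 = {d_chi2:.1f}, delta df = {d_df}, p = {p:.4f}")
```

A small p-value indicates that constraining the paths significantly worsens fit, which is interpreted as evidence that the grouping variable moderates at least one structural relationship.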

Multi-group moderation analysis: Previous experience with telemedicine.

Multi-group moderation analysis: Previous experience with LLMs.

Note. ***p<0.001; **p<0.01; *p<0.05; Std.=Standardized Estimate.

Discussion

Key findings

In this study, we developed a context-specific research model with five contributing factors that would potentially influence users’ behavioral intention to adopt LLM-driven healthcare chatbots based on the existing literature. Our results revealed the relationships between each contributing factor and users’ behavioral intention. The findings also highlight the importance of the moderation effects of previous user experience with LLMs on the adoption of the LLM-driven healthcare chatbots.

First, social influence emerges as the strongest predictor of user adoption of LLM-driven healthcare chatbots, highlighting the critical role of perceived support or endorsement from peers and healthcare professionals. This finding indicates that such endorsement enhances users’ acceptance of LLM-driven healthcare chatbots and that the “word-of-mouth” effect holds in this context. Additionally, performance expectancy positively influences users’ behavioral intention, suggesting that efficiency, convenience, and health outcomes are vital factors when users make their adoption decisions. Facilitating conditions such as reliable internet connectivity and accessible technical infrastructure also significantly boost users’ intention to adopt LLM-driven healthcare chatbots by easing adoption barriers.

Second, our results also underscore the significant role of trust as a distinct factor shaping users’ acceptance of LLM-driven healthcare chatbots. Greater trust in these AI systems corresponds to higher adoption intentions, reinforcing the importance of credibility, security, and transparency in technological design. Users who perceive chatbots as trustworthy are less constrained by privacy risks, thus increasing their willingness to rely on these digital health solutions. This finding also underscores the belief that trust serves as a crucial psychological factor as far as healthcare services are concerned.

However, effort expectancy did not show significant effects in the adoption process, potentially reflecting users’ growing familiarity and comfort with new apps. As individuals become more accustomed to installing new apps on their laptops or mobile phones, the perceived effort required to use a new app, including healthcare chatbots, may diminish. This shift suggests that perceived ease of use is no longer a primary barrier to user adoption. Consequently, traditional constructs like effort expectancy may play a less prominent role in shaping users’ behavioral intentions in this context.

More importantly, regarding the moderating effects, previous experience with telemedicine services did not exhibit substantial effects on the user adoption of LLM-driven healthcare chatbots. One plausible explanation is that the form of telemedicine is inherently different from LLM-driven healthcare chatbots. In particular, telemedicine platforms often involve functionalities including appointment scheduling, retrieval of medical results, and remote consultations with human doctors, aligning closely with familiar internet services. Users still interact with human service providers when using these telemedicine services, which indicates that the experience with telemedicine cannot be directly applied in their interactions with LLM-driven healthcare chatbots. Therefore, users’ previous experience with telemedicine has a limited impact due to the minimal overlap with the unique cognitive or trust-related challenges posed by generative AI.

In contrast, previous experience specifically with LLMs significantly moderates adoption intentions. Users who have previously interacted with LLMs tend to better comprehend their underlying operational principles, which mitigates concerns regarding the reliability and accuracy of AI-generated content. Their previous engagement with LLMs could reduce trust-related barriers, allowing these users to emphasize functional benefits rather than perceived risks. Conversely, users with limited or no generative AI experience face higher uncertainty, leading to increased skepticism. Their adoption decisions are therefore driven less by performance-based evaluations and more by their level of trust in these LLM-driven healthcare chatbots, including their risk perceptions and ethical concerns.

Implications for research

This paper makes several important contributions to the technology acceptance literature by addressing the existing gaps. Our foremost contribution is that we have identified the moderating effects of users’ previous experience with LLMs and clarified its role in the user adoption of LLM-driven healthcare chatbots. This approach was often overlooked by previous research on technological and psychological features of adoption in technology acceptance literature.10,12 Our findings highlighted that previous experience with LLMs could decrease the impact of trust in users’ adoption decisions and make users focus more on performance expectancy. To our knowledge, this study is one of the first attempts to incorporate previous experience as a moderator to investigate user adoption behavior in LLM-driven healthcare chatbots. By doing so, our research provided a foundation for future research examining experience-based differences in technology adoption.

Moreover, our research advances the technology acceptance literature by investigating users’ adoption decisions from the extended UTAUT perspective. We have systematically explored the intricate mechanisms associated with users’ adoption of LLM-driven healthcare chatbots. Previous literature has focused on the influence of trust on users’ intention to use LLMs for healthcare purposes but has not holistically considered the role of other technological, societal, and psychological factors in user adoption of LLM-driven healthcare chatbots. 12 Our study addresses this gap by taking a more comprehensive view of the user adoption process.

Implications for practice

Our findings offer valuable guidance for developers and practitioners of healthcare chatbots. First, in order to enhance user acceptance, chatbot providers should emphasize building and maintaining user trust through transparent communication, robust security protocols, and clear disclosure of data privacy policies. Offering users insights into how their data is used and protected can further bolster confidence in the service.

Second, chatbot developers should prioritize user-centric functional performance by focusing on key aspects such as information reliability and diagnostic accuracy, so that the tools provide precise and dependable results. Information reliability is fundamental: it helps chatbots generate accurate results and ultimately improves users’ adoption of the technology. Higher diagnostic accuracy can also promote user trust and produce new reliable information (e.g., feedback) to optimize diagnostics, which may enhance user engagement in the long run. Moreover, a user-friendly interface is essential, as clear navigation and interface design could greatly enhance the user experience.

Additionally, given the significant positive effect of social influence, healthcare organizations should take an active role in promoting LLM-driven services through endorsements by trusted professionals and well-designed informational campaigns. Adoption is likely to increase when these LLM-driven healthcare chatbots are explicitly recommended and supported by healthcare institutions and qualified medical practitioners, as such endorsements would enhance credibility and reduce user uncertainty in the technology.

Our findings also carry important practical implications for policymakers and healthcare organizations seeking to promote the responsible adoption of LLMs. Given that prior user experience is positively associated with trust, targeted strategies to facilitate safe initial exposure may be particularly effective among populations with limited prior familiarity with such technologies. For example, healthcare institutions could implement pilot programs or guided demonstration sessions that allow patients to interact with LLM-based tools in supervised settings, thereby reducing uncertainty and perceived risk. Transparent communication and legislation regarding system capabilities, limitations, data usage, and privacy protections are also essential to mitigate concerns about misuse of private data. In addition, policymakers may consider strengthening regulatory oversight and establishing clear accountability frameworks to enhance institutional trust. Finally, digital literacy initiatives tailored to vulnerable or less technologically experienced groups could further support informed and confident engagement with LLM-enabled healthcare services.

Limitations and future research

Despite its contributions, this study also faces certain limitations.

First, our research utilized survey data from a single country, China. Perceptions of technology are likely to be shaped by culturally embedded values, which may differ substantially across national contexts. Therefore, the attitudinal patterns observed in this study should not be assumed to generalize automatically to other countries. While the effects of prior user experience may exhibit a certain degree of cross-contextual consistency due to common behavioral mechanisms, the magnitude and expression of these effects may still vary depending on local norms and institutional settings. Future research employing cross-national or comparative designs would be necessary to assess the external validity of our findings more rigorously.

Second, our sample predominantly consisted of younger individuals with generally high levels of education, potentially limiting the generalizability of the findings to older and less educated populations. Previous research suggests that individuals with lower levels of education may hold more skeptical attitudes toward AI in healthcare. For instance, Erul et al. found that less-educated cancer patients exhibited significantly higher discomfort and concern regarding AI-assisted diagnosis, particularly regarding accuracy and data privacy. 53 This indicates that education level can shape perceptions of AI reliability and safety. Therefore, while our results reflect strong acceptance among a more educated and digitally literate population, less-educated users may hold opposite attitudes. However, as our findings show, previous experience with AI or LLM technologies may mitigate such skepticism, suggesting that familiarity could play a key role in fostering broader acceptance across educational groups. Future studies should consider broader, more diverse populations to better capture the full spectrum of user acceptance.

Lastly, although previous experience with LLMs was identified as a significant moderator, the mechanisms through which user experiences are translated into trust remain underexplored. Future research should delve deeper into these psychological processes using quantitative or mixed research methods to enrich theoretical and practical insights.

Conclusion

This study examined the factors influencing users’ behavioral intention to adopt LLM-driven healthcare chatbots by developing an extended UTAUT framework that incorporates users’ previous experience with LLMs and telemedicine. The results reveal that social influence, performance expectancy, trust, and facilitating conditions significantly shape users’ adoption intentions, while effort expectancy plays a limited role. These findings suggest that as users become increasingly familiar with digital technologies, traditional usability concerns may diminish, giving greater importance to social and trust-related factors.

Moreover, prior experience with LLMs enhances user acceptance by reducing uncertainty and strengthening confidence in LLM-driven healthcare chatbots. This highlights the role of familiarity in promoting positive attitudes toward emerging healthcare technologies.

From a practical perspective, building user trust, ensuring data transparency, and promoting LLM-driven services through healthcare professionals and credible institutions can effectively increase users’ adoption. Moreover, fostering digital literacy through education and training may help mitigate the skepticism among users with limited technical experience.

Supplemental material

Supplemental Material for Public acceptance of LLM-driven healthcare chatbots in China: An empirical study by Ying Qian, Yuxin Fu, Yanting Chen, Ke Lu, Xin Zhao in DIGITAL HEALTH.

Footnotes

Acknowledgement

We sincerely appreciate the support and assistance provided by University of Shanghai for Science and Technology.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the National Social Science Fund of China (Grant Number: 20CSH092 & 22BGL240).

Declaration of conflicting interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Contributorship

Ying Qian conceptualized the research, completed the data analysis, and wrote the original manuscript. Yuxin Fu wrote the original manuscript and completed the risk-of-bias assessment and data extraction. Yanting Chen, Ke Lu and Xin Zhao revised and edited the manuscript. Xin Zhao is the Corresponding Author. All authors have read and approved the manuscript.

Guarantor

Ying Qian

Supplemental material

Supplemental material for this article is available online.