Abstract

Background

Older adults (65 and above) represent a significant portion of trauma admissions in U.S. hospitals, primarily due to falls and motor vehicle accidents. Managing pain effectively and mitigating social isolation in this population is crucial. Traditional opioid treatments, while common, pose risks such as addiction, delirium, and constipation, leading to extended hospital stays and increased costs. Social isolation is another common problem faced by this population that can also exacerbate pain. Nonpharmacological interventions are thus highly recommended.

Objective

This pilot study explores the feasibility and acceptability of employing social virtual reality (SVR) as a novel, nonpharmacological approach to address both pain and social isolation among older adult trauma patients.

Methods

The study employs a two-phase design to evaluate SVR's potential. This article describes Phase 1, which employed a user-centered, iterative design approach to enhance SVR's feasibility, acceptability, and usability in the target population. We presented existing versions of SVR applications using three platforms, vTime XR, Spatial, and Engage, to 10 hospitalized older adult trauma patients and used in-depth interviews to gather patient feedback. We made iterative refinements based on this feedback.

Results

We present the results of Phase 1, which employed a user-centered, iterative design approach to develop a test environment for use in the second phase of the study. Participants who completed the study indicated environments could serve as effective distractions, facilitate social interaction, and evoke calming emotions. Participants suggested enhancing the realism of nature elements and offering more interactive features, such as tasks, games, or narratively compelling videos.

Conclusion

We successfully developed an SVR environment for older adult trauma patients, and early feasibility indicators showed interest and engagement from participants, providing insights to guide refinements for Phase 2 deployment.

Introduction

Over 25% of all trauma admissions to hospitals in the United States are of older adults (i.e., those aged 65 and above), primarily due to falls and motor vehicle accidents. 1 As the population ages, this proportion is expected to rise sharply, with projections suggesting that by 2050, nearly 40% of trauma patients will fall into this age group. 1 These patients often experience moderate-to-severe levels of pain, for which opioids are commonly prescribed despite their significant risks—such as addiction, delirium, constipation, and falls. 2 Opioid-related adverse events lead to longer hospital stays, increased costs, higher infection risks, and delays in outpatient rehabilitation. 2 Few nonpharmacological pain management approaches for this demographic are available, leading to calls for development and testing of such solutions. 3

In addition to the pain experienced by many older adult patients, social isolation is a growing concern, with far-reaching consequences for health and well-being. In younger adults, social isolation has been linked to suboptimal recovery, impaired psychological functioning, slower wound healing, and decreased sleep efficiency. 4 These effects are even more pronounced in older adults, who are at greater risk of developing depression, anxiety, and cognitive decline.5,6 Social support has long been noted to play a significant role in pain management.7–9 Even a brief period of acute isolation, just one month, can lead to a disproportionate decrease in quality of life for older adults compared to their younger counterparts. 10

Effective patient care extends beyond treating physical ailments, requiring a holistic approach that addresses the patient's physical, mental, and social well-being. 11 Because social connection modulates pain perception and mood, social virtual reality (SVR) may concurrently address isolation and pain by enabling meaningful, synchronous interaction during hospitalization. This study aimed to develop an SVR environment to facilitate social connection among older adult trauma patients, using an iterative, user-centered design process to refine the system based on patient feedback.

Related work

Solo virtual reality (VR) experiences, which replace sensory information from the physical world with virtual content,12,13 have demonstrated clinical efficacy for various symptoms that occur commonly in trauma patients, such as reducing pain 14 and facilitating routine care. 15 Pain reduction in solo VR experiences is often attributed to distraction, but other mechanisms, such as affect or novel embodiment experiences, have also been proposed to influence pain. 16 Virtual reality provides the experience of presence, or the “illusion of going into the virtual world,” 17 which not only provides novel content but can also distance patients from unpleasant aspects of their hospital experience. Indeed, research has shown that a higher self-reported sense of presence in virtual environments is linked to reduced pain levels.18,19

These salutary effects can be augmented by adding a social component to the virtual experience. Social presence, the sense of being with another person, can augment participants’ experience of presence overall. With the rapid commercialization of VR devices, various SVR applications have been created that can provide even greater feelings of presence and engagement. For example, Campbell et al. demonstrated that SVR, compared to traditional videoconferencing software, provided participants with greater feelings of social closeness, arousal, and presence. 20 Social interactions in VR have been linked to increased pain thresholds in induced-pain tasks in nonclinical populations compared to solo experiences in VR.21–23

For isolated patients, connecting with their loved ones through phone and video calls has been shown to reduce anxiety, 24 loneliness, 25 and physical distress. 26 In a small study, Kenyon et al. demonstrated that SVR reduced feelings of loneliness and social anxiety for young and old patients who were socially isolated during the pandemic. 4 Thus, SVR could be a viable way to bridge the gap between patients and their loved ones and improve patient symptoms and distress. As a preliminary step towards understanding the feasibility, usability, and acceptability of SVR, we conducted an iterative design study with older adult patients hospitalized secondary to trauma to create and refine a virtual environment appropriate for social interactions with friends and family. We asked participants to consider the feasibility and acceptability of using VR socially, that is, with friends or family members, while hospitalized, although in this phase of the study they experienced the virtual environments alone.

Previous work has demonstrated that older adults can benefit from immersive technologies such as virtual, augmented, and mixed reality (XR).27–31 For example, participants who used a VR system to view travel and relaxation content over a two-week intervention reported feeling less socially isolated, exhibiting fewer signs of depression, experiencing more frequent positive affect, and having an improved sense of overall well-being compared to a control group that viewed the same content on a TV. 32

Methods and materials

This article describes Phase 1 of a two-phase investigation designed to assess the feasibility of employing an SVR intervention in a hospital setting among older adult (65+ years) trauma patients. We began with a user-centered, iterative design process in which we gathered patient feedback on patients’ experiences with different VR environments. We then built and tested a custom virtual environment to be used by patients and their friends or family members in Phase 2. Phase 1 was not intended to test feasibility, acceptability, or usability, but rather to collect patient feedback on how the environment could be developed to meet these aims.

Equipment

We used Meta Quest 2 standalone headsets, which do not require an accompanying computer. This was particularly important in a hospital setting to avoid the risk of tripping or tangling in wires. The headset and its left and right hand controllers tracked participants’ head and hand positions and orientations, enabling participants to interact with virtual content and allowing their avatars to mirror their movements in real time within VR.

Virtual environments

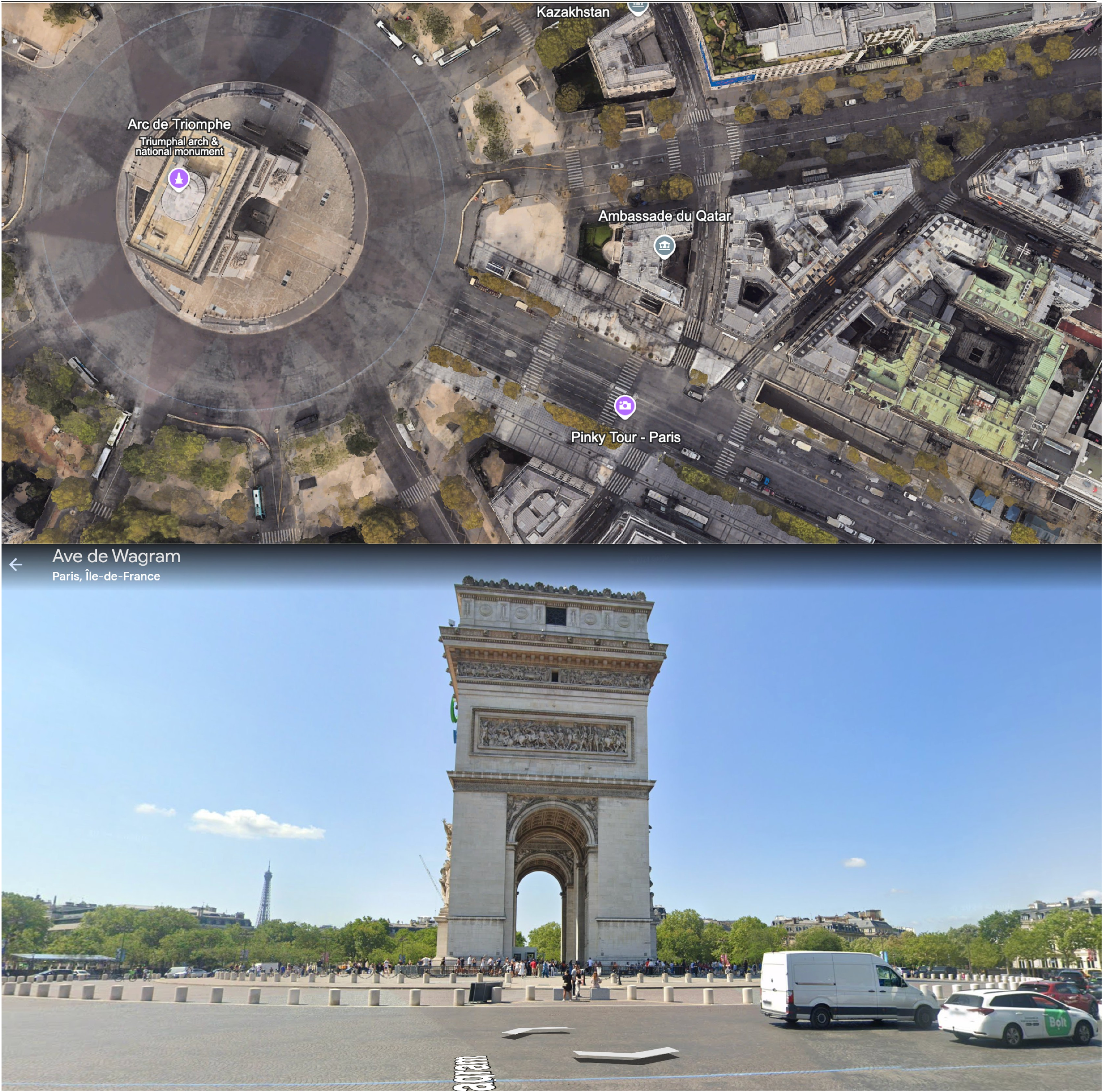

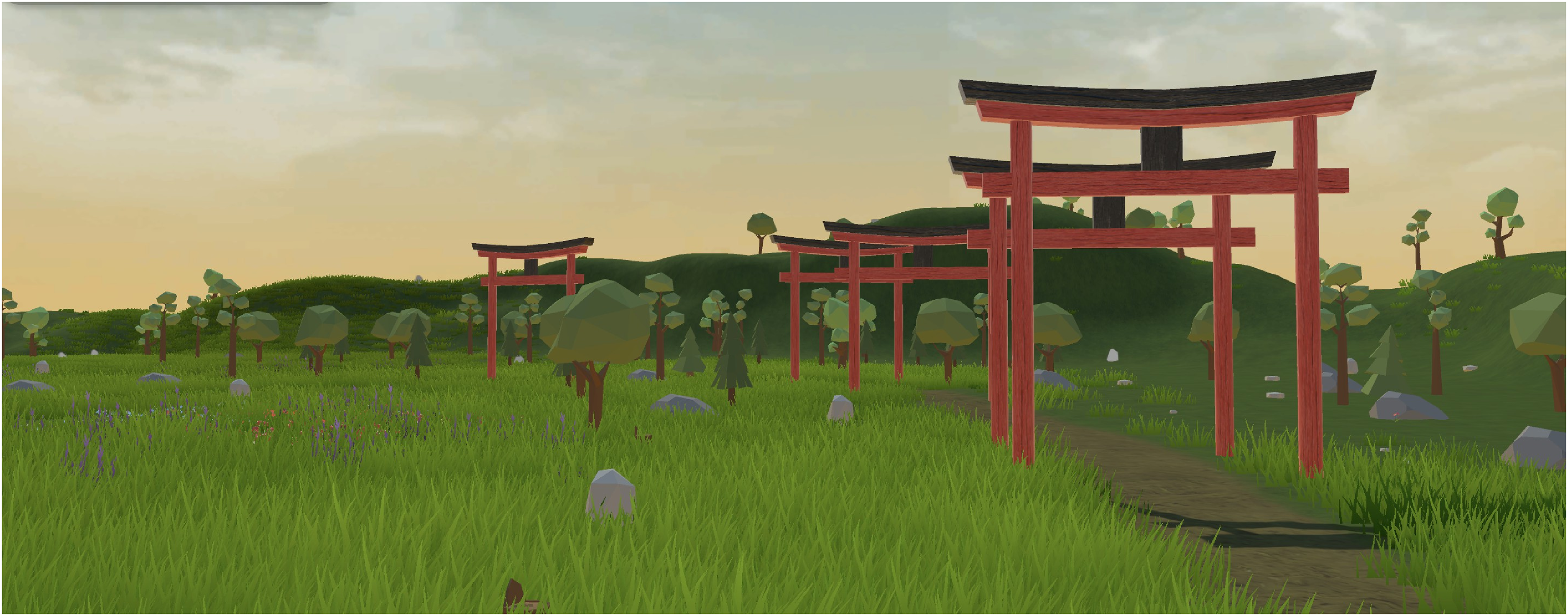

We had several criteria for virtual environments: with Phase 2 in mind, they needed to have the option for networked connection to other users; they had to be usable from a seated position; and they needed to have nonpublic options so patients could view them privately without interacting with strangers. Based on these criteria, we selected several consumer applications that were widely available at the time of the study: vTime XR, 33 Google Earth, 34 YouTube, 35 and Spatial. 36 The social platform vTime XR (Figure 1) offers dozens of virtual environments with dynamic aspects such as moving animals, objects, and water, but users must remain seated and cannot explore within these environments. Google Earth allows participants to look at the Earth from above and then zoom in on a specific spot and view a static 360° image of the chosen location (Figure 2). (We used Google Earth with only the first three patients because of frequent technical difficulties.) We also showed participants a 360° video of a panda sanctuary on YouTube (Figure 3), which featured footage of baby pandas with narration giving background on the sanctuary. Finally, based on the first five patients’ feedback, we custom-built an environment using the platform Spatial (Figure 4) and introduced it halfway through the study. We chose Spatial because it features game-centered virtual environments that allow free movement and can be experienced either in a closed room with invited users or in an open multiplayer room with strangers. We continued to show patients vTime XR and the YouTube 360° video.

Two vTime XR Environments. Screenshots of the two vTime XR environments shown to participants. The top image is “Wilderness River,” a scenic wilderness scene with a calm river in the foreground, flowing past trees and mountains. A red canoe rests on the riverbank, and a waterfall is visible in the distance. The bottom image is “Paradise Resort,” a sunny beach with white sand, turquoise waters, and palm trees. Wooden lounge chairs and umbrellas are positioned near the shore, with a peaceful view of a sailboat in the distance.

Google Earth VR views. Two perspectives of the Google Earth environment used in the study. The top image shows a bird's-eye view of Paris; the bottom image shows a street-level view.

360° YouTube Panda video. A still from the 360° YouTube video featuring baby pandas, created by National Geographic. A baby panda looks up at the viewer as it climbs a tree, mouth open.

Custom Spatial.io environment. The custom-built environment created in Spatial based on feedback from study participants. It shows a series of red gates set against a grassy green backdrop.

Participants

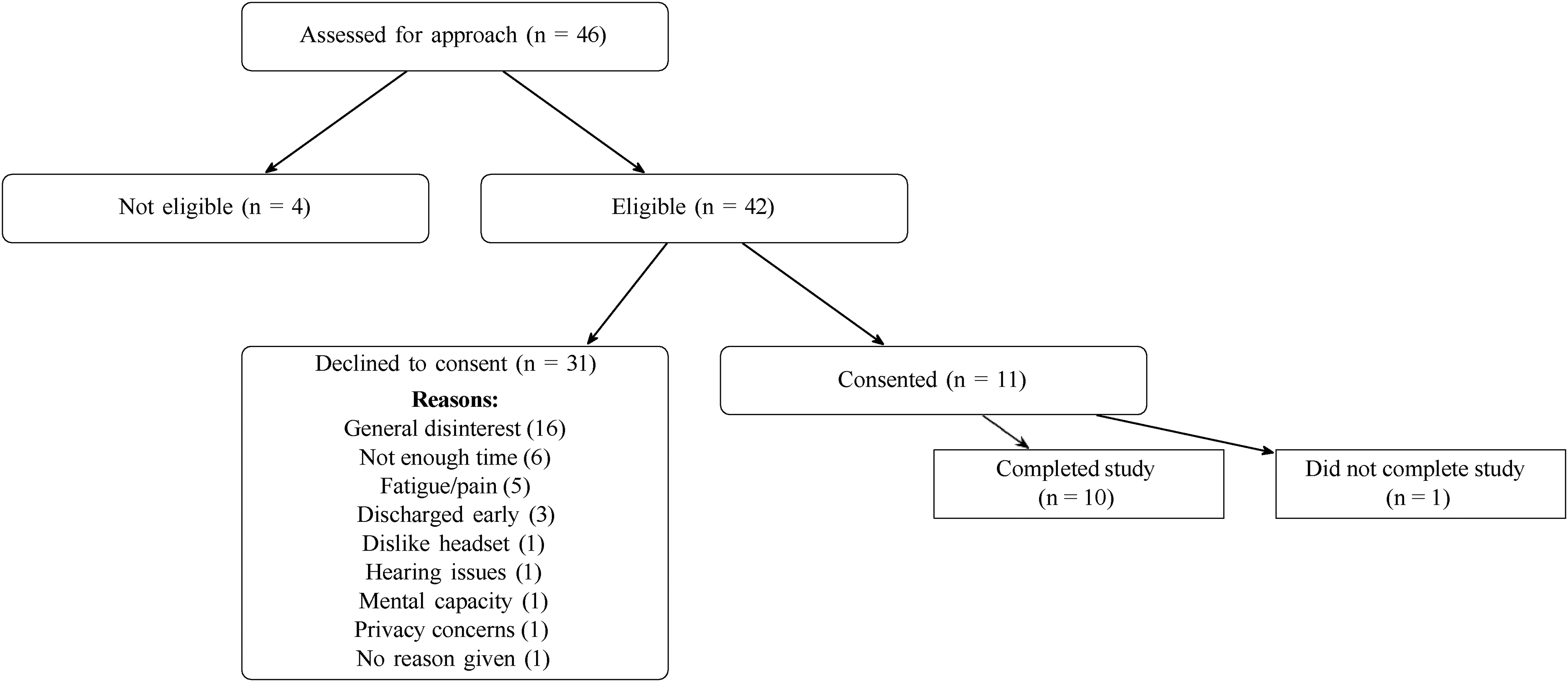

We recruited 11 participants, 10 of whom completed the study (Figure 5). The patient who did not complete the study was withdrawn after reporting, during setup for the VR session, a minor cut on his head that had not been documented in the chart or noted by nursing staff prior to enrollment. Of the participants who completed the study, seven were female and three were male, with an average age of 79.7 years. One identified as Black, one as mixed (Latino), one as White and Hispanic, six as White, non-Hispanic, and one as “other” (Table 1). All participants had normal or corrected-to-normal vision. We included patients 65 years or older who were hospitalized for physical trauma on the Institutional Review Board–approved floors of our hospital. This included surgical, burn, and surgical intensive care units. Burn patients were also eligible; however, we did not successfully recruit any burn patients. Patients with any of the following conditions were deemed ineligible:

- Significant wounds or trauma in the areas of the head, arms, or hands that would make the use of VR controllers or headsets uncomfortable
- Not oriented and alert to person, place, and time
- Under quarantine
- Not fluent in English
- Exhibiting signs of dizziness and/or imbalance
- Hearing or vision impairment preventing them from experiencing VR

CONSORT flow diagram of participant enrollment and retention. Flow diagram depicting patient eligibility screening, consent outcomes, and study completion. The figure shows the distribution of assessed patients (N = 46), reasons for declining participation, and final completion counts.

Participant demographics and injury characteristics.

Table 1 shows participant demographic information, including the injuries that led to our patients being hospitalized. This study protocol was reviewed by the Biomedical Research Alliance of New York under the protocol number 22–08–528–380, and all patients signed informed consent forms.

Selection

At the beginning of each week, we compiled a list of eligible participants using the Weill Cornell Medicine (WCM) electronic medical record system, EPIC. A comprehensive patient list was generated within EPIC, drawing from all authorized units, and was continually updated with current admissions. Patients were initially sorted by age, and those aged 65 and older were screened further for eligibility based on diagnosis. Individuals with trauma- or burn-related injuries were flagged as potential candidates, while those with exclusionary conditions were moved to an ineligible list. For each eligible or potentially eligible patient, medical record numbers were shared with the principal investigators and hospital administrators for confirmation of eligibility prior to approach. Once on the unit, we consulted the patients’ nurses to assess patient suitability for the study, accounting for limb weakness, confusion, and mood instability. Research assistants visited the hospital between 10 am and 5 pm for one or two days midweek to recruit patients. We approached patients between July and December of 2023. All identifiable data were kept on WCM's secure, HIPAA-compliant server, accessible only to designated researchers through their Weill Cornell logins. Transcripts were deidentified by research assistants and kept on Cornell Box afterward.
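The screening steps described above can be sketched in a few lines of Python. This is only an illustration of the logic as described in the text, not the procedure actually run within EPIC: the `PatientRecord` class, its field names, and the example records are all hypothetical, and the real eligibility decision also involved confirmation by investigators and nursing staff.

```python
from dataclasses import dataclass, field

MIN_AGE = 65                               # inclusion threshold stated in the text
QUALIFYING_INJURIES = {"trauma", "burn"}   # injury categories flagged as candidates


@dataclass
class PatientRecord:
    """Hypothetical screening record; field names are assumptions."""
    record_id: str
    age: int
    injury_type: str
    exclusions: list[str] = field(default_factory=list)


def screen(records):
    """Split records into potential candidates and an ineligible list."""
    candidates, ineligible = [], []
    # Sort by age first, mirroring the order of steps described in the text.
    for rec in sorted(records, key=lambda r: r.age, reverse=True):
        if rec.age < MIN_AGE:
            continue                       # under 65: not screened further
        if rec.exclusions:
            ineligible.append(rec)         # any exclusionary condition
        elif rec.injury_type in QUALIFYING_INJURIES:
            candidates.append(rec)         # flagged for confirmation
    return candidates, ineligible


# Made-up records for illustration only (not study data).
example_records = [
    PatientRecord("A", 72, "trauma"),
    PatientRecord("B", 58, "trauma"),
    PatientRecord("C", 81, "burn", ["under quarantine"]),
    PatientRecord("D", 67, "other"),
]
candidates, ineligible = screen(example_records)
candidate_ids = [r.record_id for r in candidates]
ineligible_ids = [r.record_id for r in ineligible]
print("Candidates:", candidate_ids)
print("Ineligible:", ineligible_ids)
```

In this sketch, a patient aged 65 or older with a non-qualifying diagnosis and no exclusions lands on neither list, which corresponds to simply not being flagged.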

Procedure

After providing consent, participants were instructed by a research assistant on how to use the headset and hand controllers. Participants were then presented with the different VR environments described in Table 2. The interview section consisted of two distinct sets of questions, primary and follow-up, inspired by the UX laddering technique, which probes interviewees about their preferred environments. 37 After participants viewed each environment, we administered the questions, as shown in Table 3. After all environments were shown to participants, they were then asked to rank the environments, point out aspects of the environment that remained memorable, and identify differences that would lead them to choose one environment over another. The full script is available in Appendix A.

Audit trail of iterative design changes.

Note: Participant 7 did not complete the study and, as such, is not included on the list.

Interview questions the participants were asked after each VR experience.

Interviews were conducted in-person at the bedside of patients on the SICU and general surgery units at the Weill Cornell Medical Center. The interview team varied depending on availability, typically including two trained research assistants (one PhD student and one postbaccalaureate) and, in some cases, a principal investigator. Research assistants were trained by the PIs in qualitative interviewing and patient approach procedures prior to data collection. All interviews were audio-recorded using Zoom, and transcripts were subsequently verified for accuracy by a separate set of postbaccalaureate research assistants. The full interview guide is provided in Appendix A.

The whole in-headset experience took roughly an hour, with no required follow-up. Participants were compensated with a $25 “Clincard,” a preloaded gift card that can be used anywhere credit or debit cards are accepted.

Results

To analyze participants’ open-ended responses, we employed a conventional content analysis strategy. 38 Two researchers first reviewed the audio transcriptions to gain a general sense of the content, then reread the transcripts to summarize each participant's comment and “shrink” these summaries into initial codes. The coders highlighted words and phrases that captured key thoughts or concepts and then regrouped and recoded transcripts using the finalized code set. Throughout the coding process, the first, second, third, and last authors met frequently to ensure consensus on all codes. Once consensus was reached, the team examined the full set of codes holistically to identify patterns and group them into higher-order categories. We monitored for thematic saturation and observed that no new codes emerged during further analysis. All codebooks, annotated transcripts, and code reorganizations were logged in dated Excel sheets to maintain an audit trail. Analysis began with participants’ preferences for virtual environments and progressed to insights related to the feasibility, usability, and acceptability of VR use for older adult trauma patients. Unfortunately, two out of the 10 participant interview recordings failed. While we kept note of their remarks and preferences, we thus cannot map them to exact codes, and they have been excluded from the interview analysis below. A table with all of our themes and codes is included in Appendix C.
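The participant counts reported with each code below (e.g., n = 5) are tallies of how many distinct participants raised a given code. As a minimal sketch of that tallying step, assuming coded transcripts are stored as a mapping from participant to assigned codes, the following Python counts each participant at most once per code. The function name, code labels, and example data are placeholders for illustration and are not taken from the study's codebook or transcripts.

```python
from collections import Counter


def participants_per_code(coded_transcripts):
    """Count how many distinct participants were assigned each code."""
    counts = Counter()
    for codes in coded_transcripts.values():
        # set() ensures a participant who mentions a code repeatedly
        # contributes only once to that code's n.
        counts.update(set(codes))
    return counts


# Placeholder data (not study data): participant label -> assigned codes.
example = {
    "PA": ["calming", "animals", "calming"],
    "PB": ["animals"],
    "PC": ["lacks engagement", "animals"],
}
tallies = participants_per_code(example)
for code, n in sorted(tallies.items()):
    print(f"{code}: n = {n}")
```

Counting participants rather than mentions keeps a single talkative participant from inflating a code's apparent prevalence.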

Presence

The experience of presence varied among participants, with some expressing concerns about a lack of engagement and others finding the environments usefully distracting or therapeutic. Several participants (n = 5) noted that certain environments, particularly vTime (n = 5), lacked engagement. For vTime, one participant noted that “[Using it] just to watch [something] I don’t see much point” (P5). On the other hand, some participants (n = 5) reported feeling positively distracted or “lost” in the environment, in vTime (n = 2), YouTube (n = 2), Spatial (n = 1), and Google Earth (n = 1). As one participant mentioned, “I think it's very soothing and it feels as if you’re sitting by the riverbanks and you don’t think about anything else” (P3). A few participants (n = 3) noted that VR environments evoked nostalgia, reminding them of familiar places or past experiences. Additionally, two participants mentioned that VR distraction would help manage discomfort or pain. Unique concerns also emerged during feedback. For example, one participant did not like the YouTube content because the scientists’ lab coats in the panda video reminded them too much of being in a hospital.

Several participants found aspects of the VR environments to be unrealistic. Comments about the overall lack of realism were directed primarily at vTime and Spatial. Some noted that specific elements within these environments, such as the textures or proportions of objects, felt artificial. Part of the reason participants judged the environment as unrealistic was due to a lack of natural activity, as one participant noted that “…it lacks certain things. If I’m looking, and of course, it's supposed to be relaxing, but no one is going to look at the beach and see nothing. There's always activity” (P6). However, a few participants also characterized some aspects within vTime as realistic, highlighting how the birds flying or the water flowing added to the immersion.

Environmental features

Six out of 10 participants stated that natural elements, such as forests, rivers, and birds, evoked a sense of calm and relaxation. The beach and river environments featured in vTimeXR, in particular, were often associated with tranquility (n = 5). As one patient noted: “The first one was really very soothing. You could be there for a long while” (P3). Many comments related generally to the overall nature-based scenery, “[It was] very calm. Yeah, almost made me close my eyes” (P8), while others highlighted specific sensory details, such as the moving water, the colors of the scene, or the trees, which enhanced their experience. Beyond relaxation, participants commented on specific natural features that stood out. Eight out of 10 participants mentioned the presence of animals, with birds in vTime (n = 5) being a particularly popular aspect. As one participant remarked, “[it's] very really very well done how these birds are coming in and out. And the fish is coming in and out. And the deer is there and there and there. It's very well done, there's no question” (P10). Others appreciated the playfulness of the pandas (n = 3) featured in the YouTube environment, calling them “absolutely adorable.”

Interactivity

Most participants (n = 7) expressed a desire for an environment or content with a storyline or narrative elements. As one individual stated, “The second one was more interesting because there was something going on” (P5). Following from this, the YouTube environment's nature video about pandas sparked interest and curiosity from most participants (n = 7) as well, although one desired a video even more casual or entertaining: “I would have probably liked to—you know, like, just relax, and would’ve liked to just watch them [the pandas] playing, not where it's like the scientists talking and the lab coats” (P4). Although two participants noted they wouldn’t rewatch the specific video in the future, the environment's narrative framework was highly acceptable to the participants. Overall, participants supported incorporating a storyline or progression, noting that such features added depth to their experience.

The Spatial environment allowed participants to navigate throughout the environment by button press or using the joystick. Three different participants preferred to be able to move around compared to a stationary environment, while two were indifferent to the feature. Another patient identified drawing in the Spatial environment as his favorite because “you could do something” (P5). Two participants wanted gamification of the environments with tasks or activities. One patient mentioned interactivity specifically to engage younger users: “Even though the place is nice and engaging. They’ll [grandchildren] just be sitting there. Children are not going to want to just sit, right” (P6).

Usability, acceptability, and accessibility

While the focus of our interviews was on identifying desirable features of the environment, the recorded interviews also provided a rich record of participants’ challenges while using the VR interface. We present these comments below.

Physical Interface: Hand controllers emerged as a notable source of difficulty for several participants. Five participants expressed frustration or at least initial difficulty with finger placement, indicating that the design required a period of adjustment. As one participant remarked: “I’m just having trouble manipulating. I’m having trouble with my hands” (P5). Difficulty learning how to use the controllers caused problems for participants when using essential functions (e.g., navigating the menu) or interactive features within environments (e.g., controlling the avatar).

The headset itself also posed challenges. Four participants found wearing glasses within the headset challenging. Maintaining the fit of the headset was also challenging as at times the headset would slip and require multiple adjustments (n = 2). Two participants even noted discomfort due to the headset being “heavy” or “starting to hurt” their face. These physical discomforts not only interrupted immersion but also hinted at barriers to prolonged use. The visual scale in YouTube specifically presented another sensory challenge, as three participants described the visuals as appearing too large or too close, which disrupted their ability to fully engage with the environment. One participant shared that, “It could be because of my lenses. It's almost as if I was sitting in the 2nd row of the theater. And I would need it to be pushed back” (P6); this suggests that an imbalance in visual scale can be a barrier to immersion. Visual blurriness, which could be fixed by adjusting the vertical position of the headset on patients’ faces, also decreased participants’ sense of presence. While one participant found that sound effects added depth to the environment, others noted that low or unclear audio disrupted their immersion.

UX and Navigational Issues: Participants encountered various usability issues tied to the user interface and navigation of the VR system, which at times disrupted the flow of the experience. A recurring challenge was the presence of pop-up notifications (n = 4), such as microphone or unmute prompts. Such intrusive notifications detracted from the experience. In our first few sessions, passing the headset from researcher to participant occasionally required resetting the device's “boundary.” This can be partially fixed by using a room-scale boundary (a safety feature that detects how close someone is to the edge of a preset area that is free of obstacles). Participants then need only press the reset button to recalibrate the headset to their seated height. Some participants struggled with basic tasks, such as navigating menus to switch applications (n = 1), while others faced technical difficulties, such as a YouTube video failing to load (n = 1), long wait times during transitions (n = 1), and trouble with in-app navigation, for example in Google Earth (n = 1).

Acceptability: Participants’ feedback on the overall acceptability of VR equipment revealed a spectrum of perspectives, often shaped by personal experiences and expectations. Some participants (n = 3) suggested VR is especially suitable for the younger demographic, with two participants explicitly expressing skepticism about its suitability for older adults. Three participants felt that they would be likely to choose more familiar devices over VR, as one participant noted: “I mean, if I want to watch animal videos, I can turn on the TV and watch them much more easily” (P5). Two participants pointed out the learning curve associated with using the headset for the first time. While they adapted over time, the initial unfamiliarity added a layer of complexity to their introduction to VR. One participant suggested adding more options, choices, and/or environments to VR because “so far it's limited” (P10). Another participant speculated that hospitalized patients might focus more on their recovery than on exploring novel technologies like VR. Pain from a patient's preexisting condition was also cited as a reason for interrupting the VR experience. Despite these challenges, some participants recognized the potential and utility of VR. A few (n = 2) reported prior knowledge of VR, with one stating, “I’ve read a lot about it. I know more or less what's going on” (P5), which reflected his openness to engage with the technology. Others saw promise in VR's future utility (n = 3), saying that it has potential for substantial future impact. However, one participant questioned whether VR currently offers meaningful value, suggesting that he did not perceive it as something that suited his needs. Several participants (n = 6) noted that their family members or friends might be inclined to join them in certain VR environments: vTime (n = 5), YouTube (n = 3), Spatial (n = 2), and Google Earth (n = 1). Participants attributed this interest to the environments’ engaging content and design, and noted the potential for these experiences to help combat loneliness. However, some participants (n = 3) were uncertain whether these environments would resonate with their loved ones. For instance, one participant reflected on the appeal of Spatial, stating: “I mean, there's nothing to not like about it, but I don’t know that they would think it was a unique place to hang out, go together. I mean, in real life it would be, but I feel like it doesn’t translate as much virtually.”

Feasibility

Feasibility was measured by recruitment numbers. Of the 46 patients who were approached, 11 consented to participate (Figure 5). Availability was a particularly significant factor. Six individuals stated they did not have enough time due to other obligations, and another three were unavailable to participate because they were too close to discharge.
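As a worked check of the recruitment arithmetic, the short Python sketch below derives rates from the counts reported in this article (46 approached, 11 consented, 10 completed). The variable and function names are our own, and which ratio best represents the "recruitment rate" is an assumption left open here; the rounded result depends on whether consenting or completing participants are used as the numerator.

```python
# Counts reported in the text.
APPROACHED = 46   # patients approached
CONSENTED = 11    # patients who consented to participate
COMPLETED = 10    # participants who completed the study


def rate(numerator: int, denominator: int) -> float:
    """Return a percentage rounded to one decimal place."""
    if denominator <= 0:
        raise ValueError("denominator must be positive")
    return round(100 * numerator / denominator, 1)


consent_rate = rate(CONSENTED, APPROACHED)      # consented / approached
completion_rate = rate(COMPLETED, APPROACHED)   # completed / approached
retention_rate = rate(COMPLETED, CONSENTED)     # completed / consented

print(f"Consented of approached: {consent_rate}%")
print(f"Completed of approached: {completion_rate}%")
print(f"Completed of consented:  {retention_rate}%")
```

Under these counts, the 22% figure cited in the Discussion is closest to the share of approached patients who completed the study, whereas the share who consented is slightly higher.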

Cybersickness

Seven participants explicitly reported no issues with cybersickness, one individual experienced cybersickness in the YouTube environment (related to the large size of images mentioned above), and two other participants did not provide information regarding motion sickness symptoms. When a participant reported any cybersickness symptoms, we removed the headset and waited with the participant until they felt more balanced. Because we were in a hospital, we could have called for a nurse if symptoms had been more severe, but this was never necessary.

Resulting environment

The resulting environment went through two iterations. We first generated a draft version in Spatial to elicit comments during the second half of the interview study, and then moved to Engage VR 39 for the final version to be deployed in Phase 2 in order to take advantage of that platform's ability to record data. The initial environment focused on creating a soothing, nature-based atmosphere. It featured a wide grassy plain with a series of arches that participants could walk under, as well as many trees and plants. The second environment mimicked the first in terms of layout and nature (see Figure 6), but added animals at the request of participants who felt that there was not enough happening. To address interest in narratives or games, we included small game-like tasks that users could complete, mostly locating various elements in the environment. Completion of each task would trigger audio feedback in the form of a bell. The games differed slightly between the two versions because of the affordances of each platform. However, both versions challenged the participants to walk through a series of gates and to find a hidden garden (Figure 7).

Initial vs. Revised Environment. Side-by-side comparison of the initial VR environment (left), built in Spatial, and the revised environment (right), built in Engage. Both are grassy nature environments, but the animation style is different, and the Engage environment includes animals.

Gamified environment prompts. Screenshots of gamification elements added to the virtual environments. From left to right: gates from the original Spatial version, gates from the Engage version, a prompt to find the flower garden in Spatial, and the corresponding prompt in Engage.

Discussion

This design study represents the first phase of a two-phase feasibility pilot, with the primary goal of developing an SVR environment to be deployed in the subsequent trial. While this stage focused on building and refining the intervention, we also examined early feasibility indicators. Although the recruitment rate was only 22%, many patients who expressed interest in participation were unable to take part, most often because of scheduling conflicts or imminent hospital discharge. While some participants preferred more familiar technologies, many expressed enthusiasm for the potential of SVR as a therapeutic tool. They appreciated the calming and engaging nature of the environments, particularly those with natural elements and narrative content. These findings indicate that, while participation can be challenging, the SVR environment developed in this study may be suitable for future exploration with older trauma patients.

Some participants experienced usability barriers with the physical interface and controllers, specifically with finger placement and the need to adjust the headset. Additionally, some participants experienced challenges with the visual scale and audio clarity, which disrupted their immersion. While these issues are not uncommon in this age group,40,41 these findings highlight the importance of user-friendly designs and clear, high-quality sensory input.

Nature-based environments were generally well-received, eliciting a sense of calm and relaxation.42,43 Moving water, colors, and the presence of animals, particularly birds, were highlighted as enhancing the experience, supporting recent work on moving rather than static images.44 The desire for narrative or storytelling elements was also notable, with participants showing greater interest in environments that included a storyline or engaging content. These findings informed the design of the environment created for Phase 2 of this study.

Limitations

The study team encountered several challenges in the recruitment process. Trauma patients frequently go in and out of physical therapy, speak with doctors and nurses, and coordinate their discharge from the hospital into rehabilitation facilities. When they are not occupied, they are often napping. The focus on getting people out of the hospital and back home after surgery as quickly as possible meant there was a very narrow window for the research team to approach patients and complete the study. Another possible limitation is that people who are on the trauma unit early in the week might be demographically different from those who are there on the weekend. Finally, patients in this hospital were generally of higher income, and as such had access to their own personal devices to keep them entertained, and sometimes had rooms with pleasant views of the surrounding city. Such amenities may reduce the need for this kind of intervention.

Our eligibility criteria likely biased the sample toward patients who were cognitively intact, physically able, English-speaking, and able to tolerate VR. As a result, patients with more severe injuries, sensory impairments, dizziness, or cognitive or language barriers were underrepresented.

Additionally, while we worked with hospital care providers to screen and gain access to patients, we did not formally interview providers about their thoughts on SVR. These interviews are intended to be part of Phase 2. Consistent with recommendations in prior digital health research with older adults,41 future work should also examine longer-term use and outcomes of SVR interventions.

Next steps

Phase 2 of the study builds on the environment we created and the feedback we received in Phase 1. In Phase 2, headsets will be provided to each patient and to a family member or friend designated by the patient. The participant and their partner will be able to interact in the SVR environment we created. We aim to further explore feasibility, acceptability, and usability through self-report surveys and by tracking the frequency and duration of headset use. A full record of the measures we plan to use is included in Appendix B. The results from both phases will provide comprehensive insights into the practicality and therapeutic potential of SVR for older patients in a clinical setting.

We will also seek to address the accessibility issues we encountered in the study. For issues with hand controllers, we can employ alternate navigation methods, such as hand tracking or navigation through head movement. We can address the hardware issues by using glasses spacers to make more room in headsets, replacing the existing Quest 2 straps with alternate straps that have more size variability, and allowing participants to switch out the facial interface for an alternate-sized one.

Supplemental Material

Supplemental material, sj-pdf-1-dhj-10.1177_20552076261430608 for Patient input on the design of a social virtual reality environment for hospitalized older adult trauma patients: Phase 1 of a usability, acceptability, and feasibility pilot study by S Isabelle McLeod Daphnis, Reece Simpson, Max Accurso, Ella Blicker, Mariel Emrich, Olivia Baryluk, Chun Yun (Amy) Hsu, Robert J Winchell, Sara Czaja, M Carrington Reid, JoAnn Difede and Andrea Stevenson Won in DIGITAL HEALTH

Footnotes

Acknowledgements

The authors would like to thank Jewel Escosio and Jean Fitzgerald from the New York-Presbyterian (NYP)/Weill Cornell Medical Center Trauma team for their help reviewing patients.

Ethical approval

This study protocol was reviewed by the Biomedical Research Alliance of New York (BRANY) under the protocol number 22–08–528–380, “Social Virtual Reality for Pain Management in Burn and Trauma Patients: Feasibility Component.”

Consent to participate

All participants read and signed printed consent forms, which research assistants also explained to them verbally.

Consent for publication

Participants were aware that data collected from their participation would be published. They signed a consent form which explained: “The results of this research project may be presented at meetings or in publications; however, you will not be identified in these presentations and/or publications.”

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the National Institute on Aging [grant number 1R03AG080413–01].

Declaration of conflicting interests

The authors declared the following potential conflicts of interest with respect to the research, authorship, and/or publication of this article: Author Andrea Stevenson Won has previously received funding from Meta. All other authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data availability

The data supporting the findings of this study are not publicly available due to strict privacy and confidentiality considerations. The dataset includes audio recordings, screen recordings, and information extracted from participants’ medical charts, all of which contain sensitive and potentially identifiable information from hospitalized patients. In accordance with IRB-approved protocols and ethical standards for working with vulnerable populations, these data cannot be shared.

Supplemental material

Supplemental material for this article is available online.